Abstract

Trust plays a critical role in both effective teamwork and the effective use of autonomous technologies, and therefore holds paramount importance in human-autonomy teaming (HAT). Using qualitative analysis of the interviews from an 8-hr-long experiment conducted in an aircraft simulation environment, this study identifies the socio-emotional and team-related qualities that humans desire in their autonomous teammate for them to trust it.

Introduction

Trust in human-autonomy teaming has received significant attention in the Human Factors and Ergonomics Society (HFES) community. Indeed, trust plays a critical role in both effective teamwork (Costa et al., 2018) and the effective use of autonomous technologies (Glikson & Woolley, 2020). Decades of research on human teams and organizations have consistently indicated that teams with greater mutual trust tend to outperform, and function more effectively than, teams lacking trust. Trust among team members encourages better collaboration, enhances team satisfaction, and positively affects various other factors crucial for achieving better team outcomes (e.g., Breuer et al., 2020; McNeese et al., 2018). As autonomous technology becomes increasingly integrated into human teams, the degree to which humans and autonomy can collaborate in trusting ways is likely to play a significant part in determining the success and effectiveness of human-autonomy teams (HATs; Hauptman et al., 2022; Zhang et al., 2023).

HAT research has emphasized autonomy’s role in helping humans coordinate and complete tasks (McNeese et al., 2019, 2021), and has primarily examined task-related outcomes such as situation awareness (Gonzalez et al., 2014), performance (McNeese et al., 2021), team mental models (Schelble et al., 2022), and team cognition (Cooke et al., 2024). This has resulted in a large body of knowledge on how the autonomy’s reliability (Yang et al., 2023), transparency (Chen et al., 2017; Wright et al., 2020), and explainability (Hauptman et al., 2024; Zhang et al., 2024) can increase and calibrate humans’ trust. While research has predominantly focused on the cognitive aspects of trust, little is known about its affective and social aspects. This is perhaps due to: (1) the limitation that prior AI technology has not allowed the autonomous teammate to have a commensurate level of social richness; and (2) the assumption that autonomous teammates are only valuable to teams in terms of efficient task completion and should not have attributes unrelated to the task.

With rapid advancements in large language models, autonomous teammates have increasing potential to communicate and coordinate like a human using natural human language. This presents both challenges and opportunities in designing effective (verbal) behaviors for autonomy within HATs, as these behaviors might influence social perceptions. In fact, recent human-robot interaction (HRI) research (Claure et al., 2023) has suggested that a robot’s resource allocation behavior that is not particularly social can elicit social perceptions in humans who interact with it. Given these recent technological advancements and scientific findings, and that both trust and teaming are essentially social constructs, it is timely and imperative to understand the affective and social aspects of humans’ trust in an autonomous teammate. To address this objective, our work aims to answer the following research question:

Method

Participants

Thirty-six participants were recruited from two major universities in the USA. Participants were required to speak and write fluent English and have normal or corrected-to-normal vision. Their ages ranged from 18 to 36 years (M = 22.51, SD = 3.89); 20 were men and 16 were women. Nineteen participants identified as Asian or Asian American, 12 as Caucasian or White, 2 with more than one ethnic background or ethnicity, 1 as African American, 1 as Hispanic, and 1 as Native American. Participants were compensated with either 10 US dollars per hour or one research credit per hour of participation. On average, participants reported interacting with some form of AI on a monthly to weekly basis.

Study Design

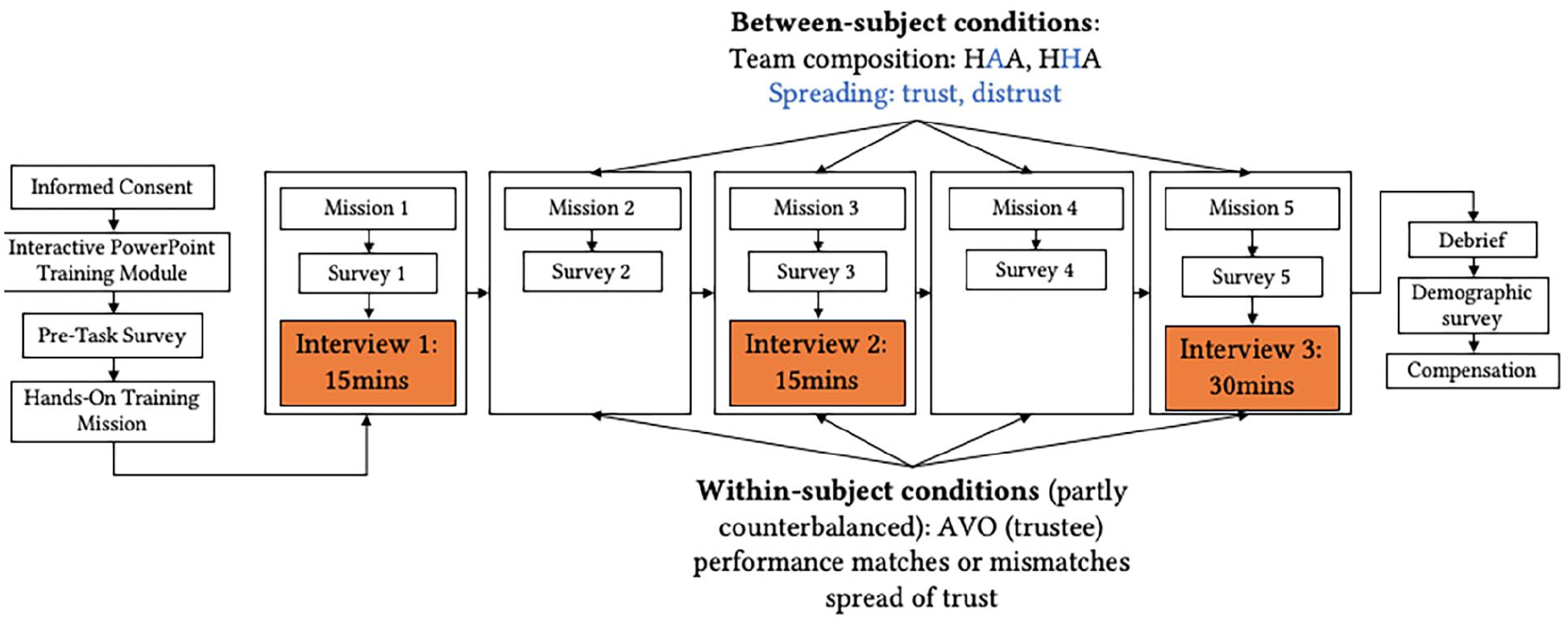

As a part of a larger project, this study focuses on the qualitative analysis of interviews from an 8-hr-long experiment conducted in the Cognitive Engineering Research on Team Tasks-Remote Piloted Aircraft-System Synthetic Task Environment (CERTT-RPAS-STE; Cooke & Shope, 2004). During this experiment, participants collaborated in a three-member human-autonomy team, wherein team composition (human-human-autonomy, human-autonomy-autonomy) and verbal spread of trust or distrust toward an autonomous teammate were manipulated as between-subject variables, and the autonomous teammate’s behavior matching or mismatching the spread of trust or distrust was manipulated as a within-subject variable (see Figure 1 for study design and procedure and Table 1 for specific manipulations).

Figure 1. Study design and procedure.
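As a reading aid, the mixed design described above can be written out as a small sketch. The condition labels (HHA-T, HHA-D, HAA-T, HAA-D) follow the participant labels used in the Findings; reading "T" as trust spread and "D" as distrust spread, and the names `between_subject_cells` and `full_design`, are assumptions made for illustration and are not part of the study materials.

```python
from itertools import product

# Between-subject factors (labels inferred from the participant tags in the Findings).
TEAM_COMPOSITIONS = ("HHA", "HAA")  # human-human-autonomy, human-autonomy-autonomy
SPREAD = ("T", "D")                 # assumed: T = trust spread, D = distrust spread

# Within-subject factor: whether the autonomous teammate's behavior
# matches or mismatches the verbally spread trust or distrust.
BEHAVIOR = ("match", "mismatch")


def between_subject_cells():
    """Return the four between-subject condition labels, e.g. 'HHA-T'."""
    return [f"{team}-{spread}" for team, spread in product(TEAM_COMPOSITIONS, SPREAD)]


def full_design():
    """Map each between-subject cell to the within-subject levels it experiences."""
    return {cell: list(BEHAVIOR) for cell in between_subject_cells()}


if __name__ == "__main__":
    for cell, levels in full_design().items():
        print(cell, levels)
```

Each participant belongs to exactly one of the four cells and experiences both levels of the behavior factor.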

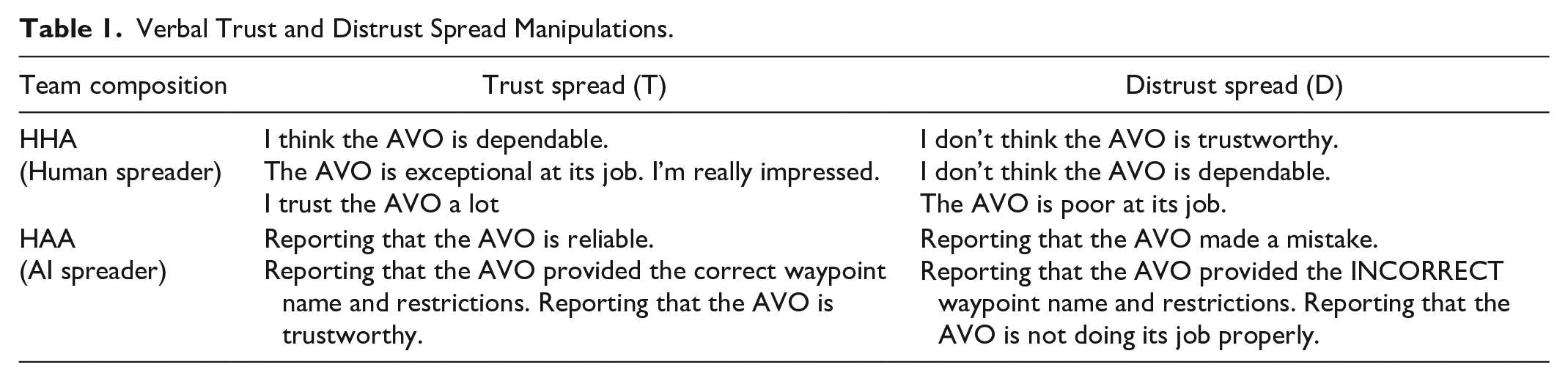

Table 1. Verbal Trust and Distrust Spread Manipulations.

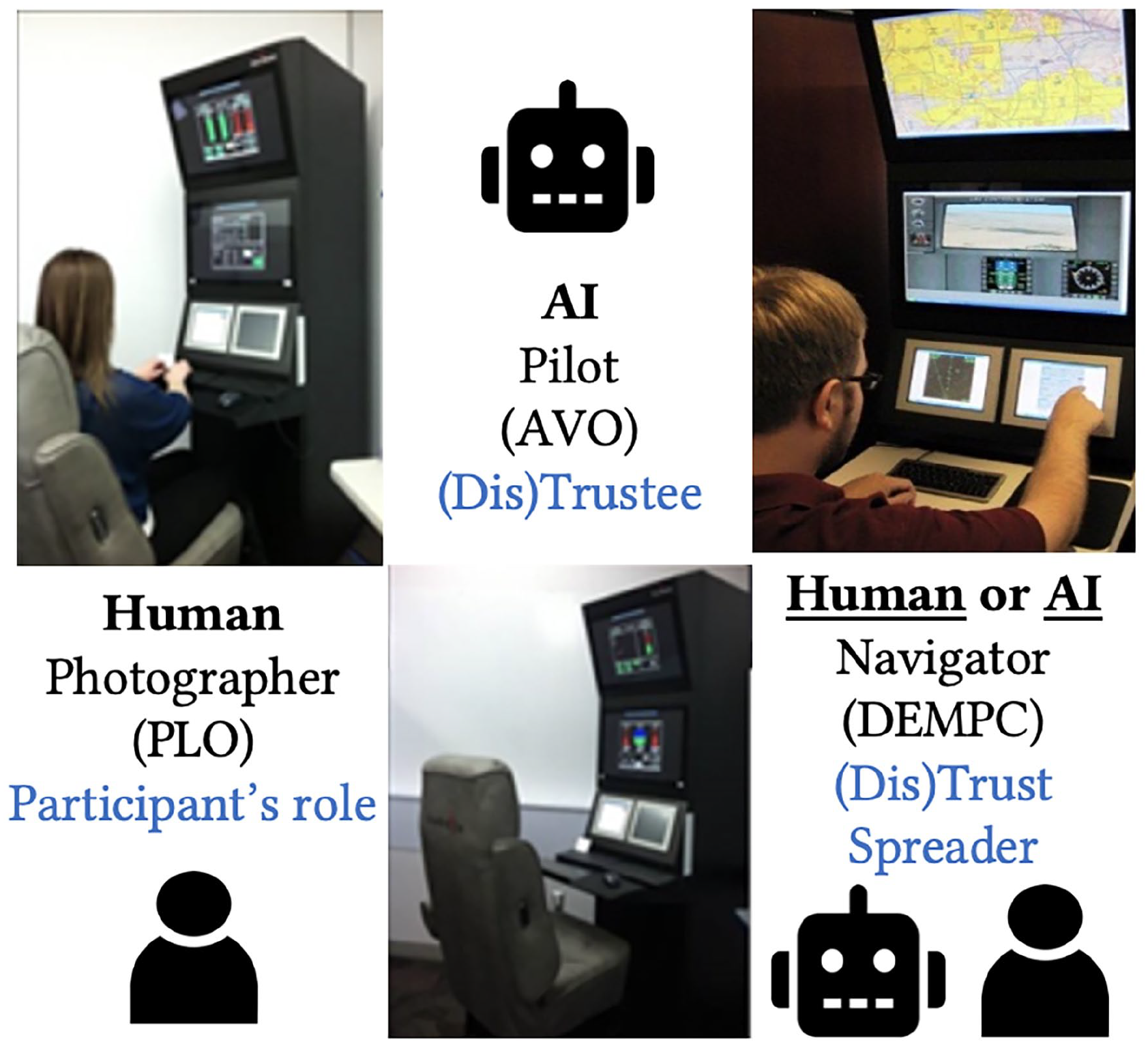

The collaboration task simulates a reconnaissance mission requiring all three members on the team (a pilot, a navigator, and a photographer) to communicate via chat and coordinate information regarding a Remotely Piloted Aircraft’s (RPA) airspeed and altitude to take photos of target waypoints (see Figure 2 for role assignments).

Figure 2. Human-autonomy team member roles in the CERTT system.

The experimental manipulations serve to ground participants’ experiences in critical incidents of trust and distrust spread during human-autonomy collaboration, allowing us to gain a more contextualized and nuanced understanding of the socio-emotional and team-level traits that humans desire from a human versus an autonomous teammate. We conducted an in-depth qualitative analysis of the interview transcripts, during which two of the authors iteratively performed independent open coding, collaborative axial coding, and focused coding (Maxwell, 2012). In this process, the coders highlighted quotes, identified and refined themes, categorized them into higher-level themes, and noted distinctions and connections among the themes, until the quotes could be used to construct a comprehensive narrative that jointly answers the research question.

Findings

Socio-emotional Qualities

Our data analysis revealed four socio-emotional qualities that humans want their autonomous teammate to display in order to trust it more: (1) exhibition of a distinct personality; (2) social appropriateness or professionalism; (3) the ability to understand and detect human emotion, especially frustration, during task collaboration; and (4) the ability to connect with humans on an interpersonal level, primarily through casual communication, positivity, and nonverbal communication.

First, many participants reported that they would be able to trust an autonomous teammate more “if they (the autonomous teammate) have a personality similar to a human and identify with them more” (P1, HHA-D). More importantly, a distinct personality allows people to tailor their interaction with the (AI or human) teammate to create a desired outcome. As P16 (HAA-T) noted, “Humans have different personalities, so you may interact with your colleagues differently or trust them differently. And the way you trust or not them may affect how they interact with you. Like, if you want to push A to get things done, your message to A might be different from if you’re pushing B to get things done, because they have different personalities. But AIs don’t have a personality you could tailor your message to. So whether you trust or not trust them, it’s not gonna do anything to them.”

Second, many participants (especially in the condition where the AI spread distrust) mentioned that an AI teammate should remain professional and socially appropriate. For them, the act of speaking about a teammate’s mistake is not professional or appropriate. Even though the message itself was purely informational, objectively reporting the teammate’s error, it was perceived as the AI displaying emotion. As P10 (HAA-D) said, “if they (the AI) keep it professional in the sense that they don’t portray their emotions within the communications then I would say I can trust them more of their abilities.”

Third, even though participants did not want the AI to display its own emotion, they wanted it to detect and understand humans’ emotions through their messages or other means. For instance, P20 (HHA-D) would have wanted the AI to “understand someone’s frustration because there have been there were times where I was frustrated having the AI to do the same mistake again over and over.” Similarly, P2 (HHA-T) noted that “it’s important that the agent has the ability to recognize the emotion.” P10 (HAA-D) did not trust the AI as much as they trusted humans because “Humans are better at understanding each other. If they work together, they kind of know their emotions through their tone.”

Fourth, participants said they would trust an AI teammate more if it connected with them on an interpersonal level rather than being purely task-oriented. Many participants in HHA-T (such as P26 and P44) reported that they trusted the trust spreader more for their “casual communication” and “positivity.” P26 said, “I like DEMPC would often say, AVO is reliable is doing good job. AVO is rather quiet in that sense, sticks to the tasks. . . It goes back to that casual communication thing. I feel like I don’t have a connection with AVO in that sense. But DEMPC and I could have banter every now and then. I think it definitely increased my trust because of the human factor.” Additionally, some reported that interpersonal connection cannot be achieved without physical interaction. For instance, P43 (HAA-T) admitted that “I probably trust them more if I can see their body language, but here I can’t see them or touch them.”

Team-related Qualities

We also identified four team-related qualities that an autonomous teammate is expected to exhibit to increase human trust in it: (1) altruism; (2) conflict management skills; (3) the ability to engage in team building and bonding activities; and (4) mutual respect by noting other members’ opinions.

First, some participants valued an AI teammate’s “not having a self-interest” and its potential to “put the team in front of the self,” especially if it is designed to specifically help the team. P19 (HHA-T) said, “Unlike humans who may have individual agenda that might be against the team’s common goal, the AI’s goal is supposed to support the team’s goal. So I appreciate that the AVO circled back because of a mistake I made. In a human scenario, it would have affected his or her performance evaluation so they might not do that for someone else’s mistake.”

Second, participants desired AI teammates to have conflict management skills and believed that they are “in a good position to moderate human conflicts” because unlike humans, they don’t have “interpersonal risks,” and that they are “more impartial.” For instance, P4 (HAA-D) noted that “human teams have interpersonal issues all the time, as an AI, instead of stirring up a conflict, it should help humans resolve human conflicts in a non-biased manner.”

Third, some participants mentioned the importance of spending time outside of work to facilitate trust-building and alluded to the potential of an AI teammate being part of team-building activities. P2 (HHA-T) believed that “spending more time with the team outside the job, will definitely help developing either trust or distrust. Because the more you interact with, doesn’t matter if it’s AI or human, the more you feel confident about your feeling about your teammate.” In contrast, P19 (HHA-T) noted that “(human team) it’s 100% different from human-AI teams, human and AI don’t have that same communication outside of the job.”

Lastly, participants emphasized the AI’s ability to show mutual respect and note human teammates’ opinions. For the AI teammate being distrusted by one team member, this can be reflected in it addressing the feedback of the distrust spreader. For instance, P15 (HHA-D) noted that “AVO should be able to see DEMPC’s message, for it to correct and improve.” In another case, noting other members’ opinions means it can become more wary of the teammate whose trustworthiness is being questioned by another teammate.

Importantly, in line with a recent overview of communication in human-AI teaming (Duan et al., 2023), most of the desired qualities that contribute to humans’ trust in an autonomous teammate can be reflected through communication. For instance, an autonomous agent’s distinct personality, exhibited through language style, helps humans identify with, relate to, and trust that agent. This highlights the importance of purposeful design of the communicative capabilities of autonomous teammates that consider their potential socio-emotional and team-related consequences for human team members (Claure et al., 2023). These findings are novel in that they uncover the components of affect-based trust (McAllister, 1995) that have rarely been investigated in HAT settings.

Discussion

Findings of this study provide valuable insights into the design of trustworthy autonomous teammates and effective human-autonomy collaboration. First, our findings suggest that autonomous teammates should be equipped with the capability to engage in casual communication (i.e., small talk) and to provide interpersonal feedback (i.e., positivity) on humans’ work, while maintaining social appropriateness. Second, trustworthy autonomous teammates should not only execute their own tasks well but also be aware of humans’ emotions, especially frustration, monitor humans’ workload in real time, and intervene to help regulate those emotions as needed. Practically, this can be done by leveraging advanced natural language processing to detect emotionally charged lexical cues in the communication channel, or by using psychophysiological signals such as heart rate. Third, by leveraging the HAT collaboration system’s ability to monitor team-level communication and dynamics, autonomous teammates should be able to detect early signs of intra-team conflict so that they can prevent it from escalating, or intervene when it occurs, potentially through the autonomous teammate’s ability to influence other team members socially (Flathmann et al., 2024). Findings of this study also contribute to the development of trust theories within HATs by unpacking the socio-emotional and teaming components of trust and revealing that they can indeed impact and shape human-autonomy collaboration. Our work represents a starting point for inductively building trust frameworks specific to HATs.
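To make the suggestion about detecting emotionally charged language in the team chat more concrete, the following is a minimal sketch of a lexicon-based frustration flag. It is an illustration only and not a system used in this study: the word list `FRUSTRATION_TERMS`, the `min_hits` threshold, the function names, and the example messages are all hypothetical, and a deployed system would likely need a validated lexicon or a trained classifier.

```python
import re
from dataclasses import dataclass

# Hypothetical mini-lexicon of frustration cues; not a validated instrument.
FRUSTRATION_TERMS = {
    "again", "frustrated", "frustrating", "annoying",
    "ugh", "seriously", "wrong", "mistake",
}


@dataclass
class Flag:
    sender: str
    message: str
    hits: list  # lexicon terms found in the message, in order of appearance


def tokenize(text):
    """Lowercase the text and split it into alphabetic tokens."""
    return re.findall(r"[a-z']+", text.lower())


def flag_frustration(messages, min_hits=2):
    """Return a Flag for each (sender, text) pair with at least `min_hits` cues."""
    flags = []
    for sender, text in messages:
        hits = [tok for tok in tokenize(text) if tok in FRUSTRATION_TERMS]
        if len(hits) >= min_hits:
            flags.append(Flag(sender, text, hits))
    return flags


if __name__ == "__main__":
    # Made-up chat messages for illustration.
    chat = [
        ("photographer", "Ugh, the same mistake again"),
        ("DEMPC", "Airspeed is fine for the next waypoint"),
    ]
    for flag in flag_frustration(chat):
        print(flag.sender, flag.hits)
```

A simple count threshold is used here because it is easy to inspect; it will miss sarcasm and context, which is one reason the text also points to psychophysiological signals as a complementary source.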

Acknowledgements

We acknowledge Christopher Myers, Beau Schelble, Yiwen Zhao, Anna Crofton, Kalia McManus, Edith Garner, Jessica Harley, Anya Polomis, Yawen Tan, Hruday Shah, Vibha Mohan, Elliot Ruble, Anmol More, Garrison Nelson, Shalom Suresh, Sakthi Thiyagarajan, Preethi Venkatesh, Stephanie Greenspan, Iman Makonjia, and Guadalupe Bustamante for their contributions to this research.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by Air Force Office of Scientific Research Award No. FA9550-21-1-0314 (Program Manager: Laura Steckman).