Abstract

Augmented reality (AR) technology has shown great promise for its ability to seamlessly integrate the real world with simulated environments. Commercially available headsets offer great functionality for tasks ranging from gaming to productivity. However, the main limitations of these headsets are that they are bulky, cause visual strain, and can induce motion sickness-like symptoms in some users while in mixed reality. Therefore, the objective of this study was to design prototype AR glasses that detect physical workload in real time using a machine learning algorithm. The glasses are made from low-cost, commercially available components and emphasize ergonomics while maintaining functionality. Preliminary results from our pilot study show that visual strain, bulkiness, and simulator sickness all decreased when using the prototype AR glasses as compared to the Meta Quest Pro.

Introduction

Previous studies have shown the viability of augmented reality glasses in several domains such as rehabilitation (Pereira et al., 2020), education (Khosravi et al., 2022), surgery (Condino et al., 2018), manufacturing (Kortekamp et al., 2019), and commercial use (Zhou et al., 2020). These studies often used commercially available headsets such as the Meta Quest or the Microsoft HoloLens 2. These headsets offer an array of on-board features ranging from eye and hand tracking to spatial mapping and large fields of view. Although these headsets function well, they have some limitations such as bulkiness (Endsley et al., 2017) and visual fatigue (Lee et al., 2021). The weight of the entire headset can cause strain on the neck after prolonged periods of use. Additionally, one’s eyes can get fatigued from looking at the screen within the headsets for extended periods. A symptom that stems from visual fatigue is simulator sickness (Vovk et al., 2018), which causes discomfort, nausea, and disorientation because of perceived discrepancies between the real world and the simulation. These side effects can limit the time an individual can spend with the device.

Limitations of Current Design

Previous studies and these use cases have helped highlight the design shortcomings of commercial headsets. The bulkiness of the headsets stems from the hardware that is used. To achieve high performance and fidelity, sufficiently powerful hardware is required. Furthermore, the physical design of most devices is a headset that rests on the user’s head and is held in place by a tightened strap at the back. This inherently puts weight on the user’s neck and shoulder muscles, and over time can cause fatigue. The Microsoft HoloLens 2 is tailored to be an AR headset that aids workflow, but the aforementioned bulkiness can make it hard to complete tasks for long periods of time (Flick et al., 2021). This narrows the scope of applicability to more static tasks.

The display technology for AR glasses is another factor that inhibits users. Some headsets display the view in front of the user through cameras that stream the video to the user in real time. This method of showing AR is a cause of simulator sickness, as the slight spatial discrepancies between the screen and reality cause discomfort and nausea. Furthermore, using a screen to display objects in front of the user can cause issues with depth of field. This is known as the vergence-accommodation conflict (Condino et al., 2020), and it becomes an issue when the user sees the object in 2D on the screen while the object is farther away in reality. The eye cannot accommodate the object both in 2D and 3D, resulting in visual fatigue.

Significance of Bio Signal Feedback

The human body releases signals to control and regulate processes and tasks. For this study, we are interested in electromyography (EMG) and electrocardiography (ECG). EMG signals have been used in studies ranging from gesture detection (Kim et al., 2008) to visual arm illusions (Aung & Al-Jumaily, 2014). ECG signals have been used to detect heart rate patterns (Nikan et al., 2017) and classify emotions (Peng, 2022). Studies combining the two have shown the capacity to understand the stress the body is under, both physically and mentally (Rijken et al., 2016). However, there is a research gap in integrating these bio signals with AR technology. Existing work is limited to proposing the use of EMG signals to interact with objects in augmented or virtual reality (VR) (Abrash, 2021) and creating guidelines for developing and testing biofeedback in VR environments (Silveira et al., 2023). A study that used AR glasses to reduce freezing of gait for individuals with Parkinson’s disease shows great promise for integrating biological indicators with AR (Janssen et al., 2017). Although this study used 3D capture technology instead of bio signal sensors, it shows the potential for AR and biofeedback.

There are many devices on the market that track the user’s bio signals passively, such as the Apple Watch or Fitbit. The issue with these is that the user has to look down at their wrist to obtain this data. Furthermore, a mobile app is often required to get the full summary of the data. With AR glasses, one would simply have to glance at the information in front of their eyes. This is beneficial for physically and mentally demanding tasks that prevent the user from readily accessing their wrist or phone (Klose et al., 2019; Tran et al., 2018). Additionally, the information can be presented in a more concise manner (Arregui et al., 2022).

Problem statement and objectives

The components of our prototype glasses would have to be lightweight to meet our ergonomic requirements. The glasses would need to be straightforward to use so that users from any background can interact with the technology. Cost is a non-ergonomic requirement that is also important to consider when developing these prototype glasses (Motti & Caine, 2014). Although this approach removes much of the functionality of commercial headsets, it is an ergonomics-centered solution, which is an aspect highly valued by users (Lu & Bowman, 2021).

Method

Components

This study began with a literature review of previous studies involving AR glasses development and design, as well as ECG and EMG signal processing. This paved the way for the components to be determined and sourced. One of the main elements of the glasses is the system that controls all the processes and data collection. For this, we went with the Arduino Nano RP2040 Connect. This board features both Wi-Fi and Bluetooth, making it ideal for the prototype, as Wi-Fi connectivity was crucial for our design. Additionally, the dual-core 32-bit Arm® Cortex®-M0+ is a more than capable processor for this project.

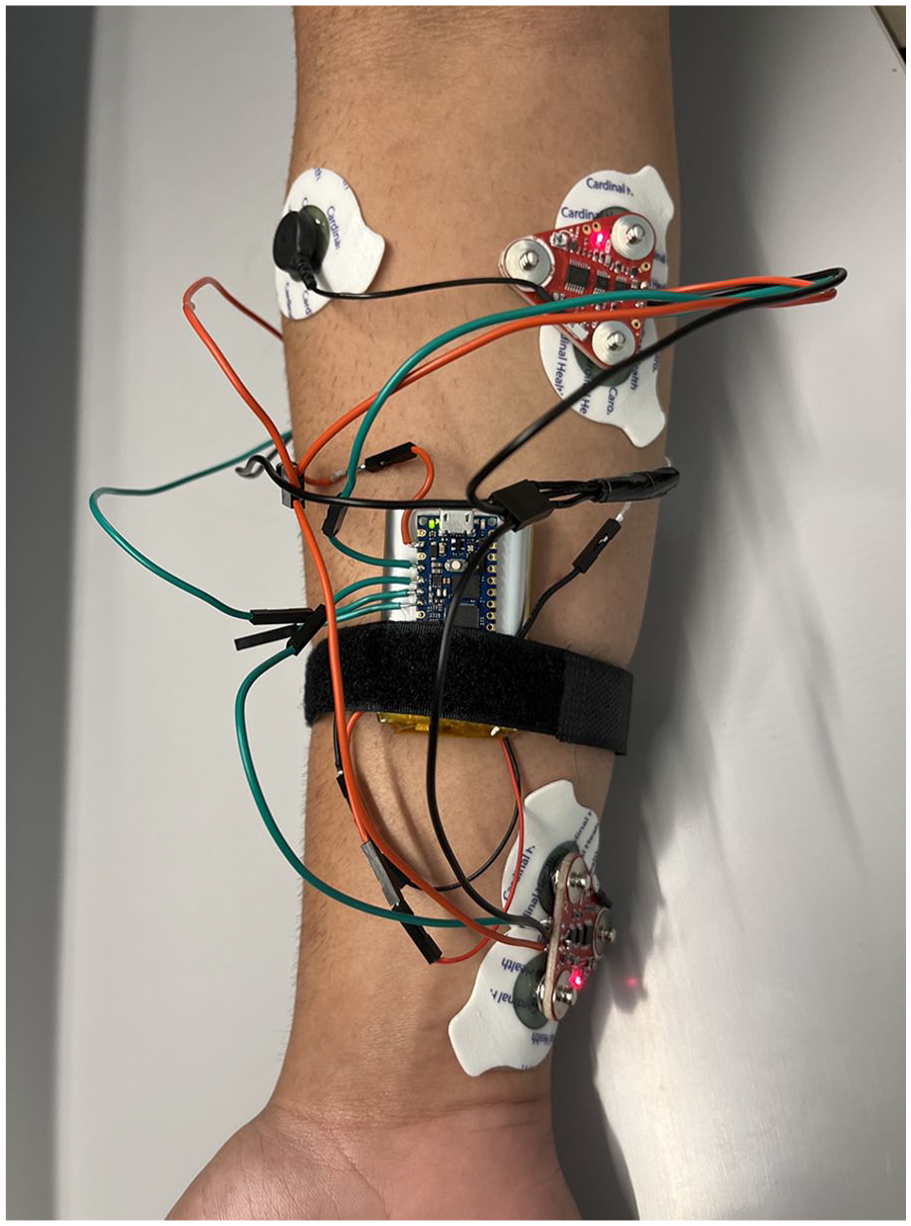

After the board was determined, the next crucial component was the sensors (Figure 1). Many commercially available EMG sensors are large and require electrodes to be worn on the target muscles. Additionally, they all have wires connecting the electrodes to the main board, which does all the signal processing. These elements would interfere with our goal of minimizing intrusion on the user’s body. Therefore, in this study, we went with the MyoWare 2.0 Muscle Sensor. It is an EMG sensor that does all the signal processing and collection on a small triangular board with a short cable for a reference electrode. It can output rectified, raw, or envelope data. We decided to use the envelope data because the sensor does some processing before sending it to our board. This limits the amount of filtering the Arduino has to do when collecting data. Most commercially available ECG sensors utilize photoplethysmography, a technique where light is used to detect changes in blood circulation. This technology is easy to implement but hard to perfect. The ones available from retailers are often cheaply built and do not have good quality LEDs and sensors. Due to this, we tested many sensors and went with the generic one that we were most satisfied with. It is important to note that neither sensor is medical grade.

Figure 1. Sensor placement.
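As a rough illustration of what an envelope signal is, the following minimal Python sketch rectifies a raw EMG trace and smooths it with a moving average. It is not the processing used in the prototype, where the envelope is produced on the sensor board itself; the function name `emg_envelope`, the window length, and the sample values are assumptions made for this example.

```python
from collections import deque


def emg_envelope(raw_samples, window=4):
    """Return a simple envelope: full-wave rectification, then a moving average.

    `raw_samples` is assumed to be zero-centred (baseline already removed).
    `window` is a hypothetical smoothing length in samples.
    """
    if window < 1:
        raise ValueError("window must be at least 1")
    recent = deque(maxlen=window)  # keeps only the last `window` rectified samples
    envelope = []
    for sample in raw_samples:
        recent.append(abs(sample))                  # rectify: keep the magnitude only
        envelope.append(sum(recent) / len(recent))  # average over the samples seen so far
    return envelope


# Made-up raw trace: quiet, then a burst of activity, then quiet again.
raw = [0.0, 0.1, -0.1, 0.8, -0.9, 1.0, -0.7, 0.1, 0.0, 0.0]
print(emg_envelope(raw))
```

The envelope rises during the burst and decays afterwards, which is why it is easier to threshold or classify than the oscillating raw signal.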

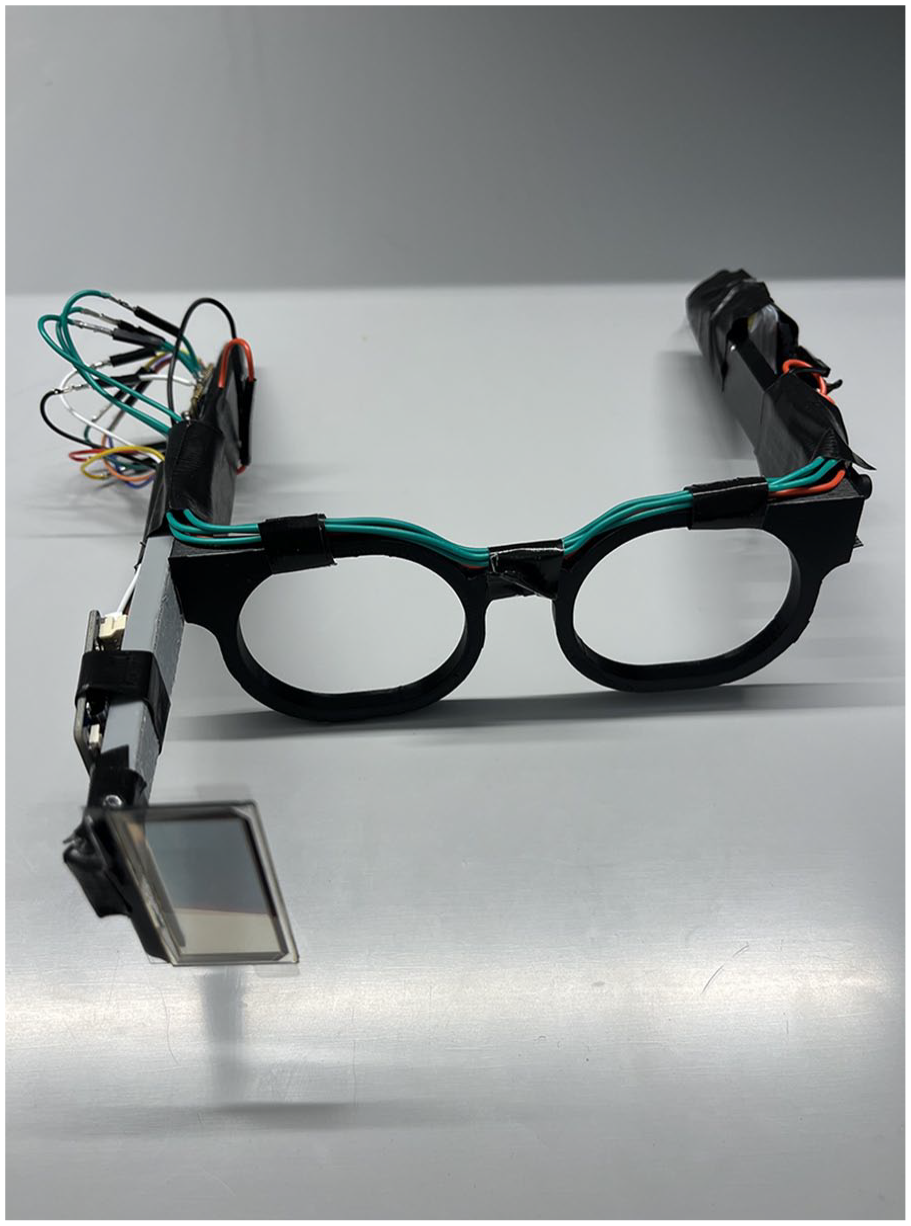

The next set of components makes up the frame (Figure 2). To combat many of the limitations mentioned previously, we opted for a transparent OLED display. This display is a small rectangle that shows all the information the user needs to see. For the glasses themselves, we designed a 3D model using Fusion 360 and 3D printed it using polylactic acid (PLA). This allowed us to customize every aspect to address the bulkiness of commercial headsets. Another benefit of 3D printing is that we were able to test designs quickly. All the components are powered by rechargeable lithium-ion polymer (LiPo) batteries.

Figure 2. Manufactured augmented-reality glasses.

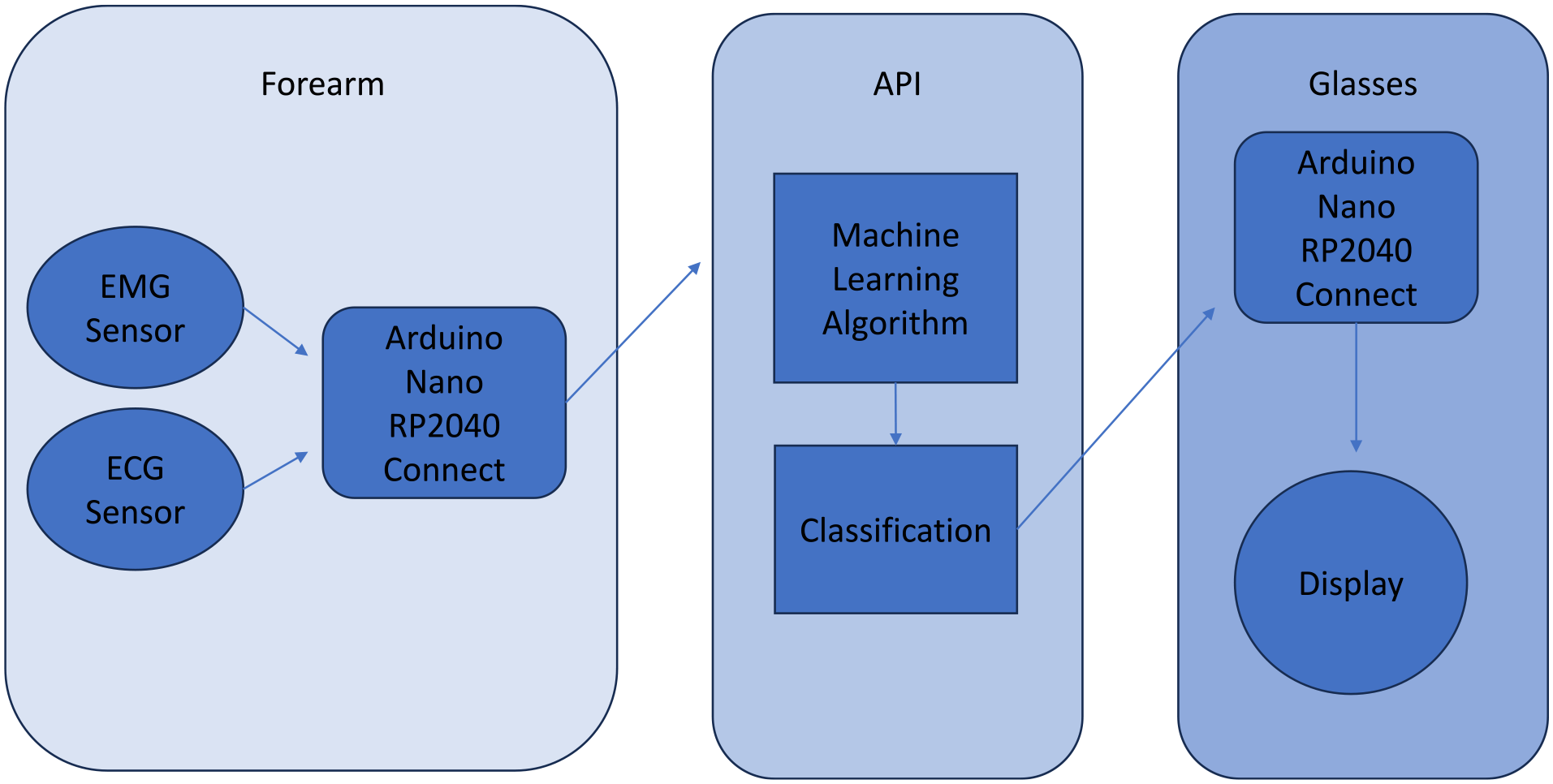

Design and Data Flow

The design of the glasses involves two major parts: data collection and display. For data collection, three EMG sensors are placed on the user’s right forearm, on the flexor carpi ulnaris, flexor digitorum, and extensor carpi radialis longus, respectively. The EMG signals from these muscles are easy to classify, and the muscles are prominent enough to find on most individuals. The heart rate sensor is placed on the user’s finger. All the data is sent to an Arduino Nano RP2040 on the forearm. From the Nano, it is sent to our custom-built API, where it is processed by our machine learning algorithm. The classified data is then sent to another Arduino Nano RP2040 on the frame of the glasses, and a message is displayed on the transparent OLED based on the physical stress classification (Figure 3). The glasses themselves have three buttons: two to turn the display on and off, and one to dismiss a message that may have been misclassified or is no longer relevant. If the display is turned off but the user is under physical stress, the display will turn back on.
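The data flow described above can be sketched in Python as follows. This is a simplified illustration under stated assumptions, not the study's implementation: the field names, the JSON payload format, the function names, and especially the fixed-threshold rule standing in for the trained machine learning model are all hypothetical.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class SensorPacket:
    """One reading sent from the forearm board (field names are hypothetical)."""
    emg_flexor_carpi_ulnaris: float
    emg_flexor_digitorum: float
    emg_extensor_carpi_radialis_longus: float
    heart_rate_bpm: float


def classify_workload(packet, emg_threshold=0.6, hr_threshold=120.0):
    """Stand-in for the trained model: a fixed-threshold rule.

    The thresholds are invented for illustration and carry no physiological meaning.
    """
    mean_emg = (
        packet.emg_flexor_carpi_ulnaris
        + packet.emg_flexor_digitorum
        + packet.emg_extensor_carpi_radialis_longus
    ) / 3
    if mean_emg >= emg_threshold and packet.heart_rate_bpm >= hr_threshold:
        return "high"
    return "normal"


def handle_request(body):
    """Simulate the API step: decode the packet, classify it, build the reply for the glasses."""
    packet = SensorPacket(**json.loads(body))
    label = classify_workload(packet)
    message = "High physical stress detected" if label == "high" else ""
    return json.dumps({"workload": label, "message": message})


# Forearm board -> API -> glasses, using made-up sensor values.
request_body = json.dumps(asdict(SensorPacket(0.8, 0.7, 0.9, 135.0)))
print(handle_request(request_body))
```

Keeping classification on the API side, as the design does, means the boards only need to serialize readings and render a short message.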

Figure 3. Data processing.
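The button and display behavior described in this section can be modeled as a small state machine. The Python sketch below is only an illustration of that logic; the class and method names are hypothetical, and leaving the display unchanged when no stress is detected is an assumption, since the text does not specify that case.

```python
class GlassesDisplay:
    """Toy model of the display behavior (all names are hypothetical)."""

    def __init__(self):
        self.is_on = True
        self.message = ""

    def press_on(self):
        self.is_on = True

    def press_off(self):
        self.is_on = False

    def press_dismiss(self):
        # Clear a message that was misclassified or is no longer relevant.
        self.message = ""

    def receive_classification(self, under_stress, message=""):
        if under_stress:
            # Physical stress overrides the off state so the warning is not missed.
            self.is_on = True
            self.message = message
        # Assumption: a non-stress result leaves the display state unchanged.

    def visible_text(self):
        return self.message if self.is_on else ""


display = GlassesDisplay()
display.press_off()
display.receive_classification(True, "High physical stress")
print(display.is_on, display.visible_text())
```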

Testing

To test the functionality of the glasses, the workload they impose, and the accuracy of our machine learning model, we conducted a pilot test involving three participants (age M = 18.33 years, SD = 0.57 years, two males and one female). Of the participants, one was familiar with AR/VR, another had slight familiarity with AR/VR, and one had minimal to no experience with AR/VR. The participants were instructed to complete two tasks twice: once with the Meta Quest Pro headset on and once with our prototype AR glasses.

The first task was to lift a box from one location to another and back, using both hands, five times. The box weighed about 6.80 kg and measured 43 cm by 33 cm by 25 cm. This tested the prototype glasses’ ability to gauge the user’s physical stress and showed how bulkiness impacts the user’s ability to move around. The second task was to write a short passage on a piece of printer paper using a pen. This tested the visual capabilities of both devices. These tasks were done in order, first with the prototype AR glasses and second with the Meta Quest Pro. Additionally, there were no time constraints on the tasks.

At the end of the tests, the participants filled out the NASA-TLX form (Hart & Staveland, 1988), one for the Meta Quest Pro and one for our prototype glasses. They also wrote down comments about their experience with both devices and rated how accurately they felt their physical workload was tracked by the system on a scale from 1 to 10, with 1 being very inaccurate and 10 being very accurate.
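For readers unfamiliar with NASA-TLX scoring, the sketch below shows one common way to summarize the form in Python: averaging each of the six subscales across participants, and computing an unweighted ("raw") overall score per form. The text does not state whether the raw or the weighted variant was used, so treating the score as unweighted is an assumption, and the ratings shown are placeholders rather than study data.

```python
from statistics import mean

# The six NASA-TLX subscales (Hart & Staveland, 1988).
SUBSCALES = (
    "mental_demand",
    "physical_demand",
    "temporal_demand",
    "performance",
    "effort",
    "frustration",
)


def raw_tlx(ratings):
    """Unweighted ("raw") NASA-TLX score: the mean of the six subscale ratings."""
    missing = [name for name in SUBSCALES if name not in ratings]
    if missing:
        raise ValueError(f"missing subscales: {missing}")
    return mean(ratings[name] for name in SUBSCALES)


def subscale_means(all_ratings):
    """Average each subscale across participants for one device."""
    return {name: mean(r[name] for r in all_ratings) for name in SUBSCALES}


# Placeholder form for one participant and one device (not the study's data).
example_form = dict(zip(SUBSCALES, [20, 40, 10, 30, 50, 30]))
print(raw_tlx(example_form))
print(subscale_means([example_form, example_form]))
```

Comparing per-subscale means between devices, rather than only the overall score, makes it possible to see a case where one device is better overall but worse on a single dimension such as frustration.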

Results

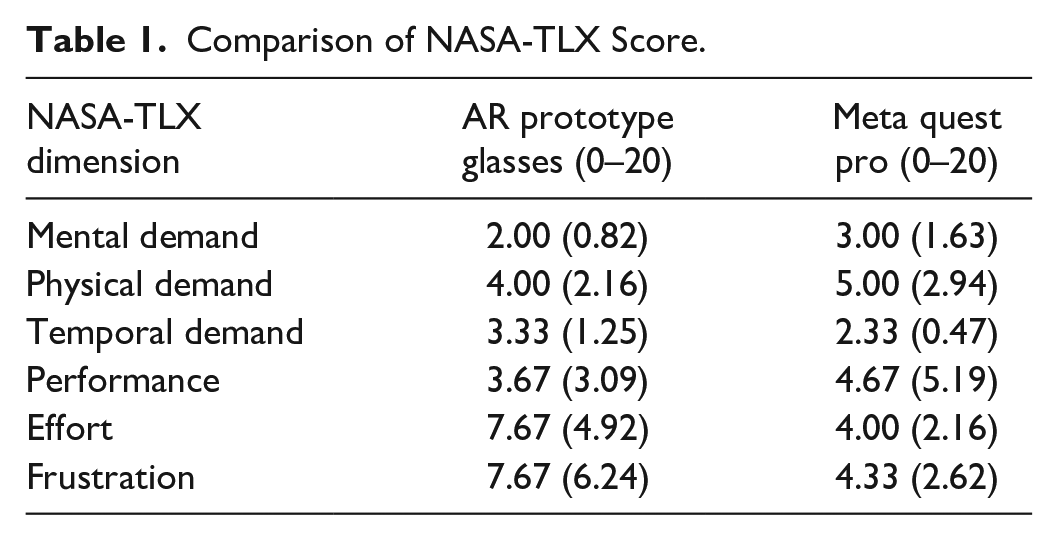

NASA-TLX

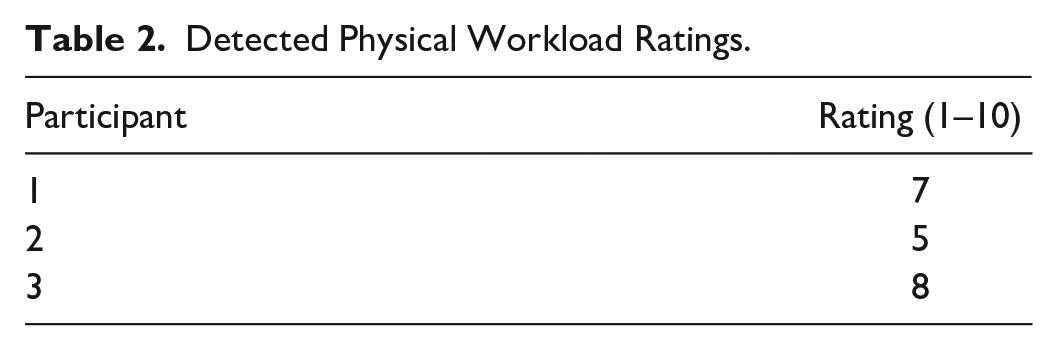

The survey results are summarized in Tables 1 and 2. The averages (Table 1) show that, overall, the prototype AR glasses performed better than the Meta Quest Pro in all categories except frustration. Furthermore, the results on workload detection (Table 2) show that the machine learning model has moderate accuracy. For reference, we considered ratings below five to indicate inaccurate classification and ratings of five and above to indicate medium to high accuracy. These results support our initial observations about commercially available headsets.

Table 1. Comparison of NASA-TLX Score.

Table 2. Detected Physical Workload Ratings.
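The interpretation rule for the 1 to 10 accuracy ratings can be written as a short Python function. The function name and the example ratings are hypothetical; only the cutoff at five comes from the text.

```python
from statistics import mean


def interpret_accuracy_rating(rating):
    """Map a 1-10 self-reported rating to the two bands used in the text."""
    if not 1 <= rating <= 10:
        raise ValueError("rating must be between 1 and 10")
    return "inaccurate" if rating < 5 else "medium to high accuracy"


# Hypothetical ratings, not the study's data.
example_ratings = [4, 6, 7]
for rating in example_ratings:
    print(rating, interpret_accuracy_rating(rating))
print("mean:", interpret_accuracy_rating(mean(example_ratings)))
```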

Discussion

Findings from Participant Comments

The participant comments revealed additional information about the devices. Some participants felt the AR glasses were slightly too big for their head, so during the tasks, especially the writing task, the glasses would slip slightly. This required them to readjust the fit every so often. We knew that fit was going to be a limitation when choosing the glasses design over the headset design. With the headset design, it is easy to adjust the fit by adjusting the strap that holds the headset on the user’s head. Having to readjust the glasses was likely a factor in why the frustration levels were high during the experiment.

Another factor that participants noticed was the visual discrepancy when using the Meta Quest Pro. In the box moving task, some participants saw visual stutter when walking with the box in hand. The participants noted that their lower peripheral vision saw the box intact, but the view through the headset showed stuttering. This led to some visual strain and simulator sickness in two of the participants. A similar phenomenon was observed during the writing task. If the participant was sitting at a comfortable height and writing, the writing on the paper was not clearly visible. This caused one participant to lean in closer to be able to see while they wrote out the passage. Other participants noted having to squint to read the words they wrote with the headset on.

Limitations

The results from the pilot tests supported our objectives of reducing the bulkiness and visual strain associated with commercial headsets. However, there are several places where the implementation and design can be improved to better the functionality and ergonomics.

The factor that most heavily limited this study was the bio signal sensors. Since both the EMG and ECG sensors were intended for hobbyist use, the data output was often inconsistent. For the EMG sensors, even the slightest movement in the power or data wires connecting the sensor to the Arduino Nano would produce inaccurate data. A similar issue occurred with the ECG sensor: if the sensor did not have consistent contact and pressure on the user’s finger, the data would lose accuracy. This inconsistency in data made training our machine learning algorithm difficult and led to overfitting.

For the testing portion of the study, it would have been more beneficial to test a larger set of individuals. Specifically, a wider range of AR/VR experience among participants would lead to more precise results and better differentiation in the frustration and effort aspects of our experiment. In the current data, the frustration levels of individual participants clearly reflect which ones had more AR/VR experience and which ones were unfamiliar with the technology. Additionally, a more diverse group of participants would be a truer test of our machine learning algorithm’s ability to classify workload on different individuals.

Future Work

The AR glasses showed great promise for integrating a more ergonomic design, but there is room for improvement in all aspects of the glasses. To solve the issue of inconsistent sensor data, the next logical step would be to use medical grade sensors. The caveat is a steep increase in price, as many medical grade EMG and ECG sensors are expensive. It is a tradeoff worth considering for the future development of this technology, as it would likely produce more consistent data.

From a design perspective, there are some potential improvements. To reduce the sizing issues, one possible solution is to create hot-swappable side pieces and frames. Furthermore, condensing all the wires, boards, buttons, and power delivery onto a single circuit board is another avenue worth pursuing for future iterations of the AR glasses.

This study has shown the viability of integrating AR and bio signals. More specifically, it has shown how useful it is to have information displayed directly in the user’s field of view without an app or separate device. Many use cases follow from this idea. For example, a firefighter in a burning building may be putting their own life at risk by trying to push forward when their body is no longer capable of doing so. In this situation, a pair of AR glasses that monitors physical stress and notifies the wearer in real time would be very useful. Furthermore, if the firefighters outside have access to the sensors on the firefighter inside, they can monitor their vitals in real time and advise their next move.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.