Abstract

This research investigates fatigue’s impact on arm gestures within augmented reality (AR) environments. By analyzing the collected data, we aim to understand the constraints and characteristics that affect arm gesture performance when individuals are fatigued. Our findings indicate that prolonged full-arm movement gestures under fatigue resulted in a decline in muscle strength within upper body segments. This decline led to a notable reduction in the accuracy of gesture detection in the AR environment, from an initial 97.7% to 75.9%. We also found that changes in torso movements can have a ripple effect on the upper arm and forearm regions. This knowledge will enable us to improve the precision and accuracy of our gesture detection algorithms, even in fatigue-related situations.

Introduction

Gesture-based interactions are gaining prominence across diverse domains such as gaming (Chezhiyan et al., 2022), augmented (Challenor et al., 2023)/virtual reality (Zhang et al., 2022), and human-computer interactions (Hosseini et al., 2023). However, more research needs to be conducted to understand the influence of fatigue on human body gestures and its implications for developing more effective and reliable applications. This knowledge gap necessitates further exploration to bridge the understanding between fatigue and gesture-based interactions, enabling improved and dependable applications in these fields.

Our focus is to explore the impact of individual fatigue on arm gestures in augmented reality (AR) environments. We gathered precise participant body movement data through real-time motion tracking sensors. The primary objective is to evaluate the recognition rate of two specific arm gestures and determine whether they can be accurately identified and captured, even in the presence of fatigue. Fatigue can lead to various changes in body posture, such as slouching, decreased muscular control, and reduced stability (Bazazan et al., 2019). These alterations can deviate from the anticipated or predefined postural patterns, making it difficult for an algorithm to identify and interpret the intended posture precisely. By studying the posture variations caused by fatigue, we can design gesture recognition algorithms that adapt, perform accurately, promote user comfort, and enhance the overall user experience in various applications. The outcome of this study will improve the reliability and effectiveness of gesture-based interactions.

In the realm of AR, the development of accurate and reliable gesture-based interaction holds immense significance for improving usability. Users must seamlessly engage with virtual and physical objects within an AR environment. To gain a comprehensive understanding of users’ actions during tasks, the integration of highly accurate gesture-based interaction becomes necessary. As a result, it is crucial to prioritize the development of precise and dependable gesture-based interaction among the various applications of AR. During the utilization of AR environments, users may undergo fatigue due to prolonged interaction, the weight of the AR device, or the cognitive load imposed by the tasks (Gabbard et al., 2019; Guo & Kim, 2021; Kalra & Karar, 2023; Kim et al., 2019). This fatigue can have a detrimental impact on the user’s capability to execute arm gestures accurately and efficiently, consequently resulting in errors or a decline in overall performance. Hence, in this study, we examined the alterations in arm gestures over time due to fatigue and explored how these changes impacted the accuracy of arm gesture recognition.

The experiment involved using the Microsoft HoloLens 2 headset to perform mirror movements of arm gestures, mimicking the actions demonstrated by a human avatar in the AR environment. The participants were required to continuously perform two sets of gestures for 15 min without any breaks, leading to fatigue. These specific gestures were chosen to avoid confusion or complexity that could hinder participants’ ability to follow along. To assess the accuracy of arm gestures under fatigue, real-time motion capture sensors were placed on the participants’ vertebrae and upper extremity regions. The experiment took place in a motion capture sensor lab, where digitally augmented content was overlaid onto the physical wall.

Methodology

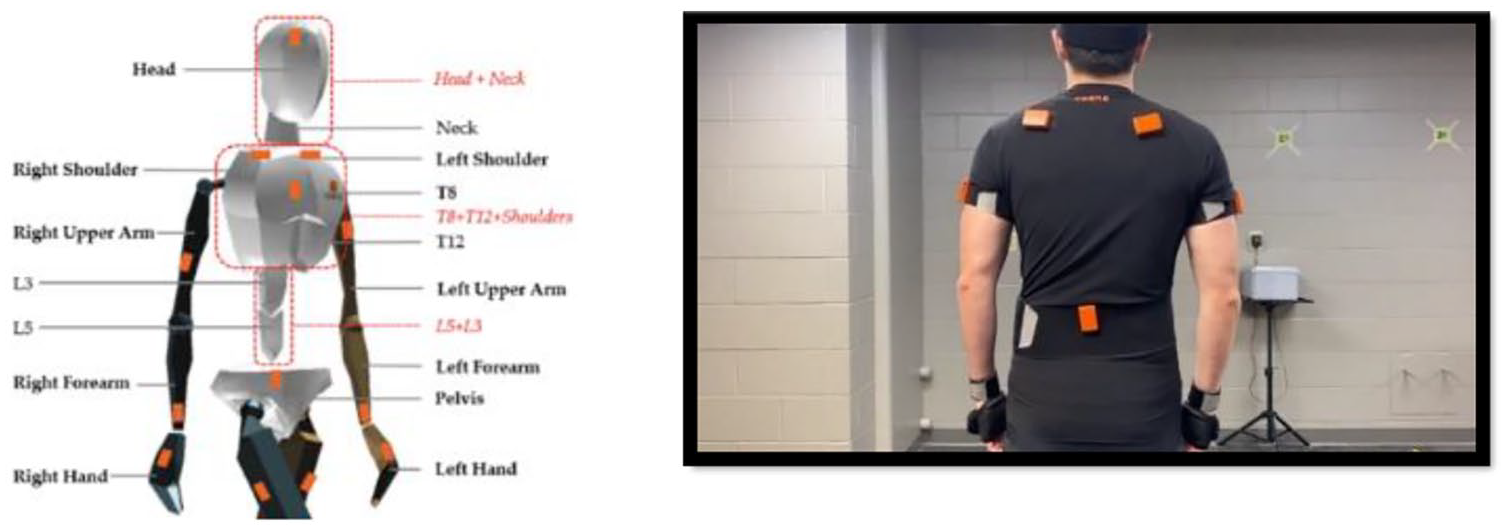

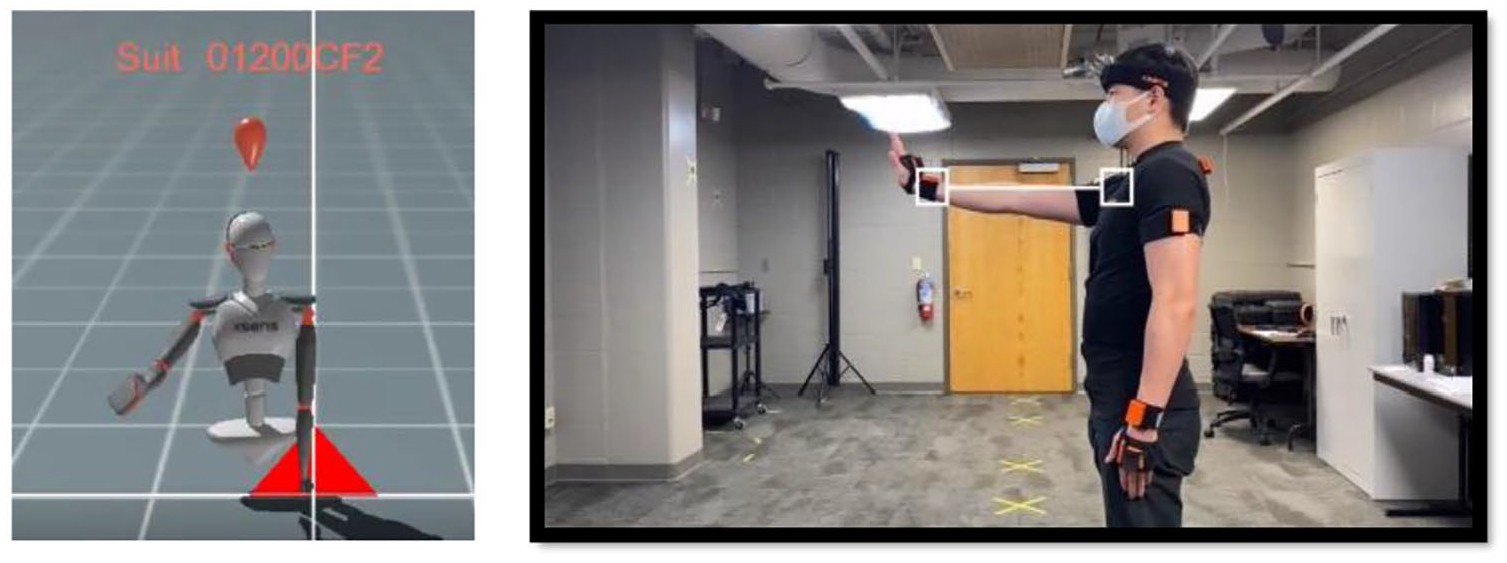

Building on our previous study (Yu et al., 2023), we developed a client program in C#, based on the API of the motion capture system, in which the AR scene is triggered upon receiving the position coordinates. A total of 25 participants aged between 18 and 25 took part in the study, with each participant performing the same task consecutively. Before starting the experiment, participants were asked to complete a questionnaire covering their age, gender, academic status, and prior immersive experiences with AR technology. Subsequently, an overview of the HoloLens device and Xsens motion capture sensors was provided, explaining the specific tasks to be performed during the experiment. Following this, a set of 11 Xsens motion capture sensors (head, sternum, pelvis, right shoulder, left shoulder, right upper arm, left upper arm, right lower arm, left lower arm, right hand, left hand) was affixed to the participant’s body. While attaching these sensors, careful attention was paid to their orientation. Moreover, it was crucial to ensure that the upper and lower arm sensors were positioned beneath the straps, facilitating more precise data capture. Given the participant’s rotational movements, securing the arm straps was advisable, especially for those wearing long sleeves. After that, a thorough verification process was mandatory to confirm the accurate attachment of all sensors to their designated positions (left/right/arm/hand/shoulder) and prevent potential misplacement. After the sensors were successfully detected, the participants were instructed to remain stationary for 3 s, followed by 13 s of walking. As the participant neared approximately 3 s, guidance was given to return to the initial position, stand still, and face the computer screen until the calibration concluded. Upon completion of calibration, the program prompted the participant to press “Enter” to commence the recording phase.
The participants were directed to execute the two designated gestures during the recording. They were trained to follow the procedure below:

a. The participant should be oriented toward the wall marked with numbers, as depicted in Figure 1.

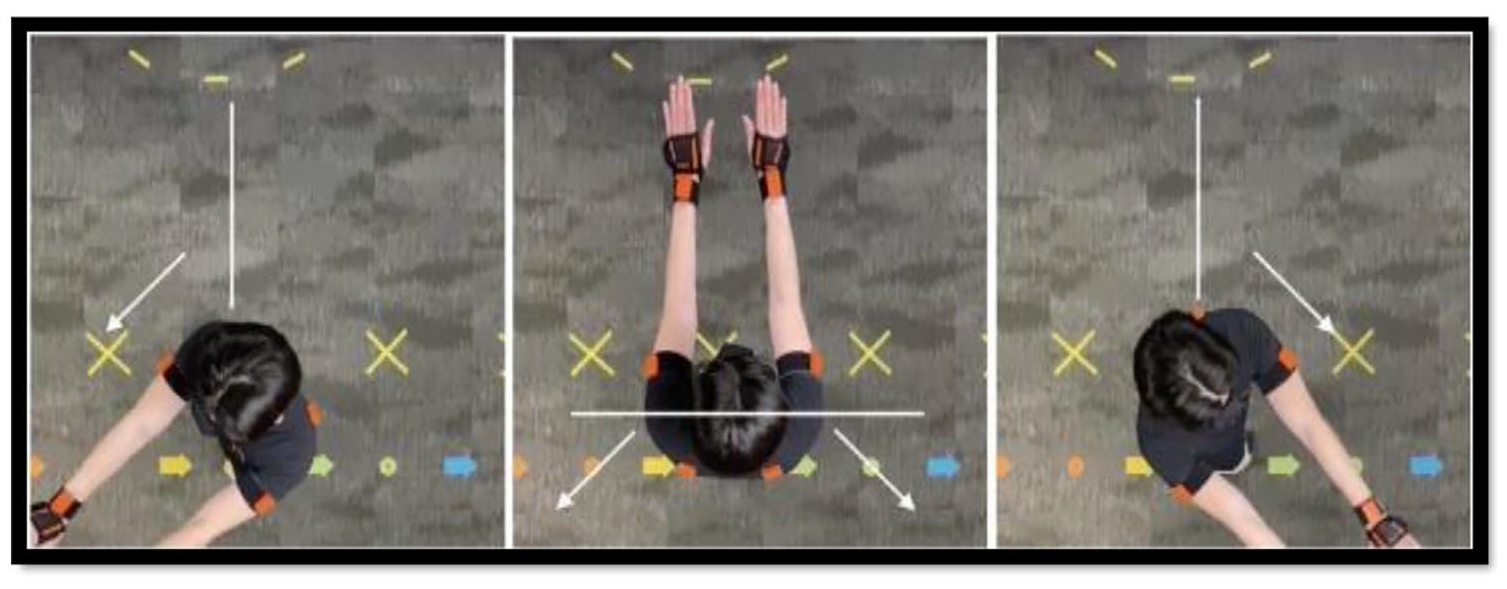

b. Both arms should be fully extended, maximizing their reach, as illustrated in Figure 2.

c. The height of the forearms must align with the sternum’s height.

d. Both arms should maintain proximity and avoid excessive spreading (refer to Figure 3).

e. Starting with both arms raised, the participant should rotate toward the right or left rear (as depicted in Figure 3).

Figure 1. Calibration process.

Figure 2. Initiating arm gesture.

Figure 3. Two designed arm movements.

Following this stage, we informed the participants that the gestures demonstrated by the virtual instructor involved mirrored movements. Emphasis was placed on avoiding incorrect rotational directions. Additionally, when the virtual instructor performed the two designated gestures (see Figure 3), the participants were expected to replicate them accurately as previously instructed. Subsequently, the participants were directed to focus on the numbered marker on the wall until the designated scene became visible. To access the HoloLens program, the MOCAP (Motion Capture) app was activated. After that, the AR environment displayed a human avatar, and the participants were prompted to imitate the avatar’s movements. The duration of each movement was chosen so that continuously performing the defined gestures over an extended period would induce fatigue.

Each participant action produced a log entry in the MOCAP app detailing the execution of the gesture and pinpointing when a specific gesture (right/left) was performed. The action interval was 6 s. However, due to factors such as latency, the time required to comprehend the action, and the time needed to complete the gesture, the recorded intervals could fluctuate. These delays differed for each participant and ranged from 0 to 3 s.

Results

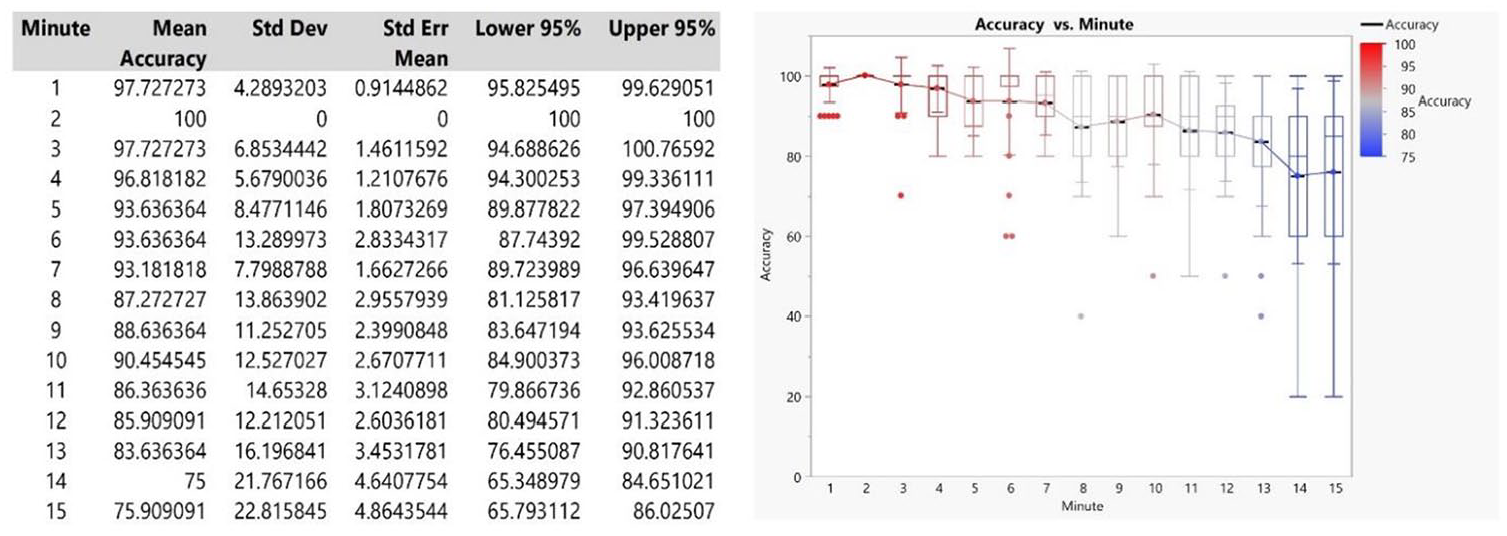

Upon completion of data collection, the next step was to analyze the miss count. The virtual human instructor within the HoloLens performed a fixed number of gestures, denoted as α. Presuming that a participant completed the full experiment, α is approximately 150 gestures. Our data analysis compares the timestamp of each gesture recorded by the gesture-detecting program against the corresponding timestamp documented by the HoloLens 2 device. A temporal difference of 0 to 3 s is treated as simultaneous gesture execution. The count of such synchronized gestures, referred to as “Recognized Gestures,” is denoted as γ. For a closer examination of the data, the 15-min experiment was divided into 15 one-minute segments. To examine the misses and compute the accuracy for each segment, the entire 15-min video was reviewed segment by segment. The accuracy within each segment was then computed using the formula accuracy = (γ / α) × 100%, with γ and α counted within that segment. The accuracy for each participant within each segment is shown in Figure 4.

Figure 4. Accuracy results for each minute.

A miss refers to an instance in which the virtual human instructor executed a gesture at a particular timestamp, yet the gesture-detecting program failed to register the participant’s corresponding execution at that same timestamp. We tabulated the count of these untriggered right/left gestures.
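The timestamp comparison and per-segment accuracy described above can be sketched as follows. This is a minimal illustration, not the study's analysis code: the function name, the input format (two lists of timestamps in seconds), and the rule of pairing each instructor cue with the first unused detection at or after it are assumptions, and the sketch ignores the left/right direction of each gesture.

```python
from bisect import bisect_left

TOLERANCE_S = 3.0   # a detection 0-3 s after the cue counts as recognized
SEGMENT_S = 60.0    # one-minute segments


def per_minute_accuracy(cue_times, detected_times, n_segments=15):
    """Return (gamma / alpha) * 100 for each one-minute segment.

    cue_times: seconds at which the virtual instructor performed a gesture.
    detected_times: seconds at which the detector logged the participant's gesture.
    """
    detected = sorted(detected_times)
    used = [False] * len(detected)
    alpha = [0] * n_segments   # gestures performed by the instructor
    gamma = [0] * n_segments   # gestures recognized within the tolerance

    for cue in sorted(cue_times):
        segment = int(cue // SEGMENT_S)
        if segment >= n_segments:
            continue
        alpha[segment] += 1
        # Find the first unused detection at or after the cue.
        i = bisect_left(detected, cue)
        while i < len(detected) and used[i]:
            i += 1
        if i < len(detected) and detected[i] - cue <= TOLERANCE_S:
            used[i] = True
            gamma[segment] += 1

    # A segment without cues has no defined accuracy.
    return [100.0 * g / a if a else None for g, a in zip(gamma, alpha)]
```

With a cue every 6 s, each one-minute segment contains 10 cues, which is consistent with roughly 150 gestures over 15 min. A segment's miss count is simply α minus γ for that segment.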

The accuracy percentage associated with each segment reflects the participants’ proficiency in executing the task during various time intervals. The results show a significant decline in accuracy spanning the 15-min duration. It implies that the participants encountered fatigue as they persisted in performing the assigned task. As previously mentioned, fatigue is described as a sensation of tiredness, weakness, or depletion that emerges after extended physical exertion. In this context, the continuous full-arm movements required by the task likely contributed to the onset of fatigue among the participants. The accuracy results show a meaningful negative correlation between accuracy and duration (

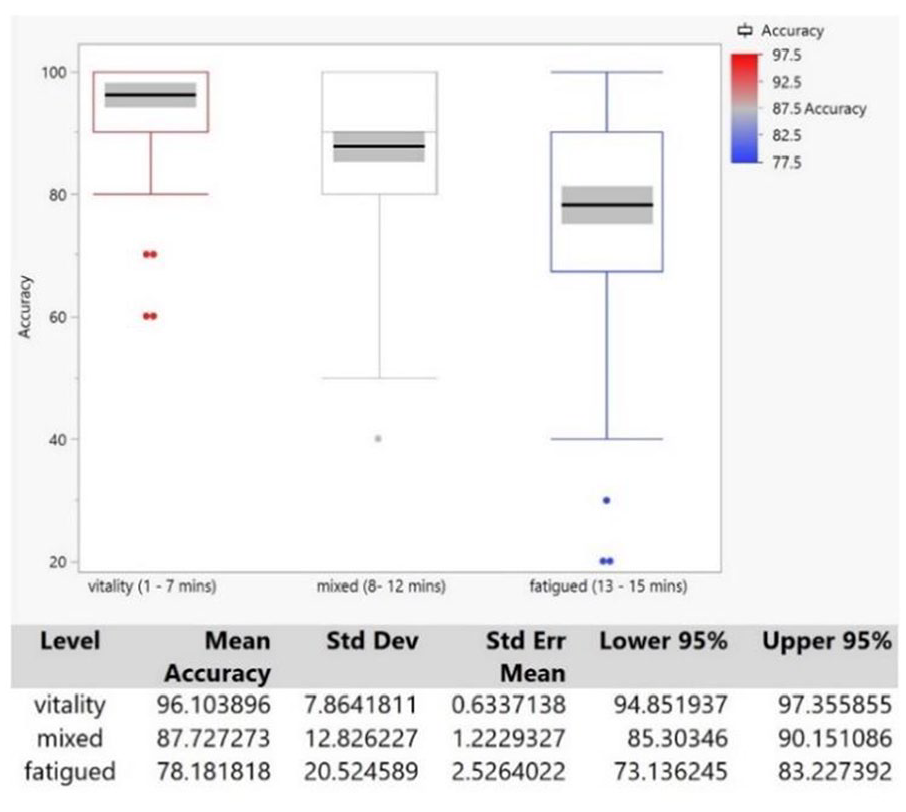

We also performed the analysis using the Fit Mixed Effects Model on a dataset of 22 participants. The outcomes indicate three distinct groups (see Figure 5). During the first 7 min, participants exhibited high accuracy in executing the assigned task, with an average accuracy of 96.1% (standard deviation = 7.86). Between the 8- and 12-min marks, however, accuracy declined substantially to 87.7%. Beyond the 13-min threshold, accuracy fell further, to below 80%.

Figure 5. Accuracy comparisons by group.
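As a rough illustration of the three-group comparison, the per-minute accuracies can be pooled into the phases named in the text and summarized with a mean and standard deviation. This sketch only computes descriptive statistics; it is not the mixed effects model used in the study, and the data layout and function name are assumptions.

```python
from statistics import mean, stdev

# Phase boundaries taken from the text: minutes 1-7, 8-12, and 13-15.
PHASES = {
    "1-7 min": range(1, 8),
    "8-12 min": range(8, 13),
    "13-15 min": range(13, 16),
}


def summarize_by_phase(accuracy_by_minute):
    """accuracy_by_minute: {participant_id: [15 per-minute accuracies in %]}.

    Returns {phase_name: (mean accuracy, standard deviation)} pooled over participants.
    """
    summary = {}
    for name, minutes in PHASES.items():
        values = [
            float(accuracies[minute - 1])
            for accuracies in accuracy_by_minute.values()
            for minute in minutes
        ]
        spread = stdev(values) if len(values) > 1 else 0.0
        summary[name] = (mean(values), spread)
    return summary
```

Pooling all participant-minutes treats observations as independent, which is exactly what a mixed effects model avoids by adding a per-participant random effect, so the sketch is suitable only for a first look at the data.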

To comprehend the effects of physical fatigue on posture, we categorized the entire dataset of segment positions into the following labels: (1) Performance of Left Full Arm Gesture, (2) Performance of Right Full Arm Gesture, and (3) Other Gestures. Based on this categorization, we extracted the maximum values of body sensors (neck, T8, left shoulder, right shoulder, left upper arm, right upper arm, left forearm, and right forearm) observed when the participants performed both left and right full arm movement gestures.
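The labeling and maximum-value extraction can be sketched as below. The frame format, the label strings, and the choice to take the maximum separately along each axis are assumptions made for illustration; the text does not specify how the maxima were computed.

```python
from collections import defaultdict

# Hypothetical label names for the three categories described in the text.
LEFT, RIGHT, OTHER = "left_full_arm", "right_full_arm", "other"


def max_positions(frames):
    """frames: iterable of (label, segment, (x, y, z)) tuples.

    Returns {(label, segment): [max_x, max_y, max_z]} for the two gesture labels.
    """
    maxima = defaultdict(lambda: [float("-inf")] * 3)
    for label, segment, position in frames:
        if label == OTHER:
            continue  # only the left and right full-arm gestures are analyzed
        current = maxima[(label, segment)]
        for axis, value in enumerate(position):
            current[axis] = max(current[axis], value)
    return dict(maxima)
```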

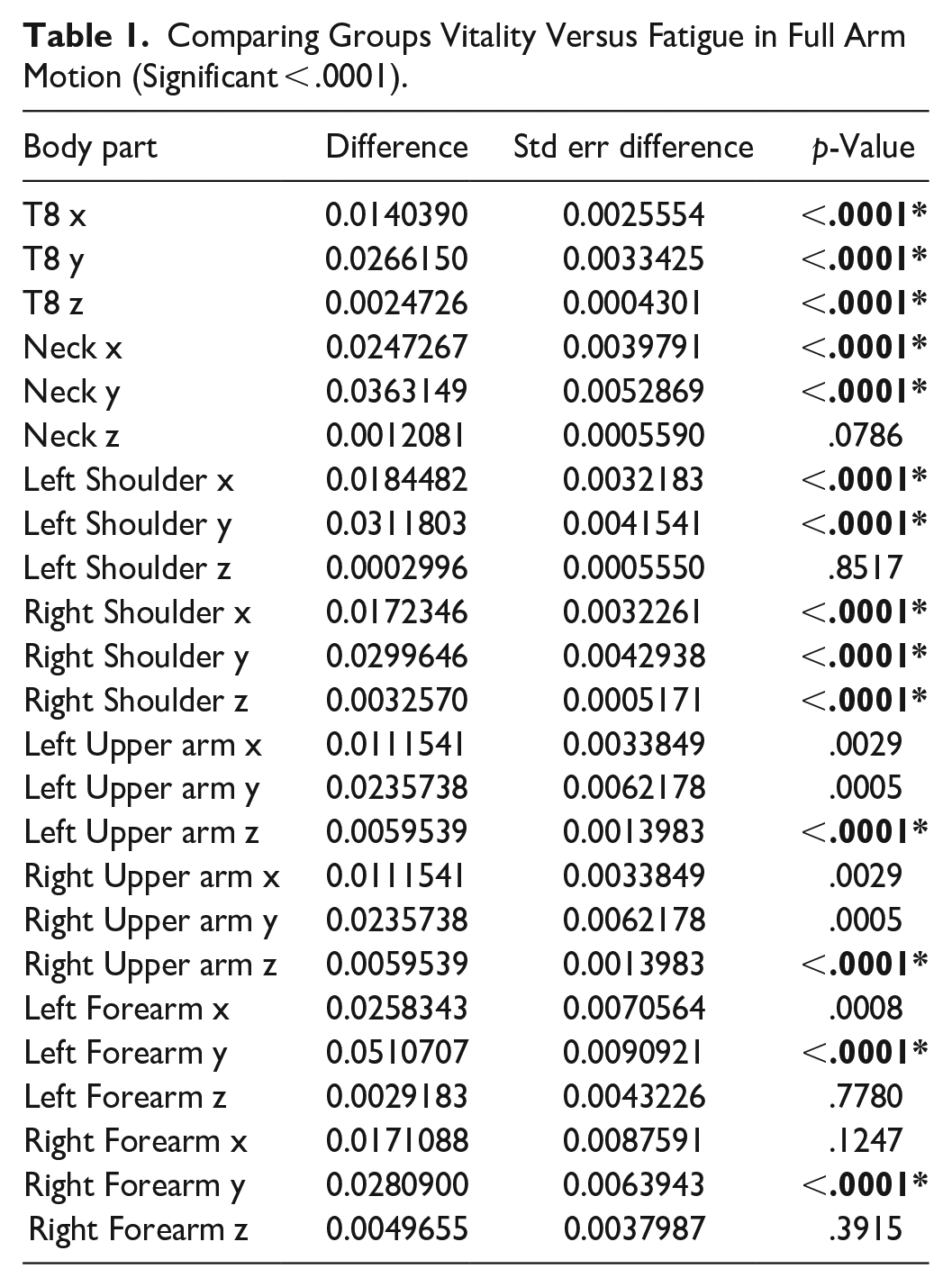

The outcomes indicated significant alterations (p < .0001) in segment positions in the x and y coordinates for sensors placed on the neck, T8, left shoulder, and right shoulder (see Table 1). These alterations were observed when participants performed the left and right full-arm movement gestures for longer than 7 min. In particular, a consistent pattern emerged across all these segments for the left full-arm movement gesture: decreases in the x- and z-axis positions, accompanied by an increase in the y-axis position. For the right full-arm movement gesture, the pattern across these segments was an increase in the x-axis position, with decreases in the y- and z-axis positions.

Table 1. Comparing Groups Vitality Versus Fatigue in Full Arm Motion (Significant < .0001).

Regarding the upper arms, executing the full-arm gesture led to substantial alterations in segment positions in the z coordinate among participants. As for the forearm sensors, a distinct decreasing trend was evident in the y-axis positions, although not in the x- and z-axis positions.
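A vitality-versus-fatigue comparison of this kind can be illustrated for a single segment axis as follows. The 7-min split follows the text; everything else is an assumption, including the function name, positions expressed in metres, and the use of a Welch t statistic in place of whatever test produced the reported p-values.

```python
from math import sqrt
from statistics import mean, variance

FATIGUE_ONSET_MIN = 7  # the text reports changes after more than 7 min


def axis_shift(samples):
    """samples: list of (minute, value) for one segment axis, value in metres.

    Returns (mean shift in cm, Welch t statistic) for fatigue versus vitality.
    Each group needs at least two samples with non-zero variance.
    """
    vital = [value for minute, value in samples if minute <= FATIGUE_ONSET_MIN]
    tired = [value for minute, value in samples if minute > FATIGUE_ONSET_MIN]
    difference = mean(tired) - mean(vital)
    standard_error = sqrt(variance(vital) / len(vital) + variance(tired) / len(tired))
    return difference * 100.0, difference / standard_error
```

The sign of the shift gives the direction of movement along the axis, which is how per-axis increases and decreases such as those reported above would show up in this sketch.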

Discussion

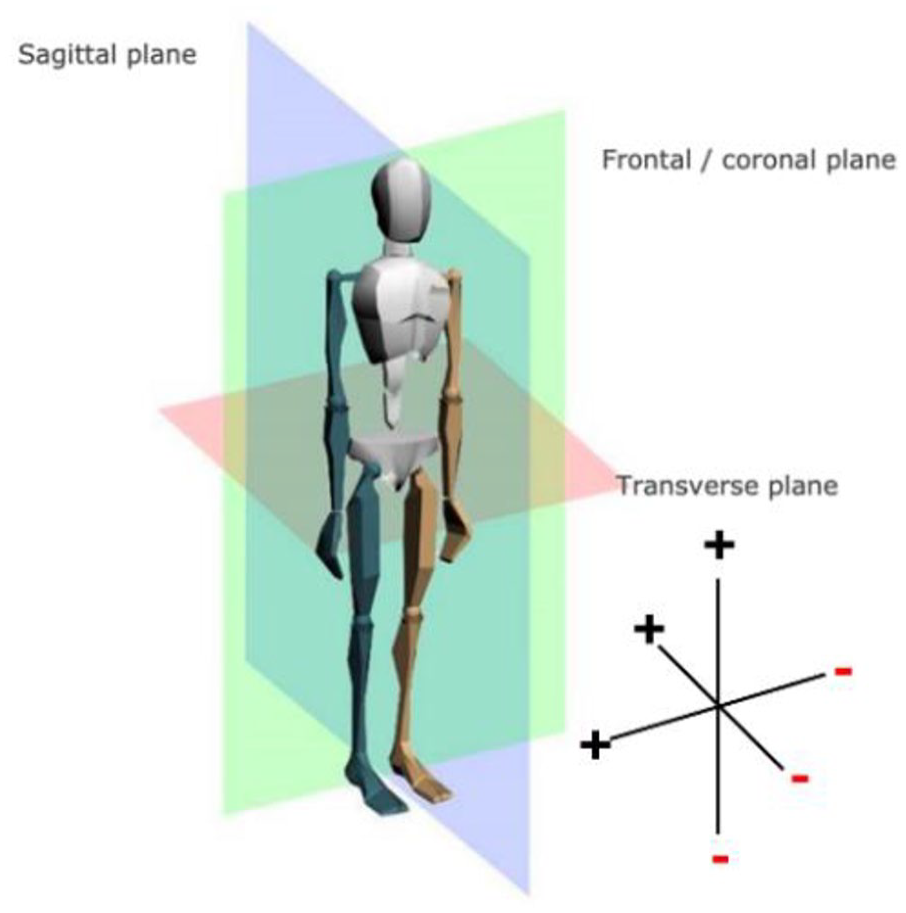

The present study aims to address how body posture changes in response to fatigue while performing full-arm gestures in the AR environment. The findings revealed a correlation between body posture and fatigue. More specifically, the ranges of motion of the body segments tracked by the neck, T8 (thoracic vertebra), and shoulder sensors show meaningful patterns for evaluating fatigue levels. The results indicate that physical fatigue significantly influenced the ranges of motion in these body parts when the participants performed a full-arm motion gesture. Referring to the directional cues shown in Figure 6, during the execution of the left full-arm gesture under conditions of physical fatigue, the neck, T8, and shoulder shifted 5 to 8 cm in the forward-right direction. Conversely, when the participants performed the right full-arm gesture, those body parts moved 3 to 6 cm in the left and backward direction. This suggests that fatigue changed the movement patterns of the neck and the back of the trunk. This was the main reason the precision of posture detection dropped significantly, from 97.7% to 75.9%.

Figure 6. Segment position directions.

As indicated by our findings, fatigue triggered a decrease in the muscle strength of upper body segments during participants’ full-arm movement gestures. This, in turn, made it harder for participants to sustain accurate and well-regulated body movements. As fatigue sets in, people exert more effort and engage in larger body motions to compensate for decreased muscle function and stability. In our study, using upper body motion sensor data, physical fatigue can be quantified by measuring changes in the range of motion over time. When the body is fatigued during full-arm gestures, its ability to produce and maintain force decreases, leading to changes in the range of motion of various body segments. The neck and the back of the trunk play a pivotal role in maintaining core stability. When fatigue affects these regions, it can impact overall posture and stability, potentially compromising the accuracy of gesture detection. Our data analysis indicates that alterations in torso movement can extend their influence to the upper arm and forearm regions. Consequently, tracking changes in the movement of the neck, T8, and shoulders can offer early insights into the impact of fatigue on users in AR environments.

Focusing exclusively on alterations in arm movement may overlook the broader context of how fatigue impacts the whole body’s movement and stability. Understanding the effects of fatigue on these specific areas can yield valuable insights for enhancing the precision of computer algorithms designed to interpret human gestures. To mitigate these challenges, it is crucial to consider the effects of fatigue during algorithm development. This may involve adapting the algorithm to account for fatigue-related postural changes, implementing real-time feedback mechanisms to help users maintain proper posture despite fatigue, or collecting data specific to fatigued conditions to train the algorithm for improved accuracy in such scenarios.
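The idea of quantifying physical fatigue through changes in the range of motion over time can be sketched as follows. This is a hypothetical illustration: the one-minute windows, the 7-min baseline, and the function names are assumptions rather than the study's procedure.

```python
def range_of_motion_by_minute(samples, n_minutes=15):
    """samples: list of (time_s, value) for one segment axis.

    Returns max - min of the values within each one-minute window (None if empty).
    """
    windows = [[] for _ in range(n_minutes)]
    for time_s, value in samples:
        index = int(time_s // 60)
        if 0 <= index < n_minutes:
            windows[index].append(value)
    return [max(w) - min(w) if w else None for w in windows]


def rom_change(rom, baseline_minutes=7):
    """Relative change of each minute's range of motion versus the early baseline.

    Assumes at least one non-empty window with a non-zero range in the baseline.
    """
    baseline_values = [r for r in rom[:baseline_minutes] if r is not None]
    baseline = sum(baseline_values) / len(baseline_values)
    return [None if r is None else (r - baseline) / baseline for r in rom]
```

Applied to the neck, T8, and shoulder sensors, a sustained drift of `rom_change` away from zero could serve as a simple early indicator of fatigue, under the assumptions stated above.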

Conclusion

Spending an extended duration on educational AR material can lead to profound fatigue, particularly when the content is protracted or demanding. The negative consequences of physical and mental exhaustion should not be overlooked. However, the intensity of this weariness can differ depending on multiple factors, including the specific AR technology employed, the complexity of the educational material, and the overall arrangement of the learning environment. Understanding the effects of physical fatigue during AR learning is crucial in assessing its impact on individuals in specific contexts. The findings of the current study reveal how physical fatigue can manifest as muscular strain, diminished range of motion, heightened muscle tension, and a general sense of bodily discomfort. By using motion capture technology, we can effectively monitor alterations in body movements and detect patterns that signify the onset of physical fatigue. Physical fatigue can influence the overall learning experience and performance of individuals engaged in extended periods of AR-based learning. By investigating the impact of fatigue on body segment movement during prolonged standing and AR-based learning, we gained insights into the relationship between fatigue and human performance. These insights can contribute to developing strategies to reduce fatigue and enhance performance in AR environments.

The current study analyzed upper body motion data based on the fatigue level of participants in the AR learning environment. Motion capture technology enables the monitoring of an individual’s movements, allowing for the identification of clear patterns indicative of physical fatigue, such as a restricted range of motion. Through the analysis of participant movements, researchers and AR design engineers can improve their understanding of how fatigue and other factors influence user engagement and performance. Gaining insights into the movement patterns associated with fatigue can contribute to a better understanding of the impact of fatigue on body posture and provide valuable knowledge for ergonomic design considerations in AR environments.

As a limitation, the HoloLens device itself may have contributed to the physical strain and fatigue experienced by participants. Future investigations should explore the impact of different AR device form factors and configurations on physical strain and fatigue, aiming for a more comprehensive understanding of their effects. Furthermore, upcoming research might integrate machine learning methods to create an advanced human gesture recognition system capable of discerning precise fatigue-related movement patterns and sequences within the AR learning environment. Lastly, future studies may consider enlarging the sample size and extending the duration of data collection to enhance statistical robustness and enable more precise pattern identification. With a larger sample and a longer observation period, it becomes feasible to capture a broader array of movement patterns and to discern nuanced variations associated with fatigue.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research is supported by NSF IIS-2202108.