Abstract

The Department of Defense emphasizes digital engineering using Model-Based Systems Engineering (MBSE), which includes models based on the Systems Modeling Language (SysML). Analysis of system impacts on human operators and their performance typically occurs through non-MBSE approaches. One current process evaluates human performance and workload using the Improved Performance Research Integration Tool (IMPRINT), a discrete event simulation tool. IMPRINT is primarily a standalone tool with limited built-in functionality for integrating with MBSE tools. This research develops a generalizable and updateable human performance modeling architecture that combines SysML-based model development with a new To-Be process. The To-Be process creates traceability from the SysML-based model to IMPRINT by using a custom Excel tool that organizes data from the model into a format IMPRINT can import. Comparisons of the current and To-Be processes showed that the To-Be process supports alternative model development with minimal manual rework by the modeler while maintaining the SysML-based model as the authoritative source of truth.

Keywords

Introduction

The United States Department of Defense (DOD) seeks to evaluate how future system modifications affect human operators and their performance, in order to understand how these modifications affect mission effectiveness. This need is not limited to the DOD. Commercial applications also exist, such as understanding how automation in cars affects driver workload (Stapel et al., 2019).

System modifications include the introduction of new equipment or features into an existing system. The DOD’s acquisition process focuses on acquiring new systems and system modifications to improve mission performance. To modernize and guide the acquisition process, the DOD released the Digital Engineering Strategy in 2018 and the Digital Engineering DoD Instruction in 2023. Both documents require the adoption of digital engineering practices through model-based systems engineering, known as MBSE (Office of the Deputy Assistant Secretary of Defense for Systems Engineering, 2018; Office of the Under Secretary of Defense for Research and Engineering, 2023). DoD Instruction 5000.95, which requires the consideration of Human Systems Integration (HSI), explicitly cites the “use of human modelling and simulation” as a required HSI activity (Office of the Under Secretary of Defense for Research and Engineering, 2022, p. 7). Together, these documents highlight the need to integrate human performance modeling with MBSE.

To provide system designers the ability to anticipate the effect of technology changes on human and system performance, this research focused on development of a generalizable human performance modeling architecture, as shown in Figure 1, that integrates MBSE and traditional human performance modeling using a discrete event simulation (DES) tool. The model, when documented in an MBSE tool, serves as an authoritative source of truth to support system design and acquisition, a key tenet of the Digital Engineering Strategy (Office of the Deputy Assistant Secretary of Defense for Systems Engineering, 2018).

Figure 1. Human performance modeling architecture.

A modeling architecture that spans different tools, remains grounded in the MBSE model, and provides traceability across those tools ensures a well-documented approach to model maintenance. This research investigates how a stakeholder should model a complex mission, first in an MBSE tool and then in a DES tool. The modeling aims to accurately represent the complexity of a mission with multiple operators and ongoing tasks. The research also explores how to configure the model so that additional tasks, operators, and resources can be incorporated into the baseline model, creating an alternative model that represents additional real-world workload impacts during a complex mission without substantially repeating the modeling effort. Finally, a principal aspect of this research is understanding the requirements for integrating MBSE tools with the DES tool.

Cognitive workload can serve as a response variable for human performance models, and this research uses the HC-130J aircraft and its four standard aircrew members as a case study. The model must therefore capture the cognitive load of each operator during each task within the task network that the aircrew performs in the mission of interest. One current process for creating a workload analysis model uses the DES software tool named the Improved Performance Research Integration Tool (IMPRINT). The United States Army and Air Force have used IMPRINT to develop models for years (Mitchell, 2000). The United States Army supported development of this tool, and other DOD organizations have since applied it. IMPRINT permits modelers to apply workload estimates to represent task sharing, shedding, or delay, which facilitates analysis of the impact of human limitations on human-system performance. For the purpose of this research, this process is called the As-Is process. This process does not require the use of MBSE.

One MBSE tool, CATIA Magic Systems of Systems Architect (CATIA), is increasingly used by modelers to describe and document systems, including systems with human operators. IMPRINT currently has limited built-in functionality for data integration with MBSE tools such as CATIA. Integrating DES tools with MBSE tools could reduce the time and effort needed to model and assess human performance early in the system development lifecycle. The process developed in this research to integrate IMPRINT and CATIA, called the To-Be process, required a custom-built Excel tool to organize the task network data from CATIA into a format that IMPRINT can import.

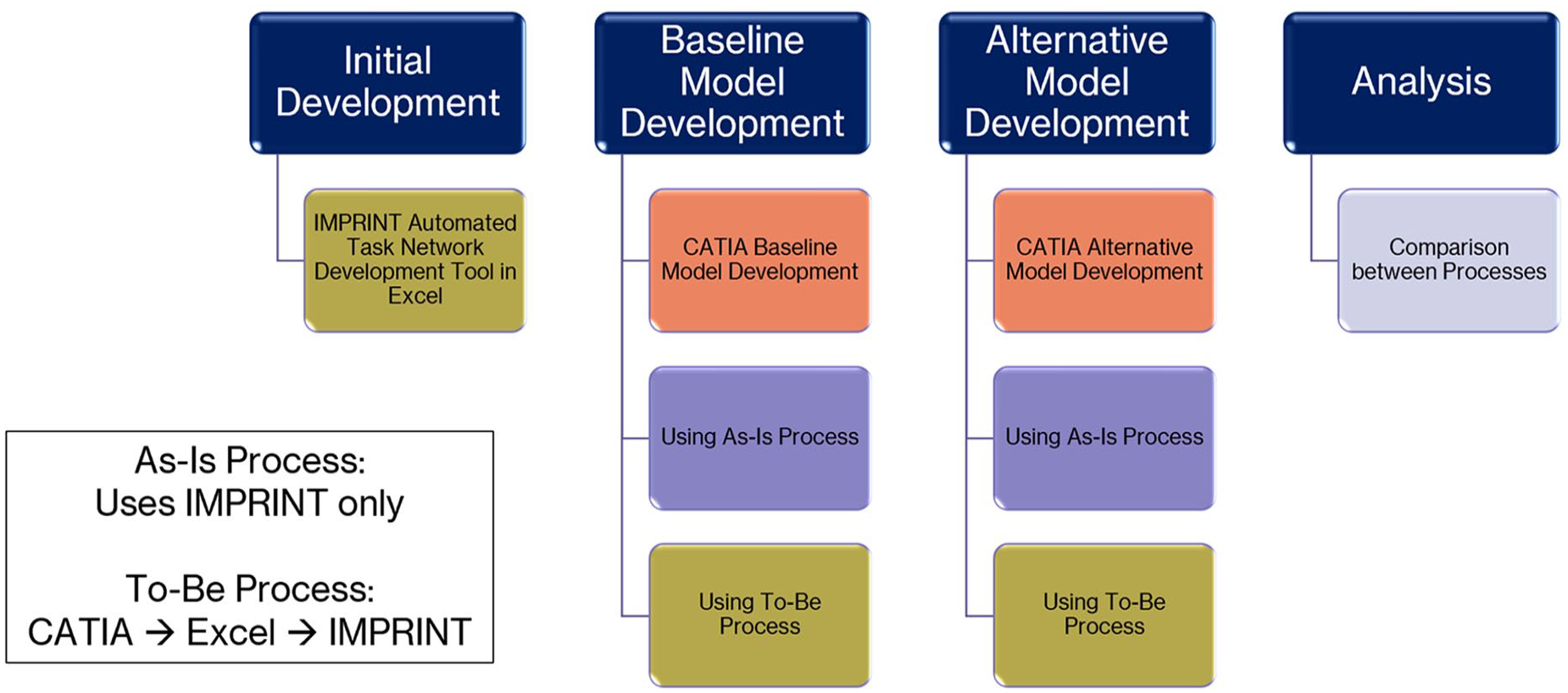

This research was a proof-of-concept demonstration and consisted of seven procedures: (1) model development in CATIA, (2) development of the custom Excel tool, (3) development of the baseline model using the As-Is process, (4) development of the baseline model using the To-Be process, (5) representation of new technology through updates to the CATIA model, (6) development of the alternative model using the As-Is process, and (7) development of the alternative model using the To-Be process. The analysis includes comparisons of the two processes for both the baseline and alternative model development, as well as analysis of the CATIA model development.

Method

The design of this research method stems from previous work executed for the United States Air Force on HC-130J mission workload analysis. The methodology includes two processes. The As-Is process relies solely on IMPRINT to evaluate workload. The To-Be process uses CATIA, Microsoft Excel, and IMPRINT. IMPRINT currently supports import of an activity diagram from CATIA. However, the imported diagram requires significant manual rework in IMPRINT to become readable and to form an executable model that runs a simulation. Because of this inefficiency, the To-Be process seeks to provide a streamlined approach to creating an editable model. The To-Be process, combined with model development in CATIA, comprises the human performance modeling architecture, as shown in Figure 1.

The To-Be process uses a function-to-task structure because of the format of the available data and because this structure is an established way to organize task networks. Aldrich, Craddock, McCracken & Szabo developed the foundational methodology for workload prediction using task analysis and the grouping of tasks into functions within task networks, which Bierbaum & Szabo used to further develop baseline models for workload prediction (Bierbaum et al., 1989). Another benefit of the function-to-task structure is that it allows the modeler to update only the functions and tasks in the baseline CATIA model that change. IMPRINT uses the task network structure and supports resource-interface pairs as well as the allocation of values on the Visual Auditory Cognitive Psychomotor (VACP) scale.

The data set used for the HC-130J mission includes a task list that organizes the functions and tasks in sequence and includes task times and cognitive load values for each task, the aircrew member performing each task, and the equipment required for each task. Applying the human performance modeling architecture to other systems will require creating task lists from which to build a usable task network. This research requires the use of CATIA and IMPRINT as the modeling tools, with Excel as an intermediary tool. However, the human performance modeling architecture is conceptually applicable to other MBSE and DES tools.
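To make the function-to-task structure and the task list fields concrete, the sketch below models one function as an ordered list of tasks, each carrying a task time, a cognitive load value, an assigned crew member, and required equipment. It is a minimal Python illustration under stated assumptions, not the CATIA or IMPRINT representation used in this research: the class and field names, the seconds unit, and the values are hypothetical, and only a single cognitive load value is stored (as in the data set) rather than separate VACP channel values.

```python
from dataclasses import dataclass, field


@dataclass
class Task:
    """One row of a task list (field names are illustrative assumptions)."""
    name: str
    duration_s: float        # task time; seconds assumed for illustration
    cognitive_load: float    # cognitive load value for the task
    operator: str            # aircrew member assigned to the task
    equipment: list[str] = field(default_factory=list)  # equipment required


@dataclass
class Function:
    """A function owns an ordered sequence of tasks (function-to-task structure)."""
    name: str
    tasks: list[Task] = field(default_factory=list)

    def total_duration(self) -> float:
        # With tasks executed in sequence, a function lasts as long as its tasks combined.
        return sum(t.duration_s for t in self.tasks)

    def operators(self) -> set[str]:
        # Distinct crew members who perform at least one task in this function.
        return {t.operator for t in self.tasks}


# Illustrative values only; they are not taken from the HC-130J data set.
taxi = Function("Taxi", [
    Task("Request taxi clearance", 15.0, 4.6, "Pilot", ["Radio"]),
    Task("Monitor engine instruments", 30.0, 3.7, "Copilot", ["Engine display"]),
])
print(taxi.total_duration(), sorted(taxi.operators()))  # 45.0 ['Copilot', 'Pilot']
```

Because each task lives under exactly one function, an update to one task touches only that function's list, which mirrors the benefit of the structure described above.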

Using Excel as the intermediary tool to organize the data into a format that IMPRINT can import assumes that other MBSE tools can export to Excel files. As long as a tool can export tables of function and task data, the Excel tool can import the data and organize it as a task network for import into IMPRINT.
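The core job of the Excel tool, grouping exported function and task rows into a sequenced task network, can be sketched in a few lines. The actual tool is written in VBA; the Python below is only an analogue under assumptions: the CSV column names, the `Order` field, and the hierarchical `1.2`-style IDs are hypothetical and are not IMPRINT's import schema.

```python
import csv
import io

# Hypothetical export of a generic table for tasks; column names are assumptions.
EXPORTED_TASKS = """Function,Task,Order,Duration,CognitiveLoad,Operator
Taxi,Request taxi clearance,1,15,4.6,Pilot
Taxi,Monitor engine instruments,2,30,3.7,Copilot
Takeoff,Set takeoff power,1,10,5.3,Pilot
"""


def build_task_network(csv_text, function_order):
    """Group exported task rows under their functions and assign hierarchical IDs.

    function_order gives the mission sequence of functions (for example, taken
    from the exported function table). Every task's function must appear in it.
    """
    by_function = {name: [] for name in function_order}
    for row in csv.DictReader(io.StringIO(csv_text)):
        by_function[row["Function"]].append(row)

    network = []
    for f_idx, f_name in enumerate(function_order, start=1):
        # Within a function, tasks follow their recorded order.
        tasks = sorted(by_function[f_name], key=lambda r: int(r["Order"]))
        for t_idx, row in enumerate(tasks, start=1):
            network.append({
                "id": f"{f_idx}.{t_idx}",
                "function": f_name,
                "task": row["Task"],
                "duration": float(row["Duration"]),
                "cognitive_load": float(row["CognitiveLoad"]),
                "operator": row["Operator"],
            })
    return network


for r in build_task_network(EXPORTED_TASKS, ["Taxi", "Takeoff"]):
    print(r["id"], r["task"])
# 1.1 Request taxi clearance
# 1.2 Monitor engine instruments
# 2.1 Set takeoff power
```

Any MBSE tool whose export can be reduced to rows like these could feed the same transformation, which is the portability argument made in the text.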

One assumption made in developing the process as a proof-of-concept is that the crew members execute the tasks in sequence. In practice, tasks may occur in parallel, repeat, or be initiated but not fully executed. Under this assumption, the network paths in IMPRINT connect in series, and the modeler can adjust the IMPRINT model afterward to support alternative arrangements.
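Under the sequential-execution assumption, wiring the network reduces to linking each task to the next task in the same function, and each function to the next function. A minimal sketch, assuming rows arrive in mission order with hypothetical `id` and `function` keys; parallel, repeated, or abandoned tasks are exactly what this sketch cannot represent, which is why manual adjustment in IMPRINT remains necessary for such cases.

```python
def series_paths(network):
    """Link functions, and tasks within each function, in series.

    `network` is a list of {"id", "function"} rows already in mission order,
    which is the sequential-execution assumption. Returns
    (function_edges, task_edges) as lists of (from, to) pairs.
    """
    functions = []      # functions in first-appearance order
    last_task = {}      # function -> most recent task ID seen
    task_edges = []
    for row in network:
        fn, task_id = row["function"], row["id"]
        if fn in last_task:
            task_edges.append((last_task[fn], task_id))
        else:
            functions.append(fn)
        last_task[fn] = task_id
    function_edges = list(zip(functions, functions[1:]))
    return function_edges, task_edges


network = [
    {"id": "1.1", "function": "Taxi"},
    {"id": "1.2", "function": "Taxi"},
    {"id": "1.3", "function": "Taxi"},
    {"id": "2.1", "function": "Takeoff"},
]
function_edges, task_edges = series_paths(network)
print(function_edges)  # [('Taxi', 'Takeoff')]
print(task_edges)      # [('1.1', '1.2'), ('1.2', '1.3')]
```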

As shown in Figure 2, the research overview includes seven procedures and a comparison of the processes. Construction of separate models using both the As-Is and To-Be processes occurs for the baseline system representation and the alternative system representation.

Figure 2. Research overview.

Initial Development of the Excel Tool

Development of the Excel tool included writing code in Visual Basic for Applications (VBA) to create an Excel workbook that automates development of a task network in the format that IMPRINT accepts.
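As a rough analogue of the workbook's output step, the sketch below writes task-network rows to CSV text that a spreadsheet can open. The column layout is a hypothetical placeholder; the VBA tool's actual output follows IMPRINT's import format, which is not reproduced here.

```python
import csv
import io

# Hypothetical column layout; not IMPRINT's actual import template.
COLUMNS = ["ID", "Function", "Task", "Duration", "CognitiveLoad", "Operator"]


def write_import_sheet(network):
    """Serialize task-network rows (dicts keyed by COLUMNS) to CSV text."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=COLUMNS, lineterminator="\n")
    writer.writeheader()
    writer.writerows(network)
    return buffer.getvalue()


rows = [
    {"ID": "1.1", "Function": "Taxi", "Task": "Request taxi clearance",
     "Duration": 15, "CognitiveLoad": 4.6, "Operator": "Pilot"},
]
print(write_import_sheet(rows), end="")
# ID,Function,Task,Duration,CognitiveLoad,Operator
# 1.1,Taxi,Request taxi clearance,15,4.6,Pilot
```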

CATIA Baseline Model Development

Development of the model representing the system functions and tasks, as well as system operators and resources, occurs in CATIA. This procedure included modeling 51 functions and 112 tasks as the baseline model, based on the data set’s task list. The model includes allocation of each task to operators and to interface technologies.

As-Is Process for Baseline Model Development

The As-Is process involves developing an IMPRINT model using the same tasks as in the To-Be process. This process takes place entirely within IMPRINT: the modeler manually builds the task network and then runs the simulation to generate workload results.

To-Be Process for Baseline Model Development

This process involves developing a separate model with the same data used in the As-Is process. The modeler exports the data from CATIA, uses the Excel tool to automate task network development, and uploads the resulting worksheet into IMPRINT to generate a task network. The modeler then edits the task network as needed; because of limitations in IMPRINT’s data import functionality, workload demands and operator assignments must be copied and pasted manually into the IMPRINT model. Finally, the simulation is executed.

Modifications to the System

To represent modifications to the system, eight additions (one new function and seven new tasks) from the data set’s task list were made to the model. Blocks representing new equipment, along with the new function and tasks, are added to the baseline CATIA model. Once changed, this model represents an alternative model, which is then simulated.

As-Is Process for Alternative Model Development

The As-Is process for developing the alternative model involves manually updating the baseline IMPRINT model.

To-Be Process for Alternative Model Development

The To-Be process for developing the alternative model includes exporting the generic tables in CATIA filtered for the model modifications, using the Excel tool to organize the data into a task network, importing the task network into IMPRINT as a modified model, manually updating within IMPRINT as needed, copying and pasting the modified functions into a copy of the baseline model to create an alternative model, and finally, executing the simulation.
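The final merge in this procedure, pasting modified functions into a copy of the baseline, can be sketched as dictionary operations. This is a simplified illustration under assumptions: models are hypothetical mappings from function name to ordered task names, the `changed` set stands in for the CATIA filter, and new functions are appended rather than placed in mission sequence.

```python
import copy


def filter_modified(model, changed):
    """Keep only the functions flagged as changed (mimics filtering the exported tables)."""
    return {fn: tasks for fn, tasks in model.items() if fn in changed}


def build_alternative(baseline, modified):
    """Paste modified or new functions into a copy of the baseline model.

    A modified function replaces its baseline counterpart in place; a new
    function is appended at the end, a simplification since a real model
    would place it in mission sequence.
    """
    alternative = copy.deepcopy(baseline)   # the baseline itself stays untouched
    for fn, tasks in modified.items():
        alternative[fn] = list(tasks)
    return alternative


baseline = {"Taxi": ["Task A", "Task B"], "Takeoff": ["Task C"]}
updated_model = {"Taxi": ["Task A", "Task B", "New task D"],
                 "Takeoff": ["Task C"],
                 "New function": ["New task E"]}

modified = filter_modified(updated_model, changed={"Taxi", "New function"})
alternative = build_alternative(baseline, modified)
print(list(alternative))    # ['Taxi', 'Takeoff', 'New function']
print(alternative["Taxi"])  # ['Task A', 'Task B', 'New task D']
print(baseline["Taxi"])     # ['Task A', 'Task B']
```

Only the filtered functions pass through the Excel tool and import, which is why the effort of this step tracks the number of modifications rather than the size of the baseline.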

Comparisons Between Processes

To provide a quantitative measure, the researcher recorded the time to complete each procedure. Because the goal of this research is a repeatable process, the time to complete it should be reasonable for future modelers. The variable of interest is therefore the time to model, which serves as the operational measure of the ease of use of the human performance modeling architecture. The researcher recorded the time to create the baseline model in CATIA and compared the times to complete the As-Is and To-Be processes for both the baseline and alternative models.

Results

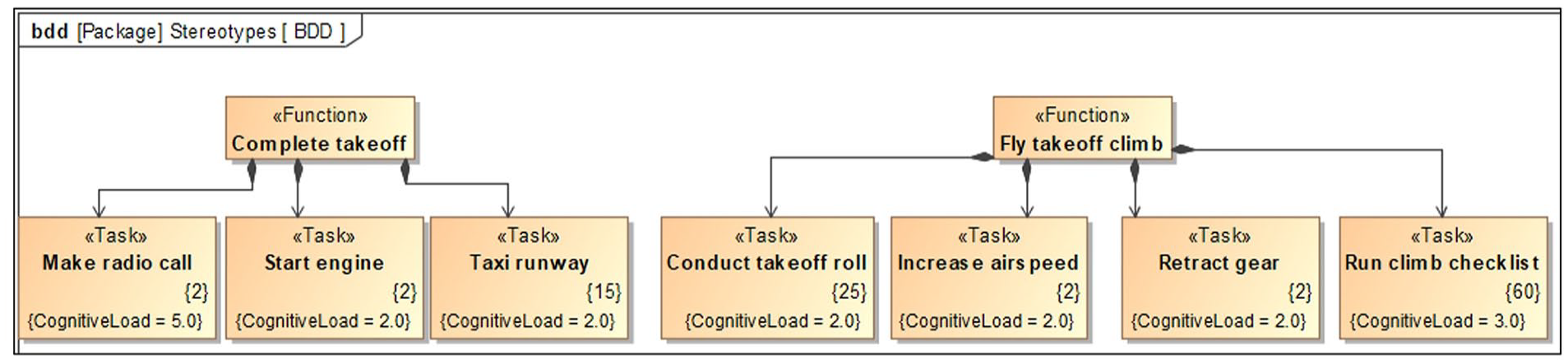

Development of the CATIA model took 14.7 hr and resulted in six products for the architecture. Figures 3 to 7 are screenshots of the products from an example demonstration problem that contains the same elements as the products in the research; the functions, tasks, and values shown are for demonstration only. The first product, shown in Figure 3, is an activity decomposition block definition diagram (BDD), which took 6.90 hr to generate for the 51 functions and 112 tasks. In the example, duration constraints represent task duration and attributes represent cognitive load. To support a full IMPRINT model, however, additional attributes could be created for each of the VACP values.

Figure 3. Activity decomposition BDD.

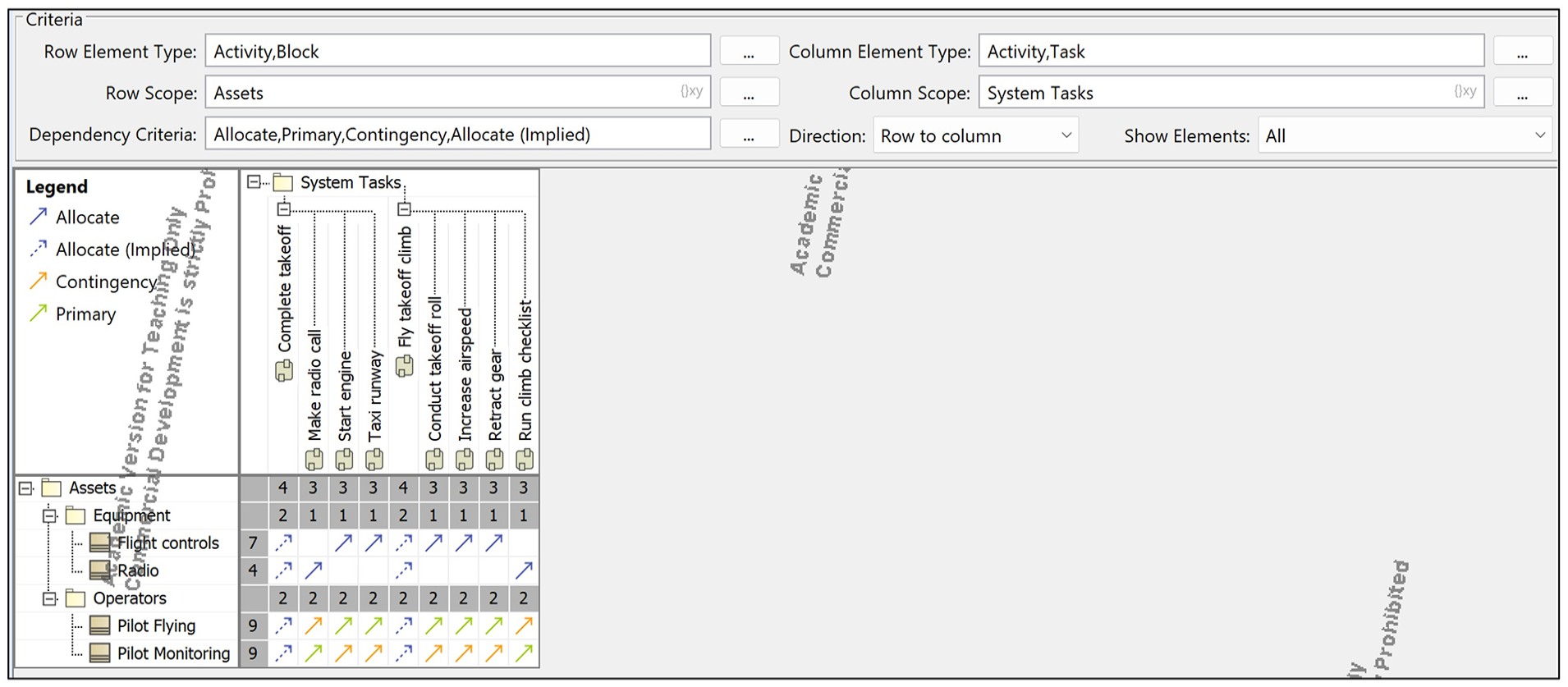

Figure 4. Asset allocation matrix.

Figure 5. Generic table for functions.

Figure 6. Generic table for tasks.

Figure 7. Profile diagram.

The second product, shown in Figure 4, is an asset allocation matrix that shows the allocation of tasks to aircrew and equipment, both of which are modeled as blocks.

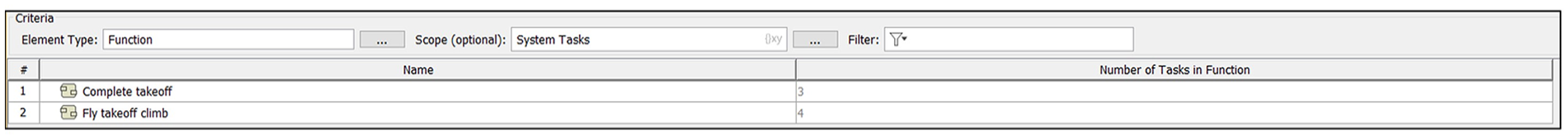

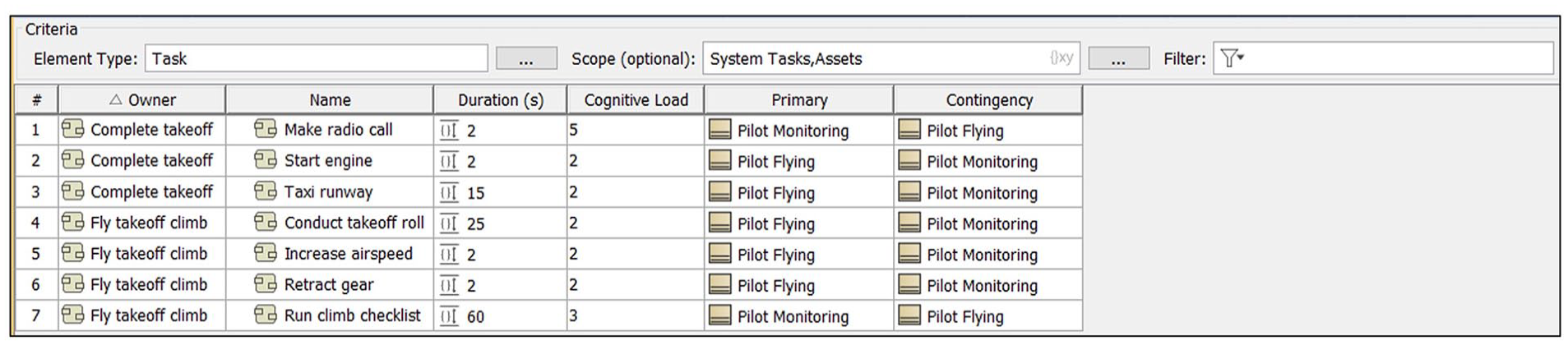

The third and fourth products are generic tables for both functions and tasks, illustrated in Figures 5 and 6.

Table development involved creating custom columns that organized the data in the format needed for the Excel tool to allow for import into IMPRINT.

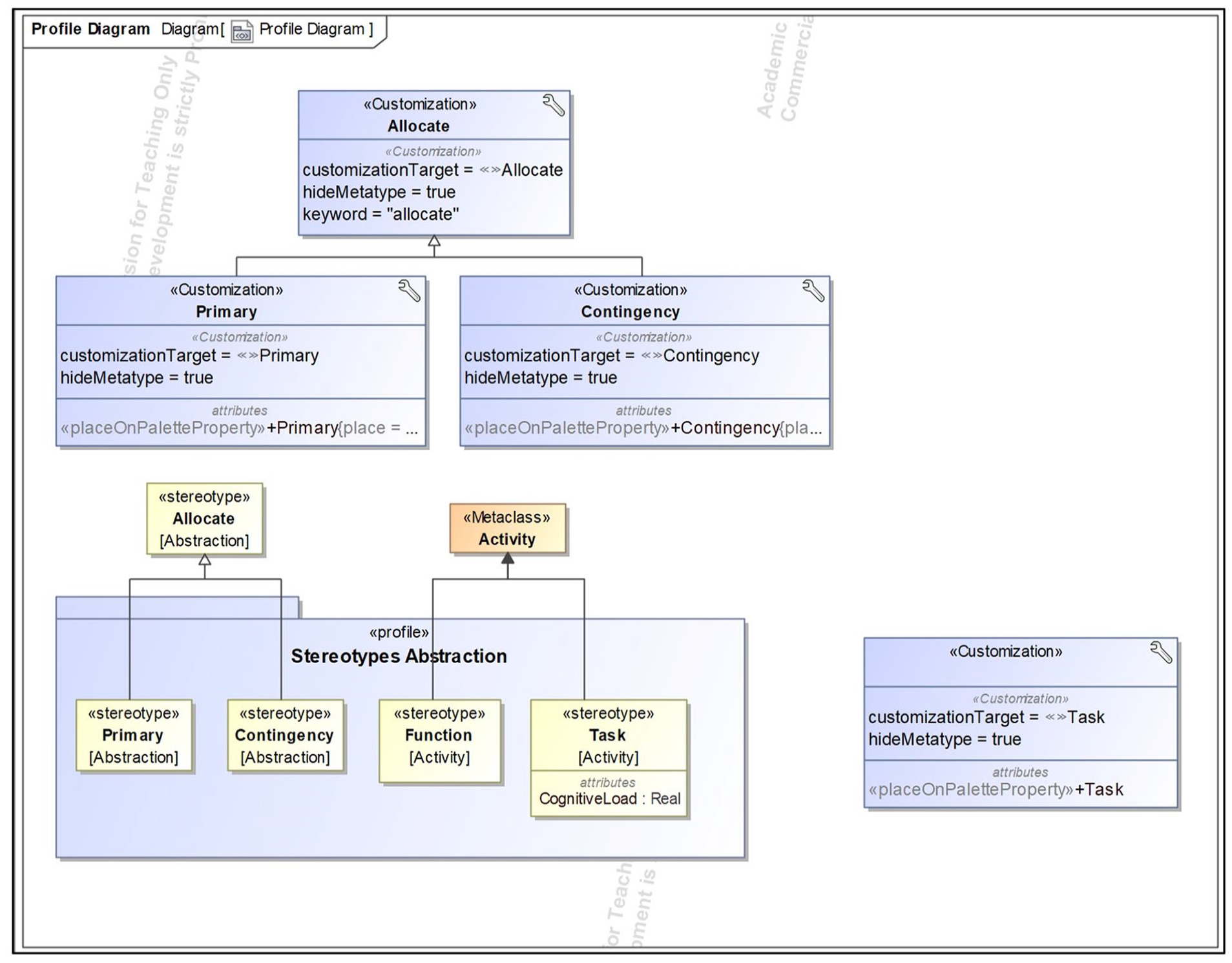

The fifth product is the profile diagram, shown in Figure 7, that holds the stereotypes and customizations for the model, and the sixth product is a glossary of terms based on the acronym list in the data set.

The Excel custom tool is an Excel Macro-Enabled Workbook that takes tables exported from CATIA, such as those in Figures 5 and 6, and organizes them into a worksheet format that IMPRINT can import. The Excel tool consists of one module with 23 Sub procedures.

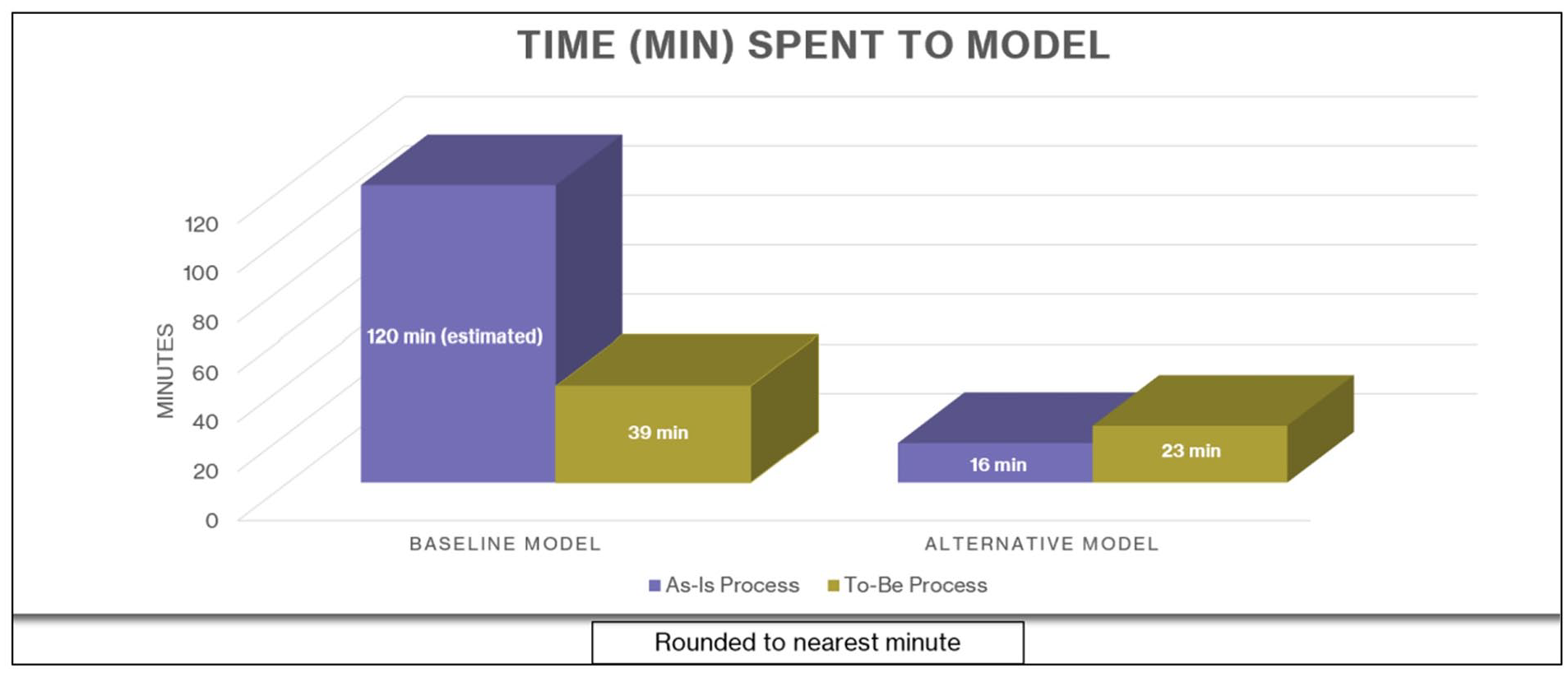

The As-Is process for developing an IMPRINT model of the first 26 functions and 68 tasks took 71.30 min. Extrapolating from this value, the complete model with all 51 functions and 112 tasks is expected to take 117 min, or approximately 2 hr, as shown in Figure 8. Development of the baseline model using the As-Is process included manual IMPRINT model development, function and task development, the addition of task times and operator assignments (called “Warfighter” assignments within IMPRINT), the connection of network paths, model refinement, and simulation execution. Function and task development accounted for the most time during the As-Is process.
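The text does not state how the 71.30 min measurement was extrapolated. Scaling linearly by task count reproduces the reported 117 min, whereas scaling by function count would give about 139.9 min, so the task-count ratio is the likely basis (an assumption, not a stated method):

```latex
\[
t_{\text{As-Is}} \approx 71.30\ \text{min} \times \frac{112\ \text{tasks}}{68\ \text{tasks}}
\approx 117.4\ \text{min} \approx 2\ \text{hr},
\qquad
71.30\ \text{min} \times \frac{51}{26} \approx 139.9\ \text{min}
\]
```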

Figure 8. As-Is vs To-Be comparisons.

The To-Be process for baseline model development (using the same data as during the As-Is process) included use of the Excel tool, import into IMPRINT, initial IMPRINT model development, the addition of workload values, the addition of operator assignments, the connection of network paths between functions, model refinement and simulation execution. In total, the time spent developing the baseline model using the To-Be process was 39.30 min for complete model development of the IMPRINT model with 51 functions and 112 tasks, as shown in Figure 8.

Equipment in the data set’s task list that had not been used previously in the modeling represented new equipment in the system, and the tasks allocated to this equipment represented added capabilities. In total, eight additions (one new function and seven new tasks) were made to the baseline CATIA model, along with each task’s equipment and resource allocation, task duration, and cognitive load score. This updated model is the alternative model. Both generic tables update automatically for the new additions: the custom column for the number of tasks in a function updates from the relationships in the activity decomposition BDD, and the custom columns in the task table for the primary and contingency allocations update from the asset allocation matrix.
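The automatic updates follow from computing the table columns from model relationships rather than entering them by hand. A minimal sketch, assuming hypothetical dictionaries that stand in for the activity decomposition BDD and the asset allocation matrix; CATIA evaluates such derived columns itself, so this only illustrates the dependency, not CATIA's mechanism.

```python
# Hypothetical stand-ins for two model relationships:
# the activity decomposition (function -> tasks) and the asset allocation
# matrix ((task, asset) -> "primary" or "contingency").
decomposition = {"Taxi": ["T1", "T2"], "Takeoff": ["T3"]}
allocation = {
    ("T1", "Pilot"): "primary",
    ("T1", "Copilot"): "contingency",
    ("T2", "Copilot"): "primary",
    ("T3", "Pilot"): "primary",
}


def function_rows(decomp):
    """Derived column: number of tasks in each function."""
    return {fn: len(tasks) for fn, tasks in decomp.items()}


def task_rows(decomp, alloc):
    """Derived columns: primary and contingency assets for each task."""
    def assets(task, kind):
        return sorted(a for (t, a), k in alloc.items() if t == task and k == kind)
    return {
        task: {"function": fn,
               "primary": assets(task, "primary"),
               "contingency": assets(task, "contingency")}
        for fn, tasks in decomp.items() for task in tasks
    }


# Adding a task and its allocation is enough; both tables regenerate from the relationships.
decomposition["Takeoff"].append("T4")
allocation[("T4", "Loadmaster")] = "primary"
print(function_rows(decomposition))                # {'Taxi': 2, 'Takeoff': 2}
print(task_rows(decomposition, allocation)["T4"])  # {'function': 'Takeoff', 'primary': ['Loadmaster'], 'contingency': []}
```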

The researcher then completed the As-Is process again to create the alternative model. Modeling the eight additions took 16.05 min in total, as shown in Figure 8. Although the baseline IMPRINT model for the As-Is process included only the first 26 functions with 68 tasks, and the time to complete the entire model was estimated, all eight modifications occur within those first 26 functions. The 16.05 min therefore accurately represents the time needed to manually modify the IMPRINT model with the eight additions. This time included copying the baseline IMPRINT model to serve as the alternative model, manually updating the copy with the names of the new and modified functions and tasks, and updating task times, operator assignments, and cognitive load demands. The researcher then executed a simulation of the model.

Next, the researcher created an alternative model using the To-Be process. Developing the alternative model with the eight additions took 22.68 min in total, as shown in Figure 8. This process involved filtering the modified CATIA model to highlight the changed elements, exporting the generic tables filtered for only the modifications, using the custom Excel tool to organize the data into a task network, and importing the task network into IMPRINT. The newly imported task network represented the modified model. This step also included updating the cognitive channel to the crew station interface (resource-interface pair), updating the task demands by referencing the cognitive load values in the task network, and allocating operators to each task. The functions were then copied and pasted into a copy of the baseline model to create the alternative model, and the model was executed as a simulation.

Figure 8 displays the comparisons of the time to model for each process for development of the baseline and the alternative model.

After the simulation of each baseline and alternative model is executed, IMPRINT generates performance reports that include workload graphs. Because the data set included only cognitive load values, the workload graphs in this research displayed the cognitive load of each operator throughout the mission. Comparing the IMPRINT simulation results for the baseline and alternative models shows an increase in cognitive load for one specific aircrew operator. This result indicates that the additions that created the alternative model affected the operators and that the workload results generated by IMPRINT quantify this effect.
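To illustrate how an added task can raise one operator's cognitive load while leaving the others unchanged, the sketch below lays tasks end to end and compares each operator's peak load between two task sequences. It is a deliberately simplified stand-in, not IMPRINT's workload calculation, and the task tuples and load values are hypothetical.

```python
def workload_timeline(tasks):
    """Lay tasks end to end and record each operator's cognitive load over time.

    tasks: ordered (operator, duration, cognitive_load) tuples, run in series.
    Returns operator -> list of (start, end, load) segments.
    """
    timeline = {}
    clock = 0.0
    for operator, duration, load in tasks:
        timeline.setdefault(operator, []).append((clock, clock + duration, load))
        clock += duration
    return timeline


def peak_load(timeline):
    """Highest cognitive load each operator experiences during the mission."""
    return {op: max(load for _, _, load in segs) for op, segs in timeline.items()}


baseline = [("Pilot", 15, 4.6), ("Copilot", 30, 3.7), ("Pilot", 10, 5.3)]
alternative = baseline + [("Copilot", 20, 5.8)]   # one added task for one operator

before = peak_load(workload_timeline(baseline))
after = peak_load(workload_timeline(alternative))
changed = {op: (before.get(op), after[op]) for op in after if before.get(op) != after[op]}
print(changed)  # {'Copilot': (3.7, 5.8)}
```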

In total, applying the human performance modeling architecture to the 51 functions and 112 tasks, as well as the eight additions, took the researcher 16.3 hr. This value can serve as a basis for estimating the time needed to apply the architecture, scaled to the size of a given model. The time would be significantly shorter, however, if the CATIA model with the appropriate elements already existed from other MBSE modeling efforts, so that only the custom tables, export, execution of the Excel tool, and import into IMPRINT were needed to establish an initial IMPRINT model.

Discussion

As a proof-of-concept demonstration, the To-Be process built all 51 functions and 112 tasks in 39.30 min, a 67.3% decrease from the approximately 120 min estimated for developing the baseline IMPRINT model and running the simulation with the As-Is process. This reduction supports the efficiency of the To-Be process. Although developing the alternative model with the To-Be process took 6.63 min longer than with the As-Is process, the difference between the processes is expected to decrease for models with more modifications than the eight additions in this proof-of-concept. This expectation arises because the As-Is process requires manually updating the baseline model to create an alternative model, whereas the To-Be process uses the Excel tool to organize the modified task network, which is then imported and copied and pasted into a copy of the baseline model to create the alternative model.
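The reported figures follow from the times in Figure 8. For reference, using the unrounded 117 min estimate instead of the rounded 120 min gives a slightly smaller reduction:

```latex
\[
\frac{120 - 39.30}{120} = \frac{80.70}{120} = 0.6725 \approx 67.3\%,
\qquad
\frac{117 - 39.30}{117} \approx 66.4\%,
\qquad
22.68 - 16.05 = 6.63\ \text{min}
\]
```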

Updating the CATIA baseline model to represent changes to the baseline system is relatively efficient. However, because IMPRINT requires a function-to-task structure for its network diagram and only tasks are exported from CATIA and imported into IMPRINT through the Excel tool, a change to an existing task that only allocates new equipment or a new role still requires the modeler to create a new task.

For MBSE modelers without high levels of familiarity with IMPRINT or human performance models, the human performance modeling architecture and Excel tool create an accessible and efficient way to develop initial workload analysis models while maintaining the foundation of the modeling in digital engineering concepts, since the CATIA model serves as the authoritative source of truth. The custom Excel tool requires minimal work and user intervention but does require the ability to access the VBA code in the Developer tab for troubleshooting. The human performance modeling architecture is generalizable to any system that requires analysis of human operator task performance and enables data traceability across tools.

Multiple tools exist across the modeling communities. Using one tool might be ideal but is unrealistic due to the unique needs of various specialty engineering disciplines, including human factors engineering. The custom-built Excel tool successfully demonstrates data integration and knowledge transfer of task networks between two modeling tools, preserving the modeler-entered data in CATIA through transfer to IMPRINT. The manual rework in the IMPRINT model is due predominantly to IMPRINT’s import limitations.

Modelers can use a tool other than CATIA as long as they organize data in a function-task structure and create tables in the Excel tool’s accepted format. If IMPRINT is not the chosen DES tool, future users can customize the Excel tool for their data integration needs, because its code is written in VBA in an accessible macro within the workbook. The human performance modeling architecture and Excel tool are updateable and support efficient evaluation of the impact of system modifications. Future work quantifying the To-Be process across multiple modelers and systems is needed to validate this research, but this research provides a proof-of-concept of the effectiveness of the human performance modeling architecture originating in an MBSE tool and demonstrates the ability to successfully integrate data across tools.

Footnotes

Acknowledgements

The authors gratefully acknowledge the sponsorship of the Air Force Life Cycle Management Center and the 711th Human Performance Wing.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Disclaimer

The views expressed in this article are those of the authors and do not necessarily reflect the official policy or position of the Department of the U.S. Air Force, U.S. Department of Defense, nor the U.S. Government.