Abstract

This work investigates driver takeover times in non-urgent, low-consequence scenarios within conditionally automated driving. Classification algorithms were trained on physiological and behavioral data from 46 participants in a driving simulator to predict metrics of takeover time following a takeover request (TOR). Eye-tracking, heart rate variability, and computer-vision-based body posture features were analyzed for their predictive power. The Naïve Bayes algorithm outperformed the other models, achieving an accuracy of 78% when predicting the time to first gaze in the driving scene following a TOR. Feature selection showed eye-tracking metrics to have the most predictive power. These results suggest that eye-tracking metrics and simple, computationally efficient two-class (high/low) algorithms may be sufficient for predicting takeover time in non-urgent, low-consequence scenarios. This research provides evidence supporting the integration of physiological sensing into adaptive automated driving systems (ADS) to develop context-aware TOR alert systems that improve road safety.

Keywords

Introduction

With the progression of automated driving systems (ADS) in vehicles, understanding the dynamics of driver takeover becomes critical. This study focuses on conditionally automated driving (SAE Level 3), where drivers may engage in non-driving-related tasks (NDRTs) but must be prepared to take control of the vehicle promptly when requested (SAE, 2021). The research evaluates how well various classification machine learning (ML) models classify takeover time as high or low, focusing on non-urgent, low-consequence scenarios in which the urgency and perceived consequences of a takeover are minimal (Eriksson & Stanton, 2017; McDonald et al., 2019).

Methods

Forty-six participants aged 19 to 45 rode in a medium-fidelity driving simulator with a simulated Level 3 ADS while performing NDRTs, and their physiological and behavioral data were recorded. Eye-tracking, heart rate variability, and body posture metrics were down-selected using a frequency analysis of Boruta feature selection results, and the retained features were used to train and compare several classification algorithms.
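The frequency analysis can be illustrated with a minimal Python sketch. It assumes, hypothetically, that Boruta was run several times (for example with different random seeds or cross-validation folds) and that each run yields the set of features it confirmed; the feature names, the number of runs, and the `min_fraction` cutoff are illustrative assumptions, not the study's settings. The sketch does not implement Boruta itself, only the counting step that turns repeated selections into selection frequencies (the quantity a histogram like Figure 1 would plot) and a down-selected feature list.

```python
from collections import Counter


def selection_frequency(runs):
    """Fraction of runs in which each feature was confirmed, most frequent first."""
    # set() guards against a feature being listed twice within one run.
    counts = Counter(feature for confirmed in runs for feature in set(confirmed))
    return {feature: n / len(runs) for feature, n in counts.most_common()}


def downselect(runs, min_fraction=0.5):
    """Keep features confirmed in at least `min_fraction` of runs (hypothetical cutoff)."""
    return [f for f, p in selection_frequency(runs).items() if p >= min_fraction]


# Hypothetical confirmed-feature sets from four Boruta runs (names are illustrative).
runs = [
    {"fixation_duration", "pupil_diameter", "rmssd"},
    {"fixation_duration", "pupil_diameter"},
    {"fixation_duration", "head_pitch"},
    {"fixation_duration", "pupil_diameter", "rmssd"},
]

print(selection_frequency(runs))
# {'fixation_duration': 1.0, 'pupil_diameter': 0.75, 'rmssd': 0.5, 'head_pitch': 0.25}
print(downselect(runs))
# ['fixation_duration', 'pupil_diameter', 'rmssd']
```

Counting across runs, rather than trusting a single run, makes the selection less sensitive to the randomness inside Boruta's shadow-feature comparisons.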

Results

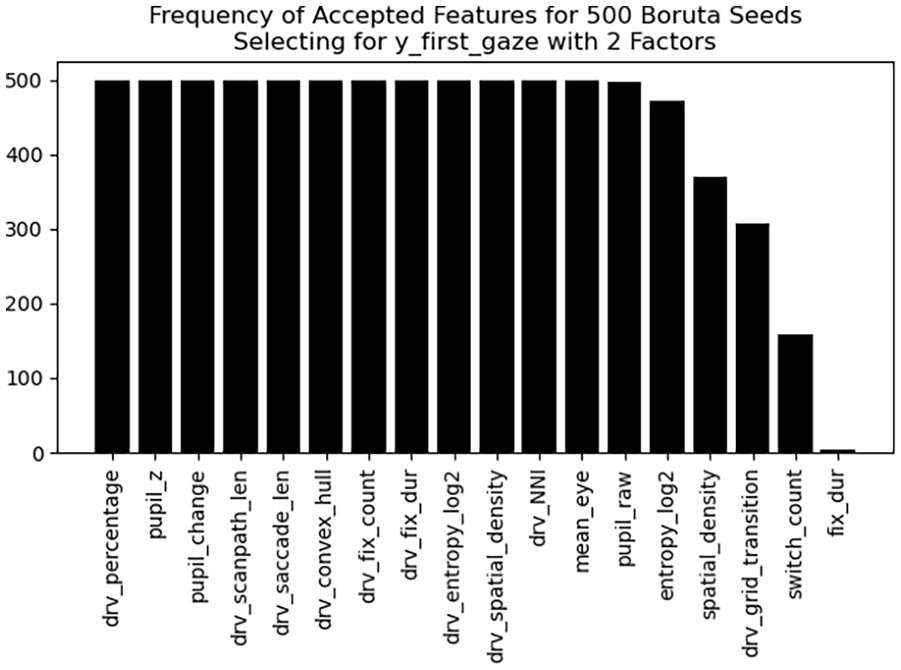

The Naïve Bayes algorithm outperformed the other classifiers in predicting the time to first gaze in the driving scene after the takeover request (TOR), achieving an accuracy of 78%. Boruta feature selection favored eye-tracking metrics (Figure 1), which were the most influential features for classifying takeover time (Liang et al., 2021).

Figure 1. Histogram from the frequency analysis of Boruta feature selection for classifying takeover time as high or low, using the target “y_first_gaze”, defined as the time from the TOR until the first gaze in the driving scene area of interest (AOI).
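The target in Figure 1 and its high/low split can be sketched in Python as follows. The gaze-sample format (timestamp in seconds paired with an AOI label), the `"driving_scene"` and `"phone"` labels, and the median split into high and low classes are assumptions for illustration; the study does not state how the high/low threshold was chosen.

```python
from statistics import median


def time_to_first_gaze(tor_time, gaze_samples, aoi="driving_scene"):
    """Seconds from the TOR until the first gaze sample inside `aoi`.

    gaze_samples: iterable of (timestamp_s, aoi_label) pairs (hypothetical format).
    Returns None if no gaze reaches the AOI after the TOR.
    """
    for t, label in sorted(gaze_samples):
        if t >= tor_time and label == aoi:
            return t - tor_time
    return None


def label_high_low(times):
    """Median split (an assumption): 1 = high/slow takeover, 0 = low/fast.

    Values equal to the median are assigned to the low class.
    """
    cut = median(times)
    return [1 if t > cut else 0 for t in times]


# Hypothetical gaze stream around a TOR issued at t = 10.0 s.
samples = [(9.8, "phone"), (10.2, "phone"), (11.4, "driving_scene"), (11.6, "driving_scene")]
print(round(time_to_first_gaze(10.0, samples), 2))  # 1.4
print(label_high_low([0.9, 1.4, 2.1, 3.0]))          # [0, 0, 1, 1]
```

A median split yields roughly balanced classes, which keeps plain accuracy meaningful as a comparison metric; other thresholds would be equally valid choices.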

Discussion

This study demonstrated the potential of sensor-based measurements to predict the takeover time of drivers engaged in NDRTs in underexplored non-urgent, low-consequence scenarios. Simple, computationally efficient ML algorithms trained on minimized feature sets classified driver takeover time with sufficient accuracy (78% for time to first gaze with Naïve Bayes), which could be crucial for developing context-aware TOR alert systems that improve road safety (Weaver & DeLucia, 2022; Zhang et al., 2019). Future work will extend this approach to predicting additional takeover performance measures.
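To illustrate why Naïve Bayes is computationally cheap, here is a from-scratch Gaussian Naïve Bayes sketch in Python. It assumes Gaussian class-conditional feature distributions, which the study does not specify, and uses made-up two-feature data; it is not the authors' implementation. Training reduces to per-class priors, means, and variances, and prediction to summing per-feature log-likelihoods, so cost grows linearly with the number of features and classes.

```python
import math
from statistics import fmean, pvariance


class GaussianNaiveBayes:
    """Gaussian Naive Bayes for numeric features (two classes here; any number works)."""

    def __init__(self, var_smoothing=1e-9):
        self.var_smoothing = var_smoothing

    def fit(self, X, y):
        self.classes_ = sorted(set(y))
        # Variance floor: a small fraction of the largest feature variance,
        # so a feature that is constant within a class cannot divide by zero.
        max_var = max(pvariance(col) for col in zip(*X))
        eps = self.var_smoothing * max_var if max_var > 0 else self.var_smoothing
        self.params_ = {}
        for c in self.classes_:
            rows = [x for x, label in zip(X, y) if label == c]
            log_prior = math.log(len(rows) / len(X))
            means = [fmean(col) for col in zip(*rows)]
            variances = [pvariance(col) + eps for col in zip(*rows)]
            self.params_[c] = (log_prior, means, variances)
        return self

    def _log_posterior(self, x, c):
        # Unnormalized log posterior: log prior + sum of per-feature Gaussian log-likelihoods.
        log_prior, means, variances = self.params_[c]
        return log_prior + sum(
            -0.5 * math.log(2 * math.pi * v) - (xi - m) ** 2 / (2 * v)
            for xi, m, v in zip(x, means, variances)
        )

    def predict(self, X):
        return [max(self.classes_, key=lambda c: self._log_posterior(x, c)) for x in X]


def accuracy(y_true, y_pred):
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


# Hypothetical data: two made-up features per takeover event,
# label 1 = high (slow) takeover time, 0 = low (fast).
X_train = [[0.20, 3.1], [0.30, 3.0], [0.25, 3.2], [0.80, 4.0], [0.90, 4.2], [0.85, 3.9]]
y_train = [0, 0, 0, 1, 1, 1]
X_test = [[0.22, 3.05], [0.88, 4.1]]
y_test = [0, 1]

model = GaussianNaiveBayes().fit(X_train, y_train)
print(model.predict(X_test))                    # [0, 1]
print(accuracy(y_test, model.predict(X_test)))  # 1.0
```

Library implementations such as scikit-learn's `GaussianNB` follow the same approach; the variance floor here mimics its `var_smoothing` parameter.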

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research study was supported by the Ford Motor Company.