Abstract

Design issues that affect the usability of health technologies for marginalized populations may further exacerbate health inequity. To address this concern, we propose the use of failure modes and effects analysis (FMEA) to systematically identify potential inequities that result from usability evaluation methods. This new application of FMEA grounds the traditional FMEA in critical theory and introduces two key concepts: the equity failure mode and reflexivity. To test our approach, we engaged 13 usability practitioners with expertise in healthcare in a series of four workshops. We found that when participants located themselves reflexively in their practice of usability evaluation, they were able to identify more nuanced equity failure modes. Through reflecting on our experience using this method, we aim to illustrate that the critical FMEA is a viable approach that human factors practitioners and researchers can use to anticipate equity failure modes in design and evaluation methods.

Introduction

One of the strategic equity, diversity, and inclusion goals of the Human Factors and Ergonomics Society is to “advance the science and practice of HF/E to address current and emerging societal problems” (Human Factors and Ergonomics Society [HFES], n.d.). Poor usability is a barrier that prevents health technologies from fulfilling the promise of advancing health equity. In support of this goal, we propose a new approach to failure modes and effects analysis (FMEA) that we use to identify inequities in usability evaluation methods. In this work we prioritize the identification of a broad range of failure modes and leave the identification of corrective actions for future work.

In the following sections, we start by developing the problem that motivates this work; thereafter we explicate the theoretical framework that guides our FMEA approach. We then present how we conducted the FMEA along with preliminary findings. Finally, we conclude with our reflections on our experience.

Background

Health technologies are designed to improve the health outcomes of a population; however, the benefits of these technologies may accrue disproportionately to those already advantaged within the population. The result, then, is that health technologies can exacerbate existing health inequity—differences in health that are unnecessary and avoidable, but importantly, are also unfair and unjust (Whitehead, 1992).

We first draw on insights from health informatics literature to implicate usability in this health equity challenge. Recognizing that health informatics interventions have the potential to exacerbate health inequity, Veinot et al. (2018) borrow the term intervention-generated inequality (IGI) from public health literature. They explain that inequalities in health outcomes can manifest through inequality in access, adoption, adherence, or effectiveness of the intervention.

According to Veinot et al. (2018), inequalities in adoption and adherence, in particular, can be influenced by the usability of health informatics interventions. Some populations may have less computer savvy or digital literacy, which increases the burden that usability problems place on them and thus affects their adoption and adherence. Usability, then, becomes an important quality in the design of health informatics interventions to mitigate disparities in health due to lack of experience with computers.

Comfort with technology in itself, however, is not the only factor to consider when designing usable health informatics interventions. In response to the urging of the Institute of Medicine (US) Committee on Understanding and Eliminating Racial and Ethnic Disparities et al. (2003) to enhance the cultural appropriateness of healthcare delivery services, Valdez et al. (2012) developed a framework for the design of culturally-informed health information technology (HIT). They make the case that HITs, especially those intended for use in the home, need to be culturally-informed—arguing that technologies designed with only the cultural values of the majority embedded are likely to result in poorer usability for those structurally marginalized in society. The authors again theorize that this disparity in usability can, in turn, result in disparities in health outcomes.

While these insights are drawn from health informatics literature, we argue that the effects of a person’s comfort using technology and the embedded cultural values of a technology are not limited in scope to health information systems. These factors apply more broadly to health technologies, construed as systems, devices, tools, or equipment used to improve health. Our point is that for health technologies to have equitable benefit to society, we should attend to usability.

Of course, developers of health technologies have become increasingly concerned with usability given the patient safety consequences of use errors (Carayon et al., 2014), and as such, usability evaluations of health technologies have become a matter of best practice (U.S. Food and Drug Administration [FDA], 2016; International Electrotechnical Commission [IEC], 2015; Weinger et al., 2011). However, a series of studies examining the reproducibility of usability evaluations showed that our methods are not reliable: different teams of usability practitioners, with different samples of participants, evaluating the same interface, tend to find different sets of usability problems (Molich, 2018). Given this lack of generalizability, calls for truly representative samples are well-reasoned and urgent (Gibbons et al., 2014; Veinot et al., 2018).

While these findings in no way amount to a wholesale indictment of the practice of usability evaluation—there is plenty of evidence that usability evaluations help us to produce better designs—there is an opportunity to improve the way we conduct evaluations such that: (1) more participants from marginalized groups are included; and (2) evaluation methods are sensitive to the usability problems that affect them. In short, we need more equitable usability evaluations if we are to design more equitable health technologies.

FMEA

To understand how we might begin to move toward an equitable usability evaluation practice, we use FMEA as our analysis framework to identify the potential inequities in various usability evaluation methods. The FMEA is a systematic, prospective risk analysis approach for identifying potential failures in the design of a system, process, product, or service (IEC, 2018). Its early development is found in various industries such as defense, aviation, automotive, and manufacturing. In healthcare, the FMEA may be used as a risk management process in the development of a medical device, or to anticipate human error in the delivery of care. The Veterans Affairs National Center for Patient Safety determined that existing models of prospective risk analysis were inadequate for improving care processes and instead introduced an adapted version of FMEA, the Health Care Failure Mode and Effects Analysis (HFMEA)™ (DeRosier et al., 2002). We draw on this history of its successful adaptation in many different contexts as inspiration for adapting the method for our own purposes here.

Theoretical Framework

The use of FMEA to identify inequities is somewhat novel. Whereas typical failure modes might be a mechanical part failure or human error, our application of FMEA seeks to identify the ways in which usability evaluation methods reproduce the systems and structures that benefit some groups and marginalize others.

Qualitative researchers may recognize this approach to inquiry as informed by critical theory. We draw on theory from critical qualitative inquiry to ground our approach to FMEA. Kincheloe and McLaren (2011), before presenting a description of a contemporary account of critical theory, caution that “(a) there are many critical theories, not just one; (b) the critical tradition is always changing and evolving; and (c) critical theory attempts to avoid too much specificity, as there is room for disagreement among critical theorists” (p. 287). The authors also claim, earlier in the same text, that critical theory is “often evoked and frequently misunderstood” (p. 286). Within this context, we attempt to describe the critical theoretical principles that we use in our approach.

According to Guba and Lincoln (1994), research informed by critical theory aims to critique and transform power structures that oppress people based on race, gender, sexuality, and other factors. The quality of this kind of research is judged by how well it addresses these power structures, explains how they oppress, and inspires action to change them.

A commitment to critical theory also has epistemological implications, that is, implications for the question of what knowledge is. Guba and Lincoln (1994) explain that knowledge in critical theoretical paradigms is constructed through interactions between investigators and participants. This inextricably links both groups with the findings of the research. This view is distinct from positivistic models of inquiry, commonly associated with quantitative research, where the influence of the investigator is seen as a threat to validity to be erased by methodological rigor. Critical research instead embraces the presence of the investigator and focuses attention on the context of inquiry.

Even though engineering analyses, like FMEA, may not typically focus on theoretical frameworks, Guba and Lincoln (1994) argue that all inquiry is influenced by some theory. Collins and Stockton (2018) add that considering both methodology and theory leads to richer findings. In view of this, we underscore the critical perspective that shapes and drives our work, guiding the methods we describe next.

Methods

We conducted a critical FMEA as a series of workshops with usability experts focusing on various usability evaluation methods. Our critical FMEA approach predominantly followed the typical steps of a standard FMEA (IEC, 2018):

1. Define the objectives and scope of analysis.

2. Subdivide the process into steps.

3. Identify potential failure modes, effects, and causes for each step.

Our primary objective was to identify a broad range of potential inequities in usability evaluation methods. Criticality analysis, sometimes done as part of FMEA to prioritize identified failure modes, was left as a secondary objective and only explored briefly. Additionally, we leave the identification of actions to mitigate identified failures as a future opportunity.

A central concept we needed to define was the equity failure mode: a way, or mode, in which a process step can reproduce structures that work to oppress certain groups. As an example, when defining recruitment criteria for an evaluation of a health technology, an equity failure mode might be that racial diversity is not a criterion. This may be caused by the belief that race does not affect interactions with this particular technology. The effect of this equity failure could be that the resulting sample does not represent the racial diversity of the intended user population of the technology. The final effect of this on the process as a whole might be that usability problems affecting racial groups unrepresented in the sample are not uncovered.
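To make the structure of an equity failure mode concrete, the recruitment example above can be written as a single worksheet row. The following is a minimal sketch only: the class name `EquityFailureMode`, its field names, and the `describe` helper are hypothetical, chosen for illustration, and do not reproduce the worksheet used in the workshops. The field values restate the example in the preceding paragraph.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EquityFailureMode:
    """One hypothetical worksheet row; field names are illustrative assumptions."""

    process_step: str   # step of the usability evaluation process being analyzed
    failure_mode: str   # how the step can reproduce an oppressive structure
    cause: str          # why the failure mode might occur
    local_effect: str   # immediate effect on the process step
    final_effect: str   # effect on the evaluation process as a whole


# Values restate the recruitment example from the text above.
recruitment_example = EquityFailureMode(
    process_step="Define recruitment criteria",
    failure_mode="Racial diversity is not a recruitment criterion",
    cause="Belief that race does not affect interactions with the technology",
    local_effect="Sample does not represent the racial diversity of intended users",
    final_effect="Usability problems affecting unrepresented racial groups are not uncovered",
)


def describe(row: EquityFailureMode) -> str:
    """Render a row as a short cause -> failure mode -> effects chain."""
    return (
        f"[{row.process_step}] {row.cause} -> {row.failure_mode} "
        f"-> {row.local_effect} -> {row.final_effect}"
    )


print(describe(recruitment_example))
```

Keeping the local effect and the final effect as separate fields mirrors the distinction drawn above between the effect on the sample and the effect on the process as a whole.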

In keeping with a critical qualitative approach, we felt it necessary to encourage the practice of reflexivity amongst participants, since, in an epistemology congruent with critical theory, knowledge that is produced through inquiry is inevitably influenced by the investigator’s values (Guba & Lincoln, 1994). A critical qualitative researcher would then engage in a process of reflexivity to evaluate how their values influence their findings. Reflexivity, as Berger (2015) summarizes, is “the process of a continual internal dialog and critical self-evaluation of researcher’s positionality as well as research process and outcome” (p. 220). According to Berger, the researcher’s positionality can affect the research in three ways: (1) access to the site; (2) the researcher-researched relationship; and (3) the researcher’s lens through which they make meaning of the study. Accordingly, in addition to our own practice of reflexivity, we asked participants to engage in reflexivity to locate their positionality both in this FMEA and in their usability evaluation practice.

Setting

This critical FMEA was conducted with a human factors consultancy firm that is embedded within an academic hospital in Toronto, Canada, and has more than 30 staff members who serve healthcare clients globally. In addition to offering a broad range of research and design services, they have specific expertise in conducting usability evaluations for regulated medical devices. Access to this site allowed us to draw insight from a breadth and depth of expertise with health technologies and usability engineering.

The firm also emphasizes the strength of their diverse backgrounds in informing their approach to problems and devising solutions. They espouse the value of designing for equity, where they acknowledge that a diversity of lived experiences is essential to designing systems that benefit us all. This orientation toward equity, along with deep usability expertise, made this an ideal site to conduct a critical FMEA to identify inequities resulting from usability evaluation methods.

Data Collection

Four workshops were conducted between February and April of 2024. Workshops were 2 hr long, conducted in-person at the firm’s office, and facilitated by the lead author (RT). The workshops were organized by topic, each focusing on a different category of usability evaluation methods.

The first three workshops focused on formative evaluations, summative evaluations, and inspection methods, respectively. Each workshop included four human factors specialists. In total, 10 human factors specialists were included rather than 12, because two participants in the third workshop were unexpectedly unable to participate and were replaced with two human factors specialists who had already participated in one of the previous workshops. These human factors specialists had between 2 and more than 10 years of experience.

While these human factors specialists had significant experience evaluating regulated medical devices, we also had access to designers who had more experience with digital health products and service design projects. We decided to include these designers in a fourth workshop, which had three participants, to analyze their design research and evaluation methods.

The workshop agenda was structured as follows:

1. Review purpose of workshop and consent.

2. Discuss concepts related to equity and oppression.

3. Discuss reflexivity and positionality.

4. Introduce critical FMEA approach.

5. Define scope of analysis.

6. Outline process steps.

7. Identify failure modes, effects, and causes.

8. Reflect.

Workshops were scheduled on days that participants were already in the office and took place over lunch, with meals provided. These workshops were considered a quality improvement project, and protocols were reviewed by an affiliated hospital’s Quality Improvement Review Committee. In addition to capturing discussions collaboratively in an FMEA worksheet and on whiteboards, audio from the workshops was recorded and transcribed.

Data Analysis

We returned to the audio transcription to generate the final FMEA report. For each workshop, we first simultaneously coded sections with the usability evaluation method and the process step it referenced. Then, we analyzed all the sections relating to a particular usability evaluation method and a particular process step and assigned descriptive codes that described a failure mode, effect, and/or cause, again simultaneously.
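The two coding passes described above can be pictured as a grouping operation. The sketch below is illustrative only and is not the analysis tooling used in this study: the `CodedSegment` type, the `build_report` function, and all segment values are hypothetical placeholders rather than actual transcript data.

```python
from collections import defaultdict
from typing import NamedTuple


class CodedSegment(NamedTuple):
    """A transcript section after both coding passes (hypothetical structure)."""

    method: str        # first pass: usability evaluation method referenced
    process_step: str  # first pass: process step referenced
    kind: str          # second pass: "failure mode", "effect", or "cause"
    code: str          # second pass: descriptive code


def build_report(segments):
    """Group descriptive codes by (method, process step), then by kind."""
    report = defaultdict(lambda: defaultdict(list))
    for seg in segments:
        report[(seg.method, seg.process_step)][seg.kind].append(seg.code)
    return report


# Invented placeholder segments, not data from the workshops.
segments = [
    CodedSegment("summative evaluation", "define recruitment criteria",
                 "failure mode", "race not a recruitment criterion"),
    CodedSegment("summative evaluation", "define recruitment criteria",
                 "cause", "criteria tied to regulator expectations"),
    CodedSegment("summative evaluation", "recruit participants",
                 "failure mode", "reliance on convenience sampling"),
]

report = build_report(segments)
for (method, step), codes_by_kind in report.items():
    print(f"{method} / {step}")
    for kind, codes in codes_by_kind.items():
        print(f"  {kind}: {'; '.join(codes)}")
```

Grouping first by method and process step, and only then by failure mode, effect, or cause, follows the order of the two passes: each report section collects everything said about one step of one method.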

With these codes we were able to generate an FMEA report for each usability evaluation method. Comparisons across usability evaluation methods would additionally allow us to generate higher-order themes. To offer a sense of what we produced through this critical FMEA method, we present some brief examples of equity failure modes in the following section.

Preliminary Findings

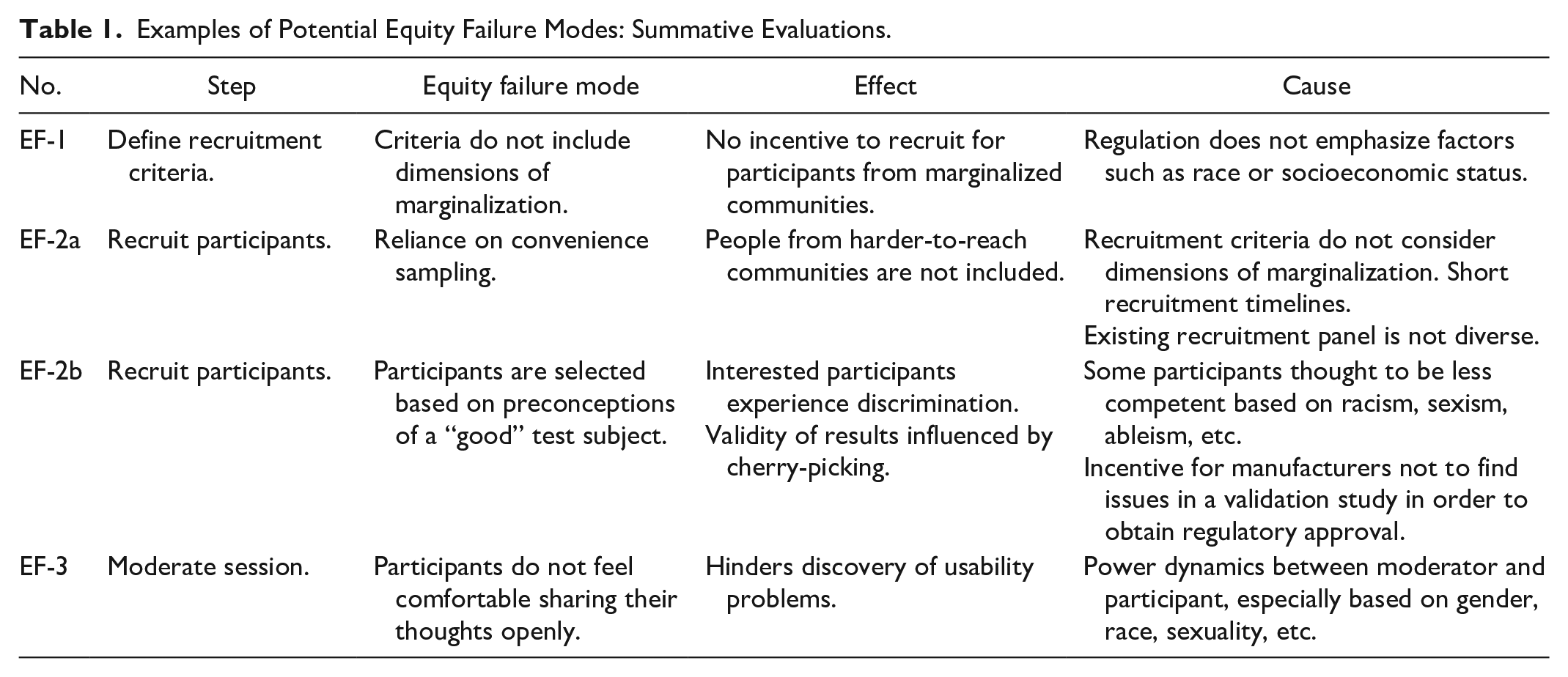

In Table 1 we present some examples of equity failure modes for summative evaluations that we believe are illustrative of the effectiveness of the critical FMEA approach. Since participants were experts in conducting human factors validation studies for manufacturers aiming to obtain approval from the U.S. Food and Drug Administration (FDA), much of the conversation around summative evaluations pertained to summative evaluations in this context.

Table 1. Examples of Potential Equity Failure Modes: Summative Evaluations.

Some equity failure modes, such as EF-1 and EF-2a, were identified, in a sense, objectively from participants’ experiences conducting usability evaluations and interfacing with the FDA. For EF-1, participants explained that race was not typically specified when defining recruitment criteria, nor was it collected from participants for the purposes of recruitment, further explaining that the definition of recruitment criteria was tied directly to the wants of the FDA. In particular, they noted that, based on their experience with regard to demographics, less emphasis was placed on factors such as race or socio-economic status, and more on factors such as age, years of experience, and medical training.

For EF-2a, as much as defining some a priori recruitment criteria implies the use of purposive sampling, participants noted that because of short timelines they may rely on convenience sampling thereafter where anyone who meets the initial criteria is included. This was related to the urgency of manufacturers to get to market, a prevalent theme in workshop discussions. Convenience sampling was further problematized as existing recruitment panels or participant pools they had access to might not be diverse. We can also see that equity failure modes are related, where EF-1 is another potential cause for EF-2a.

Perhaps more nuanced equity failure modes such as EF-2b were produced by contextualizing the experiences that participants shared. For example, workshop participants shared experiences where they felt that stakeholders were hesitant to include usability participants they thought might not perform well, potentially based on race or disability. Then, situating these experiences in the context of market pressures that manufacturers face, we theorized that if human factors validation is considered a regulatory hurdle to overcome in order to get a product to market, medical device manufacturers may be incentivized to ensure that their product “passes” the usability evaluation. This may inadvertently lead to selecting usability participants who are projected to “do well” in the evaluation. Not only would this be an anti-goal for usability evaluations, but this attitude would also tend to privilege those who are imagined to be good with technology.

Equity failure modes were also drawn from participants engaging in a reflexive process, such as in EF-3. One participant posited that gender may have an effect on the candidness of participants in a usability evaluation. They suggested that based on their experience when both the evaluator and the usability participant are women, the usability participant may feel more comfortable and thus share their thoughts more candidly. Extending this discussion further, another participant recounted an experience where they felt that the usability participant was dismissive toward them as the evaluator because they presented as a woman. Through acknowledging their own gender identities these participants were able to describe ways that power dynamics may affect the moderation of a usability evaluation.

Reflections

In this section we reflect on how the grounding of this FMEA in critical theory was important for producing these equity failure modes, for generating dialog in workshops, and for our analysis.

Returning to EF-3 in Table 1, this equity failure mode was first identified through one workshop participant who discussed how usability evaluations might feel like an examination of the usability participant’s skills rather than an evaluation of the technology. They posited that this may affect how the usability participant provided feedback about the technology, perhaps blaming themselves rather than pointing out how the technology hindered their ability to complete the task. They noted that it becomes the responsibility of the evaluator to defuse this dynamic of the evaluator as an authority figure. Then, participants implicated their own gender in this power differential; they identified that gender concordance between evaluator and participant may help to defuse the power differential. We can see in this example that examining the interpersonal interactions that occur in a usability evaluation through a critical lens allows us to identify the ways we might need to account for power dynamics and the effects of constructs such as gender in usability evaluation methods. In this same example we also see the benefits of engaging in a reflexive process, where participants were able to identify how their own identity can influence the usability evaluation.

The use of a critical lens also enabled us to examine the practice of usability evaluation in a broader social context. From EF-2b, we can see that a critical lens enabled us to further analyze what we heard in workshops and theorize potential equity failures in the interplay of economic forces and social biases. If we had only considered participants’ recounting of instances of discrimination in recruitment in isolation, we may have only understood this issue as acute instances of overt discrimination. Through contextualizing their experiences within social structures, we can begin to tease out how more subtle cases of discrimination may occur. By understanding usability evaluations as an economic enterprise, we can imagine how usability evaluations may reproduce or reinforce existing inequities.

Encouragingly, at the end of a workshop, participants reflected that through the preceding discussions they felt that they gained a better understanding, not just of equity concerns, but also of their use of usability evaluation methods. They commented on how they could see the nuances that go into the decisions they make in doing evaluations, perhaps another demonstration of reflexively acknowledging their active role in usability evaluations. Overall, this sentiment resonates strongly with the aims of critical inquiry, which include the empowerment of researcher and participant alike to enact change.

Conclusion

We grounded a traditional engineering analysis, the FMEA, in critical theory in order to systematically identify potential inequities that result from usability evaluation methods. We illustrated through examples produced from the critical FMEA that our approach was effective at identifying these potential inequities, highlighting the central role of critical theory. Based on our experience, we believe that the critical FMEA is a viable approach that human factors practitioners and researchers can use to anticipate equity failure modes in design and evaluation methods.

Footnotes

Acknowledgements

We would like to thank the staff at Healthcare Human Factors at the University Health Network for their input and enthusiasm for this work.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Department of Mechanical & Industrial Engineering, Faculty of Applied Science & Engineering, University of Toronto; TRANSFORM Heart Failure (TRANSFORM HF); and University Health Network.