Abstract

Absentee voting presents a unique challenge for U.S. uniformed service members, who often struggle to request and return absentee ballots while deployed far from their registered voting area. This research evaluates the usability of a proposed absentee voting system for military voters, which allows instant ballot requests and enables voters to verify their own votes, focusing on whether the user interface supports effective use among military personnel. Our evaluation showed that military voters frequently relied on external assistance to navigate the electronic voting system, revealing opportunities for improvement and informing design recommendations to facilitate absentee voting for U.S. military personnel.

Introduction

Ensuring voting access comes with inherent difficulties, especially for U.S. uniformed service members stationed around the world. Despite record domestic civilian turnout in 2020 (74%), active duty military members had a significantly lower turnout (47%; Federal Voting Assistance Program [FVAP], 2021). The Uniformed and Overseas Citizens Absentee Voting Act (UOCAVA) has aimed to improve their access to elections since 1986, yet the disparity in turnout persists among those who protect the democracy of their nation. It persists largely because military personnel stationed away from their voting residence face obstacles and greater time constraints in updating registration, requesting absentee ballots, and ensuring timely voting. The most common reason for rejected UOCAVA ballots is late arrival, accounting for 44% of rejections in 2020, compared to 12% for non-UOCAVA ballots (United States Election Assistance Commission, 2022).

In response to the chronic need for improved absentee voting solutions for military personnel, a new voting system has been developed by VotingWorks to enhance access and security for military voters. Key features of this proposed system include requesting a ballot at the time of voting and generating a paper ballot for voters to independently verify the system’s accuracy in interpreting and recording their votes.

Since the system is intended for use at military bases and forward operating bases where traditional polling resources are likely unavailable, user testing with the target population in a representative setting is required to understand how military voters actually use the system. This testing aims to determine whether voters can use the system securely and effectively without assistance and, if they cannot, to identify ways to improve their overall experience with the voting system.

To this end, the current work examines how military voters perceive the proposed absentee voting system. The experiment was designed to test a prototype version of the voting system, evaluating basic voting functionalities and user interface (UI) elements to establish baseline metrics of user performance and usability among military personnel. Metrics obtained from this assessment will serve as benchmarks to inform the refinement of the UI and other necessary components to support military members in participating in elections.

Method

Participants

We recruited participants on-site at the U.S. Army base at Fort Knox, Kentucky. Inclusion criteria required participants to be at least 18 years old; English-speaking; and either an active-duty or retired member of the uniformed services or their dependent.

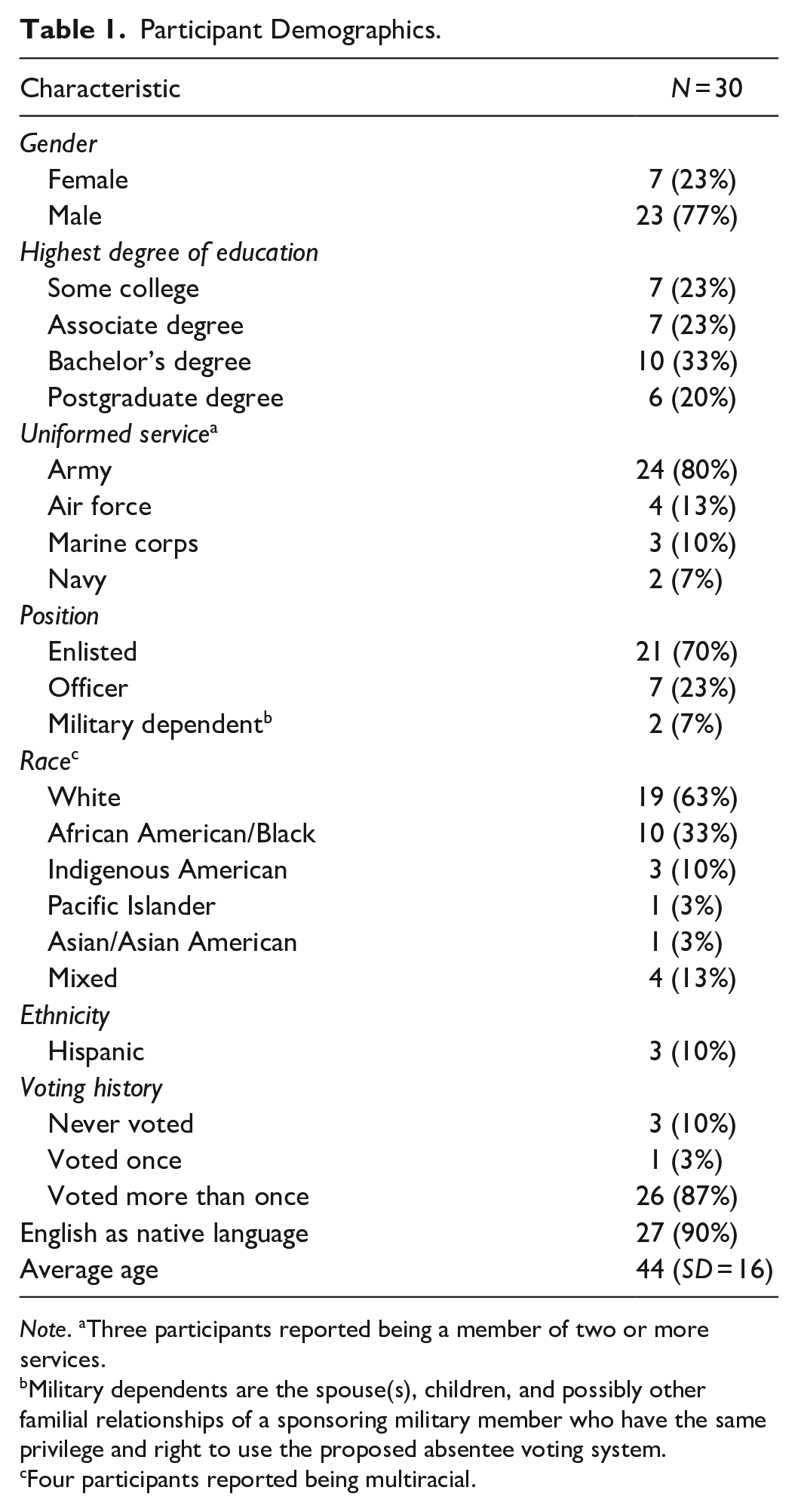

In total, 30 military members (23% female) completed the study. The mean age was 44 years (SD = 16). The majority (87%) of participants indicated that they had voted in multiple elections before, whereas one (3%) voted once and three (10%) had never voted before. Table 1 shows full participant demographics.

Table 1. Participant Demographics.

Note. (a) Three participants reported being a member of two or more services.

Military dependents are the spouse(s), children, and possibly other family members of a sponsoring military member; they have the same privilege and right to use the proposed absentee voting system.

Four participants reported being multiracial.

Design

The usability of the voting system was assessed based on the three metrics recommended by ISO 9241, part 11: effectiveness, efficiency, and satisfaction (ISO 9241-11, 2018).

(1) Effectiveness of the system was evaluated based on vote casting ability, errors, assists during task performance, and subjective workload. A participant was considered to have successfully cast a vote when they completed the voting task by submitting their ballot into a ballot scanner. Errors were measured as deviations in the voter’s ballot from the provided slate. An assist was any request for help made by the voter while voting or using the voting system. Subjective workload was measured with participants’ responses to the NASA Task Load Index (NASA-TLX; Hart & Staveland, 1988).

(2) Efficiency of the system was measured as the time taken to print a paper ballot, representing the time spent interacting with the ballot-marking device (BMD) in the mock election.

(3) User satisfaction with the system was evaluated based on participants’ subjective ratings on the System Usability Scale (SUS; Brooke, 1995) and the Adjective Rating Scale (ARS; Bangor et al., 2009).
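For readers unfamiliar with how a SUS score is derived, the following is a minimal sketch of the standard scoring rule for the ten-item questionnaire. It is offered as an illustration only: the function name `sus_score` and the input layout (a list of ten ratings in questionnaire order) are assumptions, and the source does not describe the scoring procedure actually used in this study.

```python
def sus_score(responses):
    """Return the 0-100 SUS score for one participant.

    `responses` holds the ten item ratings in questionnaire order,
    each on the 1 (strongly disagree) to 5 (strongly agree) scale.
    Hypothetical helper for illustration, not the study's own code.
    """
    if len(responses) != 10:
        raise ValueError("SUS has exactly 10 items")
    if any(r not in (1, 2, 3, 4, 5) for r in responses):
        raise ValueError("each rating must be an integer from 1 to 5")

    total = 0
    for index, rating in enumerate(responses):
        if index % 2 == 0:
            # Items 1, 3, 5, 7, 9 are positively worded: contribute rating - 1.
            total += rating - 1
        else:
            # Items 2, 4, 6, 8, 10 are negatively worded: contribute 5 - rating.
            total += 5 - rating

    # The summed contributions (0-40) are scaled to a 0-100 range.
    return total * 2.5
```

A study-level SUS value such as a sample mean would then be the average of these per-participant scores.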

Materials

Voting System

The voting system consisted of a ballot-marking device (BMD) in the form of a touch-screen tablet enclosed in a ruggedized carrying case. While voters used the digital interface to vote and review their selections, the BMD was connected to a printer to generate a paper record of the voter’s selections for security and auditing purposes. Figure 1 shows the experimental setup of the voting system.

Figure 1. Experimental Setup.

Ballot

The voting system was configured for a mock election comprising 26 races with made-up names and propositions: 19 single-candidate races, one multi-candidate race, and six yes/no propositions. Mentions of state and county were de-identified to ensure that the ballot did not represent any particular district in the U.S.

Procedure

The study began with IRB-approved informed consent. Then, participants performed a voting task, in which they used the voting system to vote for a standardized slate of candidates and propositions in a mock election. After voting on the system, participants completed a demographic form, as well as the SUS, ARS, and NASA-TLX regarding the voting system.

Results

We conducted a summative assessment of the voting system’s usability, evaluating its effectiveness, efficiency, and satisfaction. Based on this assessment, we identified areas for improvement and offered design recommendations for subsequent formative testing.

Effectiveness

Vote Casting

Twenty-three participants (77%) successfully cast a vote, whereas seven (23%) could not cast a vote without the help of an investigator. Participants who failed to cast a vote without assistance did not know what to do next after printing their ballot, or they handed their ballot to an investigator.

Assists

Ten participants (33%) asked for help during the ballot printing step, hesitating and asking an investigator whether they should actually print their ballot. Some of these participants assumed that they were done voting once they saw the review screen on the BMD. Fifteen participants (50%) asked for help locating the ballot scanner or asked what to do next before submitting their ballot into the scanner, indicating that only 50% of voters cast their vote without asking for help.

Errors

Thirteen participants (43%) made errors in following the provided slate. Specifically, seven did not follow the slate as instructed and made ≥10 wrong selections. Two erred exclusively in the multicandidate race, underscoring the necessity of UI modifications that clarify affordances for selecting multiple candidates. Finally, four made ≤2 errors outside the multicandidate race but otherwise voted correctly.
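The grouping of errors described above can be sketched as a comparison of each marked ballot against the slate. This is a hypothetical illustration, not the analysis code used in the study: the names `wrong_contests` and `classify_ballot`, the dictionary layout, and the choice to count one error per contest (rather than per individual selection) are all assumptions. The thresholds mirror the three groups reported in the text.

```python
def wrong_contests(ballot, slate):
    """Return the contests where the ballot's selections differ from the slate.

    Both arguments map a contest name to a set of selected options.
    Assumption: one deviation is counted per contest.
    """
    return {
        contest
        for contest, expected in slate.items()
        if set(ballot.get(contest, ())) != set(expected)
    }


def classify_ballot(ballot, slate, multi_contest):
    """Place a ballot into one of the error groups reported in the text."""
    wrong = wrong_contests(ballot, slate)
    if not wrong:
        return "no errors"
    if len(wrong) >= 10:
        return "10 or more wrong selections"
    if wrong == {multi_contest}:
        return "multi-candidate race only"
    if multi_contest not in wrong and len(wrong) <= 2:
        return "2 or fewer errors outside the multi-candidate race"
    # Patterns the text does not describe are left unlabeled.
    return "unclassified"


# Made-up slate: 11 single-candidate races plus one multi-candidate race.
slate = {f"race-{n}": {f"candidate-{n}"} for n in range(1, 12)}
slate["council"] = {"candidate-A", "candidate-B"}

# A voter who picked only one of the two council candidates.
ballot = dict(slate)
ballot["council"] = {"candidate-A"}
print(classify_ballot(ballot, slate, "council"))
```

Counting deviations per contest keeps the multi-candidate race from being weighted more heavily than single-candidate races; a per-selection count would be an equally defensible choice.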

Efficiency

Participants took an average of 204 s (SD = 69) to print their paper ballot. This result is comparable to the average vote casting time of 264 s using STAR-Vote, one of the first end-to-end voting systems proven to be both highly usable and secure in a summative usability assessment conducted by Acemyan et al. (2018). Note that their study involved 30 eligible voters (i.e., U.S. citizens aged 18 years or older) and a similar ballot comprising 27 races.

Satisfaction

Despite numerous errors, participants expressed high satisfaction with the voting system, as indicated by a mean SUS rating of 87.3 (SD = 14.1) and a mean ARS score of 6.0 (SD = 0.7), corresponding to “Excellent.” Perceived workload was low, with a mean NASA-TLX score of 13.6 (SD = 10.2).

Design Recommendations

Clarify Voting Steps and User Inputs

Without clear UI elements, navigating through a multi-step voting process can be disorienting for voters, particularly when multiple digital and physical components are involved. During our testing, we observed that military voters frequently sought assistance to proceed through the voting process, expressing uncertainty regarding whether to print their ballot and how to cast their vote. Introducing a progress map within the voting system that delineates critical voting steps could provide clarity around the sequence of actions and signal to the voter when they have completed their interaction with the system.
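One way such a progress map might behave is sketched below. The step labels, the function name `render_progress`, and the text-based rendering are hypothetical and are not taken from the tested system; the sketch only illustrates the idea that the interface reports completion after the paper ballot has been scanned, and not before.

```python
# Hypothetical step labels; the tested system's wording is not specified here.
STEPS = (
    "Mark your ballot",
    "Review your selections",
    "Print your paper ballot",
    "Insert your paper ballot into the scanner",
)


def render_progress(completed):
    """Return a text progress map given the number of completed steps."""
    if not 0 <= completed <= len(STEPS):
        raise ValueError("completed must be between 0 and the number of steps")

    lines = []
    for number, label in enumerate(STEPS, start=1):
        if number <= completed:
            marker = "[x]"   # step already finished
        elif number == completed + 1:
            marker = "[>]"   # step the voter should do now
        else:
            marker = "[ ]"   # step still ahead
        lines.append(f"{marker} Step {number} of {len(STEPS)}: {label}")

    # Completion is announced only after the final physical step.
    if completed == len(STEPS):
        lines.append("Your vote has been cast.")
    else:
        lines.append(f"Your vote is not cast yet. Next: {STEPS[completed]}.")
    return "\n".join(lines)


# What a voter would see immediately after printing the ballot.
print(render_progress(3))
```

Keeping the completion message tied to the last step is the point of the sketch: printing the ballot advances the map but does not end it.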

Provide Timely Confirmation

Confirmation messages that appear too early in a process run the risk of premature completion errors, leading to failure to cast an official vote. During testing, military voters frequently requested outside assistance to confirm whether they should proceed with the ballot printing step. This uncertainty around ballot printing stemmed from their assumption that the voting process was complete upon reaching the review screen displayed on the BMD.

In the current voting system, the review screen provides voters with the option to navigate to any contest to change their selection(s), accompanied by instructions to “Confirm your votes are correct,” alongside a button labeled “I’m Ready to Print My Ballot.” However, terms like “Confirm” and “I’m Ready” might inadvertently imply to voters that they have completed the voting process. This is a particular vulnerability for electronic voting systems that digitally record and count votes while also generating paper ballots as physical evidence. It is crucial to ensure that prior subtasks do not overshadow the critical step of physically submitting the paper ballot, as the vote is not officially cast until this step.

To prevent premature assumptions of completion, the language used in intermediate steps should be modified to prompt a response or input from the voter, guiding them forward. For example, the instructions that command confirmation could be posed instead as the question, “Are your votes correct?” with options to respond “Yes” or “No.”

On the other hand, lack of confirmation may leave voters uncertain about whether they have completed the voting process correctly. It is crucial for the voting system to provide clear confirmation signaling the end of voting, specifically when the voter is ready to cast their official ballot.

Discussion

We explore several key areas that emerged from our research on the usability and effectiveness of the proposed absentee voting system for military personnel, including the impact of political partisanship on task errors, the importance of design that supports voter independence, and the critical role of voter trust in the system. Each section highlights the challenges and potential solutions to improve future testing as well as the voting experience for military voters.

Task Errors Influenced by Political Partisanship

Voters made numerous errors while marking the ballot; however, some of these errors may have been due to voters’ inclination to vote based on political partisanship rather than adhering to the provided slate. Although candidate names were fictional, the representation of political parties in this testing reflected that of a real election, including the Republican, Democratic, Libertarian, and Independent parties. Candidates and proposition motions were selected randomly, resulting in a slate of candidates with mixed party affiliations.

While some of the seven who made 10 or more incorrect selections appeared to vote randomly without considering party affiliation, two participants deviated from the slate to avoid selecting Republican candidates, whereas another voter avoided selecting Democratic candidates.

Among the four participants who made two or fewer errors outside the multicandidate race, three deviated from the slate in the first race to vote for the Republican presidential candidate instead. Of all the candidates not listed on the slate, this candidate was the one most often selected in error. Therefore, only one of these trials could be categorized as a true “slip,” or an unintentional error made while following the slate.

Future testing should replace real political party names with neutral, fictional party names to prevent voting selections influenced by political preferences rather than adhering to task instructions, thus facilitating the interpretation of errors. Otherwise, task instructions should be revised and clarified to prevent participants from voting randomly.

Supporting Independence with Good Design

The effectiveness of a polling place’s personnel and resources can influence voters’ perceptions of voting system usability more significantly than the specific technology used, as demonstrated in a study comparing paper and digital ballots during Election Day (Stein et al., 2008). However, in places where this proposed absentee voting system will likely be deployed (overseas military bases and forward operating bases), external assistance may be absent and voters will have to depend solely on the voting technology. Our testing underscores the need for clear instructions and overall reliability, ensuring that voters can navigate the system independently.

Measuring Voter Trust

Voters need to trust the voting system to use it effectively; without trust, they may hesitate to engage with the system or may not fully utilize its features such as ballot verification (Acemyan & Kortum, 2012; Oostveen & Van den Besselaar, 2004). Conversely, over-trusting voters may fail to verify their printed ballot and overlook anomalies or errors (Budurushi et al., 2016). The ability to independently verify the technology’s accuracy is vital in the proposed absentee voting system for military personnel. However, our testing indicates that military voters often make errors and fail to thoroughly check their ballots—if they check them at all—consistent with prior research (Acemyan et al., 2013; Bernhard et al., 2020; Kortum et al., 2020).

While the failure to verify votes suggests a need for clearer instructions on ballot verification, it is unclear whether this failure stems from a lack of trust or over-trust in the voting system, which requires further investigation. Previous studies have examined the trustworthiness of voting systems based on their features, asserting that cryptographic methods and other voting schemes achieve trustworthiness. However, these studies have not measured trust as a psychological construct among voters (Ansper et al., 2009; Blanchard & Selker, 2020; Paul & Tanenbaum, 2009; Randell & Ryan, 2006; Sameon & Ramli, 2012).

To understand how voter trust is mediated by the voting technology, user testing and psychometrically validated instruments for measuring trust are essential. For this purpose, we will include the Trust in Voting Systems measure (TVS; Acemyan et al., 2022) in future assessments of the voting system. The TVS allows us to explore how voters’ perceived trust contributes to voting system effectiveness, in addition to considering voting errors that go unchecked and voters’ confidence in their actions while using the system.

Conclusion

Despite high user satisfaction with the voting system, military voters often depended on external assistance to cast their votes, reflecting how voters often depend on poll workers or other resources typically available at traditional polling places. This evaluation of a proposed absentee voting system highlights opportunities to enhance clarity in design and instructions to ensure that voters are guided to cast an official vote while seamlessly interacting with a combination of digital and physical components, without external assistance. This evaluation reveals strategies to improve the assessment of voting system use and establishes benchmarks for evaluating the effectiveness of future designs for voting systems that are to be used by military personnel in the field.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported in part by a grant from the Defense Advanced Research Projects Agency (DARPA) via VotingWorks.