Abstract

Generative AI tools (e.g., HeyGen, Adobe Firefly, Invideo AI) now enable marketers to translate videos not only by converting language but also by adjusting speech style, voice, and lip movements. Following this advancement, this exploratory study examined differences in perceived translation quality between AI-translated and human-translated marketing videos in international contexts. Two between-subjects experiments were conducted, involving English-to-Indonesian translation (Study 1) and Indonesian-to-English translation (Study 2). AI translation consistently yielded lower perceived naturality and accent neutrality than human translation. For language comprehension, AI performed worse in Study 1 but better in Study 2, indicating that translation direction matters. However, despite the perceptual differences, the two translation methods did not affect customer engagement intention. This study offers early evidence on how consumers evaluate AI video translation and provides 12 directions for future research.

In the context of international marketing, videos are difficult to adapt across languages and cultures. Unlike text translation, which can be executed with relative ease, video translation often requires brands to create new voiceovers, dub existing footage, or reshoot scenes entirely. These processes are time-consuming and costly. For example, when a global brand adapts a short-form social media video for multiple markets, the process typically requires sourcing new talent for each target language, resynchronizing the audio, and reediting the footage to preserve timing, tone, and message delivery. Even for 30-second video content, these repeated production cycles accumulate substantial costs and delays, making video translation a nontrivial, operationally demanding task in social media content work for international marketing.

Generative artificial intelligence (AI) that can translate videos (e.g., HeyGen, Adobe Firefly, Invideo AI) may help solve this problem. These AI systems enable marketers to translate videos not only by changing the spoken language but also by modifying the voice, speech style, and lip synchronization to match the target language. With this capability, generative AI promises more efficient and scalable video translation, which may eventually improve brands’ business performance. For instance, McKinsey & Company (2023) estimates that generative AI's capabilities, including the capability to automatically translate content, could contribute $2.6 trillion to $4.4 trillion in annual financial benefits for brands. However, despite this outlook, a critical question remains: What do consumers think of AI-translated video content?

While this practical concern is increasingly relevant for global marketers, academic research has yet to provide clear answers. In international marketing literature, most language-related studies focus on code-switching (Lin and Wang 2016), localization strategies (Wahid et al. 2023), or the persuasive effects of using a local versus a foreign language (Krishna and Ahluwalia 2008). These studies provide valuable insights into how language choices shape consumer perception. However, they do not examine whether the method of translation (i.e., whether a human or a machine translates the content) affects consumer response. At the same time, machine translation literature primarily analyzes traditional machine translation systems (e.g., rule-based and statistical models) (Sitender and Bawa 2021; Zbib et al. 2012). While these conventional techniques have long offered cost-effective solutions for translating text, they typically operate within fixed language pairs and lack flexibility in complex media formats. In contrast, generative AI systems are trained on large-scale multilingual datasets, enabling them to handle diverse language structures and contexts more fluidly (Brown et al. 2020). Some work has begun to explore more advanced machine translation models, such as neural machine translation (Dabre, Chu, and Kunchukuttan 2021) and generative AI (Brown et al. 2020; Fu and Liu 2024). Nonetheless, the focus has been mostly on textual translation, not videos. Moreover, those studies have primarily positioned themselves within the technical, legal, or education domains. To the best of our knowledge, no scholarly work has investigated AI-generated video translation within the area of marketing. This oversight matters because video translation is becoming increasingly automated, yet little is known about how consumers perceive AI-translated video content.
Without empirical evidence, international marketers lack guidance on whether to implement generative AI or continue relying on humans for video translation. Therefore, there is a need to offer initial insight into how AI video translation is received in comparison to human translation in the international marketing context. Driven by these theoretical gaps and practical needs, this exploratory study aims to examine consumer perceived translation quality of AI-translated (vs. human-translated) videos in international markets.

Measures of Translation Effectiveness

To understand consumer evaluation of AI-translated versus human-translated marketing videos, we focus on four outcome variables: language comprehension, naturality, accent neutrality, and customer engagement intention. The selection of outcome variables is grounded in established evaluative practices across three domains: machine translation (language comprehension; Scarton and Specia 2016), AI-generated content (naturality; Reisenbichler et al. 2022), and marketing communication (accent neutrality and customer engagement intention; Laroche et al. 2022; Wahid et al. 2023).

We first measure language comprehension, which captures whether the message is clear and understandable in the target language. In machine translation research, comprehension is a key benchmark of success, as it determines whether the content fulfills its communicative intent (Scarton and Specia 2016). Then, following research on AI-generated content, we assess the naturality of AI-translated video content. In this research stream, naturality refers to the extent to which content appears realistic and organically produced, rather than synthetic or mechanically rendered (Reisenbichler et al. 2022). Assessing naturality is important because it captures the viewer's immediate sensory judgment before any evaluative interpretation occurs. Finally, aligning with research on marketing communication (Laroche et al. 2022; Wahid et al. 2023), we examine accent neutrality and customer engagement intention. Accent neutrality describes the extent to which the speaker in a marketing message sounds native. Evaluating accent neutrality is necessary because nonnative or foreign accents may reduce the effectiveness of marketing messages (Laroche et al. 2022). Customer engagement denotes “a customer's voluntary resource contribution to a firm's marketing function, going beyond financial patronage” (Harmeling et al. 2017, p. 316). Marketing scholars often operationalize customer engagement as behavioral intention to engage with content, such as the intention to like, share, and comment on content (e.g., Alhabash et al. 2015). This construct is particularly crucial in digital and international marketing contexts because customer engagement often serves as a proxy for campaign success (Wahid et al. 2023).
Collectively, these four outcome variables provide a parsimonious yet comprehensive framework that captures linguistic clarity, perceptual realism, and behavioral relevance, enabling a robust evaluation of how consumers perceive AI-translated versus human-translated marketing videos.

Study 1: Video Translation from English to Indonesian

In Study 1, we conducted an online experiment with a one-factor, two-level (conditions: human vs. AI) between-subjects design. This experiment featured a fictitious U.K.-based mobile game company distributing a social media video to its target audience in Indonesia. To recruit participants, two of the authors (who are Indonesians) distributed the experiment link via social media platforms to their personal and professional networks in Indonesia.

For the human condition, we recruited an Indonesian man to speak Indonesian (see Video Appendix 1). For the AI condition, we hired an English man to speak in the original English version of the video (the speakers’ characteristics in the human and AI conditions did not differ significantly; see Web Appendix A, where we explain the stimulus validation tests for Study 1 and Study 2). We then used HeyGen to translate this English-speaking video into Indonesian. HeyGen is a generative AI tool that produces high-quality videos, and one of its capabilities is video translation. Operating this function is simple: Users upload their videos to HeyGen and choose the target language. In our case, we imported the English-language video of our stimulus into HeyGen and selected Indonesian as the target language. Within a short time, HeyGen produced the requested video translation. Visually, the video is a deepfake, and thus the man's lips move as if he were indeed speaking Indonesian (see Video Appendix 2 for the AI condition). The scripts for each video in Study 1 and Study 2 are in Web Appendix B. All the videos (high-resolution versions) and datasets from Study 1 and Study 2 are available on OSF (https://doi.org/10.17605/OSF.IO/TGAUV).

At the beginning of the online experiment, we asked the participants to provide their age and gender. Then, the participants indicated their Indonesian language proficiency, attitude toward mobile games, and attitude toward rewarded challenge. After answering these questions, the participants were randomly assigned to one of our experimental stimuli. Based on the stimulus they saw, the participants then reported their perceived language comprehension (Lin and Wang 2016), naturality (Reisenbichler et al. 2022), accent neutrality, and customer engagement intention (Alhabash et al. 2015) (Web Appendix C documents all the measures in Study 1 and Study 2).

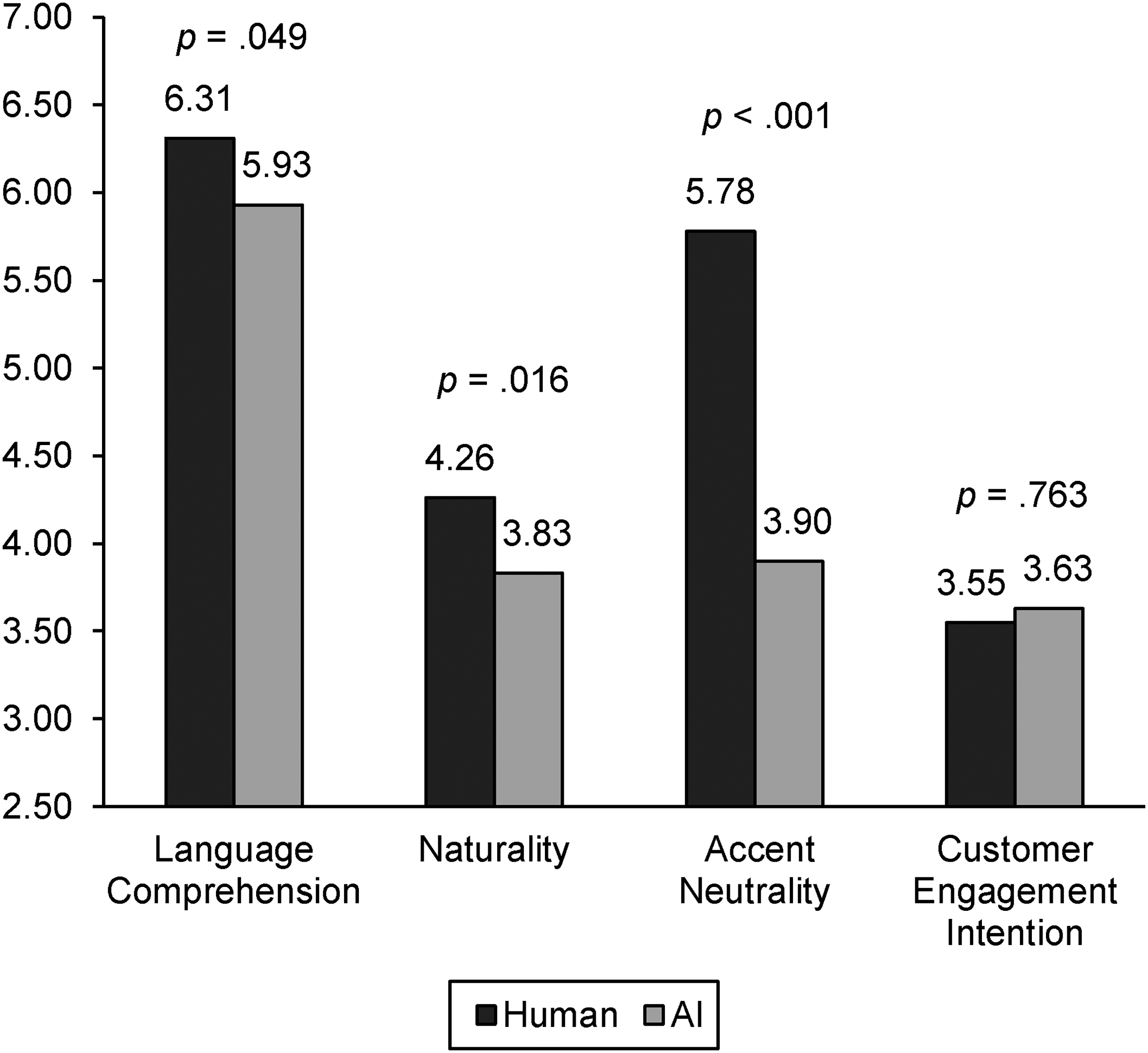

After cleaning the data, we had a total of 140 participants (Nhuman = 76, NAI = 64; 94 women, 46 men; Mage = 22.77 years, SD = 4.82). We executed a series of ANCOVAs (with five covariates: age, gender, Indonesian proficiency, attitude toward mobile games, and attitude toward rewarded challenge) to unveil the effects of the translation conditions (human vs. AI) on language comprehension (α = .81), naturality (α = .88), accent neutrality (α = .73), and customer engagement intention (α = .92). The results showed significant effects on language comprehension (F(1, 133) = 3.95,

Mean Comparisons in Study 1.
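The ANCOVA logic described for Study 1 can be sketched as a nested-model comparison: fit the outcome on the covariates alone, fit it again with the condition indicator added, and test the drop in residual variance. The source does not state which statistical software was used, so the following is only an illustrative sketch in plain Python. The function names (`solve_linear_system`, `residual_ss`, `ancova_f`) and the toy data are hypothetical and are not taken from the study's dataset. Under this setup, the error degrees of freedom equal N minus the number of conditions minus the number of covariates, which for Study 1 gives 140 − 2 − 5 = 133, in line with the reported F(1, 133).

```python
def solve_linear_system(a, b):
    """Solve a @ x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("design matrix is rank deficient")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                for c in range(col, n + 1):
                    m[r][c] -= factor * m[col][c]
    return [m[i][n] / m[i][i] for i in range(n)]


def residual_ss(x, y):
    """Residual sum of squares from an OLS fit of y on the columns of x."""
    p = len(x[0])
    xtx = [[sum(row[i] * row[j] for row in x) for j in range(p)] for i in range(p)]
    xty = [sum(row[i] * yi for row, yi in zip(x, y)) for i in range(p)]
    beta = solve_linear_system(xtx, xty)
    fitted = [sum(b * v for b, v in zip(beta, row)) for row in x]
    return sum((yi - fi) ** 2 for yi, fi in zip(y, fitted))


def ancova_f(y, condition, covariates):
    """F test for a two-level condition (coded 0/1), adjusting for covariates.

    covariates holds one row of covariate values per participant.
    Returns (F, df_effect, df_error).
    """
    reduced = [[1.0] + [float(v) for v in row] for row in covariates]
    full = [row + [float(c)] for row, c in zip(reduced, condition)]
    ss_reduced = residual_ss(reduced, y)
    ss_full = residual_ss(full, y)
    df_error = len(y) - len(full[0])  # N - intercept - covariates - condition
    f_stat = (ss_reduced - ss_full) / (ss_full / df_error)
    return f_stat, 1, df_error


# Toy data (invented for illustration): 12 participants, one covariate.
xs = [1, 2, 3, 4, 5, 6] * 2
condition = [0] * 6 + [1] * 6  # 0 = human, 1 = AI (hypothetical coding)
noise = [0.1, -0.1, 0.2, -0.2, 0.1, -0.1] * 2
y = [2 + 0.5 * x + 1.0 * c + e for x, c, e in zip(xs, condition, noise)]
covariates = [[x] for x in xs]

f_stat, df_effect, df_error = ancova_f(y, condition, covariates)
print(f"F({df_effect}, {df_error}) = {f_stat:.2f}")
```

In practice, a dedicated statistics package would also report adjusted means, effect sizes, and p-values; the sketch only shows where the F ratio and its degrees of freedom come from.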

Study 2: Video Translation from Indonesian to English

In Study 2, we ran another online experiment with a one-factor, two-level (conditions: human vs. AI) between-subjects design on Prolific, involving consumers in the United States and United Kingdom (independent-samples t-tests confirmed that responses did not differ significantly between U.S. and U.K. participants on language comprehension, naturality, accent neutrality, or customer engagement intention with all

As influencer marketing research (Han and Balabanis 2024) indicates, a person's characteristics (e.g., appearance, persuasiveness) in digital content may influence consumers’ evaluations. Thus, we ran two tests (i.e., a post hoc test and a pretest) to ensure that the speakers’ characteristics in Study 1 and Study 2 did not differ significantly. We found no statistically significant differences among the speakers in Study 1 and Study 2 in terms of attractiveness and persuasiveness (see the rationales and procedures for these tests in Web Appendix A).

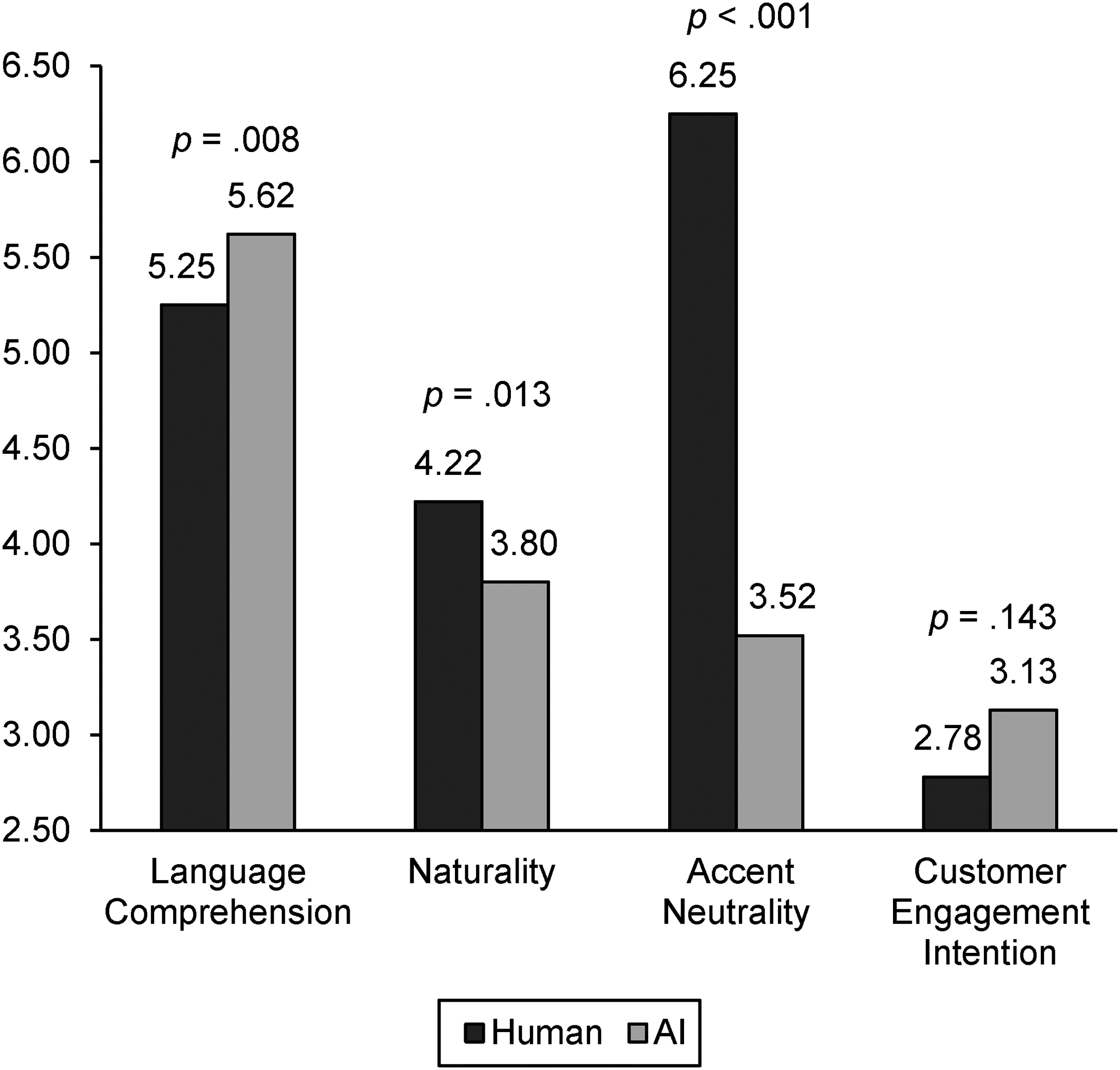

The procedures and measures were mostly equivalent to those in Study 1, except that we controlled for English proficiency rather than Indonesian proficiency. The measures for Study 2 are available in Web Appendix C. After data cleaning, the final sample consisted of 161 participants (Nhuman = 77, NAI = 84; NU.S. = 81, NU.K. = 80; 85 women, 76 men; Mage = 44.43 years, SD = 12.28). We ran a series of ANCOVAs (controlling for country, age, gender, English proficiency, attitude toward mobile games, and attitude toward rewarded challenge) to measure the effects of the translation conditions (human vs. AI) on language comprehension (α = .87), naturality (α = .90), accent neutrality (α = .95), and customer engagement intention (α = .93). The analyses revealed significant effects on language comprehension (F(1, 153) = 7.26,

Mean Comparisons in Study 2.
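Both studies report a reliability coefficient (α) for each multi-item measure. Assuming that α denotes Cronbach's alpha, which is the usual reading but is not spelled out in the text above, the coefficient can be computed from item-level responses as sketched below. The function name `cronbach_alpha` and the toy responses are hypothetical and are not drawn from the study's data.

```python
from statistics import variance


def cronbach_alpha(items):
    """Cronbach's alpha for a multi-item scale.

    items holds one list of scores per scale item, aligned by respondent.
    alpha = k / (k - 1) * (1 - sum of item variances / variance of total scores)
    """
    k = len(items)
    if k < 2:
        raise ValueError("alpha needs at least two items")
    totals = [sum(scores) for scores in zip(*items)]  # per-respondent sum
    item_variance_sum = sum(variance(scores) for scores in items)
    return (k / (k - 1)) * (1 - item_variance_sum / variance(totals))


# Toy responses (invented): three items answered by four respondents.
toy_items = [
    [2.0, 4.0, 3.0, 5.0],
    [3.0, 4.0, 3.0, 5.0],
    [2.0, 5.0, 4.0, 4.0],
]
alpha = cronbach_alpha(toy_items)
print(f"alpha = {alpha:.3f}")
```

Higher values indicate that the items of a scale vary together across respondents, which is why the coefficient is reported alongside each measure before the items are averaged into a single score.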

Discussion and Implications

Across both studies, we provide initial empirical evidence for how consumers currently evaluate AI video translation relative to human translation. In Study 1 (English → Indonesian), AI video translation yielded lower language comprehension, lower naturality, and lower accent neutrality than human translation. In Study 2 (Indonesian → English), this pattern partially changed: AI video translation yielded higher language comprehension, but still resulted in lower naturality and accent neutrality than human translation. A plausible explanation for this difference is that English has much richer representation in current AI training corpora (Brown et al. 2020). As a result, AI systems may generate more semantically accurate speech when translating into English than when translating into Indonesian. However, despite the differences in language comprehension, naturality, and accent neutrality, both studies consistently showed no difference in customer engagement intention between AI and human translation.

Our research offers preliminary practical implications for marketers. The findings indicate that AI video translation does not uniformly perform worse (or better) than human translation. Rather, certain attributes appear more sensitive than others. AI translation yielded lower naturality and accent neutrality across both translation directions, suggesting that marketers should pay particular attention to perceptual cues in AI-generated delivery. However, AI translation yielded higher perceived comprehension in Study 2 (Indonesian → English), implying that AI may already be competitive, or even advantageous, in some translation directions. At the same time, these perceptual differences did not translate into differences in customer engagement intention in either study, suggesting that lower perceptual quality does not necessarily undermine downstream behavioral intention. Taken together, these findings suggest that AI video translation may offer an emerging option for global content adaptation, while careful monitoring of perceptual quality remains warranted.

Theoretically, our research offers two contributions to the literature on international marketing and machine translation. First, we extend the international marketing literature on language by moving beyond studies of code-switching (Lin and Wang 2016), localization strategies (Wahid et al. 2023), or persuasion (Krishna and Ahluwalia 2008) to examine how translation modality (i.e., whether content is translated by humans or AI) shapes consumer perceptions. Our findings suggest that the translation method is not a neutral conduit of meaning but an influential factor in how consumers perceive marketing messages. This opens new theoretical ground for examining how language-related automation tools influence message delivery. Second, we contribute to the body of research on machine translation by shifting the empirical focus from technical or textual domains to marketing communication in audiovisual formats. While prior studies often evaluate translation accuracy or linguistic fidelity (Brown et al. 2020; Dabre, Chu, and Kunchukuttan 2021; Fu and Liu 2024; Scarton and Specia 2016; Sitender and Bawa 2021; Zbib et al. 2012), our work highlights perceptual qualities, namely naturality and accent neutrality, as key evaluative dimensions in consumer-facing content. In doing so, we position translation quality as a multidimensional construct that must account not only for comprehension and accuracy but also for sensory cues, particularly in multimedia settings.

Limitations and Future Research Directions

We note that the findings should be interpreted conservatively. The relative performance of AI in our two studies reflects one specific AI translation tool (i.e., HeyGen), one specific translation pair (Indonesian–English), and one specific point in time. Because generative AI is improving quickly, newer systems may perform differently. In addition, each condition in our research relied on one video stimulus, and speaker-level characteristics (e.g., accent strength, fluency, delivery style) may bias perceptions. These factors limit the generalizability of the present findings.

These limitations point to several immediate directions for future research. First, future work may compare outcomes across different AI translation tools and track changes as this technology advances. Second, future inquiries may explore other languages (e.g., Finnish, Hungarian) to test whether our results still hold. The quality of AI-generated translation in other languages, and how consumers perceive that quality, may differ from the findings of the current study because language systems vary in their grammatical structures, phonetic complexity, and representation in AI training datasets (Brown et al. 2020). For example, widely spoken languages such as English typically benefit from abundant training data, which improves translation quality, whereas less-spoken languages often suffer from limited data, leading to lower accuracy. Such systematic differences across language families may yield distinct consumer perceptions of AI-translated videos. Third, future studies should incorporate multiple speakers and video formats to capture a broader range of translation scenarios.

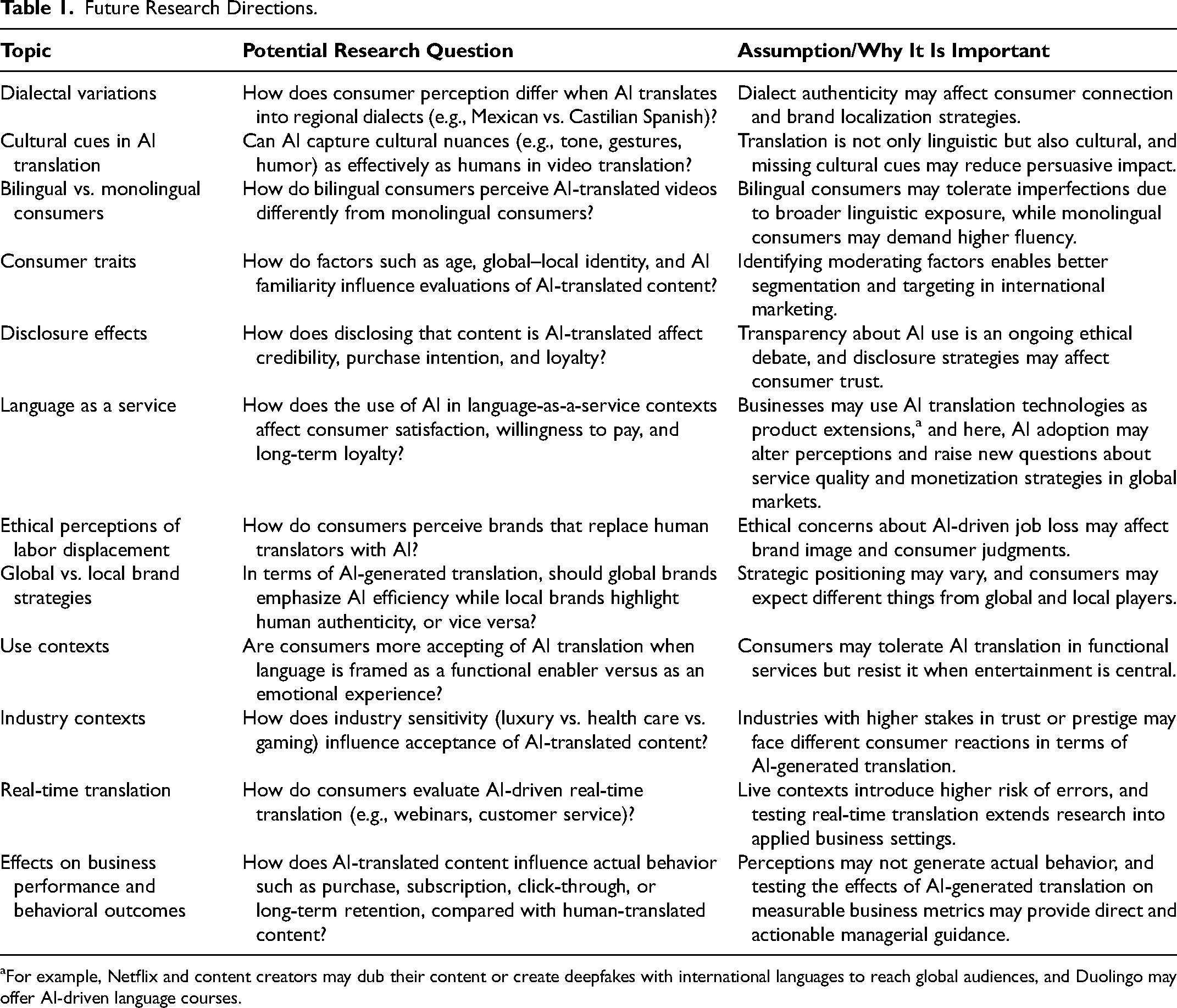

Despite the limitations, we view our research as a foray into understanding the trade-offs international marketers face when choosing between human-generated and AI-enabled translation. As translation technologies continue to evolve and adoption accelerates, this domain presents a timely and fertile space for international marketing research. Building on this, we propose 12 research avenues (see Table 1) involving topics such as disclosure, ethics, platform differences, and real-time translation, across multiple translation modalities (e.g., video, audio, text) supported by contemporary generative AI systems.

Future Research Directions.

For example, Netflix and content creators may dub their content or create deepfakes with international languages to reach global audiences, and Duolingo may offer AI-driven language courses.

Supplemental Material

sj-pdf-1-jig-10.1177_1069031X251404843 - Supplemental material for Generative AI for Video Translation: Consumer Evaluation in International Markets

Supplemental material, sj-pdf-1-jig-10.1177_1069031X251404843 for Generative AI for Video Translation: Consumer Evaluation in International Markets by Risqo Wahid, Jiseon Han, Nizar Fauzan, and Heikki Karjaluoto in Journal of International Marketing

Footnotes

Acknowledgments

The authors thank the

Editor: Ayşegül Özsomer

Associate Editor: Timo Mandler

Consent to Participate

Informed consent was obtained from all individual participants involved in the study.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research is funded by Marcus Wallenberg Foundation.
