Abstract

Internalizing and externalizing problems are common targets for school mental health screening. Prior research supports the interpretation of scores from the Youth Internalizing Problems Screener (YIPS) and the Youth Externalizing Problems Screener (YEPS), which were developed separately yet intended as companion measures. We extended previous work by evaluating the psychometric defensibility of integrated measurement models that combined items from the YIPS and YEPS into a unified screener (YIEPS). Specifically, we evaluated (a) a unidimensional model, (b) a correlated-factors model with two latent variables representing internalizing and externalizing problems, and (c) a bifactor model with two specific factors—internalizing and externalizing—and a general factor representing global mental health problems. We then tested the reliabilities of the several factors from these models and the informational value added of the competing models. Results indicated the bifactor YIEPS model had the best data-model fit for representing the unified screener. However, exploratory analyses suggested an alternative bifactor model with three specific factors—parsing attention problems from externalizing and internalizing content—might be an even better fit for the data. Reliability findings suggested the general factor—representing global mental health problems—was the most psychometrically defensible. Future directions for research and practice are discussed.

Earlier age of onset coupled with rising prevalence rates of emotional and behavioral problems in school-age youth warrants the use of efficacious school-based screening measures aimed at identifying and supporting those at risk of more chronic concerns (Allen et al., 2019; Danielson et al., 2021). It is estimated that 20% of school-age children and adolescents suffer impairment resulting from a mental health disorder each year (Merikangas et al., 2011; Perou et al., 2013). Youth spend a significant amount of time learning and socializing within schools throughout the formative years of development, and mental health problems have been shown to contribute to a host of undesirable outcomes in this setting, including early school attrition, impoverished interpersonal relations, attenuated academic performance, difficulties staying on task and completing work, and poor school attendance (Law et al., 2017).

Despite concerning prevalence rates of mental health problems among youth and the clear need for more and earlier intervention, school-age children and adolescents remain underserved and disorders within this demographic remain underidentified (Allen et al., 2019). In a recent study surveying 454 public schools, 81.1% of schools reported conducting universal academic screening procedures (e.g., targeting reading and math competencies), whereas only 12.6% of schools were conjointly conducting schoolwide mental health screenings (Bruhn et al., 2014). A follow-up study found that as many as 98.8% of schools were not conducting any form of preventive universal mental health screening (Wood & McDaniel, 2020). Such low levels of school-based screening are problematic when considering that approximately 80% of mental health disorders originate in childhood, and that fewer than half of children with such problems actually get diagnosed or treated, with fewer than 25% of those identified receiving intervention within a school setting (Goodman-Scott et al., 2019).

Schools are an ideal setting for facilitating early identification and intervention of mental health problems because of their broad scope of contact with youth across demographics, allowing for more accessible and equitable service delivery (Gaias et al., 2022). Establishing ways by which behavioral and emotional screening measures can be feasibly integrated within schools is therefore of vital importance to addressing the public health crisis and disparities related to youth mental health problems. Yet, however well-intentioned or motivated educators and school mental health professionals may be, there are a variety of implementation challenges that make it difficult for schools to adopt and sustain evidence-based mental health screening initiatives (Gaias et al., 2021). Given behavioral and emotional problems can manifest in a variety of constellations and conditions, the first challenge schools typically encounter is the decision regarding which mental health targets are the most important to screen for (Dowdy et al., 2010). Although there is far from unanimous agreement on this point, one widely adopted approach is to screen for risk relevant to the most common yet general classes of youth mental health concerns: externalizing (or behavioral) and internalizing (or emotional) problems.

Internalizing and Externalizing Problems

Externalizing (or behavioral) problems are characterized by excessive and disruptive behaviors directed toward the social environment (Forns et al., 2011). These behaviors include physical aggression, disobeying rules, and destruction of property. There are two major categories of externalizing problems: inattention/hyperactivity-impulsivity and conduct problems/oppositional defiance. Symptoms of inattention, hyperactivity, and impulsivity are usually diagnosed as attention-deficit/hyperactivity disorder, whereas symptoms of aggressive behaviors and conduct problems are usually diagnosed as either conduct disorder or oppositional defiant disorder (Kimonis et al., 2019). Research indicates that the prevalence rate for externalizing problems ranges from 7% to 10% in children and adolescents, with a higher prevalence rate in males than females (Samek & Hicks, 2014). There is also significant concern regarding the effect of externalizing behaviors on youths’ social life, academic performance, and risk related to engagement in criminal activities and recidivism (e.g., Kalu et al., 2020; Kremer et al., 2016). Students with externalizing behavior problems tend to drop out of school earlier and have a diminished chance of completing a high-school education (Esch et al., 2014; McLeod & Kaiser, 2004). Furthermore, among adolescents in the juvenile justice system who have mental health problems, externalizing behavior disorders are the most common presentation (Thompson & Morris, 2016).

Internalizing (or emotional) problems, unlike externalizing symptoms, are often covert and therefore more difficult to identify (Forns et al., 2011). Internalizing disorders often involve inward-focused symptoms and typically fall within the categories of depressive and anxiety-spectrum disorders (Hartman et al., 2017). Internalizing problems represent the most common mental health disorders among children and adolescents and are associated with a host of negative outcomes across various life domains (Merikangas et al., 2011; Wergeland et al., 2021). Undesirable outcomes that have been reported to co-occur with internalizing problems include strains in social adjustment and functioning, attenuated academic achievement, social isolation, decreased self-esteem, suicidal ideation and behavior, substance misuse and abuse, truancy, and absenteeism (Fornander & Kearney, 2020; Melkevik et al., 2016).

Students experiencing internalizing problems report frequent experiences of rumination, withdrawal, sadness, loneliness, or anxiety (Ehrenreich & Underwood, 2016). In contrast to externalizing concerns, however, students exhibiting internalizing problems have increased risk of underidentification and underdiagnosis, as internalizing symptoms do not attract the same level of social attention as that yielded by externalizing behaviors (Allen et al., 2019; Hartman et al., 2017). Increased difficulty in detection and diagnosis should not preclude the necessity of providing proper screening, identification, and data-driven intervention, as youth with internalizing problems may otherwise “fly under the radar” or “suffer in silence.” This discrepancy in identification and intervention trends among youth exhibiting mental health concerns has come to be known as the squeaky wheel phenomenon—referencing the idiom, “it’s the squeaky wheel that gets the grease”—with research showing that students with internalizing symptoms plus average academic performance are no more likely to receive school-based supports than are students who are nonsymptomatic (Bradshaw et al., 2008).

School-Based Screening

School-based screening can play a pivotal role in identifying students exhibiting externalizing, internalizing, and co-occurring types of mental health problems. As mentioned above, there are many perceived and actual barriers impeding the implementation of effective school-wide screening initiatives (cf. Gaias et al., 2021). Perceptions that screenings are excessive in cost, difficult to administer, and overly time-consuming are common concerns from educators and school administrators (Bruhn et al., 2014; Dever et al., 2012). For example, although both the BASC-3 Behavioral and Emotional Screening System (BESS; DiStefano et al., 2017) and the Systematic Screening for Behavior Disorders (SSBD; Erickson & Gresham, 2019) are widely used and evidence-based measures of both internalizing and externalizing problems, neither is free or publicly available. Moreover, although the Screen for Child Anxiety Related Emotional Disorders (SCARED; Kragh et al., 2019) and the Revised Children’s Anxiety and Depression Scale (RCADS; Chorpita et al., 2005) are widely used, evidence-based, and freely available to the public, both measure only internalizing concerns; neither accounts for externalizing problems. As yet another example, the Social, Academic, and Emotional Behavior Risk Screener (SAEBRS; von der Embse et al., 2016) is a viable, evidence-based option for school-based screening, but it is both trademarked and developed from an alternative theoretical framework (i.e., academic enablers theory) that prevents straightforward interpretation of its scores as representing internalizing and externalizing problems per se. Thus, the present practice landscape is characterized by few evidence-based measures that effectively tap into externalizing and internalizing symptoms while also minimizing financial costs and remaining highly usable (i.e., being affordable and brief, with straightforward administration and scoring procedures).

Some screeners that do meet all of the aforementioned considerations, namely (a) gauging both internalizing and externalizing problems, (b) being free or low-cost, and (c) being highly usable, include the Strengths and Difficulties Questionnaire (SDQ; Goodman, 1997) and the Student Risk Screening Scale–IE (SRSS-IE; Lane et al., 2014). Combining the Student Internalizing Behavior Screener (SIBS; Cook et al., 2011) with the Student Externalizing Behavior Screener (SEBS; Hartman et al., 2017) or the Youth Internalizing Problems Screener (YIPS; Renshaw & Cook, 2016) with the Youth Externalizing Problems Screener (YEPS; Renshaw & Cook, 2019) would also meet these considerations. Although the SDQ, SRSS-IE, SIBS/SEBS, and YIPS/YEPS could each be considered psychometrically defensible for screening externalizing and internalizing problems, their available validity evidence is not commensurate. First, there appears to be an abundance of validity evidence favoring interpretation and use of scores derived from the SDQ (e.g., Kersten et al., 2016) and SRSS-IE (e.g., Kilgus, Eklund, et al., 2018), whereas there are only a few studies supporting interpretation and use of scores from the SIBS/SEBS and YIPS/YEPS systems (i.e., those cited earlier). Second, although the multidimensionality of the SDQ and SRSS-IE has been confirmed within integrated measurement models (yielding distinct factors representing internalizing and externalizing problems), this type of structural validity evidence has not yet been produced for the SIBS/SEBS and YIPS/YEPS systems. Thus, there are limited options for schools seeking free, publicly available, evidence-based measures that can truly offer an integrated screening of both internalizing and externalizing problems. Such limited options are especially problematic for underresourced schools supporting marginalized or minoritized student populations, which do not have the funds or professionals available to implement the most widely used yet financially costly screening systems (cf. Gaias et al., 2021).

The Present Study

Given the background sketched above, the overarching purpose of the present study was to advance the quality of validity evidence for one of these promising yet underdeveloped school mental health screening systems for gauging both internalizing and externalizing problems. Specifically, we evaluated the psychometric defensibility of an integrated measurement model for the YIPS and YEPS system (hereafter referred to as the YIEPS) as a unified screener for both externalizing and internalizing problems. Integrating these measurement models also allows for the evaluation of a global mental health problems construct, indicated by both internalizing and externalizing problems. We accomplished our aim by using a series of confirmatory factor analyses (CFAs) to examine different integrated YIEPS models, including (a) a unidimensional model, (b) a correlated two-factors model, and (c) a bifactor model composed of specific factors and a general factor. We anticipated the bifactor model would be the best representation of the YIEPS, given that bifactor models impose few constraints (Bornovalova et al., 2020), and that it would indicate a psychometrically defensible global mental health problems factor. We also expected results would allow for greater confidence in the interpretability of scores derived from the YIEPS screening system, as bifactor models allow for calculating the unique reliability of specific versus general factors within the measurement model (Rodriguez et al., 2016).

Method

Participants

Data were collected from 1,072 adolescent student participants in October 2020. Participants were enrolled at selected U.S. high schools partnering with the Character Lab Research Network (CLRN; https://characterlab.org/research-network). The identity of partner schools was not known by the researchers, with data collection facilitated by CLRN staff using a larger Qualtrics survey protocol developed by the researchers yet implemented by CLRN and local school staff. The YIEPS accounted for approximately one quarter of the items included in the full survey protocol. The nature of the larger CLRN-sponsored study was a longitudinal exploration—consisting of three data collection occasions over one academic year—investigating the relative predictive value of general and school-specific well-being indicators on youths’ social-emotional and educational outcomes. The YIPS/YEPS data evaluated in this study were collected in the first measurement occasion, given it was the largest subsample. This larger longitudinal study was approved by CLRN’s governing institutional review board (IRB), provided by Advarra (https://www.advarra.com).

The present sample was derived from the CLRN’s partner schools within Stratum E (mostly White, high SES, mostly suburban), which represent 21% of all secondary schools in the United States. This stratified sampling method was developed by CLRN in consultation with their Scientific Advisory Council member Beth Tipton, using the method she developed and implemented in The Generalizer (https://thegeneralizer.org). Student participants completed the full electronic survey through Qualtrics during a dedicated 25-min block of in-class time on a school day. Average completion time for the survey was 12.3 (SD = 6.42) minutes. Participants self-reported a median age of 15 (M = 15.5, SD = 1.3) and were distributed across Grades 9 (37%), 10 (25%), 11 (24%), and 12 (13%). Approximately 49% of participants self-identified as female, followed by 47% as male and 2% as other gender identities.

Measures

As described earlier, the primary measures of interest in the present study were the YIPS (Renshaw & Cook, 2016) and YEPS (Renshaw & Cook, 2019), which are 10-item self-report screeners of internalizing and externalizing problems, respectively. When combined, the integrated YIEPS measure is therefore composed of 20 items, all of which are rated on the same 4-point response scale (1 = almost never, 2 = sometimes, 3 = often, 4 = almost always). All YIEPS items are positively worded, so no reverse-scoring is needed. A free copy of the combined YIPS and YEPS measure, along with a brief user guide, is available on the Open Science Framework at https://osf.io/ets7c. The YIEPS user guide recommends that each 10-item set (for the YIPS and YEPS, respectively) be summed and interpreted separately as distinct mental health indicators, with higher scores indicating relatively greater levels of internalizing or externalizing problems.
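The summed scoring recommended by the user guide can be sketched in a few lines of Python. This is an illustration only, not the authors' scoring procedure: the function name `score_yieps` and the assumed response order (YIPS Items 1 to 10 followed by YEPS Items 1 to 10) are hypothetical choices made for the example.

```python
from typing import Dict, Sequence

ITEMS_PER_SCALE = 10            # the YIPS and YEPS each have 10 items
VALID_RESPONSES = {1, 2, 3, 4}  # 1 = almost never ... 4 = almost always


def score_yieps(responses: Sequence[int]) -> Dict[str, int]:
    """Sum each 10-item set separately; no reverse-scoring is applied.

    Assumption (hypothetical ordering): the first 10 responses are YIPS
    items and the last 10 are YEPS items.
    """
    if len(responses) != 2 * ITEMS_PER_SCALE:
        raise ValueError("expected 20 item responses")
    if any(r not in VALID_RESPONSES for r in responses):
        raise ValueError("responses must be integers from 1 to 4")
    return {
        "internalizing": sum(responses[:ITEMS_PER_SCALE]),
        "externalizing": sum(responses[ITEMS_PER_SCALE:]),
    }


# Made-up respondent: "sometimes" on every YIPS item, "almost never" on every YEPS item.
example = score_yieps([2] * 10 + [1] * 10)
```

Under this scheme each summed score ranges from 10 to 40, with higher values indicating greater levels of the corresponding problem type.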

Statistical Analyses

To evaluate the integrated YIEPS measurement model, a series of analyses for model comparison were conducted using the lavaan package (Rosseel, 2012) in the R statistical environment (R Core Team, 2021). Categorical CFAs (Jöreskog et al., 2001) of the unidimensional model (see Figure 1), correlated-factors model (see Figure 2), and bifactor model (see Figure 3) were conducted using the weighted least squares estimator with mean and variance adjustments (WLSMV). Results for each model were interpreted based on model fit indices and on the standardized factor loadings (i.e., the change in each indicator associated with a unit change in the latent factor). Nested model comparison indices were evaluated for all three models to determine informational value added (p < .05) based on model fit while accounting for differences in parameters and degrees of freedom. Based on results from the model comparison, a latent construct reliability analysis was conducted to determine the consistency of the latent variables for the strongest overall measurement model.

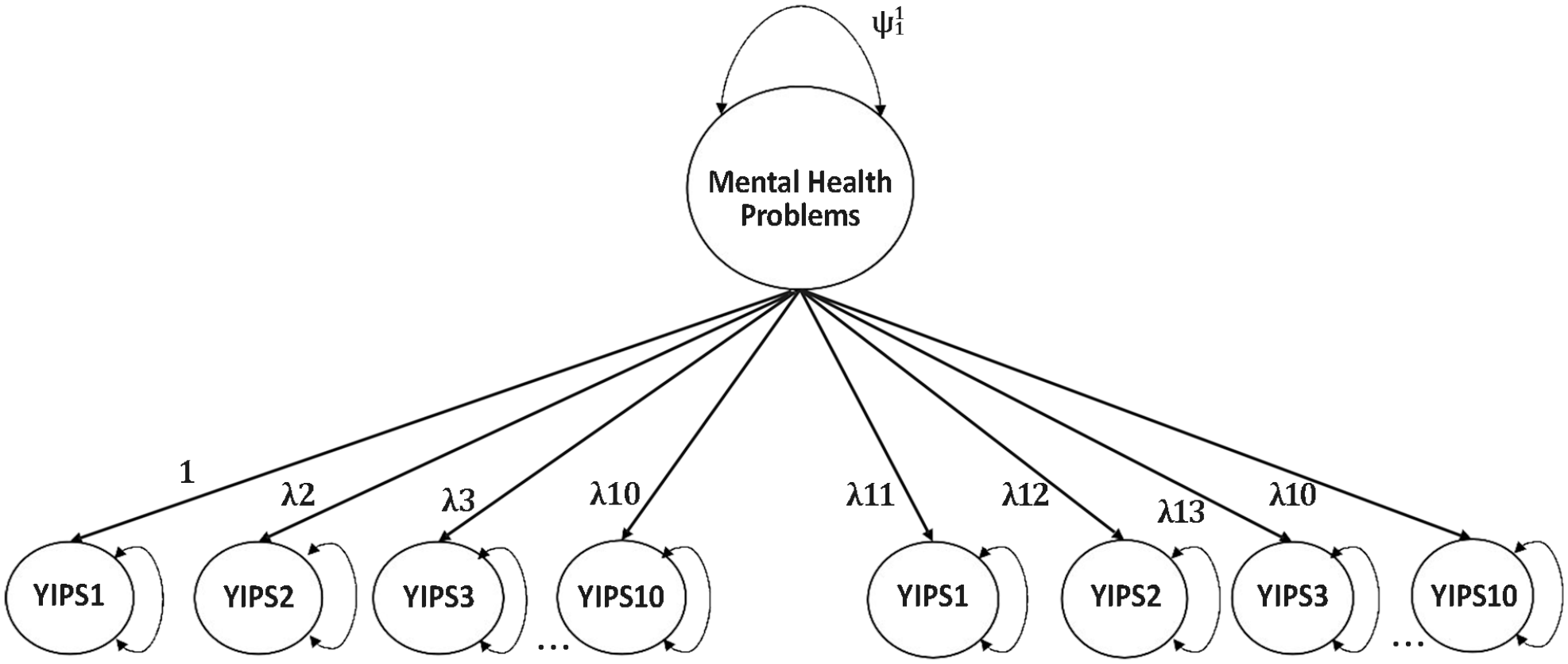

Figure 1. Unidimensional YIEPS Measurement Model.

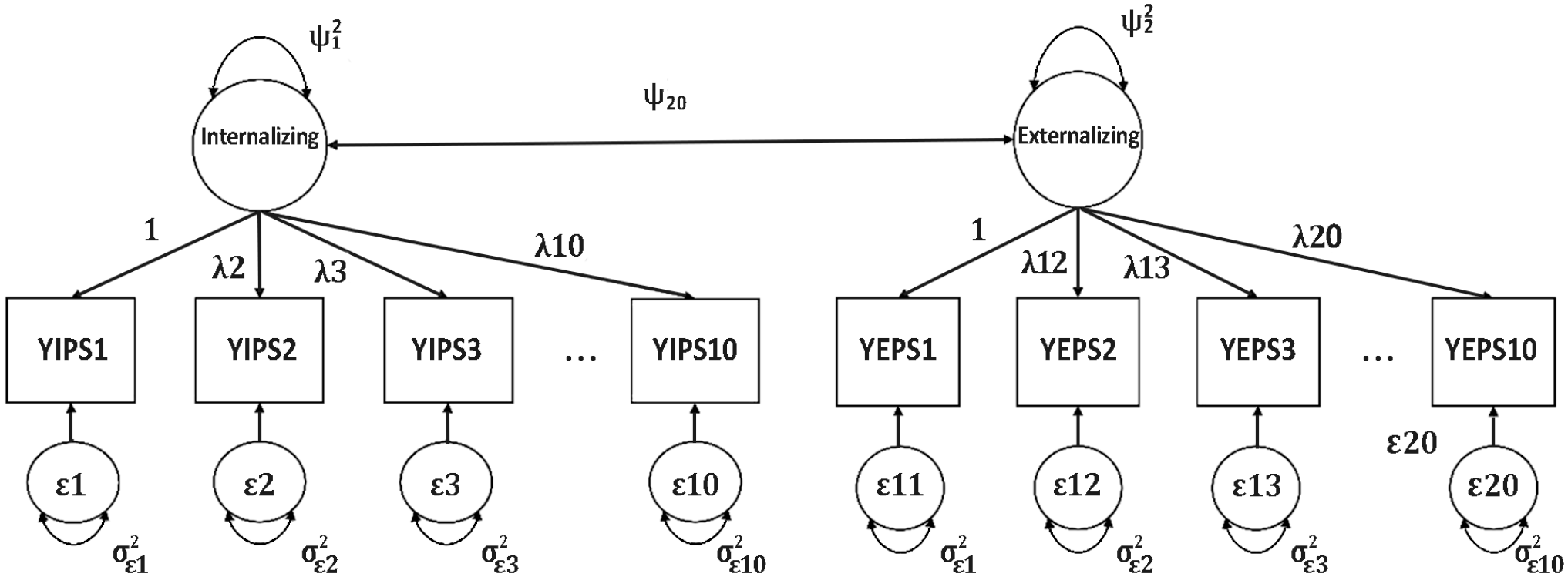

Figure 2. Correlated Two-Factors YIEPS Measurement Model.

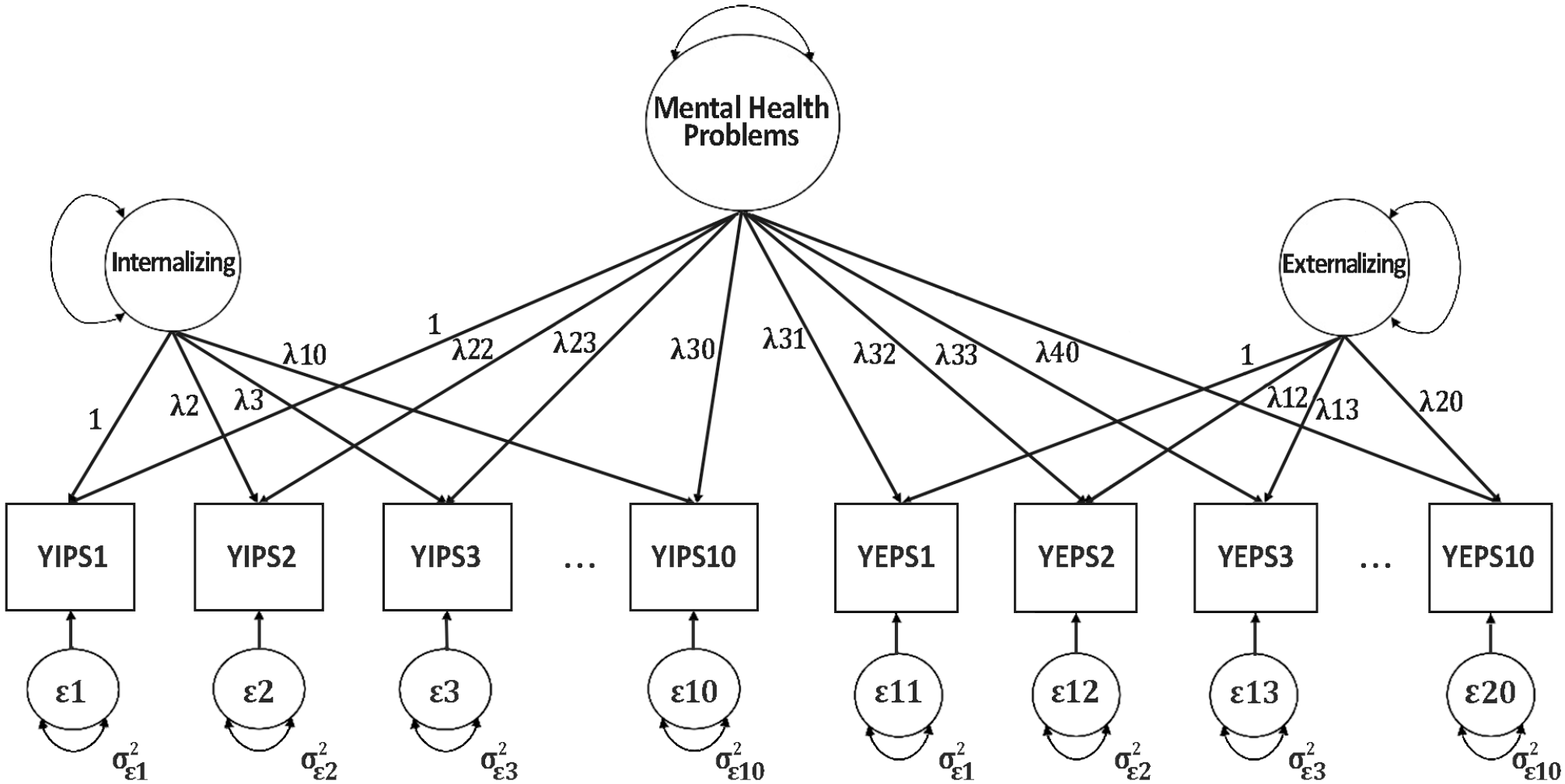

Figure 3. Bifactor YIEPS Measurement Model.

Results

Measurement Models

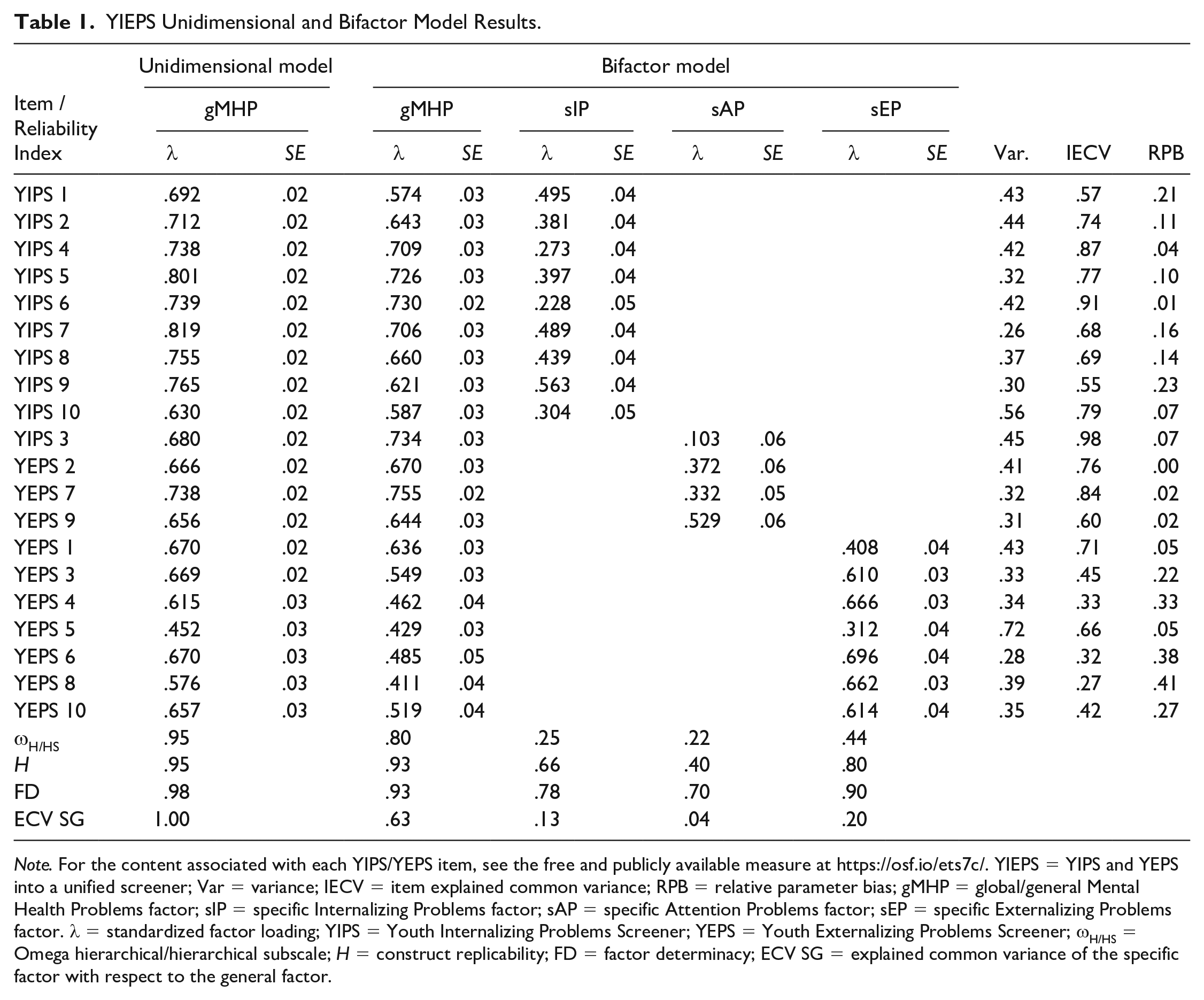

Initially, a categorical CFA of a unidimensional measurement model (see Figure 1), with all 20 YIEPS items loading on a global factor referred to as Mental Health Problems, was conducted using the WLSMV estimator. Results showed this unidimensional model exhibited poor data-model fit (root mean square error of approximation [RMSEA] [90% confidence interval (CI)] = 0.117 [0.113, 0.121], comparative fit index [CFI] = 0.849, standardized root mean square residual [SRMR] = 0.114), with factor loadings ranging from .452 to .819 (see Table 1).

Table 1. YIEPS Unidimensional and Bifactor Model Results.

Note. For the content associated with each YIPS/YEPS item, see the free and publicly available measure at https://osf.io/ets7c/. YIEPS = unified screener combining the YIPS and YEPS; YIPS = Youth Internalizing Problems Screener; YEPS = Youth Externalizing Problems Screener; λ = standardized factor loading; Var = variance; IECV = item explained common variance; RPB = relative parameter bias; gMHP = global/general Mental Health Problems factor; sIP = specific Internalizing Problems factor; sAP = specific Attention Problems factor; sEP = specific Externalizing Problems factor; ωH/HS = omega hierarchical/hierarchical subscale; H = construct replicability; FD = factor determinacy; ECV SG = explained common variance of the specific factor with respect to the general factor.

Next, an ordinal correlated two-factor YIEPS measurement model (see Figure 2), with items loading onto respective latent variables of Externalizing Problems (10 items) and Internalizing Problems (10 items), was estimated via categorical CFA using WLSMV. This correlated two-factor model showed mediocre model fit (RMSEA [90% CI] = 0.087 [0.083, 0.091], CFI = 0.917, SRMR = 0.085) that was nonetheless improved compared with the unidimensional model, with standardized loadings for the Internalizing Problems factor ranging from .660 to .846 and loadings for the Externalizing Problems factor ranging from .509 to .822.

After running these two initial models, the bifactor YIEPS measurement model (see Figure 3) was evaluated via categorical CFA by loading each of the 20 items onto both its respective specific factor (i.e., Internalizing Problems or Externalizing Problems) and a general Mental Health Problems factor. Results from the bifactor model showed good model fit (RMSEA [90% CI] = 0.052 [0.048, 0.057], CFI = 0.974, SRMR = 0.040) that was much improved compared with both the unidimensional and correlated two-factors models. As expected, items loaded more strongly onto the general Mental Health Problems factor (λ = 0.467 to 0.845) than onto the specific factors. However, it was noteworthy that a few items (specifically, YEPS Items 2, 7, and 9) did not load positively on the Externalizing Problems specific factor (λ = −.036, −.135, and −.205, respectively), suggesting misfit for those items with that factor. A nested model comparison index was evaluated to compare the value of the unidimensional and correlated two-factor models against that of the bifactor model. Based on model comparison criteria (p < .05), the more complex YIEPS bifactor model was determined to provide more informational value than the unidimensional model (Δχ² = 1052.80, df = 20, p < .001) and the correlated-factors model (Δχ² = 534.73, df = 19, p < .001), indicating empirical superiority.
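As a rough check on the reported comparisons, the upper-tail probability of a chi-square difference statistic can be computed directly. The Python sketch below is illustrative and is not the authors' procedure: it only converts an already-computed difference statistic and its degrees of freedom into a p value, and it does not reproduce the scaling corrections that WLSMV-based difference tests typically involve. The function name `chi2_sf` is a hypothetical helper.

```python
import math


def chi2_sf(x: float, df: int) -> float:
    """Upper-tail probability P(X > x) for a chi-square variable with integer df."""
    if df < 1:
        raise ValueError("df must be a positive integer")
    if x <= 0:
        return 1.0
    half = x / 2.0
    if df % 2 == 0:
        # Even df: exp(-x/2) * sum_{k=0}^{df/2 - 1} (x/2)^k / k!
        term, total = 1.0, 1.0
        for k in range(1, df // 2):
            term *= half / k
            total += term
        return math.exp(-half) * total
    # Odd df: erfc(sqrt(x/2)) plus a finite series in half-integer powers of x.
    tail = math.erfc(math.sqrt(half))
    term = math.sqrt(2.0 * x / math.pi) * math.exp(-half)
    total = 0.0
    for r in range(1, (df - 1) // 2 + 1):
        total += term
        term *= x / (2 * r + 1)
    return tail + total


# Difference statistics reported in the text above.
p_vs_unidimensional = chi2_sf(1052.80, 20)
p_vs_correlated = chi2_sf(534.73, 19)
```

Both probabilities fall far below the .001 level, in line with the reported p < .001.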

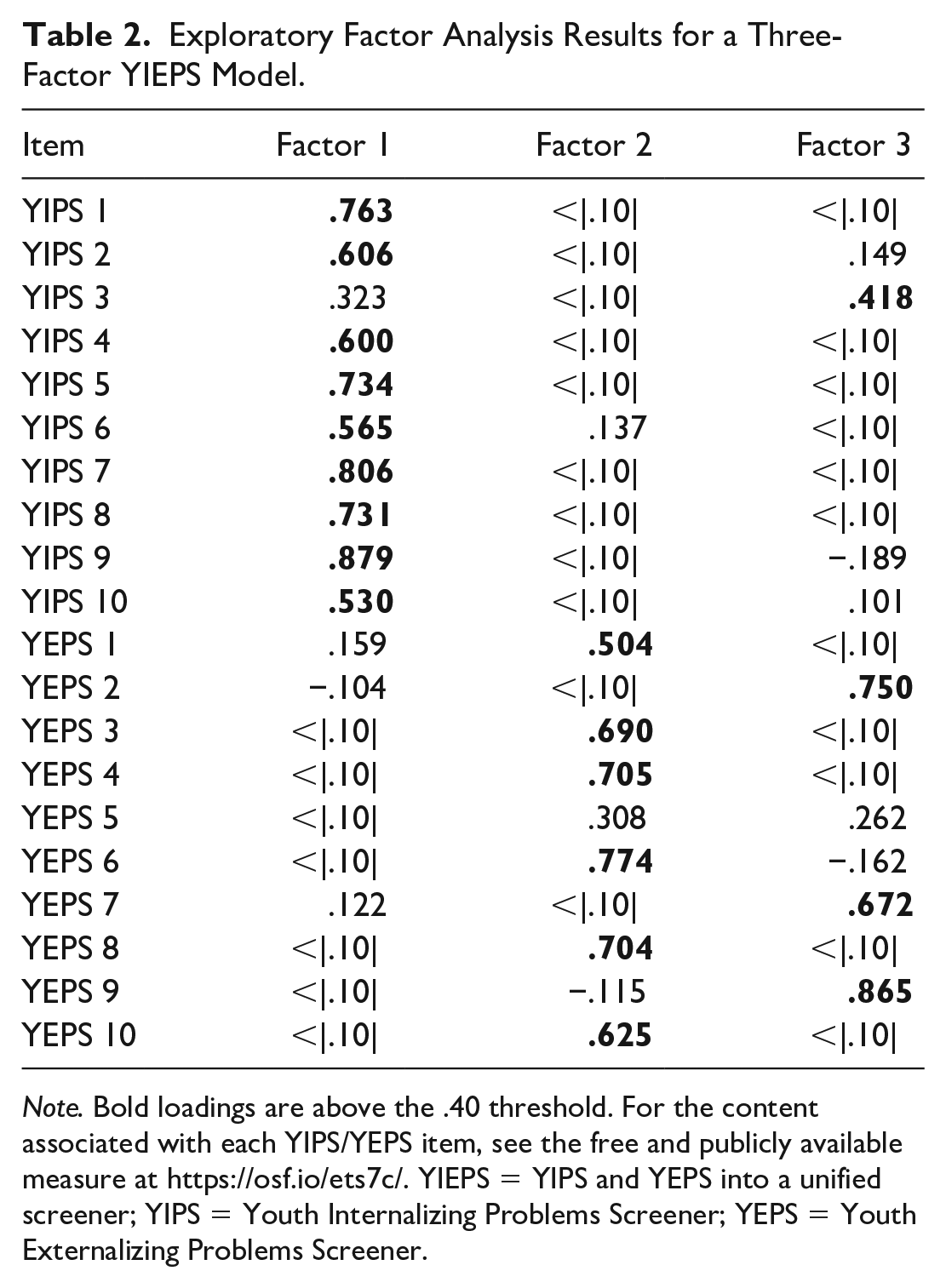

Considering the misfit loadings from the bifactor model, however, we decided to reevaluate the item factor structure of the integrated YIEPS measurement model via exploratory factor analysis (EFA) with a promax rotation. This decision was informed by our exposure to (just prior to running our analysis) a study by Kim and Choe (2022), who found that the measurement model for the Korean version of the YEPS (evaluated separately from the YIPS) could be improved by further parsing the items targeting hyperactivity-impulsivity/inattention into a distinct factor from the items targeting oppositional defiance and conduct problems. Kim and Choe (2022) likewise found improved data-model fit for the Korean version of the YIPS (evaluated separately from the YEPS) when parsing the items targeting anxiety from those targeting depression. These findings suggested that our bifactor model might be further improved by including additional specific factors to represent narrower internalizing and externalizing constructs. We used a combination of EFA methods, including the Kaiser rule, scree test, and Horn’s parallel analysis, to explore the appropriate number of factors measured by the integrated set of 20 items from the YIEPS. Results indicated a range of possible factors to retain, spanning from two to four. Preliminary assessment, starting with Kim and Choe’s four YIPS/YEPS factors, was considered based on factor loading thresholds of .40 and eigenvalues greater than 1 (Peterson, 2000). Results from the four-factor EFA model suggested that the YIEPS would likely be best explained by three latent variables, as only one item loaded onto the fourth factor.

Ultimately, a three-factor EFA with a promax rotation was run in the R statistical environment using the psych package (Revelle, 2018). Results appeared to support the assumption that the items on the YIEPS were better represented by more than just two factors. Specifically, the EFA showed that three items from the Externalizing Problems factor (i.e., “I have a hard time sitting still when other people want me to” [YEPS Item 2], “I have a hard time focusing on things that are important” [YEPS Item 7], and “I get distracted by the little things happening around me” [YEPS Item 9]), along with one item from the Internalizing Problems factor (i.e., “I find it hard to relax and settle down” [YIPS Item 3]), strongly loaded onto a third factor (λs > 0.40; see Table 2). All three factor eigenvalues were greater than 1 and, together, the factors accounted for 48% of the model variance. After reviewing the specific items loading onto the third factor, we determined that the third factor could be reasonably interpreted as representing the construct of inattention, so we labeled it Attention Problems. Parsing these items from the other factors did not seem to change the interpretation of the Internalizing Problems factor. However, removing these items from the Externalizing Problems factor did narrow the scope of the construct represented by that factor, suggesting it should be more narrowly interpreted as representing oppositional defiance and conduct problems, sans inattention and hyperactivity-impulsivity content. Based on our EFA findings, we repeated the comparison of the bifactor model against the unidimensional and correlated-factors models, this time using three specific factors representing internalizing, externalizing, and attention problems.
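The rule of retaining loadings above .40 can be illustrated with a short Python sketch. The loading values, item labels, and the helper `salient_items` below are all hypothetical and made up for the example; they are not the loadings reported in Table 2.

```python
from typing import Dict, List


def salient_items(
    loadings: Dict[str, Dict[str, float]], threshold: float = 0.40
) -> Dict[str, List[str]]:
    """Map each factor to the items whose absolute loading exceeds the threshold.

    An item may appear under several factors (a cross-loading) or under none.
    """
    assignment: Dict[str, List[str]] = {}
    for item, by_factor in loadings.items():
        for factor, value in by_factor.items():
            assignment.setdefault(factor, [])
            if abs(value) > threshold:
                assignment[factor].append(item)
    return assignment


# Hypothetical pattern matrix for four made-up items and three factors.
hypothetical_loadings = {
    "item_a": {"F1": 0.72, "F2": 0.05, "F3": -0.02},
    "item_b": {"F1": 0.10, "F2": 0.64, "F3": 0.08},
    "item_c": {"F1": 0.03, "F2": 0.21, "F3": 0.55},
    "item_d": {"F1": 0.31, "F2": 0.12, "F3": 0.18},
}
result = salient_items(hypothetical_loadings)
```

In this made-up example, `item_d` is assigned to no factor because none of its loadings clears the threshold, which is the kind of pattern that would prompt a closer look at an item.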

Table 2. Exploratory Factor Analysis Results for a Three-Factor YIEPS Model.

Note. Bold loadings are above the .40 threshold. For the content associated with each YIPS/YEPS item, see the free and publicly available measure at https://osf.io/ets7c/. YIEPS = unified screener combining the YIPS and YEPS; YIPS = Youth Internalizing Problems Screener; YEPS = Youth Externalizing Problems Screener.

The new correlated three-factor CFA was run using the lavaan package in the R statistical environment by constraining the first indicator parameter for each of the three factors and using the WLSMV estimator. Results indicated this revised correlated three-factors model had a reasonable data-model fit (RMSEA [90% CI] = 0.058 [0.054, 0.063], CFI = 0.963, SRMR = 0.054) and strong standardized item loadings with minimal range (λ = 0.666–0.849), showing improvements over the original, correlated two-factors measurement model (see above). Next, the new bifactor model that accounted for the three specific factors was run and showed good model fit (RMSEA [90% CI] = 0.052 [0.048, 0.057], CFI = 0.973, SRMR = 0.042) that was roughly equivalent to that of the original bifactor model with two specific factors (see above). However, it was noteworthy that the factor loadings for the new bifactor model were improved over the original, with no specific factors showing misfit loadings (see Table 1).

A nested model comparison evaluated the value of the new correlated three-factors model compared with the new bifactor model and found the updated bifactor model provided more information (Δχ² = 154.37, df = 17, p < .001), suggesting it was empirically superior to the revised correlated-factors model. Comparisons of the relative value of the two bifactor models, with two and three specific factors, respectively, were not possible because the two models shared the same degrees of freedom (df = 150) and the data were ordinal. Based on past literature (Kim & Choe, 2022), EFA and CFA findings in the current study, and negligible differences in the bifactor data-model fit indices for the two- and three-factor models, we chose the revised bifactor model, which includes the specific Attention Problems factor, as the preferred structure for the 20 items of the integrated YIEPS.
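The degrees of freedom reported for these comparisons can be recovered by counting parameters. The Python sketch below is an illustration under stated assumptions rather than a description of the authors' software settings: it assumes 20 items with four response categories, no residual covariances, residual variances that are not freely estimated (as in a delta-type parameterization), orthogonal general and specific factors in the bifactor models, and each item loading on the general factor plus exactly one specific factor. The helper `categorical_cfa_df` is hypothetical. Fixing factor variances or fixing one loading per factor yields the same count of free parameters.

```python
def categorical_cfa_df(
    n_items: int, n_categories: int, n_loadings: int, n_factor_covariances: int
) -> int:
    """Model degrees of freedom for an ordinal CFA under the stated assumptions."""
    n_thresholds = n_items * (n_categories - 1)
    n_correlations = n_items * (n_items - 1) // 2
    n_statistics = n_thresholds + n_correlations
    n_free = n_thresholds + n_loadings + n_factor_covariances
    return n_statistics - n_free


models = {
    "unidimensional": categorical_cfa_df(20, 4, 20, 0),
    "correlated_two": categorical_cfa_df(20, 4, 20, 1),
    "correlated_three": categorical_cfa_df(20, 4, 20, 3),
    # Bifactor: 20 general loadings + 20 specific loadings, no factor covariances.
    "bifactor_two_specific": categorical_cfa_df(20, 4, 40, 0),
    "bifactor_three_specific": categorical_cfa_df(20, 4, 40, 0),
}

df_differences = {
    "unidimensional_vs_bifactor": models["unidimensional"] - models["bifactor_two_specific"],
    "two_factor_vs_bifactor": models["correlated_two"] - models["bifactor_two_specific"],
    "three_factor_vs_bifactor": models["correlated_three"] - models["bifactor_three_specific"],
}
```

Under these assumptions, the count gives df = 150 for both bifactor models and differences of 20, 19, and 17, which agree with the values reported in the text. It also shows why the two bifactor models cannot be compared with a difference test: regrouping the same 20 specific loadings changes neither the number of free parameters nor the degrees of freedom.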

Factor Reliabilities

After the preferred measurement model was settled, factor reliabilities for this bifactor model were computed using Dueber’s (2017) Excel-based bifactor indices calculator (see Table 1 for results). The ωH for the general Mental Health Problems factor was .80, which is at the threshold for a model that is considered essentially unidimensional (Reise et al., 2013). All of the specific factors (Internalizing Problems, Externalizing Problems, and Attention Problems) had poor ωHS values, suggesting low reliability in support of interpreting these factors. In addition, of the three specific factors, Attention Problems was consistently the least reliable across the metrics of factor determinacy, replicability, and systematic variance. Overall, then, factor reliability results indicated the Attention Problems factor is likely not an adequate standalone factor compared with the Internalizing Problems and Externalizing Problems factors, possibly due to the limited number of items in this factor (i.e., 4) compared with the other factors (i.e., 7 and 9). Based on reliability results for the preferred bifactor model, it appears that there is evidence supporting the reliability of the general Mental Health Problems factor, but less so for the specific factors, and especially inadequate evidence for the specific Attention Problems factor.
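For readers unfamiliar with these indices, the Python sketch below shows how omega hierarchical (ωH), omega hierarchical subscale (ωHS), and explained common variance (ECV) are commonly computed from standardized bifactor loadings, following the definitions summarized by Rodriguez et al. (2016). It is not a reimplementation of Dueber's calculator, the helper `bifactor_indices` is hypothetical, and the loadings are made-up values rather than those in Table 1.

```python
from typing import Dict, List, Tuple

# Each item: (general loading, name of its specific factor, specific loading).
Item = Tuple[float, str, float]


def bifactor_indices(items: List[Item]) -> Dict[str, object]:
    """Compute omega_H, ECV, and omega_HS per specific factor from standardized loadings."""
    general_sum = sum(g for g, _, _ in items)
    general_sq = sum(g * g for g, _, _ in items)
    specific_sq = sum(s * s for _, _, s in items)
    uniqueness = sum(1.0 - g * g - s * s for g, _, s in items)

    specific_sums: Dict[str, float] = {}
    for _, name, s in items:
        specific_sums[name] = specific_sums.get(name, 0.0) + s

    total_variance = (
        general_sum ** 2 + sum(v ** 2 for v in specific_sums.values()) + uniqueness
    )
    omega_hs: Dict[str, float] = {}
    for name, s_sum in specific_sums.items():
        subset = [(g, s) for g, n, s in items if n == name]
        g_sum = sum(g for g, _ in subset)
        u_sum = sum(1.0 - g * g - s * s for g, s in subset)
        omega_hs[name] = s_sum ** 2 / (g_sum ** 2 + s_sum ** 2 + u_sum)

    return {
        "omega_h": general_sum ** 2 / total_variance,
        "ecv": general_sq / (general_sq + specific_sq),
        "omega_hs": omega_hs,
    }


# Made-up loadings: four items, two specific factors with two items each.
hypothetical_items = [(0.6, "A", 0.4), (0.6, "A", 0.4), (0.6, "B", 0.4), (0.6, "B", 0.4)]
indices = bifactor_indices(hypothetical_items)
```

In this made-up example the general factor accounts for most of the reliable variance (ωH of about .64) while each specific factor retains little unique reliable variance (ωHS of about .21), the same qualitative pattern described for the YIEPS.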

Discussion

The increase in prevalence rates of youth mental health problems and their associated deleterious outcomes, including school-based concerns like poor attendance and academic failure, has drawn the attention of many mental health professionals toward the promise of school-based screening for detecting externalizing and internalizing problems (e.g., Allen et al., 2019; Law et al., 2017). To date, however, most schools are not adequately employing screening to identify students at risk of mental health concerns, although schools remain in a unique position to access, evaluate, and intervene across levels with numerous youth who are unlikely to access services in other settings (Wood & McDaniel, 2020). Due to implementation and sustainability issues regarding screeners, most schools still fail to capture the swell of mental health problems occurring within their student bodies (Gaias et al., 2021). Youths presenting with externalizing problems are receiving the majority of attention and services from schools, whereas youths experiencing internalizing problems are more often unidentified and underserved due to the covert nature of their symptoms (Hartman et al., 2017). A partial solution to these implementation concerns is developing and disseminating evidence-based, integrated mental health screening measures or systems—targeting both externalizing and internalizing problems—that are free, publicly available, efficient to administer and score, and easy to interpret and use, especially within the context of underresourced schools.

As the introduction illustrated, screening measures that meet these cost and usability criteria are limited, making it difficult for schools to have confidence in broad, evidence-based screeners that match their needs. Prior to the current study, the YIPS and YEPS were two brief measures that attempted to address some of these barriers related to cost and usability by gauging internalizing and externalizing problems, respectively, via quick, psychometrically defensible, free self-report rating scales (Renshaw & Cook, 2016, 2019). Both screeners were developed and validated independently, yet they were eventually intended to be administered and scored as companion measures that contribute to a broad mental health screening system (see the YIEPS user guide at https://osf.io/ets7c). To our knowledge, however, no study had yet generated structural validity evidence to support the integrated application and interpretation of responses to the combined version of the YIPS and YEPS. The current study set out to do just that by conducting a series of CFAs on the integrated YIEPS measurement model, comparing the value of a unidimensional model, a correlated two-factors model, and a bifactor model.

Interpretation of Results

Findings from the current study indicated the original bifactor model, which posited two specific factors (i.e., Internalizing Problems and Externalizing Problems) and one general factor (i.e., Mental Health Problems), was more psychometrically sound than both the unidimensional and correlated two-factors models. These results supported our initial predictions, as we anticipated the bifactor measurement model would better represent the data. Unexpectedly, though, several item loadings were inconsistent with the items’ theoretical factor assignments. Specifically, three items from the Externalizing Problems factor (i.e., YEPS Items 2, 7, and 9) and one from the Internalizing Problems factor (i.e., YIPS Item 3) showed weak to inverse loadings onto their respective latent variables. In an attempt to further optimize the YIEPS measurement model, we conducted post hoc EFA on the 20-item YIEPS model. Findings from this exploratory analysis indicated that the variance in responses to YIEPS items might be better explained by three factors, rather than two, parsing attention problems from the broader domains of internalizing and externalizing problems. Despite the addition of a new specific factor (i.e., Attention Problems), follow-up CFA comparing the new bifactor model (i.e., three specific factors and one general factor) with the correlated three-factors model and the unidimensional model showed that the updated bifactor model was still the strongest representation of the data. After comparing it with the original bifactor model (with two specific factors), we decided the new bifactor model (with three specific factors) should be the preferred model, as it had similarly good data-model fit while also being characterized by more robust and consistent factor loadings. That said, factor reliability coefficients for the new bifactor model indicated that the Attention Problems factor was not defensible to interpret as a standalone variable and that the general factor, representing global Mental Health Problems, was by far the strongest and most defensible variable within the YIEPS measurement model.
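
To illustrate how reliability coefficients of this kind can favor the general factor over a specific factor, the following minimal sketch computes indices commonly reported for orthogonal bifactor models (omega total, omega hierarchical, omega hierarchical subscale, and explained common variance) from standardized loadings. The function name `bifactor_indices`, the six-item layout, and every loading value are hypothetical assumptions made for illustration only; they are not the YIEPS estimates, and the sketch is not presented as the exact set of coefficients computed in this study.

```python
from typing import Dict, List


def bifactor_indices(
    general: List[float],
    specific: Dict[str, Dict[int, float]],
) -> Dict[str, float]:
    """Reliability-type indices for an orthogonal bifactor model.

    general:  standardized general-factor loading for each item.
    specific: factor name -> {item index: standardized specific loading}.
    Assumes each item loads on the general factor and exactly one
    specific factor, and that all factors are uncorrelated.
    """
    n_items = len(general)

    # Squared specific loading per item (used for residuals and ECV).
    spec_sq = [0.0] * n_items
    for loads in specific.values():
        for item, loading in loads.items():
            spec_sq[item] += loading ** 2

    # Residual variance of each standardized item.
    resid = [1.0 - g ** 2 - s for g, s in zip(general, spec_sq)]

    # Variance in the total score attributable to each source.
    gen_var = sum(general) ** 2
    spec_var = {name: sum(loads.values()) ** 2 for name, loads in specific.items()}
    total_var = gen_var + sum(spec_var.values()) + sum(resid)

    gen_sq_sum = sum(g ** 2 for g in general)
    out = {
        "omega_total": (gen_var + sum(spec_var.values())) / total_var,
        "omega_h": gen_var / total_var,
        "ecv": gen_sq_sum / (gen_sq_sum + sum(spec_sq)),
    }

    # Omega hierarchical subscale: reliable variance in a subscale score
    # that is unique to its specific factor (general factor partialled out).
    for name, loads in specific.items():
        items = list(loads)
        g_sub = sum(general[i] for i in items) ** 2
        r_sub = sum(resid[i] for i in items)
        out[f"omega_hs_{name}"] = spec_var[name] / (g_sub + spec_var[name] + r_sub)
    return out


# Hypothetical loadings (NOT the study's estimates): six items, two specific factors.
general = [0.6, 0.6, 0.6, 0.6, 0.6, 0.6]
specific = {
    "A": {0: 0.5, 1: 0.5, 2: 0.5},  # a relatively strong specific factor
    "B": {3: 0.2, 4: 0.2, 5: 0.2},  # a weak specific factor
}
indices = bifactor_indices(general, specific)
for name, value in indices.items():
    print(f"{name}: {value:.3f}")
```

In this hypothetical case, the weakly loading specific factor retains little reliable variance once the general factor is accounted for, which mirrors the kind of pattern that would make a specific factor difficult to defend as a standalone score.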

Findings for the current study aligned somewhat with past research on internalizing and externalizing screeners with youth. For example, as mentioned earlier, Kim and Choe (2022) found that the measurement models of the YEPS/YIPS were better parsed into four latent constructs with a Korean sample, differentiating anxiety from depression as well as conduct/oppositional defiance from hyperactivity/attention problems. While Kim and Choe’s (2022) findings motivated our initial EFA in response to observed misfit among CFA loadings, we did not verify the four unique factors they reported; rather, our EFA identified three factors as the best representation of the data, with the new specific factor indicated by items related to attention problems. Thus, our study supported Kim and Choe’s findings regarding the YEPS items, but not the YIPS items. It is noteworthy, however, that our findings are not directly comparable with theirs, as we used a bifactor approach for the integrated YIEPS model, whereas they used separate correlated-factors approaches for independent YIPS and YEPS models. Such differences in the modeling approaches alone may largely account for these discrepancies in results.

At the outset of this study, we did not predict that attention problems would be pulled from the broader internalizing and externalizing problem factors into a standalone factor. Yet, our exploration of the literature following these results suggests that others have considered attention problems (or inattention) as a distinct mental health construct that both concurs with and predicts internalizing and externalizing problems. For example, attention problems have been found to be frequently comorbid with internalizing and externalizing problems (Litner, 2003). Studies attempting to understand the directionality or temporality of the relationships among these issues seem to posit attention problems as a preceding condition that predicts future internalizing and externalizing problems (Yip et al., 2013). The expected relationship between attention problems and both externalizing and internalizing problems is so consistent and strong that some researchers recommend using formal attention assessments as transdiagnostic measures of these broad mental health problems (e.g., Racer & Dishion, 2012). Moreover, research shows that youth with attention problems struggle to self-regulate, resulting in behavioral impulsivity and emotional negativity (Kim & Deater-Deckard, 2011). Attention (or inattention) has also been found to moderate the presentation of more specific internalizing and externalizing problems, including trait anxiety (Derryberry & Reed, 2002), peer influence on conduct problems (Gardner et al., 2008), and depression (Dishion & Connell, 2006). Thus, parsing attention problems into a distinct factor within the YIEPS measurement model seems defensible on both empirical and theoretical grounds, as there are good reasons to position attention problems as distinct yet interdependent concerns with internalizing and externalizing problems.

Although our results show the YIEPS measures three different specific constructs (internalizing, externalizing, and attention problems), it is noteworthy that the several reliability coefficients converged to indicate the general factor of global mental health problems was the strongest and most interpretable. This suggests that scores derived from the YIEPS are best used at the overall composite level, which is different from the original intention of integrating the YIPS and YEPS to obtain complementary scores representing internalizing and externalizing problems (Renshaw & Cook, 2019). Yet, the practice of using a single score for screening broad mental health problems is not uncommon, as the SDQ Total Difficulties Scale (e.g., Renshaw, 2019) and SAEBRS Total Behavior Scale (e.g., Kilgus, Bonifay, et al., 2018), among others, are used for similar purposes. In fact, using one score for detecting risk for students who might be struggling with any or all of the factors measured by the YIEPS may provide an integrated and simpler screening method for schools. Using one score universally, to gauge school climate and inform universal intervention, or even at the targeted level, to identify specific students at risk of mental health problems, could be a more feasible and efficient approach compared with screening for multiple mental health indicators. In addition, given the structural validity evidence supporting the bifactor model investigated here, schools could still use information from the YIEPS to further understand the contributing factors to students’ global mental health problems by looking at scores from the specific subscales of internalizing, externalizing, and inattention problems. Toward this end, we suggest guidelines similar to those Kilgus, Bonifay, et al. (2018) recommend for interpreting SAEBRS scores: the YIEPS general factor or total score (i.e., global Mental Health Problems) should be interpreted first and foremost, followed by interpretation of specific factors or subscale scores (i.e., Internalizing Problems, Externalizing Problems, and Attention Problems) for supplemental purposes.

Overall, we suggest the integrated YIEPS measurement model offers schools another evidence-based self-report screening system for gauging broad mental health among older children and adolescents. Compared with several other screeners on the market that serve similar functions, the YIEPS is also an option that might help overcome the implementation barriers related to screening specifically and mental health programming more generally by offering a quick, simple, free, publicly available, and easy-to-use method for detecting risk for broad mental health problems. Moreover, using measures that capture internalizing, externalizing, and attention problems as part of a global mental health construct may allow for more balanced and equitable service provision. Typically, students exhibiting externalizing problems receive a disproportionate amount of attention and intervention from schools (see the squeaky wheel phenomenon; Bradshaw et al., 2008). The YIEPS allows for intentional screening for the less noticeable internalizing problems and also seems capable of discerning attention problems from the broader class of externalizing problems. In addition, by providing schools with a feasible option for screening, the YIEPS has the potential to extend equitable identification to youth who might be missed for mental health concerns outside the school or who might be misdiagnosed within the school. Students of color and other marginalized student groups are often underserved for mental health problems or overdiagnosed for behavioral or externalizing problems (Gaias et al., 2021). Thus, service provision driven by evidence-based screeners like the YIEPS at the universal and targeted levels can be tailored and informed by data, rather than by intuition or teacher-referral based on “squeaky wheels” or crisis events, resulting in more equitable and socially just mental health care in schools.

Limitations and Future Directions

The structural validity results supporting the YIEPS measurement model, at least as it stands from the current study’s findings, suggest it is appropriate to recommend the measure as a potential screener for school-based research and practice. However, given the exploratory nature of some of the analyses and the unanticipated findings implying a potential third factor of Attention Problems, we believe additional studies are needed to confirm the utility of this new bifactor YIEPS measurement model. The present study is also limited by the demographic representation of the sample (i.e., mostly White, high SES, and suburban), which resembles only 21% of all secondary schools in the United States. Generalization studies are therefore warranted with more diverse samples, including more students of color, with more socioeconomic variability, located within other geographic settings (e.g., rural and urban). Generalization studies with students in lower grade levels (e.g., Grades 6–8) would also be beneficial.

Finally, given reliabilities of the Attention Problems factor were particularly low in this study, future studies should consider reevaluating and refining the YIEPS by testing the value added of additional, new items intentionally targeting attention problems. Another option would be to evaluate the psychometric defensibility of the YIEPS when the attention items are dropped from the measurement model. In addition, researchers might evaluate the relative convergent validity of scores derived from the YIEPS with similar self-report screeners that are intended to screen broad mental health problems, such as the SDQ, as well as with other brief measures that gauge both internalizing and externalizing problems. Much work remains to further validate the YIEPS for its intended purposes.

Footnotes

Acknowledgements

Data collection was sponsored and facilitated by the Character Lab Research Network (CLRN) as part of a larger project evaluating student social-emotional well-being and educational outcomes. We are grateful for the generosity of the CLRN staff, their partner schools, and participating students in making this project possible.


Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.