Abstract

School-based youth violence is a worldwide concern. One common approach to dealing with this problem is implementing state-sponsored intervention programs for large populations of pupils. However, there is a growing concern that school-based interventions (SBIs) are being implemented without sufficient evaluations of their efficacy and cost-effectiveness. We take Israel as a national case study. Although its state education system strongly advocates an evidence-based approach to implementing “best-practice” SBIs, it is unclear to what extent Israeli SBIs are informed by impact evaluations. We coded information on all SBIs aimed at reducing violence in the state education system and reviewed the quality of evaluations associated with each SBI. Of 1,510 SBIs, 113 were dedicated to violence prevention in schools. However, only 15 of these programs (13%) had any quantitative appraisal, and only two were evaluated using randomized controlled trial designs; five programs in total were evaluated under sufficiently rigorous conditions. We conclude that without valid causal estimates of treatment effects, Israeli pupils will continue to be at risk of being exposed to unhelpful and potentially damaging SBIs.

A common approach to dealing with youth violence in schools is to implement school-based interventions (SBIs) and other policies (Sullivan et al., 2008; Wilson & Lipsey, 2007). In countries with a centralized government administration and a national education system, the state funds a range of treatment providers (e.g., the police, nongovernmental organizations, for-profit entities, educators, and virtual media) to implement SBIs (Anderson et al., 2018; Barnes et al., 2017; Spiel & Strohmeier, 2011). These interventions take different forms including one-on-one counseling, group sessions, role-playing exercises, knowledge and capacity building, and more bespoke educational treatments such as music, art, or animal therapy (Grommon et al., 2018). The common theme, however, is their national or regional reach and in particular state sponsorship (Brunzell et al., 2016; Burrus et al., 2018; Clayton et al., 2001; Spiel et al., 2011).

Given the breadth of SBIs, we are interested in the extent to which these taxpayer-funded interventions are based on evidence. This is an ongoing concern that has troubled scholars for some time (Flynn et al., 2015; Peterson et al., 2001). On the one hand, the literature on SBIs highlights the need for policies that are based on rigorous testing prior to implementation and initiatives that are based on scientific evidence (Astor et al., 2005, 2008; Benbenishty et al., 2008; Eisner et al., 2016; Hall, 2017). Policy makers in the highest levels of the Ministry of Education in Israel, for example, commonly express support for evidence-based SBIs. On the other hand, scholars have suggested that some programs were applied without any assessment of their effectiveness (Benbenishty et al., 2006). Implementing treatments that are logical or based on what “has always been done” but lack systematic and empirical support may make outcomes worse. The American Scared Straight program, for example, exposed at-risk youth to living conditions in prisons in order to deter them from a life of crime, but it resulted in an increase in their criminal behavior (Petrosino et al., 2003). There are also cost implications in implementing harmful or ineffective SBIs (see, e.g., McCabe, 2007; Welsh & Farrington, 2001).

When considering these issues globally, rigorous evaluations of SBIs are even scarcer outside of English-speaking countries. With the exception of Scandinavian nations (Eisner et al., 2016; Garner et al., 1998; Rasmussen & Montgomery, 2018; Seedat et al., 2009), the global evidence on the effectiveness of SBIs to reduce violence is problematically thin. As there are clear cultural, economic, psychosocial, and other variations between countries, there is a need for localized independent evaluation programs that would increase the generalizability of our understanding of SBIs for violence reduction in non-English-speaking countries (Hillis et al., 2016; Mihalic & Elliott, 2015).

Taking Israel as a case study, we explore the extent to which SBIs designed to prevent school-based violence are supported by local evaluations and the methodological rigor of this body of evidence. We use “evidence maps,” a methodological approach that resembles systematic reviews but addresses applied practice rather than evidence produced through research (Miake-Lye et al., 2016; Saran & White, 2018). The process includes two steps: first, a protocol-based, methodical, and transparent review of practices that share a common aim; second, an assessment of the rigor of the research applied to quantify the effectiveness of these applied practices. The outcomes of these evaluations can then be synthesized meta-analytically or descriptively, depending on the size and range of the available evidence. However, the key feature of evidence maps is the ability to systematically and critically review the scientific evaluations that may or may not have contributed to the implementation of certain practices. We use the Maryland Scientific Methods Scale (Farrington et al., 2002; Sherman et al., 1998) to gauge whether the evaluation of each program provides valid estimates of program effectiveness, particularly in terms of the internal validity of the methodologies used.

SBIs

Causal research on the effects of school-based programs on violence is rich, and there are multiple systematic reviews on the efficacy and cost-effectiveness of several interventions (e.g., Cox et al., 2016; De La Rue et al., 2017; Gavine et al., 2016; Hall, 2017; Lester et al., 2017; Waschbusch et al., 2019). This interest is well placed, as exposure to school violence can have devastating educational and psychological effects, and it can alter a student’s life trajectory (Astor et al., 2009; Bender & Lösel, 2011; Knafo et al., 2008; Peguero et al., 2018; Ttofi et al., 2012). Meta-analyses illustrate how exposure to violence in childhood is linked to elevated risks related to a broad range of problems (e.g., Ttofi et al., 2016).

In response to school violence, education ministries around the world have implemented a broad range of SBIs. These state-sponsored programs are interventions available to public schools, which can opt to implement them for youth who are at risk of either being exposed to violence or committing a violent assault against their peers. Importantly, there is a convincing body of research that has demonstrated the effectiveness of certain SBIs (see Farrington & Ttofi, 2009; Farrington et al., 2017; Matjasko et al., 2012; Mytton et al., 2006; Thakore et al., 2015). However, the treatment effects vary significantly, although it appears that behavioral and cognitive behavioral strategies are more effective than violence reeducation strategies (Waschbusch et al., 2019). Moreover, some intervention characteristics have been linked to enhanced treatment effects: (a) the composition and organization of a school (e.g., the number of students), (b) interventions aimed at specific types of aggressive or violent behavior, (c) interventions designed for young children, (d) high-quality implementation, and (e) interventions that include social–emotional learning. Programs are also more likely to lead to desirable outcomes if they include improved teacher supervision and parental involvement (Bierman et al., 2008; Botvin & Griffin, 2007; Newton et al., 2017; Ttofi & Farrington, 2011; Wilson et al., 2003).

Thus, there are SBIs that are likely to work. What we are interested in, however, is the extent to which the SBIs for school violence that are already in place have been measured for effectiveness. As national policy makers decide which SBIs to adopt, we expect research findings on the utility of the interventions to help these decision makers endorse effective treatments if this effectiveness is supported by empirical evidence.

However, Wandersman et al. (2008) have shown that, overall, only a small proportion of SBIs adopted by national education ministries can be described as evidence-based (see also Waschbusch et al., 2019). We wish to understand whether the same can be said about violence prevention SBIs in Israel. Which SBIs are presently being used in Israel? How many of them have been evaluated and, if assessed, how rigorous were the tests used? What can a synthesis of the evidence tell us about these SBIs?

Method

Setting

School violence in Israel remains high compared to other countries. A national survey showed that one in every seven pupils in the fourth to sixth grade reported being subjected to severe physical violence in school (Craig et al., 2009). Marginalized or minority youth populations are at an even higher risk of violence (Astor et al., 2011; Erhard & Brosh, 2008; López et al., 2018).

To target school-based violence, the Israeli state implements regional or national prevention programs. These SBIs are officially supported and funded by the state and are managed by the Israeli Ministry of Education’s Unit for Programs Management and Inter-Sector Partnership (Educational Programs Databases, n.d.). Israel’s 5,000 state-funded schools can choose which SBIs to implement from a list of 1,510 approved programs. These programs aim to produce a wide range of outcomes including educational attainment, resilience, and community engagement; some are also implemented to directly or indirectly prevent youth violence. Only the latter, violence-related programs are included in our evidence map.

Evidence Maps

For this purpose, we apply an “evidence map” method, a relatively new approach aimed at describing the characteristics and magnitude of evidence in a specific area of interest (e.g., Behague et al., 2009; Miake-Lye et al., 2016; Saran & White, 2018). An evidence map is particularly useful when an unknown “quantity” of empirical data is available to answer a single question or when there is a need to describe the state of the evidence (Marshall et al., 2017).

Here, we use this approach to create a map of the available evidence on SBIs that aim to reduce youth violence in schools and are already implemented. Through a systematic, transparent, and replicable process, an evidence map is created by accounting for all the programs that are implemented as SBIs and then critically assessing the extent to which these programs were properly evaluated before or after implementation. Therefore, unlike systematic reviews, which start by collecting research evidence about the effectiveness of a particular intervention for a specific problem, evidence maps begin by asking what practices are already in place and then assess the body of research evidence associated with these practices.

Data Retrieval

Following this approach, we systematically hand searched the Educational Programs Database for SBIs aimed at preventing or reducing violence in Israel. The database is hosted and maintained by the Israeli Ministry of Education. Evaluation documents for these SBIs are not publicly accessible, so we requested this information from the contacts listed in each program’s synopsis. These contacts included representatives from the for- and not-for-profit organizations that delivered the intervention, counselors who were involved in the evaluation of the treatments, and headmasters. What unifies these treatment providers, however, is the funding and approval they have received from the Ministry of Education to implement the intervention.

For our purposes, eligible programs must have the objective of preventing violence, defined broadly as any type of behavior in which pupils may be physically assaulted by other pupils. Programs aimed at strengthening protective factors and improving the school climate are also included as researchers have found a strong link between these factors and the absence of violence (Khoury-Kassabri, 2012). When it was not clear whether specific programs were designed to reduce violence or related antisocial conduct, we contacted the treatment providers by email or phone to clarify this question.

For a program to be included in the evidence map, it had to meet the following criteria: (a) the SBI is deployed in state-funded schools, at the school level or on a large-group basis; (b) the SBI directly or indirectly aims to reduce violence at the school level, which includes programs for leadership and life skills aimed at, among other targets, preventing or reducing violence; (c) the SBI addresses any type of violence other than sexual violence; and (d) the SBI targets populations of children and teens aged 3–18. Programs that explicitly encourage learning, active civilian life, or active community life, but do not deal with violence, were excluded. Similarly, programs dealing with cyberbullying or any other cyber violence were excluded, as it is not possible to limit their outputs and outcomes to school violence. There was no exclusion based on the racial or ethnic composition of the school, and programs for both secular and religious populations in Israel were included.

Coding Data on an Evidence Map

We catalog the eligible programs according to the following features: target population, prevention type, age, program type, main outcome, sector, number of institutions, evaluation stated, evaluation conducted, and whether the program is still operating (see Supplemental Materials for a breakdown of all eligible SBIs aimed at reducing violence in Israel).

More importantly, we rank the methodological quality of the evaluation studies available for each program from 1 (least rigorous) to 5 (most rigorous) on the Maryland Scientific Methods Scale. We expect variations in the scientific value of the SBI evaluations (Ariel, 2018; Sutherland et al., 2017). There are different levels of quality of evidence associated with impact evaluations, particularly in the ability to control for threats to internal validity (e.g., having proper comparison group[s]). The Maryland Scientific Methods Scale is a particularly useful model for cataloging evaluations based on their methodological strength. It was initially developed by Sherman et al. (1998) for the U.S. National Institute of Justice to identify effective crime prevention programs. According to Farrington et al. (2002), the Maryland Scientific Methods Scale’s main aim is to “communicate to scholars, policymakers, and practitioners in the simplest possible way that studies evaluating the effects of criminological interventions differ in terms of their methodological quality” (p. 13). On the Maryland Scientific Methods Scale, a cross-sectional design scores 1; a one-group before-and-after design scores 2; quasi-experiments, which depend on statistical matching techniques and are employed when random assignment is not available, score 3 or 4; and a randomized controlled trial (RCT), which is considered the most rigorous research design, scores 5 (Farrington et al., 2002). Impact evaluators should concentrate on producing Level 3–5 studies, that is, experiments that use comparison groups when testing the relative effectiveness of different approaches against no-treatment conditions (Feder & Boruch, 2000; Weisburd & Taxman, 2000; Welsh & Farrington, 2001); we use this benchmark as well to critically assess the evaluation studies.
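The scoring rule described above can be written down as a small lookup. The following is a minimal sketch, assuming only the simplified design-to-level mapping given in this paragraph; the names `Design`, `msms_levels`, and `meets_benchmark` are hypothetical and are not part of the Maryland Scientific Methods Scale itself. In practice, assigning a level also requires judgment about details such as the comparability of groups.

```python
from enum import Enum


class Design(Enum):
    """Evaluation designs named in the text (labels are illustrative)."""
    CROSS_SECTIONAL = "cross-sectional"
    ONE_GROUP_PRE_POST = "one-group before-and-after"
    QUASI_EXPERIMENT = "quasi-experiment"
    RCT = "randomized controlled trial"


def msms_levels(design: Design) -> tuple[int, ...]:
    """Return the Maryland Scale level(s) the text associates with a design."""
    mapping = {
        Design.CROSS_SECTIONAL: (1,),
        Design.ONE_GROUP_PRE_POST: (2,),
        # The text says quasi-experiments "score 3 or 4" without splitting them.
        Design.QUASI_EXPERIMENT: (3, 4),
        Design.RCT: (5,),
    }
    return mapping[design]


def meets_benchmark(design: Design, threshold: int = 3) -> bool:
    """True when the lowest possible level for the design reaches the threshold."""
    return min(msms_levels(design)) >= threshold


for design in Design:
    print(design.value, msms_levels(design), meets_benchmark(design))
```

Under this mapping, only quasi-experiments and RCTs satisfy the Level 3–5 benchmark used to assess the evaluation studies.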

Results

Eligible Programs

Of the 1,510 SBIs funded by the Ministry of Education in Israel, 113 were found to be relevant to the evidence map as they were, prima facie, about violence. However, 43 explicitly stated that no evaluation of the SBI had been conducted, so they also were excluded from the analysis.

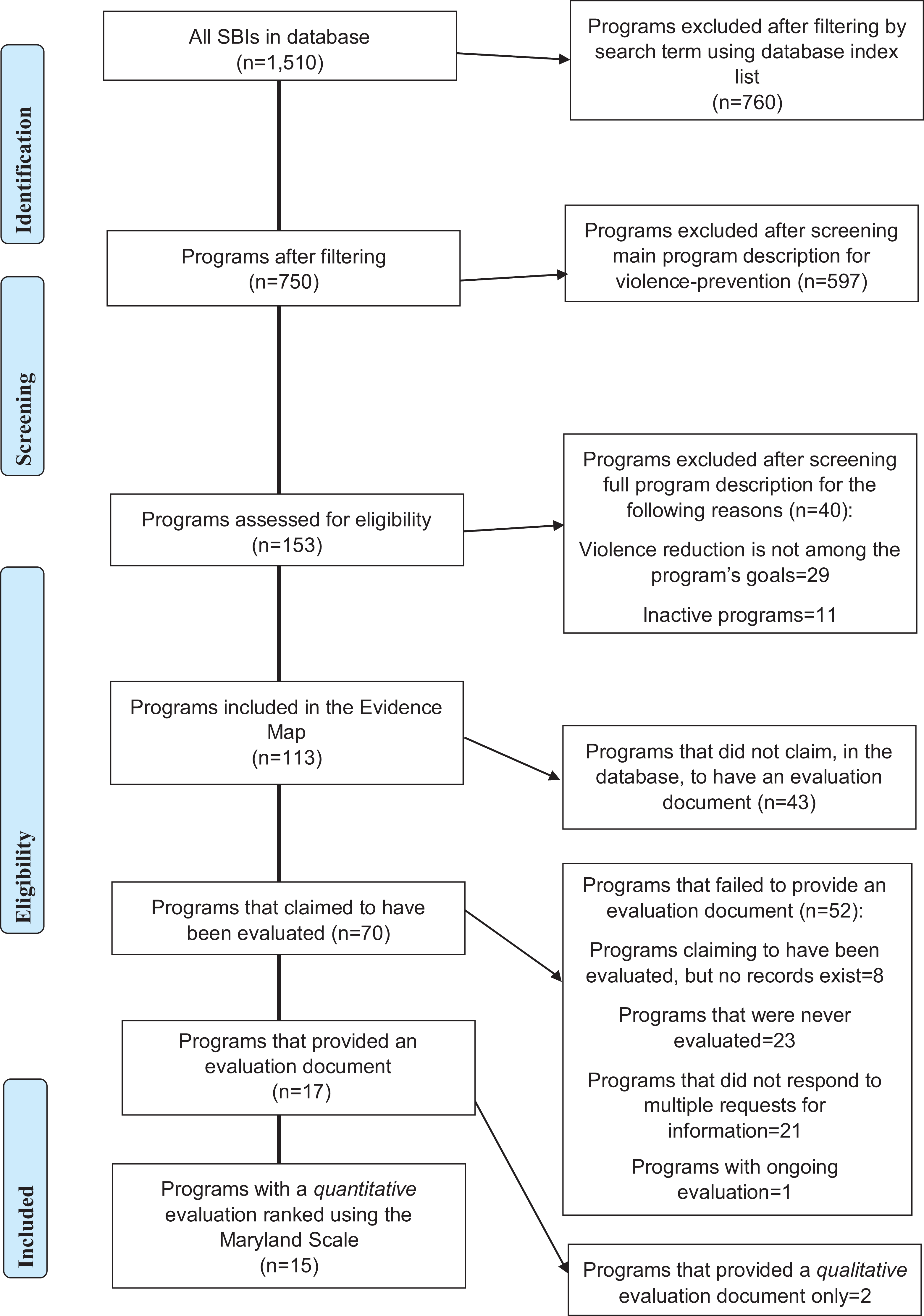

The descriptions of the remaining 70 programs claimed to involve an evaluation process, so we contacted each treatment provider via email or telephone to request the evaluation records. However, as depicted in the flowchart in Figure 1, 52 of these programs yielded no evaluation data: 21 program designers or treatment providers did not respond, despite at least three attempts to contact them; 23 responded that their program had had no evaluation component (even though one was listed in the program description); and eight described the evaluation process but did not offer any data. One evaluation was ongoing at the time contact was made, so that program was excluded as well. The remaining 17 programs provided an evaluation document, but two of these evaluations included only a qualitative process with no quantitative component. Thus, only the 15 programs that had some type of measurable evaluation (13% of SBIs for the prevention of violence in Israeli schools) were included in our evidence map.

Figure 1. Flowchart.
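The attrition from the 113 violence-related programs to the 15 in the evidence map can be checked with a few lines of arithmetic. This is a sketch that uses only the counts reported in the text; the variable names and dictionary keys are illustrative labels, not categories taken from the flowchart itself.

```python
# Counts as reported in the text; names are illustrative.
violence_related = 113
no_evaluation_stated = 43

# Programs whose descriptions claimed an evaluation process.
claimed_evaluation = violence_related - no_evaluation_stated

# Outcomes of contacting the providers of those programs.
outcomes = {
    "no_response": 21,
    "no_evaluation_after_all": 23,
    "described_without_data": 8,
    "evaluation_ongoing": 1,
    "document_provided": 17,
}
# The five outcomes should account for every contacted program.
assert sum(outcomes.values()) == claimed_evaluation

qualitative_only = 2
included = outcomes["document_provided"] - qualitative_only

share = included / violence_related
print(f"{included} of {violence_related} programs = {share:.0%}")
# prints "15 of 113 programs = 13%"
```

The five contact outcomes sum to the 70 programs that claimed an evaluation, which is why the check on the total passes.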

Exposed Populations

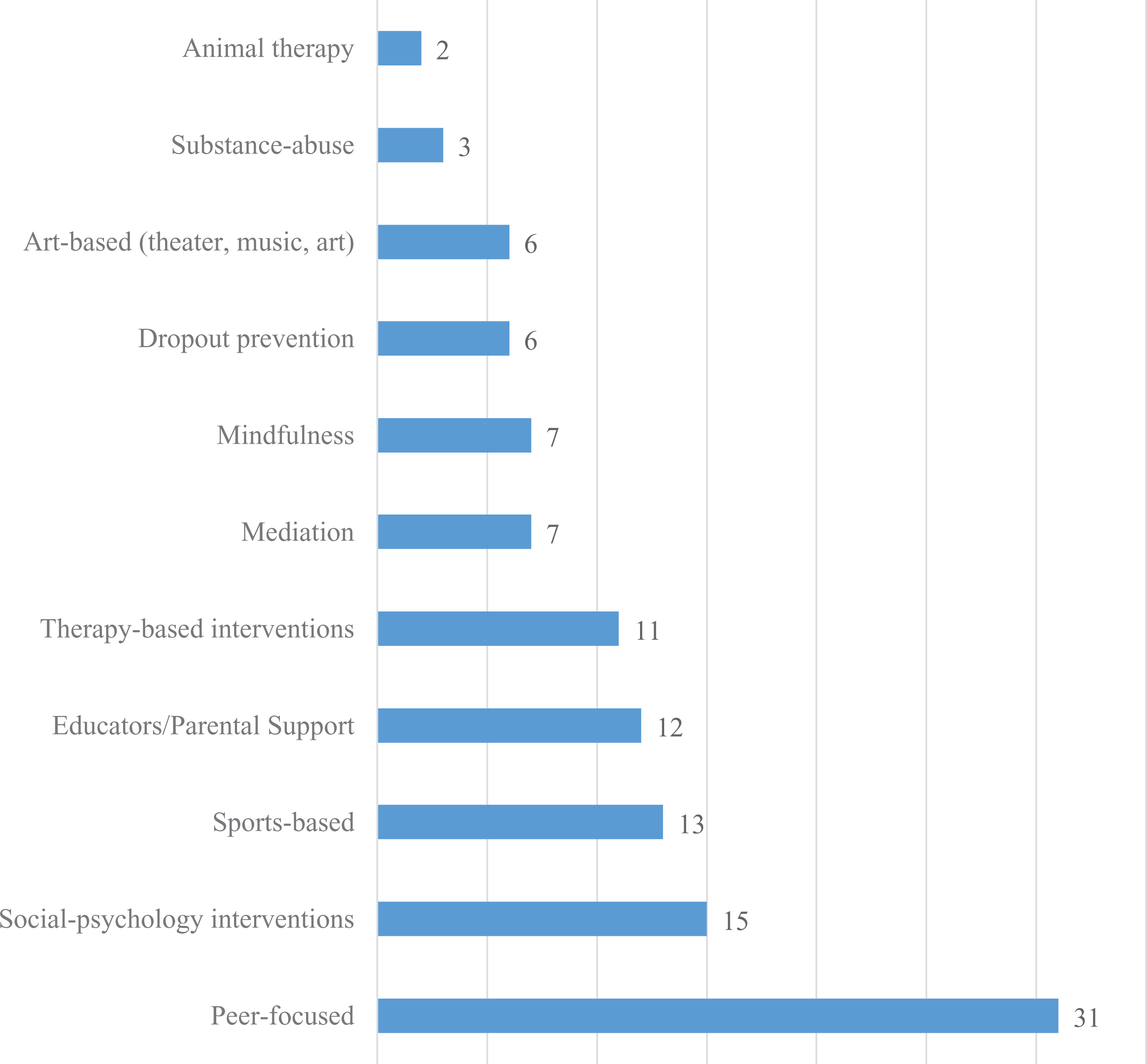

Peer-focused programs are the most prevalent type of violence reduction SBI in Israel, followed by social psychology interventions at the group level and sports-based interventions (see Figure 2). Only a small proportion of the evaluated programs are designed for children aged 3–5 (see Supplemental Materials). The other evaluated programs are distributed equally among the remaining age categories, while most of the unevaluated programs are designed for children aged 6–12. The evaluated programs had been operating for a mean of 11.4 years (SD = 6.3).

Figure 2. Content of violence prevention SBIs in Israel (N = 113).

Classification of Evidence According to the Maryland Scientific Methods Scale

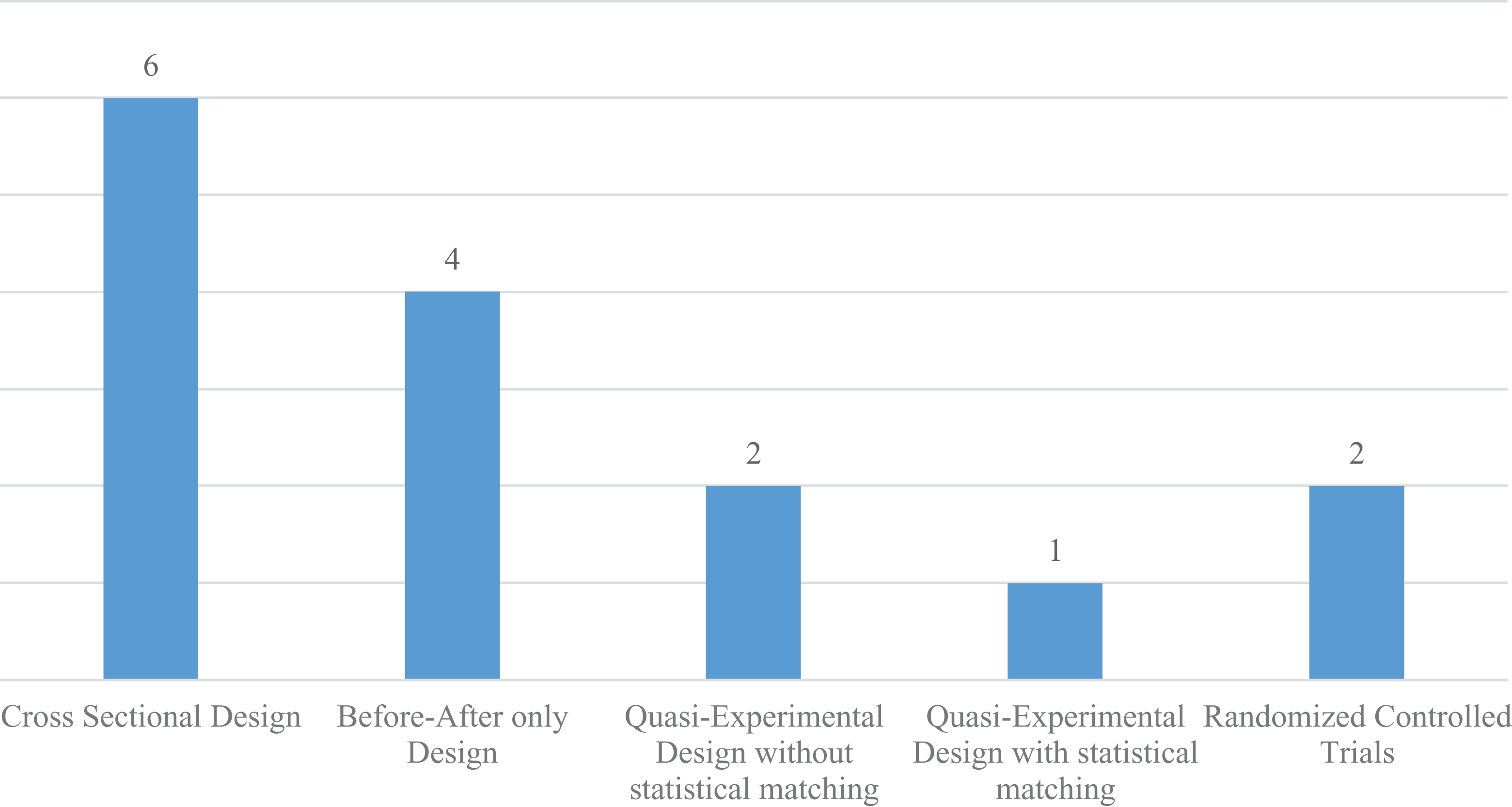

Figure 3 shows the 15 programs in the final sample categorized by methodological rigor. Only two involved RCT designs and could thus be given a score of 5 on the Maryland Scientific Methods Scale. In three other evaluation studies, the methodology design was robust and was scored 3 or 4. Four programs scored only 2 because there was no demonstrated comparability between the treatment and a comparison group. The remainder of the evaluation studies (n = 6) scored 1 because they used a cross-sectional design.

Figure 3. Number of evaluated programs by methodological rigor (Maryland Scientific Methods Scale).
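The distribution of Maryland Scale scores can be tallied directly from the counts given in the text. The sketch below assumes nothing beyond those counts; because the text reports Levels 3 and 4 jointly, they share one key, and the dictionary layout is an illustrative choice.

```python
# Number of evaluated programs at each Maryland Scale level, as reported.
# Levels 3 and 4 are reported together ("scored 3 or 4"), so they share a key.
programs_by_level = {"1": 6, "2": 4, "3-4": 3, "5": 2}

evaluated = sum(programs_by_level.values())
rigorous = programs_by_level["3-4"] + programs_by_level["5"]
violence_related = 113  # all violence prevention SBIs identified in the database

print(f"evaluated programs: {evaluated}")
print(f"Level 3 or higher: {rigorous} ({rigorous / evaluated:.0%} of evaluated)")
print(f"Level 3 or higher: {rigorous / violence_related:.0%} of all {violence_related}")
```

Seen this way, the five sufficiently rigorous evaluations are a third of the evaluated programs but only a small fraction of all violence prevention SBIs.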

What Works and What Doesn’t in SBIs

All but one of the evaluations of the 15 SBIs for violence reduction showed beneficial effects; the remaining evaluation showed no significant differences between treatment and control conditions. However, since “not all evidence is created equal” (Ariel, 2018), we focus on the findings from the five evaluations that have high internal validity, that is, a score of at least 3 on the Maryland Scientific Methods Scale.

Of the five programs in Categories 3–5 of the Maryland Scientific Methods Scale, three (Positive Psychology, Power, Common Elements) have been evaluated by their developers, with the results published in peer-reviewed journals (see Supplemental Materials). The Common Elements program deals with risky behavior through social learning theory (Somech & Elizur, 2012). It includes a high level of parental involvement and is aimed at children aged 3–5. The evaluation study found statistically significant improvement in terms of conduct problems as well as the quality of the parent–child relationship (Somech & Elizur, 2012). An analysis of variance (ANOVA) of the Eyberg Child Behavior Inventory (a measure of child behavioral problems) revealed the following: in the treatment group (N = 125, completers), pretreatment mean = 87.88 (SD = 10.49), posttreatment mean = 78.89 (SD = 12.77), and follow-up mean = 79.84 (SD = 11.96); in the control group (N = 57), pretreatment mean = 88.27 (SD = 13.26), posttreatment mean = 88.11 (SD = 12.80), and follow-up mean = 86.69 (SD = 16.99). The effect size reported with the posttreatment comparison was ES = .70 (p < .001). Interestingly, conduct problems were found to be mediated by parental behavior.
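To give a sense of the magnitude behind these figures, a standardized mean difference can be computed from the reported posttreatment means and standard deviations. The sketch below uses Cohen's d with a pooled standard deviation, which is an assumption made here for illustration; the source does not state how its ES = .70 was derived, so the result need not match it exactly. The function names are hypothetical.

```python
from math import sqrt


def pooled_sd(n1: int, sd1: float, n2: int, sd2: float) -> float:
    """Pooled standard deviation of two independent groups."""
    return sqrt(((n1 - 1) * sd1**2 + (n2 - 1) * sd2**2) / (n1 + n2 - 2))


def cohens_d(mean_control: float, mean_treatment: float, sd: float) -> float:
    """Standardized difference; positive when the treatment mean is lower."""
    return (mean_control - mean_treatment) / sd


# Posttreatment Eyberg Child Behavior Inventory values reported in the text.
treat_n, treat_mean, treat_sd = 125, 78.89, 12.77
ctrl_n, ctrl_mean, ctrl_sd = 57, 88.11, 12.80

sd = pooled_sd(treat_n, treat_sd, ctrl_n, ctrl_sd)
d = cohens_d(ctrl_mean, treat_mean, sd)
print(f"pooled SD = {sd:.2f}, d = {d:.2f}")
# prints "pooled SD = 12.78, d = 0.72"
```

The value of roughly 0.72 is close to the reported ES of .70; the small gap may reflect a different estimator, such as one based on the ANOVA or adjusted for pretreatment scores.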

In the Power program, the operative model provides life skills, such as self-control, through cognitive behavioral treatment. The program was applied in small groups, with teachers as facilitators of the intervention. An ANOVA of teacher-reported student aggression (T-Agg) as the dependent measure yielded a significant Time × Group interaction, F(1, 310) = 17.77, p < .001. The self-reported student Aggression Questionnaire (total AGQ) measure also yielded a significant Time × Group interaction effect, F(1, 375) = 6.22, p < .01. That is, the intervention group (N = 167) showed a significantly greater reduction in aggression compared to the control group (N = 280; Ronen & Rosenbaum, 2010). In a follow-up study conducted 1 year after the conclusion of the program, its effects persisted, though with some treatment decay (Sherman, 1990).

The Positive Psychology program is based on Seligman’s PERMA model (Positive emotions, Engagement, Positive relationships, Meaning, and Achievement). This multidimensional model implements positive psychology while using emotional learning strategies. Operationally, the program teaches social skills and life skills by strengthening resilience factors. It was found to have positive effects on all the outcome measures examined: subjective well-being, peer relations, emotional engagement, and cognitive engagement. Follow-up studies found that the positive effects of the program steadily diminished; 1 year after its conclusion, no significant differences in school attendance were found between the treatment and control groups. Using hierarchical linear modelling for the peer-relation outcome variable, the treatment group (N = 1,262) showed linear growth trajectories (b = .062, p < .001) from the beginning of the program to 1 year after it concluded (mean change = 2.35, d = 0.39), with a small deceleration rate in the follow-up year (b = −.011, p < .001), whereas the control group did not show a significant change over time (Shoshani et al., 2016).

The Mindfulness for Junior High School and Big Kids Don’t Drink programs are rated as Level 3 on the Maryland Scientific Methods Scale. Mindfulness exercises (Tang et al., 2015) help self-regulation and emotional regulation processes. They aim to improve the ability to relax, intensify prosocial behavior and feelings of happiness, and enhance empathy (Greenberg et al., 2003; Masten & Motti-Stefanidi, 2009; Metz et al., 2013). Similarly, prevention strategies integrated in the Big Kids Don’t Drink program were also found to be effective.

The evaluation of the Mindfulness for Junior High School program was conducted in the context of an MA thesis (Vaisfailer, 2017) and did not find a significant treatment effect. The evaluation of the Big Kids Don’t Drink program found a statistically significant change in the subjective norms of the students and in their perception of control regarding drinking alcohol (in the treatment group [N = 970], posttreatment mean = 3.38, SD = 0.65, t = −4.77, p < .001; in the control group [N = 152], posttreatment mean = 3.32, SD = 0.72, t = 0.88, p = .376). However, significant differences in attitudes toward drinking alcohol and parental involvement were not found between the treatment and control groups.

Discussion

State agents are increasingly receptive to “evidence” when considering which policies and programs to implement. Evidence-based policy making is common in medicine, public health, and engineering, as well as in the pharmaceutical industry, and more recently, it has been adopted in other areas of government business (see Lum et al., 2012; Telep, 2017). “Professional judgment” not supported by evidence is increasingly being challenged, and public policies that lack impact evaluations seem to be on the wane, in certain parts of the world, especially English-speaking countries (Lum & Koper, 2015). The evidence-based policy paradigm calls for the use of rigorous, systematic, and objective research methods to determine which prevention programs are effective, that is, what works, what doesn’t work, and what is or looks promising (Farrington et al., 2002; Royse et al., 2015; Sherman et al., 1998). The implementation of programs that have never been scientifically or effectively evaluated wastes limited budgets and undermines the status of prevention science (Horner et al., 2019; Mihalic & Elliott, 2015).

However, our evidence map suggests that SBIs that aim to reduce school violence in Israel are not properly appraised. After mapping all the eligible SBIs, we identify an alarming lack of rigorous evaluation research on SBIs in Israel. Indeed, the overwhelming majority of the programs have never been assessed. Thus, we cannot quantify the intervention effects and their dispersion patterns nor can we compute valid cost-to-benefit ratios. Our primary conclusion is therefore that there is limited evidence of the effectiveness of SBIs on violence prevention in Israeli schools.

However, we cannot conclude that the SBIs are ineffective either. Many programs are founded on research-heavy methods such as cognitive behavioral therapy (CBT; Butler et al., 2006) or mindfulness-based therapy (Hofmann et al., 2010). It is likely that many SBI treatment providers implement the treatments with fidelity and that school violence has decreased due to these interventions. However, proper tests remain a basic requirement of the scientific method. We want to know, rather than assume, that the interventions are impactful. As there are distinct differences between cultures, societies, and settings, the Israeli pupils and the schools they attend may react differently to SBIs based on CBT or mindfulness than students in other countries. The treatments could result in different outcomes under certain conditions, and we currently cannot compare the relative utility of any single SBI, as there is insufficient data in the research notes associated with the SBIs to synthesize the information. To make things worse, those programs that have been evaluated are generally characterized by inadequate methodological rigor (i.e., lower than Level 3 on the Maryland Scale; see Figure 3).

Given the existing body of evidence-based knowledge on SBIs and the growing effort to provide convenient access to it, the lack of evidence-based SBIs in Israel raises two fundamental questions. First, as most SBIs for violence prevention are based on approaches developed and tested in English-speaking locales, it is not immediately clear whether Israeli practitioners can blindly rely on this body of research. Our position is that the creation of evidence-based knowledge is needed at the local level (national, regional, and school district) because “the development of evidence-based knowledge […] can benefit from the use of comparative effectiveness research strategies using a range of research methods tailored to specific questions and resource requirements, and which examine effectiveness and cost-effectiveness” within the unique conditions in which these strategies are applied (Mullen, 2014, p. 59). We stress that, under certain conditions, knowledge from one area can be translated to other settings, but the risk seems unjustified, if not unethical, given the target population (Weisburd, 2003; Astor et al., 2010). Therefore, the first step toward establishing an evidence-based culture in school-based violence prevention in Israel is not just to embrace a strong evidence-based policy rhetoric but also to increase the number of controlled studies on SBIs.

Goldacre (2013) offers some insights and calls for making assessment a prerequisite for the implementation of interventions at the local level. Decision makers should not assume that results from one country are valid for all countries; what works in Scandinavian countries, for example, may not work in countries in the Middle East. Evidence gathering on effectiveness should become part of everyday practice, so that practitioners have access to scientific knowledge about what works at the local level and can draw on it when deciding which interventions to incorporate into practice.

The second, wider question is why there is such a paucity of rigorous impact evaluations of SBIs in Israel. We speculate that the reasons for this state of affairs are endemic, and our findings lead us to express a growing concern about the lack of rigorous causal research on Israeli policies, including policing and corrections (Jonathan-Zamir et al., 2019; Weisburd & Hasisi, 2018). There is a cultural block in Israel when it comes to implementing evidence-based practices because professional experience, tradition, and logic seem to be valued above independent research. Creating the infrastructure for evaluation research does not seem to be a main feature of policy making, save for the biomedical and IT professions, which mandate “evidence” as part of the decision to implement initiatives. The police, the prison system, rehabilitation services, and now SBIs for violence prevention are not characterized by a systemic endorsement of evaluative research as part of the decision-making process. Until these systems mimic the implementation of preventative programs in other countries (see Biesta, 2010; Gorard et al., 2017; Slavin, 2002), or in other disciplines, we will not be able to quantify the utility of state-sponsored interventions to prevent violence in schools.

We stress that our concern is not just in terms of scope (i.e., the number of available evaluative studies) but also in terms of quality (i.e., how few of these studies are rated at least Level 3 on the Maryland Scale). Evidence-based policy requires a continuous dialogue between research and practice, at least some of which must be founded on controlled experiments with the random allocation of units into treatment and control conditions (Shadish et al., 2002). The scarcity of randomized controlled trials is troubling, given the known issues with observational research and the methodological superiority of randomized trials for impact evaluations (see Cook & Campbell, 1979; Farrington et al., 2002).

Future research should attempt to understand the barriers to engaging with research, as tackling these obstacles may foster a transition to a culture of governance in which policies are supported by evidence (Cooper et al., 2009; Horner et al., 2019; Levin, 2011a, 2011b; Velle, 2015). Research focusing on the attitudes of practitioners, policy makers, and researchers toward the use of evidence-based practice is needed as well (Levin, 2011a, 2011b).

Conclusions

Our evidence map of Israeli SBIs for violence prevention unearthed only 15 quantitative assessments associated with these programs (out of a total of 113), with generally weak research designs in terms of causal inference. Only five completed evaluations had reasonable controls to address threats to the internal validity of the tests. More causal research is needed, but until such a body of evidence is produced, Israeli SBIs would benefit from a clearer understanding of the antecedents that have led to this paucity of rigorous impact evaluations.

Supplemental Material

Supplemental Material, ICJR_suppl_mat for Evidence Map of School-Based Violence Prevention Programs in Israel by Hagit Sabo Brants and Barak Ariel in International Criminal Justice Review

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.
