Abstract

In this article, recent developments in the assessment of writing, especially informational writing, are connected with research-based instruction that is likely to have an impact on students with disabilities and the wide range of student writing development in Grades 3 to 8. The objectives are for educators to assess, interpret, and set goals for five components of writing that have the greatest influence on informational writing development and connect directly with instruction. The five components are: informational text structure, vocabulary, typing fluency, spelling, and sentence accuracy. Educators are introduced to instructional routines that can be embedded in any curriculum within general education or special education programming.

Addressing students’ needs in writing is imperative, as only 5% of students with disabilities and fewer than 25% of elementary and middle school students perform at proficient levels in writing on the National Assessment of Educational Progress (U.S. Department of Education et al., 2012). Furthermore, students with language-based learning disorders such as dyslexia and developmental language disorder (DLD) can be taught to write when instruction addresses their development in word, sentence, and discourse levels of written and oral language (Berninger & O’Malley May, 2011; Hebert et al., 2018; Williams et al., 2013). Given that students demonstrate wide-ranging achievement in writing, it is very challenging for educators to know where to start. Some students are still learning to write their letters, others are writing sophisticated essays, and many fall somewhere in between. Complicating matters, writing is a multidimensional process, involving knowledge, skills, strategies, and motivation (Graham et al., 2012). Educators frequently ask which components to address in more explicit instruction and where to spend more time in instruction, especially for students with and at risk of learning disabilities (Berninger & O’Malley May, 2011). Further complicating matters, students are expected to write in three different genres (i.e., opinion/persuasive, informational, and narrative), and the requirements for instruction differ across those genres (Graham et al., 2015).

This article provides educators with steps to assess and score informational essays using an assessment protocol. This protocol is used as a diagnostic tool and is directly aligned to research-based writing instructional practices. To guide educators on “where to start” with instruction for developing writers, the assessment protocol and its alignment with an empirically validated theory of writing development are discussed next.

The Writing Architect

The Writing Architect project innovated writing assessment by identifying five high-leverage components of writing (where students are most likely to benefit from more instruction) and directly linking those five components to research-based instruction (Collins et al., 2021; Sarmiento et al., 2024; Truckenmiller et al., 2022). The five components are: (a) the text structure elements of an informational essay, (b) vocabulary (defined as word complexity), (c) spelling (defined as word accuracy), (d) sentence accuracy, and (e) typing fluency. These five components are also highlighted as critical areas of explicit instruction needed for students with dyslexia and DLD (Adlof & Hogan, 2018; Catts et al., 2005). The five components align closely with an empirically validated model of how writing develops, the Direct and Indirect Effects of Writing (DIEW) model (Kim & Graham, 2022).

In their research, Kim and colleagues demonstrated how the different components of writing knowledge influence each other to form a complete written composition in the DIEW model (Kim & Graham, 2022). The DIEW model has extensive empirical support (data collected on hundreds of students) for identifying the components with the greatest direct influence on writing and the components that have an important indirect influence. The DIEW model demonstrates the relations among: (a) socio-emotional factors like motivation and self-concept; (b) content knowledge; (c) the interaction of phonology, orthography, morphology, and handwriting/typing to produce the “code” or spelling of words; (d) understanding the meaning of words (vocabulary) and how to build a meaningful sentence (grammar); (e) the structure of discourse at the paragraph and text level; (f) cognitive and regulation skills, such as reasoning, perspective taking, and inferencing; and (g) executive functions, such as working memory, cognitive shifting, and attention (Kim & Graham, 2022). Kim and Zagata (2024) provide detailed explanations of how each component of the model influences the others and also co-occurs with reading development.

The five components identified in the Writing Architect project are aligned with components c, d, and e in the DIEW model. These five components represent the strongest place to start with writing instruction for three reasons. First, the five components are highly correlated with valued writing outcomes (Collins et al., 2021; Sarmiento et al., 2024; Truckenmiller et al., 2022). Second, the five components represent the two strongest pillars in the DIEW model: the code pillar and the language comprehension pillar. Finally, these two pillars are necessary in assessment and intervention for two of the most common learning disabilities: dyslexia (needs code-focused instruction) and DLD (needs language comprehension instruction; Adlof & Hogan, 2018; Catts et al., 2005). Students with dyslexia have a similar trajectory in learning to write as other students, but are in an earlier developmental stage in their code skills development. Students with DLD have a similar trajectory in learning to write as others, but are in an earlier developmental stage in their vocabulary, sentence construction (grammar), and/or text structure development. Therefore, understanding where students are in their development of the code pillar for writing (spelling and typing fluency/handwriting fluency) and the language pillar (vocabulary, sentence construction, and text structure) is important for most students and especially students with learning disabilities. Although the other components of the DIEW model should not be ignored, the protocol of assessment and instruction presented here focuses on these five components because a large number of students with disabilities and students performing below grade level cannot build on foundational written language skills that have not been adequately taught.

To translate the DIEW model into classroom-based assessment and instructional routines, the Writing Architect team conducted a series of studies. The findings resulted in a new approach to classroom-based writing assessment (the five components identified above) and a curated repository of research-based practices that align with the assessment results. The Writing Architect team created a web-based application that integrates an assessment platform, a scoring platform, and an instructional repository around the five components of writing. The Writing Architect web application will be available as an open-source application in 2026 for any large education agency to host and use for free. However, it will require considerable resources (technology expertise and systems-level literacy expertise) for the education agency to launch the application. To facilitate free use by smaller organizations and individual educators, a free protocol was created to guide educators on how to implement the assessment and associated instruction themselves. The protocol with administration instructions is available at writingarchitect.org, where educators can register their email address to gain free access to materials and receive updates when new materials are added. Before delving into how to use the assessment protocol, a discussion of the protocol in the context of a coordinated multi-tiered system of supports (MTSS) follows.

Assessing Writing Within an MTSS Framework

When implementing writing instruction within an MTSS framework, schools create a set of decision rules for providing increasingly intensive intervention to students based on students’ performance on a coordinated set of assessments. The coordinated assessments needed in an MTSS framework include benchmarking, progress monitoring, and diagnostic assessment.

Benchmarking

Unlike reading and math, there are no commercial assessments of writing that have adequate classification accuracy statistics predicting end-of-year expectations (i.e., state tests). In Grades 3 through 8, students’ performance on the previous year’s state test of writing should be used to determine which students need additional instruction, and the percentage of students scoring below proficiency should be used to determine the adequacy of Tier 1 instruction at the school. For monitoring students’ improvement across the school year, some schools use a rubric and report the rubric scores each marking period, or they monitor with curriculum-based measurement in written expression (CBM-WE) three times per year. The protocol described in this article could replace the rubric or CBM-WE process in grades 4 through 8 for the students who score below proficiency on the Writing Architect protocol.

Progress Monitoring

For students receiving more intensive intervention (Tiers 2/3 or through an Individualized Education Program [IEP]), MTSS frameworks recommend that schools progress monitor more frequently than three times per year. For writing, there are currently no studies to suggest that weekly progress monitoring will detect growth in writing in Grades 3 through 8. At least three studies demonstrate that the slope of growth is zero or negative when intervention is not occurring (Romig & Olsen, 2021; Truckenmiller et al., 2014; Valentine et al., 2021). In light of the current state of research, weekly progress monitoring in writing is not recommended. Rather, when implementing an intervention, effects can be detected by administering a pretest and posttest. For example, if the informational writing unit is 6 weeks in length, educators may consider administering an assessment before and after the writing unit. For students with IEPs, educators may consider administering an assessment at the end of each reporting period (i.e., every 9 weeks). Valentine and Truckenmiller (2025) provide details about writing IEP goals and objectives with the components of writing described in this article. For Kindergarten through second grade, more frequent progress monitoring has been effective, but only within the context of an evidence-based transcription (i.e., handwriting and spelling) intervention (see Poch et al., 2021).

Diagnostic

The most likely reason that writing assessment studies show little growth is that the writing assessment may not provide enough information to guide educators in selecting the most effective instruction/intervention for their students. Diagnostic assessment provides information that is more intuitively linked with instructional practices. In the MTSS context, diagnostic assessment is not for diagnosing a disability, but rather for “diagnosing” what skills, knowledge, strategies, or motivation are needed for any individual or group of students (Graham et al., 2012). Because writing is such a complex skill, diagnostic writing assessment may be the most useful type of assessment for improving outcomes for students (Truckenmiller et al., 2022). Diagnostic assessment is the type of assessment described here.

Assessing Writing

School-created rubrics are the most common form of writing assessment. Although rubrics provide a valid holistic evaluation of writing, they typically have vague descriptions that make it difficult for students or teachers to see incremental progress (Truckenmiller et al., 2022). Instead of a rubric, several studies have found instructional advantages and measurement advantages by using frequency counts within a CBM framework. The Writing Architect protocol adopts a CBM framework and uses frequency counts (e.g., the number of complex vocabulary words).

Curriculum-based measurement in written expression was created to detect incremental growth in writing to be used in Individualized Education Program goals and to guide decisions in response to instruction (Espin et al., 2005). A CBM-WE probe consists of a prompt, a brief amount of time to write (e.g., 3–5 minutes), and one or more scores that involve counting what the student produced (e.g., counting words or correct sequences). The prompts and scores are flexible to match students’ developmental stage and the writing expectations in the curriculum (Espin et al., 2005; Truckenmiller et al., 2020).

For example, in earlier grade levels, a narrative prompt with three minutes of writing time, scored by counting the number of correct sequences and subtracting the number of incorrect sequences (called CIWS), had the greatest evidence of predictive validity (Romig et al., 2017). However, students are expected to write more informational writing as the grade levels progress (National Governors Association [NGA] & Council of Chief State School Officers [CCSSO], 2010). The Writing Architect protocol is the first set of CBM prompts to match the expectation that students respond to an informational passage and that students type their response (Truckenmiller et al., 2020). It is essential to use an informational prompt because the expectation on most state tests is that students can type an essay in response to an informational passage starting in grade 4. Furthermore, there are different structure expectations in narrative versus informational essays, different instructional practices, and differential performance by students depending on the genre assessed (Graham et al., 2015; Valentine et al., 2021).

Administering Informational Writing Prompts

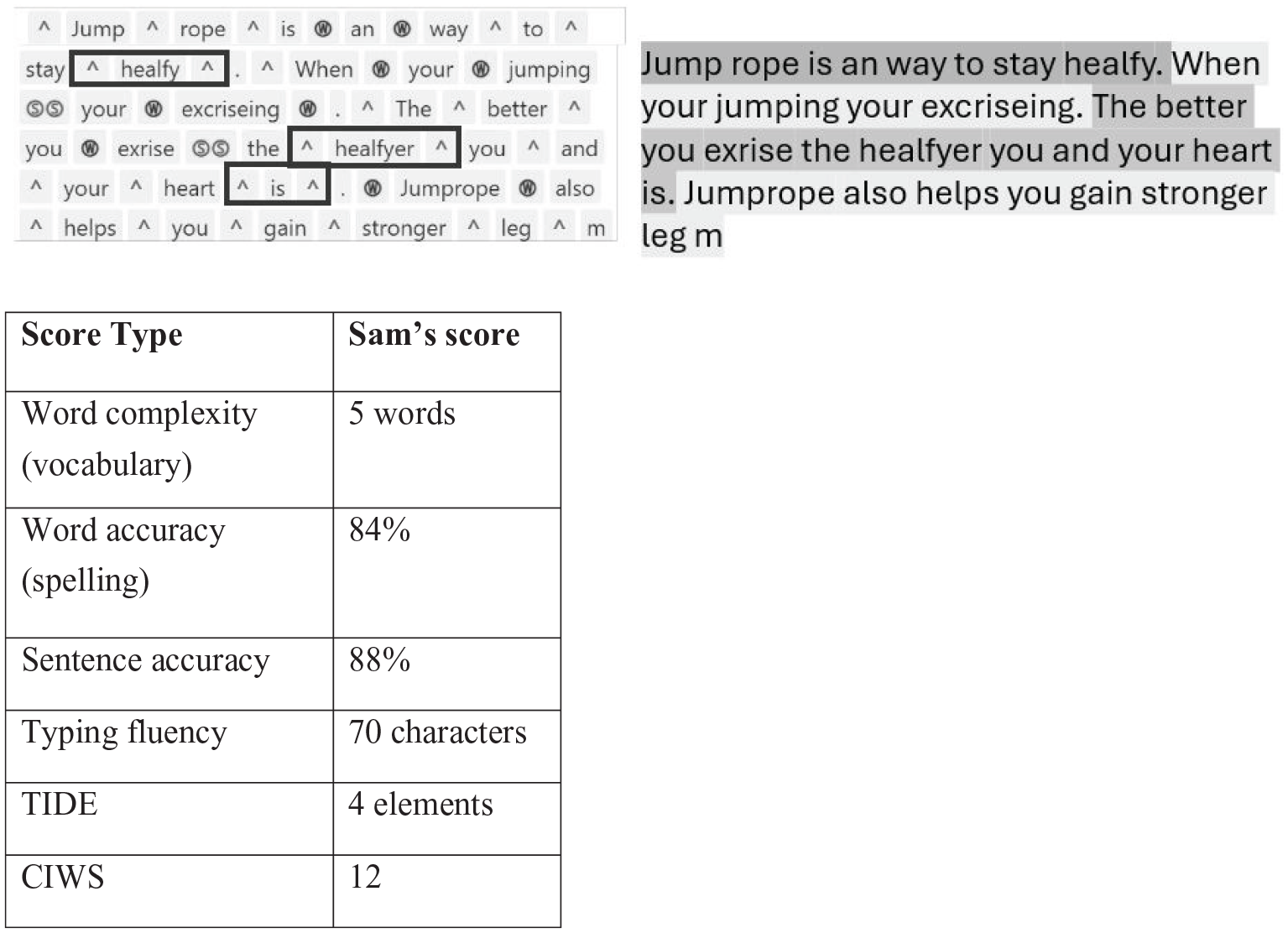

Although the Writing Architect provides a web application to administer the CBM-WE prompt and assist with scoring, any educator could create their own informational passage prompts with an associated question. The protocol with administration instructions is available at writingarchitect.org. Administration involves reading aloud an informational passage (from social studies or science curriculum or from an English Language Arts curriculum that emphasizes knowledge building) and asking the student a question about that passage, followed by 3 minutes of planning time and 15 minutes of typing the essay. If using a word processing program like Microsoft Word or Google Docs for recording the essay, spelling and grammar checks should be turned off so that students’ spelling and grammar can be assessed accurately without the use of accommodations. These checks can be turned off because the purpose of this assessment is not for accountability, but rather to inform instruction. After completing the essay, students are given 90 seconds to copy a paragraph. A paragraph with 147 words was used for the typing fluency prompt. The essay is scored for four components, and the copied paragraph is scored for typing fluency. Figure 1 contains a scored example for Sam, a fifth-grade student who needs additional instruction to meet end-of-grade goals.

Figure 1. Example of Essay Scoring for Sam.

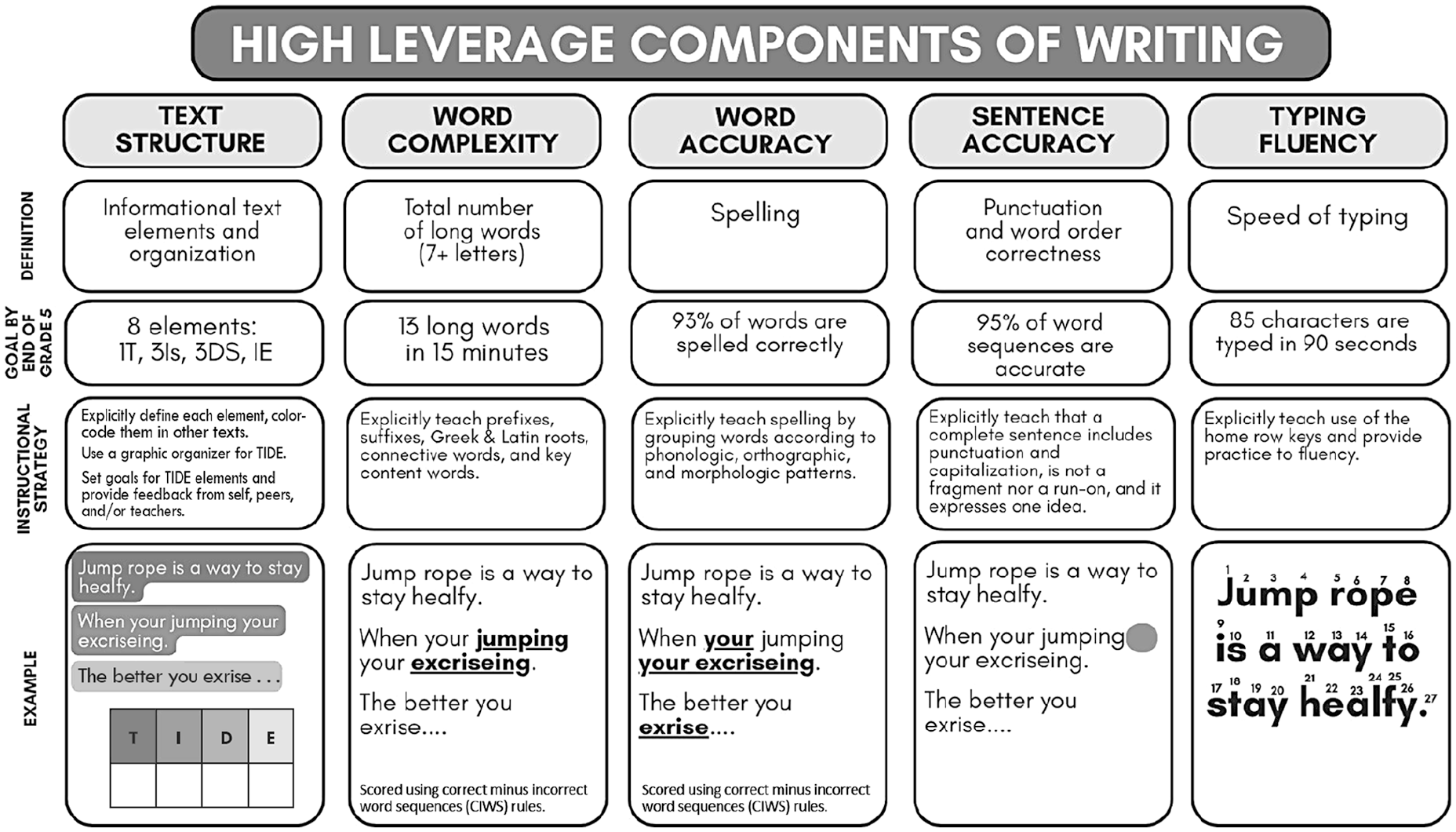

Using Sam’s writing sample in Figure 1, the next section includes information on (a) how to score each of the five components, (b) interpretation of the score for instruction, and (c) research-based instructional strategies that have had an impact on that component of writing. Figure 2 summarizes each of the five score types for educators to use as a quick reference. Figure 2 also provides a goal score for the end of elementary school (i.e., end of grade 5) for each component skill to make it more concrete and feasible for teachers and students. The goals in Figure 2 were based on an unpublished analysis of the scores associated with passing the Michigan 5th grade state English Language Arts test. As such, they should be treated as a general guide and not as strict cut points. Goal scores for the end of middle school (i.e., grade 8) are planned for a future analysis. Goal scores are not provided for each grade level because writing grows in much smaller increments in late elementary and middle school and written essays are only measured on state tests once at the end of elementary and once at the end of middle school, so the goals are set to those grade levels accordingly (Truckenmiller et al., 2020).

Figure 2. Graphic Representation of How the Five Components of Writing Are Assessed and Connected to Instruction.

Scoring, Interpreting, and Teaching Text Structure

Scoring

To measure students’ ideas and organization of the ideas for an informational text, the number of informational essay elements is counted. Students receive one point for each of the following if they are present: Topic sentence, Ideas, Details, and Ending (TIDE). The student can receive a second point for each element when the element is sophisticated. If a student includes multiple ideas and multiple details, they receive points for each idea and detail. In Figure 1, Sam received one point for a Topic Sentence (i.e., “Jump rope is an way to stay healfy.”), two points for two Ideas, one point each (i.e., “When your jumping your excriseing.” and “Jumprope also helps you gain stronger leg. . .”), and one point for one Detail (i.e., “The better you exrise the healtfyer you and your heart is.”) for a total of four points. The TIDE total score is highly correlated with performance on a state test and nationally normed assessments of writing (Truckenmiller et al., 2023).
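The counting rule above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the Writing Architect scoring tool: the `Element` class and `tide_score` function are hypothetical names, and whether an element counts as "sophisticated" remains a rater's judgment that the code simply records.

```python
from dataclasses import dataclass

VALID_KINDS = {"topic", "idea", "detail", "ending"}  # the TIDE elements


@dataclass
class Element:
    kind: str                    # one of VALID_KINDS
    sophisticated: bool = False  # rater's judgment; earns a second point


def tide_score(elements):
    """One point per element present, plus one more if it is sophisticated."""
    total = 0
    for element in elements:
        if element.kind not in VALID_KINDS:
            raise ValueError(f"unknown element kind: {element.kind}")
        total += 2 if element.sophisticated else 1
    return total


# Sam's essay: one topic sentence, two ideas, one detail, none rated sophisticated.
sam = [Element("topic"), Element("idea"), Element("idea"), Element("detail")]
print(tide_score(sam))  # 4
```

Keeping each idea and detail as its own entry mirrors the rule that students receive points for every idea and detail they include.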

Interpreting

Text structure refers to the organization of elements (i.e., ideas) in a piece of writing that are specific to the genre (e.g., informational, narrative, persuasive). Although every subgenre (e.g., compare/contrast, description) of writing has a different structure, the TIDE structure is a useful place to start because it has high utility for most informational writing, including science and social studies content areas, and most subgenres are some variation of TIDE (Collins et al., 2021). In writing development, TIDE aligns with the discourse language component of DIEW, which is the strongest direct predictor of overall writing quality and therefore should be prioritized for instruction (Kim & Graham, 2022). In Figure 1, Sam included four structural elements of an informational text. Because effective essays typically include a topic sentence, three ideas with three associated details, and an ending, Sam would benefit from additional explicit instruction in the components of an informational essay.

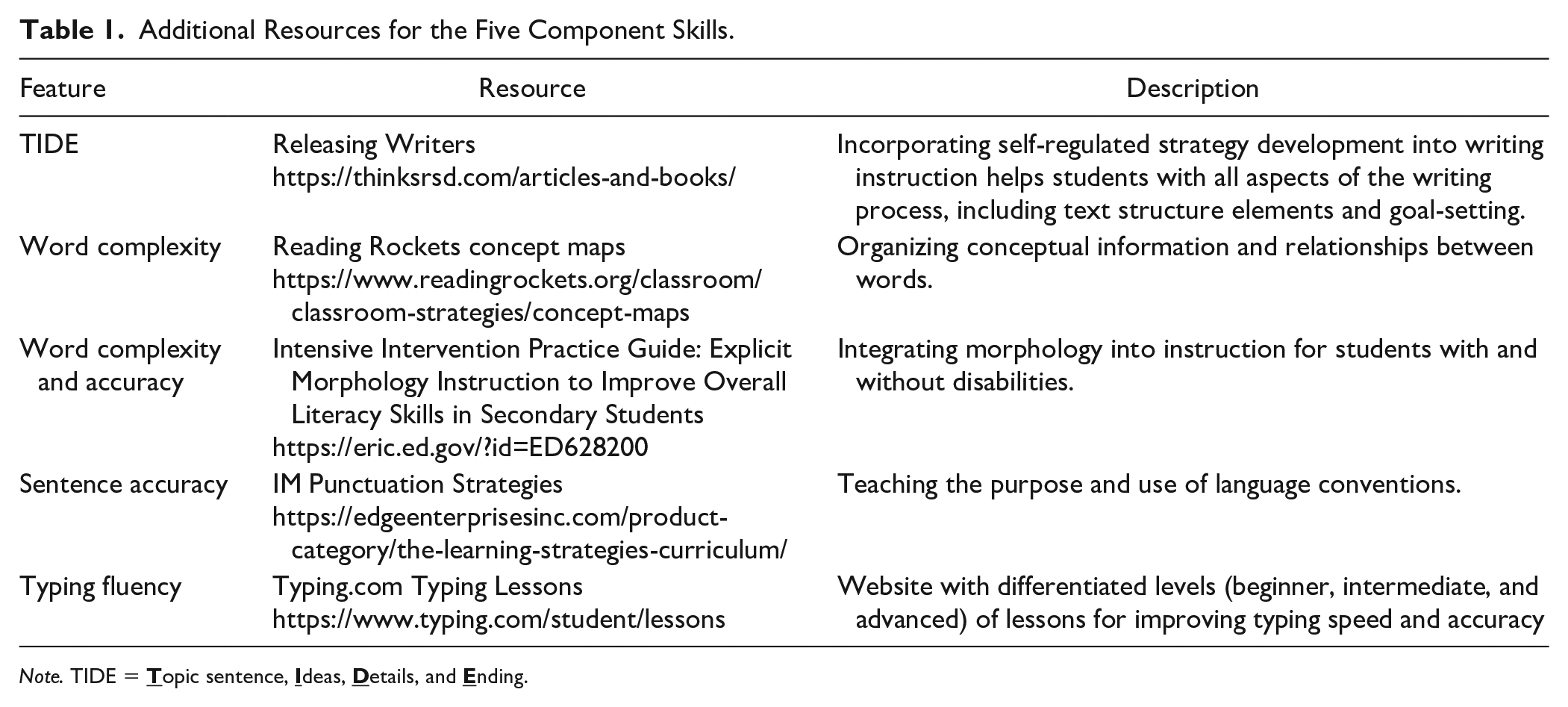

Teaching

Explicit instruction for text structure begins with students examining an exemplar essay to identify the text structure elements, examining their own previous writing for those elements, learning to use a graphic organizer for their next essay, and finally writing and organizing their own essays with this structure (Laud & Patel, 2023). This instructional cycle should be repeated with teacher feedback as students gain independence and fluency in their own writing. Teaching the TIDE structure is most effective when it is used within self-regulated strategy development (SRSD; Collins et al., 2021). More resources can be found in Releasing Writers (Laud & Patel, 2023) and ThinkSRSD.com (ThinkAUM, 2024). These additional resources are gathered in Table 1 for all five component skills.

Table 1. Additional Resources for the Five Component Skills.

Note. TIDE = Topic sentence, Ideas, Details, and Ending.

Scoring, Interpreting, and Teaching Word Complexity

Scoring

Word complexity (a dimension of vocabulary) is measured by counting the total number of long words—words with seven letters or more, regardless of spelling accuracy. A recent study showed that the number of long words is more related to students’ writing performance than the most popularly used measures of vocabulary (Sarmiento et al., 2024). The number of long words can be hand-scored by counting the long words, or it can be automatically scored by a computer using a Python script available for free at writingarchitect.org. In Figure 1, Sam had five long words, namely “jumping,” “exercising,” “healthier,” “jumprope,” and “stronger.” Note that words do not need to be spelled correctly to count as long words. It is better for students to attempt to use more complex vocabulary words and spell them incorrectly than to avoid more complex words because they cannot spell those words.
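As an illustration of the long-word count, here is a minimal Python sketch. It is not the script distributed at writingarchitect.org. The function name `long_words` and the tokenizing rule (any run of ASCII letters counts as a word) are assumptions made for this example. The sample text contains only the excerpts from Sam's essay quoted in this article, not the full essay.

```python
import re


def long_words(text, min_letters=7):
    """Return words with at least `min_letters` letters, ignoring spelling accuracy."""
    # Assumption: a "word" is any run of ASCII letters, so punctuation,
    # apostrophes, and hyphens split tokens.
    tokens = re.findall(r"[A-Za-z]+", text)
    return [token for token in tokens if len(token) >= min_letters]


# Excerpts from Sam's essay as quoted in the article (original spelling kept).
excerpts = (
    "Jump rope is an way to stay healfy. "
    "When your jumping your excriseing. "
    "Jumprope also helps you gain stronger leg "
    "The better you exrise the healtfyer you and your heart is."
)

found = long_words(excerpts)
print(found)       # ['jumping', 'excriseing', 'Jumprope', 'stronger', 'healtfyer']
print(len(found))  # 5
```

Because the count is based on length alone, misspelled attempts such as "excriseing" still earn credit, which matches the intent of rewarding attempts at complex vocabulary.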

Interpreting

In Sam’s essay, the long words were key concept words. Use of these words demonstrates Sam’s understanding of the relationship between the key concepts of “exercise” and “health,” through the mechanism of “jumping.” Sam correctly showed the relationship between these concepts despite not being able to spell them, which is an excellent developmental step that should be encouraged. When students are given more opportunities to write about informational concepts, they can reinforce and/or question how the concepts are related to each other. Informational writing especially requires more abstract, technical, and precise words, often referred to as academic language. Therefore, students need their educators to provide more opportunities to write about science, social studies, and math concepts so that students can use the concept words they are taught.

Teaching

Teaching vocabulary can be daunting because there are millions of words; it requires intensive instruction to master just a few words; and many students seem to acquire hundreds of words a week without explicit instruction. With these considerations in mind, we point to three more generative ways of approaching vocabulary than lists of words (Sarmiento et al., 2024).

First, teach connective words (e.g., therefore, however, for instance) by pointing them out in text, defining what they mean, and explicitly discussing how they show relationships between ideas in a text. Second, use concept maps to show how vocabulary and concepts within a text relate to each other (Schroeder et al., 2018). Third, teach students the morphemes (i.e., prefixes, suffixes, Greek and Latin roots, inflectional endings) within words by peeling away morphemes from words, adding morphemes to words, or swapping out morphemes in words to change the meaning of the whole word (Fallon & Katz, 2020; Hennenfent et al., 2022). To illustrate, a teacher may embed morphology instruction in a science unit on photosynthesis, which is an important discipline-specific word necessary for understanding the content. The morphemes in this word (e.g., photo-, synth-, -sis) may be encountered in previous or future units. The teacher may ask students to find the meaning of each morpheme in the word and, given the meaning of these word parts, generate a definition for the whole word (e.g., photo- means light, synth- means to put together, and -sis means a process). Therefore, the word photosynthesis refers to an assembly process using light.

Scoring, Interpreting, and Teaching Word Accuracy

Scoring

Word accuracy is synonymous with spelling. To score word accuracy, any word that is misspelled or has missing capitalization is marked as inaccurate with the ⓦ symbol. The total number of words misspelled is divided by the total number of words the student wrote and subtracted from 100% to get the percentage of words spelled correctly. For example, in Figure 1, Sam misspelled five words (e.g., “an,” “your,” and “exercise”) and wrote a total of 32 words. Applying the formula, Sam has a total of 84% of words spelled correctly. The word “healfy” is addressed in the cultural responsiveness section later.

Interpreting

The percentage of words spelled correctly in a student’s informational written composition is an adequate representation of their overall spelling abilities (Truckenmiller et al., 2022). When word accuracy is below about 93%, it can constrain students’ production of their ideas (Sumner et al., 2013). Imagine the frustration and fatigue Sam might be feeling having to interrupt their train of thought at roughly every sixth word (84% accuracy) to figure out the spelling of the word.
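The word accuracy formula and the approximate 93% guideline can be worked through for Sam's numbers as follows. This is a sketch under stated assumptions: `word_accuracy` and `CONSTRAINT_THRESHOLD` are hypothetical names, the threshold is a rough guide rather than a cut point, and truncating to a whole percent is an assumption chosen because it reproduces the 84% reported for Sam.

```python
import math

# "About 93%" in the text; used here only as a rough guide, not a strict cut point.
CONSTRAINT_THRESHOLD = 0.93


def word_accuracy(misspelled, total_words):
    """Proportion of words spelled correctly: 1 - misspelled / total_words."""
    if total_words <= 0:
        raise ValueError("total_words must be positive")
    if not 0 <= misspelled <= total_words:
        raise ValueError("misspelled must be between 0 and total_words")
    return 1 - misspelled / total_words


sam_accuracy = word_accuracy(misspelled=5, total_words=32)
print(sam_accuracy)                          # 0.84375
print(math.floor(sam_accuracy * 100))        # 84
print(sam_accuracy < CONSTRAINT_THRESHOLD)   # True: spelling may be constraining idea production
```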

Teaching

Traditionally, students were taught spelling through lists of phonetically unrelated words, using repetition tasks (e.g., writing each word 5 times, etc.) and a spelling test at the end of each week (Pan et al., 2021). Researchers, however, suggest reframing spelling instruction to match how word accuracy develops, which requires a focus on integrating phonological, morphological, orthographical, and etymological knowledge (Pan et al., 2021; Reed, 2012).

Furthermore, morphemic spelling instruction (i.e., teaching spelling based on units of meaning such as prefixes, root words, and suffixes) is extremely beneficial because these patterns frequently appear across different words (Reed, 2012). For example, the suffix “tion” has a consistent spelling, pronunciation, and meaning when it is found at the end of words. It should also be noted that handwriting and spelling are deeply entwined and should be taught together. The act of handwriting the shape of a letter provides proprioceptive feedback to reinforce the spelling of a word (Troia et al., 2020).

Scoring, Interpreting, and Teaching Sentence Accuracy

Scoring

Sentence accuracy is scored using the rules of incorrect word sequences (IWS) from CBM-WE (Espin et al., 2005). Traditional IWS rules combine misspelled words and sentence errors in one category. The Writing Architect studies found that misspelled words can be treated as a separate category from sentence-level IWS because they have different instructional implications (Truckenmiller et al., 2022). Therefore, sentence accuracy includes only the sentence-level errors, which are grammar, missing words, and missing or incorrect punctuation, and are marked with the Ⓢ symbol. More specific scoring rules, along with frequently asked questions and examples, are available in the scoring manual at WritingArchitect.org. In Figure 1, Sam had two missing commas, which resulted in four incorrect sequences because there is one sequence on either side of a comma (see the word sequences scoring rules in the protocol). Sam had four sentence errors and 35 total word sequences, resulting in 88% correct sentence sequences. The formula for calculating sentence accuracy is one minus the total sentence errors divided by the total number of sequences.
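To make the arithmetic concrete, the sketch below converts Sam's two missing commas into incorrect sequences and applies the formula. The names are hypothetical and the full word-sequence rules live in the scoring manual, so this covers only the comma case described here. The unrounded value is about 88.6%. Truncating to a whole percent is an assumption that yields the 88% reported above, whereas rounding to the nearest percent would give 89%.

```python
import math

# One word sequence on either side of a comma, so each missing comma
# produces two incorrect sequences.
SEQUENCES_PER_MISSING_COMMA = 2


def sentence_accuracy(incorrect_sequences, total_sequences):
    """Proportion of correct sequences: 1 - incorrect / total."""
    if total_sequences <= 0:
        raise ValueError("total_sequences must be positive")
    if not 0 <= incorrect_sequences <= total_sequences:
        raise ValueError("incorrect_sequences must be between 0 and total_sequences")
    return 1 - incorrect_sequences / total_sequences


missing_commas = 2
incorrect = missing_commas * SEQUENCES_PER_MISSING_COMMA
sam_sentence_accuracy = sentence_accuracy(incorrect, total_sequences=35)

print(incorrect)                                 # 4
print(f"{sam_sentence_accuracy:.3f}")            # 0.886
print(math.floor(sam_sentence_accuracy * 100))   # 88
```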

Interpreting

Sentence accuracy significantly predicts state and nationally normed writing achievement up to about grade 5 (Truckenmiller et al., 2022). Punctuation that marks the end of a complete thought, which prevents run-ons and sentence fragments, is the most important component of sentence accuracy. Assessing and teaching sentence accuracy has a much smaller influence on overall writing development than word-level and text-level components (Graham et al., 2012). Therefore, educators may consider integrating sentence accuracy with their word and/or text instruction.

Teaching

To build the punctuation component of sentence accuracy, the IM Punctuation Strategies Program has evidence for effectiveness (Schumaker et al., 2019) and includes six computer-delivered lessons on eight punctuation marks. At the sentence level, many educators also provide grammar instruction and sentence combining instruction. Grammar instruction, like teaching parts of speech and diagramming sentences, has not been shown to be effective. On the other hand, sentence combining has been more effective, especially when embedded in essay writing (Graham et al., 2012). For example, explicit instruction and practice with repeating, expanding, and combining sentences have significant effects when integrated with instruction in overall discourse structure (Graham et al., 2012).

Scoring, Interpreting, and Teaching Typing Fluency

Scoring

In the Writing Architect, the computer automatically calculates the number of characters students copy from a paragraph in 90 seconds. Many free typing programs (e.g., typing club, typing.com) also include typing fluency progress monitoring that can be used instead of the Writing Architect typing fluency task.

Interpreting

Within the context of computer-administered essays, typing fluency is the most constraining factor on writing quality for elementary-age students, with fifth-grade students who typed fewer than 82 characters in 90 seconds being the most likely to not be proficient on the state test (Truckenmiller et al., 2022). In the example in Figure 1, Sam typed only 70 characters in 90 seconds. The goal by the end of Grade 5 is 85 characters typed in 90 seconds. A student who takes almost 2 seconds to find each key on the keyboard, even with a large capacity working memory, would find it difficult to remember the next word or the next idea to write.
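A short calculation can turn a typing fluency score into quantities that are easier to discuss with students, such as the average time per keystroke and the distance from the end-of-Grade-5 goal. The function and constant names below are hypothetical, and the goal of 85 characters is taken from the text as a general guide rather than a strict cut point.

```python
WINDOW_SECONDS = 90       # length of the copy task
GRADE5_GOAL_CHARS = 85    # end-of-Grade-5 goal stated in the text (a general guide)


def typing_summary(chars_typed, window=WINDOW_SECONDS, goal=GRADE5_GOAL_CHARS):
    """Summarize a typing fluency score from the 90-second copy task."""
    if chars_typed <= 0:
        raise ValueError("chars_typed must be positive")
    return {
        "seconds_per_char": window / chars_typed,
        "chars_per_minute": chars_typed * 60 / window,
        "chars_below_goal": max(0, goal - chars_typed),
    }


sam_typing = typing_summary(70)
print(round(sam_typing["seconds_per_char"], 2))   # 1.29
print(round(sam_typing["chars_per_minute"], 1))   # 46.7
print(sam_typing["chars_below_goal"])             # 15
```

Expressing the gap as a number of characters gives students a concrete target for goal setting.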

Teaching

Although research on specific typing programs has not been conducted, explicit instruction in typing will likely be powerful (Donica et al., 2018; Donne, 2012). Students should be taught to anchor their fingers on the home row keys and to practice the motor movements along each pathway from the home row to other keys. Additional examples of explicit typing instruction include using the texture on the “f” and “j” keys to anchor on the home row and using both pinky fingers to press the shift key for capital letters. Instruction can be embedded easily as a daily warm-up activity. For example, when students sign into their computers, teachers can have them type their first and last name on a document while keeping their fingers on the home row keys. Instructing peers to monitor each other’s use of home row positioning may add motivation. Other regular activities can include incorporating free typing games into independent work or choice time (e.g., race car typing, typing ninja, dance floor typing). To encourage typing practice at home, teachers can also use group contingencies: if every student logs at least “x” total minutes of typing practice, the class gets a free pass for “y.”

Cultural Responsiveness and An Important Note About the Purpose of Assessment

The word and sentence accuracy rules provide an opportunity for educators to promote linguistic justice and cultural responsiveness. Previously, scoring rules for IWS stated that correct and incorrect sequences should be marked according to standard rules of English (Espin et al., 2005). However, there is no linguistic “standard” English. Rather, it is more accurate to say that every person speaks a variation (also called a dialect) of English (Wolfram & Schilling-Estes, 2016). All variations of English are rule-governed and linguistically accurate. Therefore, the rules in the Writing Architect mark linguistic variations as correct.

For example, in Sam’s essay, they use the common cluster reduction of the “th” sound to an “f” sound, which is marked as correct in the current rule set. At the end of the third sentence, Sam also uses the singular verb “is” instead of “are.” Often, these variations are treated as errors by educators, which can create a feeling of shame for students using their community’s oral language variation. Given that students have a right to use their community’s language variation (Committee on College Composition and Communication, 1974), educators are encouraged to teach in ways that disrupt the implied power hierarchy of the different language variations. The Writing Architect scoring manual provides specific rules for word and sentence accuracy that are linguistically accurate in oral language variations. These rules were aggregated from dozens of research studies (Horton et al., 2018). The Writing Architect website includes instructional routines that help educators talk about grammar in ways that avoid calling nonmainstream variations errors.

Some students and their families may have a specific goal to write in a way that is different from how they speak, and we believe this should be their choice. A study by Johnson et al. (2017) showed that explicit dialect awareness instruction can help students learn to make these conscious choices about language use. Students who received explicit instruction in dialect awareness adjusted their grammar significantly more than students who received traditional grammar instruction.

A separate but important consideration for educators is distinguishing between language variations and learning differences. There are five grammar errors commonly made by individuals with DLD that can also be classified as grammatically accurate rules in African American English (Lee-James & Johnson, 2022). This “overlap” of features makes it particularly challenging for educators to determine when students need more intensive intervention in grammar usage due to DLD. Educators can consult a speech-language therapist with expertise in this area to determine when more intensive intervention is needed (see Oetting et al., 2019).

When speaking with parents and other professionals, it is important to note that the purpose of assessment is not to blame the student or indicate that they are not capable of achieving. Assessment does not indicate that the student cannot learn; rather, it indicates the opposite. It identifies the areas where the current level of instruction is inadequate and more intensive instruction is needed to equip students with the skills that will reduce frustration in school and support greater achievement in the content areas (science, social studies, math; Graham et al., 2015). In addition, the goal numbers listed in Figure 2 should not be treated as valid for use in high-stakes decisions (e.g., special education identification or grade retention).

Goal Setting, Self-Regulation, and Feedback

With each of the five components of writing, one of the key mechanisms that increases the effectiveness of instruction is the use of goal setting. Goal setting enhances student attention, motivation, and effort; facilitates effective use of strategies and writing endurance; improves writing quality; and may be especially important for students with disabilities (Graham et al., 2012).

To teach goal setting, introduce the definition of a goal (“A goal is a result that we work toward to achieve success”) and the characteristics of effective goals: they are specific and structured in manageable pieces. For example, “Good writers use goals that are bite-sized and will help them improve their writing. My goal is to improve my informational writing by including one topic sentence, two ideas, two details, and an ending sentence.” Goals can be set for any of the scoring metrics discussed above. Then, teachers can show students how to graph their progress toward goals as an effective method of self-reinforcement.

Teachers can also use a goal-setting menu, like the one in Releasing Writers (Laud & Patel, 2023). When using the goal-setting menu, teachers and students can score an exemplar essay according to the items on the menu. Next, students identify which elements scored the highest and lowest and label these as areas of strength and growth. Students then pick one low-scoring area of their own writing about which to develop a bite-sized goal. The goal-setting menu also functions well for feedback. A student can use this menu themselves as part of revising their essay. A peer or a teacher could also provide feedback to students based on the elements listed on the menu.

Conclusion

Writing is a complex skill, and it is challenging to know where to start for different students, especially when setting achievable and meaningful Individualized Education Program goals and choosing research-based instruction that will have a practical impact on students’ writing development. In this article, we emphasized that the most effective assessment points to effective instruction. Instruction in writing is necessary to improve outcomes in English Language Arts as well as in Social Studies and Science (Graham et al., 2015). Regardless of where students are in their development of writing, the practical steps in this article can be used to assess informational writing skills and plan instruction for any student in Grades 3 through 8.

Footnotes

Acknowledgements

The authors would like to acknowledge Greenflux, LLC for programming the Writing Architect and Thomas Toaz for programming the website.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The research reported here was supported, in part, by the Institute of Education Sciences, U.S. Department of Education, through grant no. R305A210061 to Michigan State University, through grant no. H325H190003 from the U.S. Department of Education, Office of Special Education Programs (OSEP) to Vanderbilt University, and the Rick Stiggins Endowment for the Improvement of Classroom Assessment Literacy of Teachers and School Leaders to Michigan State University. The opinions expressed are those of the authors and do not represent views of the funders.