Abstract

Reading outcomes at a national level have remained stagnant for more than two decades. One reason is that the field has struggled with how to address poor reading comprehension when word reading is not the problem. Another is that traditional reading comprehension measures offer limited insight into the causes of poor comprehension. This article describes the Multiple-choice Online Causal Comprehension Assessment (MOCCA), a measure of reading comprehension designed to assess the cognitive processes in which Grade 3 to 6 students engage as they try to comprehend what they read. In contrast to traditional reading comprehension assessments, MOCCA provides diagnostic information about how students who struggle with reading comprehension cognitively approach the comprehension task. MOCCA scores are directly aligned to instructional decisions and are most useful when triangulated with other reading curriculum-based measures; this article provides two examples of such triangulation and a decision-making heuristic.

Ms. Liu is a third-grade teacher with 25 students, 10 of whom perform at or above average on a reading comprehension measure. Ms. Liu is fortunate in that her school uses a reading curriculum-based measurement (r-CBM; Deno, 1985, 2003) system for screening and progress monitoring. But like so many r-CBMs, her school’s r-CBM includes a reading comprehension measure that only detects difficulty with comprehension; it does not explain that difficulty. To understand the roots of a student’s comprehension difficulties, she can look only to other r-CBM assessments of foundational skills, like word reading, oral reading fluency, and vocabulary.

To decide what to do next for the 15 students who performed below average, Ms. Liu consults their oral reading fluency r-CBM results. The results reveal that 10 of these students clearly struggle with oral reading fluency, so she knows what to do next for them, but the remaining five present a conundrum: they performed well on oral reading fluency but underperformed on the reading comprehension measure. Puzzled, Ms. Liu checks their vocabulary results and finds that while two of these five students did poorly, the other three did well. Out of options, Ms. Liu is unsure how to help the three students who seem to have mastered foundational skills but struggle with reading comprehension (see Note 1).

This scenario is not uncommon in intermediate and middle-grade classrooms, and this article offers insight into how teachers can learn more about students who specifically exhibit poor comprehension. First, it reviews what research has shown to be at the root of these readers’ specific reading comprehension difficulties (SRCD). Next, it introduces a new reading assessment, the Multiple-choice Online Causal Comprehension Assessment (MOCCA; Davison et al., 2018, 2024), that can be used diagnostically with these readers. It then illustrates how MOCCA results can be coordinated with other r-CBM results to inform instruction. Finally, it describes the types of reading comprehension interventions that could be implemented, based on MOCCA and other reading data, to help improve struggling readers’ comprehension skills.

Causal Inferences for Coherence

Consider the following very short story. Tyrese decided to bake a pumpkin pie for dessert. He looked in the pantry. Tyrese was disappointed.

In this story, one can infer that Tyrese did not find all the needed ingredients in the pantry. Most proficient readers will make that inference immediately and effortlessly. In fact, they also infer that Tyrese expected the ingredients to be in the pantry. Proficient readers may be entirely unaware that they have made these two necessary, or causal, inferences. What makes them causal and necessary is that, without them, the three sentences become non sequiturs. The two causal inferences fill “gaps” in the story; without them, the text does not make complete sense. When causal relations are not explicit in a text, readers generate causal inferences by activating and integrating textual information with background knowledge (Kintsch, 1988). Moreover, the ability to make causal inferences is significantly linked to comprehension (Graesser et al., 1994; Laing & Kamhi, 2009).

Readers can make other inferences about this story as well, ones that are unnecessary. For instance, one might infer Tyrese’s gender, what Tyrese looked like, what specific ingredients were missing, the type of dish the pie might be baked in, or even the layout of Tyrese’s kitchen. Although all these inferences elaborate on and enrich the story, making it more interesting and complete, they are not necessary for understanding its central plot. They do not relate directly to the chain of cause-and-effect events and thus would not be considered causal inferences.

Elaborative inferences involve activating and integrating textual information and background knowledge to extend what the text states explicitly (Barnes et al., 1996; Cain et al., 2001). Causal inferences, which have also been referred to as explanatory inferences (Laing & Kamhi, 2009; Trabasso & Magliano, 1996), gap-filling inferences (Cain & Oakhill, 1999), and coherence inferences (Barnes et al., 1996; Cain et al., 2001), are generally considered a subcategory of elaborative inferences that specifically build the causal coherence of the reader’s mental representation of the text. As such, causal inferences can be considered necessary for comprehension, whereas other elaborative inferences can be considered unnecessary (Barnes et al., 1996; Cain et al., 2001).

Readers with SRCD struggle specifically with making causal inferences like these (Cain & Oakhill, 1999; Oakhill & Cain, 2018). Even when they have the requisite background knowledge, such as how to make a pumpkin pie and what a pantry is, they still tend not to make these coherence-building inferences (Cain et al., 2001). Moreover, when asked to think aloud as they read sentence by sentence, readers with SRCD tend either to paraphrase the text excessively or to make elaborative inferences (Carlson et al., 2014; Kraal et al., 2018; McMaster et al., 2012; Rapp et al., 2007). Readers with SRCD have shown improvement when exposed to training in inference-making (Yuill & Oakhill, 1988) and questioning techniques (McMaster et al., 2012, 2015).

Part of the challenge facing teachers like Ms. Liu is that traditional reading comprehension assessments provide limited insight into why a student who can read fluently comprehends poorly and, thus, offer no guidance on how to improve the situation (Pearson et al., 2020). Most reading comprehension assessments focus almost exclusively on comprehension as a product (i.e., what is remembered after reading) and provide little information about how a reader arrived at that product: the comprehension process, which, among other things, depends heavily on generating causal inferences during reading. When reading comprehension measures only report the extent to which readers demonstrate comprehension relative to a criterion or a normative sample, they leave schools and interventionists uninformed of the underlying reasons for poorer comprehension (Klingner, 2004; Snyder et al., 2005; Wixson et al., 1994). As a result, educators and researchers have repeatedly called for measures that reflect individual differences in the comprehension process to better support targeted intervention with students who struggle specifically with comprehension (Klingner, 2004; Pearson & Hamm, 2005; Pearson et al., 2020; RAND Reading Study Group, 2002; Snyder et al., 2005; Wixson et al., 1994). Multiple-choice Online Causal Comprehension Assessment is precisely this type of tool.

MOCCA and the Diagnostic Assessment of Reading Comprehension

Like other r-CBMs, MOCCA identifies students who are struggling with comprehension. Unlike other r-CBMs, MOCCA also has a diagnostic dimension: it categorizes readers with SRCD based on their over-reliance on one of the two cognitive processes consistently identified by research, namely paraphrasing and generating elaborative but unnecessary inferences (e.g., Carlson et al., 2014; Kraal et al., 2018; McMaster et al., 2012; Rapp et al., 2007). That is, MOCCA is a unique r-CBM assessment that offers one score for screening and progress monitoring and a second score validated specifically for diagnostic use. This article focuses on this second score because of its unique affordances for teachers in Ms. Liu’s position.

How MOCCA Works

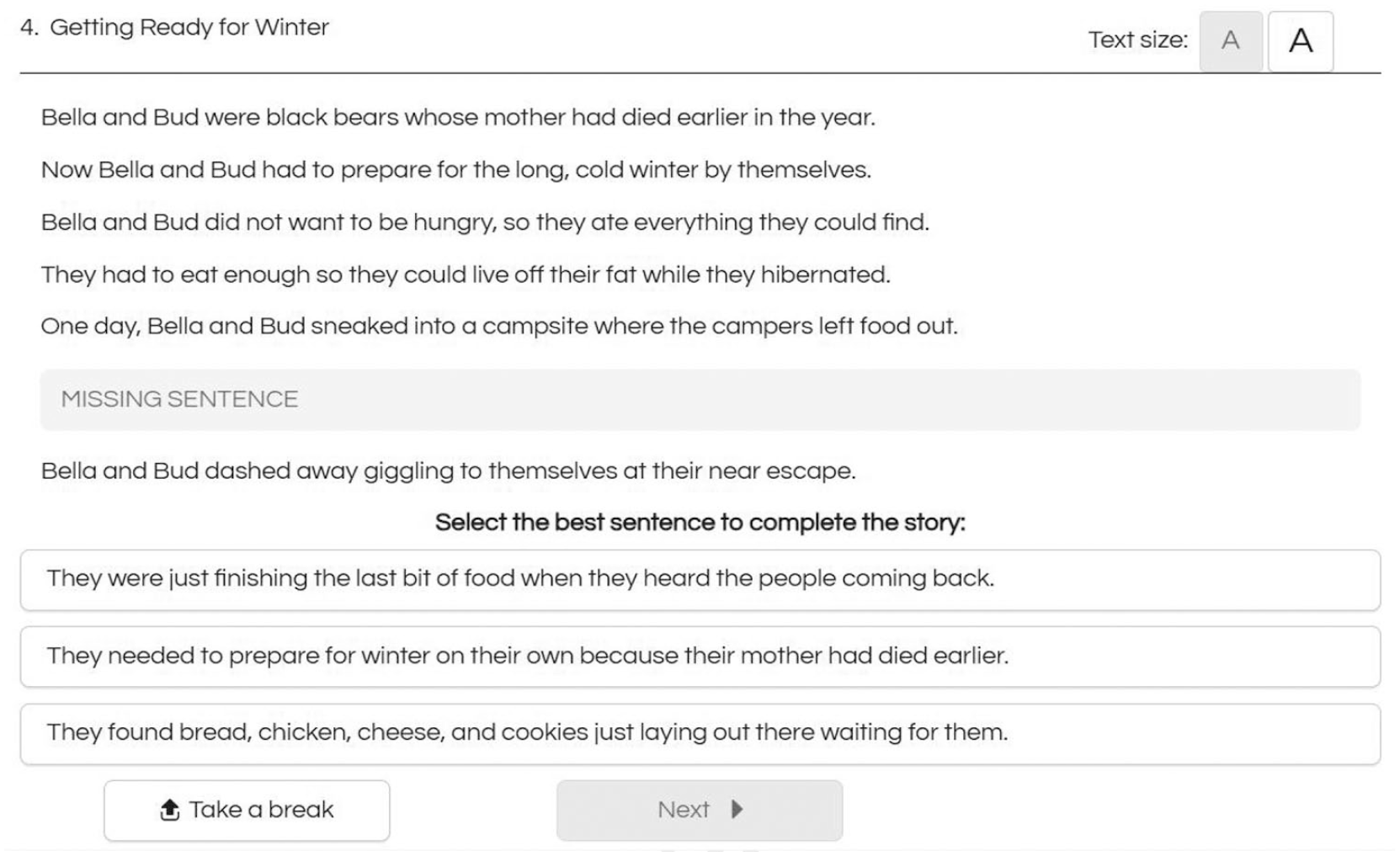

Multiple-choice Online Causal Comprehension Assessment (MOCCA) classifies students who struggle with comprehension through its use of informative distractors and the patterns in the distractors students choose when they do not choose the right answer (i.e., the causal inference). Each MOCCA item is a brief, self-contained passage with its next-to-last sentence missing. Students taking MOCCA must select, from among three or five sentences, the one that best completes the passage. Each MOCCA item includes a minimum of three response options: the correct sentence (i.e., the causal inference), one or two paraphrase distractor sentences, and one or two distractor sentences that represent plausible but unnecessary elaborative inferences (see Figure 1 for a sample MOCCA item).

Figure 1. Sample Multiple-choice Online Causal Comprehension Assessment (MOCCA) Item.

Multiple-choice Online Causal Comprehension Assessment tracks how many of each type of response option a student selects and uses that information to derive two scores based on item response theory (IRT; Van der Linden, 2018): a reading comprehension scale score based on the correct responses, and a descriptive classification (i.e., causal, paraphrasing, elaborating, or inconclusive comprehender) based on patterns in the incorrect responses. Both MOCCA scores have consistently shown good to excellent reliability and validity for informing instructional decisions (Biancarosa et al., 2019; Davison, Biancarosa, Carlson, et al., 2018; Davison, Biancarosa, Seipel, et al., 2018; Davison et al., 2021, 2024; Liu et al., 2019). Moreover, the two MOCCA scores together predict proficiency on a state test of reading better than either score alone does (Biancarosa et al., 2019). The first score is used for the r-CBM purposes of screening and progress monitoring, whereas the second helps teachers like Ms. Liu decide how to intervene with readers with SRCD. To understand the latter, the next section unpacks the classifications.
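
To make the idea of classifying from distractor patterns concrete, here is a minimal Python sketch. It is explicitly not MOCCA’s scoring method: MOCCA derives its scores with IRT models, whereas this sketch substitutes a simple counting rule. The names `ResponseType` and `classify_pattern`, and the `causal_cut` and `margin` thresholds, are hypothetical and chosen only for illustration.

```python
from collections import Counter
from enum import Enum


class ResponseType(Enum):
    """The three kinds of response options on a MOCCA-style item."""
    CAUSAL = "causal"            # the correct answer: the causal inference
    PARAPHRASE = "paraphrase"    # distractor that restates earlier text
    ELABORATIVE = "elaborative"  # plausible but unnecessary inference


def classify_pattern(responses, causal_cut=0.7, margin=0.25):
    """Hypothetical counting rule standing in for MOCCA's IRT-based scoring.

    causal_cut: assumed share of causal choices needed to count as a
        causal comprehender (NOT MOCCA's actual cut score).
    margin: assumed minimum gap between the paraphrase and elaborative
        shares of the incorrect responses before a pattern is called.
    """
    if not responses:
        raise ValueError("no responses to classify")
    counts = Counter(responses)
    if counts[ResponseType.CAUSAL] / len(responses) >= causal_cut:
        return "causal"

    # Look only at the incorrect (distractor) choices.
    errors = counts[ResponseType.PARAPHRASE] + counts[ResponseType.ELABORATIVE]
    if errors == 0:
        return "inconclusive"
    gap = (counts[ResponseType.PARAPHRASE] - counts[ResponseType.ELABORATIVE]) / errors
    if gap >= margin:
        return "paraphrasing"
    if gap <= -margin:
        return "elaborating"
    return "inconclusive"  # no clear preference between distractor types
```

With these made-up thresholds, a student who picks the causal inference on three of ten items, the paraphrase on six, and the elaboration on one would be labeled a paraphrasing comprehender, while a student who splits errors four to three would be inconclusive.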

MOCCA Classifications

“Causal comprehenders” are those readers who regularly generate causal inferences. Faced with a story like the one about Tyrese, they make causal inferences about him lacking the needed ingredients for his pie. Students who are categorized as causal comprehenders by MOCCA consistently generate causal inferences during reading. In the sample item about Bella and Bud (see Figure 1), they choose the first answer choice and similarly locate and choose the causal inference on most items. As a result, they fall above the causal comprehension cut-score threshold on MOCCA and are highly likely to perform at the proficient level on a general outcome measure of reading comprehension (Davison et al., 2024), indicating that these students do not need further work on this essential reading comprehension skill. Nonetheless, they may still require work on other reading skills as will become clear in the section exploring how to coordinate MOCCA results with other reading assessments.

“Elaborating comprehenders,” sometimes called Elaborators, are readers who regularly make inferences that elaborate on the text (Carlson et al., 2014; Kraal et al., 2018; McMaster et al., 2012; Rapp et al., 2007). Faced with the story about Tyrese, they make elaborative but unnecessary inferences about what Tyrese looks like and where his pantry is in his kitchen, but not the necessary ones about not having the ingredients he needs. Likewise, on the Bella and Bud item (see Figure 1), they end up choosing the final answer choice, which is a plausible but unnecessary inference that elaborates on the food they find. With a consistent pattern of selecting elaborative inferences over causal inferences, these students are unlikely to perform at a proficient level on a general outcome measure of reading comprehension (Davison et al., 2024), indicating that these students need instruction to help them make causal inferences.

“Paraphrasing comprehenders,” sometimes called Paraphrasers, are readers who do not regularly make causal inferences and instead tend to rephrase or restate prior text information (Carlson et al., 2014; Kraal et al., 2018; McMaster et al., 2012; Rapp et al., 2007), making few, if any, inferences. Presented with the Tyrese story, they are most likely to repeat it back without any inferences at all, such as saying, “He wanted to bake a pie,” without offering any reason for why Tyrese was disappointed. On the Bella and Bud item (see Figure 1), this tendency is revealed through their choice of the second answer, which reinstates old information. With a consistent pattern of selecting paraphrases over causal inferences, these students are also unlikely to perform at a proficient level on a general outcome measure of reading comprehension (Davison et al., 2024). The key insight paraphrasing comprehenders need to gain is that reading requires a reader to supply information not explicitly stated in a text, which helps deepen comprehension.

“Inconclusive comprehenders” are readers who do not show a clear pattern in the cognitive processes they use when they fail to make causal inferences on MOCCA. That is, they tend to exhibit both elaborative inferences and paraphrases without favoring one over the other. For example, on the Bella and Bud item (see Figure 1), they might choose the elaboration but on the very next item choose the paraphrase. Rather than labeling these readers as paraphrasing or elaborating, MOCCA leaves unclassified any student whose pattern of incorrect responses is unreliable and might be attributable to measurement error. Students categorized as inconclusive tend to exhibit guessing behavior. Some inconclusive comprehenders may struggle with foundational reading skills, such as word reading and fluency. Inconclusive comprehenders who demonstrate strong foundational reading skills on other assessments may be struggling with literal comprehension, especially if other assessments also indicate a comprehension problem. In some cases, the student may not be prioritizing reading for meaning, which can occur when instruction is overly focused on foundational reading skills, or the student may not be taking the MOCCA assessment seriously. Finally, inconclusive comprehenders are sometimes good readers who had an off day.

Coordinating MOCCA Results With Other Reading Data

It is critical to triangulate MOCCA results with data from other reading measures, especially, but not only, for inconclusive comprehenders. First, MOCCA is an untimed measure of reading comprehension, so a student can perform well on MOCCA and still show less than ideal oral reading fluency. Second, MOCCA focuses on one necessary part of the reading comprehension process, but it does not assess every necessary part of the reading process. As a result, MOCCA results are most useful and informative when interpreted together with other reading assessment results. To illustrate, the next sections explore two different scenarios.

Measures of Academic Progress

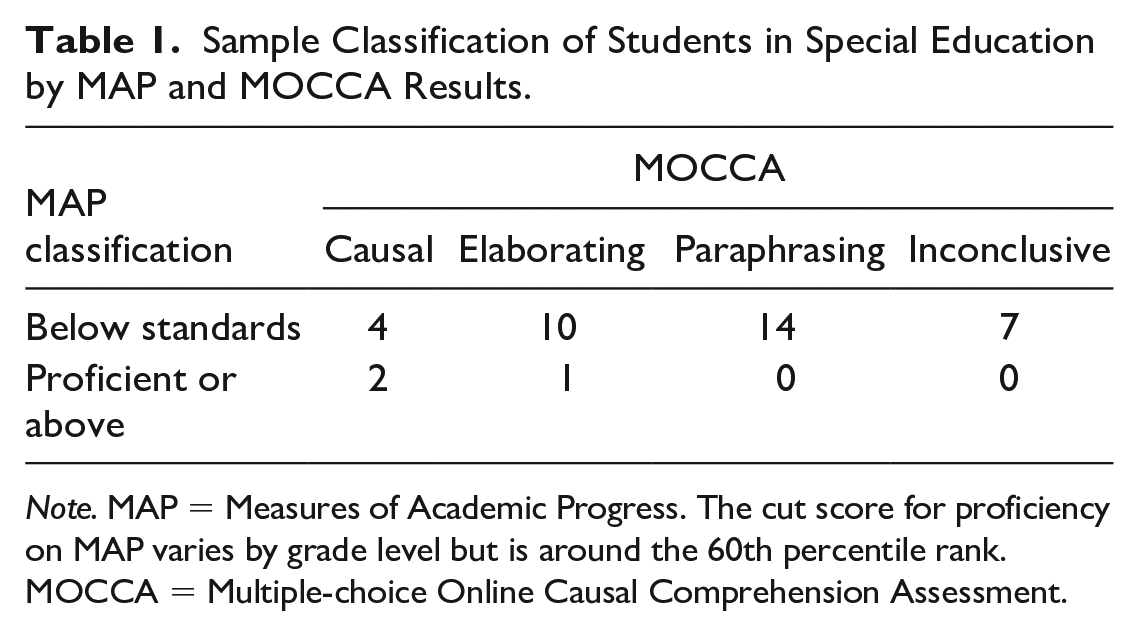

Consider a school in which all students take a reading comprehension assessment to screen for reading difficulties. For example, in two California schools where Measures of Academic Progress (MAP; Northwest Evaluation Association, 2019) served as the universal screener, students also took MOCCA. Of the 38 Grade 3 to 5 students receiving special education services, 35 scored below standards on MAP, indicating that they were at risk for not meeting grade-level standards on their state test, but MAP offered no diagnostic guidance for instruction.

In practice, interventionists can think of MOCCA and MAP as creating a two-by-four grid of results that can inform instruction (see Table 1). As is evident in Table 1, the 35 students who performed below standards and the three who performed at or above standards on MAP were classified into multiple subgroups based on their ability to make causal inferences and what they did when they failed to make such inferences. For example, 10, or 29%, of the 35 students with below standards MAP scores were identified as elaborating comprehenders, and 14, or 40%, as paraphrasing comprehenders. These results help interventionists target Tiers 2 and 3 instruction to practices that will help these students make progress in how they approach making meaning during reading.

Table 1. Sample Classification of Students in Special Education by MAP and MOCCA Results.

Note. MAP = Measures of Academic Progress; MOCCA = Multiple-choice Online Causal Comprehension Assessment. The cut score for proficiency on MAP varies by grade level but is around the 60th percentile rank.

Four, or just above 10%, of the below-standards readers were classified as causal, suggesting causal inferencing is not what challenges their comprehension. Finally, seven, or 20%, of the below-standards readers were classified as inconclusive; for these readers, additional data are needed to determine why they are struggling with comprehension. One likely candidate is that their decoding skills need further development. Students who struggle to decode often end up guessing randomly on an assessment like MAP or MOCCA; MOCCA captures this lack of a methodical approach to answering in its inconclusive category. Inconclusive results also illustrate the utility of triangulating MOCCA results with other assessments of component reading skills, as described next.
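
The percentages reported for the 35 below-standards students follow directly from the counts. The short Python check below (nothing in it is specific to MAP or MOCCA; the names are illustrative) confirms that the four subgroups account for all 35 students and prints shares of 28.6%, 40.0%, 11.4%, and 20.0%, which correspond to the 29%, 40%, just above 10%, and 20% given above.

```python
# Counts reported in the text for the 35 students who scored below
# standards on MAP, broken down by MOCCA classification.
below_standards = {
    "elaborating": 10,
    "paraphrasing": 14,
    "causal": 4,
    "inconclusive": 7,
}


def percent_shares(counts):
    """Return each subgroup's share of the total, as a percentage rounded to 0.1."""
    total = sum(counts.values())
    return {label: round(100 * n / total, 1) for label, n in counts.items()}


total = sum(below_standards.values())
print(f"students accounted for: {total}")  # 35, the full below-standards group
for label, share in percent_shares(below_standards).items():
    print(f"{label:>12}: {share}%")
```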

easyCBM

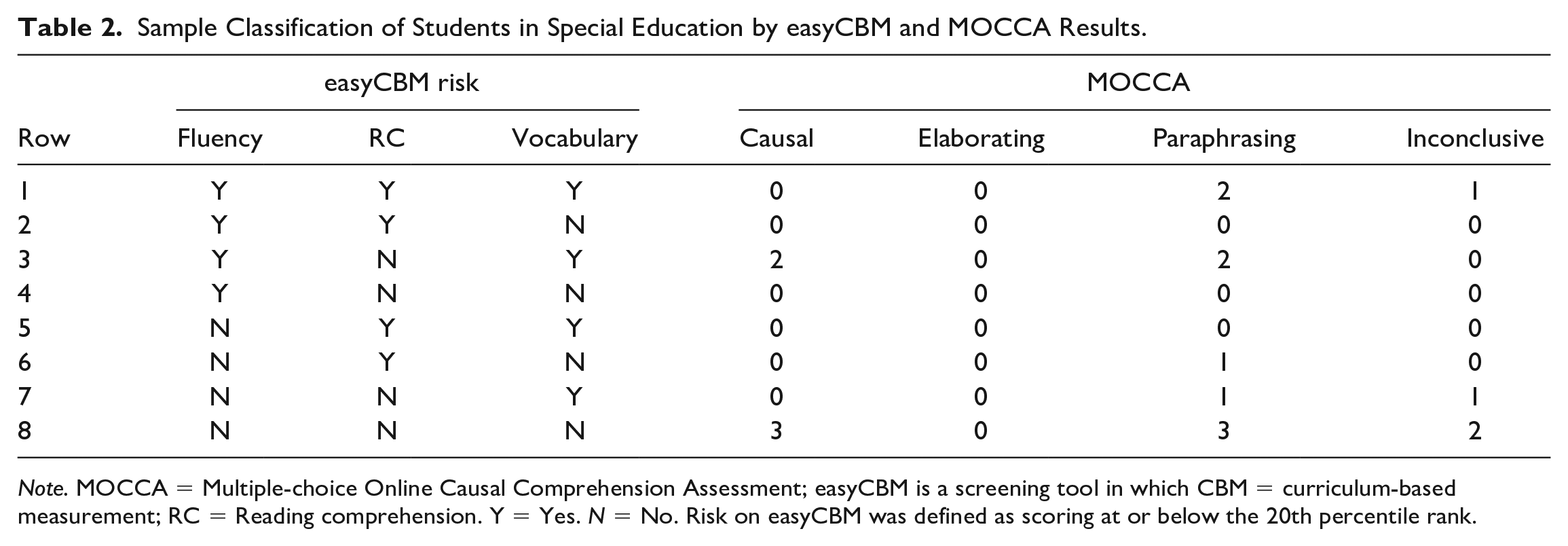

Now consider a school that, like Ms. Liu’s, uses a curriculum-based measurement approach to universal screening. For example, one Oregon school uses easyCBM (Alonzo et al., 2006) to screen for risk of reading difficulties, and its students also took MOCCA. easyCBM includes subtests for passage reading fluency, reading comprehension, and vocabulary. Risk is determined by performance across these subtests. On any subtest, performing below the 20th percentile rank leads to a designation of some risk, and performing below the 10th percentile leads to a designation of high risk. Performance at some risk on even one subtest leads to an overall designation of some risk, and various combinations of some and high risk on one or more subtests can lead to an overall designation of high risk. Of this school’s 18 students receiving special education services in Grades 3 to 5, 15, or 83%, were designated as having some or high risk for not meeting year-end grade-level reading goals based on their performance across easyCBM subtests.
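
The per-subtest cut points described above can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not easyCBM’s actual scoring code: the function names and the subtest keys in the example are hypothetical, the sketch uses strict “below” comparisons (Table 2’s note says “at or below,” so boundary handling is an assumption), and it does not model the combinations that escalate a student to an overall high-risk designation, because the text does not spell them out.

```python
from typing import Mapping


def subtest_risk(percentile_rank: float) -> str:
    """Risk tier for a single easyCBM subtest, using the cut points in the text.

    Strict "below" comparisons follow the description above; Table 2's note
    says "at or below," so how exact boundary scores are handled is an
    assumption of this sketch.
    """
    if percentile_rank < 10:
        return "high risk"
    if percentile_rank < 20:
        return "some risk"
    return "no risk"


def any_subtest_at_risk(percentiles: Mapping[str, float]) -> bool:
    """True if at least one subtest falls at some or high risk.

    One flagged subtest is enough for an overall "some risk" designation.
    The combinations that escalate a student to overall "high risk" are not
    spelled out in the text, so this sketch deliberately does not model them.
    """
    return any(subtest_risk(p) != "no risk" for p in percentiles.values())


# Hypothetical student: subtest names and percentile ranks are made up.
student = {"passage_reading_fluency": 45, "reading_comprehension": 15, "vocabulary": 62}
print(subtest_risk(student["reading_comprehension"]))  # some risk
print(any_subtest_at_risk(student))                    # True
```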

In practice, triangulating MOCCA results with easyCBM or similar r-CBM results is more complicated because easyCBM includes three subtests and two cut scores. To simplify interpretation, the high risk and some risk categories for easyCBM were combined, so each subtest is simply at risk or not. This reduces the number of combinations of easyCBM risk categories to eight (2 × 2 × 2), compared with 27 (3 × 3 × 3) if all three risk levels were kept. Thus, interventionists can think of MOCCA and easyCBM results as creating an eight-by-four grid of results that can inform instruction (see Table 2).
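
The eight rows of the grid can be enumerated mechanically. The Python sketch below assumes that Table 2 lists the subtests in the order fluency, reading comprehension, vocabulary; that order is inferred, not stated, but it reproduces the row descriptions discussed below (for example, row 3 flags fluency and vocabulary, and row 6 flags reading comprehension only). The names `SUBTESTS`, `profiles`, and `describe` are illustrative.

```python
from itertools import product

# Assumed column order for Table 2 (fluency, comprehension, vocabulary);
# it reproduces the row descriptions given in the text.
SUBTESTS = ("fluency", "reading comprehension", "vocabulary")
MOCCA_CLASSES = ("causal", "paraphrasing", "elaborating", "inconclusive")

# Collapsing some and high risk leaves each subtest binary: at risk (True)
# or not (False), so three subtests yield 2 ** 3 = 8 profiles.
profiles = list(product((True, False), repeat=len(SUBTESTS)))

# Without collapsing (no, some, or high risk), there would be 3 ** 3 = 27.
uncollapsed_count = 3 ** len(SUBTESTS)


def describe(profile):
    """List the subtests a profile flags as at risk."""
    flagged = [name for name, at_risk in zip(SUBTESTS, profile) if at_risk]
    return ", ".join(flagged) if flagged else "none"


for row, profile in enumerate(profiles, start=1):
    print(f"Row {row}: at risk on {describe(profile)}")

grid_cells = len(profiles) * len(MOCCA_CLASSES)  # 8 rows x 4 MOCCA classes = 32
```

Each of the 32 cells then holds the count of students with that easyCBM profile and that MOCCA classification.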

Table 2. Sample Classification of Students in Special Education by easyCBM and MOCCA Results.

Note. MOCCA = Multiple-choice Online Causal Comprehension Assessment; easyCBM is a screening tool; CBM = curriculum-based measurement; RC = reading comprehension; Y = yes; N = no. Risk on easyCBM was defined as scoring at or below the 20th percentile rank.

In the first row of Table 2 are three students in special education who were flagged as at risk on all three easyCBM measures and who also performed poorly on MOCCA. For these students, working on decoding and fluency is clearly a priority regardless of their MOCCA classification. Interestingly, though, two of them were classified as paraphrasing comprehenders, suggesting that MOCCA-informed intervention practices will be helpful when working on comprehension with these students who struggle with fluency. In the third row, an additional four students were categorized as at risk on both fluency and vocabulary by easyCBM, but two of them were causal comprehenders, suggesting that, when given unlimited time to read, they can make causal inferences. The other two were again paraphrasing comprehenders, suggesting that MOCCA-informed intervention would be useful for them as well.

Further scrutiny of Table 2 reveals, in the sixth row, one reader whom easyCBM flagged as at risk only on reading comprehension; MOCCA confirmed this finding and further classified the reader as a paraphrasing comprehender. Another two readers, in the seventh row, were flagged by easyCBM as at risk only because of their vocabulary scores, but their MOCCA scores suggest that they are also struggling with causal inferences. Finally, in the eighth row, eight students in special education were not flagged as at risk at all based on easyCBM results, but MOCCA results agreed for only three of them: of the other five, three were paraphrasing comprehenders and two had inconclusive results.

Taken together, this triangulation of MOCCA and easyCBM data shows how MOCCA results might shed further light on Ms. Liu’s puzzling students. Indeed, when Ms. Liu administers MOCCA to her students, she finds not only that two of her three puzzling students are paraphrasers and the third is an elaborator, but also that some of her 10 students with oral reading fluency weaknesses have a comprehension strength, as evidenced by their classification as causal comprehenders, whereas others are classified as elaborating, paraphrasing, or inconclusive comprehenders. With this knowledge in hand, she can group her elaborators and paraphrasers into small groups for targeted intervention.

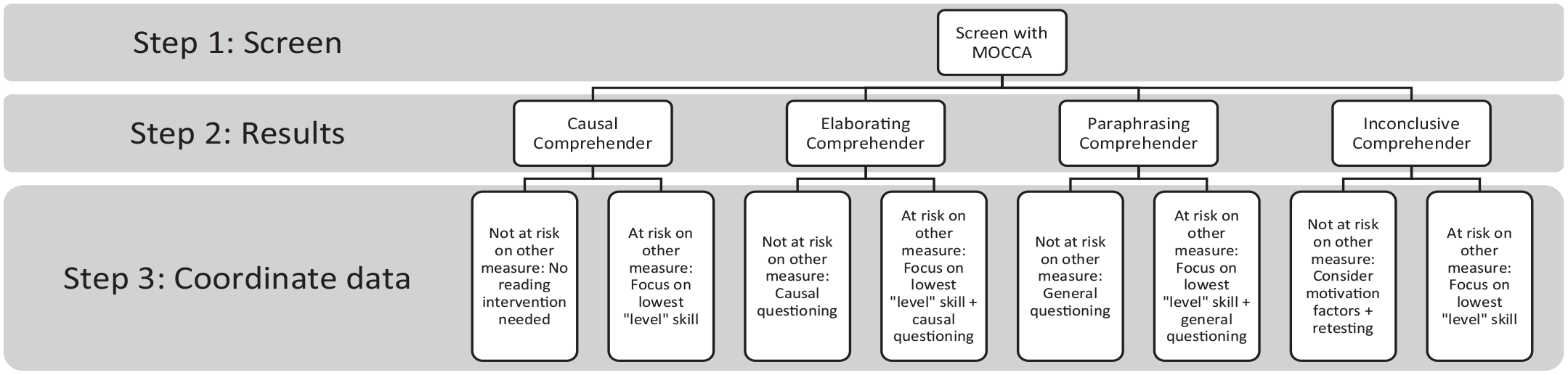

MOCCA-Informed Instructional Decision-Making

Another way of conceptualizing how to use diagnostic information from MOCCA results is to consider decision-making as occurring in stages. Based on MOCCA results alone, one can derive recommendations for instruction. When results exist from one or more additional r-CBMs, one also needs to consider whether (a) the other assessment(s) indicate a need for intervention and (b) the results indicate that a foundational reading skill, like decoding or vocabulary, is a source of challenge. An example decision tree is shown in Figure 2. Where the other r-CBM does not indicate a need for intervention, educators can simply follow the MOCCA instructional recommendations. Where the other r-CBM does indicate a need for intervention, however, a key question is whether that assessment holistically measures reading comprehension, like MAP or the easyCBM reading comprehension subtest, or measures a more discrete component skill of reading, like the easyCBM fluency and vocabulary subtests. In the former case, MOCCA recommendations take precedence; in the latter case, addressing foundational skills takes precedence. Nonetheless, when providing comprehension instruction specifically to students in special education who struggle with foundational skills, keeping MOCCA questioning strategies in mind can help inform that instruction.

Figure 2. Decision-Making Diagram for MOCCA With Other r-CBMs.

For inconclusive MOCCA results, data from other sources are especially valuable. When students with inconclusive results do not perform at risk on other r-CBMs, it is important to consider whether the student took the MOCCA assessment seriously or was having a bad day. In those cases, retesting is often a good idea.
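
The staged process described above can be sketched as a small Python function. This is a hedged reading of the prose only, not a reproduction of Figure 2, which may include branches the text does not describe; the function `recommend`, its arguments, and the recommendation strings are all hypothetical.

```python
from typing import List, Optional

# Follow-up the article associates with each MOCCA classification
# (wording paraphrased; these strings are illustrative, not official).
MOCCA_RECOMMENDATION = {
    "causal": "no causal-inference intervention needed",
    "elaborating": "causal questioning (why questions)",
    "paraphrasing": "general questioning (connecting and elaborative prompts)",
    "inconclusive": "gather additional data (e.g., decoding, fluency) before choosing an intervention",
}


def recommend(mocca_class: str,
              other_needs_intervention: Optional[bool] = None,
              other_measure_kind: Optional[str] = None) -> List[str]:
    """Ordered next steps for one student (hypothetical helper).

    other_needs_intervention: None when no other r-CBM result exists.
    other_measure_kind: "holistic" (e.g., MAP or easyCBM reading
        comprehension) or "component" (e.g., easyCBM fluency or vocabulary).
    """
    mocca_step = MOCCA_RECOMMENDATION[mocca_class]

    if not other_needs_intervention:
        # Inconclusive on MOCCA but not at risk elsewhere: suspect an
        # off day or low effort before anything else.
        if mocca_class == "inconclusive" and other_needs_intervention is False:
            return ["check whether the student took MOCCA seriously or had an off day",
                    "retest with MOCCA"]
        # No other data, or no need flagged: follow MOCCA.
        return [mocca_step]

    if other_measure_kind == "holistic":
        return [mocca_step]  # MOCCA recommendations take precedence
    if other_measure_kind == "component":
        # Foundational skills come first; MOCCA still informs comprehension work.
        return ["prioritize foundational skills (e.g., decoding, fluency, vocabulary)",
                mocca_step]
    raise ValueError("other_measure_kind must be 'holistic' or 'component'")


print(recommend("paraphrasing", True, "component"))
```

Returning an ordered list rather than a single string keeps the “foundational skills first, MOCCA questioning still in mind” case explicit instead of forcing a choice between the two.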

Interventions Informed by MOCCA Results

As noted above, readers identified as elaborating or paraphrasing comprehenders would benefit from targeted intervention to help them improve at making causal inferences. Causal comprehenders do not require this intervention because they already make these inferences successfully. Thus, the following sections describe MOCCA-informed interventions only for elaborating and paraphrasing comprehenders.

Causal Questioning for Elaborating Comprehenders

The instructional implications for elaborating comprehenders are straightforward. To improve their comprehension, these readers can benefit from being prompted to make causal (i.e., necessary) inferences (McMaster et al., 2012). As mentioned above, these inferences use a reader’s background knowledge to fill in important information that is not explicitly stated in a text and tend to focus on why events occur or why characters behave as they do.

In a previously developed causal questioning instructional approach (McMaster et al., 2012), a teacher asks “why” questions designed to focus the reader’s attention on causal relations in the text. This questioning approach can be implemented with direct and explicit instructional practices, as illustrated here using an excerpt from Aesop’s “The Lion and the Mouse.”

A Lion lay asleep in the forest, his great head resting on his paws. A timid little Mouse came upon him unexpectedly, and in her fright and haste to get away, ran across the Lion’s nose. Roused from his nap, the Lion laid his huge paw angrily on the tiny creature to kill her. “Spare me!” begged the poor Mouse. “Please let me go and someday I will surely repay you.” The Lion was much amused to think that a Mouse could ever help him. But he was generous and finally let the Mouse go. (Aesop, n.d., para. 1–3)

Step 1: Definition and Modeling

First, a teacher can both define what an inference is and model how to make one. Here is an example of defining and modeling an inference with “The Lion and the Mouse”:

Everything we read requires us to make inferences. Inferences help us connect the new information we read to previous information we read and to our own knowledge of the world. When we make connections, we are making inferences. For example, we read “A Lion lay asleep in the forest, his great head resting on his paws. A timid little Mouse came upon him unexpectedly, and in her fright and haste to get away, ran across the Lion’s nose.” Now, we know the mouse stumbled upon the lion by accident, and we know that lions are large and scary. When we connect these ideas, we can infer that the mouse ran away because the mouse was afraid of the lion. When we connect something we read to something we already know to make a new idea, that is an inference. Good readers make inferences as they read. We are going to practice making inferences.

A teacher can use a text that is being read currently in class or choose a short one for the explicit purpose of explaining and modeling causal inferences.

Step 2: Practice With Causal Questioning

Next, a teacher can engage students in group practice at making these inferences. In this stage, the teacher no longer provides all the information for generating an inference but instead prompts students to do so using questioning. These questions work in whole class, small group, and individual settings. Here are some questions that specifically prompt causal inferences:

Why do you think that just happened?

Why do you think that character did that?

Why do you think that character said that?

Why do you think that character wants that?

Ideally, answers to these questions are followed up by the prompt, “What clues in the text make you think that?” This signals to students that the text holds clues as to the inferences needed.

Here are some questions related to continuing to read “The Lion and the Mouse.”

“Roused from his nap, the Lion laid his huge paw angrily on the tiny creature to kill her.” Why do you think the Lion is angry? What in the text makes you think that?

“‘Spare me!’ begged the poor Mouse. ‘Please let me go and someday I will surely repay you.’” Why did the Mouse offer to repay the Lion? What in the text supports that idea?

“The Lion was much amused to think a Mouse could ever help him.” Why do you think the Lion is amused? What clues in the text make you think that?

Previous research has shown that elaborating comprehenders benefit more than other readers from causal questioning (McMaster et al., 2012): although all readers improved with this questioning approach, elaborating comprehenders improved significantly more than their peers. The reason for this difference is not clear, but one explanation may be that elaborating comprehenders already make elaborative inferences and thus need prodding to focus on the most necessary (i.e., causal) ones.

General Questioning for Paraphrasing Comprehenders

To improve paraphrasing comprehenders’ comprehension, these readers can benefit from being prompted to make general inferences (McMaster et al., 2012). These inferences use a reader’s background knowledge to fill in important information that is not explicitly stated in a text but does not necessarily relate to the causal chain of events in a text. While causal inferences tend to focus on why events occur or why characters behave as they do, general inferences can make any number of connections within a text and to background knowledge.

Paraphrasing comprehenders need prompting to become more active agents in the comprehension process and to see that not all necessary information is explicit in a text. They need help making inferences that connect ideas from different places in a text, which are often called bridging (e.g., Albrecht & O’Brien, 1993), connecting (e.g., van den Broek, 1994), or text-to-text (Raphael & Pearson, 1985) inferences. They also need prompting to use their background knowledge to make elaborative inferences.

In essence, paraphrasing comprehenders adhere closely to the text but do not make the implicit connections necessary for comprehension. As with the causal questioning approach, this general questioning approach can be implemented with direct and explicit instructional practices, again using the excerpt from Aesop’s “The Lion and the Mouse” as a sample text.

Step 1: Definition and Modeling

A teacher can utilize the same definition of an inference provided under the causal questioning approach, but modeling would look different. Here is an illustration of modeling a bridging (also known as connecting or text-to-text) inference.

We read “A Lion lay asleep in the forest, his great head resting on his paws. A timid little Mouse came upon him unexpectedly, and in her fright and haste to get away, ran across the Lion’s nose.” Now, we know the mouse was timid, and we know that the mouse becomes frightened. When we connect these ideas, we can infer that timid might mean easily frightened. When we connect something we read to something we already know to make a new idea, that is an inference. Good readers make inferences as they read. We are going to practice making inferences.

Note that the definition of inference here is identical to the one used in the causal questioning approach, because students do not need to understand the different types of inferences.

Step 2: Practice With General Questioning

As with the causal approach, a teacher or interventionist can use questioning to prompt paraphrasing comprehenders to make inferences when reading. These questions work in whole class, small group, and individual settings. Here are two questions that specifically prompt connecting inferences:

How does what we just read connect to what we read earlier?

How does what we just read connect to [state an earlier important idea from the text]?

Here are two questions that specifically prompt elaborative inferences (including a predictive inference):

What do we already know about [state main topic from the text]? What do you think will happen next?

For example, here is how a few of these questions might be used while continuing to read “The Lion and the Mouse.”

We just read “Roused from his nap, the Lion laid his huge paw angrily on the tiny creature to kill her.” How does that connect to what we read earlier in the text? Now we just read “The Lion was much amused to think that a Mouse could ever help him. But he was generous and finally let the Mouse go.” What do you think will happen next? What in the text makes you think that?

As with the questions for elaborating comprehenders, note how each question directs readers back to the text. This direction keeps readers focused on the text as the primary source of meaning.

Previous research revealed that, in contrast to elaborating comprehenders, paraphrasing comprehenders benefited less from the causal questioning approach and more from this kind of general questioning approach (McMaster et al., 2012). All readers improved as a result of this questioning approach, but paraphrasing comprehenders benefited from it significantly more than their peers did. Although there is no definitive explanation for these results, one possibility is that paraphrasing comprehenders are relatively passive in their attempts to comprehend what they read and need help becoming more active in the comprehension process, simply by making connections within the text.

Conclusion

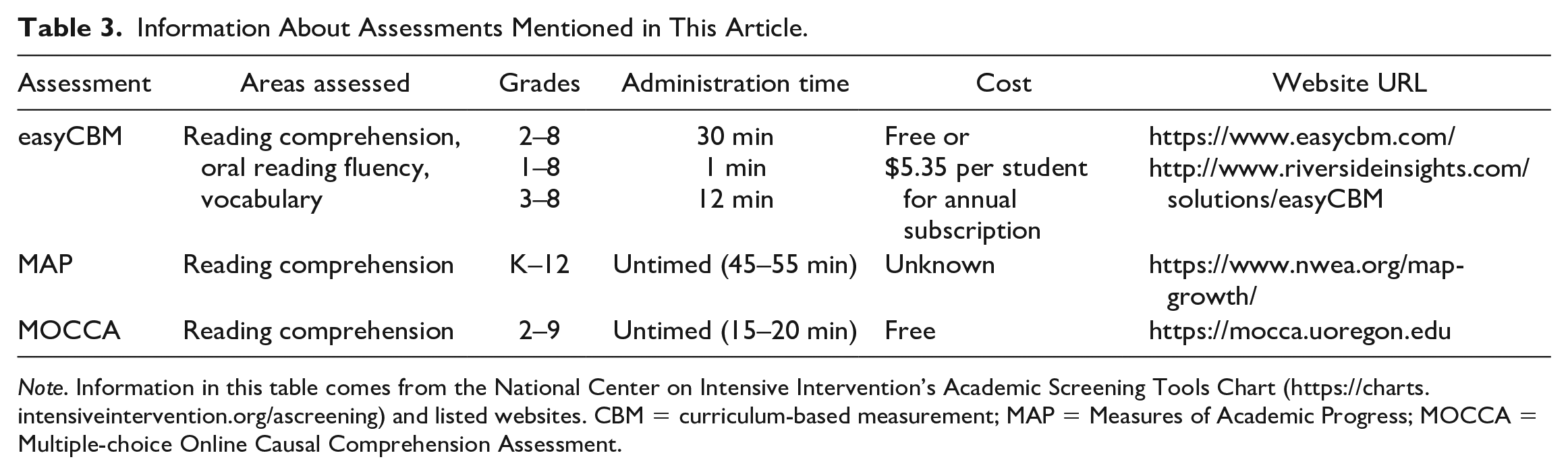

This article has demonstrated that no single assessment provides enough information for definitive decisions about a student’s instructional needs. However, using a diagnostic assessment such as MOCCA in conjunction with other reading measures can help refine how to meet the needs of readers, especially those with SRCD. All of the assessments mentioned in this article are summarized in Table 3.

Table 3. Information About Assessments Mentioned in This Article.

Note. Information in this table comes from the National Center on Intensive Intervention’s Academic Screening Tools Chart (https://charts.intensiveintervention.org/ascreening) and listed websites. CBM = curriculum-based measurement; MAP = Measures of Academic Progress; MOCCA = Multiple-choice Online Causal Comprehension Assessment.

Multiple-choice Online Causal Comprehension Assessment is unique among reading comprehension assessments in that it offers not only a holistic view of whether a student is comprehending well but also a diagnostic classification based on patterns in the student’s incorrect responses. When these patterns are psychometrically precise enough, MOCCA classifies students as relying on either elaborative inferences or paraphrasing, and interventionists can then follow the intervention recommendations for each of these two types of readers. Just as importantly, MOCCA offers an inconclusive classification for students whose response patterns cannot be distinguished from random guessing. In these cases, MOCCA recommends consulting other r-CBMs to determine whether difficulties with foundational skills are at issue or whether the results are anomalous. Overall, it is MOCCA’s diagnostic analysis of student errors that yields sub-scores that can clarify why a student struggles specifically with reading comprehension and help educators intervene accordingly.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The research reported here was supported by the Institute of Education Sciences, U.S. Department of Education, through Grants R305A140185 and R305A190393 to the University of Oregon. The opinions expressed are those of the authors and do not represent the views of the Institute or the U.S. Department of Education.