Abstract

From its origins in the 1990s, the qualitative health research metasynthesis project represented a methodological maneuver to capitalize on a growing investment in qualitatively derived study reports to create an interactive dialogue among them that would surface expanded insights about complex human phenomena. However, newer forms positioning themselves as qualitative metasynthesis but representing a much more technical and theoretically superficial form of scholarly enterprise have begun to appear in the health research literature. It seems imperative that we think through the implications of this trend and determine whether it is to be afforded the credibility of being a form of qualitative scholarship and, if so, what kind of scholarship it represents. As the standardization trend in synthesis research marches forward, we will need clarity and a strong sense of purpose if we are to preserve the essence of what the qualitative metasynthesis project was intended to be all about.

Introduction

As editor and associate editor of journals publishing qualitative work in the health field, I have witnessed a proliferation of submissions in recent years of “quick and dirty” technical reports that position themselves as products of “qualitative metasynthesis.” In keeping with the more typical convention that has become popular in evidence synthesis reporting, they focus considerable effort on search, retrieval, and selection decisions, including the deployment of rather arbitrary “quality checklists,” such that the majority of available qualitative publications are generally excluded from their final data set. From there, they tend to report superficial findings composed of thematic similarities, rarely tapping into anything of interest relative to methodological, theoretical, or contextual variance within the selected set of studies. Although they often cite classic qualitative metasynthesis methods references as their analytic resource, the final products of these exercises reflect very little by way of inductive analysis or interpretive examination. I believe that, left unchecked, this trend in what a qualitative metasynthesis represents could serve to greatly discredit the wider methodological genre.

In this article, I revisit the foundational ideals of adapting sociological metasynthesis methods for the health research field and draw upon that aspiration as a basis for challenging the merit of this new species of “scholarly project.” Drawing upon a critically reflective comparison between what qualitative metasynthesis was designed to accomplish and what it seems to have become in the hands of a growing cadre of researchers, I suggest that we now need to propose terminological, practical, and epistemological solutions toward preventing the undoing of what still may be among the most marvelous methodological tools in our qualitative health research armament.

Genesis of the Qualitative Metasynthesis Project

The foundational idea of qualitative metasynthesis is a compelling one. It capitalizes on the major investment made by multiple qualitative scholars in often different contexts and settings, entering them into a collective interpretive dialogue about the phenomena that inspired their original inquiries. Its purpose is to generate a form of knowledge that is enriched by the different disciplinary angles of vision, methodologies, samples, and interpretive lenses each original investigator brought to the challenge. It holds that there is value in understanding a body of this kind of work in a particular way, such that the limitations and idiosyncrasies of the original investigators and their subjects are countered by the weight of analytic contributions from different perspectives. As such, it promises the possibility of knowing—more fully, more deeply, and more convincingly—the complex human phenomena that attract our qualitative curiosity.

As it is currently understood in the health research world, the qualitative metasynthesis research tradition was borrowed and adapted from the social sciences, specifically the sociological work of scholars such as Zhao (1991) and Ritzer (1991), and the applied anthropological investigations of educational theorists such as Noblit and Hare (1988; Thorne, Jensen, Kearney, Noblit, & Sandelowski, 2004). It first appeared in the qualitative health literature as a viable methodological option in the mid-1990s, variously expressed as

Current Manifestations of Metasynthesis

Despite the exhortations of the methodological mentors that metasynthesis approaches must retain the original intention and complexity of the qualitative products they interpret, the wider world of synthetic research products has also evolved in parallel. Interest in shifting from more conventional literature reviews toward those that are styled as systematic, integrative, or scoping reviews (and therefore presumably publishable as discrete pieces of scholarship) has led to a plethora of guides for conducting a comprehensive search of the available literature and for displaying the findings of such reviews in a tabular extracted form (Armstrong, Hall, Doyle, & Waters, 2011; Booth et al., 2016; Dixon-Woods et al., 2006; Grant & Booth, 2009; Webb & Roe, 2008; Whittemore & Knafl, 2005). We have entered an era in which increasing numbers of scholars, including newer researchers entering the field, are being encouraged to conduct some form of systematic or integrative review as a stand-alone study or as an adjunct to a larger program of research (Clark, 2016). It is argued that users of reviews may well be interested in answers to the kinds of questions that only qualitative studies can provide, but “are not able to handle the deluge of data that would result if they tried to locate, read and interpret all the relevant research themselves” (Thomas & Harden, 2008, p. 2). The result has been an explosion of technical reports that focus on documenting meticulous search and display strategies, but actually tell us very little of an interpretive nature about the full body of qualitatively derived literature that has been so carefully harvested toward that purpose.

As has been the case with mass production of redundant and often useless systematic reviews in the quantitative domain (Page & Moher, 2016), this “quick and dirty” variety of metasynthesis tends not to be particularly useful as a distinctive scholarly contribution and can be directly counterproductive to the qualitative research enterprise. When it assumes a methodological authority to summarize findings across many studies and then proceeds to aggregate what are extracted as key findings and summarize common patterns or themes across a set of studies, metasynthesis recreates the kind of interpretive error that further compromises both our understanding of the phenomenon in question and the credibility of the qualitative genre to advance knowledge in a meaningful manner. Limiting metasynthesis study reports to the self-evident by drawing on the most simplistic commonalities across the set of available studies therefore deprives the reader of any true sense of the depth, complexity, richness, and diversity inherent in the original works (Finfgeld-Connett, 2016). Returning to the analogy of the Sufi elephant, these products eliminate the trunk, ears, tail, and flowing movement from the equation, settling on the common factors that can be confirmed across the full set of highly selected static data points, thereby encouraging us to believe with a stronger conviction than was previously possible that the phenomenon under study is merely a large patch of thick and hairy skin.

What one sees in these not particularly intellectually rigorous published products is a technical report that puts the reader at a considerable distance from the thinking scholars who generated the original qualitative findings. Often these reports mimic the style and format of the products of the evidence synthesis done by the Cochrane Collaboration with which we have all become familiar. However, whereas the Cochrane approach explicitly aims to narrow a field to extract the most conclusive evidence for decision making, qualitative studies are generally aimed at producing a thick, rich, and detailed elaboration beyond that which is well-recognized or agreed upon (Thorne, 2016). Thus, these kinds of studies produce a form of technical report that strips the context from the original studies, typically characterizing the body of work through oversimplifications of complex human phenomena. Such reports are often pitched in the form of dramatically inflated truth claims that draw conclusions about the entire field. As such, in attempting to compete in the arena of the evidence hierarchy, they misrepresent the entire point of what the qualitative genre of scientific inquiry was meant to offer.

Assumptions Underlying the Current Methodological Drift

When one reviews the kinds of reports that have started to appear in the health literature as products of qualitative metasynthesis, it is possible to detect a number of problematic assumptions and misconceptions as to the nature of the work. These represent a form of faulty logic that ought to have been picked up by journal reviewers. However, the fact that it has not may be a product of insufficient critical dialogue in the published literature to alert those who scrutinize submissions for publication to the need for expert advice on such work and the risks associated with publishing synthesis products that claim a level of authority they cannot sustain according to established standards of logic or credibility. Among the problematic assumptions that seem to have been driving this newer species of metasynthesis products are the mistaken notions that rigor is merely a matter of a clearly defined methodology, that reporting standards can serve as a proxy for quality criteria, and that textual “sound bites” reflective of themes found with some frequency across a data set can effectively serve as a reasonable representation for a complex and dynamic conceptualization. Whatever the drivers for this new trend—whether they be the increasing pressure for multiple publications, the desire to capitalize on the normal effort of literature review by configuring it as a separate publishable entity, or the increasing prestige of something that claims to be a synthesis product—its proliferation creates the risk of normalizing a form of problematic logic that cannot serve the qualitative health research community well.

Mistaking Tightness for Rigor

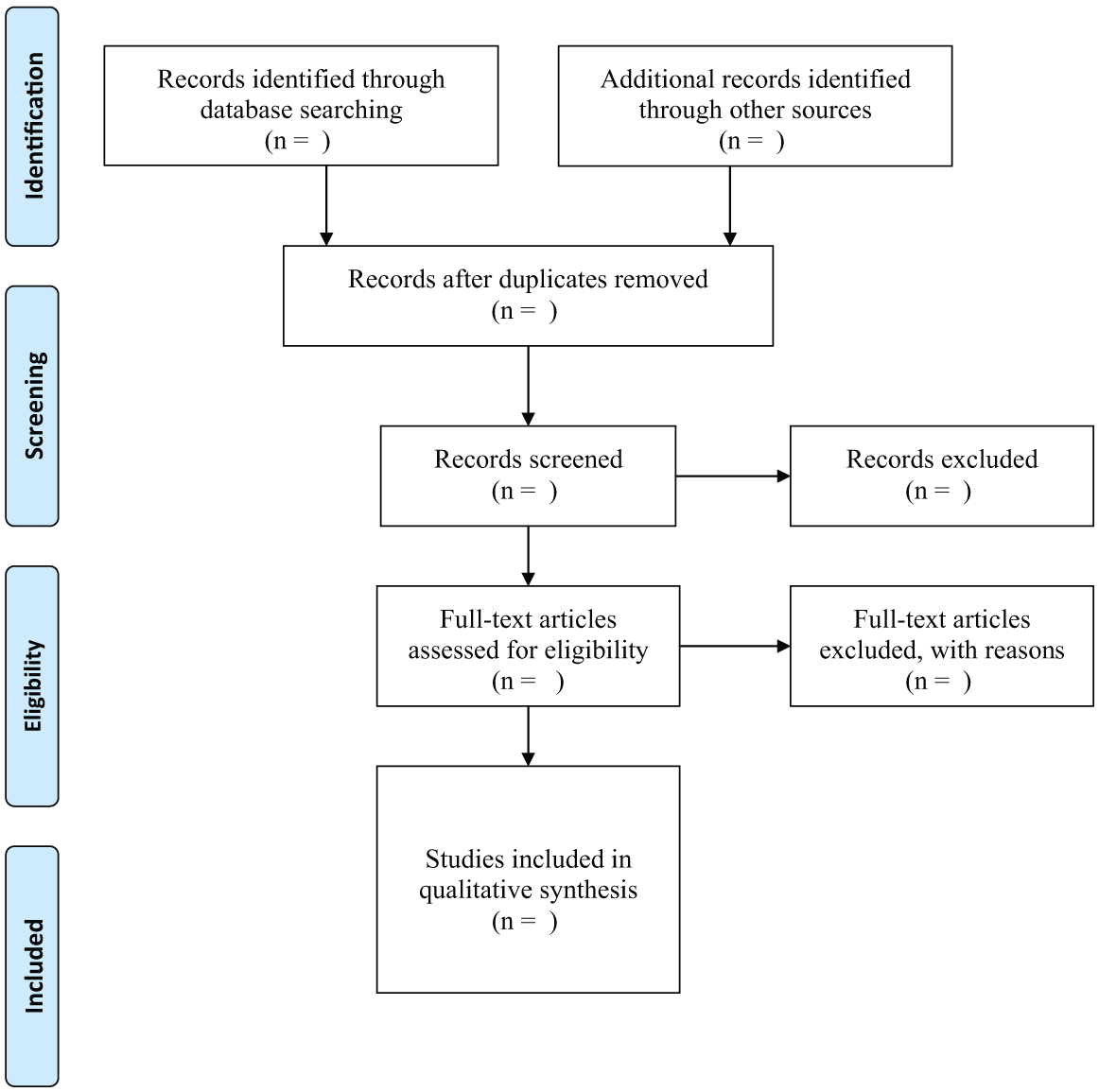

Many of the more recent initiatives that are creeping into the qualitative health literature are quite a different breed of activity from that which was envisioned by the pioneers of metasynthesis application in health research. They often draw from the same subset of available guides that seem to emphasize the technical operations over the interpretive work. The initial step in these approaches is a demonstrably exhaustive search in which the full range of electronic databases searched is documented, along with a list of search terms, Boolean operators (such as “and” and “or”) to limit or broaden the search, and wildcards (represented by *) to reflect all possible truncations. The second step is typically an enthusiastic enumeration of the formal reduction process through which thousands of possible sources are reduced to the handful that will be subject to tabularization. The familiar Preferred Reporting Items for Systematic reviews and Meta-Analyses (PRISMA) flowchart that has become the ubiquitous hallmark of such studies (Moher, Liberati, Tetzlaff, Altman, & the PRISMA Group, 2009; see Figure 1) reveals what would appear to be a highly discriminating form of reduction and exclusion of “noise” into a final set of what are positioned as legitimate primary sources. However, in most instances, it merely represents the imprecision of electronic search capacity using keywords to identify what is research, what is qualitative, and what the substantive field includes. Although the documentation of these stages conveys the impression that an enormous effort was made to extract only the very best study reports from a very large volume of material (often in the tens of thousands), a closer read of the text tends to confirm that the total number of abstracts actually read was perhaps a few dozen, and the actual number of manuscripts harvested and read in full just a handful.
It is not atypical for such reports to winnow what initially appears as a massive body of studies into a very modest collection of reports that become the substance of the actual summary and synthesis.

Figure 1. PRISMA flow diagram.

Further complicating the situation is the tendency of many of the authors of this newer form of metasynthesis to reference the classic methodological authorities in an effort to position claims that their interpretation is based on a highly integrated and rigorous analytic process (Frost, Garside, Cooper, & Britten, 2016; Ludvigsen et al., 2016). Noblit and Hare’s meta-ethnography has been identified as a particular favorite in this regard (Thomas & Harden, 2008), despite little evidence in many instances that the authors have actually read the original or demonstrate any understanding of the level of intellectual work or interpretive rigor for which those authors were arguing. Instead, they tend to cite a single pat phrase that purportedly sums up what they believe they have done in keeping with that tradition, such as a statement to the effect that they have “identified key concepts from the original studies and translated them into one another.”

By conforming to a highly technical set of sorting and selecting operations, all of which are attaining increasing credibility as expectations for manuscripts claiming to be metasynthesis reports, and rendering findings that reflect only the most superficial of commonalities across the final subset of studies, they are privileging standardized technique over interpretive imagination, conceptual depth, and the insights that could be obtained from cross-fertilization across diversities. These kinds of technical reports often reveal nothing of the gorgeous and evocative depth and details reported in the original studies, and grossly misrepresent what those studies reported as findings by virtue of ignoring that which is not common across the full body of work. And although they may list such factors as the year, location, and discipline of the original investigator(s) in their tabularized summaries of the key facts of the studies they summarize, they rarely take any of the chronology and temporality of the evolving body of exploration into critical consideration. Thus, ideas that have been surfaced, challenged, and deconstructed get reported as common themes, rather than lively dialogues between scholars over time and within their distinctive perspectives. In this manner, the ultimate findings become “distorted into clarity” (Sandelowski, Voils, Barroso, & Lee, 2008).

Conflating Reporting Standards With Quality Criteria

Considerable work has been done in recent years to try to improve the reporting standards of health research such that the various attempts to synthesize are operating with comparable sets of information about each of the studies they are attempting to integrate into a credible synthesis. As the originator of the EQUATOR (Enhancing the Quality and Transparency of Health Research) Network has written, “Unless methodology is described the conclusions must be suspect” (Altman, 2016). In keeping with this imperative, another misunderstanding that seems rife within the new species of qualitative metasynthesis reporting is the interpretation that reference to reporting standards can serve as a proxy for determining the quality of the original studies. Thus, in some instances, the mere presence or absence of reference to a specific reporting criteria checklist, such as PRISMA (Moher et al., 2009), which is a general reporting guide, not really intended for qualitative research, or more qualitatively oriented reporting standards such as COREQ (Consolidated Criteria for Reporting Qualitative Research; Tong, Sainsbury, & Craig, 2007), or SRQR (Standards for Reporting Qualitative Research; O’Brien, Harris, Beckman, Reed, & Cook, 2014), can feature prominently in explanations of the screening process with respect to quality. As those checklists are themselves highly subjective and technical (including, for example, the presence of a statement about ethical review, which tells us nothing about the quality of the findings and much more about the national legislative context within which the study was conducted), the reader has no capacity to judge what gorgeous but imperfect interpretations may have been excluded, and what technically correct but “bloodless” and unimaginative findings may have been privileged in delineating the final metasynthesis sample.

As it seems self-evident from the assumptions underlying the entire enterprise of quantitative meta-analysis and research synthesis that the integrity of a synthesis is entirely dependent on assurance of the quality of the studies upon which it was rendered, the question of quality is a natural consideration in this kind of work. However, looking beyond that apparent truism to consider the distinct and various disciplinary and epistemological traditions that have informed the evolving body of qualitative health research methodologies, it has been well recognized that attempting to distinguish high from low quality among published qualitative research studies is a tremendously complex challenge, subject to considerable subjective variance, and highly dependent on interpretations of intended purpose (Sandelowski, 2014). For example, a study report that might not reflect the best example of a coherent and fulsome ethnographic report could well surface some insights about the population under study that shed light on an aspect of experience not previously understood by health care practitioners caring for patients within that population. Thus, the decision to eliminate that study arbitrarily by virtue of its fit with ethnographic research guidelines may obscure a germ of possibility that, if used to interrogate the reports of other studies, could have led to important new angles of consideration (Pawson, 2007). What has been observed by teams working on qualitative metasynthesis is that the more junior and inexperienced a team, the easier it is to discredit study reports as not meeting certain standards.
However, more seasoned and experienced scholars tend to have a much broader capacity to comfortably include a broader range of study forms and types because they appreciate the history and tradition from which qualitative health research has grown and the various “languages” within which qualitatively derived insights can find their way into our collective wisdom.

Thus, although efforts to use reporting standards toward improving the caliber of syntheses involving qualitative studies, such as AMSTAR (Assessing the Methodological Quality of Systematic Reviews; Shea et al., 2007), ENTREQ (Enhancing Transparency in Reporting the Synthesis of Qualitative Research; Tong, Flemming, McInnes, Oliver, & Craig, 2012), and RAMESES (Realist and Meta-Narrative Evidence Synthesis; Wong, Greenhalgh, Westhorp, Buckingham, & Pawson, 2013a, 2013b), are both necessary and well-intended, it is important to remember that defining synthesis reporting standards that can do justice to the broad spectrum of qualitative methodologies and approaches is notoriously difficult (O’Brien et al., 2014). High-quality qualitative research reports are not merely repositories of facts, but often quite complex documents reflective of an iterative engagement, reflection, and writing process through which a researcher has created a comprehensive account of a phenomenon (Sandelowski, 2008). The more varied the interpretive repertoire of a scholar, the greater will be their capacity to tune in to and account for a multiplicity of ways in which to interpret and treat a set of data (Sandelowski, 2011). Thus, meaningful evaluation of the quality of a piece of qualitative research cannot be relegated to a “mindless consumption of any single set of criteria” but instead constitutes a “positioned, perspectival human judgement” situated within a specific community of practice (Sandelowski, 2014, p. 91).

Muddling Messages With Meanings

Having undertaken the exhaustive work of counting, sorting, and extracting to obtain a manageable body of primary qualitative studies about a topic, the actual analysis to generate findings begins. And although investigators venturing into these metasynthesis studies often presume that the outcome will be

What this kind of representation misses is that most qualitative scholars will position their new conceptualizations within the context of what is generally known and understood about a phenomenon. So, for example, if I am studying how patients understand and make sense of their experience with a certain chronic condition, I would expect that they would orient the inquirer into that understanding by telling something of how it all began. However, although there may be some commonalities in their accounts of being diagnosed with that condition, their various trajectories over time may evolve into a wide diversity of experience. If I synthesize the common account as if it represents the main story, I may have missed the most important ingredients of what shapes the experience, which will be reflected in the understandings we can obtain from cross-interrogation of the conditions of those diversities among and between cases. Another feature of the body of qualitatively derived study reports is the fact that what exists in prior publications can play an influential role in subsequent studies by other researchers. We know that it is an expected convention to draw upon prior work in justifying a new study. Where certain scholars have been particularly dominant in a field, communities of researchers from specific disciplines have been especially productive within the overall body of published studies, or particular theoretical or methodological fashions have been especially influential in relation to certain kinds of questions in a field, there is considerable likelihood that these ideas will have filtered into the shaping of the research questions and study designs by subsequent researchers. Thus, the repetition of an idea, as a body of work evolves, may not reflect its inherent importance as much as revealing the expectations of researchers (and/or supervisory committees, examiners, and journal editors) that coherence in a field over time is consistent with credibility. 
Context and interpretation necessarily change over time and are informed by what has gone on before. Thus, conflating frequency of an observation across studies with its relevance in relation to the topic, rather than deploying such observations from a critical perspective to try to discern their potential meaning, becomes a worrisome kind of illogic in the metasynthesis enterprise.

Another problematic feature in this newer species of qualitative metasynthesis product is the apparent perception that the complexities that characterize a robust primary study report can be appropriately summed up in “sound bites” that capture the essence of the conclusion at which the original investigator had arrived. As part of the technical procedure in conducting a qualitative metasynthesis, it has become common to display key elements of the body of studies under consideration in tabular format, so that key features such as date of publication, methodological orientation, theoretical framework, sample size, and demographics can be presented in summary format. Such tables were advocated in some of the early methodological guides (such as Paterson et al., 2001), as one option for visualizing the whole, such that patterns within it could become apparent. For example, in an early synthesis of qualitative studies pertaining to adapting to and managing diabetes, this technique helped surface the reality that the body of research in this field had been conducted almost exclusively on the most conveniently accessible subset of the affected population: well-educated Caucasian women who lived with a partner or caregiver (Paterson, Thorne, & Dewis, 1998). In a 2002 metastudy of chronic illness experience, we also used such a technique to illuminate the theoretical and methodological assumptions that had shaped the qualitative work being done, discovering clear social constructions within concepts such as stigma or biographical disruption that had been widely applied in relation to some diseases and not others to which they might have equally applied (Thorne, Paterson, Acorn, Canam, Joachim, & Jillings, 2002). However, the tabular display depicted in those early guides was intended as only one aspect of a much more comprehensive set of operations to facilitate the hard work of inductive analysis and not as a result in itself.
In other words, it was intended to create questions worthy of further rigorous investigation, not merely a reporting of patterns.

As the metasynthesis movement has evolved, a technique that has been increasingly taken up by researchers is including a “key message” statement within the tabular representation of the main ingredients of the set of studies under consideration. Thus, in addition to the data on samples and methods, the table will include a column in which the investigator has captured what is believed to be the essence of the findings in a brief listing of categories or a set of phrases. Although a word string that allows the researcher quick recall of the substance of each distinct study may seem a reasonable data management heuristic, it does not follow that the main messages of qualitative study reports can be meaningfully captured in the space of a few words. Thus, when this technique is used to create the answers rather than serving as a springboard for new lines of deep inquiry into the database as a whole, the exercise narrows the focus and extracts a key idea from its larger context, in other words enacting a maneuver that is precisely counter to the richness and depth that is supposed to be the hallmark of qualitative inquiry (Ludvigsen et al., 2016). In so doing, it has fallen prey to the intellectual trap foreshadowed by Sandelowski and colleagues (1997), when they warned against metasynthesis as a means by which to “sum up a poem” (p. 366).

Thus, the current proliferation of superficial technical reports as a legitimate metasynthesis product seems to have been fueled by a set of uncritically held assumptions: that scholarly integrity within metasynthesis is primarily a product of adherence to a formulaic approach to data management, that it is appropriate to base judgments about the quality of past scholarly contributions on new sensibilities associated with standardized reporting, and that the identification of commonalities and patterns across a set of published studies constitutes a reasonable approximation of their collective contribution. Taken together, these assumptions have played a role in creating a hollow and rather useless form of scholarship that does not substantially add value to the field, and instead, conveys the message that what has been superficially summarized is perhaps all that was there.

Toward Terminological, Practical, and Epistemological Solutions

Unless we abandon the aspirations of the original qualitative metasynthesis project, it seems fair to suggest that this new breed of technical product places the genre at considerable risk for confusion and misrepresentation. When a metasynthesis product claims to represent the essence of a body of prior research in a simplistic manner, there seems a serious risk of entrenching stereotypic disparaging attitudes about the potential value and relevance of the qualitative enterprise. Furthermore, as it is not uncommon for those who seek to do a metasynthesis project to have to justify its originality and relevance to a funder or review panel, the availability of a pre-existing qualitative metasynthesis report on that topic may make it more difficult to defend. In the world of health care delivery, in which evidence claims are increasingly the arena within which we determine what does and does not matter, and scholars are increasingly being asked to give a nod to patient-reported outcomes, we run the risk that reference to these rather hollow and technical kinds of synthesis reports will come to be seen as the best that qualitative science can offer.

Going forward, I see promise in several directions. I believe we would benefit from better terminological clarity so as to offer scholars a coherent set of options that better reflect the kind of work they are doing. Similarly, there seems a need for some practical guidance as to how metasynthesis ought to be conducted and the base of expertise requisite to it making sense within a field. Finally, I suggest we engage in ongoing development of quality criteria by which we could recognize a useful metasynthesis product when we see it.

Creating Terminological Consistency

The metasynthesis space in the qualitative literature is currently being occupied by a rather wide range of synthesis exercise types, variously (and sometimes inconsistently) known by such names as metastudy, meta-ethnography, scoping reviews, integrative reviews, and systematic reviews. I suggest we might want to consider reserving the “meta” language for those studies that genuinely allow for critical reflection on bodies of qualitatively derived knowledge, such that we can understand more deeply how they got to be the way they are, and the trends and fashions of theory and method that have allowed us to reach the conclusions we have reached thus far. High-quality qualitative metasynthesis should be a deconstructive and interpretive exercise, judged by its capacity to allow us to see the evolving collective knowledge in its unfolding, to understand its intricate particularities, and to appreciate the limits and conditions of what we comfortably agree upon and where the debate may continue. In contrast, this new species that wears the qualitative metasynthesis mantle is a misrepresentation of the intent, just as dabbling with a bit of qualitative data in a “mixed methods” study and calling it qualitative analysis misrepresents the kind of knowledge that the qualitative research genre is designed to generate. If we believe there is value in this new species of enterprise, let us find a new name for it. Perhaps we can call it something like “meta-tabulation” or reprise the older term “meta-aggregation.” But let us not confuse the issue by allowing it to co-opt the term

Creating Practical Wisdom on the Conduct of Metasynthesis

Because this kind of project seems particularly popular among newer scholars, it may well be that many of those entering into a qualitative metasynthesis actually lack the capacity to do justice to the aspects of a phenomenon that the primary qualitative methods were designed to illuminate. In the conventional wisdom of those who brought the method into the health research armament in the first place, qualitative metasynthesis was sufficiently complicated as to require a team of researchers, ideally possessed of deep experiential knowledge of a wide range of qualitative methods, and an appreciation for the variety of disciplinary traditions that have applied them to the various health questions that have been qualitatively investigated. The team approach allowed for capitalizing on the subtle nuances of a study, such as the language signifiers by which a reader might discern analytic direction and detect subtle interpretive turns. It permitted illumination of the points of tension and difference among and between the various primary studies and the texts within which they were reported. As such, it brought to the project a broader set of possibilities for deconstructing the implications of certain methodological maneuvers and for bringing the interpretive arguments of the original researchers into a critically reflective dialogue with one another. This kind of experienced imaginal capacity to reflect on the implications of each approach allowed for deep thinking about what the collective whole might represent. Thus, in this conventional team style of working a qualitative metasynthesis project, it became possible to envision a deeply informed, complex, and conceptually well-integrated set of new findings.

Beyond the expertise and organizational structure we might deem as most conducive to a qualitative metasynthesis, better guidance on the actual doing of such a project might be useful. We might, for example, liken the extracted database of a synthesis project to the collection of a set of interviews in a primary qualitative study. Simply having obtained the data is not equivalent to having done the analysis. We do possess considerable sophistication around being able to ask questions of a primary study to determine whether the justifications for its analytic path are sufficiently robust. We interrogate whether there is evidence that the ideas brought into the study were appropriately managed in the process of shaping the findings and how the researcher has made a convincing argument with respect to what has been identified as relevant within the data set as well as the sense that has been made of that relevance. Similarly, by insisting that these kinds of qualities be demonstrably apparent in a metasynthesis report before its findings can be justified, we can help researchers move beyond the technical expectations and into those of a more intellectual nature. Finally, we will need to remain highly cautious of explicit linguistic requirements as proxies for the intellectual rigor of our products. We cannot simply recreate the litany of accepted credibility claims that has become the hollow trope of the current generation.

Building Epistemological Distinctions Between Varieties of Knowledge Claims

To move toward a more coherent and logical form of science based on metasynthetic approaches, it seems evident that we need to create some clarity around the kind of knowledge claims that qualitative metasynthesis can, and should, be supporting. As George Noblit rhetorically asked us to consider, “Is a synthesis part of rationalization of human life or part of politics and emotions? Does it serve truth seeking or truth making?” (Thorne et al., 2004, p. 1350). Although some of the early proponents of qualitative metasynthesis may have entertained the hope that it might offer the potential for larger and stronger truth claims than did the original body of studies on their own, serious scholars who have considered the epistemological basis upon which qualitative studies and syntheses are generated recognize that these products are much more consistent with the search for a more nuanced and in-depth understanding of the inherently complex phenomena about which we are concerned.

From this, we can conclude that the kind of metasynthesis work that will be most useful to the evolving knowledge field (including the body of evidence for practice) will be that which critically reflects upon, and at times even deconstructs, the ideas that have been taken into and drawn out of the qualitative research enterprise over time and across multiple contexts (Frost et al., 2016; Sandelowski, 2006). When our exploration into a body of qualitatively derived studies helps us better appreciate how we have come to think about a phenomenon in the manner that we have, and to grapple with what aspects we can take hold of with some confidence in contrast to those which will require caution, then we are using metasynthesis techniques in a constructive and informative manner. To achieve the kind of wisdom about phenomena that metasynthesis aspires to, it will be important that we have a basis upon which to collectively reflect on what constitutes the difference between high- and low-quality work. Unsurprisingly, I would argue forcefully that the hollow technical species of metasynthesis (the kind that extracts only a like subset of studies from the total available body of work, then draws conclusions based on their commonalities) ought not to be treated as a serious form of scholarship. Rather, the kinds of metasyntheses that make a genuine and distinctive contribution to knowledge, that build something meaningful upon the insight, understanding, and comprehension of which the qualitative enterprise is capable, will be those that tap the available knowledge as widely as is feasible and study it as deeply as is conceivable.
They will be those that include the full spectrum of methodological orientations within the perspectival kaleidoscope, making informed interpretations about the disciplinary and theoretical traditions within which each primary researcher or research team was operating, and the influence those conditions may have had upon what they chose to articulate as their findings. Furthermore, the better quality metasynthesis studies will be those that not only expect variations among and between the primary studies but also exploit them as the impetus for expanding layers of critical reflection as to the complex constellation of possible explanations. Although I have found metastudy particularly useful in this regard, because it forces separate analyses on the basis of method, theory, and data before any more general synthetic conclusions can be considered, the important point is that a relevant, rigorous approach to a metasynthesis that is worth the doing must necessarily be critically reflective rather than summative or aggregative (Gough, 2013).

If we can come to an agreement on articulating and enforcing this style of metasynthesis as the expected standard, I believe we can make progress on taming the monster that we seem to have created. Toward this end, I propose the use of the following questions in assessing the publication worthiness of a metasynthesis product:

Are the exclusion processes justified by the explicit aims of the review?

Have the mechanisms for data display demonstrably furthered the analytic capacity?

Is there evidence of critical reflection on the role played by method, theoretical framework, disciplinary orientation, and local conditions in shaping the studies under consideration?

Does the interpretation of the body of available studies reflect an understanding of the influence of chronological sequence and advances in thought within the field over time?

Does the synthesis tell us something about the collection of studies that we could not have known without a rigorous and systematic process of cross-interrogation?

Conclusion

In 2004, a group of methodological scholars expressed concern about the overly rapid proliferation of a glorified form of literature review purporting to be a brand of qualitative metasynthesis with actual evidential status (Thorne et al., 2004). It seems that the problem has been further complicated by the bells and whistles of formulaic search and selection techniques, such that this new species of work has taken hold. It is regrettable that the idea that spawned qualitative metasynthesis seems to be getting lost in the translation. In putting these arguments to ink, I would hope to further the kinds of conversations that may help us return to the promise that qualitative metasynthesis offered as a scholarly approach with the potential to advance a field of study.

Rigorous and carefully conducted qualitative metasynthesis can expand upon the diversities of human experience that may be missed in individual qualitative studies, especially of the smaller variety. It can also provide a bird’s-eye view of the theoretical and methodological fashions that may have skewed our sense that we understand something in a particular way. As such, it can correct some of the assumptions that a thoughtful reader might otherwise make on the basis of a more conventional focused read of the literature. However, I believe we must hold it to a standard of being demonstrably what it says it is.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.