Abstract

Mental health conditions such as depression, anxiety, and stress are among the most prevalent psychological concerns worldwide, particularly in trauma-affected populations. Survivors of sexual violence frequently report co-occurring emotional symptoms and are at elevated risk for persistent psychological distress (Easton, 2014). These challenges often intersect with gendered dynamics: male survivors may face unique barriers to disclosure and help-seeking due to hegemonic masculinity norms and stigma (Addis & Mahalik, 2003; Seidler et al., 2016), while female survivors may more readily disclose symptoms but often experience high levels of depression and anxiety (Tolin & Foa, 2006; World Health Organization, 2021). These gendered differences underscore the need for assessment tools that are both psychometrically sound and equitable across diverse populations.

The Depression Anxiety Stress Scales (DASS) are widely used self-report instruments designed to assess three interrelated yet distinct domains of emotional distress—depression, anxiety, and stress—based on the tripartite model of affective disorders (Clark & Watson, 1991; Lovibond & Lovibond, 1995a). The 42-item DASS was reduced to the 21-item version (DASS-21) by the authors, who retained seven high-performing items from each subscale (Lovibond & Lovibond, 1995b). Numerous studies across clinical and non-clinical populations have validated the DASS-21's internal consistency, factorial structure, and cross-cultural applicability (e.g., Henry & Crawford, 2005; Oei et al., 2013; Sinclair et al., 2012; Zanon et al., 2021).

Despite its relative brevity, the DASS-21 may still be burdensome in time-limited or high-stress contexts, particularly among trauma survivors. In trauma counseling or longitudinal monitoring settings where repeated assessments are needed, ultra-brief forms may be preferable to reduce respondent fatigue while retaining reliability and validity (Barkham et al., 2023; Medvedev et al., 2020). This has led to the development of multiple short forms of the DASS, ranging from 20 items to as few as eight items, each varying in item content, structure, and psychometric performance (e.g., Ali et al., 2021; Halford & Frost, 2021; Kyriazos et al., 2018; Monteiro et al., 2023; Wise et al., 2017).

However, most validation studies of these short forms have been conducted in student, psychiatric, or general population samples, with limited focus on trauma-affected groups or gender-based psychometric equivalence. This gap is significant, as trauma-related symptoms may manifest differently across gender, and instruments not tested for structural equivalence may yield biased interpretations (Putnick & Bornstein, 2016; Vandenberg & Lance, 2000). To ensure accurate and equitable assessment, it is essential to examine whether these short forms perform consistently across both male and female survivors of sexual violence.

The present study builds directly upon previous work that validated six DASS short forms in a sample of male-only survivors of sexual violence (Yun, 2025). To address the limitations of that initial study and the broader gaps in the literature, the current research was designed as a replication and extension to evaluate the psychometric properties of these same six short forms in a mixed-gender sample of adult survivors of sexual violence (N = 893; men = 451, women = 442). Specifically, this study extends the prior work by (a) enhancing the concurrent validity analysis via the inclusion of a trauma-specific measure (i.e., Impact of Event Scale-Revised [IES-R]; Weiss & Marmar, 1997), and (b) introducing a test of gender-based measurement invariance to assess the scales’ psychometric equity. By identifying psychometrically sound and gender-responsive screening tools, this research aims to support more accurate and efficient assessment of emotional distress in both research and practice with trauma-affected populations.

Literature Review: Psychometric Validation of DASS-21 Short Forms

As this study is a replication and extension of previous work (Yun, 2025), this literature review serves two purposes. First, it provides a comprehensive overview of the existing abbreviated versions of the DASS-21, organized by their theoretical factor structure. Second, it establishes the psychometric landscape from which the short forms for the present study were selected. For a detailed, item-by-item summary of the psychometric properties and factor structures of the short forms discussed in this review, readers are referred to a comprehensive table in the previous study (Yun, 2025).

Since the original 42-item DASS was condensed to the 21-item version (Lovibond & Lovibond, 1995a, 1995b), numerous researchers have sought to create even shorter forms to reduce respondent burden. These abbreviated versions have been validated using various psychometric techniques and are best understood when categorized by their factor structure. In the following sections, internal consistency is evaluated using Cronbach's alpha, with coefficients interpreted according to the widely used guidelines proposed by George and Mallery (2019): α > .90 = Excellent, α > .80 = Good, α > .70 = Acceptable, α > .60 = Questionable, and α < .60 = Poor.

One-Factor Models

A unidimensional approach collapses the three DASS constructs into a single measure of general psychological distress. The most notable example is a 17-item version proposed by Ali and Green (2019). Analyzing data from 149 Egyptian drug users, they found that a three-factor model fit the data poorly. Using exploratory and parallel analyses, they determined that a unidimensional structure was more appropriate, which showed good internal consistency (α = .88).

The primary strength of the one-factor approach is its parsimony. However, this simplicity comes at a significant cost: the loss of the theoretical tripartite structure that distinguishes depression, anxiety, and stress. This limits its utility for clinical or research purposes that require a nuanced differentiation of symptom profiles.

Two-Factor Models

Two-factor models offer a compromise, typically by combining anxiety and stress. Halford and Frost (2021) developed a DASS-10 using two large Australian clinical samples (N > 2,000). Their CFA supported a hierarchical model with two first-order factors—Depression and Anxiety-Stress—which demonstrated strong fit (CFI = .96, RMSEA = .07) and good internal consistency for its two subscales (α = .85 and .83, respectively). A different two-factor approach was taken by Duffy et al. (2005) with a DASS-20. In a sample of 216 Australian adolescents, they proposed a model with “generalized negativity” and “physiological arousal” factors, which showed acceptable fit (CFI = .90, RMSEA = .06), though internal consistency was not reported.

These two-factor models provide pragmatic solutions for efficient assessment, though they can be distinguished by their alignment with the original DASS theory. The DASS-10 arguably presents a more theoretically coherent model, as its two factors (Depression and a combined Anxiety-Stress factor) are a direct simplification of the DASS's original tripartite structure. In contrast, the DASS-20's two factors—“generalized negativity” and “physiological arousal”—appear to be a greater departure, representing new categories that do not map as cleanly onto the original theory (see Lovibond & Lovibond, 1995b). Consequently, its theoretical rationale could be viewed as less direct. While both models offer brevity, they sacrifice the ability to differentiate between anxiety and stress.

Three-Factor Models

The majority of abbreviated forms retain the original tripartite structure.

DASS-20 Short Form (20 items): Several 20-item versions have been proposed. Medvedev et al. (2020), using Rasch analysis with 420 New Zealand adults, created a 20-item, three-factor model that demonstrated good fit to the Rasch model [χ2(15) = 20.28, p = .161]. Subscale reliability was supported by a Person Separation Index ranging from .71 to .79, and total scale reliability was excellent (person reliability = .91; item reliability = .99). Park et al. (2020), in a sample of 123 Australian adults with Autism Spectrum Disorder, also created a modified 20-item, three-factor model, which showed acceptable fit (CFI = .924, RMSEA = .077) and good to excellent internal consistency (Cronbach's α = .93 for Depression, .84 for Anxiety, and .88 for Stress).

DASS-18 Short Form (18 items): Oei et al. (2013) developed a DASS-18 in a large sample of employees (N = 2,613) across six Asian countries. Their EFA and CFA supported a three-factor structure with good fit (CFI = .94, RMSEA = .06) and acceptable to good reliability (α = .86 for Depression, .81 for Anxiety, and .70 for Stress). Similarly, Wittayapun et al. (2023) validated an 18-item Thai version in 3,705 nursing students, which demonstrated excellent model fit (CFI = .98, RMSEA = .032) and acceptable to good internal consistency (α = .87 for Depression, .79 for Anxiety, and .73 for Stress).

DASS-14 Short Form (14 items): Wise et al. (2017) developed a 14-item version using principal components analysis in a sample of 343 Australian health professionals. Their three-factor scale demonstrated acceptable to good internal consistency (α = .88 for Depression, .73 for Anxiety, and .84 for Stress).

DASS-12 Short Form (12 items): Multiple 12-item versions have been validated, revealing a pattern of highly inconsistent psychometric properties across studies. A notable trend emerged from research in Malaysian youth samples. Yusoff (2013), in a sample of 706 medical school applicants, reported excellent model fit (CFI = .989, RMSEA = .024) but poor internal consistency (α values of .55 for depression, .60 for anxiety, and .56 for stress) (George & Mallery, 2019). Similarly, Osman et al. (2014), in a large sample of 2,980 secondary school students, also found good model fit (CFI = .96, RMSEA = .04) but poor to questionable reliability (α values of .68 for depression, .53 for anxiety, and .52 for stress). In stark contrast to these findings, Monteiro et al. (2023) validated a DASS-12 in a large, international adult sample (N > 2,000) and reported both good model fit (CFI = .97, RMSEA = .07) and good to excellent reliability (McDonald's ω = .81–.96; Hair et al., 2013).

DASS-9 Short Form (nine items): A similar pattern of inconsistency is seen for the DASS-9. In a sample of 706 Malaysian medical school applicants, Yusoff (2013) reported a nine-item model with excellent fit (CFI = .997, RMSEA = .011) but poor internal consistency (α values of .52 for Depression, .57 for Anxiety, and .55 for Stress). Teo et al. (2019) validated a DASS-9 in 126 responses from 42 Brunei nursing students, reporting good fit (χ2 = 57.1, p < .001, CMIN/DF = 2.38, SRMR = .037). However, the study's limitations include its small sample size and the fact that internal consistency estimates were not reported for the proposed nine-item model itself.

DASS-8 Short Form (eight items): Ali and colleagues developed and validated the briefest version, the DASS-8, across two key studies. The initial development study in Saudi Arabia (N = 1,160) reported excellent model fit (e.g., CFI ≥ .998, RMSEA ≤ .059) and good internal consistency for all three subscales (α values ranging from .79 to .89) (Ali et al., 2021). A subsequent validation of the English-language version in a large international sample (N = 1,032) also found excellent model fit (CFI = .99, RMSEA = .04) (Ali et al., 2022). In this sample, the Depression and Anxiety subscales demonstrated acceptable to good reliability (α = .80 and .78, respectively), but the two-item Stress subscale showed questionable internal consistency (α = .69).

The three-factor approach is the most popular as it remains faithful to the DASS's tripartite theory. However, a review of these models reveals a clear tension between the goals of brevity and maintaining psychometric integrity. The longest of the short forms, such as the DASS-20, offer only negligible gains in brevity. Mid-length versions like the DASS-18 and DASS-14 also show limitations, including development in non-English contexts that raises concerns about linguistic equivalence and instances of borderline reliability on certain subscales (e.g., the Anxiety subscale of the DASS-14). This trade-off becomes more acute in the ultra-brief versions. The DASS-12 and DASS-9, for instance, demonstrate highly inconsistent performance across studies, suggesting their reliability may be highly sample-dependent. Finally, even versions with otherwise strong model fit can have structural weaknesses, such as the two-item Stress subscale in the DASS-8. A factor with only two indicators is considered a structural weakness because it is not independently identified and can lead to unstable model estimation; it also departs from the psychometric convention that a robust latent factor requires at least three indicators (Brown, 2015; Kline, 2016).

Collectively, these findings indicate that while the three-factor structure is theoretically desirable, no single short form has emerged as universally robust, with significant variations in reliability and validity across different versions and populations.

Gaps in the Literature

Despite the proliferation of DASS short forms, their psychometric properties remain underexplored in key contexts. First, few studies have systematically evaluated their performance in trauma-exposed populations. Second, concurrent validity has rarely been assessed using trauma-relevant measures like the IES-R. Finally, while some studies like Monteiro et al. (2023) and Ali et al. (2022) have established gender-based measurement invariance for their respective scales, this crucial analysis is absent in most validation studies. This leaves a significant gap in understanding the structural and cross-group validity of these short forms for diverse trauma-affected populations.

The Present Study

Informed by this literature, the present study focuses on six short forms selected for their English-language development and potential for use in clinical settings. In selecting appropriate short forms for the current study, four inclusion criteria were applied.

First, only versions developed and validated in English were considered, to minimize bias due to translation and ensure item-level consistency (Streiner & Norman, 2015). This approach also helps reduce the confounding effects of cultural and linguistic variation, which can obscure the true psychometric structure of a scale and complicate cross-study comparisons. Second, selected forms were required to retain a multi-factor structure, measuring distinct domains of emotional distress rather than collapsing them into a single, unidimensional construct. This requirement ensures the abbreviated versions remain faithful to the tripartite model's theoretical underpinnings, which separate depression, anxiety, and stress, allowing for a nuanced understanding of symptom domains (Lovibond & Lovibond, 1995a, 1995b). Third, each subscale required a minimum of three items for stable factor identification in CFA, as factors with fewer indicators risk being underidentified and yielding unstable solutions (Brown, 2015; Kline, 2016). Finally, the abbreviated form needed to offer a meaningful reduction in length compared to the DASS-21, eliminating minimal-reduction variants like the DASS-20. Although the DASS-20 by Medvedev et al. (2020) showed excellent psychometric properties, its limited brevity did not justify inclusion given the aims of this study.

Based on these criteria, the following six short forms were selected for further analysis: the DASS-14 (Wise et al., 2017), DASS-12 (Monteiro et al., 2023), DASS-12 (Yusoff, 2013), DASS-10 (Halford & Frost, 2021), DASS-9 (Teo et al., 2019), and DASS-9 (Yusoff, 2013).

Research Question

The present study seeks to determine which of these English-language DASS short forms demonstrates the strongest psychometric properties when applied to adult male and female survivors of sexual violence. To address this, the study evaluates the structural validity (via CFA) and concurrent validity (via correlations with the IES-R) of six short forms. It then compares their psychometric performance between genders and formally tests the best-performing models for gender-based measurement invariance.

Method

Research Design and Participants

This study employed a secondary analysis of archival data. The data originate from a comprehensive dataset coordinated by a lead community-based trauma agency in a region of Ontario. This agency serves as the lead for a network of 18 government-funded programs supporting male survivors across 12 counties. As part of its routine service delivery and program monitoring, the agency utilized the DASS-21 for intake assessments. The original de-identified dataset contained 2,986 client records collected from January 2010 to May 2022.

A rigorous, multi-stage screening process was applied to refine this administrative dataset into a final analytic sample. First, records of individuals under the age of 18 were excluded. Next, cases with more than 10% missing responses on the DASS-21 were removed. Finally, the remaining records were screened again to exclude those with more than 10% missing data on the IES-R. This sequential exclusion procedure yielded a final sample of 893 participants. Ethical approval for this secondary analysis of de-identified data was granted by the author's institutional Research Ethics Board.
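The sequential exclusion logic described above can be sketched in a few lines of Python. This is an illustration only, not the procedure actually run on the agency dataset: the record layout (`age`, `dass21`, `iesr`), the use of `None` for a missing response, and the toy records are all assumptions introduced here.

```python
from typing import Optional, Sequence

MAX_MISSING = 0.10  # records with MORE than 10% missing items are excluded


def missing_fraction(responses: Sequence[Optional[int]]) -> float:
    """Share of items left unanswered (None marks a missing response)."""
    return sum(r is None for r in responses) / len(responses)


def screen(records: list) -> list:
    """Apply the three exclusion steps in the order given in the text."""
    adults = [r for r in records if r["age"] >= 18]
    dass_ok = [r for r in adults if missing_fraction(r["dass21"]) <= MAX_MISSING]
    return [r for r in dass_ok if missing_fraction(r["iesr"]) <= MAX_MISSING]


# Toy records, fabricated purely for illustration (hypothetical field names).
records = [
    {"id": 1, "age": 17, "dass21": [1] * 21, "iesr": [2] * 22},               # under 18
    {"id": 2, "age": 30, "dass21": [None] * 3 + [1] * 18, "iesr": [2] * 22},  # 3/21 DASS items missing
    {"id": 3, "age": 45, "dass21": [1] * 21, "iesr": [None] * 3 + [2] * 19},  # 3/22 IES-R items missing
    {"id": 4, "age": 52, "dass21": [None] * 2 + [1] * 19, "iesr": [2] * 22},  # 2/21 missing, retained
]
kept_ids = [r["id"] for r in screen(records)]
print(kept_ids)
```

Because the steps are applied in sequence, a record is only evaluated on the IES-R criterion if it has already passed the age and DASS-21 criteria.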

The final sample was composed of 451 men and 442 women, with a wide age range from 18 to 88 years (M = 38.57, SD = 13.52). The vast majority of participants (97.6%) reported English as their primary language. The sample reflected diverse educational backgrounds, with 36.1% having completed high school, 30.2% holding a bachelor's degree, and 25.7% possessing a college diploma. A substantial portion of participants (40.9%) were unemployed. Among the 284 individuals who provided income details, just over half (50.4%) reported an annual income below $20,000. Most participants were single (52.6%), and 2.2% identified as Indigenous.

Measures

DASS Short Forms

The DASS-21 is a 21-item self-report measure assessing depression, anxiety, and stress, with seven items per subscale. Items are rated on a 4-point Likert scale from 0 (did not apply to me at all) to 3 (applied to me very much or most of the time). In the present sample, internal consistency for the DASS-21 was good for all three subscales: Depression (α = .89), Anxiety (α = .82), and Stress (α = .83). These values all meet the criterion for good reliability (α > .80) according to the guidelines used in this study (George & Mallery, 2019).

Six abbreviated versions of the DASS-21 were selected for validation: the DASS-14 (Wise et al., 2017), DASS-12a (Monteiro et al., 2023), DASS-12b (Yusoff, 2013), DASS-10 (Halford & Frost, 2021), DASS-9a (Yusoff, 2013), and DASS-9b (Teo et al., 2019). These short forms were chosen based on four criteria: English-language development, alignment with a multi-factor structure, inclusion of at least three items per subscale, and a meaningful reduction in length. The DASS-14 retains a three-factor structure with its items distributed across depression (five items), anxiety (three items), and stress (six items) subscales. Two distinct 12-item versions were included: the DASS-12a, which has a balanced structure of four items per subscale, and the DASS-12b, which also proposes a three-factor, four-items-per-subscale model. The DASS-10 employs a two-factor structure, combining anxiety and stress items into a single six-item subscale alongside a four-item depression subscale. Finally, two nine-item versions were examined: the DASS-9a, which includes three items for each of the three subscales, and the DASS-9b, which proposes a different three-factor, nine-item solution. The specific survey questions that comprise each of these six short forms are detailed in the previous study (Yun, 2025). All short forms were administered using the original 4-point Likert scale.

IES-R

The IES-R (Weiss & Marmar, 1997) is a 22-item self-report measure that assesses symptoms of posttraumatic stress related to a specific traumatic event. It includes three subscales—Intrusion, Avoidance, and Hyperarousal—aligned with DSM-IV criteria for PTSD. Participants rated the degree of distress experienced during the past seven days on a 5-point Likert scale ranging from 0 (not at all) to 4 (extremely). In the present study, the IES-R was used to assess the concurrent validity of the selected DASS short forms. Internal consistency for the IES-R in this sample was excellent, with Cronbach's α = .92 for the total scale and α values of .79, .88, and .85 for the Avoidance, Intrusion, and Hyperarousal subscales, respectively.

Preliminary Analyses

Prior to conducting the primary analyses, the dataset was screened to ensure it met key statistical assumptions for CFA, specifically regarding missing data, normality, and multivariate outliers. Following the exclusion of participants under 18, cases were removed based on a sequential screening for missing data: first for the DASS-21 (>10% missing), and then for the IES-R (>10% missing). For the remaining cases, no missing data imputation was performed for demographic variables. To retain statistical power and reduce bias, missing values on the DASS-21 and IES-R were imputed using the Expectation-Maximization (EM) method. The EM algorithm was selected because it is a maximum-likelihood approach that provides more reliable parameter estimates than older methods like listwise deletion or mean substitution (Little & Rubin, 2002).

Univariate normality was assessed using skewness and kurtosis statistics. All primary variables met conventional thresholds for normality (|skew| < 2.0; |kurtosis| < 7.0), consistent with recommendations by West et al. (1995). Although Kolmogorov-Smirnov tests indicated significant departures from normality (p < .001), this was expected given the clinical nature of the sample and large sample size. Given this violation of the normality assumption, the Bollen-Stine bootstrap procedure (with 2,000 resamples) was planned to provide a more robust evaluation of model fit.
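As a rough illustration of the univariate screening rule, the sketch below computes moment-based skewness and excess kurtosis and compares them with the thresholds cited above. It is not the study's SPSS output: SPSS reports bias-adjusted estimators, so values will differ slightly in small samples, and the toy scores are invented for the example.

```python
def central_moment(x, order):
    """Population central moment of the given order."""
    mean = sum(x) / len(x)
    return sum((v - mean) ** order for v in x) / len(x)


def skewness(x):
    """Moment-based skewness (no small-sample bias adjustment)."""
    return central_moment(x, 3) / central_moment(x, 2) ** 1.5


def excess_kurtosis(x):
    """Moment-based kurtosis minus 3, so a normal distribution scores 0."""
    return central_moment(x, 4) / central_moment(x, 2) ** 2 - 3.0


def within_thresholds(x, max_skew=2.0, max_kurt=7.0):
    """True when |skew| < 2.0 and |kurtosis| < 7.0, the cutoffs used in the text."""
    return abs(skewness(x)) < max_skew and abs(excess_kurtosis(x)) < max_kurt


# Toy subscale scores, symmetric around 2 (illustrative only).
scores = [0, 1, 1, 2, 2, 2, 3, 3, 4]
print(skewness(scores), excess_kurtosis(scores), within_thresholds(scores))
```

These descriptive cutoffs are more lenient than a formal significance test, which is why a variable can pass them while still failing a Kolmogorov-Smirnov test in a large sample.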

Multivariate outliers were identified using Mahalanobis distance with a critical value of χ2(43) = 79.08 (p < .001). A total of 21 cases out of the 893 participants (2.4%) exceeded this threshold. These cases were retained in the analytic sample, as their scores, while high, were clinically plausible for survivors of sexual violence and did not appear to be data entry errors. Removing them could have artificially reduced variance and compromised the external validity of the psychometric evaluation in this clinical group (Enders, 2010).

Additionally, the inter-item correlation matrix for the 21 DASS items was examined. No extremely high correlations (r > .90) were found, suggesting that multicollinearity was not a concern for the subsequent CFAs.

Data Analysis

All analyses were conducted using SPSS 30 and AMOS 30. Descriptive statistics were computed for all study variables. CFA was used to evaluate the factorial validity of each DASS short form. The total sample size of N = 893 was considered more than adequate for these analyses: relative to the 21 DASS items, it provides a subject-to-item ratio of over 40:1, far exceeding the 10:1 ratio often recommended for stable model estimation and sufficient statistical power (Hair et al., 2013; Kline, 2016).

Model fit was assessed using multiple indices: chi-square (χ2), the comparative fit index (CFI), the Tucker-Lewis index (TLI), the Akaike information criterion (AIC), the root mean square error of approximation (RMSEA), and the standardized root mean square residual (SRMR). Because the assumption of multivariate normality was not met, the Bollen-Stine bootstrap procedure (with 2,000 resamples) was also used to provide a more accurate p-value for the chi-square test. Following established guidelines, model fit was deemed acceptable when CFI and TLI were ≥ .90, RMSEA was ≤ .08, and SRMR was ≤ .08 (Hu & Bentler, 1999). AIC was used for relative model comparison, with lower values indicating better fit.
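The decision rule for acceptable fit can be written as a simple conjunction of the four cutoffs. The sketch below is illustrative; the class and function names are introduced here, and the two sets of index values are hypothetical rather than taken from the study's tables.

```python
from dataclasses import dataclass


@dataclass
class FitIndices:
    cfi: float
    tli: float
    rmsea: float
    srmr: float


def acceptable_fit(fit: FitIndices) -> bool:
    """Cutoffs stated in the text: CFI and TLI >= .90, RMSEA and SRMR <= .08."""
    return (
        fit.cfi >= 0.90
        and fit.tli >= 0.90
        and fit.rmsea <= 0.08
        and fit.srmr <= 0.08
    )


# Hypothetical index values, for illustration only.
well_fitting = FitIndices(cfi=0.97, tli=0.96, rmsea=0.06, srmr=0.04)
poorly_fitting = FitIndices(cfi=0.91, tli=0.87, rmsea=0.10, srmr=0.06)

print(acceptable_fit(well_fitting), acceptable_fit(poorly_fitting))
```

Under this rule a model is flagged as soon as any one index misses its cutoff, so a model with an adequate CFI can still fail on TLI or RMSEA.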

Internal consistency was evaluated using Cronbach's alpha. Coefficients were interpreted according to the guidelines proposed by George and Mallery (2019): α > .90 = Excellent, α > .80 = Good, α > .70 = Acceptable, α > .60 = Questionable, and α < .60 = Poor. Concurrent validity was assessed through Pearson correlations between each DASS short form and the total score of the IES-R (Weiss & Marmar, 1997). Group differences were examined by correlating gender with DASS subscale scores. Lastly, measurement invariance across gender was tested using multigroup CFA in AMOS. Configural, metric, and scalar models were evaluated sequentially. Model comparisons were based primarily on changes in CFI (ΔCFI ≤ .01), in line with Cheung and Rensvold (2002).
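For readers less familiar with the coefficient, the following sketch computes Cronbach's alpha from a respondents-by-items matrix and attaches the interpretive label used in this study. It is a minimal illustration with made-up responses, not the SPSS routine used for the reported values; the function names are introduced here, and treating a coefficient of exactly .60 as Poor is an assumption, since the guideline as quoted leaves that boundary unspecified.

```python
from statistics import variance


def cronbach_alpha(rows):
    """Alpha = k/(k-1) * (1 - sum of item variances / variance of total scores).

    `rows` holds one list of item responses per respondent.
    """
    k = len(rows[0])
    item_variances = [variance([row[j] for row in rows]) for j in range(k)]
    total_variance = variance([sum(row) for row in rows])
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)


def interpret_alpha(alpha):
    """Bands as quoted from George and Mallery (2019) in the text."""
    if alpha > 0.90:
        return "Excellent"
    if alpha > 0.80:
        return "Good"
    if alpha > 0.70:
        return "Acceptable"
    if alpha > 0.60:
        return "Questionable"
    return "Poor"  # assumption: exactly .60 is grouped with values below .60


# Toy three-item subscale answered by five respondents (0-3 response scale).
responses = [[0, 0, 1], [1, 1, 1], [2, 2, 3], [3, 3, 2], [1, 2, 2]]
alpha = cronbach_alpha(responses)
print(round(alpha, 3), interpret_alpha(alpha))
```

Because alpha depends on the number of items as well as their intercorrelations, three- and four-item subscales tend to yield lower coefficients than the seven-item DASS-21 subscales even when the items themselves are equally good.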

Results

This section reports the psychometric properties of the six DASS short forms. Given the data's non-normal distribution, robust estimates for model fit (Bollen-Stine p-values) and parameter estimates (95% confidence intervals) were derived from bootstrapping with 2,000 resamples. The evaluation was conducted in two stages. First, to ensure an unbiased comparison for selecting the top-performing scales, CFA was conducted on the full sample (N = 893) using the original, unmodified models for all six short forms. Based on this initial analysis, the top three forms were selected. Second, for the subsequent gender subgroup and measurement invariance analyses, these top three models were refined with theoretically justified modifications (e.g., correlating error terms) to establish the best possible model fit before they were compared across groups.

Model Fit

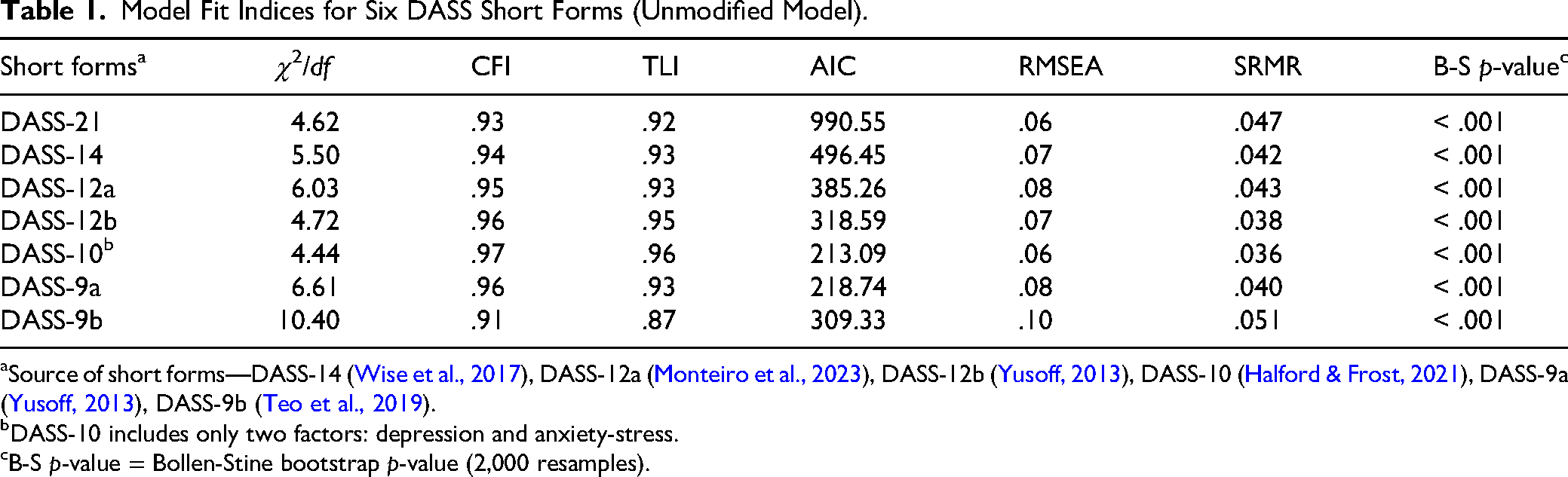

CFA was conducted to evaluate the factorial validity of the six DASS short forms. As shown in Table 1, the chi-square test, corrected for non-normality with the Bollen-Stine bootstrap, was significant for all models.

Model Fit Indices for Six DASS Short Forms (Unmodified Model).

Source of short forms—DASS-14 (Wise et al., 2017), DASS-12a (Monteiro et al., 2023), DASS-12b (Yusoff, 2013), DASS-10 (Halford & Frost, 2021), DASS-9a (Yusoff, 2013), DASS-9b (Teo et al., 2019).

DASS-10 includes only two factors: depression and anxiety-stress.

B-S p-value = Bollen-Stine bootstrap p-value (2,000 resamples).

This is a common finding in large samples, so the evaluation of model adequacy relied on a composite of relative fit indices, with conventional criteria indicating acceptable fit when CFI and TLI are ≥ .90 and RMSEA is ≤ .08 (Hu & Bentler, 1999). Based on these criteria, the DASS-10 and DASS-12b demonstrated excellent relative fit. The DASS-10 yielded the strongest profile (CFI = .97, TLI = .96, RMSEA = .06), followed closely by the DASS-12b (CFI = .96, TLI = .95). The DASS-14, DASS-12a, and DASS-9a also showed acceptable fit. In contrast, the DASS-9b failed to meet the criteria for acceptable fit, with a TLI of .87 and an RMSEA of .10.

Reliability and Validity

Internal Consistency

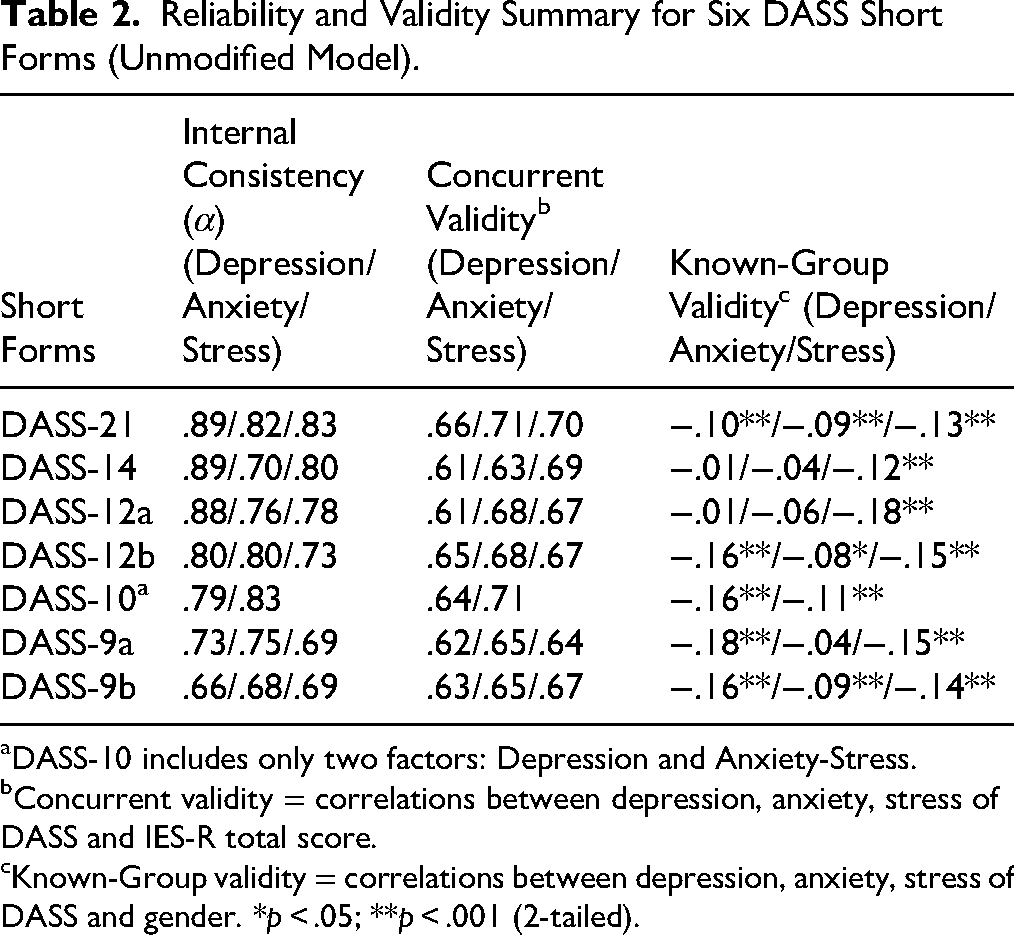

As detailed in Table 2, internal consistency reliability was evaluated for each short form using Cronbach's alpha.

Reliability and Validity Summary for Six DASS Short Forms (Unmodified Model).

DASS-10 includes only two factors: Depression and Anxiety-Stress.

Concurrent validity = correlations between depression, anxiety, stress of DASS and IES-R total score.

Known-Group validity = correlations between depression, anxiety, stress of DASS and gender. *p < .05; **p < .001 (2-tailed).

The DASS-21, DASS-14, and DASS-12a demonstrated good to excellent internal consistency, with most alpha coefficients well above .80. The DASS-12b and DASS-10 also exhibited acceptable to good reliability across their respective subscales. In contrast, the nine-item versions demonstrated the weakest psychometric properties; the DASS-9a had one subscale approaching the limit of acceptability (α = .69), while the DASS-9b was the least reliable form, with all three of its subscales falling below the .70 threshold.

Concurrent Validity

Concurrent validity was assessed by examining the Pearson correlations between each DASS subscale and the IES-R total score (see Table 2). All short forms demonstrated a strong positive relationship with trauma-related symptoms. According to Cohen's (1988) conventions, the effect sizes for these relationships were large, with Pearson's r coefficients ranging from .61 to .71 across all short forms.

Known-Groups Validity

Known-groups validity was evaluated by examining correlations with gender (coded as Female = 0, Male = 1), with results also presented in Table 2. Most subscales showed small but significant negative correlations, indicating that female participants tended to report slightly higher levels of distress. A consistent pattern of statistically significant gender differences across all subscales was found for the DASS-12b, DASS-10, and DASS-9b, while other forms showed a more mixed pattern of results.
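The direction of these coefficients follows directly from the coding scheme. With gender coded Female = 0 and Male = 1, the Pearson correlation between gender and a subscale score is a point-biserial correlation, and it is negative whenever the female group mean is higher. The sketch below demonstrates this with invented scores; the helper function and the data are illustrative assumptions, not study data.

```python
import math


def pearson_r(x, y):
    """Pearson product-moment correlation between two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


# Gender coded as in the text: Female = 0, Male = 1 (toy data, illustration only).
gender = [0, 0, 0, 0, 1, 1, 1, 1]
scores = [12, 10, 14, 11, 9, 8, 11, 10]

female_mean = sum(s for g, s in zip(gender, scores) if g == 0) / gender.count(0)
male_mean = sum(s for g, s in zip(gender, scores) if g == 1) / gender.count(1)
r = pearson_r(gender, scores)

# A higher female mean produces a negative coefficient under this coding.
print(female_mean, male_mean, round(r, 3))
```

Reversing the coding (Male = 0, Female = 1) would flip the sign of every coefficient without changing its magnitude, so the sign should always be read alongside the coding note.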

Selection of Top-Performing Models

Based on a holistic evaluation of the psychometric evidence presented in Tables 1 and 2, three short forms (the DASS-10, DASS-12a, and DASS-12b) were selected for further analysis. The DASS-10 emerged as the most psychometrically robust model overall. It demonstrated the best relative model fit of any scale (CFI = .97, RMSEA = .06), maintained acceptable to good internal consistency (α = .79–.83), and showed the strongest concurrent validity with trauma symptoms (r = .71). The DASS-12b was also a consistently strong performer, with excellent relative model fit (CFI = .96, RMSEA = .07) and balanced reliability across its three subscales (α = .73–.80). Finally, the DASS-12a was retained due to its strong internal consistency, particularly for the Depression subscale (α = .88), and its adequate model fit and validity evidence. Therefore, these three models were carried forward for the subsequent subgroup and measurement invariance analyses.

Psychometric Evaluation by Gender

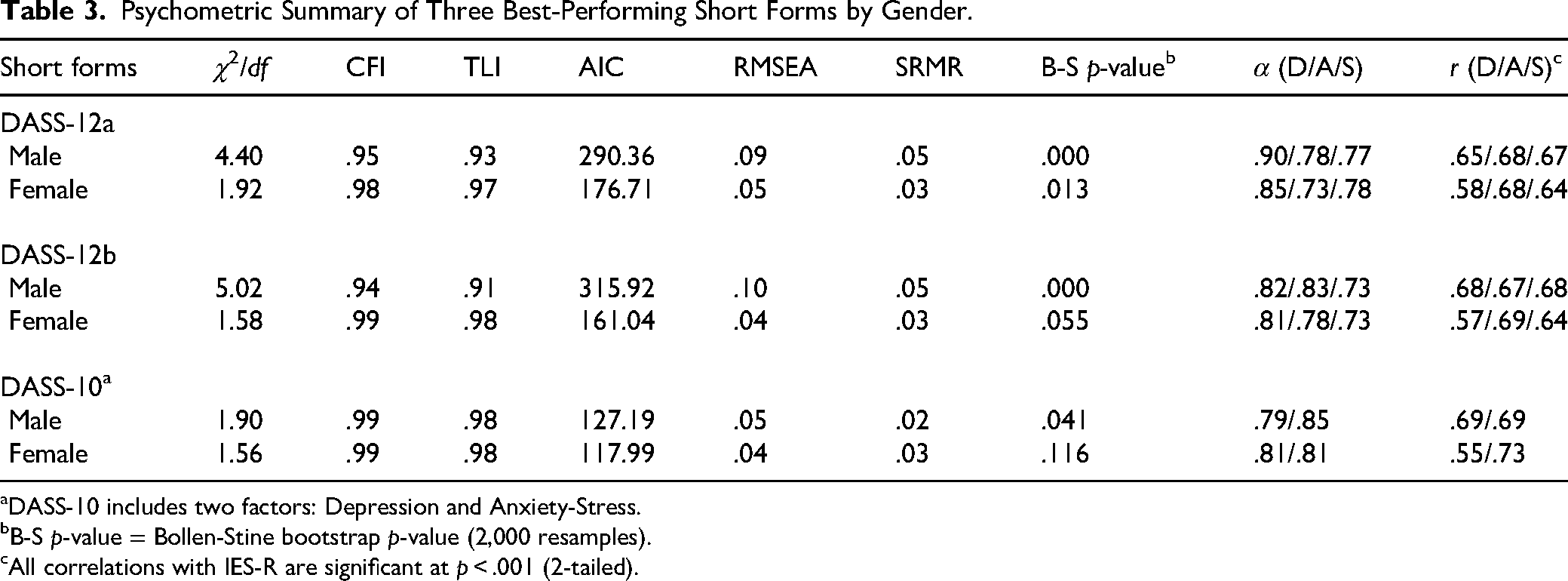

Table 3 summarizes gender-specific psychometric properties for the three best-performing short forms (DASS-10, DASS-12a, and DASS-12b) on model fit, internal consistency, and concurrent validity with the IES-R, based on subgroup analyses conducted prior to formal measurement invariance testing.

Psychometric Summary of Three Best-Performing Short Forms by Gender.

DASS-10 includes two factors: Depression and Anxiety-Stress.

B-S p-value = Bollen-Stine bootstrap p-value (2,000 resamples).

All correlations with IES-R are significant at p < .001 (2-tailed).

Female subsamples generally showed stronger fit (χ2/df = 1.56–1.92; CFI = .97–.99; TLI = .97–.98; RMSEA = .04–.05), with Bollen-Stine bootstrap p-values that were non-significant or marginal. The DASS-10 provided the most consistent fit across genders: for males, model fit was good (p = .041), and for females, it was excellent (p = .116). In contrast, the male 12-item variants showed elevated residual misfit (RMSEA = .09–.10; Bollen-Stine p < .001) despite acceptable incremental fit (CFI = .94–.95; TLI = .91–.93). AIC favored the more parsimonious DASS-10 in both genders.

Internal consistency was adequate to excellent across all forms (α = .73–.90). The highest reliability was observed for males on the DASS-12a Depression subscale (α = .90), while the DASS-10 showed consistently good α for both factors across gender. Concurrent validity with the IES-R was uniformly significant (p < .001), with correlations ranging from r = .55 to .73; the strongest associations were again observed for the DASS-10 (males: r = .69 for both factors; females: r = .73 for Anxiety–Stress).

Collectively, these subgroup findings indicate broadly comparable psychometric performance across gender and provide the empirical basis for the measurement invariance testing reported next.

Measurement Invariance Across Gender

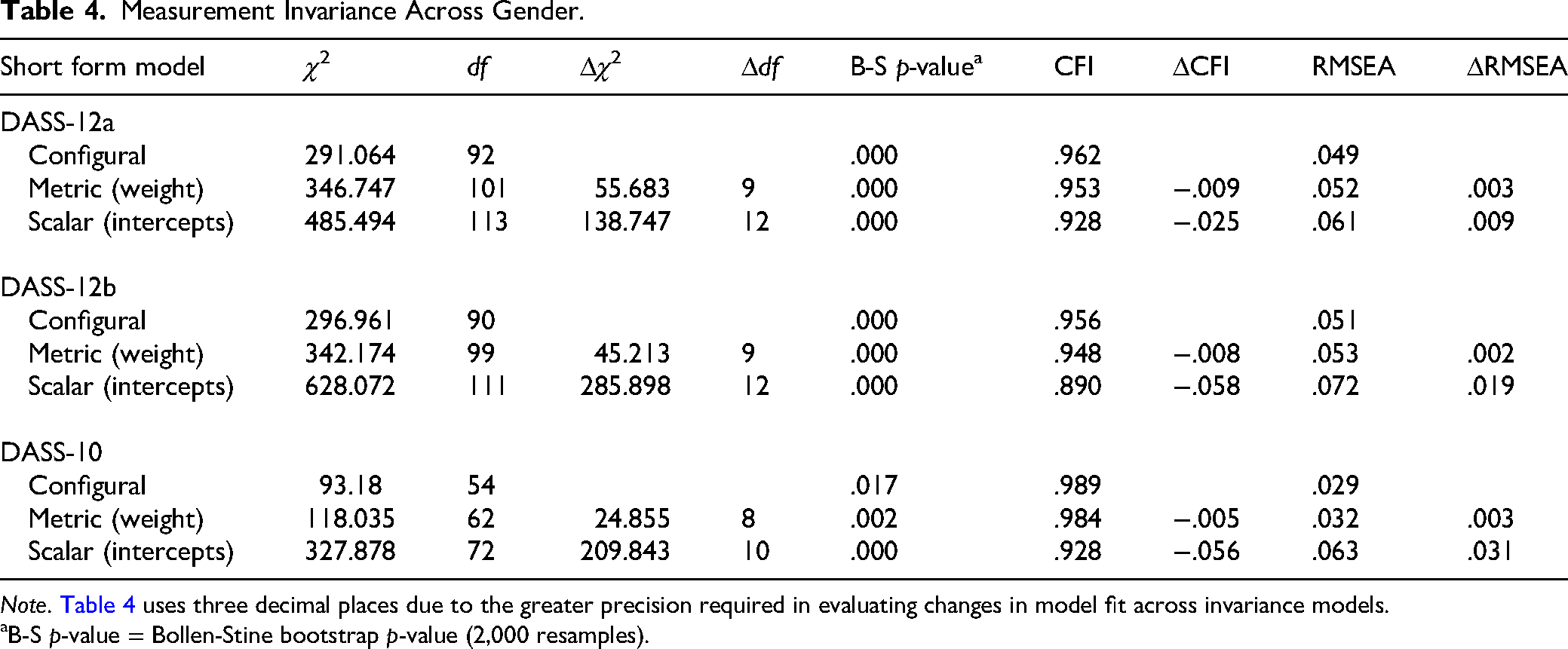

To evaluate whether the short forms operate equivalently across gender, multigroup CFA tested configural, metric, and scalar invariance. Model fit and changes between nested models are summarized in Table 4.

Table 4. Measurement Invariance Across Gender.

Note. Table 4 uses three decimal places due to the greater precision required in evaluating changes in model fit across invariance models.

B-S p-value = Bollen-Stine bootstrap p-value (2,000 resamples).

Configural Invariance

All three short forms showed acceptable-to-excellent configural fit (CFI = .956–.989; RMSEA = .029–.051), indicating that the same latent structure—three factors for DASS-12a/12b and two factors for DASS-10—was recovered in both male and female groups. Establishing configural invariance supports the conclusion that the basic pattern of fixed and free factor loadings is comparable across gender, providing the required baseline for testing equality constraints on parameters.

Metric Invariance (Equality of Factor Loadings)

In the second step, factor loadings were constrained to be equal across gender. This produced only small changes in fit relative to the configural model: DASS-12a (ΔCFI = −.009; ΔRMSEA = +.003), DASS-12b (ΔCFI = −.008; ΔRMSEA = +.002), and DASS-10 (ΔCFI = −.005; ΔRMSEA = +.003). Under conventional criteria (e.g., Chen, 2007; Cheung & Rensvold, 2002), invariance is supported when CFI decreases by no more than .010 and RMSEA increases by no more than .015; all three short forms met both thresholds. Thus, metric invariance was supported for DASS-12a, DASS-12b, and DASS-10, indicating that the items relate to the underlying factors in the same way for men and women. Decisions were based on the change indices (ΔCFI, ΔRMSEA); full fit indices are reported in Table 4.

Scalar Invariance (Equality of Intercepts)

Constraining item intercepts to equality yielded clear decrements in fit for all three short forms. Relative to the metric model, DASS-12a showed ΔCFI = −.025 and ΔRMSEA = +.009; the CFI decrease exceeded the .010 criterion (Chen, 2007; Cheung & Rensvold, 2002) even though the RMSEA change remained within the .015 guideline. Larger declines were observed for DASS-12b (ΔCFI = −.058; ΔRMSEA = +.019) and DASS-10 (ΔCFI = −.056; ΔRMSEA = +.031), both of which breached both cutoffs. The poor absolute fit of the scalar models was further indicated by their significant Bollen-Stine bootstrap p-values (p ≤ .002). Thus, scalar invariance was not supported for any of the three short forms, precluding direct comparisons of latent mean scores across genders.
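The decision rule applied at the metric and scalar steps can be made explicit with a short sketch. It encodes the thresholds stated in the text (a CFI decrease of no more than .010 and an RMSEA increase of no more than .015) and applies them to the change indices reported above. The function and variable names are hypothetical, and requiring both criteria to hold is an interpretation that matches the decisions reported in this section.

```python
# Thresholds as stated in the text (Chen, 2007; Cheung & Rensvold, 2002)
CFI_DROP_MAX = 0.010
RMSEA_RISE_MAX = 0.015


def invariance_supported(delta_cfi, delta_rmsea):
    """Return True if both change criteria are met.

    delta_cfi is the signed change in CFI (negative means worse fit);
    delta_rmsea is the signed change in RMSEA (positive means worse fit).
    """
    return (-delta_cfi) <= CFI_DROP_MAX and delta_rmsea <= RMSEA_RISE_MAX


# (delta CFI, delta RMSEA) values reported in the text for each step
reported_changes = {
    "DASS-12a": {"metric": (-0.009, 0.003), "scalar": (-0.025, 0.009)},
    "DASS-12b": {"metric": (-0.008, 0.002), "scalar": (-0.058, 0.019)},
    "DASS-10": {"metric": (-0.005, 0.003), "scalar": (-0.056, 0.031)},
}

decisions = {
    form: {step: invariance_supported(*deltas) for step, deltas in steps.items()}
    for form, steps in reported_changes.items()
}

for form, result in decisions.items():
    print(form, result)
```

Under this rule, the DASS-12a scalar model fails on the CFI criterion alone, whereas the DASS-12b and DASS-10 scalar models fail on both criteria.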

Discussion and Applications to Practice

This study was designed as a replication and extension of previous work (Yun, 2025) to evaluate the psychometric properties of six DASS short forms in a mixed-gender sample of adult survivors of sexual violence. Overall, three abbreviated forms (the DASS-10, DASS-12a, and DASS-12b) demonstrated adequate psychometric performance. Subgroup analyses revealed that model fit was generally stronger for the female subsample, with the DASS-10 yielding the most consistent fit across both genders. Reliability was adequate to excellent (α = .73–.90), and concurrent validity with the IES-R was uniformly strong (r = .55–.73), consistent with the theoretical link between negative affect and trauma-related distress.

A key finding from the measurement invariance testing was that all three top-performing models achieved configural and metric invariance but failed to achieve scalar invariance. This indicates that while the scales have a similar structure and meaning for men and women, direct comparison of their average scores is not advisable without further adjustment. These conclusions are strengthened by the use of robust statistical methods, including the Bollen-Stine bootstrap to correct for non-normality and the evaluation of invariance based on established change indices (e.g., ΔCFI).

A central finding of this study was the significant empirical overlap between the anxiety and stress constructs, as evidenced by the consistent lack of discriminant validity in the three-factor models. This result aligns with a large body of DASS literature that has frequently reported high inter-factor correlations between these two domains (e.g., Antony et al., 1998; Clara et al., 2001; Henry & Crawford, 2005; Oei et al., 2013; Zanon et al., 2021). This overlap is often explained by Clark and Watson's (1991) tripartite model, which posits that while depression is uniquely characterized by low positive affect, both anxiety and stress share a common core of high negative affect and physiological hyperarousal. This shared variance provides a strong theoretical and empirical rationale for why the two-factor DASS-10, which pragmatically combines the anxiety and stress subscales, demonstrated such a robust and stable model fit in the present study.

The findings provide clear, evidence-based guidance for practitioners and agencies. Confirming the findings from the previous study (Yun, 2025), the accumulated evidence from this larger, mixed-gender sample again supports prioritizing two forms in practice: the DASS-10 and the DASS-12a. The choice between them should be driven by the clinical task. For rapid screening, intake, or routine outcome monitoring, the DASS-10 is the recommended tool due to its brevity, robust two-factor structure, and stable model fit across genders. For more detailed clinical assessment or treatment planning where distinguishing between the nuances of depression, anxiety, and stress is important, the DASS-12a is the superior three-factor option, offering particularly strong reliability for the Depression subscale among men.

Beyond selecting the right tool, the lack of scalar invariance has direct implications for gender-responsive interpretation. Because item intercepts were not equivalent across gender, practitioners should avoid directly comparing the average scores of men and women. Instead of applying a single universal cutoff score, they should rely on gender-specific reference ranges or locally normed data to make fairer and more accurate clinical decisions. The scales are best used to track an individual client's change over time, an application that does not depend on cross-gender comparability. Adhering to these principles aligns with a trauma-informed approach to care by ensuring that brief assessments are interpreted in a fair, client-centered, and contextually sensitive manner.

Several limitations should be considered. The findings derive from a single, cross-sectional sample, which precludes assessment of test-retest reliability or sensitivity to change. Furthermore, while this study successfully extended previous work by including a mixed-gender sample, the sample was limited to one region in Canada and was not sufficiently diverse to test for invariance across other important dimensions like race, ethnicity, or Indigeneity. Moreover, the study's focus on a binary comparison of men and women, while a necessary first step, overlooks the experiences of nonbinary, transgender, and other gender-diverse survivors, who are often disproportionately affected by trauma. The significant sample attrition resulting from the use of administrative data may also have introduced selection bias. Finally, the lack of scalar invariance remains a key limitation for cross-gender mean comparisons.

Future research should address these gaps. Replication in independent clinical samples is needed to consolidate these findings. Longitudinal studies are required to examine invariance over time and responsiveness to change. Establishing partial scalar invariance would be a critical next step to allow for cautious cross-gender comparisons. Broader invariance testing across cultural groups—including dedicated validation with Indigenous and gender-diverse populations—would strengthen generalizability and support equity-oriented implementation. Such efforts will ensure that these abbreviated DASS forms evolve into scientifically rigorous and practically responsive tools that can advance social work research and strengthen trauma-informed practice.

In conclusion, this study addresses a persistent challenge in clinical assessment: the trade-off between pragmatic brevity and psychometric rigor. By replicating previous work and extending it to a mixed-gender sample, the findings confirm that the DASS-10 and DASS-12a are the most robust of the short forms tested. However, the consistent failure to achieve scalar invariance across all three retained models is a critical caution. Ultimately, this research underscores a key message for trauma-informed practice: while brief measures are essential tools, their responsible implementation demands a clear understanding of their limitations, particularly regarding gender equity. The goal is not merely to measure distress, but to do so in a way that is valid, fair, and just for all survivors.

Footnotes

Ethical Approval

This study was approved by the University of Windsor Research Ethics Board (#12-080).

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was supported by the Canadian Institutes of Health Research (CIHR; #392373) and the Social Sciences and Humanities Research Council (SSHRC; #892-2023-3003 and #890-2024-0022).

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability

Data are not publicly available due to REB-approved ethical restrictions. This study involved secondary analysis of de-identified data previously collected by partnering agencies.