Abstract

Purpose:

“Social Workers in Schools” (SWIS) is a school-based intervention aiming to reduce the need for children to receive child protection services in England. This article reports the findings of a randomized controlled trial (RCT) designed to evaluate SWIS.

School-based social work is a well-established subdiscipline in many countries, but it is not common in England. The practice itself varies greatly, with different intentions and goals that are often more associated with educational than social work outcomes. Moreover, there is relatively limited causal evidence about the impact of school-based social work on any type of outcome (Rafter, 2022), and learning around impact is often not shared beyond individual schools (Bye et al., 2009). In this article, we present the main findings from a cluster randomized controlled trial (RCT) which examined the impact of a school-based social work program on the need for state interventions for abuse and neglect, and on students’ educational attendance and attainment. The study took place within 21 local authorities, which are the lowest tier of elected government in the United Kingdom (UK) and are tasked with providing children's services. It involved 278,858 students, across 268 schools in England.

The program was called “Social Workers in Schools” (SWIS) and involved social workers being based within schools and performing social work activities, including statutory child protection work, with children enrolled in that school. This differed from usual practice, in that social workers typically operate from a central office and are not linked with specific schools. Instead, they visit children at various schools occasionally, on an individual basis, to do casework. After three pilot studies conducted between 2018 and 2020 suggested SWIS was promising (Westlake et al., 2020; Westlake, Melendez-Torres, et al., 2024), the Department for Education commissioned a larger-scale program and this accompanying RCT (Williamson, 2020). The program and evaluation were funded through What Works for Children's Social Care, which is now called Foundations, the What Works Centre for Children and Families, and has a remit to improve the amount and quality of evaluation in the sector.

The Case for SWIS in the Context of UK Children's Social Care

In the decade prior to this study, sustained increases in the numbers and rates of children receiving statutory services in the UK presented challenges for the sector. Rates of child protection inquiries in England steadily increased from 111.3 per 10,000 children in 2012/13 to 168.3 per 10,000 children in 2018/19, reaching their highest recorded level in 2022 (Department for Education, 2022a). Likewise, the number of children in care in England has also increased, from 68,110 in 2012/13 to 82,170 in 2021/22 (Department for Education, 2022b). This creates moral, practical, and financial dilemmas and has contributed to a growing consensus that the system is in a state of “crisis” and in need of reform (Holt & Kelly, 2020; Hood et al., 2020; Lepper, 2022; Munby, 2016; Thomas, 2018). A recent review, commissioned by the UK Government, concluded a “dramatic whole system reset” was required (MacAlister, 2022). For any such shift to happen there is a need for interventions that safely and effectively reduce the need for children to receive statutory services, and for these outcomes to be tested and verified by reliable causal evidence about impact.

SWIS was identified as a contender for such an intervention in 2020, following the aforementioned pilots. It was attractive for policymakers for several reasons and embodied the kind of multi-agency working that the government has promoted for many years (Department for Children, Schools and Families, 2010; Department for Education, 1999; HM Government, 2018). More specifically, the pivotal role of schools in protecting children from abuse and neglect is evident when sources of referrals to children's social care (CSC) are ranked by volume (Morse, 2019), and they typically emerge as the second most frequently referring agency (behind the police). There was also the logistical advantage of school-based interventions being relatively easy to scale, given the large number of schools in England and the high proportion of children attending these institutions. Finally, the prevalence of school social work in other countries suggested the potential for developing something similar in the UK.

Previous Research on School-Based Social Work

There was a relative absence of school social work in the UK prior to this program, and most previous research on school-based social work comes from the USA. We discussed the evidence base when considering the SWIS pilot evaluations, and concluded the empirical foundations of school social work remain relatively limited (Westlake, Melendez-Torres, et al., 2024). The pilot studies added to the evidence base in two ways. First, they provided indicative evidence of impact, through a quasi-experimental analysis that compared the schools that took part in the study with a matched group of comparator schools, who continued providing standard practice without an on-site social worker. This study suggested that there were potentially positive effects on reducing the need for Child in Need and child protection interventions. These relate to children who are usually living with their birth parents, but where there are concerns about their welfare due to abuse or neglect, or they are disabled. Where concerns are more serious, child protection inquiries take place—known as “section 47s” as they fall under Section 47 of the Children Act 1989—and often a child protection plan is instigated thereafter. In two of the geographical areas where pilots took place, significantly fewer child protection inquiries took place in SWIS schools, and in one case SWIS was associated with a drop of 35% in the incidence of such interventions. Nonetheless, the pilots were not RCTs, the results were mixed, and issues with low sample sizes and incidence rates led us to conclude that the findings “should be replicated at a larger scale before we can draw firm conclusions” (Westlake, Melendez-Torres, et al., 2024).

The second contribution of the pilot evaluations was the program theory and logic model, which delineated the mechanisms through which SWIS was thought to operate to have these effects. This suggested there were three key pathways where causal effects might occur, and two of these were more relevant to secondary schools. The first was an enhanced school response to safeguarding issues, where more channels of communication between school and social work staff enable the social worker to give advice and support to the school, challenge the status quo and ways of working within the school, and provide support to students at an earlier stage. The second was a greater opportunity for direct work with children and young people in schools, through the social worker being on site and available for them to engage with. This was thought to improve relationships between social workers and students, which was expected to be linked with other benefits (such as increased disclosure and reduced stigma).

Aside from the SWIS pilot studies, which were designed to lay some empirical foundations for the current RCT, much of the other evidence that is available relates to educational as opposed to safeguarding or other social care outcomes (Franklin et al., 2009; Isaksson & Sjöström, 2017; Rafter, 2022). In many examples—both in the USA and elsewhere—the objectives of school social workers are more aligned with educational goals (Lee, 2012). This is reflected by the fact that some early examples of the role were called “visiting teachers” (Culbert, 1921), and some contemporary school social workers in poorer countries spend some of their time actually teaching students (Tedam, 2022).

The UK context differs significantly. Compared to some other nations, schools in the UK have relatively extensive resources for supporting students’ needs beyond education (Dyson & Jones, 2014). It is common for schools to have nurses, counselors, and welfare officers who may perform some of the tasks undertaken by school social workers in other countries. Since around 1999, the concept of “extended schools” has been influential in the UK. This is the notion that schools should extend beyond their core business of teaching and provide other services for students and the community, and it has been viewed as a poverty reduction strategy (Cummings et al., 2007; Diss & Jarvie, 2016). While the first decade of this century saw a rise in extended school provision, as part of New Labour's “Every Child Matters” agenda, specific funding for schools to provide extra services ended in 2011 after the Conservative-led coalition government came to power (Department for Education and Skills, 2004; Diss & Jarvie, 2016). This appears to have frustrated efforts for schools to go beyond a narrower educational remit, although some schools continued to offer additional services.

Nevertheless, it is uncommon for social workers to engage in statutory casework within a school setting, even in countries where school social work is prevalent. Research exploring this dimension of school social work is also limited, though some studies address how serious concerns like abuse and neglect are reported. For instance, a study in New Zealand focused on school social workers’ roles in reporting such concerns. In findings that were largely supported by the SWIS pilots shortly afterward, this revealed significant variability in processes and procedures among schools, along with role confusion and tensions between social workers and school staff (Beddoe & de Haan, 2018).

Other research highlights common themes applicable to the UK context, despite its statutory focus. For example, integration and professional identity are cited as significant challenges, with school social workers often perceived as outsiders struggling to integrate into school environments (Beddoe, 2019; Isaksson & Larsson, 2017). These tensions echo broader research on inter-agency working, which underscores how differences in organizational culture, routines, and goals can impede effective collaboration (Barton & Quinn, 2001).

In the UK, research on school-based social work is limited, though several pilot programs have been conducted. Bagley and Pritchard (1998) evaluated a 3-year program placing social workers in a socioeconomically deprived primary school, showing positive impacts on truancy, bullying, and exclusions. Another study by Wigfall et al. (2008) evaluated a 6-month pilot with social workers in four schools, with generally positive feedback and a consensus for continuation. The “Social Work Remodelling Project” of 2008–2011, involving embedding social workers in schools, highlighted benefits such as increased capacity for early intervention, enhanced accessibility, and flexibility (Baginsky et al., 2011).

More recent research in Wales by Sharley (2020) explored the role of schools in addressing neglect, revealing differences in agency responses, and the factors influencing them. Despite there being several hurdles to overcome, embedding social care staff within education is considered beneficial, aiding social workers’ understanding of the education system and providing increased opportunities for direct work with children and families (Gregson & Fielding, 2012; Parker et al., 2003). Sharley suggests creating a distinct “school social worker” role to improve multi-agency cooperation and enhance training on decision-making, neglect, and children's well-being in school. The three pilot studies (Westlake et al., 2020; Westlake, Melendez-Torres, et al., 2024) explored the feasibility of this idea, and generated a signal of potential impact. They found high levels of acceptability among key stakeholders, and suggested that the intervention may reduce the need for social care interventions. This RCT set out to build upon and extend this evidence base with a particular focus on implementation and impact.

The Current Study

This study included three main components: (1) an impact evaluation to test the effectiveness of SWIS; (2) an implementation and process evaluation (IPE) of the extent to which SWIS was delivered in accordance with the funder's expectations (as set out in a manual), the mechanisms through which SWIS was thought to operate, and the perspectives and experiences of those involved; and (3) an economic evaluation to assess the cost-effectiveness and cost-consequences of SWIS. This article details the results of the impact analysis, including estimates of the effectiveness of SWIS on social care and educational outcomes, and summarizes the findings of the IPE. More details about the IPE and the economic analysis are found elsewhere (Schroeder et al., 2024; Westlake et al., 2023).

Research Questions

The article includes analyses relating to the following research questions, which pertain to school-level effects across two academic years, from September 2020 to July 2022:

1. What was the impact of SWIS in reducing rates of section 47 inquiries compared to usual practice?
2. What was the impact of SWIS on rates of referral to CSC, section 17 (Child in Need) assessments, and children entering care?
3. What was the impact of SWIS on the number of days children spent in care?
4. What was the impact of SWIS on educational attendance and attainment?
5. Was SWIS implemented as intended?

Method

Design

The evaluation took place from September 2020 to July 2022. It was a pragmatic open-label cluster RCT with two arms—a social worker assigned to and present in a school (intervention) versus usual CSC service alone (control). Mainstream secondary schools were the unit of randomization. A cluster design was used because the intervention was delivered at the school level.

An IPE and economic evaluation were conducted alongside the trial. This article reports on the main methods and results, up to and including a longer-term follow-up at 35 months post-randomization for selected outcomes.

The trial was registered retrospectively with the International Standard Randomised Controlled Trial Number (ISRCTN) registry [ref: ISRCTN90922032]. A protocol was published at the outset (Westlake et al., 2020) and updated on two occasions when the trial was extended by the funder (Westlake, Pallmann, Lugg-Widger, Forrester, et al., 2022; Westlake, Pallmann, Lugg-Widger, White, et al., 2022). A summary of the changes made to the original protocol can be found in Version 3 (Westlake, Pallmann, Lugg-Widger, White, et al., 2022).

Intervention

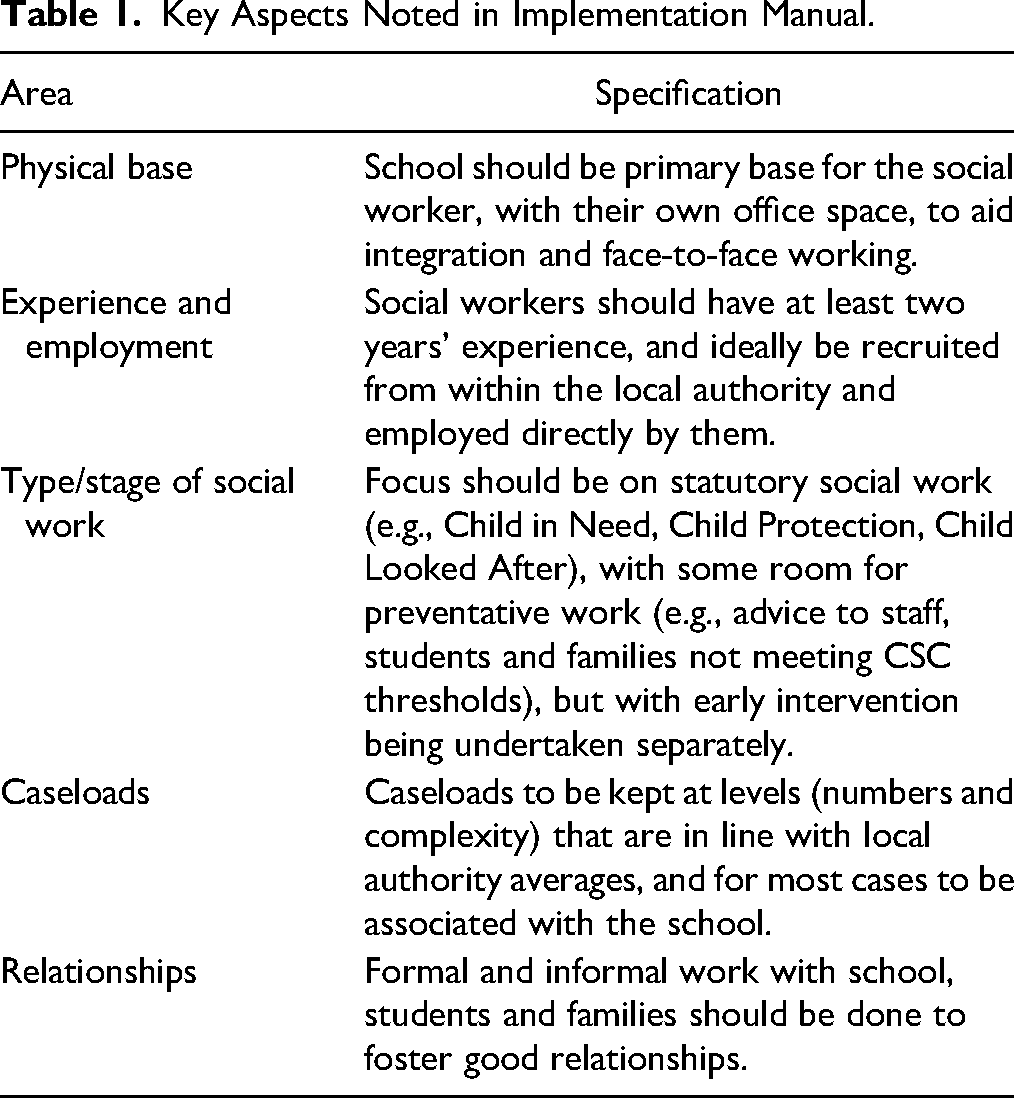

The intervention physically located social workers within schools with the aim to build better working relationships with school staff, students, and families—and for this to contribute to reduced need for statutory interventions through more timely and direct social work input. The social worker was embedded in the school rather than working with students, schools, and families from a local authority office base. The funder published a brief manual that specified key aspects of implementation (see Table 1). However, SWIS was not heavily manualized and the funder emphasized the need for “a certain degree of flexibility… due to the diverse nature of schools and their differing needs” (What Works for Children's Social Care, 2020). Full details of the intervention and initial logic model are published in the protocol and publications relating to the pilot studies (Westlake et al., 2020; Westlake, Pallmann, Lugg-Widger, Forrester, et al., 2022; Westlake, Melendez-Torres, et al., 2024). The control group received CSC services as usual, which involved social workers being based in local authority headquarters and working only with allocated students, and making relatively infrequent visits to schools.

Key Aspects Noted in Implementation Manual.

Participants

Local authority areas in England, the local government agencies responsible for providing children's services, applied to be included in the trial and liaised with schools to ascertain willingness to participate.

Schools were eligible if they were mainstream schools within a participating local authority and were able to submit data for the trial. Mainstream secondary schools are the main school provision for young people aged between 11 and 16 or 18 depending on the type of school provision (UK school years 7 to 11 or 13). All students attending the schools were eligible for the trial. Schools could opt out of participation in data collection related to the IPE while remaining in the trial.

Outcomes

Primary outcome: Rate of child protection (section 47) inquiries over 23 months.

Secondary outcomes:

- Rate of referrals to CSC over 23 months.
- Rate of Child in Need (section 17) assessments over 23 months.
- Rate of children entering care over 23 months.
- Number of days in care over 23 months (followed up until 35 months).
- Educational attendance over 23 months.
- Educational attainment over 23 months.

All CSC data were collected from local authority CSC departments at termly intervals. CSC data was recorded at the individual level on local authority databases and was aggregated to the school level before sharing with the trial team. The aggregated count variable was the outcome measure. Full details can be found in the study protocol (Westlake et al., 2020; Westlake, Pallmann, Lugg-Widger, White, et al., 2022).

Educational outcomes data was obtained from the National Pupil Database, via the Office for National Statistics. Educational attendance was measured as a percentage of unauthorized absences—the percentage of sessions (half days) that children were absent without being authorized, out of the number of sessions possible per term (autumn, spring, summer). Educational attainment was measured at the Key Stage 4 point, where children in England receive their General Certificate of Secondary Education (GCSE) results. This is the most important educational attainment outcome for secondary school pupils, and the variables used were:

- Attainment 8: a measure of a pupil's average grade across eight GCSE subjects.
- English Baccalaureate Average Point Score: based on an agreed set of GCSE subjects (English language and literature, maths, the sciences, geography or history, and a language).
- % English and maths, grade 5 and above: a binary variable, coded 1 if the pupil achieved a grade 5 or higher in both English and maths, and coded 0 otherwise.

Sample Size for Impact Evaluation

At the trial design stage, the funder advised that a minimum of 280 mainstream schools would be available to be randomized. Our sample size calculations were based on comparator school data from the three pilot studies (Westlake et al., 2020), which informed the parameter estimates that follow. Assuming an average of 925 students per school, an average rate of 12.6 section 47 inquiries per 1000 students per school year under usual practice conditions, and a between-cluster coefficient of variation of 0.45 of the primary outcome (section 47 rate) within arms, randomizing 140 mainstream schools to each group provided 80% power to detect a decrease in rates from 12.6 to 10.48 per 1000 pupils per school year (i.e., a rate ratio of 0.832). This was based on a two-sided 5% type I error level when using a Poisson regression model accounting for cluster randomization (Hayes & Bennett, 1999). With the final sample of 268 mainstream schools, the minimum detectable effect at 80% power was a decrease in section 47 rates from 12.6 to 10.43 per 1000 pupils per school year (i.e., a rate ratio of 0.828).
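The calculation above can be approximated with the unmatched cluster-trial formula for rates given by Hayes and Bennett (1999). The following is a minimal sketch, assuming one school year of follow-up per student (so 925 person-years per school); the function and variable names are illustrative, and the trial team's own calculation may have differed in detail.

```python
from math import ceil
from statistics import NormalDist


def clusters_per_arm(rate0, rate1, person_years, cv, alpha=0.05, power=0.80):
    """Clusters per arm for comparing two rates (Hayes & Bennett, 1999).

    rate0, rate1  -- event rates per person-year in control and intervention arms
    person_years  -- person-years of follow-up per cluster (assumed: 925 x 1 year)
    cv            -- between-cluster coefficient of variation within arms
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided type I error
    z_beta = NormalDist().inv_cdf(power)
    variance_term = (rate0 + rate1) / person_years + cv**2 * (rate0**2 + rate1**2)
    return 1 + (z_alpha + z_beta) ** 2 * variance_term / (rate0 - rate1) ** 2


# Design parameters reported in the text, converted to rates per student-year.
raw = clusters_per_arm(rate0=12.6 / 1000, rate1=10.48 / 1000,
                       person_years=925, cv=0.45)
schools_per_arm = ceil(raw)
print(schools_per_arm)
```

Under these assumptions the formula gives a value that rounds up to the 140 schools per arm reported above.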

Randomization

Schools were randomized in blocks of up to 16, with each local authority acting as a block. Mainstream schools were allocated to the SWIS intervention or usual practice in a 1:1 ratio using a covariate balancing method for cluster-randomized trials with multiple blocks (Carter & Hood, 2008). For the first block, the standard imbalance metric (Equation 1 in Carter & Hood, 2008) was used. The allocation of subsequent blocks was conditional on blocks already allocated, using a modified imbalance metric (Equation 2 in Carter & Hood, 2008).

Balancing variables were school size (total number of students enrolled in year 7 and upward) and percentage of students eligible for free school meals as an indicator of the level of deprivation. Both balancing variables were weighted equally and adjusted for in the final statistical analysis by including them as covariates in the regression models. The rationale for selecting these variables is reported in detail elsewhere (Westlake, Pallmann, Lugg-Widger, Forrester, et al., 2022). During randomization, the statistician had sole access to the imbalance metrics for schools already randomized, in order to reduce the risk of allocations for new local authorities being predictable. The trial statistician performing the analysis was not involved in the randomization.

Statistical Methods for Impact Evaluation

All analyses were “intention to treat,” meaning schools were analyzed based on their originally assigned groups regardless of adherence to the intervention. Statistical tests and confidence intervals were two-sided. For all analyses, school-level data was combined and totaled over the whole school irrespective of the month or the year group. All analyses were performed in Stata version 17 (StataCorp LLC, 2021).

Outcomes were standardized per year per 1000 students where appropriate (i.e., for rates) to allow for a fair comparison between arms and across time points. To estimate an adjusted incidence rate ratio of section 47 inquiries over 23 months between SWIS and comparator schools, we fitted a multivariable Poisson regression model with cluster-robust standard errors to reflect the clustering of schools within local authorities (Mansournia et al., 2021). Section 47 inquiries were the outcome variable, allocation was the explanatory variable, and the number of students per school was the exposure scaling variable. The model was adjusted for the following covariates:

- Section 47 inquiries for the 2018/19 academic year (baseline).
- Percentage of students eligible for free school meals.
- Number of students enrolled per school.
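One way to write the primary model described above is shown here. The notation is ours rather than the authors': for school $i$, $Y_i$ is the count of section 47 inquiries over follow-up, $n_i$ the number of students, $\mathrm{SWIS}_i$ the allocation indicator, $B_i$ the baseline (2018/19) section 47 measure, and $\mathrm{FSM}_i$ the percentage of students eligible for free school meals.

$$
\log \mathbb{E}[Y_i] = \log(n_i) + \beta_0 + \beta_1\,\mathrm{SWIS}_i + \beta_2\,B_i + \beta_3\,\mathrm{FSM}_i + \beta_4\,n_i,
\qquad \mathrm{IRR} = \exp(\beta_1)
$$

Here $\log(n_i)$ enters as an offset with coefficient fixed at 1, which is how an exposure variable is typically handled in Poisson regression, and the adjusted incidence rate ratio is the exponentiated allocation coefficient. The cluster-robust standard errors affect the uncertainty around $\beta_1$, not its point estimate.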

Secondary outcomes were also analyzed by fitting multivariable regression models with cluster-robust standard errors depending on the type of outcome: Poisson with the number of students per school as the exposure scaling variable for counts (referrals to CSC, section 17 assessments, children entering care) and linear regression for continuous variables (days in care per child entering care, educational attainment, educational attendance). We included the same fixed-effect covariates in the model as for the primary outcome (allocation, baseline outcome from 2018/19, percentage of students eligible for free school meals, and number of students per school).

Noncompliance was defined as an intervention school not adopting the intervention at all. As part of a “per protocol” sensitivity analysis, we excluded the only non-compliant school and then repeated the analysis to assess the impact of non-compliance. Another sensitivity analysis fitted two-level mixed-effects models with random local authority effects for the primary outcome and estimated the intraclass correlation coefficient (ICC). We also fitted a quasi-Poisson regression model with an overdispersion parameter rather than cluster-robust standard errors for the primary outcome as an additional sensitivity check.

In a pre-specified subgroup analysis, we assessed the hypothesized mechanisms of change outlined in the Westlake et al. (2020) logic model at the 23-month follow-up. We did this by creating a variable for implementation, as detailed in Westlake et al. (2023) based on a re-analysis of pilot data. This categorized schools into “gold,” “silver,” and “bronze” groups. As the categories of implementation quality only apply to the SWIS arm of the trial, we created a new factor variable with four levels (control, gold, silver, bronze) and used it as a covariate in the models for both unweighted and weighted versions of levels of implementation quality instead of using allocation and implementation quality covariates separately.

We used per-term outcome data (for autumn 2020, spring 2021, summer 2021, autumn 2021, spring 2022, and summer 2022) in another pre-specified subgroup analysis and included term as an additional covariate, as well as its interaction with allocation, to explore potential implementation effects and/or seasonality. Implementation effects refer to the possibility that the intervention might have exhibited delayed onset at the beginning of the autumn 2020 term due to the slow recruitment of social workers in some schools. Seasonality refers to fluctuations in the observed outcomes across the six terms between SWIS and comparator schools. Per-term data also enabled us to assess whether COVID-19 had an impact on the outcomes by checking if there was a marked difference in the outcomes during the terms that were affected by periods of “lockdown” restrictions.

For educational attainment and attendance, a subgroup analysis explored the possibility that the effects of the intervention varied by the percentage of students eligible for free school meals. We repeated the regression analysis and additionally included an interaction term between allocation and the percentage of students eligible for free school meals. The p-values generated from the subgroup analyses were adjusted for multiplicity using Hochberg's step-up procedure.
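Hochberg's step-up adjustment mentioned above can be sketched as follows. This is a generic standard-library illustration, not the Stata code used in the trial; the function name and the example p-values are made up for demonstration.

```python
def hochberg_adjust(p_values):
    """Return Hochberg step-up adjusted p-values in the original order.

    With m tests sorted so that p(1) <= ... <= p(m), the adjusted value for
    p(i) is the minimum over j >= i of (m - j + 1) * p(j), capped at 1.
    """
    m = len(p_values)
    # Visit p-values from largest to smallest, carrying a running minimum.
    order = sorted(range(m), key=lambda i: p_values[i], reverse=True)
    adjusted = [0.0] * m
    running_min = 1.0
    for position, idx in enumerate(order):
        multiplier = position + 1  # 1 for the largest p-value, m for the smallest
        running_min = min(running_min, multiplier * p_values[idx])
        adjusted[idx] = running_min
    return adjusted


# Hypothetical p-values from three subgroup tests (illustrative only).
example = hochberg_adjust([0.01, 0.04, 0.03])
print(example)
```

Each adjusted p-value is then compared against the nominal significance level in the usual way.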

Implementation and Process Evaluation

The focus of this article is the impact of SWIS on the outcomes. We also include our analysis of how well SWIS was implemented, and how it was experienced by professionals. Other aspects of the IPE are detailed elsewhere, such as the development of program theory (Westlake et al., 2023) and the experiences of students (Bennett et al., 2024). Data was collected from professionals through surveys (circulated each school term to senior school professionals), interviews (conducted with individuals in “case study” local authorities), and data returns completed by team managers in all local authorities.

Survey links were distributed each term, with weekly reminders to nonresponders via survey software Qualtrics, in addition to manual emails where required. Lead personnel in each local authority and SWIS team managers (who supervised all SWIS workers within a local authority) also supported and encouraged participation. Team managers in each SWIS team were interviewed, using a semi-structured format, about the set-up and delivery of the program, in term two (spring 2021) and at follow-up in term five (spring 2022).

Team managers also submitted data about staffing, including start and end dates and recruitment of social workers (for example whether they were internally recruited from the pool of staff within the local authority, externally recruited from outside the local authority, or through an agency). Returns were finalized at the end of term six (summer 2022) in tandem with the social worker team manager exit interviews.

The dosage of SWIS was calculated as a percentage for each school by dividing the intervention period into 99 discrete weeks (Monday–Sunday), week 1 commencing 7 September 2020 and week 99 commencing 25 July 2022. We assigned SWIS presence or absence for each week in each school. A SWIS was considered present if any date that the social worker was “in school” (i.e., excluding any initial training period, but including those who had to start work remotely) was within the date range of each week 1–99.
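The dosage rule described above can be expressed as a short calculation. This is a sketch under stated assumptions: each social worker's time "in school" is given as a list of inclusive (start, end) date pairs, and the function and variable names are ours rather than the trial team's.

```python
from datetime import date, timedelta

WEEK_ONE_START = date(2020, 9, 7)  # Monday, week 1 (from the text)
N_WEEKS = 99                       # week 99 commences 25 July 2022


def week_ranges():
    """Return (Monday, Sunday) date pairs for weeks 1 to 99."""
    return [
        (WEEK_ONE_START + timedelta(weeks=w),
         WEEK_ONE_START + timedelta(weeks=w, days=6))
        for w in range(N_WEEKS)
    ]


def dosage_percent(in_school_periods):
    """Percentage of the 99 weeks in which a social worker was present.

    in_school_periods -- list of inclusive (start, end) dates in school;
    a week counts as 'present' if any in-school date falls within it.
    """
    present = 0
    for week_start, week_end in week_ranges():
        if any(start <= week_end and end >= week_start
               for start, end in in_school_periods):
            present += 1
    return 100.0 * present / N_WEEKS


# Hypothetical school: a worker in post for the whole intervention period.
full = dosage_percent([(date(2020, 9, 7), date(2022, 7, 31))])
print(full)
```

A school with a gap between two social workers would pass two periods in the list, and only the weeks overlapping either period would count toward the percentage.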

We measured implementation quality using a novel “gold, silver, bronze” rating approach for each school, based on key implementation criteria (see Westlake et al., 2023, for more details). This drew upon data collated from social worker and school staff surveys, SWIS team manager interviews, and SWIS staffing returns. It was grouped into the following domains: physical base/embeddedness, integration, personnel, management and oversight, delivery, and role. We assigned schools a gold, silver, or bronze rating depending on the extent to which each criterion was implemented, based on thresholds we pre-set before analysis. We scored each criterion three, two, or one (for gold, silver, or bronze, respectively) and calculated the mean rating across terms two, three, four, and five, and rounded to the nearest whole number. We then used mean ratings in each domain to calculate a single score for each school, and adjusted scores according to the percentage of time that each school had a SWIS in post.
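The scoring steps above might look something like the sketch below. Several details are assumptions on our part and are not specified in this article: ties at .5 are rounded up, domain ratings are combined by a simple mean, and the adjustment for time in post is a multiplicative weighting by dosage. The domain names in the example are placeholders; see Westlake et al. (2023) for the actual criteria and thresholds.

```python
from statistics import mean

SCORES = {"gold": 3, "silver": 2, "bronze": 1}


def round_half_up(x):
    """Round a positive number to the nearest integer, with .5 rounding up (assumption)."""
    return int(x + 0.5)


def criterion_rating(term_ratings):
    """Mean score across the rated terms (two to five), rounded to a whole number."""
    return round_half_up(mean(SCORES[r] for r in term_ratings))


def school_score(domain_ratings, dosage_percent):
    """Return (unweighted, weighted) scores for one school.

    domain_ratings -- mapping of domain name to its mean rating
    dosage_percent -- percentage of weeks with a social worker in post
    The multiplicative dosage weighting is a hypothetical choice.
    """
    unweighted = mean(domain_ratings.values())
    weighted = unweighted * dosage_percent / 100.0
    return unweighted, weighted


# Hypothetical criterion rated gold, silver, silver, gold in terms two to five.
rating = criterion_rating(["gold", "silver", "silver", "gold"])
# Hypothetical school with two placeholder domains and 80% dosage.
scores = school_score({"integration": 3, "delivery": 2}, dosage_percent=80.0)
print(rating, scores)
```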

Extensions to Trial Duration

The study was initially due to run for one academic year, starting in September 2020 and ending in July 2021. However, it was extended twice by the funder, first in Spring 2021 and then again in Spring 2022 to ameliorate the disruption caused by the COVID-19 pandemic. As a result, it ran for two academic years, from September 2020 to July 2022.

Ethical Considerations

Cardiff University School of Social Sciences Research Ethics Committee granted ethical approval for the trial on 26 August 2020 (ref: SREC/3865).

Results

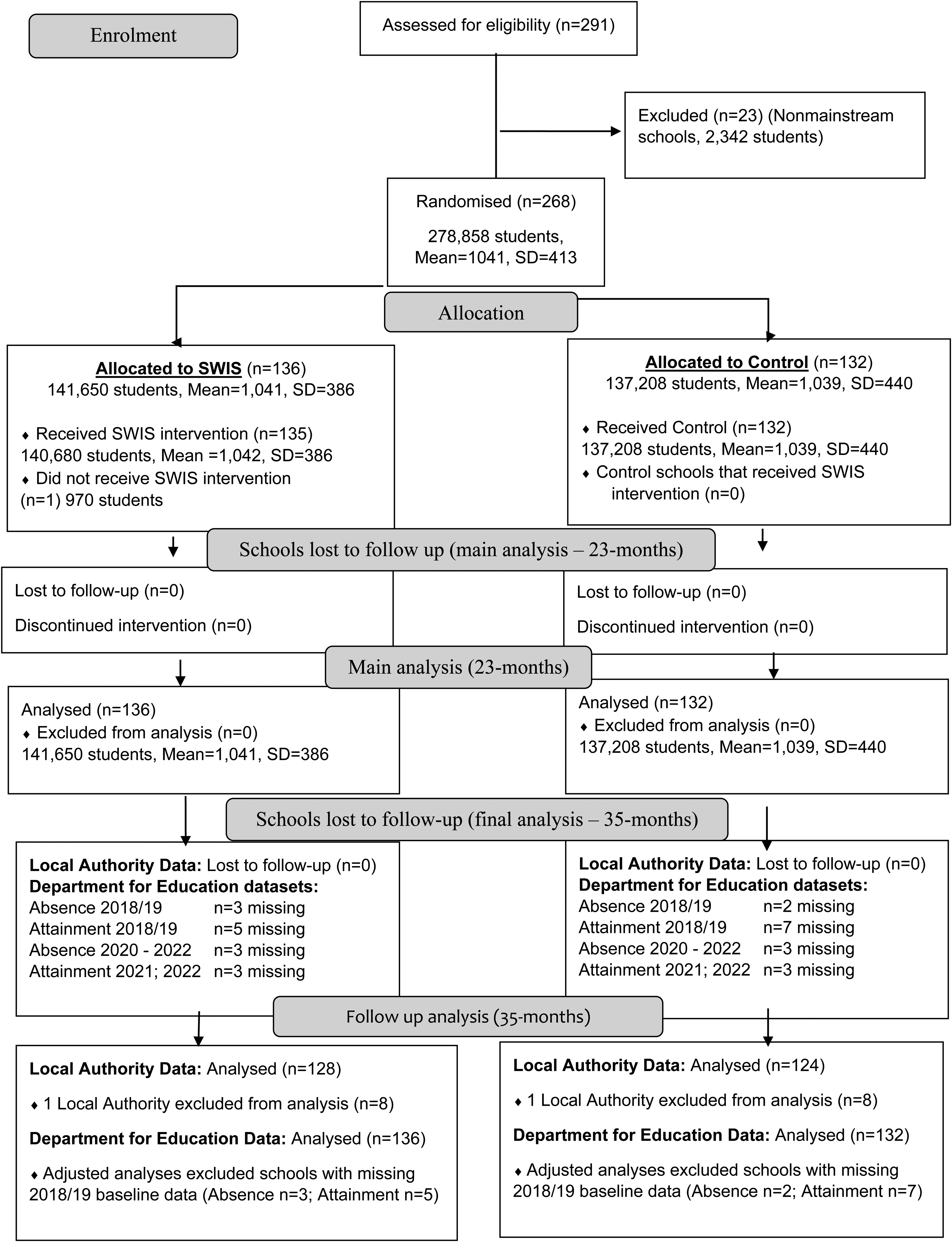

The CONSORT diagram for the trial is shown in Figure 1. A total of 291 schools were assessed for eligibility and 23 schools were excluded due to being non-mainstream. Non-mainstream schools were allocated by simple randomization, rather than the minimization used for the mainstream schools, as a fair way of deciding which would receive the intervention, but they were excluded from the trial. A total of 268 schools were randomized, within which there were 278,858 students (with a mean of 1041 and a standard deviation of 413). At allocation, 136 of these schools were randomized to the SWIS intervention arm, and this included 141,650 students (with a mean of 1041 per school and a standard deviation of 386). A total of 135 of these schools received the SWIS intervention (140,680 students, with a mean of 1042 and a standard deviation of 386). One school with 970 students did not receive the SWIS intervention as the local authority did not succeed in recruiting a social worker for this school.

CONSORT diagram for the SWIS trial (mainstream schools). It shows the details of the schools at different stages of the SWIS trial, from enrollment of schools into the trial, allocation to the SWIS or control arm, follow-up, and analysis at both 23 and 35 months.

The other 132 schools were randomized to the control arm and continued with “business as usual” practice. This included 137,208 students (with a mean of 1039 and a standard deviation of 440). Across both arms of the trial, no schools were lost to follow-up or discontinued the intervention. All 136 schools in the SWIS arm and 132 schools in the control arm were included in the analyses at 23 months. After excluding 1 LA due to unresolvable data discrepancies, 128 schools in the SWIS arm and 124 in the control arm were included in the follow-up analysis of care outcomes at 35 months. All school pupil numbers reported here were collected from publicly available data at baseline.

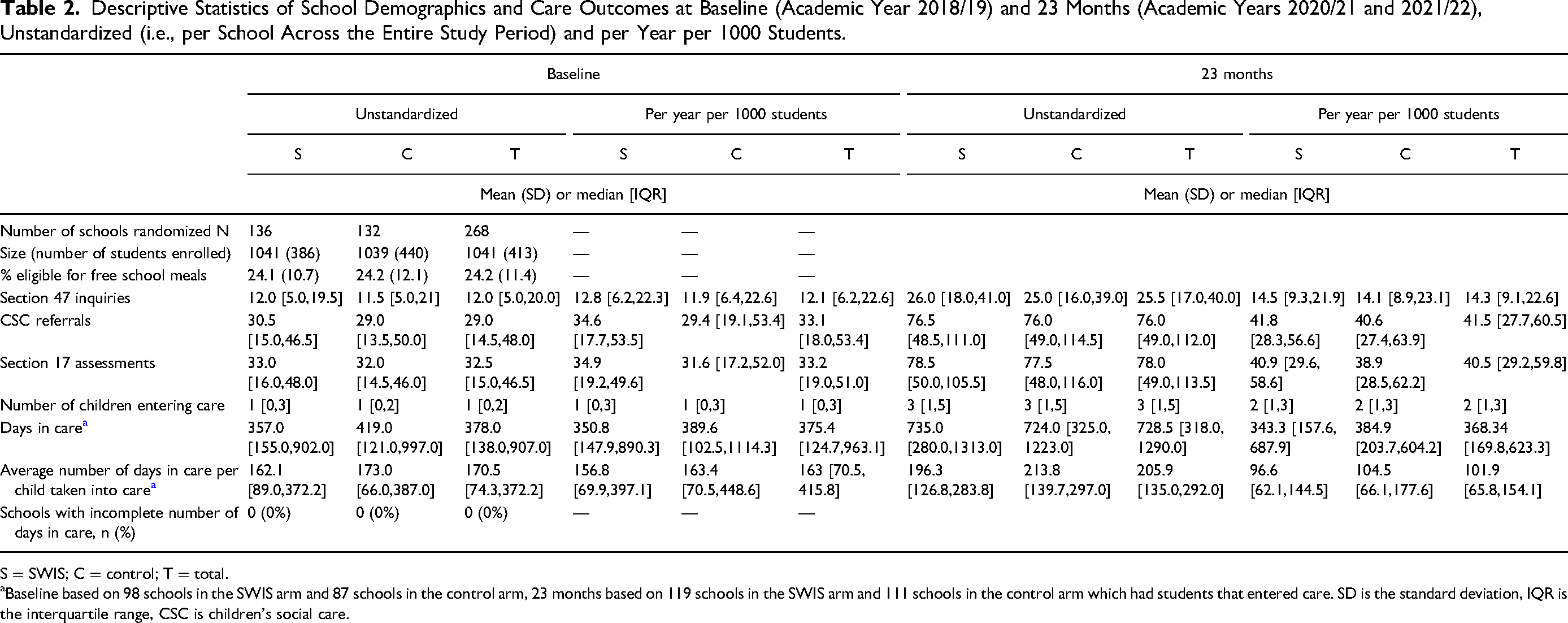

Descriptive Analysis

A good balance was achieved between arms on the two randomization balancing variables (school size and percentage of students eligible for free school meals), as shown in Table 2. School size and percentage of students eligible for free school meals were approximately normally distributed and hence are summarized by mean and standard deviation (SD), while all the outcome measures were positively skewed and therefore summarized by median and interquartile range (IQR). No school had incomplete numbers of days in care, for example due to children moving schools or other scenarios. The outcome variables are standardized and presented per year to allow for comparison with the outcomes collected over 23 months post-baseline (Table 2) and per 1000 students because we would expect schools with more students to have more outcomes. The unstandardized versions are also presented in the tables. Overall, there was an increase in the median outcomes over 23 months from baseline values except for days in care, which dropped slightly.
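The standardization described above amounts to dividing a school's count by its number of students and by the length of the observation period in years, then scaling to 1000 students. A minimal sketch follows; the function name is ours, the example figures are invented, and the length of follow-up in years is left as a parameter because the exact divisor used by the authors is not stated here.

```python
def rate_per_year_per_1000(count, n_students, years):
    """Standardize a school-level count to a rate per year per 1000 students."""
    if n_students <= 0 or years <= 0:
        raise ValueError("n_students and years must be positive")
    return count / n_students / years * 1000.0


# Hypothetical school: 20 events among 1000 students over 2 years.
example_rate = rate_per_year_per_1000(count=20, n_students=1000, years=2)
print(example_rate)
```

Standardizing this way puts the single-year baseline and the multi-year follow-up on the same scale, so that larger schools and longer observation periods do not mechanically produce higher figures.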

Descriptive Statistics of School Demographics and Care Outcomes at Baseline (Academic Year 2018/19) and 23 Months (Academic Years 2020/21 and 2021/22), Unstandardized (i.e., per School Across the Entire Study Period) and per Year per 1000 Students.

S = SWIS; C = control; T = total.

Baseline based on 98 schools in the SWIS arm and 87 schools in the control arm, 23 months based on 119 schools in the SWIS arm and 111 schools in the control arm which had students that entered care. SD is the standard deviation, IQR is the interquartile range, CSC is children's social care.

Main Outcome Analysis

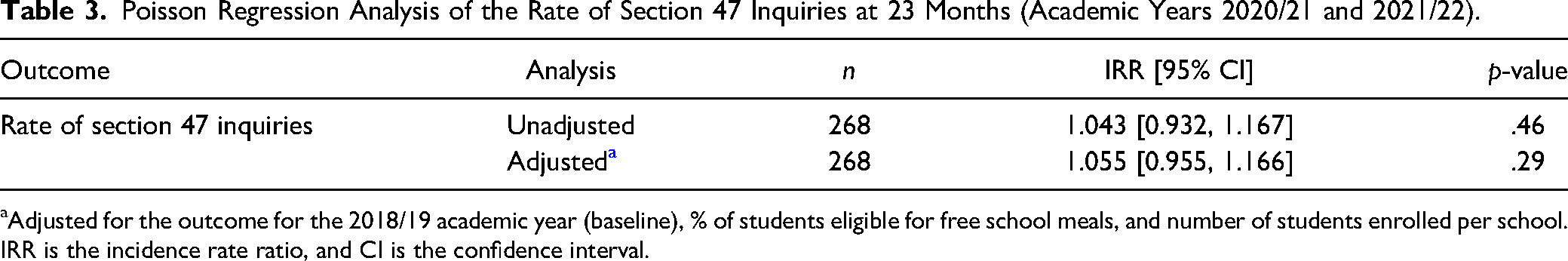

Primary Outcome—Rates of Section 47 Child Protection Inquiries

We found no evidence of benefit from the SWIS intervention on the primary outcome from the multivariable Poisson regression model: after adjusting for the percentage of students eligible for free school meals, baseline rate of section 47 inquiries, and school size, the rate of section 47 inquiries was estimated as 5.5% higher in the SWIS arm than in the control arm but this effect was not statistically significant at 5% level of significance (p = .29). The 95% confidence interval (CI) ranges from a 4.5% decrease to a 16.6% increase (Table 3).
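Percentages such as “5.5% higher” are the usual way of reading an incidence rate ratio (IRR) from a Poisson model: the IRR is the exponentiated coefficient for trial arm, and the percentage change is (IRR - 1) × 100. The sketch below illustrates that conversion in Python. The IRR values are back-calculated from the percentages reported in the text and are assumptions for illustration, not values copied from Table 3.

```python
import math


def irr_from_coefficient(beta):
    """Exponentiate a Poisson regression coefficient to get an IRR."""
    return math.exp(beta)


def percent_change(irr):
    """Express an IRR as a percentage change relative to the control arm."""
    return (irr - 1.0) * 100.0


# Illustrative IRRs implied by the percentages in the text (assumed values).
point = percent_change(1.055)   # point estimate
lower = percent_change(0.955)   # lower 95% confidence limit
upper = percent_change(1.166)   # upper 95% confidence limit
```

Because the interval for the IRR spans 1 (equivalently, the percentage interval spans 0), the estimate is compatible with no difference between arms.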

Poisson Regression Analysis of the Rate of Section 47 Inquiries at 23 Months (Academic Years 2020/21 and 2021/22).

Adjusted for the outcome for the 2018/19 academic year (baseline), % of students eligible for free school meals, and number of students enrolled per school. IRR is the incidence rate ratio, and CI is the confidence interval.

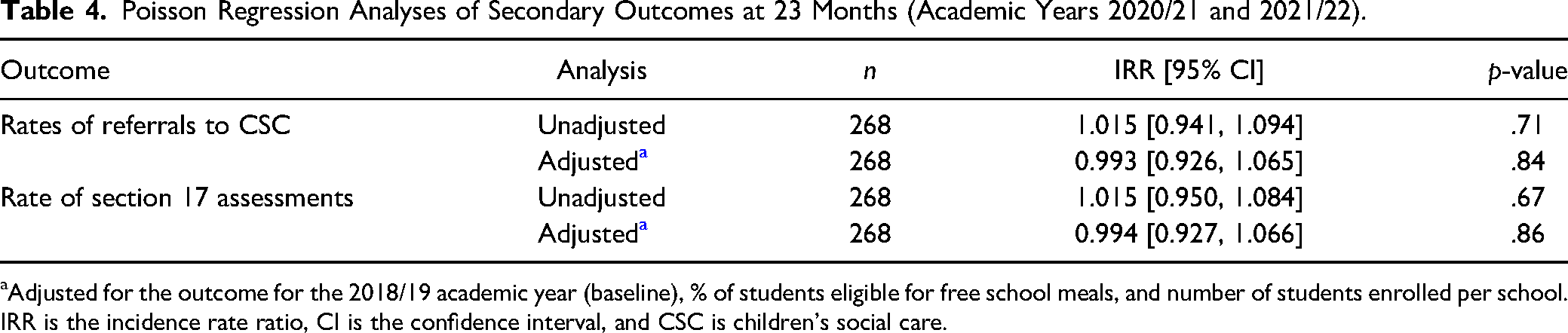

Secondary Outcomes—Social Care

All effects of SWIS on the secondary outcomes were similarly small and statistically nonsignificant at the 5% level of significance. After adjusting for the baseline outcome from 2018/19, percentage of students eligible for free school meals and school size, the rates of CSC referrals, and section 17 assessments at 23 months were estimated as 0.7% lower (95% CI: 7.4% lower to 6.5% higher, p = .84) and 0.6% lower (95% CI: 7.3% lower to 6.6% higher, p = .86) (Table 4), respectively, in the SWIS arm than in the control arm.

Poisson Regression Analyses of Secondary Outcomes at 23 Months (Academic Years 2020/21 and 2021/22).

Adjusted for the outcome for the 2018/19 academic year (baseline), % of students eligible for free school meals, and number of students enrolled per school. IRR is the incidence rate ratio, CI is the confidence interval, and CSC is children's social care.

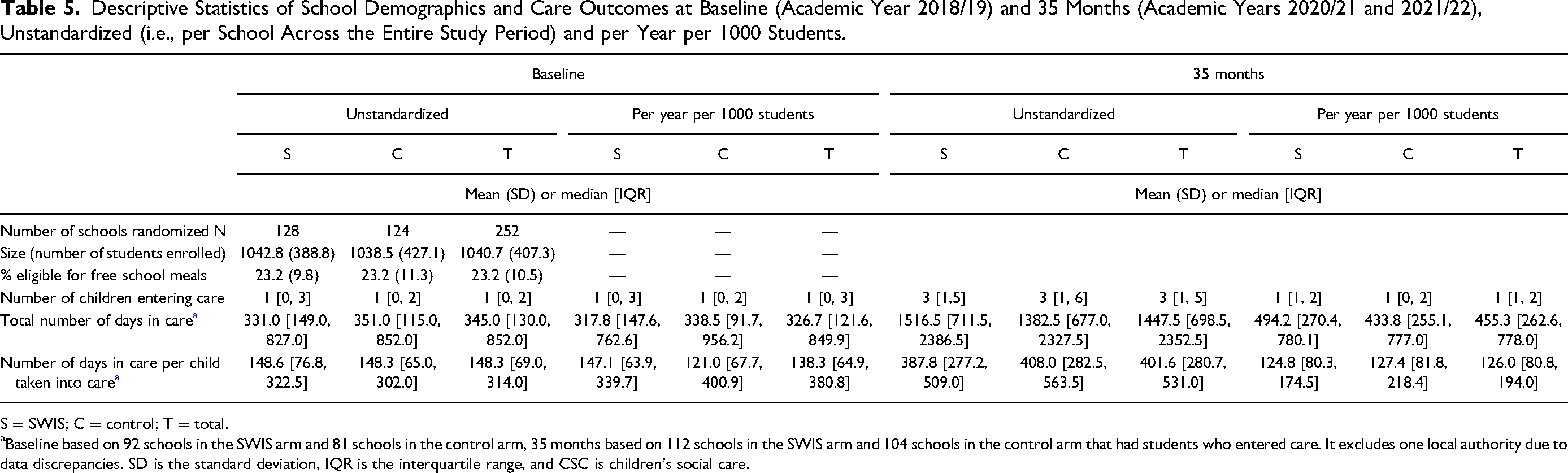

Descriptive Statistics of School Demographics and Care Outcomes at Baseline (Academic Year 2018/19) and 35 Months (Academic Years 2020/21 and 2021/22), Unstandardized (i.e., per School Across the Entire Study Period) and per Year per 1000 Students.

S = SWIS; C = control; T = total.

Baseline based on 92 schools in the SWIS arm and 81 schools in the control arm, 35 months based on 112 schools in the SWIS arm and 104 schools in the control arm that had students who entered care. It excludes one local authority due to data discrepancies. SD is the standard deviation, IQR is the interquartile range, and CSC is children's social care.

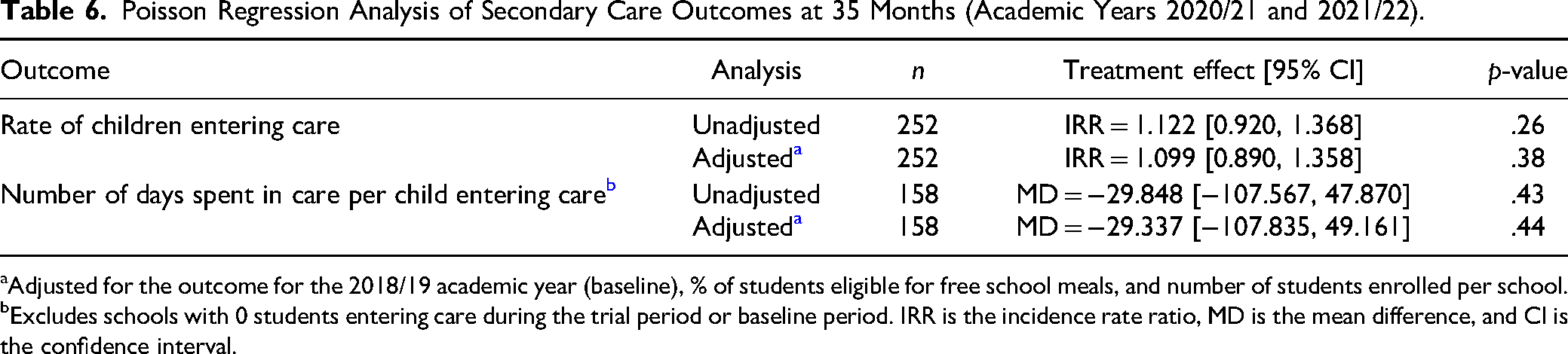

Some secondary outcomes relating to care are reported at the longer follow-up point, 35 months after baseline, because this minimized the censoring of data through children remaining in care beyond the earlier analysis point (Table 5). Further details about results at both stages are available in Westlake et al. (2023) and Westlake, Holland et al. (2024), and the latter report includes a discussion of data consistency issues that became apparent at the second follow-up. These issues arose where data returns submitted at the final follow-up contradicted previous data returns.

After adjusting for the baseline outcome from 2018/19, percentage of students eligible for free school meals, and school size, the rate of children entering care was estimated as 9.9% higher (95% CI: 11.0% lower to 35.8% higher, p = .38) in the SWIS arm than in the control arm. The mean number of days spent in care per child entering care was estimated as 29.34 days lower (95% CI: 107.84 days lower to 49.16 days higher, p = .44) in the SWIS arm than in the control arm; only schools reporting at least one student entering care during the trial period (N = 158) were included in this second analysis (Table 6).

Poisson Regression Analysis of Secondary Care Outcomes at 35 Months (Academic Years 2020/21 and 2021/22).

Adjusted for the outcome for the 2018/19 academic year (baseline), % of students eligible for free school meals, and number of students enrolled per school.

Excludes schools with 0 students entering care during the trial period or baseline period. IRR is the incidence rate ratio, MD is the mean difference, and CI is the confidence interval.

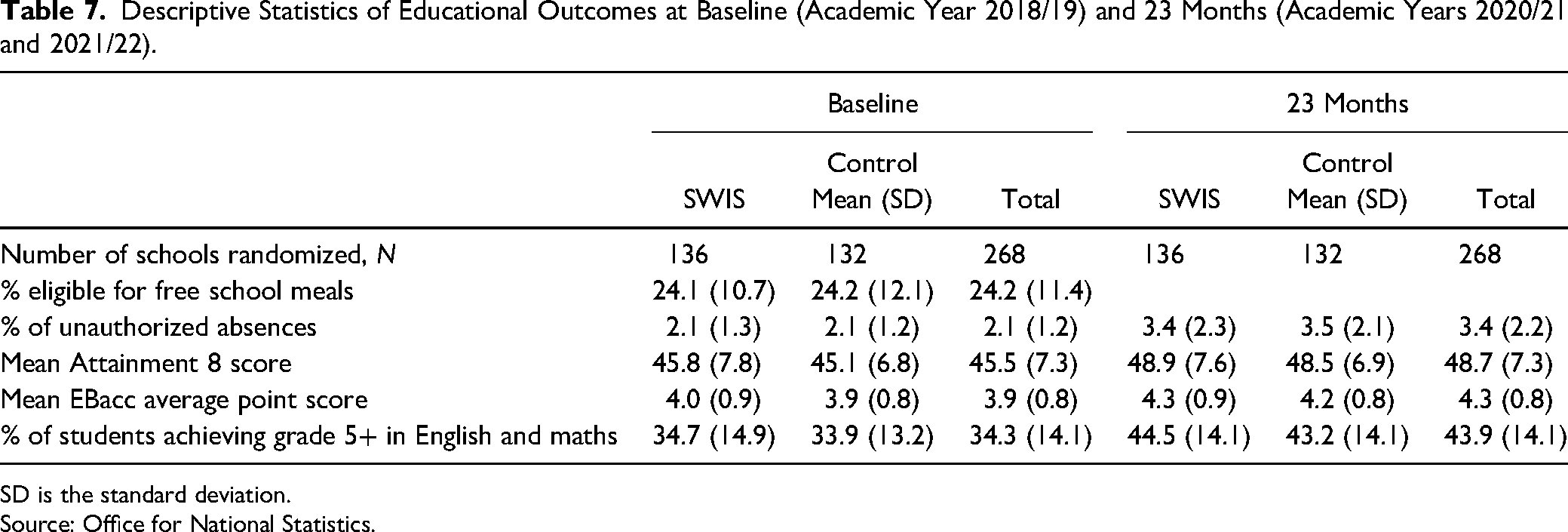

Descriptive Statistics of Educational Outcomes at Baseline (Academic Year 2018/19) and 23 Months (Academic Years 2020/21 and 2021/22).

SD is the standard deviation. Source: Office for National Statistics.

Secondary Outcomes—Education

Educational Attendance

After adjusting for the baseline percentage of unauthorized absences, percentage of students eligible for free school meals, and school size, the percentage of unauthorized absences was estimated to be 0.08 percentage points lower (95% CI: 0.43 lower to 0.27 higher, p = .64) in the SWIS arm compared to the control arm, a difference that was not statistically significant at the 5% level.

Educational Attainment

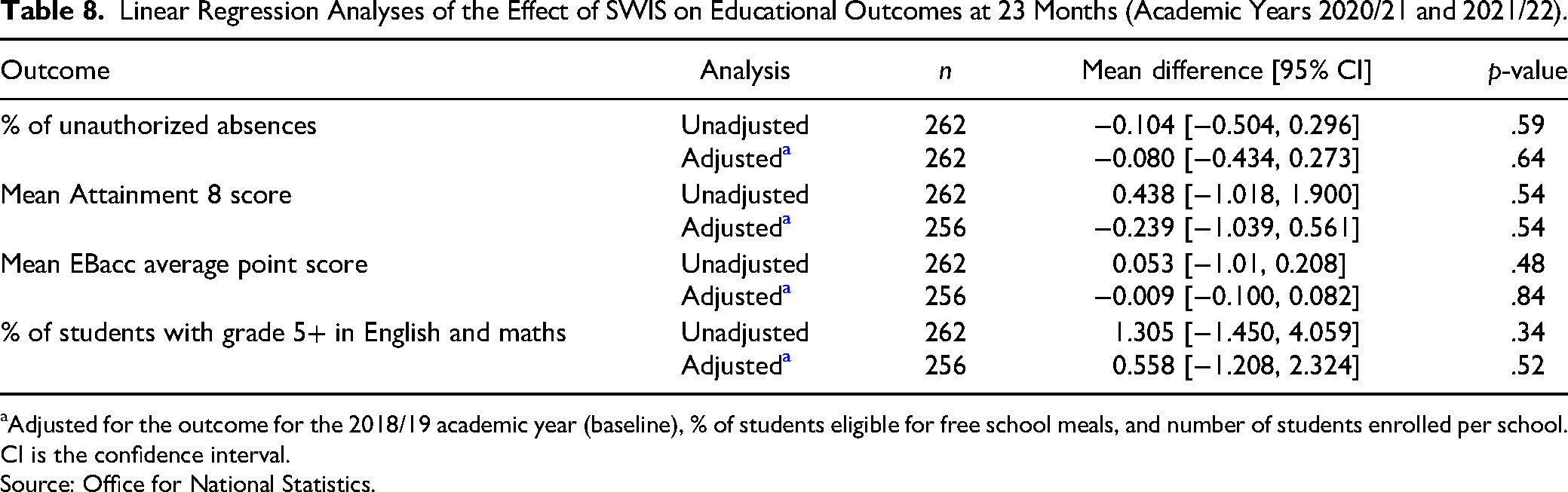

After adjusting for the baseline means for each attainment outcome, percentage of students eligible for free school meals, and school size, we found no statistically significant results on any outcome. The mean Attainment 8 score was estimated to be 0.24 points lower (95% CI: 1.04 lower to 0.56 higher, p = .54), the mean English Baccalaureate average point score was estimated to be 0.01 points lower (95% CI: 0.10 lower to 0.08 higher, p = .84), and the percentage of students who achieved grade 5 and above in English and maths was estimated to be 0.56 percentage points higher (95% CI: 1.21 lower to 2.32 higher, p = .52) in the SWIS arm compared to the control arm (Table 8).

Linear Regression Analyses of the Effect of SWIS on Educational Outcomes at 23 Months (Academic Years 2020/21 and 2021/22).

Adjusted for the outcome for the 2018/19 academic year (baseline), % of students eligible for free school meals, and number of students enrolled per school. CI is the confidence interval.

Source: Office for National Statistics.

Sensitivity Analyses

Sensitivity analysis using multi-level Poisson regression with local authority random effects produced similar results and the same conclusions as the multivariable Poisson models with cluster-robust standard errors above. Additional sensitivity analysis of the primary outcome using quasi-Poisson regression to account for potential overdispersion also arrived at the same conclusion as the standard Poisson regression with cluster-robust standard errors. The sensitivity analysis excluding noncompliant schools likewise left the results unchanged, since only one school in the intervention arm did not have a social worker.
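Overdispersion means the counts vary more than a Poisson model allows. One common diagnostic is the Pearson dispersion statistic, which should be close to 1 under a Poisson model; quasi-Poisson regression keeps the same point estimates and inflates the standard errors by the square root of this statistic. The sketch below is a generic illustration with hypothetical inputs, not the trial's analysis code.

```python
import math


def pearson_dispersion(observed, fitted, n_params):
    """Pearson chi-square divided by residual degrees of freedom.

    `observed` are counts, `fitted` are the model's fitted means, and
    `n_params` is the number of estimated regression coefficients.
    """
    if len(observed) != len(fitted):
        raise ValueError("observed and fitted must have the same length")
    dof = len(observed) - n_params
    if dof <= 0:
        raise ValueError("need more observations than parameters")
    chi_sq = sum((y - mu) ** 2 / mu for y, mu in zip(observed, fitted))
    return chi_sq / dof


def quasi_poisson_se(poisson_se, dispersion):
    """Scale a Poisson standard error by the square root of the dispersion."""
    return poisson_se * math.sqrt(dispersion)


# Hypothetical counts and fitted means for four schools, two parameters.
observed = [2, 9, 4, 15]
fitted = [5.0, 6.0, 7.0, 12.0]
phi = pearson_dispersion(observed, fitted, n_params=2)
```

A value of `phi` well above 1, as in this made-up example, would indicate overdispersion and wider quasi-Poisson confidence intervals.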

Subgroup Analysis by Term

We examined whether intervention effects varied by term across the trial period, with a particular interest in whether periods when more acute COVID-19 pandemic restrictions were in place affected the rates of outcomes being reported. This analysis generated no evidence that the intervention effects varied across the six terms, and the absence of an observed trend across the six terms means there is no evidence of implementation effects or of outcomes being affected by COVID-19. None of the unadjusted p-values for the effect of SWIS on term data is statistically significant at the 5% level of significance. Consequently, after adjustment for multiplicity using the Hochberg step-up procedure to control the familywise error rate across all terms, they remain statistically nonsignificant at the 5% level of significance.
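For readers unfamiliar with the Hochberg step-up procedure, the sketch below computes Hochberg-adjusted p-values in plain Python. Working from the largest p-value down, the p-value with descending rank k (k = 1 for the largest) is multiplied by k, and a running minimum keeps the adjusted values monotone. The input p-values are hypothetical and are not the trial's results.

```python
def hochberg_adjust(pvalues):
    """Return Hochberg step-up adjusted p-values in the original order."""
    m = len(pvalues)
    # Indices sorted from the largest p-value to the smallest.
    order = sorted(range(m), key=lambda i: pvalues[i], reverse=True)
    adjusted = [0.0] * m
    running_min = 1.0
    for rank, idx in enumerate(order):
        # The largest p-value is multiplied by 1, the next by 2, and so on.
        candidate = (rank + 1) * pvalues[idx]
        running_min = min(running_min, candidate)
        adjusted[idx] = running_min
    return adjusted


# Hypothetical family of three tests.
adjusted = hochberg_adjust([0.01, 0.04, 0.03])
```

An adjusted p-value can never be smaller than the unadjusted one, which is why a family with no unadjusted p-value below .05 cannot yield a significant result after adjustment.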

Subgroup Analysis by the Percentage of Students Eligible for Free School Meals

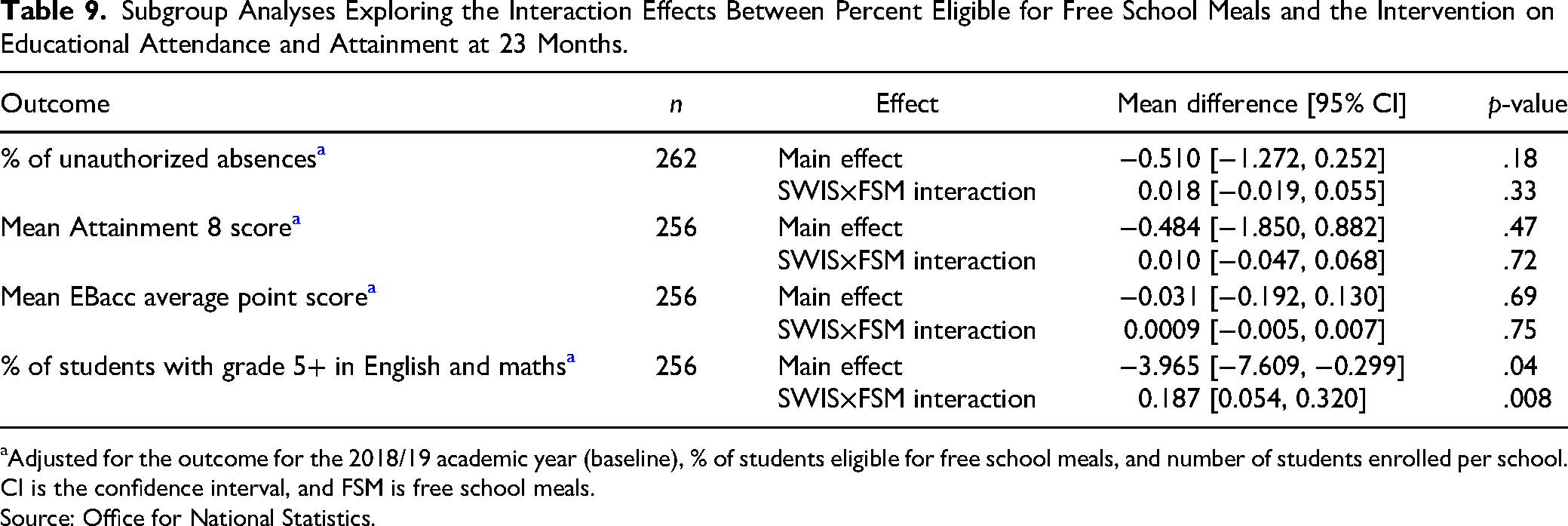

We explored whether the intervention effects on educational attainment and unauthorized absences varied according to the percentage of students eligible for free school meals. We found no evidence of statistically significant interaction effects at the 5% significance level between allocation and percent eligible for free school meals with regard to the percent of unauthorized absences, Attainment 8 score, or EBacc average point score (Table 9).

Subgroup Analyses Exploring the Interaction Effects Between Percent Eligible for Free School Meals and the Intervention on Educational Attendance and Attainment at 23 Months.

Adjusted for the outcome for the 2018/19 academic year (baseline), % of students eligible for free school meals, and number of students enrolled per school. CI is the confidence interval, and FSM is free school meals. Source: Office for National Statistics.

We found evidence of an interaction effect between allocation and the percentage eligible for free school meals with regard to the percentage of students who achieved grade 5+ in English and maths. For each one-percentage-point increase in the percentage of students eligible for free school meals, the percentage of students who achieved grade 5+ in English and maths was estimated to increase by 0.19 percentage points more (95% CI: 0.05 to 0.32, p = .008) in the SWIS arm than in the control arm. However, after adjustment for multiplicity using the Hochberg step-up procedure to control the familywise error rate, the p-values are no longer statistically significant at the 5% level of significance (.04 adjusted to .39, and .008 to .10) (Table 9).
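An interaction effect of this kind can be read from a linear model of roughly the following form. This is a sketch under assumptions: the symbols are introduced here for illustration, and the exact specification used in the trial (for example, how clustering enters the standard errors) is not restated in this section.

```latex
Y_i = \beta_0 + \beta_1\,\mathrm{SWIS}_i + \beta_2\,\mathrm{FSM}_i
      + \beta_3\,(\mathrm{SWIS}_i \times \mathrm{FSM}_i)
      + \beta_4\,Y_i^{\text{baseline}} + \beta_5\,\mathrm{Size}_i + \varepsilon_i
```

Under this assumed specification, $\beta_3$ is the interaction coefficient: the estimated difference between arms changes by $\beta_3$ for each one-point increase in the percentage eligible for free school meals, which corresponds to the reported estimate of 0.19 percentage points.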

Subgroup Analysis by the Levels of Implementation Quality

We explored whether the intervention effects varied by the level of implementation quality. Initially, all domains of implementation were unweighted, and then the analysis was repeated using a version of the measure in which domains were weighted according to their relative importance. Weighting was informed by the IPE.

Unweighted Level of Implementation Quality

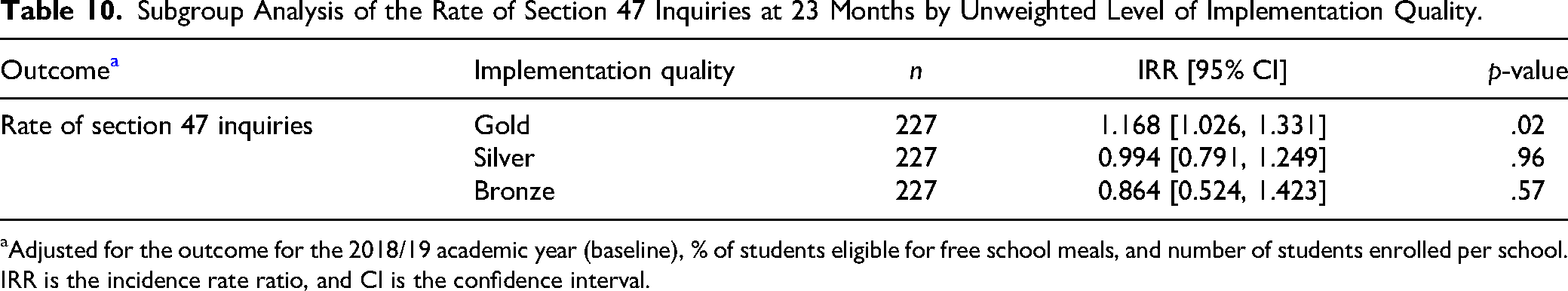

The results from the subgroup analysis of the unweighted level of implementation quality on the primary outcome show that the rate of section 47 inquiries was estimated to be 16.8% higher (95% CI: 2.6% higher to 33.1% higher, p = .02) in gold schools than in control schools, 0.6% lower (95% CI: 20.9% lower to 24.9% higher, p = .96) in silver schools than in control schools, and 13.6% lower (95% CI: 47.6% lower to 42.3% higher, p = .57) in bronze schools than in control schools. The 95% CI for gold versus control excludes 1; therefore, the effect is statistically significant at 5%, while the 95% CIs for silver versus control and bronze versus control both include 1 (Table 10). However, after adjustment for multiplicity using the Hochberg step-up procedure to control the familywise error rate, none of the p-values are statistically significant at the 5% level.

Subgroup Analysis of the Rate of Section 47 Inquiries at 23 Months by Unweighted Level of Implementation Quality.

Adjusted for the outcome for the 2018/19 academic year (baseline), % of students eligible for free school meals, and number of students enrolled per school. IRR is the incidence rate ratio, and CI is the confidence interval.

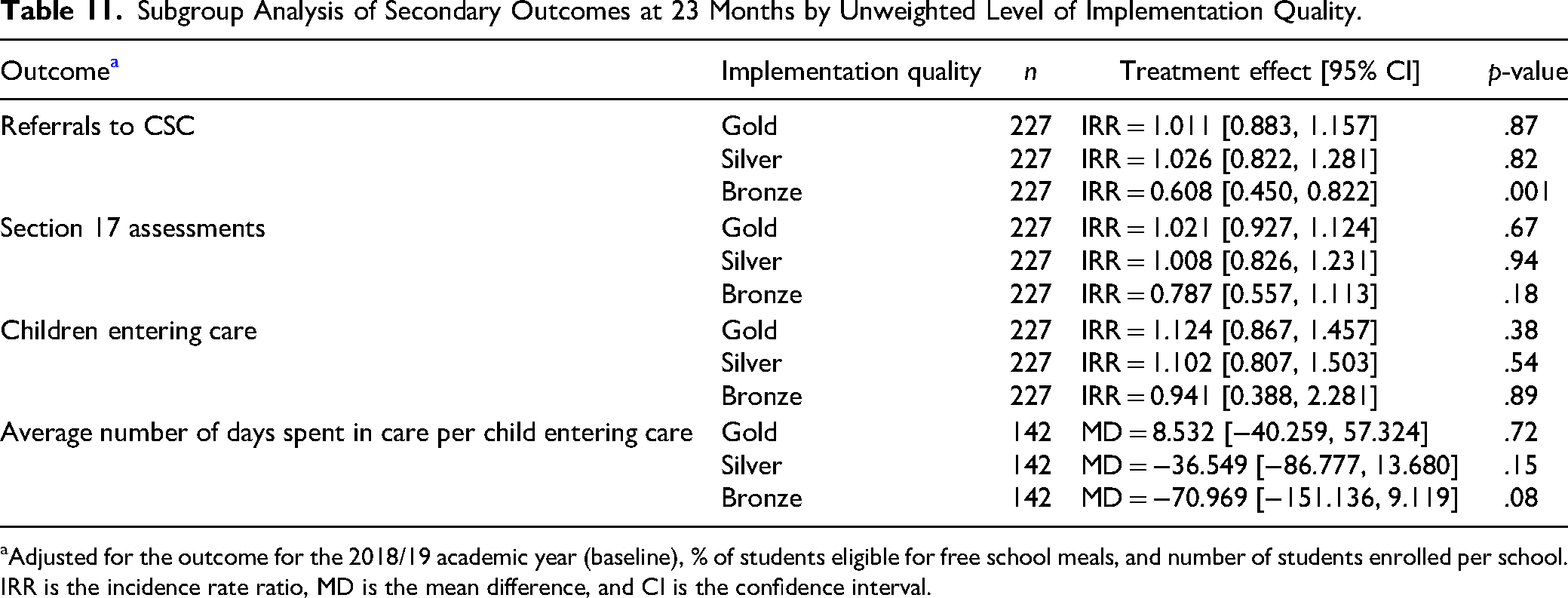

The results from the unweighted level of implementation quality on the secondary outcomes were similar to what was observed for the primary outcome above, with gold schools always having higher rates of outcomes than control schools (Table 11). However, the 95% CIs include 1 and therefore these effects are not statistically significant at the 5% level. Only the effect of SWIS on referrals to CSC in bronze schools compared with control schools remains statistically significant after adjustment using the Hochberg step-up procedure. The rate of referrals to CSC is estimated to be 39.2% lower (95% CI: 55.0% lower to 17.8% lower, p = .001) in bronze schools compared with control schools after adjusting for the percentage of students eligible for free school meals, baseline referrals to CSC and school size (Table 11).

Subgroup Analysis of Secondary Outcomes at 23 Months by Unweighted Level of Implementation Quality.

Adjusted for the outcome for the 2018/19 academic year (baseline), % of students eligible for free school meals, and number of students enrolled per school. IRR is the incidence rate ratio, MD is the mean difference, and CI is the confidence interval.

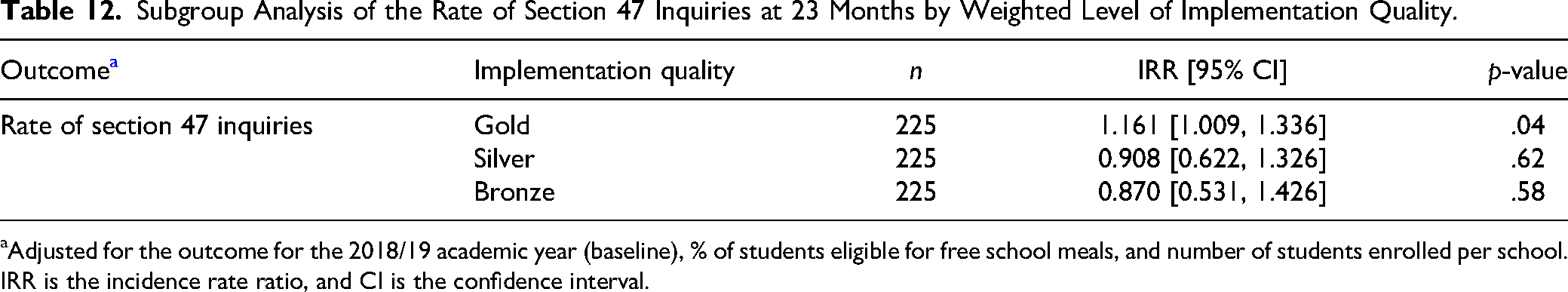

Subgroup Analysis of the Rate of Section 47 Inquiries at 23 Months by Weighted Level of Implementation Quality.

Adjusted for the outcome for the 2018/19 academic year (baseline), % of students eligible for free school meals, and number of students enrolled per school. IRR is the incidence rate ratio, and CI is the confidence interval.

Weighted Level of Implementation Quality

The rate of section 47 inquiries is estimated to be 16.1% higher (95% CI: 0.9% higher to 33.6% higher, p = .04) in gold schools than in control schools, 9.2% lower (95% CI: 37.8% lower to 32.6% higher, p = .62) in silver schools than in control schools, and 13.0% lower (95% CI: 46.9% lower to 42.6% higher, p = .58) in bronze schools than in control schools, after adjusting for percentage of students eligible for free school meals, baseline section 47 inquiries, and school size. The 95% CI for gold versus control excludes 1; therefore, the effect is statistically significant at 5%, while the 95% CIs for silver versus control and bronze versus control both include 1. However, after adjustment for multiplicity using the Hochberg step-up procedure, none of the p-values are statistically significant at the 5% level (Table 12).

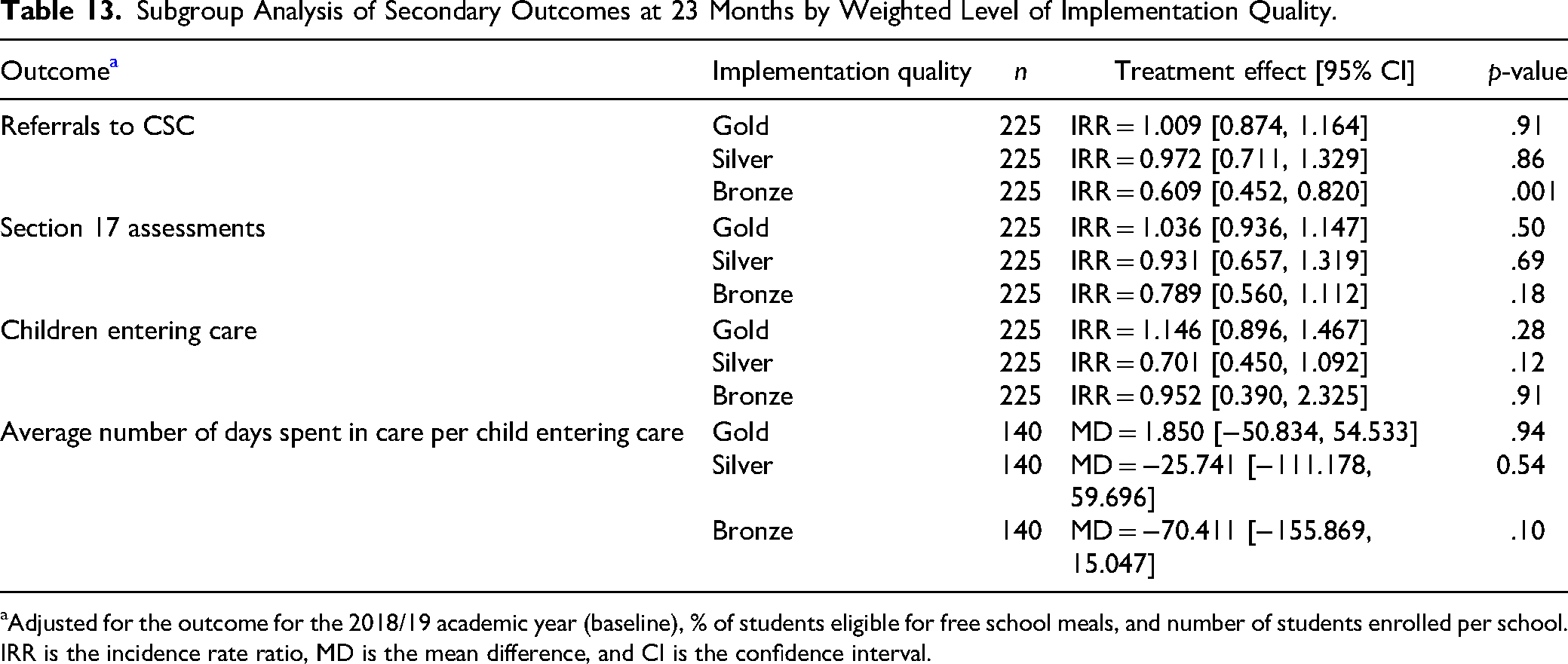

A similar trend was observed for the weighted level of implementation quality on the secondary outcomes, with gold schools always having higher rates of outcomes than control schools. However, the 95% CIs include 1 and therefore these effects are not statistically significant at the 5% level.

Only the effect of SWIS on referrals to CSC in bronze schools compared with control schools remains statistically significant after adjustment using the Hochberg step-up procedure. The rate of referrals to CSC is estimated to be 39.1% lower (95% CI: 54.8% lower to 18% lower, p = .001) in bronze schools compared with control schools after adjusting for the percentage of students eligible for free school meals, baseline referrals to CSC and school size (Table 13).

Subgroup Analysis of Secondary Outcomes at 23 Months by Weighted Level of Implementation Quality.

Adjusted for the outcome for the 2018/19 academic year (baseline), % of students eligible for free school meals, and number of students enrolled per school. IRR is the incidence rate ratio, MD is the mean difference, and CI is the confidence interval.

Implementation

The findings of the IPE help us to interpret these results, and in particular, the extent to which they may be explained by problems of implementation. At a basic level, the extent to which social workers were present in schools indicates whether it was possible to assign social workers to schools and get them onto school premises, so this is the first analysis we present here. This fed into a multivariate understanding of implementation quality, which we describe briefly thereafter and in more detail elsewhere (Westlake et al., 2023).

Recruitment and Deployment of Social Workers in Schools

There were some difficulties in implementing SWIS during the set-up period, with all local authorities reporting some “recruitment drag” in the early stages. This was particularly notable in some local authorities. For instance, LA 14 did not have any social workers in post during the first school term following the launch of SWIS, and LA 13 had only one social worker in post, who started near the end of the term. While most schools had social workers in place during term two, continued delays in some local authorities meant a minority of schools (5/136) did not have a social worker working in them by the end of the second term. Two schools had to wait until the second year of SWIS for their social worker to start, and unresolved problems meant one did not receive a social worker at all.

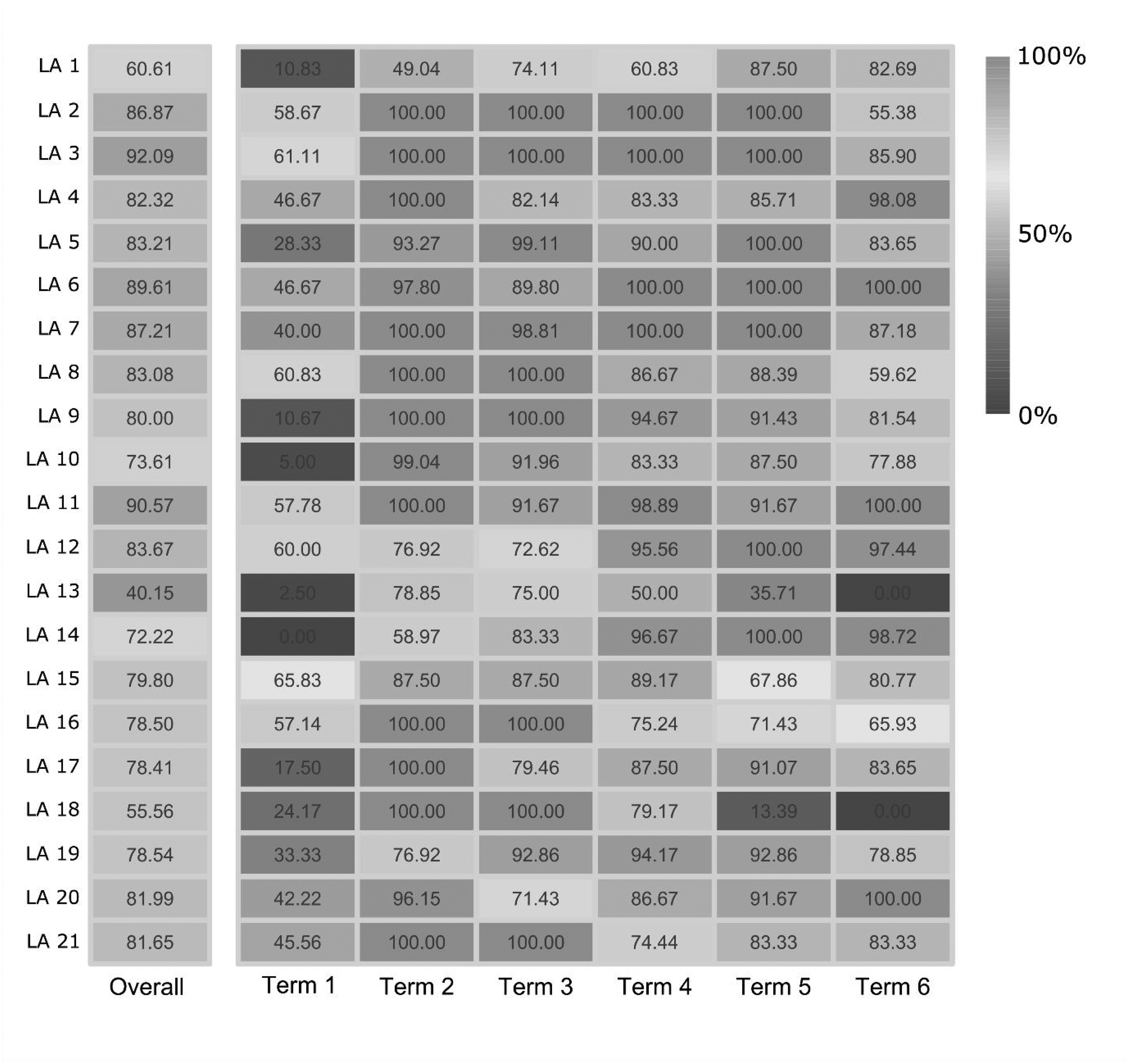

Much of the delay can be attributed to the short timeframe local authorities had to recruit, with only five weeks between confirmation of being involved in the trial and the start of term. This was not long enough for any schools to have a social worker in week one, and most did not have a social worker until at least week four. Recruitment did not reach 50% until 10 weeks into the trial period, and 75% were in position by 15 weeks. However, because the study was extended to cover two academic years, the effect of this slow start was diluted and the period of recruitment drag was relatively small. Although no school had a social worker in post for 100% of the trial period, and positions were filled or vacant in an irregular pattern over time, the overall percentage of weeks that social workers were in post in schools in each local authority exceeded 75% in 16/21 local authorities, and only one had a social worker in post for less than 50% of the intervention period (the school which failed to recruit). These data are illustrated in Figure 2.

Heat map of percentage of time SWIS workers were in post, by term and local authority. Each column represents overall, or termly, percentage of time a local authority had social workers in post across their schools in a particular time period. Each row represents one local authority.

Moreover, in terms two and three staffing levels were up to 100% for many local authorities. Notwithstanding the slow start, some local authorities (e.g., LA 3, 6, 7, and 11) maintained a high level (∼90%) of social workers in their schools throughout the trial period. Even though social worker staffing was considerably less complete in other local authorities, the mean proportion of time social workers were in post across the 21 local authorities was 78%.
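The staffing percentages above are, in essence, the share of school-weeks in which a social worker was in post. A minimal Python sketch of that calculation, assuming one true/false flag per school per week; the data structure, names, and figures are hypothetical and do not reproduce the trial's records:

```python
def percent_weeks_in_post(weeks_by_school):
    """Percentage of school-weeks with a social worker in post.

    `weeks_by_school` maps a school identifier to a list of booleans,
    one per week, where True means a social worker was in post.
    """
    total_weeks = sum(len(weeks) for weeks in weeks_by_school.values())
    if total_weeks == 0:
        raise ValueError("no weeks recorded")
    filled_weeks = sum(sum(weeks) for weeks in weeks_by_school.values())
    return 100.0 * filled_weeks / total_weeks


# Hypothetical local authority with two schools observed for four weeks.
example_la = {
    "school_a": [False, True, True, True],   # post filled from week two
    "school_b": [True, True, True, True],    # post filled throughout
}
example_pct = percent_weeks_in_post(example_la)
```

Pooling school-weeks in this way weights each school by the number of weeks it was observed; averaging per-school percentages instead would give the same answer only when all schools are observed for the same number of weeks.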

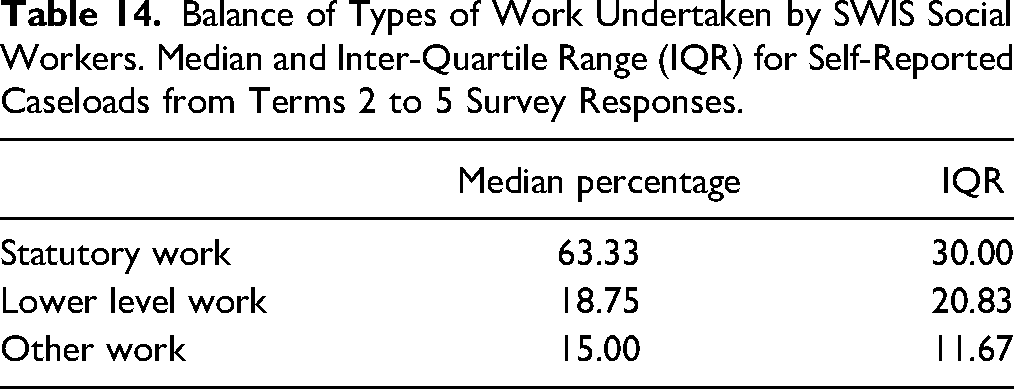

Focusing on Statutory Social Work

The distribution of statutory social work, lower-level preventative work, and other work (such as collaborating with school staff) varied greatly between, and within, local authorities. For example, the social worker in one school in LA 7 did no statutory work, but in other schools in the same local authority more than 50% of the workload was statutory. In LA 6, social workers in 4 out of the 7 schools for which we had data spent at least 50% of their time on lower-level work, whereas 1 school social worker did not spend any time on lower-level work. However, with some notable exceptions, the majority (66.3%) of the work SWIS social workers undertook in most schools was statutory and aligned with the manual (Table 14).

Balance of Types of Work Undertaken by SWIS Social Workers. Median and Inter-Quartile Range (IQR) for Self-Reported Caseloads from Terms 2 to 5 Survey Responses.

Ratings of Overall Implementation Quality

Ratings were based on points set out in the SWIS manual, and calculated for 69% (101/136) of schools assigned to SWIS. These ratings were calculated for 95 schools based on sufficient survey responses from social workers and school staff across terms two, three, four, and five (Spring 2021–Spring 2022) in addition to the percentage of time each school had a social worker in post. A further six schools automatically received a bronze rating as they had a SWIS social worker in post for less than 33% of the intervention period. The remaining 45 schools were unrated due to insufficient data.

Of the 101 schools rated, most (70) achieved a gold rating, 24 were rated silver and only seven were rated bronze. However, these results should be interpreted with caution as the relationship between IPE survey nonresponse and poor implementation for around 30% of schools is unknown. Furthermore, these are based on average ratings calculated from survey responses that varied in completeness between schools and across the four terms.

Discussion

There is no evidence that SWIS reduced the rates of section 47 inquiries, CSC referrals, section 17 assessments, number of children entering care or number of days children spent in care. The fact that the longer-term follow-up analysis of the care outcomes, conducted 35 months after baseline, also found no effects adds weight to this finding. The same picture emerges when we consider the impact on educational outcomes we measured. We found no evidence of beneficial effects on the attendance or attainment measures we analyzed.

The disruptive role of COVID-19 may have presented a challenge for implementing SWIS and affected the extent to which students and social workers were in schools at certain points, and the work they were able to do, but this does not seem to have materially affected the results. We found no evidence of patterns associated with the acute phases of the pandemic when we examined effects over different stages of the trial period (when disruption from the pandemic was more or less acute). More broadly, the IPE findings suggest that the null effects cannot be explained by problems of implementation. While there were delays in recruitment and other difficulties associated with implementing SWIS, these were relatively minor in the context of the program as a whole. The majority of schools had the intervention running for most of the time period in which effects were measured, and the messy reality of delivering such an intervention does not detract from what can be seen as a relatively successful process of implementation.

Sometimes social programs fall short of implementation objectives, and null findings are ascribed to problematic implementation (Fixsen et al., 2009; Solomon et al., 2014). Conversely, some evidence-based programs tend to rely on high levels of fidelity to the model (Bezeczky et al., 2019). Yet in this case, our IPE findings give us confidence to reject implementation problems as a likely reason for the lack of effectiveness. Our process evaluation found the intervention was mostly delivered in line with the manual and was implemented relatively well. SWIS is a relatively flexible intervention, and the implementation manual permits a range of approaches. However, this is not uncommon; indeed, a level of flexibility is often found among evidence-based programs. It is a feature of other more established school-based programs, such as “Families and Schools Together” (FAST). Up to 60% of FAST is considered to be adaptable to local contexts, and the program has been the subject of at least 10 RCTs (Valentine et al., 2019). It is therefore unlikely that implementation failure contributed to the lack of effects found on the outcomes we measured.

Other RCTs in this field have shown a similar contrast between effect estimates and qualitative impressions. Perhaps the most notable one from the UK is the evaluation of the Family Nurse Partnership, which also reported a null finding despite practitioners being positive about the intervention (Robling et al., 2016). The intervention website acknowledges these results but also emphasizes softer outcomes such as strengthened relationships (Family Nurse Partnership, n.d.). It is however essential that social programs achieve what policymakers intend them to achieve, and in the case of SWIS their intention was to reduce rates of statutory interventions, and that ambition was not realized.

A further, and more challenging, candidate explanation for the lack of effects is that SWIS does not target the root of the problem. Arguably, demand for child protection and care interventions is largely shaped by social determinants (such as poverty) which are structural and pervasive (Bywaters et al., 2015). Other setting-based behavioral interventions (e.g., school-based obesity prevention) that target, for example, diet and activity but not the antecedents of these factors have been found to be similarly ineffective (Hung et al., 2015). The one factor that was significantly associated with outcomes across some of our models was eligibility for children to receive free school meals. This is a form of support that is available to families in the UK who receive various income-related benefits and tax credits and is therefore linked to the social determinants of disadvantage (Gorard, 2012). This seems to offer a partial explanation for why SWIS did not have the intended effects, despite its generally positive reception.

The broader literature on school social work has focused less on the need for statutory interventions and more on other outcomes, such as emotional and behavioral difficulties and learning outcomes (Ding et al., 2023). While there has been a range of positive effects reported in relation to these outcomes, the current study suggests that this does not translate into reduced need for statutory child protection services or improvements in the educational outcomes we measured. The intervention may have benefits that are separate from the outcomes measured, as the qualitative evidence from the current study supports (see Westlake et al., 2023). Likewise, different forms of school social work practiced in other countries may have different effects, but these should be studied separately. The current study demonstrated the feasibility of using an RCT design to do this, and therefore the potential to close the existing gaps in a literature that is lacking in such studies.

Strengths and Limitations

Although the findings were disappointing for many of the practitioners involved, the study was successful. It built upon evidence from the pilots that suggested SWIS was promising in relation to these outcomes, and it was sufficiently powered to statistically detect a meaningful effect size of the primary outcome, had such an effect existed. There was little imbalance between arms in outcomes at baseline. A pre-specified analysis plan took account of clustering and multiplicity, and the findings were robust across a range of sensitivity analyses.

A measure of this success is that there was no loss of data at baseline or follow-up, which is unusual for social work RCTs (and indeed social work evaluations more generally). This appears to be due to two factors: one related to how the study was commissioned and the other related to how it was conducted. The program was commissioned as a research study, rather than being an existing program that was subsequently evaluated. Funding to deliver the intervention was offered to local authorities on the condition that they participated in the research, and the funder assisted us in encouraging local authorities to submit their data returns, and in reminding them when deadlines had passed. This link between the program and the study created a level of “buy-in” among senior leaders that may have been difficult to generate otherwise, and helped us engage individuals lower down the organizational hierarchy. This undoubtedly contributed to the success in collecting data.

The other factor that made this possible was the amount of resources we assigned to data management. With so many research sites involved, it was vital that we invested time and effort into building and maintaining relationships with key contacts within the 21 local authorities. We communicated regularly with the person leading the collation of administrative data as well as a more senior contact in each authority throughout. Maintaining relationships with multiple individuals per authority was fruitful because several personnel changes occurred during the study and this smoothed handovers and introductions. Successful relationship building meant that at the crucial point in data collection—the final data return before analysis—there were no significant delays and data was received in time for cleaning and analysis.

Nonetheless, the study also has limitations. The number of days in care is only partially known for children who had not left care by the end of the follow-up period, as some remained in care beyond the study timeframe. Some other limitations are apparent in the IPE. Response rates to the surveys of social workers, school staff, and students were relatively low at 34–51%, 43–60%, and 11%, respectively, and this could have introduced selection bias, which we are unable to investigate. Similarly, there was a relatively low completion rate for information used in the economic evaluation on the amounts of time social workers spent in schools, which could result in an under- or overestimation of costs. It is however positive that the impact analysis was not affected by either of these limitations.

Conclusion

In conclusion, we found no benefit in delivering the SWIS intervention in England for policy-relevant CSC outcomes. This is despite the finding that the local authorities implemented SWIS at scale relatively successfully, delivering key elements of what was described in the manual.

The study proceeded successfully, with unusually high levels of data capture and retention, in a field where RCTs are often reported to be challenging (Dixon et al., 2014). The significance of this is amplified by the fact it took place during the COVID-19 pandemic, which wrought unprecedented disruption in all areas of society and threatened to derail research studies involving schools. It is also notable that, to our knowledge, this is the largest RCT ever conducted in social work, and among the largest RCTs ever conducted in the English school system. This demonstrates the feasibility of doing RCTs at this scale.

The study also demonstrates the value of the RCT design for establishing causal influences (and establishing an absence of causality). As well as identifying interventions that are effective, it is equally important for research to highlight the approaches that do not work. This study highlights the potential for research designs that combine rigorous between-group comparisons with other types of evaluation. For example, the IPE served as a stand-alone assessment of implementation and process, conducted blind to the results of the impact evaluation. As such, it aided our interpretation of the findings and increased our confidence in our conclusions. We therefore suggest that future RCTs in CSC build on this design. One way in which they could do this, if resources allow, is by collecting more data on the proposed mechanisms and processes that are thought to produce outcomes. Our experience of working with a large number of local authorities to collect administrative data was also positive, and this is encouraging for future research. Despite the disruption caused by the COVID-19 pandemic, collecting this data via local authorities worked well. Collecting CSC outcome data directly from schools would have been very difficult and costly, if it had been possible at all. End-to-end support from the funder in managing the roll-out of SWIS alongside the trial, and encouraging local authorities to return data, is likely to have reduced the time we spent on these activities. The coupling of intervention and research funding is therefore a model that should be considered more widely.

Based on the evidence presented here, we recommended that SWIS was not continued or scaled up further because it did not appear to have the impact on CSC outcomes that policymakers intended. Future efforts to address increases in children being involved with CSC services should aim to tackle the structural problems, such as poverty, which are correlated with such involvement. While they are not yet complete, studies that involve direct cash transfers to families involved with CSC (Edbrooke-Childs et al., forthcoming) and young people leaving care (Westlake, Holland, et al., 2024) may prove valuable in this area.

Following the publication of the primary analysis (Westlake et al., 2023), the Department for Education took this recommendation on board, discontinued funding for SWIS, and canceled plans to scale the intervention further. This decision was directly influenced by the results of the study and created a multimillion pound saving for the public purse, releasing funds that could be used to explore other potential ways to improve outcomes for disadvantaged children.

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the What Works for Children's Social Care (grant number N/A).