Abstract

There is a continued call for the use of practices supported by evidence to improve the quality and effectiveness of services provided for students with disabilities. Despite best intentions, our education systems continue to struggle to adopt these practices and transfer them into consistent, sustained use by practitioners. Implementation science, the multi-disciplinary study of methods and strategies to promote use of research findings in practice, seeks to address this by providing frameworks to guide creation of conditions that facilitate use of evidence-based practices. The present article describes how an implementation science approach, Active Implementation Frameworks, was used by a national technical assistance center to cultivate systemic change and create improved outcomes for students with disabilities within several state, regional, and local education agencies. A summary of the lessons learned thus far and resulting considerations for practice and policy are presented. A key lesson was that state education agencies (SEAs) supporting districts and schools in implementation of a specific, educator–student-level practice realized improved outcomes for their students with disabilities. SEAs implementing frameworks or processes without an operationalized and measurable educator–student-level practice had limited or no evidence of improved student outcomes.

Keywords

There is a continued call for the use of practices supported by evidence to improve the quality and effectiveness of services provided for students with disabilities. Despite best efforts, our education system continues to struggle to adopt these practices and use them in consistent and sustained ways (Burns & Ysseldyke, 2009; Madon et al., 2007). This gap between what we know works and application of those practices in real-world settings denies individuals with disabilities proven benefits (Dew & Boydell, 2017). Contributing to this divide is the lack of effective implementation strategies. Implementation science, the multi-disciplinary study of methods and strategies to promote use of research findings in practice, seeks to address this by providing frameworks to guide creation of conditions and activities that facilitate use of evidence-based practices (EBPs; Eccles & Mittman, 2006).

Findings from implementation science indicate there are three critical factors necessary for achieving desired impact: Effective Practices × Effective Implementation × Enabling Context = Socially Significant Outcomes (Fixsen et al., 2015). To benefit students, organizations select practices supported by evidence and matched to population needs and organizational goals (i.e., effective practices). An infrastructure for training, coaching, use of data and leadership is built to support practitioners to implement in a deliberate and adaptive manner (i.e., effective implementation). Finally, a hospitable environment with a culture of continuous improvement is nurtured to ensure implementers and effective practices thrive and sustain (i.e., enabling context).

The purpose of this article is to describe the application of an implementation science approach within state education systems to support progress toward improved outcomes for students with disabilities. Specifically, the Active Implementation Frameworks (AIFs; Fixsen et al., 2005) will be described, followed by a depiction of how a national technical assistance center used the AIFs to methodically cultivate systemic change and achieve improved outcomes for students with disabilities within several state, regional, and local education agencies. A summary of the lessons learned and the resulting considerations for practice and policy are presented.

An Implementation Approach: Active Implementation Frameworks

The AIFs provide a guide for clearly delineating and building roles, structures, and functions critical across all levels of the educational system to support and bring to scale high-quality implementation of EBPs for students with disabilities (Fixsen et al., 2005). Frameworks include (a) usable innovations, (b) linked implementation teams, (c) implementation drivers, (d) implementation stages, and (e) improvement cycles.

Usable Innovations

The Usable Innovation AIF specifies criteria and processes for selecting practices or programs and ensuring they are “teachable, learnable, doable, and assessable” (Fixsen et al., 2013; Flay et al., 2005). Criteria for an innovation to be usable include (a) clear description of underlying philosophy, principles, theory of change, and intended beneficiaries; (b) specification and operationalization of the essential components needed to achieve intended outcomes; and (c) a measure of its use as intended (i.e., fidelity). When selecting an EBP, the framework guides organizations to consider match of EBPs to needs of the focus population; evidence-base; available supports (e.g., training, coaching, data systems); capacity of implementing site (e.g., staffing, fiscal supports); fit with philosophy, values and existing initiatives in use at the implementing site; and usability (e.g., availability of a fidelity measure, acceptability, evidence of successful replication, and level of specification).

Implementation Teams

Implementation teams are accountable for deliberate application of implementation science methods and tools to ensure stakeholders (including families and community members) and staff are engaged, practices are well-defined and fit with the context, implementation supports are in place, fidelity is measured and improved, and outcomes are achieved and sustained (Greenhalgh et al., 2004; Higgins et al., 2012). Implementation teams are composed of three to five individuals, including executive leaders and persons who have skills and knowledge about the context, decision-making authority, and hands-on experience in implementing EBPs (Coffey & Horner, 2012; Horner et al., 2018; Newton et al., 2014). Key roles include a team coordinator and a data specialist who can support the team in accessing and using data systematically. Research has shown that using implementation teams to actively and intentionally make changes produces higher rates of success more quickly than traditional methods of implementation (Higgins et al., 2012; Metz et al., 2015). Within state educational systems, interconnected teams at state, regional, district, and school levels provide a network across which information, data, and feedback can flow to facilitate learning about contextual responses to change.

Implementation Drivers

Almost all published implementation frameworks and compilations of implementation strategies include capacity-building and infrastructure development as critical components of successful implementation (Powell et al., 2012, 2015). In the AIFs, these components are referred to as Implementation Drivers and are needed to support practice, organizational, and systems change (Fixsen et al., 2015; Metz & Bartley, 2012). There are competency drivers (e.g., selection, training, coaching and fidelity assessment) and organization drivers (e.g., decision support data systems, facilitative administration and systems interventions), both supported by effective leadership. The implementation drivers are integrated and compensatory. Integrated means the philosophy, goals, knowledge, and skills related to use of the EBP are consistently and thoughtfully expressed in each of the drivers. Compensatory means that more robust drivers can make up for less well-developed supports within another driver.

Competency drivers. These are factors necessary to develop and improve staff efficacy in using EBPs as intended. They include selection of individuals with required skills and abilities to use the practices; training to ensure individuals’ knowledge and skills to use practices with fidelity; coaching using a set of behaviors to support ongoing use of EBPs; and use of fidelity data to support ongoing improvement and understanding of outcomes. Coaching behaviors with empirical support for practice change include prompting (Freeman et al., 2017; Massar, 2017), scaffolding of supports (Browder et al., 2012; Myers et al., 2017), performance feedback (Cavanaugh, 2013; Freeman et al., 2017), using data (Hasbrouck, 2017), and relationship building (Knight, 2009). Coaching using fidelity data is critical to the transference of knowledge and skills gained in training or professional learning into the school and classroom setting.

Organizational drivers. These are roles and structures necessary to create the enabling processes, procedures, and environment. A decision support data system captures data at individual, group, and system levels to describe the health of practices and processes to support use and scaling of the EBP and its impact on student outcomes. Through facilitative administration, leaders ensure provision of supports needed for successful EBP use, make efforts to decrease burden for teachers and staff as they navigate internal challenges (e.g., scheduling trainings while maintaining coverage of classrooms), and use data for continuous improvement. Systems intervention refers to the processes needed for engaging external stakeholders in the implementation process and for lifting challenges that cannot be resolved at the local level to higher levels of the system for problem solving.

Leadership is foundational for ensuring effective use of the infrastructure and for addressing implementation challenges encountered. Adaptive leadership skills are needed when sources of problems are not clear, solutions are not known, and attempts at solutions involve multiple people. Technical leadership skills are needed when problems are more focused and can be solved by organizing existing staff and using available data to identify problems and provide indicators of solutions (Heifetz & Linsky, 2017). Both kinds of leadership skills are essential to support effective EBP use and change outcomes for students with disabilities.

Implementation Stages

Implementation is a mission-oriented, iterative process involving multiple decisions, actions, and corrections designed to make full and effective use of practices. Use of stages provides a planned and purposeful approach for a sequence of implementation activities. The AIF contains four discernible stages: Exploration, Installation, Initial Implementation, and Full Implementation (Metz & Bartley, 2012).

In Exploration, organizations make decisions about fit, timing, capacity, and commitment to use an EBP and begin to create readiness with stakeholders. When commitment to move forward with an EBP is reached, organizations transition to Installation stage activities, including securing necessary resources and developing the infrastructure to support systemic change (i.e., Implementation Drivers). Organizations enter Initial Implementation once practitioners begin using the practice. This stage represents a fragile time during which preliminary changes and disruptions in the system occur as educators infuse the EBP in interactions with students. Transition into Full Implementation begins when the EBP becomes “the way of work” (at least 50% of the intended practitioners meet fidelity standards for using the practice). Although linear in presentation, an organization can be in multiple stages at a time and movement between stages in either direction is not unusual as challenges (e.g., staff turnover) or new areas of need arise.
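The Full Implementation criterion described above (at least 50% of intended practitioners meeting fidelity standards) can be illustrated with a minimal sketch. The fidelity cut score of 0.80, the function names, and the sample scores are hypothetical and chosen only for illustration; the AIFs leave the fidelity standard to the measure used for each practice.

```python
from typing import Sequence

# Hypothetical cut score: a practitioner "meets fidelity" at or above this value.
# The article does not specify a cut score; 0.80 is an assumption for illustration.
FIDELITY_STANDARD = 0.80

# Share of intended practitioners who must meet the standard (stated in the text).
FULL_IMPLEMENTATION_SHARE = 0.50


def share_meeting_fidelity(scores: Sequence[float],
                           standard: float = FIDELITY_STANDARD) -> float:
    """Return the proportion of practitioners whose fidelity score meets the standard."""
    if not scores:
        raise ValueError("at least one fidelity score is required")
    meeting = sum(1 for score in scores if score >= standard)
    return meeting / len(scores)


def has_reached_full_implementation(scores: Sequence[float],
                                    standard: float = FIDELITY_STANDARD,
                                    required_share: float = FULL_IMPLEMENTATION_SHARE) -> bool:
    """True when at least the required share of practitioners meets the fidelity standard."""
    return share_meeting_fidelity(scores, standard) >= required_share


if __name__ == "__main__":
    # Hypothetical fidelity scores for four practitioners in one school.
    school_scores = [0.90, 0.85, 0.60, 0.70]
    print(share_meeting_fidelity(school_scores))
    print(has_reached_full_implementation(school_scores))
```

Because organizations can move between stages in either direction, a check of this kind would be rerun at each data review rather than treated as a one-time milestone.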

Improvement Cycles

Teams engage in use of data to continuously improve implementation supports and systematically adapt practices to be responsive to contextual needs and accelerate student success. The Plan-Do-Study-Act (PDSA) cycle is a commonly used improvement method for ensuring effective implementation. As implementation issues arise, teams use PDSA cycles to make small tests of change, help define and refine implementation supports for scale-up efforts, and inform alignment of policies and guidelines to support use of the practice. PDSA cycles do, however, require considerable time and resources to implement effectively (Tichnor-Wagner et al., 2017). Development of an organizational culture that fosters continuous learning and improvement routinized in the mission, vision, and practices is critical for effective use of improvement cycles (Bryk et al., 2015; Lee et al., 2012).

The AIFs operationalize the critical implementation activities and strategies to ensure the effective practices, effective implementation, and enabling context necessary to achieve outcomes for students with disabilities. However, state, regional, and local education agencies do not necessarily have the knowledge and skills needed to use the AIFs to support EBP use. The shortage of individuals trained in implementation science has been cited as a reason for inadequate use of EBPs to improve outcomes (Straus et al., 2011). Next, we describe a national technical assistance center’s efforts to develop capacity of state education agencies and their respective regional and local educational agencies to use the AIFs to achieve systemic change and improved outcomes for students with disabilities.

Methods for Developing Implementation Capacity

The need to strengthen capacity for change and develop aligned infrastructures in education has been noted for several decades (McIntosh et al., 2015; Tseng, 2012). In 2006, the U.S. Department of Education’s Office of Special Education Programs (OSEP) recognized the potential for implementation science to address that need, leading to the development of the State Implementation and Scaling-up of Evidence-Based Practices (SISEP) Center. The Center’s goal is to use implementation science research and practice to expand selection, adoption and sustained use of evidence-based educational practices that result in positive outcomes for students with disabilities. The SISEP Center supports state education agencies (SEAs) in developing an infrastructure for systemic change at the local education agency (LEA) and school levels, so that teachers and school staff use EBPs with fidelity at the classroom level to improve opportunities and outcomes for students with disabilities.

Procedure

Using the Implementation Stages AIF as a guide, the center supports states to develop and build capacity of linked implementation teams. Within each SEA, roles, structures, and functions are identified and cultivated to support infrastructure development and the systemic change process. These include executive leadership sponsors (e.g., chief deputy superintendent); a state management team (e.g., chief state school officer and agency leaders) to provide governance for the work; and two full-time state transformation specialists (STSs) to lead infrastructure development activities with the SEA and their respective agencies.

Center staff work with the STSs and the state implementation team (SIT) to support formation of implementation teams at regional, district, and school levels; build and support teams’ implementation capacity development; and establish the needed implementation infrastructure for the selected EBP(s). A small number of linked implementation teams allows for changes to be made simultaneously at multiple levels of the education system to create conditions to sustain effective supports and practices at the school and classroom levels. Linked teams are critical to enable the practice-policy feedback loops that are key to reducing systems barriers to high-fidelity implementation. “Good” policy must be present to enable good practice, but practice must also inform policy. Linked teams ensure communication back to policy levels to inform decision making and continuous improvement (Metz & Bartley, 2012).

Participants

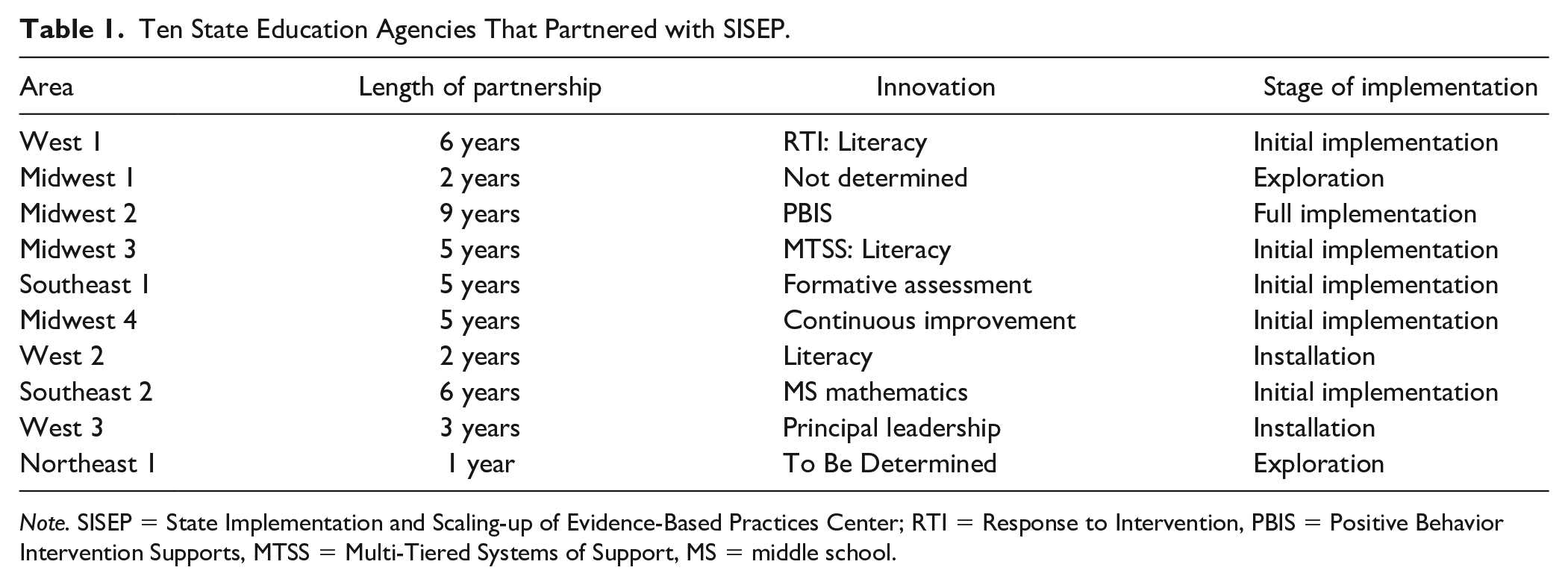

A total of 10 SEAs located in the U.S. West, Northwest, Midwest, Southeast, and Northeast regions have partnered with SISEP since 2006. The SEAs varied in size (120–850 local districts), demographics, and complexity. The percentage of students served under the Individuals with Disabilities Education Act for ages 3 to 21 years ranged from 11.2% to 16.9% of the student population enrolled (average = 14.1%). Five of the 10 states had an existing regional structure of independent agencies (e.g., service cooperatives, intermediate school districts, education service districts) that participated in implementation capacity development. The other SEAs constructed regional implementation team(s) using their SEA staff or contracted regional consultants. All SEAs partnered with a small number of regional and local education agencies and schools, typically 3 to 4 LEAs representative of their state. See Table 1 for information on each SEA (e.g., length of partnership, innovation, and implementation stage obtained).

Table 1. Ten State Education Agencies That Partnered With SISEP.

Note. SISEP = State Implementation and Scaling-up of Evidence-Based Practices Center; RTI = Response to Intervention; PBIS = Positive Behavior Intervention Supports; MTSS = Multi-Tiered Systems of Support; MS = middle school.

Measures

The SISEP technical assistance (TA) center uses a number of measures to inform its progress in developing implementation capacity and to evaluate the effectiveness of its TA supports. These measures include

annual perception survey of the quality, utility, and relevance of services and products;

assessment of knowledge in the use of implementation practices and strategies to align policy with practice;

semi-structured interviews with key stakeholders within SEAs;

logs of TA services provided as intended;

reach of its TA supports through Google Analytics, social media statistics, and administrative data; and

implementation capacity assessments (i.e., State Capacity Assessment, Regional Capacity Assessment, District Capacity Assessment, and Drivers Best Practices Assessment at the school level; see https://sisep.fpg.unc.edu/resources-and-tools for more information on capacity assessments).

In addition, SEAs provide SISEP with summaries of aggregated student outcome data relevant to their selected practice (e.g., academic proficiency and growth data, discipline data, attendance data, graduation rate data) and fidelity data for the selected EBP(s) being implemented at the school and classroom levels.

The capacity assessments are administered by a trained administrator and completed by identified respondents as a group/team using consensus-based scoring. The use of consensus-based scoring produces greater depth of discussion, exchange of knowledge and information, generation of items for action planning, and collective commitment to continuous improvement. The administration method also helps to address power differentials that may be present and protects voices from diverse perspectives. The capacity assessments are in various stages of development, but all meet the criteria of being (a) relevant (items that are indicators of key leverage points for system change), (b) sensitive to changes in capacity, (c) consequential (items are important and prompt action planning), and (d) practical. Psychometrically, the content validity of the District Capacity Assessment (DCA) has been established. It has an adequate internal structure (RMSEA = .071, CFI = .93, TLI = .92), internal consistency (Cronbach’s alphas of .91 for the total score and .79–.81 for the subscale scores), and test–retest reliability (r = .98 for Leadership, .78 for Decision Support Data System and Competency Scales; Ward et al., 2021).
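For readers less familiar with the internal consistency statistic reported above, the following is a minimal sketch of how Cronbach's alpha is computed from item-level scores, using the standard textbook formula. It is not the analysis code used by Ward et al. (2021); the function name and the sample data are hypothetical, and each row is assumed to hold one team's consensus scores for one administration.

```python
from statistics import variance
from typing import Sequence


def cronbach_alpha(item_scores: Sequence[Sequence[float]]) -> float:
    """Cronbach's alpha for a set of administrations (rows) scored on k items (columns).

    alpha = k / (k - 1) * (1 - sum of item variances / variance of total scores)
    Sample variances (n - 1 denominator) are used for both terms.
    """
    if len(item_scores) < 2:
        raise ValueError("at least two administrations are required")
    k = len(item_scores[0])
    if k < 2:
        raise ValueError("at least two items are required")
    if any(len(row) != k for row in item_scores):
        raise ValueError("every administration must score the same number of items")

    # Transpose rows into item columns and sum each item's variance.
    item_variance_sum = sum(variance(column) for column in zip(*item_scores))
    # Variance of each administration's total score.
    total_variance = variance([sum(row) for row in item_scores])
    if total_variance == 0:
        raise ValueError("total scores have zero variance; alpha is undefined")

    return (k / (k - 1)) * (1 - item_variance_sum / total_variance)


if __name__ == "__main__":
    # Hypothetical item scores (0-2 scale) from four team administrations.
    sample = [
        [2, 2, 1],
        [1, 1, 1],
        [0, 1, 0],
        [2, 1, 2],
    ]
    print(round(cronbach_alpha(sample), 2))
```

Values closer to 1 indicate that items within a scale vary together across administrations.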

Key Lessons

SISEP has systematically captured lessons learned through bi-annual reviews of capacity, fidelity, outcome, and perception data; external evaluator observations; and interviews with state teams and their stakeholders. Lessons learned are organized by AIF and represent multiple state contexts and their use of various frameworks (e.g., Multi-Tiered Systems of Support, Positive Behavior Intervention Supports) and EBPs in different content areas (e.g., mathematics, literacy).

Usable Innovation

Selecting and developing the evidence-based practice to be a usable innovation for students with disabilities or for all students inclusive of students with disabilities has been an area of challenge for SISEP partners. SISEP has identified lessons learned related to the prevalence of use of EBPs, locus of control for selection of EBPs, and selection of frameworks or processes. In 2007, the original design of SISEP was based on assumptions that (a) evidence-based education practices were in use in every state and most districts, (b) fidelity measures were available to assess the presence and strength of any evidence-based practice in use, and (c) data systems in states and districts included measures of education capacity and processes related to producing high levels of student learning. The purpose of SISEP, then, was to strengthen the capacity of state and district teams to provide supports to schools and teachers based on implementation science. SISEP learned that these assumptions were rarely met. Evidence-based practices were often not identified. Instead, practices were typically selected based on theory or research, and few or no fidelity measures or data systems were available to inform needed changes for improvement. As a result, SISEP spent time developing capacity of the state and their stakeholders (e.g., regional staff, LEA staff, staff from participating educator preparation organizations and various state associations) to operationalize evidence-based practices, work with subject matter experts to develop fidelity measures and relevant data systems for decision making, and establish demonstration of outcomes within participating LEAs and schools.

The locus of control for selection of practices was another area of learning. All 10 SEAs have been able to use data to identify a specific area of improvement; however, few have been willing to identify a specific evidence-based practice for use within the classroom or at the interaction level between students and educators. The majority of states have demonstrated willingness to identify, operationalize, and develop an infrastructure for a research-based process or framework (e.g., continuous improvement process or multi-tiered systems of support framework). However, the identification of a specific practice used within a process or framework is left to districts and schools. The rationale presented for this approach has been “local control” as written in their legislative guidelines. One state was willing to name a menu of evidence-based programs and curriculums but found their local districts unwilling to select from the menu. As a result, the SEA identified and operationalized evidence-based instructional practices within a specific content area and grade span. Districts then identified various programs or curricular resources to support use of the state-operationalized practices using common training, coaching, and data use systems and measures.

States that identified a process or framework, in the absence of a specific practice that a teacher uses, have been slow to realize improved outcomes for students with disabilities (Ryan Jackson & Ward, 2019). Frameworks and processes often are complex and operationalized at the district or school level, not the level of direct interaction between teachers and/or staff members and students. Lessons learned in this area do not suggest that those processes and frameworks are not useful or effective but do indicate that unless specific, usable educator–student-level practices are identified and operationalized, the length of time to outcomes may be greater and an aligned infrastructure for specific practices at the student level may not be easily replicable or scalable.

Implementation Teams

SISEP identified lessons learned around team formation and membership, team use of data, and team functioning. The notion of using a teaming structure is not novel in education. A key lesson learned was to examine current teaming structures and refine or repurpose an existing team to hold necessary functions. In the repurposing of a team, however, SISEP learned not to make assumptions that an existing team has the necessary representation and skills needed to function as an implementation team. Many state, regional, and local education agencies have “leadership” teams that allocate resources, communicate with stakeholders (e.g., board and policy makers), and solve problems. However, many of these teams do not hold functions critical for implementation of EBPs, including (a) visibly promoting the work (e.g., ability to talk to and answer questions about what it takes to effectively implement the EBP), (b) creating opportunities with stakeholders to build a shared understanding of need for selected practices and implementation work, and (c) using implementation data (e.g., fidelity, capacity, reach) in conjunction with outcome data for continuous improvement efforts (Aarons et al., 2014; Moullin et al., 2018). Therefore, whether teams were new or repurposed, ongoing training and coaching were needed to build and enact these leadership functions.

SISEP found it critical for teams to include a representative from executive leadership or an individual who could make decisions regarding personnel and resources without having to consult a higher authority. To support cohesion and alignment, representatives with executive leadership from both special education and general education offices were needed at all levels (state, regional, district, and school). Teams that lacked accountability and leadership structure struggled to make significant progress. To assist with cohesion, consistency, and sustainability and to mitigate potential negative impact of team member turnover on team progress, other key lessons learned were to have redundancy in various needed perspectives and competencies and to ensure membership on the team was reflected as a responsibility in job descriptions.

Another key learning was to ensure different types of data (e.g., training effectiveness data, fidelity data) were being accessed and used by the implementation team within the first 6 months of team formation. Teams that struggled to access and use data past the 6-month mark often faded away. Attendance at meetings would decline and the teams struggled to accomplish specific implementation work. Systematic protocols for data-based decision making are often needed among district and school teams (Algozzine et al., 2016; Newton et al., 2012). The Center also found that, in addition to being able to access data, teams needed support to effectively use data to identify or solve problems, and teams that used decision-making protocols consistently produced actionable plans for improvement. Finally, it was critical for teams to specify operating procedures for roles and responsibilities, decision-making methods, and communication protocols. Without these, teams lacked focus and failed to make decisions, often halting work. In addition to within-team communication protocols, development of transparent and written communication protocols between linked teams was crucial for establishing trust and creating efficiencies for problem-solving implementation challenges.

Implementation Drivers and Improvement Cycles

For the Implementation Drivers and Improvement Cycles, SISEP identified lessons learned about needing effective coaching systems, using multiple forms of data in decision making, using policy-practice feedback loops, and facilitating cross-agency collaboration. Although SEAs and districts demonstrated strength in development of their professional learning systems as evidenced by high scores in this domain on capacity assessments, establishment of coaching systems that incorporate evidence-based coaching practices (e.g., observation, modeling, performance feedback) consistently presented challenges (e.g., baseline capacity assessment scores for coaching ranged from 0% to 18% across 550 local education agencies). Education agencies often struggled to identify funding or resources to hire coaches or release teachers to serve in coaching roles. Even when resources were available, state, regional, and local education agencies rarely had high-quality selection processes and competency development activities for those serving in coach roles.

Identifying data needed by whom, in what form at each level of the system, and how to use data within a systematic data-based decision-making process were frequent areas in need of support. SISEP found that education agencies frequently reviewed student outcome data; however, collection and use of implementation data (e.g., fidelity, training and coaching effectiveness, capacity) were rare. Teams required support in identifying feasible methods to collect these data and ensure their sensitivity to growth and importance to stakeholders. In addition, teams needed significant modeling and scaffolding on use of these multiple sources of data (e.g., training and coaching effectiveness in combination with fidelity and outcome data) to provide a comprehensive picture of implementation effectiveness and inform decision making and improvement. When teams were reviewing fidelity data prior to working with SISEP, they often relied on examination of adherence only and, therefore, required support in understanding multiple facets of fidelity beyond adherence (e.g., quality, dosage, participant responsiveness).

Organizationally, implementation teams at all levels of the system consistently struggled with the Systems Intervention driver as evidenced by lower scores on capacity assessments. Creating practice-policy feedback loops and engaging stakeholders authentically not only takes time to build trusting relationships but also requires skill in using co-design processes that address power differentials. On average, it takes three to four Plan-Do-Study-Act cycles of sharing data and information to understand the processes and use responses from stakeholders. To be effective, many additional cycles of improvement with ongoing coaching support were required for practice-policy feedback loops to become embedded into practice.

A final area of learning was around the creation of structures to facilitate cross-agency collaboration at the SEA and local levels. A prerequisite to a partnership between SEAs and SISEP is active involvement from not only the special education office but also from the general education or school improvement office. Every SEA demonstrated a need for processes of collaboration and alignment and varying levels of willingness to address these needs. Facilitators to this collaboration included identification of executive sponsors from each office/division within the agency and state transformation specialists, allocation of resources leveraging and braiding funding from each office/division, sharing data needed for state legislative and federal accountability purposes, shared responsibility for co-design of implementation activities such as selection and operationalization of EBPs, development of training and coaching supports, and shared support for districts and schools identified by accountability systems.

Implementation Stages

To effectively use a stage-based approach, it was critical that SISEP partners make time for Exploration activities (e.g., not only conducting needs assessment, but also engaging in fit and feasibility assessment for practice options to address the need). A critical aspect of this was supporting the different levels of the system to engage in purposeful selection of their partnering agencies. Support was needed for all states to critically outline selection criteria and develop a selection protocol that could be applied purposefully and systematically to ensure a “mutual” fit among partners at all stages of the implementation process. Time was also needed for Installation activities (e.g., developing training, coaching, and data systems). During Initial Implementation, teams needed continued coaching to support data use for continuous improvement and persistence to obtain outcomes. In the Initial Implementation stage (often Year 3 of the implementation work), education agencies were most at risk for being distracted. They often had to navigate turnover in executive leadership, changes in legislation, and competing demands for resources while also trying to create readiness for expanding to additional regions, districts, and schools and continuing to support implementation efforts underway. Creating skills and competency at the middle level of the SEA was found to be a buffer during these times of turnover and transitions within several states. At Full Implementation, a key lesson was to continue measuring fidelity, maintaining high-quality support, evaluating the impact on achieving intended outcomes, and using data for improvement purposes while processes become embedded as a way of doing business.

Many lessons have been identified in the use of the AIFs by state, regional, and local education agencies. Several partners demonstrated improvement in outcomes for students with disabilities as measured on benchmark assessments, state summative assessments, and state graduation rates through their use of AIFs with fidelity in support of identified EBPs in the areas of mathematics and social-emotional practices (Kloos et al., in press; Ryan Jackson et al., 2018). For example, one Midwest state demonstrated that districts receiving ongoing support within the linked teaming structure improved mathematics outcomes for students with disabilities and students who are Black compared to a matched district (Ryan Jackson et al., 2018). It should be noted that, given the lack of an experimental research design, causal inferences cannot be drawn from the results of the SISEP TA Center's use of the AIFs with education agencies. Improvements seen within samples of students with disabilities within at least 3 of the states are drawn from descriptive analyses only. The SISEP Center continues to systematically evaluate these outcomes and lessons learned, make adjustments to the plan, and apply the lessons learned.

Implications for Policy

Using a specified set of intentionally applied activities, policy can affect practice (Ejler et al., 2016) and together they can affect student outcomes for better or worse (Cohen & Hill, 1998). Kendi (2019) compels us to understand the “policies lurking behind the struggles of people” as “people are in our faces and policies are distant” (p. 28). Given this, the field of implementation science and lessons learned from its application can provide implications for policy makers to consider when creating and revising enabling policies. A discussion of these potential policy implications is organized by the AIFs.

Usable Innovation

As noted previously, SEAs found committing to the selection of a practice to be used at school and classroom levels to be a difficult task. A key challenge is compelling executive leaders at every level of the system to authentically engage stakeholders so that they understand the real challenges faced when policy does not support effective practice. Policy makers should take a user-centered approach to identification of needs, selection of practices or programs, and creation of initiatives. This approach includes not simply requesting input from stakeholders, but listening to and heeding the wisdom of those closest to the ground (Villanueva, 2018). Policy makers must authentically engage those who would be most affected yet who have most often gone unheard in the design of policy: students with disabilities themselves, families of students with disabilities, and practitioners. These key stakeholders should be part of co-creation or co-design of usable innovations, which includes clearly defining foci for change, identifying existing assets that can be leveraged in change, providing guidance on the contextual fit of potential practices, and being active decision makers in selection of practices (Metz, 2015).

Practitioners will continue to struggle with effective implementation if practices are not well defined at the classroom level. Policy makers can address this by ensuring selected innovations are supported by evidence and have clearly operationalized core components. If policy makers are not willing to identify and/or mandate innovations due to local control, they can still influence use of effective practices by carefully outlining what gets funded and ensuring that those receiving funds are selecting and operationalizing evidence-based and usable practices.

Another key factor that hinders successful implementation is introduction of new innovations that compete for resources, are misaligned with existing initiatives, or are redundant. Policy makers often inadvertently perpetuate these challenges by developing new initiatives without careful review of their fit with existing initiatives. At the practice level, schools and teachers become overwhelmed with trying to navigate implementation of multiple initiatives, often resulting in redundancy and lack of resources to implement any of them effectively. Frequently this includes continued use of programs or practices that are ineffective. When considering new initiatives, research suggests that scanning for existing initiatives and/or initiatives with common core components with potential to compete can assist in determining the need for the new initiative. If a new initiative is needed, policy makers can work with stakeholders to ensure alignment of the new initiative with existing initiatives and leverage resources for efficiency and effectiveness in supporting practitioners. Just as important as selecting and aligning initiatives is policy makers’ willingness to identify and de-implement or end use of practices and programs not contributing to improved results for students with disabilities. Careful selection, alignment, and deselection of practices that includes the meaningful participation of all key stakeholders may decrease burden and initiative fatigue at the practice level and increase potential for effective implementation (Rycroft-Malone et al., 2013).

Implementation Teams

Engaging policy makers as active members of the implementation teams that lead and support implementation work, so that they learn what it takes, may not only improve current policy but also inform the development of future policies. As a starting point toward active engagement as team members, leadership and implementation team members should design opportunities to engage policy makers with other stakeholders in key implementation activities (e.g., review and use of data to identify and/or develop strategies to address challenges). Several LEAs within one state successfully engaged school board members as active participants in the assessment of their implementation capacity for their identified practice to address outcomes for students with disabilities. Another implication for policy related to implementation teams is to ensure that policy outlines the use of implementation teaming within a linked teaming structure and that the implementation teaming structure authentically engages stakeholders.

Implementation Drivers and Improvement Cycles

A number of policy implications arise from development and use of an aligned implementation infrastructure. When considering competency supports, policy makers have the opportunity to lay out the necessary implementation roles and their needed specific criteria in legislation and its related funding. Different skill sets are needed to build infrastructures and provide implementation supports than the skill sets typically needed for monitoring the appropriate use of funds and progress on an identified set of indicators. Thus, positions such as the state transformation specialists, with the necessary systemic change knowledge and skills, are needed with dedicated time and support to lead implementation efforts.

Criteria for high quality training and coaching supports can be outlined and specified. The design of a policy initiative to resource training and coaching systems influences teachers’ access to expertise, as well as the depth and substance of professional practice (Coburn & Russell, 2008). Once a usable innovation is defined and operationalized, trainers and coaches in a state can replicate the process and design common training and coaching systems that are ready to be used by LEAs and schools. Evidence suggests when SEAs commit to alignment of supports for use by schools, they can improve student outcomes and close disparity gaps within two years of an LEA selecting its first schools (Ryan Jackson & Ward, 2019).

Leadership and policy makers have an opportunity to plan for building capacity to reduce over-reliance on often more expensive and less intensive/available training and coaching supports provided by purveyors. Instead of working with purveyors to provide the supports directly, they can work with them to build capacity of others (e.g., state coaches, regional agencies, LEAs) to provide supports over time. Often regional agencies serve as brokers for professional learning and not in a direct capacity-building role, creating a missed opportunity. Depending on funding structures within a state, policy makers can examine roles of regional agencies and potentially shift roles from broker/monitor to support provider for innovation-specific and generalized capacity to support use of EBPs.

Finally, the education literature is replete with evidence calling for a system that uses data from teachers’ practice (qualitative and quantitative) to inform adequate resourcing and sustainability of effective instructional practices (Chaparro et al., 2020; Darling-Hammond et al., 2017). Policy needs to value and require the use of a variety of implementation data in addition to impact or outcome data (e.g., implementation fidelity data, social validity data, reach or scale data, and capacity data). These different types of data help construct the implementation story for funders, community members, and other stakeholders. They are often the first data to be available to help motivate staff engaged in the work and support continuous improvement efforts. Even more importantly, they help us understand the level of outcomes being achieved.

Implementation Stages

Research indicates that taking the time to explore needs and carefully examine contextual fit and readiness for implementation, within the Exploration Stage, is key to saving time and money and improving chances for success in implementation (Romney, 2011; Saldana et al., 2012). Yet, current practice in creation of initiatives and requests for proposals (RFPs) does not often consider this need. Policy makers and funders can support the exploration process by structuring RFPs to fund time for organizations to engage in needs sensing, examination of contextual fit of practices, and development of readiness for implementation. Once practices have been selected, time is needed in the Installation Stage to make necessary infrastructure changes. If practitioners are expected to begin implementation right after selection of the practices, frustration is often experienced because the necessary supports were not planned for and provided to support practitioner confidence and competence (Knoster et al., 2000). Policy makers allowing necessary time and funds for planning before expected use helps ensure practitioners have the needed supports to be successful.

There are also key considerations for the scope of implementation in the Initial Implementation Stage. Though the end goal should be broad implementation at scale, initiatives that are expected to begin with too broad a scope (e.g., all special education teachers at all grade levels across all K–12 settings) are not likely to be implemented with fidelity, implemented at scale, or sustained. Policy makers and funders have an opportunity to address this by allowing for initial implementation of initiatives to start small (e.g., small number of sites, teachers, grade levels), learn from initial practitioners about what it takes to implement effectively, continuously improve the implementation infrastructure and supports, and build capacity. In other words, to increase the likelihood of long-term efficiency and effectiveness, it is key to allow for “starting small” and “getting better” before scaling up.

Even when a majority are implementing with fidelity and outcomes are being achieved in Full Implementation, research indicates that practitioners are likely to drift back to old practices when support is faded. However, initiatives are often funded and planned in ways that do not take sustained use of practice into consideration. Policy makers can prevent situations in which projects lead to improved outcomes for a few but fade out over time by setting the goal of sustainability and scaling at the outset and utilizing a stage-based approach. It is important to ensure that projects have funding available for booster support and have plans to support LEAs early in implementation to identify and leverage funds and resources, with an eye toward LEAs sustaining effective implementation supports themselves as original funds fade.

Finally, when following a stage-based approach, implementing sites may take 2 to 4 years before realizing outcomes. It is important for policy makers to manage expectations and recognize that systems changes are complex and take time. This suggests using indicators of progress (e.g., changes in organizational capacity to support implementation, practitioner fidelity to use of selected practices, and outcomes for students on proximal measures) toward outcomes and allowing appropriate time for organizations to achieve intended outcomes.

Summary

Policy matters. All staff and stakeholders within the state education system have a responsibility to bear. Policy makers and executive leaders, who control the resources to redesign an education system that serves diverse children, students, and communities, must come together with their communities to co-create an implementation infrastructure that can be effectively used, tested, scaled, and sustained from one teacher to the next, one school to the next, one LEA to the next, one region to the next, and one state to the next, until we have transformed the system. For this to occur, education agencies need to have capacity in the use of implementation and improvement science practices. Purposeful attention to and development of implementation capacity can be scaled through policy makers’ and funders’ attending to and calling for use of the science of implementation within legislation and funding opportunities. Together, we can imagine a different future and serve the unique needs of students with disabilities, if we ignore the odds and embrace the possibilities.

Footnotes

Acknowledgements

The authors would like to acknowledge the significant contributions of Dean Fixsen and Karen Blase, co-founders of the State Implementation and Scaling-up of Evidence-Based Practices Technical Assistance Center, for their innovation and leadership in the field of implementation science and support of implementation capacity development in K–12 education with an intentional focus of improving outcomes for students with disabilities.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

Preparation of this article was supported, in part, by a grant from the U.S. Department of Education, #H326K080001, Jennifer Coffey, Project Officer. However, the contents do not necessarily represent the policy of the U.S. Department of Education, and endorsement by the federal government should not be assumed. The funder had no role in writing the manuscript or the decision to submit it for publication.