Abstract

Buyers in online markets pay higher prices to sellers who promise a high-quality product in auctions of used goods, even though they cannot assess quality until after the sale. The principal argument offered in prior work is that reputation systems render sellers’ ‘cheap talk’ credible by allowing buyers to publicly rate sellers’ past honesty and sellers to build a reputation for being honest. We test this argument using both observational data from online auctions on eBay and an internet experiment. Strikingly, in both studies we find that unverifiable promises are trusted by buyers regardless of seller reputation or the presence of a reputation system, and sellers mostly refuse to take advantage. We conclude that the prevailing conception of markets in economic sociology as made possible by opportunism-curtailing institutions is “undersocialized”: Reputation systems may be used to identify more reliable providers of a product, but the claim that they are needed to prevent otherwise rampant deceit rests on a cynical assumption about human behavior that is empirically untenable.

Introduction

Every day, millions of second-hand items are auctioned off and sent, at an agreeable price, to a satisfied new owner. While this may at first glance seem a mundane social fact, we contend that what enables such smooth cooperation between perfect strangers is poorly understood, and that the question goes to the heart of the social sciences. Online auctions of used goods are characterized by great variability in the quality of products. The same seller sometimes offers an item in excellent condition and at other times one that is not in great shape but is nonetheless of interest to some buyer at a reduced price. To differentiate between these situations, sellers communicate the state of the item before auctioning it off. Research on auctions for used goods shows that most sellers honestly report on defects, and when they make promises of high quality, their items are indeed sold for higher prices (Anderson et al., 2007; Lewis 2011; Neto et al. 2016; Rawlins and Johnson 2007). Why do buyers believe anonymous sellers’ promises if they have limited recourse in case of deceit, and why do sellers not take advantage?

An ostensibly naive answer is that most people have been raised as honest human beings and believe others have too. Such an answer would rely on a theory of action some would consider “oversocialized” (Granovetter 1985; Wrong 1961). Yet, as we will show, this is precisely the conclusion to which our research leads us. The consensus view in the literature on online auctions, by contrast, is that most people are fully capable of deceit when it serves them, but that buyers can trust sellers’ claims because sellers care about their reputation (Lewis 2011; Ba and Pavlou 2002; Houser and Wooders 2006; Snijders and Zijdeman 2004; Neto et al. 2016; Rawlins and Johnson 2007; Bolton et al. 2004; Depken and Gregorius 2010; Melnik and Alm 2005). This view constitutes a special case of the more general perspective in economic sociology that institutions such as reputation systems are needed to resolve issues of distrust and malfeasance in markets (Nee and Swedberg 2020; Zucker 1986; Kollock 1999; North 1990; Erikson et al., 2006; Greif 1989; Cook et al. 2005; Powell 1990; Simpson and Willer 2015; Thiemann and Lepoutre 2017; Sabin and Reed-Tsochas 2020).

In the specific case of second-hand auctions, many platforms allow buyers to publicly report and look up cases of honest behavior or broken promises with minimal effort. Reputation systems for online auctions communicate not only the quality of past goods or services delivered by a seller, but also their honesty about that quality. The quality of a product may be low, but a buyer will not complain about it unless it was unexpected. On eBay, reviews may contain comments like: ‘Just what I ordered!’ or ‘Item description was very accurate’. Buyers are more satisfied with their purchase if the product matches the product description than when the seller overstated the quality of the product. More satisfied buyers will provide better reviews, and good reviews are important for sellers, because they allow their products to be sold at high prices in the future. By providing clues from the past about the present intentions of the sellers, reputation systems would help buyers distinguish between sellers who intend to provide a high-quality good and sellers who do not intend to do that (Gambetta, 2009; Przepiorka and Berger, 2017; Przepiorka and Diekmann, 2013; Raub, 2004). Reputation systems would thus attach a cost to a false promise (Lewis 2011; Ba and Pavlou 2002; Houser and Wooders 2006; Snijders and Zijdeman 2004; Neto et al. 2016; Bolton et al. 2004; Depken and Gregorius 2010; Melnik and Alm 2005).

Reputation systems are therefore regarded as instrumental for trust in anonymous online markets (Teubner et al., 2017; Teubner et al. 2017; Cook et al. 2005; Przepiorka et al. 2017; Raub and Weesie 1990; Resnick et al., 2000; Tadelis 2016; Jiao et al., 2021). After all, in the counterfactual scenario of no reputation system the situation would be much like Akerlof’s (1970) classic “market for lemons” (Diekmann et al., 2014). Sellers’ promises should then not affect buyers’ decisions, as dishonest information can be provided without consequence (Akerlof 1970; Duffy and Feltovich 2006; Baldassarri 2015) when a buyer and seller will not meet again (Molm et al. 2000). The information asymmetry would therefore pose a risk of adverse selection that would drive high quality products out of the market (Akerlof 1970; Kollock 1994; Macy and Skvoretz 1998; Buchan et al. 2002). Buyers would not be willing to pay a high price for a product if they did not believe the seller was honest about its quality. 1 Sellers, in turn, could not profit from the sale of a high quality good. Sellers should then only provide low-quality products, and buyers should only pay low prices. Such market failure would be prevented by reputation systems.

While this institutional argument has repeatedly been made to account for buyers’ trust in auction sellers’ quality assurances, we are not aware of any empirical study in which it has been evaluated. We tested the argument both in an observational study and in an online experiment. The observational data we draw on were collected by Lewis (2011, 2019) and contain information on a large number of auctions completed on eBay. The online experiment allows a controlled test of the argument by systematically varying the presence of institutions that allow sellers to signal quality and buyers to publicly post evaluations. As it turns out, both studies refute the theoretical consensus: We find that unverifiable promises improve seller outcomes regardless of seller reputation or the presence of a reputation system. Reputation systems do not appear to add any weight to quality assurances. We conjecture that buyers correctly believe that most sellers are too decent to lie even when they can get away with it, so that a good reputation, while reassuring, is not instrumental to building trust.

Theory

Here we flesh out in greater analytical depth the oft-made argument, which we ultimately falsify, that in auctions for second-hand products reputations render seller claims about product quality credible (Lewis 2011; Ba and Pavlou 2002; Houser and Wooders 2006; Snijders and Zijdeman 2004; Neto et al. 2016; Bolton et al. 2004; Depken and Gregorius 2010; Melnik and Alm 2005).

Sellers who sell second-hand products in auctions sometimes have high-quality products and sometimes only low-quality products. Buying second-hand products online involves several risks for buyers, due to defection by sellers or to problems beyond sellers’ control (Axelrod and Dion 1988). First, the quality of most products deteriorates over time, so the quality of used products will vary both between and within sellers. Second, after the buyer has paid for the product, the seller may not send the product, may send it too late, or may send an inferior product. A classic example of this situation is Akerlof’s (1970) market for second-hand cars, in which buyers do not know whether they are buying a good or a bad car. In many of these markets, sellers and buyers are strangers to each other and interact only once, so they have no incentive to invest in a good relationship (Lo Iacono and Sonmez, 2020; Yamagishi et al., 1998). This lack of dyadic embeddedness (Granovetter 1985; Uzzi 1996) is also typical of modern-day exchanges mediated by online platforms. The internet widens the radius of the trust problem: by lifting geographical barriers, it allows interactions beyond locally embedded social circles (Kuwabara 2015; Delhey et al. 2011). Online markets are therefore commonly regarded as posing trust problems between buyers and sellers and have been widely studied as such (see Jiao et al., 2021 for a recent meta-analysis of more than one hundred studies of trust in online markets).

The prices buyers are willing to pay depend on product quality: they are willing to pay a higher price for a high-quality good than for a low-quality good, and they want to avoid paying a high price for a product of inferior quality. Both buyers and sellers benefit more when a high-quality product is sold for a high price than when a low-quality product is sold for a low price. However, without the prospect of future interactions, rational and selfish sellers have interests that are opposed to those of buyers: they benefit most when they provide a low-quality product for a high price. In such markets, buyers are not willing to pay a high price for a product if they are not confident about its quality, and sellers will not provide a high-quality good for a low price. This situation leads to adverse selection: it drives high-quality products out of the market (Akerlof, 1970). Better market outcomes would therefore require that buyers be able to tell the difference between high- and low-quality products.
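The unraveling logic can be made concrete with a two-type example. The sketch below is our own illustration, not part of either study: it assumes risk-neutral buyers who, lacking credible information, pay at most the expected value of a randomly drawn item, and all names and values (`share_high`, the buyer valuations, the high-quality seller's reservation value) are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Market:
    share_high: float         # fraction of items that are of high quality (hypothetical)
    buyer_value_high: float   # what a buyer would pay for a known high-quality item
    buyer_value_low: float    # what a buyer would pay for a known low-quality item
    seller_value_high: float  # lowest price a high-quality seller accepts


def pooled_price(m: Market) -> float:
    """Most an uninformed, risk-neutral buyer pays: the expected value of a random item."""
    return m.share_high * m.buyer_value_high + (1 - m.share_high) * m.buyer_value_low


def high_quality_sellers_stay(m: Market) -> bool:
    """High-quality sellers remain only if the pooled price covers their reservation value."""
    return pooled_price(m) >= m.seller_value_high


def equilibrium_price(m: Market) -> float:
    if high_quality_sellers_stay(m):
        return pooled_price(m)
    # High-quality sellers exit; buyers then know only low-quality items remain
    # (assumes low-quality sellers accept the low-quality price).
    return m.buyer_value_low


lemons = Market(share_high=0.5, buyer_value_high=100.0, buyer_value_low=40.0, seller_value_high=80.0)
print(pooled_price(lemons), equilibrium_price(lemons))
```

With half the items of high quality, buyer values of 100 and 40, and a high-quality reservation value of 80, the pooled price of 70 falls short of 80, so high-quality sellers withdraw and the price drops to 40.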

In online auctions, the final price is determined by the highest bidder. eBay is currently the largest platform for online auctions. Both individuals and businesses can sell their products via this platform. Sellers can list their products, choose a duration for their auction to run, set a starting price and provide a description of the products. Sellers can also add a reserve price, which is the minimum price that must be bid for the product to be sold. These reserve prices are not communicated to bidders, so they do not function as a signal of quality. 2 Bidders make bids that are publicly available. If the highest bid at the end of the auction is higher than the seller’s reserve price, the buyer with the highest bid pays the price they offered and receives the product. 3
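The settlement rule just described can be sketched as follows. This is a minimal illustration of the simplified rule in the text (the highest bidder pays their own bid if it reaches the secret reserve); it abstracts from eBay's proxy bidding, under which the winner's price is set relative to the second-highest bid, and the function name and tie-breaking rule are our own assumptions.

```python
from typing import Optional, Sequence, Tuple

Bid = Tuple[str, float]  # (bidder id, amount); hypothetical representation


def settle_auction(bids: Sequence[Bid], reserve: float) -> Optional[Bid]:
    """Return (winner, price), or None if the item goes unsold.

    Treats the reserve as the minimum acceptable bid. Bidders never see the
    reserve, so it cannot act as a quality signal.
    """
    if not bids:
        return None
    # max() returns the first maximal element, so the earliest of tied bids wins
    winner, top_bid = max(bids, key=lambda b: b[1])
    if top_bid < reserve:
        return None
    return winner, top_bid


print(settle_auction([("a", 100.0), ("b", 150.0)], reserve=120.0))
```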

Credible information may help a buyer estimate the probability that a seller will provide a high-quality good. Different types of information can be communicated to buyers; one can distinguish between cheap and costly signals. Costly signals are type-separating: sellers who intend to provide a high-quality good will produce the signal, while sellers who intend to send a low-quality good will not (Przepiorka and Berger 2017; Gambetta 2009). A signal is type-separating when the net benefits of producing it are positive for sellers who intend to provide a high-quality good but negative for sellers who do not have that intention. In that case only sellers who really intend to send a high-quality product will produce the signal. When a signal is not type-separating, it can easily be faked, and it is considered a cheap signal. The net benefits of producing a signal depend on the costs of producing it and on the expected (future) benefits of sending it.

One way for sellers to convince buyers of the high quality of their products is to communicate this to buyers, for example through product descriptions. However, these descriptions are non-binding and can be produced at trivial cost by sellers who intend to provide a high-quality good and by sellers who intend to send a low-quality good alike. Hence, a description need not reflect a seller’s true intentions and should be considered a cheap signal.
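The separating condition amounts to a one-line check. In this hypothetical sketch, each seller type has an expected benefit and a cost of sending a signal; a product description costs both types next to nothing, whereas a warranty (a stock example of a costly signal, used here purely for illustration) is assumed cheap for high-quality sellers and expensive for low-quality ones. All numbers are invented.

```python
def net_benefit(expected_benefit: float, cost: float) -> float:
    return expected_benefit - cost


def is_type_separating(benefit_high: float, cost_high: float,
                       benefit_low: float, cost_low: float) -> bool:
    """A signal separates types when only sellers intending to deliver
    high quality gain from sending it."""
    return net_benefit(benefit_high, cost_high) > 0 and net_benefit(benefit_low, cost_low) < 0


PREMIUM = 20.0  # hypothetical price premium a believed signal earns
description = is_type_separating(PREMIUM, 0.0, PREMIUM, 0.0)  # cheap talk
warranty = is_type_separating(PREMIUM, 5.0, PREMIUM, 30.0)    # costly signal
print(description, warranty)
```

With a premium of 20, the costless description fails the check because both types gain from it, whereas the hypothetical warranty costing 5 to a high-quality seller and 30 to a low-quality one passes.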

When a seller promises to provide a low-quality product, the best response for the bidder is to offer a low price, to avoid paying a high price for a low-quality good. When a seller promises to deliver a high-quality product, the best response of the bidders depends on whether they believe that the seller will really provide a high-quality product. If they believe the message, the best response is to offer a high price; otherwise, it is to offer a low price. In the short term, sellers benefit more when buyers offer higher prices. There is therefore no reason for any rational and selfish seller to describe the product as a low-quality product, since in that case they can be sure that the buyer will not pay a high price. In the absence of a reputation system, under the assumption of goal-directed and self-interested behavior, sellers will thus always promise to provide a high-quality good, so product descriptions are cheap signals.
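Bayes' rule shows why such a pooling message carries no information. In this sketch (our own illustration, with hypothetical names), a buyer updates the prior probability of high quality after seeing a "high quality" claim and then bids the expected value of the item, again assuming risk neutrality.

```python
def posterior_high(prior_high: float, p_claim_if_high: float, p_claim_if_low: float) -> float:
    """Bayes' rule: P(high quality | the seller claims high quality)."""
    numerator = prior_high * p_claim_if_high
    denominator = numerator + (1 - prior_high) * p_claim_if_low
    return numerator / denominator


def best_bid(belief_high: float, value_high: float, value_low: float) -> float:
    """A risk-neutral bidder bids up to the expected value of the item."""
    return belief_high * value_high + (1 - belief_high) * value_low


# Rational, selfish benchmark: both types always claim high quality (pooling)
pooled = posterior_high(0.25, 1.0, 1.0)
# Alternative: low-quality sellers describe their items honestly
honest = posterior_high(0.25, 1.0, 0.0)
print(pooled, best_bid(pooled, 100.0, 40.0))
print(honest, best_bid(honest, 100.0, 40.0))
```

If both types always claim high quality, the posterior equals the prior and the claim leaves the bid unchanged; only if low-quality sellers describe their items honestly does the claim raise the bid.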

However, empirical research shows that these product descriptions do affect buyers’ decisions (Lewis 2011; Anderson et al., 2007; Rawlins and Johnson 2007; Neto et al. 2016; Snijders and Zijdeman 2004; Heijst et al., 2008; Sena et al. 2005; ter Huurne et al., 2021a). For example, Lewis (2011) found that sellers on eBay who auction second-hand cars receive higher prices when they include in the product description that the car does not have scratches, dents or rust. Sellers who mention any of these defects receive lower prices than sellers who do not mention them.

While such observations seem in line with the general observation in experimental research that communication tends to enhance cooperation in social dilemmas (Balliet, 2010), it is not obvious that these effects translate to the online context. For instance, Bicchieri and Lev-On (2007) argue that computer-mediated communication in general tends to be less effective than face-to-face communication in fostering cooperation, and that its effectiveness crucially depends on features of the online social environment that allow the establishment of a social norm of promise-keeping. The presence of a reputation system is one such feature that may explain buyers’ trust in product descriptions.

Reputation systems collect, aggregate and distribute feedback about sellers to buyers (Resnick et al., 2000). In markets in which sellers repeatedly sell a single product of fixed quality, ratings left behind by previous customers help buyers differentiate between sellers of good and bad products (Buskens, 2003; Cook et al., 2005; Kuwabara, 2015; Raub and Weesie, 1990; Sorenson, 2014; Weigelt and Camerer, 1988; Yamagishi and Yamagishi, 1994). Studies indeed show that positive ratings positively affect seller choice and price in homogenous markets (Boero et al., 2009; Charness et al., 2011; Duffy et al. 2013; Fehrler and Przepiorka 2013; Bolton et al. 2004; Diekmann et al., 2014; Simpson and Willer 2015; Resnick and Zeckhauser 2002; Frey and Van De Rijt 2016).

In contrast to such homogenous markets, sellers of second-hand items sometimes have high-quality products and sometimes low-quality products for sale. Each product is sold in a dedicated online auction. Prior to the auction the seller describes the state of the product. At this stage a selfish and dishonorable seller could deliberately portray their product as better than it really is. Reputation systems may help buyers determine how accurate a seller’s quality claims about a specific product are (Lewis 2011; Ba and Pavlou 2002; Houser and Wooders 2006; Snijders and Zijdeman 2004; Neto et al. 2016; Melnik and Alm 2005; Depken and Gregorius 2010). Reputation systems may thus help overcome Akerlof’s adverse selection problem when there is heterogeneity in product quality between different offerings by the same seller.

Through numeric ratings and reviews, online reputation systems often contain information about the quality of past products sold by the seller and about the accuracy of the seller’s past quality claims. Reputation systems thus not only allow buyers to select sellers on the basis of the quality of the products they have delivered in the past; they also allow buyers to assess the extent to which sellers have been honest in the past. Sellers who have lied about the quality of their products in the past are likely to have worse ratings and reviews than sellers who have not. When assessing the accuracy of a product description, buyers can thus use the information in the reputation system. Sellers who have lied in the past can reasonably be expected to do so again in the future. Sellers previously found honest who promise to deliver a high-quality product are therefore expected to receive higher prices than sellers previously found dishonest who make the same promise. Buyers should then be more likely to believe information if it is provided by more honest sellers.
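One simple way to formalize this inference, offered only as an illustration and not as the model of any study cited here, is a Beta-Bernoulli update: treat each past rating as an independent signal of whether the seller's description was accurate, start from a uniform prior, and use the posterior mean as the buyer's estimate that the next claim is honest. The assumption that every rating reflects honesty is strong, since ratings may also reflect product quality; the function names are hypothetical.

```python
def estimated_honesty(positive: int, negative: int,
                      prior_a: float = 1.0, prior_b: float = 1.0) -> float:
    """Posterior mean of a Beta(prior_a, prior_b) prior after `positive`
    accurate and `negative` inaccurate past descriptions."""
    return (positive + prior_a) / (positive + negative + prior_a + prior_b)


def value_of_quality_claim(honesty: float, value_high: float, value_low: float) -> float:
    """Expected value of an item claimed to be high quality: the claim is true
    with probability `honesty`; otherwise the item is assumed low quality."""
    return honesty * value_high + (1 - honesty) * value_low


veteran = estimated_honesty(98, 0)
newcomer = estimated_honesty(8, 0)
print(veteran, newcomer)
print(value_of_quality_claim(veteran, 100.0, 40.0) > value_of_quality_claim(newcomer, 100.0, 40.0))
```

A seller with 98 positive and no negative ratings thus gets an estimated honesty of 0.99, against 0.9 for a seller with 8 positive ratings, so under these assumptions the same quality claim is worth more coming from the first seller.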

From this theoretical argument, we now derive a number of hypotheses. First:

H1: The better a seller’s reputation for being honest, the more likely buyers are to trust that seller’s claims about quality.

If one assumes that reputation systems also contain information about the accuracy of past product descriptions, reputation systems provide not only an incentive to deliver high-quality products, but also an incentive to describe products honestly when they are of low quality. In the case of a product of inferior quality, selfish sellers may be tempted to lie about its quality in order to attract a higher price. However, in the presence of a reputation system the long-term benefits of communicating honestly may outweigh the short-term costs, because in the long run sellers benefit from the reputational gains of past honest communication. If the expected future reputational gains are larger than the short-term costs, sellers are expected to provide a high-quality good if they can, and to communicate honestly when they sell a product of inferior quality. The reputation system thus provides an incentive for honest communication, which makes that communication more credible. Under assumptions of goal-directed and self-interested behavior, sellers who promise to deliver a high-quality product in the absence of a reputation system should not be trusted; the reputation system creates the conditions for these messages to be credible. Combining communication with reputation may thus make cheap talk costly. According to this argument, then, sellers who want to keep up a good reputation need to be honest with buyers about the quality of their products. In the presence of a reputation system, sellers should then be more likely to do as promised.
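The condition under which a reputation system makes honesty pay can be sketched as a present-value comparison. The sketch assumes, hypothetically, that an exposed lie yields a one-off gain but costs the seller a fixed price premium on every future sale, and that each further sale occurs with a constant continuation probability; a continuation probability of zero mimics a one-shot market without a reputation system. Parameter names are illustrative.

```python
def future_reputation_loss(premium_per_sale: float, continuation_prob: float) -> float:
    """Present value of premiums lost forever after one exposed lie:
    sum over t >= 1 of premium * d**t = premium * d / (1 - d)."""
    if not 0 <= continuation_prob < 1:
        raise ValueError("continuation_prob must lie in [0, 1)")
    return premium_per_sale * continuation_prob / (1 - continuation_prob)


def honesty_pays(gain_from_lie: float, premium_per_sale: float, continuation_prob: float) -> bool:
    """A selfish seller stays honest if the short-term gain from lying does not
    exceed the expected future reputational loss."""
    return gain_from_lie <= future_reputation_loss(premium_per_sale, continuation_prob)


print(honesty_pays(30.0, 10.0, 0.0))  # no future sales at stake: lying pays
print(honesty_pays(30.0, 10.0, 0.9))  # long expected trading horizon: honesty pays
```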

H2: The correlation between promises made by sellers and their actual behavior is stronger in the presence of a reputation system.

Because the information that is provided by sellers about the quality of their products is expected to be more truthful when there is a reputation system, buyers should rely more on that information when there is a reputation system.

H3: The effect of sellers’ promises to honor trust on buyers’ trust is stronger in the presence of a reputation system.

When sellers and buyers interact only once and there is no reputation system, noncooperative behavior has no direct disadvantage for individual sellers, but it leads to negative outcomes for the market as a whole. In the presence of a reputation system, and with the possibility to communicate, sellers can credibly communicate the quality of their products, and buyers can adjust the prices they offer to the quality of the products. Buyers are then expected to pay higher prices for goods of better quality and lower prices for products of inferior quality. Without the reputation system, communication between buyers and sellers is cheap talk, and buyers are not expected to pay higher prices when sellers promise to deliver better-quality products. Conversely, when product quality within sellers is sufficiently heterogeneous, reputation alone is not enough to solve the trust problem without a communication system that gives buyers promises on which to provide feedback. When there is only a reputation system, but no communication between buyers and sellers, the reputation system contains information only about the quality of past products, not about the accuracy of past product descriptions. And when the quality of the products offered by the same seller varies greatly over time, the quality of products delivered in the past is no guarantee for the future. Consequently, in markets with heterogeneity in product quality within and between sellers, neither communication nor a reputation system is expected to be sufficient on its own for better market outcomes. There should then be no difference in trust between cases where only reputation building, only communication, or neither is possible, and trust should increase only when both reputation building and communication are enabled. In short, both communication and a reputation system would be necessary to increase trust.

H4: Buyers place more trust in sellers when there is a reputation system and a communication system than when there is a) only communication, b) only reputation or c) neither communication nor reputation.

Empirical strategy: an observational study and an experiment

To the best of our knowledge, the consensus argument that communication between buyers and sellers affects buyers’ decisions because there is a reputation system has not been tested in any of the studies that advance it (Lewis 2011; Ba and Pavlou 2002; Houser and Wooders 2006; Snijders and Zijdeman 2004; Neto et al. 2016; Rawlins and Johnson 2007; Bolton et al. 2004; Depken and Gregorius 2010; Melnik and Alm 2005). We are aware of one study on the interaction between seller reputation and claims made by sellers: studying auctions of baseball cards on eBay, Jin and Kato (2006) do not find evidence that buyers rely more on claims made by more reputable sellers. However, their dataset contained auctions of both graded and ungraded cards. While the quality of ungraded cards is very difficult to assess online, this is not true for graded cards. For the latter category, claims and ratings of sellers have a different effect (Jin and Kato 2006). Moreover, the rating of the seller and the seller’s claims about the quality of the cards were found to be correlated with the price of the cards, as well as with whether the card was graded. Based on the pooled results for graded and ungraded cards, we cannot conclude that the effect of claims is equally strong for reputable and unreputable sellers of ungraded cards.

We test H1 in Study 1 using data from eBay auctions of second-hand cars. When using data from real platforms, one is bound to the institutional design of that platform. eBay allows its sellers to communicate with buyers and to build a reputation. To test H2-H4, however, we need to systematically vary the presence of a reputation system and the opportunity for sellers to communicate with buyers. We are aware of a few studies in which the combined effect of reputation systems and communication systems is tested (Brosig-Koch and Heinrich 2018; Cason and Mui 2014; Denant-Boemont et al. 2011; Duffy and Feltovich 2006; Wilson and Sell 1997). However, none of these studies implements the Akerlof problem of quality heterogeneity characteristic of online auctions, so they are of little use for understanding the problem at hand. In Study 2 we use an online experiment with quality heterogeneity within and between sellers, in which we test whether the presence of a reputation system changes the effect of communication on trust (H2-H4), while also testing H1 once more. 4

Study 1: observational data from an online auction platform

For Study 1, we use publicly available 5 data collected by Lewis (2011, 2019), containing information on 146,734 auctions of cars offered on eBay between March and October 2006. Paying for a car involves a major trust problem, because cars are expensive items and the buyer cannot know whether the car is of high quality. If buyers offer a (higher) price for a car, this can thus be regarded as an expression of trust in the seller. Trust was therefore operationalized as the number of bidders, the highest bid, and the probability that the car was sold. We analyzed whether the seller’s reputation and claims about the quality of the car affected these dependent variables in accordance with H1. Following Lewis (2011), we excluded auctions of new cars, cars built before 1950, incomplete or incorrect observations (e.g. with a negative mileage) and salvaged cars. We also excluded cars that could be bought immediately for a specified price and cars with a warranty, because the trust problem is less severe in those cases. A single outlier with a bidding price of $860,100 was excluded (this is more than $600,000 above the next highest price, and more than 80 standard deviations above the average). The remaining dataset contains 65,243 observations.
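The exclusion steps can be expressed as a single filter. The record fields below are hypothetical stand-ins, not the variable names in Lewis's data, and the outlier cutoff is a parameter of this sketch only; the study itself removed one specific auction rather than applying a general cutoff.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Listing:
    # Hypothetical field names; the original dataset uses its own variables
    is_new: bool
    model_year: int
    mileage: Optional[float]  # None marks an incomplete record
    is_salvage: bool
    buy_it_now: bool          # could be bought immediately for a fixed price
    has_warranty: bool
    high_bid: float           # 0.0 if the listing received no bids


def keep(listing: Listing, outlier_cutoff: float) -> bool:
    """True if a listing survives the exclusions described in the text."""
    return (
        not listing.is_new
        and listing.model_year >= 1950          # drop cars built before 1950
        and listing.mileage is not None         # drop incomplete observations
        and listing.mileage >= 0                # drop incorrect (negative) mileage
        and not listing.is_salvage
        and not listing.buy_it_now              # trust problem less severe
        and not listing.has_warranty            # trust problem less severe
        and listing.high_bid <= outlier_cutoff  # drop the extreme outlier
    )


def apply_exclusions(listings: List[Listing], outlier_cutoff: float) -> List[Listing]:
    return [x for x in listings if keep(x, outlier_cutoff)]
```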

Positive seller reputation was operationalized as the number of positive ratings the seller obtained, and negative reputation as the number of negative ratings. Because these distributions were highly skewed, with many sellers having no or few ratings and only very few sellers having many ratings, the measures we include in the analysis are the natural logarithms of these numbers, after first adding 1 to avoid ln(0). 6

In our measurement of claims about product quality made by sellers we follow Lewis (2019), who identified words in product descriptions that were relevant for the value of the car (e.g. rust, dent and scratch) and were frequently used. He then identified for each car whether the seller mentioned the word and in which context. Sellers either did not mention a problem, informed the buyer that the car had the problem (‘car has rust’), or explicitly mentioned that the car did not have the problem (‘car is rust free’). We used this information to construct two variables counting the number of problems acknowledged and denied by the seller.
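A rough sketch of how such variables could be constructed follows. The keyword patterns are a hypothetical and much cruder stand-in for Lewis's (2019) coding of word contexts: a problem counts as denied if the description contains a phrase such as "no rust" or "rust free", and as acknowledged if the word appears otherwise (so a phrase like "not rusty" would be misclassified). The logged rating measures use ln(1 + x), as described above; the word list and function names are illustrative.

```python
import math
import re
from typing import Tuple

# Example words named in the text; the original word list is longer
PROBLEMS = ("rust", "dent", "scratch")


def log_ratings(n_positive: int, n_negative: int) -> Tuple[float, float]:
    # ln(1 + x) keeps sellers with zero ratings in the analysis
    return math.log1p(n_positive), math.log1p(n_negative)


def classify_mentions(description: str) -> Tuple[int, int]:
    """Return (problems_acknowledged, problems_denied) for one description."""
    text = description.lower()
    acknowledged = denied = 0
    for word in PROBLEMS:
        # Crude denial patterns: "no rust", "no dents", "rust free", "rust-free"
        denial = re.search(rf"\bno {word}|\b{word}[- ]free\b", text)
        mention = re.search(rf"\b{word}", text)
        if denial:
            denied += 1
        elif mention:
            acknowledged += 1
    return acknowledged, denied


print(classify_mentions("Car is rust free but has a small dent."))
```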

To test H1, we regressed measures of buyer trust on measures of seller reputation and on the claims made by the seller, which served as independent variables. We used three different dependent variables. First, we took the natural logarithm of the price offered by the highest bidder; listings that did not receive any bids are excluded from this specific analysis. We used linear regressions for this variable. For the second dependent variable (whether the car was sold or not) we used logistic regression. The third dependent variable, the number of bidders, is a count variable, for which we used negative binomial regression.

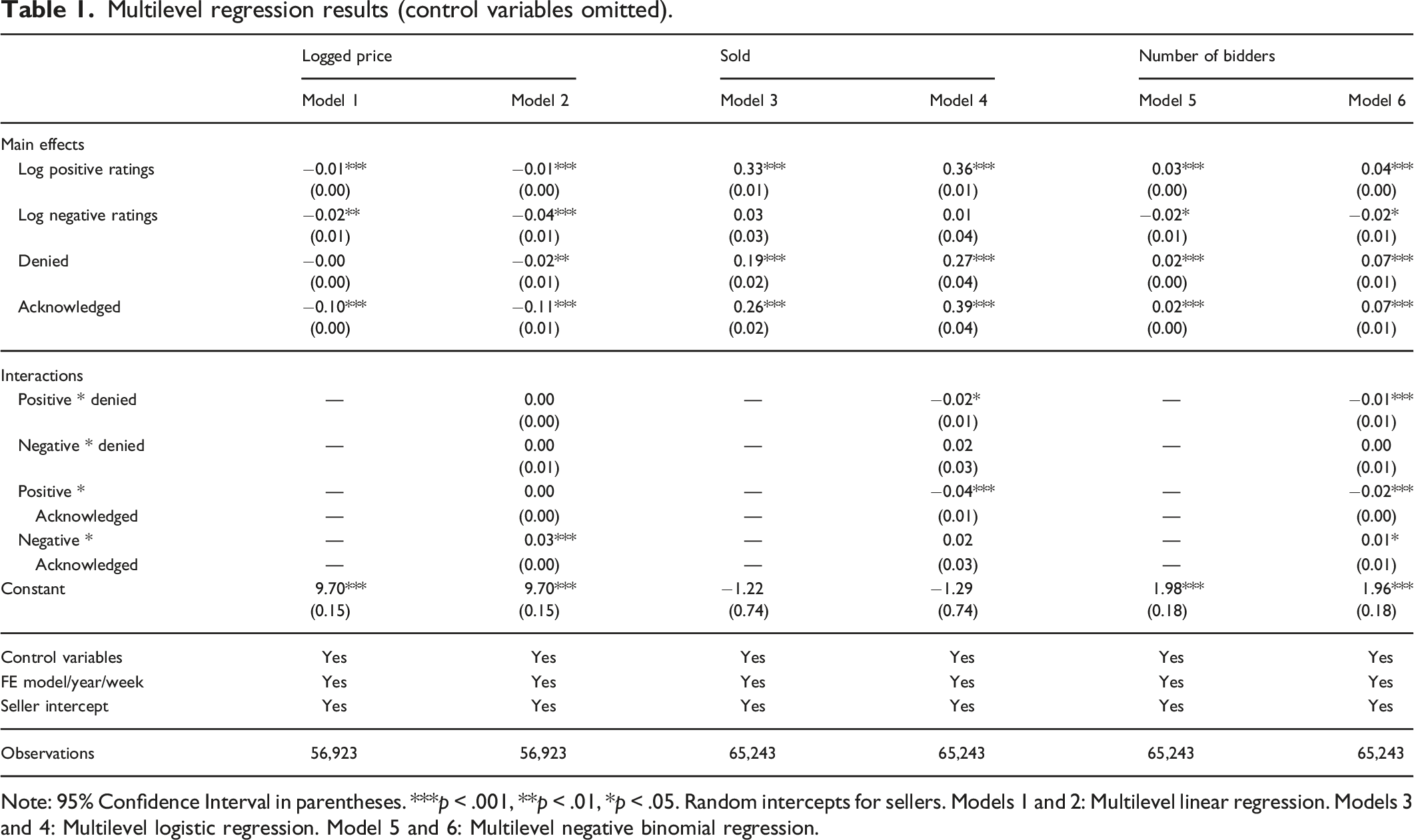

For every dependent variable, we first constructed a model with the independent variables and all control variables, as well as three sets of fixed effects, for car model, year of production and week of the auction (models 1, 3, and 5). Some cars were auctioned by the same seller, so we included random intercepts for sellers. In a second model (models 2, 4, and 6) we added the interaction between the reputation variables and the number of problems denied and acknowledged by the seller to test if bidders rely more on claims made by sellers with a better reputation (H1).
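In our own notation (introduced here for clarity; it does not appear in the original tables), models 2, 4 and 6 can be written with a common linear predictor for auction i by seller j. Here D and A are the numbers of problems denied and acknowledged, P and N the logged positive and negative rating counts, x the control variables, the α terms the fixed effects for car model, production year and auction week, and u_j the seller random intercept, given the usual normal assumption. The link g is the identity for the logged highest bid, the logit for the probability of sale and the log for the number of bidders; models 1, 3 and 5 omit the four interaction terms.

```latex
\begin{aligned}
g\bigl(\mathbb{E}[y_{ij}]\bigr) = \eta_{ij}
  &= \beta_0 + \beta_1 D_{ij} + \beta_2 A_{ij} + \beta_3 P_{ij} + \beta_4 N_{ij} \\
  &\quad + \beta_5 D_{ij} P_{ij} + \beta_6 D_{ij} N_{ij} + \beta_7 A_{ij} P_{ij} + \beta_8 A_{ij} N_{ij} \\
  &\quad + \mathbf{x}_{ij}^{\top}\boldsymbol{\gamma} + \alpha_{m(i)} + \alpha_{t(i)} + \alpha_{w(i)} + u_j,
  \qquad u_j \sim \mathcal{N}(0, \sigma_u^2), \\
\frac{\partial \eta_{ij}}{\partial D_{ij}} &= \beta_1 + \beta_5 P_{ij} + \beta_6 N_{ij}.
\end{aligned}
```

The last line is the effect of one additional denied problem on the linear predictor. Under H1, denying problems should pay off more for sellers with more positive ratings and less for sellers with more negative ratings, that is, β5 > 0 and β6 < 0.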

In all models we controlled for several car characteristics: logged mileage, logged length of the product description (in bytes), whether the car was a featured listing, and the number of options a car had (categorized as none, one or two, or more than two, because about half of the cars have up to two options). We also controlled for the duration of the auction (in days) and for several seller characteristics: whether the seller provided a phone number and address and whether the seller was a power seller. The histogram of mileage shows a relatively normal distribution, except for a high peak at the maximum of 500,000 miles. The results do not change when these cars are excluded from the analyses.

Table A1 in the online appendix contains the descriptive statistics for the variables used in the analyses. The average seller has many more positive reviews than negative reviews. This skewness of rating scores has been reported for various kinds of platforms (Teubner and Glaser 2018; Kas et al., 2021; Bridges and Vásquez 2018) and suggests that dishonest seller behavior is rare. The descriptive statistics also show that on average, sellers acknowledge more problems than they deny.

Results of Study 1

Multilevel regression results (control variables omitted).

Note: 95% confidence intervals in parentheses. ***p < .001, **p < .01, *p < .05. Random intercepts for sellers. Models 1 and 2: Multilevel linear regression. Models 3 and 4: Multilevel logistic regression. Models 5 and 6: Multilevel negative binomial regression.

We also replicate the main effects of denying and acknowledging problems reported by Lewis (2011). On average, denying problems did not affect prices (model 1), but had a positive effect on the probability that the car was sold (model 3) and on the number of bidders (model 5). 8 Acknowledging problems had a negative effect on prices (model 1), but a positive effect on the number of bidders (model 5) and the probability that the car was sold (model 3). It is plausible that sellers who acknowledge problems set lower secret reserve prices, so that they are more likely to sell their car despite lower prices, and that auctions in which bids stay low attract more bidders.

Our main interest is in the interaction effects between reviews and claims about car quality. To test H1 that buyers are more likely to believe claims made by sellers who have been more honest in the past, we interacted the reputation variables with the claims made by the sellers. The key test of H1 lies in the interaction effect of problems denied (that is, promises of quality) and reputation. Contrary to theoretical expectations, the significant interactions between the number of problems denied and the number of positive reviews on the probability that the car was sold (model 4) and on the number of bidders (model 6) suggest that sellers with more positive reviews benefit less from denying problems than sellers with fewer positive reviews. Further contradicting H1, the effect of positive quality claims on prices did not differ across sellers with many or few negative reviews.

We furthermore find that the effect of acknowledging problems on sales probability and number of bidders is more strongly negative for sellers with more positive reviews, while sellers with more negative reviews suffer less from acknowledging problems: the negative effect of acknowledging problems on the price is smaller for sellers with more negative reviews, and the positive effect of acknowledging problems on the number of bidders is larger for them. This suggests that, because low-reputation sellers are less likely to be trusted in the first place, acknowledging problems is most damaging to high-reputation sellers who have “more to lose” in terms of trust. While this latter result is in itself not inconsistent with the theory, overall the results do not consistently show that quality claims are more likely to be believed when sellers have a better reputation, so we reject H1. 9

In defense of the theory of reputation, one could offer as a potential explanation for why we did not find clear support for the predicted effect that we could not distinguish between having a reputation for honesty and having a reputation for providing high-quality products. Reputation was operationalized by the numeric ratings provided by other users on the platform. These ratings were the result of users’ (mental) aggregation of their evaluations of different aspects of the interaction. The data provide no details on what these aspects were or how they were weighted, so we do not know whether these ratings were the result of providing a high-quality product, of honest communication, or both. Whereas rating scores tend to be overly positive, written reviews have been found to be more detailed and nuanced (Bridges and Vásquez 2018). The written reviews could thus provide more insight here, but these were not available in the data.

The observational nature of the data also prevents us from ruling out confounding by omitted variables. Both reputation scores and trust in promises made by sellers may be caused by other, unobserved factors. For example, earlier studies on discrimination in the platform economy show that demographic characteristics such as ethnicity affect an individual’s chances of being trusted (Edelman and Luca 2014; Kas et al., 2021; Tjaden et al. 2018). Given that on most platforms reviews can only be written after a completed transaction, these initial differences in the chances of being trusted may be reflected in the number of reviews an individual has managed to accumulate. Main effects of reputation, and interaction effects between reputation and quality claims, may then spuriously reflect main and interaction effects involving ethnicity. Similarly, individual personality differences may in principle produce spurious effects. For instance, traits like “agreeableness” (Ashton and Lee, 2007) may be correlated with behaviors in interpersonal interactions that affect the likelihood of being trusted, which again could be reflected in the number of reviews. To address these issues, we conducted a controlled experiment in Study 2.

Study 2: an online experiment

We conducted a second test of the theory in an online experiment in which we systematically varied the presence of a reputation system and the possibility for communication between buyers and sellers. This allowed us to test H1 in a controlled environment, eliminating the influence of omitted variables through randomization, and to test H2, H3 and H4 regarding the presence of a reputation system.

The experiment consisted of 36 rounds of an adaptation of the Trust Game (Dasgupta 1988; Kreps 1996), which is widely used to study the effects of reputation. Subjects in a session were divided into groups of six or eight participants. At the beginning of each round, half of the participants in a group were assigned to role A (buyer) and the other half to role B (seller). The game was divided into three blocks of 12 rounds. In each of these blocks, every subject played six rounds in role A and six rounds in role B, but the order in which they played the two roles was determined randomly (cf. Charness et al., 2011). In every round, each player A was randomly and anonymously matched with a player B.
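The constraints on role assignment within a block (half the group in each role every round, six rounds per role per subject, random anonymous matching) can be satisfied in several ways, and the text does not say how the experimental software did it. The Python sketch below shows one hypothetical construction; the function name `block_schedule` and its greedy-then-shuffle approach are our assumptions, not the authors' implementation.

```python
import random
from typing import Hashable, List, Optional, Sequence, Tuple


def block_schedule(subjects: Sequence[Hashable], n_rounds: int = 12,
                   rng: Optional[random.Random] = None) -> List[List[Tuple]]:
    """Return one list of (player A, player B) pairs per round of a block.

    Constraints (hypothetical sketch): in every round half the group is in
    role A and half in role B, and every subject is in role A in exactly
    n_rounds // 2 rounds.
    """
    rng = rng or random.Random()
    n = len(subjects)
    if n % 2 or n_rounds % 2:
        raise ValueError("group size and number of rounds must both be even")
    need = {s: n_rounds // 2 for s in subjects}  # A-rounds still to assign
    buyer_sets = []
    for _ in range(n_rounds):
        # Greedy rule: role A goes to the half with the most A-rounds still
        # owed (ties broken at random), which keeps later rounds feasible.
        order = sorted(subjects, key=lambda s: (-need[s], rng.random()))
        buyers = order[: n // 2]
        for s in buyers:
            need[s] -= 1
        buyer_sets.append(buyers)
    # Shuffling the round order keeps each subject's 6/6 split but randomizes
    # the order in which they play the two roles.
    rng.shuffle(buyer_sets)
    schedule = []
    for buyers in buyer_sets:
        sellers = [s for s in subjects if s not in buyers]
        rng.shuffle(sellers)  # random, anonymous A-B matching for this round
        schedule.append(list(zip(buyers, sellers)))
    return schedule
```

For example, `block_schedule(list(range(6)), rng=random.Random(1))` returns 12 rounds of three pairs each for a group of six. The final shuffle matters: the greedy pass alone would give every subject exactly one A-round in each consecutive pair of rounds, which is more regular than "determined randomly" suggests.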

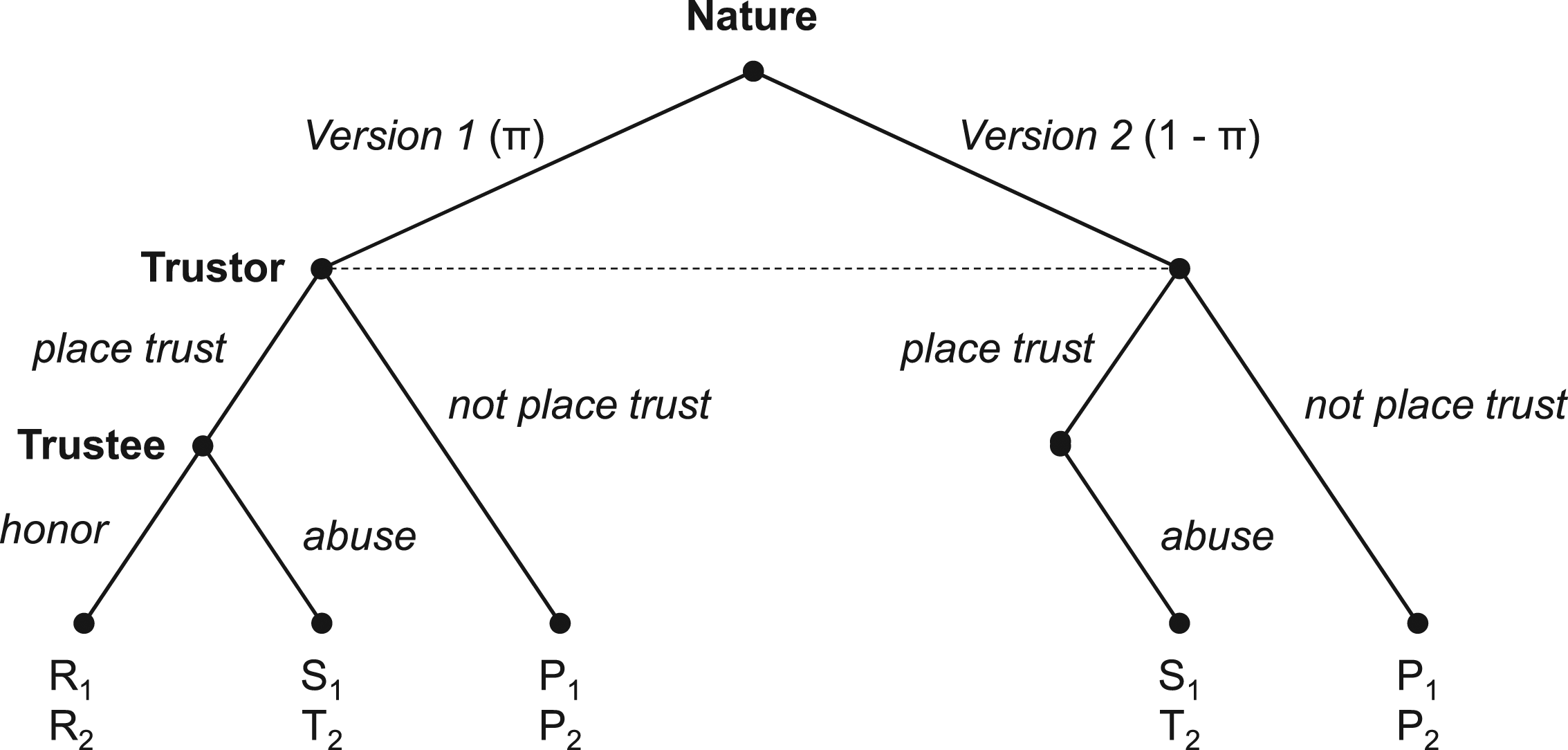

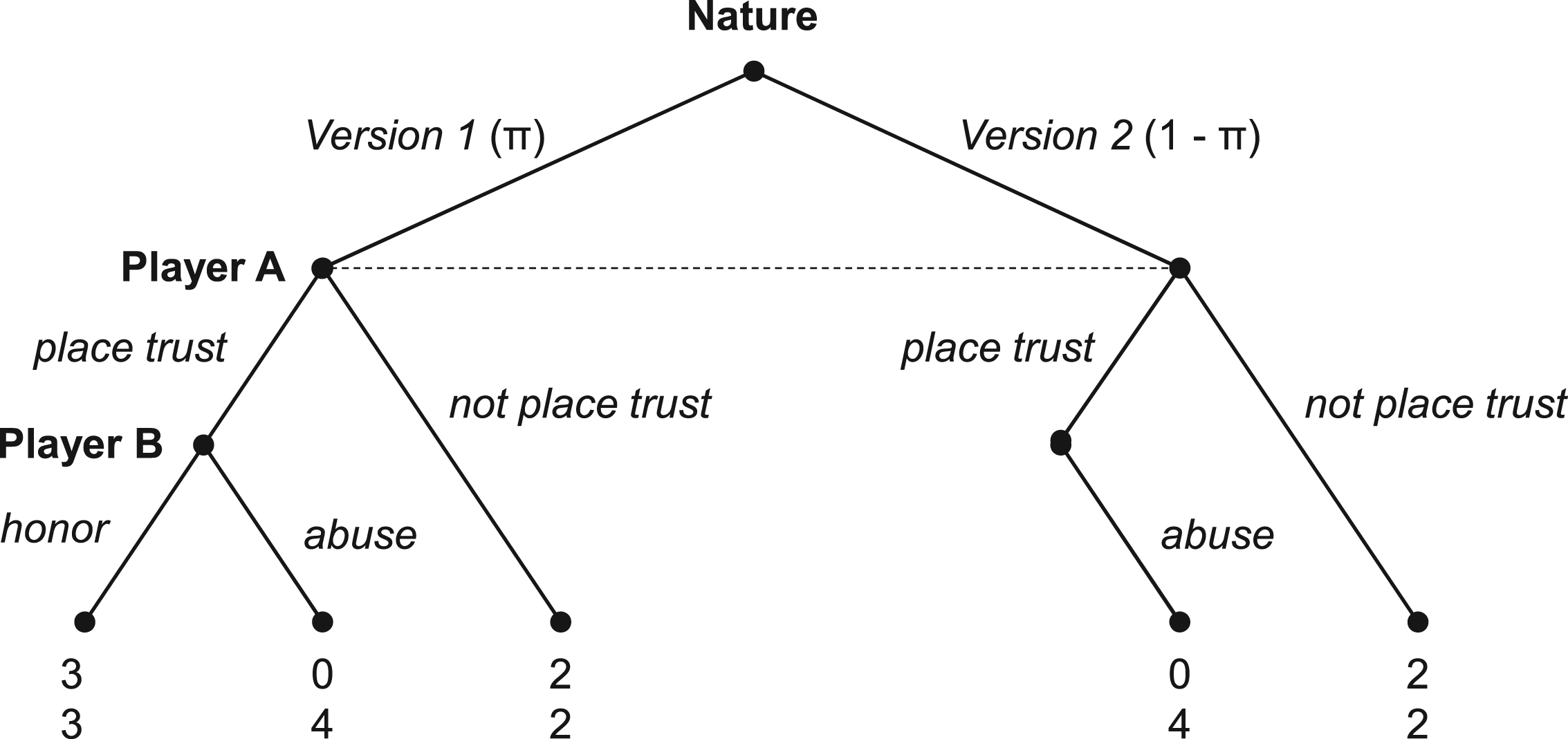

The pairs played one of two versions of a Trust Game. The left part of Figure 1 shows the first version of the game, which was a regular Trust Game. In that game, player A could choose to place trust (‘pay a high price’) or to not place trust (‘pay a low price’) in player B. If player A did not place trust, the game ended and both players received a payoff of two points. If player A chose to place trust, player B could choose between honoring (‘deliver a high-quality product’) or abusing trust (‘deliver a low-quality product’). If player B honored trust, both players received a payoff of 3 points. If player B abused trust, player A received 0 points and player B received 4 points. The second version of the game was similar to the first version. The only difference was that player B did not have the option to honor trust if player A placed trust. This version therefore represents a situation in which a seller (player B) only has a low quality product. Together, the two versions capture the seller heterogeneity that is crucial to our theoretical theoretical approach: without it, a negative signal would always mean that the trustee is choosing to cheat in a situation where they could have honored trust and announces this to trustor ahead of time. The right panel of Figure 1 shows the structure of one round of this game without a reputation system. In this figure P, R, S and T represent the material payoffs of the actors. Figure 2 contains the numbers used in the current experiment. One round of the game used in the experiment, where T2 > Ri > Pi > S1. Numerical example of the game used in the experiment.
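The payoff structure and the standard game-theoretic benchmark can be made concrete with a small Python sketch. It encodes the numerical payoffs given in the text and applies backward induction for a purely self-interested, one-shot player B; the constant and function names are illustrative, and the self-interest assumption is the benchmark the paper puts to the test, not a description of observed behavior.

```python
# Payoffs (player A, player B) taken from the numerical example in the text.
P = (2, 2)      # A does not place trust ('pays a low price')
R = (3, 3)      # A places trust and B honors it (high-quality product)
ABUSE = (0, 4)  # A places trust and B abuses it: S for A, T for B

PI_VERSION_1 = 0.5  # probability that B is able to honor trust (version 1)


def selfish_b_response(version: int) -> tuple:
    """Outcome after A places trust if B maximizes own payoff in one round.

    Version 1 lets B choose between honoring and abusing; in version 2 B can
    only deliver the low-quality product, so abuse is the only option.
    """
    options = [R, ABUSE] if version == 1 else [ABUSE]
    return max(options, key=lambda outcome: outcome[1])


def a_expected_payoff_if_trust() -> float:
    """A does not know the version, so A averages over both versions."""
    return (PI_VERSION_1 * selfish_b_response(1)[0]
            + (1 - PI_VERSION_1) * selfish_b_response(2)[0])


# Backward induction: A places trust only if that beats the sure payoff P.
a_trusts = a_expected_payoff_if_trust() > P[0]
```

Because a selfish B abuses trust whenever it is placed, A's expected payoff from trusting is 0, below the 2 points from not trusting, so this benchmark predicts that no trust is placed.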

In each round, the pairs played version 1 or 2 of the game with equal probability (π = ½). Players B were informed at the beginning of the round which version of the game they had been assigned to; players A never knew which version they were playing. This heterogeneity introduced uncertainty for players A, since they did not know when a player B had the opportunity to honor trust and when not. It captures the key characteristic of second-hand markets that a potential buyer does not know whether a seller can deliver a high-quality product or only a low-quality product. Even a very trustworthy seller, who always sends a high-quality product when one is available, may frequently have only low-quality products to sell.
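A short worked calculation shows why this uncertainty matters for player A. Assume, purely for illustration, a risk-neutral player A and a player B who always honors trust when able and always announces truthfully what they will do. Writing $R_1 = 3$, $P_1 = 2$ and $S_1 = 0$ for player A's payoffs and $\pi = \tfrac12$ for the probability of version 1:

$$
\begin{aligned}
E[\text{A's payoff} \mid \text{trust without a message}] &= \pi R_1 + (1-\pi) S_1 = \tfrac12 \cdot 3 + \tfrac12 \cdot 0 = 1.5 < P_1 = 2,\\
E[\text{A's payoff} \mid \text{trust only after a promise}] &= \pi R_1 + (1-\pi) P_1 = \tfrac12 \cdot 3 + \tfrac12 \cdot 2 = 2.5 > P_1.
\end{aligned}
$$

Under these assumptions, blindly trusting even a perfectly trustworthy seller does not pay, whereas truthful messages would make trusting after a promise worthwhile. This is a hypothetical benchmark, not an experimental result.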

The experiment had a two (No communication versus Communication) by two (No reputation versus Reputation) between-subjects design. Each session was assigned to one of the four conditions. We refer to the treatment with both communication and reputation as the ‘Combined’ condition. In the two Communication treatments, players B (sellers) could send a binary, non-binding, costless message to players A (buyers) announcing what their choice would be if player A were to place trust. In the two Reputation conditions, reputation was operationalized as information about previous decisions made by players B: before choosing whether to place trust, players A were informed about the actual decisions player B had made in earlier rounds in which they acted in role B. In the Combined condition, players A were also informed about the promises players B had made in the past. The complete instructions for the participants can be found in the online appendix (B).

Procedures

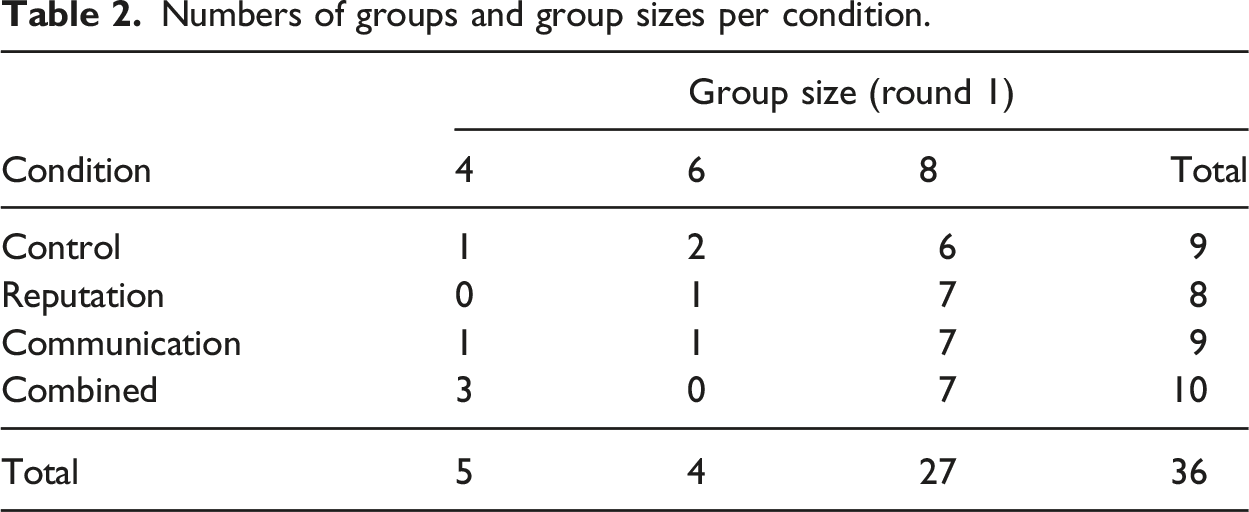

Numbers of groups and group sizes per condition.

The subjects received on-screen instructions. After reading the instructions, they answered some comprehension questions and could only continue with the experiment once they had answered all questions correctly. The experiment was programmed in oTree (Chen et al. 2016), software commonly used for online networked experiments. Figure B2 in the online appendix displays an example screen participants saw during the experiment. Participation was anonymous and no deception was used. After playing the game, the subjects completed a short survey about some demographic characteristics, their experience with game theory, and their idea of the goal of the study. Of the subjects, 74% were female and 20% male; one subject indicated ‘other gender’ and the remaining subjects did not indicate their gender. The age of the subjects ranged from 18 to 79 (mean = 39), and 12% indicated that they were students. Eight percent of the subjects had no degree or had completed only elementary school. About one third had completed high school, another third had obtained an undergraduate degree, and 16% held a graduate degree.

Variables

Two treatment dummies were constructed that indicated whether a subject participated in a game with or without a reputation system, and whether player B could communicate with player A. The main dependent variable in most of the analyses was the decision of player A whether or not to place trust in player B.

The message sent by player B was operationalized by two dummy variables, indicating whether player B promised to honor trust or informed player A that they would abuse trust. The reference category was not sending a message. Honest communication was defined as announcing that trust would be abused, or as promising to honor trust and actually honoring it. Dishonest communication entailed promising to honor trust but then abusing it. When player B promised to honor trust but did not get the opportunity to show that the promise was honest because player A did not place trust, players A could not judge whether that promise was honest or dishonest. Rounds in which the promise could not be classified as honest or dishonest have missing values for this variable. Note that all players B always had the possibility to be honest: either by promising to honor trust and actually honoring it, or by warning in advance that they would not honor trust.
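The coding rules above can be written as a small function. This is a sketch under stated assumptions: the argument names and string labels are hypothetical, and the text does not say how rounds without any message were coded for this variable, so the sketch treats them as missing.

```python
from typing import Optional


def classify_honesty(message: Optional[str], trust_placed: bool,
                     honored: Optional[bool]) -> Optional[str]:
    """Code player B's communication in one round (hypothetical labels).

    message: None (no message), "honor" (promise to honor trust) or
      "abuse" (announcement that trust will be abused).
    honored: B's choice when A placed trust; None when A did not place trust.
    Returns "honest", "dishonest" or None (missing).
    """
    if message == "abuse":
        return "honest"  # announcing abuse in advance always counts as honest
    if message == "honor":
        if not trust_placed:
            return None  # the promise was never put to the test
        return "honest" if honored else "dishonest"
    return None  # assumption: rounds without a message are not coded
```

A promise made in version 2 of the game that is met with trust is coded as dishonest, since B can then only abuse trust; an honest B in that version would have sent the warning instead.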

Player B’s reputation was measured by four variables that were constructed in two different ways. The first method included all previous decisions made by a player B in role B in the reputation variables. The first pair of variables counted the number of times player B had honored and abused trust in the past. The second pair of reputation variables was constructed only for subjects in the two conditions with communication and counted the number of times player B had communicated honestly or dishonestly in the past. The second method accounts for the fact that although players A had access to all previous decisions of player B, they had to scroll down in the history window to see more than the last six rounds (see Figure B2 in the online appendix). For this second method, the four reputation variables were constructed in the same way, except that only the last six (more readily observable) rounds in which player B acted in role B were included. Each analysis includes only one pair of reputation variables, to avoid severe multicollinearity.
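The two constructions of the reputation variables differ only in how much of player B's history is counted. A hypothetical Python sketch, assuming each past round of a player B is stored as a record with the illustrative keys `honored` and `honesty` (the latter coded as in the previous section and only meaningful in the communication conditions):

```python
from typing import Dict, Optional, Sequence


def reputation_counts(history: Sequence[dict],
                      window: Optional[int] = None) -> Dict[str, int]:
    """Count a player B's past behavior as seen by the current player A.

    history: past rounds in which this player acted in role B, oldest first.
      Each record has 'honored' (True, False, or None if A did not place
      trust) and 'honesty' ('honest', 'dishonest', or None if not codable).
    window: None uses the full history (first method); 6 uses only the last
      six rounds, which were visible without scrolling (second method).
    """
    rounds = list(history)
    if window is not None:
        rounds = rounds[-window:] if window > 0 else []
    return {
        "honored": sum(r["honored"] is True for r in rounds),
        "abused": sum(r["honored"] is False for r in rounds),
        "honest": sum(r["honesty"] == "honest" for r in rounds),
        "dishonest": sum(r["honesty"] == "dishonest" for r in rounds),
    }
```

Calling `reputation_counts(history)` and `reputation_counts(history, window=6)` yields the full-history and last-six-rounds versions of the same four counts; as in the text, only one pair would enter any given model.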

Results of study 2

Descriptive statistics

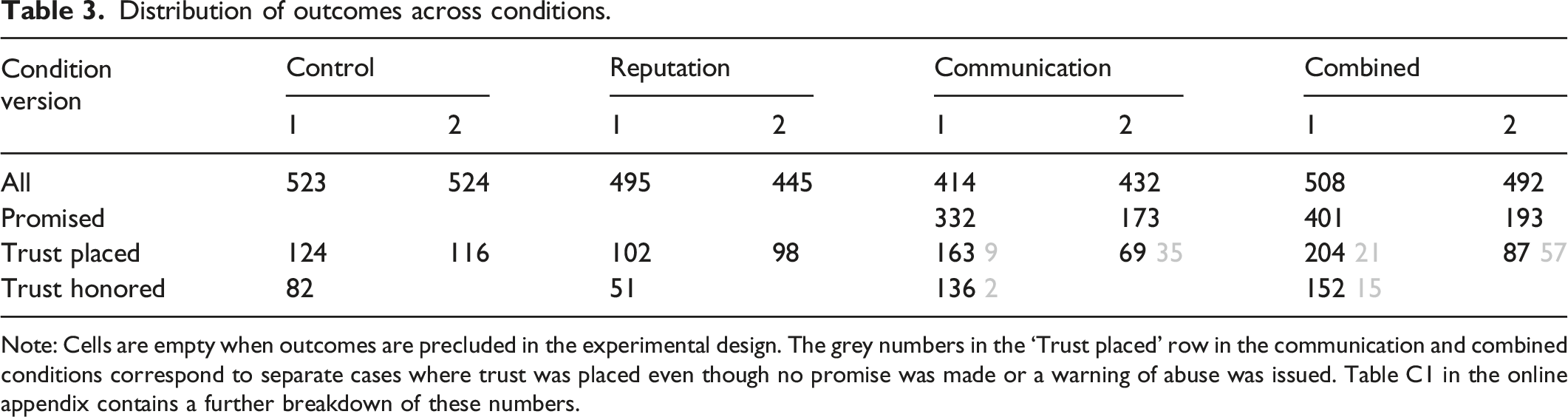

Distribution of outcomes across conditions.

Note: Cells are empty when outcomes are precluded in the experimental design. The grey numbers in the ‘Trust placed’ row in the communication and combined conditions correspond to separate cases where trust was placed even though no promise was made or a warning of abuse was issued. Table C1 in the online appendix contains a further breakdown of these numbers.

Group-level tests

H2 states that sellers are more likely to be honest in the presence of a reputation system. Table 3 shows no increase in the fraction of honored promises when going from the Communication condition to the Combined condition. A rank-sum test confirms that, at the level of (independent) groups as well, there was no significant difference in the fraction of honest messages between the two conditions (MCommunication = 0.73, MCombined = 0.71, z = 0.089, p = 0.929, N = 18). We therefore find no support for H2.
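To illustrate this kind of group-level comparison, here is a minimal Python sketch of the Wilcoxon rank-sum test with the usual normal approximation and tie correction. It treats each group's value as one observation. The function name and example numbers are hypothetical, and statistical packages differ in sign conventions and continuity corrections, so z values need not match a particular package exactly.

```python
import math
from typing import Sequence, Tuple


def rank_sum_test(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float]:
    """Two-sample Wilcoxon rank-sum test, normal approximation, tie-corrected.

    Each value is one group's statistic (e.g. its fraction of honest messages),
    so groups are the independent units. Returns (z, two-sided p); z > 0
    means that x tends to have higher values than y.
    """
    values = list(x) + list(y)
    n1, n2 = len(x), len(y)
    n = n1 + n2
    order = sorted(range(n), key=lambda i: values[i])
    ranks = [0.0] * n
    tie_term = 0.0  # sum of t**3 - t over runs of tied values
    i = 0
    while i < n:
        j = i
        while j + 1 < n and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1  # mid-rank for a run of ties
        t = j - i + 1
        tie_term += t ** 3 - t
        i = j + 1
    w = sum(ranks[:n1])  # rank sum of the first sample
    expected = n1 * (n + 1) / 2
    variance = n1 * n2 / 12 * ((n + 1) - tie_term / (n * (n - 1)))
    z = (w - expected) / math.sqrt(variance)
    p = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return z, p
```

With made-up group fractions, `rank_sum_test([0.9, 0.8, 0.85, 0.95], [0.5, 0.6, 0.55, 0.4])` gives z ≈ 2.31 and p ≈ 0.021, because every value in the first sample exceeds every value in the second.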

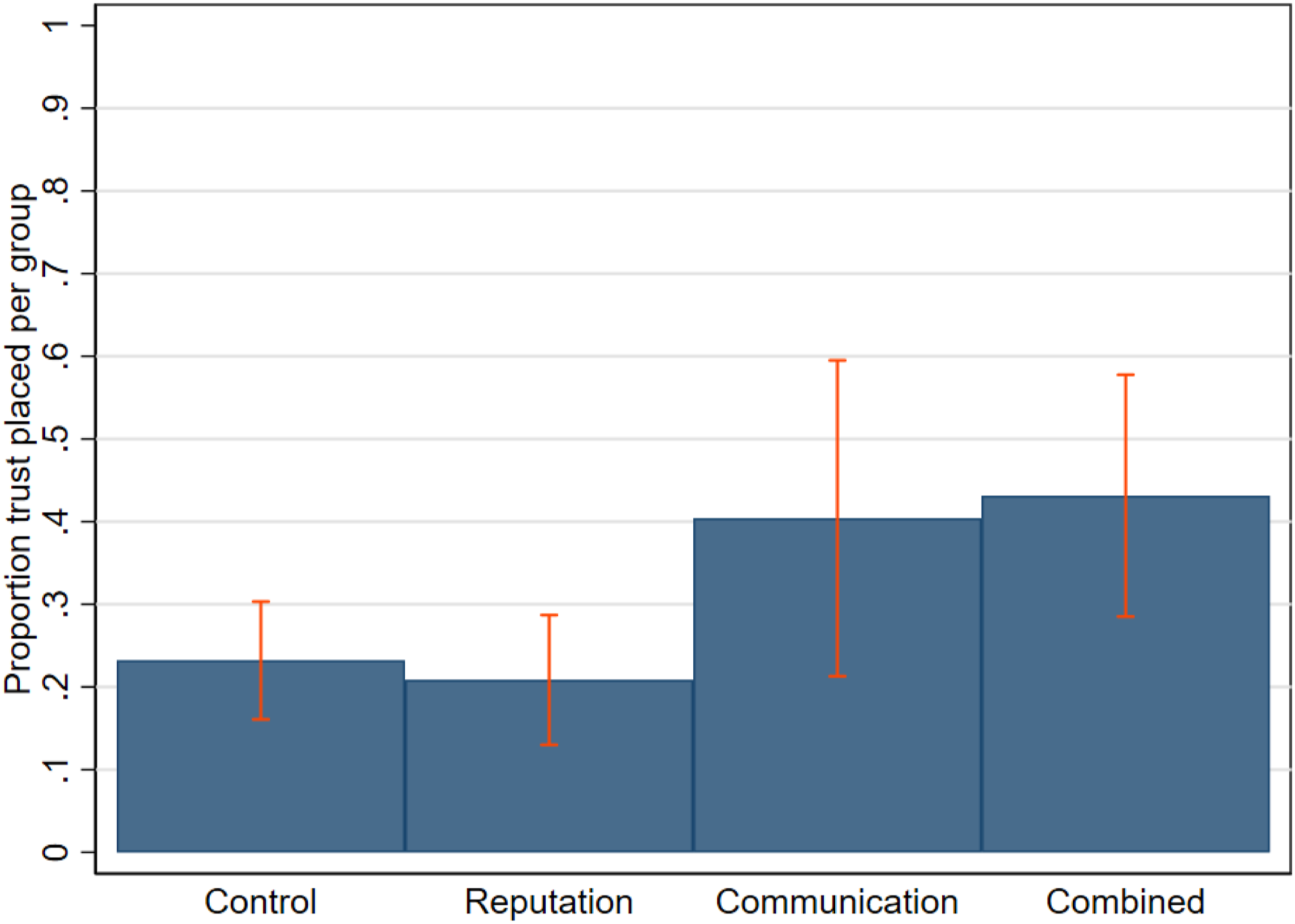

H4 predicts that trust will be placed more often if both a reputation system and the opportunity to communicate are present. To test H4, we compare the trust level of the Combined condition with each of the other conditions (Figure 3). The trust level is indeed the highest in the Combined condition, although the difference with the Communication condition is very small. For a robust test of the differences, we use three rank-sum tests at the group level. These show that players A placed more trust in groups in the Combined condition than in groups in the Control condition (z = 3.136; p = .002, N = 19) and groups in the Reputation condition (z = 2.887, p = .004, N = 18), but not when compared with groups in the Communication condition (z = 0.572, p = .567, N = 19). We do not find support for H4. Mean proportion of trust placed per group, by experimental condition (N = 36). Error bars represent 95% confidence intervals.

We note, though, that the number of cases at the group level is relatively small. Indeed, an approximate power analysis suggests that, with the given number of cases and the observed means and standard deviations, finding a significant difference would require a rather large true effect. It is thus possible that this null finding results from a lack of statistical power. For this reason, we also test the hypothesis at the individual level (below). So far, however, the evidence suggests that the key to trust is the ability to make a promise, even one that would be empty from a self-interest perspective, rather than the ability to build a reputation for being honest.
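The inputs of this power analysis are not reported. As a hypothetical illustration of how such an approximation works, the sketch below uses a normal approximation for the difference between two group means (a simplification, since the reported tests are rank-sum tests) with made-up standard deviations and group counts. It computes the power for a given true difference and the minimum difference detectable with 80% power.

```python
from statistics import NormalDist

_STD_NORMAL = NormalDist()


def standard_error(sd1: float, sd2: float, n1: int, n2: int) -> float:
    """Standard error of the difference between two group means."""
    return (sd1 ** 2 / n1 + sd2 ** 2 / n2) ** 0.5


def approx_power(delta: float, sd1: float, sd2: float, n1: int, n2: int,
                 alpha: float = 0.05) -> float:
    """Approximate power of a two-sided test of a true mean difference delta.

    Normal approximation; the negligible opposite rejection tail is ignored.
    """
    z_crit = _STD_NORMAL.inv_cdf(1 - alpha / 2)
    return _STD_NORMAL.cdf(abs(delta) / standard_error(sd1, sd2, n1, n2) - z_crit)


def min_detectable_difference(sd1: float, sd2: float, n1: int, n2: int,
                              alpha: float = 0.05, power: float = 0.8) -> float:
    """Smallest true difference that is detected with the requested power."""
    z_crit = _STD_NORMAL.inv_cdf(1 - alpha / 2)
    z_power = _STD_NORMAL.inv_cdf(power)
    return (z_crit + z_power) * standard_error(sd1, sd2, n1, n2)


# Hypothetical inputs: 10 vs. 9 groups, SD of group-level trust proportions 0.15.
mdd = min_detectable_difference(0.15, 0.15, 10, 9)
```

With these made-up inputs the minimum detectable difference is about 0.19 in the proportion of trust placed, which illustrates how few groups per condition translate into a large required effect.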

It takes time for trustees to build a reputation, so the hypothesized effects should be stronger in the second half of a game. We therefore repeated the rank-sum tests of differences in the level of trust between the conditions, this time including only the second half of each game. The results of these tests do not differ from those of the main tests, suggesting that the absence of an effect of the reputation system cannot be explained by the limited reputation information accumulated in the first half of a game.

Individual-level tests

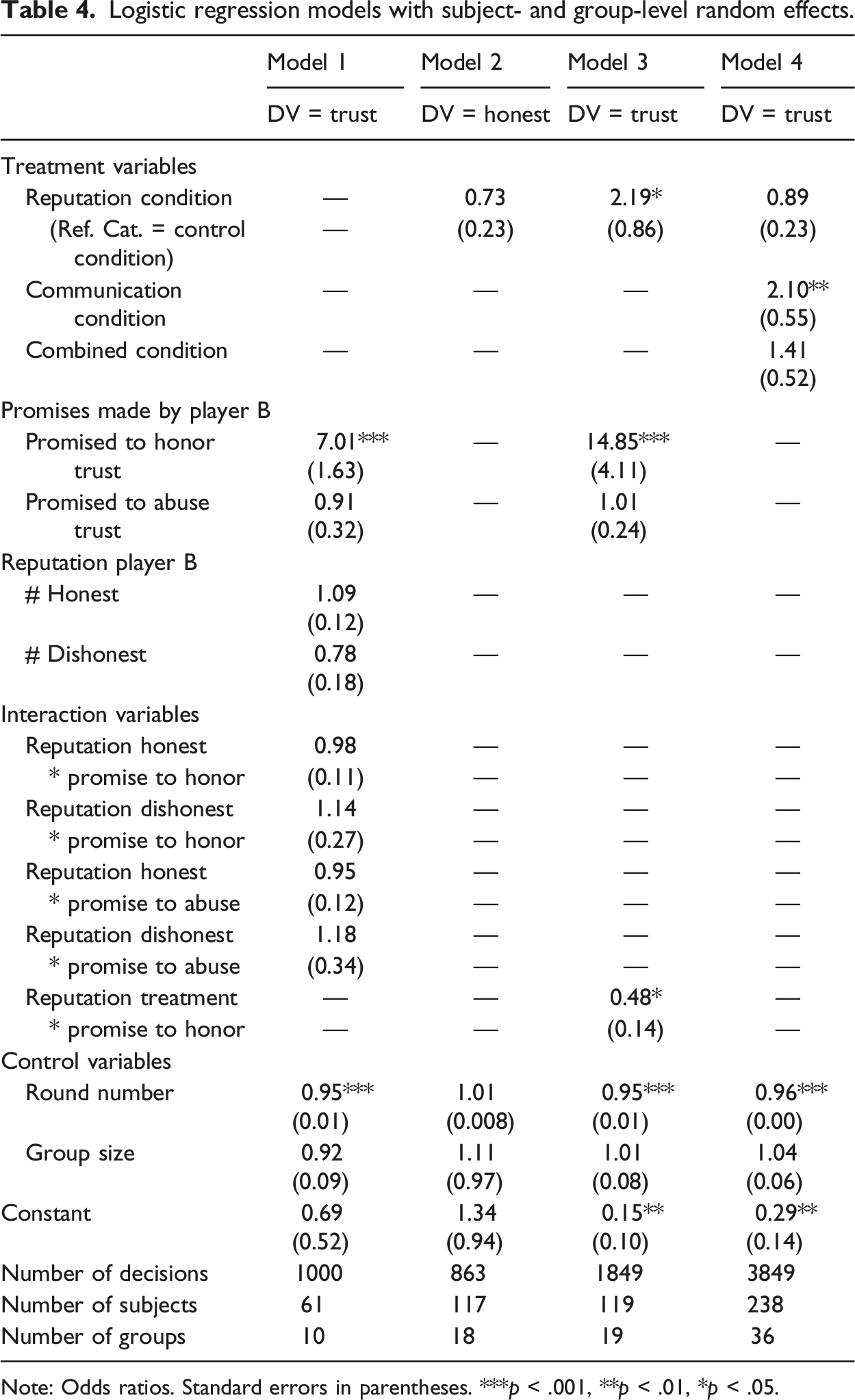

H1 and H3 do not have group-level analogues and must be tested at the level of individual decisions. We regressed individual decisions in game rounds. 18% of the variance was explained at the individual level and 2% at the group level.

Logistic regression models with subject- and group-level random effects.

Note: Odds ratios. Standard errors in parentheses. ***p < .001, **p < .01, *p < .05.
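The text does not state how these variance shares were computed. One common approach for multilevel logistic models, assumed here, is the latent-variable method, which fixes the round-level residual variance at π²/3 (the variance of the standard logistic distribution). The function name is hypothetical.

```python
import math


def latent_variance_shares(var_subject: float, var_group: float):
    """Shares of latent variance at the subject and group level in a
    three-level random-intercept logistic model (rounds within subjects
    within groups), using the latent-variable method.

    The round-level residual variance is fixed at pi**2 / 3.
    """
    total = var_subject + var_group + math.pi ** 2 / 3
    return var_subject / total, var_group / total
```

Working backwards under this assumption, subject- and group-level variances of roughly 0.74 and 0.08 would produce shares of 0.18 and 0.02; these are back-calculated illustrations, not estimates reported in the paper.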

As an additional robustness check, we repeated the analysis with a slightly different calculation of reputation scores. Each player A viewed player B’s reputation in a history box on their screen. The last six rounds in which player B acted in role B were always visible, but to see earlier rounds, player A had to scroll down. When using reputation variables that are only based on the last six rounds in which the current player B also acted as player B, the results do change. The interaction between the number of times player B has been honest and the promise to honor trust turns positive and significant (odds ratio = 1.254, z = 2.04, p = .041). This means that players A were significantly more likely to place trust in a player B who promised to honor trust when that player B had been more honest in just the last six rounds.
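The reported odds ratio can be translated into a more tangible comparison. Let $h$ denote the number of times player B had been honest in the last six rounds (our notation). Because the interaction is multiplicative on the odds scale, holding the model's other terms fixed, each additional honest round multiplies the odds-ratio effect of a promise to honor trust by 1.254; comparing a player B who was honest in all six of the last six rounds with one who was never honest compounds this six times. The reported p-value follows from the two-sided normal test of $z = 2.04$:

$$
\frac{\mathrm{OR}_{\text{promise}}(h+1)}{\mathrm{OR}_{\text{promise}}(h)} = 1.254,
\qquad
\frac{\mathrm{OR}_{\text{promise}}(6)}{\mathrm{OR}_{\text{promise}}(0)} = 1.254^{6} \approx 3.89,
\qquad
p = 2\,[1 - \Phi(2.04)] \approx 0.041.
$$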

Model 2 in Table 4 shows the results of a logistic regression on both communication conditions with the honesty of player B as the dependent variable. The dummy variable indicating whether player B promised to honor trust was interacted with the dummy variable for the reputation treatment. The logistic regression results corroborate the findings from the group-level rank-sum test of H2: players B were not more likely to make an honest promise in the presence of a reputation system than in its absence. The results did not change when fitting a linear probability model. We reject H2.

Model 3 in Table 4 contains the results of a logistic regression on both communication conditions with the decision of player A as the dependent variable. To test H3, we interacted the variable indicating whether player B promised to honor trust with the reputation treatment variable. Contrary to theoretical expectations, players A attached less value to B’s promise to honor trust when there was a reputation system than when there was none. In a linear probability model, this interaction becomes insignificant. Neither model supports the hypothesis that the effect of promises to honor trust is stronger in the presence of a reputation system. We also reject H3.
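The structure of this test can be sketched in Python. Model 3 is a multilevel logistic regression; the sketch below drops the subject and group random effects and fits a plain logit by Newton-Raphson in NumPy, alongside the linear probability model used as a check, on simulated data. All data and "true" coefficients are hypothetical, chosen only to exercise the code.

```python
import numpy as np


def design_matrix(promise, reputation):
    """Columns: intercept, promise to honor, reputation treatment, interaction."""
    promise = np.asarray(promise, dtype=float)
    reputation = np.asarray(reputation, dtype=float)
    return np.column_stack([np.ones_like(promise), promise, reputation,
                            promise * reputation])


def fit_logit(X, y, max_iter=50, tol=1e-10):
    """Maximum-likelihood logistic regression via Newton-Raphson.

    Unlike the models in the paper, this has no subject or group random effects.
    """
    beta = np.zeros(X.shape[1])
    for _ in range(max_iter):
        p = 1.0 / (1.0 + np.exp(-X @ beta))
        gradient = X.T @ (y - p)
        hessian = X.T @ (X * (p * (1.0 - p))[:, None])
        step = np.linalg.solve(hessian, gradient)
        beta = beta + step
        if np.max(np.abs(step)) < tol:
            break
    return beta


def fit_lpm(X, y):
    """Linear probability model: OLS on the 0/1 trust decision."""
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    return coef


# Hypothetical simulated data: 4,000 rounds, promise and treatment at random.
rng = np.random.default_rng(7)
n = 4000
promise = rng.integers(0, 2, n)
reputation = rng.integers(0, 2, n)
X = design_matrix(promise, reputation)
true_beta = np.array([-1.0, 2.0, 0.0, -0.5])  # made-up values
y = rng.binomial(1, 1.0 / (1.0 + np.exp(-X @ true_beta))).astype(float)

logit_beta = fit_logit(X, y)
lpm_beta = fit_lpm(X, y)
odds_ratios = np.exp(logit_beta)  # odds ratios, as reported in the tables
```

The last coefficient in each fit is the promise × reputation interaction. In the logit it is a difference on the log-odds scale, while in the linear probability model it is a difference in probabilities, which is one reason why the two models can disagree about its significance.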

To evaluate H4 at the individual level, we estimated a logistic regression with the variables that indicated to which treatment the subjects were assigned as the independent variables and with the decision of player A as the dependent variable. Model 4 contains the results of this regression. Corroborating the finding from the Wilcoxon rank-sum test at the group level, we find that more trust was placed when players B could make promises to players A than when they could not. The presence of the reputation system did not affect the probability with which player A placed trust. Running a linear probability model instead does not alter the results. We reject H4.

To summarize, support for H1 was weak and inconsistent while H2, H3, and H4 were unequivocally rejected. The results from the experiment do not support the theory.

Discussion

We are left with a puzzle. Unverifiable assurances about product quality made by sellers in second-hand markets increase the prices buyers are willing to pay (Lewis 2011; Anderson et al., 2007; Rawlins and Johnson 2007; Neto et al. 2016; Snijders and Zijdeman 2004; Heijst et al., 2008; Sena et al. 2005). Buyers mostly report being satisfied with sellers’ products; strangers thus cooperate successfully in this context. We have firmly rejected the prevailing explanation that this cooperation is enabled by reputation systems that provide sellers with a necessary rational incentive to behave honorably, so that buyers can trust them. Both in observational data from eBay auctions and in controlled data from an original experiment, we found that buyers trust quality claims no less when they come from sellers who lack a good reputation, and just as much when sellers cannot build a reputation at all. Why, then, do buyers trust promises made by sellers when these commitments can be reneged on without consequence, and why do sellers not take advantage? How was the second-hand market platform Craigslist able to reduce solid waste by a third of a pound per capita (Fremstad 2017; Dhanorkar 2019) without a reputation system?

We contend that the puzzle arises from an undersocialized conception of human behavior that assumes away essential normative behavior in markets. The middle ground between generalized morality and economic utilitarianism (Granovetter 1985) that the new institutionalism and economic sociology have settled on firmly replaces atomism with networks and perfect with bounded rationality, but often still implicitly maintains economics’ assumption of self-interested behavior as its theory of action, except in embedded relations (Granovetter and Swedberg 1992; Fourcade and Healy 2007; Krippner and Alvarez 2007; Zelizer 2012; Hitlin and Vaisey 2013; Nee 2005; Nee and Swedberg 2020; Bandelj 2020). Just as in the new institutionalism in economics, markets are in essence still understood as functioning properly only because individuals’ natural inclination to lie and cheat when this pays off is reined in by effective institutions that reward self-control over those urges. We do not wish to bend the stick too far the other way and deny the possibility of occasional deceit by bad actors, or to suggest that institutions like reputation systems or network embeddedness are never important for market functioning. Rather, we suggest that there are identifiable limits to what most people are willing to do for selfish gain, and that this is common knowledge, so that problems that go beyond those limits are solved without institutions, e.g. through socialization into self-respecting individuals. Once identified, these limits will imply conditions under which institutions can be expected to be instrumental for market functioning.

One concrete limit suggested by the present paper is the one drawn between simple selfish behavior and being a liar. There is overwhelming evidence that in homogeneous markets, where suppliers always offer the same product or service, reputation systems do precisely what a theory of self-interest would predict (Boero et al., 2009; Bolton et al. 2004; Charness et al., 2011; Duffy et al. 2013; Fehrler and Przepiorka 2013; Frey and Van De Rijt 2016; Jiao et al., 2021; Teubner et al. 2017; Buskens et al. 2010; Ba and Pavlou 2002). In homogeneous markets, the challenge is to find a seller who will reliably deliver a good product. Failing to deliver a product, or delivering one that is not very good, can happen to a well-intentioned person. It also happens to sellers who, to save costs, choose not to invest in a better product or a more reliable delivery service and do not think too hard about disappointed customers. The problem is one of avoiding such lousy transaction partners. Reputation systems help buyers find sellers who perform better for the same price (Yang 2008; Weigelt and Camerer 1988).

We contrast this with the heterogeneous markets we have studied in this paper. In online auctions of second-hand goods, the challenge is instead to avoid sellers who do something most of us would feel ashamed of: making a promise with the intention of breaking it. We posit that for this reason reputation systems are much less needed here. Few sellers are willing to stoop so low, and because buyers know this, they take sellers at their word even if the sellers do not have a reputation for keeping their promises. In the eBay study, quality assurances from second-hand car sellers without a good reputation seemed to be believed just as much, and we found the vast majority of reviews to be highly positive, including those given to sellers lacking a reputation. Even in the online experiment, where the context of mere game play makes deceit much less depraved, we found that reputations had no noticeable impact on buyers’ willingness to trust promises and that a majority of sellers honored their promises.

While the multiple research methods and data sources used in the present study have complementary strengths and as such provide a robust basis for drawing these conclusions, evidence on mechanisms is weak. We cannot rule out that the reason buyers do not combine reputation information and promises made by sellers when making decisions is that they act habitually rather than strategically. Perhaps they simply do not stop to think and realize sellers could lie and get away with it. To anticipate the future behavior of sellers who in turn anticipate future behavior of other buyers, current buyers need to reason multiple steps ahead and believe sellers do too. Similarly, it may be that many sellers do not anticipate the reputational consequences or lack thereof when lying or speaking the truth. Also, when buyers do not condition their behavior on the seller’s reputation for honesty, there is no reason for sellers to communicate more honestly, which could also explain why we did not find a difference in the communication of the sellers between the experimental conditions. While failure to reason strategically is an implausible account for behavior in the experiment, as subjects practiced strategic play in the instructions phase prior to the experiment, we cannot rule out that many sellers and buyers in everyday markets for used goods would act normlessly if not for a widespread oblivion about incentives, opportunism, and the strategic importance of reputations. A more qualitative approach including interviews with buyers and sellers could help differentiate between our conjecture of internalized norms, bounded rationality and yet other behavioral logics that could undergird non-reliance on reputations in the making, believing and honoring of promises in second-hand markets.

Supplemental Material

Supplemental Material - Trust, reputation, and the value of promises in online auctions of used goods

Trust, reputation, and the value of promises in online auctions of used goods by Judith Kas, Rense Corten and Arnout van de Rijt in Rationality and Society

Footnotes

Acknowledgements

We thank Vincent Buskens, Werner Raub, and two anonymous reviewers for helpful comments and suggestions.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Dutch Research Council (452-16-002).

Data Availability Statement

Supplemental Material

Supplemental material for this article is available online.

Notes

References
