Abstract

In the last two decades, evaluator competency frameworks have become ubiquitous. Many have been developed by reviewing the literature and engaging in various research processes to ask evaluation practitioners what they do, in order to determine what evaluators should know and be able to do. Due to this practice-focused approach, the underlying philosophical and theoretical assumptions of competencies are rarely questioned. In this article, the authors explore this territory by categorising competencies using two taxonomies. Mertens and Wilson’s 2018 Program evaluation theory and practice provides a philosophical framework, and Schwandt and Gates’ 2021 Evaluating and valuing in social research provides a theoretical framework. The authors apply these frameworks to the three evaluation-specific domains of the Australian Evaluation Society (AES) Evaluators’ Professional Learning Competency Framework as refined for the Learn Evaluation Assessment Platform in 2020. Findings of this exploratory study suggest that the AES’ conception of evaluator competencies aligns primarily with Mertens and Wilson’s pragmatist/neopragmatist paradigm and Schwandt and Gates’ expanded frame. The authors discuss implications of the results and propose ideas for further improvement and testing of this research approach.

What we already know

• Evaluator competency frameworks have become ubiquitous in evaluation in the last decade.
• The AES published their evaluator competency framework in 2013.
• Many evaluation taxonomies classify evaluation practice and theory, and these can be used to understand theoretical underpinnings of evaluation materials.

The original contribution the article makes to theory and/or practice

• Method provides a way to make the underlying philosophical and theoretical assumptions of the AES Competency Framework explicit.
• Findings suggest that the AES Competency Framework promotes evaluation characterised by an appraisal of values, context, stakeholders, and rigorous research methods, but does not address First Nations cultural specificities.
• Tool used for this analysis could be refined for future research on any kind of evaluation materials.

Introduction

Evaluator competency frameworks have become ubiquitous in the field of evaluation in the last decade. The literature suggests that these frameworks may serve many functions – they can be used to guide evaluators’ professional development; they can be used as a self-reflection tool for practitioners to improve their practice; they can help advance evaluation research; and they can assist in professionalising the field (Lake, 2005; Stevahn et al., 2005). In 2013, the Australasian Evaluation Society (now the Australian Evaluation Society, AES) published its Evaluators’ Professional Learning Competency Framework (AES Professional Learning Committee, 2013). To establish this framework, the AES drew on competency frameworks from other voluntary organisations for professional evaluation such as the Aotearoa New Zealand Evaluation Association, the European Evaluation Society, and the Canadian Evaluation Society (AES Professional Learning Committee, 2013). Additionally, they consulted AES members to determine what evaluators currently do. Finally, they developed the competency framework to prescribe what evaluators ought to know.

However, since the field has yet to agree on a common definition of evaluation (Gullickson, 2020), there are still many ways to conceptualise and enact evaluation practice. Consequently, each evaluator competency framework reflects the inclinations of the voluntary organisation for professional evaluation that develops it. Based on the literature reviewed, a systematic analysis of how evaluator competency frameworks conceptualise evaluation practice is yet to be conducted. Meanwhile, these normative frameworks are disseminated without a good understanding of their underlying philosophical and theoretical assumptions.

This article therefore seeks to expand the literature on competency frameworks by exploring how evaluation practice is conceptualised within the AES competency framework. The authors began by systematically categorising three domains of the AES competency framework into two evaluation taxonomies. Evaluation taxonomies are conceptual frameworks that organise evaluation theorists and theories into distinct and often mutually exclusive categories.

On one hand, Mertens and Wilson’s (2018) evaluation taxonomy classifies 76 theorists into four paradigms and their associated practical focus: (a) the postpositivist paradigm which focuses on methods, (b) the pragmatic paradigm which focuses on use, (c) the constructivist paradigm which focuses on values, and (d) the transformative paradigm which focuses on social justice. On the other hand, Schwandt and Gates’ (2021) evaluation taxonomy identifies three ways of conceptualising and practicing evaluation: (a) the conventional frame, (b) the expanded frame, and (c) the alternative frame.

In this analysis, we combined two frameworks to compare different theoretical perspectives on evaluation. We describe these frameworks and how they are used in further detail in the evaluation taxonomy section. The three AES competency domains categorised in these taxonomies are: Domain 3 Theoretical foundations, Domain 4 Attention to culture, stakeholders, and context, and Domain 5 Research methods and systematic inquiry. We selected only these three domains as they are specific to the practice of evaluation and are theoretically oriented, while the other domains emphasise atheoretical knowledge or interpersonal skills. In the following sections, we cover the relevant literature and conceptual framework for our investigation, describe our methods and findings, and discuss the implications of the results and potential future uses of this framework for deepening understanding of evaluation competencies.

Theoretical framework

In this section, we provide a historical perspective on the development of competency frameworks and their uses. Guided by this narrative, we justify the choice of the Australian Evaluation Society’s competency framework as a valuable case study. We conclude this section by introducing the conceptual frameworks used for this investigation and their inherent limitations.

The development and uses of competency frameworks

Evaluator competency frameworks are normative written documents that systematically compile a set of competencies to inform the public on the roles of the evaluator and practice of evaluation. According to Diaz et al. (2020), competencies represent the skills, knowledge, and attitudes necessary to successfully complete tasks in a job – the job of program evaluation in this case. They echo Wilcox and King (2013) and King and Stevahn (2015) when explaining that competencies are different from the concept of competence. While competencies are the observable skills or attitudes necessary to complete specific tasks in a job, competence is the general quality of being competent for that job (Diaz et al., 2020).

Competency frameworks are generally developed through a mix of literature review, expert panels, consultations with practitioners, and comparison to evaluation standards and other institutional frameworks (Garcia & Stevahn, 2020). During their development phase, these frameworks try to answer the questions: what do evaluators do? And what should good evaluators know and be able to do? However, the frameworks are rarely appraised at a macro level to decipher what kind of evaluation they promote.

The literature on competency frameworks started in the 1980s with idiosyncratic lists of competencies developed by specific authors (Fathy, 1980; Ingle & Klauss, 1980; Kirkhart, 1981; Scriven, 1996). While these remain useful documents, more comprehensive competency frameworks were developed in the 2000s. For example, the Essential Competencies for Professional Evaluators, first developed in 2001 by King et al. and revised in 2005 by Stevahn et al., became a reference point for other competency frameworks around the world. This framework was later revised and endorsed by the American Evaluation Association in 2018 (AEA, 2018).

As a trailblazer in the world of competency frameworks, Stevahn et al.’s (2005) Essential Competencies for Professional Evaluators framework generated numerous discussions on its value and potential use. It has been used to compare the competencies developed during graduate education with those sought by evaluation employers (Dewey et al., 2008), and to assess the relevance of the framework’s competencies in other cultural contexts such as Taiwan (Lee et al., 2012, 2013). Galport and Azzam (2017) and Froncek et al. (2018) use the framework as an assessment tool for practitioners. In this way, the framework helps practitioners to determine which competencies they believe are important while identifying which domains they should develop further. Some authors focus on specific domains to understand what makes great evaluation managers (Baizerman, 2009), or how to improve evaluators’ reflexive practice (Smith et al., 2015). In contrast, other authors embrace the entire framework as a tool to define evaluation quality (Cooksy & Mark, 2012).

Following the American impetus, multiple voluntary organisations for professional evaluation around the world developed their own sets of competencies. These include Aotearoa New Zealand, Australia, South Africa, the UK, Europe, and the International Development Evaluation Association, to cite a few (King & Stevahn, 2015; Kuji-Shikatani, 2015). Canada, Japan, and Thailand also use their frameworks for evaluator credentialing, which demonstrates the link between competencies and professionalisation of the field (King & Stevahn, 2015; Kuji-Shikatani, 2015). Nonetheless, compared to the North American competency frameworks, other frameworks have not generated as much literature.

Additionally, while the literature reviewed mostly addresses how the frameworks were developed and how they are used, it lacks a thorough analysis of their underlying assumptions. As such, this article seeks to expand on the competency framework literature by filling two gaps. First, it investigates the AES framework, which has rarely been discussed so far. Second, it attempts to draw out the implicit philosophical and theoretical assumptions that underpin this framework. By making these assumptions explicit, this article reveals the theoretical orientation of the AES framework, thus highlighting its limits.

Competency frameworks are increasingly used as a basis for evaluator education. Examples of this competency-based approach can be found in special issues of the Canadian Journal of Program Evaluation (Bourgeois, 2021) and Evaluation and Program Planning (Gullickson et al., 2021). With this approach, educators design professional-development materials and graduate studies in line with the competencies. Following this trend, the University of Minnesota recently used the AEA competency framework to redesign its Master’s and PhD in Evaluation Studies (LaVelle & Johnson, 2022).

Since competency frameworks are used for evaluator education, it is imperative to clearly understand how they conceptualise evaluation practice, because each conceptualisation has limits. Understanding this would also be valuable for practitioners, as it situates the competency framework within a frame or paradigm. It therefore softens the normativity of the framework and invites practitioners to reflect on other competencies that are, or could be, useful for their practice.

Consequently, this article attempts to make the underlying assumptions of the AES competency framework explicit to answer the following questions: how is evaluation conceptualised in the AES competency framework? What kind of evaluation does the AES promote through its competency framework? In the next sections, we introduce the AES framework and briefly describe the evaluation taxonomies used for this analysis.

The AES Evaluators’ Professional Learning Competency Framework

We chose the AES competencies for our analysis as they were recently refined for the Learn Evaluation Assessment Portal and are likely to undergo a full revision in the next few years. The AES framework comprises 96 competencies divided into 7 domains. The different domains are numbered from 1 to 7 as follows (Gullickson et al., 2024, this issue):

1) Evaluation activities
2) Evaluative attitude and professional practice
3) Theoretical foundations
4) Attention to culture, stakeholders, and context
5) Research methods and systematic inquiry
6) Evaluation project management
7) Interpersonal skills

While all domains of the framework are related to evaluation, we only analysed Domains 3, 4 and 5, as these are what make evaluation unique as a field. In contrast, Domain 1 is quite general, with broad statements regarding the foundations of evaluation and no particular theoretical orientation; Domain 2 mostly corresponds to desirable dispositions for the evaluator; and Domains 6 and 7 constitute more general skillsets that are useful for evaluation.

Taxonomies of evaluation practice

There are many taxonomies that classify the various ways of practicing and conceptualising evaluation. For this study, we have chosen the following two taxonomies:

• Schwandt and Gates’ (2021) Evaluation Frames
• Mertens and Wilson’s (2018) Evaluation Paradigms

We refer to these as evaluation frameworks and taxonomies interchangeably hereafter. Before delving into the content of these taxonomies, we use Arbour’s (2020) ‘framework clarification’ tool to clarify their characteristics and justify our choice.

Arbour (2020) defines frameworks as sets of ideas that provide a perspective on a particular object, and proposes four dimensions to understand them. These dimensions are: (1) the type of ideas (for example, does the framework clarify concepts or determine causal theories?); (2) the object (what does the framework refer to?); (3) the type of claims made about this object (are they positive or normative?); and (4) an optional dimension, the institutional character of the framework (is it part of a norm or regulation for behaviours in the field of evaluation?). We do not address the fourth dimension as the taxonomies from Mertens and Wilson (2018) and Schwandt and Gates (2021) are not institutionalised frameworks.

As frameworks, Schwandt and Gates’ (2021) evaluation frames and Mertens and Wilson’s (2018) evaluation paradigms share similar characteristics on the first and second dimensions; however, they differ on the third. Both are conceptual frameworks (1), which means that they provide information on a set of concepts related to a particular object. In this case, the object (2) concerns the various ways in which evaluation practice is conceptualised and enacted. They categorise how evaluation is thought about and practiced by various actors in different historical and geographical contexts. It is important to recognise that these are not theoretical frameworks, as they do not posit any causal links between different propositions related to evaluation practice. Instead, they classify different types of evaluation practice.

These two conceptual frameworks on evaluation practice make different types of claims (3). While Mertens and Wilson (2018) provide positive claims, Schwandt and Gates (2021) make normative claims. On one hand, Mertens and Wilson (2018) depict the different trends in evaluation practice using the concept of research paradigms. They lay out the four paradigms they have identified, their characteristics, and place various theorists and theories within these paradigms. Although the authors are themselves situated in the transformative paradigm, they do not suggest that it is the one paradigm that everyone should follow. They simply state that there are different approaches to evaluation practice.

On the other hand, Schwandt and Gates (2021) depict three frames of evaluation and encourage readers to practice evaluation within a particular one, the alternative frame. This is a normative claim, as they justify why it is important to move evaluation practice in that direction, rather than practicing within the other evaluation frames.

Evaluation frames

Schwandt and Gates’ (2021) evaluation frames taxonomy is innovative due to the language and perspectives it uses to describe the types of evaluation practice. The authors use Martin Rein’s definition to clarify the concept of frame: ‘A frame is a way to understand the things we say and see and act on in the world. It consists of a structure of thought of evidence, of action, and of interests and of values’ (Martin Rein, in Schwandt & Gates, 2021, p. 67). The ideas present in this framework have currency in Australian evaluation communities of practice, as evaluation experts often discuss this framework with the aim to enhance their practice (The University of Melbourne, personal communication, March 7, 2022).

The three evaluation frames depicted by Schwandt and Gates (2021) are used for the first part of the analysis. First, they outline two broad types of evaluation that are concurrently happening: evaluations undertaken within the conventional frame, and those within the expanded frame. Second, they depict an emerging alternative frame and encourage evaluators to shift their practice towards the principles of that alternative frame.

The conventional frame corresponds to evaluations driven by technical rationality and the idea of performance measurement. In the conventional frame, evaluators generally evaluate the outcomes of a program as per the commissioner’s requirements while relying heavily on experimental methods. The issues of values, knowledge, and power are not addressed in this frame, as the goal is to measure performance based on stated goals. As a result of ongoing criticism of the limits of the conventional frame, evaluators developed the expanded frame.

In the expanded frame, evaluators start by questioning the rationale for the program, the stated goals, and the way the program has been designed to address the issue identified. Evaluators in this frame appraise the role of values and knowledge and consider the benefits of a program for both upstream and downstream stakeholders. This means that not only decision-makers are considered, but the program beneficiaries are also consulted during the evaluation.

In addition to the conventional and expanded frames, an alternative frame is emerging. In the alternative frame, evaluators push the boundaries by advocating for social justice and challenging the assumptions around the evaluand. This frame recognises that we live in an increasingly complex world, and that power relationships inherent in society are manifest in programs.

Evaluation in the alternative frame has multiple goals. First, it seeks to challenge the status quo and orient evaluation practice towards a morally committed social science. Second, it sees programs as part of complex systems with many moving parts. Third, it sees evaluation as a collaborative approach through which various stakeholders should be involved in order to change or transform the situation evaluated (Schwandt & Gates, 2021). Consequently, the authors encourage evaluators to incorporate different theoretical approaches in their practice, such as systems thinking, complexity science, boundary critique and Indigenous standpoints. Some would argue that the alternative frame is not new and that certain evaluators have been challenging evaluands’ assumptions for a while, but that is another debate. For this study, we use these three frames as reference.

Evaluation paradigms

Mertens and Wilson’s (2018) evaluation paradigms taxonomy expands on established evaluation taxonomies, such as Guba’s analysis of alternative paradigms, Pawson and Tilley’s (1997) paradigm taxonomy, and Christie and Alkin’s (2004) evaluation tree. They use Guba and Lincoln’s definition of paradigms, which are understood as metaphysical constructs characterised by specific philosophical assumptions (Mertens & Wilson, 2018). These philosophical assumptions are linked to ontology, epistemology, methodology, and axiology, and the authors address these four categories for each paradigm.

In their taxonomy, Mertens and Wilson (2018) present four evaluation paradigms and their associated concern. The postpositivist paradigm is concerned with methods, the pragmatist/neopragmatist paradigm is concerned with use, the constructivist paradigm with values, and the transformative paradigm with social justice. We refer to Mertens and Wilson’s (2018) evaluation paradigms in the second part of this analysis.

Limitations of the frameworks

Although they may be adequate for their original purpose, each framework presents limitations for this study. The description of evaluation frames lacks the consistency of the evaluation paradigms, as Schwandt and Gates (2021) do not address the various aspects of methodology, ontology, axiology, and epistemology for each frame. This leads to inconsistencies, which have tangible implications for the results of the study.

When thinking critically about the AES competency framework, it was important to invoke different taxonomies. Schwandt and Gates’ (2021) evaluation frames and Mertens and Wilson’s (2018) evaluation paradigms are complementary in that regard. Schwandt and Gates’ (2021) normative claims open the discussion on what evaluation should become, while Mertens and Wilson (2018) allow us to apprehend the underlying philosophical and theoretical assumptions inherent in evaluation practice. Together they provide a perspective on the foundations of evaluation and its future.

In this theoretical section, we reviewed the literature on competency frameworks and discussed the two conceptual frameworks used for this analysis. The empirical section that follows explains the methods and results of this exploratory study.

Methods and findings

In this section, we discuss the methods and findings of the study.

Methods

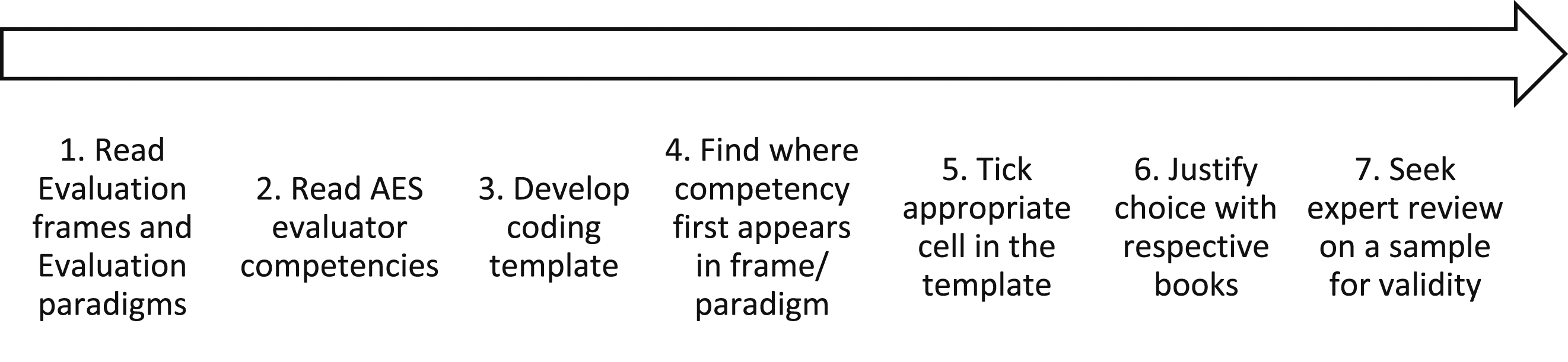

We used the two conceptual frameworks to categorise the AES competencies; the steps are mapped in Figure 1. First, we critically read Evaluating and valuing in social research by Schwandt and Gates (2021) and Program evaluation theory and practice: A comprehensive guide by Mertens and Wilson (2018). We also examined the updated AES competencies (Gullickson et al., 2024, this issue). Afterwards, we developed a coding template to classify the AES evaluator competencies across the evaluation frames and the evaluation paradigms. Only the following three domains were included in the classification:

• Domain 3 - Theoretical foundations
• Domain 4 - Attention to culture, stakeholders, and context
• Domain 5 - Research methods and systematic inquiry

Process map for the exploratory analysis of the AES competencies.

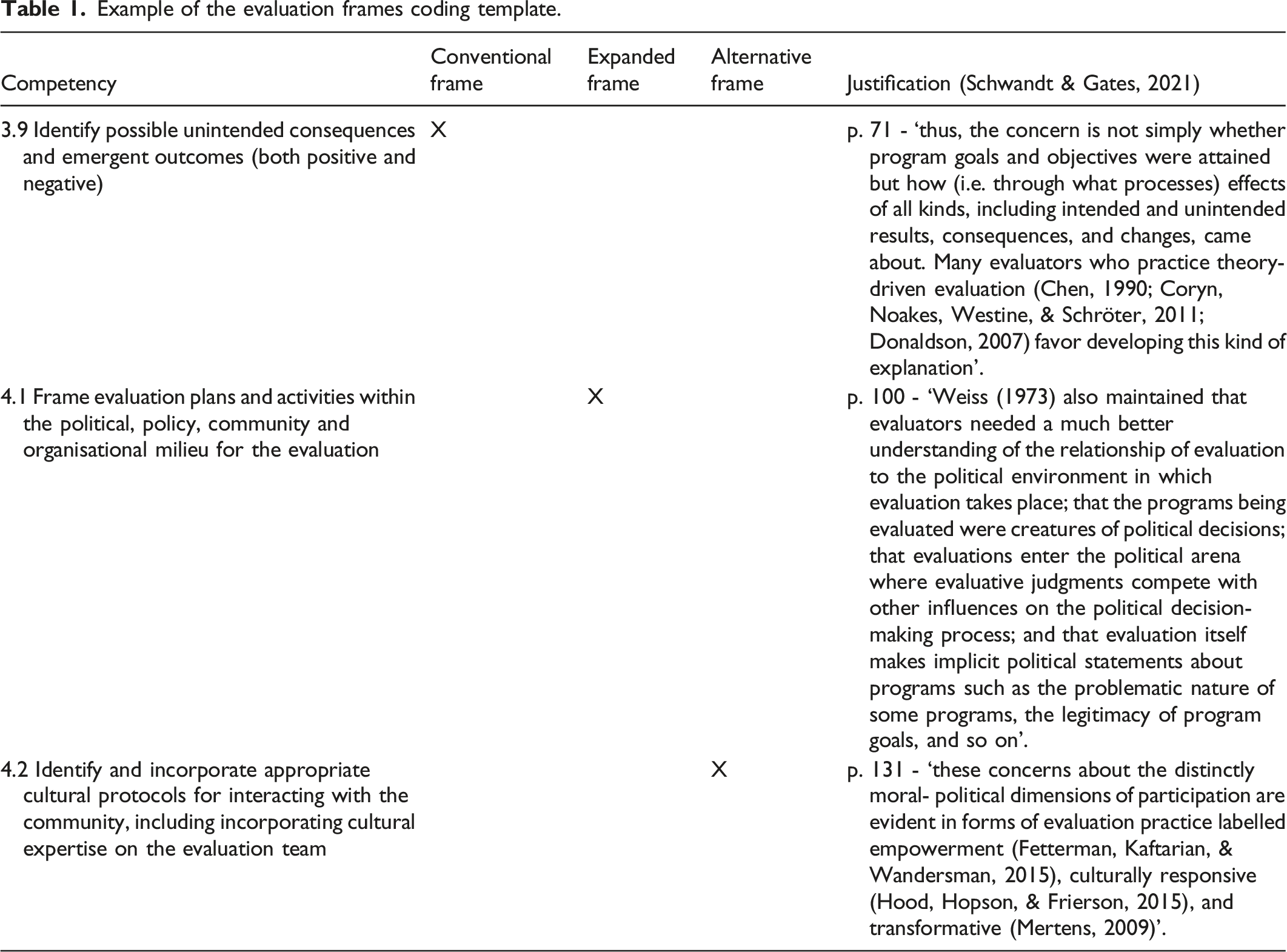

Example of the evaluation frames coding template.

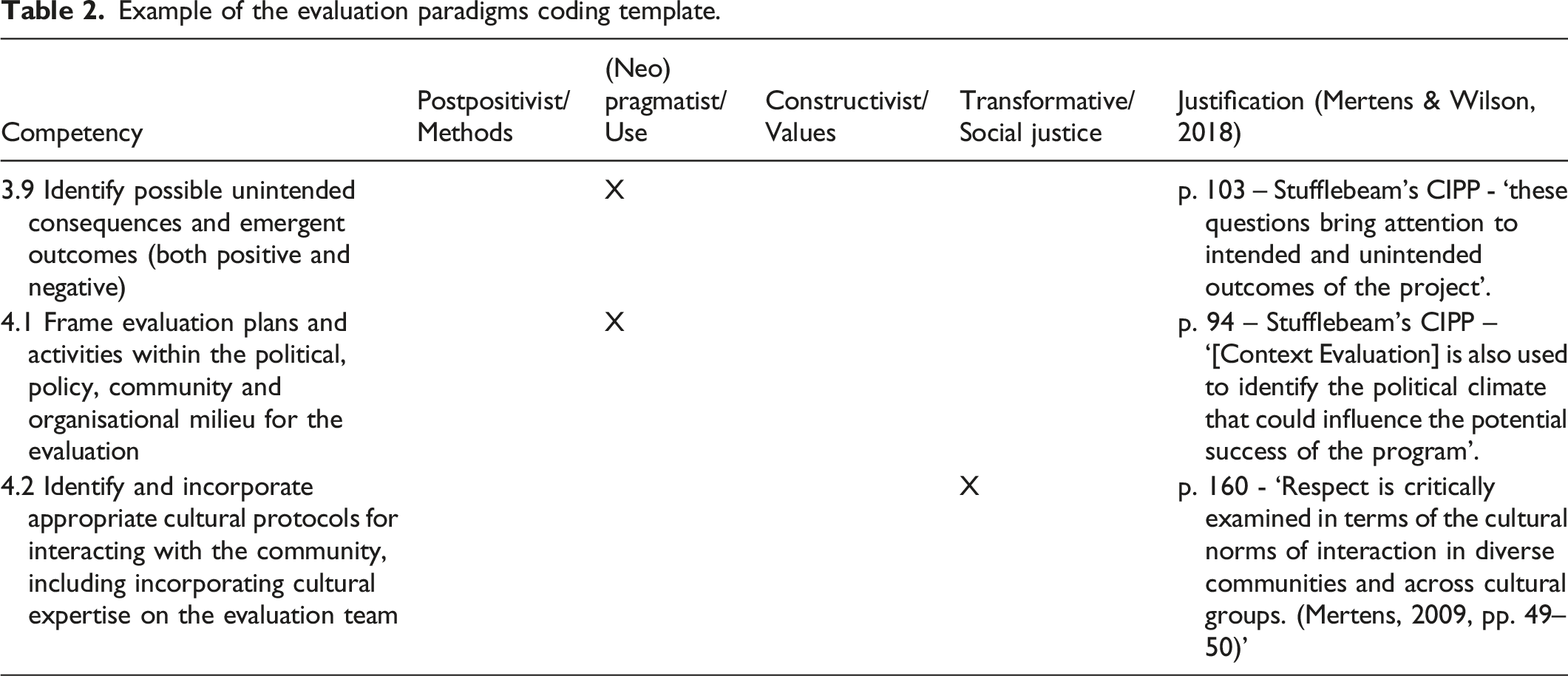

Example of the evaluation paradigms coding template.

The analysis consisted in categorising each competency in the frame or paradigm in which this competency first emerged. This point is crucial because the most recent frames and paradigms have built on the previous ones. In other words, the newer frames and paradigms incorporated previous theoretical, practical, and methodological approaches. Without following this process, most competencies would be categorised in all frames and paradigms, which would provide no useful information.
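
The first-emergence rule described above can be sketched in code. The following is a minimal illustration under stated assumptions, not the coding template used in this study: the ordering of frames follows the order in which Schwandt and Gates (2021) describe them, while the function name, the competency labels, and the sets of compatible frames assigned to them are hypothetical placeholders rather than study data.

```python
# Frames ordered from earliest to most recent, following the order in which
# Schwandt and Gates (2021) describe them.
FRAME_ORDER = ["conventional", "expanded", "alternative"]


def first_emerging_frame(compatible_frames):
    """Return the earliest frame among those a competency is compatible with.

    Newer frames incorporate older approaches, so a competency is often
    compatible with several frames. Coding it to the earliest one keeps the
    categories mutually exclusive.
    """
    for frame in FRAME_ORDER:
        if frame in compatible_frames:
            return frame
    raise ValueError("competency is not compatible with any known frame")


# Hypothetical coder judgements (illustrative only, not study data).
coder_judgements = {
    "competency A": {"conventional", "expanded", "alternative"},
    "competency B": {"expanded", "alternative"},
    "competency C": {"alternative"},
}

coded = {
    name: first_emerging_frame(frames)
    for name, frames in coder_judgements.items()
}
print(coded)
```

Without the rule, competency A in this sketch would be counted in all three frames. With it, each competency receives exactly one code. The same logic could apply to the paradigms, assuming the coder can justify an ordering for them.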

Results

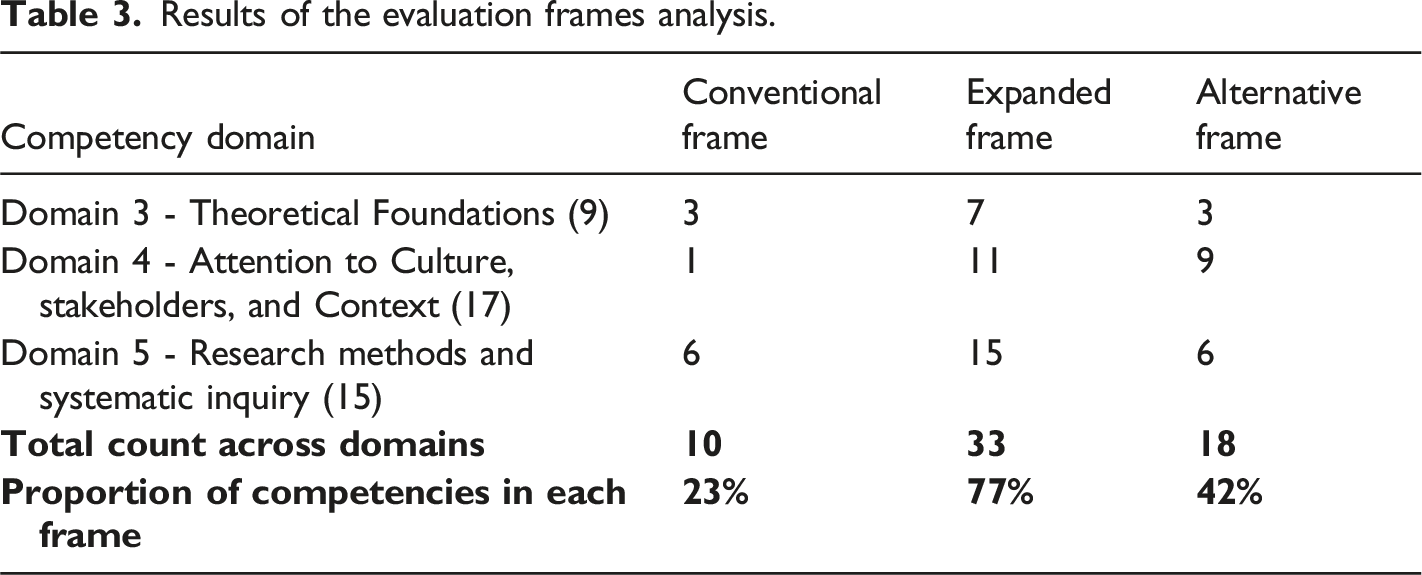

The three domains analysed account for a total of 43 competencies, divided as follows:

• 9 competencies in Domain 3 Theoretical foundations;
• 17 competencies in Domain 4 Attention to culture, stakeholders, and context; and
• 15 competencies in Domain 5 Research methods and systematic inquiry.

Results from the evaluation frames analysis

Results of the evaluation frames analysis.

All 15 competencies from Domain 5 can be found in the expanded frame. This result is surprising given that systematic inquiry and objective knowledge were features of the conventional frame. As such, one would expect to find most of the Domain 5 Research methods and systematic inquiry competencies in the conventional frame. However, this result is linked to a limitation of the evaluation frames framework.

In the conventional frame, the authors mostly discuss the philosophy of practice. While conventional evaluation is driven by technical rationality, research methods are not discussed. Consequently, Domain 5 Research methods and systematic inquiry returns incorrect results in this analysis.

Meanwhile, the alternative frame gathered 9 out of 17 competencies from Domain 4 Attention to culture, stakeholders, and context. This shows that the AES does promote an appraisal of culture and power relationships in evaluation. However, there are currently no mentions of conceptual and theoretical tools specific to the alternative frame such as boundary critique, systems thinking and complexity science, or Indigenous standpoints.

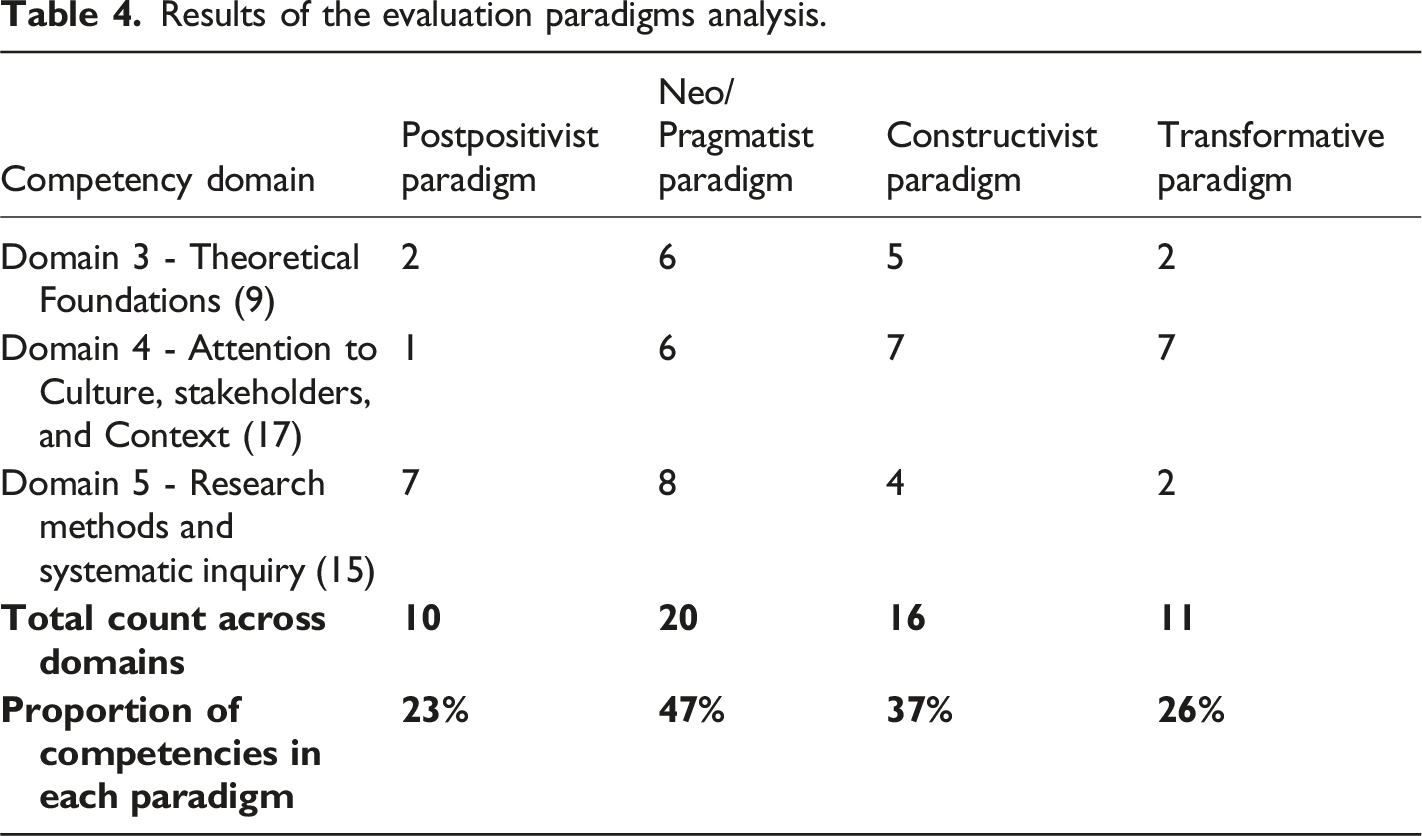

Results from the evaluation paradigms analysis

Results of the evaluation paradigms analysis.

Most of the competencies present in the postpositivist paradigm are situated in Domain 5 Research methods and systematic inquiry (7 out of 10), while most of the competencies in the transformative paradigm are situated in Domain 4 Attention to culture, stakeholders, and context (7 out of 11). These results are coherent, given that the postpositivist paradigm places a strong emphasis on research methods, while the transformative paradigm focuses on aspects of culture, stakeholder responsiveness, and power relations.

However, there is one caveat regarding the constructivist paradigm. Three of the competencies that are directly linked to Scriven’s contributions are classified in the constructivist paradigm, in accordance with the authors’ taxonomy. These competencies are:

3.1 Understand the purpose of evaluation (e.g. making judgements of merit, worth, and/or significance).
3.4 Use the logic of evaluation, that is, identifying criteria of merit, setting performance standards (thresholds), choosing measures, synthesising evaluative judgements using synthesis methodologies which bring together facts and values for reaching evaluative judgements.
3.8 Undertake evaluative actions, for example, grade, rate, score, rank, apportion, compare, or attribute to establish merit or worth.

Classifying these competencies in the constructivist paradigm is somewhat incorrect, as we would argue that Scriven is more aligned to the pragmatist/neopragmatist paradigm. Once again, a limitation of the framework has a direct impact on the results of this analysis.

This exploratory study reveals that the AES promotes evaluation practice that aligns mostly with the expanded frame (Schwandt & Gates, 2021) and the pragmatist/neopragmatist paradigm (Mertens & Wilson, 2018). While the analysis tool used could be refined to provide more accurate results, the results of these two separate analyses give us a good idea of the AES’s stance regarding evaluation practice.

Discussion

In this section, we discuss what is currently missing from the framework by noting the lack of representation of Australian First Nations. We also suggest highlighting the transformative power of evaluation to guide evaluators towards social justice. Finally, we encourage readers to enhance the tool developed during this project with the aim of conducting further research in evaluation theory.

Competencies and the AES competency framework

While the original American competency framework and its offspring have garnered interest regarding their potential uses, the AES framework has rarely been addressed in the literature. Additionally, the literature reviewed lacks an in-depth exploration of the theories underpinning competency frameworks. This is an issue for three reasons.

First, competency frameworks are written documents that are not updated regularly; they are mostly static. For example, the AES competency framework was initially published in 2013 and was refined for the self-assessment process in 2020 (Gullickson et al., 2024, this issue). In a fast-changing world, seven years is a considerable amount of time. During this time, contexts of practice have changed within AES, including the launch of the First Nations Cultural Safety Framework (Gollan & Stacey, 2021) and accompanying workshops (AES, n.d.). The AES Systems Evaluation Special Interest Group and new ways of approaching evaluation have emerged (Schwandt & Gates, 2021). Therefore, it is important to outline the theoretical grounding of a competency framework at a certain point in time, as this orientation is contextual.

Second, the rise of the competency-based approach reveals that educators use these frameworks to develop educational material (LaVelle & Galport, 2020; LaVelle & Johnson, 2022; Poth et al., 2020; Poth & Searle, 2021). Clarity about how the competencies conceptualise evaluation is essential, as it underpins the knowledge and skills they prioritise, and the resources developed to support learning. Lack of clarity may lead those teaching and learning evaluation to inadvertently develop evaluators that perpetuate postcolonial and other contextually inappropriate practices.

Third, the normative nature of these frameworks undermines the diversity of knowledge that evaluation practitioners bring to their practice. As such, outlining that the AES framework follows a particular theoretical stance softens its normativity and highlights its limits. Practitioners and educators should refer to this framework with a critical eye to avoid limiting themselves to a static set of competencies.

The findings of this exploratory study reveal that the AES framework conceptualises evaluation through a pragmatic lens, which fits into the expanded frame of evaluation. It is comforting to see that the AES does not focus on the postpositivist paradigm and conventional frame, as these have garnered substantial criticism (Mertens & Wilson, 2018; Schwandt & Gates, 2021). Nonetheless, in the Australian context, one might wish to see further emphasis on the alternative frame and transformative paradigm to address the postcolonial structures affecting Aboriginal and Torres Strait Islander peoples, and other minority groups. The pragmatic paradigm and expanded frame were historically developed in North America in the 1970s and 1980s (Mertens & Wilson, 2018; Schwandt & Gates, 2021). In this context, the concept of decolonisation was not at the forefront of evaluation practice. As such, endorsing a normative framework that aligns with these conceptualisations of evaluation could perpetuate practices of cultural domination and oppression.

The AES competencies do not currently fulfil Schwandt and Gates’ (2021) request to move towards a morally committed social science. However, whether the AES wishes to move in that direction is up for debate. Aside from the emphasis on values and social change, the alternative frame provides elaborate conceptual tools to assess evaluands. Therefore, it might still be relevant to integrate the concepts of boundary critique, systems thinking and complexity science within the competency framework, as these may constitute useful knowledge for evaluation practice.

We hope this analysis generates discussions around the theoretical positioning of the AES framework. Other voluntary organisations for professional evaluation such as the Aotearoa New Zealand Evaluation Association (ANZEA) are clearer in terms of their conceptual underpinnings. With a strong focus on the Treaty of Waitangi, the ANZEA competency framework leans towards the alternative frame and transformative paradigm (ANZEA, 2011). However, with the Treaty of Waitangi, Māori people and culture are more politically represented in Aotearoa New Zealand than Aboriginal and Torres Strait Islander peoples and cultures are in Australia. As such, the AES and ANZEA competency frameworks seem to be reflective of their political context.

Considering this, it is evident that the AES competency framework maintains the status quo on that question, rather than pushing for a better representation of Australian Indigenous perspectives in evaluation. The concepts of Indigenous standpoints and Aboriginal and Torres Strait Islander cultures are yet to be mentioned explicitly in the AES competency framework. While Domain 4 Attention to culture, stakeholders, and context refers to cultural aspects, the specificities of Australian First Nations groups are obscured by the broad language on culture. In this context, culture could mean anything, from an organisational point of view to an industry perspective. Practising evaluators (and those who teach evaluation) need to acknowledge these limits when reflecting on their teaching and evaluation skills and knowledge using this framework. As such, authors who situate themselves in the alternative frame and transformative paradigm might encourage the AES to include competencies on how Australian First Nations cultures and peoples are represented and catered for in evaluation.

The investigation of the theoretical underpinnings and limitations of the AES competency framework can be useful for evaluation practitioners, educators, and commissioners who refer to it. Not only does it clarify where the AES competency framework stands, it also shows that there are still missing areas relevant to the Australian context. This information can support the AES in revising its competencies and provide those engaged in evaluation capacity building with a framework for considering which knowledge and skills are prioritised in their work.

Refining the analysis tool for future research

The analysis tool used for this exploratory study would require improvements to be valuable in other contexts. While it was necessary to invoke two taxonomies for this analysis, using two separate frameworks may not be sustainable for future research. Although we have used Schwandt and Gates’ (2021) and Mertens and Wilson’s (2018) taxonomies, others could be used. A future analysis tool could use a blend of taxonomies, aiming for a balance of perspectives and the ability to code accurately. Future research could focus on building a robust and valid tool using this study as a reference point, and engage critically with additional taxonomies. The research would need to meticulously document how the tool is created, the choices that underpin it, and its appropriate uses.

Conclusion

Our analysis highlights the theoretical and philosophical underpinnings of the AES Evaluators’ Professional Learning Competency Framework for the first time. The results of this exploratory study reveal that the AES competency framework’s conceptualisation of evaluation aligns with Schwandt and Gates’ (2021) expanded frame and Mertens and Wilson’s (2018) pragmatist/neopragmatist paradigm. While the AES competency framework comprises elements of the alternative frame and transformative paradigm, it does not advocate for using evaluation as a way to promote social justice. Moreover, despite encouraging cultural understanding and appropriateness, the refined version of the AES competency framework fails to mention First Nations peoples and cultures, which are important characteristics of the Australian context. Considering this, the article seeks to initiate discussions about the current underpinnings of the AES competencies, and whether these will remain appropriate in the future.

There are, however, limitations to this study. Since the analysis relies on evaluation taxonomies that were not designed for this purpose, the results have room for varied interpretation. Nonetheless, this analysis provides a novel and important perspective for understanding competency frameworks. Despite the challenges associated with creating a robust analysis tool for similar research in the future, using this type of approach could yield valuable information for the field of evaluation. Not only could other competency frameworks go through a rigorous inspection, but education materials, job postings, or requests for proposals could also be examined. Given the wide array of definitions and conceptualisations of evaluation (Gullickson, 2020), we would argue that analysing evaluation materials with such a tool could bring clarity on the various perspectives in the field.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.