Abstract

Realist evaluation is a theory-based approach to evaluation which examines what works in what respects and to what extent, for whom, in what contexts and how. It focuses on identifying underlying mechanisms which cause outcomes and the contextual factors necessary for their operation. Despite realist evaluation being ‘agnostic’ about methods, qualitative approaches have been heavily favoured in practice. This paper argues that the use of surveys can considerably strengthen standard approaches to realist analysis while still being consistent with the philosophical underpinnings of realist evaluation. It provides examples drawn from various research and evaluation projects. The paper demonstrates that when program theory is well-developed, quantitative data collected through surveys can be used to examine all three elements of realist hypothesis testing: contexts, mechanisms and outcomes. The paper concludes with five principles for the design and analysis of survey data in realist evaluations.

What we already know

• Realist evaluation was intended to adopt primarily mixed methods but in practice most frequently uses qualitative research techniques.
• Realist evaluation is often represented as being ‘agnostic’ about the particular methods used in any study, but there have been exceptions to this since the seminal text

Original contribution to theory and/or practice

• The article identifies the relative infrequency of surveys in realist evaluation and common problems with their use.
• The article demonstrates, with examples, how surveys can be used in realist evaluation and provides five principles for their use.

Introduction

Realist evaluation is based on a realist philosophy of science, which assumes that underlying and usually invisible mechanisms cause outcomes, while features of context determine which mechanisms operate (Bhaskar, 1975; Pawson & Tilley, 1997). Realist evaluation is often adopted for complex interventions where outcomes differ across contexts or participant groups and is usually framed around the basic realist question of what works, for whom, in what contexts, how and why (Pawson & Tilley, 1997). Realist hypotheses usually take the form of Context-Mechanism-Outcome (CMO) propositions or some variant of this heuristic.

It is widely supposed that realist evaluation is ‘agnostic’ about methods. In their seminal text,

This article takes up the point about tailoring the choice of methods. It accepts that methods should be tailored to the ‘exact form of hypotheses developed earlier’: that is, that methods should provide valid evidence for part(s) of the theory being tested. It goes one step further by arguing that methods should not contradict the primary assumptions of realism – generative causation, context dependence, open systems and so forth (Westhorp, 2014) – or ignore the implications of those methods for the nature of findings. Methods in realist evaluation should not only be fit for purpose in the general sense; they should be ‘fit for

While there is guidance on realist interviewing (Manzano, 2016; O’Rourke et al., 2022; Westhorp & Manzano, 2017), there is almost no guidance on constructing or using surveys to be fit for realist purpose. This article therefore seeks to provide some initial guidance on this issue and thereby instigate wider discussion of the use of surveys in realist evaluation. It provides examples drawn from various research and evaluation projects and demonstrates how survey findings were relevant both for realist analysis and for policy or practice.

For evaluation in general, surveys offer several advantages compared to interviews and focus groups. These include obtaining information from a larger number of participants and getting responses to more questions, allowing a broad range of data to be collected in a relatively short period of time. Surveys can be very cost-effective and (subject to technology) can be accessible for participants in hard-to-reach areas. Statistical techniques can estimate the strength of an effect or association and assess whether it is statistically significant, that is, unlikely to be due to chance alone. Surveys can also make it easier for participants to express controversial views, whereas social pressures in interviews or focus groups may dissuade open expression. Finally, conducting surveys at different intervals can identify changes over time, including in outcomes of interest. Of course, qualitative methods have their own advantages compared to surveys, which is one of the reasons that mixed methods are so often appropriate, and recommended (see Pawson & Manzano-Santaella, 2012), for realist evaluations.

Two notes on terminology are required. Firstly, the term ‘realist evaluation’ will be used throughout the article, but the principles apply equally to any form of realist primary research. Secondly, there is a technical difference between the terms ‘survey’ and ‘questionnaire’: the latter refers only to the instrument and the former to the whole process of collecting and analysing data. For ease of reference, the term ‘survey’ will be used from here forward, unless the reference is specifically to the instrument itself.

In the remainder of this article, we review the use of mixed methods in realist evaluation in theory and in practice; provide examples of uses to which surveys have been put in realist evaluation; consider issues when undertaking statistical analysis of quantitative data from a realist perspective; and provide five principles for the use of surveys in realist evaluation.

Mixed methods in realist evaluation

Realist evaluation was originally conceived as adopting mixed methods (Pawson & Manzano-Santaella, 2012; Pawson & Tilley, 1997):

As a first approximation one can say that mining mechanisms requires qualitative evidence, observing outcomes is quantitative, and that canvassing contexts requires comparative and sometimes historical data (Pawson & Manzano-Santaella, 2012, p. 182).

As a second approximation, we now add that whether qualitative or quantitative methods are required depends as much on the stage of theory development as it does on whether the data relate to context, mechanism or outcome.

Consider the use of primary data in developing initial theory. One cannot collect quantitative data on outcomes if the nature of outcomes is not yet known. This might be the case in pilot programs, where some intended outcomes will likely be anticipated but others not; or in community development programs where community members will determine what issues to address or indeed whether the program will be issues focused. For cases like this, qualitative data are required to identify the nature of outcomes. Subsequent data collection may revert to quantitative methods to measure the extent and distribution of those outcomes. However, realists expect variation in the type of outcomes as well as the extent, so some qualitative data to capture novel outcomes will remain useful.

Similarly, comparative data cannot be collected about contexts if the appropriate categories are not yet theorised or about mechanisms if those have not yet been hypothesised. That is, it is very likely that qualitative data will be required for all of context, mechanism and outcome during initial theory development. If, on the other hand, the program theory is well developed, then quantitative data can be used for all three categories, as will be demonstrated below.

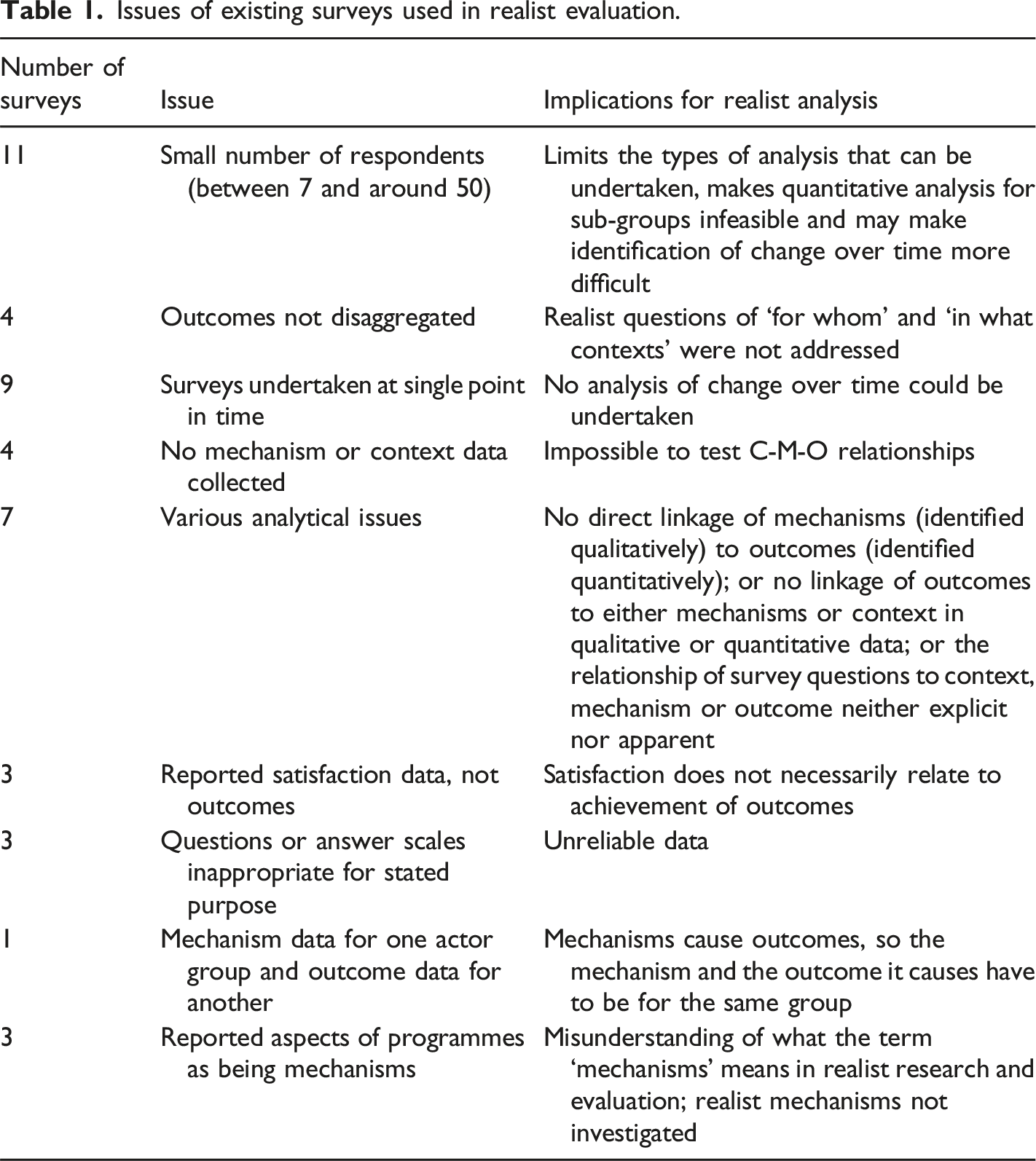

Issues of existing surveys used in realist evaluation.

There were also weaknesses in reporting (although restrictions on article length may have played a role for some of them). Quantitative data were inadequately reported in seven articles. This included inadequate identification of which data were quantitative and which were qualitative, no information being provided on response rates, no description of how quantitative data contributed to analysis, or survey findings not being reported. Another five articles provided insufficient information about survey design, questions or implementation, meaning that the quality of the process and potentially the data themselves could not be assessed.

This litany of failings may suggest that all is doom and gloom in the use of surveys in realist evaluation. This is not in fact the case. Four articles reported very specific uses of surveys that did not contribute directly to realist hypothesis testing but were, nevertheless, fit for purpose (establishing sub-groups, identifying whether a particular technology had been used, baseline assessment of resistance to an intervention and using survey outcomes to design interview questions). Some used pre–post survey designs appropriately, and five used specific survey or quantitative methods (Q-sort, social network analysis, qualitative comparative analysis and existing health instruments). Another five made use of relatively advanced statistical methods, although issues were identified with some of these as well. One used structural equation modelling to analyse relationships between mechanisms and outcomes but defined context simply as three different intervention sites: this is a misunderstanding of the realist use of the term ‘context’. Another similarly identified the setting of the intervention as the context and identified an activity within the intervention as a mechanism. Another analysed relationships between context and outcome and mechanism and outcome but did not examine the relationship between context and mechanism. Two used regression analysis appropriately (although this too can be seen as problematic – see the discussion of statistical analysis in realist evaluation, below).

Additional searches were conducted to identify whether guidance for the use of surveys in realist evaluation had previously been published. Two searches were conducted using the general search facility of a university library (i.e. not restricted to particular databases), with the terms ‘realist evaluation’ OR ‘realistic evaluation’ AND ‘survey’ OR ‘questionnaire’ covering the period 1997 to 2021. To manage time constraints, only the first 100 items from each search were retained for title and abstract review. Protocols, meta-evaluations, realist reviews, book reviews and items that did not report a realist evaluation or for which no PDF or URL was available were excluded. A total of 128 items were retained. Only one (Schoonenboom, 2017) provided any methodological guidance or focused demonstration of the use of realist surveys, and that assumed a single, very narrow purpose (testing correlational models).

The use and benefits of surveys in realist evaluations

Surveys can serve multiple purposes in realist evaluation. They can be used in relation to a single theory component, for example, quantifying the nature and extent of change – or perceptions of change – over time. More usefully, with information about context and outcome, data can be disaggregated to examine outcomes for different groups or contexts, testing hypotheses about for whom or in what contexts programs ‘should’ work. Surveys can also be used to refine the understanding of mechanisms and how they work.

In large enough data sets, surveys can also be used to assess the distribution of Context-Mechanism-Outcome configurations. For the program manager or policy maker, knowing the

The sub-sections below provide examples of uses of surveys in realist evaluations.

Investigating context using surveys

Greenhalgh and Manzano (2021) undertook a systematic review and identified that the term ‘context’ is used in two main ways in realist work: ‘1) context conceptualised as tangible, fixed, observable features that trigger mechanisms 2) context conceptualised as relational and dynamic features that shape the mechanisms through which the intervention works’ (p. 2).

Earlier, Pawson and Tilley (2004) had suggested that context may refer to ‘… • the • the • the • the

Characteristics of

The Pacific Community is a research and development organisation owned by its 27 member countries and territories. Amongst other things, it provides and commissions capacity building in Pacific Island countries and territories using training workshops, mentoring, coaching, peer-to-peer exchanges, attachments, extension visits, micro-qualifications, technical assistance and multi-modal programs. In 2019, the Pacific Community commissioned a realist evaluation to understand how, for whom and in what contexts particular outcomes were achieved (RREALI, 2020).

Initial program theory was developed for several of the modalities, including contextual factors, many of which were program implementation factors. These included factors relating to trainers (e.g. technical expertise and whether from a similar cultural background to participants), participants (e.g. prior qualifications), topics (e.g. their cultural sensitivity and occupational requirements), duration (e.g. up to a week for workshops and up to four weeks for peer exchanges) and accreditation requirements.

A purpose-designed questionnaire was developed. It included the contextual factors just described and sub-group indicators for training participants (gender, age group, type of employer and region within the Pacific). This meant that outcomes could be disaggregated by sub-groups and contexts. The questionnaire was distributed to all participants in capacity building over a 12-month period, receiving 365 responses (a response rate of 23.6%, just over the 20% average for surveys).

Analysis identified the features of implementation that participants reported as contributing most strongly to their learning, and the features most and least likely to be reported in which modalities. So, for example, the element ‘Examples and exercises were immediately relevant to my situation’ was rated as the most important single feature for participants’ learning. However, it was least likely to be reported as being ‘true’ (i.e. having happened) and ‘important to my learning’ in workshops – the most common capacity building modality. The programming implications were clear: either increase the use of relevant examples in workshops or increase the frequency of other modalities which provided the most relevant examples. The Pacific Community has since reported using the findings to inform its designs for training and capability building, its new strategic plan, the design of the Terms of Reference for an independent institutional review and conversations with its members.

Sub-group analyses were run using analysis of variance tests (ANOVAs) or, where necessary, the non-parametric equivalent, the Kruskal–Wallis test. Differences in outcomes were identified, including for men and women (some outcomes were stronger for men), by age group (those aged 35–45 years were most confident to put new learning into practice) and by employer type (those in government and community service organisations were less likely to report leadership support for changed workplace practices after training). These tests are, by their very nature, linking two elements of program theory (context and outcome). These sub-groups had not been specifically addressed in the initial program theory and, by the time the quantitative analysis had identified the differences in outcomes, interviews were already completed. This meant that explanations for the differences were not developed or tested in the evaluation. However, having been identified, explanations could be hypothesised in the final report for future investigation.
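To make the logic of these sub-group tests concrete, the following is a minimal sketch of the two test statistics, written in plain Python. It is not the analysis code used in the evaluation: the function names, the age-group labels and the ratings are all hypothetical, and in practice a statistical package would also supply the p-values.

```python
from collections import Counter
from statistics import mean


def one_way_anova_f(groups):
    """Return the one-way ANOVA F statistic for a list of score lists."""
    scores = [x for g in groups for x in g]
    grand_mean = mean(scores)
    k, n = len(groups), len(scores)
    # Between-group variation: distance of each group mean from the grand mean.
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    # Within-group variation: spread of scores around their own group mean.
    ss_within = sum((x - mean(g)) ** 2 for g in groups for x in g)
    return (ss_between / (k - 1)) / (ss_within / (n - k))


def average_ranks(values):
    """Rank values from 1 to N, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # mean of the 1-based ranks i+1 .. j+1
        for idx in order[i:j + 1]:
            ranks[idx] = avg
        i = j + 1
    return ranks


def kruskal_wallis_h(groups):
    """Return the tie-corrected Kruskal-Wallis H statistic."""
    pooled = [x for g in groups for x in g]
    n = len(pooled)
    ranks = average_ranks(pooled)
    total, start = 0.0, 0
    for g in groups:
        rank_sum = sum(ranks[start:start + len(g)])
        total += rank_sum ** 2 / len(g)
        start += len(g)
    h = 12 / (n * (n + 1)) * total - 3 * (n + 1)
    # Rating-scale data contain many ties, so apply the standard correction.
    ties = sum(t ** 3 - t for t in Counter(pooled).values())
    return h / (1 - ties / (n ** 3 - n))


# Hypothetical 1-5 confidence ratings by age group (not evaluation data).
ratings_by_age_group = {
    "under 35": [3, 4, 3, 2, 4],
    "35-45": [5, 4, 5, 4, 5],
    "over 45": [3, 3, 4, 2, 3],
}
groups = list(ratings_by_age_group.values())
print("F =", round(one_way_anova_f(groups), 3))
print("H =", round(kruskal_wallis_h(groups), 3))
```

Both statistics compare a context variable (the sub-group) with an outcome variable (the rating). Neither says anything about the mechanism that produces the difference, which is why such results need to be explained through theory and further data.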

This example demonstrates that surveys can address multiple aspects of context, including aspects of program delivery (‘implementation’) and sub-group analysis (‘for whom’), and that realist surveys can identify the frequency and distribution of CMOs or their elements. The example also demonstrates that analysis can be stronger where more comprehensive theories are developed before data collection. Finally, it highlights the importance of sequencing qualitative and quantitative data collection strategies, where the timeframe of the evaluation allows. Surveys conducted before interviews can support sampling for interviews (in this case, for example, we might have sampled by gender, age group or employer type to further investigate the differences found). If surveys are conducted after interviews, they can be used to quantify aspects of theory.

Investigating mechanisms through surveys

Quantitative data can be used to investigate mechanisms, assuming those mechanisms have been adequately hypothesised in advance. Three examples are provided below, each demonstrating a different aspect of theory testing.

Example 1: Adjudicating between mechanisms

Pawson (2006) has emphasised that theory testing includes adjudicating between competing explanations.

Maths for Learning Inclusion was a South Australian education program conducted in 40 schools. It aimed to improve mathematics learning outcomes for disadvantaged students in Grades 3 to 5 of primary school.

During program theory development, one important discussion revolved around the primary mechanism(s) of change at teacher level: was it improved mathematical knowledge? Improved maths pedagogy? Better understandings of disadvantage and strategies for learning inclusion?

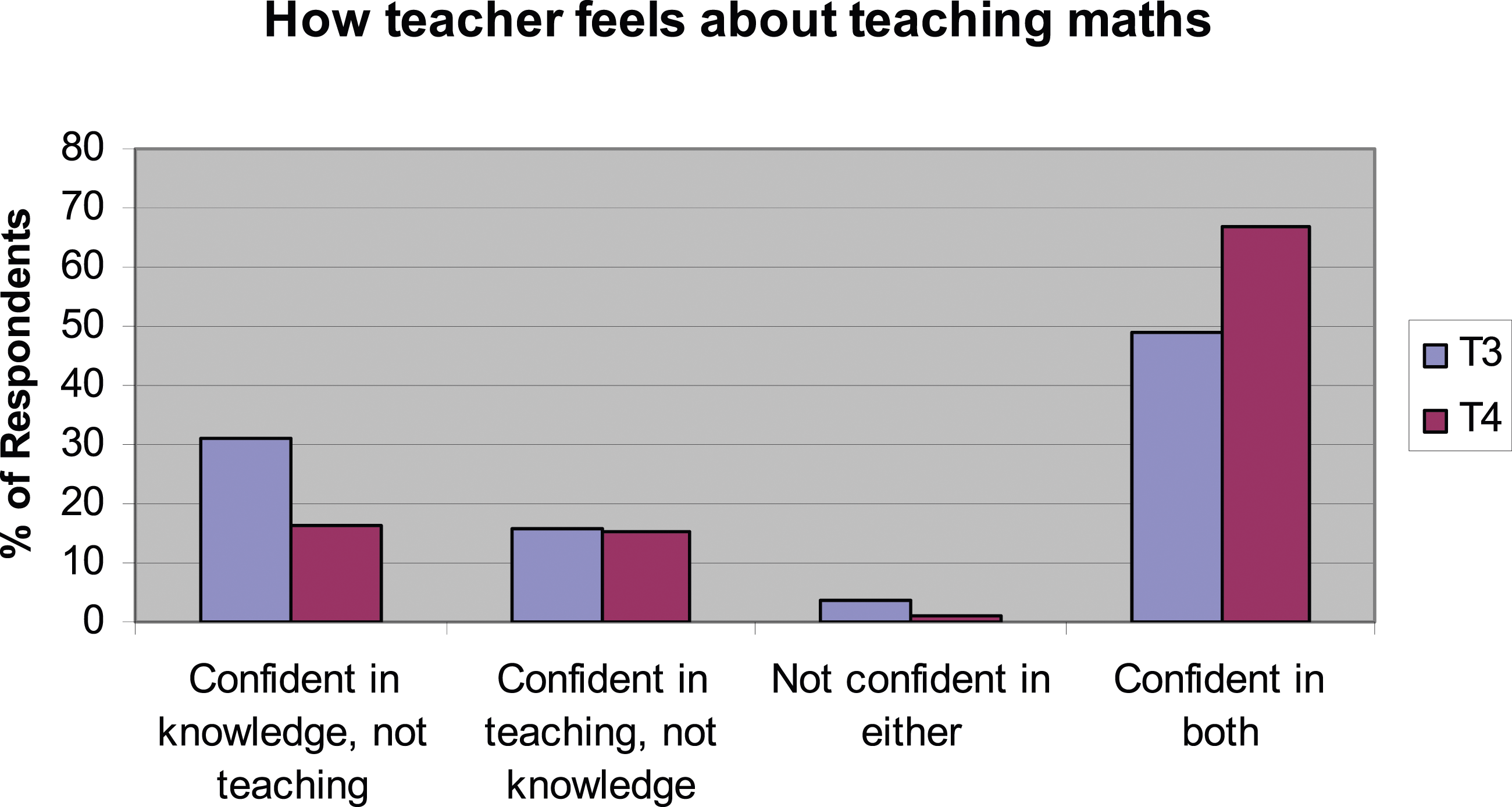

A teacher questionnaire was purpose-designed for the evaluation. One multiple-choice question, introduced in the second year of the program, proved particularly useful. The answer options were as follows:
1. I’m confident in my maths knowledge, but not in teaching mathematics in interesting and engaging ways.
2. I’m confident about my teaching of mathematics, but not my mathematics knowledge.
3. I’m not confident about either my mathematics knowledge or my mathematics teaching.
4. I’m confident about both my mathematics knowledge and my mathematics teaching.

From the beginning to the end of the second year of the program, there was a large (around 30%) and statistically significant (

Confidence in teaching/knowledge Year 2 of a Maths for Inclusive Learning program. *T3 = Time 3, the beginning of the second year of the program. T4 = Time 4, the end of the second year of the program.

This example demonstrates that in some cases, a single, relatively straightforward survey question can adjudicate between competing explanations, as well as providing useful data to improve programming.

Example 2: Intermediate outcomes as resources in mechanisms

The second example demonstrates a case where an intermediate outcome was also expected to operate as a ‘resource’ in a mechanism (using Pawson and Tilley’s construct of program mechanisms, i.e. an interaction between a resource provided by a program and the reasoning of program subjects). This is itself an example of the wider point made in this section: that surveys can be used to examine different aspects of mechanisms.

Citizen Voice and Action programs are used in international development to hold governments and service providers accountable to communities for the availability and quality of services in areas such as health and education. This is expected to contribute to improved quality of services and consequently service outcomes. Wahana Visi (World Vision Indonesia) conducted a Citizen Voice and Action program to improve the quality of health services for pregnant women, mothers and children up to the age of 5 years, over 3.5 years in 60 villages in three districts in eastern Indonesia.

Realist program theory was developed collaboratively in a multi-stakeholder workshop. One of the hypothesised mechanisms was the following:

Two surveys were conducted at baseline and annually thereafter, one for service users and one for village officials and health cadres (volunteers involved in local health service provision). Clear evidence of increased knowledge was obtained, but differences were identified in (a) the rates at which knowledge increased for the two groups, (b) the times at which increases in household knowledge occurred for local and district-level care and (c) for which services knowledge did and did not increase. All of these findings had implications for program and service improvement.

Example 3: Factor analysis for mechanisms

The third example (Westhorp, 2018) demonstrates how surveys can be used to investigate how elements of mechanisms fit together, as well as how mechanisms fire together to generate outcomes. Multiple mechanisms being required to generate outcomes is a standard expectation in critical realism and realist evaluation.

Pathways for Families was a two-generation project designed to improve child development outcomes for children under five, and health and life outcomes for their families. It was conducted in a disadvantaged area in southern Adelaide. Multiple agencies provided programs of different types within a single venue.

A post-program parent questionnaire was developed and used with five programs, each with a different program theory: an intensive, attachment theory-based parenting program for mothers and their focus child, which was expected to work through therapy, education and peer support; a less intensive, attachment theory-informed parenting program for fathers, which was expected to work through education and peer support; a ‘time out’ and nurturing program for mothers, which was expected to work through stress reduction and social support; and two play-group programs, which were expected to work through modelling of positive interactions between parents and their children as well as social support.

The section of the survey addressing the ‘reasoning’ component of mechanisms comprised 14 elements which had been identified through earlier interviews with program staff and participants or in the literature. The elements were introduced with a question stem of ‘This program helped me to: …’. The elements included ‘get back a sense of my own identity (not just my role as a parent)’, ‘believe that the choices I make can make a difference’ and ‘understand the messages my child is trying to communicate through his/her behaviour’. The answer scale was ‘This applies to me …

The data were analysed twice. The first treated each element as a mechanism in its own right. The second acknowledged that each element could be a component of a broader mechanism: supporting attachment, stress reduction, reduction of guilt and shame and increasing internal locus of control.

Factor analysis was conducted to determine whether the results reflected these four mechanisms. Principal component analysis using a Varimax rotation identified three underlying factors. The first factor included four elements related to understanding the child’s needs, feelings, perspectives and behavioural messages. This was supported by all three ‘locus of control’ items. A final item (feeling as though ‘I’m not the only one’) also loaded on this factor. This suggests a program mechanism (‘increased understanding of the child + enhanced internal locus of control’, which together one might term ‘increasing parental efficacy’) which may fire most strongly in a context of peer support.

The two ‘reflective capacity’ items loaded on a second factor, supported by two items relating to reduction of guilt, and less strongly by the locus of control items and a sense of regaining one’s identity. This suggests a ‘therapeutic’ mechanism, in which dealing with one’s own issues reduces guilt and contributes to increased internal locus of control.

The third factor related to stress reduction and was supported by two items related to guilt reduction and one related to choice-making. These findings enabled the program theory to be refined and, importantly, ‘lifted’ the 14 detailed items to a higher (‘middle’) level of abstraction, as is intended to enable portability and generalisation of realist program theory (Pawson & Tilley, 1997).

Importantly, this section of the post-program parent questionnaire was not intended to operate as a single

This has practical implications for the interpretation of the findings. It means that there is no reason to assume that the items that ‘fired together’ are manifestations of the same underlying cause. For example, there is no need to assume that ‘improved understanding of the child’ and ‘increased internal locus of control’ share the same root cause, even though they may operate in tandem to create a shared effect. This is consistent with the realist principles that the same program may trigger many different mechanisms and that multiple mechanisms can be required to achieve a single outcome. Correlation analysis was used to identify the relationships between mechanisms and outcomes.

This example demonstrates that realist surveys can be used to identify the multiple component elements of mechanisms, to clarify theory about the nature of mechanisms, to lift program theory to a higher level of abstraction and to identify which mechanisms fire together and contribute to which outcomes. All these findings can contribute to clarifying or revising program theory, and thus improving programs.

Using surveys to identify outcomes

All of the survey examples provided above included questions about program outcomes. Collecting evidence of outcomes in the same survey as data about context and mechanism enables statistical analysis of the relationships between them.

In each case, the outcomes had to be described in a general manner. In two cases, this was because the evaluation covered multiple programs, each with their own outcomes. In the Pacific Community evaluation, for example, the questionnaire included nine generic outcomes of capacity building programs (e.g. understanding the topics covered, increasing skills and taking on new roles at work). Similarly, the Pathways for Families questionnaire included six short-term outcomes ‘at home’, relating to changes in parenting behaviours and family relationships, and four ‘in the community’, relating to social contact and use of other services. In the Maths for Learning Inclusion and Citizen Voice and Action evaluations, generalised description of outcomes was required because respondents may have learned different things and/or changed different behaviours.

In all cases, the outcomes were self-reported and therefore share all the risks inherent to self-report data. Findings were both triangulated and explained using data from interviews or focus groups with service providers and supervisors/managers of participants.

Different strategies are required to investigate outcomes at a ‘whole of population’ level. In the Wahana Visi Citizen Voice and Action program, intended outcomes included knowledge of the services available to the target group, both among mothers who had not necessarily participated in the program and among village officials and health service cadres.

Finding out whether knowledge changed over time required population-level surveys for the two groups, which in turn required that the results be generalisable to the two populations. Different strategies were required for the two groups because their nature and circumstances differed. The first group comprised all expectant women and mothers of young children across the 60 villages. Data quality issues to be managed included ensuring randomisation of respondents and avoiding ‘learning effects’ from participating in the survey influencing findings in subsequent years. The required sample size was 566 households, so a target of 10 households per village was set. To ensure randomisation, field staff selected two starting locations in each village and walked in a straight line from each, doorknocking until five households had completed the survey. In subsequent years, data collection started from different locations within the village, to avoid sampling the same people each year, thus managing for learning effects. The sample size and randomisation processes together meant that the results could be generalised to the wider population of ‘mothers of children under 5’.
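The arithmetic behind these targets can be sketched as follows. The figures (566 households, 60 villages, two starting locations) come from the description above; the function name and the rounding-up rule are assumptions made for illustration, not a record of how the evaluation team derived its target.

```python
import math


def village_targets(required_sample, n_villages, start_points_per_village):
    """Spread a required sample evenly over villages and walking routes."""
    per_village = math.ceil(required_sample / n_villages)
    # Round up again so each starting point gets the same whole number of households.
    per_start_point = math.ceil(per_village / start_points_per_village)
    per_village = per_start_point * start_points_per_village
    total_target = per_village * n_villages
    return {
        "per_start_point": per_start_point,
        "per_village": per_village,
        "total_target": total_target,
        "margin_over_required": total_target - required_sample,
    }


plan = village_targets(required_sample=566, n_villages=60, start_points_per_village=2)
print(plan)
```

Under these assumptions, rounding up to whole households per village leaves a small margin above the required sample, which gives some protection against incomplete or unusable responses.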

For village officials and health service cadres, however, the circumstances were different. It was likely that a reasonable proportion would remain in their positions over the duration of the program, and most were likely to be captured in the survey each year. It was also much more likely that their knowledge and awareness would be directly affected by their participation in the program than it was for mothers. Consequently, changes in their knowledge could reflect both direct learning through the program and learning effects from the survey. Randomisation was therefore neither possible nor appropriate. Interpretation of the findings had to acknowledge both the possibility of changeover of personnel and the possibility of learning effects from the survey. The former could tend to decrease effect sizes for changes in knowledge because new personnel may have missed out on earlier learning opportunities. The latter could tend to over-estimate the effects of the program because part of the effect could have been due to the survey rather than the program itself. These were determined to be acceptable (and unavoidable) risks to data quality.

The household survey identified increases over time in knowledge of services and in knowledge of health service standards. More than half of the increase in knowledge of services occurred in the last year of the program. This underlines the importance of duration of programs to achieve population-level outcomes. It also identified differences in which services mothers knew about, thereby highlighting important areas for future action.

Statistical analysis in realist evaluation

While we have argued that the use of surveys can be consistent with realist evaluation, some discussion of statistical methods is warranted. We have already shown, but it is worth emphasising, that some statistical tests can draw linkages between different elements of realist theories. For example,

These techniques might be challenged on the basis that the mathematical assumptions underpinning them are linear, while realism, with its basis in complexity theory, assumes non-linear relationships. Where relationships and/or the theories under test are relatively straightforward, this may not be a serious concern. It is probably worth mentioning this potential weakness, however, in the ‘methods’ section of a report or article. For a recent discussion of other issues in quantitative analysis in realist evaluation, see Dyer and Williams (2020).

Outside of realist evaluations, a very common approach to the analysis of quantitative data is multivariate regression analysis (in its various forms). CMO configurations, prima facie, lend themselves to regression analysis. Through surveys, data could be collected and the outcome could be used as a dependent variable. A regression model seeks to explain the variation in the values of the dependent variable (the outcome) that can be explained using independent variables (the hypothesised mechanism and context). Given that context and mechanism need to interact to produce an outcome, the two variables that proxy them could be interacted with one another in the regression model (as well as being included by themselves). A statistically significant parameter on the interaction variable would identify whether both the C and M are needed together to achieve a higher (or lower) outcome. Moreover, other factors which might be important for determining the outcome could be included (controlled for) in the regression to ensure that the statistical finding is not driven by omitted variables.
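As a minimal sketch of the interaction idea, suppose context (C) and mechanism (M) are each coded as 0/1 indicators and the model is O = b0 + b1·C + b2·M + b3·(C×M) + error. With two binary predictors, this model is saturated and the least-squares coefficients can be read directly from the four cell means, so no regression library is needed. The function name and the data are hypothetical, and the sketch gives point estimates only; testing significance would require standard errors from a statistical package.

```python
from statistics import mean


def interaction_coefficients(rows):
    """Estimate O = b0 + b1*C + b2*M + b3*C*M for 0/1 context and mechanism.

    rows: iterable of (context, mechanism, outcome) tuples. Every one of the
    four context-by-mechanism cells needs at least one observation.
    """
    cells = {(c, m): [] for c in (0, 1) for m in (0, 1)}
    for c, m, outcome in rows:
        cells[(c, m)].append(outcome)
    mu = {key: mean(values) for key, values in cells.items()}
    b0 = mu[(0, 0)]
    b1 = mu[(1, 0)] - b0  # effect of context when the mechanism is absent
    b2 = mu[(0, 1)] - b0  # effect of mechanism when the context is absent
    # Extra outcome seen only when context and mechanism occur together.
    b3 = mu[(1, 1)] - mu[(1, 0)] - mu[(0, 1)] + mu[(0, 0)]
    return {"intercept": b0, "context": b1, "mechanism": b2, "interaction": b3}


# Hypothetical survey rows: (context present, mechanism reported, outcome score).
survey_rows = [
    (0, 0, 2.0), (0, 0, 2.0),
    (1, 0, 2.0), (1, 0, 3.0),
    (0, 1, 3.0), (0, 1, 3.0),
    (1, 1, 5.0), (1, 1, 5.0),
]
coefficients = interaction_coefficients(survey_rows)
print(coefficients)
```

A positive interaction estimate in such a model indicates that the outcome is higher when context and mechanism occur together than their separate contributions would predict, which is the pattern a CMO proposition hypothesises.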

However, regression analysis must be used with care in realist evaluations. Firstly, as noted above, standard regression models often assume (amongst other things) that there are linear relationships between the variables, while realist evaluation assumes that relationships are non-linear. Secondly, regression analysis typically identifies empirical regularities, examining relationships on average. Realists are not particularly interested in average outcomes, but in explaining differences in the nature or extent of outcomes for different sub-groups of participants and/or in different contexts. While this can be explored in a regression framework by including context as an independent variable, it does require ample observations across all of the different contexts to provide meaningful results. Given that multiple contextual factors may be necessary for a single mechanism to ‘fire’ and that multiple mechanisms may be necessary to cause a particular outcome, this may make the number of observations required infeasible. Thirdly, establishing causality as opposed to association in a regression framework is extremely challenging. Causality can only be ascertained if, for example, there are no omitted independent variables, no sample selection issues and no measurement error. Even when causality can be inferred, it is successionist in nature: a cause is seen to operate constantly and regularly to produce an effect. The actual process by which, or reason why, this is the case is not identified, and so this form of causal inference differs from the generative causality examined in realist research.

Principles for using surveys in realist evaluation

This section of the article builds on the preceding examples by presenting five principles for the design and analysis of survey data in realist evaluation. While some of the advice would apply to surveys in any type of evaluation, we focus here on relevance to realist evaluation. Each principle is presented as a heading, with explanation following.

Design the questionnaire to test program theory

In realist evaluation, data are used to ‘confirm, refute or refine theories about the programme’ (Wong et al., 2016, p. 3 of 18). Writing a questionnaire to test program theory requires that the program theory be relatively well developed. Questionnaires can provide data for whether hypothesised elements exist or, in the case of outcomes, are achieved; for relationships between elements (between context and mechanism, and between mechanism and outcome); and for the distribution of elements and outcomes. Providing direct evidence of relationships between C and M, M and O and distinguishing between sub-groups can be a great strength of survey data. As demonstrated in the examples earlier in this article, Analysis of Variance (ANOVA) can test differences between sub-groups, paired

Develop the sample to test program theory

Realist evaluation assumes that different people have different information to provide to an evaluation, depending on their role (Pawson & Tilley, 1997). Assuming that programs will work for some people or in some contexts, but not for others, requires that the survey sample be designed to collect data from and about the sub-groups for whom programs are hypothesised to work differently.

If collecting data directly from participants (as may be necessary for mechanism data, at least), the sample should be designed to collect sufficient responses from each targeted sub-group for valid statistical analysis. The number of sub-groups affects the sample size required.
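One practical consequence is that recruitment has to be planned per sub-group rather than for the sample as a whole. The sketch below estimates how many invitations each sub-group would need in order to reach a target number of completed responses. The targets, the expected response rates and the function name are all hypothetical.

```python
def invitations_needed(target_responses, response_rate_pct):
    """Invitations required to expect `target_responses` completed surveys."""
    # Integer ceiling division avoids floating-point rounding surprises.
    return -(-target_responses * 100 // response_rate_pct)

# Hypothetical sub-groups: (target completed responses, expected response rate %).
plan = {
    "doctors": (40, 25),
    "nurses": (40, 40),
    "allied health workers": (40, 50),
}
invites = {group: invitations_needed(n, rate) for group, (n, rate) in plan.items()}
print(invites)
# {'doctors': 160, 'nurses': 100, 'allied health workers': 80}
```

Sub-groups with lower expected response rates need proportionally more invitations, so the overall sample is driven by the hardest-to-reach group as well as by the number of groups.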

Related to this, the questionnaire must collect data that enables the sub-groups to be identified in the analysis. This is simple enough if sub-groups happen to follow demographic boundaries (e.g. gender, age group and religious affiliation) or easily identified roles (e.g. doctor, nurse and allied health worker). However, these groupings are not always the most useful in realist evaluation, as attitudes, world views and ‘reasoning’ vary within them. It is somewhat more complicated, but still possible, to identify sub-groups if they are defined instead by clusters of attitudes or characteristics. Some realist evaluators have overcome this problem by using Q methodology to identify sub-groups and their world views (e.g. Harris et al., 2019).

Sample size and structure are also relevant to generalisation. Realist work may require two types of generalisation. Generalising from a sample within a program population to that program population requires

Collect only theory-relevant demographic data

Continuing the theme of theory relevance and the issue of demographic data, some evaluation surveys may not require demographic data at all. If gender (or age, income status or another demographic variable) is not relevant to the program theory and there is no other good reason to collect the data (e.g. monitoring equity in program delivery), these items should not be included in the survey – it is a breach of research and evaluation ethics to collect unnecessary data. Where demographic data are relevant, disaggregation based on the demographic indicator alone is not sufficient to qualify as realist analysis. For example, it is not enough to identify that males, females and other-identified respondents achieved different outcomes from their program participation; it is necessary to identify

Analysis then tests the hypothesised relationships.

Use multiple indicators for mechanisms

Mechanisms invariably involve interactions and processes (Westhorp, 2018). As a result, single indicators rarely do justice to a mechanism. A single indicator may tell us that a component element of a mechanism is or is not present or that a single step in a process has or has not occurred. Consequently, multiple indicators are usually required to evidence the whole of a mechanism, as was the case in the Citizen Voice and Action and Pathways for Families examples above (the exception to this is where mechanisms can be represented at a middle level of abstraction, as in the Maths for Learning Inclusion example above).

It is critical that both the questions and the response items are tightly crafted. However, what surveys really tap is respondents’ opinions, beliefs or reported experiences – not the mechanism itself. This is a weakness shared with qualitative data.

But herein lies an advantage for surveys: they can offer a particular form of triangulation of evidence for mechanisms. To do so, multiple indicators must be incorporated in relation to a single mechanism and those indicators must tap different structural elements of the mechanism and/or different stages of its process. Evidence for all elements and/or stages of a mechanism provides stronger evidence than evidence for one: each indicator in effect triangulates for the others.
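A minimal sketch of this idea follows. It treats a mechanism as evidenced for a respondent only when every one of its indicators is endorsed. The indicator names, the invented responses and the cut-off of 4 on a 1 to 5 agreement scale are assumptions made for illustration and are not drawn from the examples in this article.

```python
def indicators_endorsed(response, indicators, threshold=4):
    """Count how many of a mechanism's indicators a respondent endorses."""
    return sum(1 for item in indicators if response.get(item, 0) >= threshold)

# Hypothetical indicators, each tapping a different stage of one mechanism.
MECHANISM_ITEMS = ["understood_information", "felt_confident", "decided_to_act"]

# Illustrative (invented) responses on a 1-5 agreement scale.
responses = [
    {"understood_information": 5, "felt_confident": 4, "decided_to_act": 4},
    {"understood_information": 5, "felt_confident": 2, "decided_to_act": 1},
    {"understood_information": 2, "felt_confident": 2, "decided_to_act": 3},
]

counts = [indicators_endorsed(r, MECHANISM_ITEMS) for r in responses]
full_evidence = sum(1 for c in counts if c == len(MECHANISM_ITEMS))
print(counts, full_evidence)  # [3, 1, 0] 1
```

The second respondent shows why a single indicator can mislead: the first stage is endorsed, but the later stages are not, so the mechanism as a whole is not evidenced for that person.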

Follow standard survey design criteria (usually)

Most of the ‘standard’ advice for development of surveys applies to realist surveys. Each question must be a single question – there can be no ‘and’, ‘or’, ‘because’ or ‘so’ built into individual questions. This is because a response to a question with two parts cannot be attributed to either part, which makes it impossible to analyse. To investigate ‘if/when’ questions (context) concurrently with ‘because’ questions (mechanisms), the elements should be separated into distinct questions. Correlation analysis can then be used to identify whether there are relationships between specific contextual factors and specific mechanisms and, if so, the strength of those relationships. The same logic applies for mechanism and outcome questions.
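The correlation step can be sketched as follows, assuming one context item and one mechanism item, each asked as a separate question on a 1 to 5 scale. The item wordings and responses are invented for illustration. Pearson's coefficient is used here for simplicity; for ordinal items a rank-based coefficient such as Spearman's may be more appropriate.

```python
from math import sqrt

def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length lists."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / sqrt(var_x * var_y)

# Invented responses on a 1-5 scale, one pair per respondent.
# Context item, e.g. "Support was available when I needed it."
context_item = [1, 2, 2, 3, 4, 4, 5, 5]
# Mechanism item, e.g. "I felt confident to try the new approach."
mechanism_item = [1, 1, 3, 3, 3, 5, 4, 5]

r = pearson_r(context_item, mechanism_item)
print(round(r, 2))
```

A coefficient near zero would suggest no linear relationship between the contextual factor and the mechanism, while values closer to 1 or -1 indicate a stronger relationship. The same function could be applied to mechanism and outcome items.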

The same fundamental analytic requirements apply to realist as to standard surveys. There must be sufficient respondents to undertake the types of statistical analysis required, statistical tests should be appropriate to the types of data and so on. One possible exception to this arises from the nature of evaluation (rather than from it being realist evaluation). In small- to medium-sized programs, it may not be necessary to generalise results to a larger population because the whole of the eligible population is involved in the program and can be surveyed. In larger programs, it will be necessary to construct a sample and to be able to generalise from it.

A final note again applies in all forms of research or evaluation. Methods inevitably carry with them processes for analysis as well as for data collection, and those processes set limits to the interpretations that can legitimately be made. In simple terms, findings must not be interpreted to mean more than they do.

Conclusion

This article has demonstrated that surveys have not been as widely used in published realist evaluations as qualitative methods and that there have been many problems with their use. It has argued that any method used in realist evaluation should be ‘fit for realist purpose’ and demonstrated that surveys can be so. Surveys can contribute to analysis of contexts, mechanisms, outcomes and the relationships between them, thus contributing to testing realist program theories. It has also provided a short list of principles for the construction and analysis of realist surveys. The intent is to strengthen realist evaluation practice by increasing appropriate use of surveys.

Acknowledgements

The authors are grateful to Dimitri Renmans for the provision of recent articles using quantitative methods in realist evaluation, to the organisations who gave permission for their examples to be used and to Liz Meggetto, Cara Donohue, Kerryn O’Rourke, Emma Williams and Phillip Belling for comments on an earlier draft of the article.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.