Abstract

The United Nations’ 2015 ‘EvalYear’ declaration heightened the significance of program evaluation in achieving the Sustainable Development Goals. However, uncertainties persist about how to assess the quality of evaluations and how to address social justice concerns in meta-evaluations. The field lacks consensus on both how to conduct meta-evaluation and which standards to use. To address this, we reviewed the meta-evaluation literature, mapped the American Evaluation Association’s foundational documents against the United Nations Evaluation Group’s Norms and Standards to explore where they intersect on social justice, and analysed 62 United Nations Population Fund evaluation reports alongside their management responses. Our findings indicate that addressing social justice concerns in meta-evaluation is contingent on context rather than established standards. Thus, it is crucial for evaluators to prioritise social justice in evaluation design and implementation, and to select quality assurance tools that match the evaluation context and professional association guidelines, especially in the absence of standardised guidelines.

Keywords

What we already know

• The field of evaluation is confronted with major concerns regarding the demand for high-quality evaluations and the centrality of social justice in the absence of a consensus on standardised guidelines.
• There are no one-size-fits-all guidelines for meta-evaluation and for addressing social justice in evaluations.

The original contribution the article makes to theory and/or practice

• Meta-evaluation practices and social justice concerns in evaluation are contextual, rather than based on standardised guidelines in the field.
• The UN Evaluation Group’s intentional inclusion of gender and human rights in evaluation ensured that these concerns were integrated into the evaluation design, methodology, recommendations, and management response.
• Evaluators should prioritise quality assurance and social justice in evaluation design and implementation, using tools that fit the evaluation context.
• Further research is needed to develop a common language and definition of ‘high-quality’ evaluation and criteria for conducting meta-evaluation in the field.

Introduction

Background

The United Nations (UN) declared 2015 the International Year of Evaluation, commonly referred to as ‘EvalYear 2015’ (International Organization for Cooperation in Evaluation, n.d.). This declaration came as the Millennium Development Goals ended and were replaced by the Sustainable Development Goals. By making program evaluation visible to the world, the declaration helped create strong global demand for ‘high-quality’ evaluations of the Sustainable Development Goals. However, evaluators lack consensus on what constitutes a high-quality evaluation, how to assess evaluation quality, and how to conduct meta-evaluations.

The term meta-evaluation was coined over five decades ago by Michael Scriven (1969) in one of the earliest attempts to set standards for ensuring the quality of evaluations. Twenty-five years later, Worthen (1994) argued that setting standards for evaluation practice is crucial for the development of evaluation as a profession, as it helps to guide both practitioners and users of evaluation reports. He reasoned that setting standards to guide professional practice is a hallmark of any profession because standards ensure that professional practice is of high quality. In fact, a decade later Worthen (2003) questioned how evaluation can be considered a profession if its practitioners do not conform to the technical or ethical standards usual for professional bodies. Notably, the field of program evaluation lacks consensus on how meta-evaluation should be conducted and what standards should be used to assess primary evaluations. Furthermore, although the Joint Committee on Standards for Educational Evaluation Program Evaluation Standards have been adopted by professional associations worldwide, including the American Evaluation Association and the Australian Evaluation Society, these standards were intended for educational evaluations and may not be suitable for judging the quality of social programs, international development interventions, and humanitarian programs. Additionally, there is a need to address social justice concerns in the evaluation process (Tucker et al., 2023; Symonette et al., 2020; Thomas & Campbell, 2021).

In this article, we explore evaluator perceptions of meta-evaluation and social justice to contextualise the study within the current discourse on evaluation quality. We present the UN Evaluation Group Norms and Standards as the theoretical framework for our study and show how these Norms and Standards are operationalised in the UN Population Fund Evaluation Quality Assurance and Assessment. The methods section provides information on the sample reports and the procedure for data collection and analysis. We then present the results of mapping the American Evaluation Association’s foundational documents to the UN Evaluation Group Norms and Standards, together with an assessment of how gender and human rights are integrated in the UN Population Fund Evaluation Quality Assurance and Assessment and in the management responses to evaluation recommendations. Finally, we discuss the implications of this study and future research areas.

Discussion of meta-evaluation and social justice in evaluation literature

Meta-evaluation was first defined in 1969 by Michael Scriven as ‘the evaluation of evaluations’ (Scriven, 1969). Since then, much has been written about meta-evaluation and its importance in the field of evaluation, such as Stufflebeam’s (2001a, 2001b) article ‘The metaevaluation imperative’. Later additions, such as Stufflebeam’s checklist (Stufflebeam, 2001a, 2001b), are widely regarded as essential tools for meta-evaluation. The inclusion of meta-evaluation in the Joint Committee on Standards for Educational Evaluation’s Program Evaluation Standards (Third Edition, 2010) cements the importance of including meta-evaluation in every evaluation. Meta-evaluation takes various forms, including summative (Scriven, 1969), client-centred (Reineke & Welch, 1986), concurrent (Hanssen et al., 2008), responsive (Sturges & Howley, 2017), and internal formative meta-evaluations (Harnar et al., 2020). Early work in the development of meta-evaluation recognised the challenge of establishing criteria that focus on technical quality, such as reliable measures, valid conclusions, and cost-effectiveness, while remaining flexible enough to meet stakeholder needs (Apthorpe & Gasper, 1982; Smith, 1982).

More recent articles have continued to emphasise the importance of criteria that can be generalised across various industries. Harnar et al. (2020) identified a tension between evaluator and stakeholder perceptions of quality, emphasising that stakeholder needs and desires can sometimes conflict with an evaluator’s view of quality. This underscores the need for standards that are flexible enough to accommodate various stakeholder perspectives and broad enough to provide guidelines for the field. Cooksy and Caracelli (2005) similarly recognised this need for tailored criteria that consider stakeholder culture and sensibilities. However, they also acknowledged the challenge of navigating disagreement with clients due to the plethora of options available as standards.

Perhaps the most enlightening body of literature was the set of projects that created their own standards. The most common sentiment in this category was that the criteria of the meta-evaluation must flex with the context. Stufflebeam (1978) emphasised the need for evaluators to be flexible and strike a balance between technical adequacy, utility, and cost-effectiveness, rather than insisting on optimising a single criterion. Our literature review shows that while every meta-evaluation that established its own standard made some reference to the Joint Committee on Standards for Educational Evaluation Program Evaluation Standards, each of these studies adapted, revised, or modified these standards to align with the specific evaluation context. Grasso (1999) consolidated the 1995 American Evaluation Association’s Guiding Principles for Evaluators and the 1994 Joint Committee on Standards for Educational Evaluation Program Evaluation Standards into a meta-evaluation template. Uusikyla and Virtanen (2000) established criteria for each component using the 1996 European Commission’s meta-evaluation guidelines. Sturges and Howley’s (2017) Responsive Meta-evaluation Approach used a matrix inspired by Stufflebeam’s 1999 Meta-evaluation Checklist, the 2011 Joint Committee on Standards for Educational Evaluation Program Evaluation Standards, and the 2004 American Evaluation Association’s Guiding Principles. Their approach emphasised stakeholder inclusion in the process. However, although these meta-evaluation criteria refer to the Joint Committee on Standards for Educational Evaluation Program Evaluation Standards, a survey of 923 American Evaluation Association members conducted by Harnar et al. (2020) revealed that 70% of evaluators had only moderate to minimal familiarity with those standards. This lack of familiarity is particularly pertinent, as it underscores the nuanced nature of adapting and tailoring meta-evaluation criteria to the specific evaluation context, as exemplified by the diverse approaches observed in the projects that developed their own standards.

This trend towards customising standards to fit stakeholder needs demonstrates the necessity for flexible standards. The UN Evaluation Group is an example of an organisation that created its own meta-evaluation standards, the UN Evaluation Group Norms and Standards. To illustrate how one UN agency implements these Norms and Standards when conducting meta-evaluation in practice, we used the UN Population Fund’s evaluation quality assessment tool as a case study. Before examining the UN Population Fund’s Evaluation Quality Assurance and Assessment in our methods section, we explore how meta-evaluation literature and practice define and address social justice and cultural competence.

Social justice in meta-evaluation

There is a growing movement within the field of evaluation to integrate social justice concerns into practice (Thomas & Campbell, 2021). This is an important development, as social justice involves ensuring that all members of society have access to the resources and opportunities necessary to lead fulfilling lives (Thomas & Campbell, 2021). It matters particularly because of the historical legacy of racism and structural discrimination, which has created significant disparities in access to resources and opportunities in many countries around the world, including the USA, Australia, and New Zealand. While the American Evaluation Association and Australian Evaluation Society recognise the importance of social justice values, such as cultural competence, diversity, equity, and the common good in evaluation, there is still work to be done to ensure that these values are fully integrated into evaluation practice in the USA and Australia. By comparison, the Aotearoa New Zealand Evaluation Association is more advanced in integrating social justice concerns into its Evaluation Standards and Competencies. Integrating social justice concerns into evaluation practice has the potential to improve outcomes for marginalised communities. Symonette et al. (2020) stressed the importance of evaluators acknowledging their power and privilege and using that power to combat oppressive systems and promote social justice. This requires intentional effort and ongoing discussion within the field.

Social justice now encompasses global issues of human rights and gender equality, expanding beyond its previous focus solely on racism as in the US context. While the UN does not explicitly employ the term ‘social justice’, its emphasis on human rights closely aligns with advancing fairness and equality for marginalised and oppressed groups. The UN defines human rights as universal, irrespective of factors like race, sex, nationality, ethnicity, language, or religion (United Nations, 2022; United Nations Evaluation Group, 2022; United Nations, n.d.). Human rights centre on individuals, while social justice tackles wider societal issues (United Nations, 2006), with equal rights forming its bedrock. For instance, the UN Population Fund’s target population is women and young people whose ability to exercise their right to sexual and reproductive health is often compromised (UN Population Fund, n.d.).

Purpose and research questions

This study investigated the extent to which, and the ways in which, social justice is addressed in meta-evaluation literature and practice. The study was guided by four research questions: 1) To what extent do the UN Evaluation Group Norms and Standards align with the American Evaluation Association foundational documents regarding social justice and culturally responsive evaluation concerns? 2) To what extent do the various meta-evaluation quality assessment criteria in the UN Population Fund Evaluation Quality Assurance and Assessment instrument relate to the gender and human rights assessment criteria? 3) How might the UN Evaluation Group-specific focus on gender and human rights potentially result in a clearer focus on social justice issues within an evaluation? 4) To what degree does the required UN Population Fund management response suggest acceptance of recommendations related to gender and human rights?

Guiding framework

The study utilised the UN Evaluation Group Norms and Standards framework and the UN Population Fund Evaluation Quality Assurance and Assessment (2019) template for a meta-evaluation. A 2021 meta-evaluation conducted by the UN’s Inspection and Evaluation Division on evaluation reports of various UN agencies found that the UN Evaluation Group Norms and Standards were a significant reference for evaluators globally. Thirty-three UN Evaluation Group member agencies incorporated these Norms and Standards in their evaluation policies/guidelines. UN Evaluation Group uses the Norms and Standards to improve and standardise evaluation practice, and they serve as the foundation for the UN Evaluation Group evaluation competencies, peer reviews, and benchmarking initiatives. Below are brief explanations of the normative principles that govern UN Evaluation Group evaluations.

The first norm requires evaluation managers and evaluators to promote the UN’s commitment to the 2030 Sustainable Development Agenda. The Utility Norm requires intentional use of evaluation analysis to contribute to organisational learning and empower stakeholders. The Credibility Norm requires ethical and professional conduct of evaluators with independence, impartiality, and rigorous methodology. The Independence Norm mandates evaluators to freely express their assessments without undue influence and the evaluation function to be positioned independently from management functions. The Impartiality Norm requires evaluators to have no direct responsibility for policy setting or management of the evaluation subject and to maintain objectivity, professional integrity, and absence of bias. The Ethics Norm requires evaluators to respect confidentiality, informed consent, and sensitive data, and manage evidence of wrongdoing. The Transparency Norm requires evaluations to be publicly accessible to establish trust and stakeholder ownership. The Human Rights and Gender Equality Norm requires integrating these values and principles into all stages of evaluation. The National Evaluation Capacities Norm commits to supporting national evaluation capacities of UN Member States. The Professionalism Norm requires evaluations to be conducted with professionalism, integrity, and adherence to UN Evaluation Group Norms and Standards.

In addition, the UN Evaluation Group has four institutional norms for evaluation in the UN system: Enabling Environment, Evaluation Policy, Responsibility for the Evaluation Function, and Evaluation Use and Follow-up. These norms are interrelated and require an organisational culture that values evaluation as a basis for accountability, an explicit evaluation policy, an independent and competent evaluation function, and follow-up on evaluation recommendations by integrating them into an organisation’s policies and programs.

The five standards governing evaluations in UN Evaluation Group agencies operationalise the evaluation norms. The Standard on Institutional Framework covers various evaluation functions and evaluation products, which should be publicly accessible. The Standard on Management of the Evaluation Function requires the head of evaluation to ensure that the evaluation function is fully operational, independent, and conducted according to the highest professional standards. The Evaluation Competencies Standard requires individuals who perform evaluations in UN Evaluation Group agencies to possess core competencies and conform to ethical standards. The Conduct of Evaluations Standard requires evaluations to provide timely, valid, and reliable information, and evaluators to undertake an evaluability assessment. It also requires rigorous evaluation methodologies, inclusion and engagement of diverse stakeholders and reference groups, human rights-based approaches, a gender mainstreaming strategy, and accessibility to the local population. This standard further requires that evaluation reports and products meet the needs of intended users and contain findings, conclusions, and recommendations based on evidence and analysis, and that communication and dissemination of the evaluation products are designed to enhance evaluation use. The final standard concerns quality assurance and quality control as the final stage of evaluations.

Method

Sample

We analysed a real-world example of meta-evaluation by collecting publicly available reports, including evaluation quality assessments, evaluation reports, and management responses, from one UN Evaluation Group member. We conducted a system-wide search of UN Population Fund meta-evaluation reports published on its website from 2010 to 2021, including reports in English, French, Portuguese, and Spanish. The search yielded 242 reports, including 152 in English and 89 in non-English languages. Some non-English reports had Evaluation Quality Assurance and Assessment reports in English, but the corresponding evaluation reports were not in English. Our analysis focused on evaluation reports with evaluation quality assessments. Evaluation reports before 2010 were excluded as they did not meet our selection criteria. We also included reports with management responses, but only if they had Evaluation Quality Assurance and Assessment reports, as our primary focus was on analysing Evaluation Quality Assurance and Assessments. A management response is a formal response from an organisation’s management to each recommendation; it states whether the organisation agrees or disagrees with the recommendation and sets out clear, time-bound actions to implement the recommendations it agrees with (United Nations Evaluation Group, 2016). We analysed around 10 reports per year, with 2017 having the most reports in the study. This period corresponds to the coming into force of the 2016 UN Evaluation Group Norms and Standards. Reports analysed per year ranged from 3 to 23, with a total of 62 reports analysed. The largest proportion of reports were Country Program Evaluations (82%). We analysed reports from all regions except Western European and other states, with the African States region having the largest number of reports analysed (23 reports).

Instruments

The UN Population Fund Evaluation Quality Assurance and Assessment Tool

We explored the implementation of the UN Evaluation Group Norms and Standards using the UN Population Fund Evaluation Quality Assurance and Assessment reports and management responses as a case study. The UN Population Fund Evaluation Quality Assurance and Assessment aims to ensure the production of high-quality evaluations through quality assurance and quality assessment. According to the UN Population Fund, quality assurance is integrated throughout the evaluation process, from the terms of reference to the final report. Quality assessment, conducted by the independent UN Population Fund Evaluation Office, is limited to the final report and assesses compliance with the Evaluation Quality Assurance and Assessment quality criteria. The instrument covers seven criteria: structure and clarity of reporting, design and methodology, data reliability, analysis and findings, conclusions, recommendations, and gender. The UN Population Fund website emphasises that both the evaluation manager and team leader must ensure that draft and final reports meet the quality assessment criteria. Each of the seven criteria is rated on a four-point scale of unsatisfactory, fair, good, and very good, and these ratings are used to derive an overall quality score (United Nations Population Fund, 2019). Each criterion has between three and ten associated sub-criteria, and each sub-criterion is rated on a three-point scale of yes, no, or partial. The overall rating is determined by combining the sub-criteria ratings and assigning a quality score out of 100.
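The way sub-criteria ratings roll up into a score out of 100 and a four-point rating can be illustrated with a short Python sketch. It is not the UN Population Fund's actual scoring algorithm, which the text does not specify. The numeric values given to yes, partial, and no, the equal weighting of sub-criteria and criteria, and the cut-offs between rating bands are all assumptions made for the example, as are the function names.

```python
# Hypothetical scoring sketch. The numeric values, equal weights, and band
# cut-offs below are assumptions, not the UN Population Fund's actual rules.
SUB_RATING_VALUES = {"yes": 1.0, "partial": 0.5, "no": 0.0}  # assumed values

# Assumed lower bounds of each band on a 0-100 scale, highest first.
BANDS = [(75.0, "very good"), (50.0, "good"), (25.0, "fair"), (0.0, "unsatisfactory")]


def criterion_score(sub_ratings):
    """Return a 0-100 score from a list of 'yes'/'partial'/'no' ratings."""
    if not sub_ratings:
        raise ValueError("a criterion needs at least one sub-criterion rating")
    values = [SUB_RATING_VALUES[rating] for rating in sub_ratings]
    return 100.0 * sum(values) / len(values)


def band(score):
    """Map a 0-100 score onto the four-point scale using the assumed cut-offs."""
    for lower_bound, label in BANDS:
        if score >= lower_bound:
            return label
    raise ValueError("score must be between 0 and 100")


def overall_score(criteria):
    """Average the criterion scores with equal weights (an assumption)."""
    scores = [criterion_score(ratings) for ratings in criteria.values()]
    return sum(scores) / len(scores)


# Made-up ratings for two criteria, for illustration only.
example = {
    "design_and_methodology": ["yes", "yes", "partial", "no"],
    "gender": ["yes", "partial", "partial"],
}
example_overall = overall_score(example)
example_band = band(example_overall)
```

Any real application would replace the assumed values, weights, and cut-offs with those published in the instrument's own guidance.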

Procedure

In this study, we conducted a comprehensive analysis of the UN Evaluation Group Norms and Standards, as well as the Program Evaluation Standards (Yarbrough et al., 2011), the American Evaluation Association Public Statement on Cultural Competence in Evaluation (American Evaluation Association, 2011), the American Evaluation Association Evaluator Competencies (American Evaluation Association, 2018), and the American Evaluation Association Guiding Principles (American Evaluation Association, 2018), collectively referred to as the ‘American Evaluation Association foundational documents’. We used a table-based comparative approach to examine each section and component of the UN Evaluation Group Norms and Standards and compared them to the corresponding elements in the American Evaluation Association foundational documents. To ensure equitable matching, we required similar wording in each section of the UN Evaluation Group Norms and Standards and the American Evaluation Association foundational documents, particularly regarding human rights concerns, such as gender considerations. We excluded components that referred to human rights, social justice, or culture without specific reference to the same concept, as defined by the United Nations. We also excluded sections of the UN Evaluation Group Norms and Standards that had no similar guidance in the comparison documents, although these sections provide valuable guidance for evaluation. We compared UN Evaluation Group Norms and Standards items that aligned with those in the American Evaluation Association foundational documents and those that did not, identifying key themes and areas of focus in the UN Evaluation Group Norms and Standards not addressed in the American Evaluation Association foundational documents. We summarised the outcome of our comparative analysis in tables, highlighting key themes that emerged.

We also conducted statistical analyses of the UN Population Fund Evaluation Quality Assurance and Assessment tool criteria, examining relationships among the quality assessment criteria and, in particular, the relationship between gender and the other criteria. Descriptive statistics were calculated at the criteria level, including a comparison of scores across time to assess overall trends in evaluation report quality. Our definition of evaluation report quality was based on composite scores for the quality assessment criteria given by external independent meta-evaluation teams. Additionally, we calculated descriptive statistics and Pearson correlations for sub-criteria within each criterion, and created scatter plots to show trends in average ratings for each criterion from 2016 until 2021. All statistical analyses were run in R (R Core Team, 2019).
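The two core computations described above, mean ratings per year and Pearson correlations between criteria, can be sketched in a few lines. The authors ran their analyses in R; the Python below is only an illustrative equivalent using the standard library, with hypothetical function names and made-up ratings that are not data from the study. The t statistic uses n − 2 degrees of freedom, which is why correlations based on 62 reports are reported with 60 degrees of freedom.

```python
import math
from collections import defaultdict
from statistics import mean


def pearson_r(x, y):
    """Pearson product-moment correlation of two equal-length sequences."""
    if len(x) != len(y) or len(x) < 3:
        raise ValueError("need two equal-length sequences with at least 3 values")
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def t_statistic(r, n):
    """Return (t, df) for testing r against zero, with df = n - 2."""
    df = n - 2
    return r * math.sqrt(df / (1.0 - r * r)), df


def mean_by_year(records):
    """Average rating per year from (year, rating) pairs, sorted by year."""
    grouped = defaultdict(list)
    for year, rating in records:
        grouped[year].append(rating)
    return {year: mean(values) for year, values in sorted(grouped.items())}


# Made-up four-point ratings for illustration only; not data from the study.
gender = [3, 4, 2, 4, 3, 1]
design = [3, 4, 2, 3, 4, 2]
r = pearson_r(gender, design)
t, df = t_statistic(r, len(gender))
```

Converting the t statistic to a p value requires the t distribution, which the Python standard library does not provide; a statistics package would supply that step, as R's built-in correlation test does.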

Results

Our study aimed to achieve four objectives. First, we aimed to evaluate the alignment of the UN Evaluation Group Norms and Standards with the American Evaluation Association foundational documents in integrating social justice and culturally responsive evaluation concerns. Second, we aimed to examine the relationship between the gender integration criterion and the various meta-evaluation quality assessment criteria in the UN Population Fund Evaluation Quality Assurance and Assessment. Third, we aimed to explore how the UN Evaluation Group’s focus on gender and human rights could lead to a clearer focus on social justice issues within an evaluation. Fourth, we aimed to examine the relationship between the required UN Population Fund management response and acceptance of recommendations in these areas.

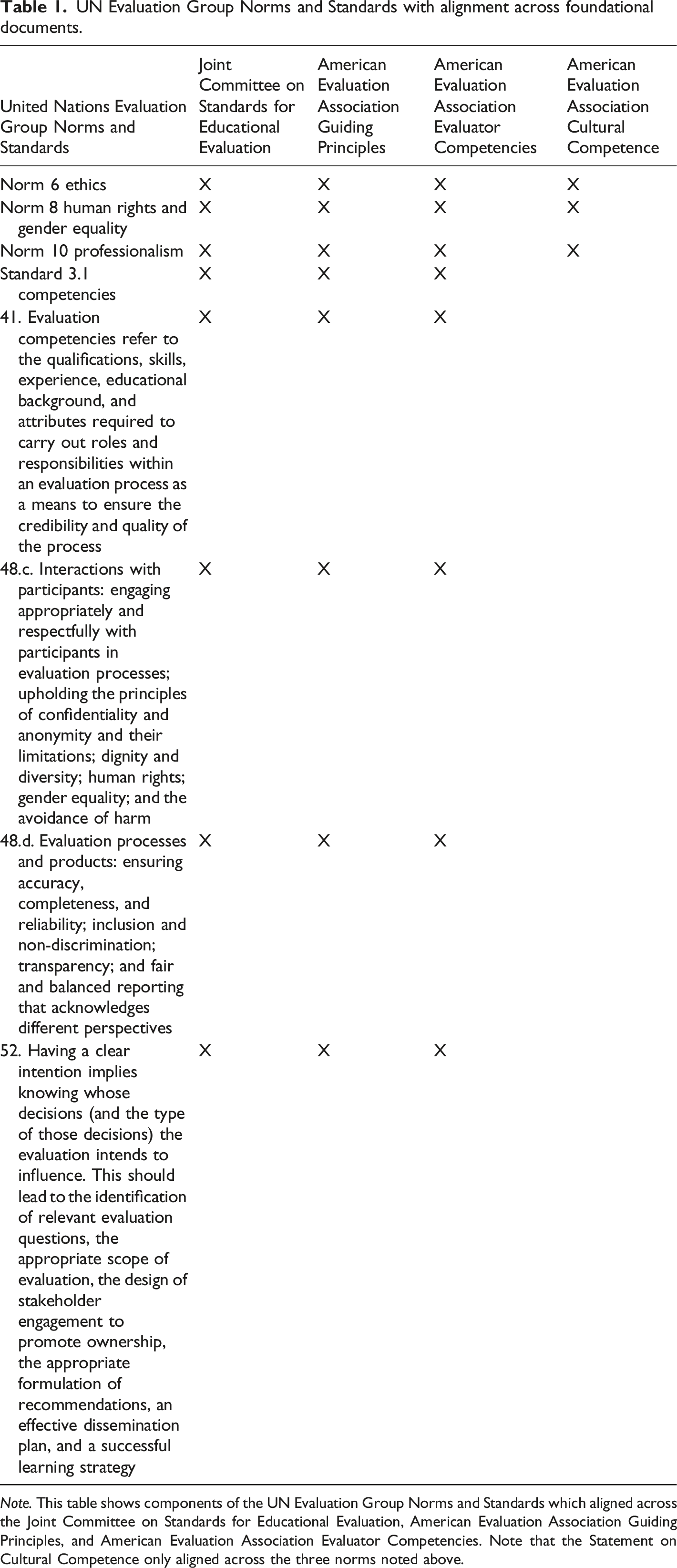

Table 1. UN Evaluation Group Norms and Standards with alignment across foundational documents.

Note. This table shows components of the UN Evaluation Group Norms and Standards which aligned across the Joint Committee on Standards for Educational Evaluation, American Evaluation Association Guiding Principles, and American Evaluation Association Evaluator Competencies. Note that the Statement on Cultural Competence only aligned across the three norms noted above.

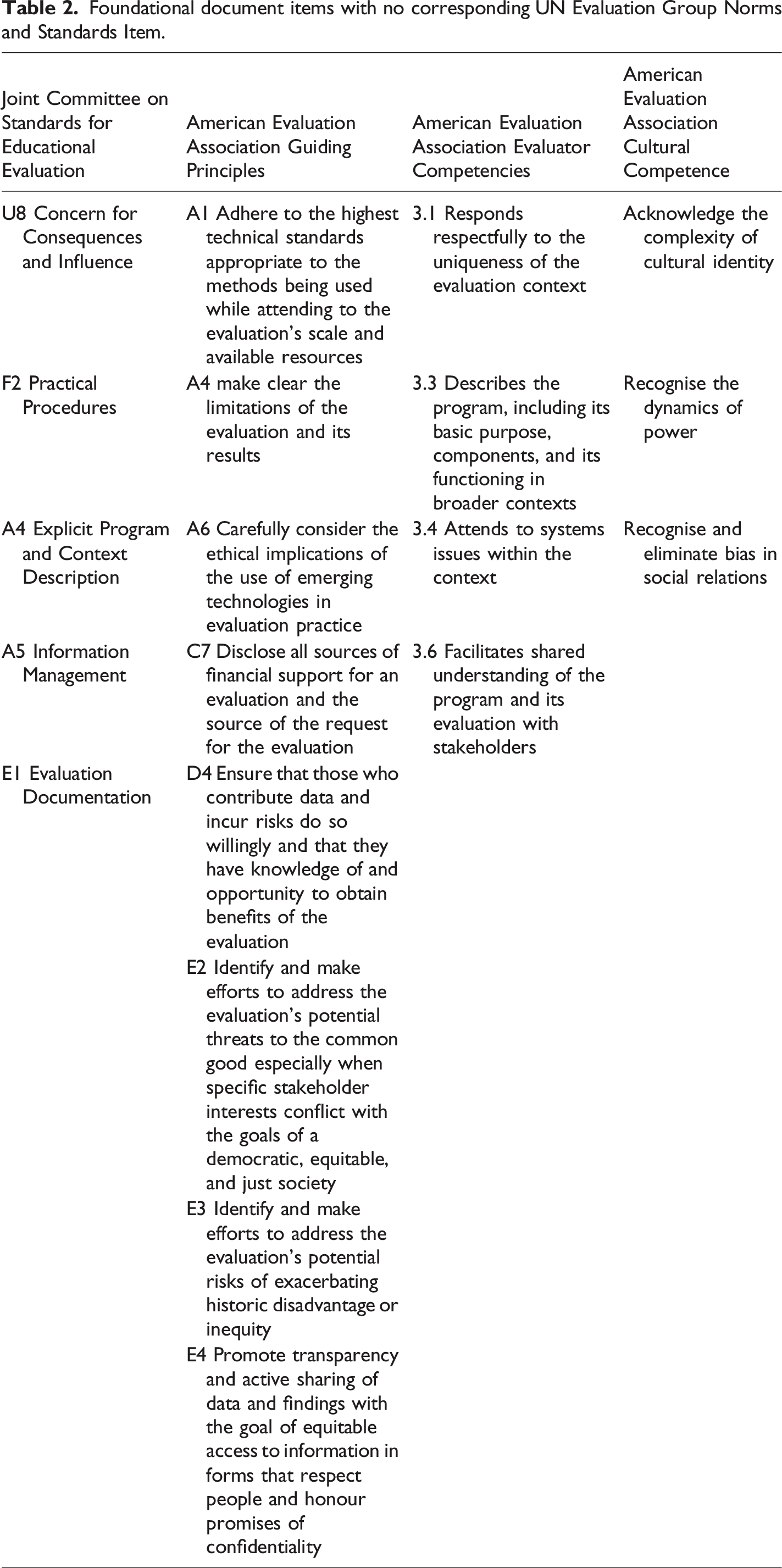

Table 2. Foundational document items with no corresponding UN Evaluation Group Norms and Standards item.

The UN Evaluation Group Norms that aligned with the American Evaluation Association foundational documents focused on ensuring the integrity of evaluation processes and products and evaluator interactions with participants. In comparison, the language in the Joint Committee on Standards for Educational Evaluation domains, American Evaluation Association Guiding Principles, Evaluator Competencies, and Statement on Cultural Competence tended to focus on cultural responsiveness more broadly, while the UN Evaluation Group Norms focused on human rights, with a specific focus on gender equality. We will discuss these language differences in the next section. However, it is important to note that all these documents, except for the Statement on Cultural Competence, emphasised the importance of evaluator competencies for conducting high-quality evaluations. Table 1 shows that the UN Evaluation Group Standard on competencies highlights the significance of evaluators having the necessary qualifications, skills, experiences, and educational background and attributes to conduct credible and quality evaluations.

Non-alignment in norms and standards

In our analysis, we observed that the UN Evaluation Group Norms and Standards lacked alignment with American Evaluation Association foundational documents in areas such as evaluation context and social justice concerns. Table 2 presents the American Evaluation Association foundational document items that did not have corresponding items in the UN Evaluation Group Norms and Standards. The American Evaluation Association foundational document items focused on responding to the evaluation context, describing the context, and addressing systems issues within the context, while the UN Evaluation Group Norms and Standards did not have language related to context. Moreover, the American Evaluation Association foundational document items emphasised social justice concerns, including data contributors obtaining benefits from the evaluation, acknowledging the complexity of cultural identity, recognising the dynamics of power, and addressing historical disadvantage or inequity. In contrast, the UN Evaluation Group Norms and Standards centred around human rights and gender equity but did not specifically address social justice or historical disadvantage. The UN Evaluation Group Norms and Standards provided specific guidance for organisations to conduct evaluation and quality control, such as internal and external meta-evaluation, but these areas lacked alignment with American Evaluation Association foundational documents.

The UN Evaluation Group Norms and Standards also diverge from the American Evaluation Association foundational documents in the area of evaluator independence and impartiality, particularly with regard to the elements of objectivity, professional integrity, and absence of bias emphasised in the UN Evaluation Group Norm on Impartiality. This has implications for evaluation methodology, particularly in the interactions between evaluators and evaluation participants. While the UN Evaluation Group’s emphasis on Impartiality may suggest a positivistic approach of neutral and detached evaluators, the American Evaluation Association foundational documents emphasise a social justice focus that employs constructivist epistemologies where evaluators co-create meaning with participants to address issues of culture, equity, diversity, and inclusion. For example, the American Evaluation Association Statement on Cultural Competence aims to challenge stereotypes and promote full participation of evaluation participants, emphasising that evaluators should recognise and eliminate bias in language rather than focusing on the impartiality of the evaluator. We will further discuss the differences between the UN Evaluation Group and American Evaluation Association documents in the next section.

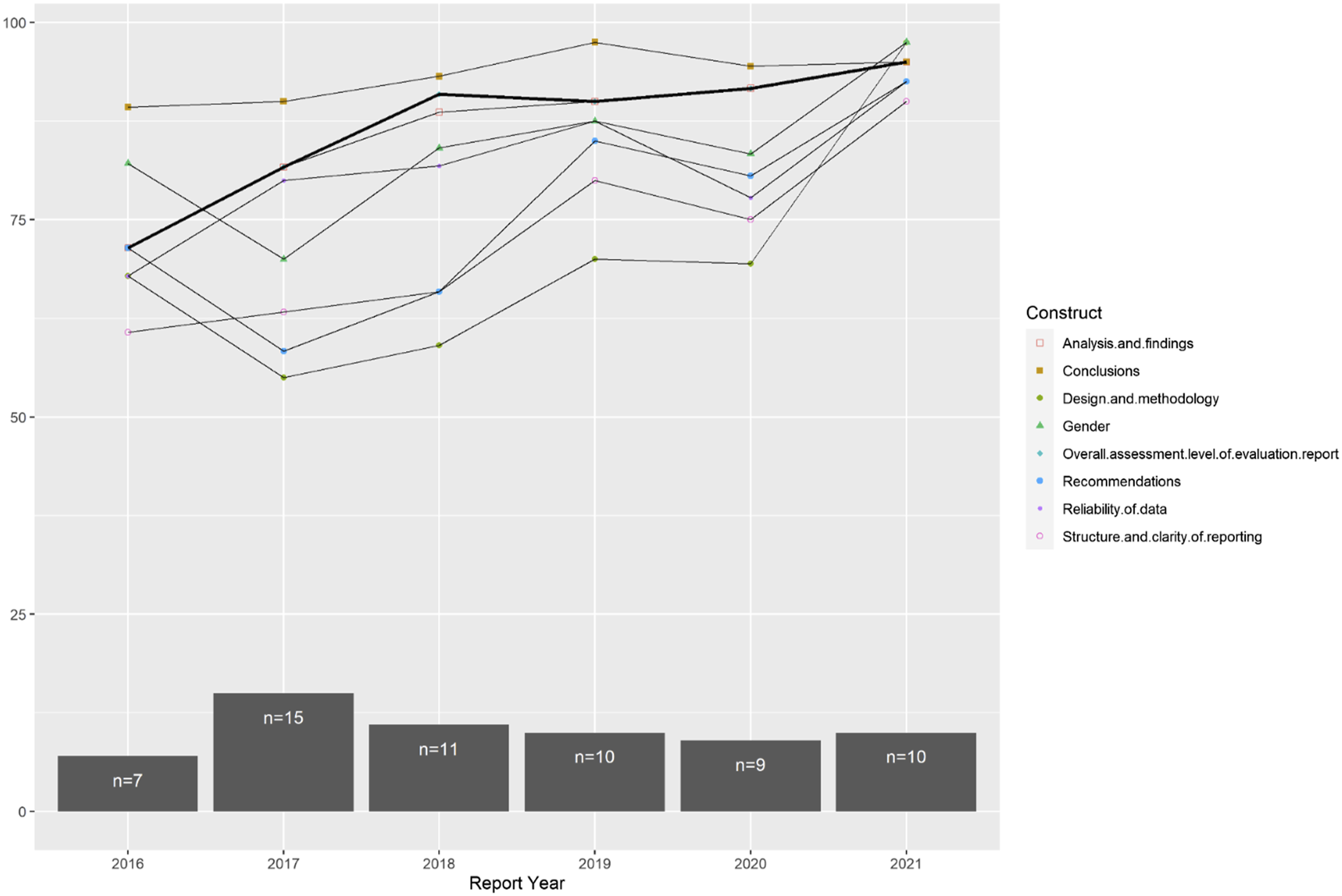

Trends in evaluation quality

We analysed 62 UN Population Fund Evaluation Quality Assurance and Assessment reports to assess the correlations among the evaluation quality assessment criteria and their sub-questions. Our aim was to evaluate the relationship between the Gender Integration criterion and the other meta-evaluation quality criteria in the UN Population Fund Evaluation Quality Assurance and Assessment. Descriptive statistics were calculated to observe the quality trend of UN Population Fund evaluations from 2016 to 2021. Figure 1 illustrates the mean Overall Quality rating of the eight evaluation quality assessment criteria over the years, with a focus on Gender Integration. The average rating showed an upward trend from 2016 to 2021, with a slight decrease in 2019. Similarly, the ratings for individual evaluation quality assessment criteria also steadily increased over the same period. In terms of Gender Integration, Figure 1 shows that its rating decreased in 2017, then gradually increased from 2017 to 2019 before a slight decrease in 2020 and an increase in 2021. Overall, Gender Integration’s rating increased from 3.29 to 3.90 on the four-point scale over the years.

Figure 1. Quality of UN Population Fund average construct rating per year over 2016 to 2021.

Overall quality and sub-scale correlations

We conducted a Pearson’s correlation analysis to examine the relationship between the meta-evaluation quality assessment criteria in the UN Population Fund Evaluation Quality Assurance and Assessment instrument and the Overall Quality of the evaluation report. To better understand the characteristics of the eight sub-scale scores, we calculated the mean and standard deviation of each sub-scale score. Our goal was to identify any correlations between the sub-scales and the Overall Quality of the evaluation report. We also examined the relationships between the quality assessment criteria, or sub-scales, in the Evaluation Quality Assurance and Assessment instrument, with a specific interest in determining whether gender was correlated with all the other sub-scales. Once we had established the characteristics and dimensionality of the sub-scales, we investigated how they correlated with the proportion of recommendations accepted in the management responses. The management response to evaluation recommendations is a critical aspect of the UN Evaluation Group’s Norms and Standards as it ensures evaluation use. The Norms and Standards require management to provide their views on the evaluation recommendations, including whether and why they agree or disagree with each recommendation, and to implement concrete actions to address those recommendations.

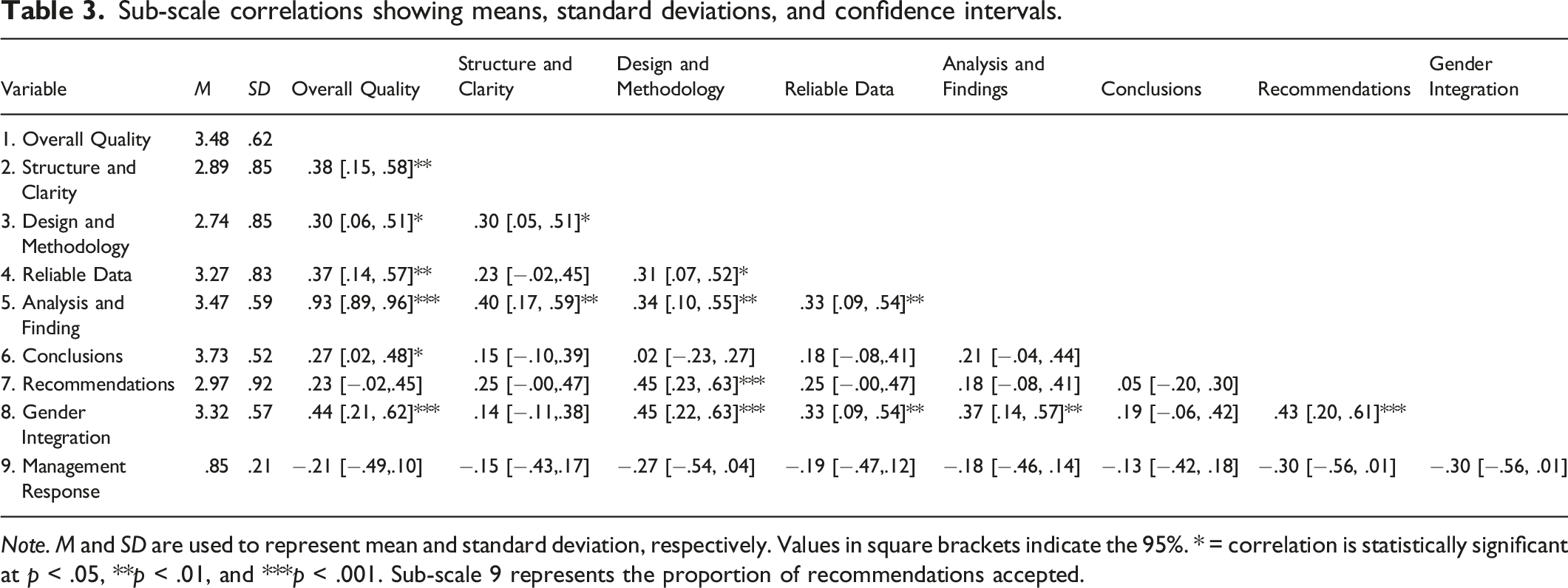

Sub-scale correlations showing means, standard deviations, and confidence intervals.

Note. M and SD represent mean and standard deviation, respectively. Values in square brackets indicate the 95% confidence interval for each correlation. * indicates p < .05, ** indicates p < .01, and *** indicates p < .001. Sub-scale 9 represents the proportion of recommendations accepted.
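The quantities reported in the table (means, standard deviations, Pearson correlations, and 95% confidence intervals) can be sketched as follows. This is an illustrative sketch only. The function names `pearson_r` and `fisher_ci` and the ratings are hypothetical, and the Fisher z transformation is one common way of obtaining a confidence interval for r; the text does not state which method produced the intervals in the table.

```python
import math
from statistics import mean, stdev

def pearson_r(x, y):
    """Pearson product-moment correlation between two equal-length lists."""
    if len(x) != len(y) or len(x) < 4:
        raise ValueError("need two equal-length lists with at least 4 values")
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def fisher_ci(r, n, z_crit=1.96):
    """Approximate 95% confidence interval for r via the Fisher z transformation."""
    z = math.atanh(r)               # transform r to the z scale
    se = 1.0 / math.sqrt(n - 3)     # standard error on the z scale
    return math.tanh(z - z_crit * se), math.tanh(z + z_crit * se)

# Hypothetical four-point ratings for six reports (not data from the study).
gender_integration = [3, 4, 2, 4, 3, 4]
design_methodology = [3, 4, 2, 3, 3, 4]

r = pearson_r(gender_integration, design_methodology)
low, high = fisher_ci(r, len(gender_integration))
print(f"M = {mean(gender_integration):.2f}, SD = {stdev(gender_integration):.2f}")
print(f"r = {r:.2f}, 95% CI [{low:.2f}, {high:.2f}]")
```

Under the same assumption, a correlation of .40 from 62 reports would have an interval of roughly .17 to .59, which shows how wide the intervals remain at this sample size.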

Our findings indicated that gender-responsive data collection tools and methods of analysis had moderate correlations with Design and Methodology (r = .45, p < .001), Reliability of Data (r = .33, p = .009), Analysis and Findings (r = .37, p = .003), and Recommendations (r = .43, p < .001). However, there was no statistically significant correlation between Gender Integration and the Structure and Clarity of the report (r = .14, p = .261) and the Conclusions of the evaluation (r = .19, p = .129). This finding suggests that when evaluators intentionally integrate gender equality and women’s empowerment concerns in the evaluation Design and Methodology, these concerns are more likely to be addressed in the evaluation Analysis and Findings and Recommendations. Conversely, the lack of a relationship between Gender Integration and Conclusions of the report indicates that evaluations that do not integrate gender and human rights concerns in the Design and Methodology also lack content to include in the Conclusions.

Regarding the relationship between constructs, we found that having a sound and credible Analysis and Findings of the evaluation had a moderate correlation with the Structure and Clarity of the report, r(60) = .40, p = .001. The Structure and Clarity assessed the report’s comprehensiveness and user-friendliness, including the presence of an executive summary. Additionally, having a sound evaluation Design and Methodology was moderately correlated with the Recommendations of the evaluation reports, r(60) = .45, p = .007. This finding indicates that reports with a solid evaluation Design and Methodology have evidence-based Recommendations. The Design and Methodology construct assessed whether evaluators (a) described the evaluation context, (b) developed and used logic models and evaluation matrices, (c) justified the choice of data collection tools and analysis strategy, (d) described a sampling strategy, and (e) engaged stakeholders in the evaluation process. Logic models and evaluation matrices are essential components of any evaluation.

Despite being moderately correlated with Design and Methodology, there was no statistically significant correlation between Recommendations and other quality assessment criteria in the Evaluation Quality Assurance and Assessment, which is unusual given that the UN Evaluation Group Standards require evaluation Recommendations to be firmly based on evidence and follow from the evaluation findings and conclusions. However, Evaluation Analysis and Findings were moderately correlated with three sub-scales, including Design and Methodology (r(60) = .34, p = .007), Reliability of Data (r(60) = .33, p = .008), and Structure and Clarity of the report (r(60) = .40, p = .001). This suggests that sound Design and Methodology and Reliable Data were positively correlated with evidence in Evaluation Findings, which was indirectly related to Recommendations. Additionally, Reliability of Data had a small yet significant positive correlation with Design and Methodology (r(60) = .31, p = .014). The Reliability of Data sub-scale assessed whether the evaluation clearly identified and used reliable qualitative and quantitative data sources and whether there was evidence that the data collected had sensitivity to issues of discrimination and other ethical considerations.
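As a rough illustration of how the reported coefficients relate to their p values, a Pearson correlation can be converted to a t statistic. This assumes the p values come from the usual two-tailed t test with n − 2 degrees of freedom, which the text does not name explicitly. The worked case uses the reported r(60) = .40 between Analysis and Findings and Structure and Clarity.

$$
t = \frac{r\sqrt{n-2}}{\sqrt{1-r^{2}}} = \frac{0.40\sqrt{60}}{\sqrt{1-0.40^{2}}} \approx 3.38
$$

With 60 degrees of freedom, a t value of about 3.38 lies between the two-tailed critical values for p = .002 and p = .001, which is consistent with the reported p = .001.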

Sub-criteria correlation and management response

We explored the relationship between the different sub-criteria in the UN Population Fund Evaluation Quality Assurance and Assessment instrument and found several notable correlations. Specifically, we observed a strong correlation between sub-criterion 2.2 (description of context) and sub-criterion 2.10 (appropriateness of evaluation design and methodology for assessing cross-cutting issues), indicating that a clear description of the evaluation context may affect evaluators’ ability to assess cross-cutting issues such as equity, vulnerability, gender equality, and human rights. Additionally, we found a correlation between sub-criteria 2.2 and 2.7 (acknowledgement of methodological limitations and evaluator bias), suggesting that a lack of awareness of evaluator biases may limit evaluators’ ability to fully describe the evaluation context and assess cross-cutting themes. Moreover, we observed that the integration of gender equality and women’s empowerment indicators in the design of data collection tools was strongly correlated with the evaluation findings, conclusions, and recommendations reflecting a gender analysis. This indicates that the inclusion of such indicators in the data collection design can affect how gender-related issues are reported and addressed in the evaluation results. However, we also noted that UN Population Fund’s requirement for evaluators to be impartial in the evaluation process may conflict with the methodological assessment of cross-cutting issues.

Regarding management responses to evaluation recommendations, we found no correlation between the proportion of recommendations accepted by management and the Evaluation Quality Assurance and Assessment sub-scales. However, there was a consistent increase in the proportion of accepted or partially accepted recommendations over the past decade, which aligns with the general improvement in the quality of UN Population Fund evaluation reports. Prior to 2015, gender and human rights were not required reporting in the Evaluation Quality Assurance and Assessment templates. Our analysis of 104 published evaluation reports since 2010 showed a positive trend in the acceptance of gender and human rights recommendations by management. We found that almost 40% of recommendations related to gender and human rights and that 99% of these recommendations were accepted. Gender and human rights recommendations in evaluation reports addressed practical and strategic needs for all genders. Practical gender concerns included women’s economic empowerment and preventing unintended pregnancies, unsafe abortions, teenage pregnancy, trafficking, and female genital mutilation. Strategic gender concerns included mainstreaming gender and human rights, conducting gender audits, providing technical assistance, involving men and boys, and supporting women’s enrolment and training in law enforcement and medical schools.

Most rejected recommendations had budgetary or administrative implications, were out of context due to inadequate understanding of the intervention contexts, or were deemed impractical or exceeding UN Population Fund’s resources or mandate. Some recommendations were rejected for adding limited value to the overall program needs. Others were rejected due to potential negative impacts on collaboration with national governments or coordination with other UN agencies for joint advocacy and program implementation. For instance, management noted in one response that the evaluation document lacked justification or background information, making the recommendation out-of-context, while in another response, management found the recommendation to be impractical or vastly more costly than UN Population Fund’s limited resources permitted.

Discussion and implications for future research

Eight years into the implementation of the UN’s Sustainable Development Goals, which replaced the Millennium Development Goals in 2015, the field of evaluation faces major concerns regarding the demand for high-quality evaluations and the centrality of social justice. By comparing the American Evaluation Association foundational documents with the UN Evaluation Group Norms and Standards, we found that the United Nations and the American Evaluation Association share a commitment to social justice. Our crosswalk of UN Evaluation Group Norms and Standards with the American Evaluation Association foundational documents provides new insights into the current discourse among evaluators on evaluation quality, meta-evaluation, and the inclusion of social justice in evaluations. This has implications for UN member states, including the US, and the principles and guidelines for conducting evaluations. Studies by Sturges and Howley (2017) and Harnar et al. (2020) have also addressed these concerns, while Symonette et al. (2020) and Thomas and Campbell (2021) have focused specifically on the inclusion of social justice in evaluations.

Our findings support the claim that meta-evaluation is crucial for ensuring high-quality evaluations at both the formative and summative stages (Scriven, 1969; Stufflebeam, 2001a, 2001b). Previous research has also emphasised the importance of quality evaluation (Hanssen et al., 2008; Harnar et al., 2020; UN Evaluation Group, n.d.). The UN Evaluation Group Norms and Standards address these concepts as quality assurance and quality assessment, respectively. Quality assurance is integrated throughout the entire evaluation process, while quality assessment is restricted to the final evaluation report.

While meta-evaluation is important for ensuring high-quality evaluations, our study found inconclusive evidence regarding the need to standardise criteria for meta-evaluation while also being flexible to address stakeholder needs (Apthorpe & Gasper, 1982; Stufflebeam, 1974). The call for standardisation and flexibility in meta-evaluation criteria presents a paradox, highlighting the lack of consensus in the field. Currently, there are no one-size-fits-all guidelines for meta-evaluation or for addressing social justice in meta-evaluations. Instead, evaluators should intentionally incorporate quality assurance and social justice in the design of meta-evaluations, utilising tools that best fit the evaluation context. The UN Population Fund Evaluation Quality Assurance and Assessment serves as an example of the importance of intentional inclusion of human rights and social justice requirements in meta-evaluation criteria that are context specific. According to UN Evaluation Group, evaluators and evaluation managers must take responsibility for integrating the values and principles of human rights and gender equality into all stages of evaluation to ensure that these values are respected, addressed, and promoted, in line with the commitment to the principle of ‘no-one left behind’.

The variability in standards and norms for addressing social justice in evaluations may depend on context and the compromises evaluators make to meet stakeholder needs. However, further research is necessary to develop a common language and definition of what constitutes a ‘high-quality’ evaluation and common criteria for conducting meta-evaluations in the field. Currently, what constitutes a ‘high-quality’ evaluation is not determined by common guidelines but by the context of the evaluation. Consistent with prior research that highlighted the need for American Evaluation Association members to address varied definitions of core concepts in foundational documents (Tucker et al., 2023), UN Evaluation Group, American Evaluation Association, and Australian Evaluation Society would all benefit from adopting shared standards for meta-evaluation, moving beyond working in isolation. UN Evaluation Group and other VOPEs could gain from Aotearoa New Zealand Evaluation Association’s integration of social justice concerns into Program Evaluation Standards and Competencies. Collaboration between representatives of VOPEs could address the various definitions and approaches to assessing quality assurance and quality assessments of evaluations. This is particularly important in the ongoing debate on the professionalisation of the field, so that evaluation practitioners are guided by common umbrella standards in their practice of meta-evaluation. For instance, if the UN Evaluation Group Norms and Standards adopted language related to culture, this could provide guidance on issues that elevate human rights concerns to addressing societal barriers, such as calling out racial or ethnic disparities that could distort program outcomes. American Evaluation Association and Australian Evaluation Society on the other hand could benefit from adopting language related to human rights and specifically elevating the rights of individuals for certain groups, as the UN Evaluation Group has done.

In our study, we investigated the impact of UN Evaluation Group’s focus on gender and human rights on evaluation and management acceptance of related recommendations. We found that gender and human rights-specific recommendations and their acceptance by management have increased over the past decade. However, we also observed that rejected recommendations were often related to budget or administrative concerns or inadequate evaluator understanding of the intervention and its contexts. This indicates that including gender and human rights in the evaluation scope and quality assurance criteria ensures that these concerns are embedded in the evaluation design, methodology, recommendations, and management response. In contrast, the American Evaluation Association Guiding Principles emphasise the importance of contributing to the common good and an equitable society, but it is not clear how these values are applied in evaluation practice. Further research is needed to engage American Evaluation Association members in exploring the meaning of social justice values and public good implications and how they can be integrated into evaluation practices like those in UN Evaluation Group.

A unique contribution of our study is its examination of UN Evaluation Group’s Evaluation Use and Follow-up (Norm 14), which requires the intentional use of evaluation analysis, conclusions, or recommendations to inform decisions and actions. The UN Evaluation Group Utility Norm mandates that management of the UN agency commissioning an evaluation provide their views of the evaluation recommendations, including whether and why they agree or disagree with each recommendation and concrete actions to implement those recommendations. Additionally, UN Evaluation Group requires that the management response be publicly available. This approach differs from American Evaluation Association Utility Standards, which do not explicitly outline concrete actions for organisations to take in response to evaluation findings and recommendations. The importance of evaluation use has been recognised by decision-oriented evaluation scholars, who see use as central to evaluation practice (Stufflebeam, 1983; Provus, 1971; Wholey, 1983; Cousins & Earl, 1992; Preskill & Torres, 2001; King, 1988; Owen & Lambert, 1998).

The UN Population Fund Evaluation Quality Assurance and Assessment is an example of projects that created their own standards, highlighting the need for flexible standards in meta-evaluation (Cooksy & Caracelli, 2005; Harnar et al., 2020). The UN Evaluation Group management response aligns with Worthen’s (1994) belief that setting standards to guide professional practice is a crucial aspect of any profession. The accountancy profession requires audit clients to provide a written response to audit findings in the form of a management response, and American Evaluation Association could follow suit by adopting UN Evaluation Group’s Utility Norm to advance and normalise evaluation use. This would contribute to the professionalisation of program evaluation and make evaluations truth tellers of the effectiveness of public action, as accountants are for money matters (Bickman, 1997). Further research is needed to explore the effectiveness of management response among all UN Evaluation Group members and to compare Evaluation Quality Assurance and Assessments from different UN agencies with mandates for addressing gender equality and human rights concerns. Our assessment found that the UN Population Fund Evaluation Quality Assurance and Assessment is an appropriate measure of evaluation quality and that mainstreaming gender equality and human rights concerns in the design, methodology, and implementation of the evaluation is important in ensuring that social justice is addressed in the management response letter.

Limitations of the study

Our study aimed to examine the extent to which social justice was addressed in meta-evaluation literature and practice by comparing American Evaluation Association foundational documents with UN Evaluation Group Norms and Standards and analysing the UN Population Fund Evaluation Quality Assurance and Assessment. However, there were limitations to our study. Firstly, comparing documents based on specific language used can be subjective. Secondly, we assumed that the UN Evaluation Group Norms and Standards are pertinent to audiences in Australasia, particularly countries that are United Nations members. Thirdly, our sample size (n = 62) was small when assessing the UN Population Fund Evaluation Quality Assurance and Assessments, preventing us from conducting psychometric analyses. The disproportionate weighting of the criteria also made it challenging to conduct factor analyses. While our study found statistically significant relationships between the criteria, we were unable to confirm whether sub-scales measured the same underlying construct of high-quality evaluation. Future research incorporating Evaluation Quality Assurance and Assessments from all UN Evaluation Group members could provide larger samples for psychometric analyses. Harmonising social justice approaches in evaluation and aligning UN Evaluation Group Norms and Standards with American Evaluation Association, Australian Evaluation Society, and Aotearoa New Zealand Evaluation Association foundational documents could be a first step in reaching a consensus on evaluation quality assurance standards, promoting the professionalisation of the evaluation field and socially just evaluations. However, further research is needed to address the limitations of our study.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.