Abstract

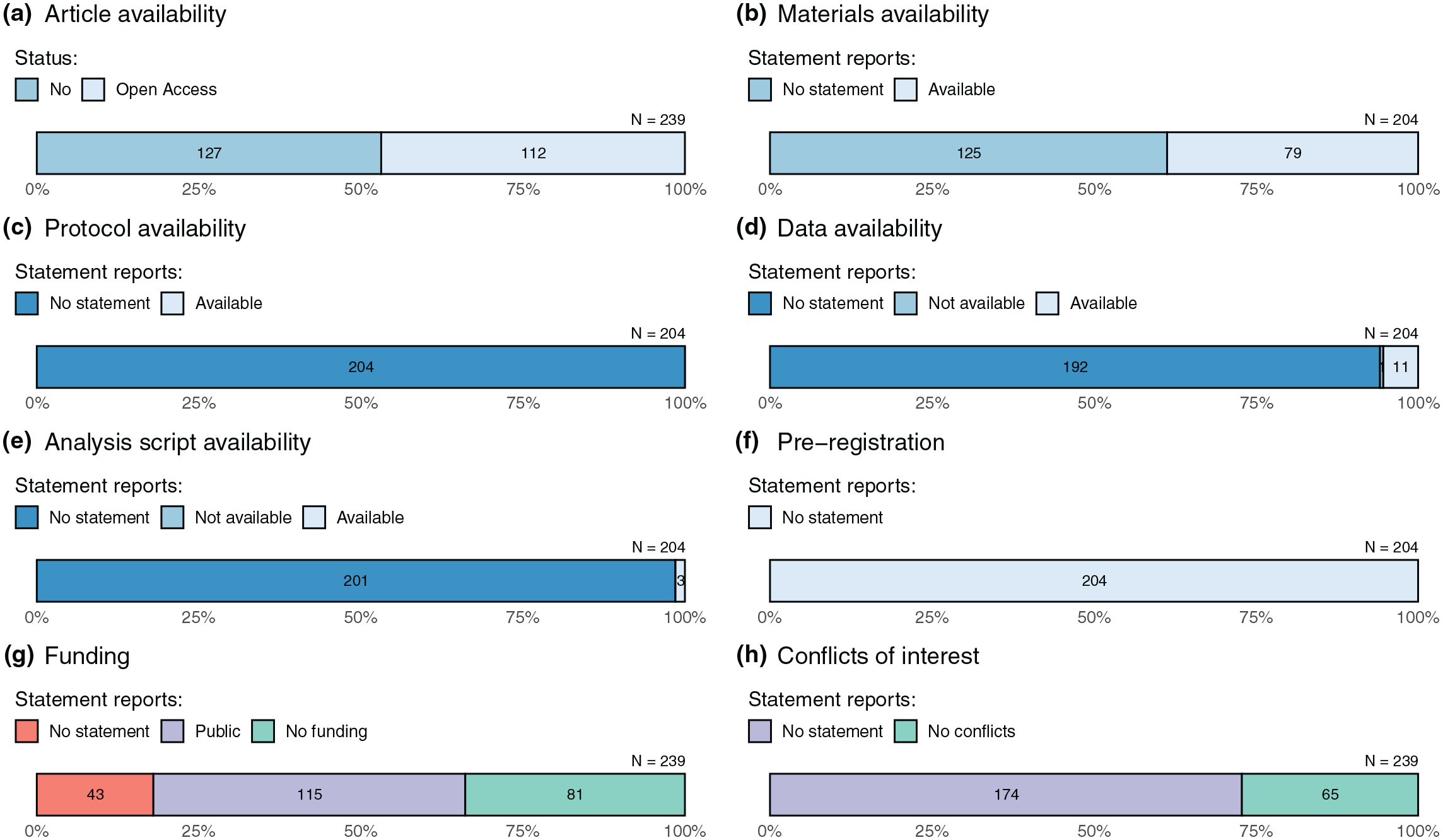

Social sciences are navigating an unprecedented period of introspection about the credibility and utility of disciplinary practices. Reform initiatives have emphasized the benefits of various transparency and reproducibility-related research practices; however, the adoption of these across music psychology is unknown. To estimate the prevalence, a manual examination of a random sample of 239 articles out of 1,192 articles published in five music psychology journals between 2017 and 2022 was carried out. About half of the articles were publicly available (112/239) and 39% shared some of the research materials, but only 5% shared raw data and 1% shared analysis scripts. Pre-registrations were not observed in the sample. Most articles (82%) included a funding disclosure statement, but conflict of interest statements were less common (27%). Replication studies were rare (3%). Additional searches of replication studies were conducted beyond the sample. These analyses did not find substantially more replication studies in music psychology. In general, the results suggest that transparency and reproducibility-related research practices were far from routine in music psychology. The findings establish a baseline that can be used to assess future progress toward increasing the credibility and openness of music psychology research.

Over 10 years ago, Frieler et al. (2013) reported on the status of replication in music psychology. Their results suggested that the practice of replication had been only minimally adopted (0.02%–0.03% of published studies, just 18 in total out of 3,530 studies analyzed) by the year 2013. Nevertheless, they concluded their text on a positive note: “Replication seems an interesting, fruitful and much needed approach in music psychology at the moment, as evidenced by the great interest in special symposia at recent conferences” (Frieler et al., 2013, p. 273).

Now, after a decade, it is time to take stock and reflect on how much progress the field has made. Since 2013, calls for the adoption of reproducible and transparent research practices have become more compelling (Ioannidis, Greenland, et al., 2014; Miguel et al., 2014; Munafò et al., 2017; Nosek et al., 2015; Wallach, Gonsalves & Ross, 2018). Psychology in particular has been flagged as a discipline subject to biases and misleading results (Ioannidis, Munafò, et al., 2014; Open Science Collaboration, 2015; Szucs & Ioannidis, 2017). There have been attempts to address the issues collectively (Hardwicke et al., 2020; Hardwicke & Ioannidis, 2018; Vazire, 2018) so as to enforce self-correction and credibility by releasing study materials, data, and analysis materials; to promote the replication of studies; and to preregister study details before collecting data. At the level of publishing, a journal article can be considered a unit of scholarship, but often the primary materials (materials, protocols, data), or even the article itself in the case of variable institutional access, may not be shared (Piwowar et al., 2018). Furthermore, information on sources of bias such as funding or conflicts of interest is not always available (Cristea & Ioannidis, 2018). When this information is shared, it facilitates meta-research such as replication and meta-analysis, and generally improves the quality and credibility of scholarly activities (Klein et al., 2018). Overall, transparency and reproducibility-related practices have been only minimally adopted in the fields of biomedicine (Iqbal et al., 2016; Wallach, Boyack & Ioannidis, 2018), the social sciences (Hardwicke et al., 2020), and psychology (Hardwicke et al., 2022, 2024).

The purpose of the present study was to assess the state of transparency and reproducibility in music psychology. The indicators adopted were those used to estimate these qualities in psychology (Hardwicke et al., 2022), namely, open access to published articles; availability of study materials, protocols, raw data, and analysis scripts; pre-registration; disclosure of funding sources and conflicts of interest; and conduct of replication studies.

Methods

Design

The design of this study is a retrospective observational study with cross-sectional sampling. Sampling units were individual journal articles. The measured variables are shown in Table 1.

Table 1. Measured variables. The variables measured for an individual article depended on the study design classification.

Sample

A sample of music psychology articles published between 2017 and 2022 was obtained from five discipline-specific journals: Psychology of Music (PoM), Music Perception (MP), Musicae Scientiae (MS), Journal of New Music Research (JNMR), and Music & Science (M&S). These are the leading journals of the discipline and are also highly ranked among music journals according to the ASJC journal rankings (MS #1, JNMR #4, PoM #5, MP #7, M&S #12) in 2022 (Scopus). The pool of all articles published during the period was 1,192, from which a random sample (20%) stratified by journal and year (n = 239) was taken. The sample size was comparable to a recent transparency sample (n = 250) of psychology articles (Hardwicke et al., 2022), which was used as an analysis template for the present study.
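The stratified draw described above can be sketched as follows. This is a minimal illustration and not the author's sampling script: the function name `stratified_sample`, the toy population of 40 articles per journal and year, the seed, and the per-stratum rounding rule are all assumptions made for the example.

```python
import random
from collections import defaultdict


def stratified_sample(articles, fraction=0.20, seed=2023):
    """Draw about `fraction` of the articles from every (journal, year) stratum.

    Assumption: each stratum contributes round(size * fraction) articles,
    with at least one article per stratum. The source does not state how
    fractional stratum sizes were handled.
    """
    rng = random.Random(seed)  # fixed seed so the draw can be repeated
    strata = defaultdict(list)
    for article in articles:
        strata[(article["journal"], article["year"])].append(article)

    sample = []
    for key in sorted(strata):  # sorted keys keep the draw order deterministic
        members = strata[key]
        k = max(1, round(len(members) * fraction))
        sample.extend(rng.sample(members, k))
    return sample


# Hypothetical toy population: 40 articles per journal and year.
journals = ["PoM", "MP", "MS", "JNMR", "M&S"]
population = [
    {"id": f"{journal}-{year}-{i}", "journal": journal, "year": year}
    for journal in journals
    for year in range(2017, 2023)
    for i in range(40)
]

sample = stratified_sample(population)
print(len(population), len(sample))
```

Sampling within each stratum, rather than from the pooled list, keeps every journal and publication year represented in proportion to its output.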

Procedure

Data collection took place between 27 September and 27 October 2023. Data extraction for the measured variables shown in Table 1 involved a manual examination of the articles based on previous investigations in social sciences (Hardwicke et al., 2020) and psychology (Hardwicke et al., 2022). To check the coverage of the results obtained from the random sample, additional analyses were carried out on two larger sets of articles, both retrieved through Scopus searches: (a) all papers published in the discipline-specific journals between 2017 and 2022, and (b) a wider set of non-discipline-specific journals.

Analysis

The results describe the proportion of articles that meet the evaluated indices. No inferential statistics were performed. The counts are supplemented with 95% confidence intervals based on the Sison–Glaz method for estimating confidence intervals of multinomial probabilities, which is based on Poisson approximation and has been demonstrated to be more accurate than other estimates, especially for small samples (Sison & Glaz, 1995).
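To illustrate how an interval around a reported proportion can be computed, the sketch below uses the Wilson score interval for a single binomial proportion. This is a deliberately simpler technique swapped in for illustration, not the Sison–Glaz multinomial procedure used in the study, so its output need not match the reported intervals. The function name `wilson_interval` is hypothetical.

```python
import math


def wilson_interval(successes, n, z=1.959963984540054):
    """Wilson score interval for one binomial proportion (95% by default).

    Note: this is NOT the Sison-Glaz method; it treats a single yes/no
    indicator in isolation instead of a full multinomial distribution.
    """
    if n <= 0:
        raise ValueError("n must be positive")
    p = successes / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return max(0.0, centre - half_width), min(1.0, centre + half_width)


# Example: 79 of 204 articles with a materials availability statement.
low, high = wilson_interval(79, 204)
print(f"{79 / 204:.1%} [{low:.1%} to {high:.1%}]")
```

The Wilson interval behaves sensibly for counts near zero, which matters for indicators such as shared analysis scripts.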

Results

Sample characteristics

Sample characteristics for all 239 articles are displayed in Table 2. In the sections below, the term primary data will be used for articles reporting empirical data (from laboratory studies, surveys and interviews, corpus-based studies, and computational studies) as opposed to those types of articles reporting no empirical data (systematic/critical reviews, editorials, commentaries, and theory proposals).

Article availability (open access)

Open access was defined through Scopus (Document Type). Among the 239 articles, 112 were open access (see Figure 1[a]), while 127 were only accessible through a paywall. There are different types of publishing model for open-access articles; the sample consisted of 48 Green, 14 Gold, 13 Hybrid Gold/Green, 12 Bronze, 12 Gold/Green, 8 Bronze/Green, and 5 Hybrid Gold.

Figure 1. Assessment of transparency and reproducibility-related research practices in music psychology.

Materials and protocol availability

Of the 204 articles that involved primary data (see Table 2), 79 contained a statement regarding the availability of original research materials, such as survey instruments, software, or stimuli (39% [32% to 46%]; the numbers in square brackets refer to the estimated 95% confidence interval, see also Figure 1[b]). Of the 204 articles involving primary or secondary data, none reported the availability of a study protocol (Figure 1[c]).

Table 2. Sample characteristics for the 239 randomly sampled articles from music psychology journals.

Countries with more than 10 articles published are shown.

For the 78 articles with accessible materials, the materials were made available in the article itself (e.g., in a table or appendix; n = 28), in a journal-hosted supplement (n = 38), on a personal or institutionally hosted (non-repository) webpage (n = 4), or in an online third-party repository (n = 8, mainly OSF or GitHub). Most materials contained additional information about the study (an additional statistical table, an outline of an interview structure, a list of musical pieces analyzed, survey items, graphical representations of stimuli, or simply vaguely stated “additional methodological details”).

Data availability

Of the 204 articles that involved primary or secondary data, 11 contained data availability statements (5% [3% to 9%]; Figure 1[d]). For one data set, the availability was declined because “participants of this study did not agree for their data to be shared publicly.” One data set was obtainable upon request from the authors. Of the 10 accessible data sets, nine were available via an online third-party repository, where OSF was the most frequent repository (4), GitHub the second (2), and Mendeley and Zenodo were mentioned once. Six data sets had incomplete data and documentation, two data sets were incomplete, and only the remaining two appeared complete and clearly documented. When a data set was not sufficiently clearly documented, it often contained data in proprietary format (SPSS .sav files) or undocumented csv files. Adding a codebook or readme file explaining the columns and the rows would be the minimal additional information needed.
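As a rough illustration of the minimal documentation suggested above, the following sketch drafts a plain-text readme from the header and contents of a CSV file. The function name `draft_readme`, the example columns, and their descriptions are hypothetical; a real codebook would also need units, coding schemes, and licensing information written by the data owner.

```python
import csv
import io


def draft_readme(csv_text, descriptions=None):
    """Draft a plain-text readme listing every column of a CSV data set.

    `descriptions` maps column names to human-written explanations;
    columns without one are flagged so they are not shared undocumented.
    """
    descriptions = descriptions or {}
    reader = csv.DictReader(io.StringIO(csv_text))
    rows = list(reader)
    lines = [
        f"Rows: {len(rows)} (one row per observation; confirm before sharing)",
        "Columns:",
    ]
    for name in reader.fieldnames or []:
        values = [row.get(name) for row in rows]
        present = [v for v in values if v not in ("", None)]
        example = present[0] if present else "n/a"
        missing = len(values) - len(present)
        description = descriptions.get(name, "TODO: describe this column")
        lines.append(
            f"- {name}: {description} "
            f"(example: {example}; missing: {missing} of {len(values)})"
        )
    return "\n".join(lines)


# Hypothetical data set with one missing rating.
csv_text = "participant_id,condition,valence_rating\nP01,major,6\nP02,minor,\n"
readme = draft_readme(
    csv_text,
    {
        "participant_id": "Anonymised participant code",
        "valence_rating": "Rated valence on a 1-7 scale",
    },
)
print(readme)
```

Saving the data as plain CSV alongside such a file also avoids the proprietary-format problem noted above.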

Analysis script availability

Of the 204 articles that involved primary or secondary data, an analysis script was shared for three articles (1% [0% to 3%]; Figure 1[e]), through a third-party repository.

Study registration

Pre-registrations were not present in the sample. I will return to this topic after the sample description.

Funding and conflicts of interest statements

Of the 239 articles, 196 included a statement about funding sources (82% [77% to 87%]; Figure 1[g]). Most of the articles disclosed public funding (n = 115), and the remaining 81 (34%) disclosed that the work had received no funding.

Sixty-five of the 239 articles included a conflicts of interest statement (27% [22% to 33%]; Figure 1[h]). Of these 65 articles, all reported that there were no conflicts of interest (n = 65, 100%).

Replication

Of the 204 articles involving primary or secondary data, 7 (3% [1% to 6%]) claimed to include a replication study. Three indicated replication in the title (Baker et al., 2020; Bullack et al., 2018; Wolf et al., 2018), three mentioned replication in the abstract (Friedman et al., 2021; Kou et al., 2018; Lim et al., 2022), and one mentioned replicating a previous study in the text (Nineuil et al., 2022). All except one replication (Friedman et al., 2021) were deemed successful despite some minor caveats. Most of these can be considered direct replications, although two of the replication studies are conceptual replications (Friedman et al., 2021; Lim et al., 2022), which use a different methodology to answer broadly the same question (for this distinction, see Nosek & Errington, 2017).

As the sample provided only modest evidence for formats supporting credibility and open science initiatives such as replications and registered reports, an additional analysis was carried out to establish the overall frequency of these types of studies in all published articles. The titles and abstracts of all articles in the five specialist journals between 2017 and 2022 (N = 1,192) were searched for the word replication. This resulted in 17 studies involving a replication, three of which were included in the random sample. This corresponds to a prevalence of about 1.4%, roughly half the 2.9% observed in the random sample. The same operation was performed for registered or pre-registered studies, yielding no studies.
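The prevalence comparison in the preceding paragraph is simple arithmetic, sketched below. The population count (17 of 1,192) comes from the text; the sample denominator of 239 is an assumption inferred from the reported sample prevalence of about 2.9%, since 7 of 239 articles gives that figure.

```python
def prevalence_percent(hits, total):
    """Return the share of `hits` in `total` as a percentage."""
    return 100 * hits / total


# Replication studies found by the title/abstract search of all articles.
population_prevalence = prevalence_percent(17, 1192)

# Replication studies in the random sample (denominator is an assumption).
sample_prevalence = prevalence_percent(7, 239)

ratio = population_prevalence / sample_prevalence
print(f"population: {population_prevalence:.1f}%")
print(f"sample: {sample_prevalence:.1f}%")
print(f"population / sample: {ratio:.2f}")
```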

Replication studies in this field can be published outside specialist journals. To estimate the prevalence of replication studies beyond specialist journals, a Scopus search was executed for articles that had replication and music in the title, keywords, or in the abstract published between the years 2017 and 2022, excluding the five specialist journals. This resulted in 60 potential articles from which closer scrutiny identified 25 articles that involved replication. This hit rate from the pool of 32,752 articles with the keyword music during the same period suggested that a substantial number of further replication studies involving music is unlikely to be found outside the specialist journals.

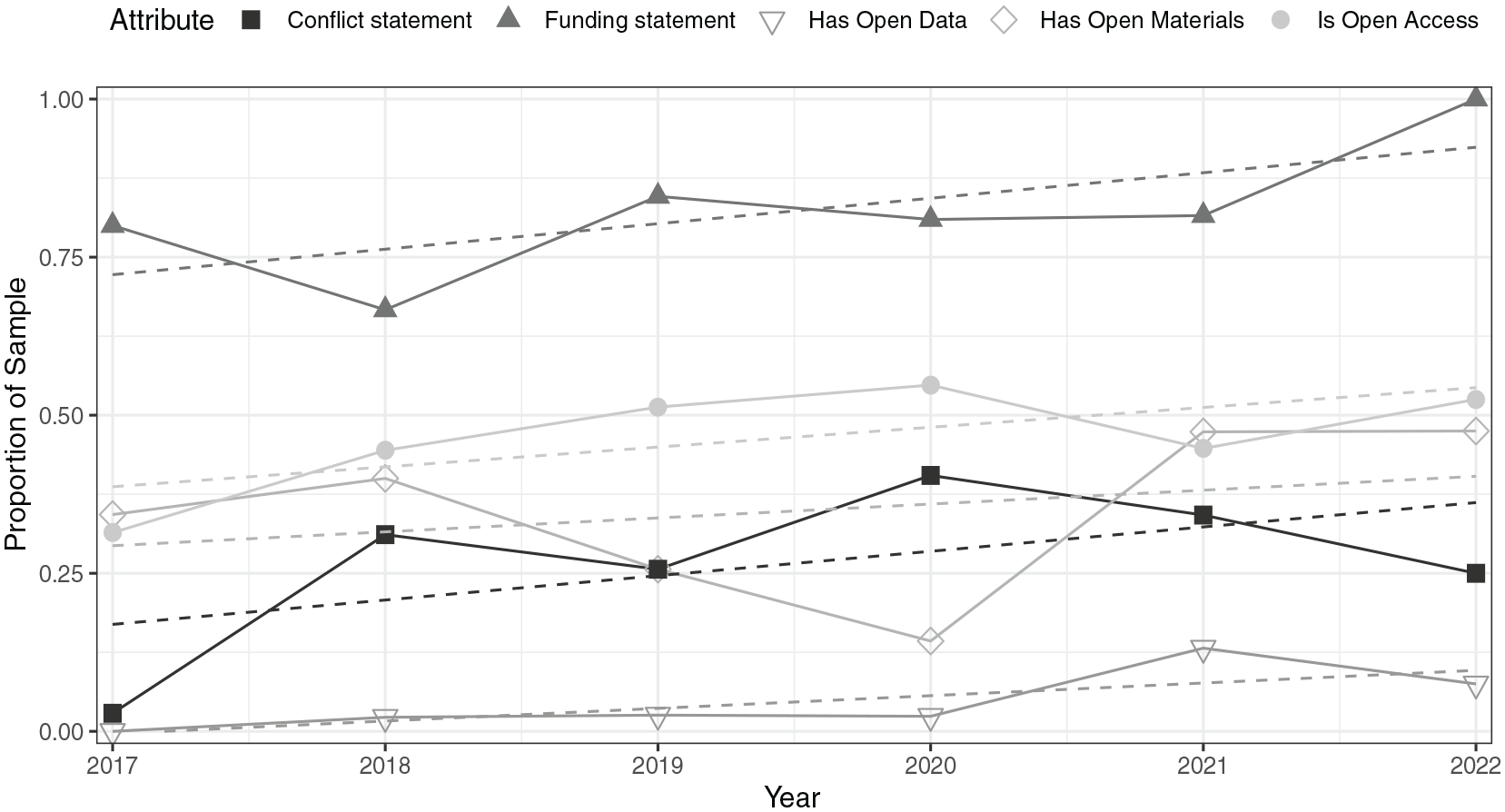

The chronology of the transparency indices (conflict and funding statements, open data, open materials, and open access) shown in Figure 2 suggests that while the proportions remain low to modest in many categories, there is a steady upward trajectory in all of these. Some of the temporary downward trends in open access in the last two sample years may reflect changes in policies. For example, Plan S, which started in 2021, controversially requires that research funded by public grants be published in compliant open-access journals or platforms (Gornitzka & Stensaker, 2024; Smits & Pells, 2022).

Figure 2. Proportion of sample attributes over the 6 years studied.

Discussion

This evaluation of transparency and reproducibility-related research practices in a random sample of 239 music psychology articles published between 2017 and 2022 shows that essential elements of research, namely data and analysis scripts, were rarely publicly available. No pre-registrations were observed and replication studies were rare. The availability of the articles themselves was poor (<50%), but the disclosure of funding sources and conflicts of interest was moderately good (>80% for funding sources). The findings suggest that actions to promote transparency in music psychology are marginal at the moment. This is a low baseline, but it will allow future researchers to examine changes in practices over time.

For nearly half of the articles examined (47%), a publicly available version existed. This is higher than recent open-access estimates obtained for biomedicine (25%; Wallach, Boyack & Ioannidis, 2018) and the social sciences (40%; Hardwicke et al., 2019), but not quite at the level of psychology (65%; Hardwicke et al., 2022). Limited access to publications reduces the opportunities for researchers, practitioners, policy makers, and the general public to discover and capitalize on the relevant evidence. However, open-access publishing models that require high article processing charges may also be an obstacle for researchers from developing countries (Meagher, 2021). One step music psychologists could take to improve public availability of their articles is to upload them, where journal policies permit, to the free preprint server PsyArXiv (https://psyarxiv.com/) or to institutional repositories.

The reported availability of research materials was relatively good in the articles examined (39%) and higher than recent estimates in the social sciences (11%; Hardwicke et al., 2020) and psychology (14%; Hardwicke et al., 2022), although this estimate typically included additional materials related to the report itself (additional tables, analyses), rather than the full study materials. The availability of the actual research materials enables the research to be assessed properly and facilitates independent replication attempts (Open Science Collaboration, 2015). Music psychologists can share their material online in various third-party repositories such as the Open Science Framework (OSF), although copyright restrictions prevent sharing of copyrighted music. This may limit the overall availability of materials in this discipline, but it is also worth promoting alternatives to sharing audio and video tracks, such as referring to a common indexing database (for example, MusicBrainz) or using music and sounds with a Creative Commons license from sources such as Audio Commons (Font et al., 2016) and FreeSound (Fonseca et al., 2017) (for a full discussion, see Jensenius, 2021).

Data availability statements in the articles examined were rare (5%). This is consistent with accumulating evidence suggesting that the data underlying scientific claims are rarely immediately available (Alsheikh-Ali et al., 2011; Iqbal et al., 2016), although some modest improvement has been observed in recent years in biomedicine (Wallach, Boyack & Ioannidis, 2018). The sharing of data allows for verification through an independent assessment of analytic and computational reproducibility. Data sharing also enhances meta-analysis (Tierney et al., 2015) and allows re-analyses (Voytek, 2016).

Of the articles examined, only three (Ehret et al., 2021; Kempfert & Wong, 2020; Platz et al., 2022) shared analysis scripts and data, consistent with assessments in biomedicine (Wallach, Boyack & Ioannidis, 2018), the social sciences (Hardwicke et al., 2020), biostatistics (Rowhani-Farid & Barnett, 2018), and psychology (Hardwicke et al., 2022). Analysis scripts provide the most comprehensive documentation of how raw data was filtered, processed, and analyzed. Verbal descriptions of analysis procedures can be ambiguous and do not adequately capture sufficient detail to enable analytic reproducibility (Hardwicke et al., 2018; Stodden et al., 2018).

Pre-registration, which involves creating a permanent record of the objectives, hypotheses, methods, and analysis plan of a study on an independent online repository, was not present in the articles examined. Pre-registration fulfills a number of potential functions (Nosek et al., 2019), including clarifying the distinction between exploratory and confirmatory aspects of research (Kimmelman et al., 2014) and enabling the detection and mitigation of questionable research practices such as selective outcome reporting (Franco et al., 2016). The number of pre-registrations (and the related registered-report type of article) is still small but increasing in psychology (Hardwicke & Ioannidis, 2018; Nosek et al., 2018). There are registered reports in music psychology, but they have only recently been published (Armitage & Eerola, 2022; Eerola & Lahdelma, 2022; Lahdelma & Eerola, 2024), and the present analysis may have underestimated the adoption of this convention due to lag between execution and publishing.

The current findings suggest that music psychology articles were more likely to include funding statements (82%) and conflict of interest statements (27%) than social science articles in general (31% and 15%, respectively; Hardwicke et al., 2020). It is possible that these disclosure statements are more common than most other practices examined because they are often required by journals (Nutu et al., 2019). Disclosing funding sources and potential conflicts of interest in research articles helps readers make an informed judgment about risk of bias (Bekelman et al., 2003; Cristea & Ioannidis, 2018). Because the absence of a statement is ambiguous, researchers should ideally always include one, even if it is to explicitly declare that there were no funding sources and no potential conflicts.

Of the articles examined, 3% claimed to be a replication study, which is slightly higher than an estimate in psychology of 1% (Makel et al., 2012) and a similar estimate of 1% in the social sciences (Hardwicke et al., 2020). A complete population analysis of the specialist journals suggested a prevalence of about 1% for replication in music psychology, tempering the inference that music psychology is ahead of psychology or the social sciences.

The current study has several limitations. First, the findings are based on a random sample of 239 articles, and the estimates obtained may not necessarily be generalizable. Second, although the focus of this study was transparency and reproducibility-related practices, this does not suggest that simply adopting these practices is sufficient to promote the goals they are intended to achieve; poorly documented data may not enable analytic reproducibility (Hardwicke et al., 2018) and inadequately specified pre-registrations may not sufficiently constrain the options available for the researcher (Bakker et al., 2020; Claesen et al., 2019). Third, only published information was used in making inference about openness of data. Direct requests to authors could have yielded additional information; however, such requests to psychology researchers are often unsuccessful (Vanpaemel et al., 2015).

The present findings imply minimal adoption of practices related to transparency and reproducibility in music psychology. Although researchers appear to recognize the problems of low reproducibility (Frieler et al., 2013) and endorse the values of transparency in principle (Hodges, 2021), they are often wary of change (Fuchs et al., 2012; Houtkoop et al., 2018) and routinely neglect these principles in practice (Hardwicke et al., 2020; Wallach, Boyack & Ioannidis, 2018). Funder and journal policies are an effective way to instigate change (Hardwicke et al., 2018; Nuijten et al., 2018). Journal policies in particular seem to be conducive to stimulating the adoption of transparency reforms (Giofrè et al., 2017). Unfortunately, journal policies are far from uniform (Nutu et al., 2019), although a new set of standards for Transparency and Openness Promotion (TOP) outlines four levels of trust for journals that should help authors and publishers to adopt higher levels of transparency over time (Mayo-Wilson et al., 2021). At the moment, Music Perception and Music & Science accept registered reports/pre-registered studies, and Psychology of Music and Musicae Scientiae advocate for transparency and openness in sharing materials, data, and analyses. Some of these journals are explicit about including conflict of interest and funding statements, whereas others encourage reporting these. Aligning academic rewards and incentives (e.g., funding awards, publication acceptance, promotion, and tenure) with better research practices may also be instrumental in encouraging wider adoption of these practices (Moher et al., 2018).

Studies of resistance to reforms suggest a variety of reasons for not adopting them, including concerns about how to implement them under specific conditions (e.g., respecting privacy, allowing exploratory research to flourish, adding administrative burdens); addressing these will require further discussion, support, and reasonable adaptation of the policies (Washburn et al., 2018). The culture of transparency changes slowly, but using data champions to share good practice is one tactic for promoting it (Woods & Pinfield, 2021), and it is crucial for cultural change that senior scholars support transparency (Robson et al., 2021).

Frieler et al. (2013) framed music psychology as a low-gain science that tends to adopt a storytelling mode of research that satisfies curiosity rather than engaging in rigorous, costly, more replicative and, perhaps at times, less innovative research. The present findings do not dispel this characterization, but we collectively need to work toward a more robust, transparent, and replicative kind of research in music psychology that allows for the incremental accumulation of knowledge.

Footnotes

Acknowledgements

I thank Tom Hardwicke for encouragement and permission to utilize the reproducible manuscript and analysis formats of their study.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.

Open practices statement

The determination of our sample size, data exclusions, manipulations, and all measures in the study are fully reported. All data (https://osf.io/3pz6d/) and analysis scripts (https://osf.io/qv5g7/) related to this study are publicly available. To facilitate reproducibility, this manuscript was written by interleaving regular prose with analysis code and is available in a Code Ocean container, which re-creates the software environment in which the original analyses were performed.

The following search strings were used to obtain a full population of articles (1,192) in specialist journals from Scopus:

SRCTITLE(Psychology of Music) OR SRCTITLE(Musicae Scientiae) OR SRCTITLE(Journal of New Music Research) OR SRCTITLE(Music & Science) OR SRCTITLE(Music Perception) AND TITLE-ABS-KEY(replication) AND PUBYEAR > 2016 AND PUBYEAR < 2023

This search string was used to expand the search criteria beyond the specialist journals from Scopus:

DOCTYPE(ar) AND TITLE-ABS-KEY(replication) AND TITLE-ABS-KEY(music) AND PUBYEAR > 2016 AND PUBYEAR < 2023 AND NOT SRCTITLE(Psychology of Music) AND NOT SRCTITLE(Musicae Scientiae) AND NOT SRCTITLE(Journal of New Music Research) AND NOT SRCTITLE(Music & Science) AND NOT SRCTITLE(Music Perception)

This search pool consisted of 14,714,625 articles, out of which the search string provided 60 matches. A manual check confirmed that 25 of these involved a replication of some kind involving music.