Abstract

This article discusses the risks that artificial intelligence (AI) poses for work. It classifies risks into two types, direct and indirect. Direct risks are AI-induced forms of discrimination, surveillance and information asymmetries at work. Indirect risks are enhanced workplace automation and the increasing ‘fissurisation’ of work. Direct and indirect risks are illustrated using the example of the transport and logistics sector. We discuss policy responses to both types of risk in the context of the German economy and argue that the policy solutions need to differ according to the type of risk. Direct risks can be addressed by European and national regulation against discrimination, surveillance and information asymmetries. As for indirect risks, the first step is to monitor the risks so as to gain an understanding of sector-specific transformations and establish relevant expertise and competence. This way of addressing AI-induced risks at work will help to improve the prospects of decent work, fair remuneration and adequate social protection for all.

Introduction

Over the past decade, investment in and the use of so-called ‘artificial intelligence’ (AI)-enabled tools has grown dramatically. The global annual value of venture capital investments in AI firms increased from less than US$3bn in 2012 to close to US$75bn in 2020. In 2020 alone, investments increased by 20 per cent (OECD, 2021). AI-empowered applications and technologies are used increasingly in many areas of our daily lives, including health care, transport, logistics, entertainment, agriculture, marketing and banking. Because of AI’s wide area of applicability and its tremendous capability to facilitate waves of economic improvements and innovations, smart technologies are considered to have a significant potential to enhance economic growth (Szczepański, 2019). According to the McKinsey Global Institute (2018), AI could create additional economic output of around US$13trn by 2030 and increase global GDP by about 1.2 per cent a year. These investments in AI and AI’s increasing utilisation raise questions about the impact on societies, business and the world of work. While some aspects of this debate emphasise the potential of AI as a fast track towards the digital economy, others are more concerned about AI’s wider repercussions for society (Özkiziltan and Hassel, 2021).

Concerns about the social, political and economic impact of digitalisation have attracted widespread attention in the European public debate (Eurofound, 2021). The European Union (EU) has embarked on a series of initiatives aimed at governing the digital transformation of labour markets. Among these, the General Data Protection Regulation (GDPR), which governs data protection issues related to, among other things, people’s private sphere, and the ‘European Skills Agenda’, which enables people to pursue lifelong learning, have already been put into effect (European Parliament, 2021).

Some important AI-related initiatives are still under discussion. The most relevant include: the Artificial Intelligence Act, aimed at ensuring a high level of protection for fundamental rights, including those of workers (European Commission, 2021a); the Proposal for a Directive on improving working conditions in platform work, designed to provide adequate labour market protection for platform workers (European Commission, 2021b); and a comprehensive European industrial policy on artificial intelligence and robotics, addressing and protecting workers’ interests in the face of increasing penetration of AI into socio-economic life (European Parliament, 2019). These EU initiatives are promising, but also still in the making. As a Eurofound (2021: 30) publication states: ‘it is presently unclear how these deliberations will translate into strategies and organisational action’. In this article we argue that a key strategy for overcoming this uncertainty would be to deal with AI-induced work-related risks in accordance with whether they are direct or indirect.

We argue that AI harbours direct risks, such as new forms of discrimination and surveillance at work, as well as increasing information asymmetries in favour of employers, all generated by AI solutions for workforce management. The hazards of these risks are directly observable across the economy. Indirect risks, by contrast, are related to AI’s role in further automation and ‘fissurisation’ of work, which needs to be contextualised in a wider socio-economic and political environment. The consequences of these risks are not directly observable, as they are highly intertwined with other dynamics, such as broader processes of economic restructuring and supply chains. Furthermore, they are more likely to harm workers with particular skills and jobs from certain sectors that are more conducive to digital automation and fissurisation practices. While direct risks can be addressed through regulation, indirect risks require comprehensive expertise on sector-specific transformations. Relevant policies include education and training and social security, as well as regulation of subcontracting and of employers’ responsibilities.

The article is structured as follows. First, there is a brief overview of AI as a technology and its use at work. Next, we provide a review of the literature on the types of risk entailed by AI-enabled workplace tools. This section classifies the risks as direct and indirect. We present a short case study of the logistics and transport sector and then apply the policy discussion to the case of Germany. Finally, we discuss policy solutions. We assert that AI has different effects on different aspects of the world of work, which will require contextualised responses. This way, policy solutions can efficiently address and manage the aggregate effect of AI-induced risks, namely expansion and intensification of precarity at work.

AI as a technology and its application in the world of work

AI can be seen as one element in the digital toolbox of intelligent tools and systems (Tyson and Zysman, 2022; Zysman and Nitzberg, 2020). Thus, AI on its own does not revolutionise societies, but in interaction with other tools (such as powerful computers, the internet and platforms) it can have an enormous impact on the way we live and work. AI lacks an official standard definition and available definitions in the literature are highly varied (Özkiziltan and Hassel, 2021). One useful definition refers to AI as the ‘systems that use ML (machine learning), a sub-discipline of AI, trained on large corpora of data to make predictions and determine actions’ (Zysman and Nitzberg, 2020: 5).

Machine learning is currently the most widely utilised AI technique. It draws on computational statistical inference, utilises big data and produces successful outcomes in well-defined, narrow problem areas (Zysman and Nitzberg, 2020). ML-enabled tools have a wide range of applications. For instance, autonomous driving vehicles recognise other cars or pedestrians and slow down. Some facial recognition software systems send the alarm to authorities when certain types of individuals are identified. ML-powered chatbots respond to clients and operators and offer predefined solutions. Smart machines, in sum, are designed to adjust to their environment by overcoming physical, as well as communication hurdles. To do so, they learn from data to solve problems that cannot be precisely quantified and improve performance on the task for which they were designed (AI HLEG, 2019).

Even though ML-powered tools are involved in many areas of our lives, current AI applications are ‘narrow’ systems. These applications can compete with or even surpass human intelligence in the areas they were designed for, be it the game of chess, speech, or image recognition. Having been programmed to perform precisely specified tasks in one domain, however, most AI solutions are unique to a specific sector or operation in a work environment, and putting them to use across a wider ecosystem is beset with difficulties (Zysman and Nitzberg, 2020). Thus currently, algorithmic responses to work and business situations are generated in areas in which collection of and repeated access to data enable machine learning algorithms to discover patterns, make predictions and take decisions. This restricts the use cases of AI-enabled workplace tools to areas such as observation of health and safety precautions, shift schedule optimisation, productivity augmentation, personality judgements, and hiring, firing and promotion decisions (Özkiziltan and Hassel, 2021).

Some argue that algorithm-based decision-making at the workplace is superior to the judgement of human managers because it is based on evidence and impersonal, neutral data (Rogers, 2020; Youyou et al., 2015). But it should be noted that this argument is often pushed by technology companies in marketing their products (Mateescu and Nguyen, 2019). In contrast, critics note that the utilisation of AI-powered workplace applications entails a range of work-related risks.

Work-related risks of AI

In most policy debates on AI, the focus is on AI’s potential for the digital economy, which embraces new business models and economic sectors, and new forms of work and employment. Some of these discussions also forecast the risks of AI and their socio-economic repercussions (Özkiziltan and Hassel, 2021). None of these studies classify the risks in accordance with their distinct effects on the world of work. The following subsections provide a discussion of five types of AI-related risks in accordance with the threats they pose to the world of work, with the aim of providing a critical understanding of the key policy challenges.

Direct risks of AI: discrimination, surveillance and information asymmetries

In the past few years, employers have increasingly deployed smart machines at work. A recent report indicates that 40 per cent of international companies, mostly US based, deploy AI solutions for HR management, including for their recruitment and hiring processes (PwC, 2017). In some cases, candidates are not only pre-selected, but also interviewed by an intelligent machine before a real person decides, based on a detailed machine-produced report, whether they are a good fit for the company (Agrawal et al., 2019; Algorithm Watch, 2019; Harwell, 2019).

Some large corporations require their employees to wear ID badges with a built-in microphone, Bluetooth, infrared sensors, and an accelerometer. This technology provides employers with an insight into workers’ performance and their activities, such as how much time they spend with people of the same/opposite gender, how active they are during the workday, and how much they speak or remain silent (The Economist, 2018).

Commonly referred to as ‘algorithmic management’, such HR practices automate or semi-automate managerial decisions related to working conditions and workers’ control. To achieve this, algorithmic management utilises AI and feeds it with big data (Adams-Prassl, 2019; De Stefano, 2019; Kellogg et al., 2020; Mateescu and Nguyen, 2019) collected from numerous sources, such as workers’ CVs, keyboard and mouse movements, call logs, screenshots, webcams, application logging activities, wearable devices, and wellness programmes. AI-empowered HR decision support tools pose some direct risks that affect large parts of the workforce, regardless of occupation and sector of employment and skill levels. These risks are direct in nature because they are relatively easy to discern and are absent when AI is not in use. These represent new forms of discrimination and surveillance at work and an exacerbation of information asymmetries in favour of employers.

Regarding discrimination, the first concern is related to the values embedded in algorithmic management tools by their human programmers, such as productivity maximisation, competence development and success assurance. These built-in stereotypes are likely to prompt discriminatory managerial decisions against those who do not correspond to them, for example, immigrants who speak with an accent or nervous interviewees (Crawford et al., 2019; De Stefano, 2019; EPSC, 2018). The second concern pertains to the use of large amounts of personal data, which might result in unfair workplace practices. Hiring decisions might reflect past decisions and firings based on gender, race or genetic inclination to certain diseases (Ajunwa et al., 2017). Even if the data gathered from employees are anonymised, or their content is not analysed, individuals can still be identified and sensitive personal information can be used against them (Adams-Prassl, 2019; Article 29 Data Protection Working Party, 2017; De Stefano, 2019).

Regarding surveillance, the literature suggests that algorithmic management tools are designed to increase employers’ capabilities to monitor and control workers (Crawford et al., 2019; De Stefano, 2019; EPSC, 2018; Kellogg et al., 2020; Servoz, 2019). This raises two issues. The first is the blurring of boundaries between work and private life, with serious implications for workers’ right to privacy (Adams-Prassl, 2019; Moore, 2019a; Servoz, 2019). The second issue is related to the diminishing possibilities of workers’ voice (Kellogg et al., 2020; Moore, 2019a) because algorithmic management tools render ‘the sites of everyday resistance facilitated by worker-to-worker communication penetrable by management’ (Moore, 2019a: 126). Employers use new worker monitoring tools to measure previously unmeasurable aspects of work, such as attitudes, tiredness, mental well-being and stress (Moore, 2019a). In doing so, algorithmic control enables management to individualise its surveillance over workers through custom-made nudges, rewards and penalties (Kellogg et al., 2020). ‘This, in turn, can transform the modalities of worker resistance’, argue Kellogg et al. (2020: 386), for according to them ‘[w]hereas previous systems of control allowed collectives of workers to organise and share resistance tactics over time, especially regarding shared rewards and penalties, algorithmic control can make such initiatives and contestations harder to achieve’.

When it comes to information asymmetries, it is important to note that some algorithmic decisions are produced by highly complex neural network systems. This renders them opaque, that is to say, they are hard to understand even for their human creators (Burrell, 2016; Moore, 2019b), let alone for the workers whose livelihoods are dependent on them (Crawford et al., 2019; Gaudio, 2021; Kellogg et al., 2020; Mateescu and Nguyen, 2019; Rosenblat and Stark, 2016). Thus, according to critics, in companies where complex algorithmic management tools are used, opacity is used to manage the workforce, enabling managers to shift the responsibility for managerial decisions onto machines (Adams-Prassl, 2019; Crawford et al., 2019; Kellogg et al., 2020; Mateescu and Nguyen, 2019). This manoeuvre masks their decisions on work and employment relations, which might go against labour and/or data protection regulations (Gaudio, 2021; Moyer-Lee and Kountouris, 2021; Rosenblat and Stark, 2016). In some cases, this leaves workers with no one to appeal to when they face disciplinary measures or lose their jobs (Bearson et al., 2019; Kellogg et al., 2020).

Indirect risks of AI: automation and fissurisation

Labour economists maintain that smart machines often complement human labour rather than totally replace it (Agrawal et al., 2019; Brynjolfsson and McAfee, 2017; EPSC, 2018; Evans-Greenwood et al., 2017; Servoz, 2019). According to this argument, when AI is utilised to augment human labour, the collaborative outcome becomes particularly valuable for humans (Agrawal et al., 2019; Brynjolfsson and McAfee, 2017; Evans-Greenwood et al., 2017) because workers can specialise in more complicated and/or pleasant tasks (OECD, 2019; Servoz, 2019), leaving repetitive, mundane and dangerous tasks to machines.

Many researchers also share the view, however, that AI might replace a much larger number of workers than previous digital technologies (Blit et al., 2018; Makridakis, 2017; Manyika et al., 2017; Moore, 2019b). This is underpinned by the argument that pre-AI digital automation is routine-biased, replacing mental and physical tasks such as bookkeeping, clerical work and assembly line production. These technologies have threatened mid-skilled/income workers with a higher risk of unemployment than low- and high-skilled/income workers, which paved the way for employment polarisation in the labour markets of advanced economies beginning in the mid-1980s. Employment polarisation pushed the mid-skilled/income workforce towards either low- or high-skilled/income jobs (Arntz et al., 2016; Autor et al., 2003; Nedelkoska and Quintini, 2018), with its upskilling effects more pronounced than its downskilling ones across the advanced market economies (Hassel et al., 2022).

On the other hand, AI tools aim to imitate human skills such as matching, prediction, perception and cognition, which only recently were widely thought to be non-automatable (Manyika et al., 2017). In this way, AI-powered applications become capable of displacing labour in a much wider set of jobs and tasks and across most of the skill and wage spectrum (Blit et al., 2018; Makridakis, 2017; Manyika et al., 2017; Moore, 2019b).

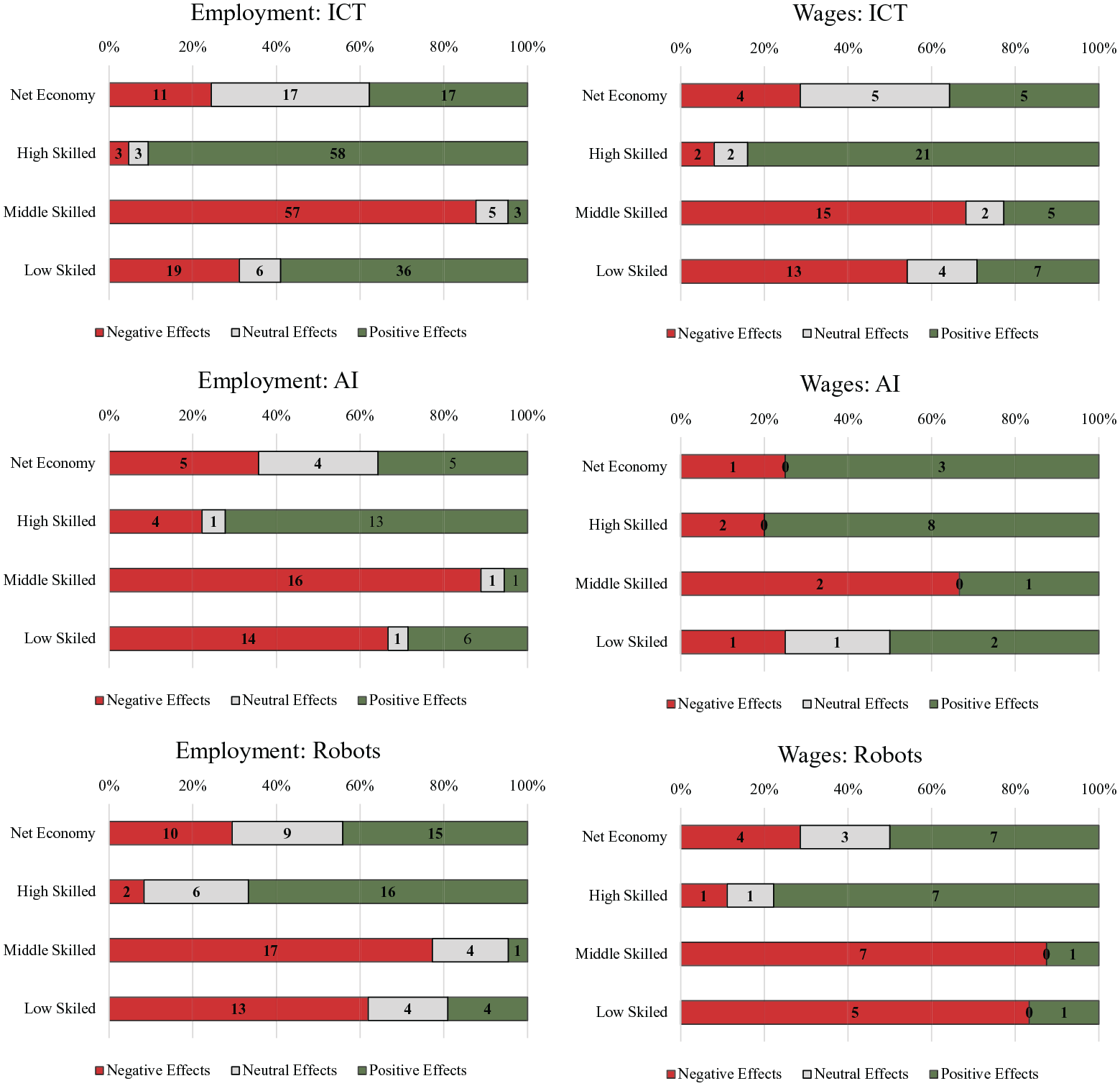

A recent scoping study by Hassel et al. (2022) reveals mixed results as to whether workers in lower-, mid- or high-skilled/income jobs will be the ones most affected by AI-led automation. As presented in Figure 1, while 89 per cent of the available research indicates that AI is more likely to replace human labour in the mid-skilled/income category, this number decreases to 67 per cent in the low-skilled/income group. Three-quarters of the literature, however, anticipates that AI is likely to create new jobs in the high-skilled/income category. There is less consensus on AI’s impacts on the overall economy, with 36 per cent of studies foreseeing negative, another 36 per cent positive and 29 per cent neutral effects. This lack of consensus might indicate that the numbers of jobs replaced and created by AI utilisation are likely to counterbalance each other at the general level of the economy. Regardless of disagreement on the overall employment effects of AI-driven automation, Figure 1 illustrates that its labour-replacing effects appear to be strong for middle- and low-skilled workers, while its job-creating effects appear to favour high-skilled workers.

Figure 1. Research results on employment effects of AI.

In addition to the employment effects, some authors also point out AI’s capability to reinforce the broader trend toward workplace fissurisation. Fissurisation refers to a process (Weil, 2014) whereby firms focus entirely on their strategic activities by outsourcing others and engaging in complex employment relations involving subsidiaries and subcontractors (Hassel and Sieker, 2022; Rahman and Thelen, 2019). Observed since the 1980s, the fissurisation of work is aimed at boosting firms’ competitiveness. As Weil (2014) highlights, it is facilitated by advancements in information and communication technologies, which have greatly increased companies’ ability to monitor and communicate with their extensive network of contractors.

Two recent trends have accelerated workplace fissurisation. The first is the use of AI-enabled workplace tools and other advanced technologies to further outsource substantial proportions of work in the forms of subcontracting, use of temporary agencies and labour brokers, franchising, licensing, and third-party management (Weil, 2019; Weil and Goldman, 2016; Wood, 2021). In these business models, technology assumes a double role. First, it acts as ‘an organisational glue to ensure that the networks of organisations working under the lead company keep to standards and do not undermine core competencies’ (Weil, 2019: 159). Second, it is instrumental in shifting employer responsibilities from the lead company onto contractors, paving the way for deteriorating wages and working conditions for employees in general (Weil, 2019).

The second trend accelerating workplace fissurisation is related to the emergence of platform companies. Platform companies utilise AI extensively, particularly algorithmic management tools (Eurofound, 2019; ILO, 2021). This helps them to closely manage and monitor their complex network of operations and efficiently outsource their business functions (ILO, 2021; Rahman and Thelen, 2019). Additionally, platforms have sole discretion to determine how their algorithms function and to alter the rules and regulations governing their business (Kenney et al., 2019; Mateescu and Nguyen, 2019). This power, in turn, endows platform owners with the capability to circumvent or avoid, to a large extent, state regulation and taxation imposed on other businesses, including those related to employment.

Many platforms classify workers who complete tasks for their business operations as self-employed. Self-employed people in the platform economy are often deprived of decent working conditions because they do not enjoy the rights granted to workers by national labour legislation, including but not limited to minimum wages, pensions, national insurance contributions, parental leave, collective interest representation and bargaining (Moore, 2019a, 2019b; Servoz, 2019).

Given the importance of technology for the platform business, it is no surprise that platform companies are among the pioneers of AI development. A study by Bryson and Malikova (2021) investigated the distribution of AI patents and showed that most are held by US and Chinese platform companies, with Google, Microsoft, Alibaba and Tencent in the lead. These findings are confirmed by Dernis et al. (2021), whose research shows that most AI patents are in information and communication technology (ICT)-related technologies, such as computer technology, digital communication, electrical machinery and transport.

How direct and indirect risks interact: transport and logistics

Transport and logistics is a core sector that widely utilises AI technologies because it intersects with new business models in retail, particularly that of platforms, the automation potential of low-skilled work and the availability of big data (Dilmegani, 2020; The European Business Review, 2021). According to McKinsey (2021), effective AI utilisation has enabled businesses to improve logistics costs by 15 per cent, inventory levels by 35 per cent, and service levels by 65 per cent.

In the transport and logistics sector, a wide variety of tasks have been automated, including demand forecasting, damage detection, dynamic pricing, route optimisation, document processing, sales and marketing analytics (Dilmegani, 2020), passenger handling, and claims management (PwC, 2021).

There is also evidence of algorithmic surveillance and discrimination, as well as increasing information asymmetries in the transport and logistics sector. For instance, the employee tracking system that was widely used in Amazon warehouses until at least mid-2019 measured employees’ performance at work, and automatically warned or even fired employees in the case of underperformance without any involvement by human decision-makers (Lecher, 2019). Another example comes from the ride-hailing company Uber. As reported by the company itself, Uber monitors its drivers’ driving behaviour through their smartphones, with harsh braking and acceleration habits used as indicators of unsafe practices (Beinstein and Sumers, 2016). Uber also uses a range of psychological incentives, such as video game techniques, graphics and rewards of little monetary value to influence its drivers concerning the time, duration and location of work (Scheiber, 2017). To make sure Uber drivers do not log off, the ride-hailing company notifies them about how close they are to the earnings goal they previously set in the system or provides them with another ride opportunity even before they complete the current one (Scheiber, 2017).

When it comes to algorithmic discrimination, it is reported that, in the case of employee discontent, algorithmic management systems enable logistics and transport companies to distribute the workload in a way that penalises troublemakers and rewards those who assent to managerial decisions (Rosenblat and Stark, 2016; Tassinari and Maccarrone, 2020). Regarding the exacerbation of information asymmetries, deleting the accounts of logistics and transport workers or terminating their contracts seems to have become the order of the day, due to the opaque nature of the algorithmic decision-making tools (Mateescu and Nguyen, 2019).

Workplace fissurisation facilitated by AI-enabled management systems, on the other hand, has become an essential component of a broader restructuring process in the transport and logistics sector. This process was driven by Amazon’s entry into the logistics market in some OECD countries and its consequent challenging of the incumbent firms in the sector. Most of these established businesses previously operated under public sector rules, were partially or entirely controlled or owned by nationalised corporations, and were heavily unionised. Thus, they traditionally paid higher wages and offered secure employment. The privatisation of these companies and establishment of new private logistics hubs and centres, as well as the growing deployment of AI-facilitated last-mile delivery models and platform work, have accelerated the competition in the sector. A comparative study by Hassel and Sieker (2022) found a close association between Amazon’s expansion in the online retail sector and increasing workplace fissurisation in the logistics sector, particularly in last-mile delivery in Germany, the United Kingdom and the United States. While these results are still preliminary, it is very likely that AI-driven expansion of tech companies into the transport and logistics sector will further widen workplace fissurisation, especially for businesses such as last-mile delivery companies, which are dependent on a low-skilled, non-routine workforce.

AI at work: the German technology paradox

With more than 80 per cent of the German workforce using information and communication technologies, digitalisation affects almost every worker in Germany (BMWI et al., 2017; DGB, 2016). Nevertheless, Germany has become a technological paradox among leading industrialised countries, for there is a strong contrast between the high levels of robot-led automation in manufacturing industry and the expansion of platform companies in transport and logistics, on the one hand, and a rather slow and reluctant adoption of the latest digital technologies in the country, on the other.

This disparity becomes particularly evident in scholarly debates and in Germany’s rankings in international indexes. For instance, the country ranks fourth globally when it comes to density of industrial robots, after Singapore, South Korea and Japan, with 346 robots per 10,000 employees (IFR, 2021). Consequently, in the period 1994–2014 each robot replaced two workers in the German manufacturing sector, adding up to approximately 275,000 jobs (Dauth et al., 2017). However, Germany has failed to take the lead in some of the prominent international rating systems designed to measure countries’ digital capabilities. The country ranks 11th out of 27 EU countries in the Digital Economy and Society Index (European Commission, 2021c), which compares the performance of Member States in digital public services, digital skills, digital connectivity, and online activity. Similarly, it ranked 18th out of 64 in the Institute for Management Development’s World Digital Competitiveness Ranking for 2021. This is an international comparison measuring countries’ capacity and readiness to adopt and explore digital technologies as a key driver for economic transformation in business, government and wider society (IMD, 2021).

Germany’s digital performance becomes even more concerning with regard to current AI utilisation rates. According to a recent survey by Bitkom (2021), only 8 per cent of companies with 20 or more employees use AI technologies and these are predominantly restricted to less-sophisticated tools, such as targeted advertising (71 per cent), automated answering of customer queries (63 per cent), customer behaviour analytics in sales (53 per cent), and transport route planning in logistics (35 per cent). Only 21 per cent of the companies utilising AI make use of this technology in recruitment processes, for example in the preselection of applicants. ‘At the moment, companies tend to use artificial intelligence for simple tasks and where it can quickly bring them concrete benefits’, says Bitkom President Achim Berg, and continues, ‘in the public debate, the use of AI in companies is often about personnel issues, for example about discriminatory application procedures. In most companies, the use of AI to select applicants is not an issue at all’ (Bitkom, 2020).

Despite German companies’ sluggish efforts towards further digitalisation, the public discourse is dominated by studies that predict a future for German labour markets in which AI and other state-of-the-art digital technologies are in extensive use (Kriechel et al., 2016; Zika et al., 2018). Consistent with this forecast, findings from recent research show that during 2014–2018, the need for digital skills in the German labour market increased across all occupation groups (O’Kane et al., 2020).

A good way to explain Germany’s technological paradox lies in the way companies utilise AI and other advanced digital technologies in their businesses. As the evidence suggests, AI solutions for the algorithmic management of the workforce have not yet been adopted extensively by German companies. In contrast, robots in the manufacturing sector and AI-enabled tools in the transport and logistics sector have already become an integral part of companies’ efforts towards further workplace automation and, in some cases, fissurisation. Thus, while cases of AI-induced discrimination, surveillance and information asymmetries are not yet prevalent in Germany, the work-related consequences of AI-led/digital automation in manufacturing and AI-led/digital automation and fissurisation in the transport and logistics sector are already observable and in need of appropriate attention from policy-makers and trade unions.

Governing the work-related risks of AI: implications for the German government and trade unions

The distinction between the direct and indirect risks related to AI at work is helpful in order to avoid one-size-fits-all policy responses and proposals that fail to consider context-specific consequences posed by these risks.

The direct risks of discrimination, surveillance and information asymmetries are clearly and easily observable in the workplaces and sectors in which AI-empowered HR decision support tools are used. Once these applications become a key part of HR practices, AI-induced direct risks become relevant for employees regardless of occupation, sector of employment and skill level. Despite workers’ increasing exposure to these risks (Özkiziltan and Hassel, 2021), their impact on the overall workforce remains almost negligible in economies with low deployment levels of AI workplace tools. As only a fraction of companies have adopted AI solutions for algorithmic management, exposure is low for the German workforce. AI-induced discrimination, surveillance and information asymmetries are likely to affect a growing number of workers only if AI penetration increases substantially.

The direct risks inflicted by AI workplace tools can be addressed by proactive regulation. The recent EU Artificial Intelligence Act and the Proposal for a Directive on improving working conditions in platform work are two such initiatives. The Artificial Intelligence Act imposes bans on certain applications and introduces ex-ante regulations for high-risk applications. However, as pointed out by Haataja and Bryson (2022), the Artificial Intelligence Act proposal in its current version ignores stakeholder participation in managing the diversity, non-discrimination and fairness of AI systems, which would facilitate further development of high-risk systems. This shortcoming could be remedied by including in the Artificial Intelligence Act provisions that prevent AI-driven discrimination at work or that regulate workplace AI tools transparently.

The proposed Directive on platform work, on the other hand, aims to accurately define platform workers’ employment status. The proposal seeks to establish a list of criteria to determine platforms’ employer status and puts the burden of proof on platforms to justify the denial of employment relationships. Additionally, it enhances transparency in algorithmic management methods through human monitoring of algorithmic decisions related to working conditions and by giving workers and their representatives the right to dispute them. The draft proposal is a favourable point of departure in dealing with the power asymmetries between platform workers and managers, and the consequent lack of decent work opportunities, as well as the rising incidence of algorithmic discrimination and surveillance in the platform economy.

As stated by ETUC (2022), however, the draft proposal suffers from shortcomings related to collective labour rights. The law needs to communicate clearly that platform workers are fully entitled to establish and join trade unions and to bargain collectively. In this respect, the law should replace the term ‘workers’ representatives’ with ‘trade unions’, as the former term might significantly undermine the independence of workers’ movements within the platform ecosystem.

In addition to these proposals, there is a series of EU- and national-level regulations in place that serve as a point of reference for German policy-makers and trade unions in dealing with the direct risks posed by AI. For instance, in Germany works councils are equipped to deal with some of the direct risks of AI. Works councils are responsible for making sure that employers comply with existing regulations, including those on discrimination, data protection and health and safety. If employers develop criteria for assessing job applications via AI, works councils must be consulted. Any AI implications regarding workers’ performance and behaviour have to be discussed with works councils. The new law on modernising works councils that was adopted in 2021 strengthens their rights by giving them the right to consult experts on AI if the employer plans to use AI at work. The law does not define AI, however, and therefore gives a rather broad mandate to works councils.

The General Data Protection Regulation (GDPR) requires companies to obtain employees’ consent for the use of workplace surveillance tools and the data obtained from them. In line with this, Germany has strict limits on employers’ prerogatives on employee monitoring through the German Data Protection Act, the Works Constitution Act and the German Telemedia Act. Under this body of rules, companies need to obtain the works council’s approval to launch and use workplace surveillance tools (Eurofound, 2020). While this legal framework has been instrumental in preventing and redressing AI-induced inequality and discrimination, its protection could be strengthened in those areas, particularly with regard to the platform economy, where workers encounter serious difficulties with interest and voice representation.

The EU gender equality and non-discrimination legal framework, despite offering important safeguards, is diluted by ‘inconsistencies, ambiguities, and shortcomings [. . .] limit[ing] its capacity to deal with algorithmic discrimination’ (Gerards and Xenidis, 2021: 75). Similarly, existing non-discrimination law in Germany is not fully capable of eliminating the risk of discrimination produced by AI-enhanced tools (Fröhlich and Spiecker genannt Döhmann, 2018). Addressing and mitigating the discrimination-related risks and challenges arising from the use of algorithms in Germany’s labour markets requires dialogue and collaboration among policy-makers, employers and worker representatives, and computer and data scientists. This will help to build an ‘ecosystem of trust’ providing ‘citizens the confidence to take up AI applications and give companies and public organisations the legal certainty to innovate using AI’ (European Commission, 2020: 3).

The indirect risks of AI posed by ongoing automation and fissurisation of work are difficult to isolate, as these are firmly embedded in the wider context of socio-economic and political dynamics. As the literature on AI-driven automation indicates, its labour-replacing effects appear to strongly impact middle- and low-skilled workers. Further workplace fissurisation through platforms, on the other hand, is likely to exacerbate precarity at work and diminish decent work opportunities. However, whether these risks materialise hinges on countries’ governance practices and societies’ political and socio-economic priorities.

The indirect risks associated with AI-driven automation are partially addressed by the European Parliament (2019) in its report on a comprehensive European industrial policy on artificial intelligence and robotics, with an emphasis on the importance of revising and refashioning training and education programmes, labour market policies, social security schemes and taxation. These and many other measures have long been under discussion because AI’s areas of application are highly diverse and require consideration of its impacts beyond the traditional realm of work and employment relations.

The repercussions of AI’s work-related risks can be comprehensively understood only when AI utilisation is considered in the broader context of the expansion of platform firms and the transformation of entire sectors. As our brief illustration using the transport and logistics sector demonstrates, a combination of big data, the use of algorithmic management tools and the rising market shares of platform firms such as Amazon has led to a visible transformation of the sector and a further expansion of precarious forms of work. The use of AI can be found in many areas of this process: in the business models of online retail via platforms, in the organisation of last-mile deliveries and entire logistics chains, as well as in the use of surveillance tools at work, including automated HR processes. We argue that a better understanding of the transformative powers of AI for specific sectors can help us to identify the best policy tools.

For trade unions and the government, we propose investment in competence centres for research and regulation on technology-driven industrial restructuring. These centres should particularly investigate the role of AI regarding companies’ outsourcing capacity and corporate restructuring. Building on these analyses, it is essential to develop new proposals for the regulation of subcontracting, employers’ liability in the supply chain (the German government has already addressed this issue for last-mile deliveries in logistics) and the regulation of self-employment in transport and logistics. The analyses could also inform future-oriented collective bargaining of the kind implemented in future-oriented agreements (Zukunftstarifverträge) in the metal and engineering sector in 2021. Such agreements should aim to foster negotiations between works councils and management regarding future business strategies before a crisis arises (Krzywdzinski et al., 2023; Molina et al., 2023). Sector-specific policy expertise will strengthen and enhance AI-related universal policy measures, such as comprehensive social security including the self-employed, employment status, vocational training systems in fluid organisations, and the responsibility of employers, which are still under discussion in Germany and beyond.

Funding

The research for this article is funded by the German Ministry for Labour and Social Affairs in the context of the project ‘The Governance of Work in the Knowledge Economy’. It is based on expert interviews and findings from two scoping studies and a literature review on automation and platforms conducted in the scope of the project (Hassel et al., 2022; Lessenska et al., 2021; Özkiziltan and Hassel, 2021).