Abstract

The increasing use of AI technologies in the workplace raises significant concerns about their implications for workers’ rights and the future of work. The EU’s Artificial Intelligence Act aims to ensure that innovation is not at the expense of fundamental rights. While the Act seeks to promote human-centric and trustworthy AI applications, this article highlights several regulatory gaps that are likely to foster two key trends shaping the future of work in Europe: the increasing autonomy of AI tech companies over the design of workplace AI and the reinforcement of power imbalances in the workplace. These trends leave EU workplaces vulnerable to biased and intrusive AI, thereby hindering the fair integration of AI into the work of the future. This article critiques the Act’s shortcomings in regulating AI at work and advocates a dedicated EU directive to mitigate adverse impacts of AI in the workplace and reinforce human-centric AI principles for societal benefit.

Introduction

The European Union’s Artificial Intelligence Act (EU, 2024) defines an ‘artificial intelligence’ (AI) system as:

a machine-based system that is designed to operate with varying levels of autonomy and that may exhibit adaptiveness after deployment, and that, for explicit or implicit objectives, infers, from the input it receives, how to generate outputs such as predictions, content, recommendations, or decisions that can influence physical or virtual environments.

This comprehensive definition underscores AI systems’ versatility and transformative potential. They have rapidly evolved in recent years and have found applications across diverse domains. In particular, ‘workplace artificial intelligence technologies’ (WAITs) exemplify this evolution, performing an increasing array of both routine and non-routine mental and physical tasks. This offers companies the prospect of combining the unique strengths of humans and machines to complete tasks more efficiently, accurately and rapidly (Özkiziltan and Hassel, 2021). Although the number of organisations deploying WAITs remains contested, a clear trend is evident: European companies are increasingly embracing AI technologies (EU-OSHA, 2022a). Furthermore, some scholars predict that this trend will continue in the coming years (Arntz et al., 2016; Brynjolfsson and McAfee, 2016; Frey and Osborne, 2017; Nedelkoska and Quintini, 2018).

The growth in WAITs deployment has instigated vibrant scholarly discussions regarding their future impacts on work and employment relations. These debates focus primarily on the potential of large-scale replacement of human labour with WAITs (Arntz et al., 2016; Frey and Osborne, 2017; Nedelkoska and Quintini, 2018) and the changing nature of work (Muro et al., 2019; Servoz, 2019). A key conclusion from these discussions is that WAITs are likely to exacerbate existing power asymmetries (Kellogg et al., 2020; Özkiziltan and Hassel, 2021) and aggravate unfair and exploitative practices at work (De Stefano, 2019; Mateescu and Nguyen, 2019; Özkiziltan and Hassel, 2021).

Mounting concerns about the socio-economic implications of the increasing use of WAITs have contributed to a heightened awareness at the EU level (EESC, 2017), leading to political initiatives, including the European AI Strategy (European Commission, 2018), Ethics Guidelines for Trustworthy AI (AI HLEG, 2019), the 2030 Digital Compass (European Commission, 2021), the International Outreach for Human-Centric Artificial Intelligence Initiative (European Commission, 2022), and the European Declaration on Digital Rights and Principles (EU, 2023). Though diverse in scope and focus, these initiatives aim to create human-centric AI that serves people, protects fundamental rights and empowers businesses. This EU-level human-centric approach to AI also extends to the world of work. It seeks to restrict the growth of managerial discretion enabled by AI-led decision-making tools (EU, 2023), as well as to prepare labour markets for an AI-led future world of work (EU, 2023; European Commission, 2021).

The human-centric approach to AI has also informed the policy-making process of the EU’s Artificial Intelligence Act (the ‘Act’). The Act entered into force in August 2024 and it is set to be implemented gradually over time (Future of Life Institute, 2024). The intent behind this pioneering legislation is to strive for a delicate balance: fostering technological innovation and competitiveness within the EU, while safeguarding its citizens’ safety and fundamental rights (Council of the EU, 2024; Madinier, 2021). The Act’s core principle, declared in Recital 6, unequivocally emphasises its aspiration to uphold human-centric AI systems: ‘As a prerequisite, AI should be a human centric technology. It should serve as a tool for people, with the ultimate aim of increasing human well-being.’ This guiding principle translates into the Act’s purpose: ‘to promote the uptake of human centric and trustworthy artificial intelligence (AI) while ensuring a high level of protection of health, safety, fundamental rights as enshrined in the Charter of Fundamental Rights of the European Union (the ‘Charter’)’ (Recital 1).

The Act is laudable for its commitment to advancing the adoption of human-centric and trustworthy AI to improve human well-being and its adherence to the principles enshrined in the Charter. However, this article contends that several shortcomings significantly undermine the Act’s effectiveness in protecting workers’ rights and freedoms, as set out in the Charter. These include the following. The Act, legally based on Article 114 of the Treaty on the Functioning of the European Union (TFEU), confines the protection of fundamental rights to a provision granting the EU general authority for internal market harmonisation. It relies on providers of high-risk AI systems to self-assess and self-certify their products’ compliance. It exempts private companies deploying high-risk WAITs from conducting Fundamental Rights Impact Assessments and from the mandatory registration requirement for these systems. It undermines the right of workers to an explanation for decisions made by high-risk AI systems that impact their health, safety or fundamental rights. It also leaves general-purpose AI under-regulated, carves out exemptions for banned emotion-recognition systems in workplaces, and neglects the broader socio-economic impacts of WAITs by focusing exclusively on algorithmic management tools.

This article argues that these regulatory gaps will probably give rise to two key trends shaping the future of work in Europe: the increasing autonomy of AI tech companies with regard to the design of WAITs and the solidification of power imbalances in the workplace. These trends could render EU workplaces vulnerable to biased and intrusive AI, thereby hindering AI’s fair and democratic integration into the work of the future. Consequently, regarding issues related to the world of work, the Act fails to fulfil its promise of promoting the uptake of human-centric and trustworthy AI to enhance human well-being. To prevent such an unfavourable outcome, a dedicated EU directive is needed, as European trade unions have rightly advocated, to address the unique challenges posed by WAITs and rectify the shortcomings of the EU AI Act (ETUC, 2023; IndustriAll, 2024). Only through such targeted legislation can workers be adequately protected from the potential harms of WAITs, ensuring that AI technology is human-centric and trustworthy, and benefits EU society as a whole.

The article is structured as follows. It begins with a brief overview of the deployment of WAITs, followed by an analysis of the key factors contributing to WAITs outcomes that are detrimental to workers’ interests, and continues with an examination of the possible impacts of WAITs deployment on workers’ rights as guaranteed by the Charter. Next, it scrutinises the EU AI Act’s shortcomings related to the world of work. The article concludes with a discussion of these shortcomings, emphasising their potential impact on the future world of work and advocating for an EU directive that mitigates AI’s adverse impacts and strengthens human-centric and trustworthy AI principles in the workplace.

Deployment of AI in the workplace

At their current level of development, WAITs touch almost every facet of the world of work, from task automation to workplace safety, from hiring, firing and promotion decisions to productivity monitoring (Coworker.org, 2021; De Stefano and Wouters, 2022; Negrón, 2021b; Özkiziltan and Hassel, 2021). Despite ongoing debates on whether AI will make human labour obsolete (Özkiziltan and Hassel, 2021), the available research reveals that WAITs adoption so far has not replaced workers in such a way as to increase technology-induced job losses (Acemoglu et al., 2020; Felten et al., 2019). However, researchers predict an increase in the risk of labour displacement as a result of AI-led automation over the coming two or three decades, affecting workers across all skill levels (Muro et al., 2019; OECD, 2019; Webb, 2020).

Notwithstanding the possibility of WAITs severely impacting the types of jobs available in the future, many experts predict a future of work in which WAITs design will focus on new possibilities of human-machine collaboration in such a way as to make the best of the available capabilities (Brynjolfsson et al., 2018; Eurofound, 2024; Manyika, 2018). Accordingly, some WAITs are likely to deskill workers, particularly in cases in which machines are allocated to carry out intricate tasks related to information processing and decision-making, while workers are demoted to routine tasks and/or assigned to follow the instructions of the machines (EPRS, 2021; Jarrahi, 2019; Moore, 2019). Some WAITs are also deployed to complement and augment human labour, particularly when they are designed to relieve humans from performing routine and/or hazardous tasks. This improves human capabilities by allowing workers to concentrate on more complex, satisfying or creative tasks (OECD, 2019; Servoz, 2019).

AI-empowered solutions for workforce management, often referred to as ‘algorithmic management tools’, also belong to WAITs. These applications are designed to completely or partially take over managers’ decision-making roles in issues related to workforce management. Algorithmic management tools are reported to yield opposing outcomes on work and employment relationships. Regarding their adoption to benefit both workers and employers, for instance, some construction and mining companies use algorithmic management tools for real-time worker safety, while in the hotel business, panic buttons are used to assist workers working in isolation to call for help in emergency and/or dangerous situations (Bernhardt et al., 2021). When it comes to their deployment in ways tailored to favour the interests of employers, the scholarly literature is replete with examples including, but not limited to, employers’ pervasive surveillance of workers’ activities, AI-led biased hiring and firing decisions, and unrealistic productivity goals (De Stefano and Taes, 2022; EU-OSHA, 2022b; Özkiziltan and Hassel, 2021). Some key factors contributing to the negative impact of WAITs deployment on workers’ interests are discussed below.

AI as a threat to workers’ interests

Recent years have seen a growing number of reports and announcements concerning the development of WAITs with advanced capabilities, purportedly approaching or even surpassing human abilities (Negrón, 2021b; Özkiziltan and Hassel, 2021). As a lucrative product line for AI developers, however, WAITs often prioritise corporate objectives, such as boosting productivity and cutting costs (Negrón, 2021b; Özkiziltan and Hassel, 2021; Rani et al., 2024). Scholarly discussions commonly hold that many WAITs produce outcomes detrimental to workers’ interests (De Stefano and Taes, 2022; Özkiziltan and Hassel, 2021; Servoz, 2019). An examination of the relevant literature identifies three underlying factors of particular relevance to this tendency.

The first factor is the data fed into AI systems, which are rarely considered neutral or flawless (EPSC, 2018; Moore, 2019; Williams et al., 2018). Such data are often described as ‘easy to manipulate, may be biased, may reflect cultural, gender and other prejudices and preferences and may contain errors’ (EESC, 2017: 6). It has been argued that algorithms that rely on biased data are highly prone to producing outcomes that reproduce the patterns and preferences ingrained in that data, which could potentially advance the interests of employers (De Stefano, 2019; EPSC, 2018; Özkiziltan and Hassel, 2021). As a result, algorithmic decisions could be used to justify discriminatory practices, rationalise unequal treatment or perpetuate exploitative workplace dynamics, ultimately undermining workers’ rights and interests (Özkiziltan and Hassel, 2021; Williams et al., 2018).

The second factor concerns AI tech workers, namely those involved in creating, developing and maintaining AI-enabled technology products and services. The number of AI tech workers is reported to be very small, reflecting the worldwide scarcity of AI talent (McKinsey, 2022; Yuan et al., 2020). Most of these workers lack diversity in terms of race, geography, social class and gender (De Stefano, 2019; EPSC, 2018; Linkedin, 2019; Moss and Metcalf, 2020). While this lack of diversity is a global issue within the AI talent pool, it is particularly concerning for AI products developed by US-based tech companies. Observers have noted that in many US-based AI firms, AI tech workers enjoy a substantial degree of independence from management in the pursuit of their work (Cihon et al., 2021; Metcalf et al., 2019). Accordingly, AI tech workers, given their above-mentioned characteristics and privileges, are likely to devise AI systems, including those used in the world of work, that accord with their values and norms, which are claimed to revolve around concepts such as productivity maximisation, competence development, success sustenance and cultural compatibility (Crawford et al., 2019; De Stefano, 2019). The argument continues that these values may conflict with the principles upheld by individuals from various social, economic, ethnic, or cultural backgrounds when they encounter AI-driven technologies (EPSC, 2018; Linkedin, 2019; Servoz, 2019).

The third factor that renders WAITs outcomes detrimental to workers’ interests is the lack of robust ethical practices among AI companies. As highlighted by a Deloitte (2022) survey, this issue is a global concern as the primary focus of most companies developing and deploying emerging technologies tends to be on technical requirements and minimal compliance rather than on robust ethical considerations designed to maximise the benefits of these technologies while minimising potential harm. When considered in the context of WAITs, this limited focus on ethical issues becomes particularly concerning for US tech companies. As Negrón (2021a) observes, these companies are leading in WAITs development, suggesting that many WAITs deployed in the EU are likely to be of US origin. According to Metcalf et al. (2019), many US-based AI companies avoid potentially shameful public exposure of their products and practices by creating a façade of ethical effort instead of making the necessary structural changes to address the underlying ethical issues inherent in their products. This approach makes WAITs developed and marketed by US-based tech companies highly likely to perpetuate exploitative workplace practices that undermine workers’ rights and freedoms, as these systems are often designed to prioritise efficiency and profitability over fairness, well-being and trust in the workplace (Özkiziltan and Hassel, 2021). Next, we will discuss the impact of AI on workers’ rights and freedoms, as outlined by the Charter.

AI as a threat to workers’ rights in the European workplace

The fundamental rights and freedoms that protect workers are enshrined in the Charter, which guarantees, amongst other things, respect for private life (Article 7), the protection of personal data (Article 8), freedom of assembly and association (Article 12), non-discrimination (Article 21), equality between women and men (Article 23), workers’ rights to information and consultation (Article 27), the right of collective bargaining and action (Article 28), and fair and just working conditions (Article 31).

The deployment of WAITs, as documented in scholarly research, may pose significant challenges to affirming some of these fundamental rights. For example, the extensive use of WAITs in monitoring and surveillance threatens workers’ rights to privacy and the protection of personal data (Adams-Prassl, 2019; Ajunwa et al., 2017; Rani et al., 2024). Using WAITs trained on biased data and flawed algorithms in hiring, firing and promotion decisions may perpetuate discrimination and undermine the principles of non-discrimination and gender equality (De Stefano, 2019; Özkiziltan and Hassel, 2021). Algorithmic management tools may also infringe upon workers’ freedom of assembly and association by exerting control over their activities and limiting their ability to organise and act collectively (Kellogg et al., 2020; Servoz, 2019).

Moreover, deploying WAITs without adequate participation from workers or their representatives risks undermining the right of workers to information and consultation. To add to this, algorithmic management tools that set unreasonable work hours, distribute shift schedules contrary to workers’ needs and apply arbitrary performance criteria (Özkiziltan and Hassel, 2021; Rani et al., 2024) could violate the right of workers to fair and just working conditions. Additionally, as WAITs become more prevalent in labour markets, workers whose tasks and skills are highly susceptible to automation may encounter excessively long or short working hours, reduced wages, insecure temporary work contracts, and diminished bargaining power (Özkiziltan and Hassel, 2021). These conditions further infringe upon the right of workers to fair and just working conditions. Next, we examine how the EU AI Act governs WAITs deployment and development and its shortcomings.

The EU AI Act

The EU AI Act, which came into force in August 2024 with its requirements set to be implemented gradually over time (Future of Life Institute, 2024), marks a significant milestone as the world’s first comprehensive legal framework for AI governance (Council of the EU, 2024; Madinier, 2021). It aims to achieve a two-pronged effect: to regulate AI that poses a risk to fundamental rights, while bolstering Europe’s global competitiveness in AI development. The legislation adheres to a risk-based approach, whereby the AI systems with a higher risk of harm face stricter regulations (Council of the EU, 2024). The Act stipulates that providers of high-risk AI systems must ensure that their products comply fully with the relevant EU legislation (Article 8). The Act also requires that high-risk AI systems be designed and developed to allow for effective human oversight during deployment (Article 14). The AI Act identifies AI systems used in employment, workers’ management and access to self-employment as high-risk, requiring compliance with European standards within 24 months of the Act’s entry into force (Future of Life Institute, 2024).

The Act promotes human-centric and trustworthy AI, positioning it as a tool for people’s well-being. It fosters an EU-wide commitment to these principles by upholding the Charter’s protections for health, safety and fundamental rights. With regard to the world of work, the Act strongly emphasises its application to foster employment (Recital 2) and underscores its commitment to ensuring workers’ protection (Recital 9). Although the Act’s efforts to align AI development with EU values are commendable, a critical analysis suggests that several shortcomings significantly undermine its effectiveness in protecting workers’ rights and freedoms, as set out in the Charter.

First, despite championing the principles of human-centric and trustworthy AI, the Act’s primary goal is to establish a cohesive legal framework to facilitate AI integration into the internal market (Recital 1). This is because the Act is legally based on Article 114 of the TFEU (Recital 3). This legal foundation confines the protection of fundamental rights to a provision that grants the EU general authority for harmonising regulations related to the internal market (Almada and Radu, 2024; Ebers, 2024). This focus on market integration may incentivise prioritising economic considerations over the robust safeguarding of fundamental rights and freedoms of workers as outlined by the Charter, particularly concerning the principles of respect for private life (Article 7), freedom of assembly and association (Article 12), non-discrimination (Article 21), equality between women and men (Article 23), the right of workers to information and consultation within the undertaking (Article 27), the right to collective bargaining and action (Article 28), and fair and just working conditions (Article 31).

Second, the EU AI Act allows providers of high-risk AI systems to self-assess and self-certify the compliance of their products with the Act (Article 43 and Annex VI). This approach prioritises industry ease of compliance, sidestepping the involvement of independent external bodies, workers and trade unions. This is particularly risky for high-risk WAITs, in relation to which providers might prioritise speed to market over thorough compliance checks during their self-assessments. Such self-certification risks allowing harmful technologies into workplaces, potentially violating workers’ rights and freedoms protected by the Charter, including respect for private life (Article 7), freedom of assembly and association (Article 12), non-discrimination (Article 21), equality between women and men (Article 23), the right of collective bargaining and action (Article 28), and fair and just working conditions (Article 31).

The third shortcoming is the EU AI Act’s exemption for private companies deploying high-risk WAITs from conducting fundamental rights impact assessments (FRIAs). FRIAs play a crucial role in identifying potential risks of harm to fundamental rights. The aim of such assessments – where appropriate, with the involvement of relevant stakeholders – is to pinpoint particular ways in which AI applications could negatively impact fundamental rights within the context of their use. Fundamental rights impact assessments go beyond simply identifying risks; they also involve determining mitigation strategies specific to the use context (Article 27 and Recital 96). The absence of mandatory FRIAs for private employers raises the risk that harm may be inflicted by deploying high-risk WAITs. Indeed, for one thing, it places the responsibility for confirming that AI systems do not pose potential harm to workers’ fundamental rights solely on the providers’ self-assessment. For another, this exemption effectively bypasses the involvement of workers and trade unions in assessing WAITs before and during their use. The Act’s exemption of private deployers of high-risk AI from conducting fundamental rights impact assessments thus heightens the risk of overlooking or underestimating the potential adverse impacts of WAITs on fundamental rights guaranteed by the Charter, particularly concerning the principles of respect for private life (Article 7), freedom of assembly and association (Article 12), non-discrimination (Article 21), equality between women and men (Article 23), the right of workers to information and consultation within the undertaking (Article 27), the right of collective bargaining and action (Article 28), and fair and just working conditions (Article 31).

The fourth shortcoming is the lack of mandatory registration requirements for high-risk AI systems deployed by private companies (Article 49). Indeed, while private sector deployers are exempt from this requirement, providers of high-risk AI systems and public authorities using these systems must register them (Annex VIII). This omission makes it more difficult for workers independently to identify which WAITs are being used at their workplace, weakening their rights to information and consultation within the undertaking, as outlined in the Charter (Article 27).

Closely related to this, the fifth shortcoming concerns the right of workers to an explanation for decisions made by high-risk AI systems impacting their health, safety or fundamental rights. Even though in principle this right is enshrined in the Act (Article 86), it fails to provide meaningful safeguards in practice. This is because this requirement does not include consultation with workers (IndustriAll, 2024). Neither does it guarantee a detailed description of how the AI system makes decisions (Hacker, 2024) before the harm has occurred. This means that the Act entitles workers to exercise this right only when a decision produces legal effects or similarly significant impacts that they perceive as adversely affecting their health, safety, or fundamental rights. Consequently, workers would not be able to anticipate or understand what might lead to a harmful decision until after the harm has occurred. Additionally, deployers may only know as much as the AI providers disclose, and research suggests that even the developers may not fully understand complex systems (Özkiziltan and Hassel, 2021). This shortcoming impedes workers’ ability to effectively challenge biased and unfair AI-driven decisions in the workplace, infringing upon workers’ rights to information and consultation within the undertaking, as outlined in the Charter (Article 27).

Sixth, the under-regulation of general-purpose AI (GPAI) is a significant concern. According to the Act, the providers of foundational general-purpose AI models play a critical role in the AI value chain, as their models often serve as the building blocks for downstream applications. Consequently, it is crucial that downstream users possess a comprehensive knowledge of these models to ensure effective integration and adherence to regulatory requirements (Recital 101). Despite this importance, the Act focuses primarily on advanced GPAI models that present systemic risks (Articles 51 and 55). It mandates that their providers evaluate the products using standard protocols, identify and mitigate systemic risks, and report serious incidents to the AI Office and relevant national authorities. In contrast, providers of GPAI models classified as not posing systemic risks are subject only to specific transparency standards. They must maintain detailed records of their product development and evaluation processes and share this information with businesses interested in using their GPAI models while safeguarding their intellectual property (Article 53).

Interpreted in the context of WAITs, this compliance framework implies that developers relying on GPAI models that are not considered to carry systemic risks may not receive sufficient information from the providers of these models about the potential downstream impacts on the systems they design. While the Act provides a framework for assessing the compliance of AI products, Kak et al. (2023: 2, brackets added) highlight a concerning aspect: ‘GPAI models carry inherent risks . . . [that] can be carried over to a wide range of downstream actors and applications, [and] they cannot be effectively mitigated at the application layer.’ This situation is particularly concerning for the design of WAITs, which use GPAI models as their foundation, given that their potential for bias and discrimination might be amplified when these powerful models are embedded within these tools. Indeed, as Kak et al. (2023: 4) aptly put it: ‘the fact that such GPAI models can be fine-tuned for specific uses and tasks only heightens the risk that the results would be unfair, inaccurate, or harmful in unanticipated ways’. This leaves the door open for violations of workers’ rights within the EU, particularly concerning the principles of non-discrimination (Article 21) and equality between women and men (Article 23) enshrined in the Charter.

Seventh, the Act creates a grey area by allowing an exemption for safety reasons for emotion-recognition systems otherwise banned in the workplace (Article 5(1)(f) and Recital 44). What is more, this loophole is exacerbated by the narrow definition of emotion-recognition systems, which excludes physical states, such as pain or fatigue, and readily apparent expressions, such as a frown or a smile, except when used to identify or infer emotions (Recital 18). This raises critical questions about how broadly the safety exemptions will be interpreted (Ponce del Castillo, 2023); whether these exemptions would create a loophole for potentially intrusive workplace technologies infringing on workers’ rights protected by the Charter, for instance, respect for private life (Article 7) (Gremsl and Hödl, 2022) and non-discrimination (Article 21); how emotions that might be expressed differently in different cultures will be considered; and whether alternative, less intrusive methods could achieve the same goal.

Last but not least, the EU AI Act’s focus on WAITs used to manage work and employment relations – in other words, algorithmic management tools – overlooks critical concerns regarding the challenges posed by an increasingly AI-driven world of work (Grossi et al., 2024). Indeed, as WAITs take hold of labour markets, workers whose tasks and skills are at high risk of automation are expected to face competition from machines or lower-skilled workers who can now handle previously higher-skilled tasks with AI assistance. This transformation threatens to deskill workers or replace them with machines, potentially undermining their fundamental right to fair and just working conditions as protected by the Charter (Article 31), leading to job losses, excessively long or short working hours, low wages, insecure temporary work contracts, and diminishing bargaining power (Özkiziltan and Hassel, 2021).

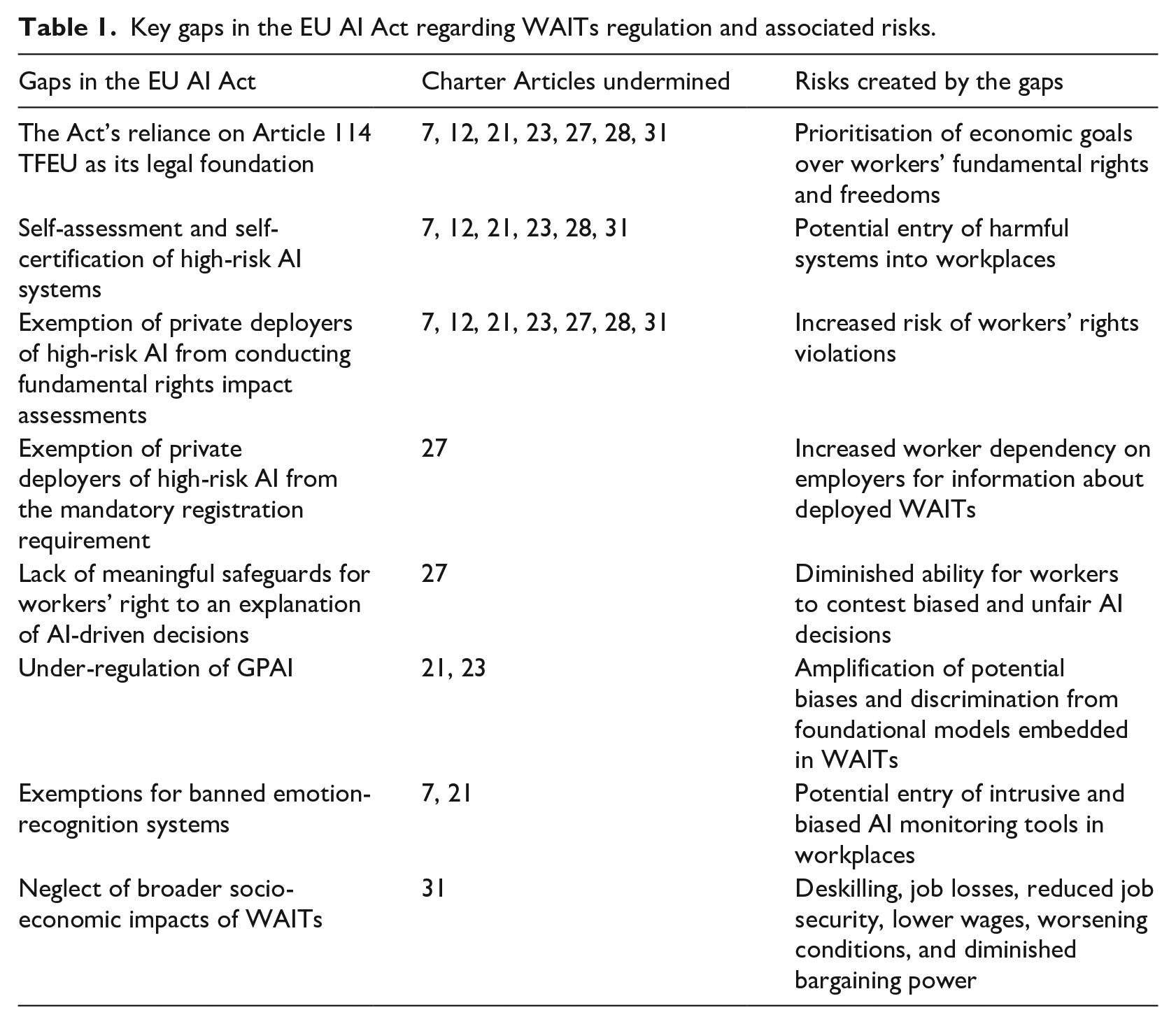

It is important to note that these shortcomings, an overview of which is provided in Table 1, are not exhaustive. Moreover, new issues could arise during or after the 24-month compliance period for high-risk AI systems. In any case, the limitations concerning the world of work raise significant concerns about the EU AI Act’s ability to safeguard workers’ fundamental rights and freedoms as enshrined in the Charter in the face of rapid AI-driven transformations in the European workplace. The following section discusses the implications of the Act’s shortcomings regarding the world of work and concludes.

Table 1. Key gaps in the EU AI Act regarding WAITs regulation and associated risks.

Discussion and conclusion

The EU AI Act represents a landmark effort to regulate AI within the EU, aiming to foster safety and competitiveness. As the first comprehensive legislative framework for AI, it introduces a risk-based approach to regulation, emphasising stricter oversight for high-risk AI systems, including those used in employment and workers’ management. The Act is commendable for its commitment to advancing the adoption of human-centric and trustworthy AI to improve human well-being and its adherence to the principles enshrined in the Charter. Nevertheless, its effectiveness in protecting workers’ rights and freedoms, as set out in the Charter, is significantly undermined by several critical shortcomings. These include the following. The Act, legally based on Article 114 TFEU, confines the protection of fundamental rights to a provision granting the EU general authority for internal market harmonisation. It relies on providers of high-risk AI systems to self-assess and self-certify the compliance of their products. It exempts private companies deploying high-risk WAITs from conducting FRIAs and from the mandatory registration requirement for these systems. It undermines the right of workers to an explanation for decisions made by high-risk AI systems impacting their health, safety or fundamental rights. It also leaves general-purpose AI under-regulated, carves out exemptions for banned emotion-recognition systems in workplaces, and neglects the broader socio-economic impacts of WAITs by focusing exclusively on algorithmic management tools.

The Act’s shortcomings are likely to give rise to two key trends that could significantly influence the future of work in Europe. The first is the increasing autonomy of AI tech companies in system design, as the Act permits them to self-assess and self-certify the compliance of their high-risk AI systems. Although this lack of external oversight is a valid concern for most companies developing AI-led products, it is particularly troubling for US WAITs developers. Indeed, research by Negrón (2021a) suggests that US tech companies are leading in WAITs development, implying that many WAITs deployed in the EU are likely to be of US origin. As already discussed, many AI companies based in the United States tend to fabricate a façade of ethical commitment instead of directly addressing the fundamental ethical issues in their products and practices (Metcalf et al., 2019). Moreover, research indicates that AI tech workers in US-based tech companies often enjoy significant independence from management in pursuing their work (Cihon et al., 2021; Metcalf et al., 2019), resulting in systems that reflect their values, often prioritising productivity, competence, success and cultural compatibility (Crawford et al., 2019; De Stefano, 2019). We thus contend that allowing self-assessment and self-certification for high-risk AI systems grants significant autonomy to AI tech companies, raising particular concerns about the US-based firms developing WAITs. This trend could diminish the Act’s effectiveness in fostering the development of trustworthy WAITs, undermining its commitment to human-centric AI that serves people and protects fundamental rights.

The Act’s shortcomings in regulating AI also contribute to a second significant trend: a further shift in the balance of power between employers and employees in favour of the former. This trend emerges from several critical loopholes in the legislation, which can be outlined as follows. The Act prioritises market integration over the safeguarding of fundamental rights. Indeed, while the Act champions the principles of human-centric and trustworthy AI, its foundation in Article 114 of the TFEU ‘shoehorns’ (Almada and Radu, 2024) the protection of fundamental rights into a general framework designed to prioritise the removal of barriers to trade in the internal market. In doing so, the Act risks undermining the protection of fundamental rights and freedoms in favour of market-driven objectives (Almada and Radu, 2024; Ebers, 2024), normalising the development and deployment of WAITs optimised for competitiveness at the expense of workers’ interests and well-being.

What is more, the Act allows deployers to bypass fundamental rights impact assessments, effectively excluding the involvement of workers and trade unions in assessing WAITs before and during their use. This means that the potential for AI systems to infringe on workers’ rights and freedoms may go unaddressed until after harm has occurred. The Act also exempts private companies deploying high-risk WAITs from registration requirements for these systems. This creates a lack of transparency, resulting in an asymmetry of information that could compel workers to rely on their employers to understand which high-risk WAITs are being deployed and how they are being utilised. Additionally, the Act fails to provide meaningful safeguards for the right of workers to an explanation for decisions made by high-risk AI systems that impact their health, safety or fundamental rights. In particular, the Act neither ensures mechanisms for consultation with workers nor requires a detailed description of how the AI system makes decisions. This shortcoming may impede workers’ ability to effectively challenge biased and unfair AI-driven decisions in the workplace.

Moreover, the Act creates significant regulatory grey areas by excluding general-purpose AI models classified as not posing systemic risks from robust oversight. This might allow such systems to be deployed without adequate scrutiny despite their potential downstream effects in terms of amplifying bias and discrimination in the workplace. Furthermore, the Act carves out exemptions for otherwise banned emotion-recognition systems in workplace settings. This risks creating a regulatory loophole that could lead to invasive and potentially biased uses of WAITs in monitoring workers’ behaviour and emotional states. Last but not least, the Act’s exclusive emphasis on algorithmic management tools neglects the broader socio-economic impacts of WAITs. This narrow regulatory focus risks exacerbating existing inequalities and discrimination by allowing WAITs to be deployed in ways that replace or deskill workers, potentially perpetuating unfair and precarious working conditions within the European workplace. Consequently, the Act’s shortcomings in regulating WAITs undermine transparency and accountability in the workplace, leaving EU workplaces vulnerable to opaque, biased and intrusive WAITs, thereby further empowering employers vis-à-vis workers.

In sum, AI tech companies’ acquisition of significant autonomy over the design of their systems and the solidification of power imbalances in the workplace represent two key trends in Europe’s future world of work fostered by the Act’s shortcomings. These trends may hinder the fair and democratic integration of WAITs into the work of the future, with the risk that the deployment of WAITs designed with profit-driven priorities takes precedence over human-centred AI in the European workplace. This scenario poses significant risks to the protection of workers’ rights and freedoms as enshrined in the Charter, particularly concerning the principles of respect for private life (Article 7), freedom of assembly and association (Article 12), non-discrimination (Article 21), equality between women and men (Article 23), the right of workers to information and consultation within the undertaking (Article 27), the right of collective bargaining and action (Article 28), and fair and just working conditions (Article 31).

In conclusion, concerning issues related to the world of work, the EU AI Act fails to fulfil its promise of promoting the adoption of human-centric and trustworthy AI to enhance human well-being. Instead, as it stands, the Act creates opportunities for the infringement of workers’ rights protected by the Charter. This situation undermines human-centric and trustworthy AI principles, thereby compromising the high level of protection of health, safety and fundamental rights in the EU. To prevent such an unfavourable outcome, as European trade unions have rightly advocated, a dedicated EU directive is needed to address the unique challenges posed by WAITs and rectify the shortcomings of the EU AI Act (ETUC, 2023; IndustriAll, 2024). This directive should be based on Article 153 TFEU, which provides the legal basis for adopting measures to improve working conditions that uphold the fundamental rights and freedoms outlined in the Charter. It should ensure robust oversight of WAITs developers and deployers so that workers and their representatives can fully exercise those rights and freedoms. It should also guarantee that WAITs are transparent, explainable, free from bias and discrimination, and aligned with workers’ interests. Only through such targeted legislation can workers be adequately protected from the potential harms of WAITs, making AI technology human-centric, trustworthy and beneficial to EU society as a whole.

Footnotes

Funding

The open access publication was funded by the WZB Berlin Social Science Centre via the Sage Journals Consortium coordinated by the Bayerische Staatsbibliothek (BSB).