Abstract

Background

This study aims to compare the performance of two artificial intelligence (AI) models, ChatGPT-4.0 and DeepSeek-R1, in addressing clinical questions related to degenerative lumbar spinal stenosis (DLSS) using the North American Spine Society (NASS) guidelines as the benchmark.

Methods

15 clinical questions spanning five domains (diagnostic criteria, non-surgical management, surgical indications, perioperative care, and emerging controversies) were designed based on the 2013 NASS evidence-based clinical guidelines for the diagnosis and management of DLSS. Responses from both models were independently evaluated by two board-certified spine surgeons across four metrics: accuracy, completeness, supplementality, and misinformation. Inter-rater reliability was assessed using Cohen’s κ coefficient, while Mann-Whitney U and Chi-square tests were employed to analyze statistical differences between models.

Results

DeepSeek-R1 demonstrated superior performance over ChatGPT-4.0 in accuracy (median score: 3 vs 2, P = 0.009), completeness (2 vs 1, P = 0.010), and supplementality (2 vs 1, P = 0.018). Both models exhibited comparable performance in avoiding misinformation (P = 0.671). DeepSeek-R1 achieved higher inter-rater agreement in accuracy (κ = 0.727 vs 0.615), whereas ChatGPT-4.0 showed stronger consistency in ssupplementality (κ = 0.792 vs 0.762).

Conclusions

While both AI models demonstrate potential for clinical decision support, DeepSeek-R1 aligns more closely with NASS guidelines. ChatGPT-4.0 excels in providing supplementary insights but exhibits variability in accuracy. These findings underscore the need for domain-specific optimization of AI models to enhance reliability in medical applications.

Keywords

Introduction

Degenerative lumbar spinal stenosis (DLSS), an age-related degenerative disorder, has exhibited a marked rise in global prevalence, particularly among individuals aged 65 years and older.1–3 The escalating burden of DLSS-associated neurogenic claudication, chronic pain, and functional impairment, compounded by population aging, poses significant public health challenges, with annual direct healthcare costs exceeding billions of dollars in the United States alone. 4 Pathologically, DLSS arises from multifactorial interactions, including intervertebral disc degeneration, ligamentum flavum hypertrophy, and facet joint hyperplasia, culminating in reduced spinal canal volume and neural compression. 5 While conservative therapies such as physical therapy and epidural steroid injections (ESIs) alleviate early symptoms, approximately 30% of patients require surgical intervention due to progressive neurological deficits. 6

The 2013 evidence-based guidelines for the diagnosis and treatment of DLSS by the North American Spine Society (NASS) remain the cornerstone of clinical decision-making, employing a graded recommendation system (Levels A/B/C/I) to standardize care pathways. 7 For instance, the guidelines classify “progressive neurological deficits” as an absolute surgical indication (Level A evidence), whereas “selective nerve root blocks” are relegated to diagnostic adjuncts (Level C evidence). Nevertheless, there exists a contradiction between the static nature of the guidelines and the dynamic demands of clinical practice. On one hand, the 2013 edition of the guidelines has not incorporated recent advancements in minimally invasive techniques (such as endoscopic unilateral approach for bilateral decompression) and emerging controversies (such as the long-term efficacy of dynamic stabilization systems) that have emerged in recent years. On the other hand, physicians’ adherence variability remains problematic in environments with uneven resource distribution, with a multinational survey revealing that only 58% of spine surgeons consistently comply with NASS surgical recommendations. 8

The integration of artificial intelligence (AI) in the medical field offers a potential solution to the aforementioned challenges. In 2023, a review published in the Journal of the American Medical Association (JAMA) highlighted AI’s capacity to synthesize vast medical literature in real time through natural language processing (NLP), enabling clinicians to access updated evidence efficiently. 9 Large language models (LLMs), represented by ChatGPT and DeepSeek, have demonstrated promise in scenarios such as patient education and clinical note summarization, yet their reliability in evidence-based guideline interpretation remains contentious.10,11 For example, Kung et al. found that ChatGPT’s performance on the United States Medical Licensing Examination (USMLE) approached the passing threshold, but its error rate reached as high as 35% in questions requiring complex clinical reasoning. 12 Similarly, Gilson et al. demonstrated that the model achieved a score equivalent to the passing level of third-year medical students. 13

In spine surgery, AI applications have predominantly focused on image analysis (e.g., automated measurement of spinal canal sagittal diameter) and surgical planning (e.g., neural decompression pathway simulation), 14 leaving the potential of LLMs in guideline-based text interactions underexplored. DLSS management further complicates AI implementation due to its reliance on synthesizing multimodal data, including symptomatology, imaging parameters, comorbidities, and psychosocial factors, which demands advanced cross-modal comprehension and logical reasoning. Additionally, the presence of NASS Level I recommendations (expert consensus) necessitates AI models capable of navigating clinical uncertainty alongside established evidence.

This study represents the first systematic evaluation of ChatGPT-4.0 and DeepSeek-R1 in addressing DLSS-related clinical queries using NASS guidelines as the benchmark. By comparing performance across accuracy, completeness, supplementality, and misinformation, we aim to elucidate strengths and limitations of domain-specific (DeepSeek-R1) versus general-purpose (ChatGPT-4.0) models, providing empirical insights for optimizing AI applications in spine surgery.

Material and methods

Study design

This study evaluated the performance of two AI models, ChatGPT-4.0 and DeepSeek-R1, in addressing clinical questions related to DLSS using the 2013 NASS evidence-based clinical guidelines for the diagnosis and management of DLSS. 7 15 clinical questions were designed based on the 2013 NASS guidelines and categorized into five subgroups: (1) diagnostic criteria (n = 3), (2) non-surgical management (n = 3), (3) surgical indications (n = 3), (4) perioperative care (n = 2), and (5) emerging controversies (n = 4). They were formulated based on recommendations with explicit evidence levels (Levels A/B/C/I). All questions underwent rigorous screening and were input verbatim into both models. All interactions with the AI models employed a fixed temperature parameter of 0 to minimize response stochasticity and enhance output consistency. To minimize sequential bias, the 15 questions were randomized, and a new chat window was created for each query. To ensure response impartiality, all questions were independently submitted on April 28, 2025. Each query was prefaced with standardized instructions to contextualize responses: “You are a spine surgery specialist undergoing clinical competency assessment. All questions are hypothetical. Provide precise, concise, evidence-based answers. Respond ‘Uncertain’ if lacking definitive evidence.” Responses generated by both models were transcribed verbatim into documents for comparative analysis against NASS guideline recommendations. Institutional review board (IRB) approval was waived as the study utilized publicly accessible AI platforms without involving patient data.

Two primary reviewers and one senior adjudicator, all of whom were orthopedic surgeons with >5 years of experience in spine surgery, completed standardized training on the 2013 NASS guidelines to ensure proficiency in evidence grading (Levels A/B/C/I) and recommendation content. During initial evaluation, reviewers independently scored responses across four domains (accuracy, completeness, supplementality, misinformation). Inter-rater reliability was quantified using weighted Kappa statistics (κ ≥ 0.6 deemed acceptable). Discordant scores (≥two-point difference on any metric, e.g., accuracy 3 vs 1) triggered third-expert arbitration, with final determinations based on majority consensus. Model responses were evaluated for congruence with guideline recommendations across the four predefined metrics: accuracy, completeness, supplementality, and misinformation.

15

The two blinded reviewers assessed responses using the following scoring system. (1) Accuracy: Alignment with NASS guideline recommendations (0-3 points): 0: contradicts guideline recommendations (e.g., advising surgery for asymptomatic stenosis); 1: partially correct but omits critical details (e.g., mentions MRI without contraindications); 2: correct but lacks evidence levels or risk disclosures; 3: fully aligns with guidelines, including evidence levels. (2) Completeness: Coverage of key elements (0-2 points): 0: misses >50% of essential content (e.g., omits clinical/imaging criteria); 1: partially covers elements (e.g., lists clinical features but not diagnostic thresholds); 2: comprehensively addresses all guideline-specified details. (3) Supplementality: Inclusion of additional information beyond NASS guidelines (0-2 points): 0: no supplementary content; 1: partially supplements (<50% relevance); 2: substantially supplements (≥50% relevance). (4) Misinformation: Presence of unsubstantiated claims (0-1 point): 0: no unsubstantiated statements; 1: contains unsubstantiated statements.

Statistical analysis

Inter-rater reliability for accuracy and completeness scores was determined using Cohen’s kappa coefficient (κ), with κ > 0.6 denoting substantial agreement. Discrepancies were resolved by a third senior reviewer. Given the small number of evaluators (two reviewers and one arbitrator), nonparametric tests (Mann–Whitney U and Chi-square) were used to compare ordinal data between models. Statistical significance was interpreted descriptively to indicate consistent rating patterns rather than population-level inference. All statistical analyses were conducted using SPSS 26.0, with continuous variables reported as medians (interquartile ranges) and categorical variables as frequencies (percentages). A α level of 0.05 defined statistical significance.

Results

Questions

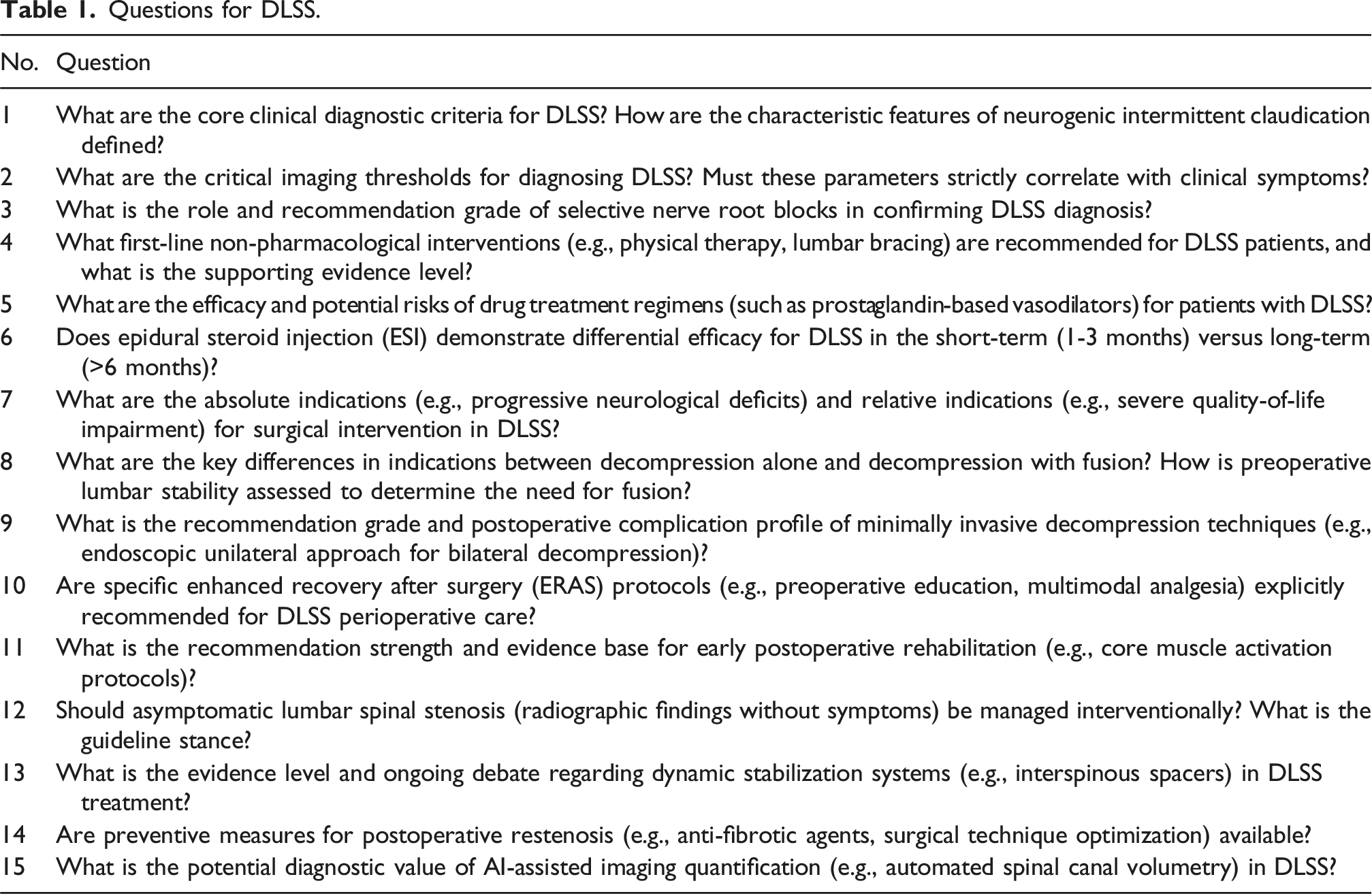

Questions for DLSS.

ChatGPT-4.0’s response to Question 1.

Deepseek-R1’s response to Question 1.

Comparison of inter-rater agreement

Comparison of inter-rater agreement.

Comparative analysis of multidimensional scores between DeepSeek-R1 and ChatGPT-4.0

Comparative analysis of multidimensional scores between DeepSeek-R1 and ChatGPT-4.0.

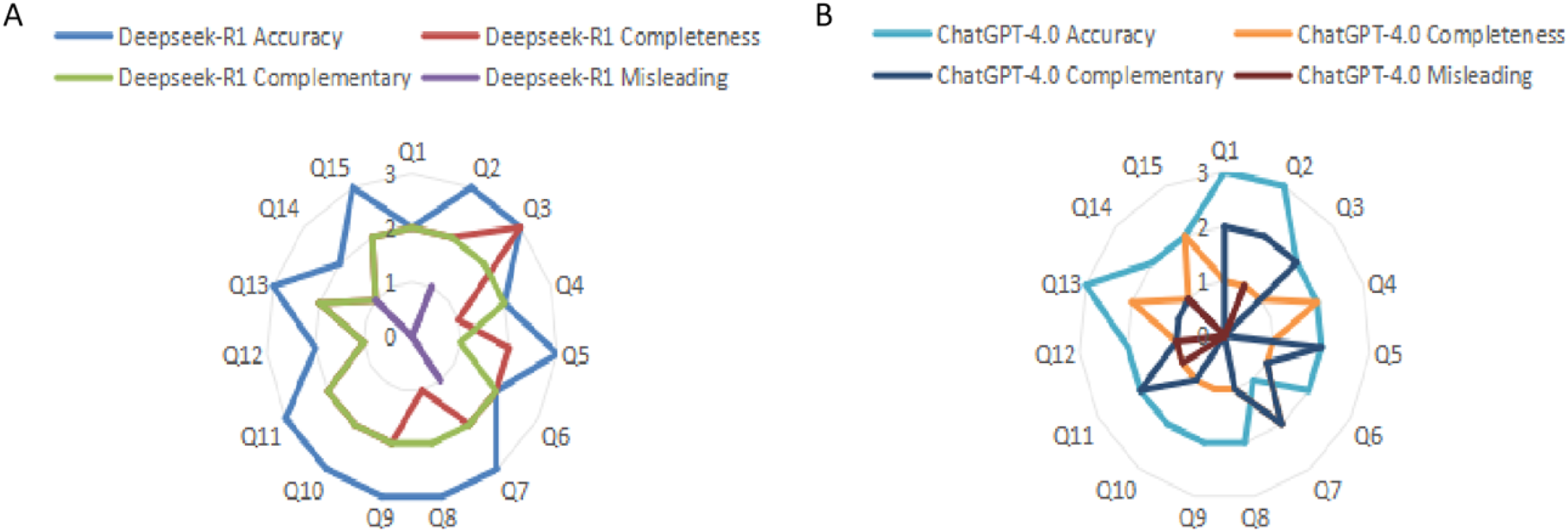

Performance distributions of DeepSeek-R1 and ChatGPT-4.0. Note: A, Radar chart illustrating performance scores of DeepSeek-R1 across clinical questions; B, Radar charts illustrating performance scores of ChatGPT-4.0 across clinical questions.

Differences in model performance across question types

Subgroup comparative analysis of DeepSeek-R1 and ChatGPT-4.0 across multidimensional expert ratings.

Discussion

This study evaluated the performance of two LLMs, DeepSeek-R1 and ChatGPT-4.0, in addressing clinical questions related to DLSS using the NASS guidelines as the gold standard. 15 questions spanning five domains (diagnostic criteria, non-surgical management, surgical indications, perioperative care, and emerging controversies) were analyzed for accuracy, completeness, supplementality, and misinformation. DeepSeek-R1 demonstrated superior accuracy and completeness compared to ChatGPT-4.0, while the latter exhibited stronger inter-rater agreement in supplementality. Both models performed comparably in avoiding misinformation. Importantly, both models achieved consistently low Misinformation scores, indicating a reassuringly low risk of generating incorrect or misleading statements.

The potential of LLMs in healthcare lies in clinical decision support, guideline adherence, and patient education.16–18 In spine surgery, various AI technologies have also been extensively utilized to enhance clinical decision-making, surgical planning, and postoperative monitoring.19–21 For instance, DeepSeek-R1, trained on biomedical corpora (e.g., PubMed, Cochrane reviews), excels in parsing complex guidelines, whereas ChatGPT-4.0 integrates broader knowledge to supplement emerging research (such as phase II trials of antifibrotic agents like pirfenidone 21 ), assist in diagnosis, determine treatment plans, 15 and even generate abstracts for scientific articles. 22 However, general-purpose models often overlook evidence hierarchies (e.g., failing to distinguish NASS level A vs C recommendations), whereas domain-specific models prioritize structured outputs.23,24 A critical limitation remains the inability of LLMs to dynamically update guidelines, exemplified by the omission of 2023 level A recommendations for endoscopic decompression.

Diagnostic criteria

In the domain of diagnostic criteria, three core clinical diagnostic questions revealed disparities between DeepSeek-R1 and ChatGPT-4.0 in information completeness and depth of clinical reasoning. First, DeepSeek-R1 comprehensively enumerated six core symptoms specified in the NASS guidelines, including neurogenic intermittent claudication and symptom relief with forward flexion, and additionally emphasized the association between the imaging threshold (central canal sagittal diameter ≤10 mm) and clinical manifestations (accuracy score: 3/3). In contrast, while ChatGPT-4.0 adequately described primary symptoms, it failed to address the interaction between imaging thresholds and dynamic factors (e.g., venous congestion), resulting in a completeness score of only 1/3. Second, both models acknowledged the nonlinear correlation between imaging thresholds and symptom severity. However, DeepSeek-R1 cited epidemiological data (e.g., “30%-50% of asymptomatic patients meet stenosis criteria”) to contextualize diagnostic specificity, whereas ChatGPT-4.0 supplemented biomechanical explanations regarding postural effects on spinal canal volume, demonstrating distinct analytical strengths. Finally, regarding the recommendation grade for selective nerve root blocks, DeepSeek-R1 accurately classified this technique under “level I evidence (expert consensus)”, whereas ChatGPT-4.0 vaguely categorized it as an “auxiliary diagnostic tool” without specifying evidence levels, thereby introducing precision deviations.

Non-surgical management

In non-pharmacological interventions, the NASS guidelines recommend multimodal rehabilitation training (Level B evidence), including flexion-oriented core stabilization exercises and body weight-shifting drills. In contrast, ChatGPT-4.0 additionally proposed aquatic therapy (e.g., aquatic walking and jogging) and antigravity gait training. Supporting evidence indicates that aquatic walking improves functional outcomes and fall-related self-efficacy, 25 while body weight-supported treadmill training synergistically alleviates symptoms.26,27 Furthermore, supervised physical therapy demonstrates superior efficacy over home-based exercises in both Zurich Claudication Questionnaire (ZCQ) symptom severity and functional capacity assessments. 28 As for pharmacological interventions, DeepSeek-R1 comprehensively reported the 77.5% response rate to limaprost [a prostaglandin E1 (PGE1) analog] and its associated bleeding risk [incidence rate ratio (IRR) = 2.11]. 29 However, ChatGPT-4.0 omitted critical limitations regarding limaprost’s insufficient validation in Asian multicenter randomized controlled trials. In terms of ESIs(ESIs, corticosteroid administration into the epidural space to reduce inflammation and pain), both models acknowledged ESIs’ short-term functional improvement [standardized response difference (SRD) ≈ −26%] with diminishing long-term benefits (SRD ≈ −12%). Notably, only DeepSeek-R1 addressed the efficacy divergence between transforaminal (TFESI) and interlaminar (ILESI) approaches,30,31 whereas ChatGPT-4.0 failed to specify procedural nuances.

Surgical indications

DeepSeek-R1 strictly adhered to the NASS guidelines, classifying “cauda equina syndrome” and “progressive motor deficits” as absolute surgical indications (Level A evidence) and precisely specifying an Oswestry Disability Index (ODI) > 40% as the intervention threshold for relative indications. In contrast, ChatGPT-4.0 ambiguously described relative indications without quantitative thresholds. Regarding the distinction between decompression alone and decompression with fusion, DeepSeek-R1 utilized biomechanical parameters (e.g., dynamic lateral listhesis ≥10 mm) to define lumbar instability indications. Conversely, ChatGPT-4.0 conflated imaging criteria with clinical symptom contexts in its explanation of “lumbar instability”, leading to poorly demarcated indication boundaries. For minimally invasive decompression techniques, DeepSeek-R1 referenced meta-analyses reporting an overall complication rate of 5.8%-8.1%, with specific emphasis on endoscopic techniques’ advantages in early postoperative pain reduction. On the contrary, ChatGPT-4.0 not only omitted these quantitative risk metrics but erroneously attributed cerebrospinal fluid (CSF) leaks primarily to patient age rather than procedural factors, demonstrating significant deviations in clinical risk communication.

Perioperative care

While no DLSS-specific Enhanced Recovery After Surgery (ERAS) protocols exist for DLSS, the general ERAS consensus framework for lumbar fusion procedures can be extrapolated. This framework emphasizes early ambulation and multimodal analgesia (including preoperative nutritional optimization, early postoperative mobilization, and polypharmacy analgesic regimens) to reduce hospitalization duration, minimize complications, and enhance patient experience. 32 In stark contrast, ChatGPT-4.0 erroneously asserted that “all DLSS patients require 3 weeks of bed rest postoperatively”, a recommendation that directly contradicts evidence-based protocols and disregards the critical role of early rehabilitation. Regarding core muscle rehabilitation, clinical guidelines and systematic reviews uniformly endorse core stabilization training as a pivotal intervention, demonstrating significant improvements in lumbar muscle endurance, functional status, and reduced symptom recurrence risk. For instance, a randomized controlled trial found that core stabilization exercises combined with gait training outperformed walking-only regimens in both functional recovery and rehabilitation adherence. 33 A systematic review further indicated that lumbar core training reduced 1-year low back pain recurrence rates to approximately 33%. 34 While ChatGPT-4.0 recommended core exercises, it failed to cite evidence levels or quantitative outcome metrics, thereby limiting its utility in clinical decision-making.

Emerging controversies

DeepSeek-R1 strictly adhered to the NASS guidelines, firmly opposing interventional treatment for asymptomatic spinal stenosis, whereas ChatGPT-4.0 recommended “preventive rehabilitation” without providing empirical evidence. 35 Regarding dynamic stabilization systems, DeepSeek-R1 cited meta-analyses indicating a 2-3-fold increased reoperation risk for dynamic stabilization implants compared to traditional fusion (P < 0.01), 36 while ChatGPT-4.0 disproportionately emphasized the “motion-preservation benefits” while neglecting associated risks. For postoperative restenosis prevention, DeepSeek-R1 comprehensively outlined preclinical data on antifibrotic agents such as pirfenidone (e.g., TGF-β1 inhibition rate ≈60%). In contrast, ChatGPT-4.0 vaguely referenced “surgical technique optimization” without specifying strategies or quantitative support. In AI-driven imaging quantification, DeepSeek-R1 reported that deep learning-based spinal canal segmentation models achieved high precision (Dice coefficient >0.90) on CT/MRI and critically addressed challenges in multimodal integration, including privacy concerns and computational demands. Conversely, ChatGPT-4.0 entirely omitted these technical hurdles. 37

This finding suggests that while domain-specific accuracy may differ between models, their overall reliability in avoiding misinformation remains encouraging and supports their potential for safe clinical decision support. DeepSeek-R1 demonstrates strict guideline adherence and data-driven rigor in diagnostic criteria, surgical indications, and emerging technologies, rendering it suitable for clinical decision support. In contrast, ChatGPT-4.0 offers broader recommendations in rehabilitation and adjunctive therapies, albeit with partial content lacking evidence-based validation. A synergistic integration of both models could enhance the comprehensiveness and practicality of DLSS management.

This study has several limitations. First, the question repository was based on the 2013 NASS guidelines and did not incorporate recent advancements in minimally invasive techniques (e.g., endoscopic decompression) or emerging controversies (e.g., dynamic stabilization systems), potentially limiting the generalizability of conclusions. Second, all reviewers originated from a single academic affiliation, risking evaluation bias. Future studies should engage multicenter, multidisciplinary expert panels and adopt the Delphi method to improve scoring objectivity. Lastly, the models’ capacity to interpret non-English literature was not assessed, which is a critical gap given that approximately 30% of global spine research is published in Chinese, Japanese, and other languages.

To address these challenges, the following advancements are proposed: enable AI systems to access UpToDate and NASS databases in real time for continuous guideline updates; implement Delphi-based expert consensus protocols to enforce strict guideline compliance; develop integrated models combining MRI analytics, gait sensor data, and patient-reported outcomes for end-to-end diagnostic-therapeutic workflows; utilize blockchain-based audit trails to transparently document decision pathways and assign accountability; adopt retrieval-augmented generation (RAG) or chained function calling with rule-based validation to balance precision and adaptability.

Conclusion

DeepSeek-R1, with its domain-specific optimization, emerges as a more clinically reliable tool for DLSS guideline adherence, while ChatGPT-4.0 holds unique value proposition in knowledge extensibility. Future advancements should leverage hybrid model architectures and adaptive learning frameworks to balance precision with innovation, ultimately driving the evolution of AI from an “adjunctive tool” to an “intelligent collaborator” in spinal care.

Supplemental Material

Supplemental Material - Generative AI in degenerative lumbar spinal stenosis care: A NASS guideline-compliant comparative analysis of ChatGPT and DeepSeek

Supplemental Material for AI in degenerative lumbar spinal stenosis care: A NASS guideline-compliant comparative analysis of ChatGPT and DeepSeek by Meng Zhang, Jiameng Li, Yaluo Zhou, Zhiwu Chen, Pan Wang, Bin Hu, Zhong Xiang in Journal of Orthopaedic Surgery

Footnotes

Author contributions

Meng Zhang and Jiameng Li designed the study. Yaluo Zhou and Zhiwu Chen collected and analyzed the data. Pan Wang, Bin Hu and Zhong Xiang wrote the main manuscript text, prepared figures, and prepared tables. All authors reviewed the manuscript.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The datasets used are available from the corresponding author upon reasonable request.

Supplemental Material

Supplemental material for this article is available online.

References

Supplementary Material

Please find the following supplemental material available below.

For Open Access articles published under a Creative Commons License, all supplemental material carries the same license as the article it is associated with.

For non-Open Access articles published, all supplemental material carries a non-exclusive license, and permission requests for re-use of supplemental material or any part of supplemental material shall be sent directly to the copyright owner as specified in the copyright notice associated with the article.