Abstract

Purpose

Research is vital for evidence-based surgery. Understanding scientometric differences among surgical specialties has scope to inform discussions within and across those specialties on how to develop and maintain a culture of research productivity. This study aims to quantify the academic productivity of Australian orthopaedic surgeons compared with the other specialties within the Royal Australasian College of Surgeons (RACS).

Methods

A list of Australian surgeons registered with RACS was compiled using the “find a surgeon” function on the RACS Web site. This list was cross-referenced with the specialty databases on their respective websites. A name search of the SCOPUS database was performed for each individual surgeon. For each individual, h-index, m-index, total active publishing years, total publications, and total citations were collected.

Results

Of the Australian surgical specialties, orthopaedic surgeons had the equal lowest h-index (median 2; interquartile range 3), the shortest duration involved in research (median 5 years; 14), the fewest articles (median 2; 7) and the second lowest number of citations (median 28; 116). When the 10 individuals with the highest h-index are compared among specialties, orthopaedic surgeons rank second with a median of 37 (6.5).

Conclusion

Our objective data provide a factual comparison and baseline assessment of one aspect of research productivity. They can challenge currently held perceptions of performance and can inform conversations about strategic development. We recommend that other international Colleges and Societies undertake this assessment on a regular basis. These academic productivity metrics provide an opportunity for developing and maintaining a culture of sustained, significant contribution to surgical research.

Introduction

Research is vital for evidence-based surgery.1,2 The expansion and refinement of this evidence base is primarily driven by the goal of improving outcomes for surgical patients. However, there are also implications for academics and trainees, as evidenced by the value placed on research in training, career progression, academic appointments, and prestige.3 Anecdotally, orthopaedic surgery has long prided itself on attracting some of the “best and brightest”; however, the contribution of these bright minds to the surgical evidence base has not been quantified.4

Scientometric analysis using citation-based metrics has become increasingly integral to individual research productivity analysis in the six decades since Garfield’s5 landmark proposition for “citation indexes for science”. Hirsch’s h-index and m-index are validated and currently well recognised as useful indicators of an individual’s research output, as well as having scope to provide insight into the broader research culture and productivity within surgical research.3,6–8 The h-index for a given author is the largest number h such that the author has h papers each cited at least h times. The m-index is calculated by dividing the h-index by the years elapsed between the author’s first and most recent publications, to account for disparity in active publishing years.
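The two definitions above can be made concrete with a minimal Python sketch. The function names are hypothetical, and the treatment of an author whose publications all fall in a single year (counted as one year to avoid dividing by zero) is an assumption for illustration rather than a rule stated in this paper.

```python
from typing import Sequence


def h_index(citations: Sequence[int]) -> int:
    """Largest h such that h papers each have at least h citations."""
    ranked = sorted(citations, reverse=True)
    h = 0
    for rank, cites in enumerate(ranked, start=1):
        if cites >= rank:
            h = rank
        else:
            break
    return h


def m_index(h: int, first_year: int, last_year: int) -> float:
    """h-index divided by the years elapsed between first and most recent publication."""
    years = last_year - first_year
    # Assumption: a publishing span of zero years is treated as one year.
    return h / max(years, 1)


# Illustrative (made-up) citation counts for one author.
example_citations = [10, 8, 5, 4, 3]
h = h_index(example_citations)      # four papers have at least 4 citations
m = m_index(h, 2010, 2018)          # 4 / 8 years
print(h, m)
```

With these illustrative counts the author has an h-index of 4, and an eight-year publishing span gives an m-index of 0.5.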

While h- and m-indices have been used to explore research impact among surgeons holding academic appointments, to our knowledge they have not been used to measure the academic performance of an entire surgical college or other learned society, or to quantify differences in research productivity among surgical specialties within a surgical professional body.3,7–9

We quantify the academic productivity of the Royal Australasian College of Surgeons (RACS) and the scientometric differences between its surgical specialties, in order to place the research output of Australian orthopaedic surgeons in the context of other surgical specialties. The objective of this paper is to inform conversations within and across surgical specialties and cultivate a culture of research vitality that supports the ongoing development of research capabilities for trainees, clinicians, and academics.

Materials and methods

An ethics waiver was obtained from Hunter New England Human Research Committee, based on the study using publicly available data (AU201909-15). The “find a surgeon” function on the RACS Web site was cross-referenced with the specialty databases on their respective websites to compile a list of surgeons currently practising in Australia. Surgeons were grouped by specialty: cardiothoracic surgery, general surgery, neurosurgery, paediatric surgery, plastic and reconstructive surgery, orthopaedic surgery, otolaryngology/head and neck surgery, urology, and vascular surgery.

Two authors collected data by using a name search on SCOPUS to calculate and record h-index, m-index, total active publishing years, total publications, and total citations for each surgeon. SCOPUS was selected over Web of Science because of superior coverage of medical specialties (particularly now that SCOPUS covers citations from 1970 onwards) and over Google Scholar because of Google Scholar’s potential overestimation of citation metrics and difficulty in disambiguation of authors.10–13 Where there were multiple authors with the same name on SCOPUS, the Australian Health Practitioner Regulation Agency register was cross-referenced to confirm middle initials, and affiliations/institutions were checked, to ensure the correct papers were used to calculate metrics for each individual surgeon. Outliers and random samples of data were checked and cross-checked for errors.

Statistical analysis was performed with IBM SPSS Statistics for Windows, version 26 (IBM Corp, Armonk, NY, USA). Given the non-normal distribution of the data, as determined by the Shapiro–Wilk test of normality (p < 0.05), median values with interquartile range (IQR) and non-parametric data analysis were utilised. The median values were assessed with an independent-samples median test (a non-parametric counterpart to ANOVA) in preference to a Kruskal–Wallis test, as it is more robust in the presence of outliers. Spearman’s rank correlation coefficient was calculated to assess the relationship between h-index and increasing specialty size.
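The logic of an independent-samples median test can be sketched in a few lines of Python. This is an illustrative sketch only, not the SPSS procedure used in the study: the function name is hypothetical, values equal to the pooled median are counted as "not above" by assumption, no continuity correction is applied, and the p-value (which would come from a chi-square distribution with the returned degrees of freedom) is not computed.

```python
from statistics import median
from typing import Sequence, Tuple


def median_test_statistic(groups: Sequence[Sequence[float]]) -> Tuple[float, int]:
    """Return (chi-square statistic, degrees of freedom) for a median test.

    Each group is compared against the pooled median: the test asks whether
    the share of values above that median differs between groups.
    """
    pooled = [value for group in groups for value in group]
    grand_median = median(pooled)
    n = len(pooled)

    # Observed counts above the pooled median in each group.
    above = [sum(1 for value in group if value > grand_median) for group in groups]
    total_above = sum(above)
    total_not_above = n - total_above
    if total_above == 0 or total_not_above == 0:
        raise ValueError("All values fall on one side of the pooled median.")

    chi2 = 0.0
    for group, observed_above in zip(groups, above):
        size = len(group)
        observed_not_above = size - observed_above
        expected_above = size * total_above / n
        expected_not_above = size * total_not_above / n
        chi2 += (observed_above - expected_above) ** 2 / expected_above
        chi2 += (observed_not_above - expected_not_above) ** 2 / expected_not_above
    return chi2, len(groups) - 1


# Made-up h-index values for two small, hypothetical specialties.
statistic, dof = median_test_statistic([[1, 2, 3, 4], [5, 6, 7, 8]])
print(statistic, dof)
```

Because the test only uses whether each value lies above the pooled median, a single surgeon with a very large h-index shifts the statistic no more than one who is just above the median, which is why it tolerates outliers well.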

Results

There were 59,337 publications and 1,560,490 citations by the 4,788 surgeons. The median number of total publications was four, with an interquartile range (IQR) of 11. The median number of total citations was 38 (IQR 181.5). The median active publishing years was 8.5 (IQR 17). The median h-index was two (IQR 5). The median m-index was 0.308 (IQR 0.574).

Surgeons with low h-index predominated (Figure 1). Orthopaedic surgeons had a higher percentage of surgeons with low h-index and a lower percentage of surgeons with h-index greater than five, compared to the other specialties. One quarter of orthopaedic surgeons had an h-index of zero.

Figure 1. Clustered bar chart of h-index for orthopaedics (black bars) versus other specialties (white bars), presented as the percentage of surgeons at each h-index.

Orthopaedic surgeons had the lowest median number of publications (Figure 2). Orthopaedic surgery, general surgery, and otolaryngology had a median of fewer than five publications per surgeon. Cardiothoracic surgery, neurosurgery, and paediatric surgery were the only specialties with a median of more than 10 publications per surgeon.

Figure 2. Median publications (black dots) and interquartile range (black lines) across surgical specialties; p < 0.001 for differences in median publications between specialties.

Orthopaedic surgeons had the lowest median active publishing years (Figure 3). Once again, cardiothoracic surgery, neurosurgery, and paediatric surgery had the highest median publishing years, all producing research for a median of more than 15 years. Vascular surgery, plastic surgery, and urology were the middle three, with median publishing years between 10 and 15. Otolaryngology, general surgery, and orthopaedic surgery all produced research for a median of less than 10 years.

Figure 3. Median active publishing years (black dots) and interquartile range (black lines) across surgical specialties; p < 0.001 for differences in median active publishing years between specialties.

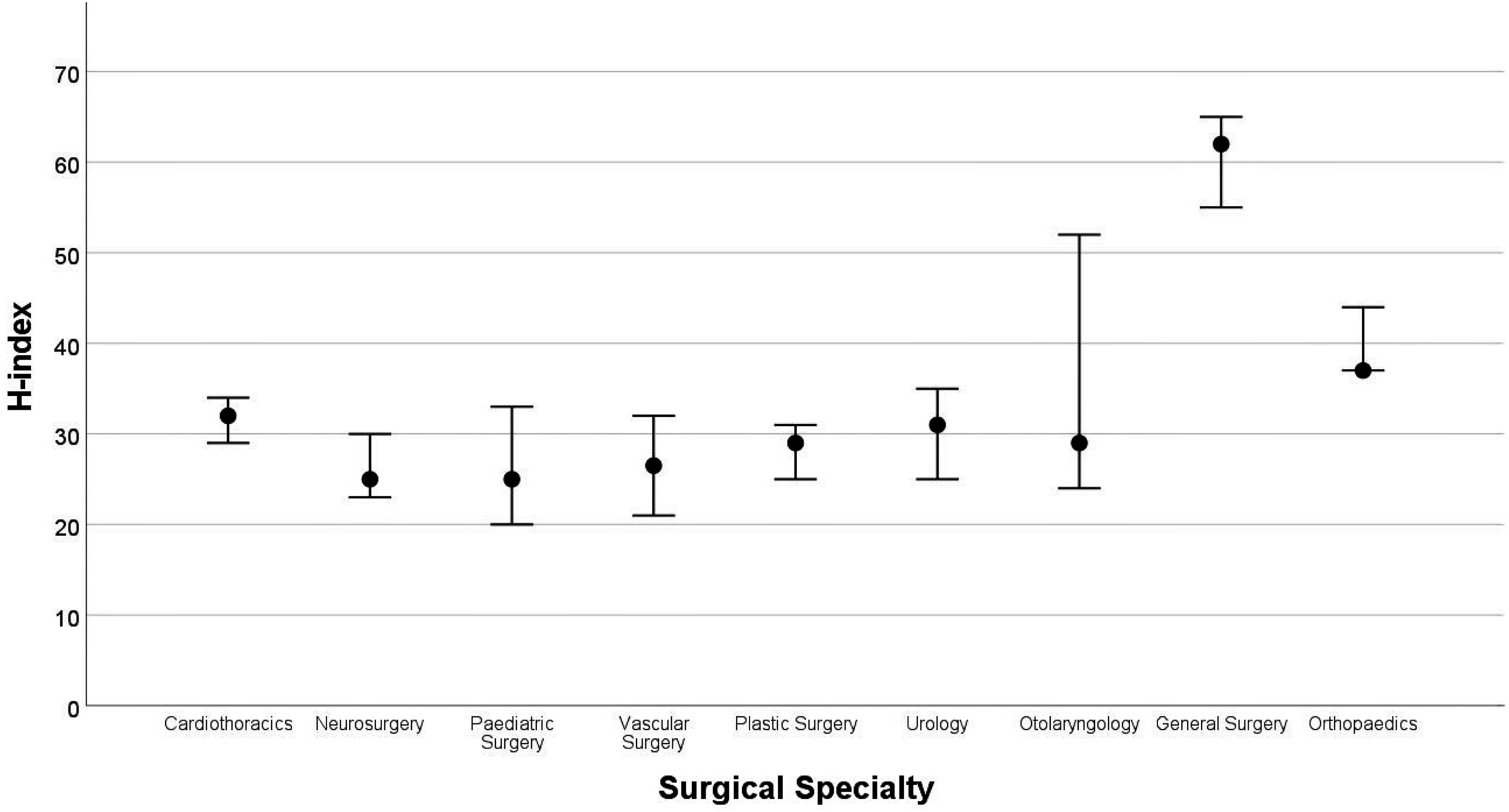

Orthopaedic surgeons, general surgeons, and otolaryngologists had the lowest median h-index (Figure 4). Cardiothoracic surgeons, neurosurgeons, and paediatric surgeons had the highest median h-index. Six of the nine specialties had a median of three or less.

Figure 4. Median h-index (black dots) and interquartile range (black lines) across surgical specialties; p < 0.001 for differences in median h-index between specialties.

Otolaryngologists, urologists, and general surgeons had the lowest median m-index, while cardiothoracic surgeons, neurosurgeons, and paediatric surgeons had the highest (Figure 5). M-index provides insight into research productivity when accounting for both citations and active publishing years. Orthopaedic surgeons improved their ranking to sixth for m-index.

Figure 5. Median m-index (black dots) and interquartile range (black lines) across surgical specialties; p < 0.001 for differences in median m-index between specialties.

Differences in h-indices, m-indices, total publications, and active publishing years between the specialties were statistically significant (p < 0.001, independent-samples median test).

Specialties with greater numbers of surgeons tended to have a lower median h-index (Figure 6). This relationship was statistically significant, with a Spearman’s rank correlation coefficient of −0.193 (p < 0.001).

Figure 6. Number of surgeons (grey bars, values on the left) and h-index (black dots, values on the right) within each specialty; Spearman’s rank correlation coefficient −0.193 (p < 0.001), indicating lower h-index in larger specialties.

Elite academics within each specialty contributed disproportionately to highly cited literature. This was consistent across specialties but did not follow the pattern of larger specialties underperforming. When the 10 surgeons with highest h-index in each specialty were isolated and compared among specialties, general surgeons, orthopaedic surgeons, and cardiothoracic surgeons had the highest median h-index (Figure 7).

Figure 7. Median h-index (black dots) and interquartile range (black lines) for the top 10 surgeons within each specialty.

Discussion

This study quantifies the research productivity of Australian orthopaedic surgeons and provides a comparison to the other surgical specialties within RACS. According to our data, the “median” Australian orthopaedic surgeon publishes research during only 5 years of their surgical career, producing two articles that will each be cited at least twice. To put this in perspective, this corresponds to the equal lowest h-index, the shortest duration involved in research, the fewest articles, and the second lowest number of citations among the Australian surgical specialties. One quarter (293) of Australian orthopaedic surgeons have not contributed to cited literature. While orthopaedic surgeons were the equal lowest among specialties in h-index, their middling m-index suggests that there is potential to contribute more citable work to the evidence base if surgeons could be kept engaged in research for longer. Heavy clinical load, type of practice, and lack of protected time for research could be contributing factors to these results.

The capacity for prolific research contribution within orthopaedics is also supported by the strong performance of the top 10 surgeons by h-index. Do these high achievers reflect the competitive nature of orthopaedic selection and practice? Or does the disparity between the top 10 and “median” academics reflect an imbalance in research output across the specialty? Is this an undesirable anomaly or the best utilisation of individual talents for the progression of the specialty? Given that surgical practice requires both academia and its practical application, perhaps a core of highly research-productive surgeons leading scientific and evidence-based surgery in the broader orthopaedic community is a pragmatic approach.

Surgeons in less populous specialties produced more publications, stayed involved in research for longer, and had higher median h-indices. Possible explanations for this include exclusivity of the smaller specialties creating increased competition, a proportionally greater number of academic positions, and different citation and authorship behaviours due to a relatively higher proportion of surgeons being involved in specialty collaborative networks. Nevertheless, it is evident that there is underutilised evidence-producing potential within the larger surgical specialties.

Although surgery is one of many scientific fields with increasing interest in examining citation metrics as a way of understanding research productivity and vitality, the findings of our study cannot be directly compared to existing literature. Existing literature evaluating citation metrics within surgical specialties has been limited to surgeons holding university positions, utilised only Google Scholar to calculate indices, used different statistical descriptors (mean rather than median in skewed data), examined only a snapshot of publications, not presented a breakdown of specialties, or some combination of these.3,9,14–16 These strategies result in much higher citation metrics than our all-inclusive methodology.

Our study has inevitable limitations. Some research profiles may have been over- or underestimated because multiple authors in similar fields can share the same name. While careful methodology for disambiguation was utilised, there remains the possibility that some inaccuracies persisted. In theory, this potential bias should have affected every specialty to the same extent by chance. Due to the non-parametric nature of the data analysis, the results have lower statistical power, although this is mitigated by the considerable sample size. While h- and m-indices are good surrogates for research output, dissemination, and impact, they have known limitations: they do not account for various citation behaviours (such as self-citation), they place equal importance on each author regardless of their position on the paper, and they are limited in their ability to elucidate the quality of the research and its translation to patient-centred collaborative practice. Our results may not be directly applicable to other countries. It is impossible to comment on the academic standing of international orthopaedic surgeons in comparison to their national specialist peers without similar assessment in other countries.

Our study presents opportunities for developing and maintaining a culture of sustained, significant contribution to surgical research. It provides a yardstick for iterative assessment of surgical specialties and for monitoring the influence of curriculum development. The results provide objective data on productivity that may challenge currently held perceptions of performance and inform conversations about research productivity and vitality. There is an opportunity for appreciative inquiry to understand how the strengths of one surgical specialty can inform progress in other specialties.17 For example, orthopaedics can look to other specialties to develop strategies for keeping surgeons engaged in research for longer.

A future focus for monitoring research metrics could include prospective collation of all the articles authored by individuals within a specialty to build a dynamic picture of that specialty’s research environment, facilitating ongoing analysis. As per Hirsch’s6 original proposition, a group h-index could then be calculated, used to rank departments, and provide objective information on productivity. These objective outcome measures would be invaluable in devising and evaluating research strategies. Co-citation analysis, as proposed by Small,18 could also be performed on the body of papers to create a research profile of each specialty. This would illustrate the core of the specialty’s research and could identify topic areas richly covered with the potential for collaboration, or areas sparsely covered with the potential for new work.
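One way a group h-index might be computed from such a prospectively collated set of articles is sketched below in Python. The data layout (a mapping from surgeon to paper identifiers and citation counts), the function name, and the choice to count each co-authored paper once are assumptions made for illustration; they are not a procedure defined in this study.

```python
from typing import Dict


def group_h_index(papers_by_surgeon: Dict[str, Dict[str, int]]) -> int:
    """h-index of the pooled, de-duplicated papers of a group.

    papers_by_surgeon maps a surgeon to {paper_id: citation_count}.
    Assumption: a paper shared by several surgeons is counted once.
    """
    unique_papers: Dict[str, int] = {}
    for papers in papers_by_surgeon.values():
        unique_papers.update(papers)

    ranked = sorted(unique_papers.values(), reverse=True)
    # With counts in descending order, the number of positions where
    # citations >= rank equals the h-index.
    return sum(1 for rank, cites in enumerate(ranked, start=1) if cites >= rank)


# Made-up example: surgeons A and B co-authored paper p1.
specialty = {
    "A": {"p1": 10, "p2": 3},
    "B": {"p1": 10, "p3": 2, "p4": 1},
}
print(group_h_index(specialty))
```

In this made-up example the group h-index is 2. Counting the shared paper twice would inflate it to 3, which is why de-duplication by a stable paper identifier matters when pooling articles across a specialty.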

Conclusion

Orthopaedic surgeons had the equal lowest h-index, the shortest duration involved in research, the fewest articles and the second lowest number of citations among the Australian surgical specialties. We propose working with major citation databases to prospectively collect articles published by Australian surgeons to maintain up-to-date specialty h- and m-indices. We encourage surgical colleges from other parts of the world to utilise this methodology for self-assessment as a starting point to document improvement objectively.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.