Abstract

With the rapid penetration of artificial intelligence (AI) in healthcare, its associated ethical issues have become increasingly prominent. However, existing research often lacks systematic approaches and fails to explore cognitive differences thoroughly among healthcare professionals across regions, professions, and departments. To address this gap, this study systematically retrieved 19 qualitative studies from Embase, PubMed, and Web of Science databases. Quality was assessed using the JBI-QARI tool, and data were analyzed through thematic analysis, encompassing healthcare professionals from diverse backgrounds. Findings reveal that while AI enhances diagnostic accuracy and optimizes resource allocation, it also triggers ethical dilemmas such as algorithmic bias, data privacy breaches, and ambiguous accountability. Furthermore, cultural, resource, and policy disparities across regions significantly influence healthcare professionals’ perceptions, while differing professional roles and departmental responsibilities lead to distinct ethical priorities. Thus, AI applications in healthcare face multidimensional ethical challenges that disrupt practitioners’ workflows while profoundly impacting patient rights protection and healthcare system operations. Future efforts must develop systematic solutions across technological R&D, responsibility allocation, data security, and personnel training to balance innovation with ethics and advance sustainable AI-driven healthcare.

Keywords

Introduction

The application of artificial intelligence (AI) in healthcare has experienced rapid development over the past 50 years. Particularly with innovations in machine learning and deep learning technologies, the boundaries of AI applications have continuously expanded—from traditional medical algorithms to critical areas such as disease diagnosis prediction and treatment response evaluation. 1 Currently, AI implementation in medical settings primarily covers auxiliary screening, telemedicine, and tiered diagnosis and treatment systems. For instance, in May 2023, Belong.Life launched Dave, the world’s first conversational AI designed for cancer patients, providing 24/7 2 uninterrupted doctor-patient communication services. That same year, the National Institute of Health and Medical Big Data released “Hua Tuo GPT,” focusing on consultation support, patient education, and medical triage functions. 3 Furthermore, Wang et al. 4 highlighted AI’s significant role in assisting diagnosis and treatment for ophthalmic diseases like diabetic retinopathy and glaucoma. Alibaba’s DAMO Academy is also exploring generative AI’s potential in diagnosing corneal diseases. As medical AI technology evolves, the ethical controversies it raises have garnered increasing attention from academia and society. The Italian government temporarily banned ChatGPT due to privacy protection concerns. 5 Against this backdrop, AI medical ethics research has become a critical topic in both academic and policy spheres. From a theoretical framework perspective, international consensus has emerged around systems like Bartenschlager et al.’s six-principle AI ethics framework 6 (accountability, autonomy, diversity, etc.). The World Health Organization 7 has also published the Ethical and Governance Guidance for Health Artificial Intelligence, establishing six core ethical principles, including protecting autonomy and promoting public interest.

In terms of policies and regulations, countries are actively exploring AI medical ethics supervision paths suited to their national conditions. The United States passed the 21st Century Cures Act in 2016 to promote the sharing of medical data and the application of AI technology. 8 The Act emphasizes patient privacy protection and data security, requiring AI medical systems to follow strict compliance standards during development and use. The European Union’s Artificial Intelligence Act 9 implements a risk-based management approach for AI medical applications. For high-risk AI medical devices, such as AI systems used for disease diagnosis, it mandates rigorous standards of transparency, explainability, and security to safeguard patient rights. Japan has established the Principles for AI R&D, 10 focusing on the safety, reliability, and positive impact of medical AI, and encourages industry-academia collaboration to promote innovation in AI medical technology and the improvement of ethical norms. China is also accelerating the construction of an AI medical ethics supervision system. Policy documents such as the New Generation AI Ethics Norm 11 and the Reference Guidelines for AI Application Scenarios in the Health Industry 12 gradually build a localized AI medical ethics supervision framework based on aspects like relevant concepts, application scenarios, data management, and algorithm norms.

Existing studies have approached the issue from the perspective of technical ethics. Ma et al. 13 stress the explainability and accuracy of AI algorithms in pancreatic-cancer diagnosis and treatment; British scholars Winter and Carusi 14 argue that the dominant role of healthcare professionals in AI applications must be safeguarded; and research in pediatric oncology 15 calls for respect for data privacy and trustworthy-AI principles. Nevertheless, this body of work still displays pronounced limitations. First, there is a lack of systematic inquiry into an ethical-theoretical framework for AI in medicine: most contributions remain scattered pronouncements that have not coalesced into a coherent theoretical system. Second, citations are fragmented, so the evolutionary thread of the field is blurred and the overall intellectual trajectory is hard to discern. Most crucially, almost no study has probed how perceptions of and attitudes toward AI medical ethics differ across regions (whose cultural backgrounds, medical-resource distributions, and policy environments vary), occupations (doctors, nurses, medical technicians, etc., whose roles and responsibilities in AI workflows differ and whose ethical foci therefore diverge), and clinical departments (surgery, internal medicine, radiology, etc., whose distinct service profiles and AI application scenarios generate different ethical challenges). Current research seldom disaggregates discussion along these dimensions, relies heavily on quantitative analysis, and lacks any systematic synthesis of qualitative work, so the authentic thoughts, inner concerns, and value judgments of clinicians confronting AI-driven ethical dilemmas remain unexplored.

Given this, this study aims to address the aforementioned critical gap: by systematically reviewing qualitative research on AI medical ethics from the past decade both domestically and internationally, it conducts a categorized discussion among medical professionals across different regions, professions, and departments. The study reviews their perceptions and attitudes toward AI medical ethics issues, analyzes the underlying psychological mechanisms and influencing factors, and provides in-depth empirical evidence and policy recommendations for refining the ethical governance system and promoting the sustainable development of AI in healthcare. This study is registered on the PROSPERO website with registration number CRD420251004125.

Methods

Search strategy

This study searched three databases: Embase, PubMed, and Web of Science. A three-step retrieval strategy was adopted. First, a preliminary limited search was conducted in PubMed, analyzing text words in titles and abstracts alongside index terms used to describe articles. Subsequently, a second broad search was performed using all identified keywords and index terms. Finally, reference lists of retrieved articles were manually searched to identify any additional studies not initially included. The retrieval query for Web of Science is exemplified below:

1: ((TS = (AI)) OR TS = (ChatGPT)) OR TS = (algorithm) and Preprint Citation Index (Exclude – Databases).

2: (TS = (ethic*)) OR TS = (moral) and Preprint Citation Index (Exclude – Databases).

3: (TS = (healthcare)) OR TS = (medical) and Preprint Citation Index (Exclude – Databases).

4: #3 AND #2 AND #1 and Preprint Citation Index.
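The way the example query combines its three concept groups can be sketched in a few lines of Python. This is only an illustration of the Boolean structure (synonyms joined with OR, concept groups joined with AND). The helper names `or_block` and `build_query` are hypothetical, and the sketch leaves out the Preprint Citation Index exclusion, which is applied as a database filter rather than as a search term.

```python
# Hypothetical helpers illustrating the Boolean structure of the search;
# the term lists mirror the three concept groups in the example query.
CONCEPT_GROUPS = [
    ["AI", "ChatGPT", "algorithm"],  # technology terms
    ["ethic*", "moral"],             # ethics terms
    ["healthcare", "medical"],       # setting terms
]


def or_block(terms, field="TS"):
    """Join the synonyms for one concept with OR, each wrapped in a field tag."""
    return " OR ".join(f"{field}=({term})" for term in terms)


def build_query(groups, field="TS"):
    """AND the OR-blocks together so a record must match every concept."""
    return " AND ".join(f"({or_block(group, field)})" for group in groups)


if __name__ == "__main__":
    print(build_query(CONCEPT_GROUPS))
```

Keeping each concept group as a separate list makes it straightforward to add synonyms identified during the preliminary search without restructuring the query.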

Only studies published in English or Chinese were included. Publication years were within 5 years of the study’s commencement.

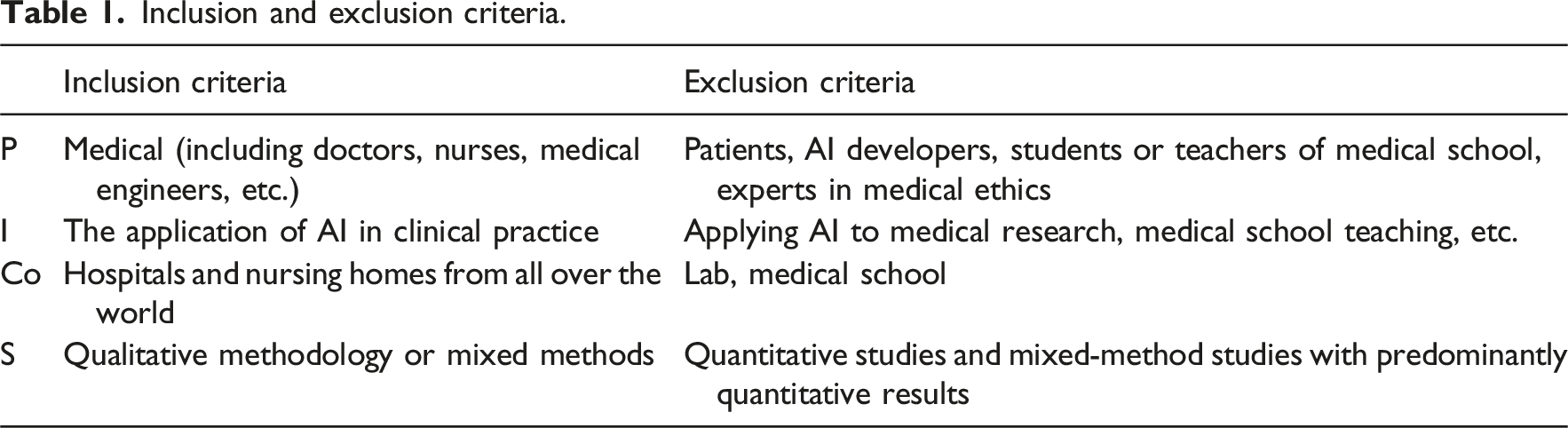

Inclusion and exclusion criteria

Inclusion and exclusion criteria.

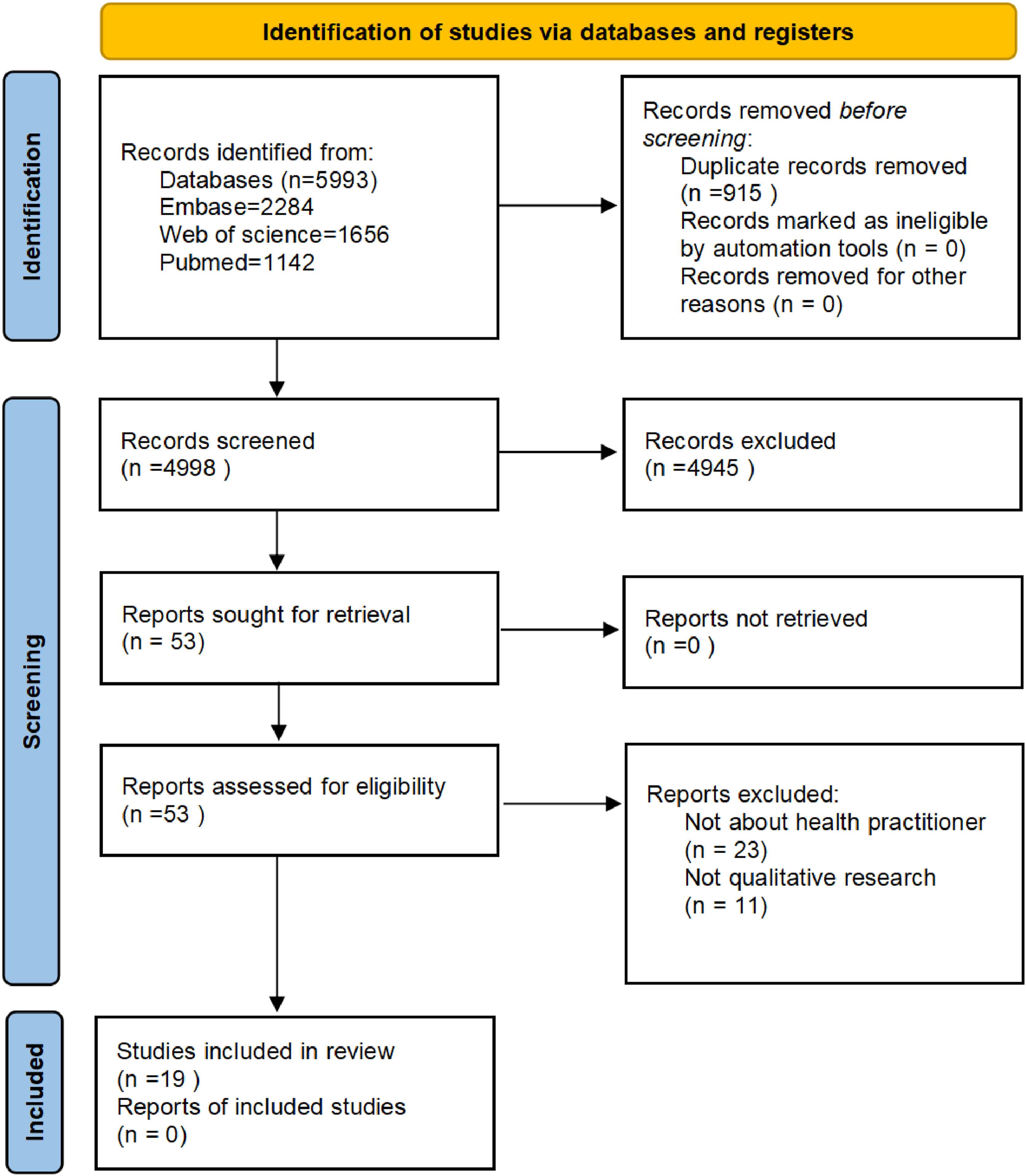

Literature screening PRISMA flow diagram.

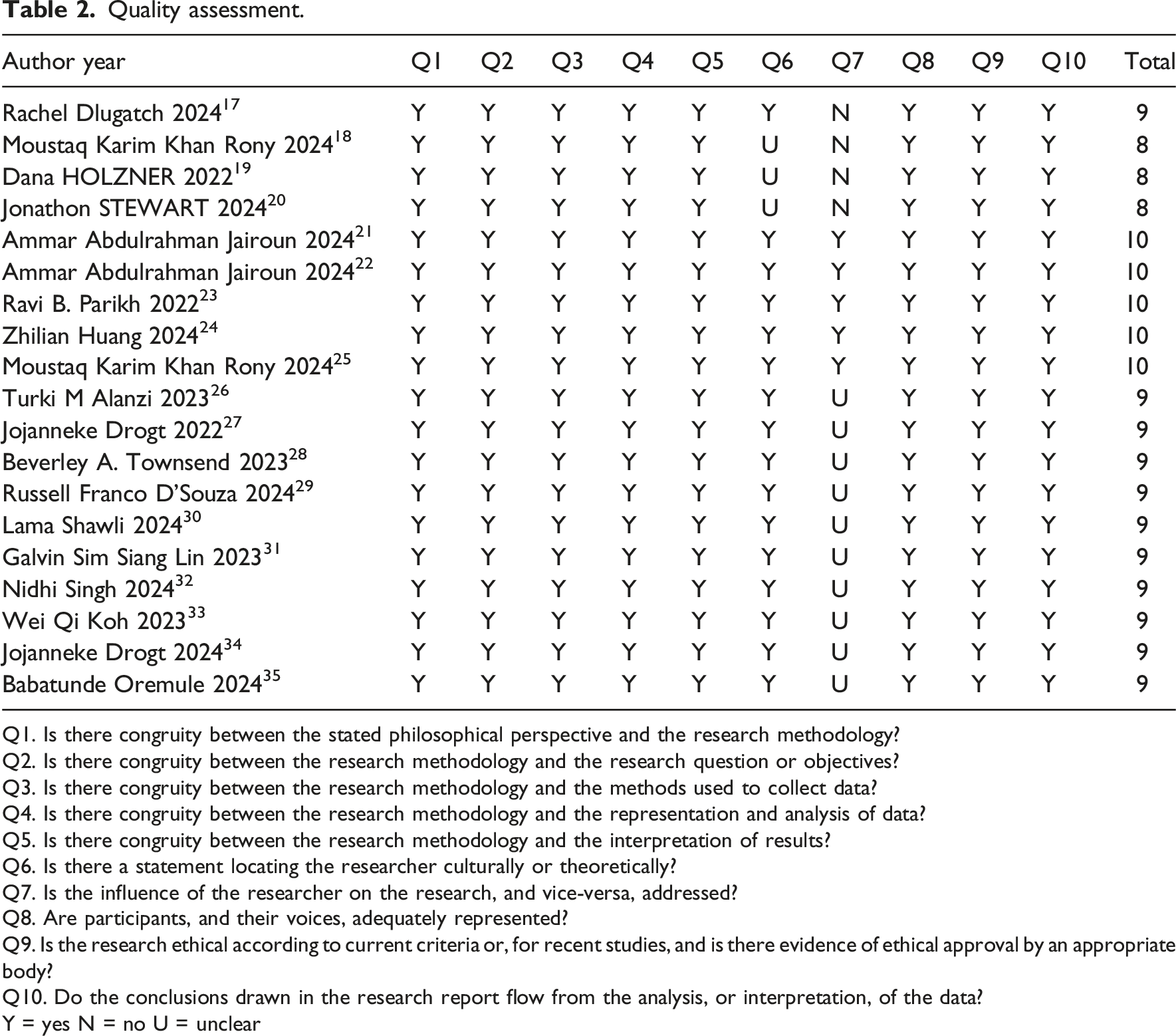

Quality assessment

Quality assessment.

Q1. Is there congruity between the stated philosophical perspective and the research methodology?

Q2. Is there congruity between the research methodology and the research question or objectives?

Q3. Is there congruity between the research methodology and the methods used to collect data?

Q4. Is there congruity between the research methodology and the representation and analysis of data?

Q5. Is there congruity between the research methodology and the interpretation of results?

Q6. Is there a statement locating the researcher culturally or theoretically?

Q7. Is the influence of the researcher on the research, and vice-versa, addressed?

Q8. Are participants, and their voices, adequately represented?

Q9. Is the research ethical according to current criteria or, for recent studies, and is there evidence of ethical approval by an appropriate body?

Q10. Do the conclusions drawn in the research report flow from the analysis, or interpretation, of the data?

Y = yes; N = no; U = unclear.
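As a minimal sketch of how the ten-item appraisal could be tallied for a single study, the snippet below counts Y, N, and U ratings and reports the share of items rated "yes". The function name `summarize_appraisal` and the example ratings are hypothetical placeholders, not data from the included studies, and the sketch assumes no particular cut-off for inclusion.

```python
from collections import Counter

# The ten checklist items listed above (Q1 to Q10).
ITEMS = [f"Q{i}" for i in range(1, 11)]
VALID_RATINGS = {"Y", "N", "U"}


def summarize_appraisal(ratings):
    """Count Y/N/U ratings for one study and compute the percentage of 'Y'."""
    if len(ratings) != len(ITEMS):
        raise ValueError(f"expected {len(ITEMS)} ratings, got {len(ratings)}")
    invalid = set(ratings) - VALID_RATINGS
    if invalid:
        raise ValueError(f"unexpected rating codes: {sorted(invalid)}")
    counts = Counter(ratings)
    return {
        "Y": counts["Y"],
        "N": counts["N"],
        "U": counts["U"],
        "pct_yes": 100 * counts["Y"] / len(ITEMS),
    }


if __name__ == "__main__":
    # Hypothetical ratings for one study, in Q1..Q10 order.
    example = list("YYYYYUNYYY")
    print(summarize_appraisal(example))
```

A per-study summary of this kind only condenses the ratings table; the item-level ratings remain the primary record of appraisal.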

Data extraction and synthesis

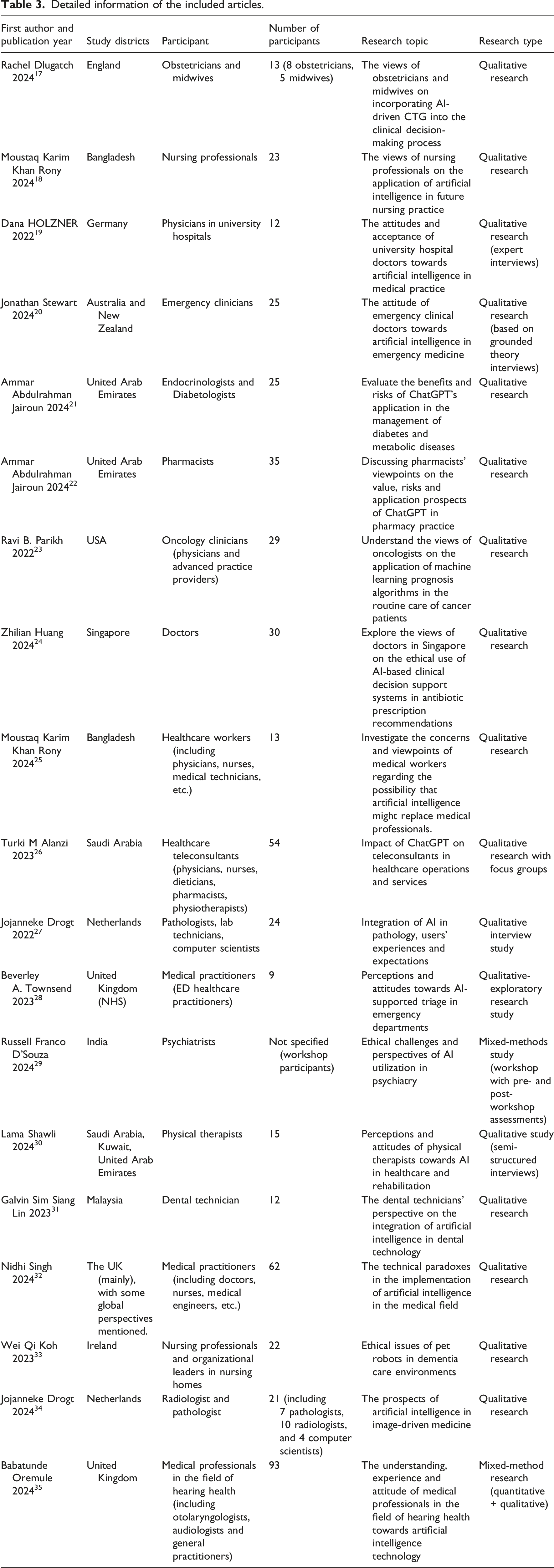

Detailed information of the included articles.

Results

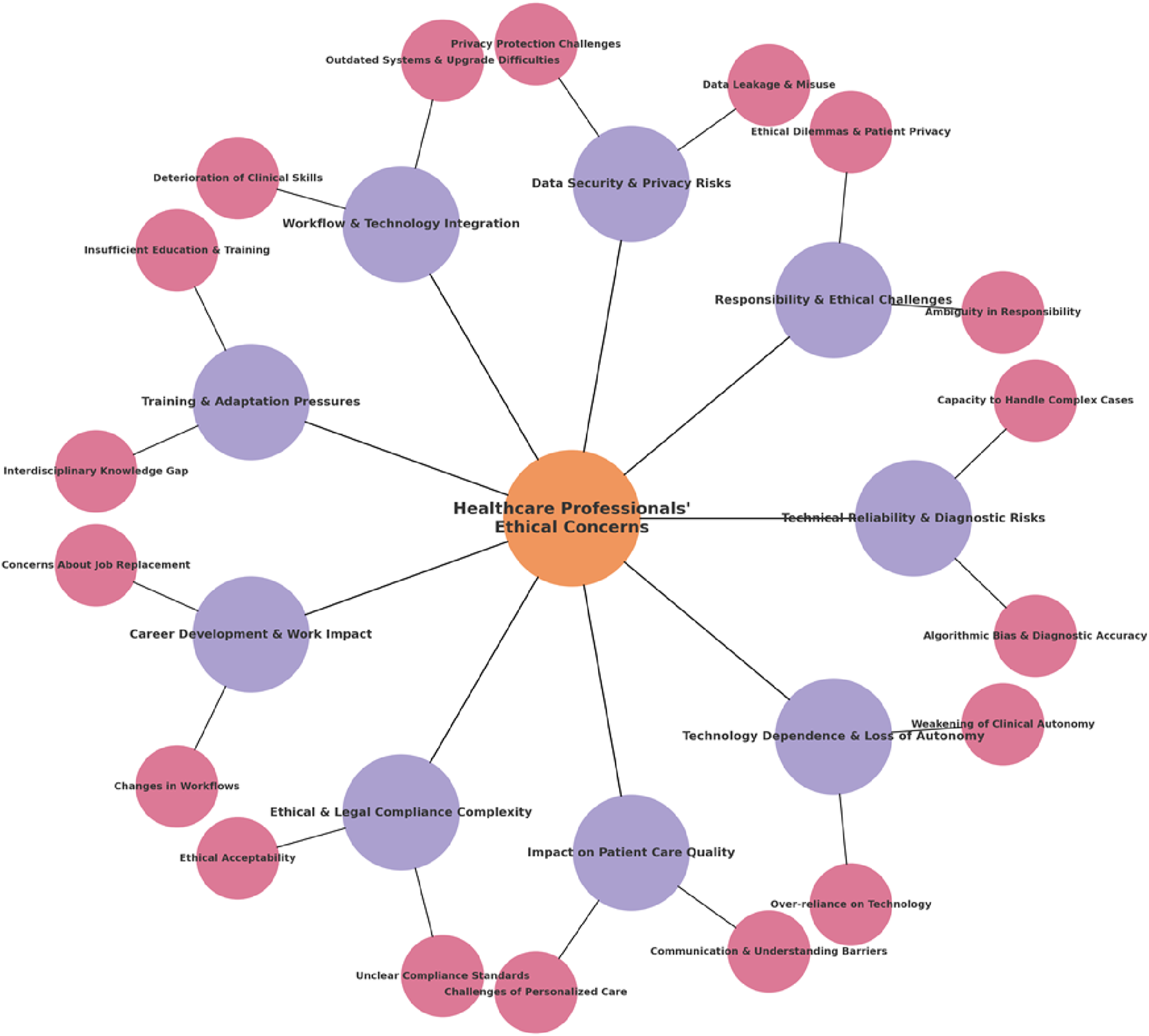

This paper provides a systematic review and qualitative synthesis of ethical issues surrounding the application of artificial intelligence in healthcare. A total of 19 qualitative studies were included, yielding findings categorized into 18 themes. These were further synthesized into nine key themes (see Figure 2), elaborated as follows.

Result summary diagram.

Technical reliability and diagnostic risks

Algorithmic bias and diagnostic accuracy

Healthcare professionals maintain a high degree of caution regarding algorithmic bias and diagnostic accuracy in AI systems. The diagnostic precision of AI systems relies on the accuracy of their algorithms and the integrity of their data. However, current AI technology still faces limitations when handling complex cases. In clinical practice, accurate diagnosis forms the foundation for developing effective treatment plans, and any diagnostic error may have severe consequences for patient health. Algorithmic bias in AI systems may lead to misdiagnosis of specific populations or diseases, further heightening healthcare professionals’ concerns about diagnostic reliability. Additionally, AI systems remain inadequate in comprehensively analyzing individual patient differences, psychological states, and social contexts, making it difficult to fully replace the professional judgment of healthcare providers.

“Regardless of the technology introduced, our judgment ultimately determines the patient’s treatment plan, and we must bear that responsibility.” (Obstetrician 17 ).

“AI may have shortcomings in diagnosing critical illnesses, and it struggles to comprehensively consider multiple factors in complex cases.” (Healthcare Professional 28 ).

Handling complex cases

The limitations of AI in handling complex cases are also a major concern for healthcare professionals. While AI systems can provide analysis based on big data, they still fall short in considering individual patient differences, psychological states, and social backgrounds. Personalized care is a core principle of modern medicine, requiring healthcare providers to develop tailored treatment plans based on each patient’s specific circumstances. Inadequate AI capabilities in managing complex cases may lead to misjudgments, thereby impacting treatment outcomes and patient prognosis.

“AI remains limited in offering personalized care recommendations and cannot fully replace clinical judgment.” (Pharmacist 22 ).

“The reliability of AI in medical decision-making requires further validation.” (Healthcare Professional 32 ).

Responsibility attribution and ethical challenges

Ambiguous responsibility

Ambiguous responsibility attribution presents another major challenge for healthcare professionals. In clinical practice, responsibility for medical decisions typically rests with healthcare providers. However, the introduction of AI technology complicates this attribution: developers of AI systems, healthcare institutions, and medical staff may all influence the final medical decision when AI is applied. Current laws, regulations, and ethical guidelines lack explicit provisions regarding responsibility allocation for AI technology in healthcare. This exposes healthcare professionals to potential legal risks when using AI, increasing their psychological burden. While transparent decision-making processes could clarify responsibility, the complexity and technical nature of AI systems make full transparency difficult in practical applications, further exacerbating the ambiguity of responsibility.

“If an AI recommendation leads to adverse outcomes, should responsibility lie with the developer, the healthcare institution, or the attending physician? There is currently no clear definition.” (Physician 19 ).

“The decision-making process of AI systems must be transparent to enable clear accountability in the event of legal disputes.” (Physician 24 ).

Ethical dilemmas and patient privacy

Ethically, patient privacy and data protection emerge as central concerns. The application of AI technology involves the collection, storage, and analysis of vast amounts of patient data, which contains personal privacy and sensitive information. Data breaches pose serious threats to patient privacy and security. Simultaneously, ethical dilemmas in AI applications, such as algorithmic bias, may exacerbate health inequalities. Furthermore, the use of AI technology may raise other ethical concerns, including informed consent and patient autonomy. When using AI, healthcare professionals must balance technological implementation with ethical principles to fully protect patients’ rights.

“Ensuring patient privacy and data security is paramount; any negligence could lead to the leakage of sensitive patient information.” (Psychiatrist 29 ).

“Ethical dilemmas in AI applications, such as algorithmic bias, may exacerbate health inequalities.” (Pharmacist 22 ).

Data security and privacy risks

Data breaches and misuse

Data security and privacy breaches are widespread concerns among healthcare professionals. In clinical practice, AI systems typically require integration of data from diverse sources, such as electronic health records, imaging results, and laboratory test outcomes. This consolidation and sharing of data heightens the risk of data breaches. Should a breach occur, patient information may be exploited for illicit purposes, including fraud and insurance scams. Additionally, researchers noted that healthcare professionals express concerns about data privacy and security within AI technologies. To ensure data security and privacy, effective technical and administrative measures are required, including encryption, access controls, and data anonymization.

“Data vulnerabilities in AI systems may lead to misuse of patient information, with heightened risks during multi-source data integration.” (Emergency Physician 20 ).

“Healthcare professionals express concerns about data privacy and security issues in AI technology.” (Healthcare Practitioner 35 ).

Privacy protection challenges

Privacy protection challenges in data sharing and algorithm training were also frequently mentioned. In AI applications, data sharing is crucial for algorithm training and optimization, yet it simultaneously poses privacy protection challenges. Striking a balance between data sharing and privacy protection is an urgent issue requiring resolution. Clinical engineers noted that data privacy and confidentiality are difficult to guarantee, and that controlling who has access to which data is challenging. Dental technicians expressed similar concerns, stating that keeping patient data secure during AI system use is problematic.

“Data privacy confidentiality is difficult to ensure. Who has access to what data? The situation is difficult to control.” (Clinical Engineer 32 ).

“When using AI systems, it’s difficult to guarantee patient data won’t be leaked.” (Dental Technician 31 ).

Workflow and technology integration

Outdated systems and upgrade challenges

The introduction of AI technology poses significant challenges to existing workflows. In many healthcare institutions, current information systems have been in use for years and face compatibility issues with modern AI technologies. Integrating AI requires extensive upgrades and modifications to existing hardware and software, demanding substantial financial investment and overcoming technical hurdles. Additionally, researchers note that integrating AI with existing healthcare systems faces numerous technical hurdles, including system compatibility, data integration, and user interface usability. Achieving effective AI integration necessitates collaborative efforts among healthcare institutions, technology developers, and government agencies to resolve these integration challenges.

“Hospital systems were mostly developed 20 years ago, making AI integration require extensive hardware and software upgrades, which is highly challenging in practice.” (Emergency Physician 20 ).

“Integrating AI technology with existing healthcare systems presents numerous technical challenges.” (Healthcare Professional 34 ).

Deterioration of clinical skills

Healthcare workers express concern that overreliance on AI could lead to a decline in clinical skills. The automation capabilities of AI technology may reduce direct patient interaction among healthcare providers, thereby hindering the development of clinical competencies. Simultaneously, nurses noted the need for continuous learning and adaptation to new technologies to maintain clinical competitiveness. Healthcare institutions must provide corresponding training and support to help staff adapt to new technologies and enhance clinical competencies.

“Automated prognosis information may reduce my direct interaction with patients, potentially affecting the maintenance of clinical skills.” (Physician 23 ).

“We need to continuously learn and adapt to new technologies to maintain clinical competitiveness.” (Nurse 25 ).

Training and adapting to pressure

Insufficient education and training

Healthcare professionals widely believe that the current education system fails to adequately prepare them for the challenges posed by AI. The rapid advancement of AI technology demands higher standards of expertise and skills from medical practitioners. However, existing educational frameworks fall short in providing AI-related training, leaving healthcare workers struggling when utilizing AI technologies. Additionally, researchers emphasize that medical professionals require interdisciplinary training to adapt to evolving AI developments. AI technology spans multiple disciplines, including computer science, statistics, and medicine. Healthcare professionals require interdisciplinary knowledge and skills to better comprehend and apply AI technologies. Therefore, enhancing education and training in AI-related knowledge is essential to elevate the professional competence of healthcare personnel.

“We need more training on AI to better apply it in our daily work.” (Nurse 18 ).

“Healthcare professionals need interdisciplinary training to adapt to the development of AI technology.” (Healthcare Professional 27 ).

Interdisciplinary knowledge gap

Beyond insufficient training, healthcare professionals also face an interdisciplinary knowledge gap. Applying AI requires not only medical expertise but also understanding of computer science, data analysis, and related fields. However, current healthcare professionals exhibit significant deficiencies in interdisciplinary knowledge. Physical therapists noted that AI implementation in healthcare demands interdisciplinary collaboration, yet most professionals currently lack this knowledge. Researchers caution that healthcare professionals must continuously upgrade their skills to keep pace with AI’s rapid advancement. Healthcare institutions and educational bodies must also provide corresponding support and resources to help bridge this interdisciplinary knowledge gap.

“The application of AI in healthcare requires interdisciplinary collaboration, yet healthcare professionals currently lack such knowledge across the board.” (Physical Therapist 30 ).

“Healthcare professionals need to continuously upgrade their skills to adapt to the rapid development of AI technology.” (Healthcare Practitioner 26 ).

Career development and work impact

Job replacement concerns

The growing adoption of AI technology has sparked widespread concern among healthcare professionals about their career futures. As AI applications continue to expand, automated systems may replace certain workflows and tasks in the medical field. This has led healthcare workers to worry about job displacement and threats to their career advancement. Researchers also note that AI could reshape the employment structure within the healthcare industry, with some positions facing the risk of obsolescence. Healthcare professionals must stay informed about AI trends, proactively plan their careers, and enhance their irreplaceability.

“I’ve been a nurse for 15 years, and the thought of AI taking over some tasks terrifies me.” (Nurse 25 ).

“AI technology may reshape the healthcare industry’s employment structure, with certain roles potentially facing replacement risks.” (Healthcare Professional 33 ).

Workflow changes

AI’s impact on workflows also introduces uncertainty. The integration of AI technology will alter healthcare workers’ tasks and responsibilities, with some duties potentially handled by AI systems. Healthcare professionals need to adjust their roles and duties accordingly. Doctors also emphasized that AI implementation requires medical staff to redefine their roles and responsibilities. Healthcare institutions must provide corresponding support and training to facilitate a smooth transition to new work models.

“AI technology may alter how healthcare professionals work, requiring them to adapt to new work patterns.” (Healthcare Professional 34 ).

“The application of AI technology requires healthcare professionals to redefine their roles and responsibilities.” (Physician 23 ).

Complexities of ethical and legal compliance

Ethical acceptability

Ethical acceptability is a critical factor in the clinical application of AI technology. Patients’ acceptance of AI directly impacts its effectiveness in clinical practice. Healthcare providers must fully consider patients’ rights to informed consent and autonomy when using AI, ensuring their interests are adequately protected. Researchers also note that healthcare professionals’ ethical acceptance of AI is a key determinant of its application outcomes. Physical therapists discussing AI applications in rehabilitation medicine emphasized that AI must fully consider patient informed consent and ethical acceptance when providing personalized treatment plans. Therefore, strengthening ethics education to enhance ethical awareness among healthcare providers and patients is essential.

“When providing personalized treatment plans, AI must fully consider patient informed consent and ethical acceptance.” (Physical Therapist 30 ).

“Healthcare professionals’ ethical acceptance of AI technology is a key factor influencing its application effectiveness.” (Healthcare Practitioner 35 ).

Unclear compliance standards

Beyond ethical acceptance, unclear compliance standards further complicate clinical implementation. Currently, AI technology in healthcare remains in its developmental phase, with related laws, regulations, and ethical guidelines still incomplete. This exposes healthcare providers to potential legal risks when utilizing AI technology. Researchers emphasize that clear compliance standards are essential for ensuring the safety and efficacy of AI in healthcare. Healthcare professionals must remain vigilant about ethical and legal compliance when utilizing AI technologies. Therefore, strengthening the development of laws, regulations, and ethical guidelines is critical for guaranteeing that AI applications meet ethical and legal requirements.

“The application of AI technology in healthcare requires clear compliance standards to ensure its safety and effectiveness.” (Healthcare Professional 33 ).

“Healthcare professionals must remain vigilant about ethical and legal compliance issues when using AI technology.” (Healthcare Professional 26 ).

Impact on patient care quality

Challenges in personalized care

The application of AI in personalized care faces numerous challenges. Personalized care is a core principle in modern healthcare, requiring medical professionals to develop tailored treatment plans based on each patient’s specific circumstances. While AI systems can provide analysis results based on big data, they still fall short in considering individual patient differences, psychological states, and social backgrounds. Researchers emphasize that AI technology in healthcare must fully account for patients’ unique variations and needs. Therefore, AI technology in personalized care requires further optimization and refinement to better meet patients’ individualized needs.

“AI cannot fully replace the professional judgment of healthcare providers, especially in personalized care.” (Pharmacist 22 ).

“The application of AI technology in healthcare must fully consider patients’ individual differences and needs.” (Dental Technician 31 ).

Communication and understanding barriers

The application of AI technology may impact communication and understanding between doctors and patients. Effective communication is a crucial component of the medical process, fostering trust and enhancing patient satisfaction. Overreliance on AI technology may reduce direct interaction between healthcare providers and patients, negatively impacting the patient experience. Psychiatrists also note that AI technology cannot fully replace the humanistic care and communication between healthcare professionals and patients. Therefore, while utilizing AI technology, healthcare providers must maintain direct communication and compassionate care to improve the patient experience.

“Patients expect face-to-face interaction; overreliance on AI conversations may make them feel neglected.” (Nurse 18 ).

“AI technology cannot fully replace the human touch and communication between healthcare providers and patients.” (Psychiatrist 29 ).

Technological dependency and loss of autonomy

Overreliance on technology

Healthcare professionals express concern that excessive reliance on AI technology may lead to the atrophy of clinical skills. The automated functions of AI technology could diminish healthcare workers’ analysis and critical thinking regarding clinical data, resulting in the deterioration of clinical competencies. Simultaneously, researchers caution that overdependence on AI technology may cause healthcare professionals to lose their autonomy. Healthcare workers must maintain the capacity for independent thinking and autonomous decision-making when utilizing AI technology to avoid excessive reliance on it.

“Automated tools may cause healthcare professionals to neglect in-depth analysis and critical thinking of clinical data.” (Physician 23 ).

“Overreliance on AI technology may lead to healthcare professionals losing their autonomy.” (Healthcare Practitioner 28 ).

Weakening of clinical autonomy

The application of AI technology may erode healthcare professionals’ clinical autonomy. While AI system recommendations hold some reference value, healthcare professionals must make final decisions based on their professional judgment and clinical experience. Researchers noted that AI system recommendations may influence healthcare professionals’ decision-making processes, thereby weakening their clinical autonomy. Therefore, healthcare professionals must maintain clinical autonomy when using AI technology to ensure the scientific rigor and rationality of medical decisions.

“Recommendations from AI systems may influence healthcare professionals’ decision-making processes, thereby diminishing their clinical autonomy.” (Healthcare Professional 19 ).

“The application of AI technology in healthcare requires balancing the relationship between technology and healthcare professionals’ expert judgment.” (Surgeon 27 ).

Discussion

This study systematically reviewed 19 qualitative studies to analyze the ethical dilemmas and challenges associated with artificial intelligence (AI) applications in healthcare. Findings reveal that AI implementation in healthcare involves complex issues across multiple dimensions, including technological reliability, accountability, data security, workflow integration, training and adaptation pressures, career development, ethical and legal compliance, patient care quality, and technological dependency. These issues not only significantly hinder the adoption and application of AI technologies in healthcare but also pose profound challenges to healthcare practitioners’ professional practice models, patient rights protection mechanisms, and the operational framework of the entire healthcare system.

Multidimensional analysis of healthcare professionals’ ethical concerns regarding AI application in clinical practice

Morley et al. 36 systematically categorized ethical issues surrounding AI in healthcare, identifying three primary dimensions: epistemic, normative, and traceability. Epistemic concerns primarily manifest as insufficient algorithmic explainability, unreliable evidence support, and lack of transparency in clinical decision-making. The normative dimension involves potential algorithmic bias and discrimination, fairness issues arising from technology deployment, and challenges to medical professional ethics, while traceability concerns center on decision responsibility attribution, fault attribution for errors, and the application of technology-related laws. Farhud et al. 37 further emphasize that patient privacy protection and data security constitute core ethical issues in AI healthcare applications. The unique nature of medical data dictates its high sensitivity and critical importance, necessitating rigorous protective mechanisms throughout the entire process of data collection, storage, processing, and sharing.

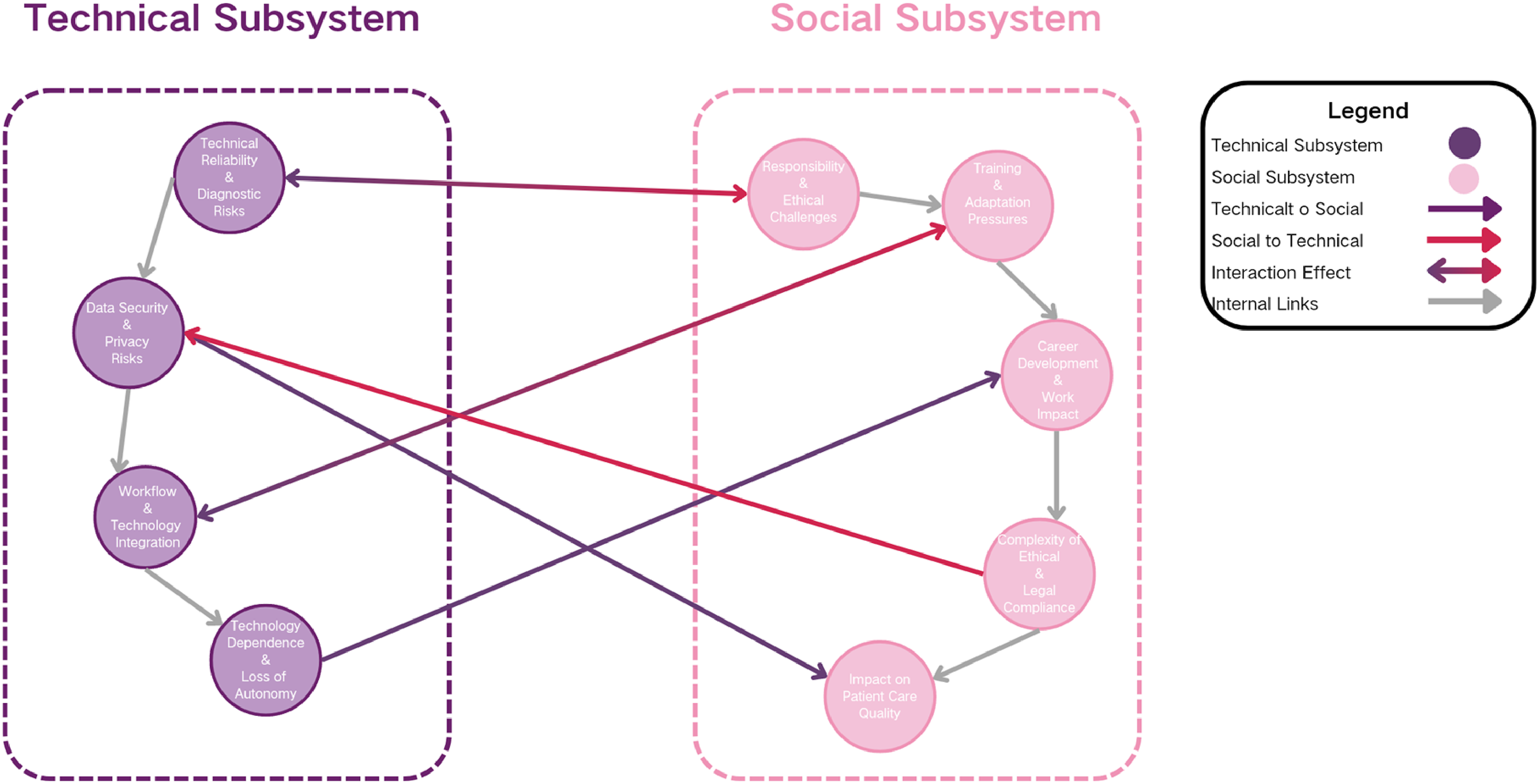

Based on sociotechnical systems (STS) theory, the nine ethical issues identified by this study can be further categorized into two levels: the technical subsystem and the social subsystem (see Figure 3). The technical subsystem encompasses technological reliability and diagnostic risks, data security and privacy risks, workflow and technology integration, and technological dependency and loss of autonomy. These themes primarily concern the performance boundaries of AI systems themselves, their data foundations and infrastructure, and how they technically couple with existing medical processes. The social subsystem encompasses responsibility attribution and ethical challenges, training and adaptation pressures, career development and work impacts, complexities of ethical and legal compliance, and impact on patient care quality. These factors collectively reflect the institutional responses, professional adjustments, and value-based tensions exhibited by healthcare organizations, medical personnel, and patient groups when confronting technological embedding.

Diagram illustrating the inter-influential relationships among themes based on sociotechnical systems theory.

According to STS, the technical and social subsystems are not isolated but form complex ethical phenomena through dynamic interactions. 38 Limitations within the technical subsystem, such as AI instability in complex cases or algorithmic bias, spill over into the social subsystem. This blurs accountability boundaries between clinicians and developers, potentially creating bias and discrimination while triggering legal and ethical gray areas. When compliance standards are unclear or ethical acceptance is insufficient, this uncertainty is amplified, undermining trust and adoption within the social subsystem. Obermeyer et al.'s Science study reported that AI cardiovascular disease diagnostic models trained on U.S. Medicare data exhibited a 13.2% higher miss rate for African American patients than for Caucasian patients because minority samples were underrepresented. This flaw not only exposes AI’s limitations in handling complex cases, where decision biases are pronounced in data-scarce scenarios such as rare diseases or multiple comorbidities, but also directly triggers responsibility attribution and ethical challenges: algorithm developers evade accountability by invoking “technological neutrality,” healthcare institutions struggle to pinpoint error sources because of “algorithmic black boxes,” and clinicians face the dilemma of “liability for algorithmic reliance versus algorithmic failure,” creating a strong correlation between “technical reliability defects” and “ambiguous responsibility boundaries.” While data security and privacy protections aim to safeguard patient rights, stringent restrictions may lead to scarce training data and impaired model generalization capabilities, thereby diminishing the feasibility of personalized care and increasing distrust in doctor-patient communication. 39

Simultaneously, the social subsystem exerts feedback pressure on the technical subsystem: lagging ethical and legal frameworks constrain AI deployment and iteration, creating an “innovation-compliance” tension; healthcare professionals who view AI as a job replacement develop emotional resistance, hindering deep integration with clinical workflows; 40 inadequate interdisciplinary training may lead to over-reliance on AI, increasing diagnostic risks, 41 while insufficient training also impedes clinical AI adoption. 42 These feedback effects alter technological efficacy and shape the risk boundaries of the technical subsystem.

Within the technical subsystem, there is an inherent structural conflict: data security and privacy protection emphasize risk prevention and ethical legitimacy, whereas technical reliability pursues optimal performance and diagnostic accuracy, making the two goals difficult to reconcile. 43 Although highly standardized workflows boost efficiency, over time they may erode clinicians’ skill accumulation, leading to a loss of professional autonomy and heightened dependence on AI; this dependence, in turn, weakens the system’s long-term resilience, especially when AI coverage fails or an emergency exceeds the model’s training experience, amplifying risks. 44 The social subsystem also contains pronounced tensions: unclear accountability interacts with career-development anxiety; when medical errors are attributed to clinicians, legal exposure and job insecurity rise, fostering resistance to AI systems. 45 While unified ethical and legal compliance sets an industry baseline, excessive standardization suppresses individualized reasoning and differentiated communication, creating a clash between “institutional legitimacy” and “personal care,” a conflict that is continuously amplified through interactions among organizational culture, professional identity, and patient expectations. 46

Differences in healthcare professionals’ ethical concerns regarding AI applications in clinical practice across cultural contexts

Cultural contexts, institutional environments, and technological development levels across regions significantly influence healthcare professionals’ perceptions of AI applications in medicine. In Singapore, physicians demonstrate heightened concern regarding the ethical use of AI-assisted antibiotic prescribing systems, particularly emphasizing algorithm transparency, explainability, and data security—likely reflecting the nation’s stringent data protection regulations and healthcare oversight frameworks. Emergency physicians in Australia and New Zealand maintain a cautiously optimistic stance toward AI applications. While acknowledging its potential, they express concerns about technological limitations and ethical issues, reflecting the relative lag in establishing ethical norms for medical AI within the Oceania region. Physical therapists in the Gulf region (Saudi Arabia, Kuwait, and the UAE) prioritize AI reliability and privacy concerns, closely tied to the region’s emphasis on data sovereignty and cultural sensitivity. European healthcare professionals exhibit distinctly regulatory-driven concerns regarding AI implementation. In fields like medical image analysis and dementia care, European physicians commonly raise issues of algorithmic bias, data privacy, and technological reliability. This concern may be linked to the EU’s stringent data protection regulations (e.g., GDPR) and the ethical requirements of the AI Act. Across multi-country studies, despite undefined geographic boundaries, healthcare professionals universally express concerns about AI potentially replacing professional skills and raising ethical dilemmas. This coexistence of regional differences and universal themes reveals how cultural contexts and institutional environments shape perceptions of technology, indicating that AI medical applications require localized ethical adaptation mechanisms.

Differences in ethical concerns regarding AI application in clinical practice among medical staff across departments

Significant variations in operational characteristics and technical application scenarios across different medical departments result in discipline-specific concerns among healthcare professionals regarding AI. Diagnostic departments (e.g., radiology, pathology) prioritize accuracy and reliability in AI-driven image analysis and diagnostic support while remaining vigilant about algorithmic bias and data privacy, as diagnostic outcomes directly influence patient treatment plans and prognosis. Therapeutic departments (e.g., oncology, endocrinology) focus more on ethical risks in AI-based prognosis prediction and personalized treatment, fearing potential impacts on the precision of treatment decisions and patient safety. Healthcare professionals in rehabilitation and nursing departments express concerns about the limitations of AI technology in designing personalized treatment plans and supporting emergency decision-making, as these scenarios demand high levels of individualization and immediacy. These differentiated concerns underscore the necessity of comprehensively considering technical precision, ethical standards, and clinical needs in medical AI applications to ensure their safe and effective implementation across different departments.

Variations in ethical concerns about AI use in clinical practice across healthcare professions

Because roles and expertise differ, healthcare professionals express markedly divergent worries about AI. Physicians, as primary diagnostic and therapeutic decision-makers, overwhelmingly focus on AI’s accuracy, explainability, and ethical implications; this wariness is essentially a struggle over who controls medical decisions, exposing the latent conflict between professional authority and technological empowerment. 47 Nurses, who deliver most bedside care, fear not only technical limitations but also the erosion of nursing’s professional value. Their work hinges on humanistic concern and emotional support, so they worry that AI could strip care of its human dimension and undermine the irreplaceability of their role. 48 In dementia care, nursing staff and organizational leaders give special weight to the privacy, autonomy, and technical reliability of companion robots, underscoring both the imperative to protect a vulnerable population and the downward flow of accountability within healthcare quality management. Pharmacists, operating in the pharmacy setting, chiefly worry about the accuracy and data security of AI tools such as ChatGPT, because flawed drug information or counseling can directly jeopardize patient safety and erode the pharmacist’s claim to professional authority. 49

Research limitations

Regarding research limitations, this study primarily analyzes secondary data from qualitative research, which may present the following shortcomings: First, the geographical coverage of the research sample is limited, focusing mainly on healthcare environments in developed countries, with insufficient exploration of AI applications in developing nations. Second, the methodology relies predominantly on literature review, lacking field investigations or experimental validation, which may restrict the universality of certain conclusions. Finally, the research content focuses more on current ethical challenges, with insufficient prediction of potential risks and opportunities for future AI technology development.

Future research and practice recommendations

Impact of regional, professional, and disciplinary differences on global ethical guidelines development

Cultural contexts, institutional environments, and technological development levels across regions significantly influence healthcare professionals’ perceptions of AI applications in medicine. For instance, Singaporean physicians demonstrate heightened concern regarding the ethical use of AI-assisted antibiotic prescribing systems, emphasizing algorithm transparency and data security—a stance linked to the nation’s stringent data protection regulations. Conversely, emergency physicians in Australia and New Zealand exhibit cautious optimism toward AI applications, reflecting the region’s relative lag in establishing ethical frameworks for medical AI. Such regional disparities underscore that global ethical guidelines must fully account for cultural and institutional variations to ensure applicability and effectiveness.

Healthcare professionals across different specialties and disciplines also exhibit distinct concerns regarding AI. Diagnostic departments (e.g., radiology, pathology) prioritize accuracy and reliability in AI-driven image analysis and diagnostic support while maintaining vigilance against algorithmic bias and data privacy issues. Therapeutic departments (e.g., oncology, endocrinology) focus more on ethical risks in prognosis prediction and personalized treatment, worrying about potential impacts on the precision of treatment decisions and patient safety. These specialty and discipline differences indicate that global ethical guidelines must comprehensively consider the operational characteristics and technical application scenarios of different departments to ensure the safe and effective deployment of AI technology across various fields.

Evolution of challenges and opportunities

With the rapid advancement of emerging AI technologies, the ethical challenges they pose are also evolving. On one hand, the complexity and lack of interpretability of AI technologies may further exacerbate the ambiguity surrounding responsibility attribution. For instance, deep learning-based AI systems may exhibit unpredictable decision-making processes when handling complex medical cases, making it difficult to identify responsible parties in the event of medical errors. On the other hand, AI advancements also present new opportunities to address ethical concerns. For instance, developing more sophisticated algorithms and data protection technologies can enhance the explainability of AI systems and strengthen data security.

Furthermore, emerging technologies like generative AI and reinforcement learning in healthcare may introduce new ethical risks, such as generating false medical information or performing inappropriate interventions on patients. However, these technologies can also deliver significant clinical benefits by enabling more personalized medical services and optimizing healthcare resource allocation. Therefore, future research and policy development must strike a balance between technological innovation and ethical safeguards to ensure that AI advancements genuinely serve the enhancement of human health and well-being.

Policy gaps and feasibility of measures

Currently, significant policy gaps persist globally in the field of AI medical ethics. Many countries and regions have yet to establish clear laws and regulations governing the application of AI technology in healthcare, particularly regarding data usage, sharing, and protection. This policy vacuum exposes healthcare professionals to potential legal risks when utilizing AI technology, increasing their psychological burden.

To address these challenges, we recommend the following specific measures: (1) Enhance technological R&D: Improve the explainability, reliability, and fairness of AI systems to mitigate ethical risks related to technical reliability and data security. (2) Establish accountability mechanisms: Define responsible parties in cases of AI decision-making errors to reduce ambiguity in liability attribution. (3) Refine data security systems: Ensure the security and privacy of medical data while balancing the need for data sharing with privacy protection. (4) Enhance ethics training: Provide ongoing AI ethics training for healthcare practitioners to improve their technical application capabilities and ethical awareness.

The feasibility of these measures requires the joint participation and collaborative efforts of multiple stakeholders. Healthcare institutions, technology developers, government departments, healthcare practitioners, and patients must work together to ensure the sustainable development of AI technology in the healthcare sector. Through multi-stakeholder collaboration and systematic improvements, a balance can be achieved between technological innovation and ethical safeguards, thereby realizing the true value of AI in healthcare.

Future research should incorporate cross-regional comparative studies to investigate differences in AI-related ethical issues between Eastern and Western healthcare environments. Mixed-methods research combining quantitative data analysis and qualitative interviews is recommended to validate the practical application outcomes and ethical challenges of AI across diverse healthcare settings. Furthermore, developing specific research design frameworks, such as empirical studies grounded in the Technology Acceptance Model (TAM) or sociotechnical systems (STS) theory, can help validate the adoption pathways and impact mechanisms of AI technologies in healthcare.

In summary, regional, professional, and disciplinary variations significantly influence the formulation of global ethical guidelines. The development of emerging AI technologies presents both new challenges and opportunities. By strengthening technological R&D, refining policies and regulations, and enhancing ethics training, a balance can be achieved between technological innovation and ethical safeguards, thereby promoting the sustainable development of AI technologies in healthcare.

Conclusion

This study reviewed 19 qualitative studies to analyze ethical issues surrounding AI applications in healthcare. Findings reveal complex challenges across nine key themes (including technical reliability, accountability, and data security) that hinder AI adoption in medical settings while affecting healthcare professionals, patient rights, and healthcare system operations. Despite AI’s potential to enhance diagnostic accuracy, issues such as algorithmic bias constrain its development. To foster sustainable AI development in healthcare, future efforts must involve multi-stakeholder collaboration, with a focus on technological R&D, responsibility delineation, data security management, and personnel training. Balancing technological innovation with ethical safeguards will enable AI to better serve human health.

Supplemental Material

Supplemental Material - Ethical concerns of AI in healthcare: A systematic review of qualitative studies

Supplemental Material for Ethical concerns of AI in healthcare: A systematic review of qualitative studies by Jiayu Hou, Xuan Cheng, Jiayu Liao, Zhiqiao Zhang, Weihong Wang in Nursing Ethics.

Footnotes

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Education Department of Hunan Province [Funding number HNJG20230216].

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.

References
