Abstract

With the objective of predicting and pre-empting the emergence of political violence, the US Department of Defense (DoD) has devoted increasing attention to the intersection between neurobiology and artificial intelligence. Concepts such as ‘cognitive biotechnologies’, ‘digital biosecurity’ and large-scale collection of ‘neurodata’ herald a future in which neurobiological intervention on a global scale is believed to come of age. This article analyses how the relationship between neurobiology and AI – between the human and the machine – is conceived, made possible, and acted upon within the SMA programme, an interdisciplinary research programme sponsored by the DoD. By showcasing the close intersection between the computer sciences and the neurosciences within the US military, the article questions descriptions of algorithmic governmentality as decentring the human, and as juxtaposed to biopolitical techniques to regulate processes of subjectivity. The article shows that within US military discourse, new biotechnologies are seen to lend algorithmic governmentality a biopolitical dimension, capable of monitoring and regulating emotions, thoughts, beliefs, and subjectivity at population level, particularly targeting the minds and brains of ‘vulnerable’ populations in the global South.

Introduction

With the advent of artificial intelligence (AI) and big data, contemporary discourses and practices of global security have undergone dramatic and accelerated change. According to Roberts and Elbe, the use of algorithmic technologies to identify, predict and manage insecurity represents not only a technological revolution, but more profoundly a ‘considerable shift in the underlying problem, nature and role of knowledge within contemporary security practices’ (Roberts and Elbe, 2017: 58). Some commentators describe the algorithmic turn as transposing the focal point of security from the human to the machine, emphasizing the epistemological and ontological differences between ‘algorithmic governmentality’ (Rouvroy and Berns, 2013) and the biopolitical forms of human security seen to precede the rise of AI (e.g. Amoore, 2020: 169). Although biopolitics of human security, inter alia, sought to govern the ‘inner world of the subject’ (Chandler, 2013: 2), stressing the need for empathy and knowledge of human emotions to manage the motivations and desires of local populations, algorithmic governmentality has been described as removing the need to know or affect the minds of human subjects, privileging in contrast the identification of patterns in actual observable behaviour. In contrast to biopolitics, Rouvroy and Berns argue that ‘algorithmic governmentality produces no subjectification’ (Rouvroy and Berns, 2013: 171), in part because algorithmic reason is held to be incapable of and uninterested in ‘understand[ing] love, ethics, morals, solidarity, care, affects, and other distinct human qualities’ (Fuchs and Chandler, 2019: 15).

This article questions the seemingly unambiguous mode with which the distinction between biopolitical and algorithmic forms of governmentality is articulated in this line of critique. Rather than describing the emergence of algorithmic governmentality as a radical break from the biopolitics of human security, this article argues that algorithmic governmentality represents an extension of biopolitics – formed and re-formed through an intimate historical relation between the life sciences and the computer sciences. The article illustrates this interrelation by showcasing how shifting definitions of the human – most notably the adaptation by governmental discourses of the neurobiological plastic subject (Dillon and Reid, 2009; Malabou, 2008; Rose and Abi-Rached, 2013; Whitehead et al., 2018) – have made possible, and continue to inform, the rise of algorithmic governmentality. Indeed, as observed by, for instance, Malabou (2019: 86), there is a direct analogy between neurobiological definitions of the human brain as plastic, correlational and networked and the development of AI, including the use of AI as a means of governance (see also De Vos, 2020; Tegmark, 2017).

According to neuroscientist Rafael Yuste, founding member of NeuroRights Foundation, the synergy between neurobiology and AI has come to herald ‘a new neurotechnological age’ (quoted in Evans, 2022), in which emergent biotechnological tools to ‘read, write, code, and manipulate the activity of the mind’ (Evans, 2022) actualize a growing indistinction between the human and the machine. Through this indistinction, traits often deemed distinctly human, as well as outside the remit of algorithmic governmentality – such as emotions, ideas, desire and empathy – are increasingly referred to as ‘neurodata’ (Giordano and DiEuliis, 2021: 21): capturable, observable and open to regulation by algorithmic systems (Petrina, 2021: 228). In geopolitical discourse, this indistinction is often articulated in terms of an emergent neurobiological and AI arms race (Giordano and DiEuliis, 2021: 9; National Security Innovations (NSI), 2017a: 20; Wright, 2021a: 37), heralded by concepts such as ‘cognitive warfare’ (North Atlantic Treaty Organization (NATO), 2021), ‘cognitive biotechnology’ (Bernal et al., 2021) and a ‘digital biosecurity’ (Giordano and DiEuliis, 2021: 22).

It is the contours of this neurotechnological age – how it is envisioned, formed and militarized – that this study seeks to analyse and make visible. The rationale for this intervention is straightforward. If we want to understand how human security is practised today, how new forms of security operate and are legitimized, how they redefine what it means to be human, then I contend that we cannot exclude a priori questions of how algorithmic and biopolitical forms of security feed off, complement and enhance one another. We cannot only ask how algorithmic or automated systems remove or ‘de-center’ (Shaw, 2017: 451) the human, we must also ask how our ideas of the human define, limit and enable the algorithm. In the spirit of recent critical debates on algorithmic security, I thus aim to question popular ‘notions of the disembodied, non-human, robotic or automated actions of algorithms’ (Amoore and Raley, 2017: 8), highlighting in contrast the entangled relation between biopolitical and algorithmic forms of governmentality.

To realize this purpose, the article empirically analyses the US Department of Defense’s (DoD’s) Strategic Multilayer Assessment (SMA) programme – a US interagency network sponsored and facilitated by the US Office of the Secretary of Defense and the Joint Staff. In recent years, the SMA programme has sought to conjoin science on the human brain with insights and technologies from the computer sciences. The SMA programme’s objective is articulated as garnering the capacity for more precise prediction, identification and preemption of political violence: to eliminate the potential for ‘strategic surprise’ (NSI, 2018: 108) and to move war further away from the kinetic battlefield, what in the US military is often referred to as ‘left of bang’ (NSI, 2011: 2). Born out of the ‘human-terrain system’ (Gezari, 2013), the SMA programme can in many ways be described as emblematic of human security and its biopolitical forms of governance. Self-labelled as an empathy-driven (NSI, 2018: 17) research programme, the SMA programme defines its aim as to generate knowledge of ‘the world as other people or groups of people see it’ (NSI, 2018), in order to ‘understand the cultural and social issues driving conflict’ (NSI, 2017a: 4). To that end, the SMA programme conducts, supports, coordinates and disseminates research on, inter alia, the ‘neurobiology of political violence’ (NSI, 2010), the cognitive ‘attractors’ of radicalization, and ‘cyberneurobiology’ (NSI, 2012a), as well as on ‘the power of love’ (NSI, 2013) and ‘empathy’ (NSI, 2012b: 178; NSI, 2013: 50; NSI, 2018: 17) to steer behaviour. More recent research includes studies on how algorithmic systems can employ large-scale databases of ‘neurodata’ (Giordano and DiEuliis, 2021), to access – and modulate – at population level what the SMA programme at times refers to as the ‘source of the mind’ (Giordano and DiEuliis, 2021: 21); that is, ‘thought, emotions, and behavior’ (Giordano and DiEuliis, 2021: 21). In 2017, it was estimated that the SMA programme comprised ‘around 3000 individuals, 95 universities, 14 US defense groups, and 8 foreign military groups’ (NSI, 2017a: 4).

Importantly, the SMA programme’s objective is not only to develop new technologies. To a large extent, the SMA programme is an advocate, aiming to persuade the US security apparatus at large to abandon rationalistic assumptions of the human, as well as the definitions and practices of war such assumptions are claimed to have engendered: to initiate ‘doctrinal changes’ (NSI, 2018: 20) at all levels of the US military. To that end, the SMA programme has published an abundance of conference reports, white papers, research articles, speaker series and podcasts over the years. Several SMA researchers have testified at the US Congress (e.g. Atran, 2008). It is this public ideological exposure – through which imaginaries of an automated neurobiological war, or ‘cognitive defence’ (Wright, 2021b), are produced, articulated and disseminated – that makes up the empirical corpus of this article.

What this reading makes visible is that the combination of human-centric and algorithmic forms of security is envisioned to open up new technologies of security governance that the critique of algorithmic governmentality has as yet been unable to see. Among these are the promise of scaling up and automating human security’s focus on subjectivity: to engineer sentient machines capable of registering, analysing and ‘affect[ing] influence’ over individuals’ and groups’ cognitive, emotional and behavioural states, such as ‘hostility, disgust, arousal, aggression, [and] violence’ (NSI, 2014: 43). By making the brain’s neural network – and its correlations – accessible to the algorithm, it is envisioned that algorithmic systems will garner the capacity to monitor and intervene not only in actual behaviour, but in the very potentiality of being – steering and regulating processes of subjectivity as they emerge in the human brain. Through the convergence of AI and neuroscience, it is thus envisioned that ‘root causes’ of violence – such as ‘social alienation’, ‘poverty’, ‘inequality’, lack of ‘political opportunity’ and ‘basic necessities’ (NSI, 2018: 130) – will become manageable in the brains of individuals and populations.

The article proceeds as follows. The first theoretically oriented section engages how the relationship between algorithmic and biopolitical forms of governmentality has been defined in critical studies of security, paying particular attention to how algorithmic systems have been seen to redirect the governmental gaze from understanding the human mind onto the mapping of behavioural data. The section ends with a genealogical discussion that seeks to illustrate the historical relationship between biopolitical and algorithmic governmentality, finding the epistemological seeds of algorithmic governmentality within neurobiological conceptions of human intelligence. The second, empirically oriented section analyses how the SMA programme envisions the synergy between neurobiology and AI as redefining war. The section employs a biopolitical analysis of the SMA programme’s security discourse, analysing: (1) how the SMA programme defines the relationship between human and artificial life and intelligence, (2) how the conflation of neurobiological insights and artificial intelligence is seen to afford the paradigm of human security new biopolitical possibilities to secure, govern and improve life, and (3) how war in the neurotechnological age redefines the relationship between freedom and security in liberal governmentality. I conclude the article by discussing the SMA programme’s imaginary of war in the neurotechnological age in relation to our conceptual understanding of the practices, scope and discourses of biopolitics and governmentality, as well as what this may entail for the possibilities and limits of critique.

The human and the machine: Algorithmic governmentality beyond biopolitics?

Popular narrations of AI’s origin story portray the early years of AI as made possible by a growing epistemological separation between artificial and human intelligence. According to this narrative, advancements in AI were achieved only through abandoning early ‘wild-eyed dreams’ (Aradau and Blanke, 2015: 5) of creating a ‘human-like machine’ (Aradau and Blanke, 2015: 6), modelled on Cartesian reason, controlled by an external mind or cogito. Although the Cartesian mind/body distinction, through which the body was conceived as a static pre-determined entity executing logical orders emanating from the cogito, had been key to the development of centrally controlled rules-based programs (Malabou, 2008), machine learning and artificial intelligence were, argued Dehn and Schank (1982: 353, emphasis in the original), ‘originally regarded primarily as an alternative to human intelligence’. 1 According to this narrative, the particularity of the algorithmic governing vision is characterized by a rejection of what Amoore calls ‘conventional human hypothesis or deduction’ (Amoore, 2020: 47) in place of the algorithm’s ‘abductive’ or ‘correlative forms of reasoning’ (Amoore, 2020). Based on correlations in large datasets, Amoore describes algorithmic predictions about human behaviour as being ‘derived . . . from contingencies’ (Parisi, 2013, quoted by Amoore, 2020: 47), rather than induced from predefined theoretical premises.

The shift from causation to correlation is often held as emblematic of a wider governmental shift in how best to organize, secure and monitor ‘the conduct of conduct’ (Foucault, 2000: 211) of populations in liberal societies. Through the use of algorithms, the ‘military model’ on which Foucault ([1975] 1991: 168) holds liberal governmentality to be built is claimed to be extended into ‘the most mundane and prosaic spaces’ (Amoore, 2009: 49) of liberal life. Within this governmental paradigm, Chandler (2019) argues that modernist top-down and linear models ‘of solutionism and progress’ (Chandler, 2019: 25), have been replaced with real-time monitoring of threats as they become actual – visible in data, identified through ‘association rules’ between ‘people, places, objects and events’ (Amoore, 2009: 49). According to Chandler, algorithmic systems have now replaced liberal governmentality’s historical reliance on a bounded ontology, with a ‘co-relational’ (Chandler, 2019: 33) ontology that has shifted the governmental gaze from centre to network, from the monitoring of specific properties of individual agents or objects to the mapping of entangled and networked relations.

In contrast to biopolitical forms of modern governmentality, which Chandler argues depend on universal theories of human behaviour and subjectivity, algorithmic governmentality is held to identify patterns in actual behaviour. ‘Digital governance’, claims Chandler (2019), ‘works on the surface, on the “actualist” notion that “only the actual is real”’ (Chandler, 2019: 24; see also O’Grady, 2021). So described, big data and AI are held to be uninterested in the inner life of human subjects – of human thoughts, dreams, fears and hopes – privileging in contrast correlational patterns in actual behaviour, observable and quantifiable. ‘In contrast to the psychologist and the neuroscientist’, De Vos (2020) posits, ‘Big Data does not care whether one knows or not: we do not need to be educated in theories about what is driving us: data-technology and algorithms work silently in the background simply drive, guide and steer our behaviour’ (De Vos, 2020: 17). As such, Chandler has argued that ‘rather than starting with the human and then going out to the world, the promise of Big Data is that the human comes into the picture relatively late in the process (if at all)’ (Chandler, 2015: 837). Rouvroy and Berns’ (2013) description of algorithmic governmentality as a form of government ‘without a subject, but not without a target’ (171) arguably echoes this understanding, as does Duffield’s description of digital governance as ‘eschew[ing] causality or a need to acknowledge the motives and beliefs that shape actual behaviour’ (Duffield, 2016: 147).

Such descriptions put algorithmic governmentality explicitly at odds with biopolitical forms of governmentality, and their focus on promoting and producing imaginaries of a good, healthy, pure and normalized political body (Bachmann et al., 2015: 66) through targeting subjectivity (Agamben, 2011: 6). The potentiality and becoming of life – often claimed to have been the primary target of human security discourses (Chandler, 2013; Dillon and Reid, 2009; Rose and Abi-Rached, 2013; Tängh Wrangel, 2017), as well as of biopolitics at large (Agamben, 1999: 147–148) – are equally placed outside the scope of algorithmic governmentality. Indeed, key texts of algorithmic governmentality either make no mention of biopolitics (Fuchs and Chandler, 2019; Rouvroy and Berns, 2013) or reference biopolitics only in passing in order to contrast biopolitics with the practices of algorithmic governmentality (Amoore, 2020: 169; Aradau and Blanke, 2022: 210). 2

In contrast to biopolitics, Rouvroy and Berns argue that ‘power’ grasps the subjects of algorithmic governmentality no longer through their physical body, nor through their moral conscience – the traditional holds of power in its legal discursive form – but through multiple ‘profiles’ assigned to them, often automatically, based on digital traces of their existence and their everyday journeys. (Rouvroy and Berns, 2013: 172–173)

Similarly, Amoore has argued that ‘algorithmic war’, unlike biopolitics, does not promote an ideal norm, nor seeks to establish universal parameters such as ‘shared values or moral principles’ (Amoore, 2020: 169) but rather regulates, polices and makes possible ‘the interplay of differential normalities – not forever settling out normal and abnormal, permitted and prohibited, but allowing degrees of normality’ (Amoore, 2009: 55, see also Aradau and Blanke, 2018). 3 According to Chandler, ‘Big Data welcomes the heterogeneity and multiplicity of the world’ (Chandler, 2015: 850), as diversity is seen to be generative of data.

Such descriptions depict the relationship between algorithmic and biopolitical forms of governmentality not only as separate but as antithetical, thus repeating a tendency within studies indebted to Foucault to emphasize the difference between different forms of power. As noted by Lemke, studies of governmentality tend to ‘assume a continuous rationalization of forms of government’ (Lemke, 2013: 39), through which ‘archaic and redundant’ forms of governmentality are incrementally replaced by more effective and ‘economic’ (Lemke, 2013: 39) models of governance. As Lemke argues, this practice risks concealing the ‘assemblages, amalgams and hybrids’ (Lemke, 2013: 41) through which different forms of power come into being as well as ‘the heterogeneous and concrete ways (through which) different technologies interact’ (Lemke, 2013: 41). Rather than attempting to define ‘homogeneous and abstract types of rule’ (Lemke, 2013: 41), Lemke thus posits that critical inquiry should be attuned to ‘the co-existence, complementarity and interference of different technologies of rule’ (Lemke, 2013: 41).

Indeed, as a series of studies has shown, there was – already from the outset – a form of the ‘human’ inscribed in algorithmic systems. It is well documented that the dawn of ‘the computer age’ (Hassabis et al., 2017: 245) was characterized by a close affinity between the computer sciences and the human sciences, in particular the neurosciences; that is, the study of the human brain (e.g. De Vos, 2020; Dillon and Reid, 2009; Hayles, 1999; Malabou, 2019). Indeed, many of the founding thinkers of both AI and neurobiology were operative in both fields, with ‘collaborations between these disciplines proving highly productive’ (Hassabis et al., 2017: 245). AI pioneers such as Alan Turing, Norbert Wiener and Gregory Bateson were all inspired by neurobiological theories of the mind, among them Warren McCulloch and Walter Pitts’ discovery of the similarities between the neural information processing that characterizes brain activity and that of binary computer calculation (De Vos, 2020; see also Dillon and Reid, 2009: 65; Hayles, 1999: 7). Such similarities have led Malabou (2019) to argue that the epigenetic turn of the neurosciences is a direct analogy to the epigenetic turn in the computer sciences, heralding and enabling the move from rules-based programs to AI.

As shown by Malabou (2019), the cross-collaboration between the computer sciences and the brain sciences was enabled by the radical critique of the Cartesian mind/body distinction – and its definition of bounded intelligence – that swept across the life sciences during the 20th century. Rather than conceptualizing human intelligence as predefined by birth – from the genes or from a metaphysical cogito or mind – the neurosciences define the human brain as dynamic: not a bounded system, but a plastic and relational organ, capable of adapting to and learning from its exposure to an external environment (Malabou, 2008; Rose and Abi-Rached, 2013). The distinction between the algorithm and the human dissolves in the ‘neuromolecular style of thought’ (Rose and Abi-Rached, 2013: 41), exemplified by the neurobiological definition of the brain as a complex information-processing unit, guided by correlative forms of reasoning (Dillon and Reid, 2009; Grove, 2019: 163; Hayles, 1999). As argued by Hassabis et al., ‘in both biological and artificial systems, successive non-linear computations transform raw visual input into an increasingly complex set of features, permitting object recognition that is invariant to transformations of pose, illumination, or scale’ (Hassabis et al., 2017: 245).

In the neurobiological framework, affects and emotions are not positioned outside or in opposition to the logic of correlations. It is rather through bodily sensations that ‘reality’ is capable of being read by the brain, rendered into observable ‘neuron firing disposition[s]’ (Damasio, 1994: 102). In explicit contrast to the Cartesian distinction between reason and emotion, popular neuroscientist Antonio Damasio refers to emotions as a corporeal information system, designed to enable the brain to reduce the number of variables to calculate in order to make optimal decisions in a fundamentally uncertain and contingent environment (Damasio, 1994: 37). Defined as neural data points, it thus became possible to conceive of emotions as observable and quantifiable, capturable through technologies such as magnetic resonance imaging (MRI) scans, 4 electroencephalograms (EEGs 5 ) and functional near-infrared spectroscopy (fNIRS 6 ), as well as from the measurement of neurochemicals in the body. As argued by Rose and Abi-Rached (2013: 373), such technologies made it possible to measure how individuals react to external input, enabling governmental imaginaries of more effective prediction of behaviour.

Indeed, as noted by several studies, the ‘neurobiological revolution’ (Lynch and Laursen, 2009) within the life sciences has had a remarkable impact on biopolitical forms of governmentality, enabling phenomena like crime, inequality and resilience to be defined and explained in biological, rather than in social and political, terms (Rose and Abi-Rached, 2013; Whitehead et al., 2018). Through the concept of plasticity, the brain became perceivable as a site of intervention, open to external regulation, rendering, perhaps paradoxically, ‘a whole range of social problems . . . more, not less amenable to intervention’ (Rose and Abi-Rached, 2013: 51, see also Whitehead et al., 2018). Within this governmental imaginary, biology was thus seen ‘not as destiny but opportunity’ (Rose and Abi-Rached, 2013: 15; emphasis in the original). Practices of war and security were from the outset articulated as central to the development and institutionalization of this governmental imaginary (Dillon and Reid, 2009; Howell, 2017). Like algorithmic governmentality, Rose and Abi-Rached describe neurobiological forms of security as an anticipatory mode of governance, driven by a desire to ‘govern the future’ (Rose and Abi-Rached, 2013: 45); ‘neuroscientifically based social policy’, they argue, aims to identify those at risk – both those liable to show antisocial delinquent, pathological, or criminal behavior and those at risk of developing a mental health problem – as early as possible and intervene presymptomatically in order to divert them from that undesirable path. (Rose and Abi-Rached, 2013: 15, emphasis added)

The close entanglement between the neurosciences and the computer sciences – as well as their respective role in contemporary forms of governing a post-Cartesian subject – problematizes the conceptual separation between biopolitics and algorithmic governmentality discussed above. If we are to understand how algorithmic governmentality operates in the fields of global and human security, then I contend that it is imperative that we take this as our starting point, beginning with acknowledging ‘the deep and immanent forms of socio-technical and bio-machinic entanglement central to contemporary algorithmic life’ (Pedwell, 2022: 4), as well as the way ‘the liberal way of war’ has gone ‘both digital and molecular’ (Dillon and Reid, 2009: 110; emphasis added). In other words, we need to ask ourselves not only how digital technologies target human behaviour, but also how ideas and theories of the human are embedded in and make possible ‘the way that we are datafied, profiled and traced’ (De Vos, 2018: 14). Indeed, as argued by Amoore and Raley, an understanding of ‘how algorithms act in ways that are thoroughly embodied’ (Amoore and Raley, 2017: 5) is crucial if we are to analyse ‘what it means to seek human security in our times’ (Amoore and Raley, 2017: 5).

In the next section I will seek to engage the task given to us by Amoore and Raley, analysing how security is envisioned and imagined in the neurotechnological age. I will do so by turning to the SMA programme’s advocacy for neurobiologically informed algorithmic war, in order to demonstrate how the relationship between the human and the machine is articulated and acted upon within the US military apparatus: how neurobiology is seen to engender new technologies of promoting, optimizing and securing life from its own immanent processes of becoming violent.

The human machine: Military imaginaries of neurobiology and AI

In contrast to the popular distinctions between the human and the algorithm rehearsed above, researchers within the DoD-funded SMA programme make no distinction between the conditions for human security and those of algorithmic security. Centring the discussions within the SMA programme is a biological and digitalized view of the human, in which the inner life of humans is perceived as consisting of observable, measurable and capturable correlational patterns, amenable to external stimuli and intervention. On several occasions, human life and intelligence are discussed in the vocabulary of the computer – repeating a tendency in neuroscience to refer to the brain in computational terms (Grove, 2019: 163). For example, at the 2010 SMA annual conference, The neurobiology of political violence, James Olds – appointed as Head of the Biological Sciences Directorate at the US National Science Foundation in 2014 – defined the difference between human and artificial intelligence as manifested not in how intelligence operates, but rather in whether intelligence is ‘evolved’ or ‘engineered’ (NSI, 2010: 10). Lt Col David Lyle of the US Air Force (USAF) draws similar analogies, describing the brain as ‘the most powerful computational tool in the known universe’ (NSI, 2013: 59), one that is characterized, like AI, by its plasticity and as consisting of ‘a balance of structure and fluidity . . . driven by bottom-up emergence’ (NSI, 2013: 59). For William Casebeer, senior research manager in human systems and autonomy at Lockheed Martin and a vocal figure in the SMA programme, ‘we [thus] need to treat humans and machines the same way’ (NSI, 2017a: 17).

The definition of the human as an ‘evolved machine’ (NSI, 2010: 10) allowed the SMA programme from the outset to articulate the relationship between neurobiology and the computer sciences as a joint programme, a shared episteme rooted in complexity theory. 7 The language of convergence, and of ‘breaking the silos’ (e.g. Wright, 2021b: 27) between the computer sciences and the neurosciences, dominates the SMA programme’s publications, as exemplified by the description of big data as a ‘force multiplier’ for neuroscience (NSI, 2018: 25) and by senior science advisory fellow to the SMA programme James Giordano’s statement that there is ‘convergence in neuroscience that joins genetics, nanoscience technology, and cyberscience technology’ (NSI, 2011: 41). Throughout various SMA publications this convergence is recognized as having, on the one hand, ‘driv[en] an exponential growth in Artificial Intelligence (AI)’ (Canton, quoted in NSI, 2018: 118) and, on the other hand, as having ‘accelerate[d] the development’ (NSI, 2018) of neuroscience. Rehearsing popular descriptions of AI as ‘helping neuroscientists crack the so-called neural code’ (Fan, 2019) by ‘digitally reconstruct[ing] massive portions of neural connections’ (Fan, 2019), it was argued at the 2017 SMA annual conference, From control to influence? A view – and vision for – the future, that big data had spearheaded a ‘neurocognitive revolution’ made possible by the ‘fusion of techniques for looking into the brain and changing behavior’ (NSI, 2017a: 16).

In particular, early SMA publications articulated a vision that emergent computer technology would allow the coming of age of neurobiological application in matters of war. The conference proceedings of the 2010 SMA annual conference underline what was described as a consensus within the SMA community at the time: although neuroscience offered great insights into the neurological processes undergirding radicalization and violence, it was in 2010 deemed too early to apply those insights to ‘real world problems within the national security and homeland defense space’ (NSI, 2010: 5). ‘Neuroscience research is still relatively basic’ (NSI, 2010: 7), Olds acknowledged in his presentation. At the 2014 SMA conference, A new information paradigm? From genes to Big Data and Instagram to persistent surveillance: Implications for national security, Giordano argued that neuroscience may have ‘put the brain at our fingertips’, but neuroscientific information and the insights – and capability – it yields will not be operational to the extent needed for national security intelligence and defense without the scope and depth of informational use that will be afforded by big data approaches. (NSI, 2014: 42)

In the early years of the SMA programme, the promise of neurobiology to security was hence articulated in a future tense, as a promise that the right investments would garner capacities ‘to predict acts of political violence from afar’ (NSI, 2010: 7) and to ‘craft targeted deterrence messages using . . . neuromarketing techniques’ (NSI, 2010: 8). At the 2012 SMA conference, several presenters emphasized that the computer sciences would allow neurobiological influence operations – at times referred to as ‘neurodeterrence’ or ‘AAT’ (Assess, Access, and Target) (NSI, 2012b: 50) – to go from theory to practice, from the monitoring of individual brains in closed experimental settings, to real-time population-wide interventions. In Casebeer’s prediction, the convergence between the neurobiological sciences and the computational sciences would allow the US military to bring ‘hard science to social interactions’ (NSI, 2012b: 58), to execute influence operations on both an ‘individual’ and a ‘systemic level’ (NSI, 2012b) and ‘finally [to be] in a position to scientifically confront what previously has been considered qualitative’ (NSI, 2012b).

In this framework of understanding, social relations, ideas and perceptions of reality are defined as neurological phenomena, perceived to have a material corporeal ontology. According to Lyle, ‘the foundations for all of what we are concerned about in military strategy ultimately resides in the physical world—ideas are networks of neurons in the heads of individuals’ (NSI, 2013: 64). Other SMA researchers, such as Jeannette Norden, have argued similarly: ‘everything a human being does or thinks or feels is the result of neuron activity’ (NSI, 2011: 39). Processes of radicalization and of violence – which Olds defines as an emergent property of the brain (NSI, 2010: 10) – are thus perceived to be imprinted in the body, to bear measurable biological markings at a stage prior to consciousness and behaviour: ‘reflected in chemical levels in the brain’ (NSI, 2010: 47). From this perspective, Lyle argues that ‘we can describe the emergence of extremism from the bottom up, starting with the neurobiological processes of individuals’ (NSI, 2013: 61). At times, regulating correlations on the neural level is referred to in the SMA community as a means to affect causality, an articulation that blurs popular distinctions between correlation and causality: ‘Right now’, Casebeer explained in 2011, ‘the [defense] community is limited to pure correlations between inputs and behaviors. That is like trying to understand acceleration by observing what happens to the car when the foot presses on the accelerator—without ever looking under the hood’ (NSI, 2011: 43).

To make the inner life of individuals and populations accessible to the military gaze, the SMA community argues that big data needs to couple its current reliance on behavioural data with ‘health-related datasets’ (NSI, 2018: 83), thus combining social media data with ‘multiple sources of information . . . such as biological, behavioral, economic, environmental/ecologic reports, from myriad resources’ (Giordano and Wurzman, 2016). Most importantly, the SMA programme advocates for the use of ‘cognitive mapping’, which is described as ‘offer[ing] tantalizing glimpses of the “gray matter”’ (NSI, 2018: 23). Across the publications of the SMA programme, cognitive mapping is commonly described as the collection of ‘neurophysiological data’ (Giordano and Wurzman, 2016) or ‘neurodata’: ‘the gathering and use of multi-scalar information to (1) establish increasingly detailed assessment of brain structure and function and (2) develop large-scale databases to enable (descriptive and/or predictive) evaluative metrics (for clinical medicine, law, socio-economic, and potentially political uses)’ (Giordano and DiEuliis, 2021: 21).

The collection of neurodata includes the mapping of particular neural correlations in the brain through physical devices such as MRI scans, EEG and fNIRS (NSI, 2018: 52) among ‘representative’ individuals of populations of interest (NSI, 2018: 133), as well as population-wide measurement of neurotransmitters and hormone levels perceived to impact affective states. In this regard, particular emphasis is placed on oxytocin – a ‘trust modulator’ (NSI, 2010: 14) – as well as on dopamine – held to promote well-being (NSI, 2013: 49) as well as altruistic behaviour and empathy (NSI, 2012b: 58). Within the SMA programme, both dopamine and oxytocin are seen as particularly lacking among impoverished communities in the Middle East, which is why these communities are claimed to be particularly vulnerable to radicalization (NSI, 2010: 46). Together, these variables are taken to describe what in the SMA community is repeatedly referred to as an individual and/or population’s ‘KABIB’ (knowledge, attitudes, beliefs, intentions and behaviours) (NSI, 2017b: 82; 2018: 49). Elsewhere, the collection of neurological data has been described as an ‘implicit measure’ (NSI, 2018: 51), a means to measure ‘unconscious, involuntary, and often unknown features’ (NSI, 2018: 49).

So presented, there is a stark difference between how the collection of neurodata is communicated within the US security apparatus and the public perception of big data as being unable to read the private thoughts and inner life of individuals (Aradau and Blanke, 2015: 2). It also appears as if the collection of neurodata differs from the diagnosis offered by Fuchs and Chandler that ‘computer algorithms cannot understand love, ethics, morals, solidarity, care, affects, and other distinct human qualities’ (Fuchs and Chandler, 2019: 15). Through the synthesis of neuroscience and AI technologies, the vision articulated in the SMA programme is precisely the opposite: to foster a machine capable of surveilling our potentiality, not merely our actuality, and capable of making traits often deemed to be exclusively human, and thus inaccessible to the machine, ‘come alive’ (NSI, 2018: 112), such as ‘memory, learning, and cognitive speed’, ‘mood, anxiety, and self-perception’ and ‘trust and empathy’, as listed by Giordano and DiEuliis (2021: 19).

It is thus believed that neurodata will be able to move the geography of war further ‘left of bang’ (Giordano and DiEuliis, 2021: 17; NSI, 2011: 2) than current algorithmic systems are held to be capable of, illuminating ‘potential, localized threat[s] that crowdsourcing algorithms might not elevate as important trend[s]’ (NSI, 2018: 11–12; emphasis added). As argued in a 2018 SMA white paper: ‘crowdsourced data often comes too late to be actionable. By the time sufficient data is available to illustrate a noteworthy trend, the majority of the socio-political forces are well entrenched and the output mostly has tactical value only’ (NSI, 2018: 110; emphasis added).

Although the collection of neurodata on a large scale with the aid of big data is already taking place, the SMA programme estimates it to grow exponentially in the coming years, given the institutionalization of the Internet of Things and the Internet of People – ‘the next major milestone in understanding populations’ (NSI, 2018: 79), which is seen to provide ‘far more densely interconnected brains, bodies and environments’ (Wright, 2021a: 35). By investing in ‘larger, more robust sociotechnical spaces’, such as ‘emerging fields of human–machine interfaces, human augmentation, and brain–computer interfaces’ (Wright, 2021a: 120), it is further envisioned that the collection of implicit neurodata – including ‘biometrics (sweat, heartbeat)’ (Wright, 2021a: 52), ‘digitalized health records’, ‘wearable physiological monitors’ (Wright, 2021a: 25) and ‘mass genomics’ (Wright, 2021a: 22) – is or will soon be possible at population level, without loss of analytical depth. As proclaimed by General Paul J. Selva, vice chairman of the Joint Chiefs of Staff, at the 2019 SMA conference, ‘We are on the verge of having nearly ubiquitous sensing across the entire span of the globe’, of developing a ‘synoptic view of the entire world . . . [through which] almost everything that happens on the surface of the Earth is knowable’ (NSI, 2019: 4).

With ubiquitous surveillance, it is believed that it will become possible to more effectively regulate processes of radicalization – aiding the US military to identify where and when to intervene. In this context, neuroscience has been referred to as a ‘science of power’ (NSI, 2015a: 46), offering, if harnessed systematically, the US military the capacity to use information for ‘more guided, selective, and effective means of manipulation’ of ‘brain states associated with hostility, trust, empathy, or cooperation’ (Canli et al., 2007: 7). SMA white papers connecting neurobiology to AI echo such aims, speaking of developing machines capable not only of gathering and analysing data, but of modifying cognitive states, i.e. to ‘design the future’ (NSI, 2018: 108), construct ‘identities’ (NSI, 2018: 111) and ‘inculcate norms, values, and ethics’ (NSI, 2018) at population level. The calling to produce and protect against ‘bioweapons’ geared towards ‘controlling individuals’ behavior’ (NSI, 2017a: 18) is another example of how this aim has been articulated.

To realize such aims, a myriad of different interventions has been proposed by the SMA programme throughout the years, including the ‘manipulat[ion] of health care and biotechnology sales markets’ (NSI, 2017b: 60) in areas with ‘the most vulnerable populations in terms of geography or physical capacity’ (NSI, 2013: 67), so as to affect levels of neurotransmitters such as oxytocin or dopamine. Other proposals, such as the use of algorithmic systems to disseminate individualized counter-radicalization messages, are designed to act directly on the level of consciousness, thereby affecting how the brain mediates the external world (e.g. Casebeer and Russel, 2005; NSI, 2010: 12–14; 2013: 62).

To improve counter-messaging, the SMA programme has devoted significant attention to the power of narratives. According to Lyle, stories are ‘the attractors that drive mental model formation in the complex system of the human mind’ (NSI, 2013: 62). Casebeer, quoting neuroscientist Mark Turner, has similarly described ‘stories [as] a basic principle of mind’, suggesting that ‘most of our experience, our knowledge, and our thinking is organized as stories’ (Turner, 1998, quoted in Casebeer and Russel, 2005: 5). For Casebeer, narratives make a social body come alive; they allow ideas to grow, to move between bodies. As such, Casebeer holds narratives to be a key tool of radicalization, as they are seen to ‘provide incentives for recruitment’ (Casebeer and Russel, 2005: 6; emphasis in original), to ‘reinforce pre-existing identities’ (Casebeer and Russel, 2005; emphasis in original) and alternatively to ‘create necessary identities where none exist’ (Casebeer and Russel, 2005; emphasis in original). Narratives achieve this, Casebeer posits, by acting on the emotional register of the brain. The narrative of violent extremism, in particular, is claimed to draw on ‘the “power of love”[, on] fraternal or romantic love, belongingness, group solidarity, and devotion’ (NSI, 2012a: 9).

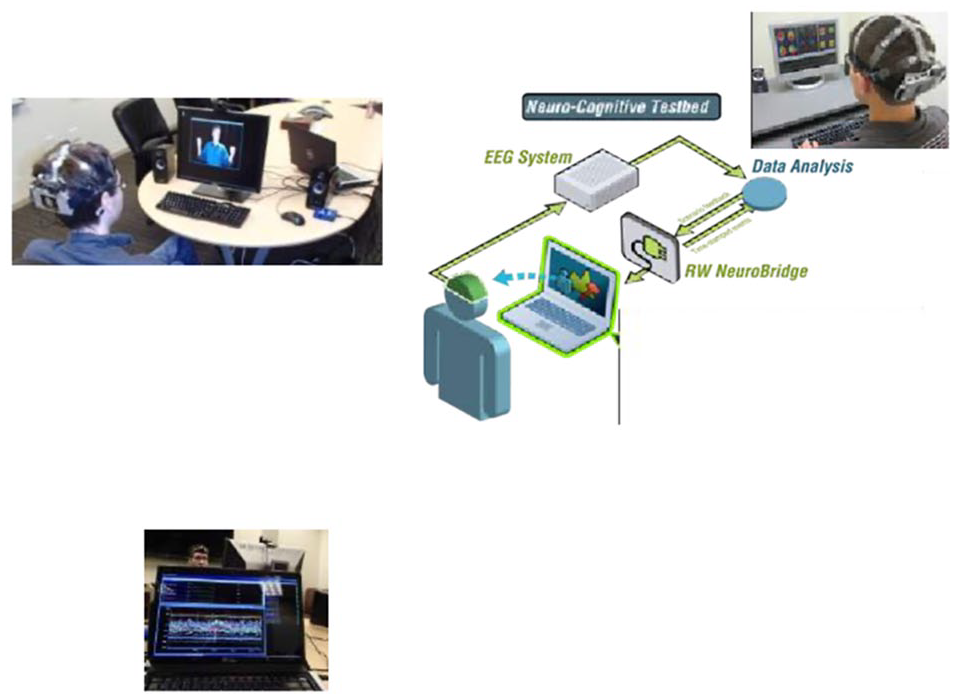

With emerging cognitive biotechnologies, it is envisioned that the power of narratives to regulate radicalization can become automated. Models such as Wurzman and Giordano’s (2015) NEURINT and Casebeer’s ‘human in the loop cognitive testing’ technology suite (NSI, 2018: 129–138) exemplify the technology available and imagined in recent SMA publications. Promising to automate the gap between surveillance and intervention, the suites make use of algorithmic systems trained on narrative data to identify individuals to be targeted by individualized counter-messaging. To guarantee the effectiveness of counter-messages, both NEURINT and Casebeer’s technology suite employ insights from neuromarketing – what Casebeer refers to as a human in the loop ‘stress-test’ (NSI, 2018: 130), in which ‘behavioral, psychological and physiological monitoring systems’ are connected to the brains of ‘representatives of the population one hopes to reach’ (NSI, 2018: 133); that is, individuals identified to have similar brain structures as those perceived within the SMA programme to be at risk of radicalization: the poor, the vulnerable or more generally the Middle Eastern adolescent Other, discussed at times as having developed a radically different brain from the Western brain (NSI, 2017a: 23). The stress-test is depicted in Figure 1 (NSI, 2018: 133).

Figure 1. Expanding the ‘EEG (electroencephalogram) human-in-loop testing’ Block-A prototype narrative influence and message analysis test bed (NSI, 2018: 133).

By 2031, it is speculated that ‘immersive technolog[ies]’ such as virtual and augmented reality (VR/AR) and ‘real-time holograms’ (Wright, 2021a: 35) will produce ever more effective counter-messaging, enabling the US to create ‘shifts in people’s perception of the world, and their decision-making process [thus] allowing the target audience to forget they are the audience and instead fall into a newly manufactured reality’ (NSI, 2018: 100; emphasis added). To that end, future imaginaries centre on the promise of ‘neurotechnological devices (e.g., sensory and brain stimulation approaches)’ (Giordano and DiEuliis, 2021: 19) that will have direct access to the neuroinformatic brain of flagged individuals. Inspired by Elon Musk’s Neuralink – a technology designed to connect brains to digital devices through implanted microelectrodes – Giordano and DiEuliis imagine a future in which ‘brain–machine interfaces’ will be able to ‘translate neurological signals into inputs for computers and machines, and vice versa’ (Giordano and DiEuliis, 2021: 11; emphasis added) – thus eliminating in practice the gap between surveillance and intervention.

Although such technologies may never become real, they represent a future of war that in many ways is already here, present as a political imaginary. As argued by Shaw, imaginaries are not simply ‘innocent and epiphenomenal projections’ of the future; they are performative of the here and now (Shaw, 2017: 467): they direct funding to research, they manifest themselves in infrastructure, they organize the political agenda, in part by removing alternative means to act on an unequal global society. The SMA programme is explicit on this account, arguing repeatedly against targeting material ‘root causes’ (NSI, 2018: 129) of violence, such as poverty or social inequality, and for focusing instead on regulating how global power relations and exclusions are coded in the brains of vulnerable populations, in order to make ‘causal factors that contribute to violent mobilization . . . less efficacious’ (NSI, 2014: 54; emphasis added).

Undergirding such statements is an imaginary of war that defines the human as similar in form to what Chandler has called ‘the correlational machine’ (Chandler, 2019: 23). Like the algorithm, this form of life is seen as ‘co-relational’ (Chandler, 2019: 33): plastic, emergent and adaptable, formed and re-formed through its neural relations. Governed through exposure to data, this form of life is seen as capable of learning from and changing in tandem with its environment, making it, in the words of the SMA programme, an evolutionary being: capable of developing into an ‘optimized’ version of itself. Indeed, the neurotechnologies described within the SMA programme are articulated in a vocabulary of human enhancement, of realizing an ‘intelligent biology’ (Wright, 2021a, 2021b) and an ‘augmented cognition’ (Giordano and DiEuliis, 2021: 12), and of ‘bio-engineer[ing]’ and removing ‘aspects of “human weak links”’ (Giordano and DiEuliis, 2021: 18). Paraphrasing Elon Musk, Giordano and DiEuliis argue that cognitive biotechnologies and emerging human–machine interfaces should be available to everybody ‘who wishes to achieve “better access” and “better connections” to “the world, each other, and ourselves”’ (Giordano and DiEuliis, 2021: 26).

Such technologies arguably blur popular distinctions between algorithmic and biopolitical forms of governmentality, portraying a future in which algorithmic systems operate directly on the level of potentiality, on processes of becoming. Equally blurred is the historical distinction – and tension – between freedom and security. In contrast to traditional interpretations of Foucauldian governmentality, the SMA programme does not perceive freedom to have an ontology of its own. Although liberal governmentality is often taken to operate through freedom (Dean, 1999), presupposing ‘a spontaneity of action that must be left to itself’ (Wallenstein, 2013: 25) in order to ‘extract utility, a material and intellectual surplus value, from the individual’ (Wallenstein, 2013: 25), the SMA programme conceptualizes freedom as a capacity that has to be built. Like neuro-based social policies at large (Rose and Abi-Rached, 2013; Whitehead et al., 2018), the SMA programme recognizes no such thing as free will or individual integrity. In contrast, will is perceived as a behaviour (NSI, 2017b: 28), a neurological firing pattern, open to intervention. The deployment of neurologically informed counter-messaging is in fact articulated as an act of freedom, an ‘objective’, ‘ethical’ and ‘safe’ (NSI, 2017b: 66) way to win the war of the mind, enabling the US to ‘speak truth to the power that violent non-state movements sometimes hold over innocent populations’ (NSI, 2018: 129), and to ‘prevent the exploitation of vulnerable populations’ (NSI, 2018: 131).

Although it is emphasized within the SMA programme that the plasticity of the human brain makes total control impossible (NSI, 2017a), no realm external to governmental power is thus identified by the SMA programme, nor is any space found outside war, as per the theme of the 2015 SMA annual conference: No war/no peace: A new paradigm of international relations and a new normal? (NSI, 2015b). For the SMA community, this is the paradox of complexity and plasticity: at the same time as plasticity issues a promise of security and preemption, enabling external engineering of human minds, plasticity is simultaneously seen to inscribe insecurity and contingency as the very nature of being. According to Wright (2021a), ‘Humans . . . are not static’. Hence, radicalization is perceived as a perpetual potentiality (7), a ‘vulnerability’ inherent in ‘human societies’ (Wright, 2021a: 9), that must be continuously mitigated. As such, no final state of security is perceived to be in reach: no ‘victory’, ‘endstate’ and/or ‘conflict termination’ is imagined – only permanent regulation of ‘dynamic patterns of societal adaptation’ (NSI, 2012: 218; emphasis in the original).

Indeed, the advent of the neurotechnological age, which is described within the SMA programme as ‘outpace[ing]’ (Giordano and DiEuliis, 2021: 25) rather than being driven by ‘securitization’ (Giordano and DiEuliis, 2021: 25), is perceived to increase insecurity exponentially, rendering the whole of society into a ‘vast attack surface’ (Wright, 2021b: 26). The ‘combination of “blank slate” and “unknown ground” dimensions of neurotechnology’ is further seen to actualize as-yet unknown threats, making ‘realistic biosecurity forecasting and preparedness’ (Giordano and DiEuliis, 2021: 25) difficult if not impossible. For the SMA programme, war in the neurotechnological age is thus simultaneously described as never-ending, as omnipresent – requiring ‘a whole-of-nation (versus merely whole-of-government or military) approach’ (Giordano and DiEuliis, 2021: 29; emphasis in the original), and as inevitable: [I]t is not a question of whether neuroS/T [science/technology] will be utilized in military, intelligence, and political operations, but rather when, how, to what extent, and perhaps most importantly, whether or not the US and its allies will be prepared to address, meet, counter, or prevent these risks and threats. (Giordano and DiEuliis, 2021: 27)

Conclusion: Biopolitics and critique at the intersection of the human and the machine

Despite the sense of inevitability with which the SMA programme speaks of in/security in the neurotechnological age, the future is not set. As Jensen et al. (2023) have recently argued, there is nothing inevitable about the ‘technological revolution’ of war heralded by the hype of new biotechnologies and AI. However, as this article has demonstrated, the epistemological revolution that AI is held to represent may be less radical than is often assumed. Building on the biopolitical practices and imaginaries of human security, it could very well be argued that the epistemological and organizational seeds of algorithmic governmentality already exist within the US military. Although concealed by popular definitions of algorithmic governmentality, as well as by popular narrations of AI’s origin story, algorithmic governmentality has a history, one that arguably is enabled within rather than in opposition to biopolitical forms of governmentality.

As the vision of war organizing the SMA programme clearly exemplifies, AI has not decentred the human as the epicentre of fear and enmity in global security discourses, but rather enhanced it, promising new means to govern a form of life seen as co-relational, plastic, networked and emergent. As the above analysis has shown, extending the correlative and predictive capabilities of algorithmic systems to the neuron speculates on the possibilities of extending the algorithm’s search for the anomaly (Aradau and Blanke, 2018; Glouftsios, 2021; Roberts and Elbe, 2017) with the normalizing ideals of disciplinary societies. By reinforcing and latching itself onto the anticipatory forms of security governance that have become the trademark of human-centred security, this new form of governance is proclaimed to be able to surveil and regulate both the actuality and the potentiality of being – intervening on a level not only prior to violence’s emergence, but on the corporeal and cognitive processes that are seen to condition who we are, what we believe and how we sense and act in the world.

If we are to critique and make visible this emergent form of governmentality – the racial (e.g. Noble, 2018; Vukov, 2016) and economic inequalities that algorithmic governmentality currently sustains (e.g. Eubanks, 2018), the borders it reproduces and maintains (Glouftsios, 2021; Hall, 2017), the violence it commits and enables (Bellanova et al., 2021; Shaw, 2017) – it is arguably not enough to call for algorithmic systems to become humanized: more open to cultural variation, more sensitive to understanding the complex processes of identity formation, more understanding of how poverty affects human well-being. Nor would it be enough to proclaim the necessity of seeing and recognizing traits such as empathy, emotions and ideas – as such a stance not only risks romanticizing and essentializing the human in our critique of the algorithm (e.g. Evans and Meza, 2022), but also risks reproducing a governmental logic that already identifies the human mind as an object of power and governance. As this article has shown, the human is not merely a ‘target’ (Rouvroy and Berns, 2013: 171) of algorithmic governmentality, but rather central to its organization and operation. In the neurotechnological age, particular ideas of what it means to be human and what it means to be empathic, imperfect and flawed have become intrinsic to algorithmic governmentality, informing not only what algorithms identify as data, and how apparatuses of security act on said data, but, more importantly, how freedom is pursued and how war is waged in the name of ‘optimizing’ life.

Acknowledgements

I wish to thank the two anonymous reviewers for their insightful and helpful comments, as well as the Security Dialogue editorial team for their support and guidance. This article has been read and commented on by a series of scholars and I particularly would like to thank Julian Reid, David Chandler, Simon Larsson, Patricia Lorenzoni, Gustaf Forsell and Michał Krzyżanowski for valuable and critical comments. I would also like to thank Mattias Gardell and Irene Molina for encouragement and support in the initial phases of the project.

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by funding from the Swedish Research Council (2019-00683).