Abstract

International relations scholarship has long emphasized that popular culture can impact public understandings and political realities. In this article, we explore these potentials in the context of military-themed videogames and their portrayals of weaponized artificial intelligence (AI). Within paradoxical videogame representations of AI weapons both as ‘insurmountable enemies’ that pose existential threats to humankind in narratives and as ‘easy targets’ that human protagonists routinely overcome in gameplay, we identify distortions of human–machine interaction that contradict real-world scenarios. These distortions revolve around videogames affording players enhanced human agency to dominate AI weapons to offer enjoyable gameplay, contradicting the same weapons being intended to diminish human agency on real-world battlefields. By leveraging the Actor-Network Theory concept of ‘translation’, we explain how these distorted portrayals of AI weapons are produced by entanglements between heterogeneous human and non-human actors that aim to make videogames mass-marketable and profitable. In so doing, we echo game studies research that calls for greater attention to the commercial and ludic dimensions of videogames so that international relations scholarship can better account for pop culture’s bounded abilities to impact public understandings and political realities.

Introduction

International relations scholarship explains that popular culture can shape how the public perceives and understands new technologies and their warfare applications (e.g. Muller, 2008; Nexon and Neumann, 2006; Power, 2007; Stahl, 2013; Weldes, 1999). Advanced military weaponry that integrates artificial intelligence (AI) in targeting functions, colloquially referred to as ‘AI weapons’ (Heyns, 2016), is a growing context for such examinations (Jarvis and Robinson, 2021). For example, pop-culture objects such as the Terminator films spark discussions regarding human–machine interaction in warfare. Young and Carpenter (2018) explain that pop-culture portrayals like The Terminator’s ‘killer robots’ predispose many individuals to oppose AI weapons. But their fictional qualities can equally downplay their relevance to burgeoning technical developments and real-world issues. Most prominently, by featuring fantastical or future-oriented narratives, pop culture can undermine meaningful public involvement in political discussions (Carpenter, 2016). These include discussions on lethal autonomous weapons systems under the remit of the United Nations Group of Governmental Experts since 2016 (Bode and Huelss, 2018).

In this article, we extend international relations scholarship that examines the bounded abilities of pop culture to shape public imaginaries by exploring videogame portrayals of weaponized AI. To do so, we leverage and extend insights from the intersections between game studies and international relations scholarship (e.g. Ciută, 2016; Höglund, 2014; Payne, 2012). Videogames are a contemporary interactive pop-culture staple that remains under-investigated in international relations (De Zamaróczy, 2017; Power, 2007). With series like Call of Duty presently having more than 100 million active players (Activision, 2020), examinations of videogame AI weapons portrayals are important, not only because videogames can impact how the public understands political developments (Robinson, 2012), but also, as we argue, because their gameplay requirements can constrain their potentials to become widely influential.

We demonstrate that because videogame production and consumption processes are premised on maximizing human agency to heighten consumers’ ludic experiences (Bos, 2018; Ciută, 2016; De Zamaróczy, 2017; Jarvis and Robinson, 2021; Salter, 2011), portrayals of AI weapons that emerge in videogames tend to contradict how these weapons are intended to diminish human agency in the real world. To demonstrate this limitation for videogames to imprint upon the public, we unpack a paradox in how they portray weaponized AI and propagate distorted notions of human–machine interaction. This paradox concerns videogames portraying AI weapons as autonomous existential threats to humankind in narratives, which simultaneously are dominated by human protagonists during first-person shooter gameplay. These observations enable us to uniquely demonstrate that it is not just the narrative content but also the commercialization of pop-culture objects that blunts their potentials to inform the public regarding real-world political contexts.

Specifically, we reveal concerning distorted notions of human–machine interaction in military-themed videogames. Namely, AI weapons are positioned as subordinate to humans within a framework that presumes they predominantly stay within human control. That is, AI weapons ostensibly attack enemy forces while posing no threat to friendly forces and civilian populations (see Jarvis and Robinson, 2021: 198, 202). This contradicts the realities of how AI is applied in the military realm and dismisses mounting humanitarian concerns over AI weapons’ lethal potentials (e.g. Gettinger and Michel, 2017; Qiao-Franco and Bode, 2023). While we do not assume simple, linear causal effects of playing videogames (Ciută, 2016), such representations obscure human security and international stability concerns in international debates, rendering the ethical, legal, technological, and political issues of AI weapons invisible. Accordingly, we demonstrate the limitations of videogames in shaping public understandings, despite the fact that many of the games we have analyzed are intended by their developers to be exposés of AI-weaponization efforts and warnings of dystopian futures.

We theorize problematic AI-weapons videogame portrayals as emergent from the commercial market entanglements between a wide range of entities by utilizing Actor-Network Theory (ANT) (Canniford and Bajde, 2015). ANT is a material–semiotic sensibility that enables researchers to study a context as composed and shaped by ‘networks’ of humans and non-humans (Latour, 2005). This approach empowers scholars to theorize how human and non-human entanglements ‘translate’ objects by recontextualizing them across different areas of society (Callon, 1984). For instance, amalgamations of scanners, personnel, and security metrics render various everyday items as ‘threats’ when they enter aviation security contexts (Salter, 2019; Schouten, 2014). Such processes reflect geopolitical considerations (Robinson, 2019) and the commercial contexts in which objects become entangled (Bos, 2018; Godfrey, 2021; Payne, 2012). Likewise, we explore how networked human and non-human actors recontextualize AI weapons in videogame contexts.

As prior interdisciplinary research establishes, the production and consumption of videogames brings together various human and non-human actors (Ash and Gallacher, 2011; Denegri-Knott and Molesworth, 2013; Giddings, 2009; Godfrey, 2021). In our case, these actors range from consumers, developers, marketing press releases, YouTube gameplay videos, and game code to the real-world weaponized-AI systems that inspire many videogames. We examine this gamut of actors that translate AI weapons to entertain consumers. As we theorize, these actors play complex roles in either circumscribing or promoting notions of human agency during videogame production and consumption. In light of these roles, we leverage and extend game studies scholarship that spotlights the commercial and ludic dimensions of videogames. We do so by offering an ANT-informed theorization of how these dimensions can limit videogames’ potentials to impact public understandings of political realities (e.g. Ciută, 2016; De Zamaróczy, 2017; Salter, 2011).

The article proceeds as follows: First, we provide a theoretical background on the intersections between international relations, pop culture, game studies, and weaponized AI. Next, we explain our analytical framework, which is anchored by ANT’s translation concept (Callon, 1984; Latour, 2005). We then introduce our empirical context and the netnographic fieldwork that underpins our analyses (Kozinets, 2010), before unpacking our findings, which are derived from an analysis of 19 popular military-themed videogames and their portrayals of weaponized AI. Finally, we conclude by discussing the theoretical implications of our study for research at the intersections between international relations, pop culture, videogames, and AI weaponization.

Weaponized AI, popular culture, and game studies

AI’s military applications and integrations into weapons’ targeting functions have raised international debates over appropriate human–machine interaction in the use of force. For the time being, AI weapons with differing degrees of autonomy are considered decision aids for commanders, operated under direct human supervision (‘human-in-the-loop’) or with the possibility of a human veto before they engage a target they have selected (‘human-on-the-loop’) (Johansson, 2018; Sharkey, 2016). However, as militaries integrate autonomy in their arsenals to out-innovate adversaries, lethal autonomous weapon systems (LAWS) that select and engage targets without human involvement may soon become a reality (Bode and Huelss, 2018; Heyns, 2016). Drones that integrate AI features to ‘attack targets without requiring data connectivity between the operator and the munition’ (UN Security Council, 2021: 17) are one AI-weapon category that has drawn much attention. Drones’ frequent deployment in military conflicts, like the Libyan civil war, the Nagorno–Karabakh war, and the Russia–Ukraine war, has catalyzed debates over permissible AI applications in the use of force (Bode and Nadibaidze, forthcoming).

These international debates, however, have largely been limited to elite and interstate settings. Since 2013, the potential governance of LAWS has been discussed within the ambit of the 1980 United Nations Convention on Certain Conventional Weapons (CCW). In 2016, the United Nations Group of Governmental Experts (UNGGE) on LAWS was established under the CCW to replace hitherto informal meetings. As of the time of writing (October 2023), the UNGGE has met 11 times to discuss LAWS.

However, public opinion is an important factor that will inform determinations of AI-weapon permissibility by state and international officials (Horowitz, 2016). The Martens Clause in the Preamble of Additional Protocol II of the Geneva Conventions enables the public to have a say on what is, and is not, deemed permissible in armed conflict, especially where new technologies are concerned. Hence, pop culture’s potentials to shape how the public comes to understand AI weapons require deeper examination.

Emerging public understandings over AI weapons can be approached through popular culture and world politics (PCWP) frameworks. These frameworks theorize that political discourses and battles can extend into everyday cultural imaginaries (Caso and Hamilton, 2015; Crilley, 2021; Grayson et al., 2009). At the heart of PCWP-oriented studies is the idea that pop-culture objects can shape ongoing real-world political discussions and actions while functioning as artifacts that capture a society’s evolving worldviews (Buzan, 2010; Daniel and Musgrave, 2017; Muller, 2008; Nexon and Neumann, 2006; Rowley and Weldes, 2012; Weldes, 1999). Germane to AI-weapons permissibility, PCWP frameworks examine how pop-culture objects render certain political realities commonsense and legitimate (Crilley, 2021; Weldes, 1999).

Studies at the intersection of weaponized AI and pop culture are emerging. This literature contends that pop culture is a predominant means of exposure the public has to the existence of AI weapons, such as AI-integrated drones. This exposure is important as fully autonomous AI weapons that do not require any human involvement are yet to be fully developed and deployed on the battlefield (Horowitz, 2016). These studies have examined how AI weapons are portrayed in films and TV shows – cultural forms apt for exploring their imagined possibilities that may be rendered commonsense and legitimate to the public.

Notably, this scholarship spotlights the trope of ‘killer robots’ that offers frames of reference for the public to understand AI weapons. Young and Carpenter (2018) explain that consumption of pop-culture objects containing this trope is associated with greater opposition to autonomous weapons. Related studies show how reference frames intersect with political discourse. For instance, Beier (2020) warns that killer robots propagate two problematic public perceptions of AI weapons. The first is that machines have sole agency over targeting decisions, which obfuscates the culpability of humans that set acts of killing in motion. The second is that humanoid robots distract attention away from more mundane AI integrations in drones, tanks, and ships. Concerning political action, Carpenter (2016) explains that pop-culture AI-weapon portrayals are used strategically by NGOs to champion their regulatory prohibition (e.g. Campaign to Stop Killer Robots). A key finding is that pop culture serves as a common language that political actors understand, effective for sparking public interest in conversations on the permissibility of AI weapons. However, Carpenter cautions that killer-robot portrayals equally stifle political action, as AI-weapon threats can be perceived as too far in the future to warrant immediate action. Implied is that pop culture is a double-edged sword that requires political actors to ‘de-science-fictionalize’ the AI-weapons context so consumers take it seriously after their attention is first captured.

Prior AI-weaponization studies have yet to consider videogame portrayals. We study videogames as their interactivity sets them apart from other cultural forms (Mantello, 2012; Payne, 2014; Power, 2007; Robinson, 2019). Games place consumers in the decisionmaker position (Giddings, 2009; Hirst, 2021). Despite being delimited by coded-in rules and structures, consumers are immersed in a ‘hermeneutic process’ in which the narratives and values of games are co-produced through their dynamics of play (Ciută, 2016: 209). The meaning-making of videogames thus takes place in their production in the ‘real world’ and consumption in the ‘in-game world’ where players develop ludic practices (Juul, 2009; Payne, 2012).

Game studies scholarship enables researchers to reflect on the production and consumption systems that contextualize videogames, particularly in international relations contexts. Seminal work on the ‘military–entertainment complex’ has well documented the long history between the entertainment industry, arms manufacturers, and the US military in videogame production (Der Derian, 2009; Godfrey, 2021; Power, 2007; Robinson, 2012). In essence, videogames produced within these relationships carry actors’ values, often reinforcing gendered, racialized, and colonial/imperial norms (De Zamaróczy, 2017). Through coded-in rules, selective subject construction, and information circulation, videogames can naturalize and legitimize uses of force, sanitize public perceptions of warfare, and elicit consent for militarization (Berents and Keogh, 2018; Mantello, 2012; Payne, 2014; Power, 2007; Robinson, 2019).

Game studies additionally emphasizes that the commercial nature of videogames can shift players’ interpretations of games away from creator-intended meanings. With active audiences in mind, games are designed to promote player freedom and agency (Giddings, 2009: 151; Hirst, 2021: 485). In part, this means that players are considered as critically engaged with the narratives of games and the values embedded in their gameplay (Ciută, 2016). In other words, playing games is rather playing with games (Hirst, 2021). This perspective emphasizes that games offer ludic experiences through their visual aesthetics, how challenging they are to play, and the excitement and feelings of control they can offer players (Ash and Gallacher, 2011; Huotari and Hamari, 2017). The role of videogame developers is thus to support consumers’ suggestions and demands. Implied is that game-design possibilities are limited by consumers’ willingness to pay. The ‘twin principles of pleasure and profit’ hence ground much of videogame production and consumption processes (Ciută, 2016: 211).

Although various human agencies in videogame production and consumption have been studied, only a selection of interdisciplinary game studies literature theorizes how these processes are also shaped by non-human objects (Ash and Gallacher, 2011; Bos, 2018; Denegri-Knott and Molesworth, 2013; Giddings, 2009). For instance, non-humans include the objects and characters inside game-worlds, along with the affordances of code, physical game controllers, and material circumstances of gaming venues in the real world. Our contention is that these human–non-human entanglements are conducive to social constructions that can undermine the real (Salter, 2011). Such considerations are especially undertheorized in international relations scholarship on AI weapons and pop culture. Hence, we require an analytic framework that can capture and understand the effects that human–non-human entanglements instill in videogame contexts.

Analytical framework

Our analytical framework is informed by Actor-Network Theory (ANT) (Latour, 2005). ANT is an analytical sensibility that guides the identification and investigation of relations between heterogeneous humans and non-humans embroiled in a context (Borg, 2021; Salter, 2019). What follows is that contexts are ‘black boxes’ that must be unpacked into their components to be understood (Schouten, 2014). By design, ANT tasks researchers to avoid a priori assumptions over what the objects of inquiry are, thereby not limiting analyses to human conduct alone (Rothe, 2017; Salter, 2019). Hence, researchers must ‘follow the actors’ (Latour, 2005: 68), with ‘actor’ denoting any human or non-human that seems to ‘modify, transform, perturb or create’ other entities in a context (Latour, 1999: 122). This challenge underscored by ANT inspires researchers to reveal contexts anew by foregrounding human and non-human actors and their often inconspicuous entanglements (Franco et al., 2022). For example, Schouten (2014) uses ANT to reveal airport security as composed of shifting relations between body scanners, guards, performance metrics, regulators, and cameras. Each study that applies ANT will feature its own unique ‘actors’ that bear different effects on the context studied (Franco et al., 2022).

ANT can make unique contributions to game studies and international relations through its ability to theorize how new realities are produced and made actionable when humans and non-humans become entangled (Callon, 1984; Giddings, 2009). The concept of ‘translation’ encapsulates this feature of ANT. Translation conceptualizes how actors are transformed by their relations to other actors (Brown, 2002; Law, 2009). In particular, translation describes how the qualities of an actor are re-presented by other actors, especially when such relations enable new objects to emerge (Callon, 1984). For instance, organizations, weather stations, computer simulations, scientists, and satellites translate climate change in ways that make it visible and governable through the images, data, and reports that entanglements between these actors produce (Rothe, 2017).

Importantly, ANT calls attention to the effects of non-humans alongside humans in the translative production of cultural objects (Denegri-Knott and Molesworth, 2013; Giesler, 2012). For our purposes, this means that videogames and their portrayals of weaponized AI are not the sole products of developers’ and consumers’ creative visions, but of these in combination with the effects of various implicated non-humans. Beyond those identified in prior game studies scholarship (e.g. in-game characters and objects, software code, game controllers; see Bos, 2018; Denegri-Knott and Molesworth, 2013; Giddings, 2009), we highlight the imprints on videogames of tropes, films, media artifacts (e.g. YouTube videos), online community posts, envisioned future warfare scenarios, and real-world AI weapons in development themselves.

Key to our framework is ANT’s notion that objects (e.g. videogames) can contain distorted representations of other actors (e.g. AI weapons) that are uniquely translated by the entanglements in which they emerge (Callon, 1984; Giesler, 2012). As Brown (2002: 7) explains, ‘To translate is to transform, and in the act of transforming a breaking of fidelity towards the original source is necessarily involved.’ Analogous to translating words across languages, translation is equivalence albeit with imperfections that shift what things come to be and mean (Law, 2009). In our framework, we view videogames as containing translations of AI weapons that are prone to portraying them in distorted ways that deviate from their real-world circumstances. To unpack such distorted AI-weapons translations in videogames, we next introduce our empirical context and methods germane to our study.

A netnographic study of AI weapons in military-themed videogames

Since the 2010s, military-themed videogames have increasingly featured weaponized AI systems that select and engage targets free from in-game human-character involvement. This development can be attributed to greater international awareness of AI weapons and a trend towards videogames set in futuristic contexts (Robinson, 2019). First, greater awareness coincides with the founding of the International Committee for Robot Arms Control in 2009 and the Campaign to Stop Killer Robots in 2013 – two not-for-profit associations seeking AI-weapon regulation (Carpenter, 2016). For example, since this time, numerous Call of Duty titles feature autonomous sentry guns and close-in weapons systems that destroy incoming missiles and aircraft. Undeniably, this increasing awareness has imprinted on contemporary military-themed videogames.

Second, this awareness also permeates more elaborate AI weapons featured in titles set in the future. Examples include autonomous land and air drones armed with machine guns in Call of Duty series titles Ghosts (2013), Black Ops III (2015), and Black Ops 4 (2018); four-legged combat drones reminiscent of Boston Dynamics’ BigDog robot in Ghost Recon: Future Soldier (2012) and Battlefield 2042 (2021); and drone swarms in Call of Duty: Advanced Warfare (2014). Notably, Ghost Recon: Breakpoint (2019) centralizes the issue of weaponized AI. Breakpoint is set in 2025, when a paramilitary group hijacks a technology conglomerate’s headquarters and research facilities. This group’s intent is to ‘secure the future with technology others developed but were too fearful to deploy’ – as the main antagonist states in a YouTube game trailer (Activision, 2019). As such, Breakpoint portrays various weaponized autonomous land and air combat drones to offer consumers entertaining experiences.

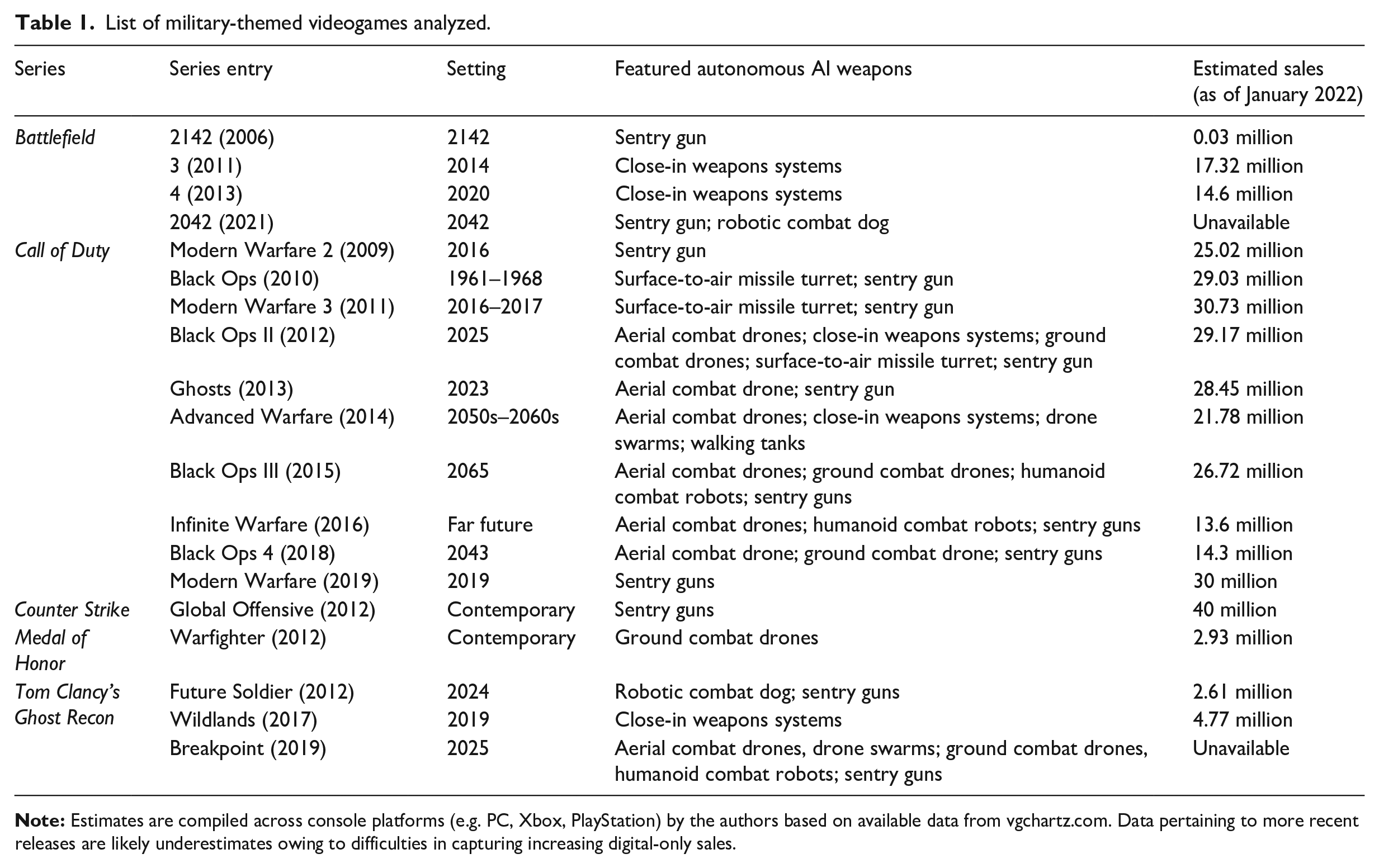

The 19 military-themed videogames we have identified as relevant to our study are set out in Table 1. Each videogame and its network of actors is a unit of analysis. Our inclusion criteria are that a videogame must: (1) be military themed and premised on conflicts not far removed from contemporary real-world circumstances (e.g. not featuring aliens – Halo series; or alternative histories – Metal Gear series); (2) be popular, meaning widely circulated; and (3) feature at least one AI weapon (e.g. autonomous sentry guns, combat drones, militarized robots). To identify whether a videogame meets our inclusion criteria, we trawled through lists of the best-selling military-themed videogames (i.e. IMDb.com, VGChartz.com) and examined plot summaries and weapons/gadgets/vehicles lists compiled by community ‘Wiki’ pages for each videogame and/or series (e.g. callofduty.fandom.com).

Table 1. List of military-themed videogames analyzed.

Given the digital nature of videogames and the fact that consumers, developers, and gaming media congregate on online platforms, netnographic qualitative fieldwork was employed (Kozinets, 2010). As we aimed to analyze all popular videogames featuring autonomous AI weapons we could identify, and not the social relations within online communities, we utilized a non-participatory approach (Bettany and Kerrane, 2016). Over six months, we immersed ourselves in the videos, imagery, and texts of Wiki pages, YouTube channels, and Reddit boards relevant to the games under study. Supplementing this data are press releases by videogame developers and news media stories. Overall, these materials enabled us to unpack various networks of humans and non-humans that shape AI-weapon portrayals in military-themed videogames.

The paradox of weaponized AI in videogames and distorted notions of human–machine interaction

We unpack our findings across the following two sections. In this first section, we identify a paradox in portrayals of AI weapons in military-themed videogames and the distorted notions of human–machine interaction they propagate. Specifically, we observe that AI weapons are portrayed as fully autonomous existential threats to humans while also being overcome by human protagonists who control and fight these same weapons with ease. In the following section, we theorize how these distorted translations of AI weapons emerge in light of our analytical framework.

Videogames set in contemporary settings include, but seldom emphasize, fully autonomous weaponized AI. These games feature autonomous sentry guns, as in many Call of Duty titles and Counter-Strike: Global Offensive (2012), and close-in weapons systems like the real-world Phalanx in Call of Duty: Black Ops II (2012) and Centurion C-RAM in Battlefield 3 (2011). Inclusions of these weapons reflect developer intentions to offer plausible warfare scenarios that feel authentic. As put by Taylor Kurosaki, developers Infinity Ward aim for games like Call of Duty: Modern Warfare (2019) to live up to their name by portraying ‘what the modern battlefield looks like’ (Snider, 2019).

This sensibility towards realism transfers to titles set in futuristic contexts, albeit with some creative liberties. For example, Call of Duty: Advanced Warfare (2014), set in 2054, features AI weapons like armed drones that follow the player and kill enemies on their behalf and a swarm variant that brandishes machine guns. While these technologies appear far removed from present AI-weapons developments, Michael Condrey of Sledgehammer Games emphasizes that if game designers ‘can’t point to R&D or a prototype, it can’t go in the game’ (Stuart, 2014). These AI weapons draw inspiration from contemporary weapons-development initiatives, like drone swarm projects in the USA, China, Russia, and the UK (Hambling, 2021).

Portrayals of AI weapons are intended by many developers to be warnings of their envisioned possibilities as insurmountable enemies to humans. This is indeed the intention of Ghost Recon: Breakpoint (2019), which depicts a 2025 teetering on dystopia. As associate producer Lucas Gissinger expresses: ‘We want players to have that feeling whenever they see a militarized drone. . . . You’re the hunted. . . . [Drones] can take more bullets . . . and last longer on the battlefield. . . . They are grounded in reality, but they are also high-tech enemies that perfectly fit the setting and lore of Ghost Recon Breakpoint’ (Reparaz, 2019).

However, while intending to express their dangers, the same videogames feature human protagonists who routinely overcome AI weapons, particularly as consumers become proficient players. As a Reddit user explains to a new Breakpoint player: ‘The drones go down much faster than you think and can almost be too easy to destroy if you know how to kill them. If you get detected, then go loud. Switch to full-automatic fire and take off your suppressor so you don’t give up -20% damage for stealth.’

A similar gripe is shared by another player: ‘Drones: The flying ones shouldn’t take a few shots to wreck, period.’

The ease with which players learn to destroy drones is prominent in Call of Duty: Black Ops II (2012), notably in its penultimate campaign mission entitled ‘Cordis Die’. The mission requires the player to protect the US president from drones in a war-torn Los Angeles. As seen in a gameplay video (Figure 1), players must use a surface-to-air missile turret to down scores of drones to complete the mission. Given players’ ease in learning how to destroy these AI weapons, they are portrayed as easy targets. As such, foreboding narrative messages of AI weapons being insurmountable dangers intended by developers are undercut by portrayals in which they come across as easy targets in gameplay.

Figure 1. YouTube gameplay video screenshot of a player destroying scores of drones with a surface-to-air missile turret in Call of Duty: Black Ops II (2012).

These contradictory portrayals embody videogames’ challenges in maximizing human agency for gameplay purposes while trying to reflect real-world military AI-weapons developments where human agency is being minimized (Garcia, 2018; Heyns, 2016). On one hand, games that claim authenticity must approximate contemporary military technologies (Jarvis and Robinson, 2021; Payne, 2012). Weapons portrayed in narratives as operating with limited to no human involvement resemble real-world weapons-development trends and partially arise from the need to provide suspense to players. On the other hand, in man-versus-machine scenarios, it is imperative for players to feel self-sufficient, powerful, and in control (De Zamaróczy, 2017; Salter, 2011). These conflicting aims twist notions of human–machine interaction towards accentuated human agency and away from real-world scenarios in which it is intended to be limited by AI weapons.

Distorted notions of human–machine interaction are reflected in several ways in military-themed videogames. First, in terms of operation, AI weapons are positioned as subordinate to inviolable in-game humans who remain in control. Human protagonists, when delegating killing responsibilities to AI weapons, are technologically augmented. Even in videogames set in contemporary settings, autonomous weapons like sentry guns flawlessly avoid ‘friendly fire’, only posing threats to enemies. Such representations of AI weapons erase many real-world AI-weaponization threats to human control that challenge fundamental principles of international humanitarian law such as proportionality, distinction, and accountability (Altmann and Sauer, 2017; Heyns, 2016). For instance, current military applications of autonomous weapons like Identification Friend or Foe (IFF) systems are riddled with complexities in successfully discriminating between civilians and combatants, let alone allied and enemy forces (Sharkey, 2010).

Second, in terms of effects, the lethality of AI weapons to humans is not well depicted, especially if we assume that the efficacy of future versions will overcome present technical limitations. Resonating with prior scholarship (Berents and Keogh, 2018; Payne, 2014), we observe that popular videogames sanitize the potential of AI weapons to mutilate human beings. For instance, outside of rare instances in campaign-mode cinematic cutscenes, bodies keel over without blood or dismemberment commensurate to AI-weapons attacks with heavy armaments. As almost all of the games we studied, like other popular videogames, did not feature civilian casualties (Payne, 2014; Salter, 2011), the lethality of AI weapons is glimpsed only from the perspective of heroic in-game soldiers. The consequence is that debilitating real-world impacts of delegating life-and-death decisions to AI for human security purposes are glossed over. The games we examined would not enjoy the popularity, acceptance, and commercial success they do if they represented human–machine interaction otherwise. In the next section, we expand on this point.

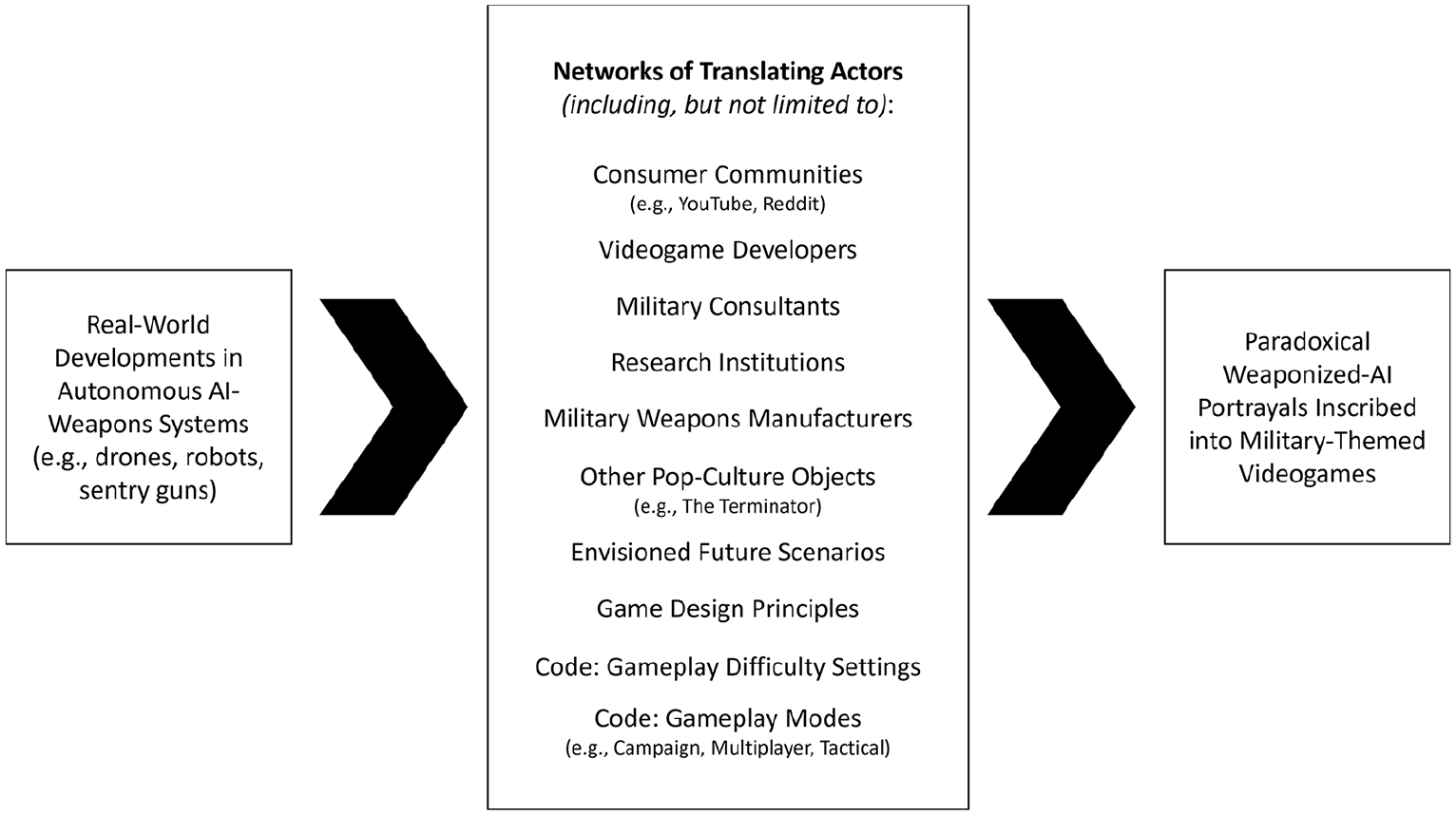

Commercialized translations of AI weapons in videogames

The AI-weapons paradox and the distorted notions of human–machine interaction it propagates are symptomatic of commercial influences that shape videogames as a form of consumer entertainment. According to our analytical framework, videogames have within them a particular translation of AI weapons inscribed by networks of human and non-human actors (Canniford and Bajde, 2015; Giesler, 2012). The actors involved in videogame production and consumption make certain representations of AI weapons appear more logical, reasonable, or inevitable than others.

We illustrate this translation process in Figure 2. In this diagram, we show that real-world AI-weapons developments serve as the foundations for their videogame translations. Quick examples include Israel’s Iron Dome air-defense system, Russia’s Uran-9 ground combat vehicle, and South Korea’s SGR-A1 anti-personnel sentry weapons (Bode and Huelss, 2018). However, our analyses also foreground inconspicuous actors that translate emerging AI weapons into the distorted forms that are inscribed into videogames. Below, we theorize how these actors shape AI-weapons portrayals when networked together.

Figure 2. Videogame AI weapons ‘translation’ process.

We begin with the effects of actors more obvious in their commercial influences on the videogames analyzed. Consistent with prior research on military-themed videogames, developers often hire military consultants to learn about current battlefield realities (Robinson, 2019). Our data show similar influences. However, in our context, military consultants are also tasked with projecting plausible and realistic future battlefields, and these consultations extend to research institutions. For instance, the developers of Call of Duty: Advanced Warfare (2014) invited military personnel and NASA consultants to inform this game’s vision of 2054 armaments. According to Michael Condrey of Sledgehammer Games:

We research experts in the field and reach out directly. Retired Delta Commander, Dalton Fury, is an example. . . . The result is that every gun, vehicle and aircraft in the game has a basis in current weapons research. (Stuart, 2014)

Walking tech . . . we saw it! . . . It was at NASA. It was a tank . . . that they had built to send it to mine rare minerals on asteroids. . . . [I]t had six legs and wheels and it could climb things . . . and you were a turret away from what you see in the game. (GamesCentral, 2014)

Consultations have likewise involved AI-weapons manufacturers. This was indeed the case for Ghost Recon: Breakpoint (2019). As reported by Bertz (2019):

Ubisoft spoke with . . . a former operations officer in the French Ministère de la Défense. He told them to take a meeting with Milrem Robotics, a military contractor that’s been making drones since 2013 . . . . [After the meeting] Ubisoft felt good about making drones a centerpiece of the adversarial experience in Breakpoint.

ANT considers organizations as hybrid networks of humans and non-humans (Law, 1994). In our data, research institutions and weapons manufacturers span various types of personnel, facilities, and equipment, as well as AI machines in development themselves (armed/non-armed). Comparisons between Milrem Robotics’ advertised AI weapons on YouTube and many of the militarized drones in Breakpoint illustrate how such networks imprint upon videogames. We showcase one comparison in Figure 3.

Figure 3. YouTube video screenshot comparisons between the ‘THeMIS UGV’ advertised by Milrem Robotics (2017) and the ‘Andras’ UGV model in Ghost Recon: Breakpoint (2019) (Ubisoft, 2020).

Figure 3 illustrates the imprints of Milrem Robotics’ ‘THeMIS’ autonomous unmanned ground vehicle (UGV) on AI weapons like the ‘Andras’ combat drone in Breakpoint. The likenesses are found not just in the appearance of both AI weapons but also in how each moves on a battlefield. Analysis of THeMIS demonstration footage and Breakpoint gameplay shows how the in-game Andras drone similarly swivels its turret and base in different directions as it changes direction or engages targets.

However, one translated deviation between the THeMIS and the AI weapons in Breakpoint lies in the red lights that glow under the armor of the videogame iterations. Here we detect another non-human’s imprints. Breakpoint’s drones (and those in many Call of Duty titles) display the signature ‘glowing red eyes’ trope of the Terminator films’ killer robots. A few months after Breakpoint’s release, these imprints became less subtle as a licensed Terminator set of missions was made available to players. In these missions, the player battles the titular terminators from the films, with the narrative suggesting that these killer robots are descendants of the AI weapons featured in the base game.

Envisioned future scenarios especially imprint on portrayals of AI weapons through the game-worlds of titles set in futuristic contexts. These scenarios take on lives of their own as non-human actors that condition how advanced AI weapons technologies and their lethal efficacies are translated, at least in terms of narrative intentions. An example is the world of Call of Duty: Black Ops III (2015). Studio head Mark Lamia explains Treyarch’s approach to envisioning autonomous combat technologies in 2065:

We look at Black Ops 2 and say, ‘OK, these drone strikes in 2025 occurred. What happens next in the world?’ . . . Politicians across the world create laser defense systems . . . . Suddenly, what does that do for warfare? It brings everything back down to the ground. What happens when we’re back down on the ground? . . . Massive investments in advanced military robotics. (Crecente, 2015)

Treyarch’s 2065 machinic warfare is most visceral at the end of Black Ops III’s first mission, entitled ‘Black Ops’, where the player fails to be extracted from a battlefield and is left behind with an enemy combat robot. During this cinematic cutscene, from a first-person perspective, the player’s arms are ripped from their sockets and one of their legs is broken by the robot (Figure 4). This scene prompts bemused laughter and expletives from many players in the gameplay videos analyzed. While wildly entertaining, the sequence imposes a rare sense of helplessness on the player as they vicariously experience the dismemberment. This is because the sequence is an obligatory cinematic cutscene that is part of the game’s structured narrative (no gameplay).

Figure 4. Screenshot of cinematic cutscene in Call of Duty: Black Ops III (2015) in which the player is dismembered by an enemy combat robot.

Scenarios like these occasionally feature in the futuristic titles studied and attempt to translate weaponized AI as terrifying lethal threats that diminish human agency, consistent with current real-world visions of AI-augmented warfare. However, the imprints of another interrelated set of actors distort translations of AI weapons in such a way that players’ sense of control is instead enhanced. These non-human actors are game design principles, difficulty settings, and gameplay modes in which videogames’ code seeks to structure enjoyable gaming experiences for consumers on behalf of their human creators (Callon, 1984).

Call of Duty: Modern Warfare (2019) campaign gameplay director Jacob Minkoff and Ghost Recon: Breakpoint (2019) military advisor Emil Daubon explain that game design principles regarding player control and enjoyment steer videogame development:

Jacob Minkoff: It’s so important for people to have entertainment products that feel like catharsis, so they get to see a hero overcome odds in a world they recognise as their own, to take power, to take action that makes the world that we all live in and fear a little better. (Forward, 2019, emphasis in original)

Emil Daubon: It’s about striking that balance. It’s about maintaining authentic and real details while remaining playable . . . . The reality is that conflict can sometimes be boring. (Shacknews Interviews, 2019)

Such principles are implemented through the code of each game, which governs its game-world, characters, objects, and how they interact. We find that code shapes AI-weapon portrayals in videogames. For instance, gameplay difficulty settings are a common way that code translates AI weapons into distorted videogame forms. Consider the difficulty settings in Breakpoint that players set at the start of the game. These settings govern the AI weapons as much as computer-controlled human enemies:

Arcade: Easiest mode, for a laid-back experience. Enemies take more time to detect the Ghosts [player’s human character/team]. Ghosts take less damage from enemies and regen health sooner. Strong aim assist.

Regular: Normal mode, for most players. Enemies can compete with elite soldiers.

Advanced: Strategic mode for advanced players. Enemies are more precise and deadly. Ghosts health regen will take longer to start.

Extreme: Simulation mode, a realistic scenario for remarkably tactical players. Enemies are extremely lethal, aware and skilled. Ghosts health regen will take longer to start.

Many players expressed in forums that they choose the settings that best balance the game being fun and challenging according to their tastes:

Regular is the most fun in my opinion. It’s not fun to get spotted from 600m away by a f-ing drone and get one-shotted.

I usually play on Regular. If I want extended gunfights, I play on Arcade. That way, I don’t get killed in two shots from super aim enemies.

I always play on advanced or extreme. Anything else and you are just cutting down enemies way too easily.

Difficulty settings translate the portrayals of AI weapons, varying how lethal they are to humans. We find that the touted real-world lethality of AI weapons routinely gives way when players choose lower difficulty settings that render such weapons easier targets. It is only when players opt for more challenging settings that the diminished human agency stressed in real-world discussions is translated somewhat more accurately. In these rarer situations, narratives of AI-weapon lethality are not betrayed by gameplay (Hocking, 2009; Payne, 2014).

Last, we find that gameplay modes coded into military-themed videogames translate AI weapons in different ways (campaign vs multiplayer vs tactical modes). Campaigns offer branching series of linear missions that are interspersed with cinematic cutscenes that drive narratives. This mode is largely single-player in the games studied. The AI-weapons videogame paradox is heightened in campaign modes, as their focus on narratives leaves them prone to dissonances with their first-person shooter gameplay.

Although multiplayer modes emphasize gameplay, they too bear paradoxical AI-weapon translations. In multiplayer modes, players go head to head to amass the most kills as individuals or as a team. Players can delegate the killing of other players to AI weapons controlled by videogame code. However, the availability of AI weapons in multiplayer is famously governed in Call of Duty titles by their translation as ‘rewards’ when players slaughter multiple other players in a row without dying. Despite these weapons’ hyped lethal efficacies when recontextualized as rewards, their performance as allies is a mixed bag for players. As an example, in a YouTube video, a player reviews the ‘Vulture’ aerial drone’s usefulness in Call of Duty: Infinite Warfare (2016). Its appeal is its ability to follow a player and autonomously fire upon enemy players for 60 seconds. Yet the reviewer remarks, ‘the problem of the Vulture in general is that it’s fragile, it can be destroyed with basic weapon fire very easily’. In the comments, another player sympathizes, joking that the Vulture is like ‘wet paper you pray will kill other players’. Based on our analyses, we suspect AI weapons’ variable multiplayer efficacy serves to give opposing human players a chance to overcome their purported threat as a challenge. This is another way that players’ human agency is enhanced at the expense of AI-weapons portrayals for gameplay-experience purposes.

Tactical modes in which players take concurrent strategic control of multiple entities on a battlefield from a bird’s-eye view were rare in our data. However, we highlight this mode as it offers a unique translative variant of AI weapons – as tools that must coordinate well with other humans and non-humans on the battlefield. For instance, in Call of Duty: Black Ops II (2012), success in the game’s ‘strike missions’ is intended to rely on players’ managing how well the soldiers, vehicles, and AI weapons they command work together to achieve mission objectives (see Figure 5). Players can intervene in these entities’ autonomous decisionmaking by redirecting their movements and targeted enemies if they desire, as part of responding to changing battlefield circumstances. By transporting players outside of a first-person perspective, tactical modes shift AI weapons from visceral threats to abstract entities akin to pieces on a chessboard.

Figure 5. Screenshot of a ‘strike mission’ in Call of Duty: Black Ops II (2012) in which players can control multiple soldiers, vehicles, and AI weapons at once. In this mission, the player must coordinate various entities to defend a convoy.

Notwithstanding tactical gameplay’s unique translation of weaponized AI, the AI-weapons paradox still manifests, albeit in a different form. Notably, in Call of Duty: Black Ops II (2012) many Reddit users lament how ineffective AI weapons are in autonomously killing in-game enemies they target, provoking them to take first-person control of soldiers or these weapons themselves. In effect, AI weapons are portrayed more as ineffective allies than the insurmountable strategic threats the game promises them to be, to the detriment of gameplay experience:

Strike missions could have been fun, but the game’s AI [coding] sucks. You pretty much had to play first person as one of your soldiers and hope you can do the objective yourself before you run out of reinforcements.

The missions were broken. The friendly game AI is horrific and I’m certain that the only reason I beat these missions was the badass effort I put in.

Concept is cool, but the gameplay just didn’t work. Probably why the [developers] never included strike missions in the games since.

Thus far, we have explained how various human and non-human actors enable paradoxical videogame translations of AI weapons as both insurmountable enemies and easy targets. Our concern has been the distorted notions of human–machine interaction these portrayals of AI weapons may instill in players. We next discuss how these concerns speak to theoretical conversations at the nexus of international relations, pop culture, game studies, and AI weaponization scholarship.

Popular culture, videogames, and the public

Our findings align with research that argues that pop culture’s effects on the public may be overstated in international relations scholarship. For instance, Bos (2018) finds that Call of Duty consumers who only play multiplayer modes over campaign missions absorb little of the geopolitical meanings the videogames offer (see also Höglund, 2014). Similarly, Carpenter (2016) illustrates that the more far future–oriented a pop-culture object is, the less likely consumers are to see the threats it portrays as warranting immediate political action. It is plausible that owing to futuristic warfare settings or players only playing multiplayer modes, the games we have studied have not made strong impressions on consumers. However, we argue that narrative content (or a lack thereof in multiplayer modes) is only part of the story when it comes to pop culture and its bounded abilities to imprint upon the public.

We uniquely illustrate that creators’ intentions to express politicized discourses to audiences through narratives can be complicated by the commercial requirements of pop-culture objects. This insight challenges extant international relations scholarship that implicitly assumes that pop-culture objects are the unfettered products of creators’ discursive intentions. Such an assumption can be seen in research approaches that connect academic readings of pop culture to public opinion expressed in surveys, blogs, and news media (e.g. Horowitz, 2016; Young and Carpenter, 2018). These studies consequently imply that any deviations from intended meanings simply stem from consumers and creators having different interpretive frames. Indeed, as Weldes (1999) points out, all forms of pop culture as ‘texts’ are prone to multiple readings. However, our work resonates with game studies scholarship in suggesting other possibilities.

By viewing developers as entangled in networks of human/non-human actors that imprint on their videogame creations, we offer game studies an ANT-informed theorization that shows that creator intentions can be complex (Payne, 2012). While not directly revealing actors’ intentions to legitimize militarization and sanitize visions of war as in literature on the military–entertainment complex, our data demonstrate that developers’ desires are contorted by commercial needs to entertain. That is, to be fun and profitable, videogames must intensify senses of human control in gameplay (Ciută, 2016; De Zamaróczy, 2017). This requirement runs contrary to developers’ narrative intentions to portray AI weapons as lethal threats, intentions that draw on real-world circumstances in which such technologies are applied in ways that diminish human agency on battlefields. As such, diminished human agency in AI warfare is difficult to translate into videogames vested in giving players senses of control, ironically through their interactivity.

Given this understanding, artistic exposés of current AI-weaponization efforts and warnings of dystopian futures are undercut if they are not safe bets to be popular and profitable through enjoyable gameplay (see Young and Carpenter, 2018). It follows that differing creator and audience interpretations can emanate from commercial forces that stunt pop culture’s potentials to translate the real world. Parallels abound for films, such as critically acclaimed social commentaries grounded in realism that rarely equate to box-office success or reach wide audiences (Payne, 2014).

Our work emphasizes that it is not so straightforward to reduce a pop-culture object to a ‘text’ that reflects how a society is thinking about new political developments – a common conceptual move in international relations (e.g. Buzan, 2010; Nexon and Neumann, 2006). Rather, intertextual treatments can miss other forces that steer content through the networks of actors involved in the creation of pop-culture objects (Payne, 2012). In our context, although developers warn of AI weapons’ dangers in narratives, these meanings are inevitably betrayed by conflicting gameplay design choices that are intended to make the same videogames fun and widely popular (Hocking, 2009; Payne, 2014). As many consumers in our data lament – it is not fun to be immediately killed in-game by lethal AI weapons. Ultimately, this means that pop culture can offer societal reflections on new political developments, albeit filtered and limited through lenses of what is not only socially acceptable but believed to be mass marketable to consumers (Payne, 2012).

It is crucial to pay attention to the consequences of videogames’ ludic/commercial dimensions in understandings of AI weapons and, more broadly, warfare technologies and arms control (Jarvis and Robinson, 2021). While not necessarily embedding support for AI weaponization, AI-weapons portrayals in the videogames we examined construct and perpetuate the idea that humans remain in control even though these weapons appear to be able to select and engage targets on their own. We stress that this finding does not imply that consumer understandings of human–machine interaction are inherently distorted from playing such games. Experiences of playing a game are deeply individual – the player’s skills, previous experiences, and knowledge all play a role in shaping their interpretations (Huotari and Hamari, 2017). However, paradoxical portrayals of AI weapons in videogames make it more likely that distorted notions of human–machine interaction are instilled in consumers. Given the popularity of videogames, distorted understandings of human–machine interaction may blunt opportunities for public critique of various stakeholders’ desires to grant autonomy to weapons under the premise that they benefit humankind on a global scale.

Distorted AI-weapons portrayals in videogames also signal a lack of nuance and variety in how human–machine interaction is represented, stifling other possibilities to reach public awareness and understanding. In videogames, AI weapons are generally programmed as operating in a fire-and-forget mode to attack players or enemy forces with full autonomy. Beyond full autonomy to select and engage targets, there are other human–machine arrangements. These include, in order of increasing autonomy, an AI weapon: (i) only carrying out an attack on human-selected targets; (ii) providing humans with a list of targets it proposes to attack; (iii) selecting a target to attack itself, where the attack first requires human approval to initiate; and (iv) selecting a target and offering humans a time-restricted veto before its attack is initiated (Sharkey, 2016). At the UNGGE on lethal autonomous weapons systems, such forms of human–machine interaction and their appropriate application contexts are still being debated. Although we cannot conclusively comment on the basis of our data, we suspect that these possibilities that would reinsert players into targeting decisions are not translated as they may not always fit the fast-paced action popular in military-themed videogames. However, without translative representation in pop-culture objects, these possibilities are less able to inform the public imagination.

As a consequence, political engagement by the public cannot leverage these alternative possibilities for human–machine interaction that free AI weaponization from being reduced to an all-or-nothing issue regarding autonomy. In our view, a binary framing does a disservice to conversations unfolding between proponents and opponents of AI weapons (Beier, 2020; Bode and Huelss, 2018). In particular, we hope that public awareness of human–machine interaction alternatives can strike a balance between ‘good’ and ‘bad’ political, moral, and societal effects that AI weapons are envisioned to afford. Our hope is pragmatic, given that many academics concede that state and non-state competition likely makes AI weaponization inevitable (Horowitz, 2019). That is, if the ideal of arms-race prevention is unattainable (Garcia, 2018), regulation towards alternative possibilities for human–machine interaction is a realistic way forward.

Following these premises, if pop culture is a means through which the public can learn about AI weapons and their possibilities, then showing off the nuance and variety in human–machine interaction arrangements offers opportunities for policymakers, pop-culture producers, and other stakeholders. In the first instance, this nuance and variety can enable richer and more grounded translations of emerging AI-weapons realities to better inform the public. However, an additional benefit is that these alternative human–machine arrangements may also contain ideas that are provocative for audiences to consume, the caveat being whether or not these possibilities have ludic and commercial desirability.

Conclusion

In this article, we have explored a paradox in which military-themed videogames portray weaponized AI as both insurmountable enemies and easy targets for humans. This paradox captures distorted notions of human–machine interaction that deviate from contemporary weapons development and deployment discussions in political and academic debates. To do so, we have leveraged ‘translation’ from ANT (Callon, 1984) to offer a theorization of how entangled humans and non-humans produce these conflicting portrayals in pursuit of making videogames fun, popular, and commercially viable. In so doing, we contribute to conversations at the intersections of international relations, popular culture, game studies, and AI weaponization. We specifically demonstrate that pop culture’s abilities to inform public understandings of political realities are bounded not just by its narratives, but also by the commercial requirements of its objects’ forms (e.g. films vs videogames). We additionally caution that academic treatments of pop culture as ‘texts’ can miss how the societal reflections they contain may be filtered to those most marketable to the public, conditioned by various human and non-human actors.

On the basis of our research, future scholarship will benefit from studying different cultural forms and how their international relations–related messages and public impacts are delimited by various forces. For instance, research can investigate what makes a particular pop-culture form commercially viable and able to disseminate an international relations–related message without undermining it. Late-night TV satire à la Last Week Tonight with John Oliver, for example, likely needs to balance informativeness, marketability, and humor (paralleling gameplay in videogames). Extant research already suggests that the type of humor deployed affects how well arguments are received by audiences (e.g. irony versus sarcasm) (Polk et al., 2009). Links to how commercial viability is harmonized with humor and informativeness will say much about how impactful late-night TV can be on political discourse and action.

We ponder similar questions regarding what can make other cultural forms popular, commercially viable, and impactful in international relations contexts, from films, books, and theatre, to music. For instance, are international relations representations limited to what ideas fit a three-act narrative structure with a happy ending in blockbuster films or catchy choruses in music? Accordingly, we hope our research offers a much-needed lens through which to better understand what may hold pop culture back from effectively engaging the public on mounting issues like AI weaponization. In this manner, through greater public pressure for effective international regulation or prohibition (Bode and Huelss, 2018; Garcia, 2018), humankind can better navigate both the real and the imagined complexities that autonomous AI weapons will continue to provoke in the coming years.

Footnotes

Acknowledgements

The authors would like to thank the AutoNorms team, session participants at both the 2022 International Studies and Emerging Technologies Working Group Conference and the 2022 Future of War Conference, the Radboud Marketing Group, the Radboud Political Science Group, and the editors and anonymous reviewers for their constructive comments on prior versions of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The work presented in this article was supported by the European Research Council’s Horizon Europe program (Grant no. 852123).