Abstract

References to the Terminator films are central to Western imaginaries of Lethal Autonomous Weapons Systems (LAWS). The puzzle of whether references to the Terminator franchise have featured in the United States’ international regulatory discourse on these technologies nevertheless remains underexplored. Bringing the growing study of AI narratives into a greater dialogue with the International Relations literature on popular culture and world politics, this article unpacks the repository of different stories told about intelligent machines in the first two Terminator films. Through an interpretivist analysis of this material, we examine whether these AI narratives have featured in the US written contributions to the international regulatory debates on LAWS at the United Nations Convention on Certain Conventional Weapons in the period between 2014 and 2022. Our analysis highlights how hopeful stories about what we coin ‘machine guardians’ have been mirrored in these statements: LAWS development has been presented as a means of protecting humans from physical harm, enacting the commands of human decision makers and using force with superhuman levels of accuracy. This suggests that, contrary to existing interpretations, the various stories told about intelligent machines in the Terminator franchise can be mobilised to both support and oppose the possible regulation of these technologies.

References to the Terminator franchise are a ubiquitous feature of the study and popular imagination of Lethal Autonomous Weapon Systems (LAWS), defined as weapons which “select and apply force to targets without human intervention” (ICRC, 2021). 1

As former United States Under Secretary of State for Arms Control and International Security Christopher Ford asks: [w]ho, at this point, doesn’t approach a discussion of lethal autonomous weapons systems without thinking, even inadvertently, of the villainous “Skynet” . . . and the robot-assassins it dispatches against the noble but hard-pressed remnants of humanity in Hollywood’s Terminator franchise? (Ford, 2020: 2).

The media coverage given to LAWS regularly features images of metallic silver skulls with piercing red eyes – an iconography partially inspired by the T-800 Terminator played by Arnold Schwarzenegger (Carpenter, 2016: 57; Cave et al., 2018: 17; Dillon and Craig, 2022: 1). Terminator references also appear in the informal conversations between participants at the United Nations Convention on Certain Conventional Weapons (CCW), which has been meeting since 2014 to discuss the possible global governance of these technologies (Carpenter, 2016). Because the US is one of the most active contributors to the CCW process (Bode et al., 2023), its regulatory position on LAWS has major repercussions. This article consequently examines how, if at all, the artificial intelligence (AI) narratives presented in the Terminator franchise featured in US statements on LAWS at the CCW between 2014 and 2022, and what this may mean for the international regulatory debates on these technologies.

Taking up the call to examine the reach and influence of the AI narratives presented in works of popular culture (Cave and Dihal, 2019: 74), our analysis begins from the premise that, despite its technical inaccuracies, the relationship between the Terminator franchise and the regulatory debate on LAWS is deserving of greater scrutiny. Natale and Ballatore (2020: 5) note that the type of technological ‘myths’ depicted in these films ‘may have deep effects, even if its tenets turn out to be grossly incorrect’. For example, science-fiction comparisons are widely criticised for distracting public attention from the more immediate challenges posed by technologies which operate with various degrees of autonomy (Hermann, 2023; Johnson and Verdicchio, 2017; Weber, 2021). Stuart Russell, a prominent AI researcher and public commentator on these technologies, has called on journalists to ‘stop using this image [of the Terminator] for every single article about autonomous weapons’ (CBC Radio, 2022). American policymakers have similarly criticised the Terminator imaginary, arguing that it narrows the space for more nuanced thinking about the challenges and opportunities presented by the development of these technologies (Ford, 2020). These assessments speak to the perceived influence of the Terminator franchise in shaping perceptions of the regulatory debates on LAWS and highlight the need for greater research in this area.

This article connects the emerging study of AI narratives with popular culture and world politics literature. Despite their identification as a ‘touchstone narrative’ (Cave et al., 2020: 5) in popular understandings of LAWS, the political ramifications of the various stories told about intelligent machines in the Terminator franchise have received little scrutiny within International Relations (IR) scholarship. 2 This is surprising given the attention paid to other popular culture franchises featuring ‘killer robots’ such as Battlestar Galactica (Buzan, 2010; Kiersey and Neumann, 2013) and these films’ perceived influence on American politics (Broxmeyer, 2010). The AI narrative literature, in contrast, has so far been principally focused on categorising the different types of stories that have and have not been told about these technologies (Cave et al., 2018; Cave and Dihal, 2019; Chubb et al., 2022; Hudson et al., 2023). While it is suggested that awareness of AI narratives may influence the governance of AI-associated technologies (Cave and Dihal, 2019: 74; Hudson et al., 2023: 198; Sartori and Bocca, 2023: 454), their circulation in the global regulatory debates on LAWS remains unexplored. This gap has wider implications for researchers interested in the study of emerging technologies because the CCW remains the principal institution at which states parties meet to discuss the possible governance of weaponised forms of AI. This article therefore has two principal aims. The first is to unpack the potential repository of different stories told about intelligent machines presented in the first two Terminator films, paying particular attention to the depiction of what Charli Carpenter labels the ‘Good Terminator’ in Terminator 2: Judgement Day (Carpenter, 2016; Young and Carpenter, 2018). The second is to provide an interpretivist account of how these AI narratives featured in the content of US contributions to the CCW process on LAWS in the eight-year period between 2014 and 2022. 3

Our analysis of the LAWS imaginaries presented in the Terminator franchise – arguably, the most culturally enduring and impactful science-fiction depiction of ‘killer robots’ within the United States – highlights the value of broadening existing categorisations of AI narratives to include a hopeful set of stories about what we coin machine guardians: anthropomorphized forms of AI which protect humans from physical harm, follow instructions and can use force with superhuman levels of accuracy. This study also makes a theoretical contribution to the growing study of AI narratives. Much of the existing AI narratives literature assumes that stories about intelligent machines directly influence the perceptions of their audiences, whether this be scientific researchers or members of the public. Recent research highlights the role that states can play in mediating certain AI imaginaries and development pathways (Bareis and Katzenbach, 2022). In the case of the Terminator films, LAWS are simultaneously presented as an existential threat to humanity as well as its ultimate saviour (Carpenter, 2016; Young and Carpenter, 2018). Such unevenness invites the study of whether (and if so how) different stakeholder groups may promote specific sets of AI narratives featured in works of popular culture, and what this may mean for the global regulatory debates on LAWS.

Our main finding is that the US’ opposition to legally binding international regulation on LAWS at the CCW has mirrored aspects of the ‘Good Terminator’ imaginary. We suggest that the various stories told about intelligent machines in the Terminator films can be mobilised to both support and oppose the possible regulation of these technologies. Consistent with the potentially ‘disabling’ effects that explicit references to the franchise could have on policy outcomes (Carpenter, 2016; Neumann and Nexon, 2006; Stimmer, 2019), we find no direct references to the Terminator films in US statements at the CCW. Importantly, however, bringing the study of AI narratives into greater dialogue with the popular culture and world politics literature provides a framework for studying how the various imaginaries about intelligent machines featured in works of science-fiction can be invoked without directly mentioning these franchises by name. As we show, elements of the machine guardian narrative popularised in Terminator 2: Judgement Day have been mirrored in the US’ statements at the CCW, with both implying that these technologies can be developed to (1) protect humans from physical harm, (2) enact the commands of human decision-makers and (3) use force with superhuman levels of accuracy.

For the various stakeholder groups contributing to the ongoing debate on LAWS as well as researchers working at the intersection of IR and Science and Technology Studies (STS) scholarship, our analysis supports further research into how the various AI narratives featured in the Terminator films and other popular culture franchises are presented in the regulatory debates on LAWS and other emerging technologies. Building on earlier research (Carpenter, 2016), it also contributes towards future scholarship which examines how the various audiences participating in the CCW process infer meaning from the AI narratives presented in popular culture artefacts and assesses what impact this may have on political outcomes in this area.

The remainder of this article is structured into three parts. First, we synthesise insights from the growing literature on sociotechnical imaginaries and AI narratives to introduce the theoretical framework informing our analysis. Second, we unpack the different AI narratives featured in The Terminator and Terminator 2: Judgement Day to draw out the ‘machine guardian’ imaginary. And third, by drawing insights from the popular culture and world politics literature, we trace the circulation of some of the AI narratives featured in the Terminator films within the US’ regulatory discourse on LAWS. We conclude by drawing out the implications of our analysis for the global regulatory debates on these technologies.

Sociotechnical imaginaries and AI narratives: a framework for analysis

The sociotechnical imaginaries framework has become a prominent feature of recent Science and Technology Studies (STS) scholarship. It has been heavily influenced by Sheila Jasanoff’s (2015) research on the ‘co-production’ of scientific research and social order. Sociotechnical imaginaries are defined as the ‘collectively held, institutionally stabilized, and publicly performed visions of desirable futures, animated by shared understandings of forms of social life and social order attainable through, and supportive of, advances in science and technology’ (Jasanoff, 2015: 4). The substance of these sociotechnical imaginaries can reflect intersubjective understandings of ‘good’ and ‘evil’ and their meaning can be shaped by the actions of policymakers who promote certain imaginaries over others (Jasanoff, 2015). According to Jasanoff (2015), the study of sociotechnical imaginaries helps explain discrepancies in understandings of technology across different geographical and historical contexts. It can therefore be used to unravel the contingent ‘integrated material, moral, and social landscapes’ which reflect, and are in turn shaped by, scientific research (Jasanoff, 2015: 4).

Jasanoff’s conceptualisation of sociotechnical imaginaries as culturally distinct ‘way[s] of sense-making, and thus world-making’ (McCarthy, 2021: 297) has been applied to the study of many technologies including those related to AI. Jutta Weber (2021), for example, has unpicked the sociotechnical imaginaries featured in the 2017 Slaughterbots video released by the Future of Life Institute and Stuart Russell, highlighting the need for ‘new and productive imaginaries’ of LAWS. Jascha Bareis and Christian Katzenbach (2022) have mapped the imaginaries animating the national AI strategies published by China, France, Germany and the United States. They argue that examining the various ‘technology narratives’ presented in these documents enables researchers to better understand how these different cultures have conceived ‘desired [AI] futures’ (Bareis and Katzenbach, 2022: 859). As Lucy Suchman’s (2022) research into the Pentagon’s fielding of machine intelligence and automation technologies underscores, sociotechnical imaginaries can impact public policy by influencing the funding and design of emerging technologies (Bareis and Katzenbach, 2022).

The study of sociotechnical imaginaries has also invited debate on AI narratives, which are broadly defined as the stories told about intelligent machines in works of fiction, non-fiction and in the media (Cave et al., 2018: 5). AI narratives serve as the ‘fundamental animators of sociotechnical imaginaries’ (Cave et al., 2020: 7). They are the conduits through which imaginaries regarding the meaning and possible impacts of AI-associated technologies can be communicated to multiple audiences (Cave et al., 2020: 7). The substance and reproduction of AI narratives are suggested to have far-reaching social, political and economic implications (Hudson et al., 2023: 198; Sartori and Bocca, 2023: 443). In some assessments, they ‘form the backdrop against which AI systems are developed, and against which these developments are interpreted and assessed’ (Cave et al., 2020: 7). Knowledge of particular AI narratives has been suggested to influence the views and actions of multiple stakeholder groups – AI developers, policymakers, members of the public – including those participating in the regulatory debates on these technologies (Cave et al., 2020: 7–10; Cave and Dihal, 2019: 74; Dillon and Schaffer-Goddard, 2022).

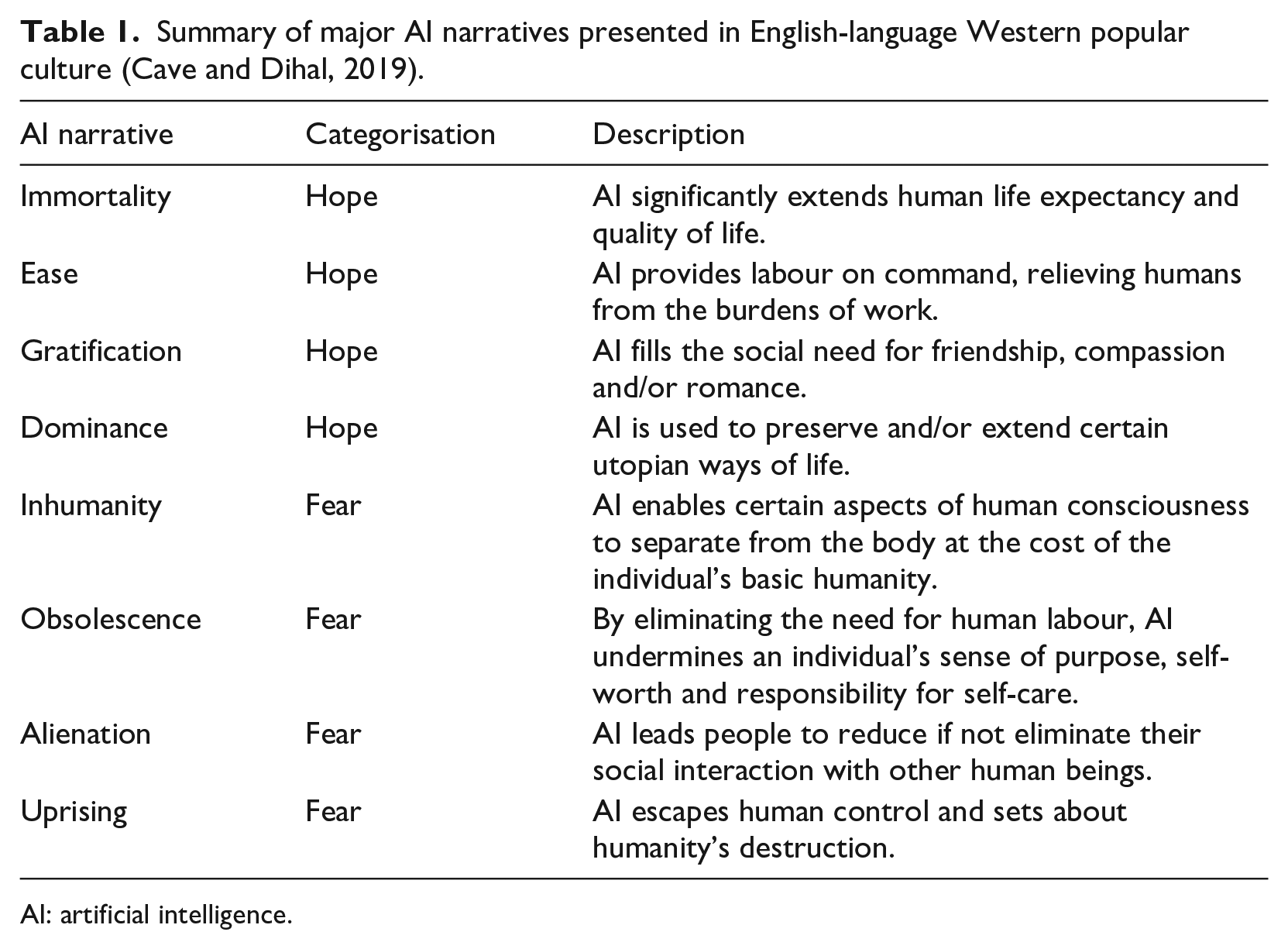

Derived from the study of three hundred contemporary works of fiction and non-fiction, Stephen Cave and Kanta Dihal (2019) have categorised the most common AI narratives featured in the Anglophone West. As summarised in Table 1, they have identified two overarching sets of AI narratives. Hopeful AI narratives present such technologies as a transformative cause of human immortality, ease and gratification, and as a vehicle for preserving the dominance of certain utopian ways of life. Fearful AI narratives, in contrast, are animated by the concern that autonomous machines will reduce (if not eliminate) human control over economic, political and military outcomes (Cave and Dihal, 2019: 75). They explore the idea that AI will trigger inhuman behaviours, produce human obsolescence and social alienation, and be the catalyst of a machine uprising in which these technologies overthrow and set out to destroy their human creators.

Table 1. Summary of major AI narratives presented in English-language Western popular culture (Cave and Dihal, 2019).

AI: artificial intelligence.

The study of AI narratives requires qualifications. As encapsulated in the motif of anthropomorphized ‘killer robots’, the unrealistic depiction of intelligent machines in science-fiction can generate exaggerated hopes and fears regarding these technologies. Furthermore, AI is often used as a metaphor for telling stories about complex social issues including sexism and inequality (Hermann, 2023). In the context of the Terminator, the films’ creator James Cameron explains that they are ‘not really about the human race getting killed by future machines’ but rather about ‘us losing touch with our own humanity and becoming machines, which allows us to kill and brutalize each other’ (Keegan, 2010).

Importantly, however, while the AI narratives literature provides an analytical framework for interpreting the plurality of different LAWS imaginaries presented in the Terminator films, it is underutilised in the study of the global regulatory debates on these technologies. Much of the existing AI narratives literature has focused on cataloguing the different types of stories which have (and have not) been told about intelligent machines in the Anglophone West (Cave et al., 2019; Cave and Dihal, 2021; Chubb et al., 2022; Hudson et al., 2023). This has invited the study of how these stories circulate among different audiences including the general public (Sartori and Bocca, 2023) and AI researchers (Dillon and Schaffer-Goddard, 2022). Audience interpretations of popular culture matter not only because these dynamics can help explain the political significance of such works (Carpenter, 2016; Crilley, 2021: 172–173; Young and Carpenter, 2018) but because interpretations of the various meanings encoded within these materials can be influenced by the contingent experiences and perspectives of audience members (Pears, 2016).

Our analysis contributes to the growing study of AI narratives by examining how certain stories about intelligent machines feature in the statements made by American policymakers participating in the global regulatory debates on LAWS. While more research is needed in this area, one of the major innovations made by recent contributions to the popular culture and world politics literature has been to broaden the study of popular culture beyond elite policymakers to include a greater focus on its ‘everyday’ consumption (Crilley, 2021: 168). Our analysis, in contrast, focuses on the circulation of AI narratives in the statements of American policymakers because the global regulatory debates on these technologies have been held at the UN Group of Governmental Experts (GGE): a forum which, while also involving the participation of NGOs and technical experts, is dominated by states parties. Moreover, as previously noted, while it has been suggested that the various stories told about intelligent machines ‘could influence how AI systems are regulated’ (Cave and Dihal, 2019: 74), the presentation of AI narratives at this forum is yet to be explored.

The depiction of anthropomorphized forms of malign AI is a common science-fiction trope explored in many Anglophone popular culture franchises, some of which predate the release of the first Terminator film in 1984 (Cave et al., 2018; Cave and Dihal, 2019). Among countless other works, this includes Isaac Asimov’s Foundation Series, Blade Runner, Battlestar Galactica, I, Robot, Robocop, Star Trek, Star Wars and Westworld. The various forms of ‘killer robot’ depicted in these franchises – Androids, Battledroids, Cylons, Hosts – nonetheless lack the same degree of cultural resonance and staying power as the T-800 Terminator and Skynet in American sociotechnical imaginaries about AI. The Terminator franchise is recognised to have ‘shap[ed] the public perception of AI since 1984’ (Cave et al., 2018: 16). In 2008, for example, the US Library of Congress selected The Terminator for entry into the National Film Registry: an annually updated list of movies of ‘enduring significance to American culture’ (The Library of Congress, 2008). The second film in the franchise – Terminator 2: Judgement Day – is popularly considered one of the greatest action movies of all time (YouGov, 2022b). It is particularly noteworthy since, in contrast to the first film, Arnold Schwarzenegger’s T-800 character is reimagined as a ‘Good Terminator’ (Carpenter, 2016; Young and Carpenter, 2018). Highlighting the cultural resonance of this imaginary, Arnold Schwarzenegger’s T-800 was the only character on the American Film Institute’s (AFI) (2003) 100 Years. . .100 Heroes & Villains index, which ranks movie characters that have ‘made a mark on American society in matters of style and substance’, to be listed as both a hero (#48) and a villain (#22).

Further underscoring its importance for our analysis, the Terminator is also considered the ‘poster boy for any debate on lethal autonomous weapons’ (Menadue, 2017). According to Paul Scharre (2018), who led the working group that drafted the Pentagon’s original 2012 Directive on Autonomy in Weapon Systems, ‘in nine out of every ten serious conversations on autonomous weapons [he has] had, whether in the bowels of the Pentagon or the halls of the United Nations, someone invariably mentions the Terminator’ (p. 264). This suggests that, while they are not its only audience, many American policymakers have watched the Terminator films either for leisure or for the more instrumentalist purpose of accessing the ‘social currency’ that popular culture references are understood to provide at the CCW (Carpenter, 2016: 62). It is therefore reasonable to assume that the American delegations participating in the international debates on these technologies possess at least some knowledge of the AI narratives presented in the Terminator franchise, even if it falls beyond the scope of our current analysis to draw a direct line between this and the substance of the US’ regulatory position on LAWS. 4

The Terminator franchise is best approached as an ‘extensive intertext that spans a variety of media’ (Weldes, 1999: 120). This includes a television show (The Sarah Connor Chronicles), multiple video games and a long-running comic series. Our analysis focuses on the first two Terminator films: The Terminator (1984) and Terminator 2: Judgement Day (1991). 5 This is because: (1) the available public opinion data suggests that they are the two best-known Terminator films, including among people born after their release (YouGov, 2022a; YouGov, 2022b); 6 (2) the motifs introduced in Terminator 2: Judgement Day are reused in the later contributions to the franchise (French, 2021: 6); and (3) as Hollywood blockbusters, we can assume that The Terminator and Terminator 2: Judgement Day have had a wider audience than supplementary media (e.g. novels, video games, comic books) also featuring the Terminator (Hermann, 2023: 320–321).

Reading AI narratives in The Terminator and Terminator 2: Judgement Day

The first movie in the Terminator franchise – The Terminator – was released in 1984 and ‘emphasise[d] the power of technology’ (Weber, 2021). According to its co-writer and director James Cameron, it was inspired by a dream involving ‘a robot walking out of a fire’ (French, 2021: 19). The Terminator’s plot revolves around the attempts of the titular T-800 Terminator to kill Sarah Connor. To summarise briefly: 7 prior to the events depicted in the film, Skynet – a malign superintelligence originally built as a ‘defence network computer’ for the American military – had orchestrated a global nuclear exchange which killed three billion people. Realising its impending defeat in its ‘future war’ against humanity, Skynet uses a time machine to send the T-800 back to 1984 Los Angeles to kill Sarah Connor, thus preventing the birth of her son, the future resistance leader John Connor. In response to this threat, the human resistance elects to send one of their own agents – Kyle Reese – back to 1984. Over the course of the film, Sarah Connor and Kyle Reese fend off multiple attacks from the T-800, before its final destruction at the film’s climax.

The Terminator’s portrayal of the T-800 as a merciless ‘killer robot’ capable of sustaining immense physical damage is consistent with a common science-fiction trope: the depiction of anthropomorphised AI imbued with ‘superhuman qualities’ (Hermann, 2023: 320). As the character Kyle Reese describes it, the T-800 Terminator ‘. . .can’t be bargained with. It can’t be reasoned with. It doesn’t feel pity, or remorse, or fear. And it absolutely will not stop, ever, until you are dead’ (Cameron, 1984). The Terminator thus sits firmly within the ‘uprising’ AI narrative, providing a Cold War twist on the motif of ‘machines as monsters’ first popularised in Mary Shelley’s Frankenstein (Cave et al., 2018: 8; Hermann, 2023: 332). In this way, The Terminator speaks to the ‘fears underlying the human hope for dominance by means of AI’ (Cave and Dihal, 2019: 77) and anxieties about ‘AI yearning for domination’ (Sartori and Bocca, 2023: 453).

Terminator 2: Judgement Day

Terminator 2: Judgement Day was released in July 1991, seven years after the first film. Its basic plot structure mirrors the original film: the protagonists are pursued by a machine assassin sent by the disembodied superintelligence Skynet. Separate to the events depicted in The Terminator, it is revealed that a T-1000 had also been sent back in time to 1995 to kill 10-year-old John Connor. Following his rescue by a reprogrammed T-800 which, like Kyle Reese in the previous film, had been sent back in time by the human resistance, John Connor sets about freeing his mother from Pescadero State Hospital before she can be killed by the pursuing T-1000. After being wounded by Sarah Connor and convinced of his role in the upcoming apocalypse, Miles Dyson – the scientist who, through reverse engineering the remnants of the Terminator destroyed at the end of the first film, ‘invents’ the neural-net processor which gives rise to Skynet – agrees to destroy his AI research. The film ends with the destruction of the ‘Bad’ T-1000, the Cyberdyne Artificial Intelligence lab and the ‘Good’ T-800 Terminator, which sacrifices itself in the hope of averting a machine uprising.

The T-1000 Terminator – Terminator 2: Judgement Day’s primary antagonist – provides a twist on the theme of ‘technologized body. . .as a kind of ultimate threat’ (Telotte, 1992: 28) which casting Arnold Schwarzenegger had achieved in the original film. Built from a ‘mimetic poly alloy’ (Cameron, 1991), the T-1000 can assume the appearance of both non-mechanical objects and humans. As such, it sits firmly within the ‘uprising’ AI narrative, representing a more technologically sophisticated depiction of the machine assassin trope introduced in The Terminator.

The biggest narrative difference between the first two Terminator movies is that, in Terminator 2: Judgement Day, Arnold Schwarzenegger’s T-800 has been sent back in time to protect rather than kill Sarah and John Connor. At multiple points during the film, this reprogrammed T-800 rescues the human protagonists from certain death. In keeping with the ‘ease’ AI narrative, the machine also provides multiple forms of labour. One of its ‘mission parameters’ is to follow John Connor’s instructions (Cameron, 1991). These range from the childish (standing on one leg), the mundane (driving the vehicle carrying the two human characters), through to the heroic (helping John Connor rescue his mother from Pescadero State Hospital). Although the machine retains a capacity to cause non-lethal forms of harm, John Connor instructs the T-800 to no longer kill humans. At the film’s climax, for example, the machine uses a mini-gun to force the retreat of over a dozen blockading police officers without what appears to be a single death – an act demonstrating superhuman levels of precision and accuracy.

This more humanised depiction of the Terminator has its genesis in Arnold Schwarzenegger’s first meeting with James Cameron. Schwarzenegger was initially reluctant to play a villain in The Terminator, fearing it would harm his acting career (Schwarzenegger and Petre, 2013: 300–302). As James Cameron explained it, however: ‘[t]he Terminator is a machine. It’s not good, it’s not evil. If you play it in an interesting way, you can turn it into a heroic figure that people admire because of what it’s capable of’ (Schwarzenegger and Petre, 2013: 301). Throughout Terminator 2: Judgement Day, the machine assumes the role of a pseudo father figure to John Connor (French, 2021: 48). This points to the presence of an implicit gratification narrative, representing the hope that AI can fulfil the human desire for companionship. Inverting Kyle Reese’s description of the T-800 in The Terminator as a machine which ‘doesn’t feel pity, or remorse, or fear’ (Cameron, 1984), Sarah Connor describes the reprogrammed T-800’s relationship with her son as one in which the machine would ‘never stop. . . never leave him, and . . . never hurt him, never shout at him, or get drunk and hit him, or say it was too busy to spend time with him. It would always be there’ (Cameron, 1991).

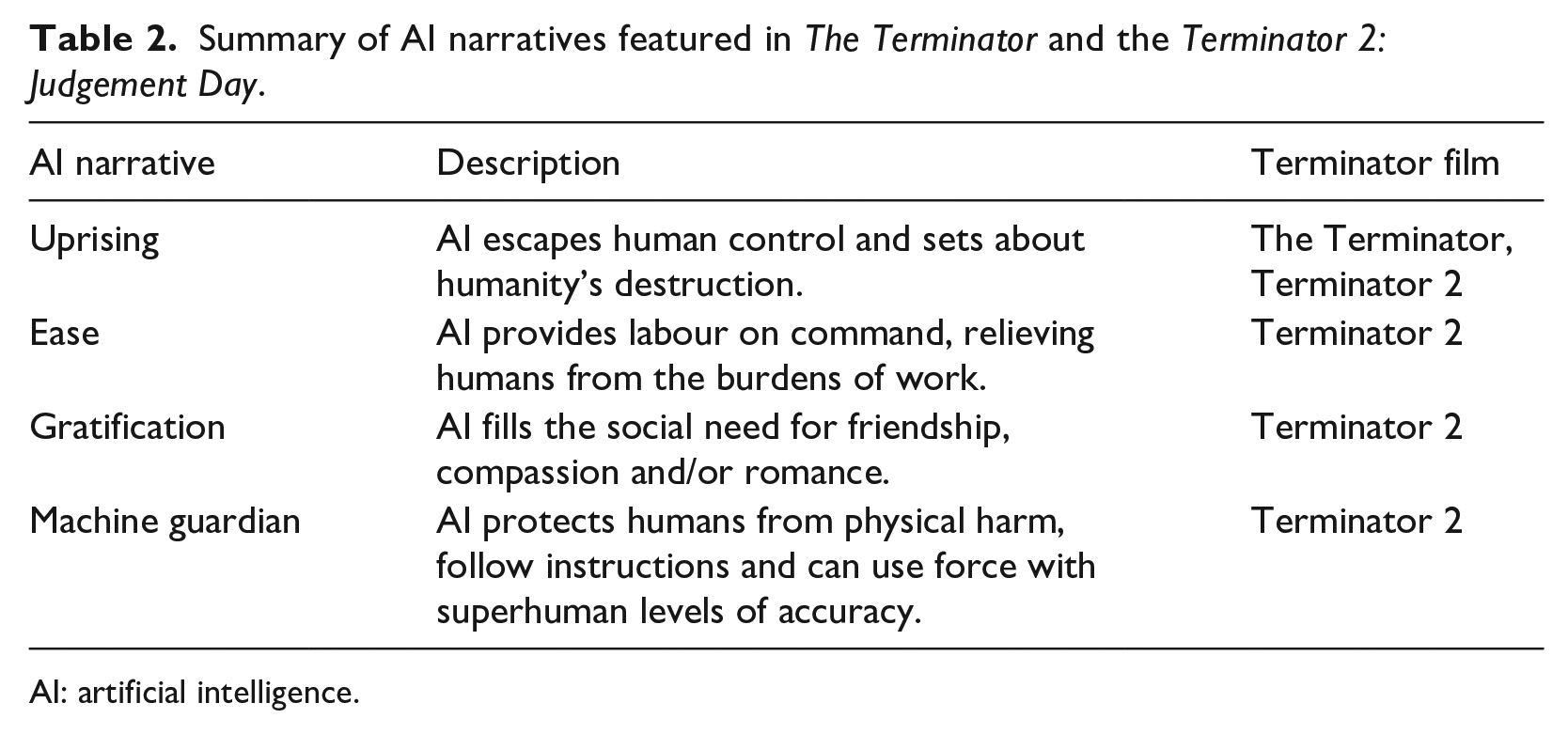

As summarised in Table 2, our analysis of the various stories told about intelligent machines in the first two Terminator films highlights the value of extending existing categorisations of popular AI narratives to include stories about what we coin machine guardians. In our understanding, this set of stories is a common AI narrative that permeates Western popular culture. While it draws elements from the ease and gratification narratives, what distinguishes the machine guardian narrative is its presentation of anthropomorphized forms of AI which protect humans from physical harm, follow instructions and can use force with superhuman levels of accuracy. These stories are ‘hopeful’ in the sense that they portray ‘intelligent machines [which] have a transformatively positive impact on the lives of some or all humans’ (Cave and Dihal, 2019: 75).

Table 2. Summary of AI narratives featured in The Terminator and Terminator 2: Judgement Day.

AI: artificial intelligence.

Speaking to its cultural reproduction, the motif of machine guardians has travelled throughout Western popular culture. Machine guardians are present in later Terminator films including the reprogrammed Model 101 featured in Terminator 3: Rise of the Machines, Marcus Wright in Terminator: Salvation, and ‘Pops’ in Terminator Genisys. Variants of this narrative can also be seen in the characters of K-2SO in Star Wars: Rogue One, Data in Star Trek, and Sharon ‘Athena’ Agathon (Number Eight) in Battlestar Galactica. Arnold Schwarzenegger’s reprogrammed ‘Good Terminator’ which ultimately ‘save[s] the day’ (Young and Carpenter, 2018: 567) is arguably the paradigmatic example of this machine guardian narrative. As outlined above, this character not only protects John Connor from physical harm but, by following the command to no longer kill, is shown to use force with an accuracy beyond the capabilities of almost any human. One of the only instructions which this ‘Good Terminator’ disobeys is John Connor’s command to not destroy itself at the film’s climax – an action which, being intended to avert ‘Judgement Day’, mirrors Sarah Connor’s description of the machine as having ‘learn[t] the value of human life’ (Cameron, 1991). Unlike the villainous Skynet which epitomises the uprising AI narrative, machine guardians act to preserve, rather than overthrow, anthropocentric social orders. While these machines exercise their own agency, their actions are bounded within the ethical values of the human characters with whom they interact and whom they protect.

When situated within the wider history of stories told about intelligent machines, the machine guardian narrative pulls from aspects of Isaac Asimov’s Three Laws of Robotics – the substance, evolution and real-world applicability of which have been extensively debated by AI ethicists (Anderson, 2008; McCauley, 2007). Contrary to the machine uprising narratives featured in The Terminator and Terminator 2: Judgement Day, Asimov’s Three Laws of Robotics hold that machines can be programmed with immutable ethical principles to prevent them harming their human creators (McCauley, 2007: 3). They posit that: (1) ‘a robot may not injure a human being, or, through inaction, allow a human being to come to harm’; (2) ‘a robot must obey the orders given it by human beings except where such orders would conflict with the first law’; and (3) ‘a robot must protect its own existence as long as such protection does not conflict with the first or second law’ (Asimov, 1976, 1984 quoted in Anderson, 2008: 477–478). While the ‘Good Terminator’ featured in Terminator 2: Judgement Day openly circumvents these laws, both causing non-lethal forms of physical harm to humans and disobeying John Connor’s instructions not to destroy itself at the film’s climax, it does so with the ultimate intention of averting humanity’s destruction at the hands of Skynet. 8 The delineation of machine guardians as a distinct AI narrative is important, we argue, for understanding a key subtext of the Terminator imaginary: that the development of LAWS is not inherently inappropriate. What matters is ‘how machines are programmed’ (Young and Carpenter, 2018: 574) and what commands they receive from human agents.

The Terminator, AI narratives and US LAWS discourse

The repository of AI narratives presented in the Terminator films can be understood as possibly influencing US LAWS policy discourse in two ways: first, by offering ways of understanding the ‘appropriateness’ and desirability of these technologies; and second, by providing the conditions of possibility within which particular understandings of LAWS development emerge. In Jutta Weldes' (1999) classic formulation, popular culture references offer American policymakers a vehicle for helping legitimise certain policy positions by making them appear ‘commonsensical’. Drawing from the more recent IR literature on popular culture and world politics, we can extend these insights by theorising AI narratives as possessing both an ‘enabling’ and ‘disabling’ effect on policy outcomes (Carpenter, 2016; Neumann and Nexon, 2006; Stimmer, 2019). Depending on their discursive salience and framing, AI narratives could be mobilised by American policymakers to support a ban on certain types of LAWS. Conversely, the discursive prevalence of hopeful narratives could conceivably be mobilised in favour of developing the type of machine guardians featured in Terminator 2: Judgement Day – even if they are not necessarily designed in a humanoid form.

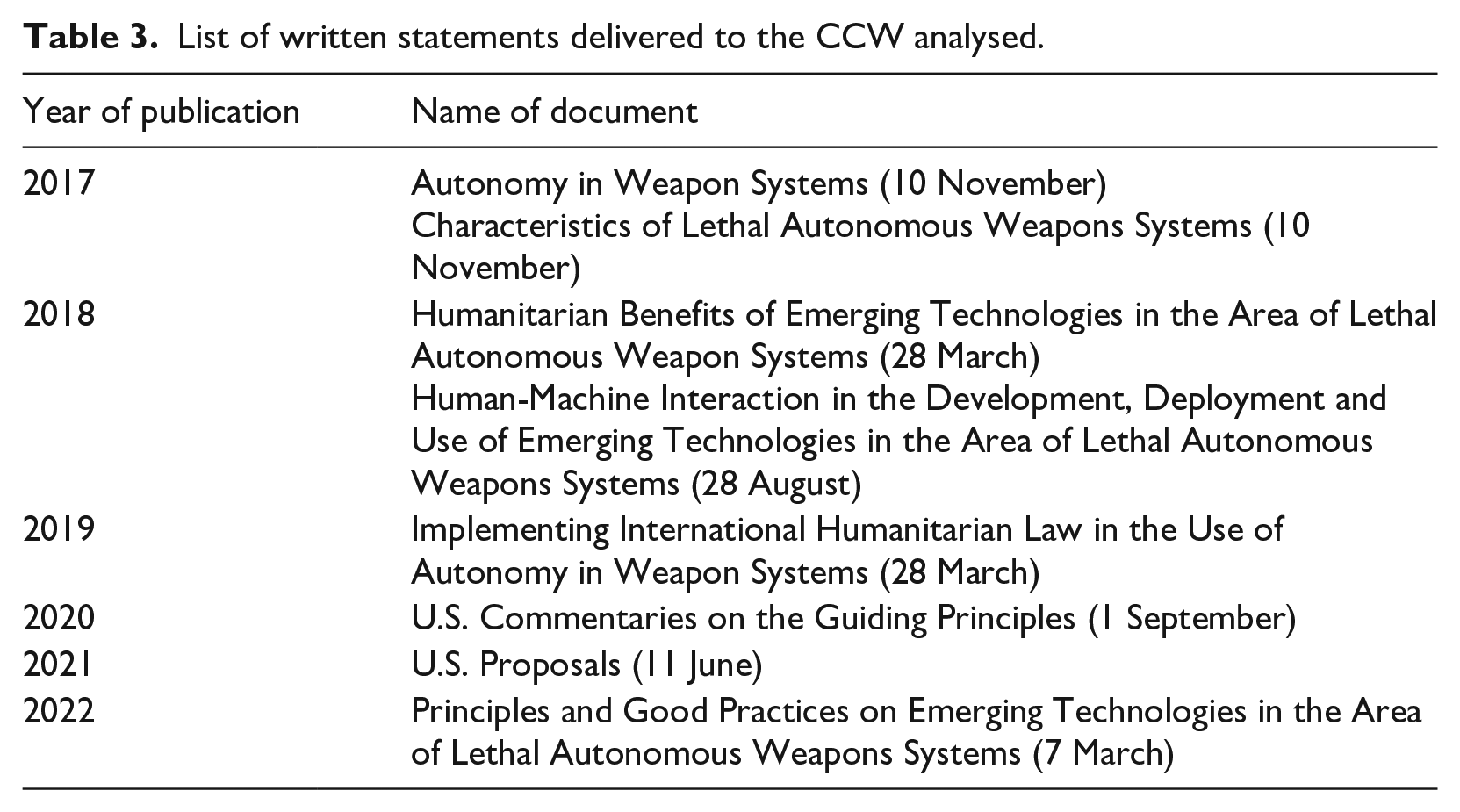

We have analysed the US’ statements to the CCW process for references to the AI narratives present in the Terminator films. Our empirical analysis is focused on the nine years between 2014 and 2022. The American use of weapon systems integrating autonomous technologies predates this period, as does the release of the first two Terminator films. The period after 2014 has been selected because it coincides with the announcement of the influential Third Offset Strategy (Work, 2017) and the start of debates on LAWS at the CCW. In total, we analysed eight written submissions to the CCW (see Table 3) in addition to some of the verbal statements delivered by US delegations during this time.

Table 3. List of written statements delivered to the CCW analysed.

We conducted a qualitative thematic narrative analysis of the material. The focus of thematic narrative analysis is on the content of the texts studied, rather than on the language used. This invites a focus on ‘what’ is said more than ‘how it is said’ (Riessman, 2004: 706). We engaged in deductive-inductive thematic coding, using the four AI narratives we identified in the two Terminator films – uprising, ease, gratification, and machine guardian – as the starting point for our analysis. This involved categorising themes in the texts and mapping these to the AI narratives. The empirical material was coded by both authors, and we subsequently discussed our approach and potential ambiguities in the texts. It quickly became clear that US statements to the CCW predominantly featured the machine guardian narrative, some references to the ease narrative, as well as the occasional dismissal of the uprising narrative. Our empirical analysis will therefore concentrate on these three AI narratives.

The degree to which policymakers can promote specific AI narratives to influence the global regulatory debates on LAWS as well as the agency they can exercise when inferring the plurality of meanings these works can accommodate remain open research questions. We posit that the circulation of AI narratives could promote greater acceptance of certain pathways of AI development and ‘desired futures’ while also reflecting the content of real-world policies regarding these technologies (Bareis and Katzenbach, 2022: 858–859). While we argue that the three constitutive strands of the machine guardian narrative have been mirrored in US statements at the CCW, we do not claim that this is causally explained by viewership of the Terminator franchise. This category of claim can only be made based on interviewing and/or directly observing the stakeholder groups participating in the Group of Governmental Experts (GGE) process (Carpenter, 2016). Rather, our analysis is predicated on an interpretivist understanding of the relationship between these artefacts and the US’ regulatory position on LAWS. These accounts, which have been utilised in the study of other American science fiction franchises including Battlestar Galactica (Buzan, 2010; Kiersey and Neumann, 2013) and Star Trek (Weldes, 1999), are organised around ‘learn[ing] more about how popular culture (re)produces power relations, societal values, and political sentiments’ (Stimmer, 2019: 432; see also Neumann and Nexon, 2006). They treat popular culture as ‘data’ that can be reproduced in political narratives and shared social understandings (Carpenter, 2016: 55). As Charli Carpenter (2016) notes, ‘[i]nterpretivism helps us think critically about the origins and impacts of messaging in cultural products, and suggests hypotheses about how they might influence political thinking’ (p. 56). In this way, our study supports future empirical research that examines whether the ‘Good Terminator’ imaginary has informed, not just mirrored, elite thinking on the appropriateness of regulating LAWS.

US statements at the CCW and the Terminator

States parties to the UN CCW have been meeting in Geneva since 2014 to discuss the ethical, legal and military ramifications of emerging technologies in the area of LAWS. In 2017, these discussions were formalised through the creation of a GGE. Managed under the CCW’s auspices, the GGE has facilitated multilateral dialogue between states, NGOs and technical experts (Carpenter, 2016; Rosert and Sauer, 2021). Despite some areas of agreement such as the 11 Guiding Principles on LAWS published in 2019 which outlined a broad set of legally non-binding principles regarding the design and operation of these weapons (United Nations Office for Disarmament Affairs (UNODA), 2019), the debates at this forum have stalled (Bode et al., 2023). The CCW process requires consensus among all participating states parties to move forward, including the United States.

The US position on the global regulation of LAWS has been shaped by multiple factors including Washington’s perception of an accelerating global ‘race for AI supremacy’ (National Security Commission on Artificial Intelligence, 2021: 7) and the Pentagon’s increased investments in emerging weapon technologies as part of its Third Offset Strategy (Bode et al., 2023). The United States was the first country to formulate a formal policy for designing and operating autonomous weapon systems (AWS), which it defines as ‘[a] weapon system that, once activated, can select and engage targets without further intervention by a human operator’ (U.S. Department of Defense, 2012: 13). First released in November 2012 and updated in January 2023, Department of Defense Directive 3000.09 does not prohibit the development of so-called ‘fully’ autonomous weapons systems – i.e. systems which can conduct strikes without direct human supervision (Allen, 2022). Rather, it couples a requirement for ‘commanders and operators to exercise appropriate levels of human judgment in the use of force’ (U.S. Department of Defense, 2012: 7) with a weapons review process intended to ensure that all systems operate in accordance with domestic and international law. The United States has opposed the principle of any new binding global regulation of LAWS. Its position has instead evolved to call for the development of a ‘non-binding Code of Conduct’ which ‘would help States promote responsible behavior and compliance with international law’ (US Delegation, 2021a).

Approached in this context, US delegations at the CCW can be read as attempting to avoid the potentially disabling effect which the overt ‘science fictionalisation’ (Carpenter, 2016: 60) of the CCW dialogue could have on regulatory debates on these technologies. Consistent with this claim, the authors could find no evidence that the Terminator films have been directly referenced in US contributions to the CCW. In addition to attempting to insulate the process from the negative connotations popularly associated with aspects of the Terminator films (Young and Carpenter, 2018: 570), US delegations have worked to contest the uprising narrative popularised in The Terminator. A November 2017 working paper outlining the US’ position on whether states parties should develop a working definition of LAWS, for example, maintained that focusing too narrowly on the machines ‘could stimulate unwarranted fears that are more the product of science fiction and popular imagination than fact’ (US Delegation, 2017b: 2). As summarised in the statement on the subject of ‘LAWS and Human Rights/Ethics’ delivered in April 2016: [. . .] we believe that it is important that our discussion of autonomy does not lead to categorical statements about good versus evil. History has taught us that technology can be used to promote humanitarian goals, and we should not preclude or diminish our ability to use technology to mitigate human suffering in times of armed conflict. Nor should we succumb to the fear that taking advantage of emerging technology renders a future vision of killer robots run amok inevitable (US Delegation, 2016: emphasis added).

These remarks are consistent with a major subtext of US statements at the CCW: that LAWS-associated technologies should not be stigmatised (Authors, 2023). US delegations have consistently stressed the importance of considering the operational context in which LAWS are deployed in any assessment of their ethics and legality. This focus has translated into the repeated call to explore ‘both the challenges and opportunities presented by [LAWS]’ (US Delegation, 2017a: 1). The discussion of the perceived ‘opportunities’ of LAWS development has, as outlined below, mirrored the three constitutive strands of the machine guardian narrative: that these technologies (1) can be developed to protect humans from physical harm, (2) enact the commands of human decisionmakers and (3) use force with superhuman levels of accuracy.

According to US delegations, future advances in LAWS-associated technologies could strengthen compliance with international humanitarian law, including the principles of distinction and proportionality. Consistent with the first strand of the machine-guardian narrative, LAWS have been framed as a tool for reducing the risks of unintended civilian deaths and injury. The assertion that autonomy could ‘be used to reduce the likelihood of harm to civilians and civilian objectives’ (US Delegation, 2021b: 1) has been a persistent theme of US statements at the CCW. LAWS, it has been argued, could reduce civilian harm in five ways: (1) enabling the self-destruction and/or deactivation of munitions once fired; (2) helping pierce the ‘fog of war’ thereby improving the situational awareness of battlefield commanders; (3) allowing commanders to make more informed decisions on whether and when to use force; (4) increasing the ‘speed, precision, and accuracy’ with which targets can be identified, tracked and engaged; and (5) minimising the civilian harm created by the use of force in situations of self-defence (US Delegation, 2018b). US delegations to the CCW have highlighted two additional ways in which LAWS could be developed to protect humans from physical harm: first, by conducting operations in ‘hazardous, risky environments, for example, for search and rescue missions’ (US Delegation, 2016) which humans are unable to perform; and second, as highlighted in the case of air defence systems, by providing greater protection to soldiers involved in combat operations (US Delegation, 2017a: 3, 2018a: 2, 2018b: 5–6).

Consistent with the ‘ease’ AI narrative, the American delegation to the CCW has also postulated that the further integration of autonomy into weapons systems could make the tasks of human soldiers and human commanders easier. By alleviating the need to focus on dull, labour-intensive tasks, for example, autonomy could free up time for commanders to evaluate battlefield conditions, while also reducing the risk of unintended errors (US Delegation, 2018a: 2). Consistent with the Pentagon’s wider vision of human-machine teaming (Work, 2017), the use of these technologies to inform battlefield decision-making has also been discussed (US Delegation, 2020: 2, 2021b: 2). This includes situations in which autonomy is used to identify and track potential targets as well as ‘select[ing] and engag[ing] specific targets that the commander or operator did not know of when he or she activated the weapon system’ (US Delegation, 2019: 3). Such thinking overlaps with the second dimension of the machine guardian narrative: that, much like the reprogrammed T-800 featured in Terminator 2: Judgement Day, LAWS will enact the commands issued by human decisionmakers.

To this end, US delegations have argued that LAWS could help better ‘effectuate the intentions of commanders and operators’ (US Delegation, 2018a, 2020: 2, 2021b: 1). This claim is a central pillar of the US’ regulatory position on LAWS, underpinning the description of these technologies’ potential humanitarian benefits as well as the position that human agents must retain ultimate responsibility in line with international law for the operation of these systems (US Delegation, 2020: 4–5). US delegations have suggested that LAWS could operate to identify and engage the targeting profiles preselected by human commanders, while only operating in predesignated geographical areas (US Delegation, 2018a: 3, 2021b: 2–3). In the context of ‘smart’ munitions such as the AGM-114 Hellfire and Javelin missiles, the use of autonomy is claimed to have ‘increased the degree of control that States exercise over the use of force’ (US Delegation, 2019: 8: emphasis added).

As this suggests, much like the depiction of the reprogrammed T-800 using a mini-gun to disperse a group of blockading police officers without appearing to cause any direct physical harm, US delegations have also maintained that LAWS could be developed to use force with superhuman levels of accuracy. Such claims have been linked to the two other strands of the machine-guardian narrative. As part of a November 2017 working paper on autonomy in weapons systems it was claimed that these technologies could be used for the purposes of ‘more precisely applying force and causing less unintended destruction’ (US Delegation, 2017a: 4). It has similarly been noted that autonomy could, by enabling a system to ‘home in’ on targets designated by a human operator, ‘make weapons more precise and accurate in striking military objectives’ (US Delegation, 2021b: 1). Similar claims have reverberated throughout the US’ statements at the CCW, including in the context of discussions of the guidance system used on the AIM-120 Advanced Medium Range Air to Air Missile which has been described as allowing ‘commanders to strike military objectives more accurately and with less risk of harm to civilians and civilian objects’ (US Delegation, 2018b: 4). In some cases, such as with the operation of certain types of air defence systems, the US has gone as far as to argue that the use of autonomy ‘might be more appropriate’ than human control because of the ‘much greater speed and accuracy’ with which such systems can purportedly operate (US Delegation, 2018a: 2: emphasis added).

Conclusion

To the authors’ knowledge, this article has provided the first empirical account of how the various AI narratives presented in the first two Terminator films have been mirrored in the US’ opposition to legally binding international regulation on LAWS. Our analysis suggests that the various stories told about intelligent machines in the Terminator franchise can be mobilised to both support and oppose the possible regulation of these technologies. Drawing from the AI narratives and the popular culture and world politics literatures, we have argued that the Terminator franchise not only features the fearful AI narratives associated with robot uprisings but, in the case of the second film, stories about ease and gratification as well as what we coin machine guardians. Our empirical analysis has highlighted that references to the AI narratives featured in the Terminator franchise, rather than direct references to the Terminator films themselves, have been prevalent in American statements at the CCW in the period between 2014 and 2022. Throughout this time, American delegations have emphasised the potential opportunities presented by emerging technologies in the area of LAWS, including that these technologies can be developed to (1) protect humans from physical harm, (2) enact the commands of human decisionmakers and (3) use force with superhuman levels of accuracy.

Many stakeholders participating in the CCW process have criticised the frequency with which the Terminator films are referenced in the media coverage of these technologies. Campaigners argue that the Terminator imaginary distracts attention from the more immediate challenges to the principle of human control created by machines operating with less than ‘full’ autonomy. As Stuart Russell and others perceive it, the Terminator imaginary propagates an unrealistic expectation that LAWS threaten to become ‘conscious’ and develop a ‘hatred’ for humans (CBC Radio, 2022). Campaigners highlight the need for ‘better’, more realistic AI narratives as a stepping stone ‘to invent[ing] ‘better worlds’’ (Weber, 2021). Hudson et al. (2023: 205) rightly note that ‘[a]n imaginary built around time-traveling killer robots occludes the messier quandaries of real machine intelligence as it is being implemented today, such as privacy, consent, and bias’.

As our analysis demonstrates, the various stakeholders contributing to the regulatory debates on LAWS should also scrutinise the role and potential political significance of the AI narratives featured in popular culture, not simply direct references to these works. Existing studies point to the influence which direct popular culture references can have on shaping the socio-political context in which American (and other) policymakers make decisions on emerging technologies including LAWS (Carpenter, 2016; Stimmer, 2019). Researchers working at the intersection of IR and STS scholarship must remain sensitive to the puzzle of why and how such narratives come to circulate in the policy debates on LAWS given that many works of popular culture including the Terminator franchise accommodate multiple imaginaries about the possible consequences of developing ‘killer robots’ (Carpenter, 2016; Young and Carpenter, 2018). The findings of our study support further empirical research which, through the use of methods including focus groups and interviews (Carpenter, 2016; Crilley, 2021: 172–173; Pears, 2016), probes how elite audiences infer meaning from the Terminator franchise and whether it (and other works of popular culture) has had an ‘informing’ effect on the global governance of LAWS by ‘provid[ing] diffuse knowledge that people bring to bear on political issues’ (Neumann and Nexon, 2006: 16).

Future studies could develop a more fine-grained analysis of whether the US’ circulation of these AI narratives may have impacted the direction of the regulatory debates at the CCW as well as the political attitudes of its constitutive states parties. For example, we cannot assume that global audiences infer the same ‘hopeful’ meaning from the ‘machine guardian’ narrative as American audiences. Further empirical research is also needed to assess whether allusions to machine guardians have been effective in generating international support for the US’ preferred regulatory position on LAWS, as well as surveying how far the Terminator’s cultural resonance travels beyond the US. Similarly, building on earlier research (Carpenter, 2016), our study invites further investigation of the degree to which the AI narratives portrayed in other stories involving ‘killer robots’ feature in the global regulatory debates on LAWS, how the character of such references may vary in different institutional and bureaucratic contexts, and whether the presence of such narratives has evolved over time, for example following the March 2021 publication of a United Nations (UN) Panel of Experts on Libya report which suggested that Kargu-2 loitering munitions had been fielded autonomously in combat (Bode and Watts, 2023). For the various stakeholders participating in the CCW process as well as IR and STS scholars, continued scrutiny over how US (and other) policymakers reference the AI narratives presented in the Terminator franchise and other works of popular culture is therefore warranted.

Acknowledgements

The authors would like to thank the participants at the 2022 BISA International Studies and Emerging Technologies working group workshop, the AUTONORMS Algorithmic Warfare workshop, the Royal Holloway Centre for International Security seminar series, and the University of Southern Denmark International Politics seminar series for their constructive feedback on earlier drafts of this article. The authors would also like to thank Bradley Watts for his detailed discussion of the depiction of various forms of AI in Western popular culture and Katherine Chandler, Michelle Bentley, Guangyu Qiao-Franco and Anna Nadibaidze for their detailed comments on early drafts of this article. Any errors remain our own.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article. This work was supported by the European Research Council (ERC) under the European Union’s Horizon 2020 research and innovation programme (grant agreement no. 852123). Dr. Tom F.A. Watts’ contribution to this work was also supported by a Leverhulme Trust Early Career Research Fellowship (ECF-2022-135).