Abstract

Behavioral scientists aim to explain and predict behavior. In principle, these goals align; in practice, common approaches to pursuing them have become distinct traditions in tension with one another. The explanatory tradition often examines causal factors in isolation, establishing that they have some effect but not how much or how they combine. The predictive tradition learns how factors combine, but these patterns may not reflect a causal structure or hold when conditions change. Answering how much each factor matters, and how they combine across settings, requires both predictive accuracy and causal interpretation. This article examines three developments toward this integration: evaluation frameworks that emphasize generalization, systematic experimentation and flexible models, and interpretation tools. We present recent empirical examples that demonstrate how this integration enables the discovery of generalizable patterns and provides a path toward cumulative behavioral science.

A central goal of behavioral science is to understand human behavior. Researchers often pursue this goal by building models to explain behavior (identifying its underlying causes) and predict behavior (anticipating outcomes not yet observed). In principle, these goals align: A model that faithfully captures causal processes should generate accurate predictions (Hempel & Oppenheim, 1948). In practice, however, common approaches to explanation and prediction can put them in tension.

Behavioral phenomena are often causally dense, meaning that outcomes arise from many factors that interact in complex ways (Almaatouq et al., 2024a; Meehl, 1967, 1992). This creates challenges for both explanatory and predictive approaches. Much explanatory research examines causal factors in isolation, establishing that they matter but rarely how much they matter relative to others or how they combine. As a result, research programs can accumulate many partial explanations without improving our ability to predict outcomes under different conditions (Ward et al., 2010). Predictive models often learn statistical regularities that do not reflect the underlying causal structure, and their performance can degrade when conditions shift (Lazer et al., 2014). Although these are not the only ways to pursue explanation and prediction in behavioral science, they are established enough to constitute distinct research traditions, and the resulting tensions have generated significant debate: Should researchers prioritize causal explanations over predictive accuracy (Hofman et al., 2021; Yarkoni & Westfall, 2017)? How should scientists measure success when these goals diverge (Hofman et al., 2021; Rocca & Yarkoni, 2021)?

This article examines how recent developments are integrating these traditions. We focus on statistical and algorithmic models of the sort commonly used in quantitative behavioral science and data science, with examples drawn primarily from experimental research. We expect some of these developments to extend to theoretical modeling (e.g., mathematical, mechanistic, agent-based) and to observational work (e.g., causal inference), both essential counterparts, although we do not explicitly develop those connections here.

We first describe the explanatory and predictive traditions. We then discuss three developments toward integrating them: evaluation frameworks that emphasize generalization, systematic experimentation and flexible models, and interpretation tools. We conclude with empirical examples of the integrative approach in practice.

Two Modeling Traditions

What follows is admittedly a caricature. Both traditions contain more nuance and diversity than we can do justice to in this article. Each is defensible on its own terms, has generated productive research programs, and grapples with its own challenges and criticisms. But the simplification we adopt helps to clarify where tensions arise and how recent developments are bridging them.

The explanatory tradition

Behavioral scientists have traditionally prioritized explaining causes underlying behavior. The approach typically proceeds as follows. Researchers begin by formulating theory-informed hypotheses (e.g., whether Factor X causes Behavior Y or how Factor Z might mediate or moderate Behavior Y) and then empirically test these hypotheses, ideally through randomized experiments. If the estimated effect differs reliably from zero in the hypothesized direction, the result is interpreted as evidence that the hypothesized relationship holds, and the theory is treated as an explanatory account of the behavior.

This explanation-focused tradition values simplicity, often testing just one or a few causal factors at a time. Therefore, the associated experiments (or data sets) are designed to isolate the effect of interest while holding “all else equal.” This practice is valuable when demonstrating existence in a specific context is the goal, as in field experiments documenting hiring discrimination (Bertrand & Mullainathan, 2004). But it encounters challenges when testing theory-derived hypotheses about causally dense behaviors in the lab, where generalization is the aim (Meehl, 1992).

First, merely showing that an effect “exists” under some conditions adds little on its own: Anything that plausibly could have an effect is unlikely to have an effect of exactly zero (Gelman, 2011; Meehl, 1967). What remains unknown is the effect’s relative importance for predicting the outcome, how it interacts with other factors, and how those interactions shape outcomes. For example, one study might examine how financial incentives affect task performance while holding difficulty fixed; another might vary difficulty while holding incentives fixed. We may learn from these studies that both financial incentives and task difficulty matter. But which factor is more important? Do they interact? If so, how? Those questions remain difficult to answer.

Second, studies conducted under different all-else-equal assumptions are often difficult to compare or integrate. Each study selects its own context, measures, and held-constant variables, and these design choices differ across studies in ways that are rarely explicit, systematic, or documented. In the incentives-and-difficulty example, the two studies could also differ in time pressure, task type, and participant pool. Because the studies are incommensurable, post hoc efforts to combine findings (e.g., through meta-analysis) often struggle to answer the questions of which factors matter most and how they interact (Almaatouq et al., 2024a; Meehl, 1967, 1992). These challenges mean that research programs often accumulate many partial explanations without a clear picture of how they combine to predict behavior across contexts (Almaatouq et al., 2024b; Meehl, 1992; Yarkoni, 2020; Yarkoni & Westfall, 2017).

The prediction tradition

Behavioral science has increasingly emphasized prediction as a goal in its own right, often without explicitly aiming to identify causal effects. Although recently amplified by machine learning, predictive approaches have deep roots in behavioral science (Dawes et al., 1989). Unlike the explanatory tradition, in which “prediction” typically means demonstrating that a statistical relationship exists, “prediction” in this context refers to accuracy on observations not used to build the model (Hofman et al., 2021). Saying personality “predicts” academic performance, for instance, might mean the coefficient differs from zero (explanatory) or that one can accurately forecast a given student’s grades from their scores (predictive). To be clear, a regression model of the form y = βx can appear in both traditions depending on how it is applied and interpreted (Hofman et al., 2021). In explanatory modeling, the focus is typically on the right-hand side of the equation (i.e., the sign, statistical significance, and sometimes magnitude of the estimated coefficients). In predictive modeling, the focus is often on the left-hand side (i.e., how closely the predicted values match actual outcomes in previously unseen observations).
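The two readings of the same regression can be made concrete with a minimal sketch. The simulated data, the true slope of 1.0, the noise level, and the function names below are all illustrative assumptions, not taken from any study cited here. The same fitted coefficient is first inspected directly (the explanatory reading) and then used to score predictions on observations that were not used for fitting (the predictive reading).

```python
import random


def fit_slope(xs, ys):
    """Least-squares slope for the no-intercept model y = beta * x."""
    return sum(x * y for x, y in zip(xs, ys)) / sum(x * x for x in xs)


def mean_squared_error(beta, xs, ys):
    """Average squared gap between predictions beta * x and outcomes y."""
    return sum((y - beta * x) ** 2 for x, y in zip(xs, ys)) / len(xs)


# Simulated data: the slope of 1.0 and noise SD of 0.5 are assumptions.
rng = random.Random(0)
xs = [rng.gauss(0, 1) for _ in range(400)]
ys = [1.0 * x + rng.gauss(0, 0.5) for x in xs]

# Fit on the first 300 observations and hold out the last 100.
train_x, train_y = xs[:300], ys[:300]
test_x, test_y = xs[300:], ys[300:]
beta_hat = fit_slope(train_x, train_y)

# Explanatory reading: look at the right-hand side (the coefficient).
print(f"estimated slope: {beta_hat:.2f}")

# Predictive reading: look at the left-hand side (error on unseen outcomes),
# compared with a no-information baseline that always predicts zero.
test_mse = mean_squared_error(beta_hat, test_x, test_y)
baseline_mse = mean_squared_error(0.0, test_x, test_y)
print(f"held-out MSE: {test_mse:.2f} (baseline: {baseline_mse:.2f})")
```

A coefficient that differs reliably from zero does not by itself say how large the held-out error is; the second comparison is what the predictive tradition reports.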

From a practical standpoint, accurate predictions can be valuable even when causal relationships remain unclear. Recommendation systems, for example, predict user preferences well enough to optimize engagement. They succeed without understanding the underlying psychological processes.

However, prediction-focused approaches face their own limitations, particularly in causally dense settings. First, predictive models learn statistical associations from observed data, and these associations may not hold when populations, contexts, or study designs differ from those used to build the model (Lazer et al., 2014). Associations learned in one setting may reflect features of that context that do not hold elsewhere. Second, predictive models are typically more complex than those in the explanatory tradition. They often use more parameters and allow for more flexible relationships between variables, which can make it hard to identify what the model is relying on or why it succeeds in some settings but fails in others. As a result, although prediction emphasizes accuracy on new observations, it does not by itself ensure generalization to new contexts or clarify how factors combine to produce outcomes.

The Gap

These different standards lead to different designs and analytical choices. Explanatory approaches favor narrow designs suited to isolating individual effects but not to learning how multiple factors combine. This limited scale is one reason experimental behavioral science has not yet widely benefited from machine learning (Almaatouq et al., 2024a). Predictive approaches have embraced machine learning, but largely for observational data that lack experimental control. As a result, the two traditions tend to operate on different empirical foundations: experiments that are too narrow to support flexible modeling and observational data sets that are too uncontrolled to support causal claims.

These different foundations leave a gap. Neither tradition can answer the question that causally dense settings demand (Almaatouq et al., 2024a, 2024b; Bryan et al., 2021; Watts, 2017): How much does each factor contribute to predicting the outcome relative to others, and how do the factors combine across settings? The explanatory question (“Does this effect exist?”) does not reveal the effect’s relative importance or how it interacts with other factors. The predictive question (“Can we predict the outcome?”) does not reveal whether those patterns will hold when conditions change. Answering the question of how much each factor matters and how factors combine requires both predictive accuracy, to learn how factors combine, and experimental control, to characterize when those relationships hold.

Toward Integration

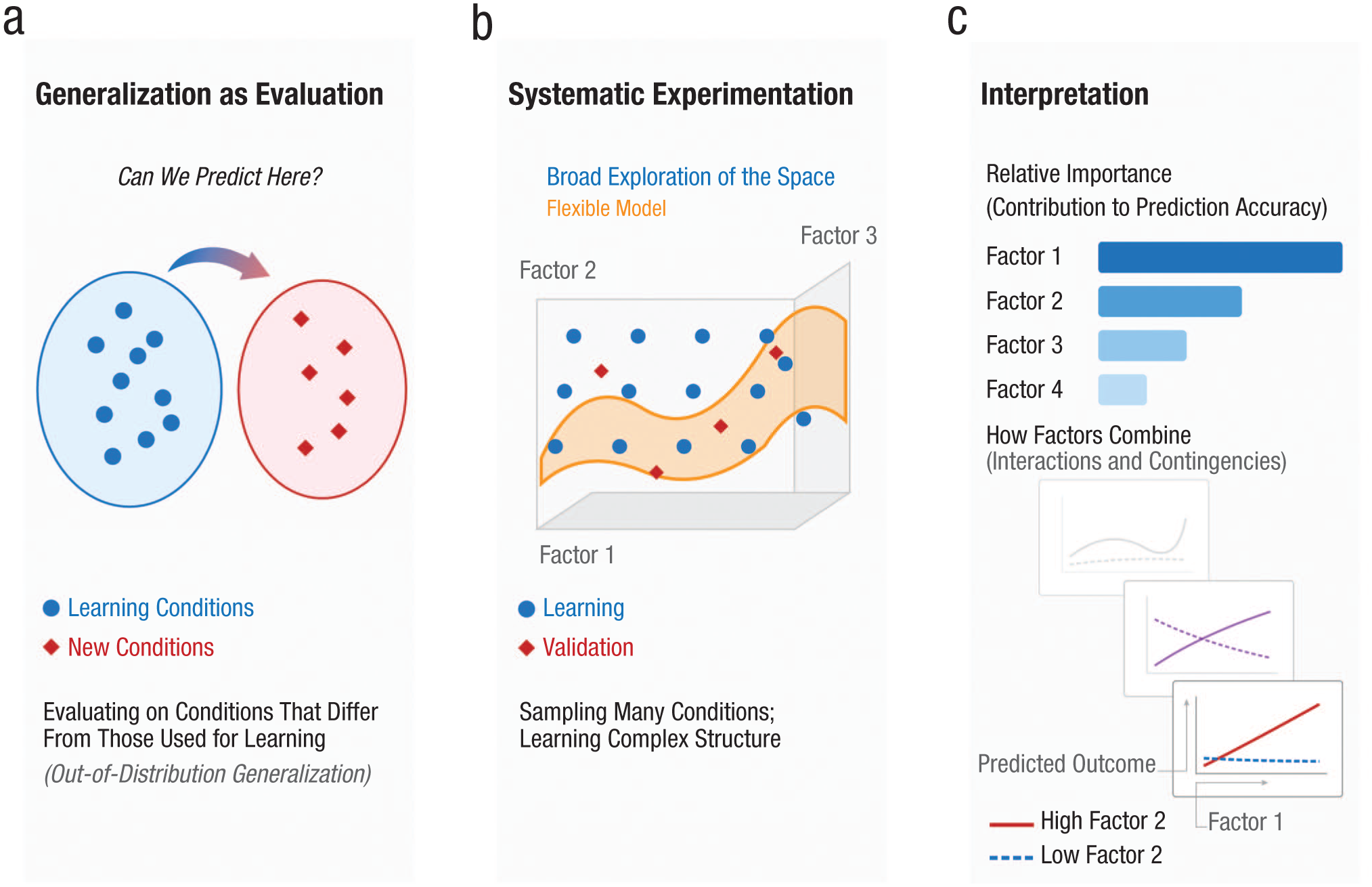

Three developments are integrating these traditions: evaluation frameworks that emphasize generalization, systematic experimentation and flexible models, and interpretation tools. These three developments are linked: The first defines the criterion, the second provides the means to meet it, and the third makes accessible what the model has learned (see Fig. 1).

Fig. 1. Three developments toward integrating explanation and prediction. The first development is (a) generalization as evaluation, which tests whether models can predict outcomes under conditions that differ from those used for learning; the second development is (b) systematic experimentation, which samples broadly across a space of experimental conditions and uses flexible models to learn complex structure; and the third development is (c) interpretation tools, which reveal which factors matter most for prediction and how they combine.

Generalization as evaluation

Answering the question of “how much and when” requires evaluation standards that assess both predictive accuracy and causal structure. The typical standard in predictive modeling assesses accuracy on new observations from the same distribution (out-of-sample prediction). This means predicting outcomes for new participants drawn from the same population, completing the same experimental design with the same materials and procedures. In effect, this amounts to predicting outcomes from an exact replication of the original study. A more demanding criterion assesses accuracy when the distribution itself changes (out-of-distribution generalization). Such changes can range from small shifts in conditions (e.g., different stimuli or a modified procedure, as in conceptual replications) to entirely new settings (e.g., different populations or field contexts). Machine learning has helped systematize these evaluation frameworks, although the underlying logic of generalization testing has roots in behavioral science. The key question becomes whether the model can predict behavioral outcomes under conditions that differ from those on which it was trained.

This criterion is integrative because out-of-sample accuracy alone does not indicate that a model has captured causal relationships; it may reflect idiosyncratic correlations that break when conditions shift (Lazer et al., 2014). However, accuracy on unseen experimental outcomes, particularly across varied conditions, provides evidence that the model has learned generalizable structure rather than patterns specific to the training data (Almaatouq et al., 2024a; Hofman et al., 2021). Of course, some behaviors may prove inherently unpredictable because of high causal density or randomness. This would be disappointing because it would limit the potential for generalizable models, but characterizing such limits, rather than assuming they do not exist, is itself a form of progress (Almaatouq et al., 2024b; Hofman et al., 2021).
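The difference between the two evaluation standards described above comes down to how the held-out data are chosen. The following sketch is illustrative only: the record layout, the condition labels "A" through "D", and both function names are assumptions. An out-of-sample split holds out random observations, so every condition can appear on both sides; an out-of-distribution split holds out entire conditions, so the test conditions are never seen during training.

```python
import random


def out_of_sample_split(records, test_fraction, rng):
    """Hold out random observations; conditions can appear on both sides."""
    shuffled = records[:]
    rng.shuffle(shuffled)
    n_test = int(len(shuffled) * test_fraction)
    return shuffled[n_test:], shuffled[:n_test]


def out_of_distribution_split(records, held_out_conditions):
    """Hold out whole conditions; test conditions never appear in training."""
    train = [r for r in records if r["condition"] not in held_out_conditions]
    test = [r for r in records if r["condition"] in held_out_conditions]
    return train, test


def conditions_in(rows):
    """Set of experimental conditions represented in a list of records."""
    return {r["condition"] for r in rows}


# Hypothetical data: four conditions with 25 simulated participants each.
rng = random.Random(0)
conditions = ["A", "B", "C", "D"]
records = [
    {"condition": c, "participant": f"{c}{i}", "outcome": rng.random()}
    for c in conditions
    for i in range(25)
]

iid_train, iid_test = out_of_sample_split(records, 0.2, rng)
ood_train, ood_test = out_of_distribution_split(records, {"D"})

# Out-of-sample: every test condition was also seen during training.
print(conditions_in(iid_test) <= conditions_in(iid_train))
# Out-of-distribution: the held-out condition is absent from training.
print(conditions_in(ood_test) & conditions_in(ood_train))
```

In practice the held-out conditions might be new stimuli, a modified procedure, or a new population; the sketch only shows the bookkeeping that keeps them out of the training data.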

Systematic experimentation and flexible models

Meeting this standard of generalization requires broad experimental data sets that vary many factors systematically. Experiments in the explanatory tradition are typically designed to isolate individual effects, which limits their breadth of conditions. They also tend to produce findings that are difficult to compare or integrate because each study differs in unspecified ways, including different experimental procedures, populations, and all-else-equal assumptions (Almaatouq et al., 2024a). Several recent innovations confront these limitations, including metastudies (Baribault et al., 2018; DeKay et al., 2022), megastudies (Duckworth & Milkman, 2022; Voelkel et al., 2024), and integrative experiments (Almaatouq et al., 2024a). Although they differ in goals and implementation, these approaches share a common logic: Rather than leaving integration to a post hoc meta-analysis, they define a space of possible experiments and sample from it systematically. Brunswik’s idea of “representative design” articulated similar principles more than 70 years ago (Brunswik, 1955), but virtual labs, large-scale collaborations, and machine learning advances have recently made such approaches practical (Almaatouq et al., 2021; Stroebe, 2019). The resulting data sets combine experimental control with broad variation across conditions so that conditions become directly comparable and interactions become visible.

Because behavior is causally dense, design spaces are often large, and budgets are always finite. Researchers must decide which conditions to run and how to allocate observations across them. Exhaustive coverage is rarely possible, but it is also rarely necessary. When the goal is to learn how factors combine, sampling many unique conditions often yields more information than repeated measurements of a few (DeKay et al., 2022). Machine learning methods support this logic in several ways. Active learning can iteratively guide sampling toward regions of uncertainty or theoretical interest (Balietti et al., 2021). Large language models can serve as simulated participants (“homo silicus”), providing informative but imperfect responses that allow researchers to explore regions of the design space before committing to costly human data collection (Broska et al., 2025; Horton, 2023), which makes broader empirical coverage of conditions more practical.
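The logic of defining a design space and sampling from it can be sketched in a few lines. The factor names and levels below are hypothetical placeholders rather than the design of any cited study, and uniform random sampling stands in for more sophisticated allocation schemes such as active learning.

```python
import itertools
import random

# Hypothetical design space: each factor maps to the levels it can take.
design_space = {
    "communication": [False, True],
    "group_size": [2, 4, 8, 16],
    "num_rounds": [1, 5, 10, 20],
    "punishment_cost": [1, 2, 3],
}


def enumerate_conditions(space):
    """Every combination of factor levels (the full factorial design)."""
    names = list(space)
    return [
        dict(zip(names, levels))
        for levels in itertools.product(*space.values())
    ]


def sample_conditions(space, n_conditions, rng):
    """Draw distinct conditions uniformly at random from the design space."""
    return rng.sample(enumerate_conditions(space), n_conditions)


all_conditions = enumerate_conditions(design_space)
sampled = sample_conditions(design_space, 24, random.Random(0))

print(f"{len(all_conditions)} possible conditions, {len(sampled)} sampled")
for condition in sampled[:3]:
    print(condition)
```

With a fixed budget of observations, the choice is between many participants in a few of these conditions and fewer participants in many of them; the sketch corresponds to the second option.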

Once interactions are visible in the data, models must be able to represent them. From a statistical perspective, the bias-variance trade-off identifies two ways a model can fail: It can be too simple, unable to capture structure that is present, or too complex, overfitting noise. As data sets from experiments about causally dense behaviors increase in size and breadth, oversimplification becomes the more likely cause of poor generalization (Almaatouq et al., 2024a; Dubova et al., 2025). Simple models that omit interactions for the sake of parsimony will fail when evaluated in new experiments because they have not learned how factors combine. Machine learning methods are well suited for capturing such interactions and learning complex structures not specified in advance. However, although model complexity can enable generalization in such domains, it also demands new tools for understanding what these models have learned.
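A toy example illustrates why omitting interactions hurts generalization to new conditions. Everything here is an assumption made for illustration: the two factors (a binary "communication" flag and a "length" level from 1 to 5), the noise-free outcome equal to their product, and the choice of held-out conditions. An additive linear model and a model with an interaction term are fit by ordinary least squares on seven conditions and evaluated on three held-out ones.

```python
def solve_linear_system(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in reversed(range(n)):
        tail = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - tail) / m[r][r]
    return x


def fit_least_squares(rows, ys):
    """Ordinary least squares via the normal equations (X'X) b = X'y."""
    k = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * y for r, y in zip(rows, ys)) for i in range(k)]
    return solve_linear_system(xtx, xty)


def additive_features(cond):
    communication, length = cond
    return [1.0, communication, length]


def interaction_features(cond):
    communication, length = cond
    return [1.0, communication, length, communication * length]


def held_out_mse(features, train, test, outcome):
    """Fit on training conditions, then score on unseen conditions."""
    coefs = fit_least_squares([features(c) for c in train],
                              [outcome(c) for c in train])
    errors = [
        outcome(c) - sum(b * f for b, f in zip(coefs, features(c)))
        for c in test
    ]
    return sum(e * e for e in errors) / len(errors)


def outcome(cond):
    """Assumed ground truth: length matters only when communication is on."""
    communication, length = cond
    return float(communication * length)


conditions = [(c, n) for c in (0, 1) for n in (1, 2, 3, 4, 5)]
test = [(0, 5), (1, 5), (1, 3)]
train = [c for c in conditions if c not in test]

additive_mse = held_out_mse(additive_features, train, test, outcome)
interaction_mse = held_out_mse(interaction_features, train, test, outcome)
print(f"additive model, held-out MSE:    {additive_mse:.3f}")
print(f"interaction model, held-out MSE: {interaction_mse:.3f}")
```

In this noise-free setting the interaction model recovers the structure exactly, whereas the additive model cannot, however much data it sees. With real, noisy data the comparison would also depend on sample size, which is the bias-variance trade-off described above.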

Interpretation

A model that generalizes well can be viewed as a kind of quantitative theory of behavior. It encodes how factors combine to produce outcomes, and the faithfulness of this encoding is what allows it to predict outcomes in new conditions (Almaatouq et al., 2024b). The question then becomes what the model has learned. Interpretation methods can reveal the relative importance of factors and identify previously unhypothesized interactions (Alsobay et al., 2025). They can also identify features that are influential but context-bound, which can help improve model performance under novel conditions. Such insights can open new lines of research, including mechanistic models that explain why a pattern holds.

“Interpretability” remains a contested concept in machine learning; here, we use the term to mean making what the model has learned cognitively accessible. A range of methods support this goal. Common examples include attribution methods (such as Shapley values, which estimate each factor’s contribution to a prediction by comparing model outputs with and without that factor, averaged across all possible combinations of other factors being present or absent), probing methods (tests of whether specific information is captured by the model), and adversarial attacks (deliberately crafted inputs designed to reveal conditions under which the model fails). Although such methods may clarify how predictions are generated, they necessarily simplify the model’s complexity to enhance accessibility to a human interpreter. They should be viewed as aids to understanding the target behavior rather than complete representations of what the model learned. For a broader overview of interpretability research in machine learning, see Molnar (2020); for interpretability specifically within scientific contexts, see Molnar and Freiesleben (2024).
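The Shapley attribution described above can be computed exactly when the number of factors is small. The sketch below is a minimal illustration under stated assumptions: the toy model, its coefficients, and the factor names are hypothetical, and an "absent" factor is represented by setting it to a baseline value (one common convention among several). Each factor's value is its marginal contribution to the model output, averaged over every order in which the factors could be switched from baseline to their actual values.

```python
import itertools


def shapley_values(model, instance, baseline):
    """Exact Shapley values by averaging over all orderings of the factors."""
    names = list(instance)
    totals = {name: 0.0 for name in names}
    orderings = list(itertools.permutations(names))
    for ordering in orderings:
        current = dict(baseline)          # start with every factor "absent"
        previous_output = model(current)
        for name in ordering:
            current[name] = instance[name]  # switch this factor "on"
            output = model(current)
            totals[name] += output - previous_output
            previous_output = output
    return {name: total / len(orderings) for name, total in totals.items()}


def toy_model(x):
    """Hypothetical model with one interaction (communication x long_game)."""
    return (
        2.0 * x["communication"]
        + 1.0 * x["communication"] * x["long_game"]
        - 0.5 * x["high_cost"]
    )


instance = {"communication": 1, "long_game": 1, "high_cost": 1}
baseline = {"communication": 0, "long_game": 0, "high_cost": 0}

attributions = shapley_values(toy_model, instance, baseline)
for name, value in attributions.items():
    print(f"{name}: {value:+.2f}")

# Attributions sum to the gap between the prediction and the baseline output.
gap = toy_model(instance) - toy_model(baseline)
print(f"sum of attributions: {sum(attributions.values()):.2f}, gap: {gap:.2f}")
```

The interaction's contribution is split evenly between the two factors involved, which shows how attribution methods simplify: the per-factor summary does not by itself reveal that the effect of game length depends on communication. Exact enumeration also grows factorially with the number of factors, so practical tools rely on approximations.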

Integration in Practice

An increasing number of empirical studies exemplify the integrative approach outlined above. We discuss one example from our own work, showing how evaluation, systematic experimentation, and interpretation work together, and then briefly point to related work across other domains.

Alsobay et al. (2025) asked what makes costly punishment effective in promoting cooperation efficiency in public goods games. Twenty-five years of prior research had identified many moderating factors, including game length, communication, group size, punishment cost, contribution framing, and outcome visibility. Each study varied one or a few factors while holding others constant in unspecified ways. The result was a long list of factors that had some effect, but the list did not answer the question the literature implicitly posed: If you are designing a system with peer punishment, will it improve cooperation or make things worse?

Alsobay et al. addressed this gap using the three developments outlined above. First, they defined a 14-dimensional design space covering these factors and sampled 360 experimental conditions, collecting 147,618 decisions from 7,100 participants (systematic experimentation). Second, to evaluate whether the observed heterogeneity could be predicted, new experiments were preregistered and run after the initial data collection; a machine learning model trained on the original experiments outperformed human forecasters (both experts and laypeople) in predicting how much punishment would help or harm welfare in these new conditions (generalization as evaluation). Third, interpretation methods revealed which factors mattered most and how they interacted (interpretation).

Punishment’s effect on welfare ranged from +43% improvement to −44% reduction depending on the combination of design parameters. Communication emerged as roughly three times more important than any other factor, followed by contribution framing (opt-in vs. opt-out), contribution type (variable vs. all-or-nothing), interaction length, and peer outcome visibility. The analysis also uncovered previously unhypothesized interactions. For example, longer games enhanced punishment’s effectiveness only when communication was available, and contribution framing effects depended on both contribution type and outcome visibility. Factors that had received substantial attention in prior research, such as punishment magnitude and group size, turned out to have less predictive power than expected.

Similar logic has been applied across domains. The Moral Machine experiment defined a nine-dimensional space of ethical dilemmas for autonomous vehicles, collecting more than 40 million decisions (Awad et al., 2018); Agrawal et al. (2020) then used machine learning to identify overlooked interactions, such as how the moral weight assigned to jaywalkers varies with their age or social role. In research on risky choice, Peterson et al. (2021) defined a 12-dimensional space of gambles (e.g., choosing between receiving $20 with 50% probability vs. $10 with certainty) and found that classic decision-making phenomena varied with context in ways that prospect theory did not capture. In research on group performance, Hu et al. (2026) defined a 24-dimensional task space in organizational settings and found that machine learning models predicted group advantage in new tasks far better than traditional categorical taxonomies did. In each case, researchers defined a space of possible experiments, sampled systematically, evaluated via generalization, and used interpretation to uncover structure.

As more researchers adopt integrative approaches, new challenges emerge. To name a few, constructing a design space requires deciding which factors to include, and different choices will lead to different conclusions (Almaatouq et al., 2024b). Even with the same data, different models can predict equally well but imply different conclusions about which factors matter and when (Black et al., 2022). And accurate models of causally dense phenomena may be too complex for intuitive sensemaking, whereas simpler models may sacrifice predictive power (Dubova et al., 2025). Best practices for navigating these trade-offs have not yet been established and will likely evolve with experience.

Conclusion

Explanatory research has established that many factors matter, but not which ones matter most or how they combine. Predictive models can learn how factors combine, but not whether those patterns will hold when conditions change. The developments reviewed here integrate predictive accuracy and causal interpretation to ask how much each factor matters across settings. The examples we reviewed suggest this approach can enable the discovery of generalizable patterns and provide a path toward more cumulative behavioral science.

Recommended Reading

Almaatouq, A., Griffiths, T. L., Suchow, J. W., Whiting, M. E., Evans, J., & Watts, D. J. (2024). (See References). Detailed treatment of the integrative experiment design framework.

Hofman, J. M., Watts, D. J., Athey, S., Garip, F., Griffiths, T. L., Kleinberg, J., Margetts, H., Mullainathan, S., Salganik, M. J., Vazire, S., Vespignani, A., & Yarkoni, T. (2021). (See References). Perspective on the tension between explanation and prediction in computational social science, with recommendations for integrating both.

Jolly, E., & Chang, L. J. (2019). The flatland fallacy: Moving beyond low-dimensional thinking. Topics in Cognitive Science, 11(2), 433–454. Critique of oversimplified models in cognitive science and case for embracing high-dimensional data.

Molnar, C., & Freiesleben, T. (2024). (See References). Introduction to supervised machine learning and interpretability methods in scientific contexts.

Rocca, R., & Yarkoni, T. (2021). (See References). Argument for benchmarking and predictive evaluation as standards for psychological theories.