Abstract

Several years ago, the world was stunned when the cute robot HitchBOT was destroyed. Does empathy for robots—sharing experiences and feeling compassion—make sense for humans? How do people empathize with robots, and what are the ethical and practical implications of doing so? How do people react when robots seem to be empathizing with them? In this review, we detail empirical work on empathy for robots, discuss the ethics of extending empathy toward robots, and consider how to engineer robots that elicit empathy. We then review empirical work on empathy received from robots to explore psychological, philosophical, and engineering implications. In our final section, we suggest how interactions with robots might cultivate human empathy. Can interactions with a robot build human empathy and help it to become more resilient and reliable?

Empathetic feelings for and from robots are complex. People seemed to be stunned, with myriad emotions, when HitchBOT, the hitchhiking robot, was destroyed with apparent malice,¹ when a LimX robot was “abused” at the 2024 WorldAI conference,² and when the NASA InSight Mars lander shut down (with a post “Don’t worry about me”).³ Are people able to share in the “experiences” of robots and generate concern for them? What would that entail, and what motivates such responses? Conversely, are people able to accept empathic expressions from robots, as in caregiving settings?⁴

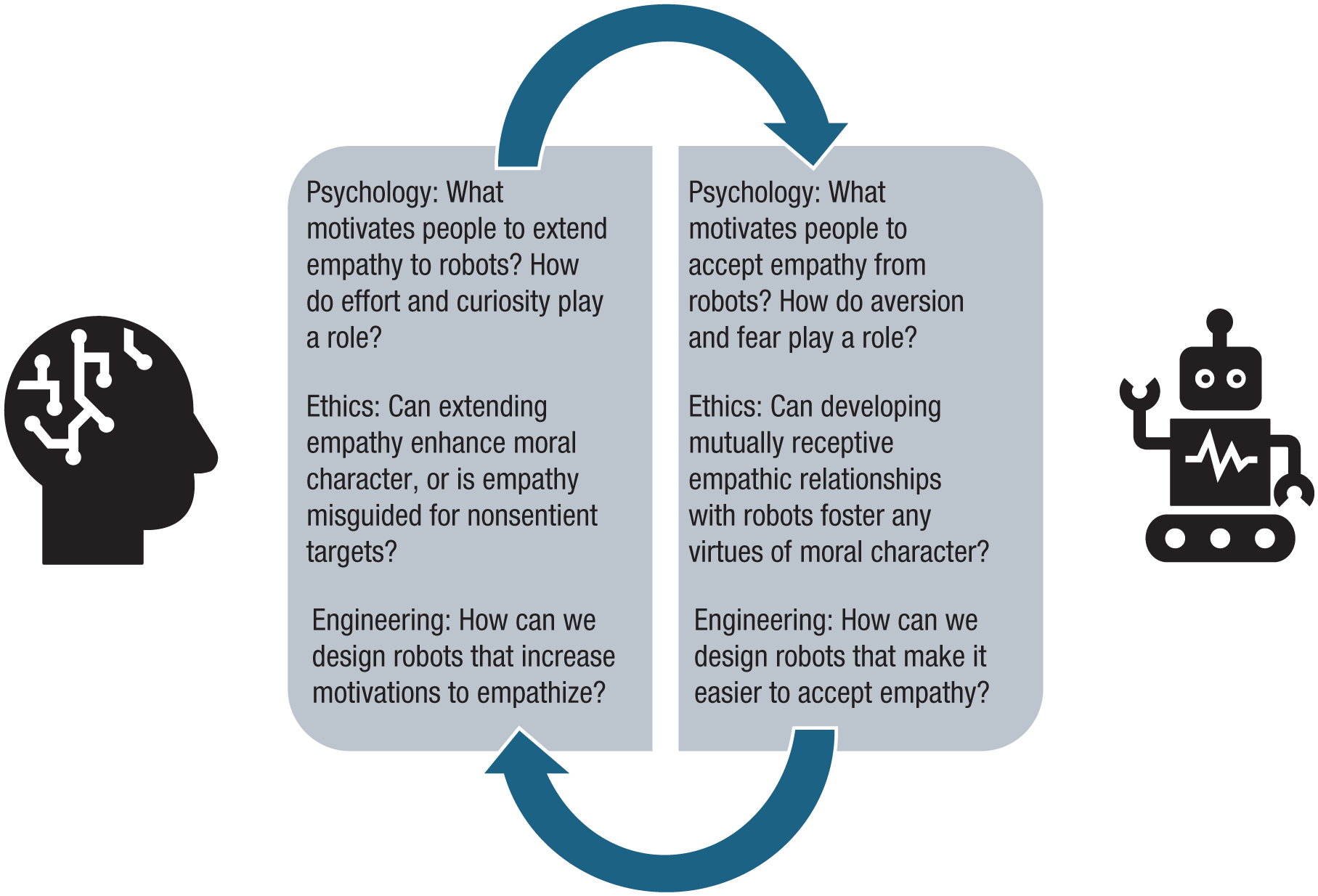

In this review, we cross disciplines to consider three perspectives (see Fig. 1): (a) At the level of psychology, do people give and receive empathy to and from robots? (b) At the level of normativity, ought people to give and receive empathy during these interactions? (c) At the level of engineering, how do we design empathetic human-robot interactions? We suggest that human-robot interactions can be a useful platform to expand human empathy, scaffolding against biases that can weaken its expression. We further suggest that trust is integral to building empathy in human-robot interactions⁵ and that we should trust and not invalidate experiences of people who say they feel empathy for and from robots.

Fig. 1. Questions about empathy and human-robot interactions.

Vicarious Emotion and Compassion for Robots

Psychological findings

What would it mean to empathize with a robot? Does the question make sense, and is it something people are capable of? First, we need to define the scope of our review.

To specify our empathy focus, we highlight vicarious emotion and compassion. Vicarious emotion entails taking on experiences one perceives in a robot or receiving an expression of shared experience in return. Compassion entails generating sympathy and concern for apparent harm or need (e.g., dysfunction) in a robot, or receiving warm care for oneself from it. We focus on these empathy facets in both directions within the human-robot dyad to delimit our scope in light of this field’s concerns about definitional precision (Hall & Schwartz, 2019). These facets connect with social-cognition constructs of anthropomorphism, dehumanization, and embodied cognition (Harris, 2024). Dehumanization of robots could result from empathy avoidance and could precede robot abuse. However, empathy involves ascription of mental states in a way that some kinds of humanization and moralization may not. Embodied cognition could matter, because the visceral sharing of target pain may be affected by the fact that robots lack biology (Suzuki et al., 2015). Enactive processes could include adaptively reinforcing empathy during human-robot interactions (Shamay-Tsoory & Hertz, 2022). Though we focus specifically on empathy facets, related constructs can inform understanding of why people engage.

We focus on social robots, defined as “‘embodied, autonomous entities that can communicate and enter into social relations with people’” (Malinowska, 2021, p. 362). These robots are just one type of AI with which humans can interact. Because of how motors operate and because robot skin is often a form of latex, embodied robots often generate negative responses—there is an uncanniness to them (Weber, 2013) that might inhibit empathy. Readiness to enter interactions with robots may also track (dis)belief about whether empathy is possible (Clark & Fischer, 2023). Although the conversational ease of AI chatbots might scaffold empathy without physicality (Inzlicht et al., 2024), we focus on embodied robots that have received extensive study and that may generalize more to human interactions (Nielsen et al., 2022).

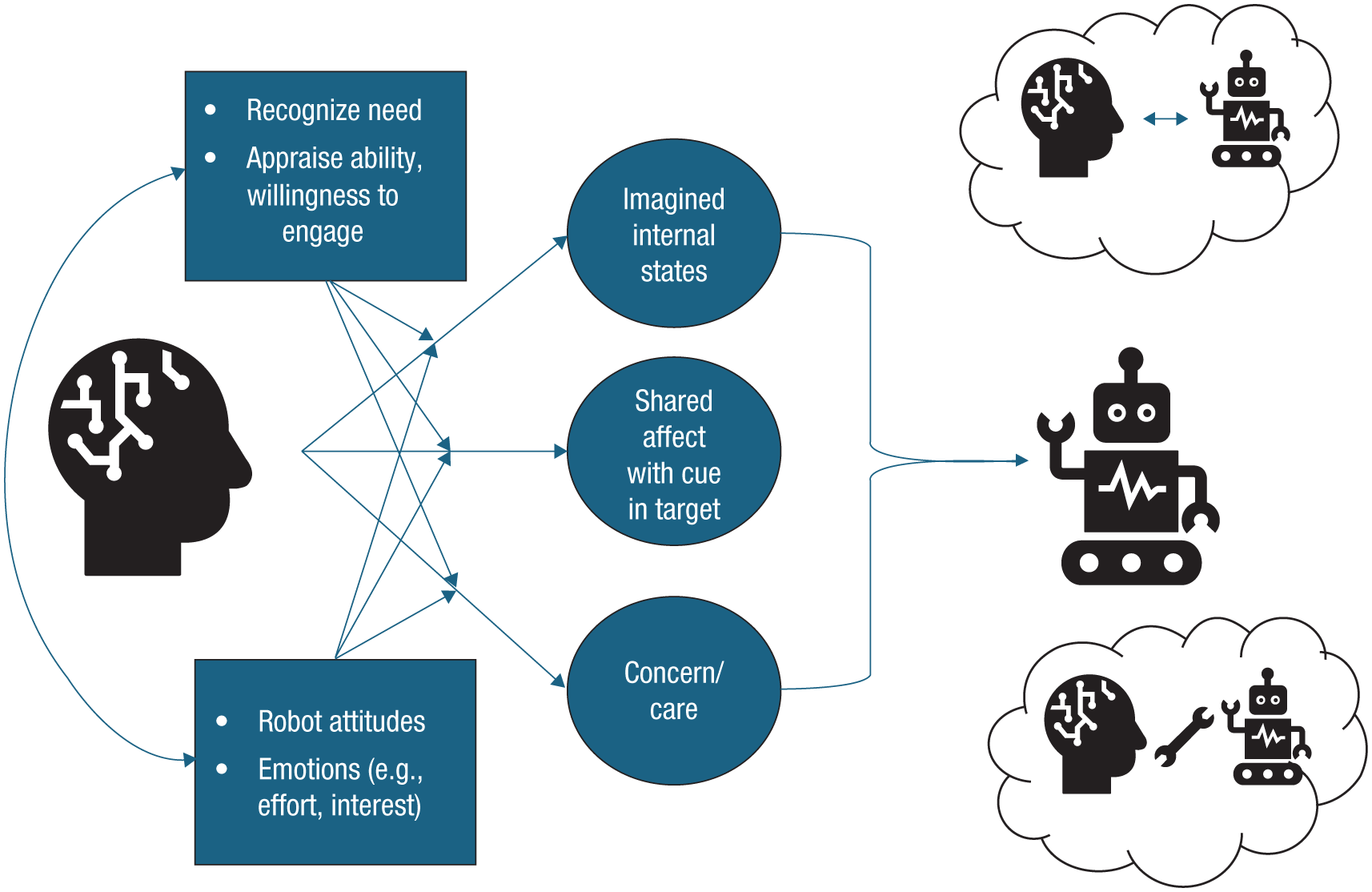

In Figure 2, we outline a process model for empathizing with robots. When perceivers encounter robots in need and perceive frustration (e.g., an obstruction), they may engage if there is a possibility of response. Empathetic responses could include projected visceral resonance with goal obstruction or compassion about the robot’s welfare. The reference point for empathy can include at least three possibilities. First, a responder might empathize with the robot itself. Second, a responder might empathize with the social reality created through interaction with the robot. (For instance, a robot-bullying situation, even without mind attribution, could still elicit concern about relational fairness if people generalize from cases of human bullying.) Relatedly, scenarios in which embodied robots are seen as “depictions of social agents” (Clark & Fischer, 2023, p. 1) might allow people to empathize with them as if they are characters in a performance, even though there is no sentient experience. Third, an observer might empathize with the creator of the robot, working backward to the designer’s intentions and goals.

Fig. 2. Components and reference points of empathy for robots. First, respondents could empathize with the robot itself (rightmost arrow from human to robot). Second, respondents could empathize with the social relation created between the human and robot (thought cloud with a bidirectional arrow between them). Third, respondents could empathize with the creator of the robot as object (thought cloud with a tool between human and robot). Circles correspond to different facets of empathy that could be considered.

Regardless of whether robots are sentient, we think the representation of robots is what proximally shapes empathetic interactions and how we value them (or not). The process of trying to empathize can be distinguished from the outcome (Hall & Schwartz, 2019) so that attempts to empathize with robots may still matter even if robots cannot have subjective experiences. Suggesting that empathy is definitionally impossible because the target of empathy cannot feel empathy seems to occlude core aspects of what it means to opt into empathizing.

We ground our review in the perspective that empathy is guided by people’s motivated choices (Cameron et al., 2019) and by the subjective costs and benefits of empathy. Robots are an intriguing case for motivated empathy. Their dissimilarity from our experience may increase the effort of empathy (Cameron et al., 2019), yet their novelty could inspire curiosity that fosters approach rather than avoidance. Although robots are often on the fringe of people’s moral circles, the reactions we have to them might reveal what we care about and our willingness to empathize in human-human interactions (Wykowska, 2021).

With our assumptions outlined above, we can review evidence for empathy for robots. Prior studies have used a variety of tools to assess a range of empathy facets, but not every method-by-facet combination has been studied extensively. Understanding how we empathize with robots is thus complicated by heterogeneity in the empathy facet being studied, in how empathy is measured, and in the type of robot. People exhibit higher skin conductance when seeing a robotic dinosaur being harmed (vs. not), with increases in self-reported pity and anger but not in empathy (Rosenthal-von der Pütten et al., 2013). People rated humans being harmed with more negativity than robots being harmed, and this difference was larger when humans were compared with machine-like robots than with human-like or animal-like robots (Mattiassi et al., 2021). People also showed comparable empathetic valence responses to humans and robots in competitive contexts (De Jong et al., 2021), and they showed changes in self-reported positive and negative affect on seeing robots in positive and negative situations, suggesting vicarious emotion (Yang & Xie, 2024). Last, in their early reactions (350–500 ms), people show stronger electrophysiological sensitivity to, and separation between, painful and nonpainful situations for humans than for robots (Suzuki et al., 2015).

Most of these studies are about passive empathizing; we suggest exploring how people actively regulate empathetic feelings toward robots through their choices. Marchesi et al. (2019) assessed whether people freely choose mental states for robots (i.e., intention) over mechanistic terms to explain their behavior, finding that although mechanistic explanations were preferred, there was still some ascription of mind (i.e., preference for intentional explanations). Researchers should test empathy regulation toward robots and whether it relates to cognitive costs (e.g., the subjective effort of empathizing; Cameron et al., 2019) or to social motivations (e.g., maintaining human uniqueness; Vanman & Kappas, 2019). Such work could continue with roboticists’ emphasis on live, ongoing interactions and might include how empathy and trust in robots intersect during emergency situations (Wagner, 2021).

Motivational perspectives are consistent with developmental shifts in how robots are viewed; for example, children are more likely than adults to attribute experiential mental states to robots (Reinecke et al., 2025). As children develop, tendencies to include distant entities in the moral circle lessen (Marshall et al., 2025). To the extent that people rely on similar social ideologies and prejudices across humans and nonhuman animals (Dhont et al., 2016), people might generalize empathetic choices during human-robot interactions to human-human ones. Suggesting such influences, Spaccatini et al. (2023) found that people who engaged with a more (vs. less) anthropomorphic embodied robot were less likely to report empathic concern for a human target. However, this effect was complex, in that ascribing agency to the robot was associated with increased concern for the human, whereas ascribing experience to the robot was associated with decreased concern for the human.

We might consider how empathetic processes proceed differently for human-robot interactions compared with human-human (and human-animal) interactions. Human-robot interactions might seem unique in lacking visceral reference points for empathizing, but they share with human-animal interactions a dissimilarity to human experience that might seem to create more effort costs in empathizing. On the other hand, both may afford the opportunity for novelty that motivates exploration. There may be important differences in the empathic processes that are engaged; these are worth future investigation, because studies contrasting empathy for robots and humans seem to assume similarity in the empathetic processes engaged.

Ethical considerations

Is it a mistake to empathize with a nonsentient robot? Some have suggested that robots might be able to represent vulnerability (e.g., risk of damage; Christov-Moore et al., 2023) and that it could be helpful to empathize to predict robot behavior (Schmetkamp, 2020). Accordingly, successful human-robot interaction relies on ascribing mental states to machines, regardless of whether they possess them (Weber, 2013, p. 485).

Yet without sentience, empathy might seem meaningless. Furthermore, empathizing with robots might lead to exploitation of our moral capacities by designers whose ends might not align with our own (Weber, 2013). Speaking to the first concern, some have written about empathizing with robots imaginatively, as with fictional characters (Schmetkamp, 2020). To the second concern, we might balance the risk of empathy deterioration: If practicing empathy can improve character, then not empathizing with robots, like not empathizing with animals, might have negative effects on character (Coeckelbergh, 2018) or on one’s willingness to empathize with humans (Schmetkamp, 2020). Empathizing with robots might be worthwhile if it can expand one’s sense of what kinds of relationships are possible—“The moral status of robots depends on how people talk about it and to it” (Coeckelbergh, 2018, p. 150)—and make our communities more inclusive, producing “new knowledge of a very unfamiliar being-in-the-world, thereby broadening our horizons” (Schmetkamp, 2020, p. 894). To connect the motivated-empathy and ethics literatures, we could speculate that assuming that we can be detached and nonrelational with robots (because it would be “fake”) might itself be an act of empathy avoidance (building on Coeckelbergh, 2018).

Engineering applications

Might it be possible to design robots to elicit empathy? The forms and functions of robots can foster feelings of empathy. For example, the PARO is a robotic seal that responds to petting and can learn to recognize a user’s voice and its own name. Patients who use the PARO have been shown to have less stress, better pain management, better interaction with caregivers, and higher empathetic concern (Geva et al., 2020). With respect to this population and within the context of a therapeutic setting, robots can elicit and shape the motivations that underlie empathy.

Relatedly, Tan et al. (2018) showed that robots that expressed empathy toward a person were more likely to elicit empathy when being abused. They found that bystanders witnessing abuse of a robot were more likely to intervene when the robot displayed acts of empathy than when it was indifferent. Similarly, Connolly et al. (2020) observed that bystanders were more likely to intervene during the abuse of a robot if the robot expressed sadness in response to its abuse. This early work hints at the importance of ascribing mental states to the robot, suggesting that robots engineered to encourage people to ascribe mental states to them may more successfully elicit empathy from people.

Vicarious Emotion and Compassion From Robots

Psychological findings

From a dyadic perspective, all that recipients perceive is an expression of empathy, which is the case with human empathy expressers as well. We can infer depth and authenticity of empathic expression, but it is a prediction and observation: How do people receive what seems like empathy expressed from robots? Although we ask what it would take for robots to be more effective in eliciting empathy reception in humans, this is separate from the question of whether robots themselves are capable of empathy.

To understand how people respond to robot empathy, a common empirical approach involves adding emotional modifiers to robot behaviors and testing human reactions in extended interactions. People are more likely to help a robot that acknowledges, through language and facial structure, that it feels the same way they do (Gonsior et al., 2012), and actively (vs. passively) collaborating with a robot through complementary empathetic interaction appears to increase closeness (García-Corretjer et al., 2023).

Notably, many studies that embed empathetic displays from robots have small samples. Although small samples can limit the inferential value of studies, many have immersive engineering elements that are worth learning from and building upon in future work (e.g., with adaptive empathic encounters; Shamay-Tsoory & Hertz, 2022). In sum, there is suggestive evidence, awaiting more research with larger samples, that empathetic displays from robots can be received positively and correlate with positive effects. It is important to note that, in discussing the possibility of empathy for robots, we can distinguish zero-sum comparisons of empathy between humans and robots from absolute empathy felt for robots, which appears higher than zero.

Much of the debate about receiving robot empathy comes from literature on carebots. Some studies have examined health outcomes: For example, elderly individuals who received (vs. did not receive) carebot animals showed reduced psychiatric symptoms (Bradwell et al., 2022). From a motivated-empathy perspective, we suggest focusing on what recipients of robot empathy want, giving consideration to their subjective preferences and objective health outcomes.

Ethical considerations

Is it morally appropriate to receive expressions of vicarious emotion or compassion from robots? If robots are not sentient, then although they might manifest empathy-appropriate behavior (such as words of comfort or physical care), the human receiver might be deceived into believing robots are actually empathizing and caring for them. This could undermine well-being; “failure to apprehend the world accurately is itself a (minor) moral failure” (Sparrow & Sparrow, 2006, p. 155).

Delegating caregiving to robots might undercut cultivation of caregiving as a virtue, and weaken motivations for empathizing (Vallor, 2011). Conversely, carebots might expand empathic abilities and motivations, allowing an objectivity that supplements human empathy, especially for situations that may make robot carers easier to tolerate (Borenstein & Pearson, 2010). They may open new possibilities, so that the social reality of human-robot interaction does not cleanly fall into dualities of “real” and “fake” (Coeckelbergh, 2018, p. 155). Just as motivated-empathy approaches focus on how individuals choose to relate to their emotions (Cameron et al., 2019), a social-construction approach to the ethics of carebots suggests that how we choose to relate to robots can create ethical value for robot empathy in those interactions (Coeckelbergh, 2018).

Engineering applications

Although concerns persist that development of empathetic robots might dilute our instinct to empathize or devalue acts of empathy altogether (Sparrow & Sparrow, 2006; Vallor, 2011), it is reasonable to ask how the design of an empathetic robot factors into our tendency to empathize with a robot. Park and Whang (2022) reviewed techniques for designing robots that can display empathy. They described verbal and nonverbal mirroring, synchronized hand motions and posturing, facial expressions, and dialogue as potentially effective ways for a robot to display empathy. Although context and the person’s capacity to feel empathy might affect how a person responds to a robot that displays empathy, evidence suggests that a variety of methods can be used by a robot to convincingly display empathy.

More generally, designing an empathetic robot will require insights from psychologists, for creation of methods to express empathy and for gauging the impact that different expressions of empathy by a robot will have on a person. Expressing empathy requires timing and context that robots typically lack. Moreover, for the expression of empathy to appear genuine, the display of empathy by the robot should be idiosyncratic and not simply a canned routine common across all robots or situations. Next, we consider how human-robot interactions may shape what we understand about limitations of empathy in humans.

Using Robots to Motivate Human Empathy

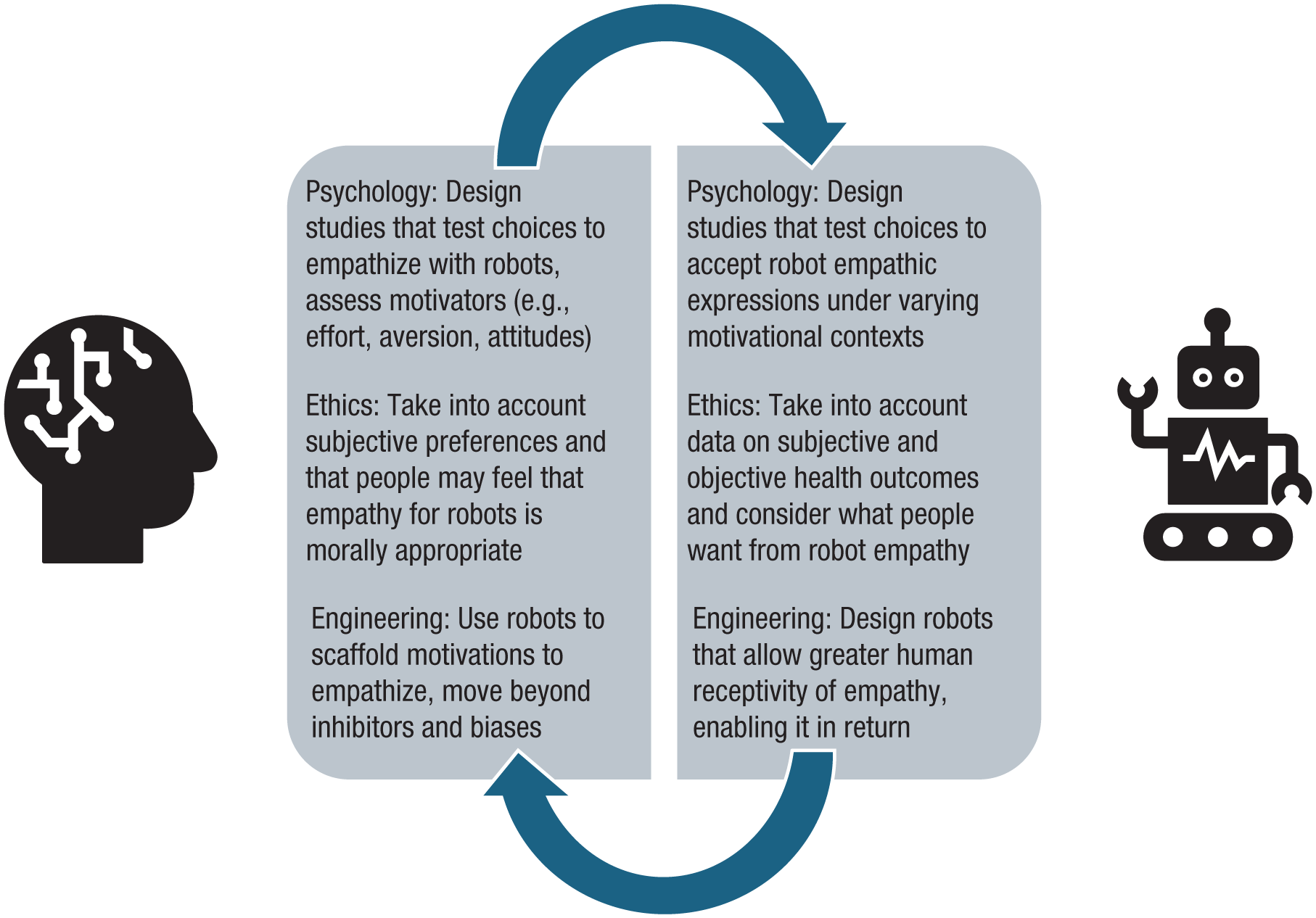

Looking ahead, there may be potential for using human-robot interactions to promote empathy, building on the findings that people can empathize with robots and the ethical arguments for why they should consider doing so (Fig. 3). People can find it difficult to share in others’ experiences and often avoid doing so (Cameron et al., 2019). We suggest (a) that human-robot interaction may afford opportunities to practice effortful empathy and (b) that these interactions may help offset biases in empathy, calibrating human empathy in a direction that could help people expand it beyond its limits (see also Christov-Moore et al., 2023, p. 5).

Fig. 3. Next steps for empathy and human-robot interactions.

Human-robot encounters may afford safer environments in which to practice giving and receiving empathy. There have been calls for “virtuous robotics” (Cappuccio et al., 2021, p. 8) that would use such interactions to help people become ethically consistent decision makers. Human-robot interaction platforms can be useful for scaffolding people with socioemotional difficulties and disabilities (e.g., Kouroupa et al., 2022). Questions about whether empathy in these interactions is fake may undersell the importance of developing social skills.

Learning to generate empathy for robots may translate to a wider moral circle and increased propensity to extend empathy beyond in-group categories (e.g., a robot “could nudge a child to interact with other children with whom he/she is not used to engaging in the effort to avoid ‘parochialism’”; Borenstein & Arkin, 2016, p. 41). To the extent that robots can be engineered to express empathy in ways that convey reduced bias, this might help teach people how to empathize in a more balanced and less biased way.

Conclusion

In closing, we might still wonder whether human-robot interactions are the right venue for tackling the limits of human empathy, given that people might just not empathize with robots. Yet from the perspectives of psychology, philosophy, and engineering, integrated through a motivational lens, we suggest a different take. People seem to respond with empathy to robots, and respond positively to empathy from robots, particularly if motivated to do so. However, heterogeneous methods and limited sampling suggest a need for integration and a deeper focus on the motivations we bring to empathy in these encounters. Philosophers might calibrate their interpretations on the basis of the scientific evidence but can bring frameworks that might shape which questions psychologists find compelling. Empathy in human-robot interaction need not be dismissed as fake because of nonsentience; instead, these relational realities might sustain empathy and cultivate virtues. Last, engineers might incorporate psychological evidence and normative implications to frame which applications they think are most pressing. The implementation of empathy by roboticists suggests that psychologists and philosophers consider adaptive, immersive, and longitudinal interactions. Our motivational approach suggests that these disciplines, in tandem, might help us develop social realities that could enrich empathy beyond its seeming limits so that humans and robots learn to be useful for each other.

Recommended Reading

Geiselmann, R., Tsourgianni, A., Deroy, O., & Harris, L. T. (2023). Interacting with agents without a mind: The case for artificial agents. Current Opinion in Behavioral Sciences, 51, Article 101282. https://doi.org/10.1016/j.cobeha.2023.101282. Discusses factors that influence whether people attribute mind to robots, focusing on the role of anthropomorphism and the heterogeneity that can exist across types of robots and AI.

Gray, K., Yam, K. C., Eng, A., Dillion, D., & Waytz, A. (2025). The psychology of robots and artificial intelligence. In D. T. Gilbert, S. T. Fiske, E. J. Finkel, & W. B. Mendes (Eds.), The handbook of social psychology (6th ed.). Situational Press. https://doi.org/10.70400/IKVM1257. A review chapter that discusses research on mind perception toward robots and AI as well as work on algorithm aversion in social psychology.

Harris, L. T. (2024). (See References). Catalogues neuroscience studies on reactions toward robots in social and economic games and paradigms. Although the focus of our review is not neuroscience, this article is helpful for understanding the breadth of prosocial behavior approaches and considering connections to neuroscience.

Henschel, A., Hortensius, R., & Cross, E. S. (2020). Social cognition in the age of human–robot interaction. Trends in Neurosciences, 43(6), 373–384. https://doi.org/10.1016/j.tins.2020.03.013. Discusses social-cognitive approaches to how humans and robots interact with one another, summarizing prior work in social psychology and neuroscience and suggesting new directions for this field of study.

Inzlicht, M., Cameron, C. D., D’Cruz, J., & Bloom, P. (2024). (See References). A short article discussing scientific and ethical implications of receiving empathetic expressions from disembodied AI chatbots that use large language models (LLMs, as in ChatGPT).

Ladak, A., Loughnan, S., & Wilks, M. (2024). The moral psychology of artificial intelligence. Current Directions in Psychological Science, 33(1), 27–34. https://doi.org/10.1177/09637214231205866. A brief article that discusses the relationship between morality and mind perception for robots, focusing on mind dimensions of agency and experience.

Nielsen, Y. A., Pfattheicher, S., & Keijsers, M. (2022). (See References). Highlights existing literature on prosocial behavior in response to robots, which can encompass empathizing but extends beyond empathizing per se.

Oliveira, R., Arriaga, P., Santos, F. P., Mascarenhas, S., & Paiva, A. (2021). Towards prosocial design: A scoping review of the use of robots and virtual agents to trigger prosocial behaviour. Computers in Human Behavior, 114, Article 106547. https://doi.org/10.1016/j.chb.2020.106547. Considers how human-robot interactions could influence prosocial behavior.