Abstract

Advances in AI have enabled chatbots to provide warm, personalized support. Yet little is known about the long-term consequences of AI companionship. Across a 12-month longitudinal study with more than 2,000 adults from four Western countries, we examined the bidirectional relationships between social chatbot use and loneliness. We found evidence that increased social chatbot use predicted increased loneliness, using a single-item measure of emotional isolation. When we used a broader and more stable measure of social connection, we found evidence that feeling less socially connected predicted subsequent increases in social chatbot use; however, chatbot use did not significantly predict decreases in social connection. Taken together, these findings provide initial evidence that being lonely may spur people to seek companionship through chatbots but that such use may, over time, exacerbate feelings of loneliness. We urge caution, however, in drawing strong conclusions given the exploratory nature of our analyses.

The release of ChatGPT in late 2022 ushered in a new technological era for humanity, with some comparing it to the introduction of fire (Friedman, 2023). This new generation of chatbots holds potent implications for well-being. In fact, millions of people are already turning to chatbots for companionship (Inzlicht et al., 2024; Zhou et al., 2020). AI companionship may prove to be a scalable tool for combating loneliness, but some scholars caution that such relationships may do more harm than good (Perry, 2023; Shteynberg et al., 2024) because AI is unable to genuinely experience emotions. Instead, AI can only “supply an illusion of understanding, empathy, caring, and love” (Shteynberg et al., 2024, p. 496). As a result, AI may be unable to offer critical ingredients of rewarding human relationships, such as making people feel understood (Reis et al., 2017). Given these shortcomings, could AI companionship ultimately aggravate rather than alleviate loneliness?

A growing body of work points to a more optimistic conclusion. Research suggests that people feel less lonely after texting with a chatbot than they feel, for example, after watching YouTube (De Freitas et al., 2024). In addition, several experiments have shown that chatbots reduce loneliness and improve mood on par with brief interactions with humans (De Freitas et al., 2024; Drouin et al., 2022; Folk et al., 2024; Ho et al., 2018). Even when people were asked to interact with a chatbot every day for a week, they still reported decreases in loneliness immediately after their interactions (De Freitas et al., 2024). Nevertheless, each of these studies focused only on the immediate impact of interacting with chatbots. Momentary reductions in loneliness could be indicative of a positive long-term impact, but these short-term benefits could belie long-term costs.

Parasocial relationships—illusory one-sided connections to popular figures—may offer a useful comparison for the potential pitfalls of AI companionship. Research suggests that people use parasocial relationships to compensate for a lack of social interaction because these relationships feel rewarding in the moment (e.g., Bond, 2021; Madison et al., 2016). Critically, however, such relationships do not mitigate loneliness to the same extent as real-world friendships (Stein et al., 2024).

Although there are important differences between chatbots and parasocial relationships (e.g., chatbots have conversations with their users), chatbots may serve a similar compensatory function. Indeed, there are countless reports of people using AI companions as friends (Metz, 2020; Pentina et al., 2023; Roose, 2024), romantic partners (Hill, 2025; Patel, 2024), and even replacements for deceased loved ones (Wilkinson, 2025). Research suggests people have an automatic tendency to perceive minds in otherwise inanimate objects (i.e., anthropomorphize; Epley et al., 2007) and that we treat even the most basic computers as if they were humans (Gambino et al., 2020; Nass & Moon, 2000). This work helps to explain why participants report feeling less lonely after brief interactions with chatbots (e.g., De Freitas et al., 2024).

Although people may be drawn to AI companions because they offer immediate emotional rewards (e.g., De Freitas et al., 2024), their limitations may become consequential only over time. In particular, increasing reciprocal disclosure is a key element in the development of rewarding and intimate human relationships over time (e.g., Altman & Taylor, 1973; Aron et al., 1997; Collins & Miller, 1994), but AI has nothing personal to disclose. Moreover, although AI companions can be programmed to share personal facts, one recent study suggested that people feel less connected to chatbots that engage in artificial self-disclosure (Folk et al., 2024). Accordingly, although people may initially enjoy talking only about themselves, the one-sided nature of AI-human conversations may limit the quality of companionship that AI can provide over the long term.

AI’s ability to provide endless expressions of care may also be initially appealing but grow stale over time. Although well-crafted messages of emotional support are comforting, they may lose emotional weight after users become accustomed to an endless supply of such messages. Indeed, human support is valued in part because we have a limited emotional capacity, making recipients of support feel unique and important (Perry, 2023).

Taken together, chatbots may be appealing and rewarding social partners in the short term but fail to provide the long-term social satisfaction that characterizes rewarding human relationships. Nevertheless, people may repeatedly turn to AI companions because they are always available and simulate human companionship in a manner that feels compelling in the moment. As such, relatively shallow interactions with AI may be crowding out more rewarding human interactions in people’s lives. On the basis of this theorizing, we might expect people who use chatbots for companionship to report feeling lonelier than nonusers. If we observed this relationship in cross-sectional data, however, it would not necessarily indicate that turning to AI for companionship leads to loneliness. Such a difference could instead reflect the fact that feeling lonely leads people to seek out chatbots. Of course, it is also possible that there is a bidirectional relationship, whereby loneliness promotes chatbot use, which in turn exacerbates loneliness.

To examine these two potential pathways, we analyzed data from 2,149 participants in four Western countries over a 12-month period. We asked them how often they had been using chatbots for social purposes over the past 4 months, such as asking for advice on life decisions or having regular social conversations (i.e., “social chatbot use”).

In each of our four surveys, we also measured how emotionally isolated and socially connected participants had been feeling. In psychological research, the term “loneliness” typically refers to a constellation of constructs related to people’s social well-being (Cunningham et al., 2025). Our measure of emotional isolation directly asked people how emotionally isolated they had been feeling from other people, whereas our measure of social connection provided a broader, more stable assessment of how people felt in relation to their friends, strangers, and society. Accordingly, we treat these as two related but distinct constructs that fall under the umbrella of “loneliness.” Throughout this article, we use the term “loneliness” to refer to this broader umbrella concept, and we use “emotional isolation” and “social connection” to refer to each measure individually.

Research Transparency Statement

General disclosures

Study disclosures

Method

Procedure

We invited participants from Prolific Academic to complete four surveys over the course of 12 months. Data collection occurred in four sequential waves: Wave 1 ran from November 2023 to February 2024, Wave 2 from March to June 2024, Wave 3 from July to October 2024, and Wave 4 from November 2024 to February 2025. We selectively opened each survey such that participants could access a new survey only 4 months after they completed the previous survey. This process ensured the time elapsed between subsequent surveys was 4 months and the total time elapsed between Waves 1 and 4 for each participant was 12 months. Participants were compensated $1.20 to $1.25 for completing each survey. All surveys included a captcha designed to prevent responses from bots.

We chose a 4-month interval because we assumed that social chatbot use would be relatively rare, and we wanted the interval between measurements to be long enough to detect changes in people’s social chatbot use. In addition, compared with longer intervals (e.g., 6 months), 4-month intervals allowed us to collect more waves of data over the course of a year while also being more cost-effective than collecting data in shorter intervals (e.g., 3 months).

The surveys included measures of social chatbot use and loneliness as part of an ongoing longitudinal study designed to explore the broader social and emotional ramifications of chatbot use. The complete data set and full set of measures are freely available on OSF, but because this study was not preregistered, all findings should be treated as exploratory, and p values should be treated with caution.

Sample

We recruited Prolific Academic users who were currently residing in the United Kingdom, United States, Canada, or Australia. A total of 2,149 participants completed at least one of the surveys across the four waves (mean age = 39.99 years, SD = 13.63; 49% male; 50% residing in the United Kingdom, 28% in the United States, 14% in Canada, and 8% in Australia). Of these, 979 participants (46%) completed all four surveys, 466 (22%) completed three surveys, 395 (18%) completed two surveys, and 309 (14%) completed only one survey. We did not exclude any participants; all participants who completed at least one of the surveys were included in the analyses. We included the participants who completed only one time point to maximize our sample size because the inclusion of these participants increases the precision of certain parameter estimates in random-intercept cross-lagged panel models (RI-CLPMs).
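As a quick arithmetic check on the completion figures above, the following Python sketch recomputes the total sample size and the percentages from the reported counts. The variable names are ours and are used for illustration only.

```python
# Completion counts reported in the Sample section
# (number of surveys completed -> number of participants).
completion_counts = {4: 979, 3: 466, 2: 395, 1: 309}

# Total number of participants who completed at least one survey.
total = sum(completion_counts.values())

# Share of the full sample in each completion group, as whole percents.
completion_pct = {
    surveys: round(100 * n / total)
    for surveys, n in completion_counts.items()
}

print(total)           # 2149
print(completion_pct)  # {4: 46, 3: 22, 2: 18, 1: 14}
```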

All of these participants were recruited from a larger group of participants (N = 2,592) who completed an earlier survey between July and October 2023. The demographic data were taken from this prior survey. In addition, prior to the Wave 1 survey, 567 of these participants were asked to interact with ChatGPT for 6 min as part of a separate online experiment (for analyses excluding these participants, see “Robustness Checks”).

Exclusion criteria

As mentioned, we did not exclude any participants in our primary analyses. However, some participants (n = 7) submitted two responses to the same survey, in which case we included only the first survey response submitted. It is also worth noting that two participants provided impossible age values (i.e., 3 and 367). We included these participants but assigned them values of “NA” for age.
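The two data-handling rules described in this section can be sketched as follows. This is a minimal illustration in Python, not the code used for the study: the field names (`pid`, `wave`, `submitted`, `age`) and the plausible age range of 18 to 120 are assumptions made for the example.

```python
def keep_first_response(rows):
    """Keep only the earliest submission for each (participant, wave) pair."""
    first = {}
    for row in sorted(rows, key=lambda r: r["submitted"]):
        # setdefault stores a row only if the key has not been seen yet,
        # so later duplicate submissions are ignored.
        first.setdefault((row["pid"], row["wave"]), row)
    return list(first.values())


def clean_age(age, low=18, high=120):
    """Return None (i.e., NA) for ages outside an ASSUMED plausible range."""
    return age if age is not None and low <= age <= high else None


# Hypothetical rows; "submitted" is an ordering key such as a timestamp.
rows = [
    {"pid": "a", "wave": 1, "submitted": 2, "age": 34},   # later duplicate
    {"pid": "a", "wave": 1, "submitted": 1, "age": 34},   # first response
    {"pid": "b", "wave": 1, "submitted": 1, "age": 367},  # impossible age
]

# The participant with the impossible age is retained, with age set to None.
cleaned = [dict(r, age=clean_age(r["age"])) for r in keep_first_response(rows)]
```

Note that the participant with the impossible age stays in the data; only the age value is set to missing, which mirrors the rule stated above.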

Measures

As part of a broader longitudinal study, this survey included our key measures of social chatbot use, emotional isolation, and social connection, which were identical across the four surveys (for all measures included in the surveys, see https://osf.io/6xsuz/files/osfstorage).

Social chatbot use

We first asked participants a question about whether they had ever used a chatbot before for any purpose. Participants who answered “yes” were then presented with the question, “Over the past 4 months, how often have you used chatbots for social purposes such as: asking for advice on life decisions (e.g., asking the chatbot if you should take a job promotion), having regular social conversations (e.g., talking about your day, asking the chatbot about itself), to provide social companionship.” Response options ranged from never (1) to daily (6). Participants who had never used a chatbot were automatically assigned a value of 1.
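The scoring rule for this item can be written out as a short Python sketch. The function and argument names are hypothetical; only the 1-to-6 response range and the rule that never-users receive a value of 1 come from the description above.

```python
FREQUENCY_MIN, FREQUENCY_MAX = 1, 6  # never (1) to daily (6)


def score_social_chatbot_use(ever_used, frequency=None):
    """Return the social chatbot use score for one participant at one wave.

    ever_used: answer to the screening question about any prior chatbot use.
    frequency: response to the frequency item (1-6), asked only if ever_used.
    """
    if not ever_used:
        # Participants who had never used a chatbot were not shown the
        # frequency item and were assigned the lowest value ("never").
        return FREQUENCY_MIN
    if frequency is None or not FREQUENCY_MIN <= frequency <= FREQUENCY_MAX:
        raise ValueError("frequency must be an integer from 1 to 6")
    return frequency
```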

Emotional isolation

We used a single item from Gallup surveys (Helliwell et al., 2023) that asked, “In general, over the past 4 months, how lonely have you been feeling? By lonely, we mean how much you feel emotionally isolated from people.” Response options ranged from not at all lonely (1) to very lonely (4).

Social connection

We used a 20-item measure of social connectedness (Lee et al., 2001) to ask participants to “Please think about how you have been generally feeling over the past 4 months and rate the degree to which you agree or disagree with each statement using the scale below” with response options ranging from strongly disagree (1) to strongly agree (6). This measure was designed to assess people’s stable feelings of connectedness to the social world in general rather than their feelings of connection with respect to their interpersonal relationships alone (Lee et al., 2001). As such, it included some items that were similar to our measure of emotional isolation, such as “I am able to connect with other people.” However, it also included items that assessed people’s feelings of connection to society more generally (e.g., “I am in tune with the world”) as well as more stable features of people’s identity (e.g., “I fit in well in new situations”). Thus, we viewed this measure of social connection as related to but distinct from our measure of emotional isolation. In line with this interpretation, the correlation (r) between the emotional isolation and social connection measures at each wave ranged from −.63 to −.64 (for correlation table, see the Supplemental Material). It is worth noting that this measure underwent a validation procedure before being introduced in the literature (see Lee et al., 2001) and has been used extensively (the validation article has been cited more than 1,300 times). As with many psychological measures, the validation process was limited in scope (Flake et al., 2017) but did outline the conceptual boundaries of the scale and provide evidence of convergent and discriminant validity for related constructs (e.g., social anxiety, self-esteem).

Experiencing social stressors

To control for major life events that could confound the relationship between chatbot use and loneliness, we asked participants at each survey wave whether or not they had experienced the following social stressors over the past 4 months: the end of a romantic relationship (e.g., divorce, breakup), relocations (e.g., moving to a new city), the beginning of a steady romantic relationship, or becoming a new parent. Binary indicator variables (0 = no, 1 = yes) were used for each stressor at each wave.

Analytic strategy

To investigate the reciprocal relationship between loneliness and social chatbot use, we used an RI-CLPM (Hamaker et al., 2015). We first conducted an analysis with our emotional isolation measure, and then we repeated the analysis using the measure of social connection.

The RI-CLPM is a widely used tool for investigating longitudinal relationships between pairs of variables, in part because it avoids the pitfalls of standard cross-lagged panel models (CLPMs; Hamaker et al., 2015; Lucas, 2023). The standard CLPM assesses the cross-lagged relationships between two variables, but it does not distinguish between within- and between-person variance. This results in biased parameter estimates, especially when the constructs being studied have a stable component (for discussions, see Hamaker et al., 2015; Lucas, 2023). The RI-CLPM, in contrast, introduces a random intercept that captures the stable component of the constructs and allows us to isolate the relationship between within-person fluctuations in the constructs of interest over time. Thus, the RI-CLPM accounts for each participant’s average levels of emotional isolation and chatbot use across our four time points, and the cross-lagged estimates in the RI-CLPM test how deviations from each individual’s own typical levels of emotional isolation and chatbot use predict one another over time. For example, if Susan reports feeling more emotionally isolated than usual at Wave 2, we can test whether she uses chatbots more than usual 4 months later (at Wave 3), and vice versa.
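To make the within-person logic concrete, the sketch below splits one person's repeated scores into a stable average and wave-specific deviations. This is a simplified observed-score analogue written in Python: the RI-CLPM itself estimates the stable component as a latent random intercept rather than a simple person mean, and the ratings shown for "Susan" are hypothetical.

```python
from statistics import mean


def within_person_deviations(scores):
    """Split one person's repeated scores into a person mean and deviations.

    Missing waves (None) are skipped when computing the mean and remain None
    in the returned list of deviations.
    """
    observed = [s for s in scores if s is not None]
    person_mean = mean(observed)
    deviations = [None if s is None else s - person_mean for s in scores]
    return person_mean, deviations


# Hypothetical emotional isolation ratings (1-4 scale) at Waves 1 to 4.
susan_isolation = [2, 3, 2, 1]
susan_mean, susan_dev = within_person_deviations(susan_isolation)

print(susan_mean)  # 2
print(susan_dev)   # [0, 1, 0, -1]
```

In this toy example, Susan is one point above her own typical level at Wave 2. The cross-lagged paths ask whether deviations like this one predict deviations in the other variable at the next wave.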

Model specifics

We fit the model using the lavaan package in R (Rosseel, 2012) and used full information maximum likelihood (FIML) estimation to handle missing data, meaning that all available data points were used to estimate model parameters (for information on any additional models used, see “Robustness Checks”). We allowed the residual covariances for the within-person components to vary across time points. However, as is typically recommended when the measurement points are equally spread out (Oh et al., 2022; Orth et al., 2021), we constrained the autoregressive and cross-lagged paths to be equal across the time intervals (the average time elapsed between surveys was 122.12 days between Waves 1 and 2, 121.24 days between Waves 2 and 3, and 120.90 between Waves 3 and 4). This approach of constraining the lagged associations to be equal results in more precise single estimates that represent the average effect across the duration of the study (Oh et al., 2022; Orth et al., 2021).

To account for the effect of social stressors, we included the four life events (breakups, relocations, new relationships, becoming a parent) as time-varying covariates. Specifically, experiencing each stressor at a given wave predicted within-person chatbot use and loneliness at the next wave, with these effects constrained to be equal across time intervals. We also estimated covariances between the random intercepts (stable individual differences in loneliness and chatbot use) and each stressor, constraining these covariances to be equal across waves (for full information on the model parameters, see the Supplemental Material).
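For readers unfamiliar with how such a model is written down, the sketch below uses Python to generate lavaan-style model syntax for the bivariate core of an RI-CLPM, with the autoregressive and cross-lagged paths constrained to be equal across intervals through shared labels. It is an illustration under stated assumptions rather than the syntax used in this study: the observed variable names (`iso1` to `iso4`, `bot1` to `bot4`) are hypothetical, and the stressor covariates are omitted.

```python
def riclpm_syntax(x, y, waves=4):
    """Build lavaan-style syntax for a bivariate RI-CLPM.

    x, y: name stems of the observed variables (e.g., "iso" -> iso1..iso4).
    Lagged paths share the labels a, b, c, d, which constrains them to be
    equal across all intervals.
    """
    t = range(1, waves + 1)
    lines = []
    # Random intercepts: every loading is fixed to 1.
    for v in (x, y):
        lines.append(f"RI_{v} =~ " + " + ".join(f"1*{v}{i}" for i in t))
    # Within-person components: one latent per observed score, loading fixed to 1.
    for v in (x, y):
        lines += [f"w_{v}{i} =~ 1*{v}{i}" for i in t]
    # Autoregressive (a, d) and cross-lagged (b, c) paths between waves.
    for i in range(2, waves + 1):
        lines.append(f"w_{x}{i} ~ a*w_{x}{i-1} + b*w_{y}{i-1}")
        lines.append(f"w_{y}{i} ~ c*w_{x}{i-1} + d*w_{y}{i-1}")
    # Within-wave (residual) covariances, left free to differ across waves.
    lines += [f"w_{x}{i} ~~ w_{y}{i}" for i in t]
    # Variances and covariance of the random intercepts.
    lines += [f"RI_{x} ~~ RI_{x}", f"RI_{y} ~~ RI_{y}", f"RI_{x} ~~ RI_{y}"]
    # (Residual) variances of the within-person components.
    for v in (x, y):
        lines += [f"w_{v}{i} ~~ w_{v}{i}" for i in t]
    return "\n".join(lines)


model = riclpm_syntax("iso", "bot")
print(model)
```

In R, a string like this could be passed to lavaan's `lavaan()` function with FIML estimation for missing data. Here `b` labels the chatbot use → emotional isolation path and `c` labels the emotional isolation → chatbot use path; because each label is reused at every interval, a single unstandardized estimate is obtained for each direction.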

Results

Frequency of social chatbot use

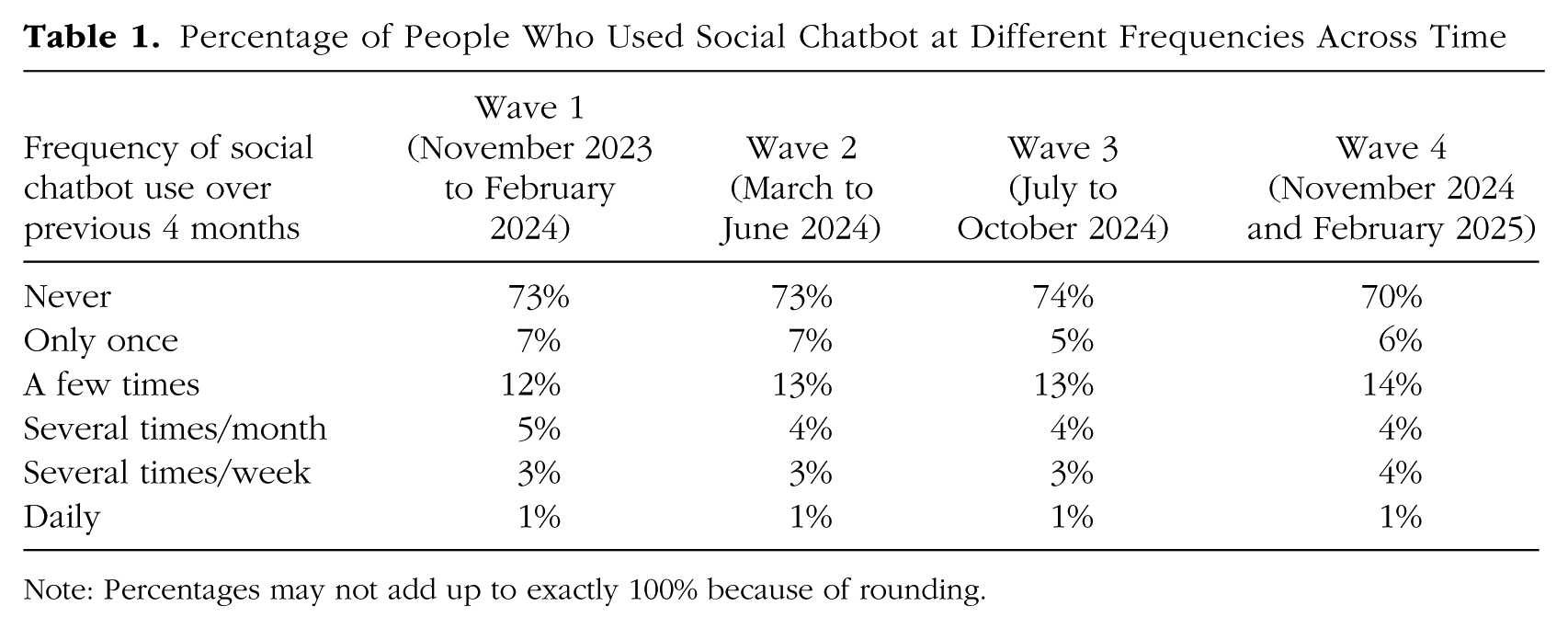

Across the four waves, 26.38% to 29.68% of participants reported social chatbot use at each time point (Table 1). Among these users, the modal amount of use was “a few times” over the previous months. In addition, a one-way analysis of variance revealed that the mean response to our social chatbot question did not significantly differ across waves (Wave 1: M = 1.60; Wave 2: M = 1.59; Wave 3: M = 1.62; Wave 4: M = 1.69; p = .96).

Table 1. Percentage of People Who Used Social Chatbot at Different Frequencies Across Time

Note: Percentages may not add up to exactly 100% because of rounding.

Primary analyses

Model with emotional isolation measure

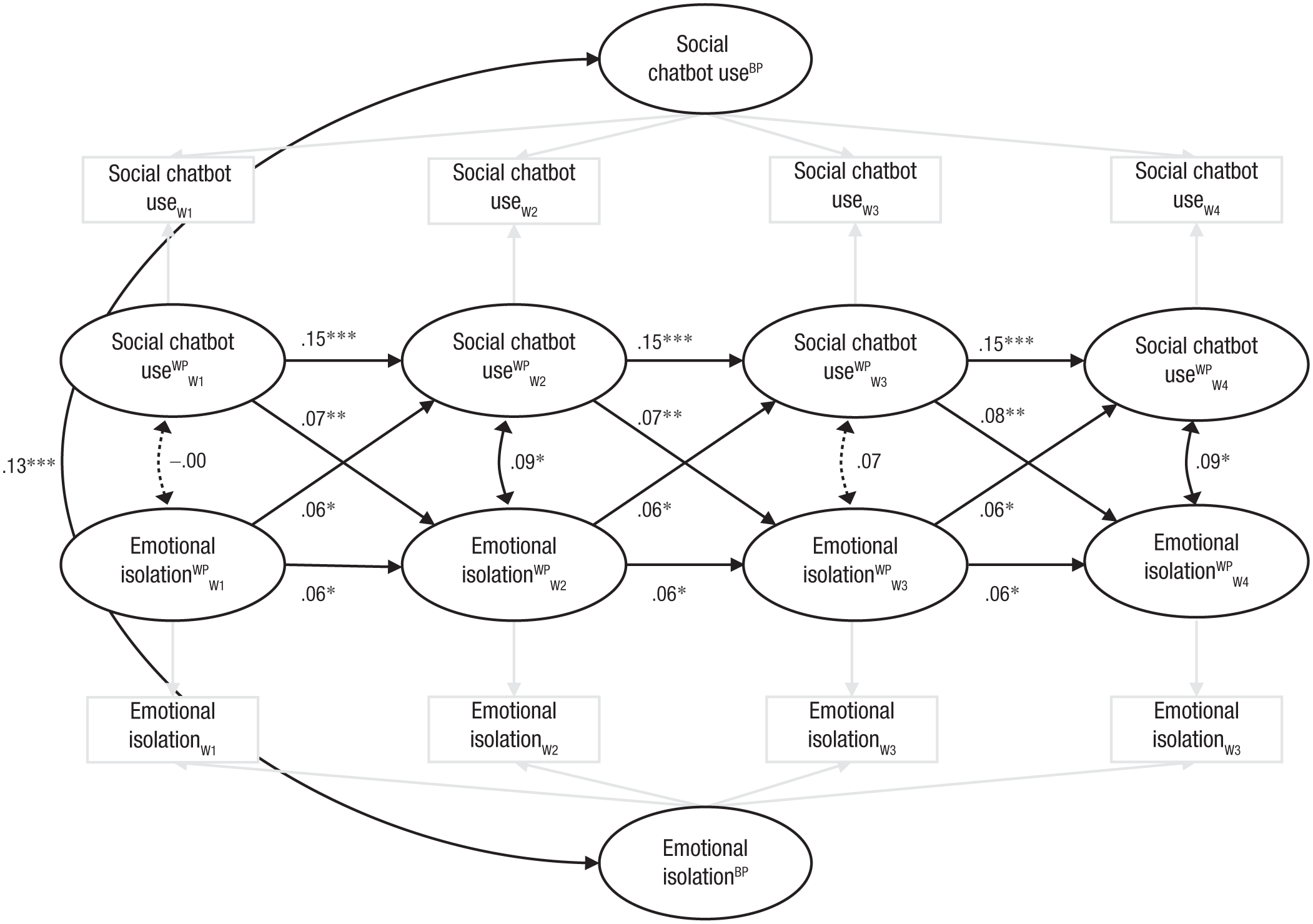

We found that increases in emotional isolation predicted significant increases in chatbot use 4 months later (standardized β = 0.06 for Wave 1 → Wave 2, 0.06 for Wave 2 → Wave 3, and 0.06 for Wave 3 → Wave 4; p = .023; see Fig. 1), consistent with the hypothesis that feeling lonely leads people to seek out chatbots. Importantly, after people increased their chatbot use, they reported increased emotional isolation 4 months later (standardized β = 0.07 for Wave 1 → Wave 2, 0.07 for Wave 2 → Wave 3, and 0.08 for Wave 3 → Wave 4; p = .006). Additionally, in two of the four waves, the within-person fluctuations in chatbot use and emotional isolation were significantly positively correlated, indicating people tended to report more social chatbot use during the times they were feeling more emotionally isolated than normal. Experiencing a breakup (p = .787), new relationship (p = .738), relocation (p = .275), or becoming a parent (p = .477) did not significantly predict subsequent chatbot use. There were also no significant relationships between these stressors and subsequent loneliness (ps > .104; for detailed results, see the Supplemental Material). That said, the prevalence of these experiences was low, with breakups (4.8%–5.8%), relocations (5.3%–6.6%), new relationships (2.9%–4.3%), and becoming a new parent (1.2%–1.8%) each affecting a small portion of participants at each wave.

Fig. 1. Path diagram for the RI-CLPM with emotional isolation and social chatbot use controlling for the effect of breakups, starting new relationships, relocating, and becoming a parent (covariate effects not shown for clarity). Asterisks indicate significant paths (***p < .001, **p < .01, *p < .05). Nonsignificant paths (p > .05) are represented by dotted lines; paths that were fixed to 1 as part of model specification are represented by gray lines. All coefficients are standardized, meaning that even paths constrained to be equal have slightly different standardized estimates but identical unstandardized estimates. As noted, we constrained the unstandardized paths for the three emotional isolation → social chatbot cross-lagged effects to be equal. As a result, the p values for these sets of pathways are identical, but the standardized coefficients are slightly different because of the different standard deviations at different time points. We also constrained the unstandardized paths for the three social chatbot → emotional isolation cross-lagged effects to be equal, so the same principles apply to those estimates as well. RI-CLPM = random-intercept cross-lagged panel model.

Beyond the within-person relationships, there was a significant correlation between the stable between-person components of social chatbot use and emotional isolation (r = .13, p < .001). This suggests that people who typically feel emotionally isolated also tend to use social chatbots more in general. It is worth noting that our emotional isolation measure was relatively stable across time: The between-person emotional isolation component explained 70% to 73% of the variance in emotional isolation at any given wave.

Overall, our model had excellent fit according to traditional fit indices: comparative fit index (CFI) = .964, root-mean-square error of approximation (RMSEA) = .032, standardized root-mean-square residual (SRMR) = .045. The chi-square test was significant, χ²(133) = 427.87, p < .001, but this was unsurprising given our large sample size.

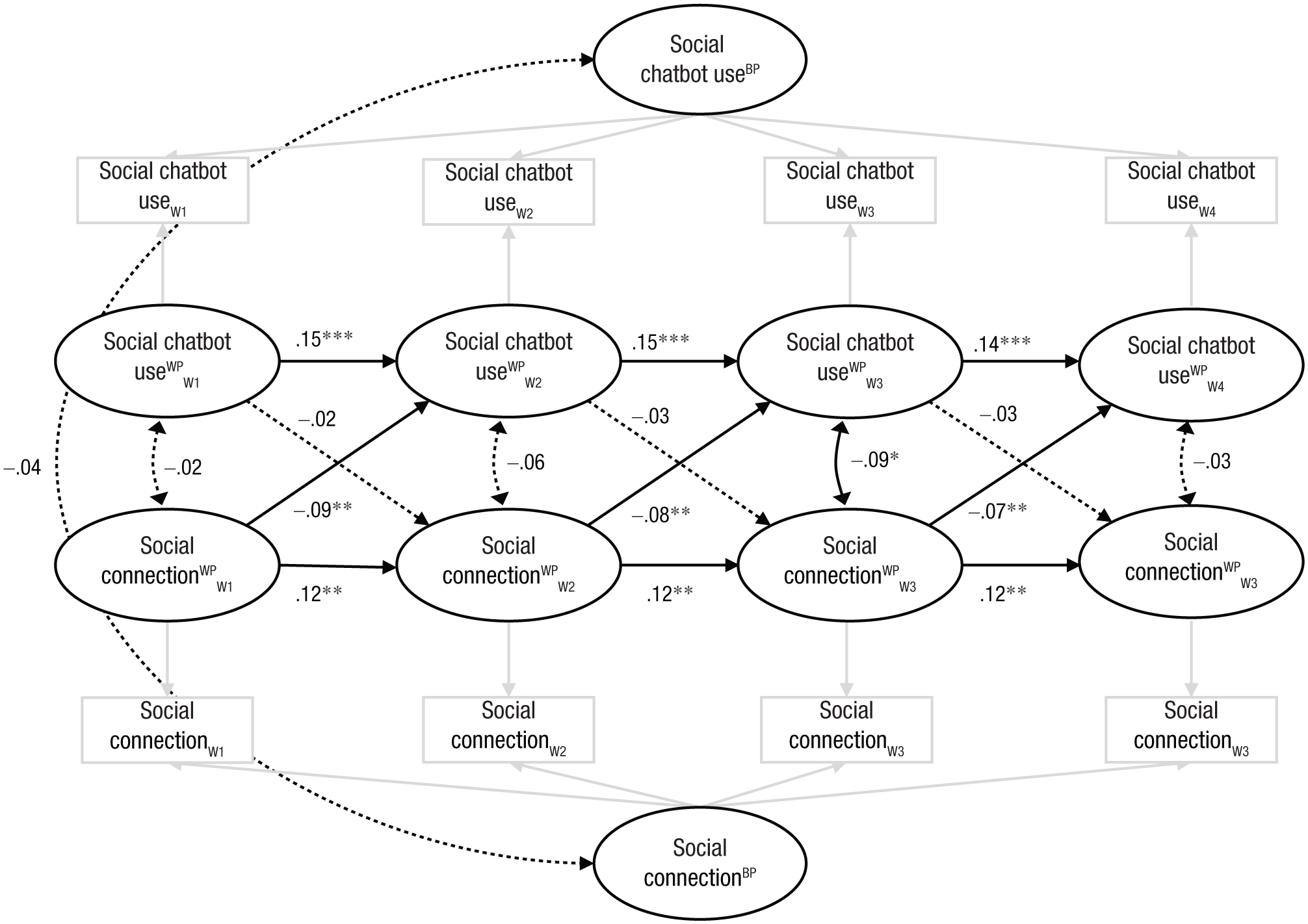

Model with social connection measure

We found that feeling less socially connected predicted significant increases in chatbot use 4 months later (standardized β = −0.09 for Wave 1 → Wave 2, −0.08 for Wave 2 → Wave 3, and −0.07 for Wave 3 → Wave 4; p = .004; see Fig. 2), consistent with our emotional isolation model. In contrast to our emotional isolation model, however, increased chatbot use at one time point did not significantly predict decreased connection 4 months later (standardized β = −0.02 for Wave 1 → Wave 2, −0.03 for Wave 2 → Wave 3, and −0.03 for Wave 3 → Wave 4; p = .369), although the relationship was in the expected direction. Experiencing a breakup did significantly predict subsequent social connection (standardized β = −0.06 for Wave 1 → Wave 2, −0.06 for Wave 2 → Wave 3, and −0.07 for Wave 3 → Wave 4; p = .004) but not subsequent social chatbot use (p = .902). There was no significant relationship between the other stressors and subsequent connection or chatbot use (ps > .125). The within-person fluctuation in social connection and social chatbot use was significantly correlated at Wave 3 (p = .033) but not at Waves 1, 2, or 4 (ps > .206). When interpreting all of these relationships, it is important to highlight that the social connection measure was highly stable across the study, with the between-person social connection factor explaining between 85% and 89% of the variance in social connection at any given time point.

Fig. 2. Path diagram for the RI-CLPM with social connection and social chatbot use controlling for the effect of breakups, starting new relationships, relocating, and becoming a parent (covariate effects not shown for clarity). Asterisks indicate significant paths (***p < .001, **p < .01, *p < .05). Nonsignificant paths (p > .05) are represented by dotted lines; paths that were fixed to 1 as part of model specification are represented by gray lines. All coefficients are standardized, meaning that even paths constrained to be equal have slightly different standardized estimates but identical unstandardized estimates. As noted, we constrained the unstandardized paths for the three social connection → social chatbot cross-lagged effects to be equal. As a result, the p values for these sets of pathways are identical, but the standardized coefficients are slightly different because of different standard deviations at different time points. We also constrained the unstandardized paths for the three social chatbot → social connection cross-lagged effects to be equal, so the same principles apply to those estimates as well. RI-CLPM = random-intercept cross-lagged panel model.

The between-person components of social chatbot use and social connection were not significantly correlated (r = −.04, p = .150). Overall, this model also had excellent fit according to traditional fit indices (CFI = .975, RMSEA = .032, SRMR = .043), χ²(133) = 429.551, p < .001.

Robustness checks

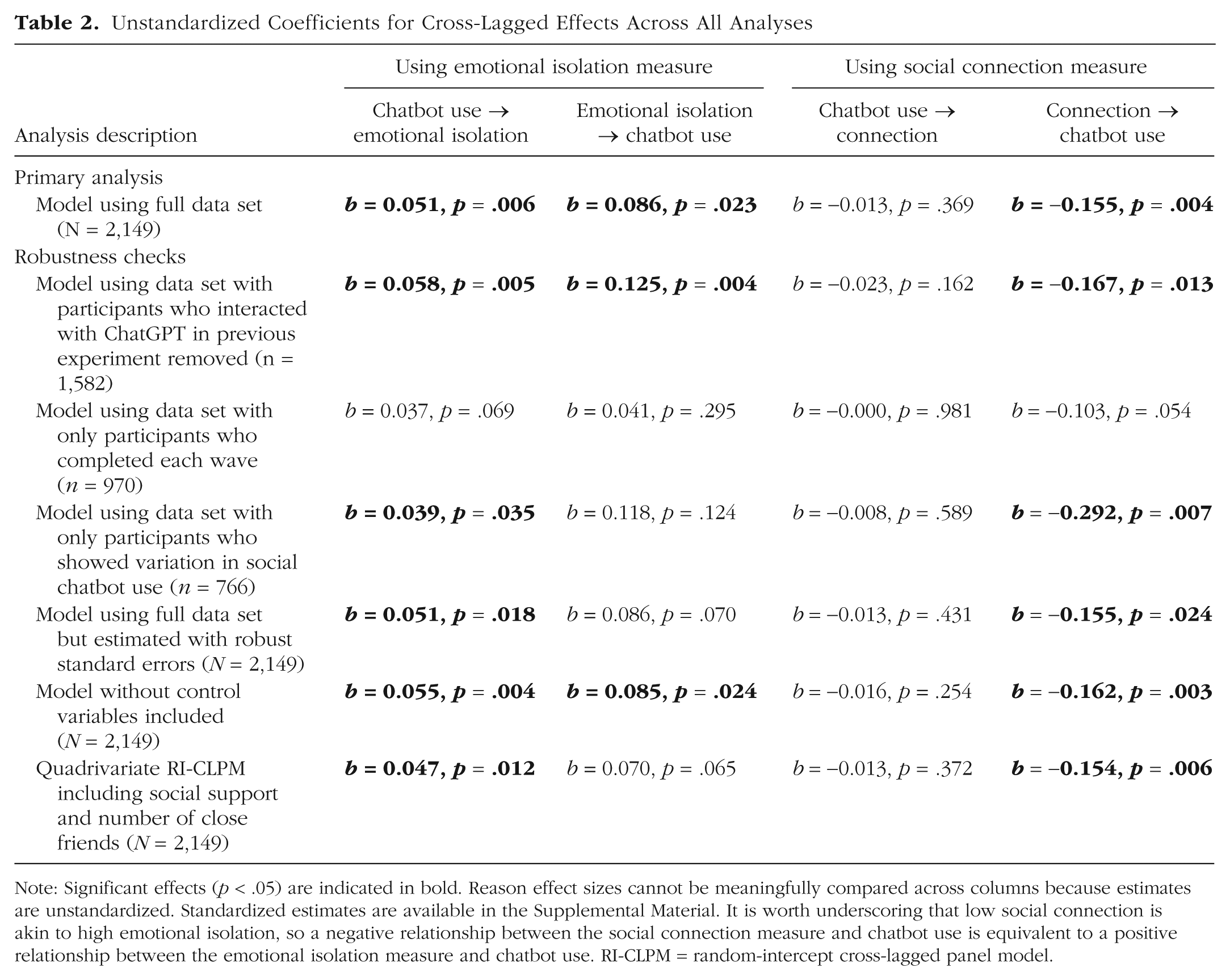

For each of the two models described above, we conducted several alternative analyses to assess the robustness of the cross-lagged relationships. We provided the standardized estimates for the cross-lagged effects in the above sections, but we provide the unstandardized estimates for all the analyses in Table 2. The unstandardized estimates are more easily summarized because unlike the standardized coefficients they are identical across waves in any given analysis. The detailed results for each of the robustness checks are available in the Supplemental Material.

Table 2. Unstandardized Coefficients for Cross-Lagged Effects Across All Analyses

Note: Significant effects (p < .05) are indicated in bold. Effect sizes cannot be meaningfully compared across columns because estimates are unstandardized. Standardized estimates are available in the Supplemental Material. It is worth underscoring that low social connection is akin to high emotional isolation, so a negative relationship between the social connection measure and chatbot use is equivalent to a positive relationship between the emotional isolation measure and chatbot use. RI-CLPM = random-intercept cross-lagged panel model.
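To illustrate why a single unstandardized estimate can correspond to slightly different standardized coefficients at each wave, the Python sketch below applies the usual rescaling, β = b × SD(predictor) / SD(outcome). The unstandardized value and the standard deviations are hypothetical numbers chosen for illustration; they are not estimates from this study.

```python
def standardize(b, sd_predictor, sd_outcome):
    """Convert an unstandardized path b into a standardized coefficient."""
    return b * sd_predictor / sd_outcome


# One unstandardized cross-lagged estimate, constrained equal across intervals
# (hypothetical value).
b = 0.05

# Hypothetical (SD of predictor at wave t-1, SD of outcome at wave t) pairs
# for the three intervals.
sd_pairs = [(0.60, 0.50), (0.62, 0.50), (0.66, 0.50)]

# The same b yields a different standardized value whenever the SDs differ.
betas = [round(standardize(b, sx, sy), 3) for sx, sy in sd_pairs]
print(betas)
```

Because the standard deviations of the within-person components differ somewhat from wave to wave, the standardized values differ even though `b` is the same in every interval, which is why the unstandardized estimates are the simpler ones to tabulate.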

As mentioned, 567 participants in our sample had interacted with ChatGPT as part of an experiment prior to the Wave 1 survey. For our first robustness check, we fit our two primary models to a data set that did not include these 567 participants (remaining n = 1,582). For our next robustness check, we fit our two primary models to a data set of only the 970 participants who completed each survey without any missing data (i.e., listwise deletion). We also fit our two primary models on a much smaller data set that included only participants who exhibited changes in social chatbot use during the study (n = 766). In addition, we fit the same models used in our primary analyses, but we used FIML estimation while estimating robust standard errors on our entire data set. This method is robust to violations of multivariate normality, and the robust standard errors tend to provide a more conservative estimate than the standard model. Last, we repeated our primary analyses without the social stressor variables, resulting in a standard bivariate RI-CLPM with no control variables.
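Two of the subsamples described above can be sketched in Python as simple filters over each participant's four responses. The data layout and function names are hypothetical, and treating "changes in social chatbot use" as any difference among a participant's observed responses is our assumption; the exact operationalization is described in the Supplemental Material.

```python
def completed_all_waves(responses):
    """True if no wave is missing (the listwise-deletion subsample)."""
    return all(r is not None for r in responses)


def changed_use(responses):
    """True if the observed responses are not all identical (ASSUMED rule)."""
    observed = {r for r in responses if r is not None}
    return len(observed) > 1


# Hypothetical social chatbot use scores (1-6) at Waves 1 to 4; None = missing.
participants = {
    "p1": [1, 1, 1, 1],      # complete data, no change in use
    "p2": [1, 2, None, 3],   # one wave missing, use changes
    "p3": [2, 2, 4, 2],      # complete data, use changes
}

listwise_ids = [pid for pid, r in participants.items() if completed_all_waves(r)]
changer_ids = [pid for pid, r in participants.items() if changed_use(r)]

print(listwise_ids)  # ['p1', 'p3']
print(changer_ids)   # ['p2', 'p3']
```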

As seen in Table 2, there were two effects that emerged most consistently. When we used our emotional isolation measure, social chatbot use predicted subsequent increases in emotional isolation. When using the social connection measure, it was the relationship between connection and subsequent chatbot use that was largely robust.

Controlling for additional potential confounders

Our primary analyses controlled for experiencing several different social stressors, which we considered the most clear-cut potential confounders for the longitudinal relationship between chatbot use and loneliness. Nevertheless, additional constructs such as perceived social support and number of close friends could theoretically confound the chatbot-loneliness relationship. Alternatively, these variables could be downstream consequences of chatbot use and mediate the relationship between chatbot use and loneliness. This ambiguity is potentially consequential because if these constructs are mediators, controlling for them could bias our estimates of the loneliness and chatbot use pathways.

For completeness, we conducted additional robustness analyses that included single-item measures of perceived support and number of close friends. Specifically, we estimated quadrivariate RI-CLPMs with cross-lagged relationships between chatbot use, loneliness (either emotional isolation or social connection), perceived social support, and number of close friends. As with our primary models, we also accounted for the effect of experiencing each of the social stressors (for full results and more details, see the Supplemental Material).

Both the quadrivariate RI-CLPM using emotional isolation (CFI = .981, RMSEA = .024, SRMR = .035) and the quadrivariate RI-CLPM using social connection (CFI = .985, RMSEA = .024, SRMR = .034) fit the data well. Our key cross-lagged estimates were slightly smaller but remained largely unchanged from our primary models (see Table 2). In addition, chatbot use did not predict subsequent changes in either social support or number of close friends (ps > .322). Together, these results suggest that these constructs do not substantially confound or mediate the relationship between chatbot use and loneliness. However, it is worth emphasizing that we used single-item measures of support and friendship (for details on these measures, see the Supplemental Material) and that we were already controlling for the effects of social stressors that may be related to these constructs, such as experiencing a breakup.

To summarize across our robustness checks (see Table 2), social chatbot use significantly predicted subsequent emotional isolation in five of the six analyses, with the sixth being marginally significant (p = .069). Likewise, social connection predicted subsequent social chatbot use in five of the six analyses, with the sixth being marginally significant (p = .054).

Discussion

Across a 12-month longitudinal study with more than 2,000 adults from four Western countries, we examined the bidirectional relationships between loneliness and turning to AI for companionship. Using a single-item measure of emotional isolation, our results suggested there may be long-term costs of AI companionship: Turning to AI for companionship predicted increased emotional isolation 4 months later. We also found some evidence that feeling more emotionally isolated predicted increases in social chatbot use, although this relationship was less robust. When we used a broader and more stable measure of social connection, we found evidence that feeling less socially connected predicted later increases in social chatbot use; however, chatbot use did not significantly predict decreases in social connection. Together, these findings provide initial evidence that feeling lonely may spur people to seek companionship through chatbots but that such use may, over time, exacerbate feelings of loneliness.

Why did the pattern of results vary across our measures of emotional isolation and social connection? The isolation measure was narrow in its focus, whereas the social connection measure assessed people’s stable connection with the “social world in toto” (Lee et al., 2001, p. 310). Although the emotional isolation measure has high face validity, it is more susceptible to noise because it is only a single item. In contrast, the connection measure has 20 items, but many of them have questionable face validity (e.g., “I am in tune with the world”). It also exhibited little fluctuation between waves, perhaps because of its additional focus on stable social traits (e.g., “I see myself as a loner”). It seems reasonable that a small change in the way people use technology might shape their feelings of isolation without altering their more stable self-image. Yet when individuals do experience reductions in their overall sense of social connection, this larger shift might trigger them to change their behavior by seeking out AI. Of course, because our study was exploratory, we can only speculate about these differences across measures.

Although the primary focus of our study was the emotional isolation and social connection measures, our data set included other measures. Any study that is not preregistered creates a garden of forking paths (Gelman & Loken, 2013), and if our primary variables had not yielded interesting results, we might have justified turning our focus to other variables. As with all nonpreregistered studies, such uncertainty should result in a cautious interpretation of our p values (Gelman & Loken, 2013), many of which would not survive corrections for multiple comparisons.
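To make the point about multiple comparisons concrete, the following minimal sketch applies a Holm-Bonferroni correction to a small set of p values. The p values are hypothetical and are not taken from our analyses, and Holm-Bonferroni is used here only as one common correction, not as the procedure applied in this study.

```python
def holm_adjust(p_values):
    """Return Holm-Bonferroni adjusted p values in the original order."""
    m = len(p_values)
    # Indices of the p values, sorted from smallest to largest.
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_max = 0.0
    for rank, idx in enumerate(order):
        # The k-th smallest p value is multiplied by (m - k + 1), capped at 1.
        candidate = min(1.0, (m - rank) * p_values[idx])
        # Enforce monotonicity: an adjusted p value never falls below an earlier one.
        running_max = max(running_max, candidate)
        adjusted[idx] = running_max
    return adjusted


# Hypothetical p values for illustration only (NOT results from the study).
hypothetical_p = [0.004, 0.03, 0.04, 0.20]
adjusted = holm_adjust(hypothetical_p)
survivors = [p for p in adjusted if p < 0.05]
```

In this hypothetical set, three of the four unadjusted p values fall below .05, but only the smallest remains below .05 after adjustment.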

Caution is also warranted in drawing strong causal conclusions. The cross-lagged effects in RI-CLPMs imply causality only if three strong assumptions of causal inference are met (Hernán & Robins, 2010; Mulder et al., 2024). The assumption of positivity requires that all participants have a chance of receiving the treatment (i.e., social chatbot use). We plausibly satisfy this assumption because all the participants could have conceivably used chatbots for social reasons. In addition, the assumption of exchangeability requires no hidden confounding. Although we controlled for what we considered the most obvious potential confounders, other unaccounted-for variables could have biased our results. For example, people may have asked a chatbot for advice about accepting a job offer, leading them to report social chatbot use at Wave 1. Taking the new job may have made them feel lonelier by Wave 2, but our analyses would attribute this increased loneliness to social chatbot use. The third key assumption is consistency, which requires that social chatbot use is clearly and uniformly defined. This requires, for example, that using chatbots “daily” represents the same type and intensity of interaction across all participants. We attempted to enhance the consistency of our social chatbot measure by using specific response options such as “only once” and “daily” instead of vague terms such as “a lot.” However, two participants who reported the same frequency may have still engaged in very different kinds of interactions. Thus, like most psychological research using RI-CLPMs (Mulder et al., 2024), we only partially satisfy these stringent assumptions, precluding strong causal conclusions.
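The job-offer example of hidden confounding can be illustrated with a toy simulation. The sketch below is not a reanalysis of our data and is not an RI-CLPM; all variable names, effect sizes, and the sample size are assumptions chosen for illustration. A hypothetical unmeasured variable (taking a new job) raises both Wave 1 chatbot use and Wave 2 loneliness, and chatbot use has no effect on loneliness by construction.

```python
import random


def pearson(xs, ys):
    """Pearson correlation between two equal-length lists of numbers."""
    n = len(xs)
    mx = sum(xs) / n
    my = sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return cov / (vx * vy) ** 0.5


rng = random.Random(0)  # fixed seed so the sketch is reproducible
n = 20000               # hypothetical sample size

new_job, chatbot_w1, lonely_w2 = [], [], []
for _ in range(n):
    # Hypothetical hidden confounder: 30% of simulated people take a new job.
    job = 1 if rng.random() < 0.3 else 0
    new_job.append(job)
    # The job raises both variables; chatbot use never enters the loneliness equation.
    chatbot_w1.append(2.0 * job + rng.gauss(0, 1))
    lonely_w2.append(2.0 * job + rng.gauss(0, 1))

# Ignoring the confounder, chatbot use appears to predict later loneliness.
overall_r = pearson(chatbot_w1, lonely_w2)

# Within each level of the confounder, the association is close to zero.
within_r = {}
for level in (0, 1):
    xs = [c for c, j in zip(chatbot_w1, new_job) if j == level]
    ys = [l for l, j in zip(lonely_w2, new_job) if j == level]
    within_r[level] = pearson(xs, ys)
```

Under these assumptions, the overall correlation is clearly positive even though no causal path from chatbot use to loneliness exists, whereas the correlation within each job-status group is near zero. An analysis that omits the confounder would therefore misattribute the increase in loneliness to chatbot use.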

It is also important to note that just under 30% of participants reported using chatbots for social purposes at any given wave. Although this proportion is relatively small, it is still higher than the proportion of Americans who reported using ChatGPT in 2024 (Sidoti & McClain, 2025). As such, our sample of Prolific workers is likely more technologically savvy than our broader target population of English-speaking Western adults.

Our findings add nuance to past experimental work documenting the short-term emotional benefits of chatbot use (e.g., De Freitas et al., 2024). One plausible explanation for our results is that conversations with chatbots may serve as substitutes for conversations with other people, eventually increasing loneliness. Indeed, people may grow accustomed to consistently supportive chatbots and begin to find the unpredictable nature of human interaction less appealing, even though such relationships are more rewarding in the long term. Researchers could test whether texting with a chatbot displaces interactions with friends by conducting daily diary studies in which participants are assigned to interact with a chatbot; to detect subtle changes, researchers may wish to avoid the social connection measure given its focus on people’s stable social identity.

Overall, it would be premature to make sweeping generalizations about the long-term impact of AI companionship given the need for longer-term, confirmatory studies. That said, our findings suggest that focusing solely on the short-term benefits of chatbot interactions may obscure more pernicious long-term effects.

Supplemental Material

Supplemental material, sj-pdf-1-pss-10.1177_09567976261427747 for How Does Turning to AI for Companionship Predict Loneliness and Vice Versa? by Dunigan Folk and Elizabeth Dunn in Psychological Science

Footnotes

Transparency

Action Editor: Amy Orben

Editor: Simine Vazire
