Abstract

Metacognition—the capacity to monitor and evaluate one’s own knowledge and performance—is foundational to human decision-making, learning, and communication. As large language models (LLMs) become increasingly embedded in both high-stakes and widespread low-stakes contexts, it is important to assess whether, how, and to what extent they exhibit metacognitive abilities. Here, we provide an overview of the current knowledge of LLMs’ metacognitive capacities, how they might be studied, and how they relate to our knowledge of metacognition in humans. We show that although humans and LLMs can sometimes appear quite aligned in their metacognitive capacities and behaviors, it is clear many differences remain; attending to these differences is important for enhancing the collaboration between humans and artificial intelligence. Last, we discuss how endowing future LLMs with more sensitive and more calibrated metacognition may also help them develop new capacities such as more efficient learning, self-direction, and curiosity.

Metacognition refers to the human capacity to monitor, assess, and regulate our own cognitive processes and mental states. It is foundational for learning, decision-making, and communication. Within this framework, confidence judgments and uncertainty representations play central roles. Confidence is a specific form of certainty and involves an explicit evaluation that a given choice is correct. Confidence is therefore tied directly to evaluating one’s own decision (Pouget et al., 2016). In contrast, uncertainty can be considered the broader internal representation of possible states or outcomes that may or may not be explicitly expressed. Therefore, confidence is a particular, overt expression of uncertainty, and together these constructs provide measurable indicators of metacognition (Fleming, 2024; Pouget et al., 2016).

Importantly, confidence not only shapes an individual’s own decisions but also serves a communicative function. Expressing confidence enables humans to coordinate effectively by signaling when their judgments are likely trustworthy and when they may be error-prone (Frith, 2012). This communication of uncertainty allows groups to integrate knowledge efficiently and to calibrate trust across team members. Recent developments in artificial intelligence (AI) have placed considerable attention on uncertainty and its effective communication to human users. Large language models (LLMs), in particular, increasingly serve in advisory roles, providing recommendations, explanations, and answers to diverse inquiries. Consequently, LLMs must be able to communicate uncertainty effectively, enabling humans to appropriately calibrate their reliance on AI-generated recommendations and to understand clearly when such advice is dependable (Steyvers & Kumar, 2024; Steyvers, Tejeda, et al., 2025). Therefore, it is important to understand LLMs’ metacognitive capabilities and to explore their capacity to communicate uncertainty to facilitate their effective use in human collaboration.

Here we examine key recent findings in LLMs’ metacognitive capabilities in relation to the literature on humans, highlighting the methods for evaluating internal uncertainty and explicit confidence reporting with an emphasis on human-LLM collaboration. We provide insights into the parallels and divergences between human and LLM metacognition throughout and discuss potential pathways for enhancing metacognitive interactions between humans and LLMs. In closing, we consider how advances in LLM metacognition might contribute to the emergence of other cognitive functions relevant to intelligence.

Confidence and Uncertainty Quantification in LLMs

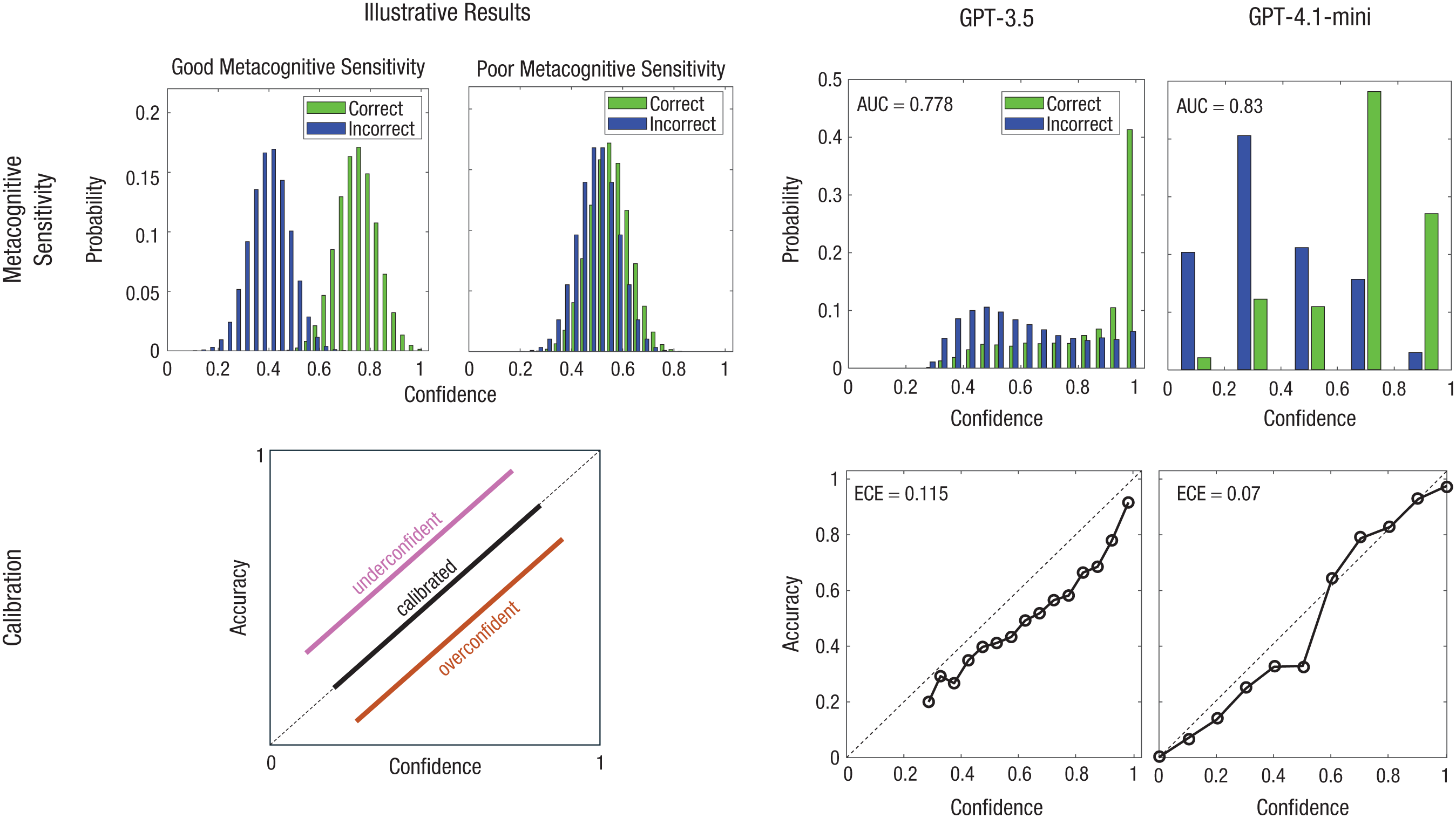

A key question regarding LLMs’ metacognition is whether they can accurately recognize and adequately communicate their own knowledge boundaries. Existing research is mixed in its conclusions. Some studies suggest that LLMs demonstrate limited metacognitive insight and struggle to recognize gaps in their own knowledge, leading to conclusions that LLMs lack essential metacognitive capabilities (Griot et al., 2025). Yet other findings suggest that LLMs can indeed detect their knowledge boundaries and can discriminate effectively between problems they can solve correctly and those for which they may fail (Kadavath et al., 2022; Steyvers, Tejeda, et al., 2025); for a few examples, see Figure 1. A contributing factor to these seemingly conflicting results is the diversity in methods used to quantify LLM uncertainty and the different ways in which the term “confidence” is used in the literature on machine learning and psychology. Broadly, two approaches for assessing uncertainty dominate current research: explicit and implicit methods.

Demonstrations of confidence-accuracy relationships using cartoons and an empirical example based on results from GPT-3.5 (Steyvers, Tejeda, et al., 2025) and a confidence–fine-tuned GPT-4.1 mini model (Steyvers, Belem, & Smyth, 2025), focusing on metacognitive sensitivity and calibration. Confidence distributions (top row) for correct (green) and incorrect (blue) answers allow the assessment of metacognitive sensitivity. Illustrative results show examples of different degrees of separations between the distributions, reflecting different degrees of metacognitive sensitivity. The empirical results using GPT-3.5 and GPT-4.1 mini show modest separation, with the AUCs (0.778 and 0.83, respectively) reflecting the probability that a randomly selected correct answer is assigned higher confidence than a randomly selected incorrect answer. Metacognitive calibration (bottom row) can be seen by plotting accuracy as a function of confidence. The illustrative results show examples of overconfidence, underconfidence, and properly calibrated confidence (points directly along the diagonal). The GPT-3.5 results, based on implicit confidence signals from token likelihoods on multiple-choice questions (MMLU), show overconfidence—predicted confidence exceeds actual accuracy. In contrast, GPT-4.1 mini was fine-tuned to generate explicit verbal confidence estimates on short-answer trivia questions (TriviaQA), yielding improved calibration. AUC = area under the curve; MMLU = Massive Multitask Language Understanding.

Implicit methods seek to infer model uncertainty by either consistency-based methods or token likelihoods. With consistency-based methods, the agreement between multiple generated answers from an LLM determines uncertainty: If the model is certain, the same question tends to produce more consistent answers (Liu et al., 2025). With the token likelihood method, in contrast, the likelihood assigned to tokens at the output layer of the LLM is taken as a measure of uncertainty (Liu et al., 2025; Steyvers, Tejeda, et al., 2025). For example, when answering a multiple-choice question with Options A, B, C, and D, the model generates a probability distribution over these choices that reflects its internal uncertainty about the answer option to generate. Unlike consistency-based methods, which often rely on sampling variability introduced through parameters such as temperature, the token likelihood approach uses the distribution computed during a single forward pass and does not depend on additional randomness or counterfactual generations. The token likelihood method extends to open-ended questions through the “p(true)” approach (Kadavath et al., 2022), in which the model first generates an answer and is then prompted with a follow-up query such as “Is this statement true or false?” The probability assigned to “true” versus “false” tokens is then taken as the confidence score. Although this approach involves issuing an additional query, it is still considered an implicit method because the model is not explicitly asked to verbalize its level of confidence; rather, researchers infer confidence from token likelihoods in the follow-up response.

These implicit measures of confidence can serve as indirect evidence for metacognitive computations, similar to how indirect evidence has been interpreted in nonhuman animal research: Rats can indicate higher confidence in a decision by waiting longer for a food reward, and their behavioral patterns precisely map onto explicit confidence reports in humans and monkeys (Stolyarova et al., 2019). However, the true test for LLM metacognitive confidence is through explicit methods that involve prompting the model to verbalize its own level of confidence—either through qualitative statements (e.g., “I’m not sure”) or quantitative confidence judgments expressed as percentages or probabilities (e.g., “I’m 70% sure”; Cash et al., 2025; Griot et al., 2025; Steyvers, Belem, & Smyth, 2025)—rather than an external observer inferring the uncertainty present in the model. These outputs are generated via text, relying on the model’s ability to represent and articulate its own uncertainty in language.

Both implicit and explicit methods have been used by various groups to assess LLMs’ metacognitive performance, that is, the degree to which LLMs’ confidence (or uncertainty) reflects their task accuracy. These studies have found that differences in model architecture and scale can influence how well LLMs express confidence in ways that reflect their underlying accuracy. For instance, some models appear better able to express high confidence for correct answers and lower confidence for incorrect ones (Kadavath et al., 2022; Xiong et al., 2023) or to express confidence levels that more closely match their actual probability of being correct. Yet direct comparisons between LLMs’ metacognitive capacities often involve mixed assessments, with some groups relying on explicit and others on implicit measures, and studies have consistently found that implicit confidence measures derived from token likelihoods tend to exhibit greater trial-by-trial correspondence between confidence and task accuracy than does verbalized confidence elicited through explicit prompting (Xiong et al., 2023). This discrepancy highlights an important distinction between what models internally “know” (or represent)—which can be accessed by an external observer—and what they can explicitly express. This underscores the need for consistent and precise evaluation methods to meaningfully assess metacognitive capabilities across LLMs.

Metrics for Assessing the Confidence-Accuracy Relationship

Several metrics have been used to assess the relationship between confidence and accuracy across both humans and AI systems. Although these metrics differ across disciplines, with some metrics originating in computer science and others in cognitive science, the metrics reveal two key facets of metacognitive ability: metacognitive sensitivity and metacognitive calibration (Fleming, 2023; Lee et al., 2025; Li & Steyvers, 2025). Figure 1 illustrates both concepts and compares them to empirical results for GPT-3.5 on a multiple-choice task and GPT-4.1 on a short-answer trivia task.

Metacognitive sensitivity (also called “metacognitive discrimination accuracy,” “relative accuracy,” or “monitoring resolution”) quantifies how “diagnostic” confidence judgments are of decisional accuracy (i.e., whether they reliably discriminate between correct or incorrect answers; Fig. 1, top row). Metacognitive sensitivity metrics in the literature on humans include phi (φ) correlation (i.e., the correlation between accuracy and confidence across trials), the area under the Type 2 receiver operating characteristic curve (AUROC2)—corresponding to the probability that a randomly sampled correctly answered question receives a higher confidence score than a randomly sampled incorrectly answered question—and a signal detection theoretic metric known as “meta-d′” (analogous to d′ from signal detection theory), among others (Fleming & Lau, 2014). Worth noting here is that most measures of metacognitive sensitivity (with the exception of meta-d′ of the measures discussed here) are “contaminated” by Type 1 accuracy, or the observer’s capacity to complete the target task. This means that an apparent increase in metacognitive sensitivity may trivially be explained by an increase in task performance if one of these uncorrected measures is used.

In contrast, metacognitive calibration refers to whether an observer reports a generally appropriate level of confidence given their probability of being correct. For example, if an individual—or an LLM—reports 75% confidence across multiple trials, calibration can be considered optimal when the actual percentage of correct answers in those trials is also 75% (Maniscalco et al., 2025). The expected calibration error (ECE) is often used in computer-science research to summarize the overall discrepancy between confidence and accuracy. The ECE is typically computed by binning predictions according to confidence levels and comparing average confidence within each bin to the empirical accuracy. Calibration curves—graphs plotting model confidence against observed accuracy—are also commonly used to visualize calibration performance (Fig. 1, bottom row). A perfectly calibrated system would exhibit a calibration line that falls on the diagonal (i.e., predicted confidence equals actual accuracy at all levels). Deviations from this line reflect systematic biases such as overconfidence (when predicted confidence exceeds accuracy) or underconfidence (when accuracy exceeds confidence). However, note that in the literature on human metacognition, apparent over- or underconfidence may in fact be mathematically optimal when considering reward functions or the observer’s global strategy or goals, such as whether it is more desirable to maximally avoid high-confidence errors given the consequences of such errors in the environment (Maniscalco et al., 2025).

Comparing Human and LLM Metacognitive Architecture and Behavior

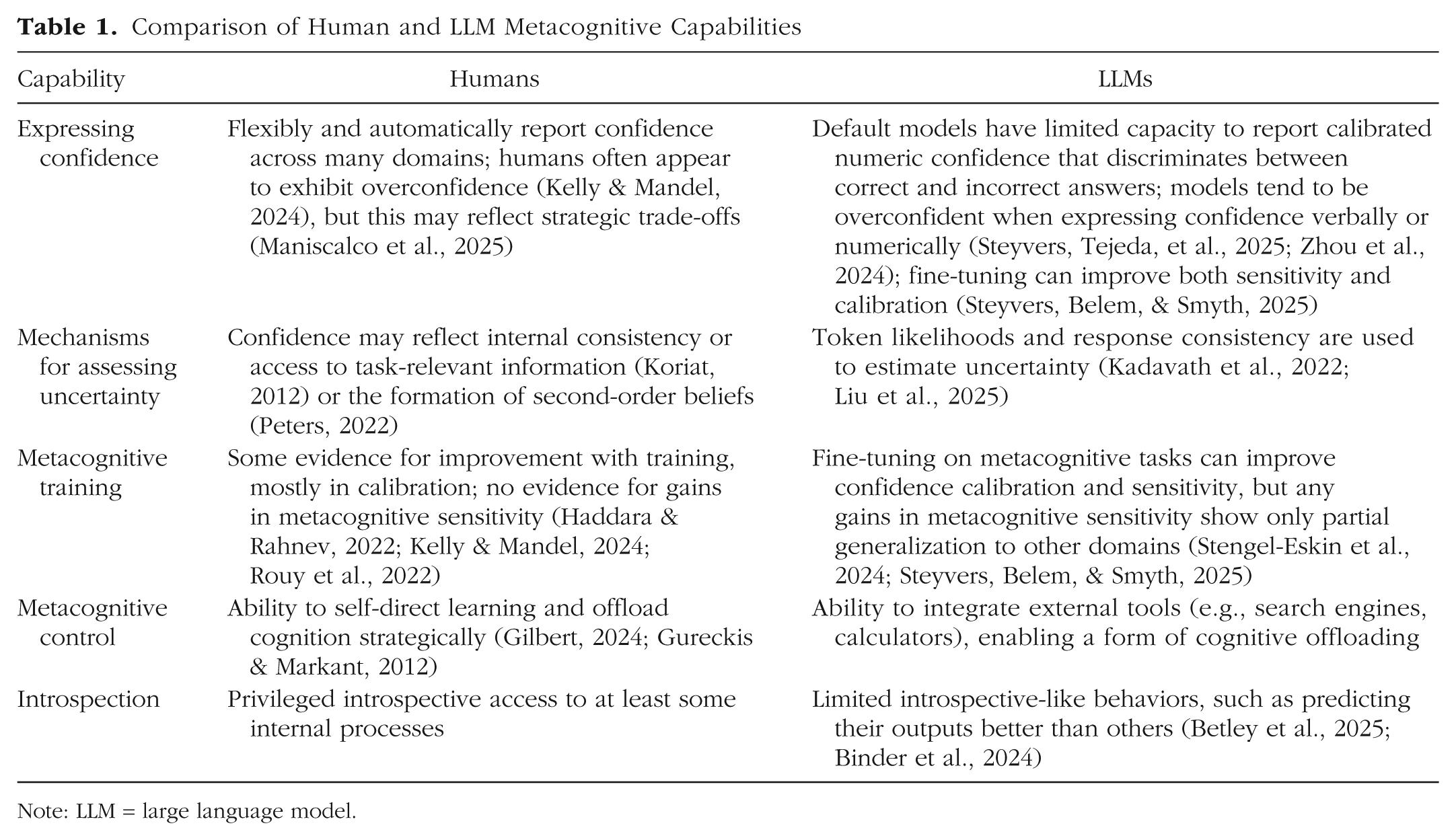

There are several notable parallels between how humans and LLMs not only generate and calibrate confidence but also express it (for an overview, see Table 1). These similarities may seem surprising given the fundamental architectural and cognitive differences between humans and LLMs, yet important differences also remain; exploring these differences and their consequences on collaborative behavior may be key to effective human-LLM collaboration.

Comparison of Human and LLM Metacognitive Capabilities

Note: LLM = large language model.

Similarities between humans and LLMs

One point of convergence may be with some mechanisms thought to generate confidence. In LLMs, one approach to estimating confidence leverages their probabilistic nature: the model can be prompted multiple times with the same question, and confidence can be inferred from the consistency of responses (Liu et al., 2025; Xiong et al., 2023)—similar to the other implicit measures of metacognition discussed above. Interestingly, this approach is similar to a proposed theoretical framework for human confidence in which subjective certainty arises from the self-consistency of internally generated candidate answers (Koriat, 2012). Although developed independently in AI and cognitive psychology, both approaches suggest that consistency across internally simulated alternatives may serve as a basis for confidence.

Another similarity concerns the outwardly visible behavioral patterns of calibration and sensitivity. Recent work has shown that LLMs and humans both tend to exhibit overconfidence when given the same task, and both can achieve a similar degree of metacognitive sensitivity—that is, their confidence ratings are similarly diagnostic of accuracy (Cash et al., 2025). Note, however, that this study used AUROC2—which is confounded with accuracy (Fleming & Lau, 2014)—to quantify metacognitive sensitivity but did not control for accuracy across the LLMs and humans. Nevertheless, the tendency toward overconfidence has long been observed in human cognition (Kelly & Mandel, 2024) and appears to extend to LLMs as well, possibly because of inductive biases or training data characteristics (Zhou et al., 2024).

Further parallels are found in the expression and perception of linguistic uncertainty. Humans often use terms such as “likely,” “probably,” or “almost certainly” to convey probabilistic beliefs, and so do LLMs when prompted for confidence statements. Research comparing the two has found that modern LLMs match population-level human perceptions of linguistic uncertainty reasonably well when asked to translate between verbal and numeric probabilities (Belém et al., 2024).

Last, metacognition in humans is thought to rely on introspective-like processes, defined specifically by the privileged access we have to our own thoughts over those of others (i.e., the difference between metacognition and theory of mind). Similarly, it has been suggested that LLMs can better predict their own behavior than the behavior of another LLM, which some researchers interpret to imply the presence of such privileged access in the LLMs tested (Binder et al., 2024). Evidence of introspective-like capacities may also come from LLMs’ demonstrated ability to describe their own behaviors after training even when those behaviors are not explicitly described in their training data (such as preferring risky choices), including behaviors displayed via “backdoors” in which models show unexpected or undesirable behaviors under certain trigger conditions (such as holding a goal to elicit certain behaviors from a human user). For example, asked models to describe their “tendencies” or “goals” in general, separate from a specifically prompted behavior, and found that they could describe these predilections or goals accurately—suggesting some degree of introspective access that they can explicitly report.

Differences between human and LLM metacognition

Despite a number of parallels, there remain important differences between human and LLM metacognition. In humans, many researchers suppose that the ability to form confidence judgments rests on the formation of a second-order representation: a separate evaluation or reassessment of the internal representations prompted by input information and that gave rise to a behavioral output (Peters, 2022; for a differing perspective, however, see, e.g., Zheng et al., 2025). Unless explicitly present in their architecture, LLMs may not form such second-order self-evaluative representations unless explicitly prompted to do so. Relatedly, LLMs may be less able to correctly evaluate the source of uncertainty in their internal representations, suggesting they lag humans in distinguishing between metacognition and theory of mind. LLMs are prone to conflate their own beliefs with those attributed to others; that is, they are less able to separate the speaker’s belief from their own compared with humans when interpreting uncertain statements (Belém et al., 2024).

Another difference is the extent to which metacognitive abilities can be improved through training. In the case of LLMs, research has shown that confidence verbalization can be improved by fine-tuning approaches that reward the LLM for accurately conveying uncertainty to a listener (Stengel-Eskin et al., 2024) or aligning overt confidence scores with implicit measures of uncertainty such as consistency scores (Steyvers, Belem, & Smyth, 2025). Both metacognitive calibration and sensitivity can be improved through training. However, although trained models show some generalizability to other knowledge domains and other types of questions (e.g., switching from multiple choice to short answers), there is no generalization between different types of metacognitive tasks (e.g., single-question confidence estimation, in which the model assigns a numeric certainty to its answer, and pairwise confidence comparison, in which the model selects which of two answers it is more likely to answer correctly; Steyvers, Belem, & Smyth, 2025). For humans, providing feedback, encouraging reflective reasoning, and explicitly targeting cognitive biases can reduce human miscalibration of confidence (Kelly & Mandel, 2024; Rouy et al., 2022). However, there is no evidence that human metacognitive sensitivity improves in the presence of feedback (Haddara & Rahnev, 2022), likely reflecting underlying architectural differences: Whereas LLMs’ metacognitive judgments can be fine-tuned through explicit training objectives, human metacognitive sensitivity appears to be constrained by more stable, possibly hardwired cognitive mechanisms that are less responsive to feedback.

Another difference may stem from the domain generality or specificity of metacognition in humans. It is thought that some shared processes that underlie metacognition about perception, memory, and cognition may exist and rely on common neural structures, whereas others may be domain-specific (i.e., separable computational or neural modules for perceptual vs. cognitive or memory metacognition; Morales et al., 2018). A comprehensive assessment of the domain generality of LLMs’ metacognitive capacity has not yet been undertaken; however, preliminary evidence suggests that fine-tuning a model on a particular task (including training specific metacognitive capacities in that task) may not automatically generalize to other tasks (Stengel-Eskin et al., 2024; Steyvers, Belem, & Smyth, 2025). As LLMs are increasingly integrated into many highly different tasks and reasoning domains, attending to their domain-specific versus domain-general metacognitive capacities will become increasingly urgent (for LLMs’ metacognitive failures in medical reasoning, see, e.g., Griot et al., 2025).

Communication of Uncertainty in Human-AI Interaction

To facilitate ideal collaboration between humans and LLMs, we must attend to the sources of metacognitive sensitivity and metacognitive bias in both populations—including cases in which LLMs seem to engage in metacognition similarly to how humans do but may not actually. Importantly, these behaviors and distinctions can have critical consequences for how levels of confidence can be effectively communicated between LLMs and humans.

As discussed above, metacognitive sensitivity is the degree to which confidence judgments can discriminate between right and wrong answers, which is critical to effective decision-making in humans (Fleming, 2024). For optimal interaction and humans’ trust of AI systems, LLMs thus must be able to convey to human deciders whether their decisions are likely to be correct (Kadavath et al., 2022; Lee et al., 2025; Li & Steyvers, 2025; Steyvers, Tejeda, et al., 2025). Problematically, LLMs appear reluctant to express uncertainty (Zhou et al., 2024). Because humans rely heavily on linguistic uncertainty expressions (Steyvers, Tejeda, et al., 2025; Zhou et al., 2024), the absence of expressions of uncertainty may raise humans’ reliance on model outputs even beyond the already overconfident judgments the models express. A potential reason for LLMs’ reluctance to express uncertainty may lie in the use of reinforcement learning from human feedback, in which models are fine-tuned to produce outputs that align with human preferences. These preferences often favor responses that sound confident—even when that confidence may not reflect higher accuracy—leading LLMs to avoid verbal expressions of uncertainty during generation (Steyvers, Tejeda, et al., 2025; Zhou et al., 2024). Unfortunately, this problem may be further exacerbated as LLMs are used for increasingly challenging applications, potentially by increasingly nonexpert users. Because individuals who do not possess topical expertise are less able to correctly assess the expertise of others (Bower et al., 2024), nonexpert users may be especially influenced by superficial aspects of LLM responses—such as the absence of uncertainty expressions or the length of the answer. Recent findings show that users tend to interpret longer LLM responses as more confident, even when the model’s internal confidence remains unchanged (Steyvers, Tejeda, et al., 2025). This suggests that response length and style can mislead users into overestimating the certainty or reliability of the model’s output, potentially leading to overreliance on answers that do not warrant such confidence. Humans and LLMs may also rely on different sets of cues when assessing their confidence in other humans, such as humans’ reliance on the time it takes to render a response (Tullis, 2018); these cues likely will not be used in the same way by LLMs. Together, these differences in the assumed computations and inputs to metacognition may strongly impact how humans integrate LLMs’ expressed confidence into their own beliefs and decisions.

Overall, it is clear that improving AI metacognition is a key priority: LLMs must be able to differentiate correct responses from incorrect ones. Yet our research trajectory must exceed simply improving LLMs’ self-evaluation capacities if they are to effectively collaborate with humans. Imbuing LLMs with appropriate metacognitive capacities must also include directed research into their communication of uncertainty to human users and explicit comparisons between how humans and LLMs evaluate their own uncertainty. New tasks and evaluation strategies may be beneficial in driving such development, such as building LLM capacities to recognize and name skills required to solve the task at hand (e.g., mathematical problems; Didolkar et al., 2024). Training regimes that drive alignment between LLMs’ verbalized confidence and the perceived confidence by humans (Stengel-Eskin et al., 2024), or that emphasize LLMs’ capacities to detect questions that are beyond the scope of their knowledge base or are unanswerable, may also be powerful paths forward.

Future Benefits of Improved AI Metacognition

Beyond the importance of improving LLMs’ metacognitive capacities to facilitate their effective integration into human-AI joint decision-making, imbuing LLMs—or any AI system—with improved metacognition may also play a role in progress toward more general forms of machine intelligence. In humans, metacognitive capacities—including metacognitive control, such as deciding what to learn and when—facilitate goal-directed behaviors, including learning, information-seeking, and more. For example, cognitive science has long recognized the role of metacognition in driving self-directed learning, which allows us to focus effort on acquiring information that we do not yet possess (Gureckis & Markant, 2012). These curiosity-driven behaviors may reflect a motivation to minimize uncertainty in our internal representations of the world (Schulz et al., 2023), with strong parallels to active-learning AI algorithms that can optimally select their own training data to maximize efficient acquisition of coherent skills or beliefs (Gureckis & Markant, 2012). Confidence signals can also help agents learn in reinforcement-learning contexts through explicit calculations of confidence-based prediction errors (Ptasczynski et al., 2022). Last, metaevaluations of one’s own metacognitive abilities can also drive humans’ learning (Recht et al., 2025), and the same could be true for AI systems. It is clear that promoting LLMs’ metacognitive capacities may significantly advance the design of AI systems with broader adaptive capacities.

Recommended Reading

Betley, J., Bao, X., Soto, M., Sztyber-Betley, A., Chua, J., & Evans, O. (2025). (See References). Demonstrates that LLMs can exhibit introspective-like awareness of their learned behaviors, highlighting emerging parallels with human metacognition.

Fleming, S. M. (2024). (See References). Reviews theories and evidence on confidence and metacognition.

Peters, M. A. K. (2022). (See References). Proposes a framework for identifying the canonical computations underlying subjective experience, linking metacognition to theories of phenomenal awareness.

Steyvers, M., Belem, C., & Smyth, P. (2025). (See References). Demonstrates that LLMs can be fine-tuned on metacognitive tasks to improve confidence calibration and sensitivity.

Steyvers, M., Tejeda, H., Kumar, A., Belem, C., Karny, S., Hu, X., Mayer, L. W., & Smyth, P. (2025). (See References). Examines the disconnect between what LLMs “know” versus what humans believe they know, illustrating challenges in aligning verbal confidence expression with true knowledge.