Abstract

Metacognition involves second-order judgments about first-order judgments. It remains unclear whether an individual’s confidence in being correct is generated by the same system across tasks (domain generality) or whether it is computed independently in the context of each task (domain specificity). Previous studies have focused on correlations across several tasks, yet the evidence is mixed, and more complex models of domain generality were not taken into account. Analyzing data from 10 tasks collected across three studies in Denmark and Poland (N = 253–547 adult participants), we found a fixed pattern of cross-task correlations for both metacognitive bias and metacognitive efficiency. In accordance with previous studies, we found that hierarchical estimation of metacognitive efficiency led to higher correlations. We used confirmatory factor analyses to investigate the existence of general processes. We found evidence for a weak domain generality with a metacognitive module for perceptual tasks and another for cognitive tasks.

In daily life, we often evaluate noisy information from the environment. One major type of such evaluation is confidence: the degree of certainty that information is correct (i.e., corresponds to the true state of the environment; Meyniel et al., 2015; Peters, 2022). Being able to have an accurate sense of confidence in a cognitive process is referred to as metacognition, an ability that enables us to monitor and regulate our cognitive processes. There is a growing interest in determining the computational architecture of confidence. Recently, Mazancieux et al. (2023) defined architectures ranging from a strong domain generality (one metacognitive module that monitors several independent domains) to domain specificity (one metacognitive module per domain). They showed that current evidence supports the idea of a weak domain generality in which domain-specific metacognitive modules share processes in specific contexts or common sources of metacognitive inefficiency (Shekhar & Rahnev, 2021a).

The definition of a cognitive domain is not straightforward. Here, we define it in two ways: seeing each task as a potential domain and gathering tasks in a perception cluster (i.e., sensing and interpreting sensory inputs from different modalities) or doing so in a cognition cluster (i.e., higher-level processing). This distinction is close to, but different from, internally generated signals and externally generated signals, because motor abilities are internally generated signals involving both perception and cognition. This distinction has been previously studied in the context of cross-task correlations for metacognition (Arbuzova et al., 2023) and has shown a positive correlation between motor and perception tasks but a negative correlation between motor and memory.

The evidence in favor of domain generality is mainly based on within-subject correlations, implying that individuals with high levels of metacognition in one domain also have a high level of metacognition in another domain. Although metacognitive bias—the tendency to report high or low confidence, irrespective of task performance—consistently correlates across domains (Ais et al., 2016; Lee et al., 2018; Mazancieux, Dinze, et al., 2020; Mazancieux, Fleming, et al., 2020; McWilliams et al., 2022), evidence is weaker for metacognitive sensitivity (i.e., the ability to discriminate between correct and incorrect responses). Using large sample sizes, positive cross-task correlations for metacognitive efficiency—that is, metacognitive sensitivity controlled for first-order performance—were found (Benwell et al., 2022; Lee et al., 2018; Lund et al., 2023; Mazancieux, Dinze, et al., 2020; Mazancieux, Fleming, et al., 2020; McWilliams et al., 2022). However, the estimation of shared variance fluctuates greatly across tasks and studies. For instance, Mazancieux, Fleming, et al. (2020) found 38% of shared variance between a visual-perception domain and semantic-memory domain, whereas Benwell et al. (2022) found 0.8% of shared variance in the same two domains.

Such inconsistencies can be explained by different mechanisms. First, larger correlations can occur when tasks share common characteristics. For instance, it has been shown that stimulus variability is related to metacognitive efficiency (Rahnev & Fleming, 2019). Increased stimulus variability, either objective variability or experienced variability due to learning or attention (Maniscalco et al., 2017), results in metacognitive efficiency inflation. Thus, one can suppose that two tasks that have the same amount of stimulus variability may have a higher cross-task correlation. This is notably the case during staircase procedures, where variability is controlled (the performance for each participant is fixed). Second, two main metacognitive sensitivity estimates have been previously used to explore the domain generality of metacognition: single-subject estimates (Maniscalco & Lau, 2012) and hierarchical Bayesian estimations (Fleming, 2017). However, comparisons between cross-task correlations for metacognitive efficiency using hierarchical and nonhierarchical estimates have led to diverging results, with hierarchical correlations being systematically larger (Lund et al., 2023; Mazancieux, Fleming, et al., 2020).

Because the correlational approach is limited, Lehmann et al. (2022) have recently used a latent-variable approach, which provides a framework for testing hypotheses about the underlying structure of the data. Using six different tasks gathered in three domains, they found evidence for both domain-general and domain-specific processes. Metacognitive efficiency for visual perception was mostly domain-specific, whereas metacognition for memory and attention subsystems was more related.

Here, we present data from three different studies all run as separate subprojects of the EU European Cooperation in Science and Technology (COST) Action CA18106 (The Neural Architecture of Consciousness). These studies provide confidence data across 10 tasks for a large number of participants (253–547 per study). We investigated the variability in the magnitude of correlations for metacognitive efficiency across modalities and domains, and we compared hierarchical and nonhierarchical Bayesian estimations of metacognitive efficiency. Our multiple data sets also allowed comparison of three types of first-order task performance control: no control (Study 1), partial control using two types of trial difficulty (Study 2), and continuous control in a staircase design (Study 3).

Notably, we tested the underlying structure of metacognitive efficiency using a latent-variable approach similar to Lehmann et al.’s (2022). Apart from the increased number of tasks and participants in the current study, some important differences between studies are present. Whereas Lehmann et al. (2022) limited each latent variable to only two tasks, ours included up to six. Moreover, they included visual perception only in their perception latent construct. Here, we extend this to auditory and somatosensory perception.

Research Transparency Statement

General disclosures

Study 1 disclosures

Study 2 disclosures

Study 3 disclosures

Method

Participants and data collection

The data were collected in the context of EU COST Action CA18106 and are part of a larger project that includes MRI and behavioral data collected from healthy, neurotypical participants. The current article encompasses three studies and includes data collected at the Center of Functionally Integrative Neuroscience (Aarhus University, Denmark) for Study 1, data from the Consciousness Lab (Jagiellonian University, Kraków, Poland) for Study 2, and data from both sites as a multisite study for Study 3. For all studies, participants were recruited through a local participant database and local advertisements.

The following inclusion criteria were used: absence of psychiatric disorder, brain damage, or brain surgery; age between 18 and 50 years (40 for Study 2); normal or corrected-to-normal vision; and normal hearing. Exclusion criteria were standard MRI contraindications; the use of neuropharmacological or other medicines that could affect neural states; pregnancy; and skin diseases. Additional exclusion criteria at the Jagiellonian University were cardiovascular conditions or chronic pain.

Study 1 included 253 participants (139 females) aged between 18 and 49 years (M = 25.08 years) who performed all tasks. Study 2 included 298 participants (187 females) aged between 18 and 40 years (M = 23.88 years). Not all participants performed all modalities (see the Results section). Study 3 included 547 participants (327 females) aged between 18 and 49 years (M = 24.42). Of these participants, 246 also performed the tasks of Study 1, 290 also performed the tasks of Study 2, and 15 performed only Study 3. In Study 3, only 4 participants did not perform both modalities. All participants gave written informed consent. This work was reviewed and approved by an institutional review board. More details on sample-size considerations can be found in Section 1.3.2 of the Technical Annex of the Action: https://e-services.cost.eu/files/domain_files/CA/Action_CA18106/mou/CA18106-e.pdf.

For each task, we excluded participants with above 95% or below 55% accuracy, as such levels can lead to biased metacognitive-efficiency estimates, as well as participants who used only one single confidence rating throughout the entire task. For Study 1, we excluded 82 participants on the episodic-memory task, 32 on the executive-function task, 1 on the semantic-memory task, and none on the visual-perception task. For Study 2, we excluded 34 participants on the auditory modality (8 others did not perform it), 9 on the visual modality (9 others did not perform it), 14 on the tactile modality (84 others did not perform it, and 2 others were excluded because they did not perform all trials), and 21 on the pain modality (39 others did not perform it). For Study 3, we excluded 17 participants on the auditory modality (3 others did not perform it) and 7 on the visual modality (1 other did not perform it). For hierarchical analyses, because one model was fitted for all tasks, we had to exclude participants if performance in one of them met the exclusion criteria (see Supplementary Results: Hierarchical M-Ratio in the Supplemental Material available online). For nonhierarchical analyses in Study 1, we excluded 1 participant who had an aberrant M-ratio on the episodic-memory task (M-ratio above 3), with aberrant defined as more than 3 standard deviations from the rest of the group. For Study 2, we excluded participants who had a negative M-ratio (5 on the auditory task, 7 on the visual task, 3 on the tactile task, and 1 on the pain task) as well as participants who had an aberrant M-ratio by the same 3-standard-deviation criterion: 3 on the auditory task, 2 on the visual task, and 1 on the tactile task (M-ratio above 2 in each case). For Study 3, we excluded participants with a negative M-ratio (14 on the auditory task and 6 on the visual task).
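The per-task exclusion rule described above can be sketched in a few lines of Python. This is an illustrative sketch only: the function name and the input format (one list of 0/1 accuracy values and one list of confidence ratings per participant and task) are assumptions and are not taken from the published analysis code.

```python
def meets_exclusion_criteria(correct, confidence):
    """Return True if a participant's data for one task should be excluded.

    correct    -- list of 0/1 values, one per trial (hypothetical format)
    confidence -- list of confidence ratings, one per trial
    """
    accuracy = sum(correct) / len(correct)
    # Accuracy above 95% or below 55% can bias metacognitive-efficiency estimates.
    accuracy_out_of_range = accuracy > 0.95 or accuracy < 0.55
    # A participant who used a single rating throughout provides no usable
    # variation in confidence.
    single_rating = len(set(confidence)) == 1
    return accuracy_out_of_range or single_rating
```

The negative and aberrant M-ratio exclusions for the nonhierarchical analyses are applied after model fitting and are not covered by this sketch.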

Materials and procedure

The three studies were planned by different investigators, but the designs and data collection were discussed and coordinated jointly in order to harmonize the data sets as much as possible. For all three studies, tasks were classic decision-making-with-confidence tasks. For each trial of each task, participants had to assess their level of confidence in their decision on a 6-point scale ranging from 50% confidence (guessing) to 100% (maximum confidence), in steps of 10%. Confidence was reported on a scale ranging from 1 (50%) to 6 (100%), using the keypad number keys. Following a joint discussion of whether and how to control first-order task performance, investigators of Study 1 decided that because it would not be possible for some tasks, it would not be applied to the four tasks of that study. The investigators of Study 3 opted for full, continuous performance control using a staircase procedure, whereas the investigators of Study 2 opted for a partial-control procedure. The differences are described further below and addressed at the analysis stage. First-order tasks differed across all studies and are described below (see also Fig. 1 for a summary of all tasks across the three studies).

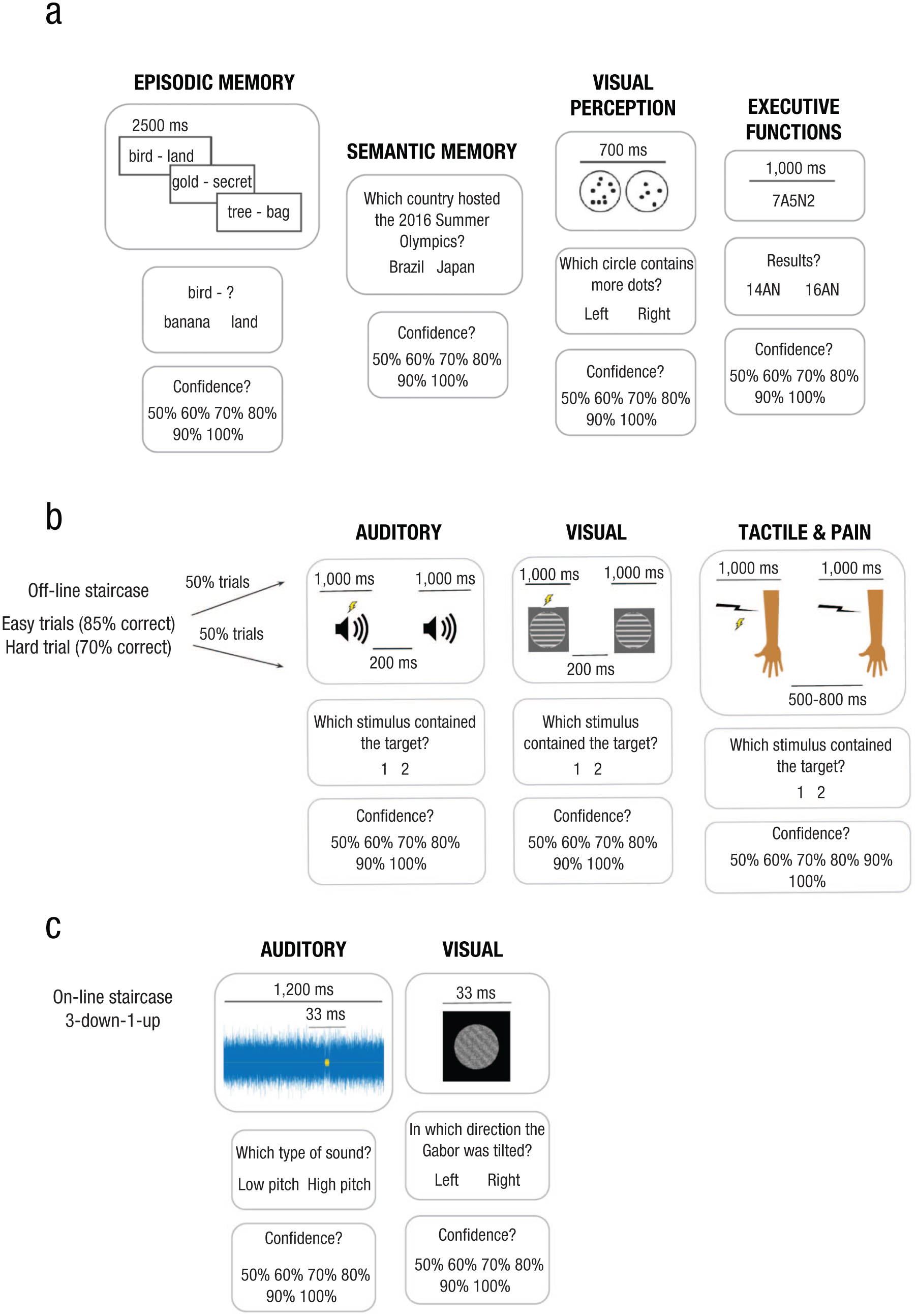

Fig. 1. Summary of the tasks for the three studies. Study 1 (a) had four tasks with 120 trials each. Study 2 (b) had four tasks with 192 trials, except for the pain task, which had 144 trials. Study 3 (c) had two tasks with 152 trials each.

Study 1

Study 1 used a slightly modified version of the procedure of Mazancieux, Fleming, et al. (2020). This procedure included four separate two-alternative forced-choice (2AFC) tasks: an episodic-memory task, a semantic-memory task, an executive-function task, and a visual-perception task. The task order was randomly assigned to each participant.

The episodic-memory task was a paired associative-learning task. It started with an encoding phase in which participants were presented with unrelated pairs of words for 2,500 ms. During the test phase, participants performed a task in which they had to decide which one of the two target words was associated with the cue in the first phase. In the semantic-memory task, participants made decisions on general-knowledge questions. These questions included topics such as art, cinema, sport, science, history, and geography (e.g., “What does the A in DNA stand for?” “Which country hosted the 2016 Summer Olympics?” “When did the American Civil War end?”). The visual numerosity-perception task consisted of two black circles on a white background, each containing dots presented for 700 ms. Participants responded as to which one of the two circles contained more dots. One of the two circles always contained exactly 50 dots, whereas the other had either fewer or more than 50 dots; the number was randomly defined on each trial (between 25 and 49 for stimuli with fewer dots, and between 51 and 75 for stimuli with more dots). The fourth task was an attention/flexibility/working memory (or, arguably, executive-function) task. Participants were presented with a letter-number sequence of five symbols for 1,000 ms; the symbols then disappeared. Half of these sequences had three letters and two numbers, and the other half had two letters and three numbers (e.g., 7A5N2). Participants had to choose immediately after the presentation which one of the two presented responses corresponded to the sum of all numbers and letters (in the example above, the correct answer would be 14AN).
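To make the scoring of the letter-number task concrete, the following minimal Python sketch derives the correct response for a given sequence. The function name is hypothetical, and the assumption that letters are reported in their order of presentation is inferred from the single example given in the text (7A5N2 yields 14AN).

```python
def letter_number_answer(sequence):
    """Return the correct response for a letter-number sequence.

    The digits are summed and the letters are appended in presentation
    order (an assumption based on the example 7A5N2 -> 14AN).
    """
    digit_sum = sum(int(ch) for ch in sequence if ch.isdigit())
    letters = "".join(ch for ch in sequence if ch.isalpha())
    return f"{digit_sum}{letters}"


print(letter_number_answer("7A5N2"))  # prints 14AN
```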

Participants had no time limit for providing either their first-order decision or their confidence judgment. For the first-order decision, participants had to press the “S” key on a keyboard to select the left answer and the “L” key to select the right answer. Each trial began by pressing the space bar. In contrast to Mazancieux, Fleming, et al. (2020), each task had 120 trials instead of 40. Verbal stimuli in the episodic and semantic tasks were translated from French into Danish and adapted. Pretests were conducted on a Danish sample for these two tasks, and the task difficulty was adjusted. Because of low task performance during a pretest (likely caused by the increased trial number), the episodic-memory task was separated into two encoding-retrieval sessions (60 trials per session). The entire protocol lasted around 1 hour.

Study 2

Study 2 included two-interval forced-choice (2IFC) tasks for four different sensory modalities: auditory, visual, tactile, and nociceptive. For every trial of every modality, two consecutive stimuli were presented, and participants had to report which one of the two stimuli contained the target (i.e., some frequency modulation in the stimulus property) using arrow keys: left for the target in the first stimulus, right for the target in the second stimulus.

All modalities started with a staircase block to determine individual performance levels. A three-down-one-up staircase was used, starting with a very easy trial and progressively adapting the difficulty on the basis of participants’ performance. The staircase was terminated after eight reversals or after 60 trials had been completed. Individual values were then selected to obtain a difficult and an easy condition, with the desired type-1 performance of 70% and 85%, respectively. Because the staircase was used only for this block and not for experimental blocks, accuracy did not always match exactly 70% and 85%, possibly because of attention fluctuations or learning. Following the staircase, each task continued with individually matched difficult and easy conditions with a total of 192 trials for each of the visual, auditory, and tactile modalities (four blocks of 48 trials for each modality) and 144 experimental trials for pain (three blocks of 48 trials; a smaller number of trials because of ethical considerations). The trial order was fully randomized within each block. Participants were asked to answer quickly but to prioritize accuracy over speed, and they had no time limit to give their answers.
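The staircase block described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the experiment code: the function and parameter names are hypothetical, higher levels are assumed to be easier, and a reversal is counted whenever the direction of a level change differs from the previous one.

```python
def run_staircase(respond, start, step, max_reversals=8, max_trials=60):
    """Three-down-one-up staircase.

    respond -- callable taking the current level and returning True if the
               response was correct (stands in for a participant)
    Returns the list of levels presented and the number of reversals.
    """
    level = start
    history = []
    streak = 0            # consecutive correct responses at the current level
    last_direction = 0    # -1 = made harder, +1 = made easier, 0 = no change yet
    reversals = 0
    while len(history) < max_trials and reversals < max_reversals:
        history.append(level)
        if respond(level):
            streak += 1
            if streak < 3:
                continue              # need three correct in a row to go down
            streak, direction = 0, -1  # three correct: make the task harder
        else:
            streak, direction = 0, +1  # one error: make the task easier
        if last_direction and direction != last_direction:
            reversals += 1
        last_direction = direction
        level += direction * step
    return history, reversals
```

With a deterministic observer such as `lambda level: level >= 5`, the sketch descends from the starting level and then oscillates around the threshold until eight reversals are reached.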

The auditory and visual modalities were performed in a single experimental session, and their order was balanced within the session across participants. The nociceptive and tactile modalities were performed in separate experimental sessions at least 4 days apart (6 days apart on average). The nociceptive task was always performed before the tactile task. Their order was not randomized, because pilot experiments indicated that if participants were not familiar with the target (a transient change in the rhythm of electric pulses; see below) they had difficulties detecting it and learning the task during practice because the overall intensity of the electric stimulation was very low. Once participants experienced the change in rhythm with stronger electric pulses they had no problem subsequently performing the task with weaker pulses as well. Thus, if the order were randomized, it would create a strong bias or disproportion in the overall tactile performance.

Visual task

The visual stimuli consisted of two consecutive drifting gratings presented in a circular display area centrally on a gray background. Each trial started with a red fixation dot in the center of the screen, which stayed there for the entire stimulus presentation. The gratings were white stripes superimposed on the background (four cycles per degree, 30% contrast), drifting downward. The control stimulus drifted at a constant velocity of 0.025 degrees, whereas the target had a brief acceleration in the drift velocity. The task difficulty depended on the amount of acceleration during the target presentation. There were 15 possible acceleration levels ranging from 1.0003 to 2.09 times the regular drift velocity at the maximum acceleration. On the basis of pilot experiments, we set the difficult condition as the mean staircase value starting from Trial 15 until the end of the staircase block. The easy condition was defined by adding either one acceleration level, if the staircase standard deviation was not greater than 2, or two levels otherwise. Each grating was presented for 1 s (60 frames) with an interstimulus interval (ISI) of 200 ms (12 frames).

Auditory task

The auditory stimuli consisted of two consecutive tones. Each trial started with a white fixation cross in the center of the gray screen which remained on the screen for the entire duration of auditory stimulation. The tones were 1-s-long single-frequency sine waves (523 Hz), including 10-ms cosine ramps at each end of the stimulus. The target appeared in one of the two tones, between 375 and 625 ms into its presentation (total duration 250 ms), and consisted of a transient increase in pitch, ramped up and down gradually. During the staircase block, the step size was 1 Hz for the first 15 trials and 0.5 Hz for the remaining trials. On the basis of pilot experiments, we set the difficult level as the mean staircase value starting from Trial 15 until the end of the staircase block. The easy level was calculated by adding either 1 Hz, if the staircase standard deviation was not greater than 2, or 2 Hz if the staircase standard deviation was greater than 2. The ISI between the two tones was 200 ms.
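The derivation of the two auditory difficulty levels from the staircase block can be illustrated with a short Python sketch. Several details are assumptions made for illustration: the function name, the use of the sample standard deviation, and the choice to compute the standard deviation over the same trial window as the mean (the text does not specify either).

```python
from statistics import mean, stdev


def auditory_levels(staircase_hz, first_trial=15):
    """Return (difficult, easy) pitch-change levels in Hz.

    staircase_hz -- staircase values in trial order (Trial 1 at index 0)
    """
    window = staircase_hz[first_trial - 1:]   # Trial 15 to the end of the block
    difficult = mean(window)
    # Add 1 Hz if the staircase was stable (SD not greater than 2), else 2 Hz.
    increment = 1.0 if stdev(window) <= 2 else 2.0
    return difficult, difficult + increment
```

The visual and somatosensory tasks follow the same logic with their own units, increments, and starting trial.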

Tactile and nociceptive tasks

The stimuli were delivered to the volar side of the nondominant forearm (participants self-reported hand dominance) as a sequence of electrical pulses using a Digitimer DS7A stimulator (Digitimer; Welwyn Garden City, England). Each trial started with a white fixation cross in the center of the screen with a gray background that remained there for the entire duration of the stimulus presentation. A single sequence consisted of 11 pulses of 100 µs duration. The first three and last three ISIs were always 100 ms. The four central ISIs between pulses 4 and 8 were also 100 ms for control stimuli, but they were shorter than 100 ms for the target (experienced as a transient acceleration of the speed of the pulse sequence). The exact duration of this shorter central ISI was individually determined during the staircase block. The step size of the staircase (i.e., 100 ms − X ms) was 10 ms for the first 15 trials and 2 ms for the remaining trials. On the basis of pilot experiments, the difficult condition was defined individually per participant as the mean staircase value starting from Trial 20 until the end of the staircase block. The easy condition was calculated by subtracting 10 ms if the staircase standard deviation was not greater than 2, or 15 ms if the staircase standard deviation was greater than 2. The ISI between the first and second sequences varied between 500 and 800 ms (in steps of 10 ms).
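The timing of a single pulse sequence can be written out explicitly. The sketch below is illustrative: the function name is hypothetical, and ISIs are treated as onset-to-onset intervals, ignoring the 100 µs pulse duration (an assumption, since the text does not say how ISIs were measured).

```python
def pulse_onsets_ms(central_isi_ms=100.0):
    """Return the onset times (ms) of the 11 pulses in one sequence.

    central_isi_ms -- duration of the four central ISIs (between pulses 4
                      and 8): 100 ms for a control sequence, shorter for a
                      target sequence.
    """
    isis = [100.0] * 3 + [central_isi_ms] * 4 + [100.0] * 3   # 10 ISIs in total
    onsets = [0.0]
    for isi in isis:
        onsets.append(onsets[-1] + isi)
    return onsets


control = pulse_onsets_ms()        # last pulse at 1000 ms
target = pulse_onsets_ms(80.0)     # last pulse at 920 ms
```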

Each of the somatosensory sessions started with the calibration procedure to adjust the stimulus intensity to the appropriate modality. Participants were presented with the Numerical Rating Scale (NRS; Hartrick et al., 2003) in which they were asked to assess the painfulness of the stimuli on a discrete scale from 0 (no pain) to 10 (the most intense tolerable pain). The stimulus during calibration was the same as a single pulse sequence in the main nociceptive/tactile task. The calibration started with a stimulation value of 0 milliamperes (mA) and increased by 0.5 mA after each trial. Participants did not know when the stimulation was occurring and were asked to start answering on the NRS as soon as they felt the stimulation. The calibration would stop once the participants gave a response of at least 3 on the NRS. Then the calibration process was repeated, and the stimulus intensity was calculated as the average intensity either of the first response (when they started to feel the electrical stimulation) or the last response (when the electrical stimulation would cause a desired level of pain) for touch and pain, respectively. Additionally, after each experimental block, participants were asked to rate their pain perception on the NRS.

Study 3

Study 3 included identification tasks for two modalities: visual and auditory. In each trial, one of two possible target stimuli was presented in noise, and the participants had to report which one of the two alternatives it was. Task difficulty (i.e., the signal-to-noise ratio) was controlled by a continuous three-down-one-up staircase. Participants performed 152 trials per modality in two separate blocks. The block order was randomly assigned to each participant.

Visual task

The stimulus was a circular grating (a Gabor patch) submerged in white noise and oriented 45 degrees clockwise or counterclockwise. Participants were familiarized with both stimuli during practice trials. The stimulus size was 3° of visual angle with two cycles per degree. The signal-to-noise ratio between the Gabor patch and white noise was controlled by a three-down-one-up staircase throughout the task. For example, if the signal-to-noise ratio was 0.05, the full-contrast grating would be multiplied by 0.05, and the full-contrast patch of white noise would be multiplied by 1 − 0.05. These two patches would be added together and scaled to range between −1 and 1. The final gray values for each pixel would be centered around 127 (given the normal RGB range of 0–255) by the following formula: 127 + 127 × 0.9 × (grating + noise), and rounded to the nearest integer. At the beginning of the block, the signal-to-noise ratio was 0.1. It was modified with a step size of 0.005 for the first 20 trials, 0.003 for the next 30 trials, and 0.002 for the remainder of the task. On each trial, a gray fixation cross was first presented in the center of a black screen for a random interval between 600 and 1,000 ms (in steps of 50 ms). Then the target stimulus was presented for one frame (16.67 ms). The target was followed again by the fixation cross, and the participants had to respond with the arrow keys to indicate whether the Gabor patch was tilted to the left (counterclockwise) or to the right (clockwise) and to rate their confidence in their decision.
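The mixing of signal and noise and the staircase step schedule can be sketched in Python as follows. This is a simplified illustration operating on flat lists of pixel values: the function names are hypothetical, and the rescaling to the range −1 to 1 is assumed to be a linear min-max rescaling, which the text does not specify.

```python
def snr_step(trial):
    """Staircase step size for a 1-indexed trial number."""
    if trial <= 20:
        return 0.005
    if trial <= 50:
        return 0.003
    return 0.002


def mix_to_gray(grating, noise, snr):
    """Combine a full-contrast grating and noise patch into gray values (0-255)."""
    # Weight the signal by the SNR and the noise by 1 - SNR, then add them.
    mixed = [snr * g + (1 - snr) * n for g, n in zip(grating, noise)]
    lo, hi = min(mixed), max(mixed)
    if hi == lo:
        raise ValueError("cannot rescale a constant patch")
    # Assumed linear rescaling of the summed patch to the range -1 to 1.
    scaled = [2 * (m - lo) / (hi - lo) - 1 for m in mixed]
    # Center on 127 and use 90% of the available range, as in the formula above.
    return [round(127 + 127 * 0.9 * s) for s in scaled]
```

The same weighting by the signal-to-noise ratio and the same step schedule apply to the auditory task, with sound amplitudes in place of pixel values.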

Auditory task

The stimuli were short high-pitch or low-pitch chords submerged in a longer snippet of white noise. The fundamental frequencies of the stimuli were 698 Hz and 523 Hz, respectively. Participants were familiarized with both sounds during practice trials. The signal-to-noise ratio between the sound amplitude and white-noise amplitude was controlled by a three-down-one-up staircase throughout the task. The target sound was multiplied by the signal-to-noise ratio value, and the background noise was multiplied by 1 minus the signal-to-noise ratio value for the duration of the target; we then added the two components together. Finally, the entire sound trace was scaled to range between −1 and 1. At the beginning of the block, the signal-to-noise ratio was 0.1. It was modified with a step size of 0.005 for the first 20 trials, 0.003 for the next 30 trials, and 0.002 for the remainder of the task. On each trial, a fixation cross was presented in the center of the screen and remained there until the participant responded. Auditory white noise started to play from the beginning of the trial (synchronized to the fixation cross onset), and at a random interval between 600 and 1,000 ms (in steps of 50 ms), the target chord was added to the white noise for 33 ms. The total duration of the noise was always 1,200 ms. Note also that target onset and offset were marked by amplitude-attenuated protective regions (5-ms cosine ramps) to make the temporal detection of the target slightly easier. The participants had to respond with the arrow keys as to whether the target sound’s pitch was high or low; they then rated their confidence in their decision.

Data and statistical analyses

For all studies, we estimated first-order performance using d′ from signal-detection theory. Because first-order performance was not controlled in all studies, metacognitive bias was assessed using average raw confidence (a subjective estimate of the chance of being correct in percentage) minus the (objective) percentage of correct responses, as done in previous work (Mazancieux, Dinze, et al., 2020). The closer to zero the score, the more accurate it was; a positive score indicated overconfidence, and a negative score indicated underconfidence. This measure, which stems from the metamemory literature (Dunlosky & Tauber, 2016), was preferred because Study 1 (and, to a lesser extent, Study 2) had procedures that did not equate first-order performance across tasks and participants. Metacognitive efficiency was estimated using the meta-d′ framework (Maniscalco & Lau, 2012). Meta-d′ quantifies the sensitivity of confidence to first-order performance in units of d′. Because both are in the same units, they can be compared, which allows for control for differences in first-order performance. When M-ratio (meta-d′/d′) equals 1, participants have optimal metacognitive sensitivity under the ideal observer signal-detection-theory model.
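The three summary measures defined above can be illustrated with a minimal Python sketch. The function names are hypothetical, and the d′ formula shown is the standard equal-variance form z(hit rate) − z(false-alarm rate); the published analysis may apply corrections for extreme rates or task-specific adjustments that are not reproduced here. Estimating meta-d′ itself requires model fitting and is outside the scope of this sketch.

```python
from statistics import NormalDist, mean


def d_prime(hit_rate, false_alarm_rate):
    """Equal-variance signal-detection sensitivity: z(H) - z(FA).

    Rates of exactly 0 or 1 are not handled and would raise an error.
    """
    z = NormalDist().inv_cdf
    return z(hit_rate) - z(false_alarm_rate)


def metacognitive_bias(confidence_pct, correct):
    """Mean confidence (in %) minus percentage of correct responses.

    Positive values indicate overconfidence, negative values underconfidence.
    """
    return mean(confidence_pct) - 100 * mean(correct)


def m_ratio(meta_d, d):
    """Metacognitive efficiency: meta-d' expressed relative to d'."""
    return meta_d / d


# A participant who reports 80% confidence on every trial but is 75% correct
# is overconfident by 5 percentage points.
print(metacognitive_bias([80, 80, 80, 80], [1, 1, 1, 0]))
```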

We used Bayesian estimations of meta-d′ (Fleming, 2017) both at the subject level (i.e., nonhierarchical estimates, which are similar to the maximum-likelihood-estimation approach developed by Maniscalco and Lau, 2012) and as hierarchical estimations of M-ratio (HMeta-d). The hierarchical model has the advantage of taking into account both within- and between-subject variability as well as the estimation of covariances between estimates to assess the domain generality of metacognitive efficiency (Mazancieux, Fleming, et al., 2020). The significance of these parameters (group-level M-ratios and rho correlations) was determined by examining whether the 95% highest-density interval (HDI) from the posterior distribution (Kruschke, 2014) overlapped with zero. With nonhierarchical estimates, Pearson correlations across individual fits were used to assess the domain generality of metacognitive efficiency.
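The HDI criterion can be illustrated with a small sample-based Python sketch. This is one common way to approximate a highest-density interval from posterior samples (the narrowest interval containing the requested share of samples); it is an assumption that it matches the procedure used in the analysis, and the function names are hypothetical.

```python
import math


def hdi(samples, mass=0.95):
    """Narrowest interval containing `mass` of the posterior samples."""
    xs = sorted(samples)
    n = len(xs)
    k = math.ceil(mass * n)                      # number of samples inside the interval
    # Slide a window of k samples and keep the narrowest one.
    width, start = min((xs[i + k - 1] - xs[i], i) for i in range(n - k + 1))
    return xs[start], xs[start + k - 1]


def excludes_zero(samples, mass=0.95):
    """True if the HDI does not overlap with zero."""
    lo, hi = hdi(samples, mass)
    return lo > 0 or hi < 0
```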

We also used confirmatory factor analyses (CFA) to investigate the structure of metacognitive efficiency. We tested different models when (a) gathering all studies, (b) gathering only Studies 1 and 3 (including the same participants), and (c) gathering only Studies 2 and 3 (including the same participants). We assessed model fits using four commonly used indices: the chi-square test (χ²), the comparative fit index, or CFI (which compares the fit of a target model to the fit of an independent or null model), the standardized root-mean-square residual, or SRMR (which represents the square root of the difference between the residuals of the sample covariance matrix and the hypothesized model), and the root-mean-square error of approximation (RMSEA). Good-fitting models require a nonsignificant chi-square test (the model covariance matrix and the observed data matrix are not significantly different). The three other indexes used cutoff scores that were higher than 0.9 for the CFI, smaller than 0.08 for the SRMR, and smaller than 0.08 for the RMSEA (Schermelleh-Engel et al., 2003). Each model was fitted with R and the lavaan package (Rosseel, 2012) using a robust maximum likelihood estimator. Missing data were managed using the full-information maximum likelihood, which uses all available information to estimate the covariance matrix. To compare models, we used the Akaike information criterion (AIC), which determines the relative information value of the model using the maximum likelihood estimate and the number of parameters. Model selection using AIC was performed on the basis of AIC weight from the AICcmodavg package in R (Mazerolle & Mazerolle, 2017), which expresses the relative probability of each model being the best one. We also used likelihood ratio tests for nested models testing the null hypothesis that a model with a lower number of factors fits just as well as a model with a higher number of factors. Because we used a robust estimator for model estimations, we computed a robust difference test using the Satorra-Bentler scaled difference.
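For readers unfamiliar with AIC weights, the following Python sketch shows the standard Akaike-weight formula, in which each model's weight is proportional to exp(−Δ/2), with Δ the difference between that model's AIC and the lowest AIC in the set. The function name is hypothetical and the AIC values in the example are invented for illustration; this is not a reimplementation of the AICcmodavg package.

```python
import math


def aic_weights(aic_values):
    """Return Akaike weights for a set of candidate models."""
    best = min(aic_values)
    # Relative likelihood of each model given its AIC difference from the best one.
    rel_likelihood = [math.exp(-0.5 * (aic - best)) for aic in aic_values]
    total = sum(rel_likelihood)
    return [r / total for r in rel_likelihood]


# Two hypothetical models whose AIC values differ by 2:
weights = aic_weights([100.0, 102.0])   # roughly 0.73 and 0.27
```

The weights sum to 1 and can be read as the relative support for each model within the candidate set.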

All analyses were done using R. The code is publicly available and can be found at https://osf.io/54t3h/.

Results

Task performance and metacognitive bias

In Study 1, participants performed an episodic-memory task, a semantic-memory task, a visual-perception task, and an executive-function task. In Study 2, they performed an auditory task, a visual task, a tactile task, and a pain task. Study 3 was completed by participants from both Study 1 and Study 2 and included an auditory task and a visual task (see the Method section for details).

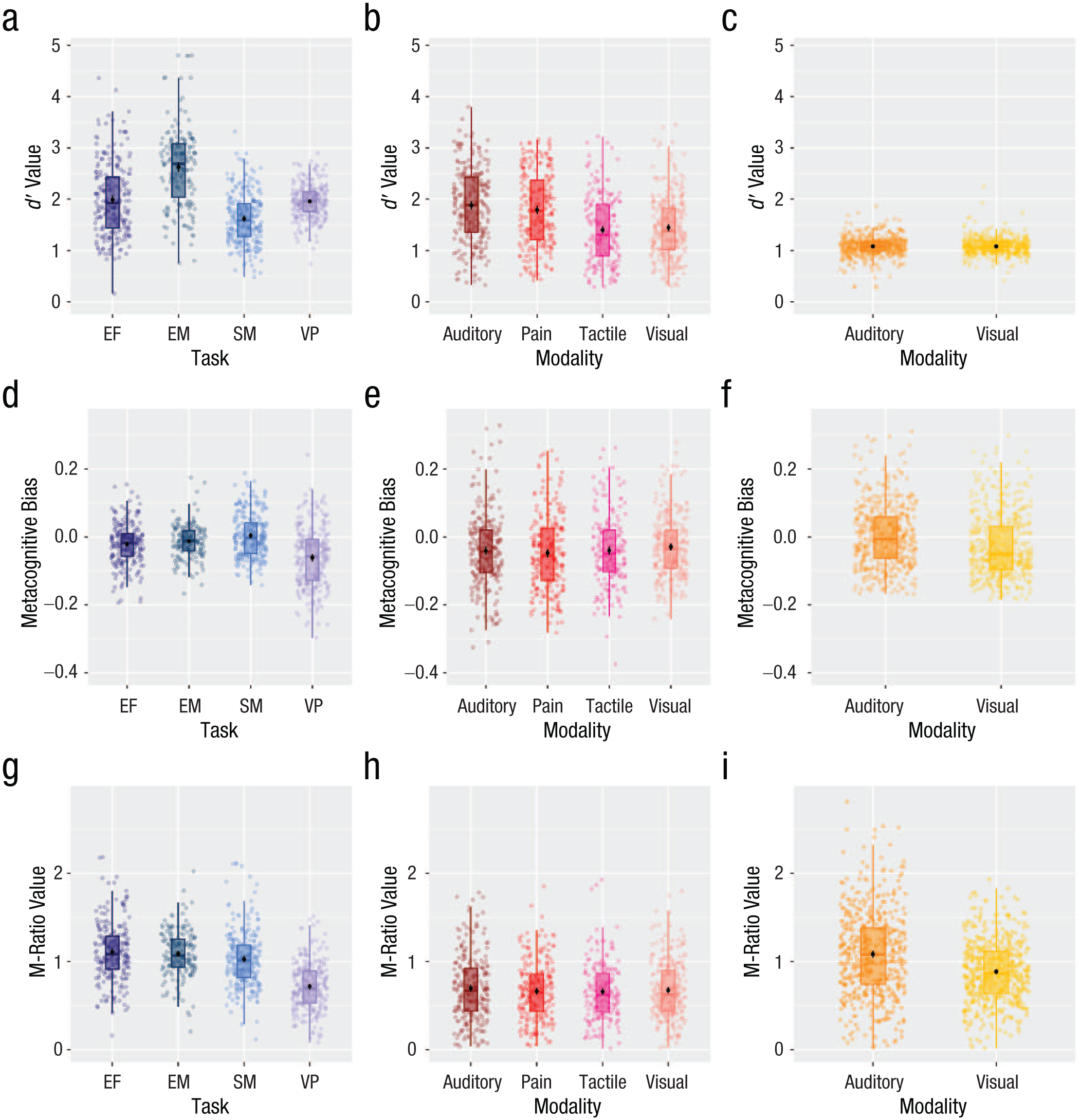

For each study and participant, we calculated first-order performance as d′ (Fig. 2a–c). For Study 1, which did not control first-order performance, we found differences in d′ across tasks. This was also the case for some tasks of Study 2, which used an offline staircase (except for no difference between the tactile and the pain task). No differences in first-order performance were found in Study 3, which used an online staircase. We also found moderate correlations for first-order performance for Study 1 (on 3 out of 6 task pairings) but no correlation for Study 2 (except for a positive correlation between the tactile and the pain task) and Study 3, which involved staircases (see Supplementary Results: First-Order Performance in the Supplemental Material for more details, Table S1).

Distribution of d′ (first-order performance), metacognitive bias, and nonhierarchical M-ratio for Study 1 (a, d, g), Study 2 (b, e, h), and Study 3 (c, f, i). Scales differ across studies. EF = executive function, EM = episodic memory, SM = semantic memory, VP = visual perception.

We calculated metacognitive bias (Figs. 2d, 2e, 2f) as the difference between mean confidence and percentage of correct first-order responses in order to control for task performance (Mazancieux, Dinze, et al., 2020). Cross-task correlations for metacognitive bias were all medium to large, positive, and significant for the three studies (M = 0.50, min = 0.39, max = 0.82; see Supplementary Results: Metacognitive Bias in the Supplemental Material for more details, Table S2).
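The bias measure described above can be sketched as follows. This is a minimal illustration that assumes confidence ratings have already been rescaled to the 0 to 1 range so that they are comparable with proportion correct; the rescaling step, the function name `metacognitive_bias`, and the example data are assumptions, not details from the studies.

```python
def metacognitive_bias(confidence, correct):
    """Mean confidence minus proportion correct, both on a 0-1 scale.

    Positive values indicate overconfidence, negative values underconfidence.
    """
    if len(confidence) != len(correct) or not confidence:
        raise ValueError("confidence and correct must be non-empty and of equal length")
    mean_confidence = sum(confidence) / len(confidence)
    accuracy = sum(correct) / len(correct)
    return mean_confidence - accuracy

# Hypothetical trials: confidence already rescaled to 0-1, accuracy coded 0/1
confidence = [0.9, 0.8, 0.6, 0.7]
correct = [1, 1, 0, 0]
print(round(metacognitive_bias(confidence, correct), 2))
```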

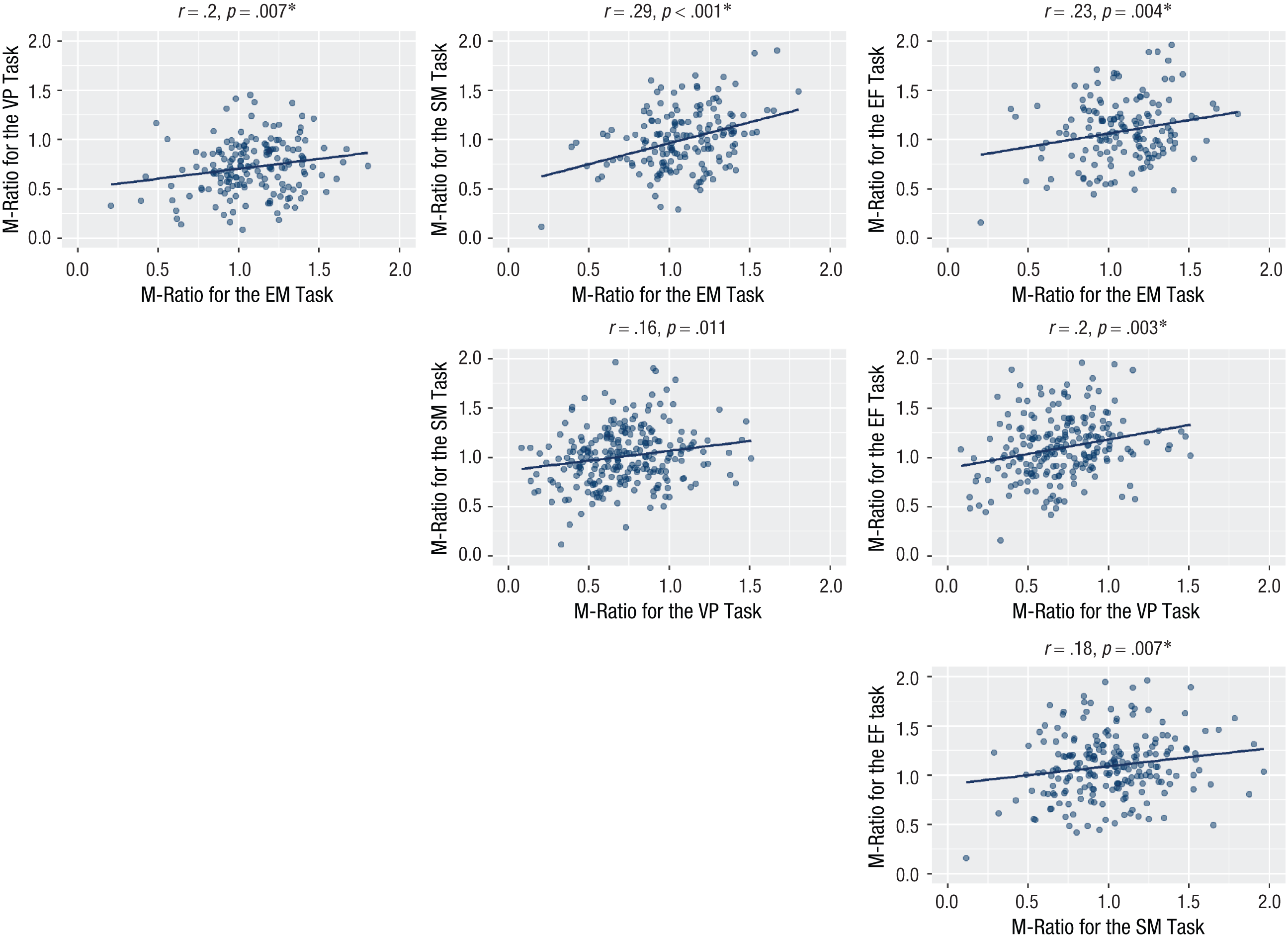

Metacognitive efficiency

We computed M-ratio (meta-d′/d′) using both nonhierarchical and hierarchical Bayesian estimation (Fleming, 2017; see Supplementary Results: Nonhierarchical M-Ratio for task comparison within each study). Individual nonhierarchical fits are presented in Figures 2g, 2h, and 2i. To evaluate domain-general metacognitive efficiency using nonhierarchical fits, we correlated M-ratio across all task pairings. We found significant, positive cross-task correlations for all tasks except between the visual-perception and semantic-memory tasks for Study 1. Here, a numerically similar positive correlation was observed, but it did not reach significance after Bonferroni correction for multiple comparisons (Fig. 3). For Study 2, the visual-pain, visual-tactile, and tactile-pain correlations were statistically significant at an uncorrected p < .05 threshold but not after correction for multiple comparisons (Fig. 4). For Study 3, the cross-task correlation between the visual and auditory tasks was significant, r = .14, p < .001.
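The Bonferroni threshold for a four-task study follows directly from the number of task pairings. The Python sketch below illustrates the arithmetic; the task labels are those of Study 1, whereas the p values are hypothetical and not taken from the results.

```python
from itertools import combinations

tasks = ["EM", "SM", "VP", "EF"]       # the four Study 1 tasks
pairs = list(combinations(tasks, 2))   # every unordered task pairing
alpha = 0.05
threshold = alpha / len(pairs)         # Bonferroni-corrected threshold

print(len(pairs), round(threshold, 4))

# Hypothetical p values for two pairings, for illustration only
p_values = {("EM", "SM"): 0.001, ("SM", "VP"): 0.03}
for pair, p in p_values.items():
    status = "significant" if p < threshold else "not significant after correction"
    print(pair, status)
```

With four tasks there are six pairings, so a correlation with an uncorrected p of .03 would pass the .05 threshold but not the corrected one.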

Cross-task correlations for M-ratio for each task pairing in Study 1. The significance threshold is 0.05/6 = 0.008. The asterisk indicates significant correlations. EM = episodic memory, SM = semantic memory, VP = visual perception, and EF = executive function.

Cross-task correlations for M-ratio for each task pairing in Study 2. Five out of six correlations were significant, but only two of them remained significant after correction for multiple comparisons (Bonferroni correction, significance threshold 0.05/6 = 0.008). The asterisk indicates significant correlations.

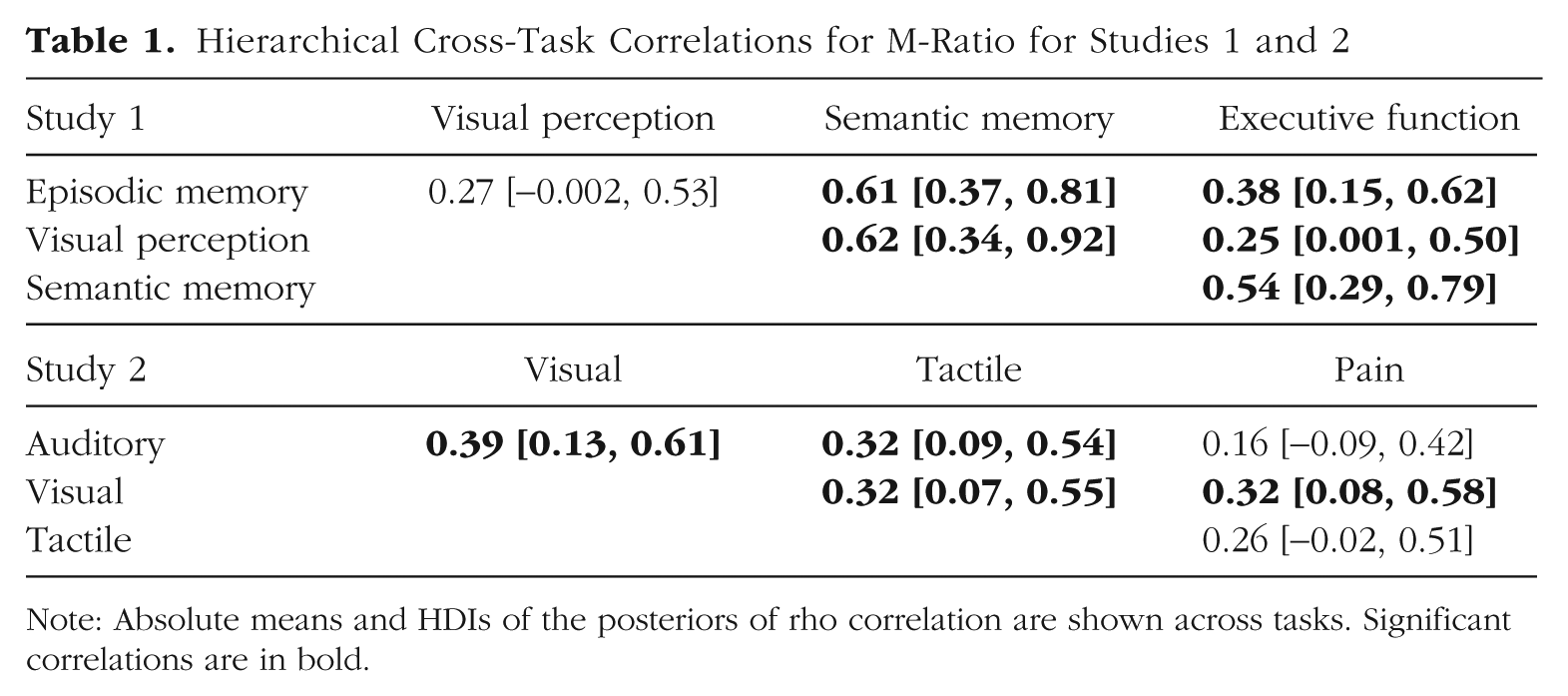

Using hierarchical estimation of cross-task correlations (see Supplementary Results: Hierarchical M-Ratio for task comparison within each study, Table S4), for Study 1, five out of six 95% HDIs on the posterior correlation coefficients did not overlap with zero, suggesting substantial covariance in metacognitive efficiency across domains (see Table 1). Only the HDI for the correlation between the visual-perception task and the episodic-memory task (ρ = .27, HDI = [−.002, .53]) slightly overlapped with zero for Study 1. For Study 2, four out of six 95% HDIs on the posterior correlation coefficients did not overlap with zero, again suggesting substantial covariance in metacognitive efficiency across domains (see Table 1). Only the HDIs for the correlations between the auditory and the pain tasks (ρ = .16, HDI = [−.09, .42]) and between the tactile and the pain tasks (ρ = .26, HDI = [−.02, .51]) overlapped slightly with zero (note that a very large part of the evidence is for a positive correlation). Finally, the cross-task correlation between the visual and auditory tasks was also significant for Study 3 (ρ = .26, HDI = [.10, .41]).

Hierarchical Cross-Task Correlations for M-Ratio for Studies 1 and 2

Note: Absolute means and HDIs of the posteriors of rho correlation are shown across tasks. Significant correlations are in bold.

Because hierarchical and nonhierarchical fits provided different cross-task correlations, and because the number of included participants in hierarchical and nonhierarchical fits differed (see Supplementary Results: Hierarchical M-Ratio), we recalculated the correlations for nonhierarchical fits with the same participants included in hierarchical fits, and a large difference persisted (see Supplementary Results: Nonhierarchical M-Ratio, Table S3).

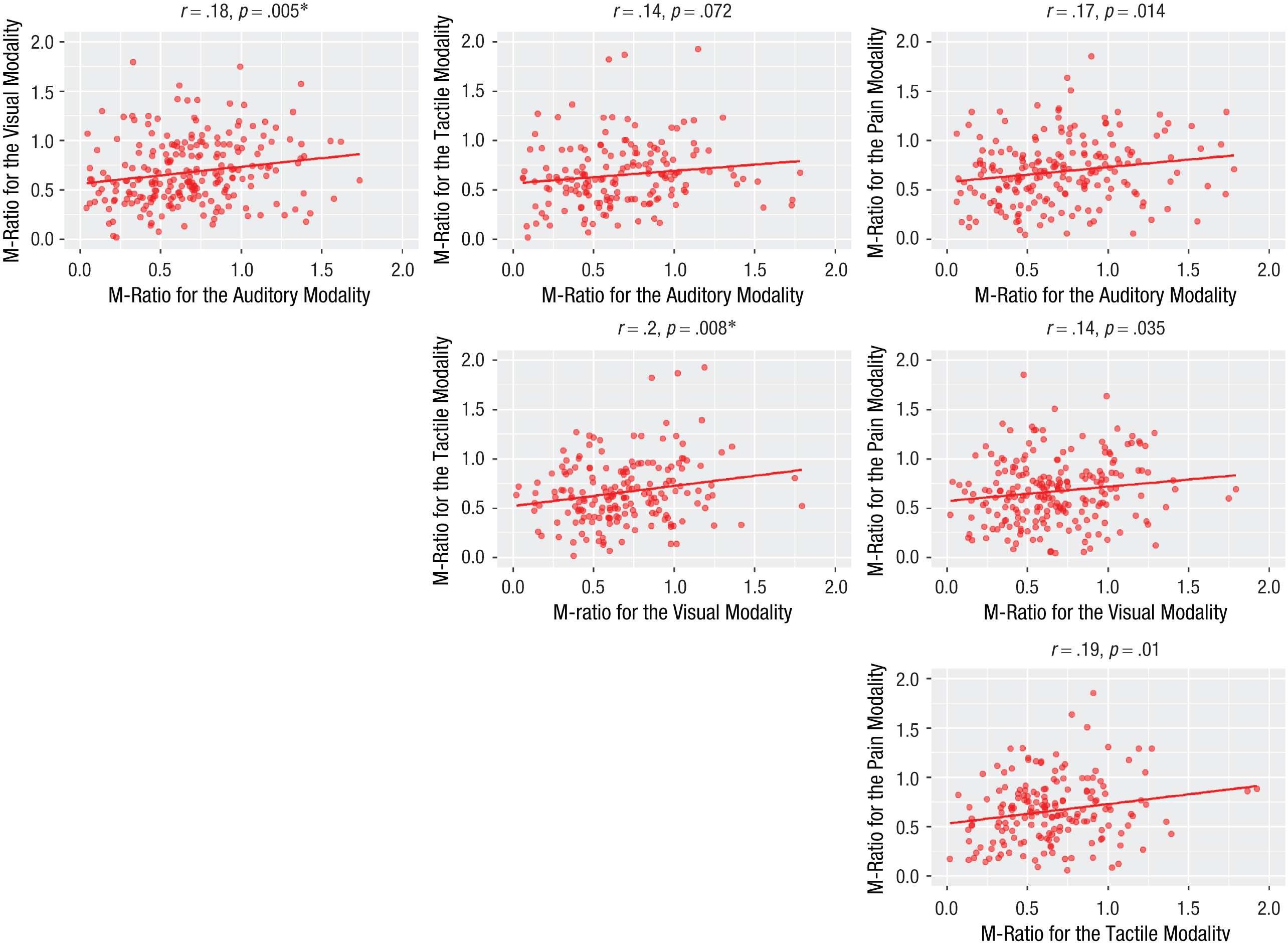

Confirmatory factor analysis for metacognitive efficiency

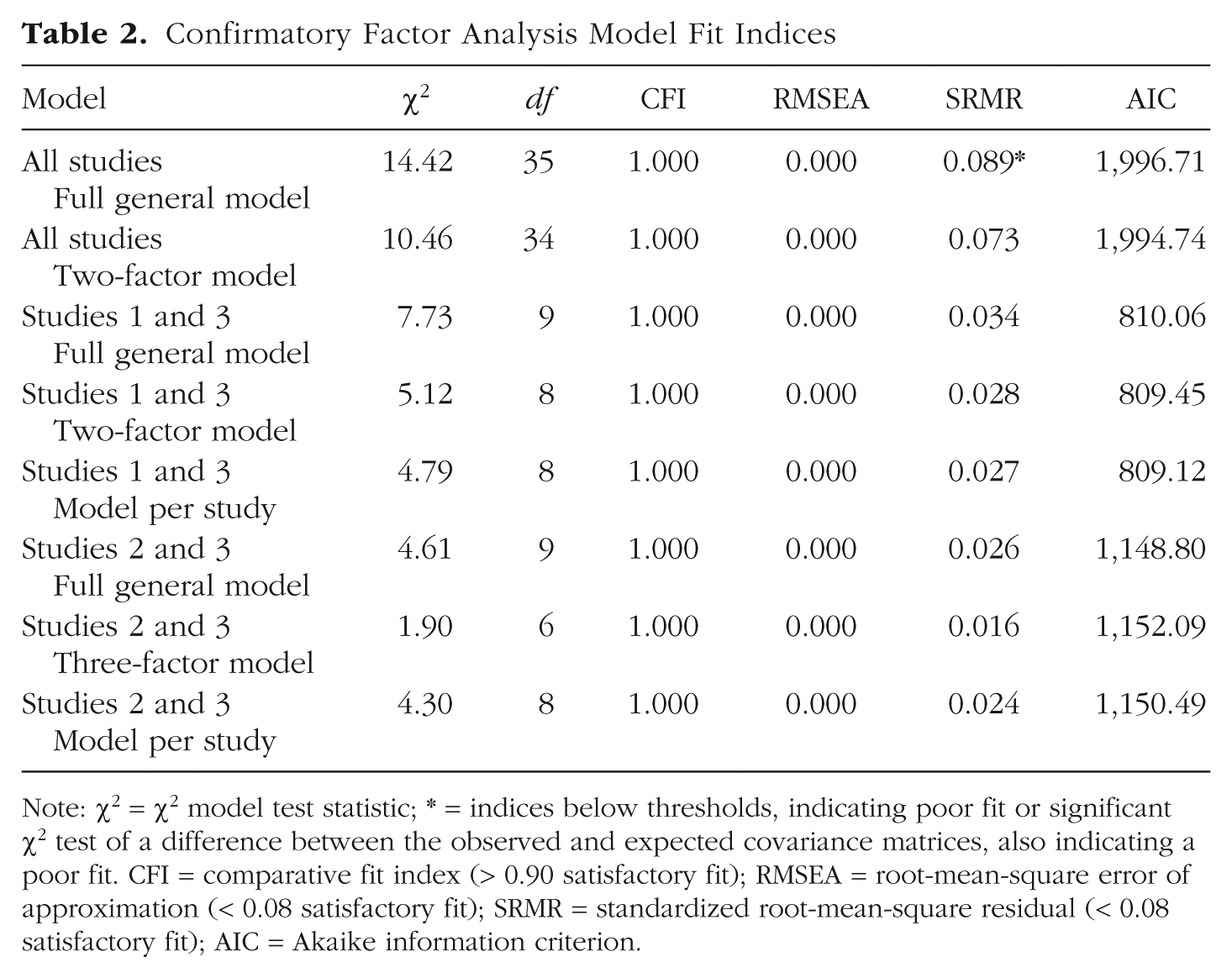

In order to go beyond correlations to test the domain generality of metacognition, we performed CFAs for metacognitive efficiency using individual nonhierarchical fits for M-ratio. The fit indices for the models are presented in Table 2.

Confirmatory Factor Analysis Model Fit Indices

Note: χ² = χ² model test statistic; * = indices below thresholds, indicating poor fit, or a significant χ² test of a difference between the observed and expected covariance matrices, also indicating poor fit. CFI = comparative fit index (> 0.90 satisfactory fit); RMSEA = root-mean-square error of approximation (< 0.08 satisfactory fit); SRMR = standardized root-mean-square residual (< 0.08 satisfactory fit); AIC = Akaike information criterion.

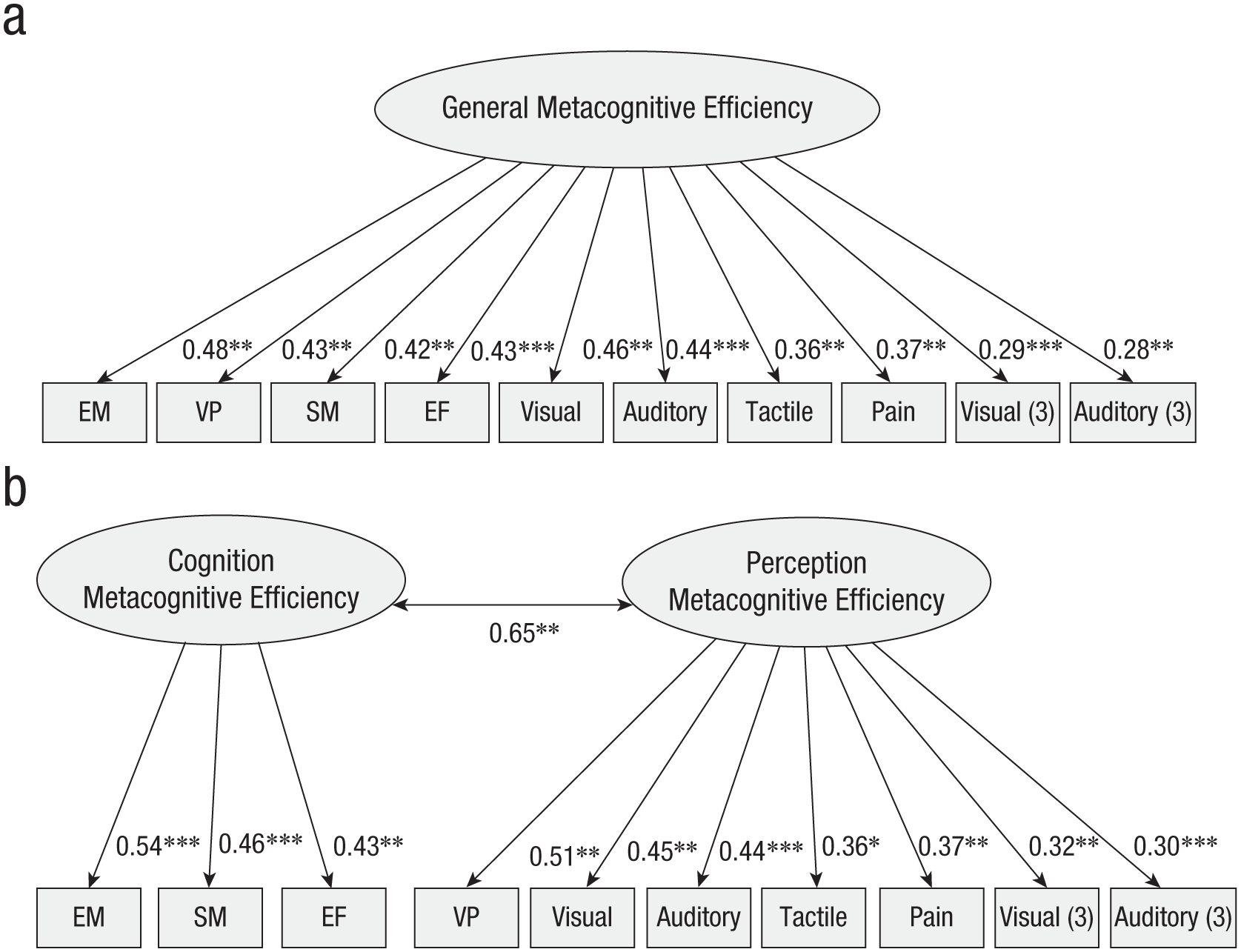

We first estimated several models for the three studies altogether: (a) a full general model estimating a single latent variable for metacognitive efficiency and (b) a two-factor model estimating one cognitive factor (including the episodic-memory task, the semantic-memory task, and the executive-function task) and one perception factor (including the remaining tasks). We also tested (c) a model estimating one factor for each study and (d) a four-factor model estimating a visual factor (including the three visual tasks), an auditory factor (including the two auditory tasks), a somatosensory factor (including the tactile and the pain tasks), and a cognitive factor (as in the two-factor model). However, the estimation of these latter two models did not converge, and no solution was found. The two remaining models demonstrated good fit to the data (see Table 2). Each item loaded significantly on its corresponding latent variable in both models (see Fig. 5 for a diagram of the two models, and see Table S5 in the Supplemental Material for details). For the two-factor model, a significant covariance across the two factors was found (covariance = 0.65, z = 2.82, p = .005). Comparing AIC weights for the two models showed that the best-fitting model was the two-factor model, which carried 70% of the cumulative model weight compared with 30% for the full general model. This is consistent with the likelihood ratio test showing that the two-factor model outperformed the full general model, Δχ² = 5.26, p = .021.

Confirmatory factor analysis plots of the three studies together for the full model (a) and the two-factor model (b). In Study 1, tasks were labeled EM (episodic memory), SM (semantic memory), VP (visual perception), and EF (executive functions); in Study 2, tasks were labeled auditory, visual, pain, and tactile; and in Study 3, tasks were labeled Visual_study3 and Auditory_study3. * = significant factor loading.

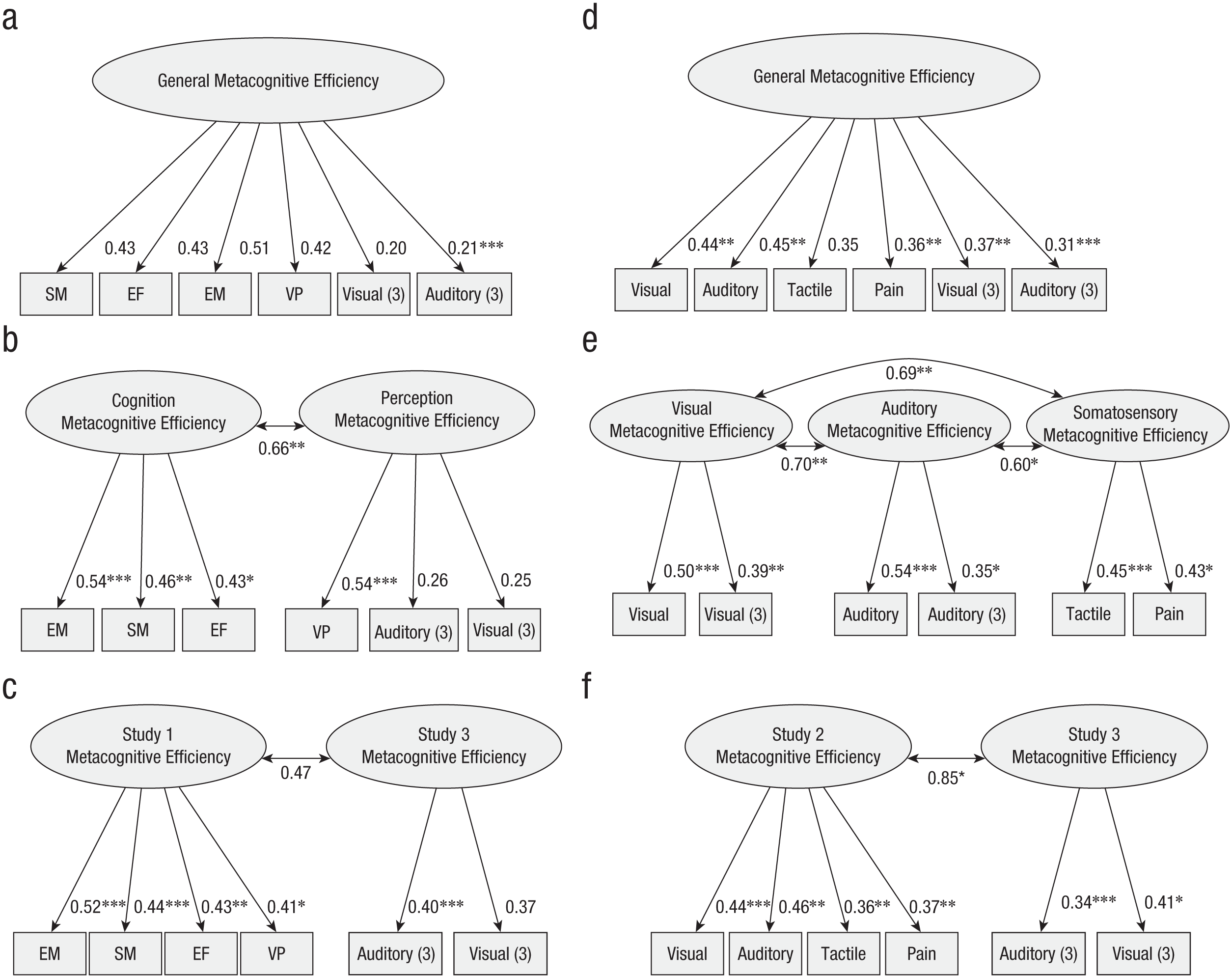

Because the model per study was not identified when gathering all studies (probably because of the large amount of missing data; Studies 1 and 2 were performed by different participants), we further tested models gathering Studies 1 and 3 in one analysis and Studies 2 and 3 in another. For Studies 1 + 3, we tested (a) a full general model, (b) a two-factor model (perception and cognition), and (c) a model with one factor per study (see Fig. 6a–6c for a diagram of the three models, and see Table S6 for details). Model-fit indices suggested a satisfactory fit to the data for all models (see Table 2). For the two-factor model and the model per study, each item loaded significantly on its corresponding latent variable. For the two-factor model, a significant covariance across the two factors was found (covariance = 0.66, z = 2.65, p = .008). In contrast, a nonsignificant covariance across the two factors was found for the model per study (covariance = 0.47, z = 1.74, p = .081). Factor loadings for the full general model were mainly not significant (see Fig. 6). Comparing AIC weights for the three models (see Table 2) showed that the best-fitting model was the model per study, which carried 39% of the cumulative model weight compared with the two-factor model (33%) and the full general model (29%). Using the likelihood ratio test, we showed that the two-factor model marginally outperformed the full general model, Δχ² = 3.65, p = .056, and that the model per study did not significantly improve the model fit, Δχ² = 2.63, p = .105.
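As a rough consistency check, the p values reported above can be recovered from the chi-square difference values (3.65 and 2.63) if each comparison is assumed to involve one degree of freedom. That assumption is ours and is not stated in the text; the sketch also uses a plain chi-square distribution and does not reproduce the Satorra-Bentler scaling itself. The function name `chi2_sf_df1` is hypothetical.

```python
import math

def chi2_sf_df1(statistic):
    """Upper-tail p value of a chi-square statistic with 1 degree of freedom.

    For df = 1, P(X > x) = P(|Z| > sqrt(x)) = erfc(sqrt(x / 2)).
    """
    if statistic < 0:
        raise ValueError("chi-square statistic must be non-negative")
    return math.erfc(math.sqrt(statistic / 2.0))

# Chi-square difference values reported for Studies 1 + 3 (df = 1 is an assumption)
for label, delta in [("two-factor vs. full general", 3.65),
                     ("model per study", 2.63)]:
    print(f"{label}: p = {chi2_sf_df1(delta):.3f}")
```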

Confirmatory factor analysis plots of Study 1 and Study 3 together for the full model (a), the two-factor model (b), and the model per study (c). Confirmatory factor analysis plots of Study 2 and Study 3 together for the full model are shown in (d), for the three-factor model in (e), and for the model per study (f). In Study 1, tasks were labeled EM (episodic memory), SM (semantic memory), VP (visual perception), and EF (executive functions); in Study 2, tasks were labeled auditory, visual, pain, and tactile; and in Study 3, tasks were labeled visual (3) and auditory (3). * = significant factor loading.

For Studies 2 + 3, we tested (a) a full general model corresponding to one factor for all tasks, (b) a three-factor model (one for visual perception, one for auditory perception, and one for somatosensory perception gathering tactile and pain modalities), and (c) a model with one factor per study. Model-fit indices suggested a good fit to the data for all three models (see Table 2). Each item loaded significantly on its corresponding latent variable in the three models, except for the tactile task in the full general model, for which the factor loading was marginally significant, standardized factor loading = 0.35, z = 1.87, p = .061 (see Fig. 6d–6f for a diagram of the three models, and see Table S6 for details). For the three-factor model, significant covariances were found across all three factors: across the visual-perception and the auditory-perception factors (covariance = 0.70, z = 3.10, p = .002), across the visual-perception and the somatosensory-perception factors (covariance = 0.69, z = 2.87, p = .004), and across the auditory-perception and the somatosensory-perception factors (covariance = 0.60, z = 2.28, p = .022). For the model per study, a significant covariance across the two factors was found (covariance = 0.85, z = 2.11, p = .035). Comparing AIC weights for the three models (see Table 2) showed that the best-fitting model was the full general model, which carried 67% of the cumulative model weight compared with the model per study (25%) and the three-factor model (8%). This is consistent with the likelihood ratio test showing that neither the three-factor model, Δχ² = 2.44, p = .486, nor the model per study, Δχ² = 0.30, p = .585, outperformed the full general model. Note that the full model here corresponds to a single perceptual module, because no cognitive tasks were included.

Exploratory analyses

Finally, we were interested in the relation between general metacognitive efficiency and metacognitive bias. Although these two constructs can theoretically be considered as independent (Fleming & Lau, 2014), correlations between the two are frequently observed (Xue et al., 2021). Thus, we investigated whether metacognitive bias could create spurious cross-task correlations for metacognitive efficiency. We regressed out metacognitive bias on metacognitive efficiency for each task of each study and then performed cross-task correlation using the residuals (for details, see Supplementary Results: Cross-task Correlations Controlling for Metacognitive Bias, Table S7). Overall, the pattern of results shifted only slightly between the two types of correlations.
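The residual-correlation logic can be sketched as follows, reading "regressing out" as a simple linear regression of metacognitive efficiency on metacognitive bias within each task, with the residuals then correlated across tasks. This reading, the helper names, and the data are illustrative assumptions; the actual analysis was run in R and is described in the Supplemental Material.

```python
def mean(values):
    return sum(values) / len(values)

def residualize(y, x):
    """Residuals of y after a simple linear regression on x (with intercept)."""
    mx, my = mean(x), mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    slope = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y)) / sxx
    intercept = my - slope * mx
    return [yi - (intercept + slope * xi) for xi, yi in zip(x, y)]

def pearson(a, b):
    """Pearson correlation between two equal-length sequences."""
    ma, mb = mean(a), mean(b)
    cov = sum((ai - ma) * (bi - mb) for ai, bi in zip(a, b))
    var_a = sum((ai - ma) ** 2 for ai in a)
    var_b = sum((bi - mb) ** 2 for bi in b)
    return cov / (var_a * var_b) ** 0.5

# Hypothetical per-participant values for two tasks (not real data)
bias_task_a = [0.10, 0.05, 0.20, -0.05, 0.15, 0.00]
mratio_task_a = [0.90, 0.70, 1.10, 0.60, 0.80, 0.75]
bias_task_b = [0.12, 0.02, 0.18, -0.02, 0.10, 0.05]
mratio_task_b = [0.85, 0.65, 1.00, 0.70, 0.90, 0.60]

# Remove the bias-related variance within each task, then correlate across tasks
resid_a = residualize(mratio_task_a, bias_task_a)
resid_b = residualize(mratio_task_b, bias_task_b)
r_raw = pearson(mratio_task_a, mratio_task_b)
r_resid = pearson(resid_a, resid_b)
print(f"raw r = {r_raw:.2f}, residual r = {r_resid:.2f}")
```

If the raw and residual correlations are similar, bias is unlikely to be the source of the cross-task association.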

Discussion

Whether metacognition follows domain-general or domain-specific rules remains unclear. Here, we compiled data from three large-sample studies to investigate the variability of the magnitude of cross-task correlations for both metacognitive efficiency and metacognitive bias. We compared hierarchical and nonhierarchical Bayesian estimations of metacognitive efficiency and used factor analysis to estimate latent constructs.

Consistent with recent findings (Lund et al., 2023; Mazancieux, Fleming, et al., 2020), we show that hierarchical M-ratio estimates led to higher cross-task correlations in the three studies (particularly for Study 1, in which four out of six nonhierarchical correlations were below the lower bound of the HDI of the corresponding hierarchical correlations). One explanation for the large correlations is that tasks that have large stimulus variability, particularly those that have predefined stimuli (as is the case in Study 1), have larger covariance. In the HMeta-d model (Fleming, 2017), the precision of the meta-d′ estimate increases for participants who used the entire spectrum of the confidence scale, which may amplify the covariance between tasks. This is notably the case for the visual-perception task and the semantic-memory task, which both have high stimulus variability. As a result, the difference between hierarchical (r = .62) and nonhierarchical (r = .18) fits for this pairing is one of the largest. For these two tasks, the variability in difficulty is also more similar across participants than for tasks such as the episodic-memory task, which likely increases the cross-task correlations in the hierarchical model.

Study 3 (with a staircase procedure) is also likely to have variability in stimulus difficulty. However, the experienced variability is probably lower than the one experienced in Study 1, because the staircase gradually adjusts difficulty. In Study 1, because of the random trial presentation, participants may experience difficult trials followed by easy trials, which increases the variability in the confidence scale. Because of the staircase, Study 3 may be the study least influenced by this confound. Study 2 had only two difficulty levels, but note that the experienced difficulty may also have varied across participants, thus subjectively creating several levels; further, easy and difficult trials were clearly identified in Study 2, which made this study more similar to Study 1. Study 3 has indeed one of the lowest differences between hierarchical (r = .26) and nonhierarchical fits (r = .14).

Both nonhierarchical and hierarchical metacognitive efficiency revealed that the pain task correlated the least with other tasks, although a significant correlation with the visual task was found. Previous work has suggested the existence of a specificity for metacognition about pain resulting from the arousal components intrinsically associated with pain (Beck et al., 2019). In contrast, our analysis showed that with a larger sample size (in the initial study N = 36), shared variance with other tasks can be identified.

For the reasons mentioned above, we suggest that hierarchical fits may inflate cross-task correlations for metacognitive efficiency. On the other hand, individual fits may be less stable because of trial numbers (Guggenmos, 2021). At the time of project design, it was known that M-ratio estimates could be noisy in experiments with fewer than 100 trials per participant (Fleming, 2017), and trial counts between 120 and 196 were thus chosen for all tasks. Subsequently, it has been found that test–retest reliability increases beyond this point (Guggenmos, 2021). The test–retest reliability sets an upper bound on the total variance that can be explained by a given measure and consequently on the magnitude of intertask correlations. The primary expected consequence of a decrease in trials is thus that the magnitude of domain-general factors might underestimate a true effect.
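The ceiling that reliability places on intertask correlations can be illustrated with the classical attenuation formula, in which the observed correlation equals the true correlation multiplied by the square root of the product of the two reliabilities. The sketch below assumes this classical-test-theory relation holds; the function name and the reliability values are hypothetical and are not estimates from the present tasks.

```python
import math

def max_observable_correlation(reliability_a, reliability_b, true_correlation=1.0):
    """Expected observed correlation under classical attenuation.

    observed r = true r * sqrt(reliability_a * reliability_b)
    With true_correlation = 1.0, this is the ceiling on the observed correlation.
    """
    for reliability in (reliability_a, reliability_b):
        if not 0.0 <= reliability <= 1.0:
            raise ValueError("reliabilities must lie between 0 and 1")
    return true_correlation * math.sqrt(reliability_a * reliability_b)

# Hypothetical test-retest reliabilities for two M-ratio measures
print(round(max_observable_correlation(0.64, 0.36), 2))
```

Under these hypothetical reliabilities, even a perfect true correlation would appear as an observed correlation of about .48, which is why modest cross-task correlations may underestimate the shared variance.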

Consequently, we suggest that the individual fits used in the CFAs are the most reliable results, because they do not inflate correlations for more difficult trials but instead consider the underlying structure of the data. CFA results support the idea that metacognitive efficiency is not fully domain general but that there is rather a metacognitive module for perception tasks and a metacognitive module for cognition tasks, supporting the idea of a weak domain generality (Mazancieux et al., 2023). These findings align with the work of Lehmann et al. (2022), in which the numerically highest fit among all models was obtained for a bifactor model with isolation of perception and a grouping between attention and memory modules. Here, we gathered memory and attention (which are involved in the executive-function task of Study 1) in a cognition module. We also found a significant medium-to-large covariance across the modules, suggesting that they may operate with similar processes. Because the model per study was not identified, we were unable to test the possible confound that only Study 1 included cognitive tasks and that Studies 2 and 3 were more similar in terms of task structure and better control of first-order performance. When running this model with only Study 1 and Study 3, the model per study carried 39% of the cumulative model weight compared with 33% for the two-factor model, and the likelihood ratio test showed that only the two-factor model marginally outperformed the full general model. Thus, correlated cognition and perception factors seem to be the best underlying structure for metacognitive efficiency. Gathering six tasks from Study 2 and Study 3 confirmed that perceptual metacognitive efficiency is domain general, which extends what Lehmann et al. (2022) found with two tasks.

Even though previous work has found a correlation between metacognitive bias and efficiency (Xue et al., 2021), our residual correlations did not show a strong change in the correlation pattern. Thus, we suggest that correlations found across tasks in the perception factor and in the cognition factor are not spurious correlations due to a domain-general metacognitive bias.

Finally, we suggest that one way to go beyond the examination of correlations would be to investigate mechanisms that apply across tasks (Hobot et al., 2023; Mazancieux et al., 2023). Model-based measures of metacognition may hold promise because they are based on models of metacognition derived from explicit processes (Boundy-Singer et al., 2023; Shekhar & Rahnev, 2021b). Another way to tackle domain-general metacognition is to investigate potential deficits in specific populations (see Meunier-Duperray et al., 2024, for work on older adults).

To conclude, we interpret our results as evidence for a weak domain generality of metacognition (Mazancieux et al., 2023), with one metacognitive module for perceptual tasks and another for cognitive tasks. A strength of our work is in identifying, for the first time, a cognition module that encompasses three different tasks. We also extended previous results using two tasks for the perception module to seven tasks with different task structures and modalities. We highlighted the importance of variability in trial difficulty for M-ratio estimations, and we propose that tasks with high variability in (objective) difficulty are likely to correlate more. This is particularly the case for hierarchical fits, in which participants who use the entire spectrum of the confidence scale have higher precision, which in turn gives them a higher weight in the cross-task correlations. It also applies more to tasks with random predefined trial difficulty than to staircase tasks, for which changes in trial difficulty are more gradual. Finally, more work is needed to investigate the reliability of the cognition factor for metacognitive efficiency.

Supplemental Material

sj-docx-1-pss-10.1177_09567976251415354 – Supplemental material for Metacognition in Decision-Making Across Domains and Modalities: Evidence from Three Studies

Supplemental material, sj-docx-1-pss-10.1177_09567976251415354 for Metacognition in Decision-Making Across Domains and Modalities: Evidence from Three Studies by Audrey Mazancieux, Katarzyna Hat, Renate Rutiku, Michał Wierzchoń and Kristian Sandberg in Psychological Science

Footnotes

Acknowledgements

We thank Dunja Paunovic, Katarina Vulic, Povilas Tarailis, Tomasz Kostka, Karolina Wiercioch-Kuzianik, Helena Bieniek, Justyna Brączyk, Elżbieta A. Bajcar, Julia Badzińska, Marianna Di Nardo, Stefanie Meeuwis, Mateusz Wasylewski, and the C-lab interns between 2020 and 2022 for their assistance in data collection. We thank Stephen Fleming for his input on Study 1 and Asger Roer Pedersen for guidance on confirmatory factor analyses.

Transparency

Action Editor: Vishnu Sreekumar

Editor: Simine Vazire
