Abstract

People selectively enforce their moral principles, excusing wrongdoing when it suits them. We identify an underappreciated source of this moral inconsistency: the ability to imagine counterfactuals, or alternatives to reality. Counterfactual thinking offers three sources of flexibility that people exploit to justify preferred moral conclusions: People can (a) generate counterfactuals with different content (e.g., consider how things could have been better or worse), (b) think about this content using different comparison processes (i.e., focus on how it is similar to or different than reality), and (c) give the result of these processes different weights (i.e., allow counterfactuals more or less influence on moral judgments). These sources of flexibility help people license unethical behavior and can fuel political conflict. Motivated reasoning may be less constrained by facts than previously assumed; people’s capacity to condemn and condone whom they wish may be limited only by their imaginations.

A voter blames the president for policies that did not actually harm the economy but that he believes almost did. A contractor reassures herself that she did not cheat her client as much as she could have. As these examples illustrate, the moral judgments we make about what to condemn and condone depend not only on the facts we know but also on the counterfactuals we imagine: what we believe “might have been” (see Roese, 1997). Prior work emphasizes that the way people think about counterfactuals explains moral judgment’s regularities, for example, by helping people reason about causality (Byrne, 2017). In this article, we reveal how it explains moral judgment’s irregularities. We argue that counterfactual thinking facilitates moral inconsistency.

Everyone is morally inconsistent sometimes: Our judgments about how much to condemn or condone a particular behavior do not always adhere to the same moral principles (Effron & Helgason, 2023). This inconsistency arises because moral principles can conflict (Bartels et al., 2015) but also because people selectively apply and enforce these principles (Ditto et al., 2009), such as when they condemn in-group leaders more than out-group leaders for the same transgression (Abrams et al., 2013). More broadly, moral inconsistency can reflect our tendency to let ourselves and others off the hook for wrongdoing when it suits us (Effron & Helgason, 2023).

One strategy enabling moral inconsistency is motivated reasoning about facts: People preferentially remember and strategically interpret reality so that it justifies preferred moral judgments (Campbell et al., 2023; Stanley & De Brigard, 2019). We suggest an underappreciated and perhaps more powerful strategy: motivated reasoning about counterfactuals. To justify their moral judgments, people may preferentially imagine and strategically interpret alternatives to reality.

Counterfactuals—possibly even more than facts—are appealing fodder for motivated reasoning. First, constructing counterfactuals is easier than collecting facts. Facts require observation; counterfactuals just require imagination. Second, counterfactuals make conclusions more resistant to falsification than facts. History cannot be rerun to test what would have happened in alternative circumstances. Third, there may simply be more counterfactuals than facts that justify a desired conclusion. Events happened only one way, but they could have happened in nearly infinite ways.

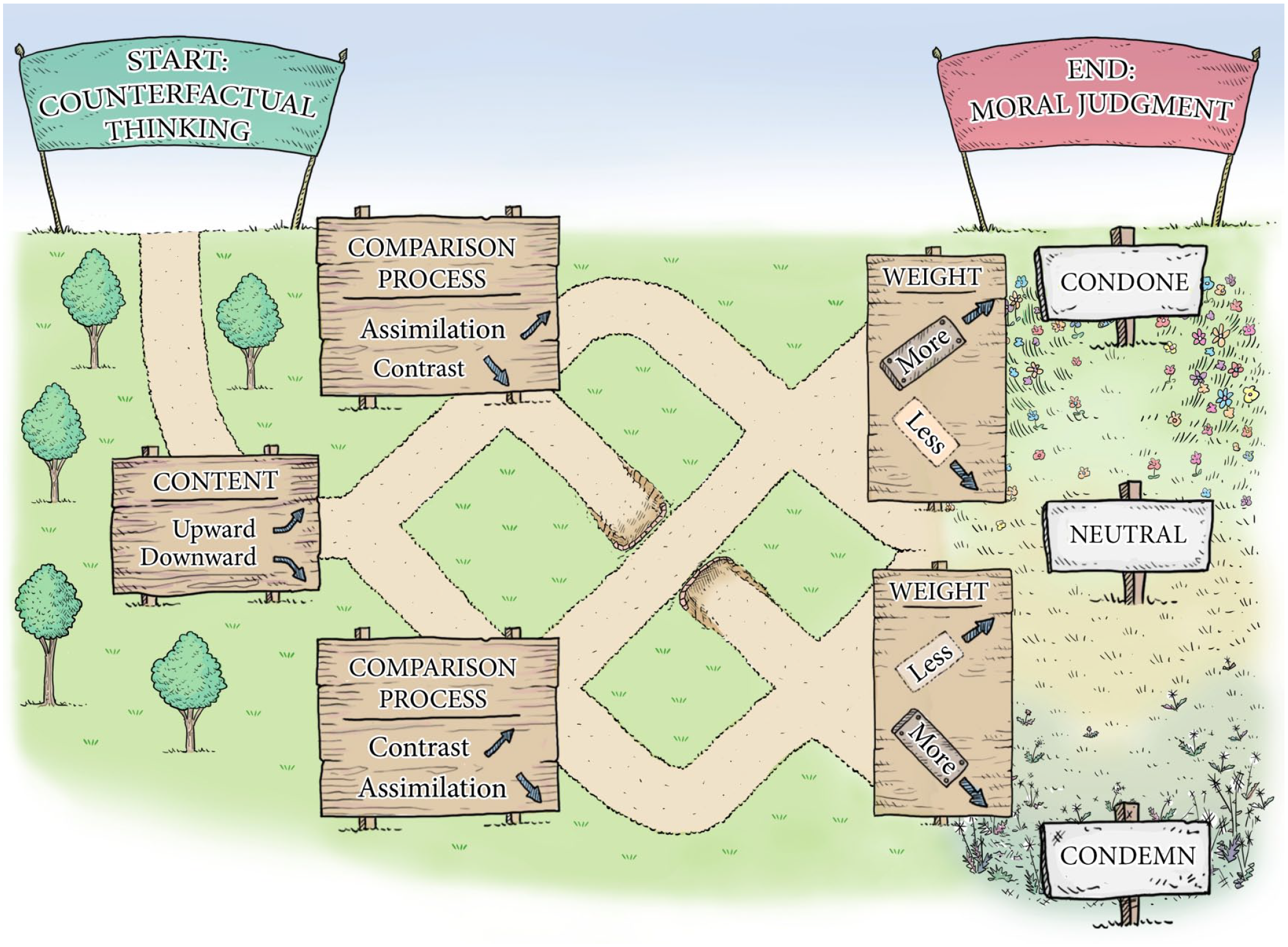

Perhaps more importantly, counterfactuals enable motivated reasoning because they are more flexible than facts. People face three forks in the path from counterfactual thinking to moral judgments (see Fig. 1): (a) They can choose the counterfactual content to imagine (e.g., focusing on how reality could have turned out better or worse), (b) they can select the comparison process they use to think about this content (i.e., focusing on how a counterfactual is similar to or different than reality), and (c) they can determine how much weight they give to the result of this comparison process (i.e., how much they accept or reject counterfactual thinking as a relevant input into their moral judgments). Each fork is a source of flexibility—a degree of freedom that people can exploit to justify a moral judgment they want to make. That is, motivated reasoning shapes the route people take in a “garden of forking paths” (see Gelman & Loken, 2013). Our thesis is that counterfactual thinking facilitates moral inconsistency because these degrees of freedom allow people to selectively adhere to and enforce their moral principles. We discuss each degree of freedom in turn, highlighting how, to condemn or condone what they want to, people can simply use their imaginations.

Fig. 1. On the “garden of forking paths” from counterfactual thinking to moral judgment, motivated reasoning shapes which of three forks people take.

Content

How much should the U.S. president be blamed for the 1.1 million American deaths during the COVID-19 pandemic? Your answer probably depends on your politics; partisans display moral inconsistency by blaming crises less on their own party than on the opposing party (Healy et al., 2014). Motivated counterfactual thinking may play a role. When asked how the current “COVID-19 situation” would be if Donald Trump had defeated Joe Biden in the 2020 election, Republicans tended to agree it would be “a whole lot better,” implying Biden deserves blame, whereas Democrats tended to agree it would be “a whole lot worse,” implying Trump deserves blame (Epstude et al., 2022).

This example illustrates the first degree of freedom: a counterfactual’s content—the specific events and outcomes we imagine “might have been.” Because we cannot prove what might have been, we have the flexibility to imagine content that fits with our existing beliefs (Tetlock, 1998), and the content we imagine in turn influences whom and what we condemn and condone (Effron, 2018). This process facilitates moral inconsistency: We generate counterfactual content that allows us to justify the moral judgments we prefer. In the COVID-19 example, the content dimension is the direction of comparison—whether people imagine scenarios that are better or worse than reality. However, motivation can also influence other dimensions of counterfactual content.

One such dimension is how much worse people imagine their own behavior could have been. When White undergraduates expected to appear racist in the future, they exaggerated the number of opportunities they had (and passed up) to make racist decisions on a previous task (Effron et al., 2012). When dieters expected to indulge in freshly baked cookies, they exaggerated the unhealthiness of foods they had previously declined to eat (Effron et al., 2013). In both examples, the anticipation of doing something “sinful” increased how “sinfully” they imagined they could have acted in the past. Why? Imagining the sins you avoided licenses future sin (Effron et al., 2012, 2013). The result is moral inconsistency: People use counterfactual thoughts to give themselves permission for behaviors they would normally condemn.

Another dimension of counterfactual content is how others would have behaved if circumstances had been different. Consider the media pundits who criticized Barack Obama for using a coffee cup to salute marines. Would these pundits have criticized Donald Trump if he had done the same thing? Obama supporters thought not; Trump supporters thought so (Helgason & Effron, 2022a). The content of people’s counterfactual thoughts not only fit with their political beliefs; it also shaped their moral judgments. Prompting counterfactual thinking led Obama supporters, but not Trump supporters, to condemn these pundits as hypocrites. Partisans on both sides of the aisle accuse pundits of such counterfactual hypocrisy but only when the pundits criticize a leader the partisans support. Here, counterfactual thinking facilitated moral inconsistency by allowing partisans to condemn critics of their own political party more than critics of another party.

Comparison Process

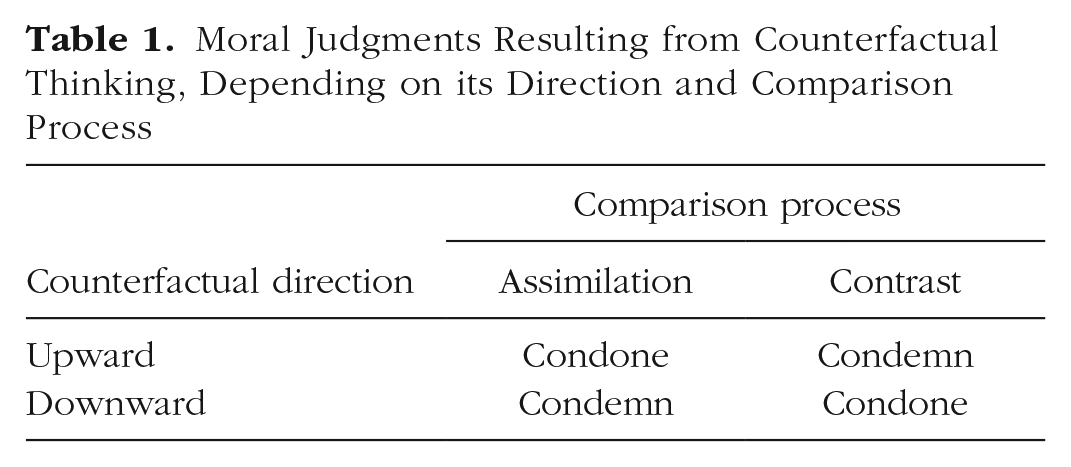

The second degree of freedom is the comparison process people use when thinking about a counterfactual’s content. As noted, counterfactual content can highlight better (upward counterfactual) or worse (downward counterfactual) alternatives to reality. The effect of this direction of comparison, however, depends on which of two processes operate (Markman & McMullen, 2003). People can focus on the differences between the counterfactual and reality, a contrast process emphasizing how the counterfactual never occurred, or focus on the similarities between the counterfactual and reality, an assimilation process that emphasizes how the counterfactual nearly occurred. As we explain next, the process people use may depend on the moral judgment they want to justify (i.e., whether to condemn or condone a behavior) and the direction of the counterfactual that is most salient (see Table 1).

Table 1. Moral Judgments Resulting From Counterfactual Thinking, Depending on Its Direction and Comparison Process

To condone, contrast downward or assimilate upward

Americans felt less outraged about U.S. soldiers’ abuse of Iraqi prisoners when prompted to imagine whether deposed Iraqi leader Saddam Hussein would have perpetrated worse abuses (Markman et al., 2008). White undergraduates felt more licensed to express views that could seem prejudiced after receiving an opportunity (which no one took) to accuse a clearly innocent Black suspect of a crime (Effron et al., 2012). Dieters were more likely to allow themselves to deviate from their “virtuous” health goals when, 2 weeks earlier, they had been asked to reflect on “sinful” foods they had declined to eat (Effron et al., 2013). In each of these examples, people displayed moral inconsistency by condoning behaviors they would otherwise have condemned because they had imagined how “it could have been worse,” contrasting reality against a downward counterfactual (see also Bertolotti et al., 2022). Presumably, participants were motivated to condone their nation’s transgressions and their own indiscretions.

People are also more likely to condone wrongdoing when they assimilate reality toward an upward counterfactual. For example, people will express weaker moral condemnation of wrongdoing if asked to imagine a scenario in which the wrongdoing would have been morally acceptable (Tepe & Byrne, 2022), and they feel more comfortable lying in situations that prompt them to imagine scenarios in which the lie could have been true (Briazu et al., 2017; Shalvi et al., 2011). In these examples, people treat an imagined counterfactual that is more moral than reality as if it actually occurred. This upward-assimilation process may be particularly likely when people are motivated to condone a wrongdoing. Consider how, after falsely claiming that Trump’s 2017 inauguration attracted the largest crowd in inaugural history, the Trump administration suggested that the crowd would have been larger if the weather had been nicer. This upward counterfactual—an alternative reality in which the falsehood is true—may have persuaded Trump’s supporters (but not his opponents) to judge the falsehood more leniently because “it could have been true.” Consistent with this possibility, people will judge a lie as less unethical if prompted to imagine how it could have been true (or might become true)—especially when the lie aligns with their politics (Effron, 2018; Helgason & Effron, 2022b). This assimilation process promotes moral inconsistency by allowing people to condone the lies they like.

To condemn, contrast upward or assimilate downward

In the previous section, we saw people assimilating toward upward counterfactuals and contrasting against downward counterfactuals to condone their own prejudice and indulgence, their political party’s falsehoods, and even their nation’s wrongdoing. When people are less motivated to condone a behavior, however, they may use the opposite comparison process on counterfactuals in each direction, resulting in moral condemnation (see Table 1). First, they may contrast reality against an upward counterfactual. For example, observers will blame someone more for negligence when they think about how that person could have acted less negligently (Murray et al., 2022). Thus, we sometimes condemn people because we imagine their behavior could have been more virtuous (Gilbert et al., 2015).

Second, people can assimilate reality toward a downward counterfactual. In such cases, we condemn people because we imagine their behavior or its outcome could have been worse. For example, when participants observed a boy decline to cheat on a test, they judged him as less moral than the average boy but only when they thought he was aware of a hidden camera (Miller et al., 2005). Apparently, they imagined that he would have cheated if he had not known about the camera (a downward counterfactual), thus treating this imagined behavior as if it actually occurred (i.e., assimilation).

People may be more likely to assimilate toward a salient downward counterfactual when they are motivated to negatively evaluate someone’s morality. Recall that when political partisans disliked (vs. liked) a pundit’s criticism, they were more likely to condemn the pundit for hypocrisy that they imagined the pundit would have displayed if given the chance (Helgason & Effron, 2022a). As another example, participants were more likely to blame a student for a failed attempt to burn her classmate when they imagined a counterfactual scenario in which the attempt was successful—but only when the student had no good reason for wanting to harm her classmate (Parkinson & Byrne, 2017). One explanation is that participants were more motivated to blame the student for counterfactual harm when her behavior was unjustified.

Thus, the process by which people compare a counterfactual to reality—along with the direction of counterfactual that is most salient—offers a degree of freedom to people forming moral judgments. When motivated to condone a behavior, people can assimilate toward an upward counterfactual or contrast against a downward counterfactual; when motivated to condemn a behavior, they can do the reverse (see Table 1). The result is moral inconsistency: The same behavior may get condemned or condoned depending on the interplay of motivation and counterfactual thinking.

Weight

We have argued that the content and process of counterfactual thinking—the first two degrees of freedom—can create evidence that supports a desired moral judgment. The third degree of freedom is that people have the flexibility to choose how much weight to give this evidence (Helgason & Effron, 2022a). That is, motivation may affect not only what counterfactuals people imagine but also how much influence these counterfactuals have on moral judgment (Burris & Branscombe, 1993). Counterfactual weight is a continuum: At one extreme, people can use counterfactuals as critical inputs to their moral judgments; at the other extreme, they can generate counterfactuals but ultimately dismiss them as irrelevant to the judgment at hand.

Several studies support this idea. In one, American partisans considered negative events that did not occur, but could have occurred, during Biden’s or Trump’s presidency (e.g., a catastrophic cyberattack on the banking system). As in other studies, partisanship predicted the content of the counterfactuals participants imagined: If participants opposed a particular president, they thought the negative event had come closer to occurring under his watch. Going beyond other studies, partisanship also predicted the weight participants gave to this content: Participants blamed whichever president they opposed, more than the president they supported, for negative events that they imagined “came close” to happening. For example, among Trump and Biden supporters who agreed that a catastrophic cyberattack had nearly occurred during Biden’s presidency, Biden received more blame from Trump supporters than from Biden supporters (Epstude et al., 2022). We observed the same results in an experiment that manipulated perceptions of closeness (Effron et al., n.d.). In another study (Helgason & Effron, 2022a), when partisans read about a journalist who had criticized a president whom they supported (vs. opposed), they were not only more likely to think that the journalist would have displayed double standards if given the chance (i.e., to imagine different counterfactual content) but also more likely to treat that imagined double standard as evidence of the journalist’s hypocrisy (i.e., to give counterfactual content more weight). Together, these results suggest that counterfactuals receive more weight when they point to desired moral conclusions.

Discussion

Counterfactual thinking provides a “garden of forking paths” (see Fig. 1) where people have discretion over the content of the counterfactuals they endorse and generate (e.g., upward vs. downward), the process they use to compare this content with reality (i.e., assimilation vs. contrast), and the weight they give to the result of the process. We have argued that people are more likely to take whichever forks in the path lead to their preferred moral judgments. By allowing people to condemn and condone whom they like, these forks—or degrees of freedom—promote moral inconsistency.

Our analysis adds nuance to the perspective that counterfactual thinking is adaptive (see Roese & Epstude, 2017). Although counterfactual thinking can help us learn from our mistakes, regulate our emotions, understand causation, and form reasonable moral judgments (Byrne, 2016, 2017), we suggest it also has a darker side: It facilitates moral inconsistency. Moral inconsistency may sometimes be adaptive for individuals, but it can also be detrimental to society, such as when it reflects a willingness to license ourselves and others to transgress (see Effron & Helgason, 2023). Our analysis also suggests that conflicts surrounding moral issues can arise because each side disagrees not only about the facts but also about the counterfactuals. For example, partisan conflict may arise over whether a politician’s dishonesty should be condoned or condemned not because one side perceives the lies as actually true but because that side thinks the lies could have been true (Effron, 2018). Strategies designed to mitigate such conflicts should strive not only to accurately represent the facts but also to surface and critically evaluate the content, process, and weight of the counterfactuals (see also Tetlock & Visser, 2000).

Although people may effortfully imagine infinite varieties of counterfactual content, there are constraints on the counterfactuals that spontaneously spring to mind. For example, according to Seelau et al. (1995), people generate counterfactuals that (a) preserve natural laws (e.g., do not violate physics), (b) are easy to imagine (e.g., because they undo a recent event; Byrne, 2017), and (c) serve a current goal (e.g., to improve vs. to feel better following failure). Although we have illustrated that counterfactuals can serve the goal of reaching a desired moral conclusion (constraint [c]), this goal is unlikely to eliminate the other two constraints. For example, someone motivated to blame a political out-group for a failing economy would probably focus on the actions of the out-group’s recent, rather than long-past, leaders (constraint [b]).

Our theorizing focused on how motivated reasoning exploits three degrees of freedom that people have once they have begun thinking counterfactually. This focus leaves two key questions open. First, how does motivation affect whether people spontaneously generate counterfactual thoughts to begin with? For example, whether a wrongdoing appears accidental or intentional depends on motivated reasoning, and intentional wrongdoings may be less likely to trigger counterfactual thinking (see Malle et al., 2014). Second, how does motivation affect semifactual thoughts—beliefs that an event would have occurred even if circumstances had been different and that shape moral judgments (see Branscombe et al., 1996; Byrne, 2020)? Future research should examine these questions.

Our framework could be leveraged to enhance moral consistency. People may not be aware of the forks they face in the road from counterfactual thinking to moral judgment and not realize that they are consistently choosing whichever path leads to their desired destination. Making people mindful of these forks—the three degrees of freedom we have discussed—may help them realize that counterfactual thinking could justify other moral judgments in addition to the one they prefer. For example, as part of an individual intervention or as part of an institutional decision-making process, people could be encouraged to imagine a range of counterfactuals (e.g., upward and downward), apply multiple comparison processes (i.e., assimilation and contrast), and consider different weights (e.g., treat counterfactuals as relevant vs. irrelevant for the judgment).

A central tenet of motivated reasoning is that people rarely jump to desired conclusions without evidence but instead process evidence in a biased manner to justify these conclusions (Kunda, 1990). Our analysis suggests that people are willing to construct this evidence by drawing on imagined alternatives to reality. Counterfactual thinking can exert a powerful influence on moral judgments, and the three degrees of freedom we have identified make counterfactual thinking an appealing target of motivated reasoning. Ultimately, then, people’s capacity to condemn and condone whom they wish may be limited only by their imaginations.

Recommended Reading

Byrne, R. M. J. (2020). (See References). Reviews research on how counterfactual thinking affects morally relevant judgments.

Effron, D. A., & Helgason, B. A. (2023). (See References). Reviews a program of research on the varieties and psychological causes of inconsistencies in moral judgments and behaviors.

Epstude, K., Effron, D. A., & Roese, N. J. (2022). (See References). Provides empirical examples of how motivation may influence the content and weight of counterfactual thinking, with implications for blame judgments.

Helgason, B. A., & Effron, D. A. (2022a). (See References). Provides empirical examples of how motivation may influence the content and weight of counterfactual thinking, with implications for hypocrisy judgments.

Roese, N. J., & Epstude, K. (2017). (See References). Reviews evidence for a key theory of why and how people think counterfactually and with what consequences.