Abstract

Scientists often use generics, that is, unquantified statements about whole categories of people or phenomena, when communicating research findings (e.g. “statins reduce cardiovascular events”). Large language models, such as ChatGPT, frequently adopt the same style when summarizing scientific texts. However, generics can prompt overgeneralizations, especially when they are interpreted differently across audiences. In a study comparing laypeople, scientists, and two leading large language models (ChatGPT-5 and DeepSeek), we found systematic differences in interpretation of generics. Compared with most scientists, laypeople judged scientific generics as more generalizable and credible, while large language models rated them even higher. These mismatches highlight significant risks for science communication. Scientists may use generics and incorrectly assume laypeople share their interpretation, while large language models may systematically overgeneralize scientific findings when summarizing research. Our findings underscore the need for greater attention to language choices in both human and large language model-mediated science communication.

1. Introduction

Science has a vital societal function, providing experts, policymakers, and the public with the knowledge required to make informed decisions (Kitcher, 2011). To fulfill its function, science needs to be communicated to people in ways that accurately capture research findings and avoid misinterpretations (Jamieson et al., 2017).

Prior research in science communication has found that different stakeholder groups, including scientists, policymakers, and members of the public, often diverged in their understanding and interpretation of scientific information (e.g. on genome editing technologies; Calabrese et al., 2021; Robbins et al., 2021). Yet, less is known about the specific communicative features that contribute to such interpretive differences (e.g. Venhuizen et al., 2019).

One potential source of misalignment may lie in scientists’ use of generalizing language. Studies found that when reporting research findings, scientists frequently used generics, which are commonly defined as present tense statements expressing generalizations without quantifiers (e.g. “some,” “many”), thereby referring to entire categories rather than numerically specified subsets of people, objects, or phenomena (e.g. DeJesus et al., 2019). For example, “people benefit from a Mediterranean diet” is a generic, whereas “some people benefit from a Mediterranean diet” is not. Similarly, “statins reduce cardiovascular events” is a generic, but “many statins reduce cardiovascular events” is not. While generics suggest that results apply to all or most members of a kind (people, statins, etc.) (Cimpian et al., 2010), corpus analyses found that many scientists used them to describe findings even when they were based on small, unrepresentative samples that did not justify such broad claims (DeJesus et al., 2019; Peters et al., 2024; Rad et al., 2018).

Scientists’ use of generics can be problematic. When study conclusions are expressed in generics, audiences may overgeneralize findings (e.g. about medical treatments) to populations for which they are not applicable (Chin-Yee, 2023). Overly broad claims can also deprive audiences of reliable information for decision-making and undermine public trust in science, especially when inflated expectations go unmet (Master and Resnik, 2013).

The risks of misunderstanding and overgeneralization are particularly high if science communicators and their audiences differ in how they interpret scientific generics. The likelihood of such interpretive misalignments is increased by the fact that generics are ambiguous (Cohen, 2022). For instance, “people benefit from a Mediterranean diet” can refer to some, many, or all people. Scientific expertise including stronger “epistemic vigilance”—the disposition to critically evaluate information source, content, and context (Sperber et al., 2010)—or shared scientific background knowledge (“common ground”) (Clark, 1996) may lead scientists to interpret generics more narrowly than laypeople. If so, then scientists may use them even though their audience might understand them differently than intended, potentially leading to miscommunication.

Recent studies comparing novices and experts in a domain outside of science (e.g. video gaming) found evidence of such expertise effects and adjustment failures by experts (Coon et al., 2021). However, potential differences in generics interpretation between scientists and laypeople remain unexplored (Haigh et al., 2020). Existing studies on the interpretation of generics have primarily tested undergraduates, a subset of laypeople, finding that they viewed generics as referring to almost all members of a kind (Cimpian et al., 2010), rating generics as more generalizable and important than past tense (DeJesus et al., 2019) or quantified claims (Clelland and Haigh, 2025). It is unknown whether laypeople and scientists interpret scientific generics similarly.

Moreover, people are increasingly using chatbots powered by large language models (LLMs), 1 such as ChatGPT, to learn about scientific findings, because LLMs can quickly summarize complex information in accessible terms (Van Veen et al., 2024). Their use for science summarization and communication is further encouraged by marketing campaigns presenting ChatGPT-5 as a “team of PhD-level experts in your pocket” (Yang and Cui, 2025). Given the wide reach of LLMs, if they interpret scientific generics differently than humans, their summaries may risk misinforming users on a large scale (Smith and Tolbert, 2025). Previous studies found that LLMs conflated generics with universalizing statements (Allaway et al., 2024) and, in summaries of scientific texts, often replaced qualified claims with generics (Peters and Chin-Yee, 2025). However, laypeople, scientists, and LLMs have not yet been compared on their interpretation of scientific generics.

While human individuals’ responses to generics may provide insights into how they understand and may use generics, current LLMs arguably do not “understand” language or have humanlike psychological states (e.g. beliefs about generics) (Shanahan, 2024). Nonetheless, LLMs exhibit systematic response patterns based on statistical regularities (i.e. probabilities of word occurrences within contexts) learned during training and fine-tuning (Kumar, 2024). Consequently, while LLM responses to survey-style prompts do not reveal beliefs or intentions, they can be used to probe these learned associations. If LLMs consistently rate generics as especially generalizable or credible, this indicates a stable input–output pattern in how such statements are evaluated within the models. These evaluations can help narrow the space of explanations for why generics frequently appear in LLM summaries (as previously documented; Peters and Chin-Yee, 2025) by showing that LLMs associate generic framing with greater perceived scope and evidential strength.

Combined, these considerations suggest that it can be valuable to directly compare how laypeople, scientific experts, and LLMs evaluate the same generic scientific claims, using a shared task that captures interpretive tendencies. Such comparison, however, has not yet been conducted.

We sought to address this research gap. Our focus was on scientific generics from psychology, where they are particularly common (DeJesus et al., 2019), and biomedicine, where their use has been deemed especially consequential due to their influence on clinical practice and policymaking (Chin-Yee, 2023). For LLM testing, we selected ChatGPT-5 and DeepSeek-V3.1. ChatGPT is the most widely used LLM in scientific contexts (Liang et al., 2024), and DeepSeek is among the only leading LLMs available without a subscription barrier, recently becoming the most downloaded free AI application in the United States (Jamali, 2025).

We showed laypeople, scientific experts, and LLMs scientific generics as well as past tense and hedged variants, asking them to rate each on how broadly it applied to people (generalizability), how credible it was (credibility), and how likely they were to engage with it further, for instance, by reading more about the finding, sharing it, or using it to inform their thinking (impact). Our three main research questions (RQs) were as follows: 2

RQ1. Across participants, does linguistic framing (generic, past tense, hedged) affect perceived generalizability, credibility, or impact of scientific conclusions?

RQ2. Across linguistic frames, do laypeople, scientific experts, and LLMs differ in their ratings of generalizability, credibility, or impact of scientific conclusions?

RQ3. Do laypeople, scientific experts, and LLMs differ in how linguistic framing (generics, past tense, hedged) affects their ratings of generalizability, credibility, or impact of scientific conclusions?

2. Methodology

Study design and procedure

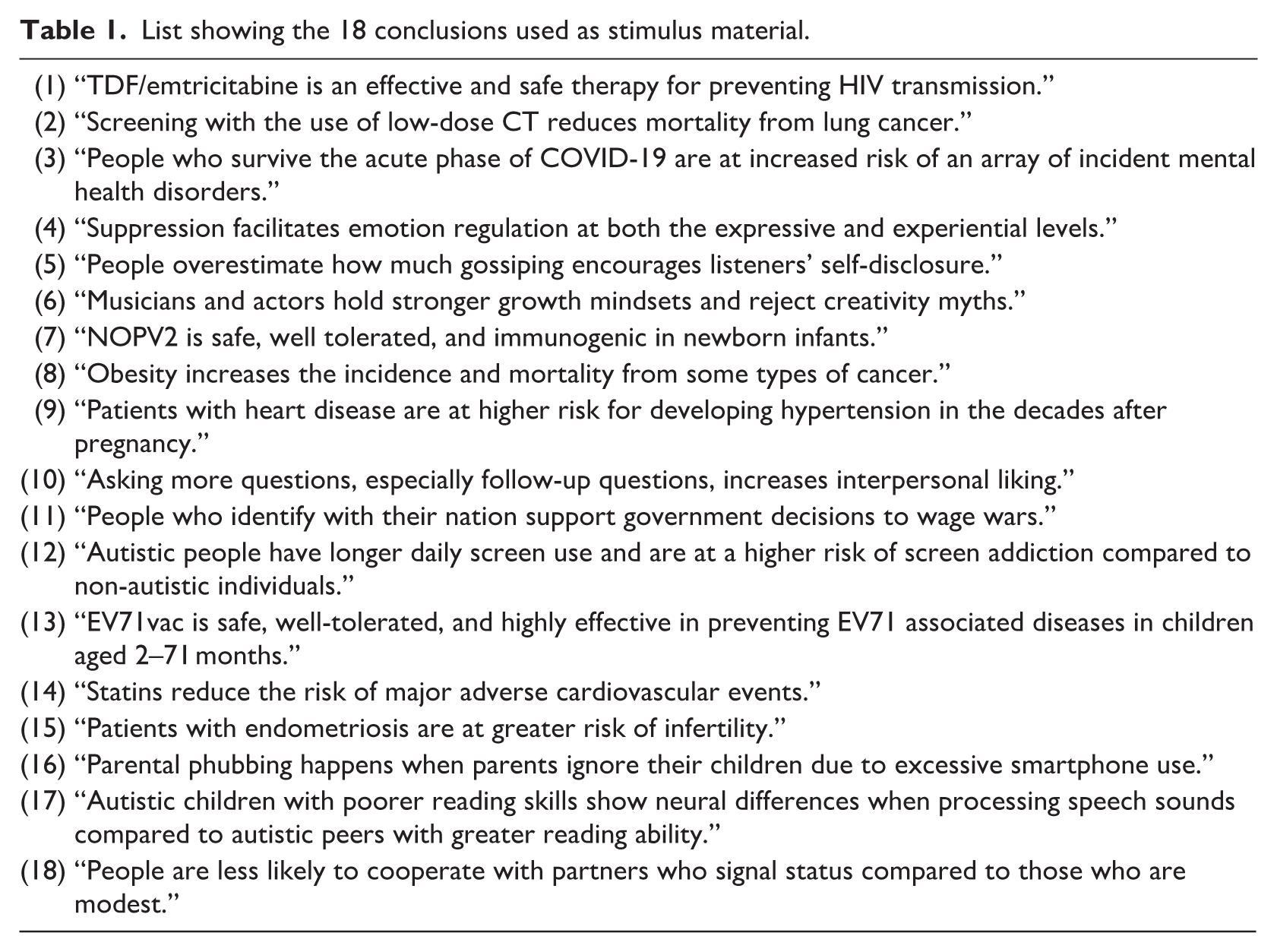

Participants were randomly assigned to one of three Qualtrics surveys, each containing 18 one-sentence research conclusions (9 from psychology, 9 from biomedicine) selected by a disciplinary expert from recent articles in top psychology and medicine journals (Table 1). In each survey version, six conclusions were bare generics (e.g. “statins reduce the risk of major adverse cardiovascular events”) that lacked a preface phrase (e.g. “the study found that [_]”). Six were past tense claims, treated as paradigmatically non-generic statements, and six were hedged claims—two with “might”, and four with “the study suggests that [bare generic].” In prior work, what we call “hedged claims” were treated as “hedged generics” (DeJesus et al., 2019). We classify these statements simply as hedged claims contrasting them with bare generic because the use of “might” and “suggests” explicitly introduces epistemic uncertainty and reduces communicative force relative to bare generics.

List showing the 18 conclusions used as stimulus material.

Each conclusion appeared once per survey but in all three forms (randomized) across participants, enabling between-subject comparisons of framing effects at the conclusion level (with 18 unique generics) while controlling for conclusion content. For each conclusion, participants rated generalizability (“only to the people studied” to “all people”), credibility (“not at all” to “extremely”), and impact (“not at all” to “extremely”) on 5-point scales.

To evaluate participants’ reasons, we also collected qualitative data through a free response question at the end of the survey. Participants were shown four frames for reporting a finding: “XYZ is an effective treatment,” “This study suggests that XYZ is an effective treatment,” “XYZ might be an effective treatment,” and “XYZ was an effective treatment.” Asked to assume that the finding was statistically significant with a medium effect, participants were prompted to indicate which wording would best communicate this kind of result and briefly explain why.

The study was preregistered on an Open Science Framework (OSF) platform 3 and approved by the first author’s institutional ethics board. All study material is available on the OSF platform.

Participants

Two main human groups were recruited: laypeople, defined as individuals with at most an undergraduate degree (e.g. high school, some college, or bachelor’s degree), and experts, defined as individuals holding graduate or professional degrees (Master’s, PhD, MDs, etc.). We focused on experts in psychology and biomedicine, but respondents could indicate their discipline using 11 options (Supplemental Material, Table S1). The final human expert group comprised four subgroups: psychologists, biomedical researchers, other scientists (including experts from the natural sciences, social sciences excluding psychology, and engineering/technology), and other experts (including humanities scholars and respondents who did not specify their expertise; Supplemental Material, Table S2).

To estimate the sample size required to detect a medium-sized main effect of expertise group with seven groups, a power analysis using G*Power 3.1 was conducted. It recommended a total sample of 231, meaning 33 per group.

We distributed the survey via Prolific and via emailing lists and personal contacts primarily in Belgium, Canada, Germany, Italy, the Netherlands, the United Kingdom, and the United States. However, the final sample included respondents from a wider set of countries (see Supplemental Material, Table S3). Respondents were eligible to participate if they were at least 18 years old and fluent in English.

All data were collected anonymously, with no direct identifiers (e.g. names, email addresses) retained. Demographic variables were recorded at a coarse-grained level (e.g. broad education, discipline categories), and no analyses or tables report small cells that could facilitate reidentification (e.g. n < 10). Participants provided informed consent prior to participation. Only fully anonymized datasets and materials are shared on OSF. Free text responses were screened to remove potentially identifying information before upload.

In all, 499 people responded; 67 participants were excluded for failing the attention check question in the survey (n = 38), not answering any question (n = 28), or not indicating their education level (n = 1), leaving 432 participants for analysis; 192 participants were laypeople and 240 experts, most coming from psychology (56.7%) and biomedicine (27.1%) (Supplemental Material, Tables S1–3).

We also included responses from ChatGPT-5 and DeepSeek-V3.1, treated as a sixth and seventh group. These models were selected because they are especially likely to be used for science communication purposes (Liang et al., 2024) and differ in processing architecture. ChatGPT-5 processes every input using the entire model, whereas DeepSeek activates only a small set of specialized modules for each input (Rahman et al., 2025). Including both models allowed us to test whether effects generalized across these distinct systems. Each new LLM chat was a separate random trial and, consistent with previous studies, was treated as one “pseudo-participant” (e.g. Strachan et al., 2024).

LLM responses were obtained by presenting one randomized conclusion and its three associated questions at a time. To approximate laypeople’s typical interactions with LLMs, responses were collected via the OpenAI and DeepSeek web-based user interfaces (UIs) (default settings) rather than through application programming interfaces (APIs), which require coding expertise and are primarily used by developers to access LLMs (Park et al., 2025). The same prompts as in the human survey were used, with item order randomized for each run. To mitigate personalization risks, we used three independent user accounts per platform, disabled memory, and initiated a new chat for every response. 100 LLM responses (50 per model) were collected, exceeding the per-group requirement from our power analysis and approaching the center of the observed range of human expert subgroup sizes, which was between 19 and 136, avoiding overrepresentation of LLMs relative to typical human groups.

All participant groups, except the “other scientists” and “other experts” groups, reached the (per group) sample size recommended by the power analysis (n ⩾ 33, Supplemental Material, Table S1).

Hypotheses

Our preregistration included six directional hypotheses (H1–H6). We focus here on the three most directly tied to our research questions (the remaining preregistered hypotheses are in the Supplemental Material).

Previous studies have found that generics were perceived as broader claims than qualified statements (DeJesus et al., 2019), laypeople interpreted such statements more expansively than experts in non-scientific domains (Coon et al., 2021), and LLMs often replaced qualified claims with generics in science summaries (Peters and Chin-Yee, 2025). Partly based on these findings, we preregistered the following hypotheses:

H1 (RQ1). Scientific conclusions presented in bare generic form will be rated as more generalizable, credible, and impactful than their past tense or hedged versions, across all groups.

H2 (RQ2, RQ3). Laypeople will interpret scientific conclusions, in general, and generics, in particular, more broadly than scientists and LLMs, providing higher generalizability, credibility, and impact ratings.

H3 (RQ2, group and frame interaction). Scientists will show less variation in their responses across different linguistic framings compared with laypeople and LLMs.

Because H2 applies to both RQ2 (broad group comparisons across frames) and RQ3 (group comparisons restricted to generics), we tested it in both contexts but retained its original preregistered numbering.

Statistical analysis

Linear mixed models were used, with generalizability, credibility, and impact ratings as dependent variables and random intercepts for participant and conclusion content to control for repeated measures and variation in the content of claims. Ratings for each conclusion were treated as separate observations, not as scale items with aggregation across ratings. Because the conclusions were intentionally heterogeneous in content, they were not assumed to measure a single latent construct, making factor-analytic or composite-score approaches less suitable.

Two linear mixed models per dependent variable were run. The first, addressing RQ1 and RQ2, included linguistic frame (three levels; generic, past, hedged), expertise (seven levels; laypeople, psychologists, biomedical researchers, other scientists, other experts, ChatGPT-5, DeepSeek-V3.1), English speaker status (native, non-native), and conclusion field (biomedical, psychological) as fixed effects. The second model, addressing RQ3, included frame, expertise (same seven levels), English speaker status, conclusion field, and the interaction between expertise and frame as fixed effects. English speaker status was included in all models to control for it, as non-native English speakers may interpret generics differently because of English proficiency.

No additional multiple-comparisons adjustment was applied, as multilevel models (e.g. linear mixed models) address multiplicity by estimating all effects jointly within a single hierarchical framework (vs independent tests) (Gelman et al., 2012). The inclusion of random effects for participants and conclusions triggers partial pooling, which shrinks noisy estimates toward the overall mean, reducing false positives and guarding against overinterpretation of chance fluctuations across multiple comparisons, making separate post hoc correction unnecessary in this context. All our analyses were also preregistered and had a theory-driven basis, further reducing the need for alpha adjustments (García-Pérez, 2023).

Models were estimated using restricted maximum likelihood with Satterthwaite-approximated degrees of freedom, as implemented by default in GAMLj3 (for model details, see Supplemental Material, section 5). Analyses were conducted using Jamovi (v2.6.26).

For the free response data, a traditional thematic analysis was performed (Braun and Clarke, 2006) because our goal was to identify theoretically meaningful justificatory patterns (e.g. informativeness). While, for instance, topic models (e.g. methods such as Latent Dirichlet allocation) are well suited for exploratory clustering of larger corpora (e.g. essays, Robbins et al., 2021), they can be less appropriate for capturing normative reasoning about communicative choices, which often depends on nuance, context, and evaluative judgments best identified through human-led analysis (Kapoor et al., 2024).

Two researchers independently coded the dataset using a prespecified classification scheme (see OSF material). Inter-rater agreement was assessed using Cohen’s kappa and was by conventional standard substantial (κ = .69, SE = 0.02; Landis and Koch, 1977). Discrepancies were resolved through discussion, and final classifications were subsequently quantified.

3. Results

We report the quantitative results organized by each of our three research questions, followed by the qualitative findings that contextualize participants’ interpretations.

Effects of linguistic frames across participants

Our first research question was: Across participants, does linguistic framing (generic, past tense, hedged) affect perceived generalizability, credibility, or impact of scientific conclusions?

As hypothesized (H1), scientific conclusions written in the past tense or with hedging (“might,” “the study suggests”) were judged as less generalizable than those written as bare generics (b = −.36 and −.30, both p values < .001). However, against H1, past tense statements were rated as more credible than generics (b = .05, p = .009), while hedged statements were rated as less credible (b = −.14, p < 0.001). For impact, compared with generics, both past tense (b = −.05, p = .04) and hedged claims (b = −.07, p = .003) were rated lower, as predicted by H1. In short, controlling for group membership, generic conclusions were interpreted as broader and more impactful than past-tense or hedged variants, although past-tense formulations were judged as more credible.

Group differences in ratings of scientific conclusions (adjusted for frame)

Our second research question was: Across linguistic frames, do laypeople, scientific experts, and LLMs differ in their ratings of generalizability, credibility, or impact of scientific conclusions?

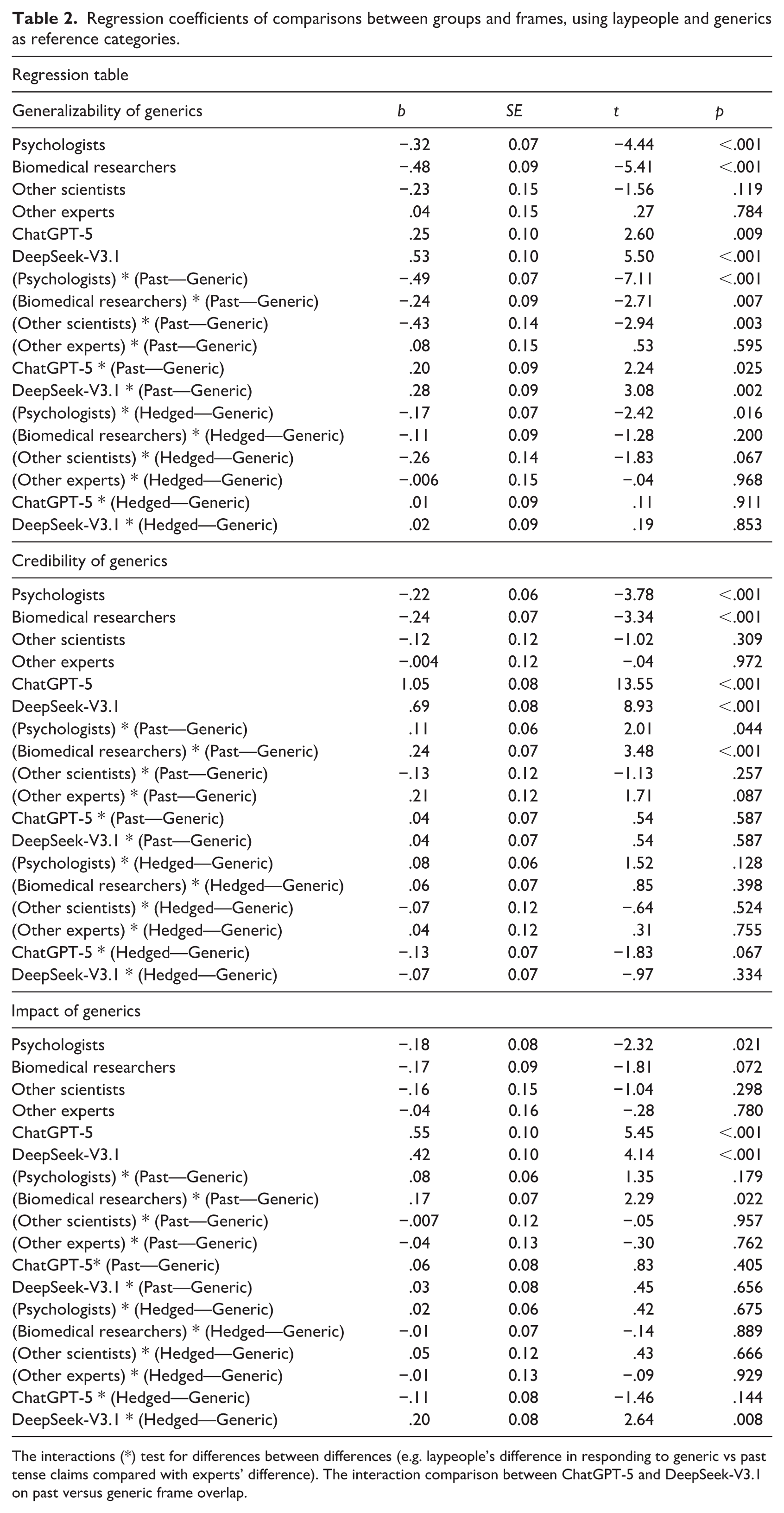

Starting with generalizability ratings, consistent with H2, which predicted that laypeople would interpret the conclusions more broadly than scientists and LLMs, we found that, compared with laypeople, psychologists and biomedical researchers judged scientific conclusions 4 as less generalizable, adjusting for systematic effects of linguistic framing (b = −.32 and −.48, both p values < .001). However, other experts did not differ significantly from laypeople. Moreover, against H2, both ChatGPT-5 (b = .25, p = .009) and DeepSeek-V3.1 (b = .53, p < .001) rated the scientific conclusions, controlling for frame, as more generalizable. In sum, psychologists and biomedical researchers interpreted conclusions more narrowly than laypeople, whereas both LLMs consistently judged them as more broadly applicable.

Turning to credibility ratings, consistent with H2, we found that, compared with laypeople, psychologists and biomedical researchers judged scientific conclusions (adjusted for linguistic frame) as less credible (b = −.22 and −.24, both p values < .001). Yet, against H2, the LLMs showed the opposite pattern, providing substantially higher credibility ratings than laypeople (ChatGPT-5: b = 1.05; DeepSeek-V3.1: b = .69, both p values < .001).

Finally, for perceived impact, psychologists showed lower impact ratings than laypeople (b = −.18, p = .02). However, biomedical researchers, other scientists, and other experts did not differ from laypeople. ChatGPT-5 (b = .55) and DeepSeek-V3.1 (b = .42) had higher impact ratings than laypeople (both p values < .001), contradicting H2. In short, only psychologists showed reduced impact ratings relative to laypeople, whereas both LLMs had substantially higher impact ratings.

Because frame was included as a fixed effect (generics as reference), the estimates reported here reflect group differences adjusted for overall framing effects. The interaction models reported in Table 2 use the same reference frame and therefore yield closely related baseline estimates, although they additionally allow framing effects to vary across groups (due to inclusion of interactions). These models also test whether groups respond differently to changes in linguistic framing, which leads us to our third research question.

Regression coefficients of comparisons between groups and frames, using laypeople and generics as reference categories.

The interactions (*) test for differences between differences (e.g. laypeople’s difference in responding to generic vs past tense claims compared with experts’ difference). The interaction comparison between ChatGPT-5 and DeepSeek-V3.1 on past versus generic frame overlap.

Group differences in the interpretation of generic conclusions

Our third and final main research question was: Do laypeople, scientific experts, and LLMs differ in how linguistic framing (generics, past tense, hedged) affects their ratings of generalizability, credibility, or impact of scientific conclusions? We will present the results separately by the three dimensions focusing primarily on generics.

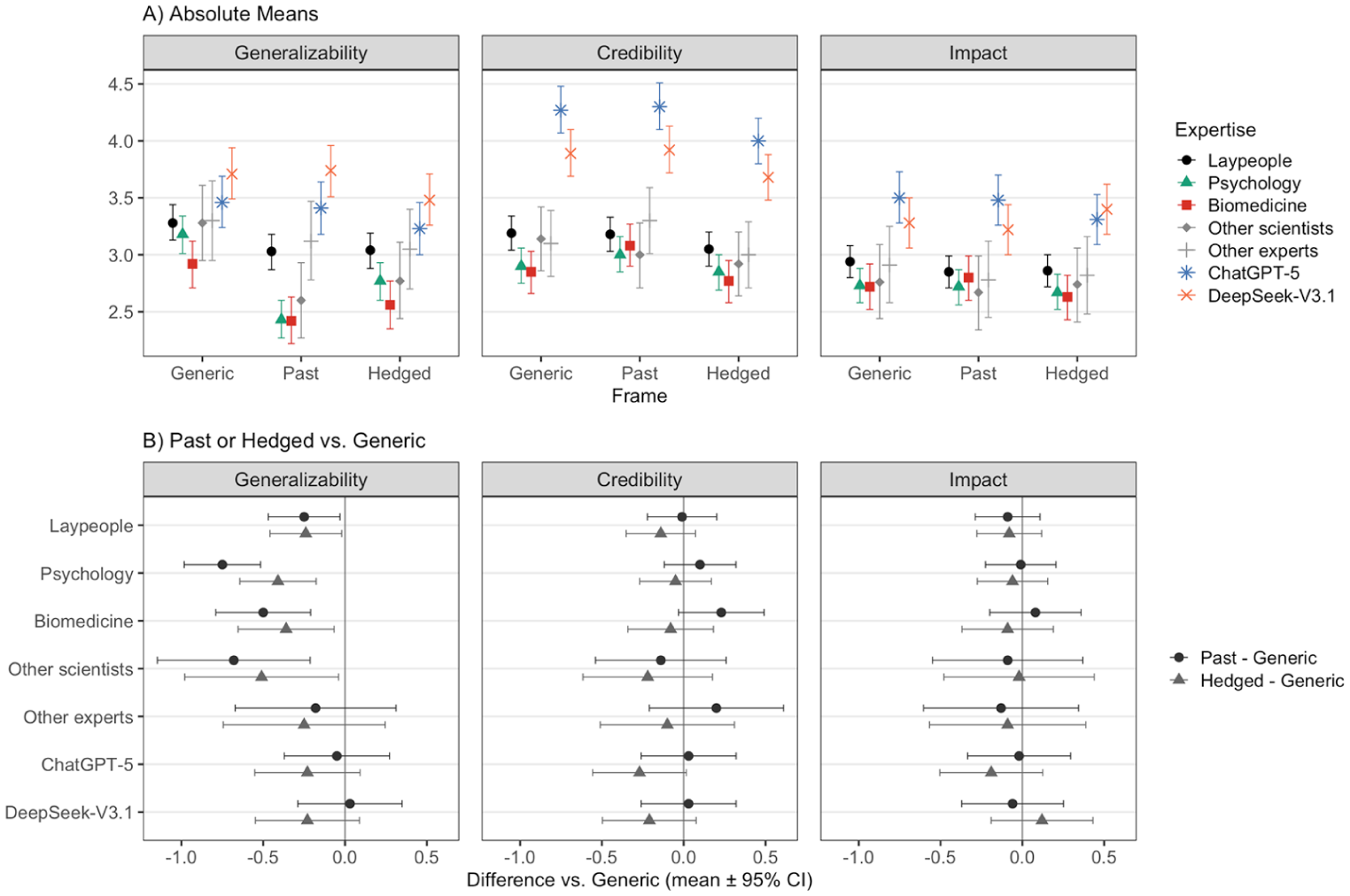

Perceived generalizability of generic conclusions

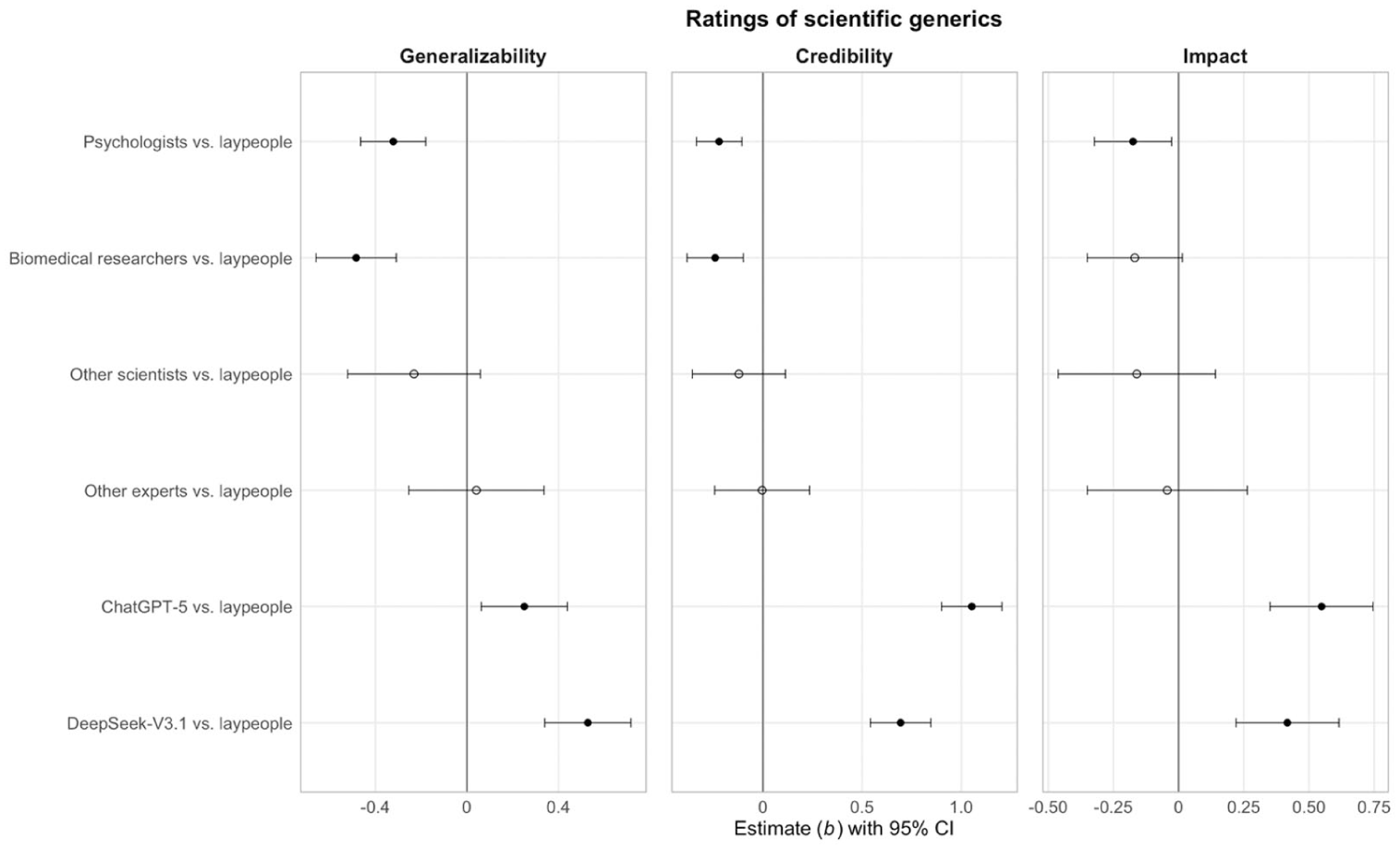

As shown in Figure 1 and Table 2, within the generic frame, psychologists and biomedical researchers rated statements as less generalizable than laypeople. This partly confirms H2. However, other experts did not differ, and both LLMs judged generics as significantly more generalizable, challenging H2. Figure 2 shows the estimate marginal means.

Forest plot showing regression coefficients (b) with 95% confidence intervals for ratings of generic scientific conclusions across groups, relative to laypeople, and by dimension (generalizability, credibility, impact). Estimates reflect baseline group differences at the generic frame (the reference category). The vertical line at b = 0 indicates no difference from laypeople; open circles denote non-significant effects. The model included linguistic frame, expertise, English speaker status, conclusion field, and the expertise * frame interaction as fixed effects, with random intercepts for participants and conclusions to account for repeated measures and variation in conclusions content.

Panel A shows estimated marginal means with 95% confidence intervals (CIs) for each group and frame, separately for each outcome. Panel B shows model-estimated contrasts of past and hedged frames relative to generics within each expertise group and outcome (positive values indicate higher ratings than generics). Zero line denotes no difference from generics. CIs are not intended for inference about between-group differences. Statistical significance was assessed using model-based pairwise comparisons from the linear mixed models (see Table 2).

Moreover, contrary to H3, which predicted that scientists would be less affected by framing differences than laypeople and LLMs, psychologists showed a larger drop in generalizability ratings than laypeople when moving from generic to past tense and from generic to hedged (Table 2) conclusions. Biomedical researchers also had decreased generalizability ratings from generics to past tense, but not from generics to hedged statements compared with laypeople. While other scientists also differed from laypeople in the same two ways as biomedical researchers, other experts did not differ from laypeople.

Notably, against H3, ChatGPT-5 and DeepSeek-V3.1 showed increased generalizability ratings when moving from generics to past tense, compared with laypeople, but not when moving from generic to hedged conclusions. In sum, domain experts adjusted generic interpretations downward more strongly than laypeople, while LLMs adjusted them upward.

Perceived credibility of generics

Within the generic frame, psychologists and biomedical researchers rated statements as less credible than laypeople (Table 2; Figures 1 and 2), thus partly confirming H2. However, other scientists and other experts did not differ from laypeople. ChatGPT-5 and DeepSeek-V3.1 rated the credibility of generics higher than laypeople, contradicting H2. In addition, contradicting H3, across frames, both psychologists and biomedical researchers rated past tense statements as more credible than generics compared with laypeople. No differences between other groups (including LLMs) and laypeople or transitions between frames were observed.

Perceived impact of generics

Regarding impact ratings, within the generic frame, psychologists rated generic statements as significantly less impactful than laypeople, partially supporting H2. No other human expert group differed significantly from laypeople. By contrast, both LLMs rated generics as substantially more impactful than laypeople, contradicting H2 (Table 2).

Turning to framing effects, and contrary to H3, biomedical researchers showed a stronger increase in perceived impact when statements shifted from generic to past tense frames compared with laypeople (b = .17, p = .022). No other human group differed from laypeople in their framing responses. Among LLMs, DeepSeek-V3.1, but not ChatGPT-5, showed a larger increase in perceived impact for hedged compared with generic statements relative to laypeople (Table 2). All remaining framing interactions were non-significant.

Qualitative preferences for reporting scientific findings

To better understand participants’ reasoning, we analyzed free responses explaining preferred reporting frames. When asked whether a statistically significant finding with medium effect should be reported with a (1) bare generic, (2) “study suggests,” (3) “might,” or (4) past tense frame, 445 participants, including LLM responses, provided a choice (Supplemental Material, Table S4).

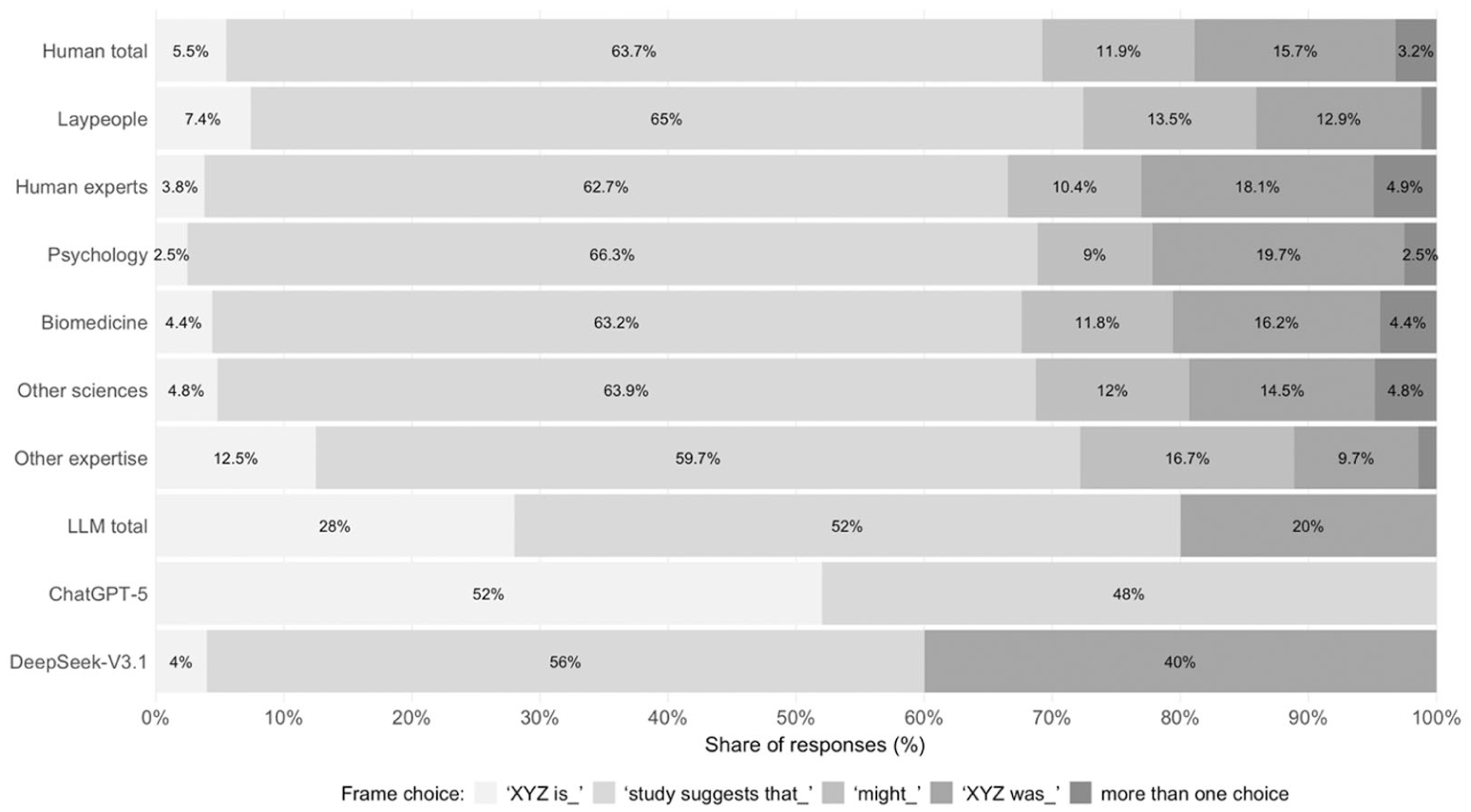

Among human participants, 64% favored the “study suggests” frame, with the preferences spread evenly across expertise levels. The second most preferred frame was past tense (16%), while bare generics were least preferred (6%). By contrast, ChatGPT-5 most often selected the bare generic frame (52%). DeepSeek showed a pattern closer to humans, preferring the “study suggests” frame (56%) (Figure 3).

Proportions of frame choices by groups. Proportions of patches without label are below 2%.

When iteratively reviewing the subset of 328 free responses that contained reasons for frame choices, three recurring themes emerged (Supplemental Material, Table S5). Multiple themes were often mentioned within a single comment:

Avoiding extremes. Results should be reported in a way that avoids overgeneralization beyond the studied population, but also without restricting applicability only to the tested sample.

Relativization to the study. Conclusions should signal that findings stem from a specific study, since broader generalizations require replication across studies.

Informativity concerns. Some frames (e.g. “might,” past tense) were viewed as insufficiently informative, overly vague, or less clear than alternatives such as generics.

Human participants frequently criticized the bare generic frame as “overconfident” (64), 5 suggesting it conveyed unwarranted universality. By contrast, ChatGPT-5 often favored this frame because it was “confident,” direct, and “easiest for non-expert audiences to interpret and use” (474). For instance, ChatGPT-5 explained that “hedging with “might” or overly attributing the claim to the study itself can dilute the message and make it harder for non-expert audiences to interpret the practical meaning” (445).

Human participants more frequently preferred the “study suggest” framing, which many described as striking “the right balance between confidence and caution” (415), as “it is 1 research finding of 1 study done in 1 specific way on 1 population, compared to a conclusion of an evidence synthesis” (67).

While the preference for the “study suggests” frame across free responses may seem inconsistent with our quantitative findings of lower credibility and impact ratings for hedged conclusions, hedged conclusions in the survey also included “might” claims, which were strongly disfavored across most free responses (Figure 3). This apparent discrepancy likely reflects differences between types of hedging: epistemic hedges such as “might” were often perceived as overly vague or uninformative, whereas attributional framing (“the study suggests”) was frequently viewed as an appropriate balance between confidence and caution. For instance, respondents wrote that a “might” conclusion “doesn’t need any study at all to say that” (44), and “is guarded to a point where it avoids stating anything at all” (64).

However, some participants also expressed reservations about the “study suggests” framing, noting that it “relies on a subjective interpretation of ‘suggests’” (44) and may communicate “smaller effect sizes or some statistical ambiguity” when this is unwarranted (81). Past tense was sometimes preferred, because it “limits discussion of the findings to those in the study” while (unlike “suggests that”) also “taking this stance with more authority” (4), inviting the “reader” to “judge for themselves how applicable the findings are to the general population based on other methodological details in the article” (164). But others cautioned that “using ‘was’” gives the impression the “findings only apply to the participants of the study” (81), or that (e.g.) a treatment “used to be effective but now it is not” (345).

Together, the free responses indicate that humans valued caution and study-specific framing, while rejecting formulations that seemed either too vague (“might”) or too broad. DeepSeek responses aligned with human participants. However, ChatGPT-5 often prioritized clarity, interpretability, and broad applicability, which frequently led it to select frames that human respondents perceived as overconfident (Figure 3).

4. Discussion

Our analysis of how laypeople, experts, and LLMs interpret scientific conclusions produced four key findings that offer new contributions to the theorizing about science communication and the public understanding of science. We briefly revisit each of these findings separately and discuss implications.

Scientific generics as more generalizable but less credible than past tense

We found that, overall, participants viewed generics as more generalizable than past tense or hedged (e.g. “might”) claims. This parallels prior research in which readers judged generics to be more generalizable than less sweeping alternatives (Clelland and Haigh, 2025; DeJesus et al., 2019). At the same time, our result contrasts with previous, laypeople-focused work finding no generalizability differences between past tense claims and generics (Clelland and Haigh, 2025), potentially because we tested not only laypeople but also experts.

However, overall, participants also rated past tense conclusions higher on credibility than generics. This can be instructive for science communicators. Scientists and other science communicators might fear that more qualified, past tense statements are less persuasive than broader, potentially exaggerated claims. Our results instead suggest that temporal qualification (past tense) enhanced credibility. This adds to previous work that found that qualifying claims in science communication (e.g. through uncertainty indicators) positively affected trust ratings (Steijaert et al., 2020).

Yet hedged conclusions including “might” or “suggests that” received lower credibility and impact ratings. This may be due to the limited informativeness of “might” conclusions, underscoring that qualification effects depend on qualification form (Roland, 2007).

Relatedly, DeJesus et al. (2019) distinguished between “hedged generics” (e.g. “might,” “suggests that”) and “framed generics” that signal evidential strength (e.g. “demonstrates,” “shows”). In their studies, framed generics were often judged by undergraduates as at least as conclusive, generalizable, and important as bare generics, whereas hedged generics tended to attenuate perceived strength.

Our findings are consistent with this pattern. The hedged conclusions in our study, all of which retained a generic predicate but were qualified by “might” or “the study suggests that,” received lower credibility and impact ratings than bare generics. Our results align with DeJesus et al.’s in that when generic claims were qualified by epistemic uncertainty (e.g. “suggests that”), their perceived force was reduced. Hence, not all framing of generics operates in the same direction: attributional frames that convey evidential support (e.g. “demonstrates that”) can preserve or amplify perceived strength, whereas hedging frames that convey uncertainty diminish it.

It might be suggested that the higher generalizability ratings for generics reflect participants’ recognition of the scope asserted by the linguistic form itself rather than endorsement of the generalization.

This account would predict that broader formulations should not be selectively penalized on credibility or impact, because people would just be responding to how broad the claim sounds across dimensions. However, that is not what we found. Instead, as noted, framing had opposing effects on generalizability versus credibility and impact, indicating that respondents seemed to distinguish between different epistemic properties of scientific claims. Nevertheless, because our measures did not explicitly separate perceived scope from belief about empirical generalization, future work more directly disentangling these components is desirable.

Misaligned interpretations of scientific conclusions

Consistent with research suggesting that the public generally trusts scientists (Cologna et al., 2025) while having a weaker understanding of research nuances and limitations (Keil, 2008), laypeople regarded scientific conclusions, adjusted for linguistic frame, as more generalizable, credible, and impactful than experts. Experts seemed to adopt a more epistemically vigilant stance (Sperber et al., 2010), potentially reflecting higher sensitivity to the conditional, contextual, and provisional nature of scientific results (Gannon, 2004).

Our finding of a misalignment in interpretation of scientific conclusions extends and corroborates earlier studies reporting that domain experts (vs laypeople) used more sophisticated approaches to judge the reliability of information sources (Brand-Gruwel et al., 2017; von der Mühlen et al., 2016). This finding can inform educational efforts, as most laypeople in our sample—though lacking advanced scientific training—had completed undergraduate degrees, often in scientific fields (Supplemental Material, Table S1). Since their generalizability, credibility, and impact ratings of scientific conclusions were higher than experts’, undergraduate students may need to be encouraged by educators to question assumptions, examine methodological boundaries, and appreciate uncertainty to help them bridging the gap between uncritical acceptance of scientific conclusions and expert-level epistemic vigilance (Bielik and Krell, 2025).

Misaligned interpretations of scientific generics and other frames

Moving from examining whether groups differ in baseline interpretations of scientific conclusions to examine whether and how these differences depend on linguistic framing, we found that psychologists and biomedical researchers viewed generics as less generalizable and less credible compared with laypeople, while also showing stronger between-frame effects. These results extend previous work on generics-related differences between non-scientific experts and novices (Coon et al., 2021) to the domain of science communication, while advancing research on the interpretation of scientific generics (Clelland and Haigh, 2025; DeJesus et al., 2019; Peters, 2023; Lemeire, 2024).

One possible explanation of the interpretive misalignment between laypeople and the two field-relevant scientific groups might be advanced education: Individuals with postgraduate training may read generics more cautiously and therefore adjust their ratings downward. However, the two other expert groups, other scientists (e.g. natural scientists, engineers), and other experts (e.g. humanities scholars), also held advanced degrees yet did not differ significantly from laypeople in their generalizability ratings of generics. Because the conclusions concerned psychological and biomedical findings, this selective pattern suggests that field-specific expertise, rather than advanced education or general scientific training alone, drove these differences.

To the extent that preexisting, field-specific beliefs about the plausibility or generalizability of particular findings influenced responses, our results are best interpreted as showing how linguistic framing is integrated with background knowledge, rather than isolating a purely language-only effect. But we did not measure item-level priors or familiarity, which limits our ability to adjudicate background knowledge effects. Future research could address this by measuring familiarity explicitly (e.g. conducting sensitivity analyses restricted to items participants report as unfamiliar).

Relatedly, psychologists and biomedical researchers may have been skeptical either of generics as a linguistic form, or of the specific findings conveyed in generic form (e.g. experts simply didn’t believe the result, regardless of how it was phrased). However, if experts’ skepticism targeted the finding itself (i.e. the content, not form) then ratings should have been similarly low across frames. Yet, psychologists and biomedical researchers showed strong differentiation between generic and qualified formulations (stronger than laypeople), suggesting heightened sensitivity to how linguistic framing interacts with evidential support, rather than global skepticism toward the claims themselves.

Combined, our findings indicate that psychologists and biomedical researchers were especially cautious about overgeneralization risks in their own disciplines, potentially due to field-specific methodological debates (Yarkoni, 2020) or heightened awareness of replication failures (Baker, 2016). Experts in other disciplines may lack these domain-specific sensitivities, which could explain why their ratings did not differ from those of laypeople. We refer to this proposal, that is, that field-relevant expertise increases sensitivity to discipline-specific generalization errors, leading to more conservative interpretations of generic claims, as the epistemic vigilance account of the observed differences.

For contrast, one might propose a common ground account, in which disciplinary expertise produces shared assumptions among insiders about a field’s methodological complexities, practices, and limitations (Clark, 1996; Dethier, 2022). On one possible version of this account, scientists interpret generics as already implicitly hedged and therefore acceptable because they assume shared awareness of constraints. If so, explicit qualifications would add little, and generics and qualified claims should be judged similarly.

Both accounts predict that experts reduce their ratings of generics compared with laypeople. However, on the common ground account, generics are reinterpreted as implicitly hedged, so their ratings converge with qualified claims, yielding weaker frame effects. By contrast, on the epistemic vigilance account, experts maintain the semantic distinction and evaluate it more sharply: they see generics as broader in scope than qualified claims but downgrade them relative to laypeople for overreach, while also resisting the upward inflation of qualified claims that laypeople showed (Figure 2). This double adjustment widens the gap between frames, producing stronger frame effects.

Our results align with the latter pattern. For generalizability, psychologists and biomedical researchers showed a larger drop when moving from generics to hedged or past tense claims compared with laypeople, indicating stronger frame sensitivity. These experts also rated qualified, especially past tense, claims lower than laypeople, who treated them as still broadly generalizable, only somewhat below generics (Figure 2). Thus, rather than assuming that generics are implicitly hedged and convergent with qualified claims in scope (as the common ground account predicts), experts seemed to downgrade generics more strongly than laypeople and resisted the upward adjustment that laypeople gave to qualified claims in ways that widened the relative rating gap between frames. This supports the epistemic vigilance account.

Notably, our finding that laypeople judged past tense conclusions as substantially more generalizable than did psychologists and biomedical researchers (Figure 2) suggests that advising authors to use past tense formulations may not reliably prevent lay audiences’ overgeneralization: laypeople’s interpretations of past tense claims were similarly misaligned with expert interpretations as their interpretations of generics. Past tense framing may therefore be less effective at mitigating overgeneralization than is often assumed (see also Clelland and Haigh, 2025), highlighting an important direction for future research.

Setting aside differences in past tense interpretations, if, as the epistemic vigilance account suggests, psychologists and biomedical researchers are more skeptical of generics, why do corpus analyses nonetheless show frequent use in these fields (DeJesus et al., 2019; Peters et al., 2024)? One explanation is that, independent of perceived generalizability, generics are rhetorically efficient, whereas extensive hedging may be discouraged by journal space limits or judged stylistically excessive (Roland, 2007). Their prevalence may also reflect a “generalization bias” among scientists, an unconscious tendency to extrapolate findings broadly even when unwarranted (Peters et al., 2022). Such a bias may shape the production of generics even as experts remain cautious in evaluation.

Finally, while using generics can have benefits for scientists (e.g. facilitating scientists’ coordination about the categories that are kinds for their field; Lemeire, 2024), immersed in disciplinary thinking, scientists may misjudge how non-experts interpret their statements and use generics not because they endorse sweeping claims, but because they inadvertently assume audiences will contextualize them as they would, leading to adjustment failures (Coon et al., 2022). Given this, the observed interpretive misalignments raise significant risks when scientists communicate research results to laypeople. Scientists may invite overgeneralizations in public-facing science communication by using generics (or even past tense claims) as they interpret them more narrowly.

LLMs’ ratings consistently exceeded laypeople’s ratings

We found that LLMs rated scientific conclusions and generics higher than laypeople and experts across domains. This result aligns with previous studies finding that ChatGPT consistently depicted science as reliable and trustworthy (Volk et al., 2025) while struggling to critically evaluate research (Thelwall, 2024). Our data advance research on how LLMs process generics by adding comparative survey-based insights (Allaway et al., 2024; Smith and Tolbert, 2025), raising the possibility that previously documented overuse of generics in LLM science summaries (Peters and Chin-Yee, 2025) may be due to LLMs’ overappraisal of generics’ credibility.

Developers may also fine-tune LLMs to trust scientific sources to reduce spread of misinformation (Ouyang et al., 2022), which may unintentionally encourage models’ overappraisal of scientific claims. In addition, while laypeople may often distrust scientists due to cultural (e.g. political) attitudes or motivations (Gerken, 2020), LLMs (still) lack psychological states, including motivations (Shanahan, 2024), that could induce doubt about science, potentially making them less skeptical of scientific claims including generics.

Conversely, LLMs’ interpretations of scientific generics may sometimes be appropriate. For instance, DeepSeek-V3.1 often justified ratings by citing established knowledge, related findings, or consensus (see the OSF LLM response dataset), situating conclusions within the literature. With access to a broader information base than human respondents, LLMs’ higher ratings can at times be warranted.

However, even if a body of research indicates that a result is robust and replicable, a generic could still mislead by glossing over variability and ignoring variation due to individual differences or context. Moreover, since LLMs rely on published literature, their outputs may be distorted by “publication bias,” that is, the underreporting of null findings. While scientific experts summarizing findings of multiple studies are trained to control for such bias (Duval and Tweedie, 2000), LLMs may not detect or adjust for it when summarizing studies.

More generally, people can be educated to balance trust with epistemic vigilance, but it is unclear how to instill such vigilance in LLMs (Scholten et al., 2024). The consistently higher generalizability, credibility, and impact ratings by two leading and architecturally distinct LLMs (Rahman et al., 2025) are therefore concerning. They may result in the chatbots summarizing scientific findings in ways that the experts producing these results would themselves find inappropriately broad and misleading.

Relatedly, in the free responses, ChatGPT-5 preferred generics for scientific reporting whereas human respondents clearly disfavored them. Caution is therefore warranted when using ChatGPT-5 for science summarization. By privileging generics where humans show caution, the model may normalize their use in science summaries and thus diverge from human communication preferences.

5. Limitations

Our study is limited in at least seven respects. First, we presented scientific conclusions without additional contextual information. As a result, our findings may not generalize to settings in which readers encounter conclusions embedded in full articles or broader explanatory contexts. However, given the increasingly overwhelming volume of published research (Hanson et al., 2024), scientists and laypeople may increasingly encounter scientific claims in decontextualized formats such as article titles, abstracts, or summaries, making this presentation ecologically relevant for many real-world settings.

Second, our stimuli and expert samples were restricted to two fields: psychology and biomedicine. Due to data sparsity, we were unable to analyze other expert subgroups in detail, and the conclusions used as stimuli did not cover the full diversity of research questions, methods, or evidential standards within these fields.

Third, participants’ prior familiarity with specific topics or claims was not measured and may have influenced their ratings. Our measures also did not explicitly disentangle participants’ recognition of the scope asserted by a linguistic formulation from their belief that a finding would in fact generalize. Consequently, some generalizability ratings (particularly for generics) may partly reflect sensitivity to linguistic breadth versus endorsement of epistemic warrant.

Fourth, our analyses relied on linear mixed models treating Likert-type-scale responses as continuous. Although this approach is common in large-sample repeated-measures designs (Norman, 2010), and model diagnostics did not indicate substantial violations of normality or homoscedasticity, alternative modeling (e.g. ordinal mixed-effects models) could be explored in future work. In addition, although our hypotheses were theory-driven, some contrasts (e.g. laypeople vs “other experts”) were exploratory (due to low sample size) and should be interpreted cautiously pending replication.

Fifth, our human sample consisted exclusively of participants from Western countries. This focus reflects both practical recruitment constraints and the Western-centric nature of the scientific literature from which our stimuli were drawn (Rad et al., 2018). While studying Western participants allowed us to examine interpretive misalignments between laypeople, experts, and LLMs within a shared communicative and epistemic context, interpretations of scientific claims and generics may differ across cultural contexts, particularly in societies with different norms of scientific authority, uncertainty communication (e.g. hedging) (Yang, 2013), or public trust in science (Antes et al., 2018).

Sixth, we examined only two leading LLMs and, to approximate typical public interactions, accessed them via website UIs rather than APIs (Park et al., 2025). UIs incorporate system-level parameters that providers (OpenAI, etc.) do not disclose (e.g. response randomness or moderation features) and that may affect output stability (Ouyang et al., 2025). Future research should test a broader range of models and compare UI-based and API-based access to assess robustness across interaction settings.

Finally, our results are correlational and do not permit causal inferences about the sources of the observed interpretive misalignments.

6. Conclusion

The interpretive misalignments between laypeople, scientific experts, and two leading LLMs that we uncovered carry significant communicative risks. By overlooking how laypeople’s interpretations diverge from their own, scientific experts may inadvertently encourage overgeneralization in their audiences when using generics in science communication. LLMs, in turn, may systematically produce scientific summaries with overly broad research conclusions because they interpret generics from original texts more widely than the authors may intend. Our results highlight the need to align expert and public perceptions of science and to monitor how generics and other conclusion frames are handled in LLM-mediated science communication. Future work should explore strategies, both in human communication and LLM design, for calibrating the interpretation and use of generics so that scientific conclusions are conveyed accurately without encouraging unwarranted generalizations.

Supplemental Material

sj-docx-1-pus-10.1177_09636625261425891 – Supplemental material for Generics in science communication: Misaligned interpretations across laypeople, scientists, and large language models

Supplemental material, sj-docx-1-pus-10.1177_09636625261425891 for Generics in science communication: Misaligned interpretations across laypeople, scientists, and large language models by Uwe Peters, Andrea Bertazzoli, Jasmine M. DeJesus, Gisela J. van der Velden and Benjamin Chin-Yee in Public Understanding of Science

Footnotes

Acknowledgements

U.P. would like to thank Oliver Lemeire for discussions during earlier phases of the project, and Oliver Braganza and Johannes Schultz for helpful feedback on the study design and assistance in the recruitment.

Ethical considerations

Ethics approval was obtained on 18 March 2025.

Name and institution of the review committee: Faculty Ethics Assessment Committee General Chamber at Utrecht University, Netherlands.

Contact:

Approval number: FETC-H reference 24-157-02

Consent to participate

Requirement for informed consent to participate was waived by the Ethics Committee.

Author contributions

U.P. conceived and managed the study, conducted data processing (analysis, visualization, interpretation), drafted the manuscript, and revised subsequent versions. A.B. assisted in study design, recruitment, data collection and interpretation, and provided feedback on the manuscript. J.M.D. advised on study design and recruitment and provided feedback on the manuscript. G.J.V. consulted on study design and recruitment and provided feedback on the manuscript. B.C.Y. contributed to study design, recruitment, data collection and interpretation, and manuscript revision.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The project is supported by a Volkswagen research grant on meta-science (“The Cultural Evolution of Scientific Practice”; WBS GW.001123.2.4).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability statement

All material and data are available on an OSF platform here: https://osf.io/h23c8/overview (Preregistration: ![]() )

)

Supplemental Material

Supplemental material for this article is available online.

Notes

Author biographies

References

Supplementary Material

Please find the following supplemental material available below.

For Open Access articles published under a Creative Commons License, all supplemental material carries the same license as the article it is associated with.

For non-Open Access articles published, all supplemental material carries a non-exclusive license, and permission requests for re-use of supplemental material or any part of supplemental material shall be sent directly to the copyright owner as specified in the copyright notice associated with the article.