Abstract

It is well established both that women are underrepresented in the field of artificial intelligence (AI) and that media representations of professions have an impact on career choices and prospects. We therefore hypothesised that women are underrepresented in portrayals of AI researchers in influential films. We tested this by analysing a corpus of the 142 most influential films featuring AI from 1920 to 2020, of which 86 showed one or more AI researchers, totalling 116 individuals. We found that only nine of these 116 AI professionals (8%) were women. We further found that none of the 142 AI films was solely directed by a woman. We discuss a number of explanations for the paucity of women AI scientists in the media, including parallels between film and real-life gender inequality, the construction of the AI scientist as male through gendered narrative tropes, and the lack of female directors.

1. Introduction

It is well established that women are underrepresented in artificial intelligence (AI), a sector that is not only growing, but widely considered to be central to the future shape of society. This is both intrinsically unjust and contributes to further injustices as AI systems reflect the gender biases of their makers (Young et al., 2021: 9–19). Tackling this underrepresentation is a huge and complex problem. One crucial aspect relates to cultural stereotypes of who is suited to a career in AI. Such stereotypes can influence not only young people in their career choices, but also managers hiring new AI staff, and co-workers who might be more supportive or hostile towards some kinds of colleagues than others. These cultural preconceptions therefore impact on every stage of the ‘pipeline’ of women into the field, and their experience within it.

Mainstream films are an enormously influential source and amplifier of these cultural stereotypes. We therefore hypothesised that the underrepresentation of women in the AI workforce would be paralleled in portrayals of AI scientists and engineers on screen. While previous research has examined the gendering of portrayals of scientists and engineers in general in mainstream media (e.g. 21st Century Fox et al., 2018; Geena Davis Institute on Gender in Media, 2018; Steinke, 2005, 2017), no work has looked at portrayals of AI researchers specifically. Given the growing social and economic importance of AI, we believe there is a pressing need to do so. This article aims to fill that gap, and so contribute both to the study of science communication and to the growing literature on the ethics and impact of AI from a gender studies perspective.

The article begins with a brief overview of the status of women in AI research and the causes of underrepresentation. We then review the literature on the role of popular media in gendering cultural perceptions of scientists and engineers, and describe our methodology. In the results section, we give figures for the genders of the AI researcher characters and the genders of the directors of the relevant works. In the discussion section, we offer three explanations for the underrepresentation of women in portrayals of AI researchers: that these works aim to reflect reality, and thus reproduce current gender imbalances in the AI industry; that the ideal AI scientist is constructed as male through certain key tropes; and the lack of women directors. Finally, we discuss limitations of this study and directions for future research.

2. Women in AI

AI is one of the most impactful and lucrative technologies of our age. As its global market size – valued at US$93.5 billion in 2021 – has a projected compound annual growth rate of 38% between 2022 and 2030 (Grand View Research, 2021), AI scientists will increasingly be among the most valued scientists in the global economy. While women are underrepresented across most scientific fields (Huang et al., 2020; Schwab et al., 2019), the AI sector is suffering from an even greater ‘diversity crisis’ (SM West et al., 2019: 3) than other science, technology, engineering, and mathematics (STEM) industries. While in 1955 only 12% of scientific authors were women, by 2005 this number had reached 35%; however, this increase does not account for disciplinary differences, as the percentage of female academic authors in maths, physics, and computer science still sits at around 15% (Huang et al., 2020). This has led Young et al. (2021) to suggest that the field of AI and machine learning is characterised by deep and persistent structural inequality along gendered lines.

Globally, only 22% of AI professionals are female (Howard and Isbell, 2020), as opposed to 39% across all STEM fields (Hammond et al., 2020). A study by WIRED and Element AI in 2018 found that women comprise only 12% of authors at leading AI conferences, while the AI Index 2018 reported that men comprise more than 80% of AI professors (Shoham et al., 2018; Simonite, 2018). Women are also underrepresented in leading AI firms and organisations, constituting only 10% of AI research staff at Google and 15% at Facebook (Simonite, 2018). Furthermore, a recent report showed that women in the field tend to be confined to lower-paid, lower-status roles such as software quality assurance, rather than prestigious sub-fields such as machine learning (Young et al., 2021: 23–25). Most worryingly, there are indications that women’s participation in the AI workforce in the United Kingdom is actually decreasing (M West et al., 2019).

The underrepresentation of women and other disadvantaged groups in the field of AI is an injustice that requires urgent attention. On the one hand, it represents a devaluing of these groups’ labour and expertise, and constitutes exclusion from an influential and well-remunerated field. A study by the World Economic Forum shows that the underrepresentation of women in ‘frontier roles’ such as cloud computing, data and AI, and engineering is compounding the gender pay gap (Schwab et al., 2019: 37). On the other hand, this relative exclusion of women from the AI development pipeline renders them more vulnerable to being adversely impacted by the technology (Williams, 2014). Given that male engineers have repeatedly been shown to engineer products that are most suitable for and adapted to male users, employing more women is essential for addressing the encoding of bias and pejorative stereotypes into AI technologies (Criado Perez, 2019; Williams, 2014).

Why are women so marginalised in the field of AI? Some studies have highlighted the ‘pipeline problem’, or the low uptake of STEM subjects by female students, and their unwillingness to apply for jobs within the AI and computer science industry, which leads to a disproportionately small number of female candidates for these positions (PwC UK, 2017). However, a focus on the ‘pipeline problem’ can shift blame onto women’s perceived shortcomings, such as a lack of interest, confidence or relevant skills (SM West et al., 2019: 23). Other studies focus more on the hostile workplaces and discriminatory practices that drive women away from the sector, such as the persistence of ‘brogrammer culture’ and cultures of hypermasculinity in the fields of AI and computer science (Jacobs, 2018); high levels of gender-based and sexual harassment in the technology industry (Women Who Tech, 2020); discriminatory hiring, promotion and pay practices (Williams, 2014; Women in Tech, 2019; Women Who Tech, 2020); and how aspects of the AI industry, such as working long hours, may differentially impact women, especially those who are performing the majority of reproductive and household labour (Williams, 2014; Women in Tech, 2019). The prejudices that underlie these inequalities were evidenced by former Google employee James Damore’s infamous 2017 anti-diversity manifesto, which argued that women, on average, have a ‘stronger interest in people rather than things’, are more agreeable and less assertive, and have higher levels of neuroticism (Conger, 2017).

The factors driving women’s relative exclusion from the AI workforce are systemic and multifaceted. In this article, we focus on a piece of the puzzle that can exacerbate many of them: the role of mainstream films in the cultural construction of the AI researcher.

3. Theoretical framework

Previous research has established that (a) cultural stereotypes and representations of scientists and engineers influence the ability of women to access and flourish in STEM fields, (b) such representations in popular media are overwhelmingly male, and (c) films directed by men are less likely to feature female protagonists. But no study has yet examined representations in popular media of AI scientists and engineers specifically, nor the gender of the directors responsible. In this article, we do not examine point (a) directly with regard to the field of AI, but focus on (b) and (c): how AI scientists are gendered, and the gender of the directors of major films featuring AI.

The role of film in gendering perceptions of AI

Research from a wide range of fields, from critical race studies to science communication, has elucidated the ways in which media representation functions as one of the preeminent sites in the production of inequalities, including inequalities related to gender and ethnicity. Patricia Hill Collins (2000) argues that media representations – in particular the role they play in reinforcing stereotypes – are crucial to a hegemonic culture that perpetuates racist and sexist ideologies (p. 5). There are numerous ways in which such ideologies can hinder the success of women in a field such as AI. These include dissuading women from attempting to enter, if they see a field as masculine; hindering their entry into the field, as they do not conform to gatekeepers’ perception of their ideal candidate; and harming their prospects once in post, through a culture that is sceptical of their abilities and tolerant of bullying and harassment.

The consequences of gendered role stereotypes have been explored to varying degrees in the context of computer science, the field of which AI is (largely) a part. For example, a 2017 study of computer science students found that university students believed gender norms, ‘including stereotypes and socialized beliefs about who can succeed in computing’, were the ‘main culprit’ for women’s lack of success in computing (Rodriguez and Lehman, 2017: 232). Gender norms shaped students’ ‘computing identity’, or the extent to which people are able to see themselves as computer scientists and as belonging to the computer science workforce and community (Rodriguez and Lehman, 2017).

A range of research on fictional representations of scientists more generally has evidenced their importance in perpetuating or disrupting these gender stereotypes and corresponding patterns of exclusion. For example, the 2018 study ‘The Scully Effect’ investigated the influence of the protagonist of the popular 1990s TV series The X-Files, Dr Dana Scully (Gillian Anderson), a doctor turned paranormal investigator. The study revealed that ‘Nearly two-thirds (63%) of women that work in STEM say Dana Scully served as their role model’ (21st Century Fox et al., 2018: 5).

As noted above, gender stereotypes also create structural barriers to success for women within the field by reinforcing the established male elite’s reluctance to change a culture in which they see others like themselves as the best fit. In the AI sector, several reports note the importance of representations in the broadest sense for shaping women’s views of themselves as potential future AI scientists. For example, a PwC report suggests that the lack of female role models might affect female students’ uptake of STEM subjects (PwC UK, 2017). Similarly, in 2019, Women in Tech UK surveyed over 1000 women in the technology sector and found that 18% of those surveyed cited ‘perceptions’ as the most important reason why women are put off working in the technology sector (Women in Tech, 2019: 4). Consequently, Steinke’s (2017: 29) observation that ‘a better understanding of cultural representations of women, specifically a better understanding of the portrayals of female scientists and engineers in the media, may enhance the efficacy of efforts to promote the greater representation of girls in science, engineering, and technology’ applies to the field of AI. However, no study to date has systematically examined such perceptions and portrayals in mainstream media.

Previous studies of gender in representations of scientists and engineers

Our study follows a range of work investigating on-screen portrayals of scientists and engineers in the broadest sense (Flicker, 2003; Haynes, 2017; Weingart et al., 2003). For example, Jocelyn Steinke’s 2005 paper ‘Cultural Representations of Gender and Science’ analyses 97 films released between 1991 and 2001 that portray scientists and engineers, 23 of which (23.7%) feature female scientist and engineer primary characters (Steinke, 2005: 53). She builds on this work for her 2017 review article ‘Adolescent Girls’ STEM Identity Formation and Media Images of STEM Professionals’, in which she cites a broad spectrum of such work, concluding that ‘Images of STEM professionals in popular media have for many years both created and perpetuated a cultural stereotype that depicts women as less likely than men to be present in STEM fields as well as less likely to be talented, successful, and valued in STEM fields’ (Steinke, 2017: 2).

Starting in 2008, the Geena Davis Institute has produced systematic quantitative studies on the portrayal of women in STEM in film and TV. Several of their studies have provided figures for specific STEM fields as well as overall figures. Their 2012 report ‘Gender Roles & Occupations’, which focuses on children’s media from 2006 to 2012, states that a mere 21.6% of characters with STEM careers in children’s films and primetime TV programmes are female; for computer science, this percentage drops to 14.3%, and for engineering a mere 5.7%, with no female engineers at all in primetime children’s TV (Smith et al., 2012: 5). Their 2018 report ‘Portray Her’, which covers film, TV, and streaming media for all ages between 2007 and 2017, showed an improved overall figure of 37% women in STEM roles, but only 8.6% in computer science and an astonishing 2.4% in engineering (Geena Davis Institute on Gender in Media, 2018).

The gender gap in on-screen representation is even more striking when focusing specifically on the gendered portrayal of computer scientists. The 2015 ‘Images of Computer Science’ study involved a survey of several thousand students, parents, teachers, and school administrators in the United States. The report argues that ‘enduring stereotypes might hinder the inclusion of underrepresented groups’. It then notes that ‘only 15% of students and 8% of parents say they see women performing computer science tasks most of the time on TV or in the movies, and about 35% in each group do not see women doing this in the media very often or ever’ (Google and Gallup, 2015: 12).

The role of film directors

When considering representations of who makes AI, it is also crucial to consider whose vision is realised in these cultural representations. Previous studies suggest there is a significant correlation between women on screen and women in behind-the-scenes roles. A study by Follows and Kreager showed that when women were directing, the number of women increased in the majority of key roles, from 7.4% on male-directed films to 65.4% on female-directed projects; such films are in turn more likely to write more substantial roles for women into the script (Follows and Kreager, 2016: 32). A US study similarly shows that on films with at least one female director, women comprised 53% of writers and 39% of editors, compared with only 8% and 18%, respectively, on films directed exclusively by men (Lauzen, 2021: 6). A 2010 report also found that ‘a higher percentage of girls/women are shown on screen when one or more females are involved in directing or writing films’ (Smith and Choueiti, 2010: 3). Patricia Hill Collins’ (2000) study of depictions of Black women in the media similarly concludes that Black female directors are more likely to create stories that focus on the experiences of Black womanhood (p. 96).

However, women are grossly underrepresented as directors of top-grossing films: a 2020 study by the Annenberg Inclusion Initiative on the gender and ethnicity of film directors across 1300 top-grossing films from 2007 to 2019 showed that only 5.4% of these directors were women (Smith et al., 2020). We therefore hypothesised that a low number of female AI scientists in film would correlate with a low number of women directing major films featuring AI.

Previous studies therefore lead us to expect women to be underrepresented in portrayals of AI scientists and engineers, and as directors of films featuring AI. Indeed, given the especially low representation of women in the actual field of AI, and the especially low representation of women in portrayals of computer scientists on screen, we hypothesised that representations of AI scientists and engineers were likely to be among the lowest for any subfield.

4. Methodology

Methodology overview

The aim of this study is to examine the gendering of portrayals of AI researchers in influential fiction film over the past century, 1920–2020. In this section, we explain our choice of media and period; our criteria for ‘AI researcher’; how we have coded gender; our criteria for ‘influential’ in film; and the corresponding sources of our corpus.

We have selected films for this study because of cinema’s significant impact on wider culture. While much research has been done on AI in literature (e.g. Cave et al., 2020), there are three main reasons why we have here prioritised films. First, the circulation of films featuring AI is wider than even the most popular AI novels. Second, the consumption of film extends beyond the cinema: for example, while Interstellar (2014) sold 20 million box office tickets in the United States, it was also illegally downloaded 46 million times, meaning that in 2014 more people pirated the film than attended higher education in the United States (Child, 2015; National Center for Education Statistics, 2013). Third, the advertising and merchandise accompanying prominent films further amplify their impact on cultural perceptions. For example, The Force Awakens (2015) earned the Star Wars franchise $2 billion at the box office, while Star Wars merchandise was valued in 2012 at over $4 billion (Agar, 2016; HEROfarm Marketing & Public Relations, 2015).

We have focused on films with a significant impact on UK and US audiences, for a number of reasons. First, it is the cultural sphere in which we have the most expertise, and in which we are situated. Second, it is a cultural sphere with vast influence: the vast majority of highest-grossing films worldwide are made in the United States, in English (Box Office Mojo, 2021a). Third, this sphere is also the most influential in AI research, with the United States the global leader by most measures, and the United Kingdom the leader in Europe (Tortoise, 2020).

We have examined films over the course of a century, from 1920 to 2020. The total number of films featuring AI is sufficiently small that this large temporal range results in a corpus that is manageable but meaningful. 1920 is an appropriate start date both because of the rapid development of the cinema in the United States and Europe after the First World War, and because this decade saw the earliest high-impact portrayals of intelligent machines and their creators, in Karel Čapek’s play R.U.R. (1921) and Fritz Lang’s film Metropolis (1927).

Intersectionality and our focus on ‘women’

In this article, we focus on the underrepresentation of women in AI. At the same time, we recognise that our centring of ‘women’ excludes people of marginalised genders who are also underrepresented in AI, but about whom there is little data regarding their participation in the AI workforce (SM West et al., 2019). We also recognise that feminist scholarship has long problematised the meaning of the term ‘women’ and the kinds of silencing and exclusion generated by treating ‘women’ as a homogeneous group. In particular, drawing from Black feminist scholarship and work by feminists of colour, we are uncomfortable framing ‘women’ as a single gendered category without attending to how race and racialisation fundamentally shape normative concepts of gender and sexuality (Dillon, 2018; hooks, 2015; Roberts, 2017).

With these reservations in mind, we focus in this article on the category of women, broadly understood, due to the difficulties of collecting accurate data in relation to characteristics such as ‘race’/ethnicity, sexuality, and dis/ability. By comparison, it is relatively straightforward to collect data on a character’s gender, as we are able to draw on the gendered pronouns used to refer to the character during the film. However, it is less straightforward to capture characters’ racial or ethnic identity without epidermalising them (that is, attempting to rank their skin colour against a white norm). As a result, we believe that a full examination of the racialised representation of AI scientists requires a different methodological approach than the one employed in this article.

Defining and categorising the AI scientist

In identifying AI scientists, we have looked for a person who is referred to as having made an AI system, or is shown engineering, creating, improving, programming, or otherwise working on an AI system. Our focus on AI as technology means we have excluded characters who create artificial humanoids through other means, such as Victor Frankenstein’s (re-)animation of his creation through electrifying human remains. We also excluded alien species that did not show specific individuals responsible for the creation of AI, or corporations that did not show specific individuals in charge.

We categorise a technology as AI if it is an artefact that fulfils one or more of the following criteria: it demonstrates evidence of autonomous behaviour; it possesses conversational capacity; it is explicitly referred to as ‘AI’ in the film. This resulted in the exclusion of some technologies, particularly various fantastical gadgets and tools that in the real world would (if they existed) conceivably be marketed as ‘AI’ based on their use of data science. These three criteria meant that we also did not include cyborg technology (such as bionic limbs), unless the cybernetic attachment itself clearly met any of the three criteria, such as displaying autonomy.
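The inclusion rule above can be read as a simple disjunction over three observations. The following Python sketch is illustrative only: the `Technology` record, its field names, and the two example artefacts are assumptions introduced here for clarity, not part of the coding protocol or the corpus.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Technology:
    """Hypothetical record of what a film shows about one artefact."""
    name: str
    shows_autonomy: bool      # evidence of autonomous behaviour
    converses: bool           # possesses conversational capacity
    called_ai_in_film: bool   # explicitly referred to as 'AI' in the film


def counts_as_ai(tech: Technology) -> bool:
    """An artefact qualifies if it meets one or more of the three criteria."""
    return tech.shows_autonomy or tech.converses or tech.called_ai_in_film


# Invented examples, not drawn from the corpus.
bionic_limb = Technology("bionic limb", False, False, False)
autonomous_limb = Technology("self-directing bionic limb", True, False, False)

print(counts_as_ai(bionic_limb))      # excluded: meets none of the criteria
print(counts_as_ai(autonomous_limb))  # included: displays autonomy
```

Under this reading, a cybernetic attachment is excluded by default and included only when the attachment itself satisfies at least one criterion.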

Our corpus includes a range of AI creators, human and non-human, corporations, robots, and even animals. To define and categorise the gender of AI scientists within the corpus, a deductive approach to coding was undertaken. We coded our data using the following categorisations:

Human man;

Male robot/alien/AI/animal;

Corporation led by man/men;

Human woman;

Female robot/alien/AI/animal;

Corporation led by woman/women;

Non-gendered, other gender, or gender unknown;

Or null return where an AI system is shown, but its creator is unknown.
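For readers who wish to reproduce the tallies, the categories listed above could be represented as a small enumeration. This is a minimal sketch under stated assumptions: the identifiers (`CreatorCode`, `gender_group`) are hypothetical names chosen here, and the grouping into 'male' and 'female' totals follows how the results section aggregates the categories.

```python
from enum import Enum
from typing import Optional


class CreatorCode(Enum):
    """The eight coding categories; member names are illustrative."""
    HUMAN_MAN = "Human man"
    MALE_NON_HUMAN = "Male robot/alien/AI/animal"
    CORPORATION_LED_BY_MEN = "Corporation led by man/men"
    HUMAN_WOMAN = "Human woman"
    FEMALE_NON_HUMAN = "Female robot/alien/AI/animal"
    CORPORATION_LED_BY_WOMEN = "Corporation led by woman/women"
    OTHER_OR_UNKNOWN = "Non-gendered, other gender, or gender unknown"
    NULL_RETURN = "AI system shown, creator unknown"


MALE_CODES = {
    CreatorCode.HUMAN_MAN,
    CreatorCode.MALE_NON_HUMAN,
    CreatorCode.CORPORATION_LED_BY_MEN,
}
FEMALE_CODES = {
    CreatorCode.HUMAN_WOMAN,
    CreatorCode.FEMALE_NON_HUMAN,
    CreatorCode.CORPORATION_LED_BY_WOMEN,
}


def gender_group(code: CreatorCode) -> Optional[str]:
    """Map a category to the aggregate used when reporting totals."""
    if code in MALE_CODES:
        return "male"
    if code in FEMALE_CODES:
        return "female"
    return None  # non-gendered, unknown, or null return


print(gender_group(CreatorCode.CORPORATION_LED_BY_MEN))
```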

Directors

We also collected the name and gender of the director of each film. The gender of the director(s) was coded deductively. Every effort has been made to identify the gender of the director in line with that expressed by the director themselves at the time of filming/release of the film. However, where third-party reporting such as on websites like IMDb and Wikipedia has been relied upon, the potential for miscoding cannot be entirely negated.

Criteria for inclusion in the corpus

We sourced influential representations of AI engineers, scientists, or researchers in film by focusing on two categories. First, we aimed to capture the most popular representations by focusing on the highest-grossing films that feature AI. We used revenue as a proxy for viewership, working from the premise that financially successful films were watched by large numbers of people. We collated three lists of top-grossing films: Box Office Mojo’s (2021a) 1000 highest-grossing films in the United States of all time, adjusted for inflation; Box Office Mojo’s 1000 top-grossing films of all time worldwide, not adjusted for inflation (Box Office Mojo, 2021b) (inflation-adjusted data was unavailable); and IMDb’s 100 top-grossing SF films (IMDb, 2021). This broadly follows the approach taken by previous studies that have investigated the impact of films on the ambitions of young women and girls (Plan International and Geena Davis Institute on Gender in Media, 2019). Second, we examined collections and lists of highly acclaimed SF films. While some of the films on these lists may have been less widely viewed than the films in the first category, we selected these lists because critically acclaimed SF films are culturally significant within the field of AI and have shaped public perceptions of AI (Cave et al., 2019). We collected these films from lists curated by Ultimate Movie Rankings, The Guardian, Science, Wired UK, Vogue, Empire, Good Housekeeping, Vulture, and TimeOut (Ebiri, 2015; Hogan and Whitmore, 2015; Jeon, 2020; Plim et al., 2021; Powers, 2016; Shultz, 2015; Travis and White, 2021; Ultimate Movie Rankings, 2016; WIRED UK, 2020). Finally, we included Academy Award (‘Oscar’)-winning films featuring AI. This approach also allowed us to include historically significant films such as Metropolis (1927) and smaller films that shape contemporary AI discourse, such as Ex Machina (2014).

Coding process

The films were coded by a team of four researchers with diverse disciplinary backgrounds in philosophy, AI ethics, science communication, literary studies, gender studies and intersectionality, and film and media studies. To develop a common understanding, and to ensure intercoder reliability (Lombard et al., 2002) and consistency in coding decisions (O’Connor and Joffe, 2020), early in the process a subset of films was coded by multiple team members together. As the coding took place over an extended period of time, a cyclical approach was taken to ensure reliability of coding across the period within which it was completed. This meant that films initially coded early in the process were revisited later in the process to ensure that the original coding was consistent with later efforts.

5. Results

Gender of AI scientists and engineers in the corpus

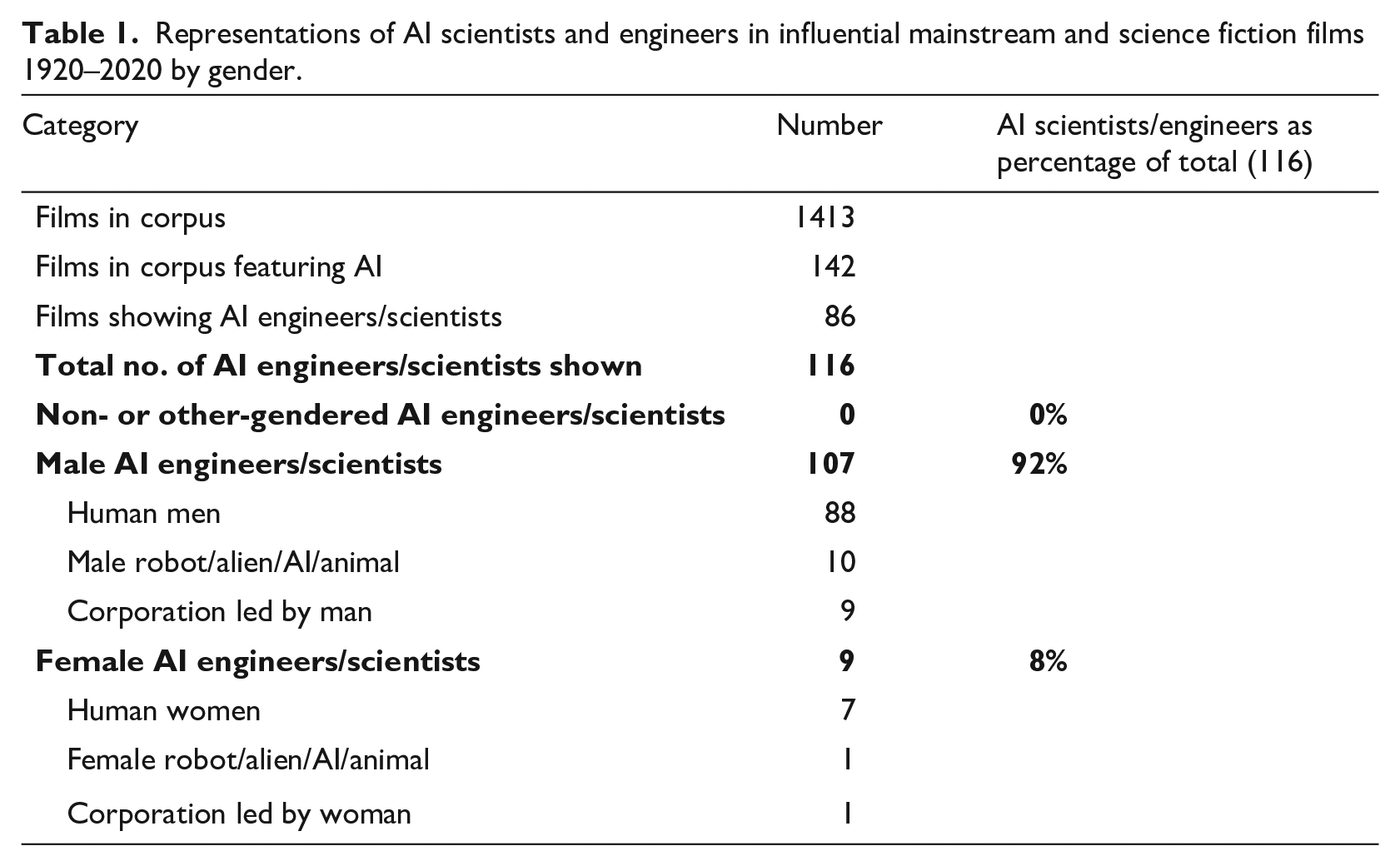

Of the 1413 films in our corpus, we identified 142 as featuring AI. Of these, 86 films clearly showed or referred to an AI engineer or scientist. The total number of AI engineers or scientists shown was 116, as 63 films showed only one such figure, 16 films showed 2 and 7 films showed 3 figures that met our criteria.

Of these 116 AI engineers or scientists, 88 were men, 10 were male robots, aliens, animals or AIs, and 9 were corporations led by men, giving a total of 107 male figures, or 92% of the total. Seven were human women and two were female non-humans, giving a total of nine female figures, or 8% of the total. These results are summarised in Table 1.
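The arithmetic behind these figures can be checked in a few lines. The sketch below uses only the counts reported in this section; the variable names and the rounding helper `pct` are introduced here for illustration.

```python
# Number of films showing 1, 2 or 3 AI engineers or scientists (as reported).
films_by_figure_count = {1: 63, 2: 16, 3: 7}

films_with_scientist = sum(films_by_figure_count.values())
total_figures = sum(n * films for n, films in films_by_figure_count.items())

male_figures = 88 + 10 + 9   # men + male non-humans + corporations led by men
female_figures = 7 + 2       # human women + female non-humans


def pct(part: int, whole: int) -> int:
    """Percentage rounded to the nearest whole number."""
    return round(100 * part / whole)


print(films_with_scientist, total_figures)       # 86 116
print(male_figures, pct(male_figures, total_figures))      # 107 92
print(female_figures, pct(female_figures, total_figures))  # 9 8
```

The film counts sum to the 86 films reported, and the male and female totals together account for all 116 figures.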

Regarding the female characters: the two non-humans are the alien Quintessa in Transformers: The Last Knight (2017) and Smiler, a female emoji who leads a corporation in The Emoji Movie (2017). The other seven, all human, are Shuri in Avengers: Infinity War (2018), Evelyn Caster in Transcendence (2014), Ava in The Machine (2013), Dr Brenda Bradford in Inspector Gadget (1999), Dr Susan Calvin in I, Robot (2004), Dr Dahlin in Ghost in the Shell (2017), and Frau Farbissina in Austin Powers: International Man of Mystery (1997), the earliest film in our corpus to feature a female AI creator.

Directors

We collected the names and genders of the directors of all the influential films featuring AI (whether or not they showed an AI engineer). The results are striking. The 142 AI films in our corpus were directed by a total of 161 people (i.e. 19 films were co-directed by a team of two). As we are considering each film separately as the relevant unit, we count each instance of a person directing – so the total of 161 counts some directors multiple times (e.g. Michael Bay or Ridley Scott). Of these 161 directors (or instances of directing), 159 are men and 2 are women.

One of these female directors is Anna Boden, who co-directed the film Captain Marvel (2019) with Ryan K. Fleck. The other is Lana Wachowski, for Jupiter Ascending (2015), which is credited to ‘Lana and Andy Wachowski’; this case requires some elaboration. The Wachowskis are trans women, whose work features four times in our corpus. When they made the first three of these (the Matrix films, 1999–2003), both presented as male. By the time they made Jupiter Ascending, Lana Wachowski had come out as female. Her sibling came out in 2016, as Lilly. We have counted the Wachowskis according to the gender they were presenting when each film was made, because that is the gender that shaped how they and their work were received, supported, and funded at the time. This results in counting them each time as male, except Lana Wachowski for Jupiter Ascending. If we counted them as women with our present knowledge, our corpus would contain 9 female directors out of 161, or 5.6%.

Bearing in mind these considerations, we conclude that only 1% of directors in our corpus are women (2 of 161 directing credits, on 2 of the 142 films), and in both instances the women were co-directing with men. Therefore, not a single influential AI film in our corpus was directed solely by a cisgender woman.
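The two counting conventions discussed above can be made explicit in a short calculation. This sketch relies only on the figures reported in this section; the variable names are illustrative, and it assumes (as stated above) that every co-directed film has exactly two directors.

```python
films = 142
co_directed_by_two = 19                        # each adds one extra credit
directing_credits = films + co_directed_by_two

# Convention used in this article: gender as presented at the time of release.
women_at_release = 2                           # Anna Boden; Lana Wachowski (2015)
men_at_release = directing_credits - women_at_release

# Alternative convention: counting the Wachowskis as women throughout.
wachowski_films = 4
wachowski_credits = 2 * wachowski_films        # two siblings per film
women_present_knowledge = 1 + wachowski_credits  # plus Anna Boden

print(directing_credits, men_at_release)
print(round(100 * women_at_release / directing_credits))            # whole %
print(round(100 * women_present_knowledge / directing_credits, 1))  # one decimal
```

Under the first convention women hold about 1% of directing credits; under the second the share rises to 5.6%, which is still a small minority.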

6. Discussion

In this section, we discuss three reasons for the underrepresentation of women in portrayals of AI researchers: (a) that film-makers are trying to reflect reality; (b) that the tropes shared by many films featuring AI scientists contribute to the construction of AI as a masculine field; and (c) the overwhelming preponderance of male directors of AI films.

Art mimicking life

One possible reason why AI scientists and engineers are overwhelmingly portrayed as male is that this is a reflection of the field. We can hypothesise that directors, screenwriters, and others might simply wish to accurately portray an AI research environment, and as we mentioned above, such environments are disproportionately – circa 78%–90% – staffed by men. Our results show that the proportion of AI scientists and engineers who are portrayed as men in mainstream films is actually even higher than this (92%). But this could be explained by art mimicking life: if most directors looking to cast an AI scientist or engineer choose a man for the role because in real life they are often male, then this will result in an even greater majority of male scientists on screen.

This hypothesis suggests an arrow of causation of underrepresentation of women in AI from the lab to film. But this does not negate the possibility of an arrow of causation also running the other way: it might also be the case that, for example, the lack of prominent female AI researcher role models in mainstream media impacts negatively on girls’ and young women’s perceptions of the field. We would then have a vicious circle: with directors and film executives unwilling to cast women as AI researchers because AI is in reality a male-dominated field, and AI remaining a male-dominated field in part because it is portrayed as such in film.

Gendered tropes

While the numbers of male and female AI scientists in film themselves are stark and telling, films about AI scientists also contain several recurring plot elements (‘tropes’) that contribute to perceptions of the field of AI as masculine. Elsewhere, we have identified four such tropes: portraying the AI scientist as a genius; associating AI with traditionally masculine milieus; men creating artificial life; and relations of gender inequality that relegate female AI engineers into positions of subservience and sacrifice (Cave et al., 2023).

Genius

The ‘genius’ of the AI scientist contributes to the perception of AI scientists as male. Out of the 116 AI scientists, 38 (33%) were coded as geniuses. Furthermore, 14 (12%) of the AI engineers, scientists, or researchers were explicitly represented as child prodigies or as being intellectually precocious from a very young age. However, the notion of ‘genius’ is deeply shaped by gendered and racialised concepts of intelligence that have historically been claimed by a white male elite (Cave, 2020). Numerous studies demonstrate that people across different age groups continue to associate brilliance and exceptional intellectual ability with men (Bian et al., 2018; Jaxon et al., 2019; Storage et al., 2020). This phenomenon, called the ‘brilliance bias’, is the widely held belief that men are more likely to be geniuses than women. In the films we examined for this article, 37 out of the 38 geniuses were male. The coding of AI scientists as geniuses risks entrenching the belief that women are less ‘naturally’ suited for a career in the field of AI. As Bian et al. (2018) have shown, fields that emphasise the importance of brilliance over other characteristics attract less interest from women. Hence, the portrayal of AI scientists as geniuses may discourage women’s career aspirations in the AI sector.

Masculine milieu

A strong trope across our corpus was the ‘corporate creator’. In 32 films (37% of our corpus), the AI was a product of a corporation, and in 10 of these instances no individual scientist was identified as being in charge of the AI. We counted the total number of instances where a clear figurehead of such a corporation was shown, in charge of such elements as commissioning, funding or deploying the AI (e.g. RoboCop, Moon). The only one of these 24 figureheads who was not a man (4%) was an emoji (voiced by a woman), in The Emoji Movie, a discrepancy which could be contributing to the perception of AI as a male domain. Women are extremely underrepresented in CEO positions: for example, only 5.4% of Fortune 500 companies had female CEOs in 2017 (Wang et al., 2018). Studies suggest that this is in part because stereotypes of corporate leaders overlap with stereotypical male attributes, such as ambition and dominance (Koenig et al., 2011).

A similar set of associations arises from the trope of AI as a military product, although it is slightly less prominent than the corporation and CEO tropes. Our corpus contained 10 films in which the AI was produced by military organisations. Needless to say, the military is strongly associated with stereotypical male attributes (Goldstein, 2003). It is therefore reasonable to conclude that the association between AI and the hypermasculine field of the military is amplifying the perceived masculinity of the field of AI.

Artificial life

In the early days of AI, when Freudianism was still current, it was considered almost a cliché that (at least some of) the researchers involved were motivated by ‘womb envy’ – that is, the envy of women’s reproductive organs and the attendant power to create life. In his highly influential 1973 report ‘Artificial Intelligence: A General Survey’, Sir James Lighthill wrote,

it has sometimes been argued that part of the stimulus to laborious male activity in ‘creative’ fields of work, including pure science, is the urge to compensate for lack of the female capability of giving birth to children. If this were true then Building Robots might indeed be seen as the ideal compensation. (Lighthill, 1973)

While few works in the corpus discuss this idea directly, a significant number (19, or 22%) feature male creators who in some way fulfil their desires by creating a human-like AI. For example, there are nine instances in film of male creators replacing lost loved ones; five of male creators creating ideal lovers, and a further six of male creators who use AI to create copies or artificially intelligent versions of themselves. This suggests that the association of AI and masculinity might be further exacerbated by this association of the creation of artificial intelligence or life in the laboratory with maleness, in contrast to the female creation of natural intelligence/life.

Gender inequality

The trope of the male genius affects even those few films in which a female AI scientist is shown. Out of the eight female scientists and one female CEO, five of them are portrayed as subordinate to a man. In three films, the female AI scientist is the subordinate employee of a man (I, Robot, The Machine, and Austin Powers); in Transcendence and Inspector Gadget, the women are respectively the wife and daughter of a male genius AI creator. So while 8% of AI creators in this corpus are female, only four of them (3% of all AI creators) are not in a subordinate position to a man.
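
The shares quoted in this section can be recomputed from the reported counts. The following is a minimal sketch in Python, not part of the study's own method: the function and variable names are illustrative, and the denominator of 86 films (the films showing one or more AI researchers) is an assumption inferred from the fact that it reproduces the reported 37% and 22%.

```python
from fractions import Fraction


def share(count: int, total: int) -> int:
    """Return count/total as a percentage rounded to the nearest whole number."""
    return round(100 * Fraction(count, total))


# Totals taken from the article; the constant names are hypothetical.
TOTAL_SCIENTISTS = 116       # individual AI researchers portrayed
FILMS_WITH_SCIENTISTS = 86   # assumed denominator for film-level shares

# (count, total) pairs as reported in this section.
reported = {
    "scientists coded as geniuses": (38, TOTAL_SCIENTISTS),
    "child prodigies or precocious": (14, TOTAL_SCIENTISTS),
    "films with a corporate creator": (32, FILMS_WITH_SCIENTISTS),
    "corporate figureheads who are not men": (1, 24),
    "films with desire-fulfilling male creators": (19, FILMS_WITH_SCIENTISTS),
    "female AI creators": (9, TOTAL_SCIENTISTS),
    "female AI creators not subordinate to a man": (4, TOTAL_SCIENTISTS),
}

for label, (count, total) in reported.items():
    print(f"{label}: {count}/{total} = {share(count, total)}%")
```

Using exact fractions avoids floating-point surprises when rounding; each printed share can then be compared against the figure quoted in the text.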

Furthermore, three out of the nine women either sacrifice themselves or are sacrificed as part of the film’s plot (The Machine, Ghost in the Shell, and The Emoji Movie). This is exemplified in The Machine, where the female scientist Ava is killed and the titular Machine is built based upon her image, brain scan, and likeness. In the polling website FiveThirtyEight’s project ‘Creating the Next Bechdel Test’, one of the tests judges films according to whether ‘a primary female character ends up dead’ (Hickey et al., 2017). As the authors note, these new gender equality tests aim to identify the ‘subtler and often more pervasive misogyny’ that characterises portrayals of women when they make it onto the screen (Hickey et al., 2017).

Director’s gender and the representation of women in AI

While we had expected there to be more male than female directors of major AI films, the scale of this imbalance surprised us. 119 of the 142 films were directed solely by a man, and a further 17 by men-only teams. As noted above, only two films were co-directed by a woman (1.2%). This is even worse than the industry average. Over the past 20 years, the proportion of female directors of major Hollywood films has consistently been much lower than that of male directors, ranging between 5% and 13% in the period 1998–2019 (Follows and Kreager, 2016: 5), with comparable figures seen in the United Kingdom (Milla, 2014). That the figures for women directing portrayals of AI are even worse than this suggests that there is something particular to AI productions, or the genres in which they are made, that is hampering the participation of women. As noted in the ‘Directors’ section, this is likely to be having a significant impact on the representation of women in these films.

7. Limitations and future work

We suggest two main areas for further work on this topic: intersectional analyses, and examining different corpora.

Intersectionality

In this article, we focused solely on the gendered representation of AI scientists. As noted in the ‘Intersectionality and our focus on “women”’ section, we believe the collection of data on the race and ethnicity of AI scientists requires different methods to those we have employed for this study. Other important considerations for future research include the role of sexuality, sexual identity, and queerness in the representation of AI scientists and engineers; the representation of disability among AI engineers, scientists, or researchers; and how disability intersects with gender and ethnicity/race.

Corpus and media types

We recognise the importance of other media types in shaping cultural constructions of the AI scientist. We plan to publish future work that examines the representation of AI scientists and engineers in television from 1920 to 2020 to examine whether our findings in film are replicated in TV shows, or whether TV shows, due to their longevity and more flexible format, offer more positive gendered representations of AI scientists.

It would also be worthwhile to examine how AI scientists and engineers are portrayed in nonfiction science media, including science news coverage and documentaries. Such a study could elucidate whether or not women are adequately represented as experts in the field of AI.

In addition, since we focused on mainstream films, further research on the representation of AI engineers and scientists in indie or non-mainstream films could determine whether they offer more subversive and radical portrayals of AI scientists. A final, important route for further exploration would be the representation of AI scientists and engineers in corpora that include a larger proportion of non-Western and non-Anglophone films.

8. Conclusion

In her seminal book on gender and AI, Artificial Knowing, Alison Adam lamented that

Feminist research can have a pessimistic cast. In charting and uncovering constructions of gender, it invariably displays the way in which the masculine is construed as the norm and the feminine as lesser, the other and absent. (Adam, 1998: 156)

Adam’s own work focused on the conceptual frames and goals of AI research. Our study has shown that her observations on the construal of the masculine as the norm and the feminine as absent apply equally to the cultural construction of those behind the research. However, the remedy is so clear that some optimism might be justified: undoing the cultural construction of the AI scientist as male will require many more depictions in mainstream media of women in this role.

Supplemental Material

sj-pdf-1-pus-10.1177_09636625231153985 – Supplemental material for Who makes AI? Gender and portrayals of AI scientists in popular film, 1920–2020

Supplemental material, sj-pdf-1-pus-10.1177_09636625231153985 for Who makes AI? Gender and portrayals of AI scientists in popular film, 1920–2020 by Stephen Cave, Kanta Dihal, Eleanor Drage and Kerry McInerney in Public Understanding of Science

Research Data

sj-xlsx-2-pus-10.1177_09636625231153985 – Supplemental material for Who makes AI? Gender and portrayals of AI scientists in popular film, 1920–2020

sj-xlsx-2-pus-10.1177_09636625231153985 for Who makes AI? Gender and portrayals of AI scientists in popular film, 1920–2020 by Stephen Cave, Kanta Dihal, Eleanor Drage and Kerry McInerney in Public Understanding of Science

Footnotes

Acknowledgements

We are grateful to Tonii Leach for her advice regarding the methodology, and to Tomasz Hollanek and Jenny Carla Moran for their comments on our draft material. We are grateful to Emilia, Beatrice and Heloisa von Tiesenhausen Cave for their assistance with corpus analysis. We would further like to thank the participants of the LCFI Weekly Seminar and the HPS Science Communication Reading Group, both at the University of Cambridge, for their feedback.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship and/or publication of this article: S.C. and K.D. are funded by the Leverhulme Trust (via grant number RC-2015-067 to the Leverhulme Centre for the Future of Intelligence) and Stiftung Mercator. K.D. was additionally funded through the support of grants from DeepMind Ethics & Society and Templeton World Charity Foundation, Inc. E.D. and K.M. were funded by Christina Gaw as part of the Gender and Technology project at the University of Cambridge Centre for Gender Studies, and are currently funded by Stiftung Mercator.

Supplemental material

Supplemental material for this article is available online.
