Abstract

Systematic reviewers planning quantitative meta-analysis usually choose between fixed effect meta-analysis and random effects meta-analysis. An alternative method is called fixed effects (note the s in the name). This method has the unique property that the target estimand is defined by the variances of the studies found by the systematic review. This article considers each of the three methods in relation to the quantitative analysis of data to be obtained by systematic review. For clarity, I refer to the traditional fixed effect method as the common effect method and the newer approach as fixed effects (plural). Case studies illustrate the post hoc nature of the fixed effects (plural) method, in which the population under study is determined by the data rather than by the protocol. Mathematical analysis shows that, unlike the common effect and random effects methods, the fixed effects (plural) method requires an additional, and unrealistic, assumption about the data obtained in systematic reviews. A simulation study demonstrates that confidence intervals from fixed effects (plural) meta-analysis do not account for the post hoc nature of the method. Fixed effects (plural) meta-analysis is a slot-in replacement neither for the common effect method nor for the random effects method of meta-analysis. Of the three methods considered here, the common effect method and the random effects method are potentially valid for the quantitative analysis of systematic reviews.

Keywords

Background

Many of us were taught, and have taught, that systematic reviewers planning a quantitative meta-analysis typically choose between two modelling approaches: on the one hand, methods based on a fixed effect model; on the other, methods based on a random effects model. The fixed effect model assumes that the true value of the effect of interest is the same in all studies eligible for inclusion in the meta-analysis. The random effects model explicitly allows a variety of different true values, and models their distribution. 1 However, the random effects model is no longer the only model that allows for multiple true values. Rice et al. 2 introduced a third approach to meta-analysis, which is computationally similar to fixed effect meta-analysis even though it uses a model that is more general than the random effects model. Rice et al. call their model ‘fixed effects’, which is why some authors have in recent years begun to find new names for the long-established fixed effect method. For clarity, in this article I refer to the long-established fixed effect approach as ‘common effect’ meta-analysis, and to the approach of Rice et al. as ‘fixed effects (plural)’ meta-analysis.

In an era of protocol-driven medical research, a systematic literature review begins with a protocol that prespecifies the aims and methods. The research question should be clearly framed. 3 Structured formats for framing a research question about treatment include the PICO format, in which P denotes that the population of interest should be specified; I and C denote that the intervention and comparator should be specified, respectively; and O denotes that the outcome should be specified. 4 When a systematic review is to be analysed quantitatively, the analysis methods should be well chosen for the research question. In this article, I explore the applicability to systematic reviews of each of the three approaches to meta-analysis discussed above. A primary objective of the article is to examine whether the fixed effects (plural) approach can be considered simply a ‘re-evaluation’ of the common effect (formerly, ‘fixed effect’) approach, or whether it needs to be considered something wholly new. A secondary objective is to examine whether the fixed effects (plural) approach can be considered the most general of the three.

In Section 2, I establish notation for this article and use it to state formally the model assumptions, estimands and estimates for each approach. In Section 3, I show that an additional assumption is required for the fixed effects (plural) method. In Section 4, I illustrate the substantial differences in interpretation by applying each approach to case studies, beginning with a small case study in Section 4.1. In Section 5, I conduct a simulation study of the performance of each approach as heterogeneity increases.

Throughout this article, I consider estimation of a single effect parameter. Quantification and testing of heterogeneity, and other developments such as bivariate meta-analysis and network meta-analysis, are beyond the scope here. It will be useful to distinguish between models and methods. In particular, there are a great many methods to estimate the parameters of the random effects model. 5 By ‘model’ I mean a set of assumptions expressed mathematically. In ‘method’ I include the choice of estimand as well as the formulae used for point, variance and interval estimation about an estimand of interest.

Models and methods

Common effect model and method

Suppose

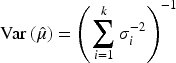

In this approach, the target estimand is a parameter

Strictly speaking, equation (2) should use the estimated variances

Other estimation methods exist for the common effect model.7,8 These are specific to the case that the

Typical methods based on the common effect model have the attractive property that study weights are proportional to the information size of the study.
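As a minimal sketch of this property, assuming each study reports an estimate with a within-study variance treated as known, the inverse-variance common effect calculation below gives each study a weight of 1/variance, so relative weights are exactly each study's share of the total information. The function name and the two-study data are hypothetical and chosen only for illustration.

```python
import math

def common_effect(estimates, variances):
    """Inverse-variance (common effect) pooled estimate, 95% CI and relative weights.

    Assumes study i reports an estimate y_i with within-study variance v_i,
    treated as known, and that all studies share a single true effect.
    """
    weights = [1.0 / v for v in variances]        # weight = information size 1/v_i
    total = sum(weights)
    pooled = sum(w * y for w, y in zip(weights, estimates)) / total
    se = math.sqrt(1.0 / total)                   # standard error uses only the variances
    ci = (pooled - 1.96 * se, pooled + 1.96 * se)
    return pooled, ci, [w / total for w in weights]

# Hypothetical data: study 2 has four times the information of study 1.
pooled, ci, rel_weights = common_effect([0.2, 0.5], [0.04, 0.01])
print(round(pooled, 3), [round(x, 3) for x in ci], [round(w, 2) for w in rel_weights])
```

Here study 2 receives 80% of the weight, which is its share (100 of 125) of the total information.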

Note on terminology: the approach described here as common effect meta-analysis is that originally known as fixed effect meta-analysis; the name ‘common effect’ is increasingly used to avoid confusion with the fixed effects approach described in Section 2.3.

In the random effects approach, we no longer assume a single constant

It is common to use

In practice,

Methods based on the random effects model have the attractive property that numerical heterogeneity between studies is reflected in the interval estimate: the more the studies disagree, the wider the confidence interval. This is not true of common effect methods, in which the confidence interval calculation is oblivious to the numerical homogeneity or heterogeneity between studies. However, random effects methods do not necessarily give studies weights that reflect their information size well, especially when heterogeneity is present: the smallest studies may have much more influence on the summary estimate than they would in a common effect meta-analysis.
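Both properties can be illustrated with a short sketch of the DerSimonian and Laird method (the random effects method used later in the case studies). The three hypothetical studies share the same variances in both scenarios; only their estimates differ. When the estimates disagree, the moment estimate of τ² becomes positive, the interval widens, and the smallest study's relative weight rises above its common effect weight of 25/225. The function name and data are illustrative assumptions, not the article's code.

```python
import math

def dersimonian_laird(estimates, variances):
    """Random effects pooled estimate using the DerSimonian-Laird estimate of tau^2.

    Returns (pooled mean, 95% CI, tau^2 estimate, relative weights).
    """
    k = len(estimates)
    w = [1.0 / v for v in variances]
    sw = sum(w)
    ce = sum(wi * yi for wi, yi in zip(w, estimates)) / sw            # common effect estimate
    q = sum(wi * (yi - ce) ** 2 for wi, yi in zip(w, estimates))      # Cochran's Q
    c = sw - sum(wi * wi for wi in w) / sw
    tau2 = max(0.0, (q - (k - 1)) / c)                                # moment estimate, truncated at 0
    w_re = [1.0 / (v + tau2) for v in variances]                      # random effects weights
    s = sum(w_re)
    mu = sum(wi * yi for wi, yi in zip(w_re, estimates)) / s
    se = math.sqrt(1.0 / s)
    return mu, (mu - 1.96 * se, mu + 1.96 * se), tau2, [wi / s for wi in w_re]

def width(result):
    """Width of the 95% confidence interval in a dersimonian_laird result."""
    return result[1][1] - result[1][0]

variances = [0.04, 0.01, 0.01]                  # hypothetical: study 1 is the smallest
agree = dersimonian_laird([0.3, 0.3, 0.3], variances)
disagree = dersimonian_laird([-0.5, 0.3, 1.0], variances)

print(round(agree[2], 3), round(width(agree), 3))        # tau^2 estimated as zero
print(round(disagree[2], 3), round(width(disagree), 3))  # tau^2 > 0, wider interval
print(round(disagree[3][0], 2), "vs common effect weight", round(25 / 225, 2))
```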

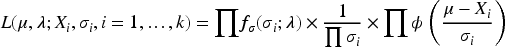

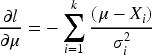

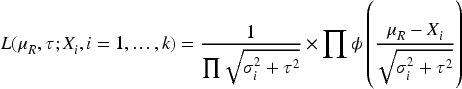

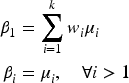

Rice et al. propose a model in which the

As in the previous sections, we assume first that the

Rice et al. then propose that the following estimand be of interest:

The fixed effects (plural) approach, therefore, differs from the common effect approach and the random effects approach in that the estimand itself is a combination of location measures and variances. In the traditional common effect method, variances appear in the formula for the estimate, but the thing we have decided to estimate, the estimand, is simply
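For concreteness, here is a sketch of the three estimands in notation assumed here, which may not match the symbols of the article's numbered equations. Study i (i = 1, …, k) reports an estimate y_i with within-study variance v_i and true effect θ_i. The common effect estimand is a single θ, with θ_i = θ for all i. The random effects estimand is the mean μ of the distribution of the θ_i. The fixed effects (plural) estimand, with inverse-variance weights (the form used in the case studies), is a weighted average of the study-specific true effects:

```latex
\bar\theta_v \;=\; \frac{\sum_{i=1}^{k} \theta_i / v_i}{\sum_{i=1}^{k} 1 / v_i},
\qquad\text{estimated by}\qquad
\hat\theta \;=\; \frac{\sum_{i=1}^{k} y_i / v_i}{\sum_{i=1}^{k} 1 / v_i}.
```

The estimator has the same form as the common effect estimator. The difference is that the v_i now appear in the target \bar\theta_v itself, not only in the formula used to estimate it.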

Arguably, the sizes, variances and weights of studies in a meta-analysis should be considered random variables. 10

In the exposition in Sections 2.1 to 2.3, we made two assumptions about the study variances

Conditioning on the study-specific variances, as if they were constants, may seem analogous to conditioning on the sample size

In this section, I outline the straightforward proofs (Sections 3.1 and 3.2) that in a common effect meta-analysis, or a random effects meta-analysis, the study-specific variances are ancillary for the effect parameters, in order to contrast this (Section 3.3) with the fixed effects (plural) meta-analysis model.

Noting that ‘ancillary’ has multiple definitions, 11 for this article I use the following: call

Common effect meta-analysis

We can show as follows that for common effect meta-analysis, estimation of the parameter

If we consider the

Suppose instead we choose to regard the
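The factorisation behind this argument can be sketched as follows, in notation assumed here rather than taken from the article's equations. Assume y_i | v_i ~ N(θ, v_i) independently and that the v_i, if regarded as random, have a joint density g(v_1, …, v_k) that does not involve θ. The joint likelihood and the log-likelihood in θ are then

```latex
L(\theta; y, v) \;=\; \prod_{i=1}^{k} \frac{1}{\sqrt{2\pi v_i}}
  \exp\!\left\{-\frac{(y_i-\theta)^2}{2 v_i}\right\} \times g(v_1,\dots,v_k),
\qquad
\ell(\theta) \;=\; -\frac{1}{2}\sum_{i=1}^{k} \frac{(y_i-\theta)^2}{v_i} \;+\; \text{const},
```

where the constant collects every term free of θ, including log g. The score, the maximiser θ̂ = Σ(y_i/v_i) / Σ(1/v_i) and the information Σ(1/v_i) are therefore the same whether the v_i are treated as constants or as random. In this sense the variances are ancillary for θ.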

The arguments for the common effect model also extend to a random effects model for meta-analysis, such as (3).

If we consider the

Suppose instead the
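The corresponding sketch for the random effects model uses the following assumptions, all specific to this illustration: y_i | θ_i, v_i ~ N(θ_i, v_i); θ_i ~ N(μ, τ²) independently of the v_i; and a density g for the v_i that involves neither μ nor τ². Integrating out θ_i gives y_i | v_i ~ N(μ, v_i + τ²), so the log-likelihood is

```latex
\ell(\mu, \tau^2) \;=\; -\frac{1}{2}\sum_{i=1}^{k}
  \left\{ \log\!\left(v_i + \tau^2\right) + \frac{(y_i-\mu)^2}{v_i + \tau^2} \right\}
  \;+\; \log g(v_1,\dots,v_k) \;+\; \text{const}.
```

Again log g separates from the terms in (μ, τ²), so likelihood-based inference about μ does not depend on whether the v_i are regarded as constants or as random.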

Fixed effects (plural) meta-analysis

The arguments in Section 3.1 extend to estimation of the

However, the fixed effects (plural) method is not proposed for estimation of any

Case 1: Within-study variances treated as constants

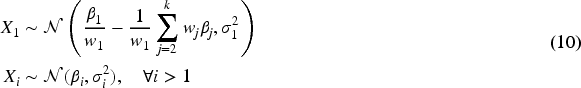

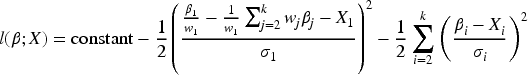

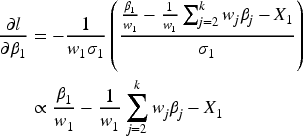

First, take the

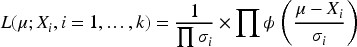

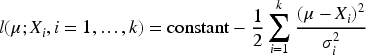

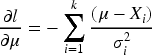

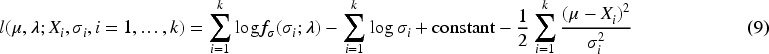

Before we can consider a likelihood function for estimation of Rice et al.’s

We can see that this is a valid re-parameterisation in that the model above for the probability distribution of the data

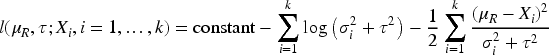

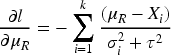

The log-likelihood is then

In summary, in the case that the variances are conceived to be known constants,
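As a brief check of the constant-variance case, assume (notation assumed here) y_i ~ N(θ_i, v_i) independently, with the v_i known constants and w_i = 1/v_i. The inverse-variance estimator is then exactly unbiased for the weighted-average estimand, and has the familiar variance:

```latex
E(\hat\theta) \;=\; \frac{\sum_i w_i\, E(y_i)}{\sum_i w_i} \;=\; \frac{\sum_i w_i\, \theta_i}{\sum_i w_i} \;=\; \bar\theta_v,
\qquad
\operatorname{Var}(\hat\theta) \;=\; \frac{\sum_i w_i^2\, v_i}{\left(\sum_i w_i\right)^2} \;=\; \frac{1}{\sum_i w_i},
```

using w_i v_i = 1. Conditional on the variances, an interval θ̂ ± 1.96/√(Σ w_i) therefore has nominal coverage for \bar\theta_v. This holds only because the v_i, and hence the target, are held fixed.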

Extension to case 1: Within-study variances are constants, but must be estimated

Dominguez-Islas and Rice

6

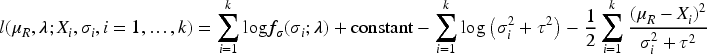

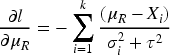

examine the distinction between the true study variances

Case 2: Within-study variances treated as random variables

Now suppose we regard the

If we seek to replicate the arguments of the previous case, difficulties now arise. The weighted sum

Since we have already noted that

If, as in Section 2.3, we take the fixed effects (plural) model to be a straightforward generalisation of the random effects model, it may seem surprising that a difficulty arises in the former but not the latter. To understand this, note that no difficulty would arise, in the fixed effects (plural) model, if we were interested in estimating any of the
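Where the difficulty lies can be sketched in notation assumed here. If the v_i are random, the fixed effects (plural) estimand is itself a function of them:

```latex
\bar\theta_v \;=\; \sum_{i=1}^{k} \pi_i(v)\,\theta_i,
\qquad
\pi_i(v) \;=\; \frac{1/v_i}{\sum_{j=1}^{k} 1/v_j},
\qquad
\sum_{i=1}^{k} \pi_i(v) = 1 .
```

A different draw of the v_i therefore defines a different target, even with θ_1, …, θ_k held fixed. The exception is when all θ_i are equal (the common effect case), because then \bar\theta_v = θ for every v. No such dependence arises when the parameter of interest is a single θ_i, or μ in the random effects model. This is consistent with the argument of this section that the v_i are not ancillary for \bar\theta_v.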

Extension to case 2: Within-study variances treated as random variables that are not observed directly and must be estimated

Again, we may extend the argument to the case that the

The above sections used a likelihood framework for convenience, but likelihood estimation is not necessarily optimal. The ancillarity arguments can also be made with other definitions of ancillarity: for example, it can be shown by integration that the marginal distribution of

Papers by Domínguez Islas and Rice 6 make the case for the fixed effects (plural) approach by a minimum variance (or, in a Bayesian framework, maximum information) argument. 13 Minimum variance arguments are often made for using a particular estimator in statistics, but it is unusual to see a minimum variance argument being proposed to motivate an estimand. The proof of the minimum variance argument in Lemma 1 of Domínguez Islas and Rice 6 requires that variances be modelled as constants (whether or not they are known exactly) and, therefore, the fixed effects (plural) method still requires this additional assumption, which is not required for the common effect or random effects method.
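For reference, the standard estimator-level result behind minimum variance arguments is stated below. It is a textbook fact, not a reproduction of Lemma 1 of Domínguez Islas and Rice. Consider weighted averages Σ a_i y_i with Σ a_i = 1, where the y_i are independent with variances v_i treated as fixed numbers. By the Cauchy–Schwarz inequality, inverse-variance weights minimise the variance:

```latex
\operatorname{Var}\Big(\sum_i a_i y_i\Big) \;=\; \sum_i a_i^2 v_i
\;\ge\; \frac{\big(\sum_i a_i\big)^2}{\sum_i 1/v_i} \;=\; \frac{1}{\sum_i 1/v_i},
\qquad\text{with equality iff } a_i \propto 1/v_i .
```

The derivation treats the v_i as constants. If they arise at random, the optimal weights, and under fixed effects (plural) the estimand built from them, are random too.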

Implications

Although the fixed effects (plural) model is more general than either the common effect model or the random effects model, the fixed effects (plural) approach is not. For

Each method of estimation requires a particular assumption. The common effect method requires the common effect assumption, and the random effects method requires a distributional assumption about the effects. We have now seen that the fixed effects (plural) method requires that we are prepared to model the study-specific variances as constants. If we think of the study-specific variances as data that will arise during the review, then we cannot select this method: the model (6) may apply, but the estimand (7) does not. Previous work 6 has shown that we can generalise from known constants to constants that are reported with error. The work here shows that we cannot generalise more widely to the case that they are not constants, but arise at random.

Investigators specifying either common effect meta-analysis or random effects meta-analysis need not debate whether the study-specific variances are data or constants: as shown here, this conceptual point does not affect inference for these two models. Only investigators specifying fixed effects (plural) meta-analysis need to adopt a position on whether the constant variance assumption is tenable.

Case studies

To examine the applicability of the different estimands to a systematic review, I apply common effect, random effects, and fixed effects (plural) meta-analysis to previously published systematic reviews. The common effect and fixed effects (plural) meta-analyses use the inverse variance method, and the random effects meta-analysis uses the DerSimonian and Laird method. 14 This is sufficient to illustrate the differing interpretations but is not meant to imply a preference for these methods in practice.

Minton, 2008: Methylphenidate for fatigue in cancer patients

For simplicity of exposition I first select a previously published meta-analysis of just two studies. This is intended to illustrate differences in interpretation; it is not intended to imply that it is wise to attempt random effects meta-analysis, in particular, with so few studies.

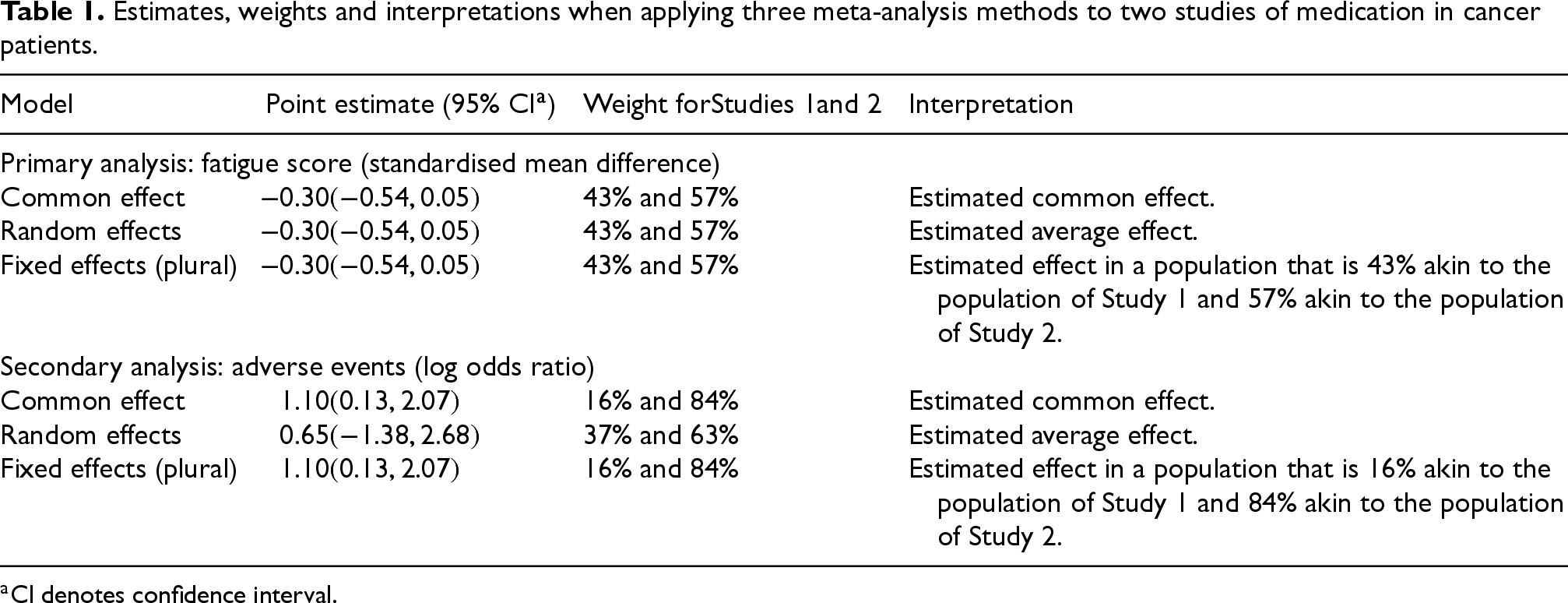

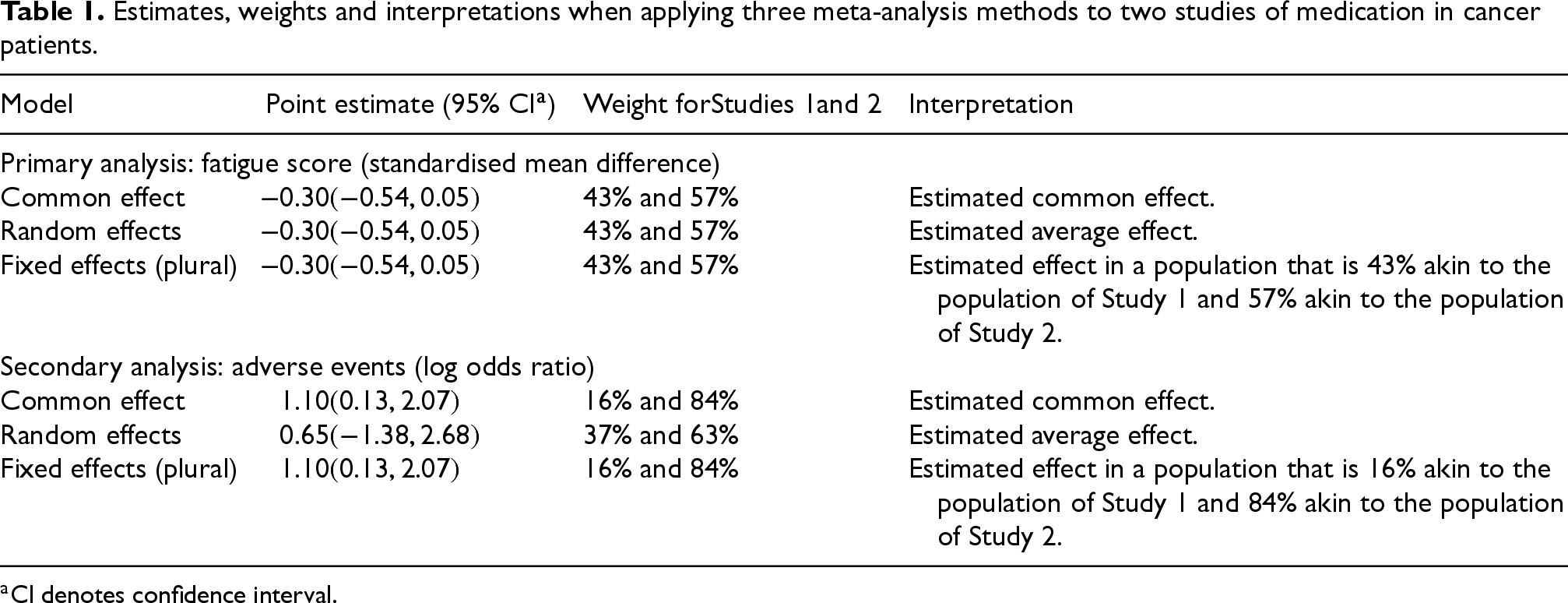

A systematic review by Minton et al. 15 found two studies that compared methylphenidate to placebo for fatigue in cancer patients. The study of Bruera et al. 16 recruited patients with any tumour type, not on chemotherapy. The study of Fleishman et al. 17 recruited patients with any tumour type, on chemotherapy. Figure 1 of Minton et al. shows a random effects meta-analysis of the standardised mean difference of fatigue score.

Table 1 shows the results of analysing the same data with a common effect, random effects, or fixed effects (plural) meta-analysis. For the primary analysis, heterogeneity is low and all three analyses yield the same numerical results. For the common effect method, these can be interpreted as a point and interval estimate of a true effect that is hypothesised to be constant across the different populations and settings in the Bruera and Fleishman studies. For the random effects method, the results should be interpreted as a point and interval estimate of the centre of some distribution, hypothesised to be normal, of possible true effects in the Fleishman, Bruera and hypothetical other studies. For the fixed effects (plural) method, the results should be interpreted as a point and interval estimate of the average effect in a cancer population that is

Estimates, weights and interpretations when applying three meta-analysis methods to two studies of medication in cancer patients.


The second half of Table 1 shows the results of analysing a secondary outcome, adverse events, for the same two studies. The common effect results should, again, be interpreted as a point and interval estimate of a true effect that is hypothesised to be constant across the different populations. That the weights differ from those in the common effect analysis of the primary outcome informs the estimates, but not the interpretation. The random effects results should, likewise, be interpreted as a point and interval estimate of the centre of a hypothetical normal distribution of possible true effects. That the weights differ from those in the random effects analysis of the primary outcome informs the estimates, but not the interpretation. The fixed effects (plural) results should be interpreted as an estimated effect in a population that is 16% akin to the population of the Bruera study and 84% akin to the population of the Fleishman study. The weights differ from those in the corresponding analysis of the primary outcome, and these weights not only inform the estimates, but also define the target estimand and hence inform the interpretation.

For the common effect method, the primary analysis and secondary analysis both apply to the same population. When using the random effects method, the primary analysis and secondary analysis both apply to the same population, albeit a different population from that of the common effect analyses. The fixed effects (plural) method has the remarkable property that results from the primary analysis and results from the secondary analysis should not be considered applicable to the same population as each other.
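The mechanism can be sketched numerically. Under the fixed effects (plural) approach, each study's share of the ‘meta-population’ is its normalised inverse-variance weight, so the same two studies define different populations for outcomes measured with different precision. The variances below are hypothetical; they are not the Minton et al. data.

```python
def meta_population_shares(variances):
    """Normalised inverse-variance weights: each study's share of the 'meta-population'.

    Under the fixed effects (plural) approach these shares are part of the estimand,
    so they change whenever the within-study variances change.
    """
    w = [1.0 / v for v in variances]
    total = sum(w)
    return [wi / total for wi in w]

# Hypothetical within-study variances for the SAME two studies on two outcomes.
primary = meta_population_shares([0.09, 0.06])     # roughly 40% / 60%
secondary = meta_population_shares([0.30, 0.10])   # roughly 25% / 75%
print([round(s, 2) for s in primary], [round(s, 2) for s in secondary])
```

The common effect and random effects estimands do not contain these shares, so they are unaffected by the change in precision between outcomes.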

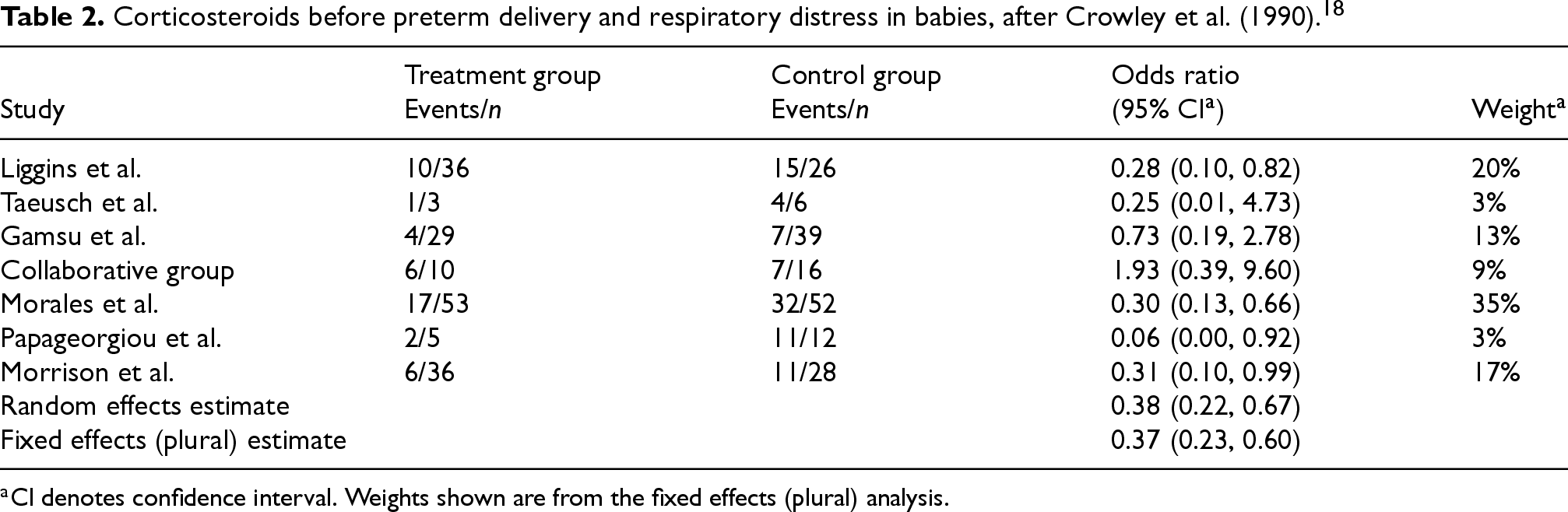

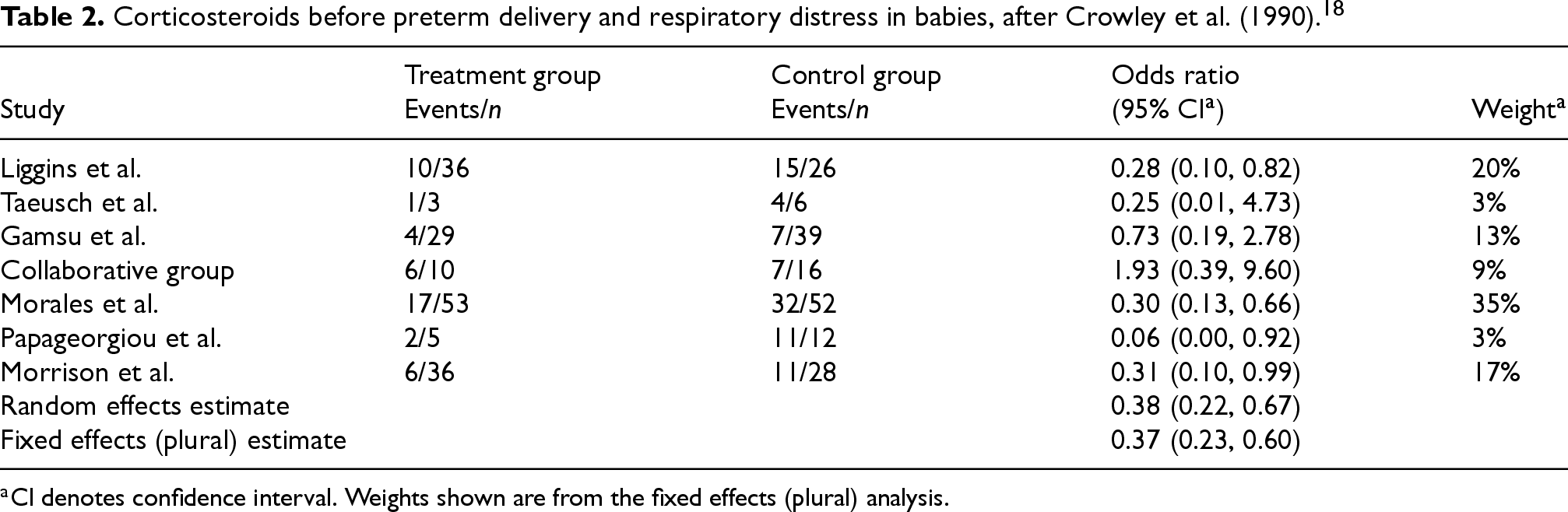

Crowley et al. 18 reviewed the literature on maternal corticosteroids before preterm delivery, including a meta-analysis of neonatal respiratory distress in seven trials. Table 2 shows the results of re-analysing the same data with random effects meta-analysis or fixed effects (plural) meta-analysis. Common effect meta-analysis yields the same numerical results as fixed effects (plural) meta-analysis and is not shown separately.


Corticosteroids before preterm delivery and respiratory distress in babies, after Crowley et al. (1990). 18

The common effect estimate is that the odds ratio for treated versus control children is

The fixed effects (plural) meta-analysis estimates a treatment effect in a meta-population that is 35% like the population studied by Morales et al., 20% like that studied by Liggins et al., 17% like that studied by Morrison et al., and so on. This composition of the meta-population is defined by the information sizes of the seven studies that were found in the systematic review. To this extent, the analysis has addressed a research question that was specified post hoc by the data obtained rather than by the investigators a priori.

The systematic review has been updated and re-analysed many times since the 1990 publication by Crowley, 19 each time yielding a revised estimate of the odds ratio. In the common effect (or ‘fixed-effect’, singular) framework used by the authors, these are revised estimates of the same population parameter. If the authors used a fixed effects (plural) framework, then each new estimate would be interpretable with respect to a different estimand relevant to a different population.
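The effect of an update on the fixed effects (plural) target can be illustrated with a small sketch. The true log odds ratios and variances below are hypothetical, not Crowley et al.'s. Adding one large study moves the estimand even though the original studies' true effects are unchanged. If every true effect were equal, as the common effect model assumes, the target would not move.

```python
def fe_plural_estimand(true_effects, variances):
    """Inverse-variance-weighted average of study-specific true effects."""
    w = [1.0 / v for v in variances]
    return sum(wi * t for wi, t in zip(w, true_effects)) / sum(w)

# Hypothetical true log odds ratios and variances for the studies in an early review.
theta = [-0.9, -0.5, -0.2]
var = [0.10, 0.20, 0.40]
before = fe_plural_estimand(theta, var)

# An update adds one large study; the original studies' true effects are unchanged.
after = fe_plural_estimand(theta + [-0.1], var + [0.05])

# If every true effect were equal (the common effect case) the target would not move.
common = fe_plural_estimand([-0.5] * 4, var + [0.05])
print(round(before, 3), round(after, 3), round(common, 3))
```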

The point has been made before that, when interpreting a systematic review, the choice of a common effect or a random effects method ‘affects the interpretation of the summary estimates’. 1 Common effect, and random effects, meta-analysis address slightly different research questions. Fixed effects (plural) meta-analysis addresses a different research question from either, studying an estimand that applies to a conceptual ‘meta-population’, and an argument has been made that this approach, too, can be informative. 6 The case studies illustrate that the ‘meta-population’ under study depends on the data obtained. Therefore, it will not be known at the time a systematic review protocol is written. Investigators considering this approach should be aware of this, and also that there is no guarantee that the same ‘meta-population’ will be under study across all primary and secondary analyses in the review. The Crowley example also illustrates the assumption, in common effect meta-analysis, that the population parameter is constant across different studies – in this instance, an odds ratio common to all relevant studies. With seven included studies, it also illustrates the difficulty of an untestable normal distribution assumption in many systematic reviews.

Simulation study

The argument is made in Section 3.3 that, even if the fixed effects (plural) model is a generalisation of the random effects model, estimates produced by the fixed effects (plural) method are not comparable with those from the random effects method. A simulation study is presented here, with the aim of comparing the behaviour of confidence intervals under the different methods.

The data generating method is a scheme that has been used by previous authors20,21 for the random effects model. I simulate values of

The estimands have been documented in Section 2. The methods of analysis were as follows. Common effect and fixed effects (plural) meta-analysis are conducted using the function rma.uni from the metafor package, 23 with option method

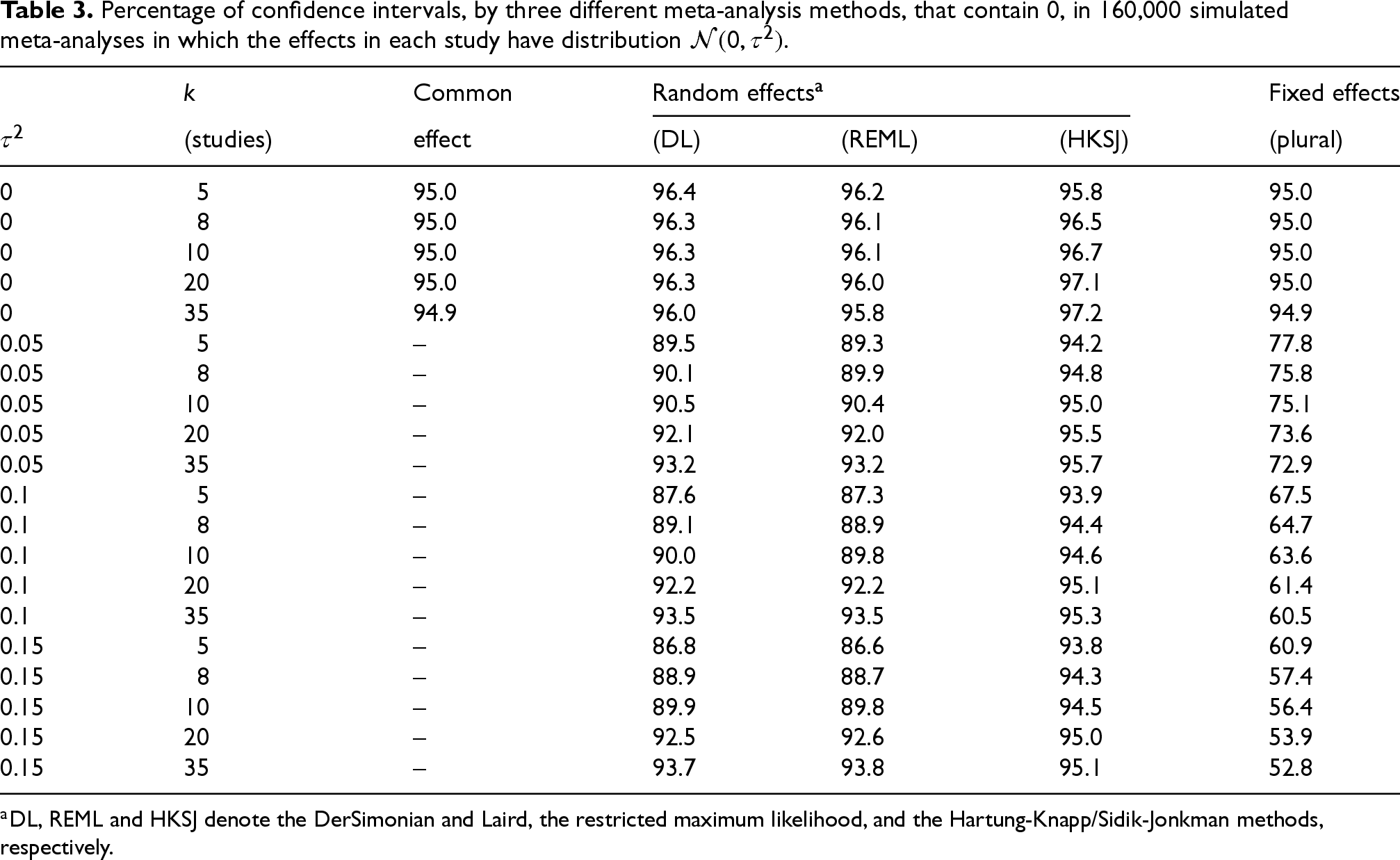

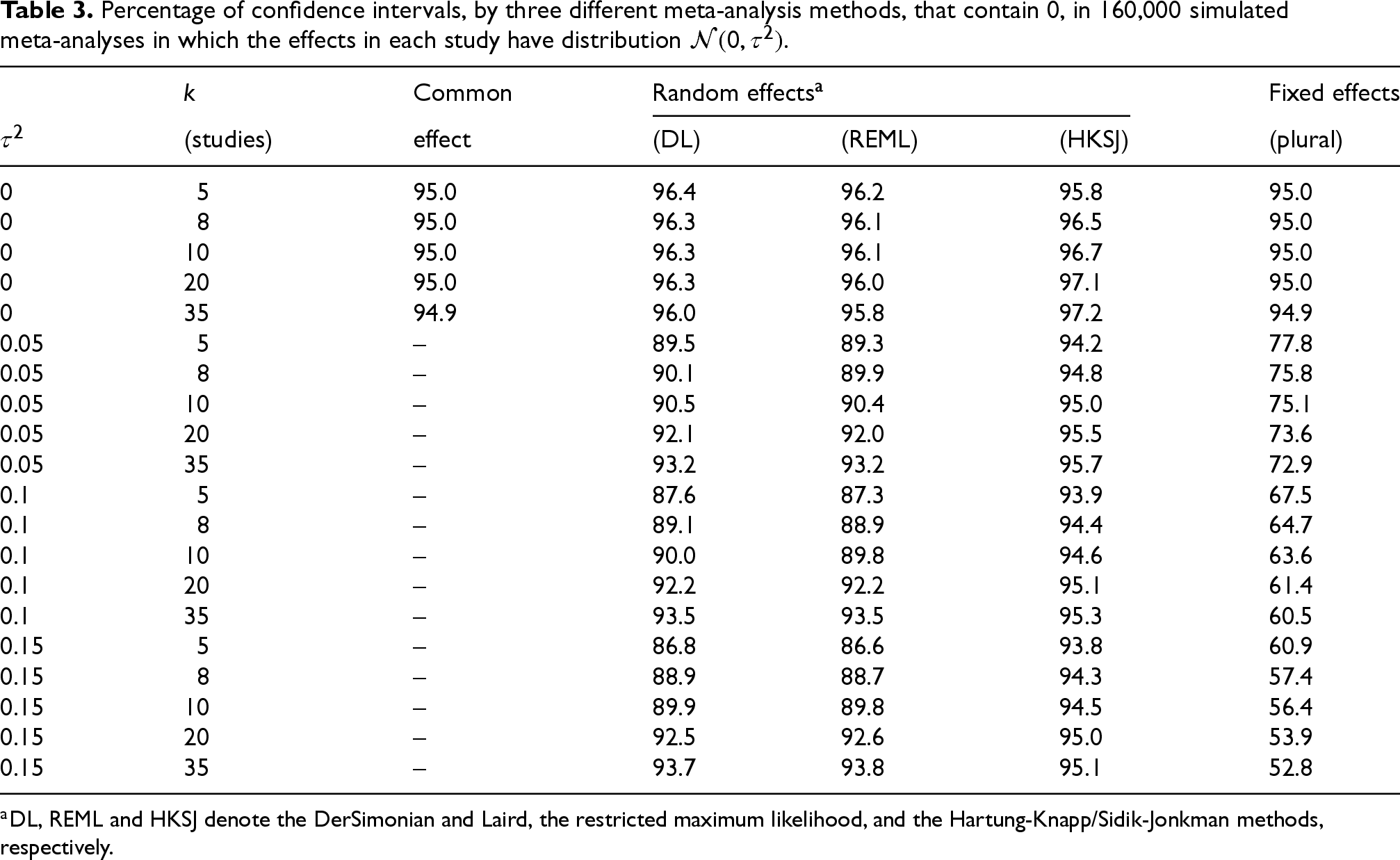

The measure of performance used was the proportion of confidence intervals that contain

Table 3 shows the percentage of 95% confidence intervals that contain

Percentage of confidence intervals, by three different meta-analysis methods, that contain

, in 160,000 simulated meta-analyses in which the effects in each study have distribution

.


The common effect model is only applicable when it is tenable to assume homogeneity of true effects, as has often been observed before. The random effects model is more widely applicable; systematic reviewers should be aware that good coverage properties for confidence intervals can depend in part on the specific random effects method chosen.25,26
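The sketch below is a small Monte Carlo experiment in the spirit of Table 3, but it does not reproduce the article's data-generating scheme. The following settings are all assumptions chosen for illustration: normal true effects θ_i ~ N(μ, τ²) with μ = 0, within-study variances drawn afresh from U(0.05, 0.5) in each replicate, k = 10 studies, 2,000 replicates and a fixed seed. The sketch reports only how often each interval contains μ. It compares the inverse-variance interval, which is numerically the same for the common effect and fixed effects (plural) methods when both use the inverse variance method, with the DerSimonian and Laird interval.

```python
import math
import random
from statistics import NormalDist

Z = NormalDist().inv_cdf(0.975)  # standard normal 97.5% quantile, about 1.96

def intervals(y, v):
    """95% intervals for one meta-analysis: (inverse-variance, DerSimonian-Laird)."""
    k = len(y)
    w = [1.0 / vi for vi in v]
    sw = sum(w)
    ce = sum(wi * yi for wi, yi in zip(w, y)) / sw
    q = sum(wi * (yi - ce) ** 2 for wi, yi in zip(w, y))          # Cochran's Q
    c = sw - sum(wi * wi for wi in w) / sw
    tau2 = max(0.0, (q - (k - 1)) / c)                            # DL moment estimate
    w_re = [1.0 / (vi + tau2) for vi in v]
    s = sum(w_re)
    mu_hat = sum(wi * yi for wi, yi in zip(w_re, y)) / s
    iv = (ce - Z / math.sqrt(sw), ce + Z / math.sqrt(sw))
    dl = (mu_hat - Z / math.sqrt(s), mu_hat + Z / math.sqrt(s))
    return iv, dl

def coverage_of_mu(tau2, k=10, reps=2000, mu=0.0, seed=1):
    """Proportion of intervals containing mu under an assumed random effects scheme."""
    rng = random.Random(seed)
    hits_iv = hits_dl = 0
    for _ in range(reps):
        v = [rng.uniform(0.05, 0.5) for _ in range(k)]               # variances arise at random
        theta = [rng.gauss(mu, math.sqrt(tau2)) for _ in range(k)]   # study-specific true effects
        y = [rng.gauss(t, math.sqrt(vi)) for t, vi in zip(theta, v)]
        (a, b), (c, d) = intervals(y, v)
        hits_iv += a <= mu <= b
        hits_dl += c <= mu <= d
    return hits_iv / reps, hits_dl / reps

for tau2 in (0.0, 0.25, 1.0):
    iv_cov, dl_cov = coverage_of_mu(tau2)
    print(f"tau^2 = {tau2}: inverse-variance {iv_cov:.3f}, DerSimonian-Laird {dl_cov:.3f}")
```

With τ² = 0 the inverse-variance interval should cover μ close to 95% of the time. As τ² grows its coverage of μ falls well below nominal, while the DerSimonian and Laird interval degrades less. Like the article, this sketch does not report coverage of the data-dependent fixed effects (plural) estimand.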

More interestingly, the results in Table 3 provide a quantitative demonstration that the fixed effects (plural) method is profoundly different, even if the model is a generalisation of both the others. The results in the last column show that inference about the estimand

Objections could arise that this simulation is not valid for the fixed effects (plural) method, either because the data were simulated from the wrong model, or because the wrong results are reported. It cannot be that the simulation is invalid for the fixed effects (plural) model: if it is valid for the random effects model (3), then it is valid for (6), because the latter is more general. Regarding the reporting, the data presented are fit for their purpose, which is to demonstrate quantitatively the differences between the methods. The reporting would be wrong only if the last column were misrepresented as coverage probabilities for the fixed effects (plural) target estimand.

I have declined to present results for estimation of

Discussion

Summary of findings

The fixed effects (plural) approach is different from others in that the estimand is not defined until the systematic review is complete, the studies identified and their numerical information extracted. This is in contrast to the claim of Rice et al. (their Section 4, line 1) that the estimand is a ‘well-defined’ population parameter; instead, it is a data-dependent quantity. The case studies (Section 4) illustrate this, and the ancillarity arguments in Section 3 clarify how this arises. The mathematics also show that this is not the case for the common effect or random effects approach to meta-analysis.

The simulation study illustrates that these abstract points affect the applicability of the confidence intervals from a meta-analysis. The confidence intervals from a common effect meta-analysis or random effects meta-analysis are designed to have

To my knowledge, there is no other method in statistics in which the target of estimation is data-dependent. From a statistical perspective, confidence intervals in hierarchical data should reflect every level of sampling uncertainty. In evidence-based medicine, the research question – including the population of interest – is expected to be specified in the protocol, not defined by the data collected. This is in part because we at least aspire to estimate benefits and harms with respect to the same population (it is true that in any systematic review, publication bias and reporting bias may make this difficult; this is usually regarded as a problem, or at least an undesirable limitation). In Section 4.1, we saw that the fixed effects (plural) method could not give us a consistent population of interest, even when analysing the same samples for different clinical outcomes.

Limitations

The arguments here are almost entirely concerned with estimation, omitting hypothesis testing for reasons of length. The simulation study is likewise limited, considering only a null central effect parameter and normal distributions; nevertheless it is sufficient to demonstrate the differences between approaches.

There are more approaches to meta-analysis than are considered here. There are advocates for unweighted means 10 and for weighting by quality of paper. 27 There are random effects meta-analysis methods that make different distributional assumptions from (3).28,29 There are advocates for using the precision-weighted mean even while making a random effects assumption. 20 Some authors have sought to account for between-study heterogeneity without using the term ‘random effects’ to describe their approach. 30 In this article, I have restricted attention to three model-based approaches to meta-analysis, and the numerical findings in the case studies and the simulation study do not fully explore the wide variety of methods available for the random effects model in particular. The poor coverage properties of this random effects approach in some scenarios should not be over-interpreted: see elsewhere for better examinations of the coverage properties of random effects meta-analysis methods.25,26

The use of likelihood arguments in Section 3 is not intended to imply a preference for likelihood estimation in meta-analysis. The finding that fixed effects (plural) meta-analysis requires a constant variance assumption is not restricted to a likelihood framework, as discussed in Section 3.4.

My treatment is primarily concerned with the estimation of effect measures. By extension, the results also have some relevance to testing hypotheses about these effect measures. These findings will be less relevant to investigators whose sole or primary interest is in quantifying heterogeneity.

Other applications of meta-analysis

The treatment here is intended to be applicable to the analysis of data obtained by systematic review. Another application of meta-analysis is the analysis of a multi-centre study. Here the

Thus, the difficulties with applying fixed effects (plural) meta-analysis to systematic review data are specific to systematic reviews, and do not arise when analysing a multi-centre study by this method.

Relation to the literature

Consideration of the different estimands in the common effect model and random effects model for meta-analysis is far from new. 1 There is more novelty in the study of the fixed effects (plural) estimand. Its behaviour across outcome measures, seen in the case studies, is implicit in its definition but to my knowledge has not been made explicit before. The ancillarity of the study-specific variances for common effect meta-analysis and random effects meta-analysis may not have been formally demonstrated before, but could be expected by analogy with the ancillarity of sample size in primary studies. That they are not ancillary for the estimand of the fixed effects (plural) approach is, I believe, novel. The simulation study demonstrates that this finding has practical as well as theoretical importance. This is the main contribution of the simulation study, since the results for common effect and random effects meta-analysis are not novel.

Conclusions

Systematic review investigators considering fixed effects (plural) meta-analysis must be aware that it is not simply common effect meta-analysis with a new rationale. The two should not be confused, even though the common effect method was once known as ‘fixed effect’ meta-analysis, because their targets of estimation differ. Nor is fixed effects (plural) meta-analysis a generalisation of random effects meta-analysis, again because the estimand is different, but also because it requires a new assumption. It is the only approach to meta-analysis in which systematic review investigators need to reflect on whether they consider the standard errors recorded on their data extraction sheets to be ‘data’ or ‘constants’.

Systematic review investigators planning a meta-analysis should consider the usefulness of the different estimands. The usefulness and interpretation of the estimand in the random effects model have been much discussed, including in the many papers cited here. The applicability and interpretation of the fixed effects (plural) estimand, and of the ‘meta-population’ it represents, are defined only post hoc, and this conceptual difficulty is reflected in its confidence intervals. It cannot be recommended for use in systematic review.

Footnotes

Acknowledgements

I am grateful to Jake Olivier of University of New South Wales, and Thomas Fanshawe, Rafael Perera, Jason Oke, and the medical statistics group at Nuffield Department of Primary Care Health Sciences, for comments that have assisted the direction of this paper. This research was funded by the National Institute for Health and Care Research (NIHR) Applied Research Collaboration Oxford and Thames Valley at Oxford Health NHS Foundation Trust. The views expressed are those of the author(s) and not necessarily those of the NHS, the NIHR or the Department of Health and Social Care.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.