Abstract

Detecting internet hate speech automatically is an important but difficult task that is recognised as ethically problematic. In contrast to typical computer science approaches, the current study focuses on psychologically meaningful aspects of language rather than on terms pre-defined as hateful. The data consist of the naturally occurring discourse of a gender critical feminist group banned from the Reddit discussion platform for promoting hate based on identity; this is compared with the discourse of a Reddit feminist group that has not been banned. Notable psychologically meaningful terms of the gender critical group include third-person plural pronouns and metonymic acronyms that reference the gender critical outgroup, which may represent outgroup derogation and outgroup homogeneity. It is noted that the banned forum, which is shown to be an online community, may be responding to threats to identity in recognised ways. It is concluded that a socio-cognitive discourse approach to hate speech detection may help address related ethical concerns, including potential social injustice.

Keywords

Introduction

Hate speech is a complex social phenomenon that is intrinsically related to social identity, including relationships between groups. In comparison to offline hate, online hate may be more anonymous and more permanent, and may spread quickly to a large audience (Stop Hate, 2023). In internet-facilitated social groups there may also be echo chamber effects, in which beliefs are reinforced by their repetition and amplification within a closed system. Such effects have been much discussed as an internet-related problem, including that they may have repercussions in the offline world (Garrett, 2009; O’Hara and Stevens, 2015).

In the area of computer science there has been increasing interest in automatic detection of internet-based hate speech, which is recognised as a difficult task. The most common computer science approach is supervised machine learning (Fortuna and Nunes, 2019; Schmidt and Wiegand, 2017), which is dependent on human annotators, such that there may be limited data, and inconsistency of classification (Ross et al., 2016; Waseem, 2016). The focus has been on hate speech related to racism, anti-refugee sentiment, politics, and homophobia (Fortuna and Nunes, 2019; Schmidt and Wiegand, 2017). However, the internet is also rife with misogynistic discourse (Ging and Siapera, 2018), such that women may be pushed out of digital spaces, and the topics they are allowed to address publicly are constrained and limited. As well as being problematic for individuals this is an issue of social justice (Sobieraj, 2018), such that Lillian (2007) has called for sexist discourse to be specifically recognised as hate speech.

For example, on the prominent discussion website Reddit (Reddit, 2022), sexist behaviour is identified as a particular concern (u/worstnerd, 2022). In both women-oriented and non-women-oriented Reddit communities around 0.3% of content is hateful content directed at women, such that many surveyed participants limit their engagement in women-oriented communities specifically to reduce the likelihood that they will be identified as women and consequently be subject to sexist abuse. Participants may use alternative accounts, or delete their comment and post history, so that such participation is not noticeable: accounts perceived as women are 30% more likely to receive hateful responses in non-women-oriented communities, and 61% more likely to be sent a hateful reply on their first direct communication with another participant (u/worstnerd, 2020). This may be related to the finding that men are much more likely than women to post hate speech messages (Dadvar et al., 2012; Waseem and Hovy, 2016).

The complex social issue of hate speech is explored in the current study in relation to the r/GenderCritical forum (subreddit) banned by Reddit in June 2020 for violating Reddit rule 1, titled ‘Remember the human’, which states that ‘Communities and users that promote hate based on identity or vulnerability will be banned’ (Reddit, 2022). At the time of publishing these guidelines Reddit banned around 2000 subreddits for having consistently broken them, where subreddits are participant instigated and managed sub-fora relating to specific topics. While the detail of the Reddit method for identifying hate speech is not public, Reddit does state that their method is based on locating keywords identified as hateful, from a range of sources. Reddit acknowledges that it is difficult to accurately identify hateful language at scale, including for example that marginalised groups may use language in a way that is acceptable for them but not others (u/worstnerd, 2020).

The banned r/GenderCritical group subsequently relocated to an invitation-only forum at the independent website ovarit.com, on which being gender critical is defined as the set of beliefs that: (i) sex is biological; (ii) patriarchal oppression of women is based in both biological sex and the social expression of gender; and therefore (iii) women should have the right to organise on the basis of biological sex, including single-sex spaces and activities (ovarit.com, 2020). The conflicting non-essentialist view is that gender is not dependent on biological sex, and therefore is fluid, which in the UK is encoded in the Equality Act (Equality and Human Rights Commission, 2010). These differences in understanding of gender are a dominant social issue that continues to have a deep social impact, such that it is currently being addressed in law. For example, in response to severe disruptions in the personal and professional lives of two female academics at the University of Essex, UK, who expressed gender critical views, a judge-led tribunal panel into the ‘no-platforming’ of said academics by the University ruled that the gender critical stance is a philosophical belief that also should be protected under the UK Equality Act (Reindorf, 2020).

The gender critical issue is of fundamental importance in relation to identity for groups on either side of the debate. In the social identity approach to inter-group relations (Tajfel, 1978), an ingroup is a social group with which an individual identifies; an outgroup is a social group with which they do not identify. Related psychological phenomena include outgroup derogation, which has been linked to threat to social identity of the ingroup (Branscombe and Wann, 1994; Schlueter et al., 2008); and ingroup favouritism (Everett et al., 2015; Moscatelli and Rubini, 2021). For example, self-exclusive pronouns such as ‘they’, which may reference the outgroup, have been found to be consistently less selected for more positive contexts than self-inclusive pronouns such as ‘we’ and ‘I’, which reference the ingroup, with the first-person singular pronoun ‘I’ having more positive associations than the first-person plural pronoun ‘we’ (Gustafsson Sendén et al., 2014). Related to this, outgroups are generally seen as less variable or diverse than the relevant ingroup, a phenomenon referred to as outgroup homogeneity, even in situations in which there is no difference in frequency of exemplars for each group (Judd and Park, 1988). Hate speech may be related to asserted superiority of the ingroup, as well as othering or asserted inferiority of the outgroup (Warner and Hirschberg, 2012).

Lu and Jurgens (2022) investigate the ‘hateful masked rhetoric’ of gender critical discourse specifically, which they argue may appear positive, that is, in terms of promoting women’s safety and rights, but is actually transphobic. They identify such rhetoric as three themes: (i) ‘bad-faith arguments’; (ii) ‘concerns about transgender women competing in women’s sport’; and (iii) ‘biological essentialist exclusion of transgender women’ (Lu and Jurgens, 2022: 85). Lu and Jurgens produce supervised machine learning models to locate such ‘hateful’ language, to create a publicly accessible ‘TERFblocklist’ of ‘TERF’ social media user identities that may be used to block ‘TERFs’ from social media settings, where terf is a metonymic acronym of ‘trans-exclusionary radical feminist’ that is commonly used to refer to those who are gender critical. While the acronym terf was not originated as a slur, it is typically understood as a slur (Allen et al., 2018; Davis and McCready, 2020; Pearce et al., 2020), where a slur predicates membership in a social group ‘G’ while ‘simultaneously invoking a complex of historical facts and social attitudes about G’ (Davis and McCready, 2020: 63). The supervised machine learning models of Lu and Jurgens are trained on data hand-annotated by the two investigators. This may be a source of notable bias, including a potentially hateful stance, since in addition to the stated remit of their research, that is, to build a ‘TERF classifier’, the academic discourse of Lu and Jurgens itself positions those who are gender critical as an outgroup. This is visible in the use of the third-person pronoun their predominantly to negatively reference those who are gender critical as a group, for example ‘their attacks’, ‘their masked rhetoric’, and ‘their lines of argumentation’, and in the fact that Lu and Jurgens otherwise reference those who are gender critical exclusively with the metonymic acronym ‘TERF’, for example ‘Building a TERF classifier’. 
Metonymy, in which one aspect of something is used to stand for that thing as a whole, is a basic characteristic of cognition, and a major source of prototype effects (Lakoff, 2008). Metonymy applied to social categories is typically understood as having a dehumanising effect, in that it represents a single property of the referent as having dominant importance, such that the referent is stereotyped, or caricatured (Lakoff, 2008), which may be related to outgroup homogeneity, discussed previously.

While automatic identification of internet hate speech is important, the potential for bias of such models is a serious concern (Mathew et al., 2022), with social justice issues relating to natural language processing in general including exclusion, demographic misrepresentation, and bias confirmation (Hovy and Spruit, 2016). The current study takes a computational approach to this issue that, unlike typical computer science approaches, is based within an overarching Socio-cognitive Discourse Studies (SCDS) stance (van Dijk, 2017). SCDS builds on a cognitive linguistics approach, in which language is understood as representing our embodied interaction with the world context in which we live, such that the categories we express in language may be understood as underpinned by cognition (Lakoff, 2008). In SCDS, discourse structures are understood as relating to social structures via a socio-cognitive interface (van Dijk, 2017). In practical terms, this means that attention is paid to ‘the attitudes and ideologies of language users as current participants of the communicative situation and as members of social groups and communities’ (van Dijk, 2017: 28).

Methods

Natural language processing

In the current study, language data is processed using the spaCy natural language processing Python library (Honnibal and Montani, 2021), which uses statistical language models to process text according to its context. The spaCy parser has high accuracy, which is evaluated rigorously. Its English part of speech tagger uses the OntoNotes5 version of the Penn Treebank tag set, and tags are mapped to the Universal Dependencies v2 POS tag set. This allows terms to be analysed as the relevant parts of speech, for example for the topic modelling analysis of the current study only noun lemmas are included.
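
The noun-lemma filtering step described above can be sketched as follows. This is a minimal illustration and not the study's own code: the `Tok` class is a hypothetical stand-in for a tagged token, and the choice to keep only the `NOUN` tag (excluding proper nouns) is an assumption. spaCy tokens expose the same `lemma_` and `pos_` attributes, so the same function could in principle be applied to a parsed spaCy document.

```python
from typing import Iterable, List, NamedTuple, Sequence


class Tok(NamedTuple):
    """Hypothetical stand-in for a tagged token (mirrors spaCy's attribute names)."""
    lemma_: str
    pos_: str


def noun_lemmas(tokens: Iterable, keep_pos: Sequence[str] = ("NOUN",)) -> List[str]:
    """Return lower-cased lemmas of tokens whose coarse POS tag is in keep_pos."""
    return [t.lemma_.lower() for t in tokens if t.pos_ in keep_pos]


# Hand-tagged example so that the sketch runs without a language model installed.
example = [
    Tok("woman", "NOUN"),
    Tok("organise", "VERB"),
    Tok("space", "NOUN"),
    Tok("they", "PRON"),
]
print(noun_lemmas(example))  # ['woman', 'space']

# With spaCy (assumes the 'en_core_web_sm' model is installed):
#   import spacy
#   nlp = spacy.load("en_core_web_sm")
#   noun_lemmas(nlp("Women organise in single-sex spaces."))
```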

In addition, for analyses where noun lemmas only are included, typical bigrams and trigrams have been located, which provide information about word order for terms typically used together. In the current investigation n-grams are processed using the Gensim Python library Phraser (Řehůřek, 2022), which automatically detects n-gram collocations from a stream of sentences.
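
To illustrate the general idea of collocation detection, the sketch below scores adjacent word pairs by how often they co-occur relative to the frequencies of their parts, and joins high-scoring pairs with an underscore. It is a pure-Python illustration only: the scoring formula, the `min_count` and `threshold` values, and the toy sentences are assumptions, and the code is not claimed to reproduce Gensim's internals or the settings used in the study.

```python
from collections import Counter
from typing import Dict, List, Tuple


def score_bigrams(sentences: List[List[str]], min_count: int = 1) -> Dict[Tuple[str, str], float]:
    """Score each adjacent pair: (pair count - min_count) * vocab size / (count a * count b)."""
    unigrams: Counter = Counter()
    bigrams: Counter = Counter()
    for sent in sentences:
        unigrams.update(sent)
        bigrams.update(zip(sent, sent[1:]))
    vocab_size = len(unigrams)
    return {
        (a, b): max(n_ab - min_count, 0) * vocab_size / (unigrams[a] * unigrams[b])
        for (a, b), n_ab in bigrams.items()
    }


def merge_bigrams(sentence: List[str], scores: Dict[Tuple[str, str], float], threshold: float) -> List[str]:
    """Greedily join adjacent pairs whose score exceeds the threshold."""
    out: List[str] = []
    i = 0
    while i < len(sentence):
        pair = tuple(sentence[i:i + 2])
        if len(pair) == 2 and scores.get(pair, 0.0) > threshold:
            out.append("_".join(pair))
            i += 2
        else:
            out.append(sentence[i])
            i += 1
    return out


# Toy noun-lemma sentences (invented for illustration).
sentences = [
    ["gender", "identity", "term"],
    ["gender", "identity", "definition"],
    ["gender", "stereotype"],
    ["identity", "word"],
]
scores = score_bigrams(sentences, min_count=1)
print(merge_bigrams(sentences[0], scores, threshold=0.5))  # ['gender_identity', 'term']
```

Applying the same procedure a second time to the merged output is one way in which trigrams could be obtained from bigrams.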

Topic modelling

The study starts with the unsupervised machine learning technique ‘topic modelling’ to identify core discourse topics and terms, with a special interpretive focus on potential metonymy, which as discussed previously may have particular relevance in discourse relating to social groups. In comparison to the supervised machine learning methods typically used for automatic detection of hate speech, this approach requires no data annotation, which as discussed previously may be biased and inconsistent, and it does not rely on terms previously identified as hateful. The topic modelling method used in the current study is Latent Dirichlet Allocation (LDA), a probabilistic model for finding groups of words that frequently appear together (Scikit-learn.org, 2021), which works well for the data in the current investigation in which each forum post is organised as a separate entity. The Gensim Python library ldamallet LDA model (Řehůřek, 2022) is used. A maximum of 10 topics is sought for each forum, plus an additional topic 0 – an overarching analysis that ranks all relevant terms from each forum. Topic numbers subsequent to 0 rank the prevalence of that topic in the data, and the terms within any topic are listed in order of relevance within that topic, where relevance takes into account both frequency, and exclusivity to the topic (Sievert and Shirley, 2014). In order to focus on the concepts and concerns of each group, for the topic modelling analysis of the current study just the noun lemmas were included, with bigrams and trigrams sought within these.
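
The relevance ranking of terms within a topic, which balances frequency against exclusivity, can be sketched using the measure defined by Sievert and Shirley (2014): relevance = λ · log p(w|t) + (1 − λ) · log(p(w|t) / p(w)). In the sketch below the weight λ = 0.6 and all probabilities are assumptions chosen for illustration; the value of λ used in the study is not stated.

```python
import math
from typing import Dict, List


def relevance(p_w_given_t: float, p_w: float, lam: float = 0.6) -> float:
    """Relevance of a term to a topic; lam weights frequency against exclusivity."""
    return lam * math.log(p_w_given_t) + (1 - lam) * math.log(p_w_given_t / p_w)


def rank_terms(topic_probs: Dict[str, float], corpus_probs: Dict[str, float], lam: float = 0.6) -> List[str]:
    """Order the terms of one topic by decreasing relevance."""
    return sorted(
        topic_probs,
        key=lambda w: relevance(topic_probs[w], corpus_probs[w], lam),
        reverse=True,
    )


# Invented probabilities: 'pronoun' is rarer in the topic but far more exclusive to it.
topic_probs = {"people": 0.10, "pronoun": 0.05, "thing": 0.08}
corpus_probs = {"people": 0.10, "pronoun": 0.005, "thing": 0.09}

print(rank_terms(topic_probs, corpus_probs, lam=0.6))  # ['pronoun', 'people', 'thing']
print(rank_terms(topic_probs, corpus_probs, lam=1.0))  # ['people', 'thing', 'pronoun']
```

With λ = 1 the ranking reduces to within-topic probability alone, which shows why a lower λ brings topic-specific terms to the top.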

Sentiment analysis

The focus on psychologically significant aspects of language continues with sentiment analysis of forum posts, which in the current study is based on a ‘transformer’ deep learning language model (Vaswani et al., 2017) that evaluates tokens according to their context, such that the same token may have a different value in different contexts. The Hugging Face Python library (Hugging, 2022) is used here for this analysis, with a RoBERTa-base language model trained on 123.86M tweets from January 2018 to December 2021, to classify text items with sentiment values (cardiffnlp, 2022). This language model returns three values for each text analysed, representing the proportion of that text that is negative, neutral, or positive, with these values summing to 1. This approach to sentiment classification contrasts with the established lexicon-based methods TextBlob (Loria, 2022) and LIWC (Linguistic Inquiry and Word Count) (Pennebaker et al., 2015), which pre-assign an emotional valence to each word included in their lexicons. Recognised limitations of such lexicon-based approaches include that they cannot analyse complex language structures, and do not take into account the communicative context of the words that they count.
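
The sketch below shows one way in which three sentiment values summing to 1 can be obtained and a predominant label chosen. It rests on stated assumptions: that the classifier's raw outputs are converted with a softmax, that the label order is negative, neutral, positive, and that ‘predominant’ means the largest of the three values. The input scores are invented, and no model is loaded.

```python
import math
from typing import List, Sequence

LABELS = ("negative", "neutral", "positive")  # assumed label order


def softmax(logits: Sequence[float]) -> List[float]:
    """Convert raw scores into non-negative values that sum to 1."""
    m = max(logits)  # subtract the maximum for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def predominant(scores: Sequence[float]) -> str:
    """Return the label with the largest sentiment value."""
    return LABELS[max(range(len(scores)), key=lambda i: scores[i])]


raw = [2.0, 0.5, -1.0]  # invented raw outputs for one post
scores = softmax(raw)
print([round(s, 3) for s in scores], predominant(scores))
```

A threshold such as ‘negative value greater than 0.9’ could then be applied directly to the first of the three values.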

Keyword analysis

In keyword analysis the frequency of terms in a corpus of focus is compared to their frequency in a reference corpus, a method typically used to explore what a corpus is ‘about’. In comparison to the topic modelling analysis discussed previously, keyword analysis in the current study treats each separate corpus as a single text, such that there is no distinction between separate posts. In the current study the AntConc corpus analysis software (Anthony, 2022) is used to calculate the various keyword analyses. Log likelihood (p < 0.0001) is used to identify terms with a significantly different density of use in each corpus. Unlike the topic modelling analysis, in which only noun lemmas are included, the keyword analyses include all terms, in order to consider subject positions in relation to social groups and identity, for example as represented in personal pronouns.
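
The log-likelihood keyness statistic can be sketched as below, using the common two-term form in which observed frequencies in each corpus are compared with the frequencies expected if the term were equally dense in both. This is an illustration under assumptions: the exact AntConc settings used in the study are not stated, the function names are hypothetical, and the counts are invented. The critical value 15.13 corresponds to p < 0.0001 with one degree of freedom.

```python
import math

CRITICAL_G2_P0001 = 15.13  # chi-square critical value, 1 d.f., p < 0.0001


def log_likelihood(freq_focus: int, size_focus: int, freq_ref: int, size_ref: int) -> float:
    """Two-term log-likelihood (G2) for one term across a focus and a reference corpus."""
    total = size_focus + size_ref
    combined = freq_focus + freq_ref
    expected_focus = size_focus * combined / total
    expected_ref = size_ref * combined / total
    g2 = 0.0
    for observed, expected in ((freq_focus, expected_focus), (freq_ref, expected_ref)):
        if observed > 0:  # a zero count contributes nothing to the sum
            g2 += observed * math.log(observed / expected)
    return 2 * g2


def is_keyword(freq_focus: int, size_focus: int, freq_ref: int, size_ref: int) -> bool:
    """True if the term is significantly denser in the focus corpus than in the reference."""
    denser = freq_focus / size_focus > freq_ref / size_ref
    return denser and log_likelihood(freq_focus, size_focus, freq_ref, size_ref) > CRITICAL_G2_P0001


# Invented counts: a term used 100 times per 1,000 tokens versus 10 times per 1,000 tokens.
print(round(log_likelihood(100, 1000, 10, 1000), 2), is_keyword(100, 1000, 10, 1000))
```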

Personal pronouns

Personal pronouns have been shown in a wide range of studies to have social and psychological significance (Tausczik and Pennebaker, 2010). The analytic focus in the current study is on: (i) the first-person singular pronouns I and my, which reference the self alone; (ii) the first-person plural pronouns we and our, representing social connection to the group; (iii) the second-person pronouns you and your, which in the contexts explored here may reference other participants on the forum; and (iv) the third-person plural pronouns they and their, which have been associated with social interests and outgroup awareness (Tausczik and Pennebaker, 2010).
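
As an illustration of how the density of these pronoun groups might be measured, the sketch below counts each group per 1,000 tokens of a text. The group names, the simple regular-expression tokeniser, and the per-1,000 normalisation are all assumptions made for this example and are not taken from the study.

```python
import re
from collections import Counter
from typing import Dict

# The four pronoun groups named in the text; the group labels are hypothetical.
PRONOUN_GROUPS = {
    "first_singular": {"i", "my"},
    "first_plural": {"we", "our"},
    "second": {"you", "your"},
    "third_plural": {"they", "their"},
}


def pronoun_density(text: str) -> Dict[str, float]:
    """Occurrences of each pronoun group per 1,000 tokens (naive tokenisation)."""
    tokens = re.findall(r"[a-z']+", text.lower())
    counts: Counter = Counter()
    for tok in tokens:
        for group, forms in PRONOUN_GROUPS.items():
            if tok in forms:
                counts[group] += 1
    n = len(tokens) or 1  # avoid division by zero for empty text
    return {group: 1000 * counts[group] / n for group in PRONOUN_GROUPS}


density = pronoun_density("they said we like their idea")
print({group: round(value, 1) for group, value in density.items()})
```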

Change over time

The SciPy linregress method (SciPy, 2022) is used here to calculate linear least-squares regression, reporting the correlation coefficient (r), for the use of specific terms (i) by month over time; and (ii) with increased participation on the forum, based on the calculated participant post index (ppi), which for each person represents the order in which their posts were made, for example, first, second, etc. The ppi calculations apply across all threads on the forum, across all participants, and across all months of data, and represent level of participation on the forum. Interpretation of r is made such that an absolute value of 0.3 is taken to represent a weak relationship; 0.5 represents a moderate relationship; and 0.7 represents a strong relationship.
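
The participant post index and the interpretation of r can be sketched as follows. This is a pure-Python illustration with invented data: the function names are hypothetical, the correlation is computed directly rather than with SciPy (the value is the same quantity that `scipy.stats.linregress` returns as `rvalue`), and treating 0.3, 0.5 and 0.7 as lower bounds of bands, with a ‘negligible’ band below 0.3, is an assumption.

```python
import math
from collections import defaultdict
from typing import List, Sequence, Tuple


def participant_post_index(posts: Sequence[Tuple[str, float]]) -> List[int]:
    """For (participant_id, timestamp) pairs, return each post's rank within its author's posts."""
    order = sorted(range(len(posts)), key=lambda i: posts[i][1])  # chronological order
    seen = defaultdict(int)
    ppi = [0] * len(posts)
    for i in order:
        author = posts[i][0]
        seen[author] += 1
        ppi[i] = seen[author]  # 1 = first post by this participant, 2 = second, ...
    return ppi


def pearson_r(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Correlation coefficient of a simple linear least-squares fit of ys on xs."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)


def strength(r: float) -> str:
    """Label |r| using the thresholds given in the text (banding is an assumption)."""
    size = abs(r)
    if size >= 0.7:
        return "strong"
    if size >= 0.5:
        return "moderate"
    if size >= 0.3:
        return "weak"
    return "negligible"


posts = [("a", 3), ("b", 1), ("a", 1), ("a", 2)]  # invented (participant, timestamp) pairs
print(participant_post_index(posts))  # [3, 1, 1, 2]
print(strength(pearson_r([1, 2, 3, 4], [2, 4, 6, 8])))  # strong
```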

Online community

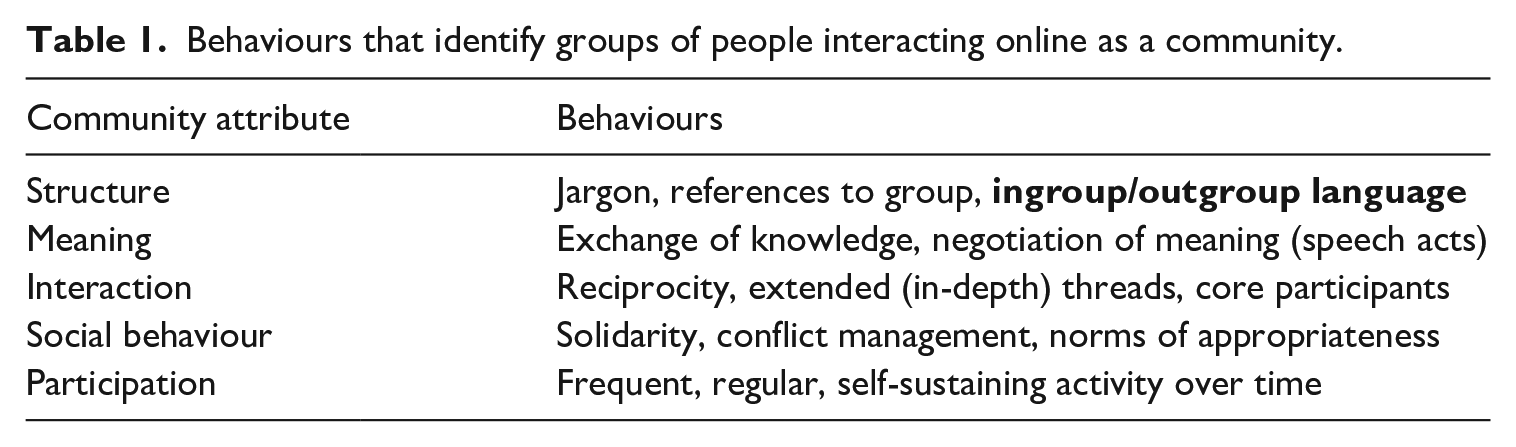

In addition, throughout this study, consideration is given to whether r/GenderCritical can be considered to be an online community, which has relevance in terms of social identity. This is based on relevant discourse behaviours, including structure, meaning, interaction, social behaviour, and participation (Herring, 2004), criteria for which are summarised in Table 1, in which it can be seen that ingroup/outgroup language is part of the structure criterion.

Table 1. Behaviours that identify groups of people interacting online as a community.

Data

The main data used in the current study comes from the Reddit r/GenderCritical forum (1,397,991 posts made by 51,583 participants over 9 years to 2020), which, as discussed previously, was banned by Reddit in June 2020 for promotion of hate based on identity. For the purpose of locating potentially hateful speech in r/GenderCritical, comparison is made with r/Feminism (496,530 posts made by 100,909 participants over 13 years to 2022), which has not been banned. r/Feminism is similarly focused on feminist concerns, but unlike r/GenderCritical is not characterised by the specific gender critical stance on gender identity. The data in each comparative dataset consists of all posts made on each forum, which were collected in 2022 via the pushshift.io API (Baumgartner, 2022), which provides access to an ongoing archive of all Reddit posts. Each post is stored as a separate entity, alongside the date and time the post was made, and the anonymised id of the participant who made it.

It is notable that r/GenderCritical participants make more than five times as many posts on average (27) as r/Feminism participants (5). There are also more long-established participants on r/GenderCritical, where 4.52% of participants have made more than 100 posts, in comparison to 0.86% of r/Feminism participants. Thus r/GenderCritical may be said to meet the participation criterion for identification as an online community, which requires ‘Frequent, regular, self-sustaining activity over time’, and the interaction criterion, which requires ‘core participants’ (Herring, 2004).
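
The per-participant averages quoted above follow directly from the corpus totals given for each forum, as this short check shows. The variable names are hypothetical; the figures are those reported in the text.

```python
# Corpus totals as reported for each forum.
gc_posts, gc_participants = 1_397_991, 51_583
fem_posts, fem_participants = 496_530, 100_909

gc_mean = gc_posts / gc_participants      # mean posts per r/GenderCritical participant
fem_mean = fem_posts / fem_participants   # mean posts per r/Feminism participant
ratio = gc_mean / fem_mean

print(round(gc_mean), round(fem_mean), ratio > 5)  # 27 5 True
```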

Ethical considerations

Reddit specifically allows its data to be used for research, and is a data source for a wide range of research. In addition, the British Association for Applied Linguistics good practice guidelines (BAAL, 2021) state that it is acceptable from an ethics viewpoint to use linguistic frequency information from internet discourse, which is the approach of the current study, without specific consent. The British Psychological Society ethics guidelines for internet-mediated research (Hewson, 2021) similarly state that consent is not necessary where online data can be considered to be in the public domain, which is the case here. Where quotations are made from this data they consist of at most one sentence from any post, and are anonymised in terms of spelling errors, names, dates, and any other potentially identifying information.

Results

Topic modelling

The coherence of the 10 topics located via topic modelling is 0.76 for r/GenderCritical, and 0.79 for r/Feminism, indicating that for each forum there is a high probability that the terms identified in topics are typically used together.

Overarching topic: Topic 0

Of the 30 terms in the overarching topic 0, 19 are common to both fora. These 19 terms, in order of relevance in r/GenderCritical, are: woman, man, people, sex, child, gender, male, thing, year, comment, post, time, friend, feminist, feminism, lot, guy, person and article. The terms woman and man in that order are the top ranked topic 0 terms for both fora. This overlap in dominant terms and related concerns supports the approach of using r/Feminism as a comparison for r/GenderCritical.

Terms that are present in r/GenderCritical topic 0 but not in r/Feminism topic 0 are, in order of relevance: tran, girl, kid, body, life, lesbian, female, reason, porn, boy and day. The trans issue, which is the specific distinct concern of r/GenderCritical in comparison to r/Feminism, is represented in the noun lemma tran(s) which is the most dominant term on r/GenderCritical that is not also a dominant term on r/Feminism. Terms that are present in r/Feminism topic 0 but not r/GenderCritical topic 0 are: rape, case, life, point, issue, group, work, victim, equality, problem and job.

Common topics

In terms of common topics, topic 4 for both fora is concerned with feminism, and has the following 18 out of 30 terms in common: feminist, people, feminism, group, movement, idea, oppression, view, belief, gender, community, history, misogyny, race, side, patriarchy, sexism and religion. For r/GenderCritical, topic 4 also includes the terms libfem and radfem, relating to specific constructions of feminism, while r/Feminism topic 4 includes the initialism mra (men’s rights activists), used to refer to the outgroup of men who are specifically anti-feminism.

r/GenderCritical topic 10 and r/Feminism topic 9, each of which has woman, man, and people as the most dominant terms, also contain the terms sport and advantage, which are likely to relate to physical advantages attributed to trans-women in competition with cis-women. As discussed above, this was identified by Lu and Jurgens (2022) as one of three themes of ‘hateful rhetoric’ of ‘TERF’ discourse. For r/GenderCritical this topic also contains the terms space, bathroom, and room, which may reference the gender critical concern of trans-women in previously cis-woman only spaces. For r/Feminism this topic also contains the specific term tran.

Topic potentially representing hate based on identity

r/GenderCritical topic 8, which contains the specific identity terms identity and gender_identity, has been identified as potentially representing hate based on identity. There is no equivalent topic on r/Feminism that appears to reference outgroup identity. The terms in r/GenderCritical topic 8, in order of relevance, are as follows: sex, woman, people, gender, male, tran, man, lesbian, female, person, word, tim, trans, identity, penis, transgender, term, transwoman, pronoun, stereotype, dick, gender_identity, cis, definition, sense, vagina, fetish, biology, community, tif.

The metonymic acronyms tim (trans-identifying male), and tif (trans-identifying female), represent the specific stance of those who are gender critical that a trans-woman is still male, and a trans-man is still female. These acronyms are perhaps particularly resonant as a potential slur since they are male and female names. The term biology is also present in topic 8, which may also in this context represent the specific gender critical view that gender derives from biology, and may not be socially constructed. This is supported by the anatomy terms penis, dick (slang for penis), and vagina, also referencing this issue.

To explore the use of the metonymic acronyms tim and tif, for each of these separately, three random sentences were extracted in which that acronym functions as a noun. Examples a-c below include tim; examples d-f include tif.

Examples (a) and (c) both use tim alongside another topic 8 term, lesbian. All three tim examples invoke biological essentialism in relation to ostensibly indisputable social behaviours: sexual attraction (a and c); and religious doctrine (b). In addition, (a) and (c) reference severe social threats relating to denial of the trans position. This is further referenced in the separate tif examples (d) and (e), which appear to express that tifs are less threatening, or controversial, than tims, while tif example (f) explains that a tif is a trans man.

(a) the best is the tra logic that tims can be lesbians but if they are married to a woman and come out as a tim and she leaves because she’s ‘not into women/straight’, then she’s somehow still the most evil bigoted hater there ever was, even though \*she\* was honest from the beginning about her sexuality and expectations.

(b) i wonder how many Muslim women would remove their scarves and/or shake hands with a tim?

(c) when a tim, taller, heavier, and stronger than an average woman, tries to coerce a lesbian woman into sex with his ‘feminine penis’ and she refuses, he is liable to take to twitter, doxx her entire family, have her banned from her community, fired from her job, and send a mob of trolls after her with some death threats as well, if he doesn’t already resort to physical violence on the spot.

(d) i sat down next to this tif one day and was like, ‘dude what’s up!’ if it’d been a tim, major problems.

(e) notice how their example case is a tif, who never get talked about in activism except as a way to persuade the public to do something detrimental to women.

(f) a ‘non-cis man’ would be a trans man, aka a tif

Keyword comparison of r/GenderCritical with r/Feminism as the reference corpus

The 10 top keywords of r/GenderCritical in comparison to r/Feminism in descending order of log likelihood (p < 0.0001) are: (1) trans; (2) tims; (3) lesbian; (4) lesbians; (5) they; (6) tim; (7) gay; (8) transgender; (9) dysphoria; and (10) gc. The keyword most specific to r/GenderCritical in comparison to r/Feminism, then, is trans, which represents the specific difference in focus between these groups. The metonymic acronym tim(s) has already been discussed in conjunction with r/GenderCritical topic 8 as potentially relating to hate based on identity; topic 8 also contains the keyword lesbian(s). Similarly, lesbian was a key term in the r/GenderCritical overarching topic 0 that was not also present in the r/Feminism topic 0. Thus lesbian has specific relevance in r/GenderCritical, and in relation to specific gender critical concerns.

While lesbian is present in two of the randomly selected uses of tim discussed previously, three sentences containing the lemma lesbian specifically were randomly selected to further explore its relevance in the r/GenderCritical context. These examples (g-i below) provide more insights into some tensions related to this issue. They evoke a sense of confusion, and perhaps outrage, in relation to disputes around social categories. Example (h) again uses tim in conjunction with lesbian.

(g) lesbian and gay people being called bigoted because of our same-sex attraction

(h) next week’s escalation of bullshit: the first he/him lesbian tim complaining that he constantly gets misgendered as ‘her’

(i) they announce they’re proud to be ‘lesbian representation’ and that they’ve ‘only been a lesbian a few months’ how is this not a joke?

The third-person pronoun they is the most characteristic pronoun of r/GenderCritical in comparison to r/Feminism, and the fifth most significant keyword overall, which may suggest a dominant r/GenderCritical focus on the outgroup. This is also relevant to the presence, as r/GenderCritical keywords, of the dominant metonymic acronym for the outgroup: tim (and tims). This prominent r/GenderCritical use of jargon and ingroup/outgroup language meets the structure criterion for identification as an online community (Herring, 2004).

While the pronoun they is prevalent generally in comparison to the more context-specific keywords tim and lesbian, to consider use of they in the r/GenderCritical context, three random sentences containing the pronoun they were selected from the data (j-l below). Example (k) appears to specifically use they to reference an outgroup, and is combined with use of us to reference the ingroup. In examples (j) and (l) in comparison they appears to be used in a more general way, although these uses of they also appear to represent a group to which the speaker does not belong in relation to the topic.

(j) as long as she is talking shit about the dreaded sjws or evil feminazis in general, then they give her a seat at the table

(k) patriarchal systems allow us to have some feminist conversations because they are not threatened by us.

(l) i wear a mix of women’s and men’s clothes to work and they don’t care and i’m a lot happier.

Sentiment analysis

To further investigate the issue of hateful speech on r/GenderCritical, sentiment analysis was run to calculate a negative, neutral, and positive value for each post, as discussed previously. This showed that over half the posts for both r/GenderCritical (56.72%) and r/Feminism (54.31%) are predominantly negative. In comparison to r/Feminism, r/GenderCritical has slightly more posts that are predominantly negative or positive, and fewer posts that are predominantly neutral, but overall there is not a notable difference in the valence of posts on each forum.

Keyword analysis based on sentiment

To investigate the content of negatively rated posts on r/GenderCritical, a keyword analysis was carried out with posts having a high negative sentiment rating of more than 0.9 (131,677 posts) as the corpus of focus, and posts with a high neutral or positive sentiment rating greater than 0.9 (80,072 posts) as the reference corpus. The 10 top keywords for the most negative r/GenderCritical posts, ranked in decreasing order of log likelihood, are: (1) they; (2) fucking; (3) fuck; (4) men; (5) shit; (6) hate; (7) people; (8) disgusting; (9) their; and (10) why. Thus, the outgroup-relevant third-person pronoun they is the most characteristic term for the most negative posts, with the related third-person possessive pronoun their also a keyword for negative posts.

Specifically negatively valenced keywords for the most negative r/GenderCritical posts include fucking, fuck, shit, hate, and disgusting. The remaining keywords men, people, and why, are typically more neutrally-valenced in general use, suggesting that in the r/GenderCritical context these terms are anomalously and specifically associated with negative sentiment.

A subsequent keyword analysis was carried out with the corpus of focus and reference corpus reversed, that is, with posts with a neutral or positive sentiment rating of more than 0.9 (80,072 posts) as the corpus of focus, and posts with a negative sentiment rating greater than 0.9 (131,677 posts) as the reference corpus. The top 10 keywords for the most positive or neutral r/GenderCritical posts, ranked in decreasing order of log likelihood, are: (1) thank; (2) love; (3) great; (4) thanks; (5) good; (6) you; (7) amazing; (8) awesome; (9) I; and (10) glad. For the most neutral and positive r/GenderCritical posts in comparison to the most negative posts, then, pronouns present as keywords are the second-person pronoun you, and the first-person singular pronoun I. These may be related to interaction between a speaker, represented in the first-person singular pronoun I, and other participant(s) on the r/GenderCritical forum, represented in the second-person pronoun you, suggesting that negative sentiment is not typical in that context, that is, in the context of ingroup discourse addressed to the ingroup.

Diachronic analysis of sentiment

Diachronic analysis of the emotional valence of r/GenderCritical posts shows that they become more negative (r = 0.4414), more positive (r = 0.6046), and less neutral (r = −0.7248) over time. Change over time in the emotional valence of posts was also considered in relation to level of participation on the forum over the ppi range 1–30, a range chosen because participants on r/GenderCritical make 27 posts each on average. It was found that r/GenderCritical posts become significantly more negative (r = 0.6506) and significantly less neutral (r = −0.7119) with increased participation over the range of posts with ppi 1–30. There is no significant change in positive content of posts (r = 0.1984) over this range of participation.
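As an illustration of how such a trend coefficient could be obtained, the following sketch averages a sentiment score per month and computes Pearson's r between month order and the monthly mean. The input format, the use of month order as the time variable, and the function names are assumptions made for illustration, not a description of the study's own pipeline.

```python
import math
from collections import defaultdict


def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sd_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    sd_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    return cov / (sd_x * sd_y)


def monthly_trend(posts):
    """posts: iterable of (month, score), with month as a 'YYYY-MM' string.

    Returns Pearson's r between month order (0, 1, 2, ...) and the
    mean score of the posts in each month.
    """
    by_month = defaultdict(list)
    for month, score in posts:
        by_month[month].append(score)
    months = sorted(by_month)  # 'YYYY-MM' strings sort chronologically
    means = [sum(by_month[m]) / len(by_month[m]) for m in months]
    return pearson_r(list(range(len(months))), means)
```

The same function could be applied to negative, positive, and neutral scores separately, or to posts grouped by participation level instead of by month.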

On r/Feminism, posts also become significantly more negative (r = 0.3332) and more positive (r = 0.3595) over time, but there is no significant change in neutrality of posts (r = −0.1382). Change in emotional valence with increased participation on r/Feminism was not investigated because, as noted previously, participants make only five posts each on average on this forum.

Diachronic analysis of r/GenderCritical keywords relating to the outgroup

In this section the change in prevalence of use over time of identified r/GenderCritical keywords relating to the outgroup is investigated. These include the third-person pronouns they, and their, which have been found to be associated with the most negative r/GenderCritical posts. Change in density of use of the metonymic outgroup acronym tim (trans-identifying male), another social-identity relevant keyword of r/GenderCritical in comparison to r/Feminism, is also investigated. The acronym tim is sought here where it functions as a noun, not as a name.

Diachronic analysis for the forum as a whole

Analysis of change in density of use of outgroup-related keywords over time by month shows that use of the outgroup reference terms they (r = 0.6636), and tim (r = 0.8428), increases significantly over time on r/GenderCritical, with use of tim increasing more than use of they. In comparison, density of use of the third-person possessive pronoun their, which is related to they, does not increase significantly over time (r = 0.2563). A related analysis of the proportion of posts containing the specific outgroup referent tim shows that 0.89% of posts contain tim on average, with 5.73% of posts containing tim at its peak monthly use in January 2018.
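The two measures reported above, density of use and proportion of posts containing a term, can be sketched per month as follows. This assumes that density means occurrences of the term per token, and it uses a simple regular-expression tokeniser; it does not distinguish tim as a noun from Tim as a name, which the study does. The function name `term_usage_by_month` is hypothetical.

```python
import re
from collections import defaultdict

TOKEN = re.compile(r"[a-z']+")


def term_usage_by_month(posts, term):
    """posts: iterable of (month, text), with month as a 'YYYY-MM' string.

    Returns {month: (density, share_of_posts)}, where density is the
    number of occurrences of term per token, and share_of_posts is the
    fraction of that month's posts containing term at least once.
    """
    tokens = defaultdict(int)
    hits = defaultdict(int)
    n_posts = defaultdict(int)
    n_with_term = defaultdict(int)
    for month, text in posts:
        words = TOKEN.findall(text.lower())
        count = words.count(term)
        tokens[month] += len(words)
        hits[month] += count
        n_posts[month] += 1
        if count:
            n_with_term[month] += 1
    return {
        m: (hits[m] / tokens[m] if tokens[m] else 0.0,
            n_with_term[m] / n_posts[m])
        for m in sorted(n_posts)
    }
```

The monthly densities returned here could then be correlated with month order to obtain trend coefficients of the kind reported for they, their, and tim.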

Diachronic analysis based on level of participation on the forum

An analysis of change in density of use of the same outgroup-related keywords on r/GenderCritical based on level of participation on the forum shows that density of use of they (r = 0.8409), their (r = 0.5522), and tim (r = 0.4947) all increase significantly with increased participation on r/GenderCritical over the range of posts with ppi 1–30, with use of they and their increasing more than use of tim.

Correlation of terms with negative sentiment

To further consider use of these outgroup-referencing terms over time on r/GenderCritical, their correlation with negative sentiment was calculated by month, taking the average values for each month. It was found that there is a significant increase in negative sentiment associated with use of the third-person pronouns they (r = 0.5719) and their (r = 0.459) over time on r/GenderCritical. This can be compared with r/Feminism, in which there is no significant change in sentiment associated with use of they (r = 0.2219) and their (r = 0.0018) over time. There is no notable change in association of tim with negative sentiment (r = 0.05) over time on r/GenderCritical, which is consistent with the fact that unlike they and their, tim was not a keyword of the most negative r/GenderCritical posts.

Significance for identity of r/GenderCritical as a community

These significant changes in discourse over time on r/GenderCritical, by month, and by level of participation on the forum, support fulfilment of the meaning (negotiation of meaning) and social behaviour (norms of appropriateness) criteria for identification of r/GenderCritical as an online community (Herring, 2004).

Discussion

In the current study the important issue of automatic hate speech detection was investigated in relation to the r/GenderCritical Reddit forum, which was banned in June 2020 for promoting hate based on identity. As discussed previously, automatic identification of hate speech is a difficult task, which may create social justice issues such as exclusion, demographic misrepresentation, and bias confirmation (Hovy and Spruit, 2016; Mathew et al., 2022). Taking a computational approach, and with no pre-defined terms of focus, discourse from the r/GenderCritical forum was compared to that of the related but un-banned forum r/Feminism. A topic modelling analysis with high coherence showed that these fora do share similar concerns: for example, they have in common 19 out of the 30 overarching topic 0 terms, and the same two top terms (woman, man). An r/GenderCritical topic relating to the specific gender critical concern of gender_identity was found to contain the metonymic acronyms tim (trans-identifying male) and tif (trans-identifying female), which represent the specific gender critical stance that trans women are still men, and trans men are still women. Metonymic language, and acronyms, may typically be used to stereotype and denigrate an outgroup. In the cognitive linguistics approach, metonymy is understood as a basic characteristic of cognition, and a major source of prototype effects (Lakoff, 2008).

The outgroup-related metonymic acronym tim and third-person pronoun they were found to be prominent keywords when r/GenderCritical was compared with r/Feminism. The pronoun they is also the highest-ranked keyword when the most negative r/GenderCritical posts are compared with the most positive or neutral r/GenderCritical posts. In comparison, the second-person pronoun you and first-person singular pronoun I are keywords when the most positive and most neutral r/GenderCritical posts are compared with the most negative posts, suggesting that ingroup discourse on r/GenderCritical, represented by these pronouns, is typically not negative. This echoes previous research showing that the outgroup referent they is significantly less associated with positive contexts than are the ingroup referents I and we, with I being the most associated with positive contexts (Gustafsson Sendén et al., 2014).

Use of the outgroup referents they and tim increases significantly over time on r/GenderCritical, both by month and by level of participation on the forum. This supports the view that these terms have high relevance for ingroup discourse, community membership, and the social identity of participants, including that r/GenderCritical participants potentially become products of that discourse as well as producers of it (Cervone et al., 2021; Edley, 2001). This may be related to the finding in the current study that r/GenderCritical meets the criteria for identification as an online community (Herring, 2004): it has been argued that it is specifically within such ‘speech communities that identity, ideology and agency are actualized in society’ (Morgan, 2014: 2).

Diachronic analysis shows that sentiment becomes significantly more negative (r = 0.4414) and more positive (r = 0.6046) over time on r/GenderCritical, and significantly less neutral (r = −0.7248). Negative and positive sentiment also increase significantly, though with a weaker effect, on the r/Feminism forum. This change in valence may be a sign that the group starts to function as a community, in that (i) common negative targets are established, and (ii) positive valence is enhanced by participation in a similar-minded community, with, as discussed above, the ingroup-related pronouns I and you found to occur in the most positively and neutrally valenced r/GenderCritical posts. In comparison, while use of the outgroup referents they and their on r/GenderCritical is associated with significantly more negative sentiment over time, that is not the case on r/Feminism. It is notable that use of the r/GenderCritical outgroup referent tim is not associated with significantly more negative sentiment over time, which is consistent with the finding that, unlike they and their, tim is not a keyword of the most negative r/GenderCritical posts.

The outgroup-specific referent tim is a representation of the specific gender critical stance, and, unlike they, in the r/GenderCritical context tim has not been found to be associated with negative sentiment. However, tim may from other social positions be understood as an intrinsically hateful construal of trans women, equivalent to deliberate misgendering. If use of they and tim can be said to be hateful in reference to the identity of the r/GenderCritical outgroup, then r/GenderCritical could be said to be promoting hate based on identity, predominantly of trans women.

From a socio-cognitive perspective, use of tim on r/GenderCritical should also be considered in relation to use of the metonymic acronym terf, discussed previously, by r/GenderCritical outgroups. On r/GenderCritical, terf is understood as a slur, or hateful speech, used to represent the gender critical stance. This perception is illustrated by the following three randomly selected uses of terf on r/GenderCritical:

(m) because terf means ‘bitch’.

(n) doesn’t his quote pretty much hit the nail on the head? terf is absolutely a slur against females brandished by males. that’s why it’s sketchy territory. . . too close to the truth.

(o) terf isn’t a slur but we are going to smash terfs out of existence but terf isn’t a slur.

As discussed previously, outgroup derogation and outgroup homogeneity are recognised social behaviours. For example, derogation of the outgroup has been identified as a method of relieving stress in situations in which intergroup threats to identity are perceived (Amira et al., 2021; Sampasivam et al., 2016). Social identity has also been found to be important in predicting collective action, with feelings of injustice relating to the ingroup found to produce stronger effects than non-affective (cognitive) perception of injustice, and with politicised identity found to produce stronger effects than un-politicised identity (van Zomeren et al., 2008). Perception of social injustice, then, may lead to a breakdown in intergroup communication (Dubé-Simard, 1983), which appears to be what has happened in the gender identity debate more widely, in which each position by its definition appears to undermine the rights of the other. It would be productive in future work to investigate the language of r/GenderCritical outgroups, since the behaviours of r/GenderCritical are linked to that wider social context. There is also the important related issue of whether Reddit may be said to have unjustly no-platformed the r/GenderCritical community, perhaps in response to dominant social mores, which may be of particular concern since, as noted earlier, the discourse of women is suppressed on Reddit, and on the internet more widely. To consider wider implications, and generalisability of findings, therefore, it would be informative to also compare the discourse of r/GenderCritical with that of other groups banned by Reddit for promoting hate based on identity.

In conclusion, an approach to hate speech detection informed by socio-cognitive discourse studies, one that pays attention to ‘the attitudes and ideologies of language users as current participants of the communicative situation and as members of social groups and communities’ (van Dijk, 2017: 28), may help address related ethical concerns, including potential social injustice.

Footnotes

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.