Abstract

Do individuals possess a “gaze fingerprint” that reveals how they uniquely look at the world? We tested this question by examining intra- and intersubject gaze similarity across 700 static pictures of complex natural scenes. Independent discovery (

Human gaze patterns provide one of the most important signals regarding what individuals find most salient about their environments. Gaze behavior varies considerably within and between individuals and can be influenced by a variety of statelike and traitlike factors. Statelike factors can be broadly construed as factors extrinsic to the individual that are stimulus-dependent, context-dependent, situationally dependent, or a combination of these (e.g., bottom-up salience of stimulus characteristics, viewing conditions, task or social constraints, reward environments, novelty; Borji et al., 2013; de Haas et al., 2019; Einhäuser et al., 2008; Guy et al., 2019; Henderson & Hayes, 2017; Itti et al., 1998; Xu et al., 2014). In contrast, traitlike factors imply an intrinsic or individualized uniqueness to gaze behavior that may be invariant across situations and contexts and that shows characteristics such as temporal stability and idiosyncrasy (Avni et al., 2020; Broda & de Haas, 2022; de Haas et al., 2019; Guy et al., 2019; Keles et al., 2022; Linka & de Haas, 2020; Linka et al., 2022). The existence of traitlike components can be supported by neurodevelopmental research in twin populations. Such work has revealed that heritable common genetic variation is one powerful within-individual mechanism constraining gaze behavior in infants and children (Constantino et al., 2017; Kennedy et al., 2017; Portugal et al., 2023). Computational modeling of gaze behavior also indicates that it is possible to isolate traitlike idiosyncratic gaze-behavior tendencies. For example, recent work has suggested that idiosyncratic gaze patterns can be predicted by computational models that learn how gaze relates to an individual’s intrinsic conceptual priorities (Haskins et al., 2025).
Thus, combined evidence from prior neurodevelopmental, genomic, and computational-modeling research suggests that it may be possible to isolate individuating signatures, or “fingerprints,” in gaze behavior that are of high neurobiological and developmental significance.

Gaining a better understanding of the intrinsic traitlike components that explain human gaze is also important for its implications for new technology and other translational endeavors. New technologies are emerging that routinely collect eye-gaze data at scale. The ability to identify individuals by what they look at, particularly when merged with other personal data, could have a large impact and raises privacy concerns (Cantoni et al., 2018; Kröger et al., 2020; Liebling & Preibusch, 2014; Rigas et al., 2016). Gaze-fingerprint markers may also be of high translational relevance for conditions in which gaze is atypical, such as autism. Atypical gaze is evident in autism both in early development (Constantino et al., 2017; Jones & Klin, 2013) and at older ages (Wang et al., 2015). Such atypical social-visual engagement in autism is theorized to be a manifestation of genetically constrained, yet atypical, biological-niche construction (Johnson, 2017; Johnson et al., 2015; Klin et al., 2020; Lombardo et al., 2019; Shultz et al., 2018). Gaze-fingerprinting markers could provide an individualized metric for underlying differential biology (Lombardo et al., 2019) and for assessing change as a function of manipulations to an individual’s environmental niche (e.g., early intervention).

In this study we investigate the phenomenon of gaze fingerprinting, that is, identifying individuals on the basis of repeatable but unique spatial distributions of gaze patterns. Prior work has shown that saccade-based metrics (e.g., velocity, acceleration, vigor) hold some promise as an individuating biometric (e.g., Bargary et al., 2017; Choi et al., 2014; Juhola et al., 2013; Rigas et al., 2016). In contrast, gaze measures that better define where and what someone samples in the environment (i.e., spatial-gaze-distribution patterns) have been much less studied. Prior work examining spatial-fixation density, gaze heat maps, or both has shown that individualized spatial-gaze patterns can be quite stable and systematic (Avni et al., 2020; Broda & de Haas, 2022; de Haas et al., 2019; Guy et al., 2019; Linka & de Haas, 2020; Linka et al., 2022). However, such work does not address whether target individuals can be accurately identified when their gaze patterns are compared with the gaze patterns of many other distractor individuals.

To our knowledge, three prior studies have directly attempted to address whether individuals can be successfully identified solely on the basis of spatial-gaze patterns that define what they sample in complex visual environments (Keles et al., 2022; Kennedy et al., 2017; Rigas & Komogortsev, 2014). These studies suggest that some, though not all, individuals may be gaze-fingerprintable and that such gaze fingerprints may be driven in part by genetic similarity. For example, two independent studies examining dynamic movie stimuli have reported identification rates of around 30% to 40% (Keles et al., 2022; Rigas & Komogortsev, 2014). Both studies used a relatively small number of dynamic movie stimuli, which does not allow gaze patterns to be sampled across a large variety of stimulus categories or contexts. Furthermore, both studies reported that increasing the amount of gaze data sampled can increase identification accuracy. Taken together, these limitations and observations suggest that sampling from a larger array of visual stimuli within individuals could lead to marked increases in our ability to gaze-fingerprint an individual.

To the best of our knowledge, no studies thus far have directly examined gaze fingerprinting of the same individual on static pictures of complex scenes. Unlike movie viewing, which requires longer durations, rapid presentation of many static pictures of complex visual scenes allows a broad array of categories and contexts to be sampled in a short period of time (e.g., de Haas et al., 2019; Xu et al., 2014). A study closely related to the concept of gaze fingerprinting showed that monozygotic twin pairs could be accurately identified at a rate of 29% from gaze patterns to a small set of static pictures of complex scenes (Kennedy et al., 2017). In contrast, identification accuracy of dizygotic twin pairs was substantially lower at 7%, but higher than nontwin pairs and chance accuracy of 1% (Kennedy et al., 2017). Given the near 30% accuracy rate for identifying monozygotic twin pairs, it is possible that accuracy rates for static stimuli on the same individuals may be much higher than ~30%, particularly when sampled in a big-data context with a stimulus-rich experimental design. Because the study found a dissociation in identification rate between monozygotic and dizygotic twins, it is conceptually related to gaze fingerprinting by demonstrating that the degree of gaze similarity scales with genetic similarity. Furthermore, this twin study suggests that gaze similarity and gaze fingerprinting together may be an eye-tracking biomarker of something biologically more insightful regarding how heritable polygenic genomic architectures build early neural circuitry in ways that prepare it to seek out, explore, and construct similar environmental niches in which to develop.

The current set of studies considerably expands our understanding of gaze fingerprinting when applied at scale. We used a stimulus-rich experimental design that samples gaze across 700 static pictures of complex natural scenes spanning a wide range of semantic categories (Xu et al., 2014). Spatial distributions of gaze patterns were measured with gaze heat maps in a relatively large sample of participants across discovery and replication sets (discovery

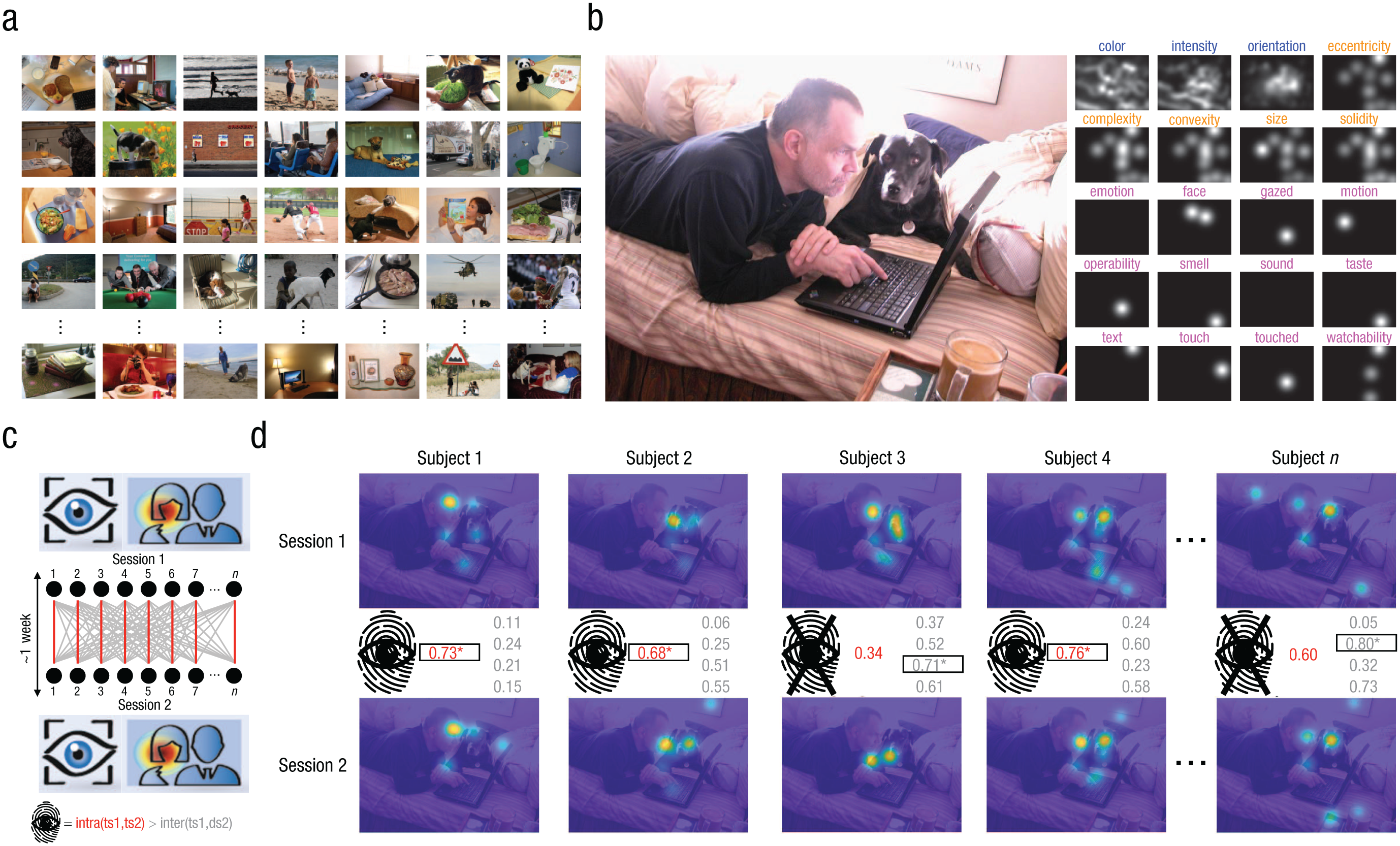

Experimental design and example of the gaze-fingerprinting approach. In (a) we show examples of the 700 stimuli in the Object and Semantic Images and Eye-tracking (OSIE) stimulus set (Xu et al., 2014). Participants were asked to freely view each of the 700 stimuli over a 3-s period in test and retest sessions separated by ~1 to 2 weeks. In (b) we show the rich annotation of the OSIE stimulus set across pixel-level (blue), object-level (orange), or semantic (pink) features. The example in (b) shows a stimulus on the left, whereas on the right are the various maps of different annotated features. In (c) we show a schematic example of the gaze-fingerprinting approach. Individuals (black dots) completing Session 1 (top) and Session 2 (bottom) are compared via their gaze heat maps for each stimulus. Red links between Session 1 and Session 2 represent the intrasubject gaze similarity between a target individual’s heat maps. The Session 1 heat maps for each target subject (ts) are also compared with Session 2 gaze heat maps for other distractor subjects (ds), and intersubject gaze similarity is computed (gray links). A gaze fingerprint is defined by a situation in which the target individual’s intrasubject gaze similarity (red) is higher than all other intersubject gaze similarity values (gray) between the target and distractor individuals, as shown in (c) at the bottom. In (d) we show a simple example of gaze fingerprinting in practice: the top row shows Session 1 heat maps, and the bottom row shows Session 2 heat maps. Intrasubject (red) and intersubject (gray) gaze-similarity values (e.g., Pearson’s

Materials and Method

Discovery data set (Study 1)

The discovery data set (henceforth “IIT”) comprised individuals tested in an eye-tracking study carried out within the Istituto Italiano di Tecnologia (IIT) Laboratory of Autism and Neurodevelopmental Disorders (IIT-LAND). This study was reviewed and approved by the local ethics committee (Comitato Etico per le sperimentazioni cliniche dell’Azienda Provinciale per Servizi Sanitari della Provincia Autonoma di Trento; APSS). All participants gave written informed consent and were reimbursed for their time. Participants were primarily recruited through social media and convenience sampling within the University of Trento Center for Mind and Brain Sciences (CIMeC). A total of 120 typically developing adult participants (age range = 18–50 years) with normal or corrected-to-normal vision took part in this study. All participants completed two sessions, and the separation between the two sessions was 7 days on average (

Replication data set

As a replication data set, we reanalyzed anonymized, publicly available test–retest eye-tracking data reported by de Haas et al. (2019; https://osf.io/n5v7t/). Henceforth, this replication data set is identified by the original acronym assigned by de Haas and colleagues (“Gi”). The experimental design and stimuli for the IIT discovery and Gi replication data sets were identical in that both used the OSIE data set of 700 stimuli, and participants were instructed to freely view the stimuli over a 3-s period in both test and retest sessions. The primary difference between the IIT discovery and Gi replication data sets was the duration of time between test and retest sessions. Although the IIT data set had a delay of 7 days on average (

Eye-tracking task and stimuli

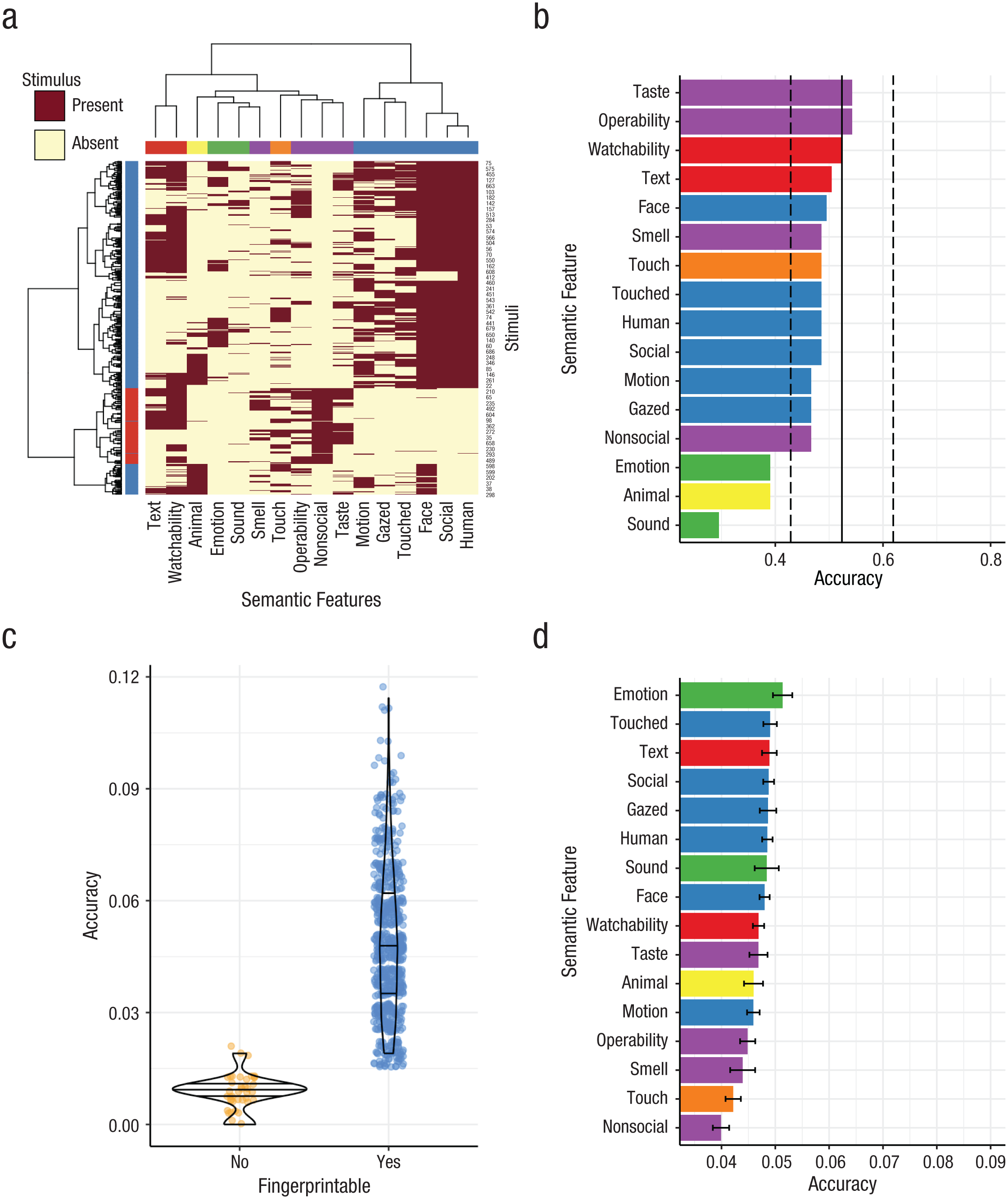

Participants were asked to freely view 700 stimuli of complex natural scenes taken from the OSIE stimulus set (Xu et al., 2014). Stimuli in the OSIE set are annotated for lower-level pixel- and object-level features as well as higher-level semantic features (Fig. 1b; Xu et al., 2014). Semantic features represented categories such as face, emotion, touched, gazed, motion, sound, smell, taste, touch, text, watchability, and operability. Further annotation beyond these 12 categories was done to label stimuli by the presence or absence of humans or animals and to categorize whether the stimuli had social (i.e., presence of human faces) or nonsocial (i.e., absence of humans or animals) content. Although all semantic features can be analyzed independently, they co-occur within the OSIE stimuli in a nonindependent manner (i.e., several semantic features can describe a stimulus). Therefore, to observe the emergent structure of co-occurrence of multiple semantic features as clusters, we used two-way agglomerative hierarchical clustering with Jaccard distance matrices and ward.D2 as the agglomeration method to reveal data-driven clusters across all 700 stimuli and across all semantic features (Fig. 2a). This analysis revealed two primary clusters among the 700 stimuli that largely define social versus nonsocial stimuli (Fig. 2a). The semantic features separated into six clusters (Fig. 2a).
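As an illustration of the two-way clustering step described above, the following is a minimal sketch rather than the authors' code. It assumes a binary stimulus-by-feature matrix and uses SciPy's `ward` linkage on a precomputed Jaccard distance vector as an assumed counterpart of R's ward.D2. The function name `two_way_clusters`, the toy matrix, and the choice to cut each tree at a fixed number of clusters are hypothetical.

```python
import numpy as np
from scipy.cluster.hierarchy import fcluster, linkage
from scipy.spatial.distance import pdist


def two_way_clusters(features, n_row_clusters, n_col_clusters):
    """Cluster rows (stimuli) and columns (semantic features) of a binary matrix.

    Assumption: Ward linkage is applied directly to Jaccard distances,
    mirroring the description in the text; cutting each dendrogram at a
    fixed number of clusters is an illustrative choice.
    """
    features = np.asarray(features, dtype=bool)
    # Condensed Jaccard distances between stimuli (rows) and between features (columns).
    row_link = linkage(pdist(features, metric="jaccard"), method="ward")
    col_link = linkage(pdist(features.T, metric="jaccard"), method="ward")
    row_labels = fcluster(row_link, t=n_row_clusters, criterion="maxclust")
    col_labels = fcluster(col_link, t=n_col_clusters, criterion="maxclust")
    return row_labels, col_labels


# Hypothetical toy data: 6 stimuli x 4 features forming two obvious blocks.
toy = np.array([
    [1, 1, 0, 0],
    [1, 1, 0, 0],
    [1, 0, 0, 0],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 0, 1],
])
row_labels, col_labels = two_way_clusters(toy, n_row_clusters=2, n_col_clusters=2)
```

In this toy case the first three stimuli and the last three stimuli fall into separate row clusters, which is the kind of block structure the social versus nonsocial split in the text refers to.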

Gaze-fingerprint identification rates computed on median gaze similarity across stimuli or for each individual stimulus. In (a) is shown a heat-map representation of the co-occurrence of various semantic features (columns) across all 700 stimuli (rows) in the OSIE stimulus set. Dark red indicates the presence of a stimulus in a particular semantic-feature category, whereas light yellow indicates the absence of a stimulus in a particular semantic-feature category. Colors for clusters of semantic features (underneath the column dendrogram) are also used in (b) and (d). Identification rates (i.e., accuracy) in the IIT discovery data set are illustrated in (b) through (d). The vertical black line in (b) shows accuracy (

Procedure

Eye-tracking data in the IIT data set was collected on a Tobii Pro Spectrum (diagonal screen size = 24 in., aspect ratio = 16:9, resolution = 1920 × 1080, screen size in degrees of visual angle [DVA] = 47.7° horizontally × 28° vertically) with a sampling rate of 150 Hz. Participants sat in front of the eye-tracking monitor at a distance of approximately 60 cm with their chin on a chin rest. Stimuli were 800 × 600 pixels and were scaled to the size of the screen during stimulus presentation, resulting in a stimulus size, in DVA, of 36.9° horizontally × 28° vertically. Within the Gi replication data set, eye-tracking data was collected on an EyeLink 1000 (SR Research, Ottawa, Canada) with a sampling rate of 1 kHz. Participants sat with their chin on a chin rest at a distance of 46 cm from the screen. Stimuli were presented at a resolution of 1,000 × 750 pixels with a size in DVA of 41.9° horizontally × 32.1° vertically. Each participant in both data sets completed two eye-tracking sessions separated by an average of 7 (IIT) to 16 (Gi) days. Within each session, participants viewed all 700 stimuli, broken into seven blocks of 100 stimuli each. Each stimulus was shown for a duration of 3 s. Participants were instructed to view the stimuli in a natural fashion (e.g., “look at the images in any way [they] want”). Stimulus order was randomized within each session. A fixation cross was shown in the center of the screen between stimuli. In the IIT data set, the duration of this interval was jittered between 500 and 1,000 ms, whereas in the Gi data set proceeding to the next stimulus was self-paced; a button press was required to move on. At the end of each block, participants were given the opportunity to rest before starting the next block. The entire eye-tracking session with all seven blocks lasted approximately 60 min in the IIT data set.
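The screen sizes in degrees of visual angle reported above follow from the standard visual-angle formula, 2·atan(size / (2·distance)). The sketch below is illustrative only; the helper names are hypothetical, and it assumes a flat screen viewed centrally at exactly 60 cm, whereas the text gives the distance as approximate.

```python
import math


def screen_size_cm(diagonal_in, aspect_w, aspect_h):
    """Width and height in cm of a screen given its diagonal (inches) and aspect ratio."""
    diagonal_cm = diagonal_in * 2.54
    norm = math.hypot(aspect_w, aspect_h)
    return diagonal_cm * aspect_w / norm, diagonal_cm * aspect_h / norm


def visual_angle_deg(size_cm, distance_cm):
    """Visual angle subtended by an extent centered on the line of sight."""
    return math.degrees(2.0 * math.atan(size_cm / (2.0 * distance_cm)))


# IIT setup as described in the text: 24-in. 16:9 screen at about 60 cm.
width_cm, height_cm = screen_size_cm(24, 16, 9)
horizontal_dva = visual_angle_deg(width_cm, 60.0)
vertical_dva = visual_angle_deg(height_cm, 60.0)
```

Under these assumptions the computed values agree with the reported 47.7° × 28° to within roughly a tenth of a degree; small differences are expected because the viewing distance is only approximate.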
Stimulus presentation was implemented within MATLAB 2019b and the Psychophysics Toolbox (http://psychtoolbox.org/) Version 3.0.16 for the IIT data set, and MATLAB 2016b and Psychophysics Toolbox Version 3.0.12 for the Gi data set. Calibration was conducted using 5 (IIT) or 9 (Gi) calibration and validation points and was repeated if necessary. Within the IIT data set, we accepted an average calibration accuracy across points and across the two eyes of less than 2° of visual angle. There were no differences in calibration accuracy in the IIT data set between Session 1 and Session 2 (Session 1: mean = 0.47°,

Behavioral measures

At the end of the first session in the IIT discovery data set, participants completed the Wechsler Abbreviated Scale of Intelligence (WASI) to characterize full-scale IQ. At the end of the second session, participants were asked to complete two self-report questionnaires measuring autistic traits, translated into Italian: the Autism Quotient (AQ; Baron-Cohen et al., 2001) and the adult self-report form of the Social Responsiveness Scale, Second Edition (SRS-2; Constantino & Gruber, 2012). A small subset of individuals (

Gaze-fingerprinting analysis

Fixations were defined using a velocity-based classification algorithm in which eye movements are identified when the velocity of directional shifts of the eye exceeds a threshold of 30° of visual angle per second. Data below this velocity threshold are considered fixations. Fixations with a duration below 100 ms were excluded. These criteria for defining fixations were identical across the IIT and Gi data sets. The
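The velocity-threshold rule stated in this paragraph can be sketched as follows. This is a simplified illustration, not the eye tracker's or the authors' implementation: it assumes gaze coordinates already expressed in degrees of visual angle, uniform sampling, and no missing samples, and the function name and toy trace are hypothetical.

```python
import numpy as np


def detect_fixations(x_deg, y_deg, sampling_hz,
                     velocity_threshold=30.0, min_duration_ms=100.0):
    """Label runs of samples slower than the threshold as fixations.

    Returns a list of dicts with start/end sample indices and centroid.
    """
    x = np.asarray(x_deg, dtype=float)
    y = np.asarray(y_deg, dtype=float)
    dt = 1.0 / sampling_hz
    # Sample-to-sample speed in degrees per second (length n - 1).
    speed = np.hypot(np.diff(x), np.diff(y)) / dt
    # Each sample inherits the speed of the movement leading into it;
    # the first sample reuses the first inter-sample speed.
    slow = np.concatenate(([speed[0] < velocity_threshold],
                           speed < velocity_threshold))
    runs, start = [], None
    for i, is_slow in enumerate(slow):
        if is_slow and start is None:
            start = i
        elif not is_slow and start is not None:
            runs.append((start, i - 1))
            start = None
    if start is not None:
        runs.append((start, len(slow) - 1))
    fixations = []
    for first, last in runs:
        duration_ms = (last - first + 1) * dt * 1000.0
        if duration_ms >= min_duration_ms:  # drop fixations shorter than 100 ms
            fixations.append({"start": first, "end": last,
                              "x": x[first:last + 1].mean(),
                              "y": y[first:last + 1].mean()})
    return fixations


# Hypothetical 150-Hz trace: 200 ms at (0, 0), 200 ms at (5, 5), then a brief dwell at (10, 0).
x = np.concatenate([np.zeros(30), np.full(30, 5.0), np.full(5, 10.0)])
y = np.concatenate([np.zeros(30), np.full(30, 5.0), np.zeros(5)])
fixations = detect_fixations(x, y, sampling_hz=150)
```

In the toy trace the two long dwells are kept as fixations, and the final dwell of a few samples is discarded because it lasts less than 100 ms.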

Gaze fingerprinting analysis utilizes both intrasubject and intersubject gaze similarity values. A symmetric gaze-similarity matrix of size [

Gaze-fingerprinting analysis, as defined above, was applied at scale to every stimulus. However, in some situations no gaze data was collected for a specific stimulus. When this occurred, no fixation heat maps could be computed, and thus all subsequent steps of the fingerprint-analysis pipeline were skipped for that stimulus. In addition to gaze fingerprinting applied at scale to every stimulus, we also applied gaze-fingerprinting analysis to gaze-similarity data (i.e., intra- and intersubject similarity) summarized by the median over either the full stimulus set or subsets of stimuli grouped by semantic features. To mimic the gaze-fingerprinting analysis procedure of Kennedy et al. (2017), we first computed median gaze similarity over all 700 stimuli. The median was chosen because it reflects the central tendency of a distribution better than the mean when the distribution is skewed. When distributions are not heavily skewed, the mean and median should be relatively similar. With a three-dimensional gaze-similarity matrix of size [
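The median-based identification described in this paragraph can be sketched as below. The array layout is an assumption made for illustration and is not taken from the source: `similarity[s, i, j]` holds the similarity between subject i's Session 1 heat map and subject j's Session 2 heat map for stimulus s, with NaN marking stimuli that have no gaze data. The function name and toy values are hypothetical.

```python
import numpy as np


def identify_from_median(similarity):
    """Median similarity over stimuli, then a hit if the target's own
    Session 2 data is the best match for their Session 1 data."""
    # NaN-aware median so stimuli without gaze data are ignored.
    median_sim = np.nanmedian(similarity, axis=0)   # (n_subjects, n_subjects)
    predicted = np.argmax(median_sim, axis=1)       # best-matching Session 2 subject
    hits = predicted == np.arange(median_sim.shape[0])
    return hits, hits.mean()


# Hypothetical toy data: 50 stimuli, 4 subjects.
rng = np.random.default_rng(0)
sim = rng.uniform(0.0, 0.3, size=(50, 4, 4))        # intersubject similarities
idx = np.arange(4)
sim[:, idx, idx] += 0.5                             # intrasubject similarity is higher
sim[:, 3, 0] += 0.9                                 # subject 3 resembles distractor 0 even more
sim[5] = np.nan                                     # one stimulus with no gaze data
hits, accuracy = identify_from_median(sim)
```

Here three of the four toy subjects are identified, so the identification rate is 0.75; the fourth is a miss because a distractor's similarity exceeds the intrasubject value.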

The hit-or-miss output of gaze fingerprinting is binary and may hide information regarding the continuous nature of fingerprintability or the degree of gaze uniqueness. That is, the fingerprint output may be a miss because the intrasubject similarity is not the maximum gaze similarity when compared with all distractor individuals. However, when the intrasubject similarity still ranks very highly (e.g., second-highest gaze similarity), the individual’s gaze is unique to a high degree, though not maximally unique when compared with all distractor individuals. Thus, to derive a continuous metric of degree of gaze uniqueness, we developed a score we call the

Gaze-fingerprint barcoding

Given the stimulus-rich nature of the OSIE data set (e.g., 700 stimuli), gaze fingerprinting at scale for each subject and stimulus allows for a binary output matrix [

Association analysis with autistic traits

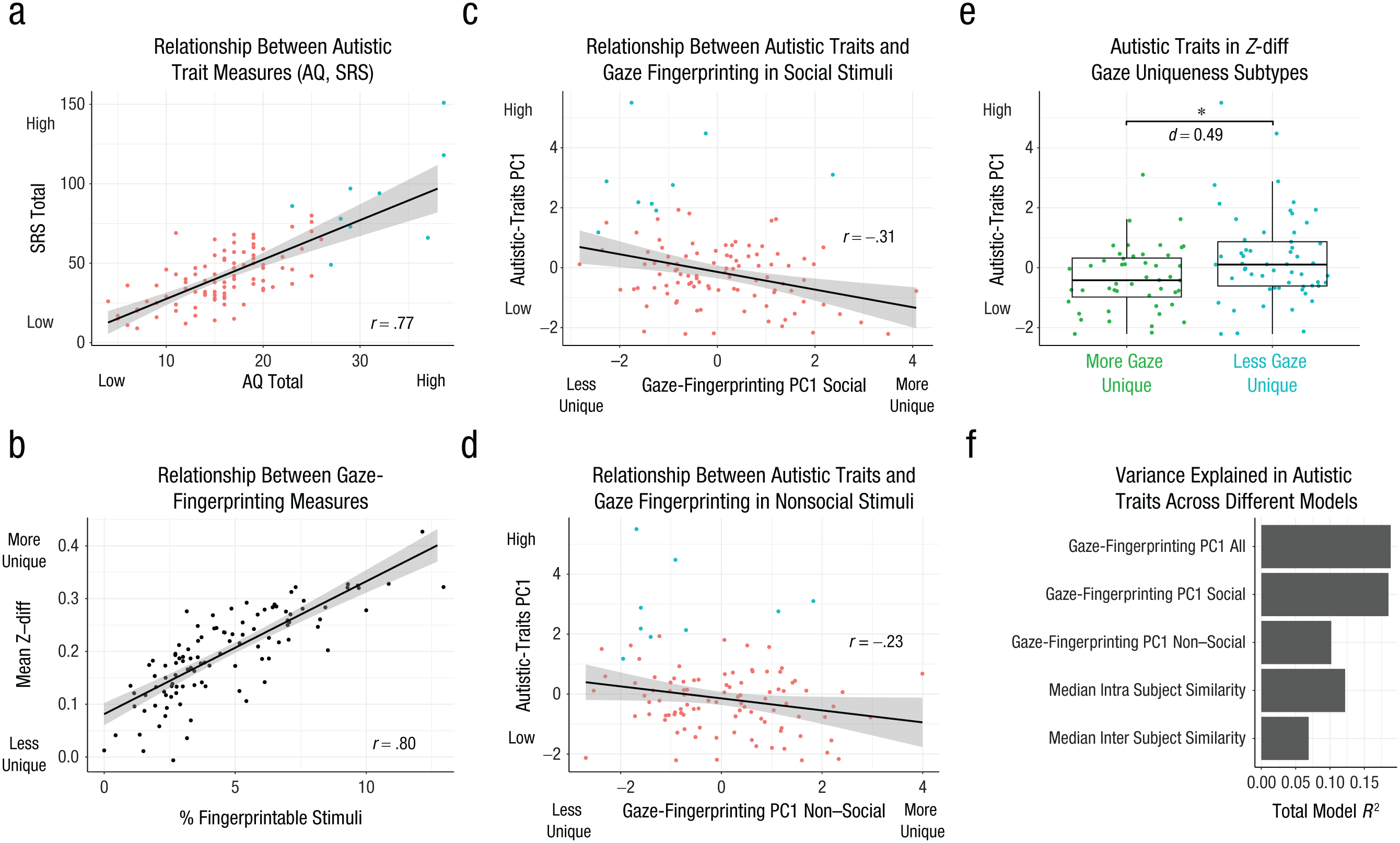

Robust linear regression was used to test for association between gaze-fingerprinting metrics and autistic traits. Autistic traits were the dependent variable in the model. Autistic traits were initially measured via two different questionnaires, the AQ and the SRS-2. These measures are highly correlated (

We also compared different models for predicting autistic-traits PC1. All of the models used percentage of missing-gaze data and sex as covariates. Model 1 utilized gaze-fingerprinting PC1 computed across all stimuli. Models 2 and 3 utilized gaze-fingerprinting PC1 as well, but stratified by social (Model 2) or nonsocial (Model 3) stimuli. Model 4 utilized the median intrasubject gaze similarity computed across all 700 stimuli. Finally, Model 5 utilized the median average intersubject gaze similarity computed across all 700 stimuli. Models 4 and 5 are helpful for understanding whether any relationships with the gaze-fingerprinting metrics (i.e., Models 1–3) are simply better explained by just intrasubject (Model 4) or intersubject (Model 5) gaze similarity. The total model percentage of variance explained (

Preregistered short-term and long-term delay follow-up experiments (Study 2)

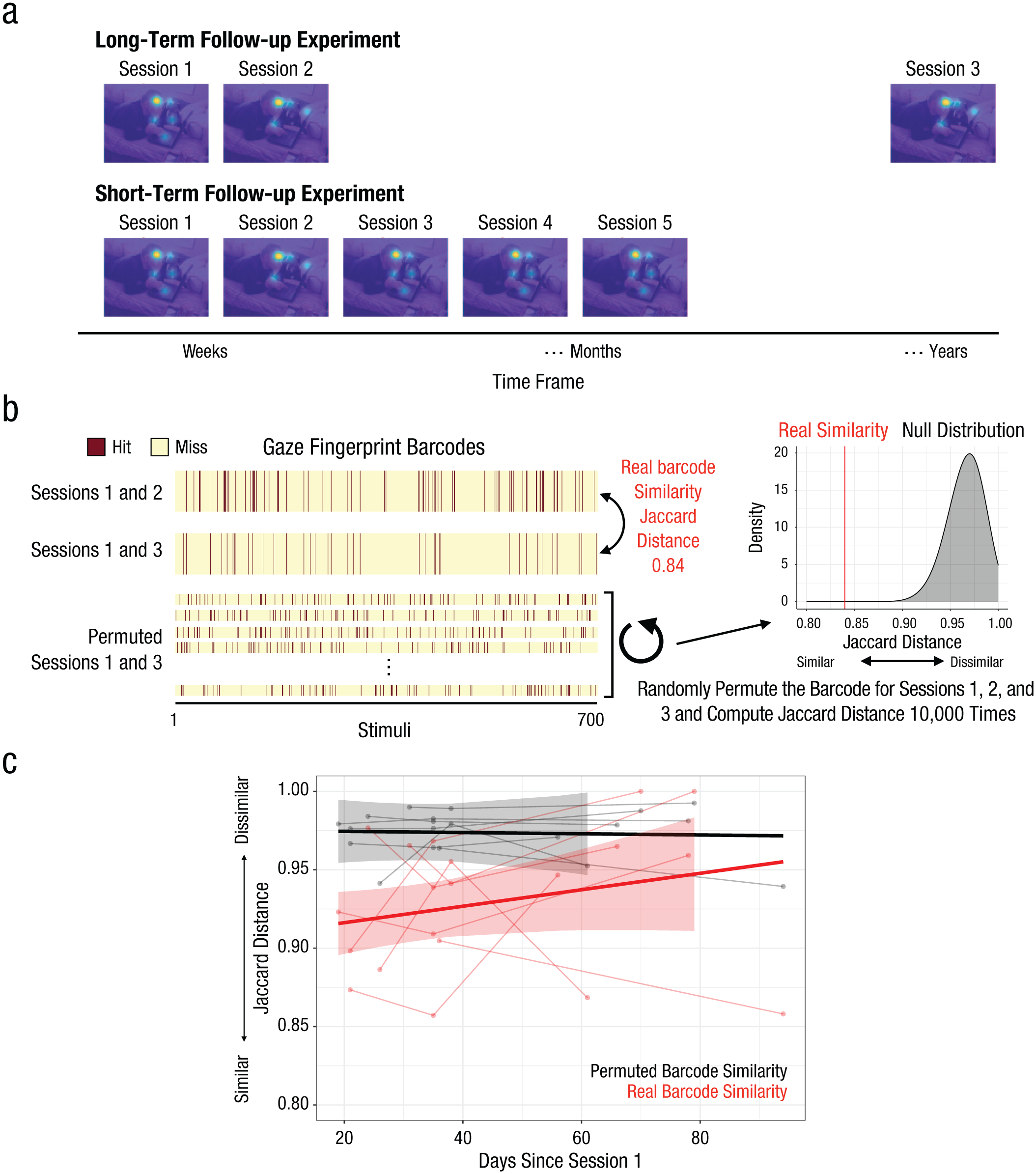

The gaze-fingerprint barcodes reported from the Discovery data set are derived from two eye-tracking sessions separated by about 7 days. With only one barcode per individual, it could be claimed that the barcodes derived from the Discovery data set are essentially a random sequence of hits and misses. If this were true, barcodes measured on the same individual in further follow-up eye-tracking sessions would not be similar to the original barcodes derived from the original sessions (Session 1 and Session 2). To test this null hypothesis (i.e., that gaze-fingerprint barcodes are random and not significantly similar within individuals), we conducted two preregistered follow-up experiments in which we measured gaze-fingerprint barcodes within individuals after either short-term delays (19–90 days) or long-term delays (~1.87–5.16 years) and then evaluated whether those later follow-up barcodes were significantly similar to the original barcodes derived from Sessions 1 and 2. The long-term follow-up experiment included only one additional eye-tracking session after a delay on the order of years. This allowed for one comparison of barcode similarity between Sessions 1 and 2 (a separation of 7 days) and Sessions 1 and 3 (a separation of 1.87–5.16 years). In contrast, the short-term follow-up experiment was designed to have five repeat eye-tracking sessions, which allowed for three repeated measurements of barcodes and for the barcodes from Sessions 1 and 2 (a separation of 7 days) to be compared with barcodes from Sessions 1 and 3, 1 and 4, and 1 and 5 (a separation of 19–90 days).
Although the long-term delay experiment allowed for only one measurement of a barcode, and thus does not allow for multiple repeat barcodes to be derived after such long-term delays, the five repeat eye-tracking sessions included in the short-term follow-up experiment allowed for examination of barcode similarity within individuals as a function of time on the order of months. This unique longitudinal characteristic of the short-term follow-up experiment allows for tests of how barcode similarity changes (or not) over time within an individual. For the preregistration on the Open Science Framework (OSF), please see https://doi.org/10.17605/OSF.IO/ATJ7B. Below we will explain the methods and analysis behind these follow-up experiments.

For the experiment testing barcode similarity over long-term delays, we aimed to test 10 participants from the original Discovery data set in a single eye-tracking session, Session 3, that was completed years after the original eye-tracking sessions (Sessions 1 and 2). After applying the same data-quality filtering as in the original Discovery data set experiment (i.e., including only individuals with missing gaze data in less than 15% of stimuli), we retained these 10 individuals for further analysis. These individuals repeated the same experimental paradigm and procedure as in Sessions 1 and 2 (e.g., viewing 700 static pictures of complex natural scenes from the OSIE stimulus set). Session 3 with these individuals was separated from earlier sessions by an average of 3.58 years (

Importantly, for all barcodes computed in these follow-up analyses, Session 1’s data was always used as the primary baseline data set, because it represented the only pure measure of an individual’s traitlike gaze tendencies not influenced by statelike factors introduced by repeat viewing contexts. In the long-term follow-up experiment, we computed two gaze-fingerprint barcodes for each individual: one barcode derived from Sessions 1 and 2, separated by approximately 7 days, and another barcode derived from Sessions 1 and 3, separated by 1.87 to 5.16 years. Using these two barcodes, we can test the null hypothesis that over long periods of time (e.g., years), gaze-fingerprint barcodes are random rather than a repeatable individuating code signifying what is unique about an individual’s gaze (i.e., what that individual looks at within static pictures of complex natural scenes). To quantify the similarity between the barcodes from Sessions 1 and 2 and Sessions 1 and 3, we computed Jaccard distance between these two barcodes, the same metric used in the original Discovery data set clustering analyses. We then compared the observed barcode similarity with a distribution of Jaccard distance values simulated under the null hypothesis, which we built by permuting the barcode for Sessions 1 and 3 and recomputing Jaccard distance with the barcode from Sessions 1 and 2. This permutation was repeated 10,000 times to build the null distribution of Jaccard distance between barcodes. A
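The permutation procedure described in this paragraph can be sketched as below. It is a minimal illustration with hypothetical names and toy barcodes. Because the source's definition of the p value is cut off, the one-sided formula used here (the proportion of permuted distances at least as small as the observed distance, with a +1 correction) is an assumption.

```python
import numpy as np


def jaccard_distance(a, b):
    """Jaccard distance between two binary barcodes (0 = identical sets of hits)."""
    a = np.asarray(a, dtype=bool)
    b = np.asarray(b, dtype=bool)
    union = np.logical_or(a, b).sum()
    if union == 0:
        return 0.0  # two empty barcodes are treated as identical
    return 1.0 - np.logical_and(a, b).sum() / union


def barcode_permutation_test(reference, followup, n_perm=10_000, seed=0):
    """Compare the observed distance with distances after shuffling the follow-up barcode."""
    rng = np.random.default_rng(seed)
    followup = np.asarray(followup, dtype=bool)
    observed = jaccard_distance(reference, followup)
    null = np.array([jaccard_distance(reference, rng.permutation(followup))
                     for _ in range(n_perm)])
    # Assumed one-sided p value: smaller distance means more similar barcodes.
    p_value = (np.sum(null <= observed) + 1) / (n_perm + 1)
    return observed, p_value


# Hypothetical barcodes over 700 stimuli: 60 hits each, 30 of them shared.
barcode_s1_s2 = np.zeros(700, dtype=bool)
barcode_s1_s2[:60] = True
barcode_s1_s3 = np.zeros(700, dtype=bool)
barcode_s1_s3[30:90] = True
observed, p_value = barcode_permutation_test(barcode_s1_s2, barcode_s1_s3, n_perm=2000)
```

Shuffling the follow-up barcode keeps its number of hits fixed while destroying which stimuli they fall on, so the null distribution reflects the overlap expected from sparsity alone.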

The second preregistered follow-up experiment was conducted to examine barcode similarity over short-term delays of 19 to 90 days. This was done as a contrast to the long-term delay experiment, but also to examine within-individual longitudinal change in barcode similarity over time. In this short-term follow-up experiment, we aimed to test 10 individuals over five repeat eye-tracking sessions that were identical to those in the original Discovery and long-term delay experiments. Although the aim was to recruit 10 individuals, one participant was dropped because of a lack of data on return sessions (Sessions 3–5). Furthermore, after applying data-quality filtering identical to that of the original Discovery data-set experiment (i.e., including only individuals who were missing gaze data for fewer than 15% of stimuli), the final sample sizes were 7 for the delay between Sessions 1 and 3, 6 for the delay between Sessions 1 and 4, and 7 for the delay between Sessions 1 and 5. The delay between Sessions 1 and 2 was similar to the delay in the original Discovery data set (e.g.,

Data and code availability

All code and data for reproducing the analyses can be found here: https://doi.org/10.5281/zenodo.17224834.

Results

In Study 1, we used the IIT discovery data set to test whether gaze fingerprinting is possible and, if so, how accurately it identifies individuals as a function of semantic features within stimuli. As a first approach to answering these questions, we computed median intra- and intersubject gaze similarity across all 700 stimuli before running classification analysis to predict individual identity. Computing median gaze similarity over all stimuli is important because it emphasizes how similar individuals’ gaze is, either with themselves or with other people, as a central tendency over all stimuli. Here we found fingerprint accuracy (i.e., identification rate) of 52.40% in the IIT Discovery data set (Fig. 2b) and 62.98% in the Gi replication data set (see Fig. S1c in the Supplemental Material). Both identification rates are well above the theoretical (e.g., ~1%–2%) and empirically derived (via permuting subject identifier) chance identification rate (

We next reran the fingerprint-classification analysis for each of the different subsets of stimuli reflecting high-level semantic features. Again, identification rates for all semantic features were well above chance levels in both discovery and replication sets (IIT: 29%–54%, all

Given the observation that identification rates tend to drop with decreased number of stimuli present within a semantic-feature category, it is likely that identification rate at the level of individual stimuli would also result in relatively low rates. Indeed, we found that individual stimuli identification rates were heavily reduced (e.g., IIT: ~2%–11%, Fig. 2c; Gi: ~4%–21%, Fig. S1b) compared with rates observed for median gaze similarity. Despite this attenuation, identification rates are still much higher than chance levels for the majority of stimuli. For example, around 94.2% (660/700) of stimuli in the IIT discovery data set (Fig. 2c) and 62.2% (436/700) of stimuli in the Gi data set (Fig. S1b) are identified above chance rates (FDR

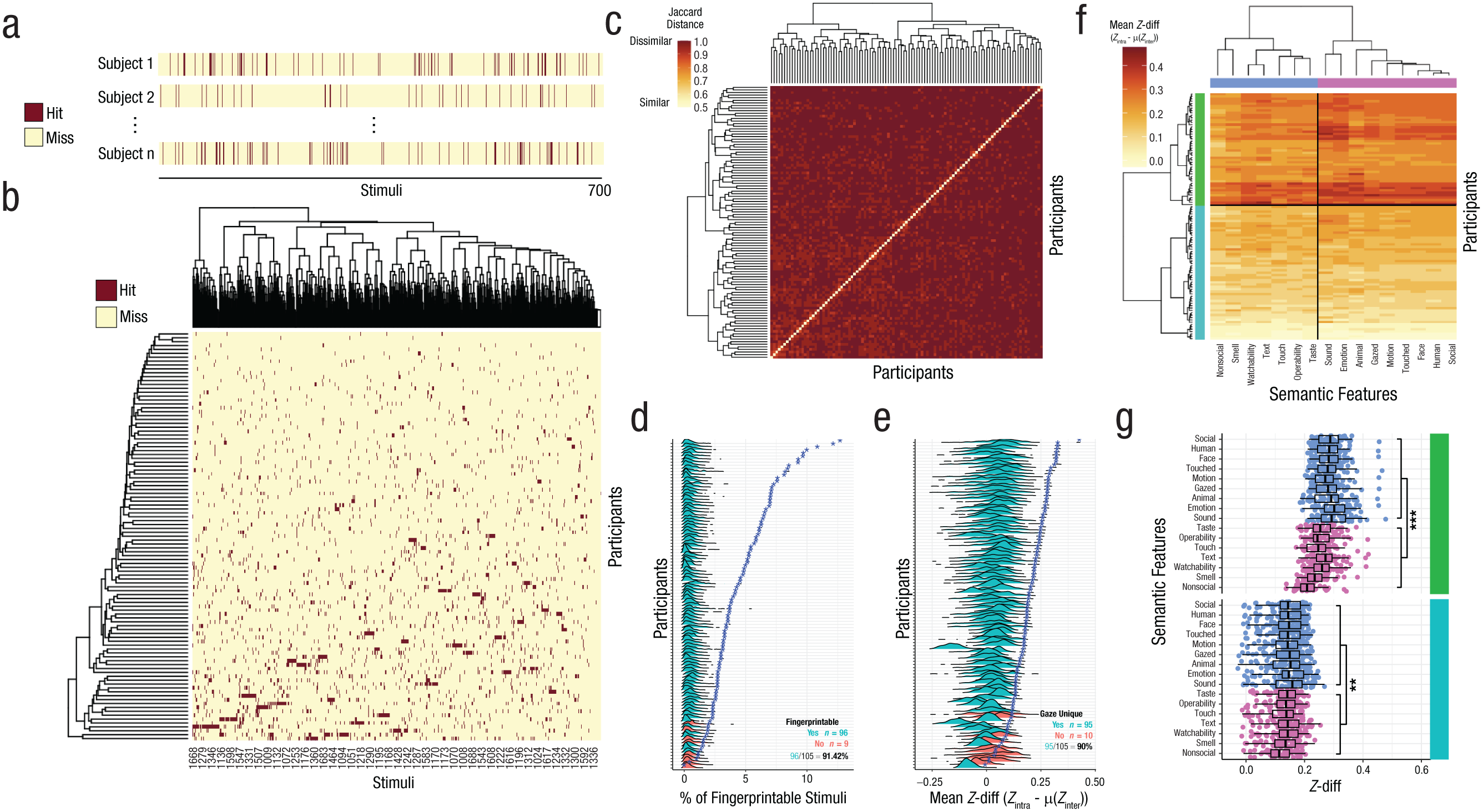

Although the 52% to 63% identification rate for median gaze similarity is already impressive and much higher than previous work on twins or during movie viewing (e.g., ~29%–40% accuracy; Keles et al., 2022; Kennedy et al., 2017; Rigas & Komogortsev, 2014), it is possible that the use of a central tendency summary statistic, such as the median, on gaze-similarity estimates over a large range of stimuli may hide important individuating information within the pattern of fingerprintability across stimuli. Given the unique stimulus-rich characteristic of our data set, we next employed a gaze-fingerprint-barcoding approach (Fig. 3a) to test whether the patterning of fingerprintability across a range of stimuli would allow for more individuation. The gaze-fingerprint-barcoding approach uses the binary vector of hits or misses across all 700 stimuli as a potentially unique individuating gaze-fingerprint pattern, or barcode, for an individual. As shown in Figure 3b, gaze-fingerprint barcodes are relatively sparse because each individual has a small but potentially unique pattern of fingerprintable stimuli across all 700 stimuli. Using Jaccard distance to quantify how similar or dissimilar the binary gaze-fingerprint barcodes are between individuals, we next used clustering to test whether the optimal number of clusters would be the practical upper bound of clusters detectable (e.g.,

Gaze-fingerprint barcoding and degree of gaze uniqueness. In (a) we show a visual example of gaze-fingerprint barcodes. Stimuli from 1 to 700 are shown on the horizontal axis. The burgundy color indicates a stimulus for which the individual can be correctly identified (a hit), whereas yellow indicates a stimulus for which the individual could not be correctly identified (a miss). Along the rows are example individuals (e.g., Sub 1, Sub 2, . . . Sub

We can also summarize information within gaze-fingerprint barcodes by quantifying the percentage of stimuli on which an individual could be gaze-fingerprinted and then testing whether this measure is significantly larger than expected at chance (i.e., when the subject identifier is randomly permuted). Here we find that the percentage of fingerprintable stimuli varies widely between individuals: some individuals show very minimal levels (e.g., 1%–2%), whereas others can be identified on the basis of 12%–14% of all stimuli (Fig. 3d). Nearly all individuals in both the discovery and replication data sets (IIT: 96/105 or 91.43%, Fig. 3d; Gi: 44/46 or 95.65%, Fig. S2c) had percentages of fingerprintable stimuli that were significantly higher than this permutation-derived chance level. Thus, although the information present within gaze-fingerprint barcodes is sparse, it is much greater than would be expected at chance.
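The per-stimulus barcodes and the permutation-based chance comparison summarized above can be sketched as follows. This is a hedged illustration, not the authors' pipeline. It assumes `similarity[s, i, j]` holds the similarity between subject i's Session 1 heat map and subject j's Session 2 heat map on stimulus s, treats stimuli with missing data as misses, and, as a further assumption suited to the small toy sample, restricts label permutations to those that move every subject. All names and toy values are hypothetical.

```python
import numpy as np


def fingerprint_barcodes(similarity):
    """Binary hit/miss matrix of shape (n_subjects, n_stimuli)."""
    sim = np.where(np.isnan(similarity), -np.inf, similarity)
    idx = np.arange(sim.shape[1])
    intra = sim[:, idx, idx]                 # intrasubject similarity per stimulus
    inter = sim.copy()
    inter[:, idx, idx] = -np.inf             # mask the target's own Session 2 data
    # Hit: intrasubject similarity exceeds every intersubject similarity.
    return (intra > inter.max(axis=2)).T


def percent_fingerprintable(similarity):
    return 100.0 * fingerprint_barcodes(similarity).mean(axis=1)


def random_derangement(n, rng):
    """Random permutation with no subject left in place (rejection sampling)."""
    while True:
        perm = rng.permutation(n)
        if not np.any(perm == np.arange(n)):
            return perm


def chance_p_values(similarity, n_perm=1000, seed=0):
    rng = np.random.default_rng(seed)
    observed = percent_fingerprintable(similarity)
    exceed = np.zeros_like(observed)
    for _ in range(n_perm):
        perm = random_derangement(similarity.shape[1], rng)
        # Shuffle Session 2 subject identifiers and recompute the summary.
        exceed += percent_fingerprintable(similarity[:, :, perm]) >= observed
    return observed, (exceed + 1) / (n_perm + 1)


# Hypothetical toy data: 200 stimuli, 6 subjects, each clearly identifiable on 80 stimuli.
rng = np.random.default_rng(1)
sim = rng.uniform(0.0, 1.0, size=(200, 6, 6))
for i in range(6):
    strong = (np.arange(200) + i) % 5 < 2
    sim[strong, i, i] = 2.0
barcodes = fingerprint_barcodes(sim)
observed_pct, p_values = chance_p_values(sim, n_perm=200)
```

With many subjects, as in the data sets described in the text, a plain random permutation rarely leaves a given label in place, so the derangement restriction matters mainly for small toy examples like this one.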

As an alternative to a binary vector representing the gaze-fingerprint barcode, we also have introduced the concept of a continuous degree of gaze uniqueness via

We next clustered the continuous

One alternative explanation for the high level of individuation in gaze-fingerprint barcodes (Figs. 3b and 3c) could simply be that the barcodes are random binary vectors that could not be repeatedly detected within an individual. To test this alternative explanation, we ran two preregistered follow-up experiments (i.e., Study 2) to measure barcodes repeatedly from the same individuals and then assess their similarity. In the first follow-up experiment, we called back 10 of the original individuals tested in the IIT discovery data set for a third eye-tracking session in which they repeated the same paradigm as in Sessions 1 and 2. However, this third eye-tracking session was conducted after years of delay compared with Session 1 (e.g., mean delay = 3.58 years,

We next conducted another preregistered short-term follow-up experiment in which individuals were gaze-tracked over five repeat sessions separated by 19 to 90 days, as a contrast to tests of barcode similarity on the timescale of years (e.g., the long-term follow-up experiment). Barcodes computed from Sessions 1 and 2 were compared with barcodes from Sessions 1 through 3, 1 through 4, and 1 through 5, with a similar permutation and binomial-test approach. Compared with barcodes from Sessions 1 and 2, 71% of individuals had significantly similar barcodes in Sessions 1 through 3 (number of successes = 5, number of trials = 7,

Stability of gaze-fingerprint barcodes over time. In (a) we show a schematic of the design of the preregistered long-term and short-term follow-up experiments in which we tested individuals after a delay from Session 1 on the order of years (long term) or weeks to months (short term). The short-term follow-up experiment had five repeat eye-tracking sessions, which additionally allowed for tracking of gaze-fingerprint barcodes within individuals over the course of 2 to 3 months. In (b) we show a schematic of the data-analysis approach for assessing similarity of gaze-fingerprint barcodes. Jaccard distance was utilized to quantify degree of barcode similarity, with a smaller Jaccard distance being indicative of more barcode similarity. Barcodes were then randomly permuted 10,000 times to build a null distribution of Jaccard distance under the hypothesis that the barcodes are random. Statistical inference can be implemented on a within-individual level by computing a

In a final set of analyses, we broadened our scope to examine the relevance of gaze fingerprinting to autistic traits. Autistic traits represent a continuous and highly heritable phenotype linked back to polygenic or omnigenic common genetic architecture (Constantino & Todd, 2003; Robinson et al., 2016). Degree of gaze similarity is also strongly linked to genetic similarity (Constantino et al., 2017; Kennedy et al., 2017), and atypical gaze patterns are commonly observed in autism (Constantino et al., 2017; Jones & Klin, 2013; Wang et al., 2015). Thus, we reasoned that variation in autistic traits may be linked to individualized patterns of gaze captured by the gaze-fingerprinting approach. Here we used two widely used self-report questionnaires that measure autistic traits: the SRS-2 and the AQ. These measures are highly correlated (

Gaze-fingerprinting association with autistic traits. This figure showcases how gaze-fingerprinting metrics are associated with variation in autistic traits. In (a) we show the two autistic-trait measures utilized in this study—the Autism Quotient, or AQ (

Next, we compared models using gaze-fingerprinting PC1 on all stimuli or on social and nonsocial stimuli as the independent variable with models that use only median intrasubject or intersubject gaze similarity. These model comparisons were done to better understand which aspect of gaze fingerprinting best predicts autistic traits (i.e., intra- or intersubject gaze similarity alone, or the combination of both via gaze fingerprinting on all stimuli or on social or nonsocial stimuli). Here we found that the model using gaze-fingerprinting PC1 on all stimuli accounted for 18.82% of the variance in autistic traits. Similarly, gaze-fingerprinting PC1 on social stimuli accounted for 18.51% of the variance in autistic traits. In comparison to these two models, all other models accounted for roughly 12% of the variance or less (gaze-fingerprinting PC1 nonsocial = 10.17%; median intrasubject gaze similarity = 12.19%; median intersubject gaze similarity = 6.88%; see Fig. 5f). Of these other models, only median intrasubject gaze similarity was significantly related to autistic traits, in the direction of increasing autistic traits with decreased intrasubject gaze similarity (see Table S2 in the Supplemental Material). Thus, in direct model comparisons it is clear that gaze-fingerprinting PC1, particularly for social stimuli, provides significantly enhanced utility in predicting autistic traits over and above other models that compute gaze fingerprinting from nonsocial stimuli or that account for intra- or intersubject gaze similarity alone.
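As a rough illustration of what comparing models by percentage of variance accounted for can look like, the sketch below computes R² (expressed as a percentage) for single-predictor linear models. This is an assumption about the general form of such a comparison, not the authors' model specification, which may have included covariates or other terms. The function name, the predictor names, and all numbers are hypothetical toy values.

```python
def variance_explained(x, y):
    """Percentage of variance in y accounted for by a simple linear
    regression on x (the squared Pearson correlation times 100)."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return 100.0 * sxy ** 2 / (sxx * syy)


# Toy data: one outcome and two candidate predictors (made-up numbers).
autistic_traits = [2.0, 4.0, 6.0, 8.0]
candidate_predictors = {
    "predictor_a": [1.0, 2.0, 3.0, 4.0],    # perfectly linear with outcome
    "predictor_b": [1.0, -1.0, -1.0, 1.0],  # unrelated to outcome
}
results = {
    name: variance_explained(values, autistic_traits)
    for name, values in candidate_predictors.items()
}
# Rank candidate models from most to least variance explained.
ranking = sorted(results, key=results.get, reverse=True)
print(results, ranking)
```

Ranking models on this quantity alone does not test whether the difference between two models is statistically reliable; that would require a separate comparison procedure.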

Discussion

In this work we discovered that it is possible to identify individuals from spatial-gaze patterns sampled from static pictures of complex natural scenes. Here we replicably show that we can gaze-fingerprint individuals at levels much higher than chance (52%–63% vs. 1%–2% chance levels). Although these identification rates are much higher than in previous studies (e.g., 29%–40%; Keles et al., 2022; Kennedy et al., 2017; Rigas & Komogortsev, 2014), there are some caveats to comparing identification rates between studies. First, identification rates may depend on the number of stimuli sampled per individual. This work demonstrates that identification rates can drop with the size of the stimulus set (e.g., Fig. 2d). Thus, identification-rate comparisons between the current and prior studies must be interpreted relative to the number of stimuli sampled within individuals. Second, two of the prior studies examined identification of the same target individual (Keles et al., 2022; Rigas & Komogortsev, 2014), whereas the other examined identification of twin pairs (Kennedy et al., 2017). It may be expected that accuracy in identifying the same target individual would be higher than in identifying an individual’s twin. However, even for the prior studies that examined identification of the same target individual (Keles et al., 2022; Rigas & Komogortsev, 2014), comparisons of identification rates with the current work may be limited because those studies utilized dynamic movie stimuli. In contrast, the current work examines a large range of static pictures of complex scenes. Theoretically, the idea of a traitlike gaze fingerprint suggests that a degree of invariance should be expected and that gaze fingerprints should thus generalize across static and dynamic stimuli or even real-world scenarios. An open question for future work is the degree of invariance of gaze fingerprints across dynamic real-world scenarios versus static picture viewing.

The current work shows some utility for summarizing central tendencies of gaze similarity over a large range of stimuli. However, such data-reduction techniques risk hiding unique and important information for the purpose of gaze fingerprinting. This point has been underscored in the past in an analogous literature in functional neuroimaging that contrasts mass univariate analysis with multivariate pattern-decoding techniques (Haxby et al., 2001). Analogous to the stimulus-rich experimental-design strategies of representational similarity analysis (Kriegeskorte et al., 2008), the stimulus-rich aspect of our gaze-fingerprinting design allows us to fingerprint individuals on each stimulus and then summarize gaze fingerprintability over different semantic features; we found that most semantic features had relatively similar identification rates. These results are consistent with recent observations by Broda and de Haas (2024) that domain-general mechanisms, rather than domain-specific mechanisms (e.g., face-specific biases), may help explain individualized gaze biases.

At a more granular level than semantic-feature categories, there may also be useful information at the level of individual stimuli. Although identification rates for any one stimulus are generally much lower than rates for median gaze similarity across stimuli, the gaze-fingerprinting output per subject and stimulus may still represent a unique gaze-fingerprint barcode. We show that gaze-fingerprint barcodes are sparse but far from random. Evidence supporting this statement comes from observations that > 90% of all individuals have a percentage of fingerprintable stimuli that is significantly higher than the null distribution obtained when the subject identifier is randomly permuted. Clustering gaze-fingerprint barcodes also results in the optimal number of clusters reaching the upper practical bound. Furthermore, preregistered follow-up experiments (i.e., Study 2) show that similar gaze-fingerprint barcodes can be identified within individuals over short time frames (e.g., weeks to months) and long time frames (e.g., years). One caveat to the barcoding approach applied in this work is that Session 1’s data is utilized in both short-term and long-term barcodes. The reason for this is that Session 1’s data is psychologically the most important for making inferences about traitlike tendencies because it samples gaze behavior upon first viewing rather than on subsequent repeat viewings. Repeat-viewing behavior may differ psychologically from first viewings through the incorporation of other statelike factors (e.g., habituation, memory; Chakravarthula et al., 2025; Schmidig et al., 2025). However, it is impossible to eliminate the reliance on Session 1’s data because we cannot sample initial-viewing behavior to the same stimuli independently across two occasions within the same individual.
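The test of barcode similarity over time (Jaccard distance compared against a null distribution from randomly permuted barcodes) can be sketched as follows. This is a minimal illustration under stated assumptions, not the preregistered analysis code: the function names are hypothetical, the toy barcodes have 10 stimuli instead of 700, the null is built by shuffling one barcode's positions, and two all-miss barcodes are assigned a distance of 0 by convention.

```python
import random


def jaccard_distance(a, b):
    """Jaccard distance between two binary barcodes:
    1 - |hits in both| / |hits in either|. Smaller means more similar."""
    both = sum(1 for x, y in zip(a, b) if x and y)
    either = sum(1 for x, y in zip(a, b) if x or y)
    if either == 0:
        return 0.0  # assumed convention when neither barcode has a hit
    return 1.0 - both / either


def barcode_similarity_test(a, b, n_perm=10000, seed=0):
    """Permutation p value for the hypothesis that the barcodes are random.

    One barcode is shuffled n_perm times; the p value is the share of
    shuffled distances that are at least as small as the observed one.
    """
    rng = random.Random(seed)
    observed = jaccard_distance(a, b)
    shuffled = list(b)
    at_or_below = 0
    for _ in range(n_perm):
        rng.shuffle(shuffled)
        if jaccard_distance(a, shuffled) <= observed:
            at_or_below += 1
    return observed, (at_or_below + 1) / (n_perm + 1)


# Toy barcodes from two hypothetical measurement occasions.
early = [1, 1, 1, 0, 0, 0, 0, 0, 0, 0]
late = [1, 1, 0, 1, 0, 0, 0, 0, 0, 0]
distance, p_value = barcode_similarity_test(early, late, n_perm=2000)
print(distance, p_value)
```

Shuffling preserves the number of hits in the permuted barcode, so the null reflects chance overlap between two barcodes of the same sparsity rather than differences in how many stimuli were fingerprintable.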

Another salient aspect of this work to underscore is the stimulus-rich sampling of gaze across a large array of visual stimuli. The 700 stimuli utilized here represent a much larger set than those used in other work on the topic. However, they are but a fraction of the total number of stimuli that could reveal individuating signatures of how individuals uniquely view the world. Thus, there is likely vast potential for expanding on this approach with much larger data sets and with expansion into dynamic stimuli (e.g., naturalistic movie viewing). Extending this work into real-world or naturalistic viewing scenarios would also enable testing of the robustness and generalizability of the gaze fingerprinting observed under static viewing conditions. There is also probably much potential for taking a thin-slices approach (Byrge & Kennedy, 2019) to identify the smallest amount of data across stimuli that would be useful for gaze fingerprinting. Such a thin-slice approach is beyond the scope of the current work but should be explored in future research.

Finally, we also show that decreased gaze fingerprintability is associated with higher levels of autistic traits. This finding can be contrasted with two prior studies (Avni et al., 2020; Keles et al., 2022) that primarily differ from the current work by examining gaze data during naturalistic movie viewing and by also directly examining clinically diagnosed autistic individuals. Keles et al. found similar gaze-fingerprinting identification rates between typically developing and autistic adults, as well as intact within-individual reliability. However, these authors did find that autistic-gaze heat maps were significantly more dissimilar to reference-gaze heat maps representing the average typically developing or autistic individual (Keles et al., 2022). This result may be suggestive of increased heterogeneity of gaze patterns in autism. Another study by Avni et al. (2020) found that autistic children showed more idiosyncratic gaze, operationalized as reduced intra- and intersubject gaze correlations. In contrast to these two prior studies, the current work did not find evidence of a relationship between intersubject gaze similarity and autistic traits. However, we did observe that reduced intrasubject similarity was predictive of higher autistic traits. Directly comparing with these prior studies may be difficult because of the difference between movie and static-picture viewing, but also because of the difference in examining clinically diagnosed autistic individuals (Avni et al., 2020; Keles et al., 2022) versus examining covariation of the autistic traits in otherwise nondiagnosed populations (i.e., the current study). Future work using our approach in clinically diagnosed populations and in movie-viewing contexts is needed. 
Nevertheless, the general idea that degree of gaze idiosyncrasy may be important for autism, autistic traits, or both matches up well with other hypotheses regarding enhanced neural or behavioral idiosyncrasy in autism (Avni et al., 2020; Benkarim et al., 2021; Bleimeister et al., 2024; Bolton et al., 2018; Byrge et al., 2015; Cavallo et al., 2018; Dinstein et al., 2012, 2015; Hahamy et al., 2015; Hasson et al., 2009; Keles et al., 2022; Milne, 2011; Nunes et al., 2019; Pegado et al., 2020; Rubenstein & Merzenich, 2003; Sohal & Rubenstein, 2019; Trakoshis et al., 2020). Furthermore, given the idea that both individualized gaze patterns and autistic traits are underpinned by heritable polygenic common genetic architecture (Constantino & Todd, 2003; Constantino et al., 2017; Kennedy et al., 2017; Portugal et al., 2023; Robinson et al., 2016), future work should investigate whether these phenomena are underpinned by shared or distinct genetic architecture. Finally, the relationship between gaze fingerprintability and autistic traits is an important general confirmation for the idea of a traitlike factor between individuals that helps explain variance in gaze behavior. Such a finding matches well with other work that generally identifies a link between personality traits and gaze behavior (Wu et al., 2014; Yamashita et al., 2023). It is also notable that intrasubject gaze correlations for fingerprintable stimuli (mean

Some limitations and caveats of the current work should also be noted. First, the sample size of Study 2 was relatively small because many of the original participants were not able to come back for the long-term follow-up testing sessions (some delays were on the order of years). Future work with larger sample sizes for long-term follow-up sessions may be needed. Second, because these results were obtained in the context of brief viewings of static pictures of complex natural scenes, future work should examine generalizability to other contexts, such as naturalistic movies. Third, the correlations with autistic traits were discovered in otherwise typically developing individuals without an autism diagnosis. Thus, stronger statements about relevance to autism will require future experiments in clinically diagnosed autistic samples.

In summary, we show that gaze fingerprinting is possible both by (a) examining a central tendency of gaze similarity over a relatively large range of stimuli and by (b) preserving the information held within gaze-fingerprint barcodes. The insights of this study were enabled by the use of a stimulus-rich design and have strong methodological support via replicable evidence across discovery and replication sets, as well as confirmatory hypothesis tests from preregistered longitudinal follow-up experiments. Such work is important for future research decomposing individually sensitive information within gaze patterns and may be of high neurodevelopmental and biological significance.

Supplemental Material

sj-pdf-1-pss-10.1177_09567976251415352 – Supplemental material for Detection of Idiosyncratic Gaze-Fingerprint Signatures in Humans

Supplemental material, sj-pdf-1-pss-10.1177_09567976251415352 for Detection of Idiosyncratic Gaze-Fingerprint Signatures in Humans by Sarah K. Crockford, Eleonora Satta, Ines Severino, Donatella Fiacchino, Andrea Vitale, Natasha Bertelsen, Elena Maria Busuoli, Veronica Mandelli and Michael V. Lombardo in Psychological Science

Supplemental Material

sj-xlsx-2-pss-10.1177_09567976251415352 – Supplemental material for Detection of Idiosyncratic Gaze-Fingerprint Signatures in Humans

Supplemental material, sj-xlsx-2-pss-10.1177_09567976251415352 for Detection of Idiosyncratic Gaze-Fingerprint Signatures in Humans by Sarah K. Crockford, Eleonora Satta, Ines Severino, Donatella Fiacchino, Andrea Vitale, Natasha Bertelsen, Elena Maria Busuoli, Veronica Mandelli and Michael V. Lombardo in Psychological Science
