Abstract

Integrating mobile eye tracking and optoelectronic motion capture enables point of gaze to be expressed within the laboratory co-ordinate system and presents a method not commonly applied during research examining dynamic behaviors, such as locomotion. This paper examines the quality of gaze data collected through the integration. Based on research suggesting increased viewing distances are associated with reduced data quality, the accuracy and precision of gaze data as participants (

Introduction

The past two decades have seen a notable increase in the development of research methods incorporating mobile eye tracking technologies to examine the visual control of behavior across a variety of scientific domains (Tatler et al., 2011). By way of example, methods used in the study of social attention (Laidlaw et al., 2011) and expertise in sport (Vickers, 1996) have been enriched, leading to new proposals of dynamic visual control, which consider the function of eye movements relative to the coordination of the head and body (van der Kamp & Dicks, 2017). Within the field of perceptual-motor control, the accurate study of coordination between the eyes, head, and body has arguably been limited by the lack of suitable technology (Kothari et al., 2020). In such fields, researchers using head-worn eye trackers are predominantly interested in questions such as which objects in the world a person fixated, in what order, and for how long (Ellmers et al., 2020; Matthis et al., 2018; Niehorster, Hessels, et al., 2020). As such, eye movement data collected via head-mounted eye tracking technology require mapping onto locations in world space (Hessels et al., 2018). While mobile eye trackers deliver gaze data in a head-centered reference frame, simultaneous motion capture provides the means to express gaze data in a world-centered reference frame. Specifically, by combining eye position, derived from retroreflective markers attached to the mobile eye tracker, and eye orientation data, point of gaze can be expressed relative to the world-space coordinate system.

Although the integration of mobile eye tracking and motion capture systems to express point of gaze with a world-centered frame of reference is uncommon (however, see: Burger et al., 2018; Essig et al., 2012; Matthis et al., 2018), research capturing kinematic and gaze data has begun to progress our understanding of perceptual-motor behaviors (Domínguez-Zamora et al., 2018; Domínguez-Zamora & Marigold, 2021). In particular, Matthis et al. (2018) recently developed a method of integrating gaze and kinematic measures, expressing point of gaze within world-space whilst participants walked over terrains of differing complexity. Deriving eye and horizontal floor plane position from inertial measurement unit (IMU) sensors located on each participant's head and feet, and gaze orientation data from a mobile eye tracker, point of gaze was computed as the location where the gaze vector intercepted the floor plane. Matthis and colleagues’ method advanced understanding of how people successfully overcome challenging environments, revealing that gaze and kinematic behaviors are adapted relative to the environment's specific demands. As such, their method signposted the potential benefits of integrating these distinct measurement systems in perceptual-motor control research domains requiring skillful coordination, such as locomotion (Higuchi, 2013; Matthis et al., 2018) and sport performance (Dicks et al., 2010), as well as domains such as environmental representation (Tatler & Tatler, 2013) and object manipulation (Draschkow & Võ, 2016; van Dijk & Bongers, 2014).

Although data collected using motion capture and eye tracking systems have been integrated previously (Burger et al., 2018; Jantunen et al., 2020; Matthis et al., 2018), the quality of the resulting data, such as point of gaze accuracy and precision in terms of world-space co-ordinates, has yet to be evaluated. As such, this paper focuses on the accuracy, defined as the difference between the recorded gaze position and the actual gaze position (Wang et al., 2017), and the precision, defined as the ability to reliably reproduce a measurement given a fixating eye (Pastel et al., 2020), of data collected through the integration of mobile eye tracking and optoelectronic motion capture and then expressed in world-space co-ordinates.

Evaluating Optoelectronic Systems for Gaze Data Collection

When precise position or orientation-related analyses of human movements are required, optoelectronic systems represent the gold standard in terms of accurately tracking (<1 mm) anatomical positions (Spörri et al., 2016; van der Kruk & Reijne, 2018). Determining the position of the eye is essential to accurately expressing point of gaze in relation to the world co-ordinate system. Therefore, utilizing optoelectronic motion capture may enhance gaze data quality compared to alternative motion capture systems. Moreover, unlike IMU, optoelectronic systems allow features such as objects, obstacles, or foot targets to be digitized, and expressed within the world-space coordinate system (van der Kruk & Reijne, 2018; Matthis et al., 2018).

The ability to accurately translate point of gaze onto the environment may play a pivotal role in analyzing gaze data. Traditionally, gaze data analysis has been undertaken by frame-by-frame analysis where researchers manually classify fixations or saccades and then identify the locations participants fixate (Vansteenkiste et al., 2014). More recently, event-based analysis has streamlined this analysis process by using algorithms to classify gaze events based on eye orientation data (Benjamins et al., 2018; Hessels et al., 2017; Niehorster, Hessels, et al., 2020). However, these algorithm-based approaches are limited when evaluating gaze behaviors in locomotor settings, as classifying gaze behaviors based exclusively on eye orientation may not account for disparity between the participant's eye and bodily motion (Hessels et al., 2018; Lappi, 2015). As such, as a person moves through the environment, determining point of gaze relative to environmental features has predominantly been achieved by frame-by-frame analysis of scene camera footage (Hessels, Benjamins, et al., 2020; Jongerius et al., 2021; Kothari et al., 2020). In particular, a frame-by-frame approach has been commonly applied to consider the amount of time a person's point of gaze is located on designated environmental locations (Ellmers et al., 2016, 2020; Mele & Federici, 2012; Parr et al., 2020). However, it has been suggested that frame-by-frame analysis methods are prone to inter-observer error and are time consuming (Duchowski, 2007; Hessels, Niehorster, et al., 2020; Kiefer et al., 2017). Further, researchers evaluating eye-tracking data analysis have recently noted that eye-tracking research would benefit from more standardized analysis procedures to enhance comparison between studies and assessment of study quality (Jongerius et al., 2021).

The capacity of optoelectronic data collection to express both point of gaze and the location of environmental features within a world-centered reference frame addresses these points by facilitating greater automation of the data analysis process, which promotes time efficiency, reduces inter-observer error, and greatly increases the number of trials that can be analyzed. In turn, these attributes increase statistical power, which, due to the time needed to manually code gaze data, has been frequently recognized as low (Jongerius et al., 2021; Knudson, 2017). In addition to the advantages associated with automated data analysis, the ability to express both point of gaze and environmental features within the laboratory coordinate system allows researchers to evaluate the accuracy and precision of gaze data in terms of world-space and contributes to effective experimental design.

Gaze Data Accuracy and Precision

Aside from establishing the origin of the gaze vector (e.g., eye position), expressing point of gaze with a world-based frame of reference can be affected by the accuracy and precision of the eye tracking system (Ooms, 2015). Multiple factors influence gaze calibration and data quality, including task factors such as viewing distance defined as the distance between a participant and the location being fixated (such as Figure 1 ‘C’) and viewing angle defined as the angle between gaze origin and the location being fixated (such as Figure 1 ‘

Computation of

MacInnes et al. (2018) considered the influence of viewing distance and angle by evaluating the quality of gaze data at distances of 1, 2, and 3 m and lateral angles of −10°, 0°, and 10°, reporting the mean accuracy of the Tobii Pro Glasses 2 system across the aforementioned conditions as 1.42° of visual angle. Although accuracy may be expressed in terms of visual angle (e.g., 1.42°), it is important to consider the effects the relationship between visual angle and viewing distance may have on accuracy when considering a world-based reference frame. For example, adaptive locomotor research indicates that visual information is exploited from distances within approximately 6 m preceding a foot target or obstacle (Cornus et al., 2009; Lee et al., 1982; Montagne et al., 2000; van Andel et al., 2018). Assuming a constant accuracy value of 1.42°, viewing an eye-height target located on a vertical plane (such as a wall) from a distance of 1 m translates to a world-space accuracy of 2.5 cm, whereas a viewing distance of 6 m translates to an accuracy of 14.9 cm. As such, an increase in viewing distance would lead to reduced world-space accuracy due to constant angles covering larger areas at larger distances.
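The conversion from angular accuracy to world-space accuracy described above can be reproduced with a short sketch. It assumes a target at eye height on a vertical plane viewed head-on, so that the linear offset is simply the viewing distance multiplied by the tangent of the angular error; the function name `wall_error_m` is illustrative.

```python
import math


def wall_error_m(distance_m: float, accuracy_deg: float) -> float:
    """Linear offset (m) on a vertical plane for a given angular error.

    Assumes the target is at eye height and viewed head-on, so the
    offset is distance * tan(angular error).
    """
    return distance_m * math.tan(math.radians(accuracy_deg))


if __name__ == "__main__":
    # Constant angular accuracy reported by MacInnes et al. (2018).
    for distance in (1, 2, 3, 4, 5, 6):
        error_cm = 100 * wall_error_m(distance, 1.42)
        print(f"{distance} m -> {error_cm:.1f} cm")
```

Because the tangent term is constant, the offset grows linearly with viewing distance for a wall-mounted target.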

During everyday locomotion, people predominantly attend to task-relevant ground-based locations in the lower visual field (Matthis et al., 2018; Marigold & Patla, 2008). When considering the world-space accuracy of ground-based fixations, the angle of incidence must be considered alongside viewing distance. Assuming a constant eye height and constant accuracy (in visual angle), the error along the horizontal plane increases non-linearly with viewing distance. For example, a fixed eye height of 1.57 m (Adler, 1999) and an accuracy of 1.42° is associated with an error along the horizontal plane of approximately 5.5 cm for a person fixating a floor-based target 1 m ahead of their location. However, the error would increase to 60.8 cm when fixating a floor-based target located 6 m away.
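The floor-based case can be sketched in the same way. The calculation behind the figures quoted above is not given in the text, so the following is one possible reading: a first-order projection in which the offset perpendicular to the line of sight is stretched along the floor by the grazing angle of the gaze ray. This gives values close to those quoted (roughly 5.5 cm at 1 m and 61 cm at 6 m). An exact ray-based version is included for comparison and gives a larger error at long range. Both function names are illustrative.

```python
import math


def floor_error_first_order(distance_m: float, eye_height_m: float,
                            accuracy_deg: float) -> float:
    """First-order along-floor error (m) for a floor target.

    slant range r = sqrt(h^2 + d^2); the perpendicular offset r*tan(acc)
    is divided by sin(grazing angle) = h / r, giving r^2 * tan(acc) / h.
    """
    r_squared = eye_height_m ** 2 + distance_m ** 2
    return r_squared / eye_height_m * math.tan(math.radians(accuracy_deg))


def floor_error_exact(distance_m: float, eye_height_m: float,
                      accuracy_deg: float) -> float:
    """Exact overshoot (m) when the gaze ray is rotated away from the
    vertical by the angular error (valid while the ray still hits the floor)."""
    angle_from_vertical = math.atan2(distance_m, eye_height_m)
    rotated = angle_from_vertical + math.radians(accuracy_deg)
    return eye_height_m * math.tan(rotated) - distance_m


if __name__ == "__main__":
    for distance in (1, 2, 3, 4, 5, 6):
        approx = 100 * floor_error_first_order(distance, 1.57, 1.42)
        exact = 100 * floor_error_exact(distance, 1.57, 1.42)
        print(f"{distance} m: first-order {approx:.1f} cm, exact {exact:.1f} cm")
```

Under either reading, the error grows much faster than linearly with distance because the gaze ray meets the floor at an increasingly shallow angle.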

Although the combined influence of viewing distance and visual angle outlined above is simple to translate into world-space values using trigonometry, the accuracy of eye-tracking systems (in terms of visual angle) has also been shown to be influenced by a range of participant factors such as eye color, contact lens use, and eyelash length (e.g., Blignaut & Wium, 2014; Thibeault et al., 2019). Furthermore, MacInnes et al. (2018) also reported that accuracy decreased as viewing distance increased. Specifically, visual angles of 0.8, 1.6, and 1.8° were reported for distances of 1, 2, and 3 m, respectively. The fact that viewing distance and participant factors impacted eye tracking accuracy (MacInnes et al., 2018; Pastel et al., 2020) raises important considerations when measuring gaze behaviors throughout situations such as adaptive locomotion, where attending to distal information has been noted as vital (Higuchi, 2013). Additionally, MacInnes and colleagues reported reduced precision when viewing angle increased, a result possibly associated with the location of the pupils relative to the eye-tracking system's cameras (Hornof & Halverson, 2002; Pastel et al., 2020). This is again an important consideration, as a participant fixating a proximal ground-based location would exhibit greater viewing angles compared to when fixating a target positioned at eye height, possibly reducing gaze data quality (MacInnes et al., 2018). Such findings indicate that world-space accuracy may not be wholly defined geometrically and establish a need to consider the influence of viewing distances and angles on gaze data quality in terms of world-space co-ordinates when viewing floor-based locations.

Processing Gaze Data

Noise has commonly been acknowledged as a limitation with kinematic data (Camargo et al., 2020; Winter, 2009) and is particularly prevalent with optoelectronic data collection methods. As optoelectronic motion capture provides the information required to translate point of gaze into the world-centered reference frame, data processing methods commonly employed to overcome noise may improve gaze data quality and accuracy. To reduce the influence of noise, and improve data quality, researchers have commonly applied a low-pass Butterworth filter to data collected via optoelectronic motion capture (Parr et al., 2020; Spörri et al., 2016; van der Kruk & Reijne, 2018). Accordingly, filtering eye position data may improve data quality and accuracy when translating gaze position to a world-centered reference frame.

In eye-tracking data, noise has also been identified as a property inherent to the measurement device (Niehorster, Zemblys, et al., 2020). Similar to treating kinematic data, previous researchers have utilized a low-pass Butterworth filter to treat gaze data during locomotor pointing tasks (Domínguez-Zamora et al., 2018), or to smooth gaze data prior to event classification (Houpt et al., 2018). However, these approaches commonly apply standardized one-size-fits-all filter parameters to gaze datasets. Such an approach may not reflect the variable nature of gaze behaviors that are common both between and within participants (Dicks et al., 2017). In recognition of the individual nature of gaze data, an auto-correlation approach to determining the optimum low-pass filter parameters (Challis, 1999) may be particularly beneficial and allow filter parameters to be objectively determined on a trial-by-trial basis (Davis & Challis, 2020).

Missing data has been acknowledged as a limitation of data collected through both optoelectronic motion capture (e.g., marker occlusion) and eye tracking (e.g., pupil identification loss; Blignaut & Wium, 2014; Duchowski, 2007; Hessels et al., 2015; Spörri et al., 2016). Across a range of disciplines, including eye tracking research, the use of splines has been commonly advocated as a simple yet robust method to interpolate missing data (Frank et al., 2009; Hessels et al., 2017; Howarth & Callaghan, 2010; Kamali et al., 2020). In particular, quintic, compared to cubic, splines have been recognized as capable of interpolating complex or variable movements (Grimshaw et al., 2019; Winter, 2009), qualities often noted within gaze data sets (Dicks et al., 2017). However, diminished accuracy has been identified as a limitation of treating larger gaps using splines (Howarth & Callaghan, 2010). To mitigate this limitation, an upper gap size threshold of 100 ms has been adopted when applying splines to interpolate gaze data (Frank et al., 2009; Hessels et al., 2017). However, as noted above, a standardized one-size-fits-all gap size threshold may not be best suited to treating variable gaze behaviors (Dicks et al., 2017). As such, the use of splines as a method of filling different sized gaps in gaze data, relative to world-space accuracy, merits exploration.

While mobile eye trackers deliver gaze data in a head-centered reference frame, for many research topics, such as adaptive locomotion, gaze data in a world-centered reference frame is considered beneficial (Matthis et al., 2018). One way to achieve this translation is to perform simultaneous motion capture of the participant's eye position, which provides the information required to perform the head to world transformation. Findings concerning eye-tracking data have alluded to task and participant factors that inhibit gaze data quality (Blignaut & Wium, 2014). Such findings establish a need to consider the influence of greater viewing distances and viewing angles on gaze data quality in terms of world space co-ordinates. As such, this study primarily aimed to assess the accuracy and precision of eye tracking data collected using the integrated Tobii Pro Glasses 2 and Qualisys motion capture systems. Based on the importance of ground-based fixations throughout many activities of daily life, including locomotion, gaze data accuracy and precision were considered as participants attended to floor-based targets at distances between 1 and 6 m. Building on previous work highlighting reductions in gaze data accuracy at greater distances and viewing angles (MacInnes et al., 2018), we hypothesized a reduction in accuracy when participants attended to the most proximal and distal floor targets (1 and 6 m, respectively). Issues such as noise and missing data have also been identified in both optoelectronic and eye tracking data sets (Camargo et al., 2020; Hessels et al., 2017). Accordingly, our second aim was to explore whether signal processing techniques, such as filtering and interpolation, may help overcome such limitations and improve data quality when considering gaze data in terms of world space co-ordinates.

Methods

Participants

G*Power (Faul et al., 2007) was used to perform an

Hardware

A Tobii Pro Glasses 2 mobile eye tracker (Tobii, 2018) was used to measure gaze behavior. The eye tracker consists of a lightweight (45 g) fixed geometry frame containing four cameras and 12 illuminators, which project near infrared light to create a pattern on the cornea of both eyes. The system allows the position of each eye to be recorded at 50 Hz as per recommendations for research concerning larger viewing angles, such as the current study (Tobii, 2018). The glasses were connected to a portable recording unit which used image-processing algorithms and a physiological 3D model of the eye to estimate the position of the pupil relative to the glasses. The recording unit allowed gaze data to be streamed in real time via Wi-Fi and interfaced with the Qualisys motion capture interface (QTM, version 2018.1).

To track the origin of each eye's gaze vector in the lab, a cluster marker set (provided by Tobii) comprising six retroreflective markers was attached to the fixed geometry body of the eye tracking glasses (Figure 2). Within Qualisys Track Manager (QTM) software, a 6 degrees of freedom model was created which defined the position of each eye relative to the marker set, in turn allowing the location of each eye to be expressed relative to the lab coordinate system (QTM, version 2018.1).

Tobii Pro Glasses 2 with motion capture markers attached (Tobii, 2018).

Laboratory Environment

A 14-camera system (Qualisys Oqus 300+) set to capture at 100 Hz was used to record the position of the eye tracker cluster marker set. In order to account for variations in participant stature, cameras were typically positioned further back from the calibrated area and focused on the upper body. An area (approximately 6 × 1 × 2 m,

Procedures and Data Collection

Participants were asked to tie back long hair and remove eye make-up before the eye tracker was fitted and secured in place using a head strap to reduce movement and ensure a fixed relationship between the marker set and eye position in lab space. The eye tracker was calibrated following Tobii's one-point calibration method. Following initial calibration, data were collected directly through the QTM interface with the gaze vector position visible in real time (Figure 3). Following the steps outlined by MacInnes et al. (2018), viewing the real-time gaze vector position allowed researchers to judge calibration accuracy by asking participants to fixate on prominent eye-height features, such as the motion capture cameras. Large discrepancies in vector position, such as divergent or erratic gaze vectors, resulted in the glasses being refitted and the calibration process repeated until accuracy was considered acceptable.

Gaze vector (yellow), retroreflective markers (red), and axis orientation visible in the QTM environment (

Following calibration, participants completed a single 30 s (1500 sample) data collection. Participants were instructed to remain standing in a designated location whilst fixating on six ground-based retroreflective markers (Targets 1–6) positioned at 1 m intervals along the

Data from each eye were collected separately and exported to MATLAB (MathWorks Inc, 2019) for processing, which allowed gaze accuracy and precision measures to be evaluated across 22 data sets (i.e., one data set per eye). The export consisted of a matrix of six rows, with one column per sample of data collection. The first three rows represented the gaze origin position within the lab coordinate system and the last three rows represented gaze orientation as the components of a unit vector.
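The export layout described above can be unpacked as in the following sketch, which also intersects each gaze ray with a horizontal floor plane. It is written in Python rather than the MATLAB used in the study, and it makes assumptions that are not stated in the text: the third coordinate is taken as vertical, the floor is placed at height zero, and missing samples are stored as NaN. The function name `floor_intercepts` is hypothetical.

```python
import math
from typing import List, Optional, Sequence, Tuple

Point2D = Tuple[float, float]


def floor_intercepts(export: Sequence[Sequence[float]],
                     floor_z: float = 0.0) -> List[Optional[Point2D]]:
    """Intersect each gaze ray with a horizontal floor plane.

    export: six rows, one column per sample. Rows 0-2 hold the gaze
    origin, rows 3-5 the gaze direction as a unit vector.
    Assumptions: third coordinate is vertical, floor at floor_z,
    missing samples are NaN. Returns None where no intercept exists.
    """
    ox, oy, oz, dx, dy, dz = export
    points: List[Optional[Point2D]] = []
    for x0, y0, z0, ux, uy, uz in zip(ox, oy, oz, dx, dy, dz):
        sample = (x0, y0, z0, ux, uy, uz)
        # Skip missing data and rays that do not point down at the floor.
        if any(math.isnan(v) for v in sample) or uz >= 0:
            points.append(None)
            continue
        t = (floor_z - z0) / uz  # distance along the ray to the plane
        points.append((x0 + t * ux, y0 + t * uy))
    return points


if __name__ == "__main__":
    # One sample: eye 1.57 m above the floor, looking at a point 2 m ahead.
    norm = math.hypot(2.0, 1.57)
    export = [[0.0], [0.0], [1.57], [2.0 / norm], [0.0], [-1.57 / norm]]
    print(floor_intercepts(export))
```

Returning `None` for missing or non-intersecting samples keeps the output aligned with the input sample index, which simplifies later gap handling.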

Calculating Gaze Location

Three distinct elements must be accurately measured to compute the gaze vector's intercept with the laboratory environment. These are the origin of the gaze vector (e.g., eye position), gaze direction, and information about world layout, such as areas of interest, specified within the lab coordinate system. This section details the process for generating coordinates for the intercept of the gaze vectors with the floor, as specified as having a

First, the angle of the gaze vector relative to the vertical plane was calculated (Figure 1,

Measures and Analyses

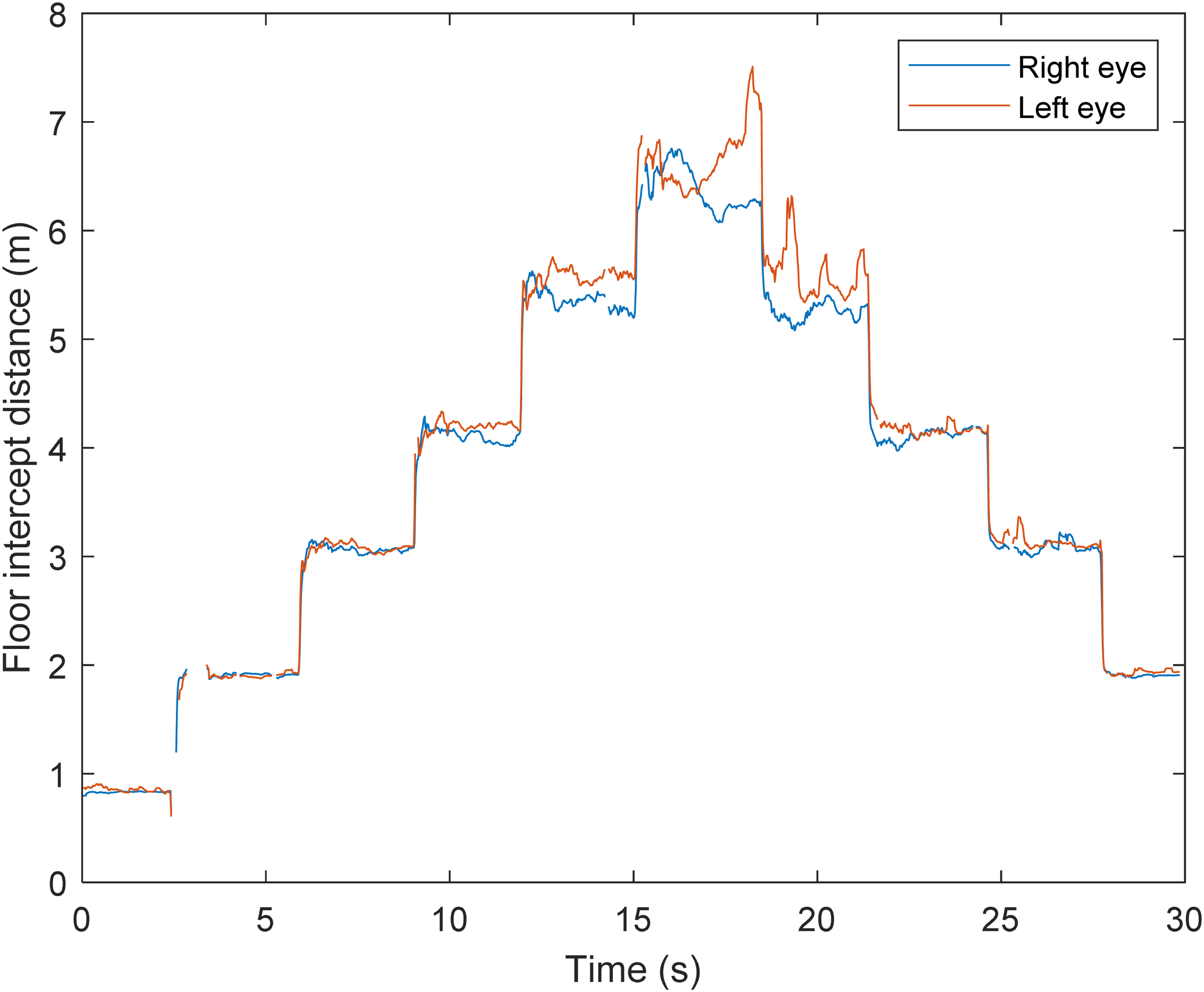

Using a custom MATLAB script which identified peaks in point of gaze velocity, the gaze data that corresponded with the start and end of each floor target viewing period were identified. These viewing periods were then manually confirmed through comparison to a plot of each participant's point of gaze, prior to data treatment (e.g., Figure 4). This approach allowed for the gaze transfer between targets (vertical lines, Figure 4) to be omitted from subsequent analysis. The identified samples (that correspond to each floor target viewing period) were then used to allow the gaze floor intercept location to be analyzed relative to the target location.
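The segmentation step described above can be illustrated with a minimal sketch. The study's script is not reproduced in the text, so the following is only one plausible approach: point of gaze speed is computed between successive samples, and local maxima above a threshold are treated as gaze transfers between targets. The threshold value, the 50 Hz default, and the function names are assumptions made for illustration.

```python
import math
from typing import List, Sequence, Tuple


def gaze_speed(points: Sequence[Tuple[float, float]],
               fs_hz: float = 50.0) -> List[float]:
    """Point of gaze speed (units per second) between successive samples."""
    return [math.dist(a, b) * fs_hz for a, b in zip(points, points[1:])]


def transfer_peaks(speed: Sequence[float], threshold: float) -> List[int]:
    """Indices of local speed maxima at or above a (hypothetical) threshold."""
    peaks = []
    for i in range(1, len(speed) - 1):
        if (speed[i] >= threshold
                and speed[i] > speed[i - 1]
                and speed[i] >= speed[i + 1]):
            peaks.append(i)
    return peaks


if __name__ == "__main__":
    # Gaze held at 1 m for 10 samples, then moved to 2 m for 10 samples.
    points = [(1.0, 0.0)] * 10 + [(2.0, 0.0)] * 10
    print(transfer_peaks(gaze_speed(points), threshold=10.0))
```

Samples between successive peaks would then form candidate viewing periods, which in the study were confirmed manually against a plot of point of gaze.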

Untreated gaze data for the left and right eye (blue and red lines, respectively) from one participant when looking at the six different floor targets.

To screen gaze data for inaccuracies and consistent with approaches applied to event classification filters (Duchowski et al., 2002), the viewing angle of each eye, in relation to the

Accuracy

The mean absolute error (MAE) was computed for each eye on a trial-by-trial basis. The distance between point of gaze and the floor target being fixated was computed for each sample, summed, then divided by the number of samples spent viewing the respective target (see Dietzsch et al., 2017). MAE was computed for each eye and then averaged and used for statistical analysis (Chai & Draxler, 2014; Willmott & Matsuura, 2005). As accuracy is commonly expressed in eye tracking literature as the visual angle (in degrees) between the point of gaze and the target location (Holmqvist et al., 2010; MacInnes et al., 2018), the visual angle associated with each floor-based target's MAE was computed as follows:

Precision

In line with extant literature (Niehorster, Zemblys, et al., 2020), precision of gaze position data was measured using the root mean square of the displacement between successive gaze position samples (RMS-S2S) and the standard deviation of the gaze position samples (STD). As such, both RMS-S2S and STD were calculated to measure precision for each of the identified fixation targets following the methods outlined in previous research (Niehorster, Zemblys, et al., 2020). These values were calculated for each eye on a trial-by-trial basis with a world-based frame of reference.
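The accuracy and precision measures described above can be sketched for a set of floor intercept points as follows. The exact formulation used in the study is not given in the text, so the two-dimensional forms below are assumptions: MAE is the mean Euclidean distance to the target, RMS-S2S is the root mean square of successive Euclidean displacements, and STD combines the population variances of the two floor axes. Function names are illustrative.

```python
import math
from typing import Sequence, Tuple

Point2D = Tuple[float, float]


def mae(points: Sequence[Point2D], target: Point2D) -> float:
    """Mean absolute error: mean distance between gaze points and target."""
    return sum(math.dist(p, target) for p in points) / len(points)


def rms_s2s(points: Sequence[Point2D]) -> float:
    """Root mean square of sample-to-sample displacements."""
    squared = [math.dist(a, b) ** 2 for a, b in zip(points, points[1:])]
    return math.sqrt(sum(squared) / len(squared))


def std_2d(points: Sequence[Point2D]) -> float:
    """Combined standard deviation: sqrt(var_x + var_y).

    Uses the population variance (divide by n), which is an assumption.
    """
    n = len(points)
    mean_x = sum(p[0] for p in points) / n
    mean_y = sum(p[1] for p in points) / n
    variance = sum((p[0] - mean_x) ** 2 + (p[1] - mean_y) ** 2
                   for p in points) / n
    return math.sqrt(variance)


if __name__ == "__main__":
    gaze = [(0.0, 0.0), (0.0, 2.0), (0.0, 0.0), (0.0, 2.0)]
    print(mae(gaze, (0.0, 1.0)), rms_s2s(gaze), std_2d(gaze))
```

RMS-S2S is sensitive to fast sample-to-sample noise, whereas STD reflects the overall spread of samples around their mean, which is why the two are reported together.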

Signal Processing

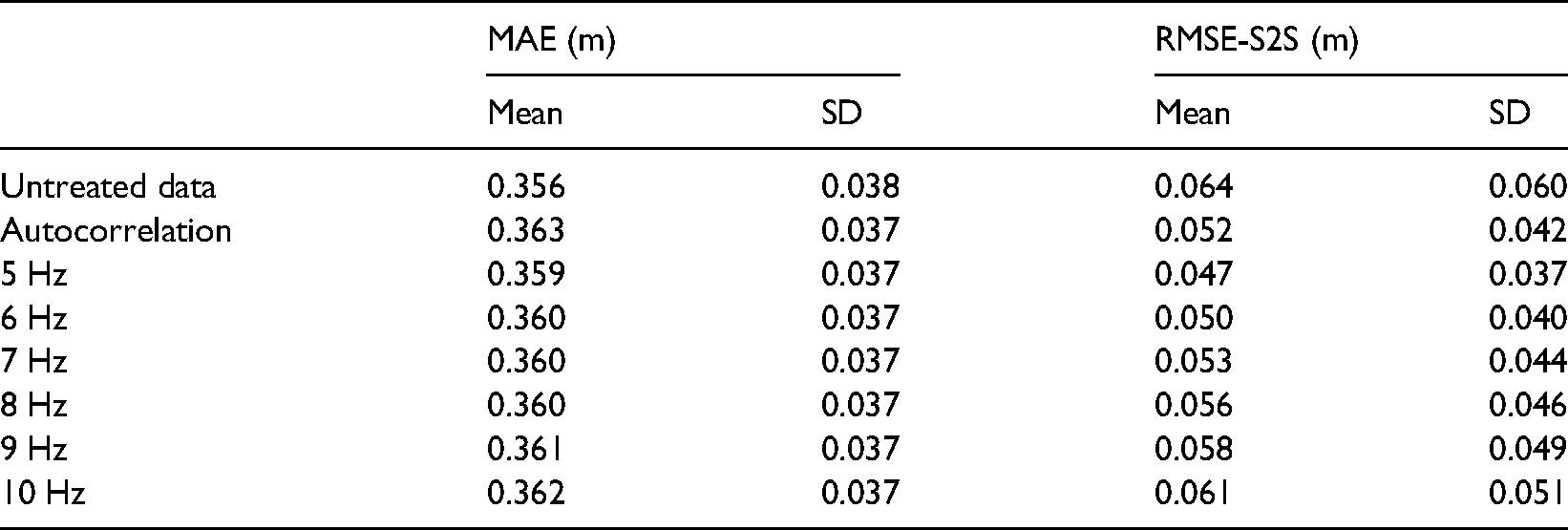

To consider the effect of signal processing on the resultant point of gaze, gaze origin and viewing angle data were filtered separately using a low-pass 4th order Butterworth filter, at the cut-off frequency determined using autocorrelation (Challis, 1999). The autocorrelation function identified a range of frequencies between 5 and 10 Hz (mean = 7.2 Hz). To assess the influence of cut-off frequency, eight conditions were tested, with the point of gaze being computed using eye rotation data filtered at cut-offs of 5, 6, 7, 8, 9, and 10 Hz (derived from the range of cut-off frequencies identified, see Figure 5 for an example), the autocorrelation frequency, and the original untreated data. The accuracy (MAE) and precision (RMS-S2S) measures were calculated between point of gaze and each of the floor targets (as above) for each of the data treatment conditions.
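The filtering step can be illustrated with a dependency-free sketch. The text does not state how the 4th order filter was constructed, so this example assumes a second-order Butterworth low-pass applied forward and then backward, which yields a zero-lag response of effective fourth order. No cut-off correction for the second pass is applied, the first two samples seed the recursion, and the autocorrelation-based cut-off selection (Challis, 1999) is not implemented. All function names are hypothetical.

```python
import math
from typing import List, Sequence, Tuple


def butter2_coeffs(cutoff_hz: float, fs_hz: float
                   ) -> Tuple[Tuple[float, float, float], Tuple[float, float]]:
    """Second-order Butterworth low-pass coefficients (bilinear transform)."""
    wc = math.tan(math.pi * cutoff_hz / fs_hz)  # pre-warped cut-off
    d = 1 + math.sqrt(2) * wc + wc * wc
    b0 = wc * wc / d
    a1 = 2 * (wc * wc - 1) / d
    a2 = (1 - math.sqrt(2) * wc + wc * wc) / d
    return (b0, 2 * b0, b0), (a1, a2)


def _single_pass(x: Sequence[float], b, a) -> List[float]:
    y = list(x[:2])  # seed the recursion with the first two raw samples
    for n in range(2, len(x)):
        y.append(b[0] * x[n] + b[1] * x[n - 1] + b[2] * x[n - 2]
                 - a[0] * y[n - 1] - a[1] * y[n - 2])
    return y


def lowpass_zero_lag(x: Sequence[float], cutoff_hz: float,
                     fs_hz: float = 50.0) -> List[float]:
    """Forward then backward pass, cancelling the phase lag."""
    b, a = butter2_coeffs(cutoff_hz, fs_hz)
    forward = _single_pass(x, b, a)
    backward = _single_pass(forward[::-1], b, a)
    return backward[::-1]


if __name__ == "__main__":
    # Slow 1 Hz component plus fast sample-to-sample noise, sampled at 50 Hz.
    signal = [math.sin(2 * math.pi * n / 50) + 0.2 * (-1) ** n
              for n in range(200)]
    for cutoff in (5, 6, 7, 8, 9, 10):
        smoothed = lowpass_zero_lag(signal, cutoff)
        print(cutoff, round(smoothed[100], 4))
```

Looping over the candidate cut-offs in this way mirrors the comparison of treatment conditions described above, with each filtered series then passed to the accuracy and precision calculations.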

Visual representation of different filter parameters treating the same data as presented in Figure 5. Black line shows raw, untreated, data; red line shows data filtered using the autocorrelation procedure; green and blue show data treated with filter parameters of 5 and 10 Hz, respectively.

Gap Filling

A method utilized by Musial et al. (2011) was adapted to ascertain the impact of the data treatment processes. Raw data were imported into MATLAB where two matrices (origin position and gaze orientation) were created for each eye. The location of missing data points in these matrices was indexed. The gaze orientation data were processed to compute viewing angle (eye rotation) using the procedure outlined in the methods section (Figure 1, angle

After establishing a baseline, akin to similar approaches taken in research evaluating the impact of data treatment on time series data (Musial et al., 2011), a second data set was created to represent missing data. To replicate missing data, a gap was created by removing values from the raw imported data. Using a custom MATLAB script, the position of the gap was designated using a random number generator function set to allocate a value ensuring a minimum threshold of 50 samples from the trial start and end points. The 50 sample cut-off was selected to strengthen the comparison by avoiding a situation where the created gap overlapped the data's start and end points (Grimshaw et al., 2019). The position of the removed samples was recorded. To examine the effect of gap size on the treated data, 11 gap size conditions were created, with the size of the gap being manipulated in five sample intervals from 0 (filtered signal data with no gap) to 50 samples (akin to 0–1 s of missing data). Previous research (Musial et al., 2011) undertaking similar comparisons has advocated large data samples to further strengthen analysis. As such, 50 randomly positioned gaps were created (non-simultaneously) within each eye's data set. This approach allowed a total of 1100 comparisons (11 participants, 2 eyes, 50 gaps) to be made for each of the gap size conditions. Following the creation of gaps and the treatment process applied to data either side of each gap, MAE was calculated between the original and gap-filled data to allow the effect of data treatment to be evaluated (Musial et al., 2011).
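The gap creation and evaluation procedure described above can be sketched as follows. Two simplifications are made and should be read as assumptions: linear interpolation stands in for the spline interpolation used in the study, and the error is computed over the removed samples only. The 50 sample margin follows the text; the function names are hypothetical.

```python
import math
import random
from typing import List, Sequence, Tuple


def make_gap(signal: Sequence[float], gap_size: int, margin: int = 50,
             rng=random) -> Tuple[List[float], int]:
    """Remove gap_size samples at a random position at least `margin`
    samples from the trial start and end. Returns (gapped copy, start)."""
    start = rng.randrange(margin, len(signal) - margin - gap_size + 1)
    gapped = list(signal)
    for i in range(start, start + gap_size):
        gapped[i] = math.nan
    return gapped, start


def fill_linear(gapped: Sequence[float]) -> List[float]:
    """Stand-in gap filler (linear), NOT the spline used in the study."""
    filled = list(gapped)
    n, i = len(filled), 0
    while i < n:
        if not math.isnan(filled[i]):
            i += 1
            continue
        j = i
        while j < n and math.isnan(filled[j]):
            j += 1
        if i > 0 and j < n:  # only fill gaps bounded on both sides
            left, right = filled[i - 1], filled[j]
            for k in range(i, j):
                filled[k] = left + (right - left) * (k - i + 1) / (j - i + 1)
        i = j
    return filled


def gap_mae(original: Sequence[float], filled: Sequence[float],
            start: int, gap_size: int) -> float:
    """MAE between original and filled data over the removed samples."""
    indices = range(start, start + gap_size)
    return sum(abs(original[i] - filled[i]) for i in indices) / gap_size


if __name__ == "__main__":
    rng = random.Random(0)
    trial = [math.sin(2 * math.pi * n / 250) for n in range(1500)]
    for gap_size in range(5, 55, 5):  # non-zero gap size conditions
        errors = []
        for _ in range(50):  # 50 randomly positioned gaps per condition
            gapped, start = make_gap(trial, gap_size, rng=rng)
            errors.append(gap_mae(trial, fill_linear(gapped), start, gap_size))
        print(gap_size, sum(errors) / len(errors))
```

Creating each gap on a fresh copy of the trial keeps the gaps non-simultaneous, so every comparison is made against an otherwise complete data set.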

Statistical Analysis

For the MAE, RMS-S2S, and STD measures, multilevel linear model repeated measures ANOVAs (alpha of 0.05) were undertaken using the nlme package in R (Pinheiro et al., 2020; RStudio Team, 2020). To explore the impact of target location, significant main effects were followed up using Tukey’s post-hoc tests, effect sizes (

Results

The point of gaze floor intercept (Figure 4) indicated that all participants completed the task as requested. Visual inspection suggested that as look-ahead distance increased, point of gaze accuracy decreased. Several instances of data loss (e.g., at approximately 2.5 s, Figure 4) for one, or both, eyes were identified. Across all trials mean missing data equated to 187.95 ± 160.05 samples (

Point of Gaze Accuracy

A multilevel repeated measures ANOVA indicated that floor target location had a significant effect on MAE, χ²(5) = 97.84,

The visual angle in degrees associated with the MAE for each floor-based target was computed. The results (Figure 7) indicated a mean accuracy of 2.55 ± 1.12° for all floor targets.

Accuracy at each floor-based target location. MAE is presented for each of the targets:

A multilevel repeated measures ANOVA indicated that floor target location had a significant effect on degrees of visual angle associated with the MAE, χ²(5) = 37.12,

The visual angle associated with the MAE values for each floor-based target location.

Point of Gaze Precision

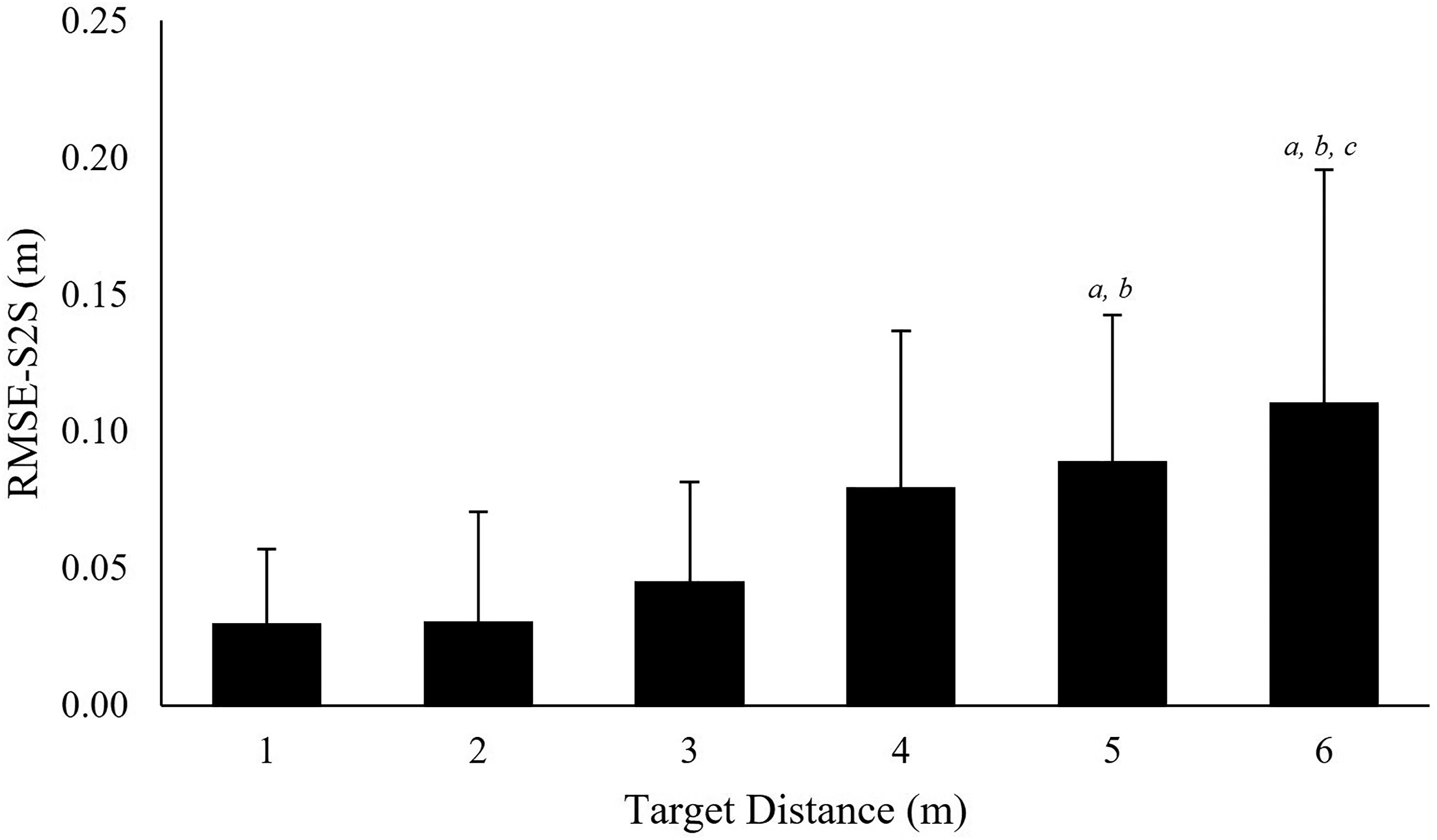

A multilevel repeated measures ANOVA indicated that floor target location had a significant effect on the RMS-S2S precision measure, χ²(5) = 23.50,

Precision at each floor-based target location. RMS-S2S is presented for each of the targets:

Precision at each floor-based target location. STD is presented for each of the targets:

Signal Processing

Separate one-way ANOVAs (RStudio Team, 2020) identified no significant main effect of treatment condition for measures of MAE,

Accuracy and Precision of Different Data Filter Cut-off Frequencies.

Gap Filling

The results comparing the MAE between treated data and data with created gaps (Figure 10) showed that gap size had a significant effect on MAE, χ²(11) = 215.16,

MAE associated with treating different gap sizes. 0 gap size represents comparison between processed and unprocessed data.

Discussion

Integrating mobile eye tracking and optoelectronic motion capture presents a method of translating gaze data to a world-centered frame of reference (e.g., the laboratory co-ordinate system). Such an integration provides an additional method of collecting and analyzing human perceptual-motor behaviors. Our primary aim was to assess the accuracy and precision of eye tracking data collected using the integrated Tobii Pro Glasses 2 and Qualisys motion capture system. Gaze data accuracy and precision were considered as participants were asked to attend to floor-based targets at distances between 1 and 6 m. Consistent with geometric necessity, results indicated a reduction in accuracy and precision associated with increased look-ahead distances. However, inconsistent with a geometric approach, reduced accuracy (i.e., increased error) was observed at the most proximal floor target. The second aim was to explore whether signal processing techniques may help overcome limitations, such as data loss and decreased accuracy and precision in eye tracking data with increased viewing distance and angle. Results indicated that data treatment did not significantly alter data quality measures. Most notably, non-significant differences in accuracy measures when interpolating gaps of up to 0.1 s suggest that gap filling may present a solution to small gaps (e.g., less than 0.1 s) occurring within gaze data sets. This result is consistent with gap filling thresholds applied in extant gaze research (Hessels et al., 2017). These findings and their implications are explored in more detail in the subsequent sections.

Accuracy and Precision of Gaze Data

MAE results showed that point of gaze accuracy, in relation to world space, decreased at greater look-ahead distances (Figure 6). Specifically, when looking at target 1, MAE was 0.13 m, whereas when looking at target 6, MAE was significantly greater at 0.82 m. The reduced accuracy at greater viewing distances may be particularly important throughout perceptual-motor control research, such as studies of human locomotion (Ellmers et al., 2016; Hessels, Benjamins et al., 2020) and dynamic sport actions including goalkeeping in the penalty kick (Navia et al., 2017), where eye movements are characterized by fixations on floor-based locations. To further explore the effect of viewing distance on accuracy, the visual angle associated with the MAE at each of the floor-based target locations was computed (Figure 7). Our results suggested that angular error at the most proximal target (4.27°) was significantly greater than at all other targets. This reduction in accuracy may be associated with the eye tracker's capability to accurately detect pupil location at larger viewing angles (Hornof & Halverson, 2002; Pastel et al., 2020) or may arise because gaze is further from the glasses' (central) calibration point (Niehorster, Santini et al., 2020), as would be the case for proximal floor-based locations. In support of this suggestion, comparing our findings to research examining eye movements oriented towards wall mounted targets, MacInnes et al. (2018) reported a mean accuracy of 1.42 ± 0.58° for fixations on eye-height targets at distances up to 3 m (0.8°, 1.6°, and 1.8° for 1–3 m, respectively). Comparatively, we reported a mean accuracy of 2.85 ± 1.24° for floor-based targets up to the same distance (4.27°, 2.24°, and 2.02° for 1–3 m, respectively; Figure 7). The reduced accuracy presented in the current study suggests that the requirement to make proximal floor-based visual fixations negatively influences accuracy.
Considering the MAE and visual angle data together, the increased MAE at viewing distances of 4 m and greater (Figure 6) and the larger angular error associated with more proximal locations (Figure 7) align with our hypothesis that both larger viewing angles (e.g., proximal locations) and larger viewing distances (e.g., distal locations) may invite accuracy errors (MacInnes et al., 2018). In addition to distance related inaccuracy, research has shown that eye tracker slippage (device movement relative to the participant's head) caused by participant movement, speaking, or facial expressions can reduce data quality (Niehorster, Santini et al., 2020). Although Niehorster, Santini et al. (2020) found Tobii Pro Glasses 2 to be fairly robust to slippage induced inaccuracy, the link between larger viewing distances and greater MAE emphasizes that researchers must take greater care to reduce all potential sources of error when collecting gaze data from participants attending to distal locations.
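The geometric relationship between angular error and floor-based error can be illustrated with a minimal sketch. It assumes a hypothetical eye height of 1.6 m and considers only error along the viewing (depth) direction; neither the function name nor these values are taken from the present study, so the output is illustrative and is not a reproduction of the reported MAE values.

```python
import math


def floor_error_for_angular_error(distance_m, angular_error_deg, eye_height_m=1.6):
    """Depth error (m) on the floor produced by a fixed angular gaze error.

    distance_m is the horizontal distance from the point below the eye to
    the target. eye_height_m is a hypothetical value, not one reported in
    the study.
    """
    # Angle of the line of sight measured from the vertical through the eye.
    theta = math.atan2(distance_m, eye_height_m)
    shifted = theta + math.radians(angular_error_deg)
    if shifted >= math.pi / 2:
        raise ValueError("gaze ray no longer intersects the floor")
    # Floor intercept of the shifted ray minus the true target distance.
    return eye_height_m * math.tan(shifted) - distance_m


if __name__ == "__main__":
    for d in range(1, 7):
        err = floor_error_for_angular_error(d, 1.0)
        print(f"{d} m target: {err:.3f} m floor error per 1 deg")
```

Under these assumptions the same 1° angular error maps onto a progressively larger floor error as the target moves away, because the line of sight meets the floor at an increasingly shallow angle.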

Results identified that precision measures (RMSE-S2S and STD) increased at greater look-ahead distances (Figures 8 and 9). Specifically, precision measures at target 1 were 0.03 m RMSE-S2S and 0.08 m STD, whereas target 6 measures were 0.11 m RMSE-S2S and 0.31 m STD. The reduced precision observed at greater viewing distances has potential consequences for future eye movement research. For example, automated gaze behavior classification functions (e.g., fixation classification filters) are a common feature of eye tracking analysis packages. Within these packages, fixations are typically classified relative to a head-based frame of reference as periods of stable eye orientation (in degrees) (Hessels et al., 2018; Kothari et al., 2020). However, automatic gaze classification functions are limited when applied to free-moving tasks, such as ambulation, due to the capacity of participants to adjust eye position to counteract head movement (Hessels et al., 2018). Alternatively, adaptive locomotion literature has commonly classified fixations as periods of gaze stability in relation to areas of interest in the world (e.g., Ellmers et al., 2020). Integration of eye tracking with optoelectronic motion capture offers the capacity to express point of gaze within the environment, thereby affording event classification during free-moving tasks. However, the increased variability (e.g., STD, Figure 9) identified when participants attended to distal (>3 m) floor-based targets may indicate a possible challenge to automating such an approach. Specifically, the geometry of the experimental setup used in the current study appeared to introduce a nonlinear increase in noise as a function of distance.
Whilst utilizing the gaze orientation signal for event classification may negate the issue of nonlinear noise with increasing distance (e.g., Hessels et al., 2017; Nyström & Holmqvist, 2010), it would not provide a possible solution to determining gaze events within dynamic everyday environments that require changes in look-ahead distance to, for example, safely plan routes through cluttered environments (e.g., Matthis et al., 2018) or intercept a distal foot target (Hildebrandt & Cañal-Bruland, 2020).
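For readers wishing to compute comparable precision measures on world-space gaze data, the following is a minimal sketch of a sample-to-sample root mean square measure and a two-dimensional standard deviation for floor intercepts. The function names are illustrative, the population (rather than sample) variance is an assumption, and the exact definitions used in the present study may differ.

```python
import math


def rms_s2s(points):
    """Root mean square of the distances between successive gaze samples.

    points is a sequence of (x, y) floor intercepts in metres.
    """
    steps = [math.dist(a, b) for a, b in zip(points, points[1:])]
    return math.sqrt(sum(s * s for s in steps) / len(steps))


def std_2d(points):
    """Square root of the summed per-axis variances about the centroid.

    Uses the population variance (divide by n), which is an assumption.
    """
    n = len(points)
    mean_x = sum(p[0] for p in points) / n
    mean_y = sum(p[1] for p in points) / n
    variance = sum((p[0] - mean_x) ** 2 + (p[1] - mean_y) ** 2 for p in points) / n
    return math.sqrt(variance)


if __name__ == "__main__":
    # Hypothetical intercepts scattered around a floor target.
    fixation = [(3.00, 0.02), (3.05, -0.01), (2.97, 0.00), (3.02, 0.03)]
    print(rms_s2s(fixation), std_2d(fixation))
```

The two measures are complementary: the sample-to-sample measure is sensitive to high-frequency jitter, whereas the standard deviation also reflects slower drift within the fixation window.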

Despite automated event classification becoming widespread throughout gaze behavior research, the algorithms that facilitate behavior classification are dependent on a head-based frame of reference and are often contained within software packages that offer researchers limited flexibility over the analysis process (Niehorster, Hessels et al., 2020; Vansteenkiste et al., 2014). Previous work reviewing the treatment of gaze data has acknowledged that researchers should be mindful that results may be heavily dependent on the algorithms and parametrizations used (Kiefer et al., 2017). Our findings reinforce this point and indicate that further work is required to investigate the ramifications of viewing distance on automatic gaze behavior classification when head to world transformation of gaze data is required, particularly regarding dynamic tasks where floor-based viewing distances exceed 3 m.

The reduction in data quality associated with increased viewing distances appears to be consistent for both the integrated data collection methods examined in the current study and scene camera data collection methods described in previous research (e.g., MacInnes et al., 2018). As such, selecting a viewing distance upper limit prior to the commencement of an experiment may offer a means of promoting data quality. Specifically, given that there were no differences in accuracy (MAE) or precision (STD) measures when participants fixated on targets between 1 and 3 m away, a cut-off distance of 3 m may be considered appropriate for research measuring floor-based point of gaze. Alternatively, viewing distance-based thresholds may be quantified relative to specific research questions. For example, research examining locomotor pointing in the context of a long jump task has considered areas of interest of 20 × 120 cm (

Processing Gaze Data

Results from the current study highlight less precise data as occurring at larger viewing distances and viewing angles (see Figure 4 for an example). Instances of data loss were also noted (

Filtering Gaze Data

Findings from the current study revealed that, compared to the untreated point of gaze data, applying a 4th-order Butterworth filter at the autocorrelation determined cut-off frequency did not significantly change gaze data quality measures. Furthermore, no changes were identified when comparing untreated data to data filtered using a standardized cut-off frequency. These findings also suggest that using the autocorrelation function to identify a cut-off frequency does not significantly change data quality compared to filtering using a single cut-off frequency. However, perceptual-motor control literature has frequently acknowledged that gaze behaviors are influenced by a myriad of factors including the performance environment (Matthis et al., 2018), the task being performed (Huys & Beek, 2010), and the participant's abilities (Ellmers et al., 2020). Accordingly, the use of the autocorrelation function may present a flexible method of treating gaze data that respects the diversity of perceptual-motor behaviors not captured within the data set of the current study. For instance, further research is required to establish the effect of these data treatment processes on gaze data collected in settings such as fast-paced movement or target interception (e.g., Navia et al., 2017). Furthermore, based on the varied data quality present within each trial (e.g., reduced precision at greater look-ahead distances; Figure 4), future research may wish to consider if autocorrelation approaches could be used to establish adaptive filter parameters which account for changes in the signal's frequency content (Crenna et al., 2021; Erer, 2007).
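As an illustration of the kind of low-pass filtering discussed here, the sketch below runs a second-order Butterworth section forward and then backward over the signal, which yields a zero-lag response with a fourth-order magnitude roll-off. This is one common way of obtaining a 4th-order zero-lag filter and is an assumption rather than a description of the implementation used in this study. The cut-off frequency is a free parameter and the autocorrelation based selection step is not shown. A side effect of the dual pass is that the effective cut-off is somewhat lower than the nominal value.

```python
import math


def butter2_lowpass_coeffs(cutoff_hz, fs_hz):
    """Second-order Butterworth low-pass coefficients (bilinear transform)."""
    k = math.tan(math.pi * cutoff_hz / fs_hz)
    norm = 1.0 / (1.0 + math.sqrt(2.0) * k + k * k)
    b0 = k * k * norm
    a1 = 2.0 * (k * k - 1.0) * norm
    a2 = (1.0 - math.sqrt(2.0) * k + k * k) * norm
    return (b0, 2.0 * b0, b0), (a1, a2)


def _single_pass(x, b, a):
    """One causal pass; state is initialised to the first sample so that a
    constant input is returned unchanged (no start-up transient)."""
    x1 = x2 = y1 = y2 = x[0]
    out = []
    for xn in x:
        yn = b[0] * xn + b[1] * x1 + b[2] * x2 - a[0] * y1 - a[1] * y2
        out.append(yn)
        x2, x1 = x1, xn
        y2, y1 = y1, yn
    return out


def zero_lag_lowpass(x, cutoff_hz, fs_hz):
    """Forward then backward pass, cancelling the phase lag of each pass."""
    b, a = butter2_lowpass_coeffs(cutoff_hz, fs_hz)
    forward = _single_pass(list(x), b, a)
    backward = _single_pass(forward[::-1], b, a)
    return backward[::-1]


if __name__ == "__main__":
    fs = 50.0  # hypothetical sampling rate (Hz)
    # Slow 0.5 Hz gaze shift plus sample-to-sample jitter.
    noisy = [math.sin(2 * math.pi * 0.5 * i / fs) + 0.05 * (-1) ** i for i in range(200)]
    smooth = zero_lag_lowpass(noisy, cutoff_hz=5.0, fs_hz=fs)
    print(smooth[:5])
```

Each coordinate of the point of gaze would be filtered separately, and gaps in the signal would need to be handled before filtering because the recursion cannot step over missing samples.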

Gap Filling Gaze Data

Previous literature pertaining to data collected via both optoelectronic capture and eye tracking has highlighted instances of missing data (Duchowski, 2007; Hessels et al., 2015; Spörri et al., 2016). Our findings were consistent with this literature, noting that missing data was commonly observed. To overcome this limitation, a quintic spline was applied to the data set. Because reduced accuracy has been identified as a limitation of treating larger gaps using splines (Howarth & Callaghan, 2010), our aim was to compare treated and original data when filling gaps of different sizes (Musial et al., 2011).

When not filling gaps, no significant difference was identified between treated and the original gaze intercept data. The absence of differences indicates that the treatment process did not significantly change point of gaze location when there were no gaps in the data. Further, the lack of significant difference up to a gap size of 0.1 s (Figure 10) suggests that the data treatment process was sufficient to overcome missing data spanning up to 0.1 s. However, significant differences were observed at gap sizes of 0.2 s or more, which is consistent with previous research suggesting increased error when treating larger gaps (Howarth & Callaghan, 2010) and with gap size thresholds (0.1 s) outlined previously in gaze methodology research (Hessels et al., 2017). To account for this, establishing a maximum gap size threshold based on the largest non-significant gap size may present one method of limiting the accuracy reduction associated with treating gaze data. For example, our findings suggest that the data treatment method was sufficient to overcome gaps in the data up to an upper gap size threshold of 0.1 s. Although this upper gap size threshold may offer a guide for future research, caution is recommended in universally applying this threshold. Accordingly, future research may undertake a similar analysis in order to consider the implications associated with treating data collected across a wider array of research methods. Alternatively, considering the MAE associated with each gap size may present a method of establishing an upper gap size threshold. For example, the MAE associated with filling gaps of 30 samples (0.19 m) is similar to the MAE associated with look-ahead distances of 3 m (0.19 m). As such, it may be appropriate for researchers to establish a threshold based on the size, or location, of areas of interest being evaluated, with larger areas being less sensitive to the accuracy loss incurred by filling larger gaps.
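The idea of an upper gap size threshold can be sketched as follows. Note that this example substitutes linear interpolation for the quintic spline applied in the study in order to remain short and dependency free, so it illustrates only the thresholding logic. The function name, the sampling rate, and the representation of missing samples as None are all assumptions.

```python
def fill_short_gaps(samples, fs_hz, max_gap_s=0.1):
    """Fill runs of missing samples (None) no longer than max_gap_s.

    Linear interpolation is used here as a stand-in for a quintic spline.
    Longer runs, and runs touching either end of the recording, are left
    as None so that they can be excluded from later analysis.
    """
    filled = list(samples)
    max_len = int(round(max_gap_s * fs_hz))  # threshold in samples
    n = len(filled)
    i = 0
    while i < n:
        if filled[i] is not None:
            i += 1
            continue
        start = i
        while i < n and filled[i] is None:
            i += 1
        end = i  # index of the first valid sample after the gap, or n
        if start == 0 or end == n or (end - start) > max_len:
            continue  # no bounding sample on one side, or gap too long
        left, right = filled[start - 1], filled[end]
        span = end - (start - 1)
        for k in range(start, end):
            weight = (k - (start - 1)) / span
            filled[k] = left + weight * (right - left)
    return filled


if __name__ == "__main__":
    # One coordinate of the point of gaze at a hypothetical 50 Hz.
    x = [0.0, None, None, 3.0] + [None] * 6 + [10.0]
    print(fill_short_gaps(x, fs_hz=50.0))
```

With a 50 Hz signal the 0.1 s threshold corresponds to five samples, so the two-sample gap is filled while the six-sample gap is left untouched. The threshold could equally be derived from the MAE that a given area of interest can tolerate, as suggested above.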

Although similar procedures are offered through commercial software packages (e.g., Tobii Pro Lab), the algorithms in such software have been considered inflexible, with results being influenced by a process that researchers have limited scope to control (Niehorster, Hessels, et al., 2020). Moreover, such packages often focus on classifying gaze behaviors rather than promoting measurement accuracy (Hessels et al., 2017; Kiefer et al., 2017). Considering that these processes influence the reproducibility of eye tracking research, investigators have recommended, and developed, “open source” eye tracking analysis packages (Niehorster, Hessels et al., 2020). Akin to these approaches, exporting the eye position (motion capture) and orientation (eye tracker) data directly into processing software (e.g., MATLAB) vastly increases the range of treatment options available. Moreover, the ability to analyze gaze data using “open source” or processing software invites future researchers to consider the benefits of evaluating different signals (e.g., left eye, right eye, version, or vergence) expressed within a world reference frame (Hooge et al., 2019). Furthermore, greater focus on the development of algorithms for the categorization of gaze events, such as fixations, pursuits, and saccades, while the head is free (Kothari et al., 2020; Niehorster, Hessels, et al., 2020) could be beneficial to the wider research community. Future research may wish to consider whether spatiotemporal analysis of world-space gaze location offers a parsimonious approach to categorizing gaze events. Overall, this flexibility represents a major advantage to gaze behavior research and signifies that the integration may offer a platform that encourages greater clarity and innovation regarding the capture and analysis of gaze data.

Conclusion

The primary aim of this study was to assess the accuracy and precision of eye tracking data collected using the integrated Tobii Pro Glasses 2 and Qualisys motion capture system. Gaze data accuracy and precision were evaluated as participants attended to floor-based targets at distances of 1–6 m. Supporting our primary hypothesis, which predicted reduced accuracy when participants attended to the most proximal and distal floor targets, we found that both accuracy and precision were reduced at viewing distances of greater than 3 m. Further, we found evidence suggesting that greater viewing angles, such as those caused by attending to ground-based locations at approximately 1 m, also reduced accuracy. These findings highlight a need for future research to review the quality of gaze data collected at distances greater than 3 m. As the increased error at greater viewing distances can be defined geometrically and is applicable across a wide range of contexts, future researchers should consider the implications of data quality throughout experimental design, particularly when utilizing a combination of eye tracking and motion capture. With this in mind, future research may wish to collect gaze data with and without motion capture integration to compare the quality of data collected through both methods.

The second aim was to explore whether decreased accuracy and precision of gaze data with increased viewing distance and angle, as well as loss of gaze data, could be addressed via signal processing methods. We found that filtering the data using a low-pass Butterworth filter at a variety of cut-off frequencies identified using an autocorrelation function did not significantly change data quality; however, gap-filling using a quintic spline was sufficient to overcome missing data spanning up to 0.1 s. Future research should build on these findings and consider if procedures outside of the scope of this paper, such as adaptive filtering, offer solutions to these issues. Finally, with the integration of gaze and motion capture becoming a viable methodology in the study of human behavior, we have provided several primary considerations for the collection and analysis of gaze data that may help inform future methodological decisions.

Supplemental Material

sj-zip-1-ipe-10.1177_20416695221116652 - Supplemental material for Evaluating the integration of eye-tracking and motion capture technologies: Quantifying the accuracy and precision of gaze measures

Supplemental material, sj-zip-1-ipe-10.1177_20416695221116652 for Evaluating the integration of eye-tracking and motion capture technologies: Quantifying the accuracy and precision of gaze measures by Rhys Hunt, Tim Blackmore, Chris Mills, and Matt Dicks in i-Perception

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This publication and the work it describes were co-funded by the Health and Safety Executive (HSE) and a shared research panel of six industry partners.

Ethics Approval

Approval was obtained from the University of Portsmouth ethics committee. The procedures used in this study adhere to the tenets of the Declaration of Helsinki.

Consent

Informed consent was obtained from all individual participants included in the study.

Open Practices and Data Availability Statement

Supplemental Material

Supplemental material for this article is available online.
