Abstract

Top-down modulation is an essential cognitive component in human perception. Despite mounting evidence of top-down perceptual modulation in adults, it is largely unknown whether infants can engage in this cognitive function. Here, we examined top-down modulation of motion perception in 6- to 8-month-old infants (recruited in North America) via their smooth-pursuit eye movements. In four experiments, we demonstrated that infants’ perception of motion direction can be flexibly shaped by briefly learned predictive cues when no coherent motion is available. The current findings present a novel insight into infant perception and its development: Infant perceptual systems respond to predictive signals engendered from higher-level learning systems, leading to a flexible and context-dependent modulation of perception. This work also suggests that the infant brain is sophisticated, interconnected, and active when placed in a context in which it can learn and predict.

Perception is supported by the integration of bottom-up and top-down processes. The bottom-up processes propagate sensory information forward along the cortical hierarchy. Top-down processes enable communication from higher-level perceptual regions or cognitive systems to lower-level regions through feedback neural connections (Friston, 2005; Gilbert & Li, 2013; Lamme et al., 1998; Rao & Ballard, 1999; Summerfield & de Lange, 2014). Although bottom-up processes have largely been the focus of research in perception, recent advances in psychology and neuroscience have uncovered the crucial roles of top-down processes in modulating, stabilizing, and optimizing perception (de Lange et al., 2018; Lamme, 2018). Indeed, it is now known that feedback neural connections allow perceptual systems to receive top-down signals from numerous types of information stored in the brain, including contextual information, statistical regularities, attentional templates, and semantic knowledge (Gandolfo & Downing, 2019; Kok et al., 2017; Oliva & Torralba, 2007; Squire et al., 2013). In addition, altered top-down processes have been linked to abnormal perceptual capacities in populations with autism spectrum disorder (Kolodny et al., 2020) and schizophrenia (Fletcher & Frith, 2009), as well as in those born prematurely (Emberson et al., 2017). Although there is an emerging case for the importance of top-down processes in perception, these findings largely come from studies of adults, who have relatively mature cognitive systems and neural connections. The early emergence and development of top-down processes in perception have been largely unexplored. To address this gap, the current study examined top-down perceptual modulation in 6- to 8-month-old infants.

Given the importance of top-down perceptual modulation in adults, it is essential to resolve the developmental origins of this ability: Does top-down modulation emerge gradually over development, or is it available early with the potential to drive perceptual development? This is a pressing issue because it has been established that infants have more sophisticated cognitive and learning abilities than previously believed. For example, neuroimaging work has established that infants are employing higher-level neural systems (e.g., the prefrontal cortex) in a variety of tasks (Grossmann, 2013; Jaffe-Dax et al., 2020; Werchan & Amso, 2017; Werchan et al., 2016). These learning abilities enable infants to form rich representations of the external world, which could be a source of top-down signals. However, neural connections in infancy, especially the long-range ones, are relatively immature and underdeveloped (Cao et al., 2017; Dehaene-Lambertz & Spelke, 2015; Dubois et al., 2014; Menon, 2013). The resultant inefficient neural transmission might prevent infants from engaging in meaningful top-down perceptual modulation. Despite this inefficiency, there is a growing theoretical interest in the role of top-down processes in early perceptual development (Amso & Scerif, 2015; Dehaene-Lambertz & Spelke, 2015; Emberson, 2017; Hadley et al., 2014; Markant & Scott, 2018).

To date, there is suggestive evidence of the availability of top-down perception in infancy. Neuroimaging studies have shown that the infant visual system may be modulated by top-down signals as the result of learning (Emberson et al., 2015, 2017; Gliga et al., 2010; Kouider et al., 2015). Similar top-down neural modulation of the somatosensory system was recently found in the brains of premature neonates (Dumont et al., 2022), suggesting that the neural architecture for top-down perceptual modulation may emerge early in life. Because evidence of infants’ top-down processes has been restricted to neuroimaging findings, it remains unknown whether these neural signatures of top-down modulation are behaviorally meaningful. Establishing behavioral signatures of top-down perceptual modulation is crucial for several reasons. First, prominent critics of top-down processes have argued that neuroimaging evidence cannot be considered evidence of top-down perception (Firestone & Scholl, 2016). Second, these neuroimaging findings could have a variety of cognitive consequences, from modifying perception to supporting learning through communicating error signals to other brain regions (e.g., Jaffe-Dax et al., 2020). Third, neural changes can happen below a threshold for behavioral changes. This is particularly the case when considering early competencies in immature brains (Karuza et al., 2014). Therefore, the current study focused on behaviorally investigating a key characteristic of top-down perception in infants: flexible perceptual modulation by predictive cues.

Statement of Relevance

Adult perception is an active process relying heavily on learned knowledge to predict what we are seeing, hearing, and feeling. However, the early emergence and development of this predictive nature have been largely unexplored. Does it emerge as the result of cognitive maturation, or is it available early to support human development? In this study, we found that 6- to 8-month-old infants could use learned cues to influence their perception of motion: A field of moving dots would appear to infants to move left or right when infants heard melodies associated with these directions. These findings suggest that the active integration of learned knowledge into perception is already sophisticated in infancy and plays a significant role in driving cognitive development.

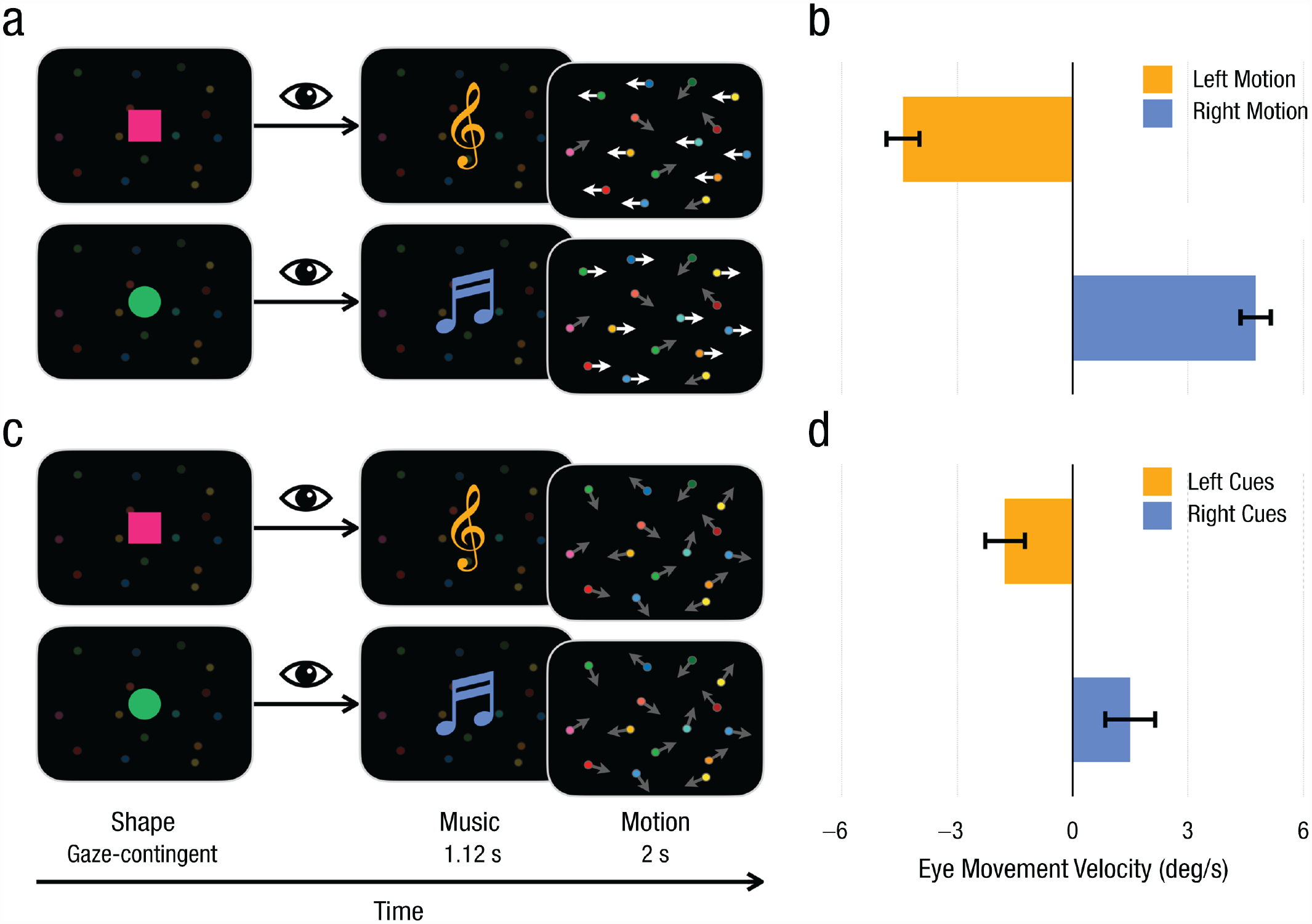

To investigate flexible changes in perception, we relied on a highly robust measure of motion perception that is quantifiable on single trials in infants with a high degree of rigor. Using this measure of perception, we constructed our experiments so that 6- to 8-month-old infants first learned to associate audiovisual cues (Fig. 1a) with a field of directional motion. Then we examined whether the learned cues could modulate infants’ perception of motion direction evidenced by a stimulus that does not present any coherent directional motion (Fig. 1c). Flexible modulation of motion perception would be revealed if the leftward and rightward motion cues induced differential motion percepts when no coherent directional motion signal was available. Importantly, top-down perceptual modulation would be highly flexible, as the motion perception would be biased differently according to specific cues, and rapid, as changes would arise from minutes of experience.

Experimental paradigm for all the experiments and results from the test phase of Experiment 1. Example trials are shown for the (a) 60%-directional-motion and (c) 0%-directional-motion conditions. When infants looked at the visual object, music would play, followed by a motion display that lasted 2 s. Bright and dark arrows are shown here to indicate coherent horizontal motion and random motion, respectively. The graphs show (b) average horizontal eye-movement velocity when left and right motion were presented and (d) average horizontal eye-movement velocity when left and right cues were presented. Negative values indicate leftward eye movement, and positive values indicate rightward eye movement. Error bars indicate ±1 SE.

We used eye movements to quantify infants’ motion perception in a single trial. Infants’ perception of directional motion can be measured by the average velocity of smooth-pursuit eye movements that specifically respond to the motion signals on each trial. The smooth-pursuit measure will reflect the direction and strength of the perceived motion field: The direction was indexed by the positive/negative sign of the averaged eye-movement velocity, and the strength of the perceived motion field was indexed by the absolute value of the averaged velocity. Importantly, we used 1,200 dots to create motion stimuli. Given the number and size of the dots as well as the inclusion of a high degree of decay for individual dots (i.e., individual dots persisted on the screen for a very brief time), these eye movements were unlikely to index the pursuit of an individual dot but reflected a response to the general field of motion. Although there is rapid development in smooth pursuit in the first year of life, we investigated motion perception under easy experimental conditions (e.g., low velocity of motion at 8° per second [°/s]) and at an age in which smooth pursuit is relatively mature (Richards & Holley, 1999). There is another type of eye movement (optokinetic nystagmus [OKN]) that also follows directional motion and can act as an index of infant motion perception. Therefore, it is not necessary to conclusively differentiate between OKN and smooth pursuit. For simplicity, we will refer to them as smooth-pursuit eye movements. In addition, building on this established literature, the current study validated the use of this measure using a psychophysical approach (Experiment 2).

Open Practices Statement

None of the studies reported in this article were preregistered. All experiment programs, experimental material examples, deidentified data, and analysis scripts have been made publicly available on OSF and can be accessed at https://osf.io/xn8g7/.

Experiment 1

Method

Participants

Twenty healthy infants between 184 and 240 days old (M = 208.05 days, SD = 18.22; nine females) with normal vision and hearing participated in Experiment 1. Three additional infants participated but were excluded from the final sample because of eye-tracker calibration failure (n = 1) or fussiness (n = 2). None of the infants participated in other experiments reported in this article. All participants in the current study (Experiments 1–3) were recruited by calling or emailing families from local communities. These families have expressed interest in participating in infant studies with their children. The power analyses used to determine the necessary sample sizes are described in the Supplemental Material available online. All experiments were reviewed and approved by the Princeton University Institutional Review Board (No. 7211) and the McMaster University Research Ethics Board (No. 3665).

Materials and procedures

Participants first learned to associate the audiovisual cues with motion directions (i.e., the learning phase). A musical melody and a visual object comprised the audiovisual cues, whose features predicted motion directions. We manipulated two features of the melody (melody type: ascending and descending; instrument: piano, glockenspiel, harp, and pipa) and two features of the visual objects (shape: square and circle; color: red and green). The motion display was created with 1,200 colorful dots (0.2° of visual angle) moving at the speed of 8°/s on the screen. A majority (60%) of dots were randomly selected to move coherently in one direction (left or right, across trials), which created a sense of directional motion. The remaining 40% of the dots moved in random directions. Past studies indicate that this is a strong motion signal and that infants perceive a direction of motion from it (Richards & Holley, 1999). We prevented infants from tracking individual dots by having, on every frame (16.67 ms), 5% of the dots (n = 60) disappear and reappear in randomly generated new locations (i.e., 5% limited lifetime). Each dot stayed on the screen for 333 ms on average.
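The reported mean dot lifetime follows from the replacement rate. The sketch below is a rough check, assuming each dot is replaced independently with probability 0.05 on every frame (the text gives only the proportion replaced per frame, so independence is an assumption). Under that assumption the lifetime is geometrically distributed with a mean of 1/0.05 = 20 frames. The function names are illustrative and are not taken from the authors' scripts.

```python
import random

FRAME_MS = 1000 / 60   # 16.67 ms per frame (60 Hz is implied by the stated frame duration)
N_DOTS = 1200
REPLACE_PROB = 0.05    # 5% limited lifetime

def expected_lifetime_ms(replace_prob=REPLACE_PROB, frame_ms=FRAME_MS):
    """Mean lifetime of a dot under independent per-frame replacement."""
    return frame_ms / replace_prob

def simulate_mean_lifetime_ms(n_dots=20000, replace_prob=REPLACE_PROB, seed=0):
    """Monte Carlo estimate: count the frames each dot survives before replacement."""
    rng = random.Random(seed)
    total_frames = 0
    for _ in range(n_dots):
        frames = 1
        while rng.random() >= replace_prob:
            frames += 1
        total_frames += frames
    return total_frames / n_dots * FRAME_MS

# Number of dots relocated on each frame: 5% of 1,200
dots_replaced_per_frame = round(N_DOTS * REPLACE_PROB)
```

Both the closed-form value and the simulated estimate should land near the 333 ms stated in the text.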

On each learning trial, participants first saw a visual object that moved up and down continuously at the screen center (e.g., a red square). Once the eye tracker detected that participants had continuously looked at the visual object for 100 ms, the visual object disappeared and a melody (e.g., an ascending piano melody) was played for 1.12 s. At 200 ms before the melody ended, the 1,200 dots appeared and continuously moved for 2 s to present the 60% directional motion. To facilitate the learning of the associations, we designed each block so that infants viewed the same motion direction over four consecutive trials. The left- and right-motion blocks were presented in a shuffled order (left and right or right and left) for six blocks (three left-motion blocks and three right-motion blocks). To avoid carryover effects of directional eye movement across trials, we presented a nondirectional motion display (i.e., dots moved in random directions) between trials with a duration varying between 3 s and 6 s.
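The block structure and trial timing described above can be sketched as follows. This is a minimal illustration, assuming that blocks strictly alternate between left and right with a randomly chosen starting direction (one reading of "left and right or right and left"). The function and variable names are hypothetical.

```python
import random

def learning_schedule(n_blocks=6, trials_per_block=4, seed=None):
    """Return the motion direction for each learning trial.

    Assumption: blocks alternate in direction, and the starting
    direction is chosen at random.
    """
    rng = random.Random(seed)
    first, second = rng.sample(["left", "right"], 2)
    schedule = []
    for block in range(n_blocks):
        direction = first if block % 2 == 0 else second
        schedule.extend([direction] * trials_per_block)
    return schedule

# Timing within a trial, relative to melody onset (values from the text)
MELODY_S = 1.12      # melody duration
DOT_LEAD_S = 0.20    # dots appear this long before the melody ends
MOTION_S = 2.0       # duration of the motion display

dot_onset_s = MELODY_S - DOT_LEAD_S        # about 0.92 s after melody onset
motion_offset_s = dot_onset_s + MOTION_S   # about 2.92 s after melody onset
```

With six blocks of four trials, the schedule contains 24 learning trials, 12 per direction.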

The learning phase was followed by the test phase examining whether the learned cues could bias motion perception. The test phase was similar to the learning phase except for one difference. In 25% of the test trials, after audiovisual cues, infants saw all 1,200 dots moving in random directions (0%-directional-motion test trial). The remaining 75% of the test trials were identical to the learning trials (60%-directional-motion test trial). The order of these trial types was randomized within a given block. All other procedures were identical to those in the learning phase. The test phase continued until the infants became inattentive. All infants completed at least 24 trials (three left-motion blocks and three right-motion blocks). Thus, our analyses focused on the first 24 test trials for the current experiment. The same number of trials were analyzed for Experiments 1a through 3.

We used an infant-controlled gaze-contingent procedure to trigger the stimuli presentation for each trial. This procedure offered several advantages to increase data quality and eliminate biases in eye-movement behaviors. First, it guaranteed that infants were looking at the screen and their eyes could be reliably tracked by the eye tracker right before the onset of motion stimuli. Moreover, because the shape was always in the middle of the screen and infants needed to look at it to trigger motion stimuli, their gazes were close to the screen center when the motion was presented. This ensured that participants had equal opportunities to move their eyes to the left or right. Thereby, any observation of directional eye movements should not have been biased by their initial looking locations (e.g., eyes were more likely to move to the right when the gaze was on the left side of the screen). Finally, this procedure offered immediate audiovisual feedback to reward infants’ attention, which encouraged them to focus on the screen. If infants did not look at the screen for more than 5 s, an experimenter (who was behind the screen and unaware of the stimuli on it) oriented infants’ attention to the screen with finger snaps. If infants failed to trigger a given trial, the experimenter started the trial manually once the infants’ eyes could be tracked by the eye tracker. Throughout the process, experimenters made decisions based on the real-time eye-tracking status data on the eye-tracker screen. Because the experimenter was unaware of the trial contents, their decision to trigger a trial was independent of experimental design and thus could not bias the results.
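The gaze-contingent trigger can be illustrated with a short sketch. It assumes that gaze samples have already been classified as inside or outside the visual object, and it uses the 100-ms criterion and the 500-Hz sampling rate reported for this study. It does not use the eye tracker's own software interface, and the function name is hypothetical.

```python
def first_trigger_index(in_object, sample_rate_hz=500, required_ms=100):
    """Return the index of the sample at which continuous looking first
    reaches the required duration, or None if it never does.

    in_object: sequence of booleans, one per gaze sample, True when the
    gaze falls on the visual object.
    """
    needed = int(round(required_ms * sample_rate_hz / 1000))  # 50 samples at 500 Hz
    run = 0
    for i, inside in enumerate(in_object):
        run = run + 1 if inside else 0   # any look away resets the count
        if run >= needed:
            return i
    return None
```

For example, 30 on-object samples followed by one off-object sample do not trigger a trial; the count restarts, and the trial begins once 50 further consecutive on-object samples have accumulated.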

The study used an EyeLink 1000 eye tracker (SR Research, Ottawa, Ontario, Canada) with a 35-mm lens (sampling rate = 500 Hz). Infants were seated 60 cm to 70 cm in front of the eye tracker (measured by the eye tracker). We used a 5-point calibration procedure, which sequentially displayed sound and video of colorful rings at all four corners and the center of the screen. Calibration accuracy was assessed via EyeLink validation procedures, which measured the error between the stimulus locations and the gaze locations estimated through the calibration parameters. Moreover, to ensure eye-tracking accuracy throughout the experiment, we performed drift corrections every eight trials to recalibrate. For more experimental details, see the Supplemental Material.

Results

We averaged horizontal smooth-pursuit velocity to index infants’ perception of directional motion because of a close link between the perception of motion direction and smooth-pursuit eye movement (Banton & Bertenthal, 1996; Banton et al., 2001; Richards & Holley, 1999; Thier & Ilg, 2005). When directional motion is perceived, the velocity of eye movements matches the speed of motion signals (Pola & Wyatt, 1980). The dots moved at 8°/s; therefore, eye movements that represent the perception of the motion should be around 8°/s. However, eye movements include not only a directional-motion-perception-related component but also other components, such as drifting (< 1°/s), tremor (< 1°/s), OKN, and saccades (both > 10°/s), which are irrelevant to motion perception. To exclude these irrelevant eye-movement components and focus on motion-perception-related eye movement, we used a speed filter to extract the eye movement around the speed of motion. Specifically, we created a filtering window of ±1°/s around 8°/s (7°/s–9°/s) to retain the motion-related speed and exclude other aspects of the eye movements. After the filtering window was applied, only the time points at which eye-movement speed fell within the filter range were included. All other time points (e.g., those that had saccades) were removed by the filter. This filtering was performed on the basis of the speed of eye movement in both the left and right directions. We assigned the leftward eye-movement velocity as negative and the rightward eye-movement velocity as positive. Then, the filtered eye-movement velocity was averaged across the entire 2-s motion presentation period. The dependent variable (DV) was the sum of the velocity of all included data points divided by the total number of the included points. If there were no biases in an infant’s directional eye movements, the DV was close to 0°/s. If the eye movements were biased toward the right, the DV was positive; if they were biased toward the left, the DV was negative.
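The speed filter and the DV can be sketched as follows. This is a minimal illustration rather than the authors' analysis script. It assumes that the window bounds are inclusive and that a trial with no retained samples yields no value; neither detail is stated in the text. The function name is hypothetical.

```python
def filtered_mean_velocity(velocities, target=8.0, tolerance=1.0):
    """Average signed horizontal velocity over samples whose speed lies
    within target +/- tolerance (7 to 9 deg/s by default).

    velocities: signed velocities in deg/s (negative = leftward,
    positive = rightward), one per sample in the 2-s motion window.
    Returns None when no sample passes the filter (an assumption).
    """
    lo, hi = target - tolerance, target + tolerance
    # The filter is applied to speed (absolute value), so leftward and
    # rightward pursuit are retained symmetrically.
    kept = [v for v in velocities if lo <= abs(v) <= hi]
    if not kept:
        return None
    return sum(kept) / len(kept)
```

For the sample velocities `[8.0, -7.5, 0.3, 25.0, 8.5]`, the drift-like sample (0.3) and the saccade-like sample (25.0) are discarded and the remaining three average to 3.0°/s, a rightward bias.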

We used the default velocity algorithm from EyeLink’s DataViewer software (Version 2.6.1) to estimate eye-movement velocity. First, it calculated the directional changes in eye-movement coordinates by subtracting the horizontal coordinate (in pixels) of the previous data point from that of the given data point ($X_t - X_{t-1}$). Then, coordinate changes (in pixels) were converted to changes in visual angle using the distance between participants’ eyes and the screen (in millimeters), the screen size (in millimeters), and the screen resolution (in pixels). The resultant velocity measure was further smoothed by averaging the velocities of the previous nine data points and those of the following nine data points. To derive the DV (average eye-movement velocity), we averaged the velocity of each data point across the 2-s window of motion display (i.e., from the onset to the offset of motion presentation).
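The three steps above (coordinate difference, conversion to visual angle, smoothing) can be sketched generically. This is not the DataViewer implementation, whose internals are not described here. The screen dimensions used in the example are hypothetical, and including the center sample in the smoothing window is an assumption.

```python
import math

def px_to_deg(dx_px, screen_width_mm, screen_width_px, distance_mm):
    """Convert a horizontal displacement in pixels to degrees of visual angle."""
    dx_mm = dx_px * screen_width_mm / screen_width_px
    return math.degrees(math.atan2(dx_mm, distance_mm))

def horizontal_velocity(x_px, sample_rate_hz, screen_width_mm,
                        screen_width_px, distance_mm):
    """Signed velocity (deg/s) from successive horizontal gaze coordinates.

    Each value is based on the difference x[t] - x[t-1], so rightward
    movement is positive and leftward movement is negative.
    """
    return [
        px_to_deg(cur - prev, screen_width_mm, screen_width_px, distance_mm)
        * sample_rate_hz
        for prev, cur in zip(x_px, x_px[1:])
    ]

def smooth(values, half_window=9):
    """Moving average over up to nine samples on each side.

    The window is truncated at the edges of the recording.
    """
    out = []
    for i in range(len(values)):
        lo = max(0, i - half_window)
        hi = min(len(values), i + half_window + 1)
        window = values[lo:hi]
        out.append(sum(window) / len(window))
    return out

# Hypothetical setup: 500-mm-wide screen, 1,000 px across, viewed from 650 mm
example = horizontal_velocity([100, 101, 102, 103], 500, 500.0, 1000, 650.0)
```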

As shown in Figure 1d, in the testing phase, infants’ average eye-movement velocities were biased by the preceding audiovisual cues even when no directional motion was presented (0%-directional-motion trials; left cues: M = −1.76°/s, SD = 2.65, 95% confidence interval [CI] = [−2.96, −0.56]; right cues: M = 1.50°/s, SD = 3.17, 95% CI = [0.02, 2.98]), paired-samples t(19) = 4.32, p < .001, Cohen’s d = 0.97. Results on both trial types differed from zero (left cues: t(19) = −3.08, p = .006, Cohen’s d = 0.69; right cues: t(19) = 2.12, p = .047, Cohen’s d = 0.47; one-sample t tests). The diverging eye movements suggested that motion perception was modulated by top-down signals. Robust smooth-pursuit eye movements were observed when the field of motion was presented in the testing phase (Fig. 1b; left motion: M = −4.41°/s, SD = 2.17, 95% CI = [−5.43, −3.40], one-sample t(19) = −9.08, p < .001, Cohen’s d = 2.03; right motion: M = 4.76°/s, SD = 1.99, 95% CI = [3.83, 5.69], one-sample t(19) = 10.70, p < .001, Cohen’s d = 2.39), and the left- and right-motion conditions differed from each other, paired-samples t(19) = 12.76, p < .001, Cohen’s d = 2.85. A repeated measures analysis of variance (ANOVA) with motion-signal availability (0% vs. 60%) and motion/cue direction (left vs. right) as within-subjects variables and the speed of smooth pursuit as the DV unsurprisingly showed that the strength of motion perception induced by 60%-motion signals (very strong motion signals) was much stronger than the strength of motion perception induced by predictive audiovisual cues, F(1, 19) = 29.57, p < .001, $\eta_p^2$ = .61. The main effect of motion/cue direction, F(1, 19) < 0.01, p = .948, $\eta_p^2$ < .01, and the interaction of motion-signal availability and motion/cue direction failed to reach significance, F(1, 19) = 0.34, p = .567, $\eta_p^2$ = .02.
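The effect sizes above can be related to the test statistics with two conventional formulas. The article does not state how its effect sizes were computed, so the formulas are an assumption: for one-sample and paired-samples t tests, Cohen's d = t / √n, and for an F test, partial eta squared = (F × df1) / (F × df1 + df2). The function names are illustrative.

```python
import math

def cohens_d_from_t(t, n):
    """Cohen's d for a one-sample or paired-samples t test with n participants."""
    return t / math.sqrt(n)

def partial_eta_squared(f, df_effect, df_error):
    """Partial eta squared recovered from an F statistic and its degrees of freedom."""
    return f * df_effect / (f * df_effect + df_error)

# Paired comparison of left vs. right cues on 0% trials: t(19) = 4.32, n = 20
d_cue = cohens_d_from_t(4.32, 20)

# Main effect of motion-signal availability: F(1, 19) = 29.57
eta_motion = partial_eta_squared(29.57, 1, 19)
```

Rounded to two decimals, these reproduce the reported d = 0.97 and partial eta squared of .61.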

Experiment 1a

In Experiment 1a, we replicated the top-down effects of Experiment 1 under more restricted conditions. Specifically, the audio cue was predictive of motion direction but the visual cues (color and shape) were not. The links between regions that are sensitive to form and color (lateral occipital gyrus and V4) and motion (middle temporal area) can be both feedforward and feedback (Lamme et al., 1998), so modulation by visual cues could in principle arise through either route. However, if we could induce top-down modulation with only audio cues, this would further suggest that feedback connections were used to enable this perceptual modulation.

Method

Participants

Fifteen healthy infants between 184 and 261 days old (M = 220.40 days, SD = 20.17; four females; for the power analysis, see the Supplemental Material) with normal vision and hearing participated in Experiment 1a. Seven additional infants participated but were excluded from the final sample because of eye-tracker calibration failure (n = 2) or fussiness (n = 5). None of the infants participated in other experiments reported in this article.

Materials and procedures

The experimental materials and procedures were identical to those in Experiment 1 except for one difference: The color and shape of the moving attention-getter presented before music melodies and motion displays were constant within each participant. Across participants, the color and shape were randomly selected from the two colors and two shapes used in Experiment 1.

Results

Replicating the findings of Experiment 1, results showed that the audio cues of left and right motion made a significant difference in infants’ eye-movement velocity when the random motion stimulus was presented (left cue: M = −1.11°/s, SD = 3.26, 95% CI = [−2.91, 0.70]; right cue: M = 1.46°/s, SD = 2.61, 95% CI = [0.01, 2.90]), paired-samples t(14) = 4.99, p < .001, Cohen’s d = 1.29. Moreover, the top-down effects were equivalent across Experiments 1 and 1a: A mixed 2 (experiment: 1 vs. 1a) × 2 (directional motion: 0% vs. 60%) ANOVA, collapsed across motion/cue directions, revealed that there was no significant effect of experiment or interaction between experiment and directional motion on the size of smooth-pursuit eye movement, ps ≥ .671, $\eta_p^2$s ≤ .01 (Fig. 2, orange bars). The 60%-motion signal led to stronger smooth-pursuit eye movements than the 0%-motion signal, F(1, 33) = 62.58, p < .001, $\eta_p^2$ = .66. This finding dovetails with recent work suggesting that a short amount of experience can result in the modulation of the visual system based on auditory input (Emberson et al., 2015).

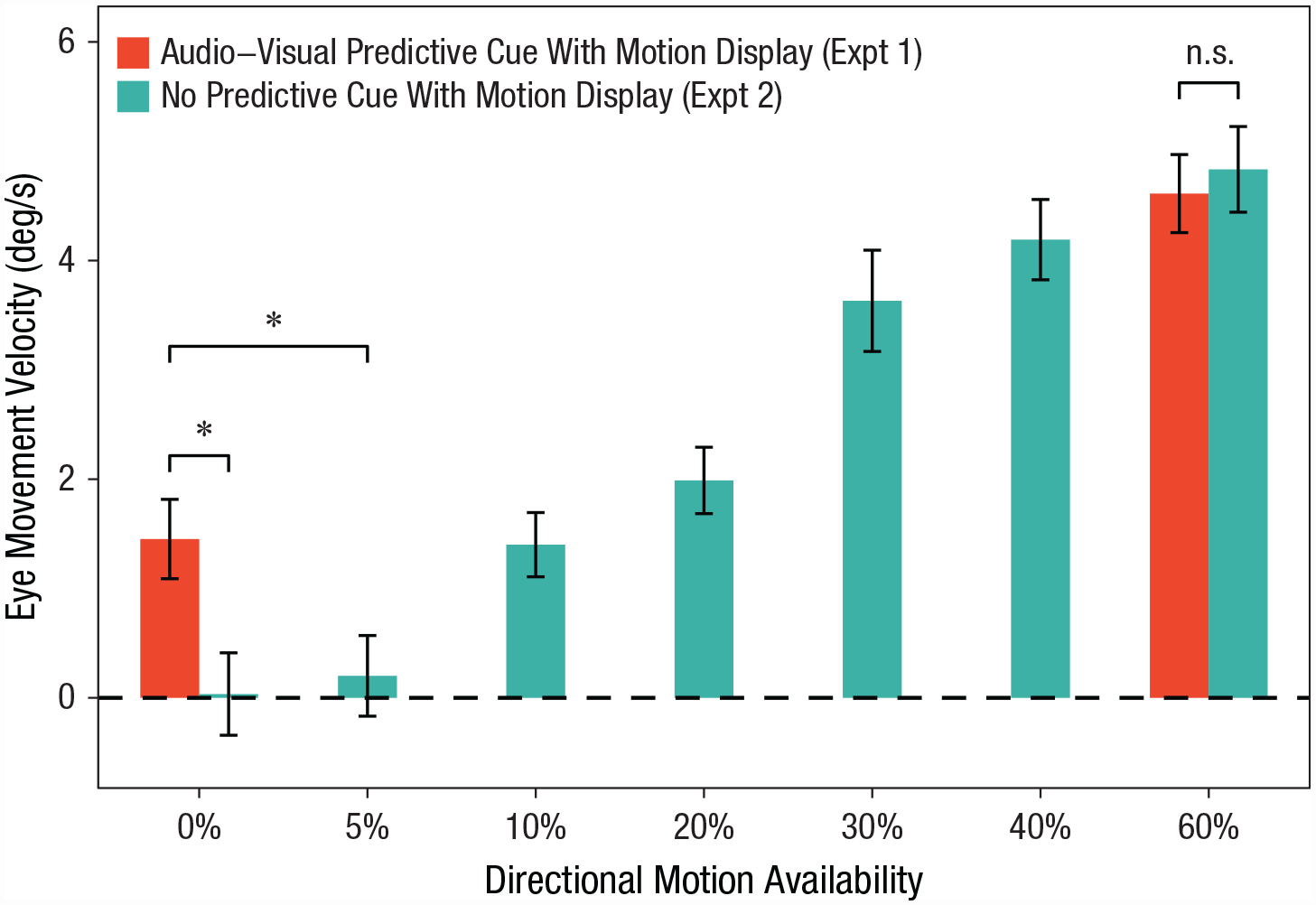

Eye-movement velocity in Experiments 1 and 2 for each directional-motion condition. Infants’ motion perception induced by predictive cues was equivalent to that induced by actual 10%- to 20%-directional-motion signals. Asterisks indicate significant differences between top-down motion perception (Experiment 1) and bottom-up motion perception (Experiment 2). Error bars indicate ±1 SE.

Experiment 2

We employed a psychophysical approach to quantify the relationship between different bottom-up motion signals and smooth-pursuit eye movement. This approach allowed us to probe the strength of the top-down perceptual modulation in Experiment 1.

Method

Participants

Twenty healthy infants between 181 and 262 days old (M = 212.10 days, SD = 15.61; nine females) with normal vision and hearing participated in Experiment 2. Two additional infants participated but were excluded from the final sample because of fussiness. None of the infants participated in other experiments reported in this article. We decided to use the same sample size as in Experiment 1 (for the related power analysis, see the Supplemental Material).

Materials and procedures

We created seven levels of motion-signal availability by manipulating the proportion of dots moving coherently to the left or right. In addition to the 0%- and 60%-directional-motion levels that were used in Experiment 1, we included 5%-, 10%-, 20%-, 30%-, and 40%-directional-motion levels. We also used gaze-contingent audiovisual events to trigger motion presentation, as in Experiment 1. However, these audiovisual cues did not predict the direction of motion and thus could not produce top-down signals.

Each participant needed to finish three blocks of trials. Each block contained seven motion-availability levels in two directions, resulting in 14 trials (0% trial presented twice per block). These trials were presented in random order within each block. The procedure for each trial was identical to that in Experiment 1, except that the preceding audiovisual events were not predictive of any motion direction: The audio (melody type and instrument) and visual features (shape and color) of the audiovisual events were evenly distributed over left- and right-motion trials in a pseudorandom manner. In total, there were 42 trials (7 motion-availability levels × 2 directions × 3 blocks).
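The trial structure of Experiment 2 can be sketched as follows. This is an illustration under the stated design (seven coherence levels, two directions, three blocks, random order within each block); the names are hypothetical and the authors' experiment program may be organized differently.

```python
import random

COHERENCE_LEVELS = [0, 5, 10, 20, 30, 40, 60]   # percentage of coherently moving dots
DIRECTIONS = ["left", "right"]

def build_block(rng):
    """One block: every coherence level in both directions, in random order.

    At 0% coherence the direction label is nominal, which is why the
    0% trial effectively appears twice per block.
    """
    trials = [(level, direction)
              for level in COHERENCE_LEVELS
              for direction in DIRECTIONS]
    rng.shuffle(trials)
    return trials

def build_session(n_blocks=3, seed=None):
    """Full session as a list of blocks, each a list of (level, direction) trials."""
    rng = random.Random(seed)
    return [build_block(rng) for _ in range(n_blocks)]
```

A session built this way contains 14 trials per block and 42 trials in total, matching the counts given above.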

Results

As expected, with increased motion, the average smooth-pursuit eye movements increased significantly, F(6, 114) = 37.81, p < .001, $\eta_p^2$ = .67, one-way repeated measures ANOVA (Fig. 3). Next, we compared these data with those obtained from Experiment 1 and found that the strength of motion perception induced by the predictive cues was equivalent to that induced by 10%- or 20%-motion signals (10%: t(38) = 0.11, false discovery rate [FDR]-corrected p = .913, Cohen’s d = 0.03; 20%: t(38) = −1.13, FDR-corrected p = .308, Cohen’s d = 0.35; independent-samples t tests). However, significant differences in motion perception were found between the top-down signals and other motion-availability levels (FDR-corrected ps ≤ .029, Cohen’s ds ≥ 0.76, independent-samples t tests).
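The text does not say which FDR procedure was applied. As an illustration only, the sketch below implements the widely used Benjamini-Hochberg adjustment; treating it as the procedure used here is an assumption, and the p values in the example are hypothetical rather than taken from the study.

```python
def benjamini_hochberg(p_values):
    """Return Benjamini-Hochberg adjusted p values in the original order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])   # indices by ascending p
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p value down so that adjusted values are
    # monotone: each is the minimum of p * m / rank over equal or higher ranks.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, p_values[i] * m / rank)
        adjusted[i] = running_min
    return adjusted

# Hypothetical uncorrected p values for four comparisons
example_adjusted = benjamini_hochberg([0.01, 0.04, 0.03, 0.20])
```

In this example the smallest p value is multiplied by the number of tests (0.01 becomes 0.04), whereas the largest is left unchanged.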

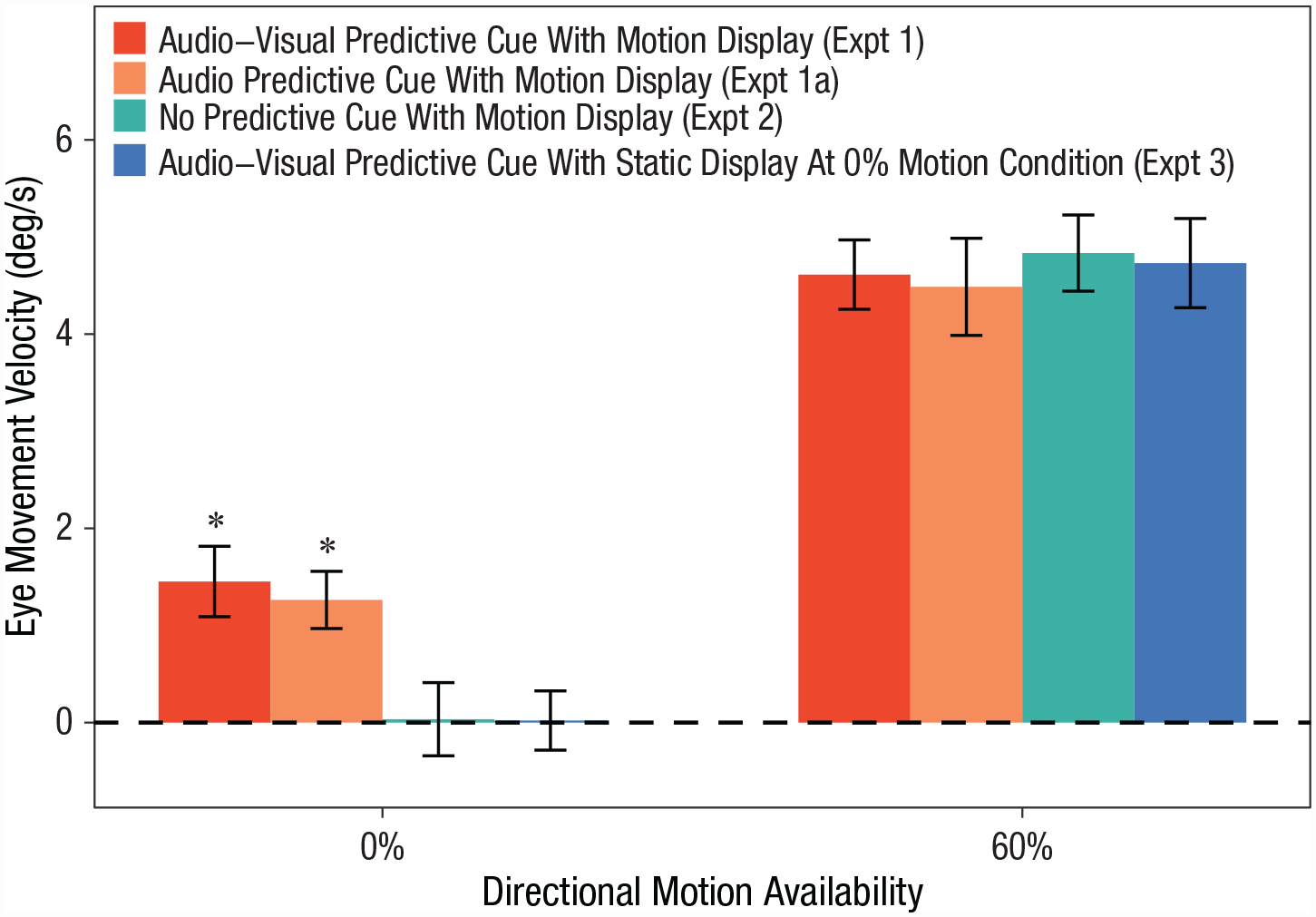

Eye-movement velocity in Experiments 1 through 3 for the 0%- and 60%-directional motion conditions. Asterisks indicate experiments in which eye-movement velocity was significantly above 0° per second. Error bars indicate ±1 SE.

To compare the findings from Experiments 1 and 2, we ran a mixed ANOVA examining the effects of top-down signal availability (experiment: 1 vs. 2) and motion-signal availability (directional motion: 0% vs. 60%) on the size of smooth-pursuit eye movements, collapsed across motion/cue directions. The results indicated that the top-down effect on motion perception was constrained to weak or ambiguous bottom-up signals, suggested by the significant interaction, F(1, 38) = 4.81, p = .034, $\eta_p^2$ = .11. Post hoc analysis revealed a significantly larger smooth-pursuit eye movement in Experiment 1 than in Experiment 2 when the 0%-directional-motion signal was presented, t(38) = 2.71, Bonferroni-corrected p = .020, Cohen’s d = 0.86. No effect of top-down signal for 60%-directional-motion trials between the two experiments was found, t(38) = −0.42, Bonferroni-corrected p = 1.000, Cohen’s d = 0.13. The mixed ANOVA also showed that the 60%-motion signal led to larger smooth-pursuit eye movement than the 0%-motion signal, F(1, 38) = 113.38, p < .001, $\eta_p^2$ = .75. No significant main effect of top-down signal availability was found, F(1, 38) = 2.60, p = .115, $\eta_p^2$ = .06.

Experiment 3

We investigated whether the predictive cues in Experiment 1 induced a type of motor conditioning or anticipation of motion. If these cues were directly invoking smooth-pursuit eye movements without modifying perception, the eye movements should be insensitive to the sensory input and should be evoked by the predictive cues even in the absence of any motion signals (Santos et al., 2012). To test this hypothesis, we presented 1,200 static dots on the 0%-directional-motion trials in Experiment 3.

Method

Participants

Twenty healthy infants between 185 and 239 days old (M = 232.05 days, SD = 22.37; nine females) with normal vision and hearing participated in Experiment 3. Two additional infants participated but were excluded from the final sample because of fussiness. None of the infants participated in other experiments reported in this article. We decided to use the same sample size as in Experiment 1 (for the related power analysis, see the Supplemental Material).

Materials and procedures

The materials and procedure were identical to those in Experiment 1 except that participants saw static dots on the screen after hearing the melodies for 25% of test trials (static trials). The remaining 75% of test trials were 60%-directional-motion trials, in which participants saw 60% directional motion on the screen. These trials were identical to those in Experiment 1.

Results

On the static test trials, infants’ eye movements were not affected by the predictive audiovisual cues: Eye-movement velocity was not different from 0 in either the left-cue condition (M = 0.53°/s, SD = 2.13, 95% CI = [−0.47, 1.53], one-sample t(19) = 1.11, p = .280, Cohen’s d = 0.25) or the right-cue condition (M = 0.57°/s, SD = 1.80, 95% CI = [−0.28, 1.40], one-sample t(19) = 1.39, p = .173, Cohen’s d = 0.31), and the two conditions did not differ from each other, paired-samples t(19) = 0.05, p = .959, Cohen’s d = 0.01 (Fig. 2, blue bar). A mixed two-way ANOVA with the factors experiment (1 vs. 3) and motion-signal availability (0% static vs. 60%) on the size of smooth pursuit (collapsed across motion/cue directions) revealed a significant interaction, F(1, 38) = 4.37, p = .043, $\eta_p^2$ = .10, indicating that the top-down effect was specific to the scenario where ambiguous motion signals are presented (i.e., eye movements are sensitive to the sensory input). Post hoc analyses determined a significant difference between the two experiments for the 0% static trials, t(38) = 3.02, Bonferroni-corrected p = .009, Cohen’s d = 0.96, but not in the 60%-motion trials, t(38) = −0.20, Bonferroni-corrected p = 1.000, Cohen’s d = 0.06. The mixed ANOVA also showed a significant main effect of motion-signal availability, F(1, 38) = 12.56, p < .001, $\eta_p^2$ = .75. No significant difference in smooth-pursuit eye movement was found between the two experiments, F(1, 38) = 3.00, p = .091, $\eta_p^2$ = .07. Thus, these eye movements were not obligatorily produced by the audiovisual cue and likely arose from top-down perceptual modulation rather than motor conditioning.

Discussion

Using a sensitive and robust measure of motion perception, we showed that 6- to 8-month-olds’ motion perception can be rapidly and flexibly modulated by learned predictive cues. Notably, the observed flexibility and context sensitivity in infant perception have not previously been reported. After a brief period of learning, the same sensory input (an ambiguous motion display) resulted in differential perception depending on what audiovisual (Experiment 1) or audio (Experiment 1a) cues preceded it. Identical bottom-up input was given in these instances, but differential perceptual outcomes were observed. Thus, we concluded that top-down signals were available to the visual system at 6 months. This behavioral evidence of top-down modulation was consistent with recent infant brain-imaging findings demonstrating that the perceptual cortices can be activated by learned cues (e.g., Dumont et al., 2022; Emberson et al., 2015). Whereas these findings from past imaging studies suggested the availability of top-down processes in infants’ brains, the current findings further indicate that those neural signatures are behaviorally meaningful and possibly shape early perceptual development.

The current findings showed several parallels to prior findings regarding top-down perception in adults. First, predictive cues could effectively modulate motion perception after a brief learning period (Hidaka et al., 2011; Kafaligonul & Oluk, 2015). Second, comparing the results of Experiments 1 and 2, we observed the top-down modulation only when directional motion was absent (0% directional motion) but not when the motion was present (60% directional motion). These findings were in line with those for adults: Top-down effects were strongest when bottom-up signals were weak or ambiguous (Bar, 2004; Hupé et al., 1998). Overall, these strong parallels suggested a developmental continuity of top-down mechanisms in supporting perception. Despite potential continuity, top-down perceptual modulation may also become increasingly efficient, flexible, and specialized with age because of the continuous optimization of feedback neural connections (Price et al., 2006), ever-expanding internal representations of the external world (Xiao & Emberson, 2019), and the maturation of feedback neural connections in the first year of life (Nakashima et al., 2021). Future work is needed in these areas.

The current findings also contrast feedforward and feedback models of early perceptual development. Crucially, top-down perceptual modulation was established after a brief learning phase (24 trials). Most prior studies showed that a long period of sensory experience was essential to establish changes in perception (e.g., specialization in infant face perception over several months of face experience; Pascalis et al., 2002, 2005). These findings led to the common view that infants’ perceptual development follows feedforward models (Aslin & Smith, 1988; Dehaene-Lambertz & Spelke, 2015; Maurer et al., 2005; Maurer & Werker, 2014; Scott et al., 2007), in which sensory input progressively shapes neural networks to optimize perception. These models are unlikely to support the rapid, context-dependent changes in perception found here. Instead, the current findings suggest the involvement of other higher-order cognitive systems, which are specialized for encoding newly learned information rapidly and effortlessly. For example, in adults, the hippocampus can quickly form representations of the relation between arbitrary stimuli and cues. The reactivation of these associative representations by predictive cues could facilitate the processing of predicted stimuli in the corresponding perceptual system (Hindy et al., 2016). Because feedback connections are essential to pass signals from these higher-order systems to perceptual systems, we refer to this mechanism as a feedback model. This feedback model presents an additional and unique pathway by which experience shapes perceptual development.

Other studies have found context-dependent changes but did not provide sufficient evidence for feedback mechanisms. For example, Castro and Wasserman (2016) reported learning-induced context-dependent pecking preference in pigeons. However, pecking preference is linked less closely to perception than to higher-order systems such as decision-making. Moreover, the contextual cues and stimuli were from a single perceptual modality, so it is unclear whether feedback connections would be necessary to change perception even if these context-specific changes were indeed perceptual. Thus, although other studies have shown context-dependent changes in behavior, they did not provide specific evidence that top-down modulation was the likely mechanism.

Though the current findings are robust, their generalizability might be limited by the demographics of the current sample. The infant participants came from families with relatively high socioeconomic status. Another limitation is that the measure (smooth-pursuit eye movement) depends on the maturation of infants’ oculomotor capacity (> 4 months). To evaluate the generalizability of early top-down perception, future studies should recruit demographically diverse samples and use measures suitable for younger infants.

To summarize, the current study showed that infants can engage in top-down perception. These behavioral findings highlight the critical involvement of higher-order cognitive systems in perceptual processing in infancy. They also challenge the views that infants’ brains largely use feedforward processes and are functionally disconnected across regions (e.g., Cao et al., 2017) and that early perceptual development is driven exclusively by feedforward mechanisms (Aslin & Smith, 1988; Dehaene-Lambertz & Spelke, 2015; Maurer & Werker, 2014). Instead, this study suggests that feedback models are needed to explain the flexible and rapid changes in infant perception mediated by top-down processes. Future work is needed to further delineate how bottom-up and top-down processes support typical perceptual development and how they might be altered by atypical development.

Supplemental Material

Supplemental material, sj-docx-1-pss-10.1177_09567976231177968 for Visual Perception Is Highly Flexible and Context Dependent in Young Infants: A Case of Top-Down-Modulated Motion Perception by Naiqi G. Xiao and Lauren L. Emberson in Psychological Science

Footnotes

Acknowledgements

We thank Richard N. Aslin, Rebecca L. Gomez, Amy W. Needham, and Nathaniel D. Daw for their comments on early versions of this article. We thank Mingbo Cai, Daphne Maurer, Yusuke Nakashima, So Kanazawa, and Masami K. Yamaguchi for their comments on the experimental design and data analysis used in this study. We also thank Jolie Luk, Jack Cloake, Si Jia Wu, and Marta Hanlon for their assistance in proofreading the manuscript of this article.

Transparency

Action Editor: Vladimir Sloutsky

Editor: Patricia J. Bauer
