Abstract

Scientific-consensus communication is among the most promising interventions to minimize the gap between experts’ and the public’s belief in scientific facts. There is, however, discussion about its effectiveness in changing consensus perceptions and beliefs about contested science topics. This preregistered meta-analysis assessed the effects of communicating the existence of scientific consensus on perceived scientific consensus and belief in scientific facts. Combining 43 experiments about climate change, genetically modified food, and vaccination, we found that a single exposure to consensus messaging had a positive effect on perceived scientific consensus (g = 0.55) and on belief in scientific facts (g = 0.12). Consensus communication yielded very similar effects for climate change and genetically modified food, whereas the low number of experiments about vaccination prevented conclusions regarding this topic. Although these effects are small, communicating scientific consensus appears to be an effective way to change factual beliefs about contested science topics.


Humanity is facing a number of formidable challenges. The planet is warming, and rising sea levels and more extreme weather events, such as floods and extreme heat, are causing a public health crisis (Masson-Delmotte et al., 2019). A substantial proportion of the global population is facing undernourishment (United Nations, n.d.). Although safe and effective genetic-engineering technology could at least partially alleviate this problem, this technology is restricted in many countries, and food products that result from this technology are unwanted by substantial parts of the public (Scott et al., 2018). And even when safe and effective vaccines are available, a considerable number of citizens in many nations are hesitant to take them up (Larson et al., 2016).

These issues—climate change, genetically modified food, and vaccination—have at least one thing in common: They are highly contested topics among parts of the general public despite clear scientific evidence, as reflected in strong scientific consensus. Inaccurate beliefs about these topics can have detrimental effects on tackling the challenges that we face. For instance, climate-change belief is an important predictor of the intention to behave in climate-friendly ways (Hornsey et al., 2016), perceived benefit and perceived risk are important predictors of acceptance of gene-editing technology (Siegrist, 2000), and the perception of adverse effects of vaccines is an important factor in vaccine uptake (Smith et al., 2017). Additionally, any democracy benefits from having an informed electorate.

To help the public understand the scientific facts surrounding these topics, science-communication strategies may play an important role. One easy-to-implement and often-studied science-communication intervention to close the gap between scientific facts and the public’s belief in those facts relies on communicating the scientific consensus, a high degree of agreement among scientists (van der Linden, 2021). In addition to providing people with an inherently valuable piece of information, the strategy of communicating scientific consensus relies on two heuristics: trust in experts and the idea that consensus implies correctness (van der Linden, 2021). When people are not aware of the scientific consensus, communicating consensus may lead to an updated estimate of the scientific consensus, which in turn will act as a gateway to changing personal factual beliefs (Lewandowsky et al., 2013). This reasoning is captured in the gateway-belief model (van der Linden, Leiserowitz, et al., 2015; van der Linden, Leiserowitz, & Maibach, 2019). The gateway-belief model is supported by a number of studies demonstrating that communicating the existence of scientific consensus can be an effective strategy to elicit accurate perceptions of scientific consensus (i.e., people’s estimate of the degree of consensus among scientists), even on controversial issues such as climate change, genetically modified food, and vaccination (Goldberg et al., 2019b; Kerr & Wilson, 2018; Lewandowsky et al., 2013; van der Linden, Clarke, & Maibach, 2015; van der Linden, Leiserowitz, & Maibach, 2019).

However, there is also conflicting evidence, leading to discussion about the effectiveness of consensus communication as a science-communication strategy (Bayes et al., 2020; Landrum & Slater, 2020; van der Linden, 2021). Some scholars argue that people might not see experts who adopt positions incongruent with their preferences as knowledgeable or trustworthy (Kahan et al., 2011) or that people might experience reactance from being exposed to a scientific-consensus message (Ma et al., 2019; also see Dixon et al., 2019; van der Linden, Maibach, & Leiserowitz, 2019). Others argue that even if the scientific consensus itself is accepted by individuals, this may not necessarily cause them to change their personal beliefs (Bolsen & Druckman, 2018; Dixon, 2016; Pasek, 2018). These arguments may be especially applicable to contested science topics such as climate change, in which trust in scientists is relatively low compared with trust in scientists in other fields, and personal beliefs are fairly stable (e.g., Pew Research Center, 2016). Thus, there is debate about the effectiveness of scientific-consensus messaging in informing the public, and it is unclear whether the effects of such messages differ by topic.

The current meta-analysis contributed to this debate by meta-analytically testing how effectively scientific-consensus communication informs the public. The main objective was to assess the effects of scientific-consensus communication on (a) perceived scientific consensus and (b) belief in scientific facts regarding contested science topics. We focused on climate change, genetically modified food, and vaccination because they are contested among substantial parts of the public (e.g., Larson et al., 2016; Leiserowitz et al., 2021; Scott et al., 2018) and because we expected to find multiple experiments per topic to synthesize. To assess these effects, we included only randomized experiments in the meta-analysis. In line with the gateway-belief model, we hypothesized that exposure to a message conveying scientific consensus would lead to a higher estimate of scientific consensus than not being exposed to such a message. Similarly, we hypothesized that individuals exposed to a message conveying scientific consensus would change their beliefs to be more in line with the scientific consensus than individuals not exposed to such a message. Furthermore, we aimed to investigate whether the effectiveness of consensus communication differs by topic, but we had no a priori hypotheses regarding such differences.

Statement of Relevance

Climate change, undernourishment, and vaccine hesitancy are among the greatest challenges humanity is facing right now. These challenges and their solutions are contested among parts of the public despite clear scientific evidence, which is reflected in strong consensus among scientists about the facts regarding these challenges and their solutions. Inaccurate beliefs (for instance, that climate change is not real, that genetically modified food is unhealthy, or that vaccination is unsafe) can undermine support for solutions to these challenges and the behavior needed to address them. One of the most studied interventions to correct such beliefs relies on communicating scientific consensus. There is debate, however, regarding the effectiveness of this intervention in changing perceptions of scientific consensus and bringing about accurate beliefs about contested science topics. The current meta-analysis shows that exposure to scientific-consensus messaging has a significant positive effect on perceived scientific consensus and on belief in scientific facts. Although these effects are small, the intervention is easy to implement.

In addition to comparing the effects of consensus communication between topics, we conducted sensitivity analyses and examined reporting bias to gauge the robustness of the effects. Finally, we explored moderators other than topic that might explain when scientific-consensus communication is effective (or ineffective). Much discussion about the gateway-belief model centers on differential effects of scientific-consensus communication for specific groups (e.g., Landrum & Slater, 2020; Ma et al., 2019; van der Linden, Leiserowitz, & Maibach, 2019). To explore whether scientific-consensus communication might be more or less effective for certain groups, we examined moderating effects of conservatism and of preexisting perceptions of consensus and preexisting beliefs. In the United States, where most experiments were conducted, political conservatism is related to skepticism toward science in general and climate science specifically (Azevedo & Jost, 2021; Lewandowsky & Oberauer, 2021; though see Rutjens et al., 2018, for skepticism about genetically modified food). Such a skeptical attitude might make conservatives more likely to distrust scientists and to exhibit reactance to scientific-consensus messages, or to downgrade the informational value of scientific consensus itself. However, some climate-change research has found that consensus communication might neutralize the effect of political orientation, increasing climate-change acceptance particularly among conservatives (Lewandowsky et al., 2013; van der Linden, Leiserowitz, & Maibach, 2019). For similar reasons, scientific-consensus messaging might also be rejected by people whose preexisting perceptions of consensus or factual beliefs conflict with it (Pasek, 2018). But again, this communication strategy may also neutralize such conflicting beliefs (van der Linden, Leiserowitz, & Maibach, 2019). Thus, the potential moderating roles of conservatism and of preexisting perceptions and beliefs remain unclear.

Method

To avoid bias and provide evidence of our analysis intentions, which is an important benefit of preregistering meta-analyses (Quintana, 2015), we preregistered the protocol for this meta-analysis on OSF (https://osf.io/jm3ns/; for all deviations from the preregistered protocol, see https://osf.io/3tkwy/). All confirmatory parts of this work were preregistered. For the exploratory parts, we explicitly clarify in the Results section whether they were preregistered or not. The data and analysis scripts for the meta-analysis are available on OSF (https://osf.io/9gnkc/). The reporting of this meta-analysis was guided by the standards of the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) statement (Page, McKenzie, et al., 2021).

Literature search

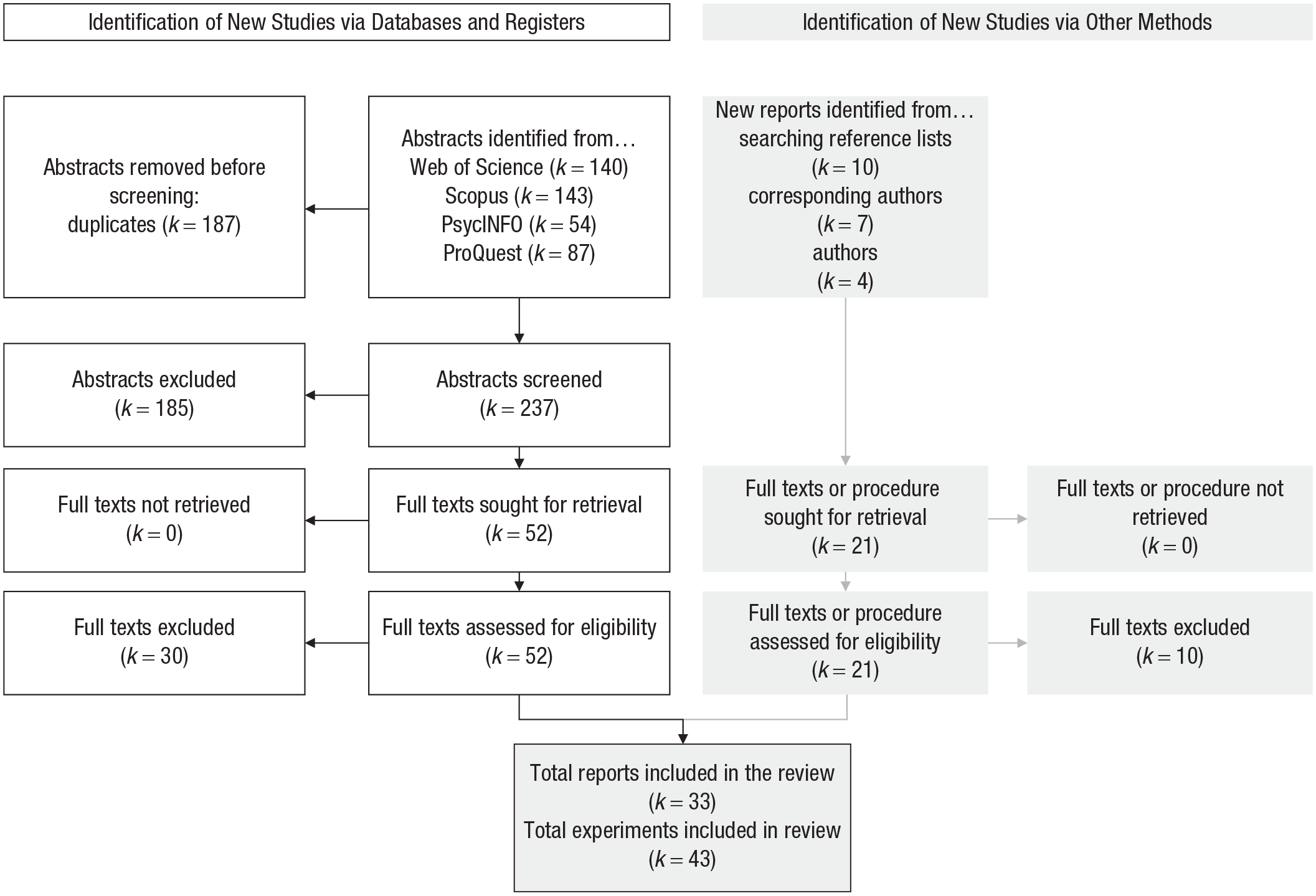

We searched for published and unpublished articles in three ways: (a) We searched electronic databases, (b) we examined the reference lists of the articles that met the inclusion and exclusion criteria, and (c) we contacted corresponding authors of included articles to ask for other relevant work. See Figure 1 for a flow diagram illustrating the literature-search process.

Fig. 1. Flow diagram of the literature-search process.

First, we searched the electronic databases Web of Science, Scopus, PsycINFO, and ProQuest, using sets of keywords to find experiments investigating scientific consensus and a belief about one of the three contested science topics (see Table 1). This search took place on March 15, 2021. All search results (k = 424) were imported into the Rayyan web application (Ouzzani et al., 2016), where duplicates (k = 187) were removed. Two coders then reviewed all abstracts to indicate whether the article contained any relevant experiments (~93% agreement, Krippendorff’s α = .79). Discrepancies between the two coders were resolved through discussion. The following inclusion criteria were used to screen abstracts. First, the article had to report an experiment with a between-subjects manipulation of scientific-consensus communication (information about scientific consensus provided vs. no information about scientific consensus [control]). Second, the topic of consensus communication had to be climate change, vaccination, or genetically modified food. Third, one of the dependent variables had to be a measure of perceived scientific consensus related to the consensus message or a measure of participants’ belief in a factual statement. Fourth, the participants had to be adult humans. Fifth, the article had to be written in English.
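The coder-agreement figures combine raw percent agreement with Krippendorff’s α, which corrects for the agreement expected by chance. The article does not say how α was computed. The sketch below is illustrative and assumes two coders, nominal include/exclude codes, and no missing decisions. It builds α from the coincidence matrix. The function names and the coding decisions are hypothetical.

```python
from collections import Counter
from itertools import permutations


def percent_agreement(coder_a, coder_b):
    """Share of units on which the two coders made the same decision."""
    return sum(a == b for a, b in zip(coder_a, coder_b)) / len(coder_a)


def krippendorff_alpha_nominal(coder_a, coder_b):
    """Krippendorff's alpha for two coders, nominal codes, no missing values."""
    # Coincidence matrix: every unit contributes the ordered pairs (a, b) and (b, a).
    o = Counter()
    for a, b in zip(coder_a, coder_b):
        o[(a, b)] += 1
        o[(b, a)] += 1
    n_c = Counter()  # marginal total for each category
    for (c, _), count in o.items():
        n_c[c] += count
    n = sum(n_c.values())  # equals 2 * number of units
    observed = sum(count for (c, k), count in o.items() if c != k)
    expected = sum(n_c[c] * n_c[k] for c, k in permutations(n_c, 2))
    if expected == 0:
        raise ValueError("alpha is undefined when only one category is used")
    return 1 - (n - 1) * observed / expected


# Hypothetical abstract-screening decisions (1 = include, 0 = exclude).
coder_1 = [1, 1, 0, 0, 0, 0, 0, 0, 0, 0]
coder_2 = [1, 0, 0, 0, 0, 0, 0, 0, 0, 0]
print(percent_agreement(coder_1, coder_2), krippendorff_alpha_nominal(coder_1, coder_2))
```

In this made-up example, 90% raw agreement corresponds to an α of only about .63. When most decisions are "exclude," much of the agreement is expected by chance, which is why both numbers are worth reporting.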

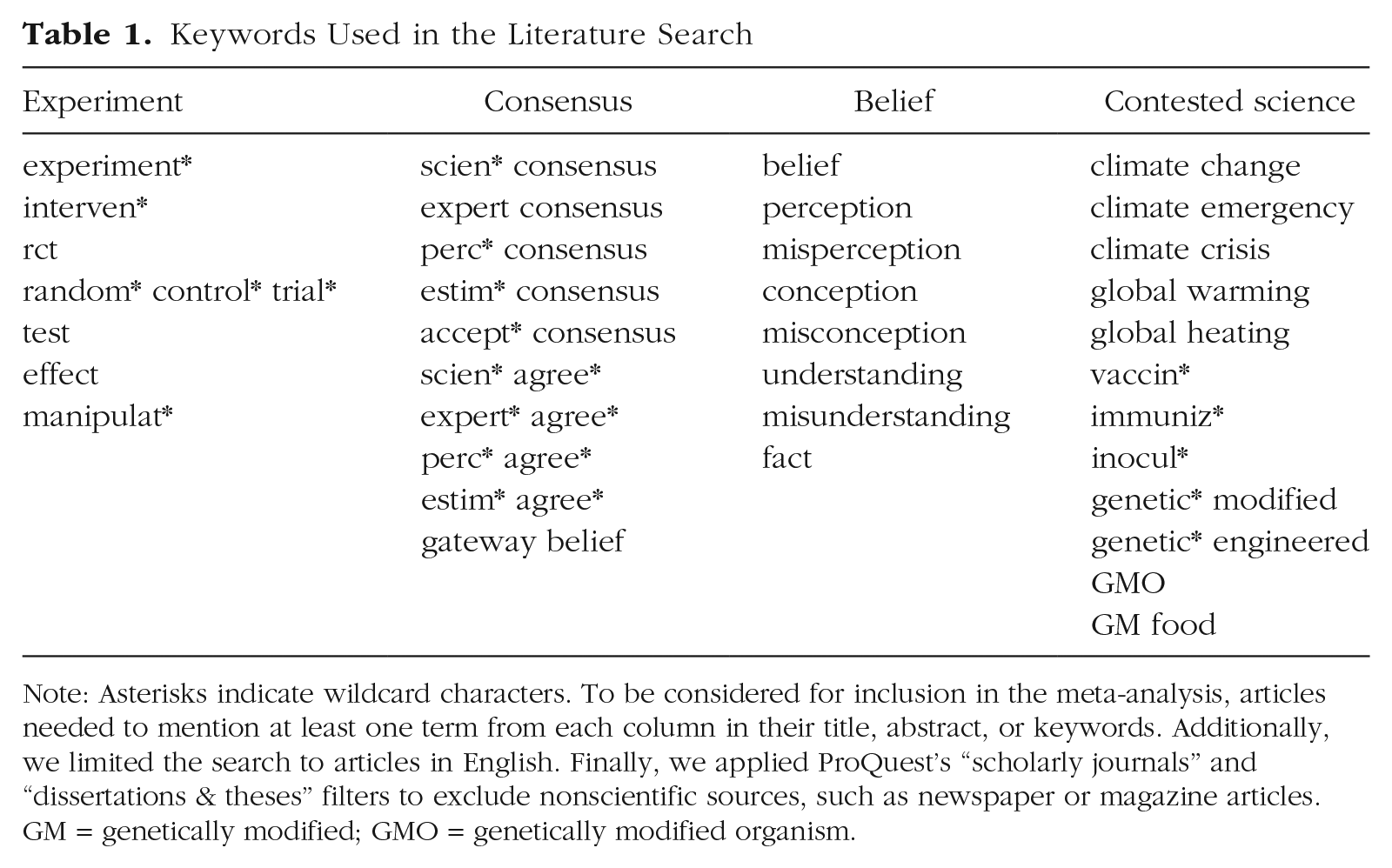

Table 1. Keywords Used in the Literature Search

Note: Asterisks indicate wildcard characters. To be considered for inclusion in the meta-analysis, articles needed to mention at least one term from each column in their title, abstract, or keywords. Additionally, we limited the search to articles in English. Finally, we applied ProQuest’s “scholarly journals” and “dissertations & theses” filters to exclude nonscientific sources, such as newspaper or magazine articles. GM = genetically modified; GMO = genetically modified organism.

Once all abstracts were screened, full texts of the remaining records (k = 52) were obtained. Two full texts were not publicly available and were successfully requested from the relevant corresponding authors. All full texts were reviewed by two coders (~92% agreement, Krippendorff’s α = .88) for the following exclusion criteria. First, participants’ belief in the factual statement of interest must not have been manipulated with misinformation in addition to the experimental manipulation of interest (e.g., by exposing participants to misinformation before or after a consensus message). Second, the article had to report original data not published elsewhere. Third, the experiments of interest had to meet the inclusion criteria that were used to screen the abstracts. If an article did not meet these criteria, the full text (or the relevant experiment in the full text) was excluded from the meta-analysis.

After screening abstracts for inclusion criteria and full texts for exclusion criteria, we searched the reference lists of the remaining articles (k = 22) for other relevant articles. Full texts of new, potentially relevant articles were assessed for eligibility (k = 10) using the same criteria as before and added to the list if two independent coders agreed that they should be included (100% agreement). For those new articles added to the list (k = 3), the reference list was searched as well, but this led to no new potentially relevant articles.

Finally, in May and June 2021, we contacted corresponding authors of articles in the list (N = 18) and asked them to identify any relevant work that was missing. We also considered whether we had encountered any missing relevant work ourselves. All new articles (or procedures of experiments when no manuscript was available; k = 11) were screened using the same inclusion and exclusion criteria (100% agreement between two coders). This yielded eight new unpublished articles. Adding these and the three articles from the reference-list search to articles from the database search resulted in a total of 33 articles containing 43 experiments that were included in this meta-analysis.
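The search flow reported in this subsection (and summarized in Figure 1) can be tallied as a quick consistency check. The sketch below is plain arithmetic on the counts given in the text. The intermediate totals, such as the number of unique abstracts screened, are derived here and are not reported by the authors.

```python
# Counts reported in the text; the derived totals below are simple arithmetic.
database_records = 424
duplicates_removed = 187
full_texts_assessed = 52
retained_after_full_text = 22
added_from_reference_lists = 3
added_from_author_contact = 8

abstracts_screened = database_records - duplicates_removed
excluded_at_abstract_stage = abstracts_screened - full_texts_assessed
excluded_at_full_text_stage = full_texts_assessed - retained_after_full_text
total_articles = retained_after_full_text + added_from_reference_lists + added_from_author_contact

print(abstracts_screened, excluded_at_abstract_stage, excluded_at_full_text_stage, total_articles)
```

The tally gives 237 unique records screened, 185 excluded at the abstract stage, 30 excluded at the full-text stage, and the 33 articles reported above.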

Extracting data

The first author extracted relevant data from the final list of included experiments. The preregistered protocol included a data-extraction procedure for effect-size data, describing how to handle situations such as multiple consensus-messaging conditions and multiple dependent variables. We focused on immediate effects of consensus communication and included experiments both with passive and active control conditions (e.g., reading a mock news article about the relevant topic without consensus information). With regard to the outcome measures, we extracted postmanipulation information related to perceptions of scientific consensus and belief in scientific facts that were specifically addressed in the consensus message. Thus, all designs were treated as between-subjects posttest designs. Meta-analysis using standardized mean differences (such as Hedges’s g) does not allow one to combine effect sizes from postmanipulation scores with effect sizes from difference scores (using both pre- and postmanipulation scores; Deeks et al., 2021). This means that even if the experiment included a premanipulation measure of one of our outcomes, this information was not used to calculate the effect size. Premanipulation scores were used, however, in the exploratory analyses of preexisting perceptions of scientific consensus and preexisting belief in scientific facts.

If an experiment contained multiple between-subjects manipulations of consensus communication (e.g., a condition with a consensus message in text and a condition with a consensus message in a bar chart), these were synthesized to obtain a single comparison with the control group. Messages reporting at least 82% agreement among scientists (based on the lowest percentage of agreement that was considered high consensus in the included works; Kobayashi, 2018), messages simply stating that there is a scientific consensus (e.g., “On [genetically modified food] there is a rock-solid scientific consensus”; Hasell et al., 2020), and messages stating that a large number of scientists are in agreement (e.g., “a recent report produced by 300 expert scientists”; Bolsen & Druckman, 2018) were included as scientific-consensus conditions. If an experiment contained multiple, unaggregated measures of a factual belief that were used as dependent variables, only the measure that was most specifically related to the consensus message was extracted (e.g., if a consensus message focused on the safety of vaccines, we extracted information related to participants’ concerns regarding the safety of vaccines while ignoring a more specific measure of participants’ belief in a link between vaccines and autism). If multiple, unaggregated measures of a factual belief were used as dependent variables and more than one of those beliefs were specifically addressed in the consensus message, those measures were aggregated by standardizing them (if they were not measured on the same scale) and taking the mean. For example, if a consensus message focused on both the reality of climate change and its human causation, we extracted information regarding measures of belief in climate change and of belief in its human causation.
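Before a single g can be computed against the control group, multiple consensus conditions (e.g., text and bar chart) have to be merged. The article does not specify the formula it used. A standard choice, assumed here, is the formula for combining groups given in the Cochrane Handbook for Systematic Reviews of Interventions. It reproduces the mean and SD that the pooled raw data would have. The function name and the arms in the example are hypothetical.

```python
import math


def combine_groups(n1, m1, sd1, n2, m2, sd2):
    """Merge two conditions into one group with the mean and SD of their pooled data."""
    n = n1 + n2
    m = (n1 * m1 + n2 * m2) / n
    # Within-group sums of squares plus a between-group term for the mean difference.
    ss = (n1 - 1) * sd1 ** 2 + (n2 - 1) * sd2 ** 2 + n1 * n2 / n * (m1 - m2) ** 2
    return n, m, math.sqrt(ss / (n - 1))


# Hypothetical arms: text message (scores 1, 2, 3, 4) and bar chart (scores 5, 7, 9).
n, m, sd = combine_groups(4, 2.5, math.sqrt(5 / 3), 3, 7.0, 2.0)
```

Because these summary statistics come from known raw scores, the result equals the mean (31/7) and SD of all seven scores combined. More than two consensus conditions can be merged by applying the function repeatedly.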

Of course, not all situations could be foreseen (e.g., multiple between-subjects control conditions). Therefore, ad hoc decisions had to be made during data extraction when we encountered situations that were not described in the preregistered protocol. Following the same procedure, a second author independently coded a random sample of five experiments after training, which yielded the same results. The complete procedure—with preregistered and ad hoc decisions discussed separately—can be found at https://osf.io/3tkwy/.

For each experiment, means, standard deviations (or standard errors), and sample sizes per condition (n) were extracted. If this information was not available from the article, we requested it from the corresponding author. The requested information was obtained in all but one case; the effect size in that case was coded as missing. Means, SDs or SEs, and n were used to calculate Hedges’s g, which was recoded where necessary so that a positive value indicated higher perceived scientific consensus (in line with the scientific facts) and more scientifically accurate beliefs compared with control conditions.
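The conversion from condition means, SDs, and n to Hedges’s g can be sketched as follows. The sketch assumes the usual pooled-SD standardized mean difference, the approximate small-sample correction J = 1 − 3/(4(n₁ + n₂) − 9), and the large-sample variance given by Borenstein et al. (2009). The article does not spell out these formulas, and the R packages it used may apply slightly different variance approximations. A reported SE is converted to an SD via SD = SE × √n. The `higher_is_accurate` flag is a hypothetical stand-in for the recoding step described above.

```python
import math


def sd_from_se(se, n):
    """Convert the standard error of a condition mean into a standard deviation."""
    return se * math.sqrt(n)


def hedges_g(m_cons, sd_cons, n_cons, m_ctrl, sd_ctrl, n_ctrl, higher_is_accurate=True):
    """Hedges's g (consensus vs. control) and its sampling variance."""
    df = n_cons + n_ctrl - 2
    sd_pooled = math.sqrt(((n_cons - 1) * sd_cons ** 2 + (n_ctrl - 1) * sd_ctrl ** 2) / df)
    d = (m_cons - m_ctrl) / sd_pooled
    if not higher_is_accurate:
        d = -d  # recode so that a positive value means more accurate than control
    j = 1 - 3 / (4 * df - 1)  # small-sample correction, equal to 1 - 3 / (4 * N - 9)
    var_d = (n_cons + n_ctrl) / (n_cons * n_ctrl) + d ** 2 / (2 * (n_cons + n_ctrl))
    return j * d, j ** 2 * var_d


# Hypothetical condition summaries (consensus vs. control).
g, var_g = hedges_g(5.0, 1.0, 50, 4.5, 1.0, 50)
```

In this example d = 0.5 and J = 1 − 3/391 ≈ 0.992, so g ≈ 0.496.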

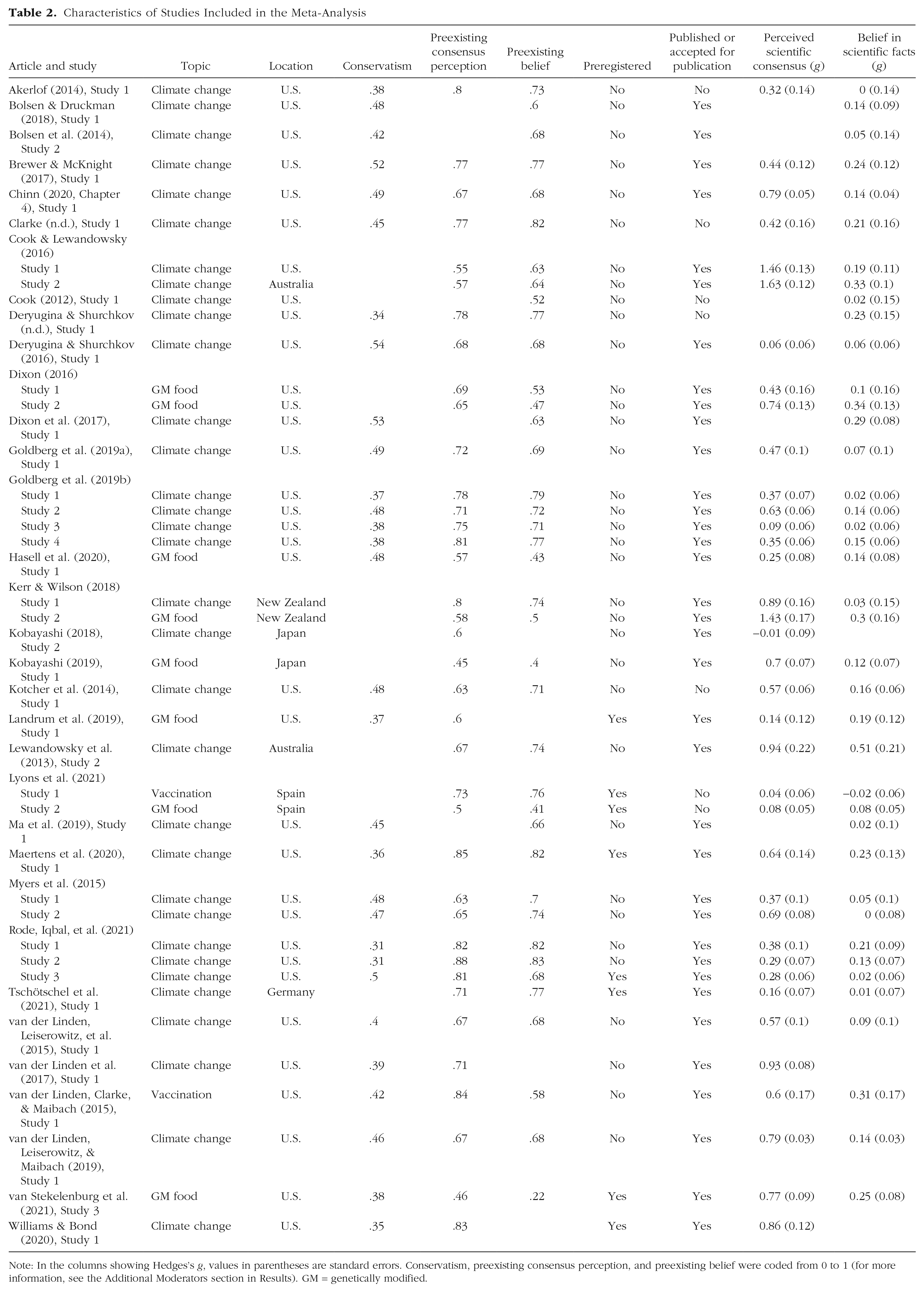

Following our preregistration, we also extracted information describing the contested science topic that the experiment focused on (i.e., climate change, genetically modified food, or vaccination). All other variables that were extracted were obtained in a data-driven manner. Analyses related to these variables are therefore labeled “exploratory.” These variables included (a) sample conservatism (if the experiment was conducted in the United States, to ensure that conservatism measured more or less the same construct across experiments), (b) preexisting perceptions of scientific consensus and preexisting beliefs, and (c) whether the experiment was preregistered. See Table 2 for a list of the information that was extracted.

Table 2. Characteristics of Studies Included in the Meta-Analysis

Note: In the columns showing Hedges’s g, values in parentheses are standard errors. Conservatism, preexisting consensus perception, and preexisting belief were coded from 0 to 1 (for more information, see the Additional Moderators section in Results). GM = genetically modified.

Analytic strategy

We estimated the meta-analytic effects of scientific-consensus communication on perceived scientific consensus and on beliefs, expressed as Hedges’s g with 95% confidence intervals (CIs), using random-effects models. We used the restricted maximum likelihood (REML) estimator to estimate τ² and the Hartung-Knapp-Sidik-Jonkman (HKSJ) method for tests and 95% CIs. The REML estimator performs better than other often-used heterogeneity estimators, and the HKSJ method outperforms other methods of estimating summary effects and CIs (Langan et al., 2019). To describe between-experiment heterogeneity, we report the Q test for heterogeneity and the I² statistic in addition to the estimate of τ².
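The estimators named above can be sketched in a few lines. The Python below is an illustrative re-implementation, not the authors’ R code (which used dmetar, meta, and metafor), and it rests on several assumptions:

- REML τ² is obtained with a plain fixed-point iteration of the REML estimating equation, truncated at zero.
- The HKSJ interval replaces the usual variance of the pooled estimate with a weighted residual variance and uses a t distribution with k − 1 degrees of freedom.
- The t quantile is computed numerically only to keep the example free of third-party dependencies.
- I² is returned as a proportion.

All function and parameter names are hypothetical.

```python
import math


def t_quantile(p, df):
    """Quantile of Student's t for p > 0.5 (bisection on a numerically integrated CDF)."""
    c = math.exp(math.lgamma((df + 1) / 2) - math.lgamma(df / 2)) / math.sqrt(df * math.pi)

    def pdf(x):
        return c * (1 + x * x / df) ** (-(df + 1) / 2)

    def cdf(t, n=2000):  # valid for t >= 0: 0.5 plus Simpson's rule over [0, t]
        h = t / n
        s = pdf(0.0) + pdf(t) + sum((4 if i % 2 else 2) * pdf(i * h) for i in range(1, n))
        return 0.5 + s * h / 3

    lo, hi = 0.0, 100.0
    for _ in range(60):
        mid = (lo + hi) / 2
        if cdf(mid) < p:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2


def random_effects_reml_hksj(y, v, level=0.95, tol=1e-10, max_iter=1000):
    """Random-effects meta-analysis: REML tau^2, HKSJ standard error, t-based CI."""
    k = len(y)
    # Cochran's Q and I^2 use fixed-effect (inverse-variance) weights.
    w_fe = [1 / vi for vi in v]
    mu_fe = sum(w * yi for w, yi in zip(w_fe, y)) / sum(w_fe)
    q = sum(w * (yi - mu_fe) ** 2 for w, yi in zip(w_fe, y))
    i2 = max(0.0, (q - (k - 1)) / q) if q > 0 else 0.0

    # REML: iterate the estimating equation for tau^2, truncating at zero.
    tau2 = 0.0
    for _ in range(max_iter):
        w = [1 / (vi + tau2) for vi in v]
        mu = sum(wi * yi for wi, yi in zip(w, y)) / sum(w)
        num = sum(wi ** 2 * ((yi - mu) ** 2 - vi) for wi, yi, vi in zip(w, y, v))
        new_tau2 = max(0.0, num / sum(wi ** 2 for wi in w) + 1 / sum(w))
        converged = abs(new_tau2 - tau2) < tol
        tau2 = new_tau2
        if converged:
            break

    # Pooled estimate with random-effects weights.
    w = [1 / (vi + tau2) for vi in v]
    mu = sum(wi * yi for wi, yi in zip(w, y)) / sum(w)
    # HKSJ: weighted residual variance replaces 1 / sum(w); t with k - 1 df.
    se = math.sqrt(sum(wi * (yi - mu) ** 2 for wi, yi in zip(w, y)) / ((k - 1) * sum(w)))
    t_crit = t_quantile(1 - (1 - level) / 2, k - 1)
    return {
        "mu": mu,
        "tau2": tau2,
        "se": se,
        "ci": (mu - t_crit * se, mu + t_crit * se),
        "t": mu / se if se > 0 else math.nan,  # undefined if all effects coincide
        "df": k - 1,
        "Q": q,
        "I2": i2,
    }


res = random_effects_reml_hksj([0.1, 0.3, 0.5, 0.7], [0.01] * 4)  # hypothetical data
```

With these four hypothetical effects and equal sampling variances of 0.01, REML reduces to the closed form τ² = 0.2/3 − 0.01 ≈ 0.057, with Q = 20 and I² = 0.85. Production implementations add convergence safeguards that this sketch omits.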

We used the dmetar (Version 0.0.9000; Harrer et al., 2019), meta (Version 4.18-1; Balduzzi et al., 2019), and metafor (Version 3.0-1; Viechtbauer, 2010) packages for R (Version 4.0.3).

Results

The final set of experiments (k = 43) yielded 37 effect sizes (total N = 32,398) for the effect of scientific-consensus communication on perceived scientific consensus and 40 effect sizes (total N = 33,985) for the effect of scientific-consensus communication on belief in scientific facts. None of the experiments contributed more than one effect size per outcome. Most experiments investigated scientific-consensus communication about climate change (k = 33) or genetically modified food (k = 8); only two experiments focused on vaccination.

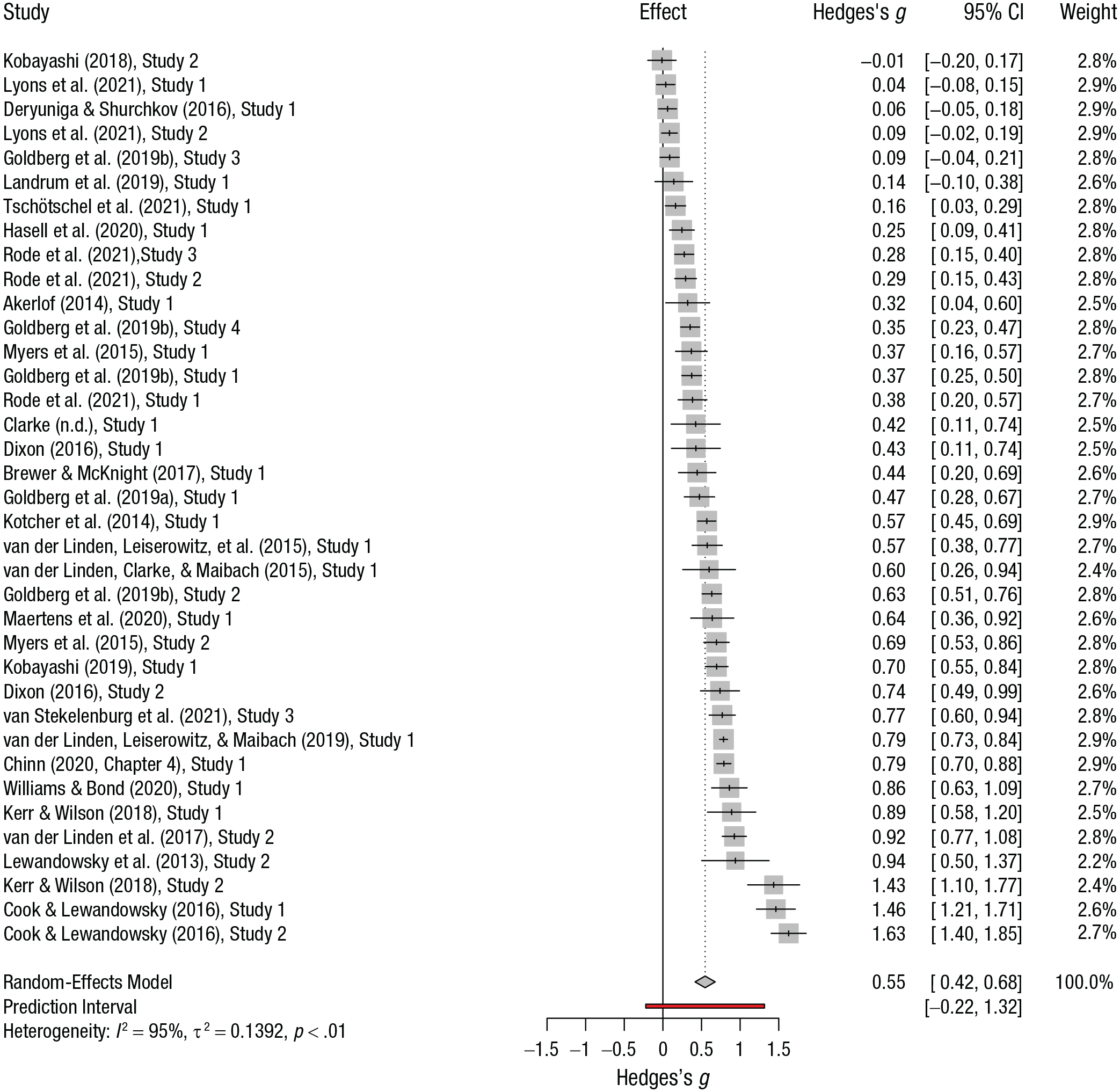

Perceived scientific consensus

The estimated average effect size of scientific-consensus communication on perceived scientific consensus, g = 0.55, 95% CI = [0.42, 0.68], differed significantly from zero, t(36) = 8.52, p < .001. Figure 2 shows a forest plot of the observed outcomes and the estimated average effect. Heterogeneity between experiments in the effects of scientific-consensus communication on perceived scientific consensus was high, τ² = 0.139, Q(36) = 752.51, p < .001, I² = 95.2%, 95% CI = [94.2%, 96.1%]. This high heterogeneity suggests that the experiments might not share one common effect size, which is reflected in a broad 95% prediction interval ranging from −0.22 to 1.32. Prediction intervals reflect the distribution of true effect sizes in random-effects meta-analysis (Borenstein et al., 2009, pp. 127–133). Whereas a CI describes the range in which the average (population) effect is likely to lie, a 95% prediction interval describes the range in which the true effect of a future individual experiment might be expected to fall (IntHout et al., 2016).
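As a rough check on the interval reported above, the random-effects prediction interval can be reconstructed from the rounded summary statistics. The derivation assumes the commonly used formula with a t distribution on k − 2 = 35 degrees of freedom. It also backs out the standard error of the pooled estimate from the HKSJ CI, whose critical value uses k − 1 = 36 degrees of freedom. The article does not state which variant its software used.

```latex
\begin{aligned}
\mathrm{PI}_{95\%} &= \hat{\mu} \pm t_{0.975,\,k-2}\,\sqrt{\hat{\tau}^2 + \widehat{\mathrm{SE}}(\hat{\mu})^2},\\
\widehat{\mathrm{SE}}(\hat{\mu}) &\approx \frac{0.68 - 0.42}{2 \times t_{0.975,\,36}} = \frac{0.26}{2 \times 2.028} \approx 0.064,\\
\sqrt{0.139 + 0.064^2} &\approx 0.378, \qquad t_{0.975,\,35} \approx 2.030,\\
0.55 \pm 2.030 \times 0.378 &\approx [-0.22,\ 1.32].
\end{aligned}
```

The reconstruction matches the reported interval. It also shows that the width of the interval is driven almost entirely by τ², not by the uncertainty in the pooled estimate.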

Fig. 2. Forest plot showing the effects of scientific-consensus communication on perceived scientific consensus (k = 37 experiments). In the Effect column, each vertical mark indicates the effect size, and each horizontal line indicates the 95% confidence interval (CI). The prediction interval is shown for the effect size of the random-effects model. The square, shaded areas represent each study’s weight in the meta-analysis.

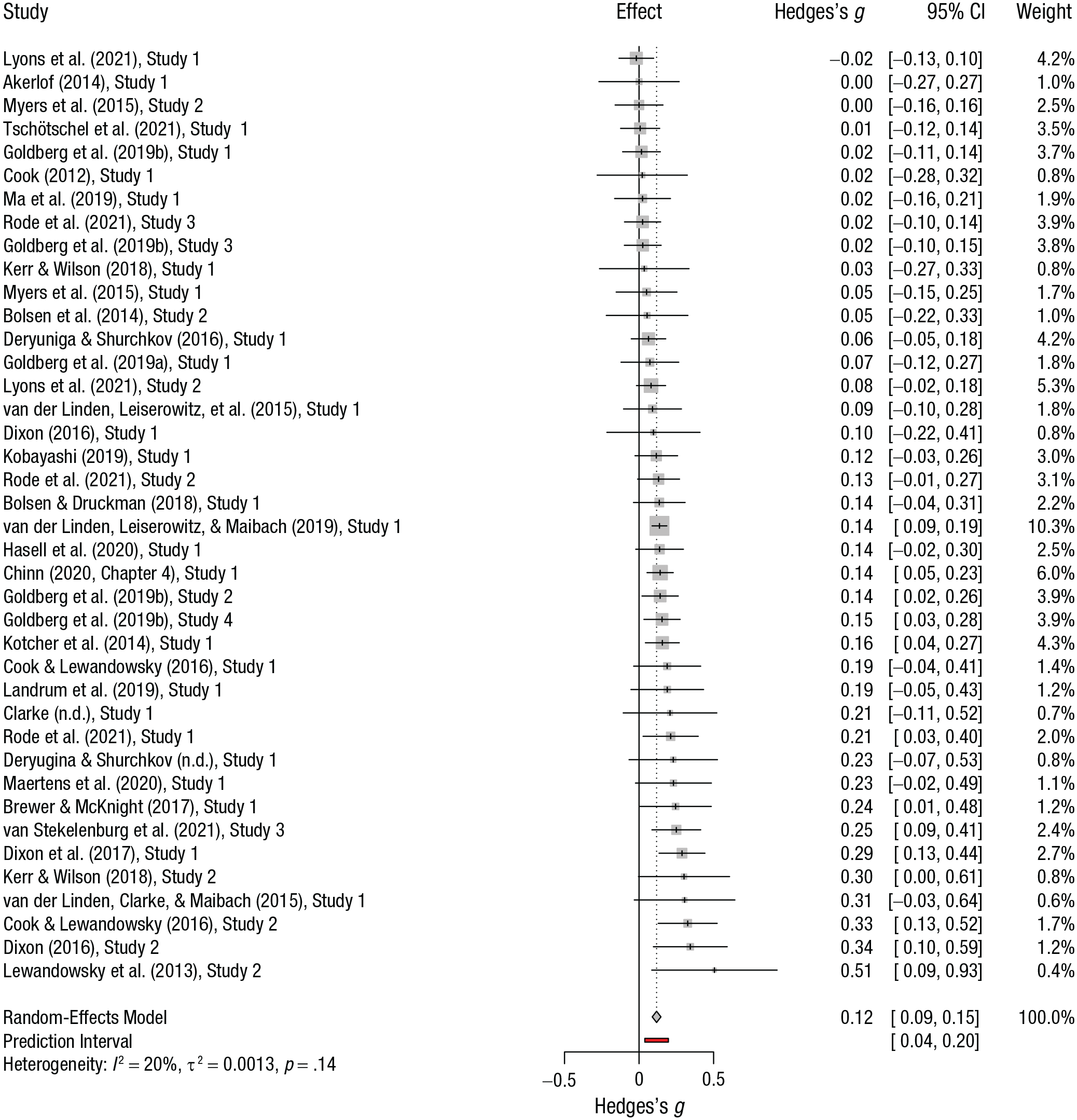

Belief in scientific facts

The estimated average effect size of scientific-consensus communication on belief in scientific facts, g = 0.12, 95% CI = [0.09, 0.15], also differed significantly from zero, t(39) = 8.15, p < .001. Figure 3 shows a forest plot of the observed outcomes and the estimated average effect. There was no statistically significant heterogeneity between experiments in the effects of scientific-consensus communication on belief in facts, τ² = 0.001, Q(39) = 48.72, p = .137, I² = 19.9%, 95% CI = [0%, 46.2%]. This is reflected in a relatively narrow 95% prediction interval, which ranged from 0.04 to 0.20.
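Both I² values reported above follow directly from Cochran’s Q and its degrees of freedom. The p value of the Q test is the upper tail of a chi-square distribution with the same degrees of freedom. The sketch below recomputes these quantities from the rounded values in the text. The chi-square tail uses a standard series expansion of the regularized incomplete gamma function, an implementation choice made here only to avoid third-party dependencies. The function names are hypothetical.

```python
import math


def i_squared(q, df):
    """Percentage of total variability attributed to between-experiment heterogeneity."""
    return max(0.0, (q - df) / q) * 100


def chi2_sf(x, df):
    """Upper-tail chi-square probability via the series for the lower incomplete gamma."""
    if x <= 0:
        return 1.0
    a, z = df / 2, x / 2
    term = math.exp(a * math.log(z) - z - math.lgamma(a + 1))  # first series term
    total, n = term, 0
    while term > 1e-16 * total:
        n += 1
        term *= z / (a + n)
        total += term
    return max(0.0, 1.0 - total)


print(round(i_squared(752.51, 36), 1))  # perceived scientific consensus
print(round(i_squared(48.72, 39), 1), round(chi2_sf(48.72, 39), 3))  # factual beliefs
```

From the rounded Q values, I² comes out at about 95.2% and 20.0%. The small gap from the reported 19.9% reflects rounding of Q to two decimals. The tail probability for Q(39) = 48.72 is about .137.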

Fig. 3. Forest plot of the effects of scientific-consensus communication on belief in scientific facts (k = 40 experiments). In the Effect column, each vertical mark indicates the effect size, and each horizontal line indicates the 95% confidence interval (CI). The prediction interval is shown for the effect size of the random-effects model. The square, shaded areas represent each study’s weight in the meta-analysis.

Topic

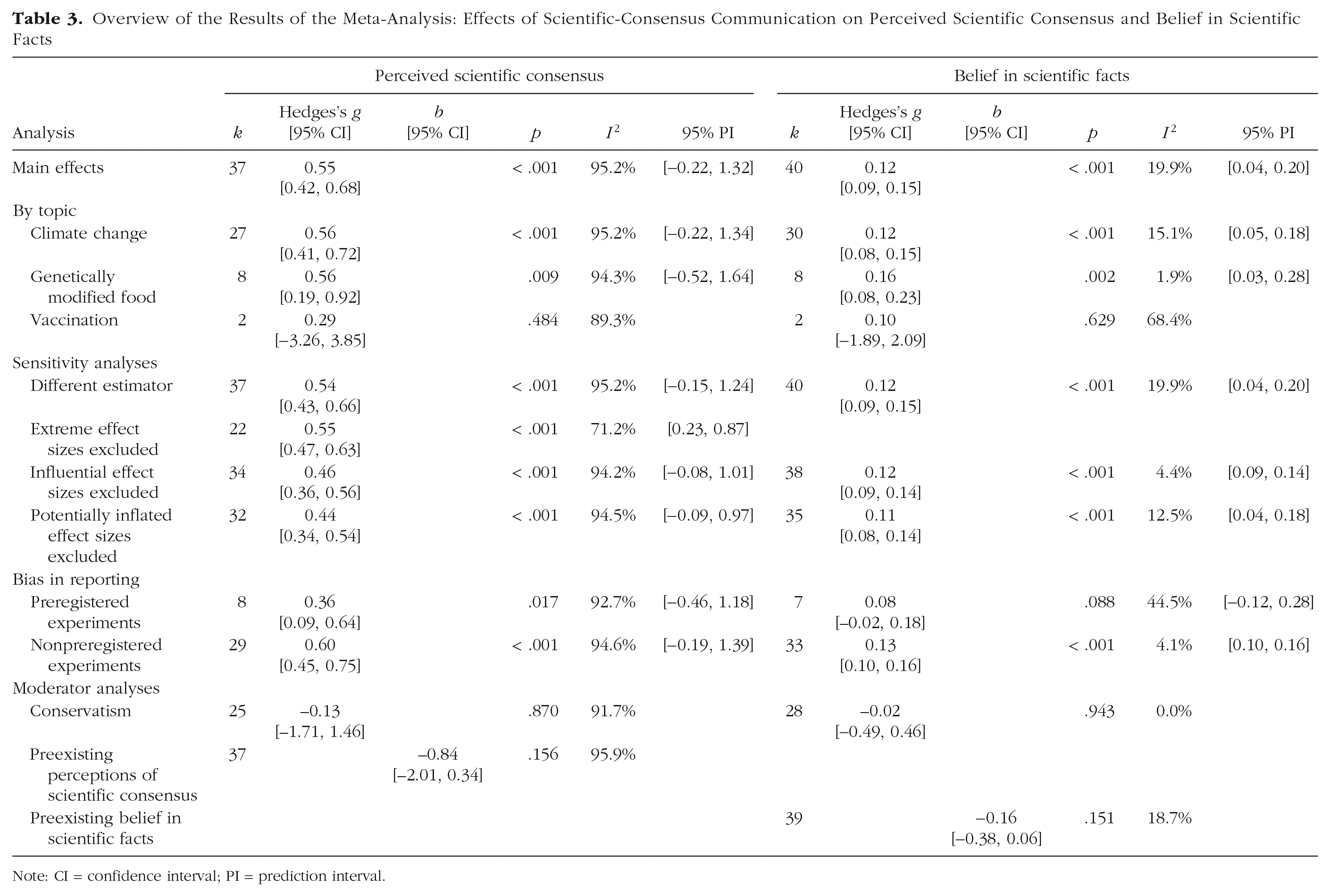

A second preregistered goal of this meta-analysis was to investigate the effect of consensus communication per contested science topic, which is not only of theoretical and practical value, but also functioned as a sensitivity analysis for the overall effects of scientific-consensus communication. A random-effects model for between-subgroup effects demonstrated that there were no significant subgroup differences between the three topics related to perceived scientific consensus, Q(2) = 0.87, p = .649. The effects for climate change (k = 27), g = 0.56, 95% CI = [0.41, 0.72], and genetically modified food (k = 8), g = 0.56, 95% CI = [0.19, 0.92], were almost identical in size. The estimated effect of scientific-consensus communication regarding vaccination was smaller (k = 2), g = 0.29, 95% CI = [−3.26, 3.85], but this effect was based on only two experiments. See Table 3 for an overview of all results.
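The test for subgroup differences compares the pooled subgroup estimates with their inverse-variance weighted mean. The sketch below reconstructs it from the rounded estimates and CIs reported for perceived scientific consensus. It assumes that each subgroup CI is an HKSJ interval on k − 1 degrees of freedom, as in the main analysis, so that each standard error can be backed out from the CI half-width. The t critical values for 26, 7, and 1 degrees of freedom are taken from standard tables. The function name is hypothetical.

```python
import math


def subgroup_q(estimates, ci_bounds, t_crits):
    """Between-subgroup Q from pooled subgroup estimates and their 95% CIs."""
    # Back out each subgroup's standard error from its t-based CI half-width.
    ses = [(hi - lo) / (2 * t) for (lo, hi), t in zip(ci_bounds, t_crits)]
    weights = [1 / se ** 2 for se in ses]
    overall = sum(w * g for w, g in zip(weights, estimates)) / sum(weights)
    q = sum(w * (g - overall) ** 2 for w, g in zip(weights, estimates))
    return q, len(estimates) - 1


# Perceived consensus: climate change (k = 27), GM food (k = 8), vaccination (k = 2).
q, df = subgroup_q([0.56, 0.56, 0.29], [(0.41, 0.72), (0.19, 0.92), (-3.26, 3.85)],
                   [2.056, 2.365, 12.706])
p = math.exp(-q / 2)  # chi-square upper tail has this closed form when df = 2
print(round(q, 2), df, round(p, 3))
```

With these rounded inputs, Q comes out close to the reported Q(2) = 0.87 and p close to .649. An exact match is not expected, because the estimates and CIs are rounded to two decimals.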

Table 3. Overview of the Results of the Meta-Analysis: Effects of Scientific-Consensus Communication on Perceived Scientific Consensus and Belief in Scientific Facts

Note: CI = confidence interval; PI = prediction interval.

Moving to factual beliefs, we again found no significant subgroup differences between topics, Q(2) = 1.36, p = .507, although the effect for climate change (k = 30), g = 0.12, 95% CI = [0.08, 0.15], was numerically smaller than the effect for genetically modified food (k = 8), g = 0.16, 95% CI = [0.08, 0.23]. The effect for vaccination (k = 2) was smaller still, g = 0.10, 95% CI = [−1.89, 2.09], though again this was based on only two experiments.

Sensitivity analyses

Following our preregistration, we conducted two additional sensitivity analyses, aiming to provide insight into the robustness of the effects of scientific-consensus communication. First, because different heterogeneity estimators in meta-analysis can produce different results (Langan et al., 2019; e.g., van der Linden & Goldberg, 2020), we compared the results obtained using the REML estimator and HKSJ CIs with those obtained using a conventional random-effects analysis (using the DerSimonian-Laird estimator without the HKSJ method). This resulted in almost-identical estimated effect sizes both for perceived scientific consensus, g = 0.54, 95% CI = [0.43, 0.66], and factual beliefs, g = 0.12, 95% CI = [0.09, 0.15].
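For comparison with the main analysis, the conventional random-effects fit used in this sensitivity check can be sketched with two components. The first is the DerSimonian-Laird moment estimator of τ². The second is a Wald-type CI based on the normal distribution, with no HKSJ adjustment. This is an illustrative Python sketch with hypothetical names and data, not the meta or metafor code the authors used.

```python
import math
from statistics import NormalDist


def dersimonian_laird(y, v, level=0.95):
    """Conventional random-effects fit: DerSimonian-Laird tau^2 and a Wald (normal) CI."""
    k = len(y)
    w = [1 / vi for vi in v]  # fixed-effect (inverse-variance) weights
    sum_w = sum(w)
    mu_fe = sum(wi * yi for wi, yi in zip(w, y)) / sum_w
    q = sum(wi * (yi - mu_fe) ** 2 for wi, yi in zip(w, y))
    c = sum_w - sum(wi ** 2 for wi in w) / sum_w
    tau2 = max(0.0, (q - (k - 1)) / c)  # method-of-moments estimate, truncated at 0
    w_re = [1 / (vi + tau2) for vi in v]
    mu = sum(wi * yi for wi, yi in zip(w_re, y)) / sum(w_re)
    se = math.sqrt(1 / sum(w_re))  # no HKSJ rescaling
    z = NormalDist().inv_cdf(1 - (1 - level) / 2)
    return mu, tau2, (mu - z * se, mu + z * se)


mu, tau2, ci = dersimonian_laird([0.1, 0.3, 0.5, 0.7], [0.01] * 4)  # hypothetical data
```

When all sampling variances are equal, DerSimonian-Laird and REML give the same τ² (here 17/300 ≈ 0.057). Their τ² estimates can differ only when experiments differ in precision, as they do in this meta-analysis. The CIs differ in any case: the Wald interval uses 1/Σw and a normal critical value, whereas HKSJ uses a rescaled variance and a t critical value.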

Second, we searched for extreme and influential effect sizes. Regarding perceived scientific consensus, a large number of extreme cases (k = 15) were identified as falling completely outside the 95% CI of the pooled effect (i.e., the 95% CI of the individual effect did not overlap with the 95% CI of the pooled effect). Rerunning the main analysis without these 15 extreme cases resulted in an almost identical average estimated effect size, g = 0.55, 95% CI = [0.47, 0.63], although I² was lower (71.2%) and the 95% prediction interval ([0.23, 0.87]) no longer included negative effects. Using a stricter criterion for extreme cases (effect sizes falling outside the 95% prediction interval), we identified only three effect sizes as extreme (Cook & Lewandowsky, 2016, Studies 1 and 2; Kerr & Wilson, 2018, Study 2). We also identified these three experiments as influential cases by investigating the standardized residuals and Cook’s distance, following general advice from Viechtbauer and Cheung (2010). Excluding these effect sizes substantially reduced the average estimated effect size for perceived scientific consensus, g = 0.46, 95% CI = [0.36, 0.56], but heterogeneity remained high (I² = 94.2%, 95% prediction interval = [−0.08, 1.01]). Additionally, these three effect sizes, as well as two others (Kerr & Wilson, 2018, Study 1; Lewandowsky et al., 2013, Study 2), were extracted from experiments in which a posttreatment check directly related to the experimental manipulation was used to exclude participants. This procedure may have affected the results of these experiments (Montgomery et al., 2018), potentially inflating effects. For this reason, we conducted an additional, unplanned sensitivity analysis to investigate the influence of these five cases on the meta-analytic estimate of the effect of scientific-consensus communication on perceived scientific consensus. The results again yielded a slightly smaller estimate, g = 0.44, 95% CI = [0.34, 0.54], but heterogeneity remained high, I² = 94.5%, 95% prediction interval = [−0.09, 0.97].
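The two screening rules for extreme cases described above are simple to state in code. The sketch below flags (a) effects whose own 95% CI does not overlap the pooled 95% CI and (b) effects whose point estimate falls outside the 95% prediction interval. It assumes normal-based 95% CIs for individual experiments (g ± 1.96 × SE) and does not reproduce the influence diagnostics (standardized residuals, Cook’s distance). The function names and effect sizes are hypothetical; the pooled CI and prediction interval are the ones reported above.

```python
import math


def flag_ci_nonoverlap(y, v, pooled_ci, z=1.959964):
    """Indices of effects whose 95% CI lies entirely outside the pooled 95% CI."""
    lo_pooled, hi_pooled = pooled_ci
    flagged = []
    for i, (yi, vi) in enumerate(zip(y, v)):
        half_width = z * math.sqrt(vi)
        if yi + half_width < lo_pooled or yi - half_width > hi_pooled:
            flagged.append(i)
    return flagged


def flag_outside_interval(y, interval):
    """Indices of point estimates outside an interval such as the 95% prediction interval."""
    lo, hi = interval
    return [i for i, yi in enumerate(y) if yi < lo or yi > hi]


# Hypothetical effects and variances, checked against the reported pooled CI and PI.
y, v = [0.55, 1.5, 0.0, 0.7], [0.01, 0.01, 0.001, 0.04]
print(flag_ci_nonoverlap(y, v, (0.42, 0.68)))   # CI-overlap rule
print(flag_outside_interval(y, (-0.22, 1.32)))  # stricter prediction-interval rule
```

The example shows why the prediction-interval rule is stricter. A precisely estimated effect of 0.0 misses the pooled CI but still lies inside the prediction interval, so only the effect of 1.5 is flagged by both rules.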

Moving to factual beliefs, we did not identify any extreme effect sizes using either the 95% CIs or the 95% prediction interval. Two influential effect sizes were identified on the basis of the standardized residuals and Cook’s distance (Dixon et al., 2017, Study 1; Lyons et al., 2021, Study 1), but removing them had almost no effect on the results of the main analysis, g = 0.12, 95% CI = [0.09, 0.14]. Finally, we reran the analysis without the five experiments that used the posttreatment exclusion procedure, because a procedure that inflated effects of a consensus message on perceived scientific consensus could also indirectly inflate its effects on belief in scientific facts. The results yielded a slightly smaller main effect of consensus messaging on belief in scientific facts, g = 0.11, 95% CI = [0.08, 0.14].

Additionally, we conducted a nonpreregistered analysis in which we created graphical display of study heterogeneity (GOSH) plots (Olkin et al., 2012) to explore patterns of heterogeneity in the data and to extend our search for extreme and influential cases. We found no obvious patterns that could explain the high heterogeneity (as indicated by I²) in effects on perceived scientific consensus. In line with the results from the investigation of extreme and influential cases, there was some indication that the average estimated effect size for perceived scientific consensus might be somewhat inflated. The main results related to factual beliefs did not change substantially across different combinations of experiments (see https://osf.io/3tkwy/).
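A GOSH plot is built by fitting a model to many subsets of the effect sizes and plotting each subset’s pooled estimate against its I². The sketch below assumes a fixed-effect (inverse-variance) fit per subset and enumerates every subset of at least two effects. Full enumeration is feasible only for a handful of experiments; with 37 or 40 effect sizes there are far too many subsets, so in practice a large random sample of subsets is drawn instead. The names and data are hypothetical, and this is not the implementation the authors used.

```python
from itertools import combinations


def gosh_points(y, v, min_size=2):
    """Fixed-effect estimate and I^2 (percent) for every subset of at least min_size effects."""
    points = []
    for size in range(min_size, len(y) + 1):
        for subset in combinations(range(len(y)), size):
            w = [1 / v[i] for i in subset]
            ys = [y[i] for i in subset]
            mu = sum(wi * yi for wi, yi in zip(w, ys)) / sum(w)
            q = sum(wi * (yi - mu) ** 2 for wi, yi in zip(w, ys))
            df = size - 1
            i2 = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0
            points.append((mu, i2))
    return points


pts = gosh_points([0.1, 0.3, 0.5], [0.01] * 3)  # hypothetical effects and variances
```

Each point is then drawn as (pooled estimate, I²). Clusters of points, or points that shift sharply whenever a particular experiment is included, suggest subsets of experiments that drive heterogeneity.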

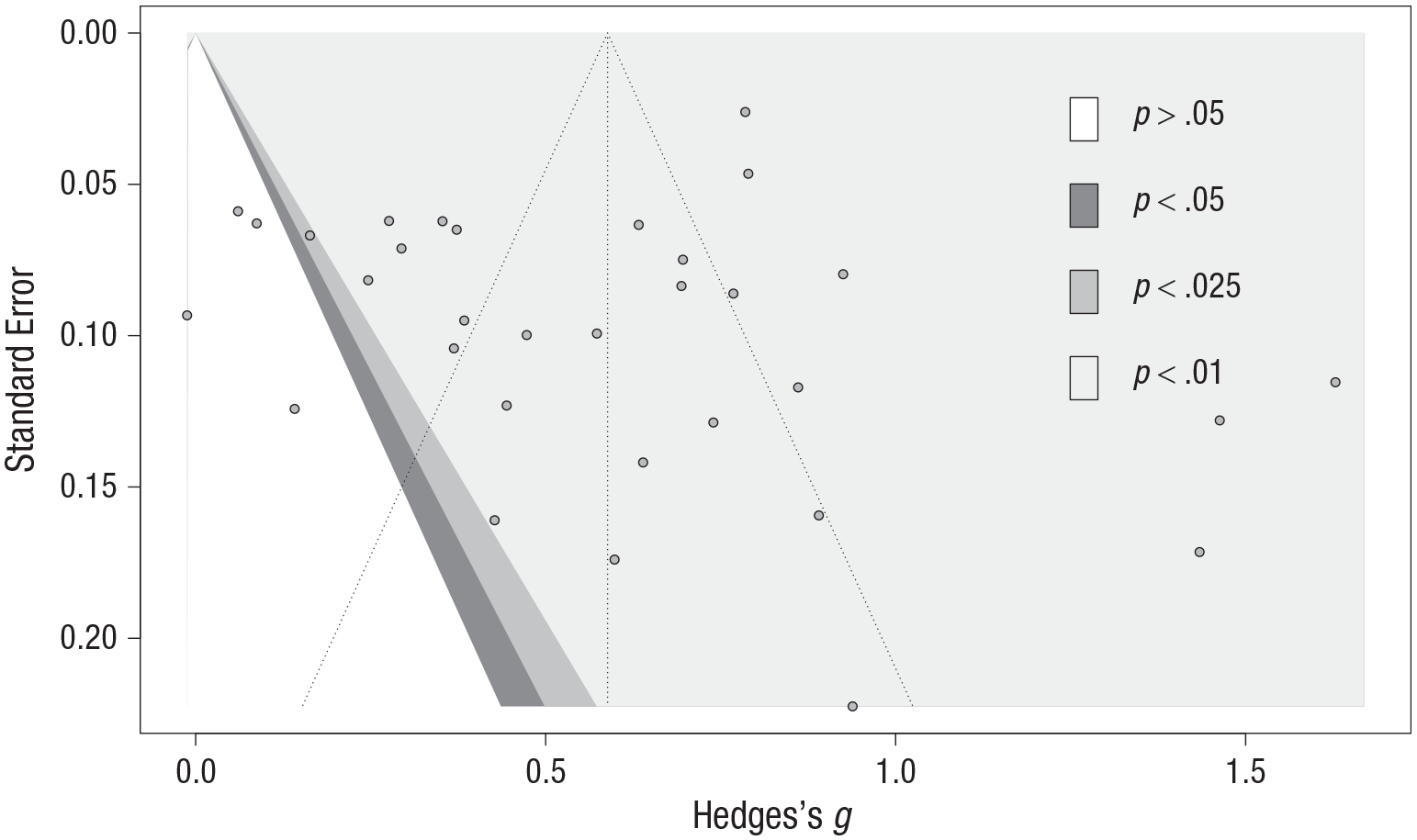

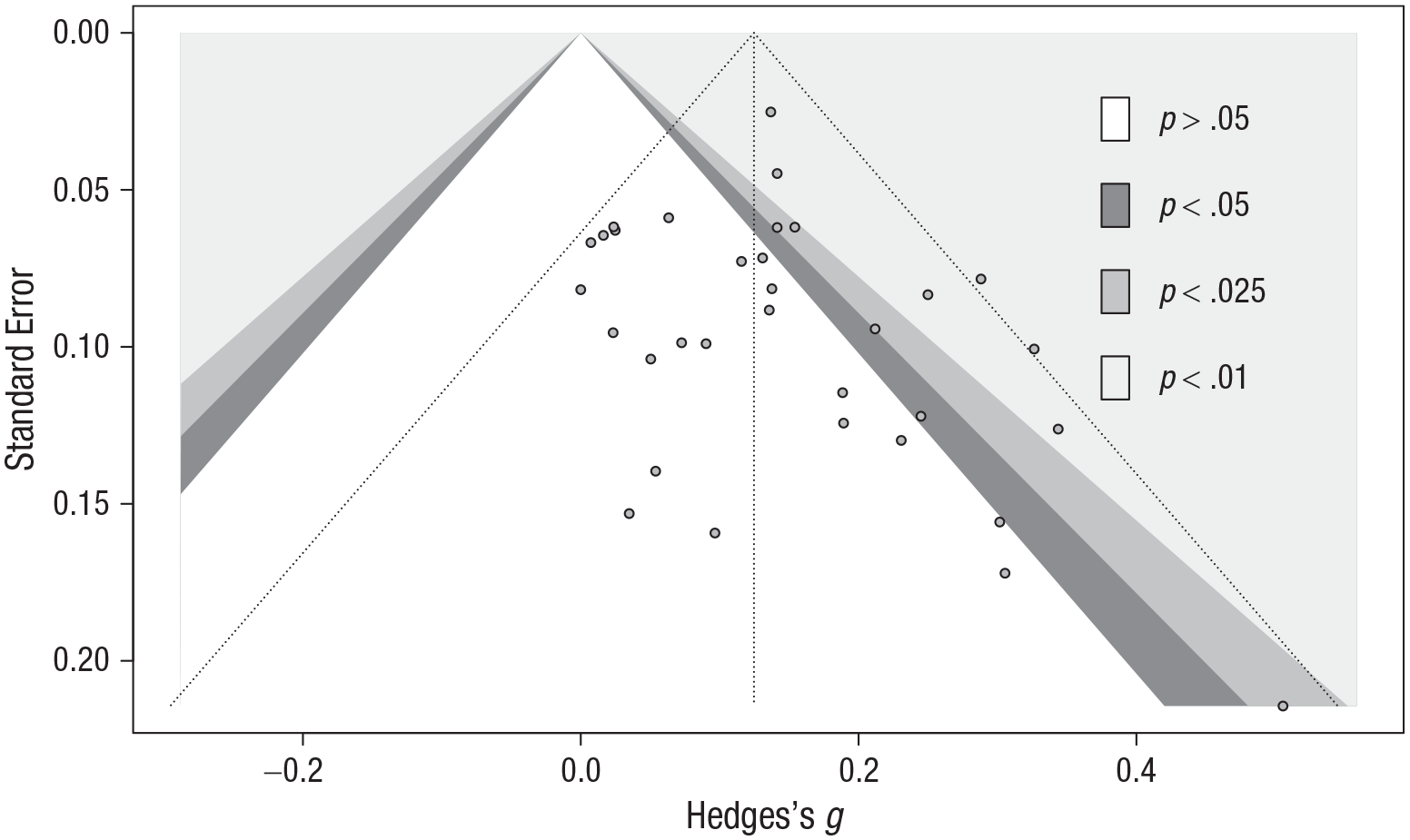

Reporting bias

Following our preregistration, we investigated reporting bias in two ways. First, we tested for small-study bias, an indirect indicator of reporting bias, in the subset of experiments from articles that were published or accepted for publication at the time the present article was written (k = 32 for perceived scientific consensus, k = 33 for factual beliefs). Visual inspection of the contour-enhanced funnel plot of the effects of scientific-consensus communication on perceived scientific consensus revealed no asymmetry (see Fig. 4), and Egger’s test for asymmetry was likewise nonsignificant, intercept = −0.47, 95% CI = [−3.83, 2.89], t = −0.27, p = .786. Visual inspection of the contour-enhanced funnel plot of the effects of scientific-consensus communication on belief in scientific facts revealed some asymmetry (see Fig. 5). Egger’s test, however, was again nonsignificant, intercept = 0.55, 95% CI = [−0.28, 1.37], t = 1.30, p = .202.
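Egger’s test regresses each experiment’s standardized effect (g/SE) on its precision (1/SE). In the absence of small-study effects, the intercept should be near zero. The sketch below is a plain ordinary-least-squares version, assumed here for illustration; the article does not report which variant its software used. It returns the intercept, the slope, and the intercept’s standard error. The intercept’s t statistic (intercept/SE) is then referred to a t distribution with k − 2 degrees of freedom. The function name and data are hypothetical.

```python
import math


def egger_test(y, se):
    """Egger's regression of y/se on 1/se; returns intercept, slope, SE of intercept."""
    z = [yi / si for yi, si in zip(y, se)]  # standardized effects
    p = [1 / si for si in se]               # precisions
    k = len(y)
    p_bar, z_bar = sum(p) / k, sum(z) / k
    sxx = sum((pi - p_bar) ** 2 for pi in p)
    slope = sum((pi - p_bar) * (zi - z_bar) for pi, zi in zip(p, z)) / sxx
    intercept = z_bar - slope * p_bar
    resid = [zi - intercept - slope * pi for pi, zi in zip(p, z)]
    s2 = sum(r ** 2 for r in resid) / (k - 2)  # residual variance
    se_intercept = math.sqrt(s2 * (1 / k + p_bar ** 2 / sxx))
    return intercept, slope, se_intercept


# Hypothetical data with a built-in small-study effect: g = 0.3 + 1.5 * SE.
se = [0.1, 0.2, 0.25, 0.5]
y = [0.3 + 1.5 * s for s in se]
b0, b1, se_b0 = egger_test(y, se)
```

Because the hypothetical effects grow linearly with their standard errors, the regression recovers an intercept of 1.5 (the asymmetry) and a slope of 0.3 (the underlying effect). With symmetric data, the intercept would be close to zero.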

Contour-enhanced funnel plot of the effects of scientific-consensus communication on perceived scientific consensus. The funnel plot shows observed effect sizes as a function of their standard errors. The dotted lines indicate the pooled random-effects estimate. The gray dots represent individual experiments. Shading in the triangular regions indicates significance.

Contour-enhanced funnel plot of the effects of scientific-consensus communication on belief in scientific facts. The funnel plot shows observed effect sizes as a function of their standard errors. The dotted lines indicate the pooled random-effects estimate. The gray dots represent individual experiments. Shading in the triangular regions indicates significance.
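Egger’s test regresses each standardized effect (g divided by its standard error) on its precision (the inverse of the standard error). An intercept far from zero signals funnel-plot asymmetry. The sketch below implements this classic ordinary-least-squares version with hypothetical inputs. Software packages offer variants (for example, weighted or meta-regression forms), so it need not match the values reported above exactly.

```python
import math


def egger_test(g, se):
    """Classic Egger regression: OLS of g/se on 1/se.
    Returns (intercept, SE of intercept, t statistic, degrees of freedom)."""
    y = [gi / si for gi, si in zip(g, se)]    # standardized effects
    x = [1.0 / si for si in se]               # precision
    k = len(y)
    mx, my = sum(x) / k, sum(y) / k
    sxx = sum((xi - mx) ** 2 for xi in x)
    slope = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y)) / sxx
    intercept = my - slope * mx
    resid = [yi - (intercept + slope * xi) for xi, yi in zip(x, y)]
    s2 = sum(r ** 2 for r in resid) / (k - 2)            # residual variance
    se_int = math.sqrt(s2 * (1.0 / k + mx ** 2 / sxx))   # standard error of the intercept
    return intercept, se_int, intercept / se_int, k - 2
```

The t statistic is compared with a t distribution on k − 2 degrees of freedom (for example, via `scipy.stats.t`), which is left out here to keep the sketch dependency-free.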

Second, using the same subset of articles that were published or accepted for publication, we implemented the three-parameter selection model (McShane et al., 2016) to assess the effects of publication bias on our meta-analytic estimates. Selection models aim to capture how publication bias affects which studies end up in a meta-analysis. They assume that the probability that a study is published depends on the size, direction, and p value of its results and on the size of the study (Page, Higgins, & Sterne, 2021). Neither test gave reason to believe that the meta-analysis was biased by a lower selection probability of nonsignificant results (perceived scientific consensus: χ²(1) = 0.92, p = .337; factual beliefs: χ²(1) = 0.01, p = .935).
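In the three-parameter selection model, observed effects follow a normal random-effects distribution (mean μ, between-study variance τ²). However, a result that is not significant in the expected direction (one-sided p ≥ .025) is published with relative probability ω rather than 1. The sketch below writes down only that log-likelihood. The function names are hypothetical, and maximizing the likelihood over (μ, τ², ω) with a numerical optimizer is omitted. Twice the difference between the maximized log-likelihoods with ω free and with ω fixed at 1 gives a χ²(1) likelihood-ratio statistic of the kind reported above.

```python
import math

Z_CRIT = 1.959963984540054  # z cutpoint for a one-sided p = .025


def norm_cdf(z):
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def three_psm_loglik(mu, tau2, omega, g, se):
    """Log-likelihood of the three-parameter selection model (mu, tau2, omega).
    Studies with g/se > Z_CRIT have publication weight 1; all others have weight omega."""
    if omega <= 0 or tau2 < 0:
        raise ValueError("omega must be > 0 and tau2 >= 0")
    ll = 0.0
    for gi, si in zip(g, se):
        sd = math.sqrt(si ** 2 + tau2)                    # marginal SD of an observed effect
        weight = 1.0 if gi / si > Z_CRIT else omega
        p_sig = 1.0 - norm_cdf((Z_CRIT * si - mu) / sd)   # P(significant) given this study's SE
        mean_weight = p_sig + omega * (1.0 - p_sig)       # normalizing constant
        z = (gi - mu) / sd
        log_density = -0.5 * z * z - math.log(sd) - 0.5 * math.log(2.0 * math.pi)
        ll += math.log(weight) + log_density - math.log(mean_weight)
    return ll


def lr_statistic(ll_free, ll_omega_fixed_at_1):
    """Likelihood-ratio statistic, compared with a chi-square on 1 df."""
    return 2.0 * (ll_free - ll_omega_fixed_at_1)
```

With ω = 1 every weight and normalizing constant equals 1, so the function reduces to the ordinary random-effects likelihood. That reduction is what makes the ω = 1 fit the natural null model.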

In addition to these two preregistered investigations of reporting bias, we conducted a nonpreregistered analysis in which we explored whether effect sizes differed between preregistered and nonpreregistered experiments, including both published and not-yet-published experiments. Effect sizes in psychological research have been found to differ substantially between preregistered and nonpreregistered work (Schäfer & Schwarz, 2019), most likely because nonpreregistered experiments tend to overestimate effects. In the current meta-analysis, however, there were no significant subgroup differences between preregistered and nonpreregistered experiments for either perceived scientific consensus, Q(1) = 2.95, p = .086, or factual beliefs, Q(1) = 1.45, p = .229. It should be noted that this subgroup analysis was likely underpowered because of the low number of preregistered experiments and, for perceived scientific consensus, the high heterogeneity. This low power is reflected in the wide CIs around the estimated subgroup effects. Notably, though, for both outcomes, the estimated average effect was smaller for preregistered experiments (scientific consensus: k = 8, g = 0.36, 95% CI = [0.09, 0.64]; factual beliefs: k = 7, g = 0.08, 95% CI = [−0.02, 0.18]) than for nonpreregistered experiments (scientific consensus: k = 29, g = 0.60, 95% CI = [0.45, 0.75]; factual beliefs: k = 33, g = 0.13, 95% CI = [0.10, 0.16]).
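A test for subgroup differences compares the subgroup estimates with their precision-weighted mean. Under the null hypothesis of no difference, the resulting Q statistic follows a χ² distribution with degrees of freedom equal to the number of subgroups minus 1. The sketch below is a minimal, hypothetical implementation of that comparison. The Q values reported above come from the authors’ own model, so feeding in the rounded subgroup CIs will not reproduce them exactly. The helper for the 1-df upper tail does, however, recover the reported p values from the reported Q values.

```python
import math


def q_between(estimates, ses):
    """Q test for subgroup differences: Q = sum_j w_j (est_j - pooled)^2,
    with w_j = 1 / se_j^2. Returns (Q, degrees of freedom)."""
    w = [1.0 / s ** 2 for s in ses]
    pooled = sum(wi * ei for wi, ei in zip(w, estimates)) / sum(w)
    q = sum(wi * (ei - pooled) ** 2 for wi, ei in zip(w, estimates))
    return q, len(estimates) - 1


def chi2_sf_df1(x):
    """Upper-tail probability of a chi-square variable with 1 df:
    P(X > x) = P(|Z| > sqrt(x)) = erfc(sqrt(x / 2))."""
    return math.erfc(math.sqrt(x / 2.0))
```

For instance, `chi2_sf_df1(2.95)` gives about .086 and `chi2_sf_df1(1.45)` about .229, matching the p values above.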

Additional moderators

Analyses of the following moderators were not preregistered. Because standard meta-regression methods can suffer from inflated false-positive rates, we conducted permutation tests to control for spurious findings (Higgins & Thompson, 2004). The general results of these permutation tests did not differ from the results of the standard meta-regression described below (see https://osf.io/3tkwy/ for more detail).
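The permutation approach works as follows. Fit a weighted meta-regression slope, then repeatedly shuffle the moderator across experiments and count how often a shuffled slope is at least as extreme as the observed one. The sketch below is simplified under stated assumptions. τ² is held fixed rather than re-estimated in every permutation, and a z statistic is used instead of the t statistics reported here. The function names and inputs are hypothetical.

```python
import math
import random


def wls_slope_z(y, x, v, tau2):
    """Weighted meta-regression of effects y on one moderator x,
    with weights 1 / (v + tau2). Returns (slope, z statistic)."""
    w = [1.0 / (vi + tau2) for vi in v]
    sw = sum(w)
    xbar = sum(wi * xi for wi, xi in zip(w, x)) / sw
    ybar = sum(wi * yi for wi, yi in zip(w, y)) / sw
    sxx = sum(wi * (xi - xbar) ** 2 for wi, xi in zip(w, x))
    sxy = sum(wi * (xi - xbar) * (yi - ybar) for wi, xi, yi in zip(w, x, y))
    b = sxy / sxx
    return b, b / math.sqrt(1.0 / sxx)   # SE of the slope is sqrt(1 / sxx)


def permutation_p(y, x, v, tau2, n_perm=5000, seed=1):
    """Two-sided permutation p value: shuffle x relative to (y, v)."""
    _, z_obs = wls_slope_z(y, x, v, tau2)
    rng = random.Random(seed)
    x_perm = list(x)
    hits = 0
    for _ in range(n_perm):
        rng.shuffle(x_perm)
        _, z = wls_slope_z(y, x_perm, v, tau2)
        if abs(z) >= abs(z_obs):
            hits += 1
    return (hits + 1) / (n_perm + 1)   # count the observed ordering as one permutation
```

Because the moderator values are shuffled while each effect keeps its own sampling variance, any association between the moderator and the effects is broken. Only the relation under test is destroyed.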

Conservatism

All samples of experiments conducted in the United States that included a measure of political ideology (k = 30) were coded on how conservative (vs. liberal) the samples were. To make the best use of the available information from the original experiments, we calculated a continuous score of sample conservatism from 0 to 1, which indicated the proportion of participants who identified themselves in any way as conservative. Overall, the samples skewed liberal (M = .43, SD = .07). The measure of conservatism was then included in a meta-regression of the original effects of scientific-consensus communication on perceived scientific consensus (k = 25) and factual beliefs (k = 28).

The moderator tests were nonsignificant for both perceived scientific consensus, b = −0.13, t(23) = −0.17, p = .870, and factual beliefs, b = −0.02, t(26) = −0.07, p = .943. Additionally, we explored whether conservatism might interact with topic (in effect forming a three-way interaction among scientific-consensus communication, conservatism, and topic), comparing climate change with genetically modified food. Here, too, the interaction effects were nonsignificant—perceived scientific consensus: b = −1.68, t(20) = −0.53, p = .600; factual beliefs: b = −0.95, t(23) = −0.90, p = .378.

Preexisting perceptions of scientific consensus

All samples were coded on their preexisting perceptions of scientific consensus. Where possible, we used premanipulation measures of perceived scientific consensus for the consensus conditions, which is the most relevant indicator of how much room for improvement there was in the treatment conditions. If no premanipulation measure was available, we used the postmanipulation score of perceived scientific consensus of the control condition as a proxy. These scores were all recoded on a scale from 0 to 1; higher scores indicate a higher estimate of scientific consensus in line with the scientific facts. Overall, preexisting perceptions were quite high (M = .69, SD = .11), which in most experiments indicated that an estimated 69% of scientists agreed about the scientific facts.
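The coding rule above can be sketched as follows. It uses the premanipulation mean of the consensus condition when available, otherwise the control condition’s postmanipulation mean, and then rescales the score to 0–1. The function names, the example scale ranges, and the `reverse` option are illustrative assumptions, not details taken from the coded studies. The `reverse` option covers measures on which higher values mean less consensus.

```python
def rescale_0_1(score, scale_min, scale_max, reverse=False):
    """Map a mean score from [scale_min, scale_max] linearly onto [0, 1];
    reverse=True flips it so that higher always means closer to the scientific facts."""
    x = (score - scale_min) / (scale_max - scale_min)
    return 1.0 - x if reverse else x


def preexisting_consensus(pre_mean_consensus_cond=None, post_mean_control=None,
                          scale_min=0.0, scale_max=100.0, reverse=False):
    """Prefer the premanipulation mean of the consensus condition; fall back on
    the postmanipulation mean of the control condition as a proxy."""
    if pre_mean_consensus_cond is not None:
        score = pre_mean_consensus_cond
    elif post_mean_control is not None:
        score = post_mean_control
    else:
        raise ValueError("no usable measure of preexisting consensus perception")
    return rescale_0_1(score, scale_min, scale_max, reverse)
```

For example, a mean estimate of 69 on a 0–100 percentage scale maps to .69. A control-condition mean of 5 on a 1–7 agreement scale maps to (5 − 1)/6 ≈ .67.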

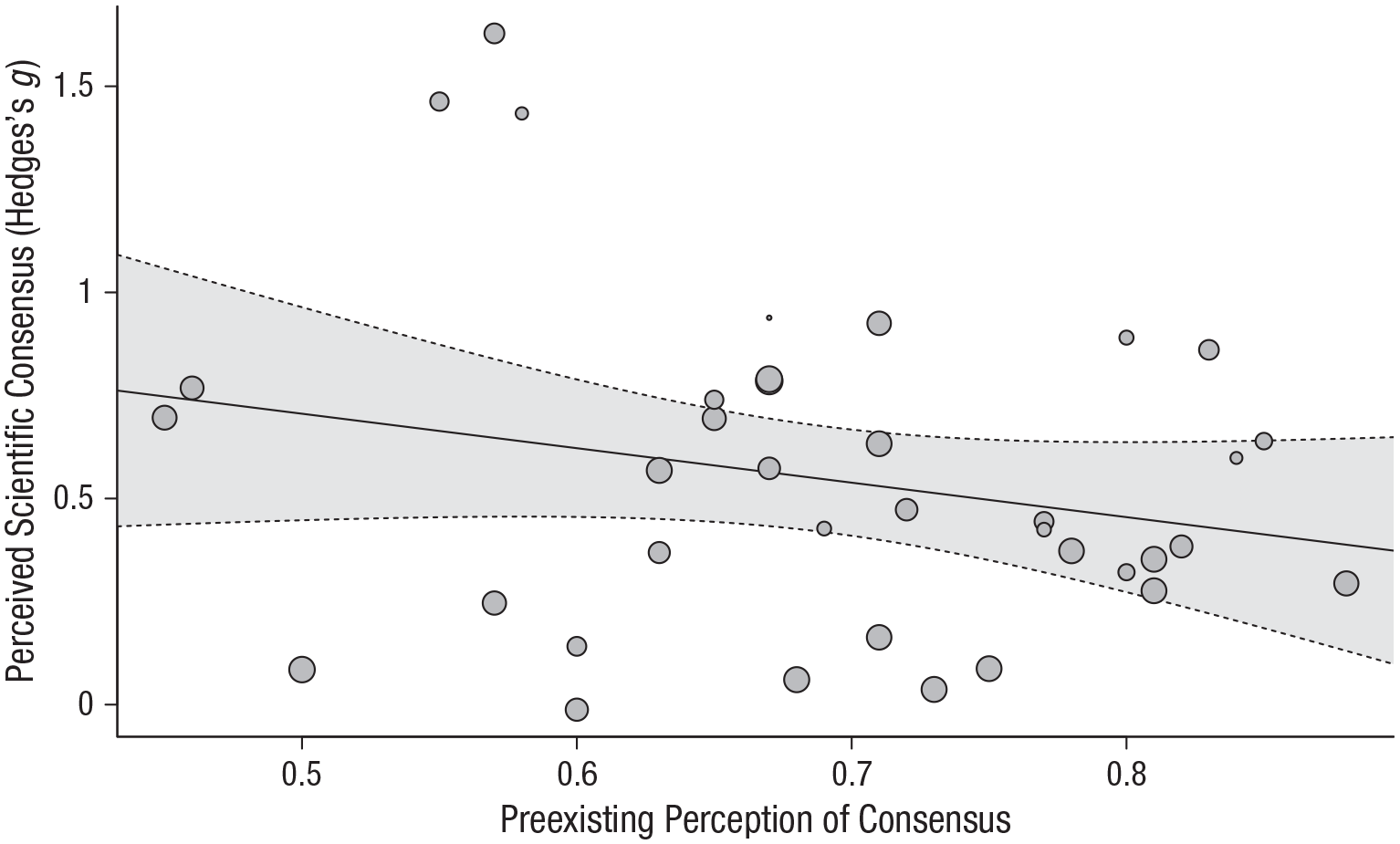

Meta-regression indicated no significant moderating effect of preexisting perceptions of consensus, b = −0.84, t(35) = −1.44, p = .159. The trend indicated that there might be a negative moderating effect of preexisting perceptions of consensus: Samples with low consensus perceptions yielded larger effects of consensus messaging (see Fig. 6). It should be noted, however, that this trend largely disappeared when the five experiments with potentially inflated effects of consensus messaging on perceived scientific consensus (see the Sensitivity Analyses section) were excluded, b = −0.09. Here, too, a potential interaction between preexisting perceptions of consensus and topic was explored, including only the experiments on climate change and genetically modified food. Again, no significant interaction effect was found, b = 1.52, t(31) = 0.80, p = .428.

Meta-regression scatterplot showing the relation between preexisting perception of consensus and the effect of scientific-consensus communication on perceived scientific consensus. Point sizes reflect the weight that experiments received in the analysis; bigger points indicate more weight. The solid line represents the best-fitting regression, and the gray area represents the 95% confidence interval.

Preexisting belief in scientific facts

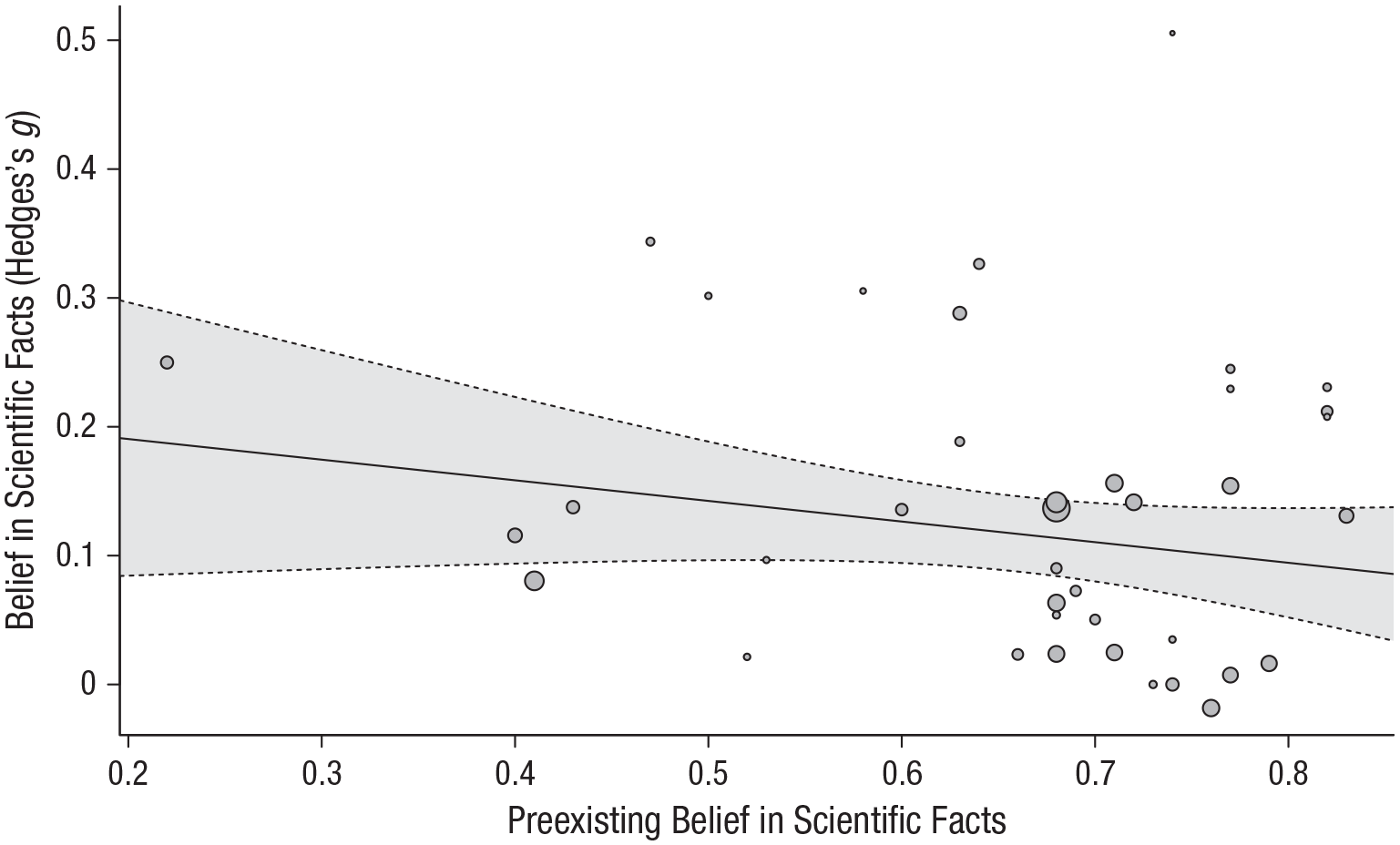

A similar procedure was employed to code samples on their preexisting belief in scientific facts, also on a scale from 0 to 1; higher scores indicate beliefs more in line with the scientific facts. Overall, preexisting beliefs were quite accurate (M = .65). Meta-regression indicated no significant moderating effect of preexisting beliefs, b = −0.17, t(34) = −1.51, p = .141, but provided tentative evidence for a negative association (see Fig. 7). Here, too, a potential interaction between preexisting belief in scientific facts and topic was explored, including only the experiments on climate change and genetically modified food. Again, no significant interaction effect was found, b = −0.13, t(30) = −0.24, p = .810.

Meta-regression scatterplot showing the relation between preexisting belief in scientific facts and the effect of scientific-consensus communication on belief in scientific facts. Point sizes reflect the weight that experiments received in the analysis; bigger points indicate more weight. The solid line represents the best-fitting regression, and the gray area represents the 95% confidence interval.

Discussion

This meta-analysis assessed the effects of communicating the existence of scientific consensus on perceptions of scientific consensus and belief in scientific facts regarding contested science topics. The results of 43 experiments demonstrate that, across topics, a single exposure to scientific-consensus messaging had a significant positive effect on perceived scientific consensus (g = 0.55). This effect was slightly smaller than a recent estimation of the median effect size in (nonpreregistered) social-psychology studies (g ≈ 0.63; Schäfer & Schwarz, 2019) but larger than a recent estimation of the median effect size in communication science (g ≈ 0.37; Rains et al., 2018). The estimated average effect of scientific-consensus communication on factual beliefs was smaller (g = 0.12) than that on perceived scientific consensus but still significant.
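For readers less familiar with the metric, g is a standardized mean difference with a small-sample correction. The sketch below shows the textbook computation for two independent groups (consensus message vs. control) from summary statistics. It illustrates the metric only and is not the authors’ extraction procedure, which may have drawn on other inputs such as test statistics.

```python
import math


def hedges_g(m_treat, sd_treat, n_treat, m_ctrl, sd_ctrl, n_ctrl):
    """Hedges' g for two independent groups, with its sampling variance."""
    df = n_treat + n_ctrl - 2
    sd_pooled = math.sqrt(((n_treat - 1) * sd_treat ** 2 + (n_ctrl - 1) * sd_ctrl ** 2) / df)
    d = (m_treat - m_ctrl) / sd_pooled                 # Cohen's d
    j = 1.0 - 3.0 / (4.0 * df - 1.0)                   # small-sample correction factor J
    var_d = (n_treat + n_ctrl) / (n_treat * n_ctrl) + d ** 2 / (2.0 * (n_treat + n_ctrl))
    return j * d, j ** 2 * var_d
```

With 50 participants per group, a half-SD difference gives g = 0.5 × (1 − 3/391) ≈ 0.496. At these sample sizes the correction is negligible, and it matters more for small experiments.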

These findings demonstrate that scientific-consensus communication is an effective approach to helping people learn the facts about contested science topics. But although the effect of scientific-consensus communication on perceived scientific consensus was large enough to be practically relevant after a single exposure, its effect on factual beliefs was smaller and might be practically relevant only if it can be magnified (e.g., with repeated exposure; Anvari et al., in press; Funder & Ozer, 2019). Moreover, most experiments included in the current meta-analysis were conducted online and consisted of single, short messages presented in a very controlled setting. Future research should test whether these effects persist in less controlled environments (see also van der Linden, 2021), whether they persist among populations that might be less inclined to sign up to participate in scientific research, and whether larger effects can be achieved through repeated exposure or more elaborate messages. Such experiments in the noisier, real-life media landscape would also be of great value for science-communication practice.

There is some concern among science-communication scholars that accurate information, such as scientific-consensus messages, might not be effective in informing the general public or might even backfire, leading some individuals to update their beliefs in the opposite direction (e.g., Hart & Nisbet, 2012; Kahan et al., 2011). On this view, communicating the facts could result in large-scale polarization. In contrast, the findings of this meta-analysis are in line with findings of other recent work (e.g., Nyhan, 2021; Swire-Thompson et al., 2020; van Stekelenburg et al., 2020; Wood & Porter, 2019) showing that communicating accurate corrective information, in this case information regarding agreement among scientists, is a viable strategy to inform the public. Although it is unlikely that the effects of scientific-consensus messaging are exactly the same for all individuals, this meta-analysis indicates that the likelihood of such messages resulting in null effects or backfiring overall is slim.

Concerning potential differing effects between parts of the public, sample conservatism did not significantly moderate the effect of scientific-consensus communication, nor did it interact with topic to do so. The moderating effects of the sample’s preexisting perceived scientific consensus and factual beliefs were also nonsignificant. If anything, plots indicated that scientific-consensus communication might be more effective for skeptics than for people whose personal beliefs are already more or less in line with scientific evidence. Of course, there is also more room for these people to change their beliefs. Thus, although we encourage caution in interpreting the results of potentially underpowered meta-regression analyses (see the limitations described below), we found no evidence suggesting that scientific-consensus messaging could be ineffective or backfire among specific populations. Difficulty in determining when scientific-consensus communication is effective and for whom, combined with the relatively small immediate effect on belief in scientific facts, is likely what has kept the discussion about its effectiveness as a science-communication strategy going.

The main results appear rather robust. First, regarding the three different topics, we found that the effects of scientific-consensus communication were very similar for climate change and genetically modified food. We identified only two experiments that investigated scientific-consensus communication in the context of vaccination (although more research might be upcoming because of the COVID-19 pandemic; e.g., Kerr & van der Linden, 2022), which prevents us from drawing conclusions regarding that topic specifically. Second, regarding extreme and influential cases, the main results related to perceived scientific consensus might be inflated somewhat (removal of extreme, influential, or potentially inflated effect sizes yielded gs of 0.44 and 0.46), whereas the effect related to belief in scientific facts was largely robust in this context. Finally, we found no evidence of publication bias. However, it should be noted that descriptive statistics show that the estimated average effects for preregistered experiments were smaller than for nonpreregistered experiments.

The main results of this meta-analysis are in line with those of recent meta-analytic work focusing on climate-change communication (Rode, Dent, et al., 2021). This research focused on a variety of interventions and outcomes but included 18 articles testing scientific consensus and showed that scientific-consensus communication has a significant, positive effect on climate-change attitudes, g = 0.09, 95% CI = [0.05, 0.13]. Interestingly, this and other meta-analytic work (Hornsey et al., 2016) suggest that changing beliefs is easier than changing support for policy or willingness to act. One potential explanation is that it takes some time for changes in factual beliefs to affect these further-downstream variables that are more closely related to real-life consequences. Future work (including future meta-analyses) might focus on long-term effects, investigating whether and how communicating scientific consensus might influence not only perceptions of consensus and beliefs but also variables such as support for policy or behavior in the long run.

There are three limitations to this meta-analysis that should be taken into account when interpreting the results. The first one pertains to the moderator analyses. Because of the nature of the aggregated data that forms the input of the meta-analysis, there was limited variation in the samples’ levels of conservatism and preexisting perceptions of scientific consensus and factual beliefs (an aggregation bias; Deeks et al., 2021). Additionally, political conservatism was investigated as a moderator only in U.S. samples, to ensure that conservatism measured more or less the same construct across experiments. These factors may have decreased power to detect a true moderating effect, resulting in wide CIs around the meta-regression estimates. The consistently high heterogeneity in effects of consensus messaging on perceived scientific consensus does indicate that substantial variation in effects on this outcome (but not on belief in scientific facts) between experiments is left unexplained.

A second limitation is that the current meta-analysis investigated effects of scientific-consensus messaging only on perceptions of scientific consensus and factual beliefs related to the specific information about which scientific consensus was communicated. When a consensus message stated that “90% of medical scientists agree that vaccines are safe,” we included effect sizes related to personal belief about the safety of vaccines in the meta-analysis but not effects for potentially related beliefs about issues such as the false link between vaccines and autism. Therefore, the average estimated effects should be most applicable to situations in which the scientific-consensus message is highly related to the factual belief one aims to affect. Future meta-analytic work might investigate whether and how scientific-consensus messages influence beliefs that they do not directly address.

A third limitation is related to the indirect effect of scientific-consensus communication on belief in scientific facts through perceived scientific consensus, as hypothesized in the gateway-belief model (van der Linden, Leiserowitz, et al., 2015). Statistical experts have substantial reservations about the way that mediation analysis is often conducted to test for indirect effects in social-science research (e.g., Bullock et al., 2010; Fiedler et al., 2011; Montgomery et al., 2018). Many of these reservations also pertain to the research synthesized here. This precluded us from meta-analytically testing the hypothesized indirect effect. Nonetheless, the results of the meta-analysis can be interpreted as tentative support for the hypothesis that the effect of consensus messaging on personal beliefs is a function of a change in perceptions of consensus: The effect sizes related to perceived scientific consensus and belief in scientific facts in the included research correlated substantially (r = .56, p = .001). This correlation, however, is only a very tentative indication of a potential indirect effect. Future research is needed to specifically address the causal chain hypothesized in the gateway-belief model.
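The correlation reported above pairs two effects for each experiment that measured both outcomes: its effect on perceived consensus and its effect on factual beliefs. The section does not say whether this correlation was weighted. The sketch below therefore assumes a plain Pearson r with its usual t test on n − 2 degrees of freedom, applied to hypothetical inputs. As the text stresses, such a correlation is not a test of mediation.

```python
import math


def pearson_r_with_t(x, y):
    """Unweighted Pearson correlation between paired effect sizes,
    with t = r * sqrt((n - 2) / (1 - r^2)). Returns (r, t, df)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    r = sxy / math.sqrt(sxx * syy)
    t = r * math.sqrt((n - 2) / (1.0 - r ** 2))   # undefined when |r| = 1
    return r, t, n - 2
```

Here `x` would hold the experiments’ effects on perceived consensus and `y` their effects on factual beliefs, in the same order.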

To conclude, communicating a high degree of scientific consensus regarding contested science topics can be a useful tool in science communicators’ toolkit. Such messaging increases perceptions of scientific consensus as well as the accuracy of beliefs related to these science topics. Notably, an important benefit of consensus messaging is that it comes at a low cost and consists simply of informing people. It does not mislead or covertly change behavior; it empowers the public by providing valuable information that can support informed decision making. It is up to the recipient of the message to decide whether this information is of value to their personal beliefs. The current work suggests that many people put at least some value in scientific consensus and are willing to update their beliefs if they are not in line with such consensus.


Acknowledgements

We thank all the authors of works included in the meta-analysis for their cooperation. We especially thank Christopher Clarke for his help in finding relevant works and acquiring information from included works.

Transparency

Action Editor: Mark Brandt

Editor: Patricia J. Bauer

Author Contributions

A. van Stekelenburg, G. Schaap, and J. van ’t Riet reviewed and coded the experiments included in the meta-analysis. A. van Stekelenburg analyzed the data and drafted the manuscript. All authors wrote the manuscript and approved the final version for submission.