Abstract

People feel tired or depleted after exerting mental effort. But even preregistered studies often fail to find effects of exerting effort on behavioral performance in the laboratory or elucidate the underlying psychology. We tested a new paradigm in four preregistered within-subjects studies (

Keywords

What are the consequences of exerting effort? Many people intuitively believe in ego depletion (Francis & Job, 2018), the idea that exerting effortful control depletes one’s energy (Baumeister & Vohs, 2016). However, high-powered preregistered studies (Garrison, Finley, & Schmeichel, 2019; Hagger et al., 2016), meta-analyses (Carter, Kofler, Forster, & McCullough, 2015), and theoretical reviews (Friese, Loschelder, Gieseler, Frankenbach, & Inzlicht, 2019; Inzlicht & Friese, 2019) suggest that laboratory depletion effects are small or potentially nonexistent and that previous work suffers from limitations such as ineffective experimental manipulations and low statistical power. Here, in four preregistered studies, we developed a paradigm that addresses previous methodological limitations and provides insights into the effects of effort exertion.

Ego-Depletion Controversy

In the first tests of ego depletion (i.e., Baumeister, Bratslavsky, Muraven, & Tice, 1998), one group of participants initially completed a difficult self-control task (depletion group; e.g., forced to eat radishes instead of chocolates), and another group completed an easier task (control group; e.g., allowed to eat chocolates). Both groups then completed a second, unrelated self-control task (e.g., worked on unsolvable puzzles), which served as the dependent variable (e.g., persistence duration on puzzles). The depletion group showed reduced self-control on the second task compared with the control group, providing evidence for ego depletion—the idea that self-control runs out after use (Friese et al., 2019).

Subsequent studies found that depletion influenced diverse outcomes even when the depletion or outcome tasks did not entail self-control or inhibitory control (e.g., Moller, Deci, & Ryan, 2006; Schmeichel, 2007), suggesting that exerting effort and experiencing fatigue (rather than recruiting inhibitory control) led to depletion effects. Critically, the first meta-analysis of 198 published tests suggested that the effect (

Later evidence, however, suggested otherwise. Researchers began reporting replication failures or much smaller effect sizes (e.g., Tuk, Zhang, & Sweldens, 2015). Meta-analyses that corrected for publication bias (i.e., the tendency for significant results to be published more frequently than nonsignificant ones) suggest that the depletion effect might not be real (Carter et al., 2015; Friese & Frankenbach, 2019), although subsequent work has questioned the validity of existing bias-correction techniques (Carter, Schönbrodt, Gervais, & Hilgard, 2019). These critiques were bolstered by further null results from large-scale preregistered replications and from reanalyses of large data sets not originally gathered to investigate ego depletion (Etherton et al., 2018; Hagger et al., 2016).

Starting Anew: A Novel Approach

Although the field appears to have hit a dead end, it might be too soon to jettison ego depletion, because researchers have relied mainly on one paradigm (i.e., between-subjects laboratory sequential tasks) and have yet to fully examine other approaches. For example, studies using archival data sets, field data, or experience sampling suggest that depletion or carryover fatigue effects may be apparent in people’s everyday lives (e.g., Dai, Milkman, Hofmann, & Staats, 2015; Hirshleifer, Levi, Lourie, & Teoh, 2019). Although ecologically valid, these studies often cannot control for real-world confounds. Our goal was to create a laboratory paradigm that could provide converging evidence and facilitate future research. We also tested the idea that laboratory depletion effects are akin to real-life fatigue effects in that people shift their priorities when tired, resulting in disengagement from ongoing tasks (Inzlicht, Schmeichel, & Macrae, 2014).

Strong manipulation and within-subjects design

Our paradigm departed from previous work in two ways. First, instead of using standard depletion paradigms, which often rely on demanding tasks thought to tap inhibitory control, we designed a manipulation intended to robustly elicit the states (e.g., effort, fatigue) typically associated with depletion (Friese et al., 2019). We used the symbol-counting task, which draws on the shifting and updating aspects of executive function (Garavan, Ross, Li, & Stein, 2000). Crucially, we modified the task so that its difficulty adapted trial by trial to each participant’s performance, which ensured that the task was highly demanding for every participant. Second, we used a completely within-subjects design to reduce error variance and increase statistical power (Francis, Milyavskaya, Lin, & Inzlicht, 2018). To minimize demand characteristics and learning effects, participants completed the low-demand and high-demand tasks on two separate days roughly 1 week apart.

Drift-diffusion modeling

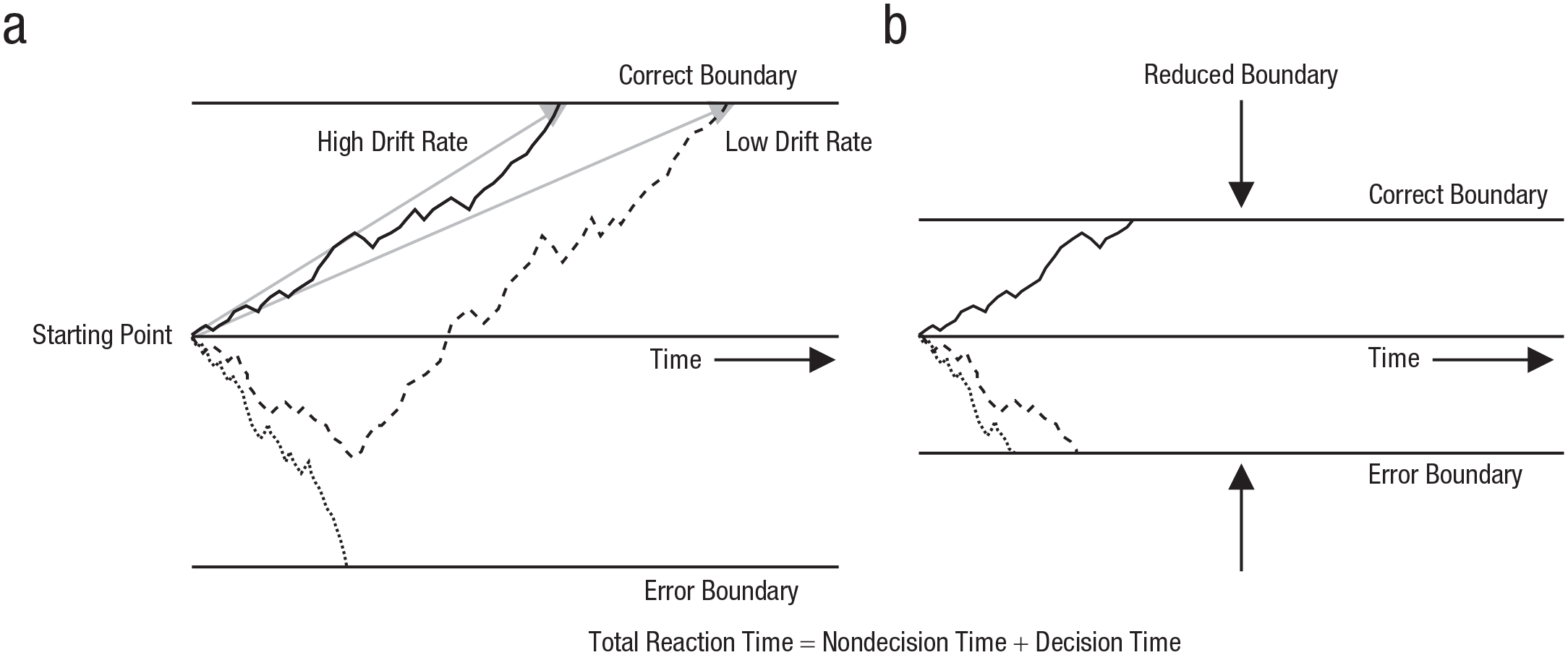

After the demand manipulation, participants completed the Stroop task, which is often used to assess inhibition abilities (Miyake & Friedman, 2012). In addition to performing traditional behavioral analyses on reaction time and accuracy, we transformed these observed measures into latent variables assumed to underlie performance; these latent variables were our primary interest. We fitted drift-diffusion models, which assume that people make speeded decisions by gradually accumulating information until an evidence boundary is reached (Fig. 1; Ratcliff & McKoon, 2008). This model not only accounts for the speed/accuracy trade-off in reaction time tasks (Ratcliff & McKoon, 2008) but also allowed us to examine whether fatigue affects information-processing speed (drift-rate parameter) and response caution or impulsivity (boundary parameter; see Fig. 1 for an explanation). Specifically, we fitted the EZ-diffusion model (Wagenmakers, Van der Maas, & Grasman, 2007), which, despite being simpler, often outperforms the full diffusion model and better detects experimental effects (Dutilh et al., 2019; van Ravenzwaaij, Donkin, & Vandekerckhove, 2017). Given the success of this modeling approach in explaining individual differences and how experimental manipulations influence psychological processes (Evans & Wagenmakers, 2019), these latent variables can provide insights into the psychology underlying depletion.

Schematic illustrating the drift-diffusion model, which decomposes the joint distributions of reaction time and accuracy into latent variables including drift rate, decision boundary, nondecision time, and starting point (Ratcliff & McKoon, 2008). Panel (a) shows three simulated decisions with different diffusion processes or paths. Each path depicts how one decision process evolved over time. The solid black and dashed lines depict decision processes reaching the correct boundary (i.e., correct responses made) at different rates; higher drift rates terminate sooner at the boundary (i.e., yielding faster reaction times). The light-gray arrows show the direct paths to the correct boundary for these two processes. The dotted line depicts a process that terminated relatively quickly at the error boundary (i.e., resulted in a fast error response). Panel (b) shows what would happen if the boundaries were reduced for the same three decisions. Decision processes would terminate at the boundaries sooner even though drift rates would remain unchanged; less evidence would be accumulated, resulting in more error responses and faster reaction times. The decision process depicted by the dashed line terminated prematurely at the error boundary. Boundary widths reflect either individual differences in response caution or experimental manipulations (e.g., emphasizing speedy responses reduces boundaries, whereas emphasizing accuracy increases them).
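To make the caption's point concrete, the following Python sketch simulates the process in Fig. 1 with a simple Euler discretization. It is illustrative only and is not the model-fitting code used in these studies: the drift rate (0.25), the two boundaries (0.12 vs. 0.08), the noise scale (s = 0.1, the EZ-diffusion convention), and the time step are assumed values. Holding drift constant while narrowing the boundary, as in panel (b), should lower accuracy and shorten decision times.

```python
import math
import random


def simulate_ddm(drift, boundary, n_trials=2000, s=0.1, dt=0.001,
                 max_time=5.0, seed=1):
    """Simulate an unbiased diffusion process between boundaries 0 and `boundary`.

    Evidence starts midway (no starting-point bias), and reaching the upper
    boundary counts as a correct response. Returns the proportion correct
    and the mean decision time in seconds (nondecision time is ignored).
    """
    rng = random.Random(seed)
    noise_sd = s * math.sqrt(dt)  # SD of the Gaussian noise added per step
    n_correct = 0
    total_time = 0.0
    for _ in range(n_trials):
        x, t = boundary / 2.0, 0.0
        # Accumulate noisy evidence until either boundary is crossed
        while 0.0 < x < boundary and t < max_time:
            x += drift * dt + rng.gauss(0.0, noise_sd)
            t += dt
        if x >= boundary:
            n_correct += 1
        total_time += t
    return n_correct / n_trials, total_time / n_trials


# Same drift rate, wide vs. narrow boundary (panels a and b of Fig. 1)
wide_acc, wide_dt = simulate_ddm(drift=0.25, boundary=0.12)
narrow_acc, narrow_dt = simulate_ddm(drift=0.25, boundary=0.08)
print(f"wide:   accuracy={wide_acc:.3f}, mean decision time={wide_dt:.3f} s")
print(f"narrow: accuracy={narrow_acc:.3f}, mean decision time={narrow_dt:.3f} s")
```

For these values, the analytic accuracy of an unbiased process, 1/(1 + exp(−a·v/s²)), is about 0.95 for the wide boundary and about 0.88 for the narrow one, so the simulated proportions should land close to those figures.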

Hypotheses

Studies 1 and 2 were conducted in the laboratory. Studies 3 and 4 were conducted online. We varied the duration of the high-demand task across studies. In Study 1, we preregistered only traditional analyses on the Stroop task but ran exploratory diffusion-model analyses. Studies 2, 3, and 4 were preregistered confirmatory experiments that tested two primary hypotheses: The high-demand manipulation would reduce the boundary and drift-rate parameters more than the low-demand manipulation would. These predictions reflect our expectation that exerting effort should reduce overall engagement in the subsequent task rather than specifically impair inhibitory control.

Method

Participants

Study 1 was designed to primarily evaluate the effectiveness of our within-subjects demand manipulation and its effects on traditional Stroop behavioral measures (i.e., accuracy and reaction time). Two hundred fifty-three undergraduates participated (178 women, 71 men, 4 other; mean age = 18.80 years,

Within-subjects design

To reduce error variance and increase statistical power, we used within-subjects designs in all four studies. To minimize demand characteristics and learning effects, we had each participant complete the low-demand and high-demand tasks on two separate days. In Studies 1 and 2, undergraduate participants completed the two tasks in two different weeks. Both sessions occurred on the same day of each week at the same time of the day. Each participant was pseudorandomly assigned to complete either the low-demand or high-demand task on the first day on the basis of his or her allocated participant number. Participants in Studies 1 and 2 received course credits for completing the study. In Studies 3 and 4, MTurk workers also completed the two tasks in two different weeks. However, because participants recruited via this online platform usually complete tasks at their convenience, they completed the second task 7 to 12 days after they completed the first task. They also did not have to complete the two tasks at the same time of the day. Each was randomly assigned to complete either the low-demand or high-demand task on the first day. Participants in Studies 3 and 4 received $2.90 and $3.60, respectively, for completing the study.

Procedure and sequential-task paradigm

Task 1: experimental manipulation

The low-demand task required participants to watch a 5-min wildlife video. The high-demand task required participants to complete a titrated symbol-counting task (study materials and code are available at https://osf.io/45gyk/; see Garavan et al., 2000); the high-demand task lasted approximately 20, 15, 5, and 10 min in Studies 1, 2, 3, and 4, respectively. We did not match the durations of the low-demand and high-demand tasks in Studies 1, 2, and 4 because we wanted to avoid inducing boredom with long but easy control tasks, which might lead to levels of subjective fatigue comparable with those from exerting cognitive effort on a demanding task (Milyavskaya, Inzlicht, Johnson, & Larson, 2019) and potentially undermine the demand manipulation. Further, previous work using unbalanced designs such as ours has reported stronger effects (Sjåstad & Baumeister, 2018).

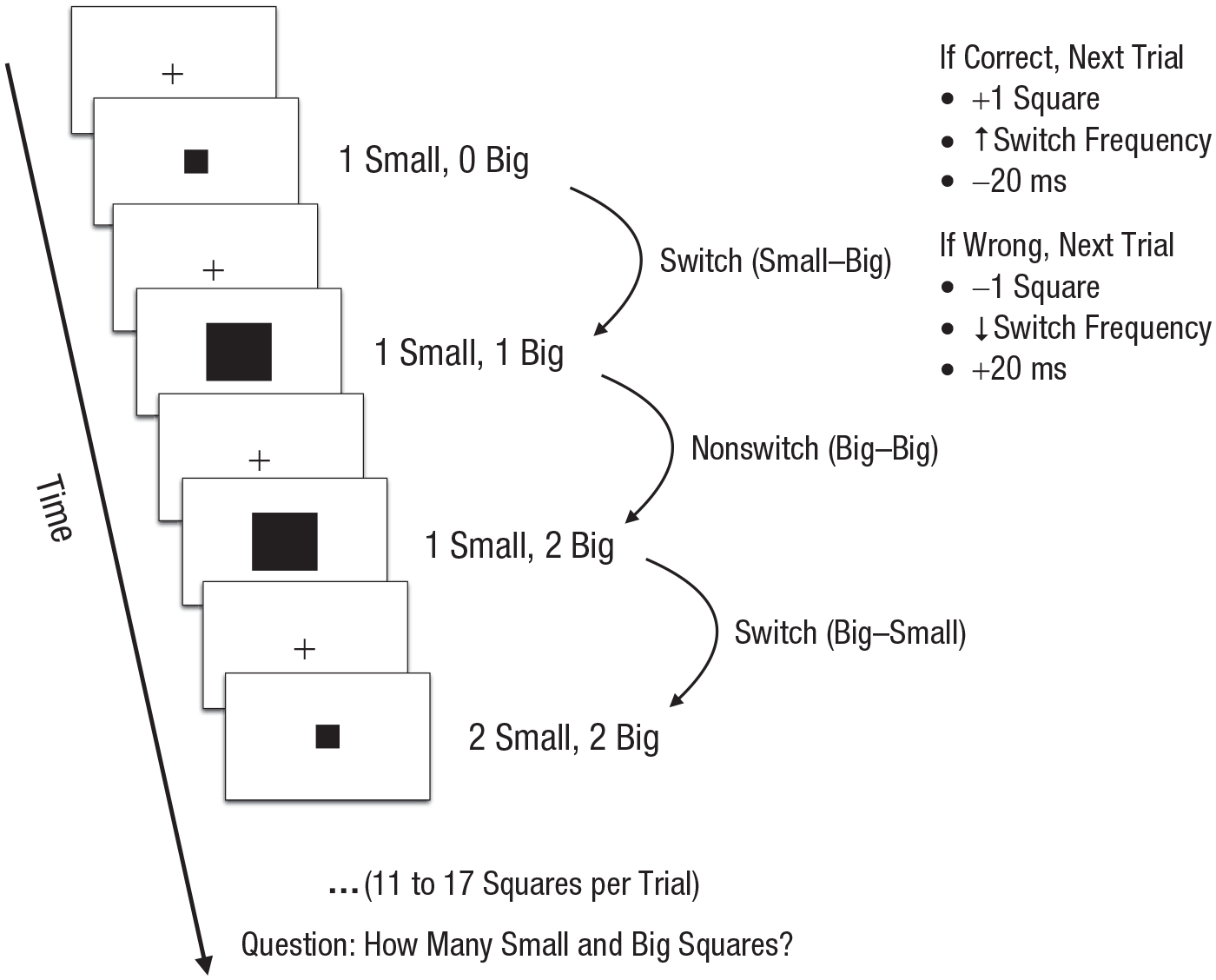

The symbol-counting task parametrically manipulates executive demands (Garavan et al., 2000). On each trial, participants had to count the small and the big black squares that were presented. Thus, the task heavily taxes the shifting and updating (but not inhibition) aspects of executive function (Miyake & Friedman, 2012). To further increase its difficulty, we calibrated the task for each individual so that difficulty was adjusted trial by trial according to the participant’s performance on the previous trial. On each trial, multiple small and big squares were presented sequentially (between 11 and 17 squares per trial), and each square was preceded by a fixation cross (Fig. 2). The first trial began with 12 squares and a switch frequency of 5 (i.e., square size switched 5 times within the trial, from small to big or from big to small). At the end of each trial, participants indicated how many small and how many big squares had been presented; that is, participants had to keep a running tally of two lists. If participants responded correctly, the total number of squares on the next trial increased by one, the switch frequency could also increase, and the square display duration decreased by 20 ms (see Table S1 in the Supplemental Material available online for details on how the switch frequency was determined on each trial and other task details). If participants responded incorrectly, the number of squares on the next trial decreased by one, the square display duration increased by 20 ms, and the switch frequency decreased. These calibration procedures helped ensure that, even without drawing on inhibition processes, the task was demanding and tiring for all participants regardless of individual differences in executive-function abilities.

Example trial from the titrated symbol-counter task used in the high-demand manipulation (adapted from the study by Garavan, Ross, Li, & Stein, 2000). This calibrated task heavily taxes the shifting and updating aspects of executive function. On each trial, multiple small and big squares were presented sequentially, and participants reported the number of small and big squares presented at the end of the trial. If participants responded correctly, the total number of squares in the next trial increased, the switch frequency increased, and the square display duration decreased. If participants responded incorrectly, the total number of squares on the next trial decreased, the switch frequency decreased, and the square display duration increased.
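The trial-by-trial titration described above amounts to a one-up/one-down staircase. The Python sketch below is a hypothetical reconstruction: the starting values (12 squares, switch frequency 5), the 11-to-17-square range, and the 20-ms duration step come from the text, but the starting display duration, the duration floor, and the ±1 switch-frequency rule are placeholders, because the actual switch rule is given only in Table S1 of the Supplemental Material (the text says the switch frequency "could" increase after a correct response; the sketch simplifies this to always increasing).

```python
from dataclasses import dataclass

# Values stated in the text
MIN_SQUARES, MAX_SQUARES = 11, 17   # squares per trial
DURATION_STEP_MS = 20               # display-duration change per trial
# Hypothetical placeholders (not reported in the text)
START_DURATION_MS = 400
MIN_DURATION_MS = 100


@dataclass(frozen=True)
class TrialSettings:
    n_squares: int = 12                    # first trial: 12 squares
    switch_freq: int = 5                   # first trial: 5 small/big switches
    duration_ms: int = START_DURATION_MS   # hypothetical starting duration


def next_trial(current: TrialSettings, correct: bool) -> TrialSettings:
    """One-up/one-down update: harder after a correct answer, easier after an error."""
    step = 1 if correct else -1
    n_squares = min(MAX_SQUARES, max(MIN_SQUARES, current.n_squares + step))
    # Hypothetical switch rule: change by 1, never exceeding the n_squares - 1
    # transitions available within a trial (the real rule is in Table S1).
    switch_freq = min(n_squares - 1, max(1, current.switch_freq + step))
    # Correct -> shorter display; error -> longer display
    duration_ms = max(MIN_DURATION_MS, current.duration_ms - step * DURATION_STEP_MS)
    return TrialSettings(n_squares, switch_freq, duration_ms)


# Example: three correct responses followed by one error
settings = TrialSettings()
for was_correct in (True, True, True, False):
    settings = next_trial(settings, was_correct)
print(settings)  # TrialSettings(n_squares=14, switch_freq=7, duration_ms=360)
```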

Measures of phenomenology

After completing the low-demand and high-demand tasks, participants answered five questions (presented in random order) about the task and their current mental state using a sliding Likert scale: (a) mental demand: “How mentally demanding was the [task/video task]?” (from

Task 2: outcome measure

After completing the experimental manipulation and manipulation checks, participants completed a Stroop task with 120 congruent and 60 incongruent trials. On each trial, a word (“red,” “blue,” or “yellow”) was presented in either the color red, blue, or yellow, and participants had to indicate the font color of the word by pressing a key (V = red, B = blue, N = yellow). The same mapping was used for all participants and was displayed at the bottom of the screen throughout the task. On congruent trials, the word and color matched (e.g., the word “red” shown in red font); on incongruent trials, the word and color did not match (e.g., the word “red” shown in blue font). Congruent and incongruent trials were interleaved randomly, and the stimulus on each trial remained on screen until the participant responded or until 2,000 ms had elapsed. If participants failed to respond on three consecutive trials, they were reminded to respond faster and more accurately. Participants practiced 12 trials before completing 180 experimental trials.
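The trial structure described above can be generated as in the following Python sketch. The counts (120 congruent and 60 incongruent trials), colors, key mapping, and 2,000-ms response window come from the text; balancing ink colors across trials and drawing the conflicting word at random on incongruent trials are assumptions, and the trial dictionaries are a hypothetical format rather than the study's own materials (which are available at https://osf.io/45gyk/).

```python
import random

COLORS = ("red", "blue", "yellow")
KEY_FOR_COLOR = {"red": "v", "blue": "b", "yellow": "n"}  # V = red, B = blue, N = yellow
TIMEOUT_MS = 2000


def make_stroop_trials(n_congruent=120, n_incongruent=60, seed=None):
    """Build a randomly interleaved list of Stroop trials."""
    rng = random.Random(seed)
    trials = []
    for i in range(n_congruent):
        color = COLORS[i % len(COLORS)]  # assumption: ink colors balanced
        trials.append({"word": color, "ink": color, "congruent": True})
    for i in range(n_incongruent):
        ink = COLORS[i % len(COLORS)]
        # Assumption: the conflicting word is drawn at random from the other colors
        word = rng.choice([c for c in COLORS if c != ink])
        trials.append({"word": word, "ink": ink, "congruent": False})
    rng.shuffle(trials)  # congruent and incongruent trials interleaved randomly
    for trial in trials:
        trial["correct_key"] = KEY_FOR_COLOR[trial["ink"]]
    return trials


def score_response(trial, key, rt_ms):
    """Return True/False for a response, or None if no key was pressed in time."""
    if key is None or rt_ms >= TIMEOUT_MS:
        return None
    return key.lower() == trial["correct_key"]


trials = make_stroop_trials(seed=42)
print(len(trials), sum(t["congruent"] for t in trials))  # 180 120
```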

Exclusion criteria

We preregistered the same four exclusion criteria¹ for all four studies to exclude low-quality data (see the Exclusion Criteria section at https://osf.io/6sncm/). First, we excluded participants whose overall accuracy on the high-demand task (titrated symbol-counter task) was less than 20% (3, 9, 25, and 28 participants were excluded in Studies 1, 2, 3, and 4, respectively). Second, for the dependent variable (Stroop task), we excluded trials with reaction times faster than 250 ms (0.52%, 0.26%, 1.66%, and 0.93% of trials were excluded in Studies 1, 2, 3, and 4, respectively). Third, we used a robust outlier-detection approach (median absolute deviation) rather than the commonly used but problematic ±3-
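The reaction time filter and the median-absolute-deviation (MAD) approach mentioned above can be sketched in Python as follows. The 250-ms cutoff is from the text; because the passage is truncated before it specifies the MAD cutoff and the variable it was applied to, the `threshold=3.0` default is a hypothetical choice. The constant 1.4826 rescales the MAD so that it estimates the standard deviation under normality.

```python
import statistics

MIN_RT_MS = 250  # Stroop trials faster than this were excluded


def drop_fast_trials(rts_ms):
    """Keep only trials with reaction times of at least 250 ms."""
    return [rt for rt in rts_ms if rt >= MIN_RT_MS]


def mad_outliers(values, threshold=3.0):
    """Flag values more than `threshold` scaled MADs from the median.

    threshold=3.0 is a hypothetical default; the text does not state the cutoff.
    """
    med = statistics.median(values)
    mad = 1.4826 * statistics.median([abs(v - med) for v in values])
    if mad == 0:
        return [False] * len(values)  # no spread: nothing can be flagged
    return [abs(v - med) / mad > threshold for v in values]


print(drop_fast_trials([180, 250, 612, 1403]))         # [250, 612, 1403]
print(mad_outliers([10, 11, 12, 11, 10, 12, 11, 50]))  # only the last value is flagged
```

Unlike a mean ± 3 SD rule, the median and MAD are barely moved by the outlier itself, so a single extreme value cannot mask its own detection.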

Diffusion-model fitting

We fitted the EZ-diffusion model (Wagenmakers et al., 2007) to each participant’s Stroop data, transforming the observed reaction time and accuracy variables into the latent variables of drift rate, boundary, and nondecision time (for code, see Lin, 2019). This model does not estimate a starting-point bias because it assumes that the starting point is equidistant from the two boundaries. Furthermore, the boundary parameter is generally assumed to be set before stimulus onset and therefore should not vary as a function of Stroop stimulus congruency. However, the EZ-diffusion model did not allow us to constrain the boundary to be the same for all stimuli. We therefore obtained separate boundary-parameter estimates for congruent and incongruent Stroop stimuli, assuming that participants rapidly adjusted their boundaries immediately after stimulus onset.

Despite these assumptions and the fact that the EZ-diffusion model is simpler than the full model, several studies have shown that the former often outperforms the latter (Dutilh et al., 2019; van Ravenzwaaij et al., 2017). To verify the EZ-diffusion model results, we ran exploratory but preregistered analyses (osf.io/7qcxa) to fit more appropriate models (i.e., diffusion model for conflict tasks; Evans & Servant, 2019), which led to conclusions similar to those obtained via EZ-diffusion modeling (see Figs. S2 and S3 in the Supplemental Material).
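Because the EZ-diffusion model has closed-form solutions (Wagenmakers et al., 2007), its parameter recovery takes only a few lines. The Python sketch below is not the code referenced above (Lin, 2019); it implements the published EZ equations under the usual scaling convention s = 0.1, taking the proportion correct and the mean and variance of correct-response reaction times in seconds. The forward function computes the summary statistics the model predicts for given parameters, which provides a simple round-trip check. The half-trial correction for perfect accuracy is one common choice and not necessarily the one used in these studies.

```python
import math


def ez_diffusion(prop_correct, rt_var, rt_mean, s=0.1, n_trials=None):
    """Recover (drift rate v, boundary a, nondecision time Ter) via EZ-diffusion.

    prop_correct: proportion of correct responses
    rt_var, rt_mean: variance and mean of correct-response RTs, in seconds
    s: within-trial noise scaling parameter (0.1 by convention)
    """
    pc = prop_correct
    if n_trials is not None and pc == 1.0:
        pc = 1.0 - 1.0 / (2 * n_trials)  # one common edge correction
    if pc <= 0.0 or pc >= 1.0 or pc == 0.5:
        raise ValueError("EZ-diffusion needs 0 < Pc < 1 and Pc != 0.5")
    s2 = s ** 2
    logit = math.log(pc / (1.0 - pc))
    x = logit * (logit * pc ** 2 - logit * pc + pc - 0.5) / rt_var
    v = math.copysign(1.0, pc - 0.5) * s * x ** 0.25
    a = s2 * logit / v
    y = -v * a / s2
    mean_decision_time = (a / (2.0 * v)) * (1.0 - math.exp(y)) / (1.0 + math.exp(y))
    ter = rt_mean - mean_decision_time
    return v, a, ter


def ez_forward(v, a, ter, s=0.1):
    """Predicted (Pc, VRT, MRT) for given parameters (the model's forward equations)."""
    s2 = s ** 2
    y = -v * a / s2
    pc = 1.0 / (1.0 + math.exp(y))
    mrt = ter + (a / (2.0 * v)) * (1.0 - math.exp(y)) / (1.0 + math.exp(y))
    vrt = (a * s2 / (2.0 * v ** 3)) * (
        (2.0 * y * math.exp(y) - math.exp(2.0 * y) + 1.0) / (math.exp(y) + 1.0) ** 2
    )
    return pc, vrt, mrt


# Round trip: parameters -> predicted statistics -> recovered parameters
pc, vrt, mrt = ez_forward(v=0.25, a=0.12, ter=0.30)
print(ez_diffusion(pc, vrt, mrt))  # approximately (0.25, 0.12, 0.30)
```

Because each set of three summary statistics is mapped independently to three parameters, fitting congruent and incongruent trials separately necessarily yields separate boundary estimates, which is the constraint noted above.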

Preregistered hypotheses and analyses

Phenomenology

We expected participants to report higher mental demand, effort exerted, frustration, boredom, and fatigue in the high-demand than in the low-demand condition.

Primary hypotheses

We expected the high-demand condition to have a smaller boundary than the low-demand condition after controlling for Stroop congruency (trial type: congruent vs. incongruent), which would reflect less cautious or more impulsive responding after completing the high-demand task. We also expected the high-demand condition to have a lower drift rate than the low-demand condition after controlling for Stroop congruency, which would reflect a slower information-processing rate. These analyses reflected our belief at the time that our demand manipulation should reduce overall task motivation and engagement rather than specifically reduce self-control or inhibition abilities. Note that we preregistered these two hypotheses only for Studies 2 to 4; for Study 1, we preregistered only traditional Stroop behavioral effects. Finally, another plausible outcome² that we did not preregister (and did not observe in our data) is that effort exertion reduces drift rate and that participants compensate by increasing boundary separation to maintain acceptable accuracy on the task.

Secondary hypotheses

We also tested additional hypotheses to indirectly examine the effects of high and low demand, but the primary effects described above did not hinge on these secondary effects. On the basis of the results from Study 1, we expected that (a) participants who reported feeling more fatigued, frustrated, or bored³ after completing the first task would have a lower drift rate or boundary on the Stroop task and (b) incongruent Stroop trials would be associated with a lower drift rate and boundary⁴ than congruent Stroop trials.

Exploratory analyses

We investigated the effects of our manipulations on traditional Stroop behavioral outcomes (reported in the Results section). Note that we preregistered these behavioral effects in Study 1 but not in Studies 2 to 4. In addition, we verified the results of the EZ-diffusion model by running exploratory preregistered analyses (osf.io/7qcxa) that involved fitting more complex diffusion models (Evans & Servant, 2019; Ulrich, Schröter, Leuthold, & Birngruber, 2015). Finally, because our within-subjects design could produce practice or learning effects on Stroop performance, we also tested for session-order effects.

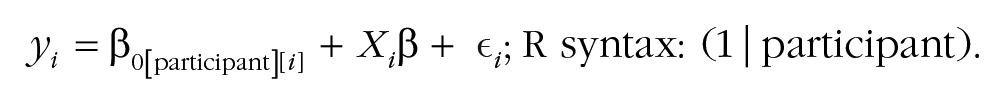

Statistical analyses

Continuous predictors were mean-centered on participant, and categorical predictors were recoded before model fitting: condition (low demand = −0.5; high demand = 0.5) and Stroop congruency (congruent = −0.5; incongruent = 0.5). We fitted Bayesian multilevel models using the R package

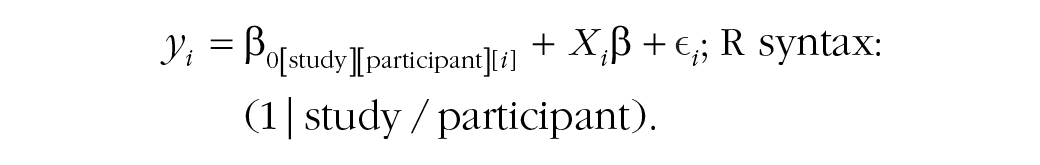
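The predictor coding described above can be sketched in Python as follows, assuming that "mean-centered on participant" means subtracting each participant's own mean. With ±0.5 coding, the condition coefficient equals the high-demand minus low-demand difference, and the intercept corresponds to the average across conditions in a balanced design; person-mean centering removes between-participant differences from a continuous predictor, so its coefficient reflects within-participant variation. The field names (`pid`, `condition`, `congruency`, `fatigue`) are hypothetical.

```python
from collections import defaultdict

CONDITION_CODES = {"low": -0.5, "high": 0.5}
CONGRUENCY_CODES = {"congruent": -0.5, "incongruent": 0.5}


def person_mean_center(rows, key):
    """Add `<key>_c`: each value minus that participant's own mean of `key`."""
    totals, counts = defaultdict(float), defaultdict(int)
    for row in rows:
        totals[row["pid"]] += row[key]
        counts[row["pid"]] += 1
    return [
        {**row, key + "_c": row[key] - totals[row["pid"]] / counts[row["pid"]]}
        for row in rows
    ]


def code_predictors(rows, continuous=("fatigue",)):
    """Apply the +/-0.5 codes, then person-mean center each continuous predictor."""
    coded = [
        {**row,
         "condition_c": CONDITION_CODES[row["condition"]],
         "congruency_c": CONGRUENCY_CODES[row["congruency"]]}
        for row in rows
    ]
    for key in continuous:
        coded = person_mean_center(coded, key)
    return coded


# Hypothetical data for one participant (one row per session)
rows = [
    {"pid": 1, "condition": "high", "congruency": "incongruent", "fatigue": 80.0},
    {"pid": 1, "condition": "low", "congruency": "congruent", "fatigue": 20.0},
]
for row in code_predictors(rows):
    print(row["condition_c"], row["congruency_c"], row["fatigue_c"])
# 0.5 0.5 30.0
# -0.5 -0.5 -30.0
```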

To meta-analyze the four studies to obtain an overall effect, we fitted three-level varying-intercept multilevel models in which data were clustered within participants, who were in turn clustered within studies:

For the condition effect (high demand vs. low demand) in each model, we used an informed Gaussian prior of

Because the prior influences the posterior, we performed prior-sensitivity analyses by refitting the models using normal priors with the same standard deviations as the informed priors but centered around 0 for the effect of interest; effects not directly testing our demand manipulation had the prior

For each model, we ran 20 Markov chain Monte Carlo chains with 2,000 samples each and discarded the first 1,000 samples of each chain as burn-in. For each effect, we report the mean of the posterior samples and the 95% highest-posterior-density (HPD) interval, which is the narrowest interval containing the specified probability mass. We used bridge sampling to compute Bayes factors (BFs), which quantify the evidence favoring one model over a reduced model that does not contain the effect or hypothesis of interest. To ensure stability, each reported BF is the mean of five BF computations. A BF of 1 indicates equal evidence for the null and experimental hypotheses. BFs greater than 1 indicate evidence in favor of the experimental hypothesis: Values from 1 to 3 indicate anecdotal evidence, from 3 to 10 moderate evidence, from 10 to 30 strong evidence, and greater than 30 very strong or decisive evidence (Lee & Wagenmakers, 2013; but for problems with BFs, see Gelman & Shalizi, 2013). Conversely, BFs less than 1 indicate evidence in favor of the null hypothesis, with smaller values indicating stronger evidence: Values from 0.33 to 1 indicate anecdotal evidence, from 0.10 to 0.33 moderate evidence, from 0.03 to 0.10 strong evidence, and less than 0.03 very strong or decisive evidence. All data, materials, and code for the main analyses can be found at https://osf.io/45gyk/.
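Two of the summaries described above are easy to compute directly from posterior draws and BF values. The Python sketch below finds the narrowest interval containing a given share of the sorted posterior samples (the HPD definition above, which assumes a unimodal posterior) and maps a BF onto the verbal categories listed above. It treats the null-side cut-offs as the exact reciprocals 1/3, 1/10, and 1/30, which the text rounds to 0.33, 0.10, and 0.03. It is an illustration, not the R code used for the analyses.

```python
import math


def hpd_interval(samples, mass=0.95):
    """Narrowest interval containing `mass` of the samples (assumes a unimodal posterior)."""
    xs = sorted(samples)
    n = len(xs)
    # Number of samples inside the interval; the small offset guards against
    # floating-point error in mass * n (e.g., 0.95 * 100).
    k = max(1, math.ceil(mass * n - 1e-9))
    # Slide a window of k sorted samples and keep the narrowest one
    start = min(range(n - k + 1), key=lambda i: xs[i + k - 1] - xs[i])
    return xs[start], xs[start + k - 1]


def bf_label(bf):
    """Map a Bayes factor (experimental vs. null) onto verbal evidence categories."""
    if bf <= 0:
        raise ValueError("Bayes factors must be positive")
    if bf == 1:
        return ("no evidence either way", None)
    favored = "experimental" if bf > 1 else "null"
    strength = bf if bf > 1 else 1.0 / bf  # e.g., BF = 0.05 -> 20 in favor of the null
    if strength < 3:
        label = "anecdotal"
    elif strength < 10:
        label = "moderate"
    elif strength < 30:
        label = "strong"
    else:
        label = "very strong or decisive"
    return (label, favored)


print(hpd_interval([0, 1, 2, 3, 4, 5, 6, 7, 8, 100], mass=0.9))  # (0, 8)
print(bf_label(5.0), bf_label(0.05), bf_label(500))
```

For the skewed example, an equal-tailed interval would have to stretch toward the extreme value, whereas the HPD interval stays in the dense region of the samples.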

Preregistered-Analysis Results

Phenomenology

Demand

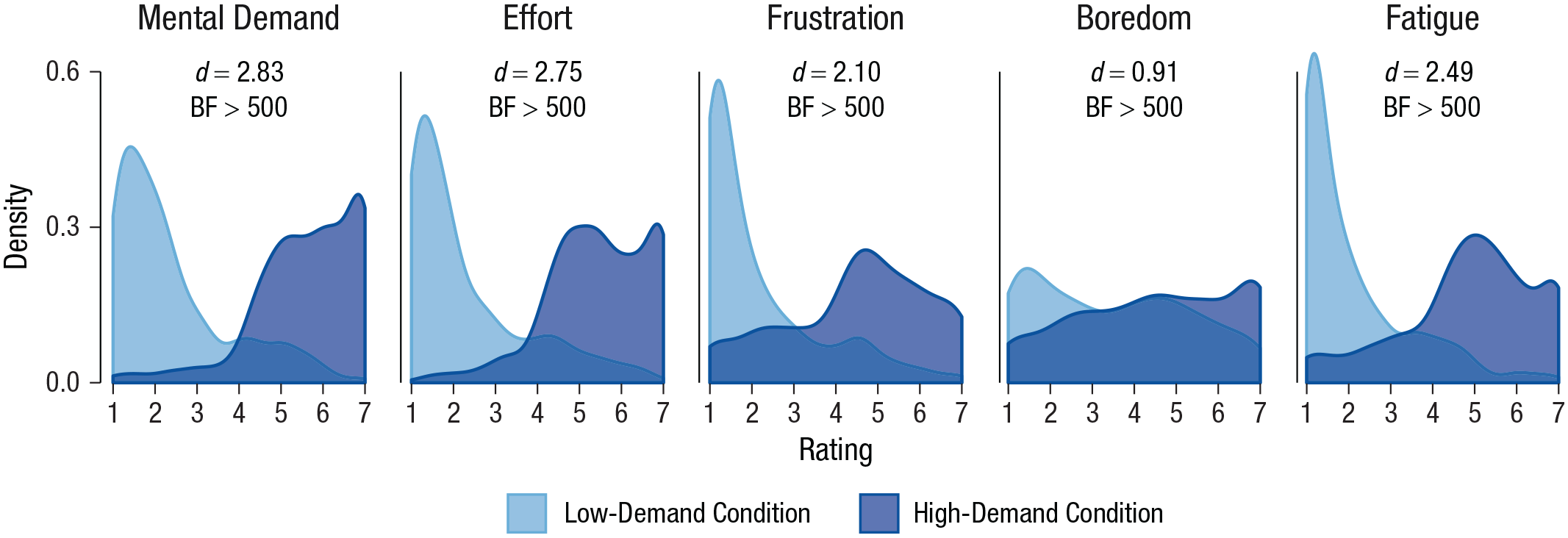

We found strong and consistent effects of condition on self-reported mental demand. In all studies (Fig. 3), mental demand was much higher in the high-demand than in the low-demand conditions (Study 1:

Phenomenology collapsed across studies: kernel-density estimates as a function of condition (high demand vs. low demand), separately for each of the self-reported ratings of mental demand, effort, frustration, boredom, and fatigue. The area beneath each density curve sums to 1. See Table 1 for detailed statistics. BF = Bayes factor.

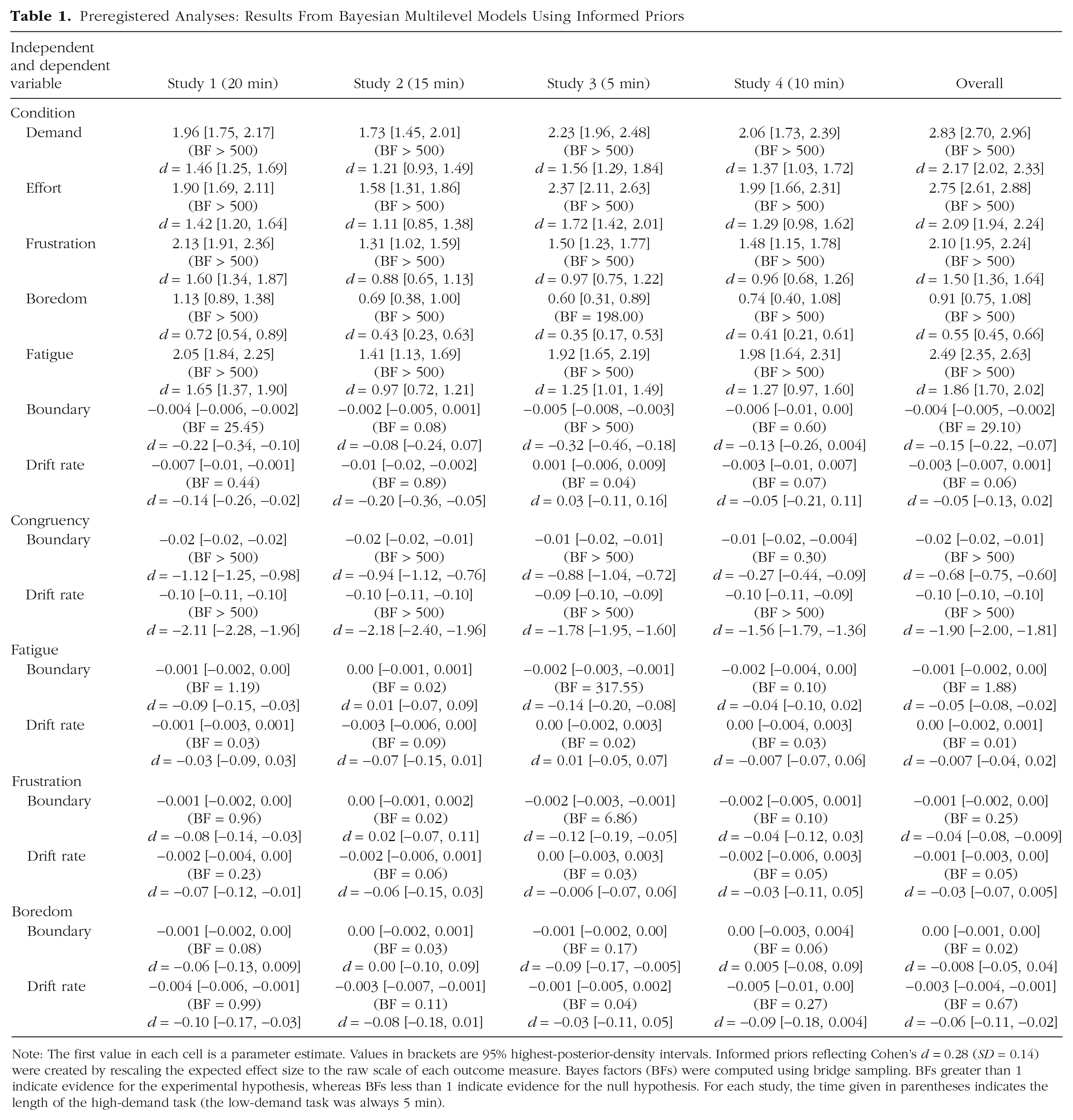

Preregistered Analyses: Results From Bayesian Multilevel Models Using Informed Priors

Note: The first value in each cell is a parameter estimate. Values in brackets are 95% highest-posterior-density intervals. Informed priors reflecting Cohen’s

Effort

Self-reported effort was also much higher in the high-demand than in the low-demand condition in all studies (Study 1:

Frustration

Similarly, participants reported feeling more frustrated in the high-demand condition than in the low-demand condition in all studies (Study 1:

Boredom

Self-reported boredom was higher in the high-demand condition than in the low-demand condition in all studies (Study 1:

Fatigue

Finally, participants reported higher fatigue in the high-demand condition than in the low-demand condition in all studies (Study 1:

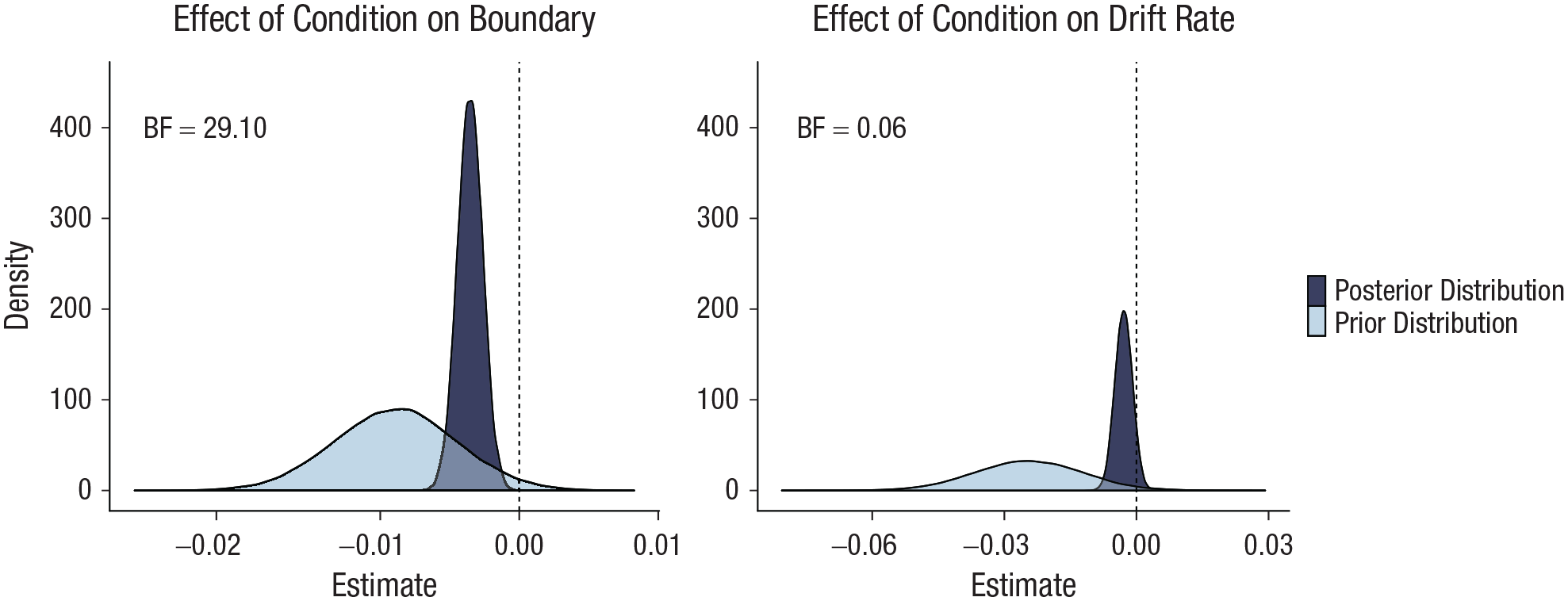

Latent parameter: boundary

We report the effect of condition on the boundary parameter after controlling for Stroop congruency, which had strong effects on the boundary parameter in all studies (Fig. 4, Table 1). The boundary parameter was smaller in the high-demand than in the low-demand condition in all four studies (Study 1:

Bayesian posterior- and prior-density distributions for the effect of condition (low demand vs. high demand) on the boundary (left) and drift-rate (right) parameters obtained from meta-analytic Bayesian multilevel models. Prior distributions reflect expectations about the effect sizes before empirical data are collected: Informed priors reflecting Cohen’s

Exploratory analyses including session order and the session-order-by-condition interaction in the models showed that practice or learning effects were strong and consistent with previous work (e.g., Dutilh, Krypotos, & Wagenmakers, 2011). Boundary separation was smaller in the second than in the first session in all studies (BFs > 500), but order did not interact with condition, and the effect of our demand manipulation remained small but highly robust,

Latent parameter: drift rate

We also report the effect of condition on drift rate after controlling for Stroop congruency, which had strong effects on the drift-rate parameter in all studies (Fig. 4, Table 1). The effects of condition on drift rate were inconsistent across studies (Study 1:

Exploratory analyses including session order and the session-order-by-condition interaction in the models showed strong practice or learning effects, consistent with previous findings (e.g., Dutilh et al., 2011). Drift rate was higher in the second than in the first session in all studies (BFs > 500), reflecting improved task performance. Order did not interact with condition, and drift rate did not differ between the high-demand and low-demand conditions (see Fig. S6 and Table S3).

Finally, to verify the results of the EZ-diffusion model, we ran exploratory analyses that fitted the diffusion model for conflict tasks, which is specifically designed for cognitive control tasks such as the Stroop. It models information integration during conflict tasks as a function of controlled and automatic processes; the automatic process varies over time according to a gamma function (Ulrich et al., 2015). Consistent with the results of the EZ-diffusion model, these analyses showed that effort exertion reduced boundary separation but had no effect on information integration via controlled (drift-rate parameter) or automatic (ζ parameter) processes. These results bolster our interpretation that exerting effort or being depleted does not selectively impair one’s ability to inhibit automatic processes (see Figs. S2–S5 in the Supplemental Material for details and additional results from the regular analytic diffusion model).

Phenomenology and latent-parameter relations

Boundary–fatigue relation

Increased self-reported fatigue was associated with smaller boundaries in three studies (Study 1:

Boundary–frustration relation

Increased self-reported frustration was associated with smaller boundaries in three studies, although the 95% HPD interval excluded 0 only in Study 3 (Study 1:

Boundary–boredom relation

Self-reported boredom was not associated with the boundary parameter in any of the four studies. All 95% HPD intervals included 0 (Table 1).

Drift rate–fatigue relation

Self-reported fatigue was not associated with the drift-rate parameter in any of the four studies. All 95% HPD intervals included 0 (Table 1).

Drift rate–frustration relation

Self-reported frustration was not associated with the drift-rate parameter in any of the four studies. All 95% HPD intervals included 0 (see Table 1).

Drift rate–boredom relation

Self-reported boredom was associated with reduced drift rate in Studies 1 and 2 (Study 1:

Exploratory analyses found that session order did not interact with fatigue, frustration, or boredom (see Tables S4 and S5 in the Supplemental Material).

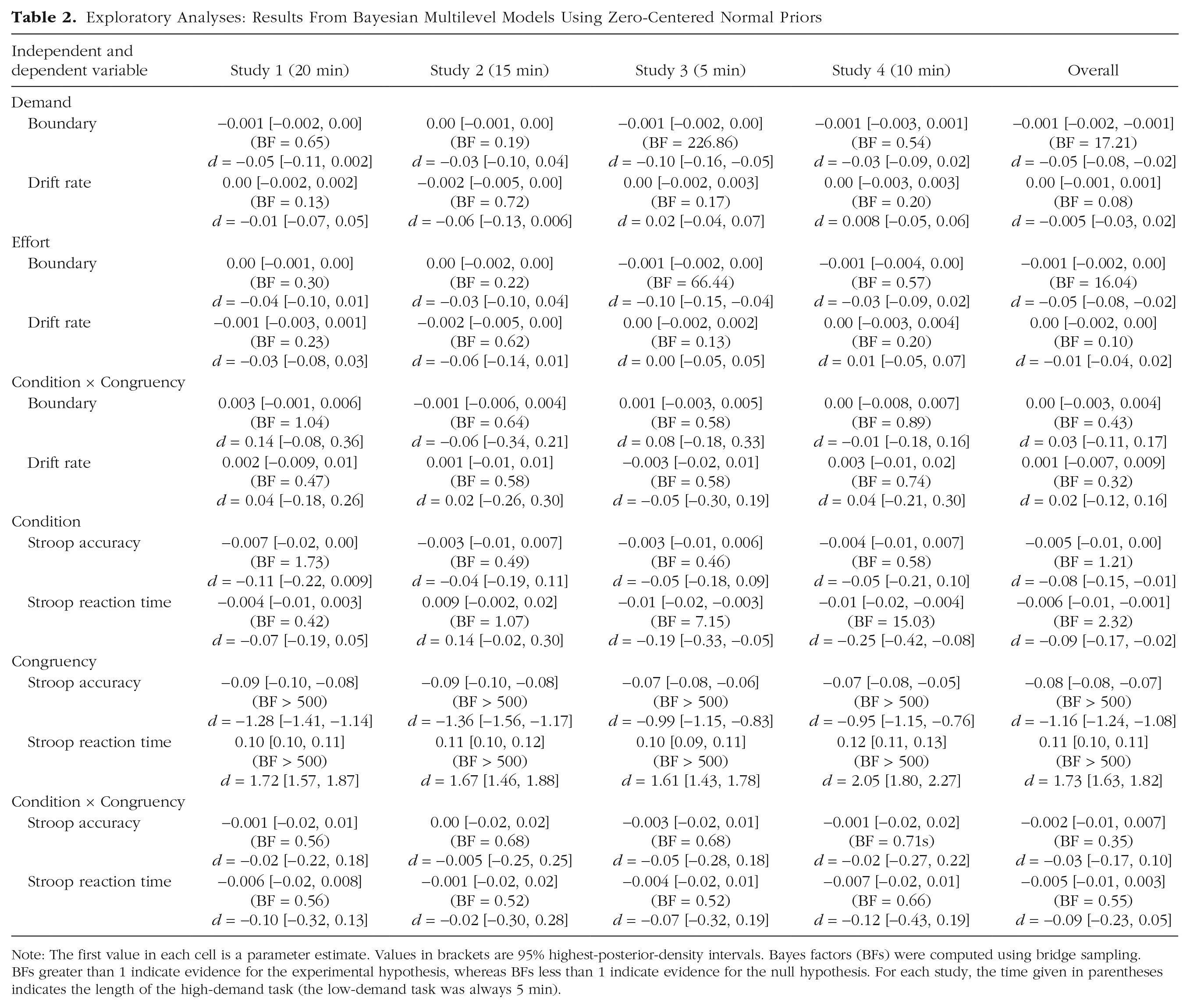

Exploratory-Analysis Results

Other (nonpreregistered) analyses might provide further insights into the psychology of effort exertion and ego depletion (see Table 2). Note that because we did not preregister the analyses below, we used normal priors centered around 0 instead of informed priors.

Exploratory Analyses: Results From Bayesian Multilevel Models Using Zero-Centered Normal Priors

Note: The first value in each cell is a parameter estimate. Values in brackets are 95% highest-posterior-density intervals. Bayes factors (BFs) were computed using bridge sampling. BFs greater than 1 indicate evidence for the experimental hypothesis, whereas BFs less than 1 indicate evidence for the null hypothesis. For each study, the time given in parentheses indicates the length of the high-demand task (the low-demand task was always 5 min).

Phenomenology and latent-parameter relations

Boundary–demand relation

Results from the individual studies showed that self-reported demand was not consistently associated with changes in boundary (Study 1:

Boundary–effort relation

As with the results for the boundary–demand relation, results from the individual studies for the boundary–effort relation were mixed (Study 1:

Together, these results suggest that stronger feelings of mental demand and effort were associated with less cautious responding on the Stroop task, although we note that these two ratings correlated strongly in all four studies (

Drift rate–demand relation

Self-reported demand was not associated with drift rate in any of the studies. All 95% HPD intervals included 0 (Table 2).

Drift rate–effort relation

Self-reported effort was also not associated with drift rate in any of the studies. All 95% HPD intervals included 0 (Table 2).

Latent parameter: boundary (condition–congruency interaction)

We fitted a model in which the boundary parameter was predicted by condition, Stroop congruency, and their interaction. Here, we focused on the interaction term because it indicates whether the effect of condition on the boundary parameter varied as a function of Stroop congruency. In all four studies, the 95% HPD intervals of the interaction term included 0 (see Table 2). Results from the three-level multilevel model were similar,

Latent parameter: drift rate (condition–congruency interaction)

We also fitted a model in which the drift-rate parameter was predicted by condition, Stroop congruency, and their interaction. In all studies, the interaction effect was close to 0 (Table 2), which suggests that the effect of condition on the drift-rate parameter did not vary as a function of Stroop congruency.

Behavioral effects: Stroop accuracy

We modeled Stroop accuracy (proportion correct) as a function of condition, congruency, and their interaction. The congruency effect was robust and consistent across studies (Table 2), and the results from the three-level multilevel model indicated that accuracy was lower on incongruent trials (

Behavioral effects: Stroop reaction time

We also modeled Stroop reaction time (trials with correct responses) as a function of congruency, condition, and their interaction. As expected, the congruency effect was strong and consistent across studies (Table 2): Results from the three-level multilevel-model meta-analysis indicate that reaction times were slower on incongruent trials (

Discussion

Our results provide insights into the psychology of effort exertion. Across four studies, our demand manipulation (high vs. low) was highly depleting in that it robustly elicited strong feelings of effort and fatigue. Diffusion-model analyses provided insights into previously unexamined effects of effort exertion on cognitive processes.

For the two preregistered effects on the latent parameters, Bayesian analyses provided strong evidence that boundary separation, but not drift rate, was reduced after participants completed the high-demand task relative to the low-demand task. The lack of evidence for reduced drift rate suggests that effort exertion did not worsen participants’ subsequent task performance or their ability to process information. However, reduced boundary separation suggests that participants responded less cautiously, as if they cared less about the task and had lost some of their will to persist and engage fully.

Crucially, the reduced-boundary effect was not limited to situations involving inhibition (i.e., incongruent Stroop trials), consistent with our theoretical position that exerting effort leads to task reprioritization and disengagement from ongoing tasks (Inzlicht et al., 2014). Accordingly, depletion should impair performance on incongruent

Further evidence for our theoretical view comes from the finding that participants reported more boredom in the high-demand condition than in the low-demand condition, which might indicate unsuccessful attentional engagement when people feel either unable or unwilling to engage with ongoing tasks (Westgate & Wilson, 2018). Moreover, participants who reported more fatigue, demand, or effort also had lower boundary-separation estimates, but these exploratory effects should be interpreted with caution.

Our findings suggest that even when tasks elicit strong subjective states related to fatigue, traditional behavioral measures might lack the sensitivity to detect downstream effects, whereas latent variables might be more sensitive. For example, the reduced-boundary effect was about twice as large as the effects of condition on overall Stroop reaction time and accuracy, likely because the diffusion model accounts for the speed/accuracy trade-off inherent in reaction time tasks. Given the strengths of the diffusion model (see Evans & Wagenmakers, 2019), we suggest that other researchers apply similar approaches or reanalyze previous depletion studies that used speeded reaction time tasks.
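The way a diffusion model folds speed and accuracy into latent parameters can be sketched with EZ-diffusion. EZ-diffusion is a simplified closed-form method that maps accuracy, the variance of correct reaction times, and mean correct reaction time onto drift rate v, boundary separation a, and non-decision time Ter. It is used here only because it fits in a few lines, and it is not presented as the estimation procedure used in these studies. The sketch assumes the EZ scaling convention s = 0.1, reaction times in seconds, and hypothetical parameter values. It checks itself by converting parameters to predicted summary statistics and back.

```python
import math


def ez_forward(v, a, ter, s=0.1):
    """Predicted accuracy, correct-RT variance, and mean correct RT (EZ-diffusion forward equations)."""
    y = -v * a / s**2
    pc = 1.0 / (1.0 + math.exp(y))
    mdt = (a / (2.0 * v)) * (1.0 - math.exp(y)) / (1.0 + math.exp(y))
    vrt = (a * s**2 / (2.0 * v**3)) * (
        (2.0 * y * math.exp(y) - math.exp(2.0 * y) + 1.0) / (math.exp(y) + 1.0) ** 2
    )
    return pc, vrt, ter + mdt


def ez_inverse(pc, vrt, mrt, s=0.1):
    """Recover drift rate v, boundary separation a, and non-decision time ter."""
    if not 0.0 < pc < 1.0 or pc == 0.5:
        raise ValueError("EZ needs 0 < pc < 1 and pc != 0.5; apply an edge correction first")
    logit = math.log(pc / (1.0 - pc))
    x = logit * (logit * pc**2 - logit * pc + pc - 0.5) / vrt
    v = math.copysign(1.0, pc - 0.5) * s * x**0.25   # speed and accuracy enter jointly
    a = s**2 * logit / v                             # caution: boundary separation
    y = -v * a / s**2
    mdt = (a / (2.0 * v)) * (1.0 - math.exp(y)) / (1.0 + math.exp(y))
    return v, a, mrt - mdt                           # ter = mean RT minus mean decision time


# Round trip with hypothetical parameters: v = 0.25, a = 0.12, ter = 0.30 s.
pc, vrt, mrt = ez_forward(0.25, 0.12, 0.30)
print(f"accuracy={pc:.3f}, RT variance={vrt:.5f} s^2, mean RT={mrt:.3f} s")
print("recovered (v, a, ter):", ez_inverse(pc, vrt, mrt))
```

Because speed and accuracy enter the inversion jointly, a shift toward faster but less accurate responding is attributed to a smaller a rather than being split across raw reaction time and accuracy. Real data need an edge correction when accuracy is 0, 0.5, or 1, which the sketch only flags.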

Depletion proponents might celebrate because our results provide strong evidence for, and further insights into, depletion effects, as well as evidence against the null hypothesis that depletion effects do not exist. Skeptics, however, will hasten to highlight various limitations. Only one of the two hypotheses was confirmed, and only meta-analytically, with merely two of the four individual studies providing evidence for our preregistered hypotheses. Nevertheless, the small but meaningful effect size (reduced boundary effect:

Conclusion

Our paradigm robustly elicited feelings such as effort and fatigue, highlighting its utility for studying these subjective states. Bayesian analyses provided strong evidence for the idea that people disengage after exerting effort. Although not all of our hypotheses were supported, we have learned that laboratory depletion effects are elusive even with strong manipulations and with latent variables that capture meaningful cognitive processes. Nevertheless, our rigorous approach has considerable potential to facilitate future empirical and theoretical developments.

Supplemental Material

Lin_OpenPracticesDisclosure_rev – Supplemental material for Strong Effort Manipulations Reduce Response Caution: A Preregistered Reinvention of the Ego-Depletion Paradigm

Supplemental material, Lin_OpenPracticesDisclosure_rev for Strong Effort Manipulations Reduce Response Caution: A Preregistered Reinvention of the Ego-Depletion Paradigm by Hause Lin, Blair Saunders, Malte Friese, Nathan J. Evans and Michael Inzlicht in Psychological Science

Supplemental Material

Lin_Supplemental_Material_rev – Supplemental material for Strong Effort Manipulations Reduce Response Caution: A Preregistered Reinvention of the Ego-Depletion Paradigm

Supplemental material, Lin_Supplemental_Material_rev for Strong Effort Manipulations Reduce Response Caution: A Preregistered Reinvention of the Ego-Depletion Paradigm by Hause Lin, Blair Saunders, Malte Friese, Nathan J. Evans and Michael Inzlicht in Psychological Science

Footnotes

Acknowledgements

We thank Colin Kupitz, Joachim Vandekerckhove, and Shravan Vasishth for their guidance. We also thank Julian Quandt for spotting mistakes in the preprint of this article.

Transparency

H. Lin, B. Saunders, M. Friese, and M. Inzlicht developed the study concept and contributed to the study design. H. Lin performed testing and collected the data. H. Lin and N. J. Evans analyzed and interpreted the data under the supervision of B. Saunders, M. Friese, and M. Inzlicht. H. Lin drafted the manuscript, and the remaining authors provided critical revisions. All of the authors approved the final version of the manuscript for submission.

Notes

References
