Abstract

The safe coexistence of robots and humans in the same workspace is an important aspect of deploying autonomous systems. Robots are usually fitted with auxiliary sensors to monitor their surroundings and respond to unexpected events. The combination of LiDAR (light detection and ranging) and SLAM (simultaneous localization and mapping) has recently gained researchers' attention. The current study aims to develop collision-free motion for an autonomous robot while minimizing the travel time between nodes. First, a SLAM-based algorithm for a static environment is proposed. A low-cost Arduino UNO microcontroller is used along with a VL53L0X LiDAR sensor and is interfaced with MATLAB to store and display the acquired data. The A* algorithm is used to find the shortest path on the obtained map. The robot is also integrated with radio frequency identification (RFID) sensors to automate the process. The proposed system would be useful for material handling, assistance, and cleaning purposes in large warehouses or households.

Introduction

Smart and intelligent logistics covers areas such as workflow and process automation, supply chains, robots, transport, black boxes, better demand prediction, and live tracking. 1 Robotics in logistics is a very broad topic. In fact, it concerns every area of the supply chain, from warehouse automation to robotics used in ports and drone deliveries. 2 Narrowing the subject down strictly to warehouses or households, robotics must be considered in the following aspects. The first is the automation of the flow of goods (installation of pallet or container conveyors improves the flow of goods between different areas of the warehouse, and even between different facilities).3–6 The second is the automation of the picking process (implementation of semi-automatic systems such as pick-by-light or pick-by-voice devices, which support warehouse workers in preparing orders by indicating, e.g., the number of goods to be picked) or of order dispatching (installation of equipment that sorts goods by carrier, or equipping docks with a system for automatic loading and unloading of goods).7–9 The robots currently available are suitable for large-scale operations; however, small-scale integration of these robots is not only costly but also impractical. 10 Small-scale use of such robots is characterized by lower load-carrying capacity and lower computational load at lower expense, and technologies that connect these robots to the internet can make such use beneficial.11–14

The robot proposed herein consists of an Arduino microcontroller and sensors used for proximity detection and mapping. The mobile robot considered here is a wheeled indoor robot with LiDAR sensors mounted on top. The sensor output is sent to MATLAB through a Bluetooth module. The A* algorithm is used to find the shortest path on the obtained map. The robot is also integrated with radio frequency identification (RFID) sensors to automate the process. The objective is to design a prototype robot that achieves economy in the handling of raw material, work in progress, and finished products. The development of a conscience-friendly robot by integrating hardware and software components with ergonomics is proposed. An affordable proximity detection system for the future development of autonomous robots and vehicles is advocated. The work aims to increase the automation of small warehouses and businesses, as well as the productivity and efficiency of warehouse operations. Assigning rudimentary tasks to robots lets workers work without fatigue and minimizes the possibility of accidents. Because of their programming, these robots can be used in any indoor environment. The main focus is on small-scale warehouses where loading and unloading operations are quite frequent. This demand can be met by a robot that consumes less power by traveling along the shortest available path. These robots can also be used in restaurants as a food delivery system, in hospitals for rudimentary tasks such as transportation of goods and assistance services, and in hotels for delivery and assistance services. Supermarkets are similar to warehouses but have a more dynamic environment; with more computation, these environments can also be navigated successfully to provide customers with services such as item search and navigation assistance. The major contributions of this study are:

A MATLAB-based controller was used to generate a map of the indoor environment using a ToF (time-of-flight) sensor, and was integrated with the A* algorithm to generate the shortest path. The robot follows the shortest path using a pure pursuit controller while sensing obstacles ahead with the same ToF sensor. The robot is also integrated with an RFID sensor to sense the tag on objects placed on the robot, and it navigates autonomously from source to destination. The robot is equipped with a lead screw mechanism for raising and lowering the platform on which objects are placed. The prototype is designed to carry and lift objects weighing 1 kg.

Design and calculations

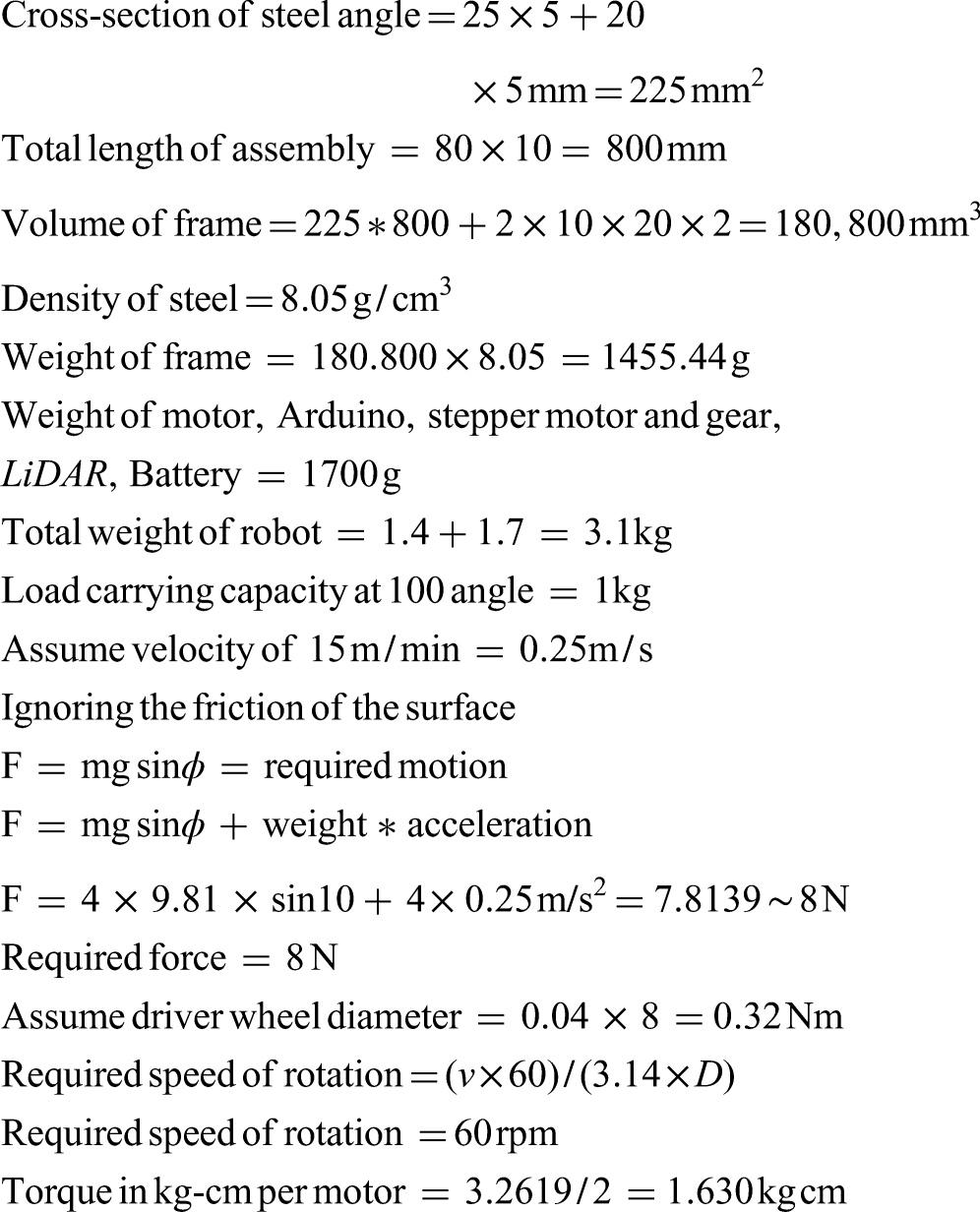

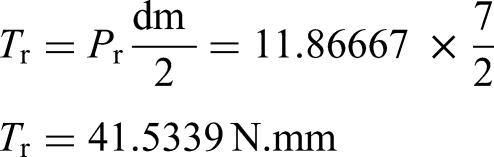

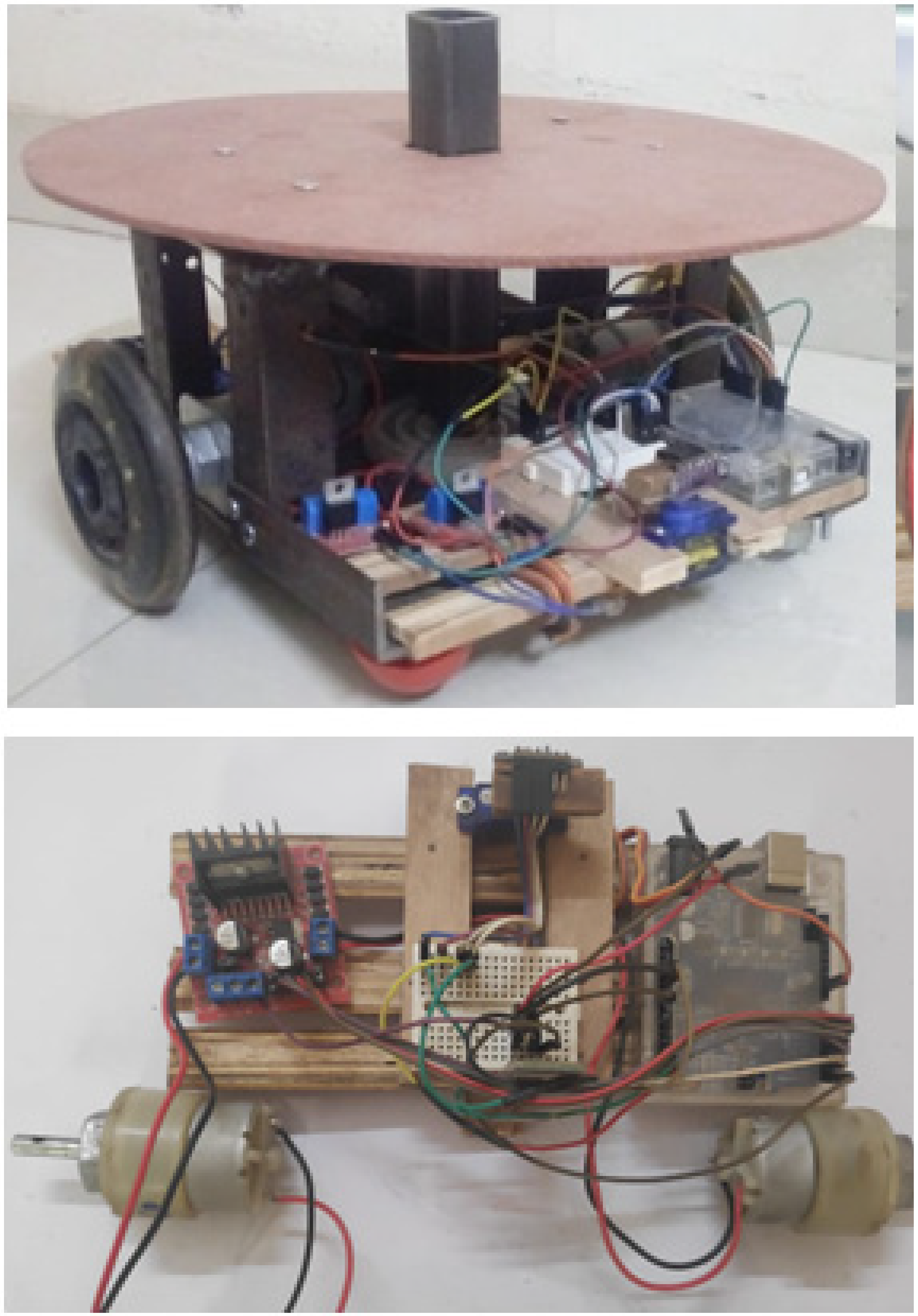

The prototype frame is manufactured from mild steel angle of L cross section, with mild steel plates of 2 mm thickness and wooden strips for mounting the Arduino and other electronic components. The density of mild steel is 7850 kg/m³. The mild steel angle has a thickness of 3 mm and a height/width of 25 mm, the mild steel plate has a thickness of 2 mm, and the wooden strips have a thickness of 8 mm and a width of 15 mm. Figure 1 shows the cross section of the mild steel angle and its properties.

Cross section of a mild steel angle and its properties.

Load calculations for MS plate

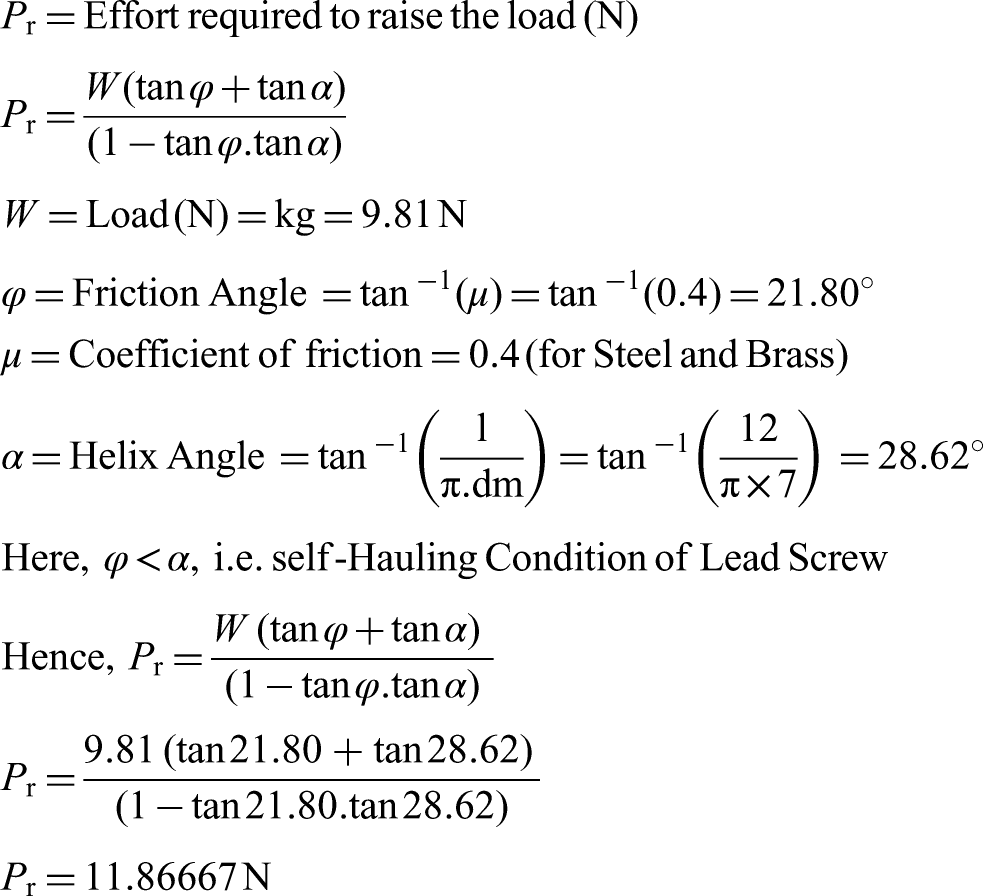

Calculations for Lifting Mechanism:

The torque required to raise the load is calculated as follows:

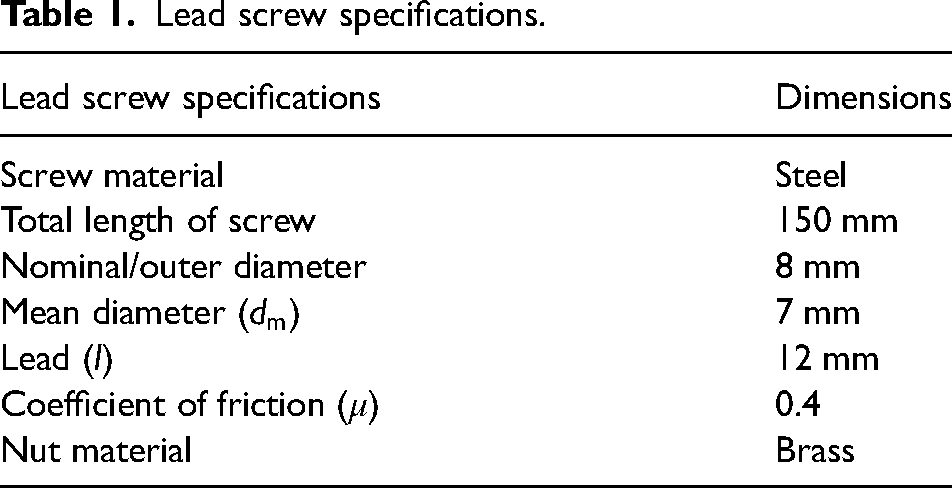
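For readers who cannot see the equation image, the calculation can be sketched with the standard textbook relation for a square-threaded power screw, T = W (d_m / 2) tan(φ + α), where α is the lead angle and φ the friction angle. This is a minimal sketch and not a transcription of the figure: only the 1 kg payload comes from the text, while the mean diameter, lead, and friction coefficient below are hypothetical placeholders.

```python
import math

def torque_to_raise(load_n, mean_dia_mm, lead_mm, mu):
    """Torque in N·mm needed to raise an axial load on a square-threaded screw."""
    lead_angle = math.atan(lead_mm / (math.pi * mean_dia_mm))  # alpha
    friction_angle = math.atan(mu)                             # phi
    return load_n * (mean_dia_mm / 2.0) * math.tan(lead_angle + friction_angle)

# 1 kg payload is stated in the paper; the screw data are assumed for illustration.
load_n = 1.0 * 9.81
torque_nmm = torque_to_raise(load_n, mean_dia_mm=7.0, lead_mm=2.0, mu=0.15)
print(f"Torque to raise the load: {torque_nmm:.2f} N·mm")
```

With zero friction the expression reduces to W·l / (2π), which is a quick sanity check on any values substituted from the lead screw specifications.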

Lead screw specifications.

Calculations for gear and pinion

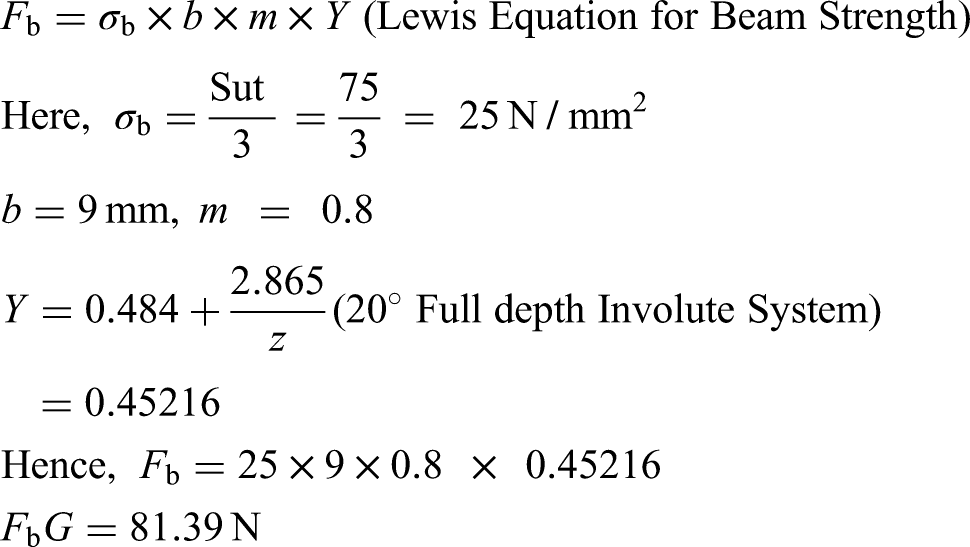

Beam strength of gear

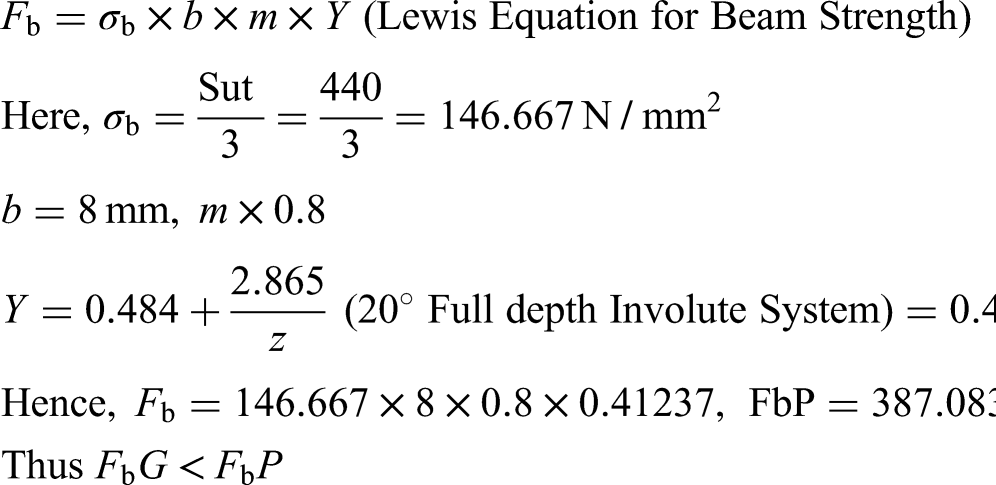

Beam strength of pinion

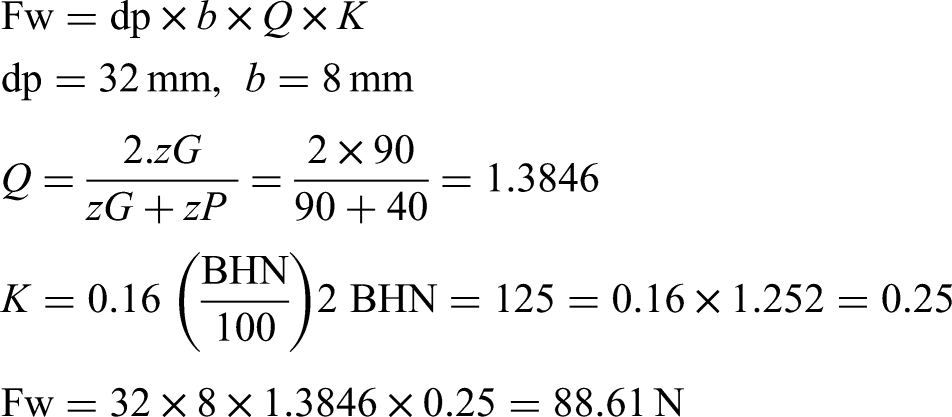

Wear Strength of Gear Pair:

Wear strength is the maximum tangential load that the gear tooth can take without wear failure.

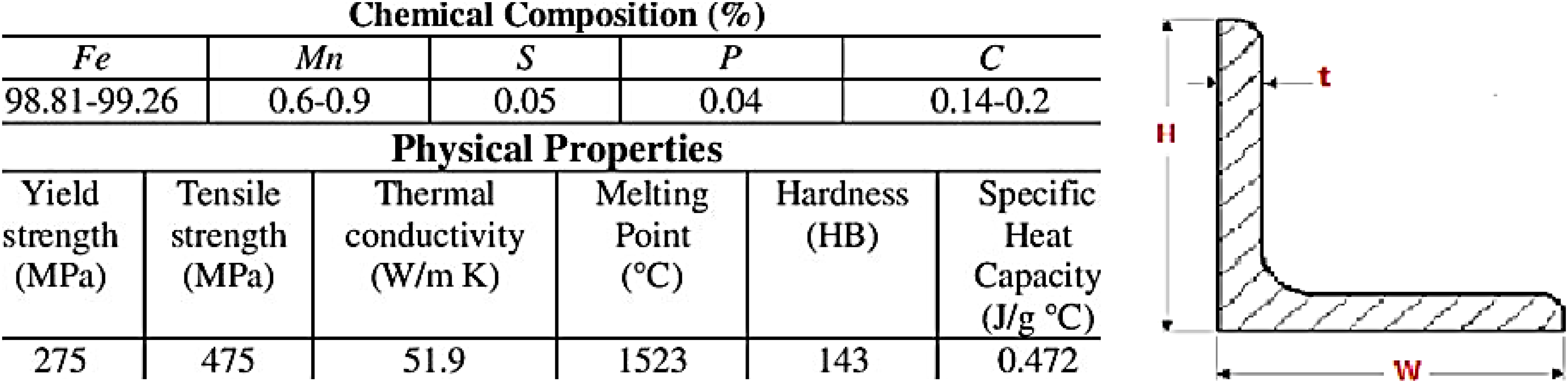

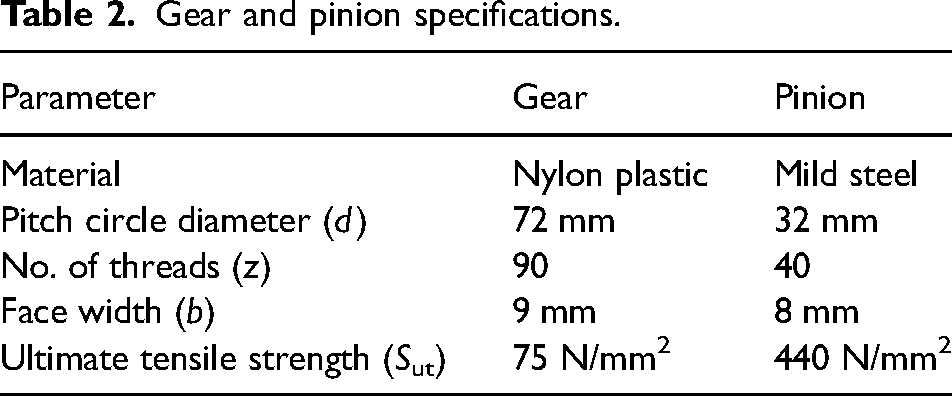
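Since the gear equations survive only as images, the commonly used textbook forms are restated here for reference. They are assumed to correspond to the calculations in the figures (the Lewis equation for beam strength and Buckingham's equation for wear strength of an external spur gear pair) and are not transcribed from them.

```latex
% Beam strength (Lewis equation), evaluated separately for gear and pinion
S_b = m \, b \, \sigma_b \, Y

% Wear strength (Buckingham equation) for an external gear pair
S_w = b \, Q \, d_p \, K, \qquad Q = \frac{2 z_g}{z_g + z_p}
```

Here m is the module, b the face width, σ_b the permissible bending stress, Y the Lewis form factor, d_p the pitch circle diameter of the pinion, K the load-stress factor of the material pair, and z_g and z_p the numbers of teeth on the gear and pinion. The weaker of the gear and the pinion governs in bending, and the pair is considered safe when both strengths exceed the effective tangential load.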

Table 2 shows the gear and pinion specifications.

Gear and pinion specifications.

Selection of components

In this section, the selection of the motors, lifting mechanism, LiDAR, and Arduino UNO is discussed.

Selection of motors

A motor rated at 4.5 kg-cm (0.44 Nm) was selected for the drive and a 4 kg-cm stepper motor for the lifting mechanism, which is more than sufficient as per the design considerations. The power supply required to drive this motor is 6 V at 1 A. Hence, for 1 hour of operation, a power supply of 6 V and 3.5 Ah was selected.
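The unit conversion and the battery budget behind these figures can be checked with a few lines of arithmetic. The sketch below assumes an ideal battery with no derating; the function names are illustrative, and the total current drawn by all motors together is not given in the text, so only the average current that a 3.5 Ah pack can sustain for one hour is computed.

```python
STANDARD_GRAVITY = 9.80665  # m/s^2

def kgf_cm_to_nm(torque_kgf_cm):
    """Convert a torque rating from kgf·cm to N·m."""
    return torque_kgf_cm * STANDARD_GRAVITY / 100.0

def max_average_current_a(capacity_ah, runtime_h):
    """Average current an ideal battery can supply for the given runtime."""
    return capacity_ah / runtime_h

drive_nm = kgf_cm_to_nm(4.5)                 # drive motor rating from the text
lift_nm = kgf_cm_to_nm(4.0)                  # stepper motor rating from the text
budget_a = max_average_current_a(3.5, 1.0)   # 3.5 Ah pack, 1 hour of operation

print(f"Drive motor: {drive_nm:.3f} N·m, lift motor: {lift_nm:.3f} N·m")
print(f"Average current budget for 1 h: {budget_a:.1f} A")
```

The first result reproduces the 0.44 Nm quoted above, and the current budget leaves headroom over the 1 A drawn by a single drive motor.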

LiDAR and Arduino UNO

LiDAR, or light detection and ranging, senses distance using pulses of laser light. It does essentially what a laser range finder does, but goes a step further. 15 The laser beam or pulse is swept across the target to be measured, and the LiDAR device takes multiple readings of the distance. 16 This provides a profile cross section of the target. The process repeats until a 3D picture or model can be constructed from the data. LiDAR is most often used on a moving platform, such as a plane or satellite flying over a landscape. 17 It can penetrate leaves in forests to get dual reflections from the ground and the tree tops, which is very helpful in mapping surface contours and determining tree height. 18 LiDAR technologies use near-infrared light to illuminate the target surface; the distance and reflectivity of the surface can then be estimated from the reflected light. 19 The key benefit of this technology is that both elevation and intensity images can be obtained with high accuracy. 20 All reflected points are gathered to generate a 3D model of the illuminated object or surface, known as a 3D point cloud. A 3D model of an entire area can therefore be captured using this technology, and many useful products can be derived from a LiDAR point cloud. 21

LiDAR is like a camera that takes a picture of the distance to objects instead of their color. 22 For each sampling location, the LiDAR scanner records the distance to objects in the field of view, creating a 3D map of the scanned volume. 23 LiDAR can use different methods to achieve this. One uses a physical scanner that projects a pulsed laser beam in a fan-shaped pattern, scanning from left to right and top to bottom and then repeating. 24 The laser fires an extremely short and intense pulse of light and then waits to see how long it takes for a reflection to return. 25 The longer it takes, the farther away that part of the scene is from the scanner. If no reflection is seen, the scanner assumes that nothing is nearby in that direction. 26 In a very short amount of time, the laser scans a large area and returns a 3D map of the world around the scanner. 27
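The time-of-flight principle described above reduces to d = c·t / 2, because the pulse travels to the target and back. The following minimal sketch illustrates that relation only; the function names are illustrative, and treating a missing echo as an infinite distance is an assumption that mirrors the "very far away" behavior described in the text.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0

def tof_distance_m(round_trip_s):
    """Distance to the target from the round-trip time of a light pulse."""
    if round_trip_s is None:  # no echo received: treat the target as out of range
        return math.inf
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2.0

def round_trip_time_s(distance_m):
    """Round-trip time of a light pulse for a target at the given distance."""
    return 2.0 * distance_m / SPEED_OF_LIGHT_M_S

echo_s = round_trip_time_s(2.0)  # target at a 2 m range
print(f"Round trip for 2 m: {echo_s * 1e9:.2f} ns")
print(f"Recovered distance: {tof_distance_m(echo_s):.3f} m")
```

A target 2 m away returns the pulse after only about 13 ns, which is why such sensors rely on dedicated timing hardware rather than on the host microcontroller.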

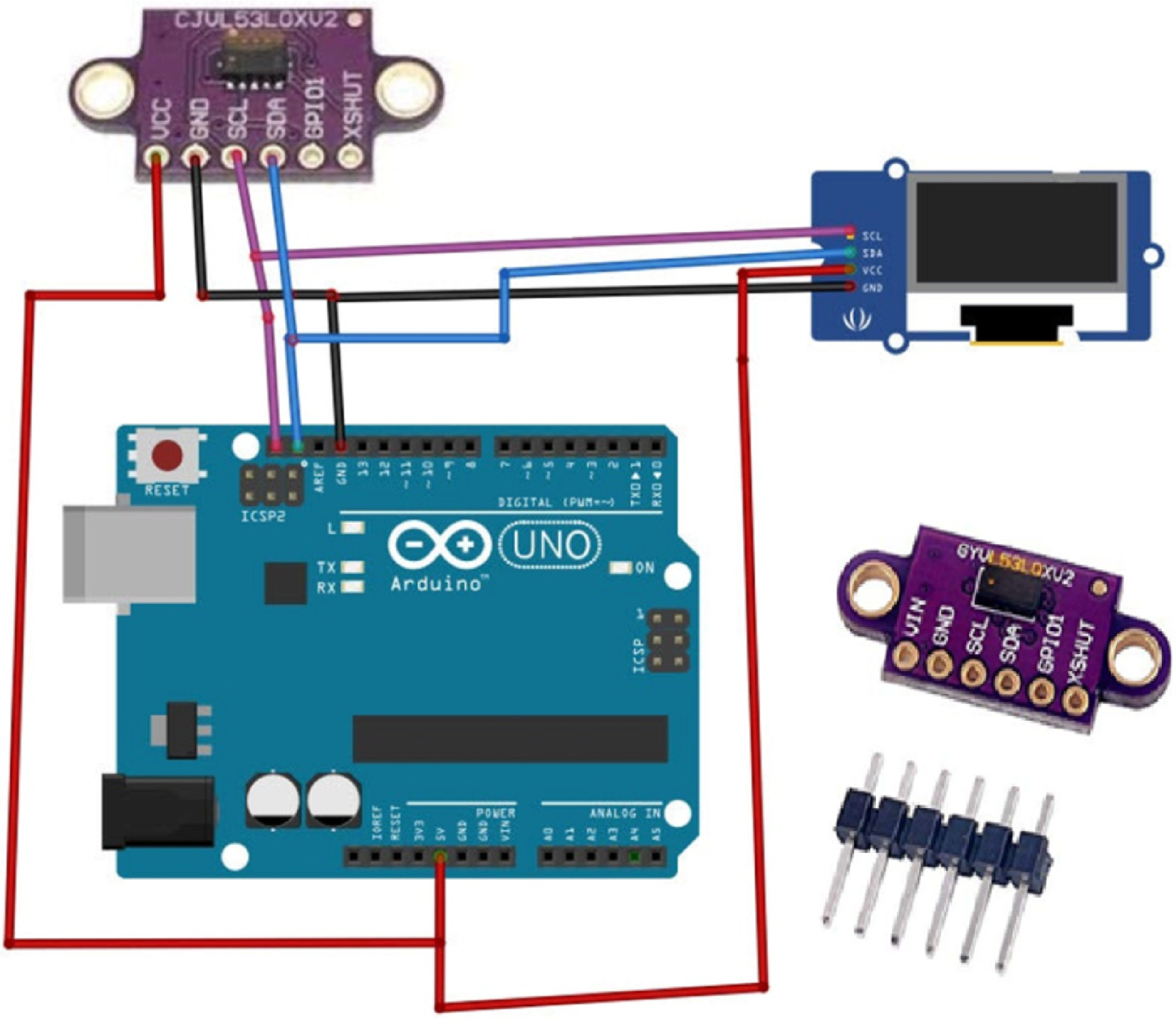

Figure 2 shows the circuit of the VL53L0X and Arduino Uno. The VL53L0X LiDAR consists of a 940 nm VCSEL (vertical-cavity surface-emitting laser), a VCSEL driver, and a ranging sensor with an advanced embedded microcontroller. 28 The package measures 4.4 × 2.4 × 1.0 mm. The sensor measures absolute range up to 2 m in an indoor environment, and the reported range is independent of the target reflectance. It is eye safe, runs from a single power supply, and provides an I2C interface for device control and data transfer along with Xshutdown (reset) and interrupt GPIO pins.

Circuit of VL53L0X and Arduino Uno.

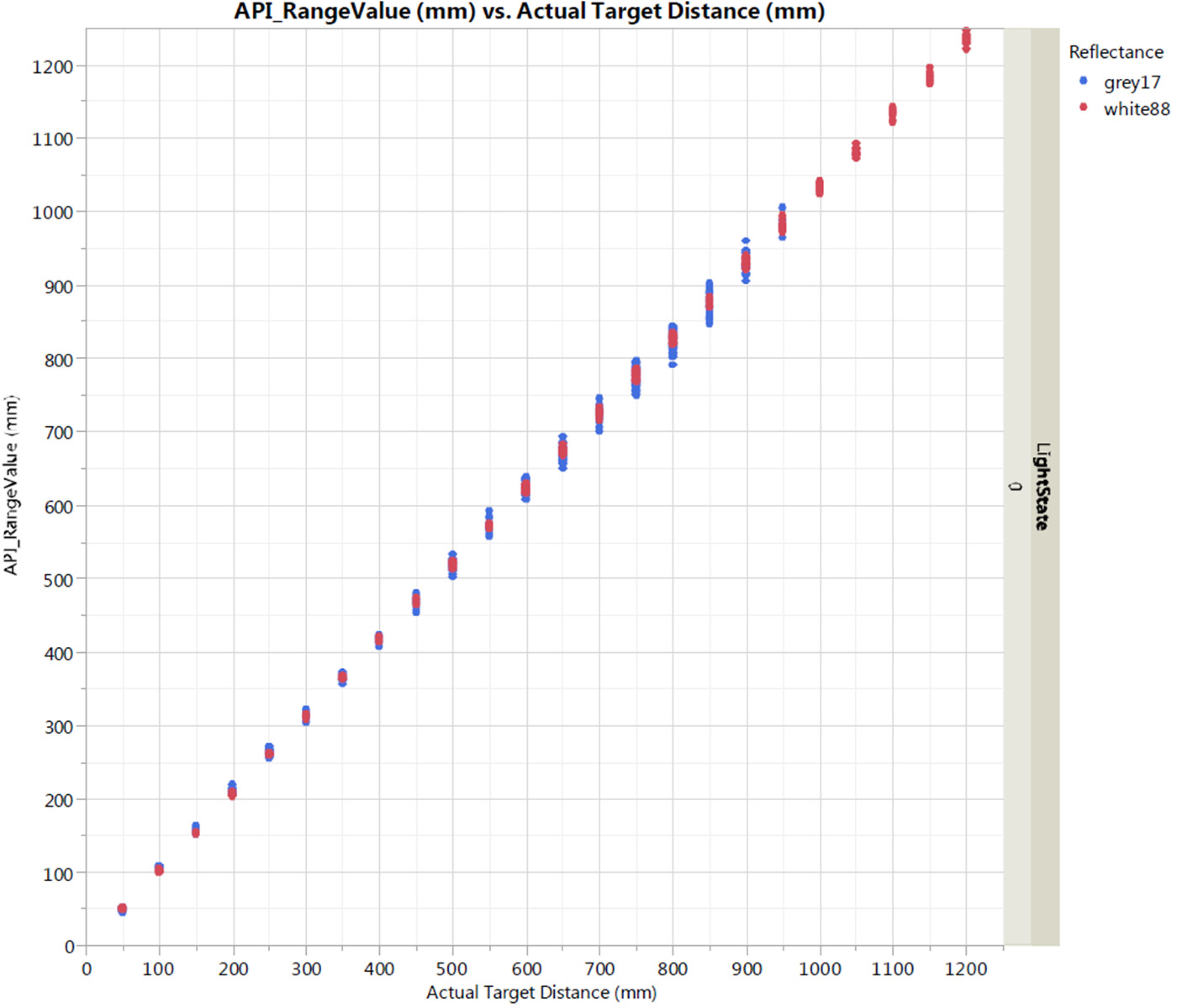

LiDAR uses laser light at much higher frequencies than RF (a 300 MHz signal has a wavelength of 1 m, whereas ultraviolet to infrared light has wavelengths of around 200–1000 nm). 29 This means that LiDAR works over a shorter range (because higher-frequency signals attenuate faster), but it offers higher accuracy in resolving the detail of the object reflecting the laser. It can therefore be used for measurements such as 3D scanning of an object's surface, where the scanning distance depends on the input power and efficiency of the system. Figure 3 shows the typical ranging performance of the VL53L0X in default mode. As most of the computation was done in MATLAB, the Arduino UNO was used purely for communication and control, for which it was more than sufficient. Two drive motors, two stepper motors, two I2C connections, and a Bluetooth module were used in this investigation. The uploaded program consumed less than a quarter of the Arduino's memory, and the speed was sufficient for our application.

A typical ranging performance in default mode of VL53L0X.

The use of open-source platforms and sensors such as the ADXL335 accelerometer, 30 power sensors in face milling, 31 microphones, 32 gyroscopes, 33 thermocouples, 34 and fluid flow sensors, 35 integrated with controllers such as Arduino 36 and Raspberry Pi, 37 has increased in the design of such systems.38,39 In addition, the use of metal matrix composites for the body of the structure has increased, which makes the skeleton robust. 40 The application of ML-based techniques for decision-making in condition monitoring is also becoming increasingly popular. 41

Lifting mechanism

The lifting mechanism consists of a lead screw coupled with a rotating gear and a nut coupled to a square shaft, which raises and lowers the load according to the rotation of the gear. The gear is driven by a pinion coupled to a stepper motor. The lifting capacity is limited to 1 kg for the purpose of prototyping.

Design of algorithms

In this section, the design of the algorithms is presented, including the A* algorithm, the algorithm for RFID, the SLAM algorithm for navigation, and the pure pursuit algorithm.

A* algorithm

A* is a best-first, or informed, search algorithm formulated in terms of weights: starting from a specific node, it aims to find a path to the given goal node with the lowest cost. 42 Standard A* search starts from a start state and expands a frontier that is prioritized by the sum of the distance travelled from the start and a heuristic estimate of the distance to the goal. 43 When the frontier reaches a goal state, an optimal path has been found, provided the heuristic never overestimates the remaining cost. Bidirectional A* has a pair of search frontiers, initialized from the start and goal states. 44 Each node on the frontiers can be described either by distance from the start plus heuristic to the goal, or by distance to the goal plus heuristic to the start, whichever is appropriate. 45 When the frontiers meet, an optimal path has been found. However, there may be multiple goal states, or there may be no distinction between start and goal states at all, for example when only a connection between a pair of nodes in a graph is needed. 46 Bidirectional search can therefore be generalized to N-directional search, where the frontier is prioritized by either the distance from the start plus the minimum of the heuristic to any of the goal states, or the distance to the goal plus the minimum of the heuristic from any of the start states. 47 Computing the heuristic multiple times may eventually become too costly to be practical, and adding many goal states to the search may negate some of the benefit. However, “tri-directional search” can be practical and advantageous, depending on the problem domain. 48

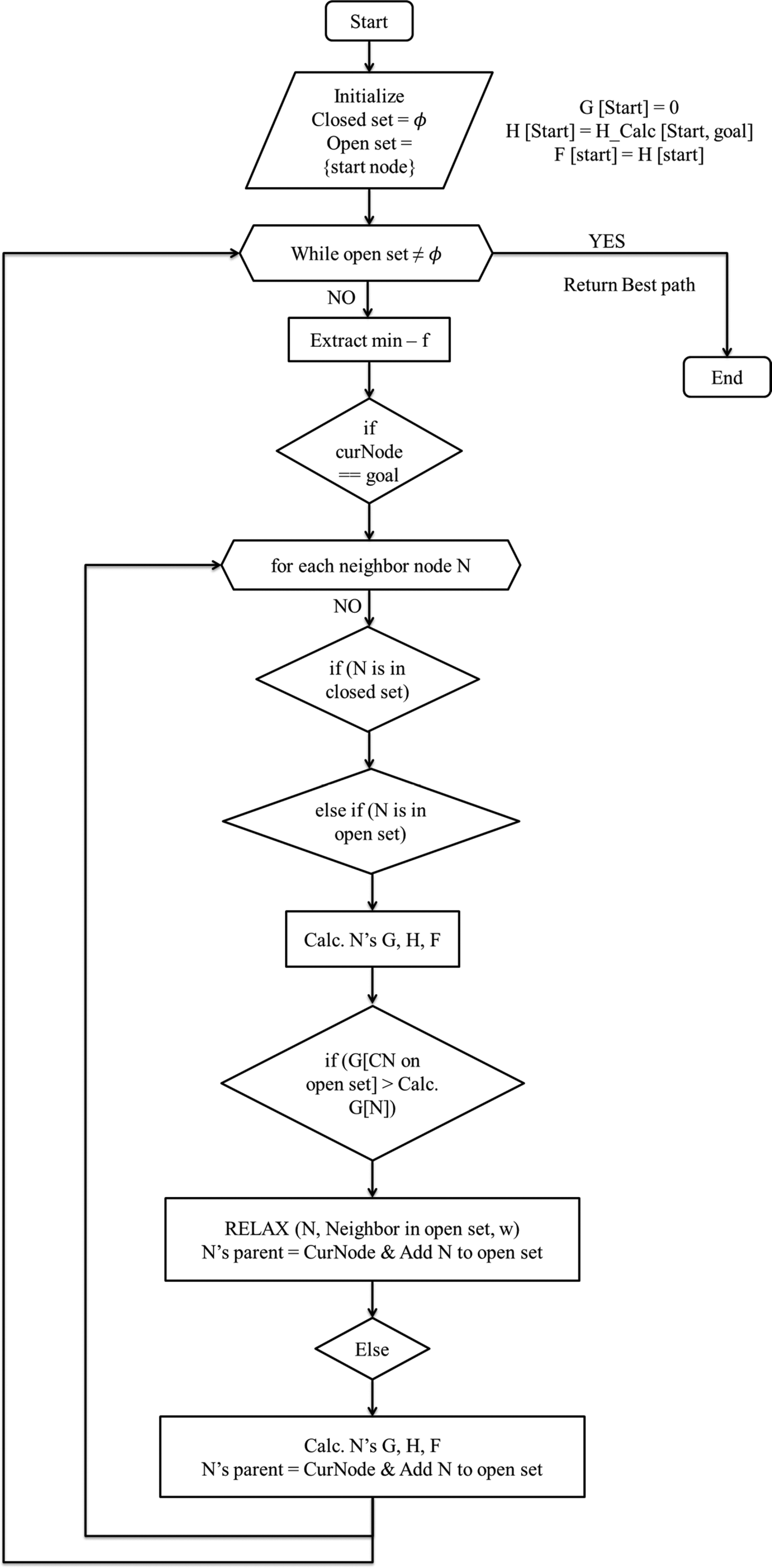

Figure 4 shows the flow chart of the A* algorithm.

Flow chart for A* algorithm.
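As a concrete illustration of single-direction A*, the sketch below searches a small occupancy grid. It is a minimal example under stated assumptions and not the MATLAB implementation used in this work: the grid is 4-connected with unit step cost, the heuristic is the Manhattan distance, and the example map is invented.

```python
import heapq

def astar(grid, start, goal):
    """Shortest 4-connected path from start to goal as a list of (row, col), or None.

    grid[r][c] == 1 marks an obstacle, 0 marks free space.
    """
    def heuristic(cell):
        # Manhattan distance: admissible for unit-cost 4-connected moves
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    rows, cols = len(grid), len(grid[0])
    frontier = [(heuristic(start), 0, start)]  # entries are (f = g + h, g, cell)
    came_from = {start: None}
    g_cost = {start: 0}

    while frontier:
        _, g, cell = heapq.heappop(frontier)
        if cell == goal:
            path = []
            while cell is not None:  # walk back through the parents
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        if g > g_cost[cell]:
            continue  # stale queue entry, a cheaper route was already found
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == 0:
                new_g = g + 1
                if new_g < g_cost.get(nxt, float("inf")):
                    g_cost[nxt] = new_g
                    came_from[nxt] = cell
                    heapq.heappush(frontier, (new_g + heuristic(nxt), new_g, nxt))
    return None

# Hypothetical map: a wall with a single gap in the third column.
grid = [
    [0, 0, 0, 0],
    [1, 1, 0, 1],
    [0, 0, 0, 0],
]
path = astar(grid, (0, 0), (2, 0))
print(path)
```

The priority queue orders cells by f = g + h, which is the "distance plus heuristic" prioritization described above; returning `None` signals that no collision-free route exists on the map.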

RFID

RFID stands for radio frequency identification; as the name suggests, the technology identifies objects by means of radio-frequency signals. An RFID system usually consists of two parts, a transmitter and a receiver. The receiver is an RFID reader module, whereas the transmitter is a card or a small key tag that responds with its own identification code. 49 No two RFID tags return the same code. The reader module contains a microchip that can recognize the RFID signal up to a certain range. The tag contains a complete circuit, and the power for transmitting the radio-frequency waves comes from a tiny capacitor within this circuit. 50 As soon as the tag is held near the module, the electromagnetic field produced by the module charges the capacitor, which acts as the power source for the tag. RFID is used by various brands to monitor their stock in warehouses. 51 An RFID system is similar to the bar code scanning system found in any supermarket, and one of its main benefits is that it is quite precise compared with other technologies. The tag is a sophisticated little device (it can be the size of a grain of rice) that contains a loop antenna, a microchip, and sometimes an external capacitor. 52 When a properly tuned reader coil comes near it, some of the radio signal emitted by the reader is absorbed by the tag's antenna, and the tag uses part of that power to operate the microchip, which then returns a digital code using nearly the same radio frequency. The code can be short (e.g., 64 bits) or very long (up to several thousand bytes). However, longer codes require more time and more power, so designers usually choose the shortest code that suits the application. 53

RFID has many uses, such as identifying and tracking items in retail stores. It is used to check inventory levels and to curb shoplifting and employee theft, in airports for tracking customer luggage, and on large construction sites for tracking workers. It can even record slip-and-fall accidents, and workers can be tracked continuously. There are many more uses for RFID, such as child tracking. As the price of tags has decreased drastically since their inception, more people are using this inexpensive method of control. 54
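One plausible way to connect a tag read to navigation is a lookup from the tag's code to a destination cell on the map. The sketch below is purely illustrative: the tag codes, the destination cells, and the function name are hypothetical and do not come from the prototype.

```python
# Hypothetical tag codes mapped to hypothetical (row, col) cells on the map.
TAG_DESTINATIONS = {
    "04A1B2C3": (2, 0),
    "04D4E5F6": (0, 3),
}

def destination_for_tag(uid_bytes):
    """Return the destination cell for a tag code, or None for an unknown tag."""
    uid = bytes(uid_bytes).hex().upper()  # e.g. [0x04, 0xA1] -> "04A1"
    return TAG_DESTINATIONS.get(uid)

known = destination_for_tag([0x04, 0xA1, 0xB2, 0xC3])
unknown = destination_for_tag([0xDE, 0xAD, 0xBE, 0xEF])
print(known, unknown)
```

Returning `None` for an unrecognized tag lets the caller keep the robot stationary instead of planning toward an undefined goal.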

SLAM algorithm for navigation

SLAM stands for simultaneous localization and mapping. 55 The two key terms are localization and mapping: the robot determines its position in an unknown environment while building a map, and finally outputs the resulting map. 56 The SLAM implementation used herein is part of a toolbox provided in MATLAB that uses pose graphs, that is, it remembers a few characteristics of previously visited sites. This technique is called bundle adjustment. Therefore, when the same site is visited again, the algorithm detects the site and closes the loop. 57 To implement SLAM, one needs an odometry sensor and a LiDAR sensor. The odometer gives an estimate of the position of the robot on the 2D map, and the LiDAR sensor helps to build the map during the learning phase. One can also use an Xbox Kinect sensor to build a 3D map, but a computer with high graphics capability is then needed to process that map. 58 SLAM is used for mapping unknown terrain while also locating the robot in the map it creates. This is important in a dynamic environment, where the surroundings are constantly changing, which is why it was used in the 2005 DARPA Grand Challenge, in which autonomous vehicles competed. SLAM was selected herein for inventory control because the environment is less dynamic, which eliminates the need for more data points. 59

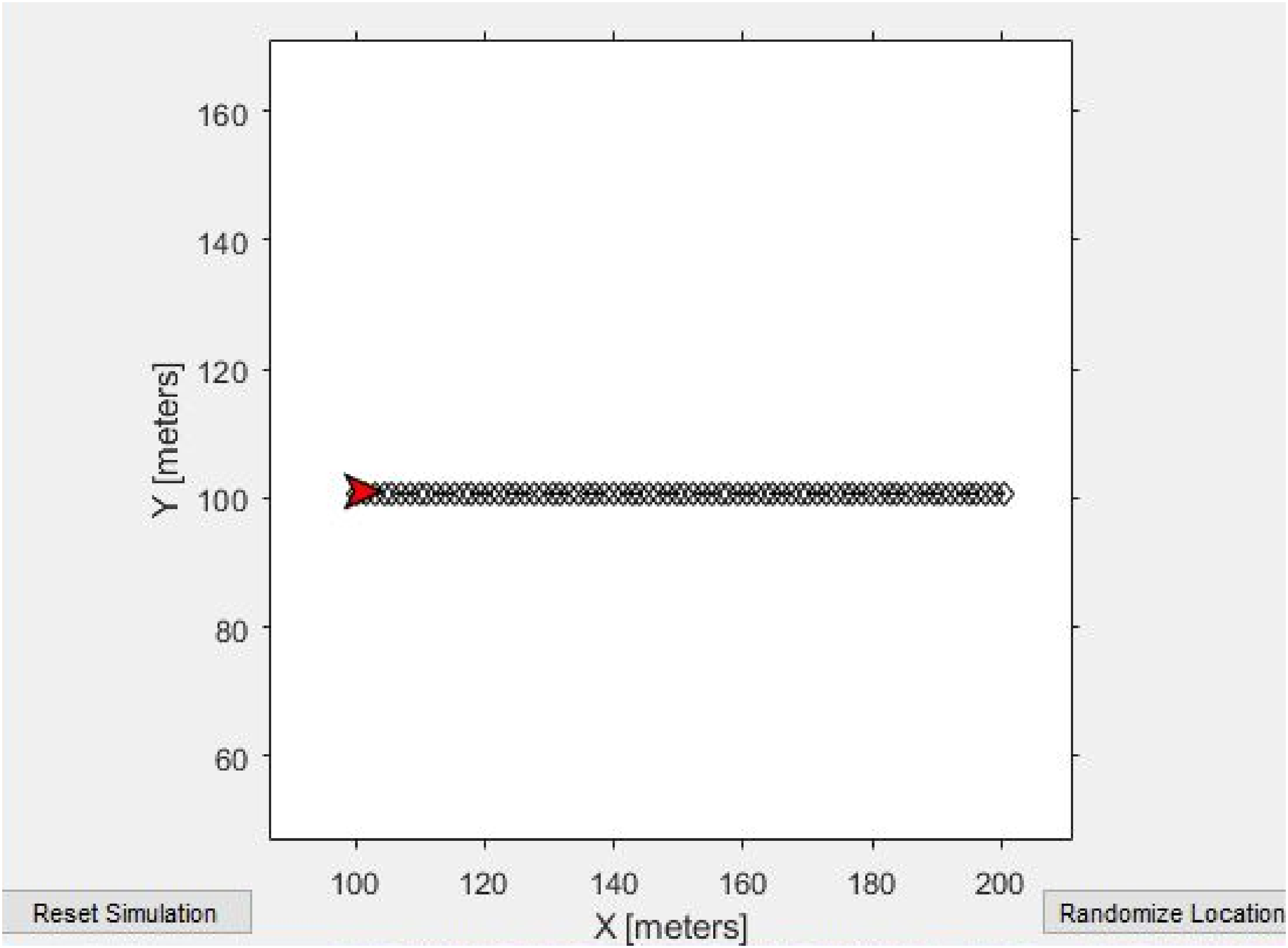
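The mapping half of SLAM can be illustrated in isolation: given an estimated robot pose, each range reading is projected into the world frame and the cell it lands in is marked as occupied. The sketch below covers only this step and assumes the pose is already known; it is not the pose-graph SLAM of the MATLAB toolbox, and the pose, scan values, and cell size are invented for illustration.

```python
import math

def scan_to_world(pose, scan):
    """Project (bearing_rad, range_m) readings into world-frame (x, y) points.

    pose is (x, y, theta) with theta in radians; readings without an echo
    (None or infinite range) are skipped.
    """
    x, y, theta = pose
    points = []
    for bearing, rng in scan:
        if rng is None or math.isinf(rng):
            continue
        angle = theta + bearing
        points.append((x + rng * math.cos(angle), y + rng * math.sin(angle)))
    return points

def occupied_cells(points, cell_size_m):
    """Set of (col, row) grid cells that contain at least one scan point."""
    return {(math.floor(px / cell_size_m), math.floor(py / cell_size_m))
            for px, py in points}

# Hypothetical pose and scan: robot at (1.1, 1.2) m facing +y, with three readings.
pose = (1.1, 1.2, math.pi / 2)
scan = [(0.0, 0.5), (-math.pi / 2, 1.0), (math.pi / 4, None)]
points = scan_to_world(pose, scan)
cells = occupied_cells(points, cell_size_m=0.5)
print(points, cells)
```

In a full SLAM pipeline the pose itself is refined as scans are matched against the map, so errors in odometry do not accumulate unchecked.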

Pure pursuit algorithm

Pure pursuit is a path-tracking algorithm based on estimating the curvature of the arc along which the vehicle moves from its current position toward its destination. 60 Its main task is to select a target point on the path and calculate the curvature of the arc that reaches it. 61 The inputs to the pure pursuit algorithm are a parameter called the LookAhead distance and the path generated for the robot by the A* algorithm. 62 As the LookAhead distance increases, the robot follows a smoother, more gradual curve. This is helpful for smooth motion of the robot, as it avoids the instantaneous turns in the shortest path generated by the A* algorithm. 63 The drawback of pure pursuit is that, as the LookAhead distance increases, the robot may overshoot the goal coordinates during the transition. To compensate, a goal range is provided instead of exact goal coordinates, that is, a range within which the robot is considered to have reached the goal.63,64

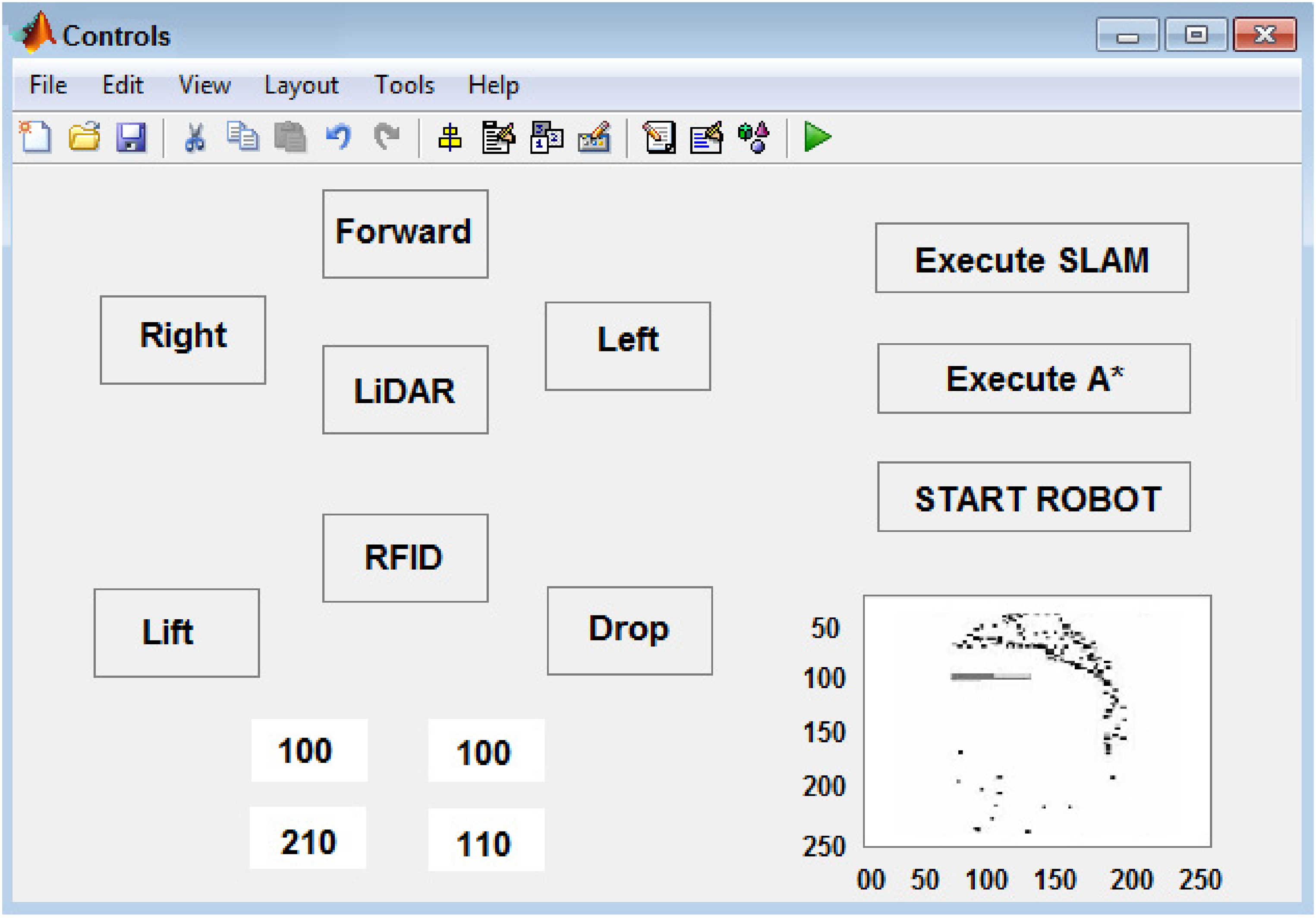
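The two ingredients described above, the curvature toward a look-ahead point and the goal range, can be sketched as follows. This uses the standard pure pursuit relation κ = 2·y_L / L², where y_L is the lateral offset of the target in the robot frame and L the distance to it. It is an illustrative sketch, not the MATLAB controller used in the prototype, and the numerical values are assumed.

```python
import math

def pure_pursuit_curvature(pose, target):
    """Curvature (1/m) of the arc from pose = (x, y, theta) through target = (x, y).

    A positive value means a turn to the left.
    """
    x, y, theta = pose
    dx, dy = target[0] - x, target[1] - y
    # Lateral offset of the target expressed in the robot's own frame
    lateral = -math.sin(theta) * dx + math.cos(theta) * dy
    dist_sq = dx * dx + dy * dy
    if dist_sq == 0.0:
        return 0.0
    return 2.0 * lateral / dist_sq

def goal_reached(pose, goal, goal_radius_m):
    """True once the robot is inside the goal range around the goal point."""
    return math.hypot(goal[0] - pose[0], goal[1] - pose[1]) <= goal_radius_m

robot = (0.0, 0.0, 0.0)  # at the origin, heading along +x
near = pure_pursuit_curvature(robot, (1.0, 1.0))
far = pure_pursuit_curvature(robot, (2.0, 2.0))
print(near, far, goal_reached((0.95, 0.0, 0.0), (1.0, 0.0), 0.1))
```

Multiplying the curvature by the commanded linear speed gives the angular velocity to send to the drive motors, and the goal check stops the robot once it is within the chosen radius even if it never lands exactly on the goal coordinates.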

Prototype development, testing and results

Figure 5 shows the prototype of the LiDAR-operated inventory control and assistance robot. Figure 6 shows the A* algorithm running to find the shortest path. Figure 7 shows the pure pursuit controller with the robot (in red). The time required for 5 scans was 6 minutes. As the path is simple and short, the time required for A* was 15 seconds, but it may increase to 28 minutes for much more complicated paths. The robot moves along the path prescribed by the pure pursuit controller and requires user input if any obstacle is detected. LiDAR is the visible or near-visible equivalent of radar. It is a system used for detecting objects (testing whether an object is present or not), ranging (determining the distance to an object), and tracking these objects. This is done with EM waves that are transmitted coherently to the receiver so that Doppler information can be extracted. LiDAR uses wavelengths in the nanometer range, whereas radar uses wavelengths from millimeters to meters. Both terms, radar and LiDAR, describe systems used for ranging and direction finding of objects, but with different technology and different purposes.

Prototype LiDAR operated inventory control and assistance robot.

A* algorithm running to find shortest path.

Pure pursuit controller with robot (in red).

RF waves travel over long distances and are reflected by large metal objects, which is why radar was originally used by the military to detect ships and aircraft. However, not much accurate detail can be extracted from these signals, so ranging and direction finding are always approximate. LiDAR is a newer technology (1960s, whereas the groundwork for radar dates back to the late nineteenth century), with the name being a portmanteau of “light” and “radar.” The interpretation as an acronym supposedly came later. With RGB-D (color camera and depth) sensors, the robot is expected to be able not only to detect objects and humans in its vicinity but also to predict their motion, thereby enhancing safety and becoming more conscious-friendly. This can be implemented using algorithms such as YOLO (you only look once), which use convolutional neural networks (CNNs) to detect objects.

Conclusion

A LiDAR system for proximity detection was designed and incorporated into an anti-collision system for vehicles and robots. The system is capable of sensing proximity up to 1.5 m with an accuracy of ±5 mm. It is useful for indoor robots and for the future development of autonomous and semi-autonomous vehicles. It is a cost-effective solution with low computational needs. The time required for operation is less than 7 minutes for simple routes but may increase to 20 minutes for scanning and finding the shortest routes over long distances. The servo control system used is unreliable and needs to be replaced with more accurate control systems. Loop closure is a major problem encountered by SLAM and needs to be addressed with more computationally capable algorithms. For dynamic environments, machine learning algorithms such as neural networks can be used to navigate the robot autonomously. A more powerful controller board such as a Raspberry Pi can eliminate the need for MATLAB, as the programs can be run directly on that platform. It can also eliminate the need for Bluetooth technology, as the range of such modules is no more than 100 feet. Integrating Wi-Fi modules can not only connect the robot to the internet for real-time data analysis but also help to monitor the condition of the robot. Active RFID technology can help increase the range from a few millimeters to a few meters, which is a considerable advantage over traditional barcode or QR scanners. Linear actuators with low frictional losses can save a lot of electrical energy, thereby reducing the number of charging cycles and increasing the battery life of the robot. A Li-ion battery is beneficial not only because of its shorter charging time but also because it is less harmful to the environment. With SLAM techniques such as visual SLAM, which uses an RGB camera along with a depth (ToF) sensor, one can generate a 3D point cloud. Inertial measurement unit (IMU) sensors can also be used to provide better odometry readings. Incorporating machine learning techniques is essential for recognizing objects in the proximity of the robot. In addition, the robot should be able to sense hazardous situations (e.g., fire) with smoke sensors and alert the people around it.

Footnotes

Acknowledgements

The manuscript was accepted and presented at the 2nd International Conference and Exposition on Advances in Mechanical Engineering (ICAME-2022), held during 23–25 June 2022 at the College of Engineering Pune (COEP), Maharashtra, India.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.