Abstract

This work is the first to address vehicle trajectory prediction under extreme handling conditions relevant to drift-assist ADAS, extending the operational envelope of current trajectory prediction approaches beyond normal/near-linear driving regimes. The proposed framework is based on physics and driver gaze to predict the driver’s desired course during drifting. We introduce kinematics-based models for trajectory prediction to consider the unique vehicle dynamics in drifting including high sideslip and counter steering. Dynamics-based models are also utilized to account for the driver’s desired yaw rate and sideslip angle. Moreover, the driver’s gaze behavior during drifting is analyzed and two gaze-based travel-point and waypoint models are further adopted for trajectory prediction. In order to fuse the predictions from the above models, a t-distribution-based regression is applied to accommodate more outliers in the extreme drifting maneuver. Furthermore, a Gaussian process-based online learning model is deployed using the prediction error of previous timesteps to correct the current prediction according to vehicle and driver states. Driver-in-loop drifting data collected from the driving simulator of Cranfield University is utilized for validation of the effectiveness of the proposed framework.

Keywords

Introduction

Drifting is a distinctive cornering method defined by high sideslip and counter steering, where the steering input opposes the yaw rate direction.1 The vehicle operates at its handling limit with the rear tyres fully saturated in adhesion,2–7 which requires coordinated throttle and steering inputs to maintain the vehicle at an unstable equilibrium.8,9 This technique requires advanced driving skills or the intervention of a sophisticated control system.

Significant research has been reported in the area of autonomous drifting to demonstrate the capability of vehicle dynamics controllers in extreme operating conditions. The desired vehicle trajectory in such cases is determined by the path planning layer10–19 of the autonomous driving controller, where various control methods11,13,20–32 are applied. In contrast to autonomous drifting, drift assist control belongs to the field of Advanced Driver Assistance Systems (ADAS), where the driver is expected to remain responsible for path tracking; trajectory prediction is therefore essential in our proposed drift assist control framework.33 After driver drift intention recognition,34 trajectory prediction interprets the desired trajectory and serves as the reference for the subsequent drift assist control concepts proposed in our work.35,36 With trajectory prediction, the assist system can better intervene in the vehicle motion during drifting to override unreasonable driver inputs37 or simply help the driver maintain the desired course.36 This study concentrates exclusively on trajectory prediction as the interface between driver intention and our proposed drift-assist control concepts.35,36 Novel low-level drift tracking controllers utilizing this prediction will be presented in future work.

Trajectory prediction in normal driving has been studied extensively.38,39 The approaches are generally classified into three types: physics-based, which depends on a vehicle dynamics40,41 or kinematics42–47 model and its state equations in an iterative process; maneuver-based, which considers future vehicle motions and the driver’s intentions (e.g., lane changing); and interaction-aware, where the vehicle interacts with other road participants, for instance multi-vehicle interactions and lane-change decision-making using social and game-theoretic frameworks.48–50 Categorizations based on different algorithms51–53 can also be found, where learning-based approaches are discussed in detail to better consider road and interaction-related input information.

In contrast to normal driving, drifting is a more challenging scenario for trajectory prediction since the prediction error increases significantly when extreme maneuvers are involved. 54 In this article, given that drifting is a racetrack maneuver not suggested on public roads, we do not take into account surrounding vehicles or any prior map information. Instead, our focus is solely on internal vehicle signals, with novel kinematics and dynamics models proposed to consider the unique vehicle motions during drifting.

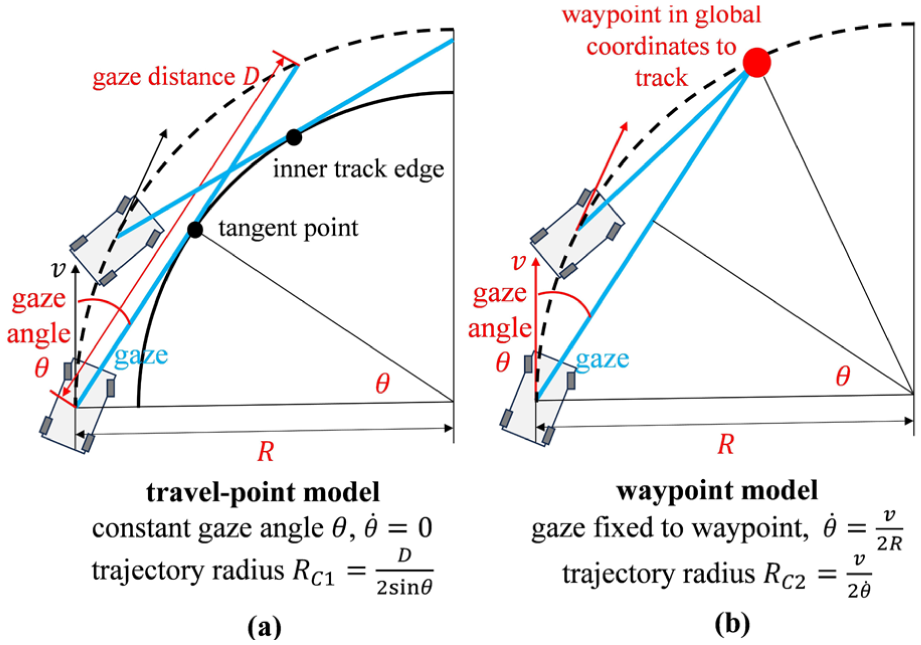

Moreover, this article considers the driver’s gaze behavior for drift trajectory prediction. A previous study55 has suggested that gaze information is vital to understanding the trajectory demand of a human driver. In normal cornering, both the longitudinal56,57 and lateral55,58 vehicle behaviors correlate strongly with gaze movements. Recent studies have discussed the possibility of using gaze angle to guide steering control design.59 Unlike the above gaze studies of cornering in normal driving and racing, this article first discusses the gaze behaviors observed in drift cornering, and then implements two gaze-based trajectory prediction models60–62 for drift trajectory prediction.

In order to fuse the data from different prediction models and to improve the trajectory prediction accuracy, earlier studies42,47,63 have introduced the Kalman filter (KF), which assumes that the prediction model’s errors follow a normal distribution (white noise). More recently, the Interacting Multiple Model (IMM)64–69 has been applied to account for various vehicle motion behaviors (such as velocity tracking or distance keeping65) and to switch between different prediction models (e.g., the CV or CA model67) accordingly, with each model having its own KF.

Unlike the aforementioned studies, which presume that the error from a given prediction model follows a normal distribution, this article adopts a t-distribution regression that accommodates fewer samples and more outliers,70,71 making it more suitable for extreme maneuvers with heavy-tailed noise.72–74 Furthermore, rather than distinguishing vehicle motions between a limited number of behavior modes through IMM, we also propose an online learning-based approach that corrects the model outputs using historical data (detailed in section “The Gaussian process-based learning model”).

The trajectory prediction framework

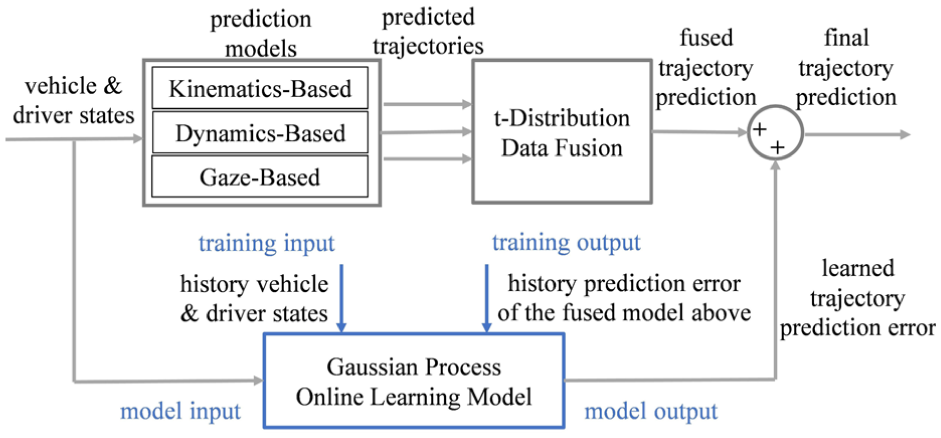

To serve in our proposed drift assist control framework, 33 interpreting the desired trajectory after driver drift intention recognition 34 and providing reference for the subsequent drift assist control concepts,35,36 this article proposes the following framework as shown in Figure 1 for trajectory prediction during drifting. The novelties and contributions are as follows:

(1) The introduction of physics-based drift trajectory prediction models, namely kinematics-based drift trajectory prediction models (Model A) that capture the unique vehicle dynamics behaviors in drifting, including high sideslip angle (A1) and counter steering (A2); and dynamics-based drift trajectory prediction models (Model B) to account for the desired yaw rate (B1) and sideslip angle (B2) from the driver’s inputs.

(2) The adaptation of gaze-based trajectory prediction models (Model C) for drifting. After analyzing the gaze behaviors observed during drifting, two prediction models (travel-point and waypoint, C1 and C2) are adopted and fused for output.

(3) A t-distribution-based data fusion of the predictions from the above models, which accommodates fewer samples and more outliers and is therefore more suitable than a normal distribution for extreme maneuvers like drifting.

(4) A Gaussian process-based online learning model based on vehicle and driver states, in order to learn the trajectory prediction error of the fused output above, and improve the accuracy of the final trajectory prediction.

Framework of this article.

The driving simulator to provide data for validation

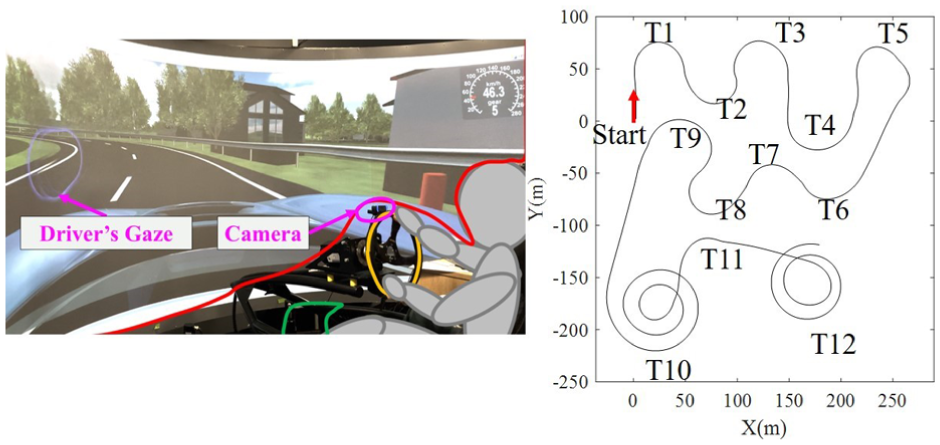

Driver-in-loop data is collected using the driving simulator of Cranfield University to support the evaluation of the trajectory prediction model proposed in this article. Detailed specifications of the simulator are given in our previous studies.33,35 In this work we added a camera to capture the driver’s gaze behavior with the Beam Eye Tracker software.75 The drift track, shown in Figure 2, includes both turning directions, different radii (20–50 m), and curvature-varying corner segments (i.e., T10, T12). The experimental dataset includes three drivers (students from Cranfield University, none of whom is a professional drift driver). For the multi-driver evaluation (Table 1), four laps from each driver are used (12 laps in total).

The driving simulator and the drift track.

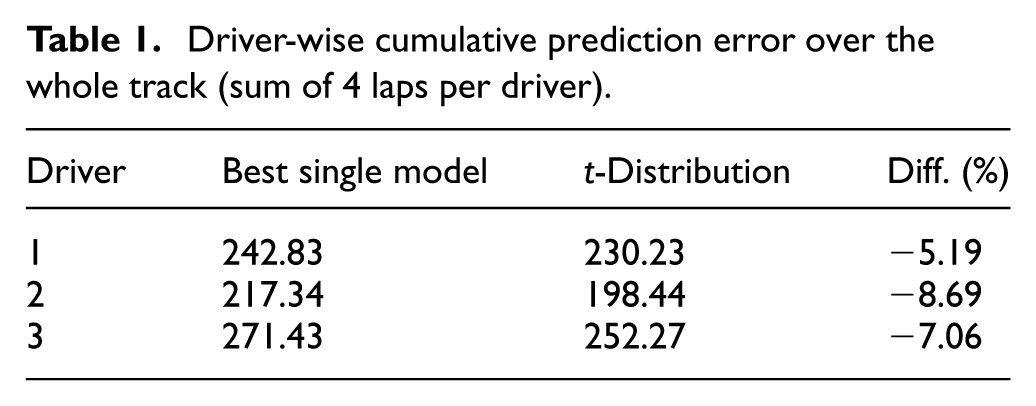

Driver-wise cumulative prediction error over the whole track (sum of 4 laps per driver).

The physics and gaze-based trajectory prediction model for drifting

Given that drifting is a racetrack maneuver where no other vehicles or obstacles are present, this article presents a trajectory prediction framework that relies only on vehicle physics (Model A: kinematics-based and Model B: dynamics-based models) and the driver’s gaze behaviors (Model C: gaze-based models).

Model A: Kinematics-based trajectory prediction model

Previous studies have extensively discussed kinematics- and dynamics-based models for trajectory prediction. Both types rely on a particular vehicle model that is propagated iteratively. In contrast to dynamics-based models (normally the bicycle model40,41), kinematics models ignore detailed dynamics such as tyre forces and mainly depend on the current vehicle states and the driver’s input. Kinematics models are therefore more commonly used for trajectory prediction because of their low computational complexity.52 For example, the “Constant Acceleration (CA)” model42 is applied and compared with the “Constant Turn Rate Constant Tangential Acceleration (CTRA)” model.43 The CTRA model44 is considered together with the “Constant Turn Rate and Velocity (CTRV)” model and the “Constant Curvature and Acceleration (CCA)” model. Results based on the above models are also discussed in other studies.45–47
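To make the iterative propagation concrete, the following is a minimal sketch of a CTRV rollout in Python. It is a generic textbook formulation, not the implementation used in this article. The function name `ctrv_rollout`, its arguments, and the default step of 0.1 s over 10 steps are illustrative assumptions, and `heading` here denotes the direction of the velocity vector (which differs from the body yaw angle when sideslip is large).

```python
import math

def ctrv_rollout(x, y, heading, speed, yaw_rate, dt=0.1, steps=10):
    """Propagate a Constant Turn Rate and Velocity (CTRV) model.

    Assumed inputs (hypothetical names): position (x, y) in m, heading of the
    velocity vector in rad, speed in m/s, yaw_rate in rad/s.
    Returns a list of (x, y, heading) tuples, one per future timestep.
    """
    trajectory = []
    for _ in range(steps):
        if abs(yaw_rate) > 1e-6:
            # Exact integration along a circular arc of radius speed / yaw_rate.
            new_heading = heading + yaw_rate * dt
            x += speed / yaw_rate * (math.sin(new_heading) - math.sin(heading))
            y += speed / yaw_rate * (math.cos(heading) - math.cos(new_heading))
        else:
            # Straight-line limit when the turn rate is (almost) zero.
            new_heading = heading
            x += speed * math.cos(heading) * dt
            y += speed * math.sin(heading) * dt
        heading = new_heading
        trajectory.append((x, y, heading))
    return trajectory
```

With a speed of 10 m/s and a turn rate of 0.5 rad/s, every predicted point lies on a circle of radius 20 m, which is the constant-radius behavior this model family assumes.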

Together with the aforementioned CTRV, CTRA, and CCA models, which were originally proposed for normal cornering, this article further develops the following kinematics models for trajectory prediction to consider the unique vehicle behaviors in drifting (i.e., high sideslip angle and counter steering1,2,35,36).

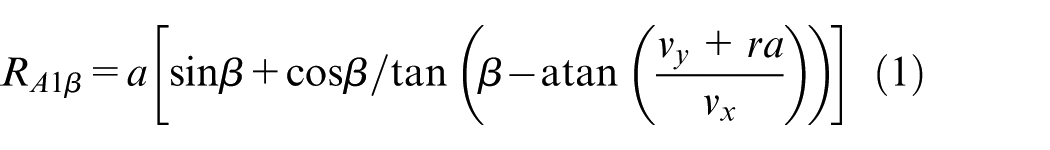

(1) Model A1: Sideslip-based kinematics model

As previously mentioned, drifting is a distinctive cornering technique characterized by high sideslip angle,1,2,35,36 which must be considered in the kinematics model. Given the sideslip angle

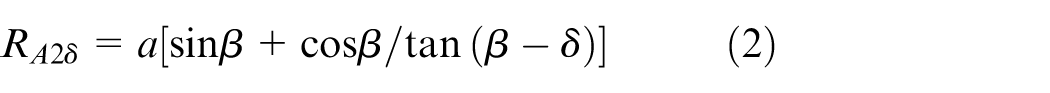
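As background for why sideslip matters in a kinematics model, the sketch below uses the standard relation that the direction of travel is the yaw angle plus the sideslip angle, so the path curvature depends on yaw rate plus sideslip rate. This is a generic identity and is not claimed to be equation (1) of this article; the function names and the sign convention (sideslip measured from the body x-axis to the velocity vector) are assumptions.

```python
import math

def velocity_direction(yaw, sideslip):
    """Direction of the velocity vector in global coordinates (rad).

    Assumed convention: sideslip is the angle from the vehicle body x-axis
    to the velocity vector at the centre of gravity.
    """
    return yaw + sideslip

def path_radius(speed, yaw_rate, sideslip_rate=0.0):
    """Signed radius of the path followed by the centre of gravity (m).

    The velocity direction changes at yaw_rate + sideslip_rate, so
    radius = speed / (yaw_rate + sideslip_rate). In a steady-state drift
    the sideslip rate is zero and this reduces to speed / yaw_rate.
    """
    course_rate = yaw_rate + sideslip_rate
    if abs(course_rate) < 1e-9:
        return math.inf  # straight-line motion
    return speed / course_rate
```

For example, at 15 m/s and a yaw rate of 0.5 rad/s the steady-state radius is 30 m, while a growing sideslip angle (0.25 rad/s) tightens the path to 20 m even though the yaw rate is unchanged.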

(2) Model A2: Steering angle-based kinematics model

In addition to the sideslip angle, we further consider the other crucial aspect of drifting: counter steering (the steering direction opposes the yaw rate), which is not explicitly included in (1). Notably, for steady-state drifting, while the rear wheels may be fully saturated with a high sideslip angle, the front wheels are typically unsaturated with a limited sideslip angle (i.e., <7 deg according to a previous study,2 with similar findings in the literature8,21). This configuration allows the front axle to adjust lateral force, enabling the driver to change the vehicle’s direction in drifting through steering. Therefore, by assuming the sideslip angle of the front axle to be zero, the kinematics radius based on steering angle

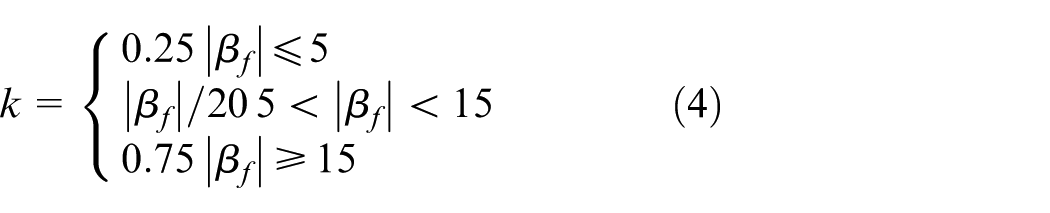

(3) Data fusion of Model A1 and A2

Next, we propose a weighted function to integrate the kinematics-based radii (

Sideslip-based (a) and steering angle-based (b) trajectory prediction for drifting.

Specifically, for drifting on a track with constant radius, our previous studies33,35 indicate the driver must coordinate the steering with CoG sideslip angle to maintain the intended trajectory. For instance, when the driver demands more CoG sideslip by applying throttle, additional counter-steer is required to balance the yaw moment and to stay on track, while simultaneously keeping the front-axle sideslip low, as discussed in the previous section. In this sense, throughout drifting the front axle typically operates in an unsaturated region with low sideslip, enabling counter steering to serve as a stabilizing input rather than a direct determinant of drift radius.

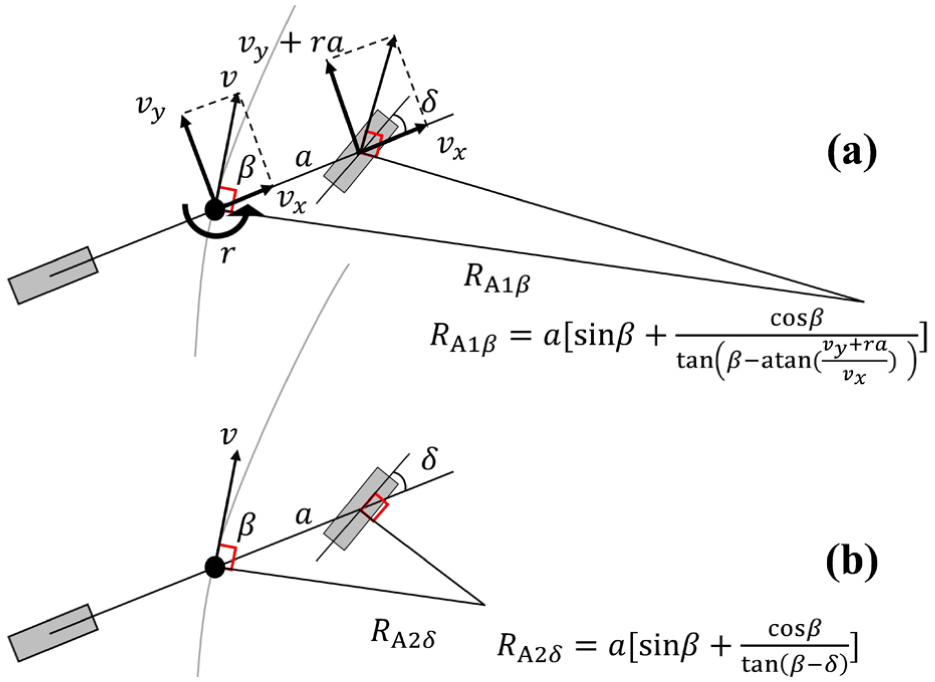

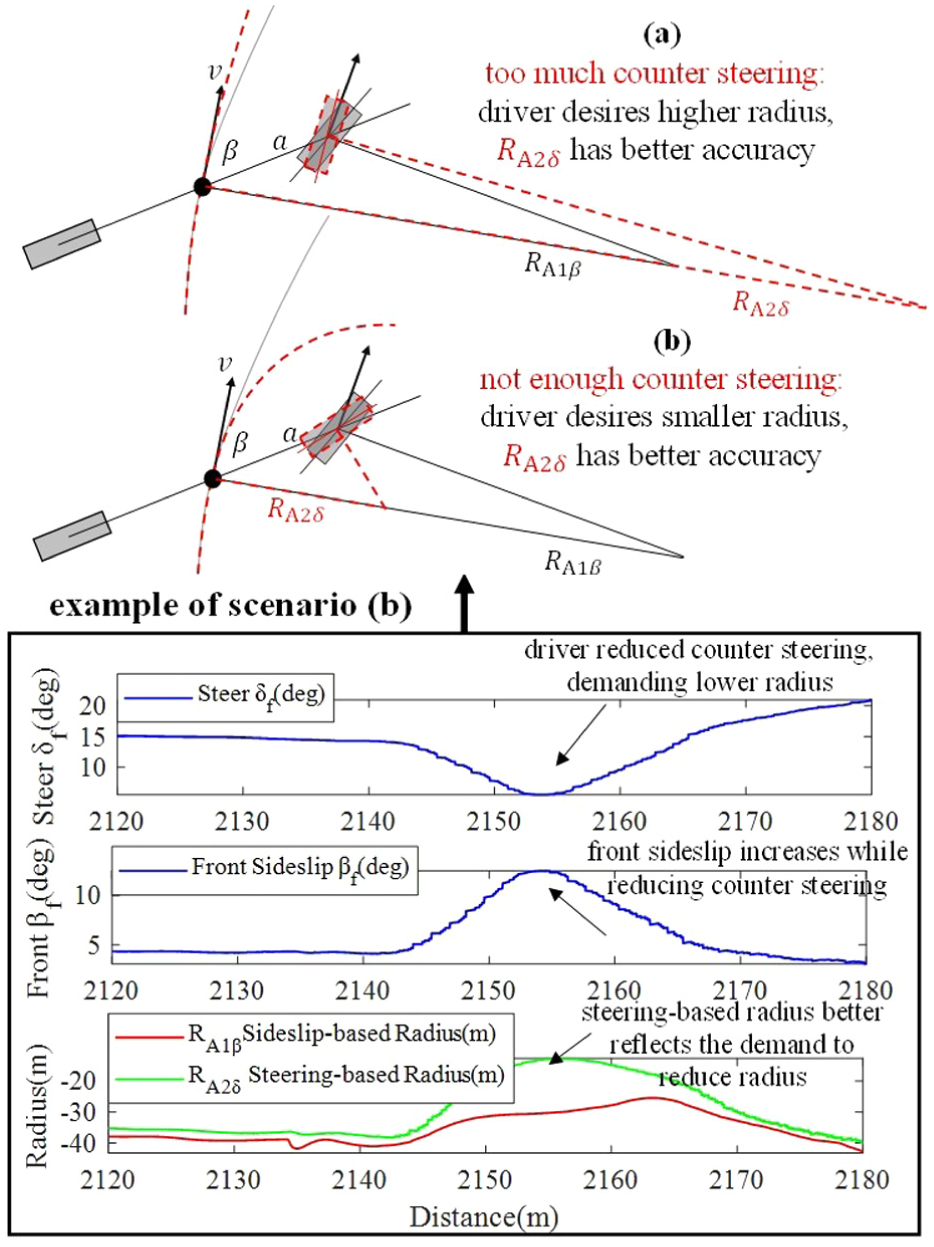

But what if the driver applies a “wrong” amount of counter steering and a greater sideslip for the front axle? Two scenarios are considered and illustrated in Figure 4. Firstly, when the counter steering is excessive, it is presumed that the driver is requesting a higher radius (Figure 4(a)). Conversely, when the counter steering is insufficient, it is presumed that the driver demands a lower radius (Figure 4(b)). In both scenarios, the steering-based radius

Based on the above observations, the following weight function (3) is designed to calculate a fused kinematics-based radius

It should be noted that counter-steering does not monotonically determine the drifting radius. Instead, the steering input is used as an indicator of the driver’s intended direction of radius modification, which becomes meaningful only when the front-axle sideslip deviates from its typical unsaturated level during drifting.

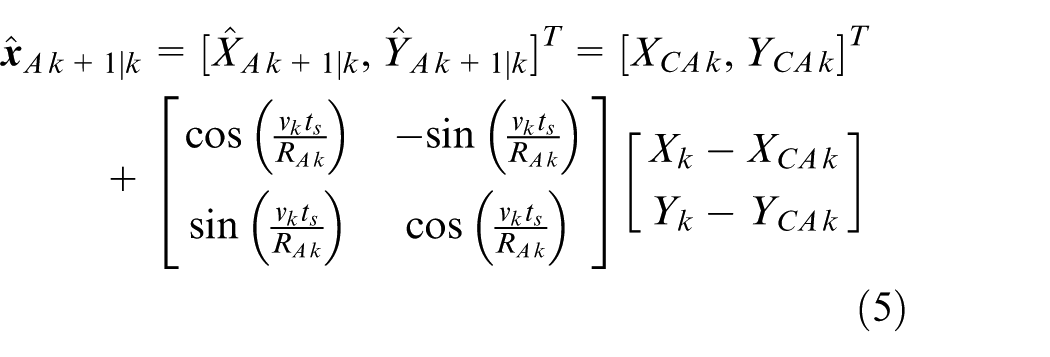

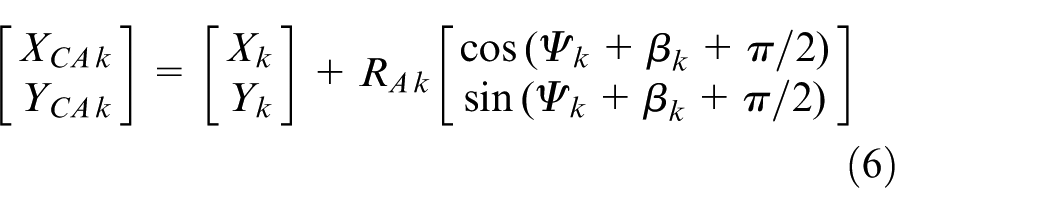

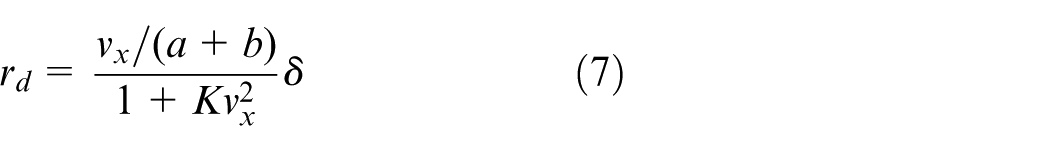

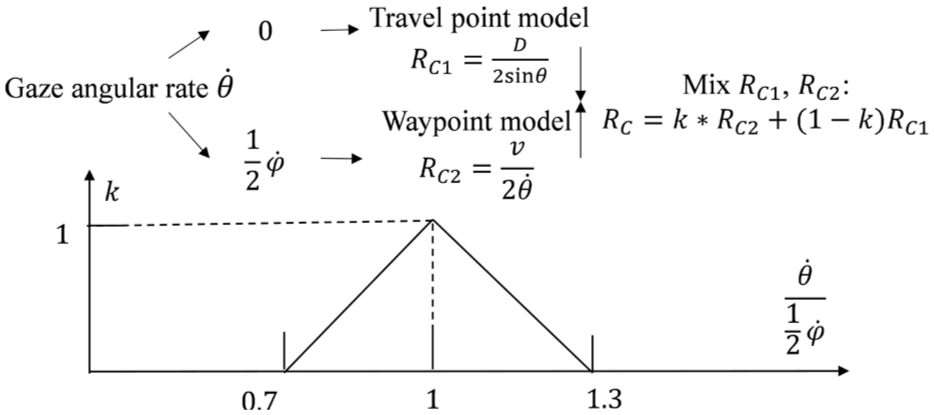

(4) Trajectory calculation using Model A

The predicted vehicle trajectory at timestep

Trajectory calculation using Model A.

Model B: Dynamics-based trajectory prediction model

Dynamics models are capable of considering more intricate details in vehicle modelling, such as vehicle mass and tyre adhesion.40,41 Nevertheless, this does not ensure improved accuracy in trajectory prediction,52 because the result depends on precise vehicle modelling, and an imprecise model risks introducing greater error. Furthermore, these models primarily depend on the current state of the vehicle without considering the driver’s inputs in future timesteps. Despite these limitations, this article considers dynamics-based models for their capability to handle detailed vehicle behaviors such as the variation of sideslip angle, which is essential for drifting.

This article will present two dynamics-based models in the subsequent sections, both of which are derived from a single-track vehicle model, with modifications to account for drifting behaviors.
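For orientation, the sketch below shows the textbook steady-state yaw rate of a linear single-track (bicycle) model, before any drift-specific modification. It is only the linear baseline and not the modified model of this article. The function and parameter names are assumptions: `lf` and `lr` are the distances from the centre of gravity to the front and rear axles, and `cf` and `cr` are axle cornering stiffnesses in N/rad.

```python
def understeer_gradient(mass, lf, lr, cf, cr):
    """Understeer gradient K in rad per (m/s^2) for a linear single-track model."""
    wheelbase = lf + lr
    return mass / wheelbase * (lr / cf - lf / cr)

def steady_state_yaw_rate(speed, steer_angle, mass, lf, lr, cf, cr):
    """Steady-state yaw rate (rad/s) for a road-wheel steering angle (rad).

    Follows from steer_angle = wheelbase / R + K * speed^2 / R and
    yaw_rate = speed / R, valid only in the linear tyre range.
    """
    wheelbase = lf + lr
    k_us = understeer_gradient(mass, lf, lr, cf, cr)
    return speed * steer_angle / (wheelbase + k_us * speed ** 2)
```

With a neutral-steer setup (equal axle distances and stiffnesses) the expression collapses to `speed * steer_angle / wheelbase`. Because the steering angle and yaw rate always share the same sign here, the formula cannot represent counter steering, which is why a drift-specific modification is needed.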

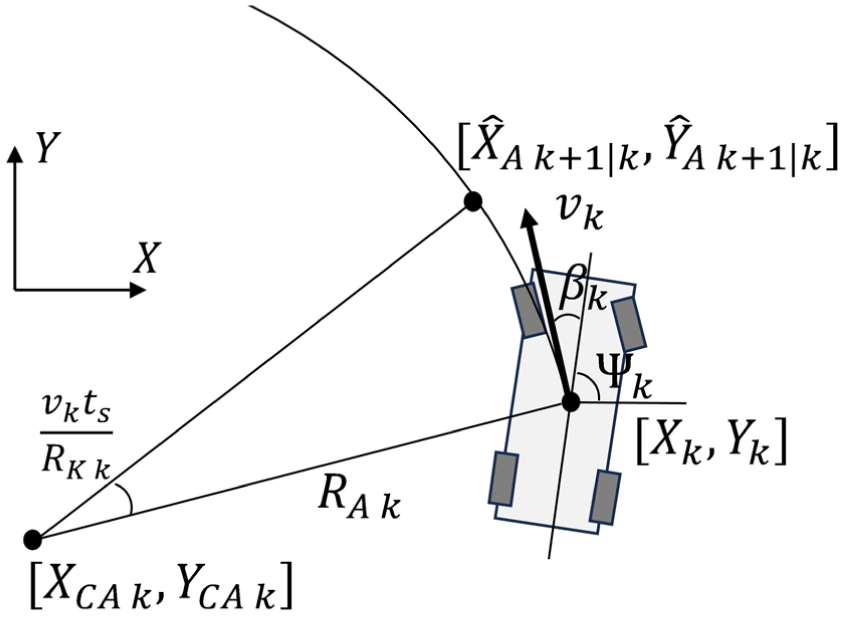

(1) Model B1: Desired yaw rate-based dynamics model

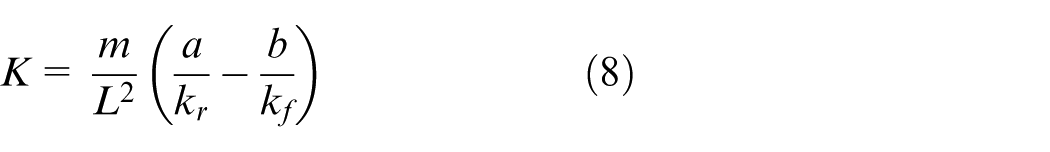

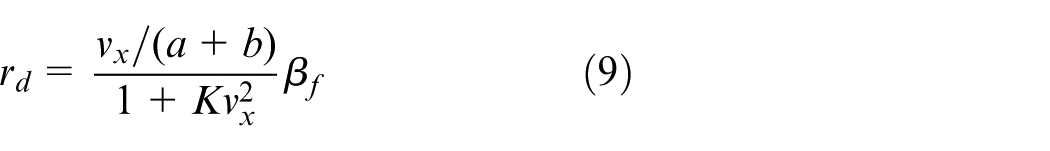

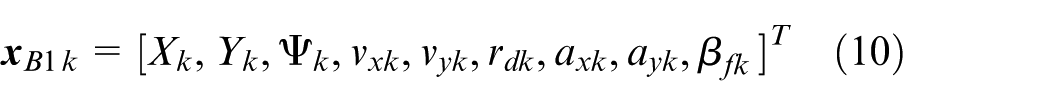

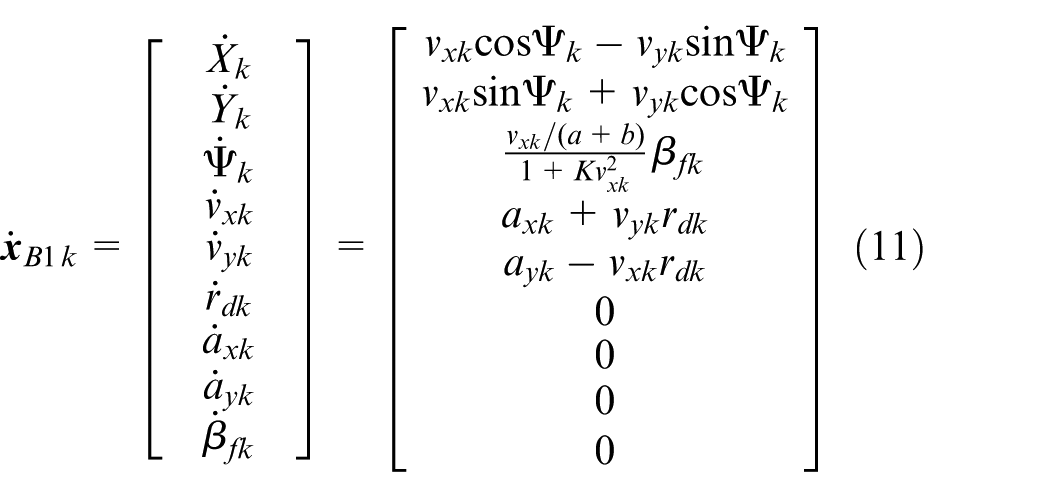

We first consider the linear single-track vehicle model76 and its steady-state yaw rate

But in the context of drifting (which operates outside the validity of the linear approximation), the above equation for desired yaw rate cannot be applied directly, as drifting is a cornering technique that involves counter steering.1,2,35,36 However, as discussed in the previous section of Model A2, despite counter steering, the front wheels always have unsaturated sideslip angle,2,8,21 to provide lateral force that allows the driver to alter the vehicle’s direction (yaw) in drifting through steering and track the desired path.35,77,78 Therefore, assuming small sideslip angle for the front tyres, we replace the steering angle

(2) Trajectory calculation using Model B1

Assuming the actual yaw rate follows the above desired yaw rate

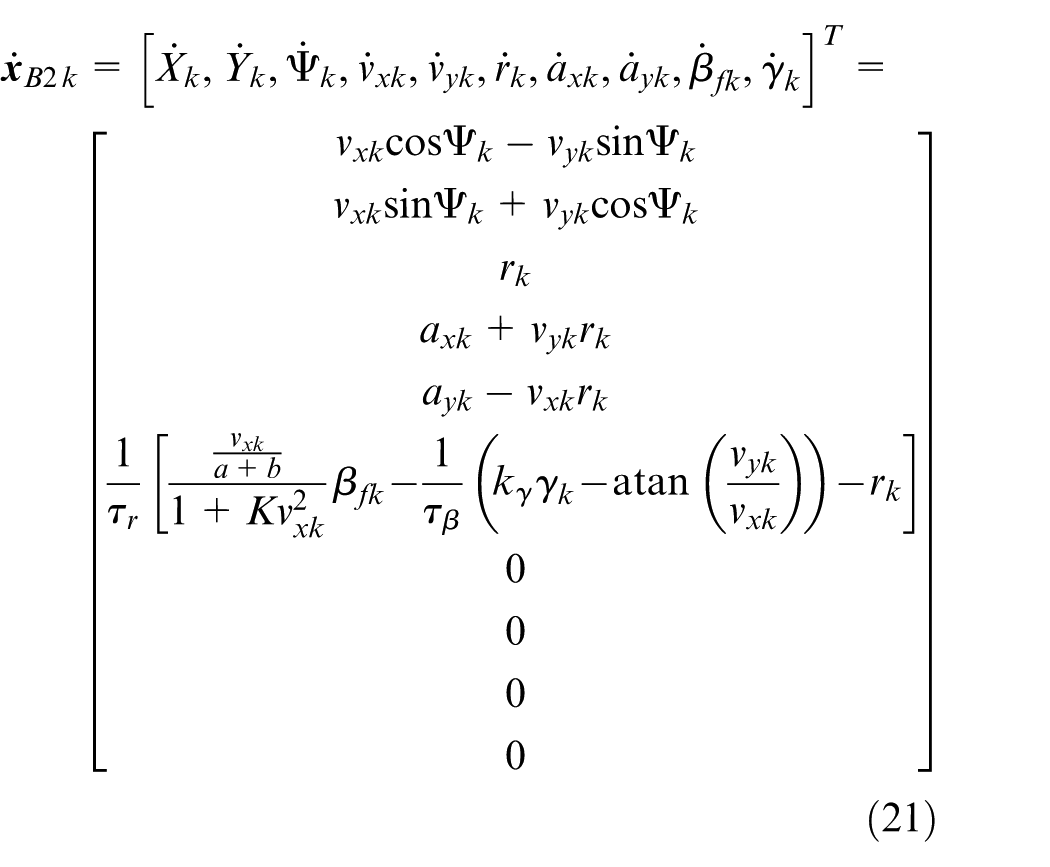

(3) Model B2: Desired yaw rate and desired sideslip-based dynamics model

In order to better reflect the vehicle motions during drifting, we expand upon the previous yaw rate-based dynamics model by further incorporating the “desired” sideslip dynamics according to the driver’s throttle input. Previous research10,21 has indicated that during steady-state drifting the sideslip angle is strongly related to the drive force/slip ratio of the rear wheels. In our previous studies33,35 we also proposed an intuitive drift control concept that interprets the desired sideslip angle based on the driver’s throttle input, which was subsequently validated through a human trial. Based on the above discussion, the desired sideslip

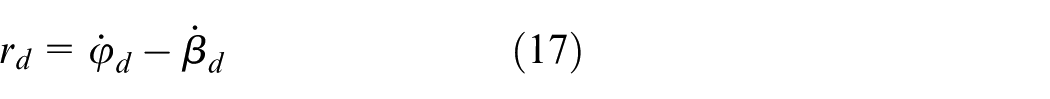

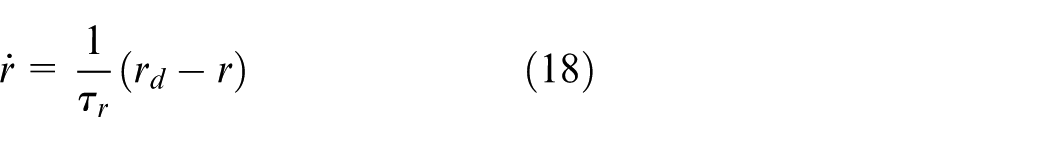

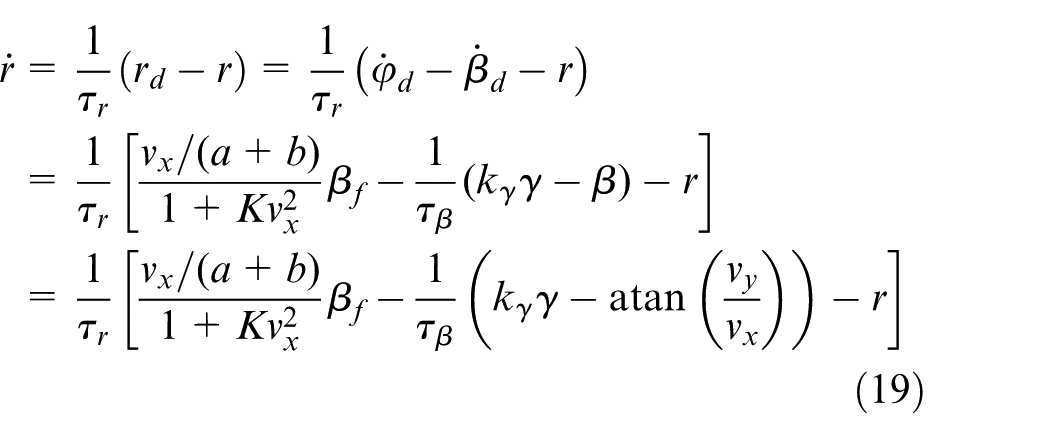

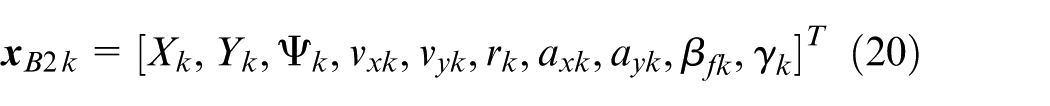

Besides the sideslip dynamics, we also take into account the path angle rate

With the above desired path angle rate

Then, substituting (13)–(17) into (18), we have:

(4) Trajectory calculation using Model B2

Similar to the previous Model B1 (10–12) but with desired sideslip dynamics involved, the prediction of this desired yaw rate and sideslip-based model for timestep

Model C: Gaze-based trajectory prediction model

An earlier study55 has already demonstrated the importance of gaze information in understanding the driver’s desired trajectory. In detail, for longitudinal vehicle motion (straight-line acceleration/deceleration), the gaze preview distance (the longitudinal distance ahead of the vehicle at which the driver is looking) is normally around 1–3 s ahead of the vehicle.56,57 During cornering, a correlation is observed between gaze angle and vehicle yaw rate, and an increase of the gaze angle is reported approximately 1 s before the vehicle begins to rotate (in yaw).55,58

In this section we first discuss the gaze behaviors observed in drifting, as opposed to the normal driving or racing conditions considered in previous studies. Two gaze-based trajectory prediction models are then introduced: the travel-point model (C1) and the waypoint model (C2).60–62 A fusion method is further proposed in this article to post-process the outputs from both models.

(1) Gaze behaviors in drifting

Before introducing the detailed trajectory prediction models, we examine whether the above gaze patterns discovered in normal driving are valid in drifting.

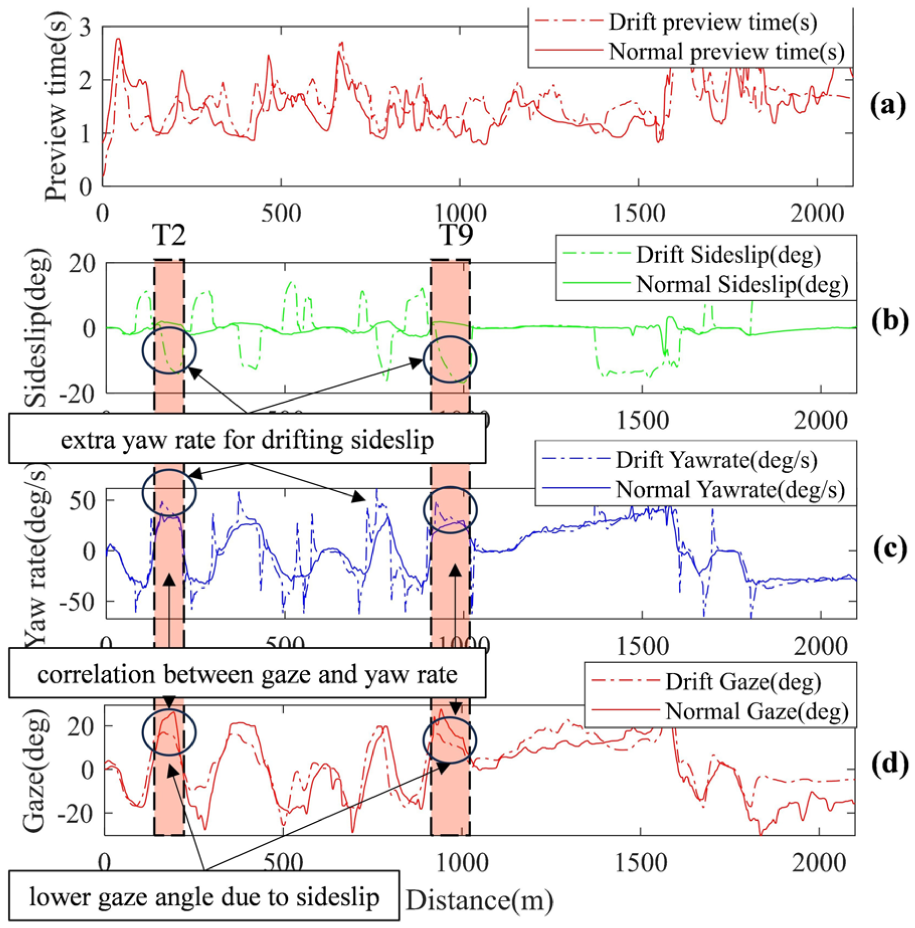

For both drifting and normal driving, the data collected from our driver-in-loop test (one driver completing one drifting lap and one normal cornering lap, Figure 2) generally agrees with the findings of previous studies.55–58 Longitudinally, the preview time for both drifting and normal driving is around 1–3 s (Figure 6(a)). The preview time is the longitudinal preview distance divided by the vehicle speed, where the preview distance is determined by the gaze pitch angle and the vertical position of the eyes above the ground. Laterally, we take the time intervals T2 and T9 as examples, as shown in Figure 6(c) and (d). An overall correlation is observed between yaw rate and gaze angle, both in drifting and in normal driving. In drifting, a higher yaw rate (Figure 6(c)) generates high sideslip (Figure 6(b)), so that the vehicle body rotates deeper towards the corner. Consequently, a smaller gaze angle (Figure 6(d)) is required to track the same point on the path (e.g., the apex of a corner) compared to normal driving.
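The preview-time calculation described above can be sketched as follows. This assumes flat ground and a gaze pitch angle measured downward from the horizontal; the function name and arguments are hypothetical and the exact conventions of the eye tracker are not specified in the text.

```python
import math

def preview_time(eye_height, gaze_pitch_down, speed):
    """Preview time in seconds from gaze pitch, eye height and vehicle speed.

    eye_height: vertical position of the eyes above the ground (m).
    gaze_pitch_down: gaze pitch below the horizontal (rad), assumed positive.
    speed: vehicle speed (m/s).
    """
    if gaze_pitch_down <= 0.0 or speed <= 0.0:
        return math.inf  # gaze at or above the horizon, or vehicle at rest
    # Flat-ground geometry: the gaze ray meets the road at this distance.
    preview_distance = eye_height / math.tan(gaze_pitch_down)
    return preview_distance / speed
```

As an illustration with made-up numbers, eyes 1.2 m above the ground looking at a point 30 m ahead while travelling at 15 m/s give a preview time of 2 s, which falls inside the 1–3 s range reported above.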

Gaze behaviors: ((a) preview time, (d) gaze angle) and vehicle motions ((b) sideslip, (c) yaw rate) comparison between drifting and normal driving.

(2) Model C1: travel-point gaze model for trajectory prediction

As shown in Figure 7(a), given a corner with fixed radius, the travel-point model relies on the assumption that the gaze angle (

(3) Model C2: Waypoint gaze model for trajectory prediction

In contrast to the travel-point model, recent studies57,60,82 have suggested that the waypoint model is predominantly used by human drivers. The gaze tracks a certain point in the global coordinates,60 and the retinal image is stabilized by compensatory eye movements (CEM),83 therefore

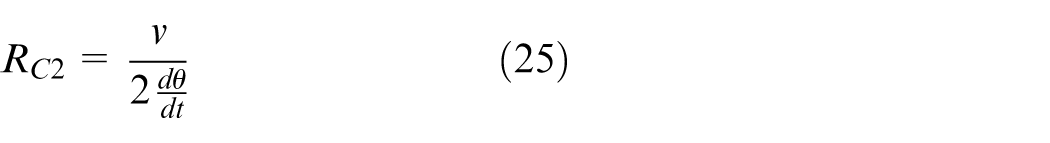

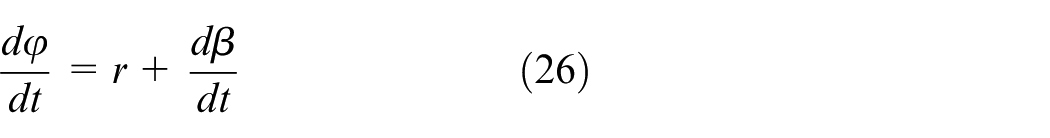

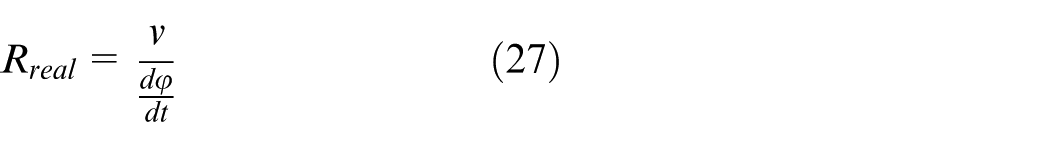

As shown in Figure 7(b), the waypoint model assumes a waypoint to be tracked by gaze in the global coordinates, so the relationship between gaze angle rate

(4) Data fusion of Model C1 and C2

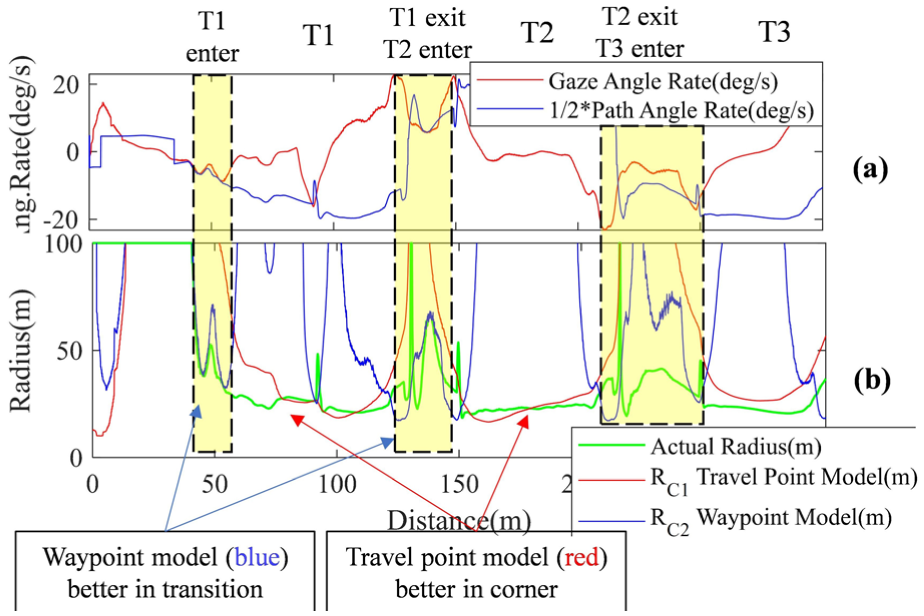

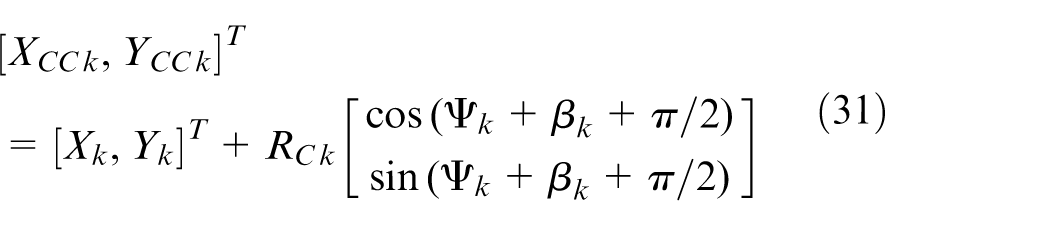

As will be demonstrated in this section, there are occasions where each of the two gaze-based models outperforms the other. Therefore, we propose a method to integrate the two models in such a manner that we attain an improved prediction of the path throughout the vehicle’s operational range. To mix the results from the above two gaze models, we further consider the path angle rate

Assuming that the gaze behavior is following the waypoint model (

To justify this, the gaze angular rate

Gaze angle rate (a) and predicted radius (b) comparison between the travel-point and waypoint models.

Based on the above observations, the following weight function (Figure 9 and equation (29)) is designed to predict the desired radius based on gaze (the gains are selected by manual tuning). Specifically, when the gaze angular rate shows more dependency on the path angle rate

It should be noted that the adopted gaze models are not intended to describe a universal driver gaze policy, but rather serve as practical abstractions for trajectory prediction in drifting scenarios, where systematic gaze studies are still limited and to be developed in future work.

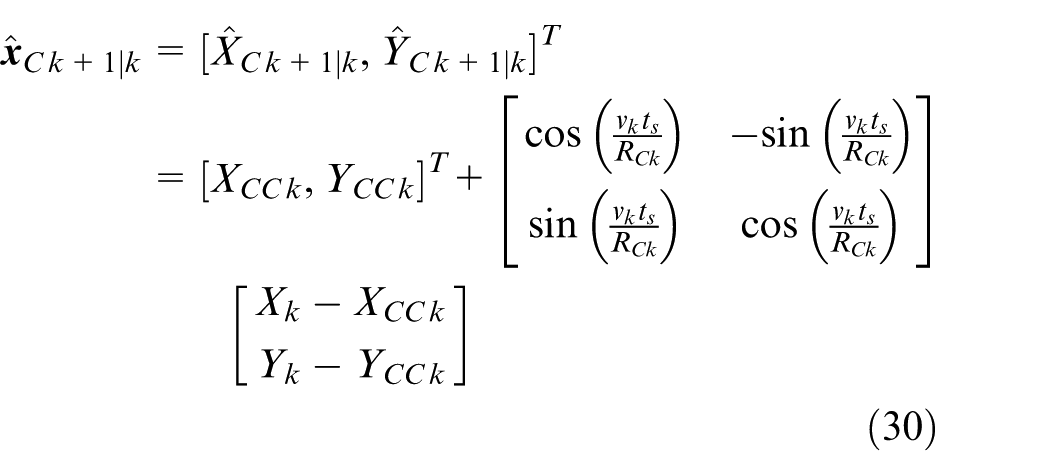

(5) Trajectory calculation using Model C

Given the gaze-based predicted trajectory radius

Data fusion of the travel-point and waypoint model.

The t-distribution-based data fusion for all prediction models above

To reduce the trajectory prediction error in drifting we consider combinations of three types of models:

(1) Kinematics-based models: The sideslip and steering angle-based kinematics model (Model A), and the CTRV, CTRA, and CCA model 44 ;

(2) Dynamics-based models: The desired yaw rate-based dynamics model, and the desired yaw rate and sideslip-based dynamics model (Model B1 and B2);

(3) Gaze-based model: The fusion of the travel-point and waypoint model (Model C).

In order to fuse the data from different models and to improve the trajectory prediction accuracy, earlier studies42,47,63 have introduced a Kalman filter (KF), which assumes that the prediction model’s errors follow a normal distribution (white noise). More recently, the Interacting Multiple Model (IMM)64–69 was applied to account for various vehicle motion behaviors (such as velocity tracking or distance keeping65) and to switch between different prediction models (e.g., the CV or CA model in Kim et al.67) accordingly.

Unlike the aforementioned studies, which presume that the error from a given prediction model follows a normal distribution, this paper adopts a t-distribution regression that accommodates fewer samples and more outliers,70,71 making it more suitable for extreme maneuvers with heavy-tailed noise.72–74 Furthermore, rather than distinguishing vehicle motions between a limited number of behavior modes through IMM, we also propose an online learning-based approach that corrects the model outputs using historical data.
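To illustrate why a t-distribution is less sensitive to outliers than a normal distribution, the sketch below fits the location and scale of a univariate Student-t distribution with an expectation-maximization (EM) iteration. It is one standard way to fit such a distribution and is not necessarily the procedure used in this article. The degrees of freedom are held fixed at an assumed value, the function name is hypothetical, and the sample values are invented for illustration only.

```python
import statistics

def fit_student_t(samples, dof=3.0, iterations=50):
    """Estimate location and scale of a Student-t distribution with fixed dof.

    Each EM step re-weights the samples: points far from the current location
    (relative to the scale) receive small weights, so outliers barely move
    the estimate. Returns (location, scale).
    """
    mu = statistics.median(samples)
    sigma2 = max(statistics.pvariance(samples), 1e-12)
    for _ in range(iterations):
        # E-step: expected precision weight of each sample.
        weights = [(dof + 1.0) / (dof + (x - mu) ** 2 / sigma2) for x in samples]
        # M-step: weighted location and scale updates.
        mu = sum(w * x for w, x in zip(weights, samples)) / sum(weights)
        sigma2 = sum(w * (x - mu) ** 2 for w, x in zip(weights, samples)) / len(samples)
        sigma2 = max(sigma2, 1e-12)
    return mu, sigma2 ** 0.5

# Invented example: seven model predictions of one coordinate, one of them an outlier.
predictions = [10.1, 9.9, 10.0, 10.2, 9.8, 10.0, 14.0]
normal_estimate = statistics.mean(predictions)   # pulled towards the outlier
t_estimate, t_scale = fit_student_t(predictions)  # stays near the main cluster
```

The sample mean is the maximum-likelihood location under a normal distribution, so comparing it with `t_estimate` shows the effect of the heavy tails: the single outlying prediction shifts the mean noticeably while the t-based location stays close to the six agreeing models.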

Inputs for data fusion

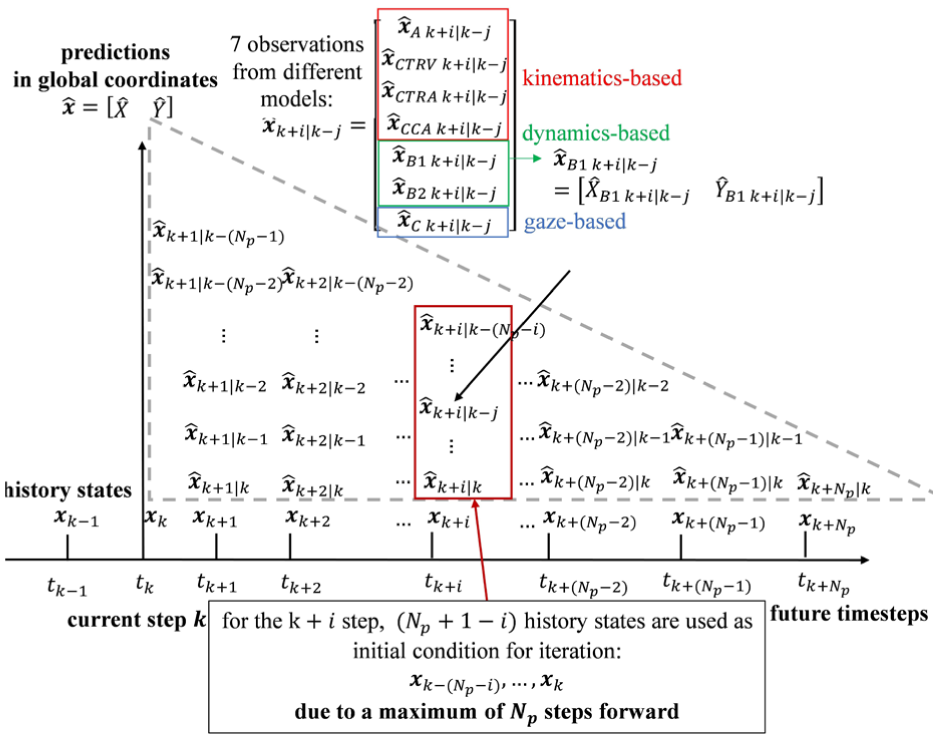

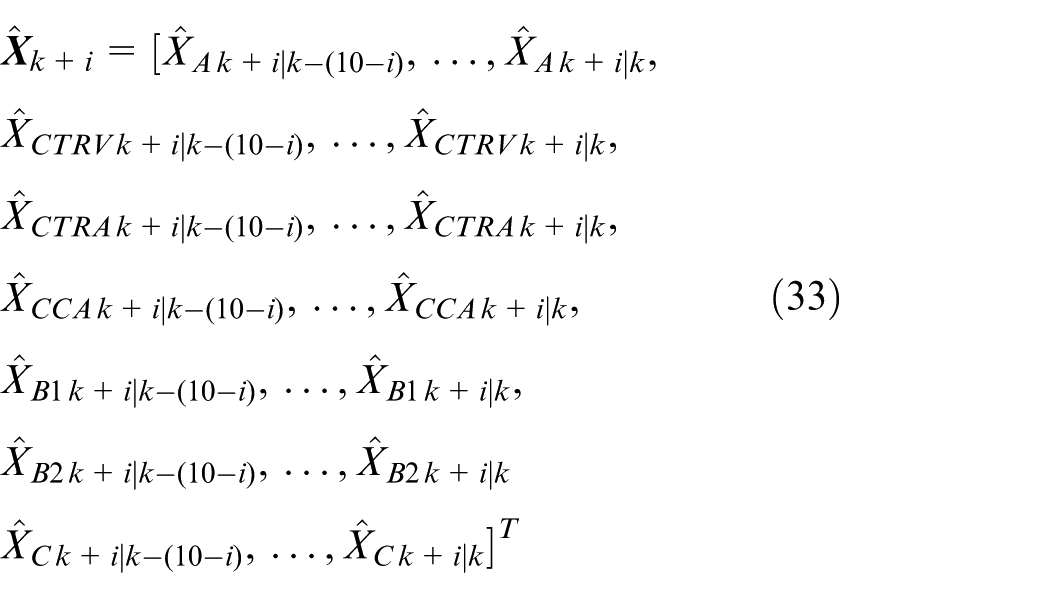

As shown in Figure 10, a prediction horizon of

Inputs for the t-distribution regression.

The t-distribution regression

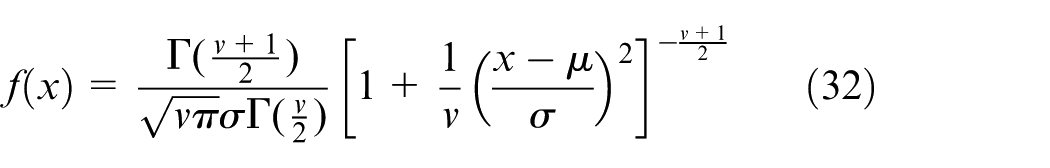

The univariate t-distribution is defined by a probability density function as (32) where

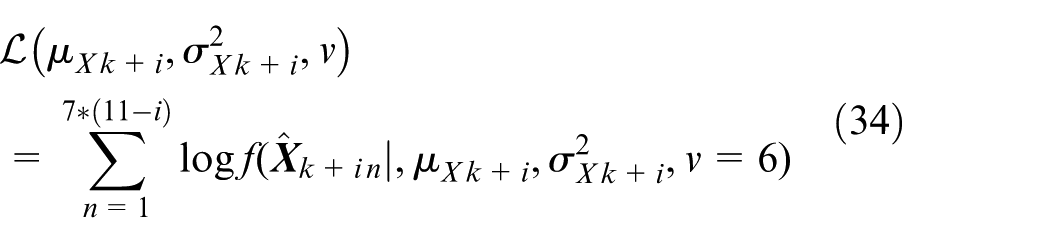

To fit a t-distribution to data predicted by the seven different models at a given future timestep (i.e., with a prediction horizon of

The t-distribution regression results

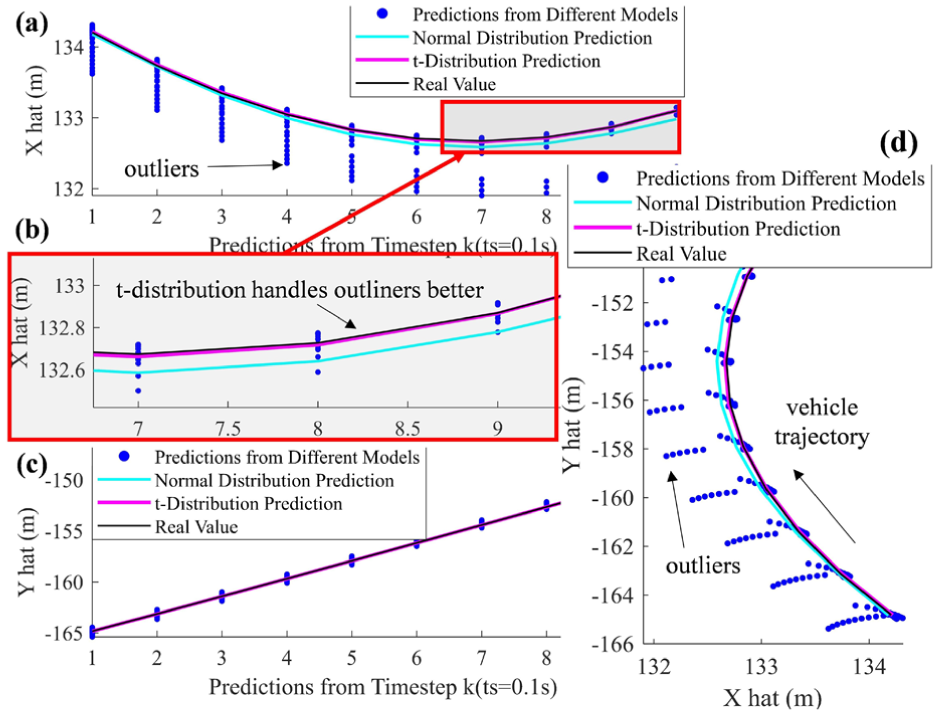

(1) t-Distribution versus normal distribution at a given timestep

The results of t-distribution fitting at a given timestep are demonstrated in Figure 11 below. As depicted in Figure 11(a) and (c), significant noise could be observed from the prediction of

Trajectory prediction comparison (d) between t-distribution and normal distribution (predicted X in (a) (b) and Y in (c)).

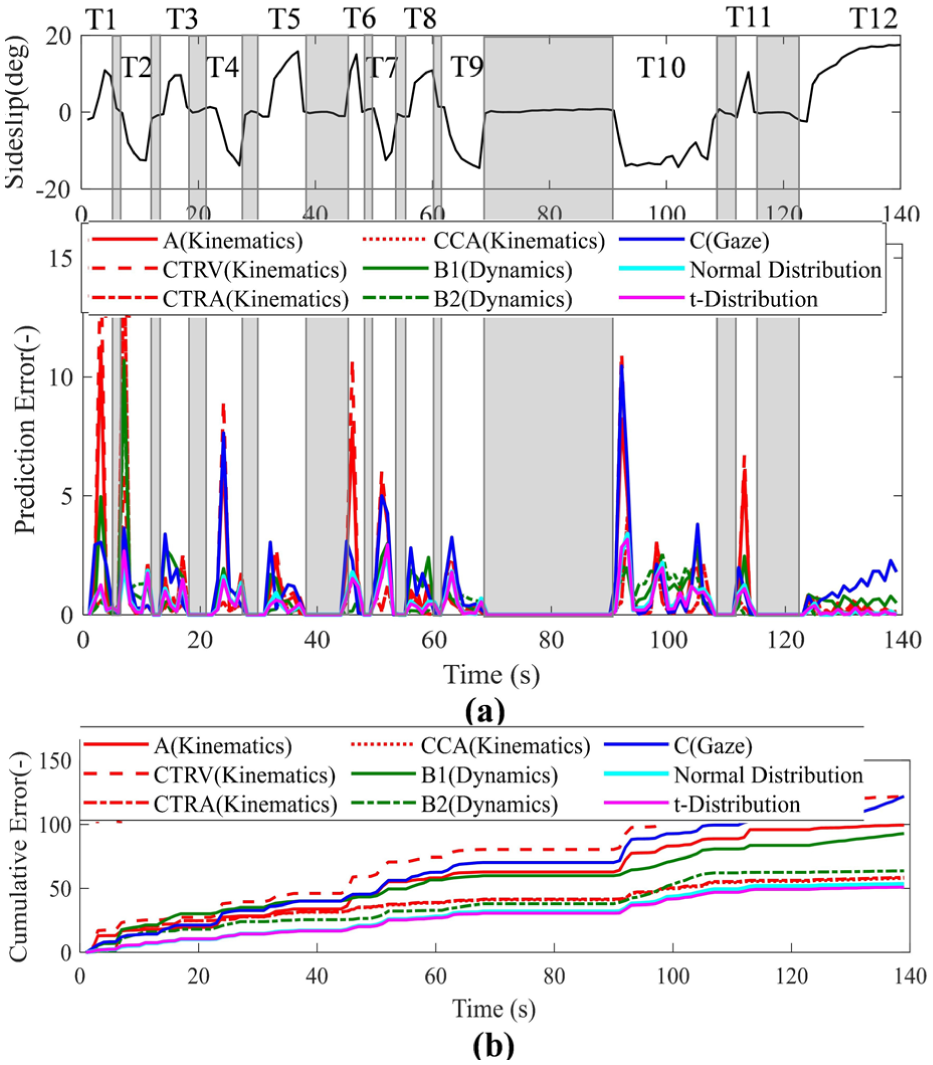

(2) The prediction error comparison on the whole track

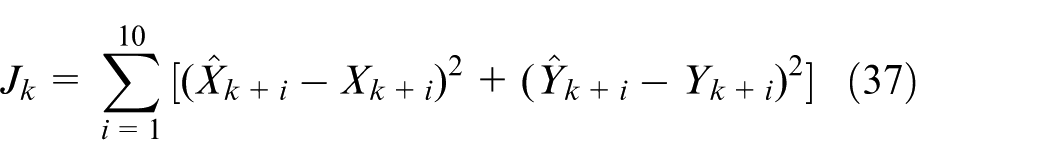

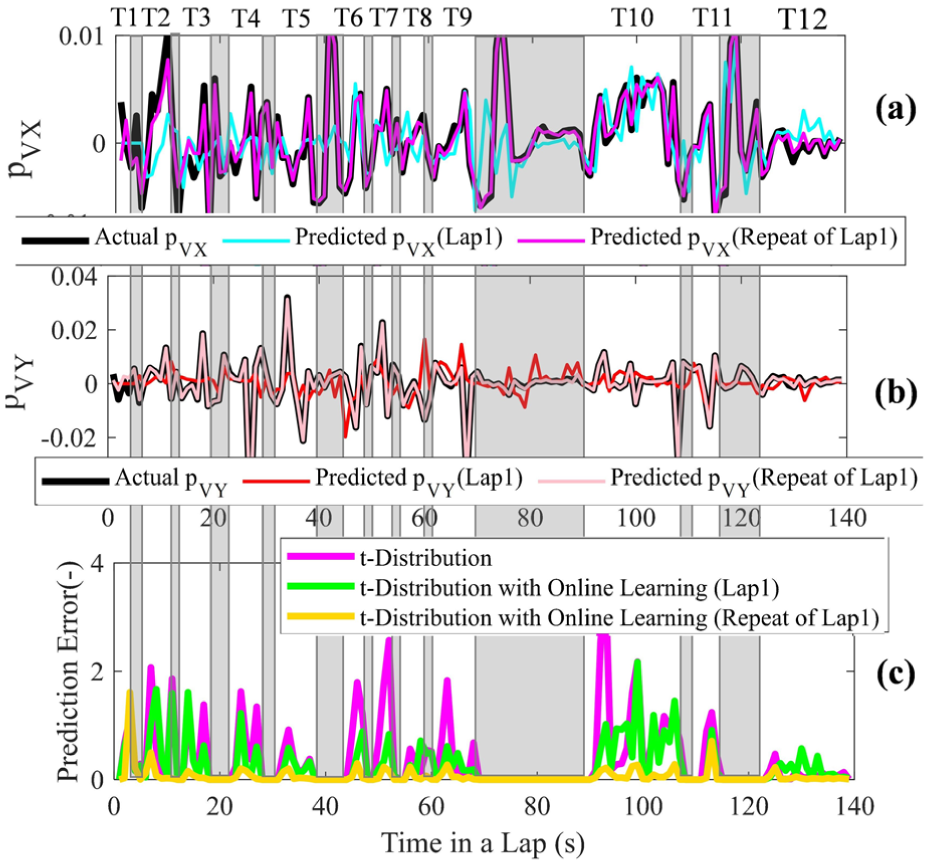

The prediction errors of different models for the entire track at a given lap are illustrated in Figure 12. It is important to note that only the errors during drift cornering are considered, with a prediction horizon of 1 s (10 steps forward), denoted as (37). A 1 s prediction horizon is used throughout this study to match the prediction horizon of the downstream MPC-based drift assist controller, which will be addressed in future work and fits in our framework.33 With a 1 s prediction horizon and a discretization step of 0.1 s, the proposed trajectory prediction framework requires an average of 74 ms per cycle with a standard deviation of 4.8 ms, in MATLAB on a desktop platform with an Intel i7-10750H CPU, demonstrating feasibility for real-time drift assist applications.

Prediction error at each timestep (a) and cumulative error (b) on the whole track.

For the aforementioned kinematics (Model A, CTRV, etc.), dynamics (B) and gaze-based (C) models, Figure 12(a) shows that no single model maintains a consistent advantage or disadvantage in all corners. For instance, in T2 the gaze-based trajectory produces the lowest error, whereas in T12 it performs poorly; in T12 the kinematics-based models demonstrate the highest accuracy, whereas in T2 and T6 they diverge significantly from the actual trajectory. This absence of a distinct pattern underscores the necessity of data fusion using distribution-based approaches. As shown in Figure 12(b), the fused outputs from the normal and t-distributions produce less cumulative error than the other models, and the t-distribution-based regression performs better than the normal distribution, since it is less affected by outliers, as previously discussed for Figure 11. This fusion approach inherently performs adaptive suppression of model outputs producing heavy-tailed errors, while retaining all models to ensure robust performance across diverse drifting phases.

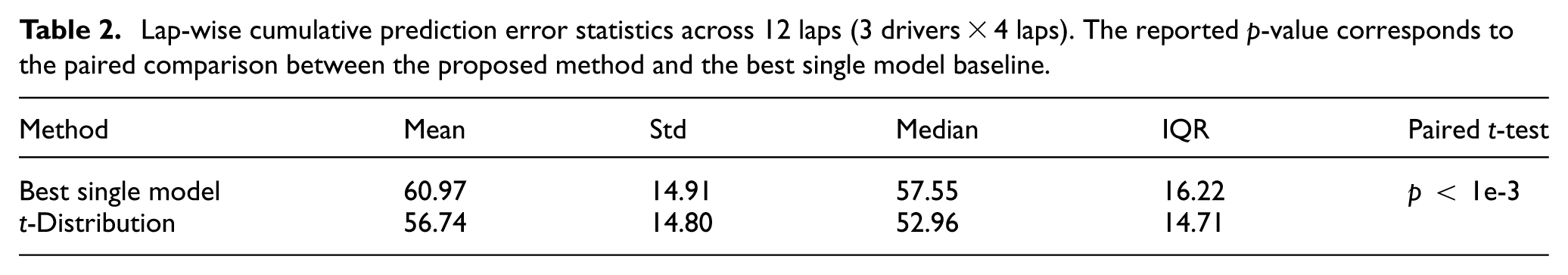

To further evaluate multi-driver consistency, as shown in Table 1, over the whole track, the proposed t-distribution fusion consistently achieves the lowest cumulative prediction error across all three drivers (four laps per driver), indicating the robustness of this proposed framework. In addition to driver-wise aggregate results, we further analyze lap-wise cumulative prediction error across all 12 laps (3 drivers × 4 laps). Table 2 reports mean ± standard deviation as well as median and interquartile range (IQR). A paired statistical test is conducted between the proposed method and the best single model baseline. The statistical analysis indicates a consistent and statistically significant reduction in prediction error achieved by the proposed method.

Lap-wise cumulative prediction error statistics across 12 laps (3 drivers × 4 laps). The reported p-value corresponds to the paired comparison between the proposed method and the best single model baseline.

The Gaussian process-based learning model

Previous research54 has indicated that the trajectory prediction error increases significantly in scenarios involving risky driving behaviors, and that the performance of physics-based approaches is a concern because they are based on simplified vehicle dynamics.52,88,89 Learning-based trajectory prediction has therefore been extensively studied in recent years,52,53,88 and numerous algorithms have been utilized. Earlier studies90,91 started from artificial neural networks (ANN), and more recently recurrent neural networks (RNN) such as long short-term memory (LSTM)92–94 have been preferred, as they can consider a sequence of historical states while predicting the vehicle trajectory during lane changes93 or on winding roads.95 The performance has been further improved through the use of transformer networks,96,97 which are more effective in dealing with long-term dependencies. Additionally, convolutional neural networks (CNN) have also been applied to image-based trajectory prediction.98

In contrast to the neural network-based methods above, which require extensive data for offline model training, this paper employs a Gaussian process-based99 online learning approach. This approach has been successfully implemented in controller design to predict the system dynamics error in extreme maneuvers, with applications to vehicles,100–102 robots103 and quadrotors.104 For example, a previous study100 utilizes Gaussian process regression to predict the vehicle dynamics uncertainty in autonomous racing, and thereby reduces the lap time by 10% in an extreme environment where the vehicle behaviors are highly nonlinear and complicated to identify.
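As a rough illustration of how Gaussian process regression can be used online, the sketch below stores (state, error) pairs as they arrive and predicts the error for a new state with a squared-exponential kernel. It is a generic textbook formulation under simplifying assumptions: fixed hyperparameters, a zero prior mean, and a full refit at every prediction. The class name `OnlineGP`, the kernel choice and all default values are hypothetical and are not taken from this article.

```python
import math

def rbf(a, b, length_scale=1.0):
    """Squared-exponential kernel between two state vectors (unit signal variance)."""
    sq_dist = sum((ai - bi) ** 2 for ai, bi in zip(a, b))
    return math.exp(-0.5 * sq_dist / length_scale ** 2)

def cholesky(matrix):
    """Lower-triangular Cholesky factor of a symmetric positive-definite matrix."""
    n = len(matrix)
    lower = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(lower[i][k] * lower[j][k] for k in range(j))
            if i == j:
                lower[i][j] = math.sqrt(matrix[i][i] - s)
            else:
                lower[i][j] = (matrix[i][j] - s) / lower[j][j]
    return lower

def solve_cholesky(lower, rhs):
    """Solve (L L^T) x = rhs by forward then backward substitution."""
    n = len(rhs)
    z = [0.0] * n
    for i in range(n):
        z[i] = (rhs[i] - sum(lower[i][k] * z[k] for k in range(i))) / lower[i][i]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (z[i] - sum(lower[k][i] * x[k] for k in range(i + 1, n))) / lower[i][i]
    return x

class OnlineGP:
    """Stores observations and refits the GP at every prediction (O(n^3))."""

    def __init__(self, noise_var=1e-4, length_scale=1.0):
        self.inputs, self.targets = [], []
        self.noise_var = noise_var
        self.length_scale = length_scale

    def add(self, state, error):
        """Append one observed (state, prediction error) pair."""
        self.inputs.append(list(state))
        self.targets.append(float(error))

    def predict(self, state):
        """Return the posterior mean and variance of the error at `state`."""
        if not self.inputs:
            return 0.0, 1.0  # zero-mean prior with unit variance
        n = len(self.inputs)
        gram = [[rbf(self.inputs[i], self.inputs[j], self.length_scale)
                 + (self.noise_var if i == j else 0.0) for j in range(n)]
                for i in range(n)]
        lower = cholesky(gram)
        alpha = solve_cholesky(lower, self.targets)
        k_star = [rbf(state, x, self.length_scale) for x in self.inputs]
        mean = sum(k * a for k, a in zip(k_star, alpha))
        v = solve_cholesky(lower, k_star)
        var = 1.0 - sum(k * vi for k, vi in zip(k_star, v))
        return mean, max(var, 0.0)
```

Near previously seen states the predicted error is close to the stored value with small variance, while far from all data the prediction falls back to the zero prior with large variance. Bounding the stored data set is left out of this sketch.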

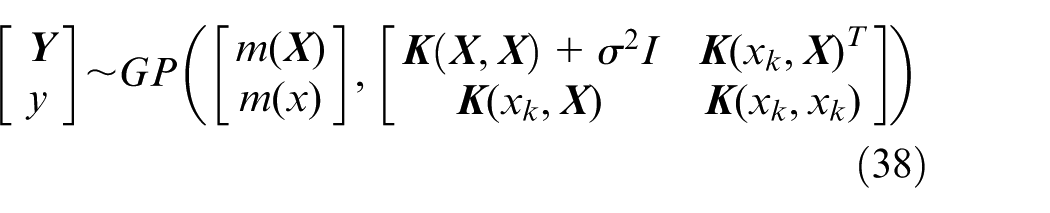

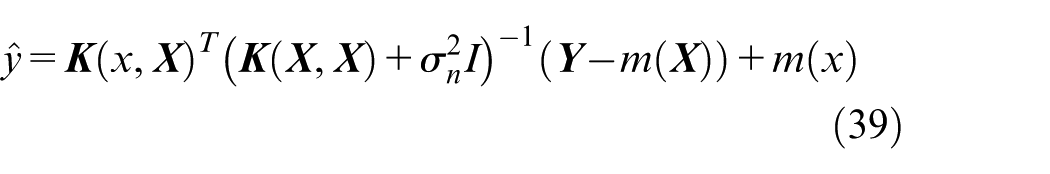

The Gaussian process model

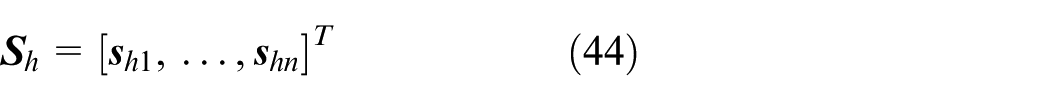

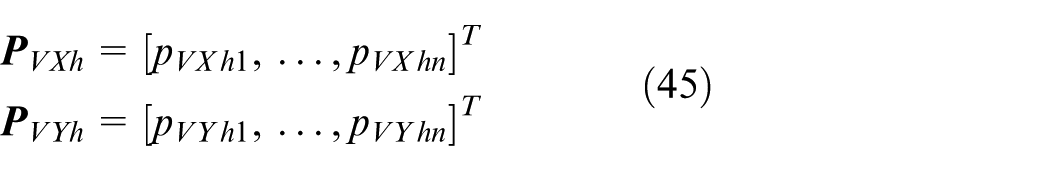

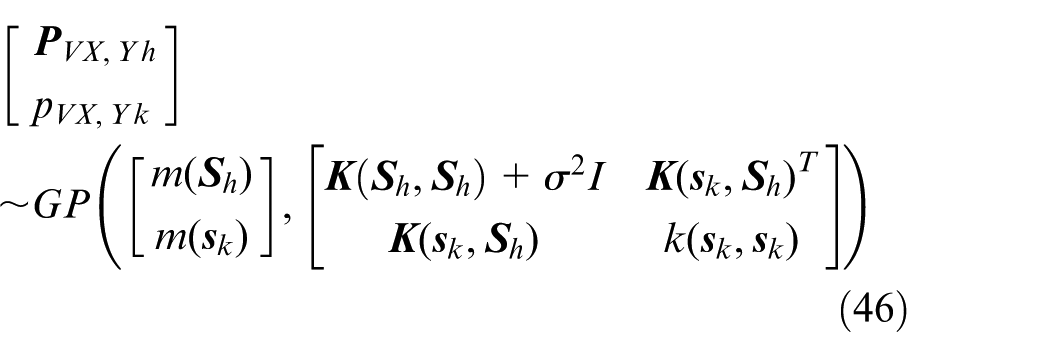

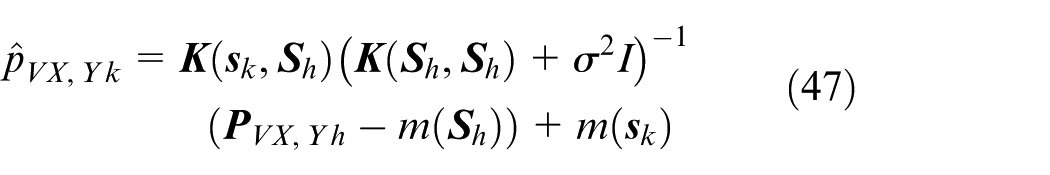

Given a series of training inputs

Inputs and outputs of the Gaussian process-based learning model

The inputs for learning-based trajectory prediction are normally classified into four types53: (1) historical trajectory of the target vehicle, for example the trajectory data in a grid map105 or through intersections106; (2) historical trajectory of the target vehicle and the surrounding vehicles107,108; (3) simplified bird’s eye view and (4) raw sensor data. As for the outputs, three main types are discussed53: (1) the intention of a particular maneuver, such as the lane changing intention109; (2) unimodal trajectory and (3) multimodal trajectory. The difference between the latter two is that a unimodal trajectory represents only one specific maneuver, for example the lateral offset during lane change,110 or the vehicle location in intersections.

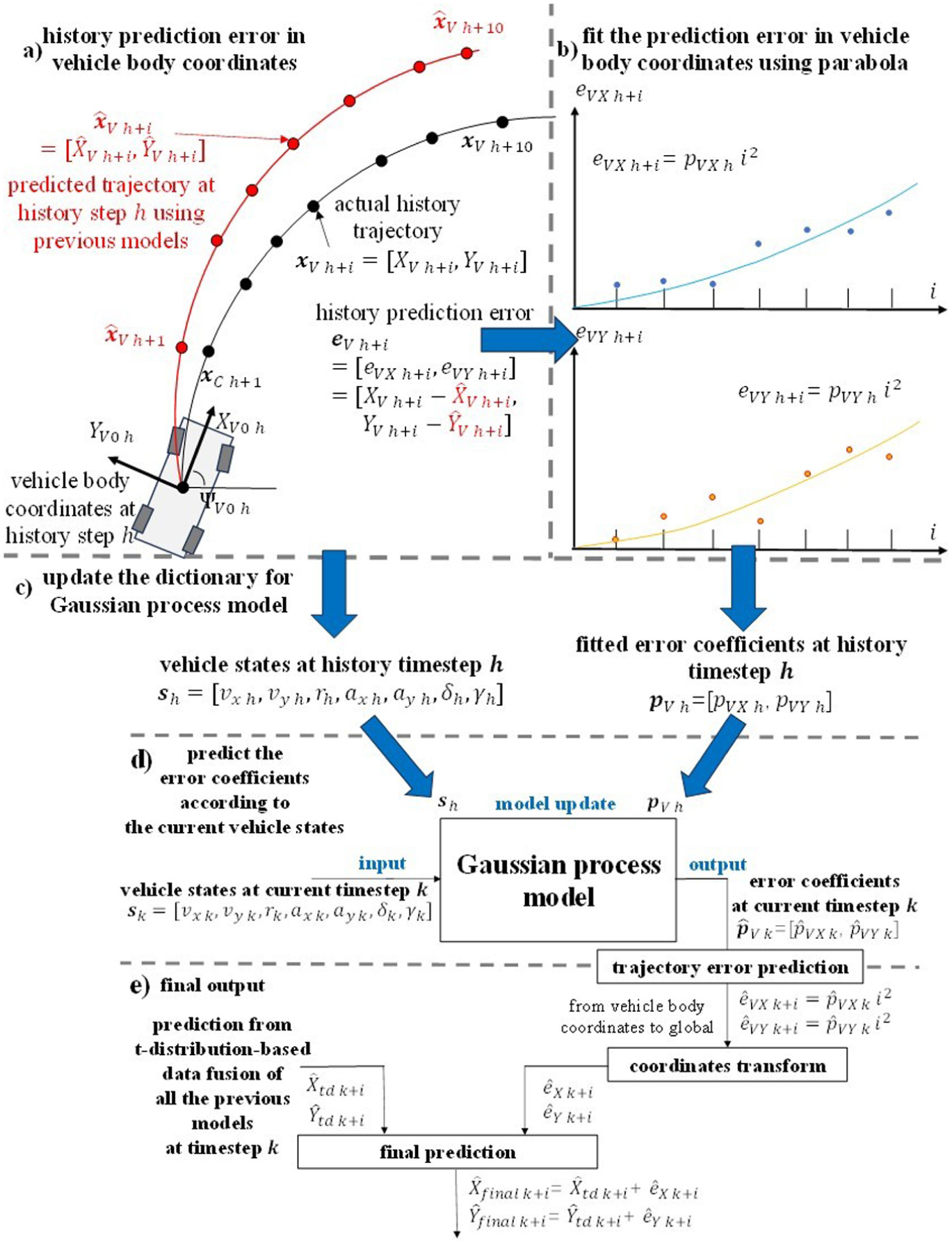

For this article that considers a specific drifting behavior performed mainly on racetrack with no surrounding vehicles and limited environment information, as shown in Figure 13(a)–(d), the history ego vehicle states

(1) Model inputs: Coordinates transform and the fitted coefficients for trajectory prediction error

As shown in Figure 13(a), to simplify and unify the expression of the trajectory prediction error from a history timestep

Next, in the vehicle body coordinates, the known trajectory prediction errors

The framework of the Gaussian process-based learning model, which learns the trajectory error of the previous physics and gaze-based models: (a) history prediction error, (b) data fitting, (c) Gaussian model update, (d) error prediction at current timestep, and (e) final output.
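The coordinate transform step, which expresses a prediction error in vehicle body coordinates, can be sketched with a plain planar rotation. This assumes the yaw angle is measured counter-clockwise from the global X axis and that the body y-axis points to the left; which timestep’s yaw angle is used, and the function name, are assumptions rather than details taken from this article.

```python
import math

def global_to_body(dx, dy, yaw):
    """Rotate a global-frame offset (dx, dy) into the vehicle body frame.

    Returns (longitudinal, lateral): the components along the body x-axis
    (forward) and body y-axis (assumed to point left).
    """
    cos_yaw, sin_yaw = math.cos(yaw), math.sin(yaw)
    longitudinal = cos_yaw * dx + sin_yaw * dy
    lateral = -sin_yaw * dx + cos_yaw * dy
    return longitudinal, lateral
```

For a vehicle heading along the global Y axis (yaw of 90 degrees), an error of 1 m in global Y becomes a purely longitudinal error of 1 m, so errors from corners with different headings become directly comparable.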

(2) Model updates and outputs: predict the trajectory prediction error based on the current vehicle states

The fitted coefficients

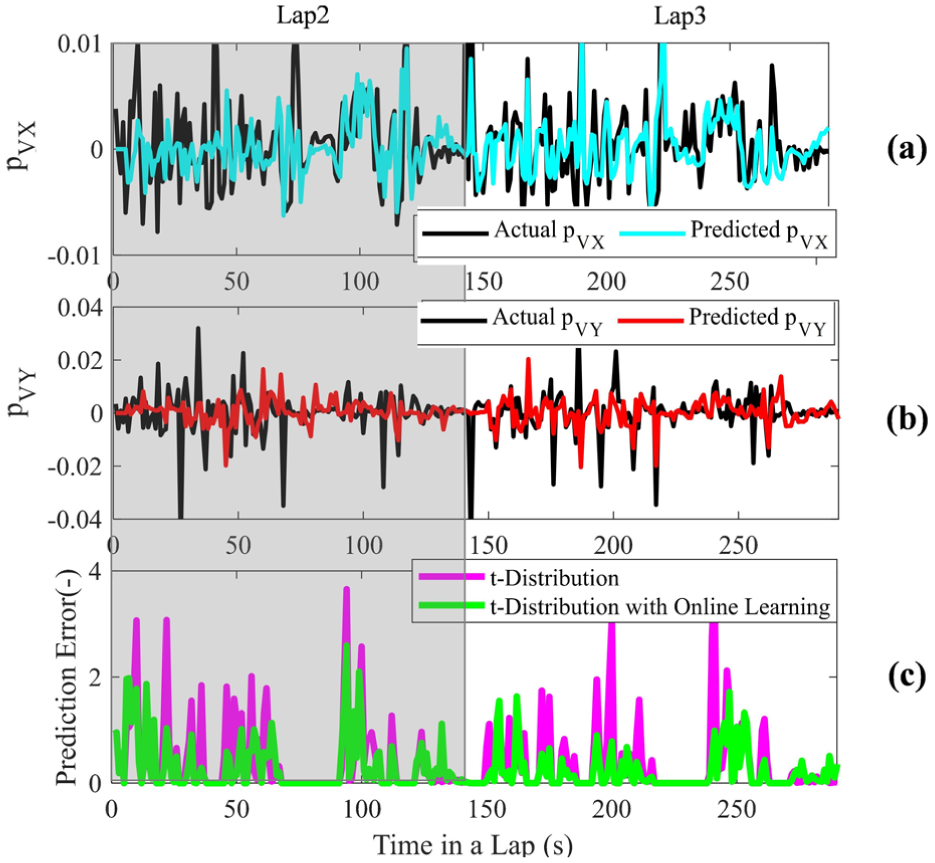

Trajectory prediction error after adding the learning-based model

To evaluate the improvements provided by the learning-based model in trajectory prediction, the results from two specific scenarios will be discussed: (1) the prediction and error in a given lap (Lap 1 in Figure 14) followed by a repeat of the same data from that lap; (2) the prediction and error in two continuous laps (Lap 2 and 3 in Figure 15). Note that the GP learning behavior is illustrated using representative consecutive laps for clarity; this does not limit the overall dataset size used for the previous multi-driver evaluation.

Trajectory prediction error with learning model added (Lap 1 and a repeat of the data from Lap 1 for online training): predicted coefficients in (a) (b), error in (c).

Trajectory prediction error with learning model added (data from Lap 2 and 3 for online training): predicted coefficients in (a)(b), error in (c).

For the first scenario, as shown in Figure 14, the learning model is enabled after 5 s in Lap 1 to produce predictions of

For the second scenario, where the model is trained and evaluated in two continuous but different laps (Lap 2 and 3 in Figure 15), the learning-based model is similarly enabled after 5 s. The proposed method achieves improved prediction-error coefficient estimation and reduced trajectory prediction error in the subsequent lap (Lap 3: 52.75 without learning vs 41.32 with learning), demonstrating the effectiveness of online adaptation without offline retraining. It is worth noting that the degree of improvement is not uniform across all corners. For segments such as Lap 3 T10/T12, the initial correction performance can be limited or temporarily degraded, since these segments exhibit dynamic characteristics that differ from earlier corners and require sufficient local observations before accurate error compensation can be learned. As additional data are accumulated through repeated traversal, the correction performance would improve accordingly (similar to Figure 14).
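As a quick check on the Lap 3 figures quoted above, the relative reduction in cumulative error can be worked out directly; the unit of the cumulative error cancels in the ratio:

```latex
\frac{52.75 - 41.32}{52.75} = \frac{11.43}{52.75} \approx 0.217
```

This corresponds to a reduction of roughly 21.7% in Lap 3 when the learning model is enabled.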

These results highlight the intended behavior of the proposed online correction framework: accurate compensation when similar driving patterns reoccur, and rapid adaptation when encountering new data, within a fixed short prediction horizon. An interesting direction for future work is to further investigate efficient strategies for managing the GP database, including update and forgetting mechanisms, to improve online learning efficiency and robustness.

Conclusion

Based on our proposed drift assist control framework, 33 this study concentrates exclusively on trajectory prediction as the interface between driver intention 34 and drift-assist control concepts.35,36 In this article we present a physics and gaze-based framework with a Gaussian process-based online learning approach, to predict the vehicle trajectory during the extreme maneuver of drifting.

Kinematics, dynamics and gaze-based models are considered in this article for trajectory prediction without prior environment knowledge. The kinematics-based models are designed to consider the unique features of high sideslip and counter steering during drifting. The dynamics-based models are applied to interpret the driver’s desired yaw rate and sideslip angle through steering and throttle. In addition, the driver’s gaze behaviors during drifting are analyzed in this paper, followed by the travel-point and waypoint models to predict the trajectory based on gaze information.

A t-distribution-based regression is applied in this article for data fusion of the predictions from the above models, since there are more outliers in the extreme drifting maneuver.

Besides, an online learning model based on Gaussian process is proposed to learn the error of the aforementioned prediction models, providing prediction corrections in real time according to the current vehicle and driver states.

The driver-in-loop drifting data from the simulator of Cranfield University is utilized to prove the effectiveness of the proposed framework.

Footnotes

Acknowledgements

The financial and intellectual contributions of Rimac Technology are acknowledged for their role in supporting this research.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported in part by Rimac Technology.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.