Abstract

The digital 3D representation of manufactured parts plays a crucial role in the quality assurance of small, individualized lot sizes. Machine vision systems, such as optical 3D scanners, guided by an industrial robotic arm, allow for a contactless full digital reconstruction of surface geometries. In order to digitize the whole geometry, it is necessary to acquire 3D scans from multiple viewpoints with respect to the part, which in combination cover the entire surface. With efficiency in mind, this results in an optimization problem between a high surface area coverage and low measurement effort, referred to as the view planning problem. In the presented work, two popular viewpoint candidate generation methods are implemented: Firstly, a surface-based random sampling method, which generates viewpoints within a solution space, in which visibility of a given model surface can be expected. Secondly, a view sphere viewpoint generation approach, which is independent of the object geometry but avoids clustering by generating evenly spaced viewpoints on a sphere around the centre of the object. Using an adjustable remeshing procedure, a multi-stage approach is implemented by generating multiple meshes with different resolutions. Through this, the benefits of working on a coarse mesh, such as fast viewpoint candidate evaluation and selection, are combined with the level of detail of a fine mesh. It is found that it is possible to considerably reduce the mesh resolution while maintaining a reasonably high surface area coverage on a reference model. Applying the proposed procedure to the view planning for a state-of-the-art 3D fringe light projection scanner with highly sophisticated scanning capabilities, it is demonstrated that the view sphere approach is more suitable for this use-case due to the large measurement volumes of the 3D scanner. The frequently used random sampling approach requires an excessively higher computational effort to achieve similar results in comparison.

Introduction

With growing demand for individualized and complex products, modern quality assurance procedures need to be able to quickly adapt to changing boundary conditions. Optical measurement techniques are gaining in popularity due to their versatility, 1 which allows for the creation of complete three-dimensional (3D) models of a wide variety of products. These complete geometric models enable the detection of deviations from the product design, which in return serves the purpose of modelling and monitoring individual products during their production lifecycle in the form of a digital twin of the product.2,3

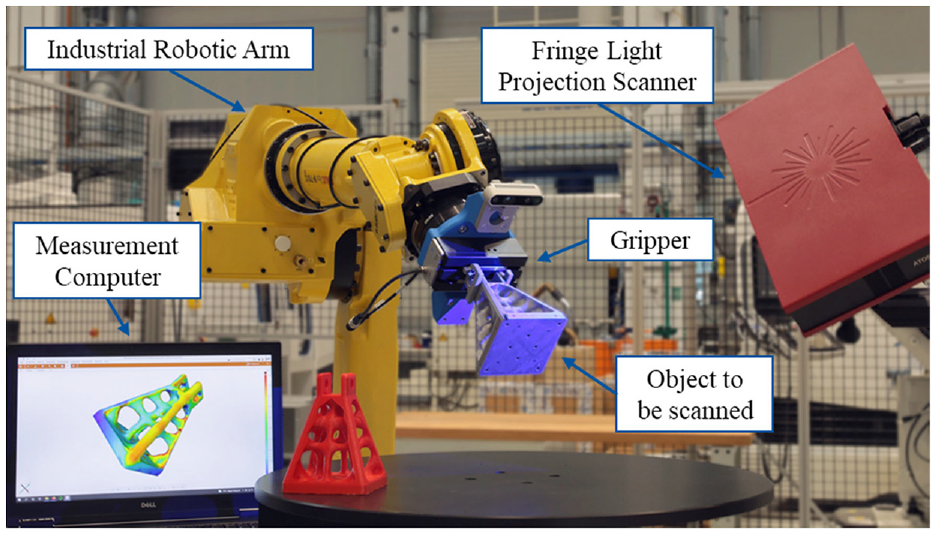

To facilitate the repeated full 3D capturing of the product geometries, automated scanning procedures need to be developed.4,5 Industrial robotic arms offer the flexibility that is needed to handle products with varying geometries. In Figure 1 a measurement cell including a robot for grasping and positioning small to medium sized objects and a fringe light projection scanner, which is used to create pointclouds of the surface of the handled objects, is shown. In order to generate an all-encompassing 3D scan of the object, it is necessary to determine robot poses that position the object in the measurement volume of the scanner in a way that the whole surface can be captured in succession.

Setup for the automated grasping and scanning of manufactured objects at the Chair of Production Metrology and Quality Management. In order to create digital 3D representations of an object, it is grasped by a gripper attached to the end effector of an industrial robotic arm and repeatedly placed in different poses in the measurement volume of a fringe light projection scanner.

View planning

The previously introduced task of finding a finite set of viewpoints, that is, camera poses, that, if attained by a sensor, enable inspection, reconstruction or recognition of a given object is commonly referred to as the view planning problem. 6 Inspection is a special case of object feature detection, in which the entire geometry of an object is considered important rather than separate features. It is assumed that a model of the object is available a-priori to the measurement task. Based on this model, viewpoint poses can be tested for their potential information gain. 6 In object recognition, view planning is used to detect a real-world object by comparing it to a set of input models. The procedures of performing view planning for inspection and object recognition are similar, as they both require a-priori knowledge of the object. They are therefore often referred to as model-based view planning. 7

A closely related subject to that of view planning is coverage path planning. However, in addition to determining a set of suitable viewpoints, the aim is to incorporate a path planning strategy. Thereby, a feasible path between the viewpoints is computed, avoiding obstacles and adhering to robot constraints.8,9

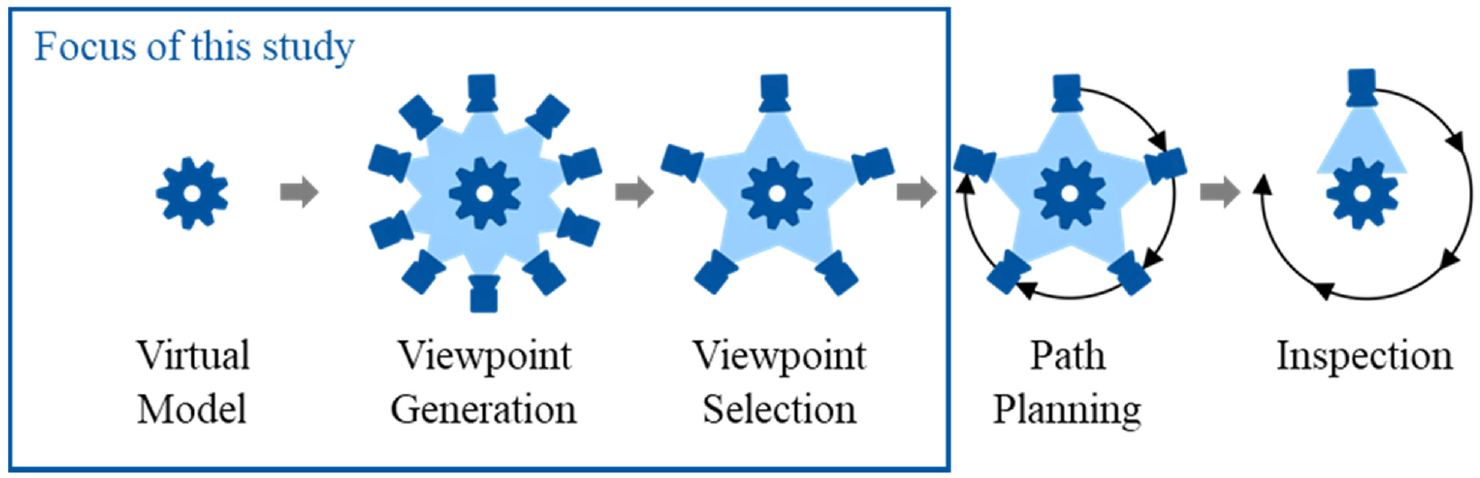

As depicted in Figure 2, the whole coverage path planning procedure can be split up into the five steps: virtual model, preparation of the input model; viewpoint generation, determination of viewpoint candidates; viewpoint selection, removal of unnecessary viewpoint candidates; path planning, ordering of the remaining viewpoints; inspection, the actual measurement process.

The process of coverage path planning. After selecting a virtual model of the object to be scanned (dark blue cog), an initial set of viewpoints (dark blue cameras) is generated. As not all of these viewpoints are needed to fully capture the object, a subset of only the necessary viewpoints is selected. In order to plan the physical inspection, a path (black arrows) with the shortest possible length connecting the remaining viewpoints is calculated. (Depiction based on Mosbach et al. 1 ).

Structure of this work

The first three steps encapsulate the view planning problem and define the structure of this study. In Section 2, the current state of the art of existing approaches is presented for each of these steps. Following this, the methodology used and implemented to solve the objectives of each step is outlined in Section 3. In order to validate the proposed methods, an evaluation of selected approaches for each step is conducted in Section 4, through which several alternative setups are compared, recommendations are given, and favourable configurations are deducted. Based on these results, a combination of different methods is implemented for a full view planning procedure, along with the presentation of sample results obtained from exemplary simulations. Finally, in Section 5, a summary of the work and an outlook on possible future improvements is given.

In summary, the following contributions are made to the view planning problem:

Adaptation of view planning methods for the robot-based inspection of small to medium sized manufactured products with a fringe light projection scanner

Inclusion of occlusion determination algorithms into the viewpoint selection process

Introduction of a new view planning approach, combining multiple of the investigated methods, balancing the advantages and disadvantages of the individual algorithms

State of the art

In this section, an overview will be provided on relevant and recent research conducted on the view planning and coverage path planning problem.

The existing approaches can be partitioned into computational geometry, graph theory and set theory methods. 10

Computational geometry methods are often constrained to two-dimensional applications, which can be described through the Art Gallery Problem. 11

Graph theory methods are only applicable to simple models and their use remains mostly theoretical, since rising model complexity increases the computational efforts significantly.6,12

Set theory methods are based on the consideration that a discretized geometry element can be either visible or invisible from a given viewpoint. The general procedure is to employ a viewpoint candidate generation scheme and to analyse the geometry element visibility for each candidate, which is also called the generate-and-test paradigm. This approach to model-based view planning is the one most commonly used in scientific research.10,13

Virtual model

Model-based approaches to the view planning problem work on the basis of the existence of an underlying digital model of the object to be inspected. While this condition may not hold true for numerous applications, it allows for a more thorough planning of well-suited viewpoints. It is therefore beneficial to use a model-based approach, if such a model is available. Some studies apply a combination of non-model-based and model-based approaches, where previously know geometries are used to train machine learning models, which can later on be a applied to cases where the object to be scanned is unknown.13–15

To perform calculations concerning the geometry of a real-world object, a digital geometric model must exist, which ideally resembles the object perfectly, retaining all its topological details. However, models are usually represented through discretization in the form of a finite set of elements. Two common solutions are voxel-grid representations and polygonal meshes. While voxel grids are often used if the to be measured object or volume is unknown16–18, model-based approached commonly use triangular meshes. 19

For most cases in production engineering, polygonal meshes are created from an existing parametric model of the object through a tessellation algorithms. In view planning, some approaches use the benefits of multiple mesh representations. By applying a remeshing algorithm, the level of detail of the model can be adjusted in order to reduce computational efforts.19,20

Viewpoint generation

According to Glorieux et al. the overwhelming majority of methods proposed for viewpoint sampling rely on randomisation approaches. These require a high number of viewpoint candidates to be generated and evaluated prior to selection, which in return increases the difficulty of picking the necessary candidates. Hence, the authors propose a heuristic to sample viewpoints in a more sophisticated manner by including objectives and constraints to the sampling procedure. The primary objectives are to generate viewpoints that have a high number of covered primitives and to focus on primitives that have not already been covered. The resulting set covering all candidates is determined through either a linear programming solver or a greedy algorithm. 21

In the work by Englot and Hover, the viewpoints sampled are added to a data structure they term a redundant roadmap. The redundancy aspect is formulated by requiring each object primitive of a triangular mesh to be inspected by at least a specified number of viewpoints. 22

Fu et al. follow a similar strategy by likewise using a roadmap construction technique to attain the shortest possible coverage path. The roadmap nodes are constructed through randomly sampling admissible robot configurations instead of a viewpoint search space. 23

Since the configuration of the robot is known in the use case at hand, randomly sampling admissible robot configurations is a valid approach. However, the solution space of admissible robot positions is extraordinarily large, which results in a low probability of finding configurations that can observe previously uncovered areas; a downside which is also noted by the authors. Therefore, using this methodology is likely to be inefficient for use cases, such as the one at hand, in which the evaluation of single measurement poses is computationally costly due to potentially complex objects to be inspected.

Another approach to viewpoint generation, are deterministic generation strategies:

For example, a view sphere is employed for viewpoint generation in the open-source view planning library for non-model-based view planning by Vasquez-Gomez et al. 24 and as part of a machine learning based solution to generate the next-best view in a non-model based scenario. 25 Here, viewpoints are equidistantly distributed on a sphere around the target. View sphere construction is especially popular with non-model-based approaches that attempt to reconstruct the geometry of an object for which only the rough dimensions, such as the bounding box, are known. A survey provided by Zeng et al. gives an overview of non-model-based methods that use view sphere viewpoint generation. 26

Through employing view sphere viewpoint generation, it is guaranteed that objects are observed from all general directions, in contrast to random sampling, in which potential good viewpoint areas can be missed by chance. However, this method is only suitable for scenarios in which the dimensions of the object to be measured do not massively exceed the measurement volume of the sensor. Other implementations construct an icosahedron instead of a sphere around the object, which can equally be regarded as a discretized sphere, and recursively subdivide the triangular surface facets into four triangles, at each centre of which viewpoints are placed.6,24,27

Viewpoint selection

The number of generated viewpoint candidates in set theory methods usually massively exceeds the number of viewpoints required to fulfil the coverage requirement. However, it is assumed that the probability of attaining the optimal viewpoint candidates increases with the number of candidates generated. Choosing the best viewpoint candidates that, when used in conjunction, provide full coverage of the object, is equivalent to the mathematical set cover problem.6,28

The set cover problem in the context of view planning states that the goal is to find the best subset from a set of given viewpoints, which provides the highest coverage of an object while maintaining low cardinality. This problem is often solved using a greedy algorithm, as it was identified to be the best polynomial time approximation to the problem and there is no method that systematically outperforms it.26,28,29

Englot and Hover use both the greedy algorithm and a linear programming relaxation algorithm independently for set cover solving due to their effectiveness and good approximation results. They note that the computational effort for using the linear programming relaxation method is more expensive than that of the greedy algorithm. Which is why, for high model complexity, they only provide greedy solutions. 22

Since the maximization problem of finding a viewpoint that provides the highest information gain is performed in each iteration, the optimality of the resulting viewpoint set is expected to be comparable to that of a greedy set cover approach that has ideal viewpoint candidates available. Furthermore, since they only add a limited number of viewpoints in each iteration, a combinatorial approach optimizing the entire solution with respect to a low cardinality viewpoint set is expected to perform better.

Overview

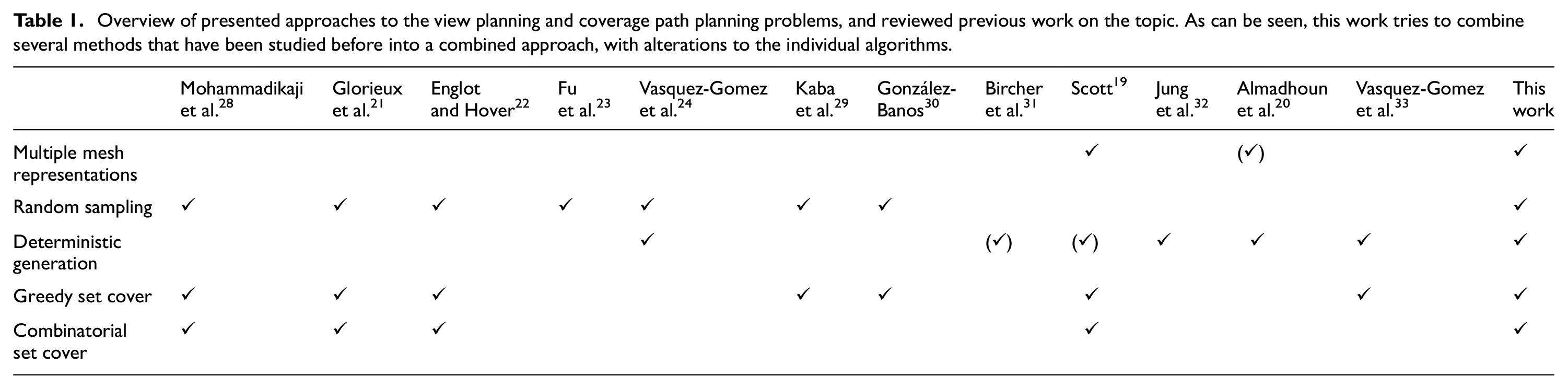

In Table 1 the previously discussed methods are summarised in the categories: multiple mesh representations for the virtual model step, random sampling and deterministic generation for the viewpoint generation step, and greedy set cover and combinatorial set cover for the viewpoint selection step. The presented studies and additional similar works found in scientific literature are listed as columns with the rows indicating which methods were utilized within the individual approaches. However, none of the approaches combines all discussed methods into a single procedure. Since all methods have advantages and disadvantages, it can be presumed that such a combination can benefit from several advantages of the methods while avoiding disadvantages.

Overview of presented approaches to the view planning and coverage path planning problems, and reviewed previous work on the topic. As can be seen, this work tries to combine several methods that have been studied before into a combined approach, with alterations to the individual algorithms.

Methodology

The methods implemented correspond to a model-based view planning approach, which is preferable to a non-model-based approach due to the availability of geometric models in the use case at hand. Furthermore, a set theory approach is chosen, since it is the most widely used and therefore the most thoroughly investigated approach among model-based view planning methods. 10

Hardware

The underlying use case is to conduct an automatic measurement using an optical 3D scanner. The specific model employed is a structured light scanner of the type GOM™ ATOS Core 300™. According to its data sheet, the scanner has a maximum measuring distance of 555 mm and a minimum measuring distance of 325 mm with a horizontal aperture angle of 37.6° and a vertical aperture angle of 29.3°. 34 For a surface to be measurable, a minimum angle, called the incidence angle, between the surface and the optical ray of the camera must not be exceeded. While an incidence angle is not specified in the data sheet, it can be assumed 20° according to experience with the sensor.

The implementation of the algorithms is evaluated using a 2 × 2.4 GHz Intel™Core i5-6200U CPU with 8 GB of RAM and an Nvidia™ GeForce 940M GPU.

Virtual model

The necessary computations, especially the occlusion determination, increase in complexity with larger mesh resolutions. Since the resolution of the mesh can vary arbitrarily, a method to modify the number of facets while maintaining the general geometric shape of the object is required. Through remeshing, the computation times required can be decoupled from the resolution of the input model. To this end, the geometry processing software PyMesh 35 is used. It provides functionalities to collapse short edges of triangles, thereby removing certain triangles that have unfavourable geometries and replacing them with larger ones, and to split long edges, which subdivides a triangle into several smaller triangles. Iteratively and alternatingly using those two methods, a new mesh that may have a different resolution while retaining the geometry can be created.

Multi-stage mesh processing

Using the remeshing approach, two individual meshes that have different resolutions are created: a coarse mesh and a fine mesh. The resolutions of which can be specified freely to balance between a short execution time and a high model accuracy. This approach follows that of Scott, who uses a coarse mesh for viewpoint generation, followed by visibility evaluation on a fine mesh. 19

Viewpoint generation

To apply the generate-and-test paradigm of set theory approaches, a method needs to be employed to generate viewpoints. The goal is to obtain a viewpoint set that has a high probability of containing a subset that achieves a high coverage while maintaining a low cardinality, or even to contain the optimal viewpoint candidates. Two common methods are implemented, a random sampling method and a view sphere sampling approach.

Surface-based random sampling

A random sampling approach is used, as it provides the ability to generate viewpoints based on the specific geometry of an object. By generating viewpoints based on the position and pose of specific facets, the view planner can react to areas that are not sufficiently covered by a given set of existing viewpoint candidates by generating additional viewpoints specifically for those areas. This can be applied to hard-to-reach surface areas, such as undercuts or obstructed areas, as well as broad, easy-to-measure areas.

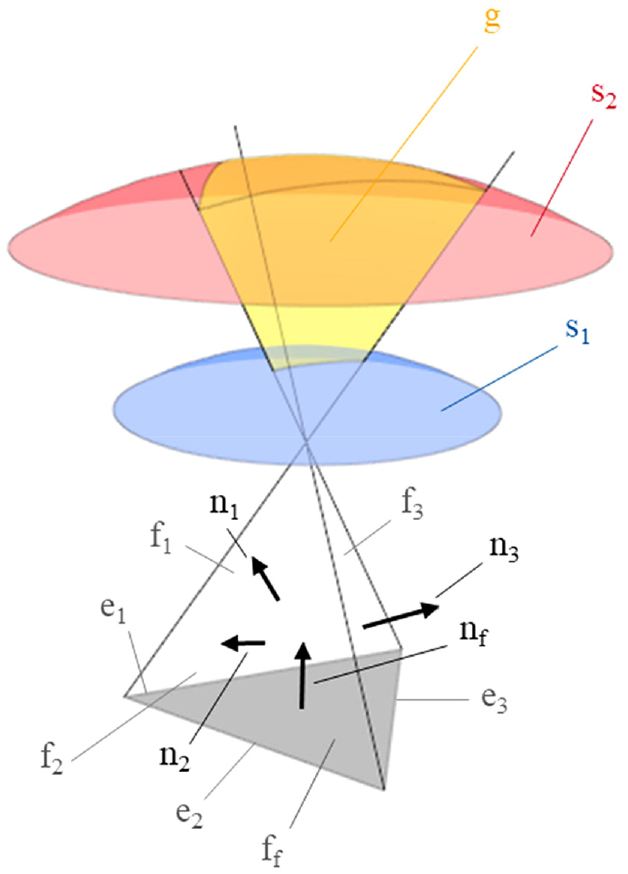

Considering the incidence angle constraint, there exists a solution space (g) for each facet, in which a viewpoint can potentially measure the respective surface patch, if occlusion by other parts of the geometry is neglected. The solution space, as depicted in Figure 3, has the shape of a cropped pyramid. Each face of the pyramid (f1, f2, f3) lies on a plane with one edge (e1, e2, e3) of the facet (ff), while the surface normals (n1, n2, n3) of the planes are angled to the facet normal (nf) at the specified incidence angle. The pyramid is cropped by two spheres (s1, s2) centred at the triangle, the radii of which are defined by the maximum and minimum measuring distance. Sampling the viewpoint within this solution space does however not guarantee visibility and only provides a candidate viewpoint, since the facet will have to be checked for occlusion.

Geometrical representation of the algorithm implemented for the surface-based random sampling approach. The grey triangle at the bottom represents the facet (ff) that is intended to be covered by the newly generated viewpoint. The remaining sides of the pyramid (f1, f2, f3) are created based on the incidence angle between their normal vector (n1, n2, n3) and the normal vector of the facet (nf). The blue (s1) and red (s2) surfaces are created from spheres centred at the centre of the facet, defining the minimum and maximum distance from the facet. The resulting yellow volume (g) represents the solution space in which a random viewpoint is to be generated. The illustration is not to scale for better readability. (Depiction based on Bircher et al. 31 ).

The procedure of using a cropped-pyramid-shaped space as the admissible viewpoint space is following the work of Bircher et al. who however do not use a random sampling strategy within this space but rather use it as a space constraint in which the viewpoint can be moved to achieve a more favourable connection between viewpoints. Neither do they consider occlusion. 36

View sphere sampling

To evaluate deterministic viewpoint generation, a view sphere sampling approach is employed. This approach is chosen over a recursive icosahedron subdivision approach, as the number of viewpoints candidates can be specified more accurately, rather than being constrained to the icosahedron sequence. Furthermore, a view sphere is expected to be a good choice for the use case at hand, as the dimensions of the test objects do not massively exceed the measurement volume of the employed sensor. It is therefore also preferred over a cartesian fixed position viewpoint generation scheme as presented by Almadhoun et al. 20

To this end, a virtual sphere centred at the centre of the bounding box of the model is constructed. The radius is determined by the average of minimum and maximum measurement distance of the used optical sensor. On this sphere, several equidistributed viewpoints are generated, the number of which can be specified. The orientation of the viewpoints is chosen so that they point towards the centre of the sphere. 37

Viewpoint selection

After the set of viewpoint candidates is generated, decisions need to be made on which subset of viewpoints is the most suitable choice for a high coverage while maintaining the cardinality of the subset as low as possible.

Visibility checks

In a first step, the visibility of facets from the respective viewpoints needs to be determined. The result is stored in a visibility matrix as binary visibility information. Using a visibility matrix is useful for regarding the viewpoint selection process as a set cover problem, which is defined for a binary constraint coefficient matrix and thus facilitates the use of state-of-the-art set cover solvers.

Additionally, the facets need to be checked for occlusion by other parts of the mesh or by any additional occluders, such as the robot gripper. The occlusion determination is implemented by two different methods: a method based on z-buffering, which is traditionally used in rendering, 12 and a ray-shooting method using the Moeller-Trumbore-ray triangle intersection algorithm, 38 which is used by raytracing algorithms. 39

The implemented z-buffering method differs from the traditional method in that each z-buffer element does not only hold a single instance, which is overwritten as soon as a facet that is closer to the camera and covering the element in question is encountered. Instead, the elements are kept alongside each other unless the grid cell is completely covered by a single facet closer than the others are. This is necessary as, in contrast to the occlusion culling methods used for rendering, the goal is not to find the colour of each grid cell and therefore displaying the facet that is the closest, instead, the visibility of each individual facet must be determined.

The implementation choice of this method is motivated by its resemblance to occlusion queries, which likewise operate on a z-buffer and are considered to be among the most efficient methods for occlusion determination. 40

As an alternative to z-buffering, the ray-triangle intersection algorithm proposed by Moeller and Trumbore 38 is implemented, as it is considered one of the most potent ray-triangle intersection algorithms due to its efficiency and simplicity. 41 The algorithm works by determining the intersection point of a ray with the plane spanned by the triangle. The intersection point is then calculated as a scalar factor, which is multiplied by the ray vector and then added to the camera position. By comparing the factor with the euclidean distance between the camera position and the point being tested, it can be determined whether the point is occluded by the triangle or whether it lies in front of it.

Set cover solving

Having established the visibility information for each viewpoint, it can be determined, which set of viewpoints is most informative and contributes most to the overall coverage.

The greedy algorithm is the most popular method for solving this set cover problem in view planning applications. 29 To this end, it is determined, which of the viewpoints covers the biggest surface area using the visibility matrix and the individual surface areas of the facets. The viewpoint achieving the highest coverage is selected for the output viewpoint set. This process is repeated iteratively, only considering facets for surface evaluation that have not been covered up to that point, until all facets that can be observed have been covered by at least one viewpoint. Since the observed surface area is by principle monotonically decreasing with each iteration, it is computationally sensible to stop the procedure as soon as the magnitude of the area added by the next viewpoint is lower than a predefined threshold.

As an alternative to the greedy algorithm, the solver SetCoverPy, 42 based on lagrangian relaxation, is used. For the underlying application, a unicast set cover problem is assumed, in which the costs for viewpoints do not vary. This is due to the absence of a metric that would penalize certain viewpoints. The lagrangian relaxation-based combinatorial solver is chosen, as its use is frequently mentioned in scientific research, along with the related method of linear programming relaxation.19,22,29 Furthermore, the availability of SetCoverPy as a dedicated set cover solver is another advantage, as the underlying heuristic therein is specifically targeted at this problem, through which no additional parameter tuning has to be conducted, as it might be the case with more general linear programming solvers.

Evaluation

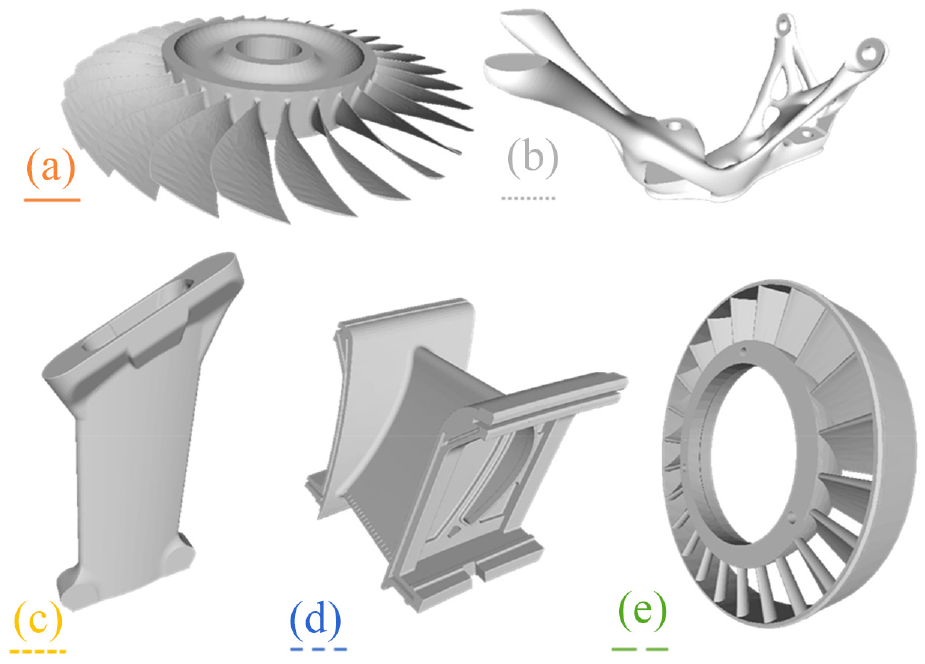

In this section, the methods previously introduced will be evaluated for their applicability to the use case. This will include testing of several process parameters, such as the degree of remeshing, the different viewpoint generation schemes and set cover solving variants. For this, the methods are applied to five test objects, as depicted in Figure 4, representing geometries commonly manufactured with additive manufacturing.

Test objects with different distinct features used for the evaluation of the proposed procedure. Colour of the enumeration and depicted linestyles correspond to charts in this section. Object A ((a), orange, solid line), Object B ((b), grey, dotted line), Object C ((c), yellow, short dashed line), Object D ((d), blue, medium dashed line, © Siemens Energy 2022), Object E ((e), green, long dashed line).

Virtual model

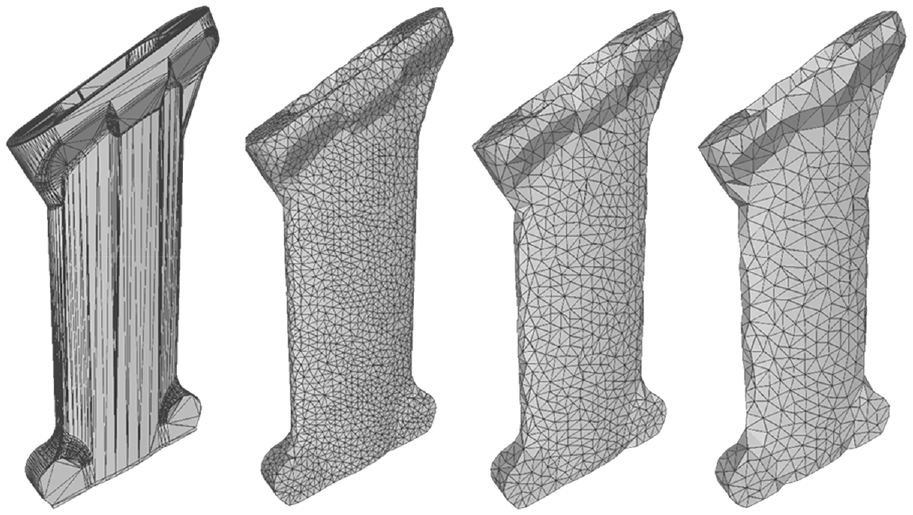

The result of an exemplary remeshing process, attaining different mesh resolutions, is depicted in Figure 5. It can be observed that the remeshed models all manage to resolve the high number of obtuse triangles into more regular triangles, with the triangle quality increasing with the resolution. While on the finest of the remeshed models, the triangle quality appears to be consistently good, the coarsest model comprises a number of triangles with internal angles exceeding 90°.

Meshes resulting from remeshing the initial mesh of Object C with varying resolution parameter. From left to right: the original model, a 10,108-facet representation, a 4346-facet representation, and a 2388-facet representation.

Multi-stage mesh processing

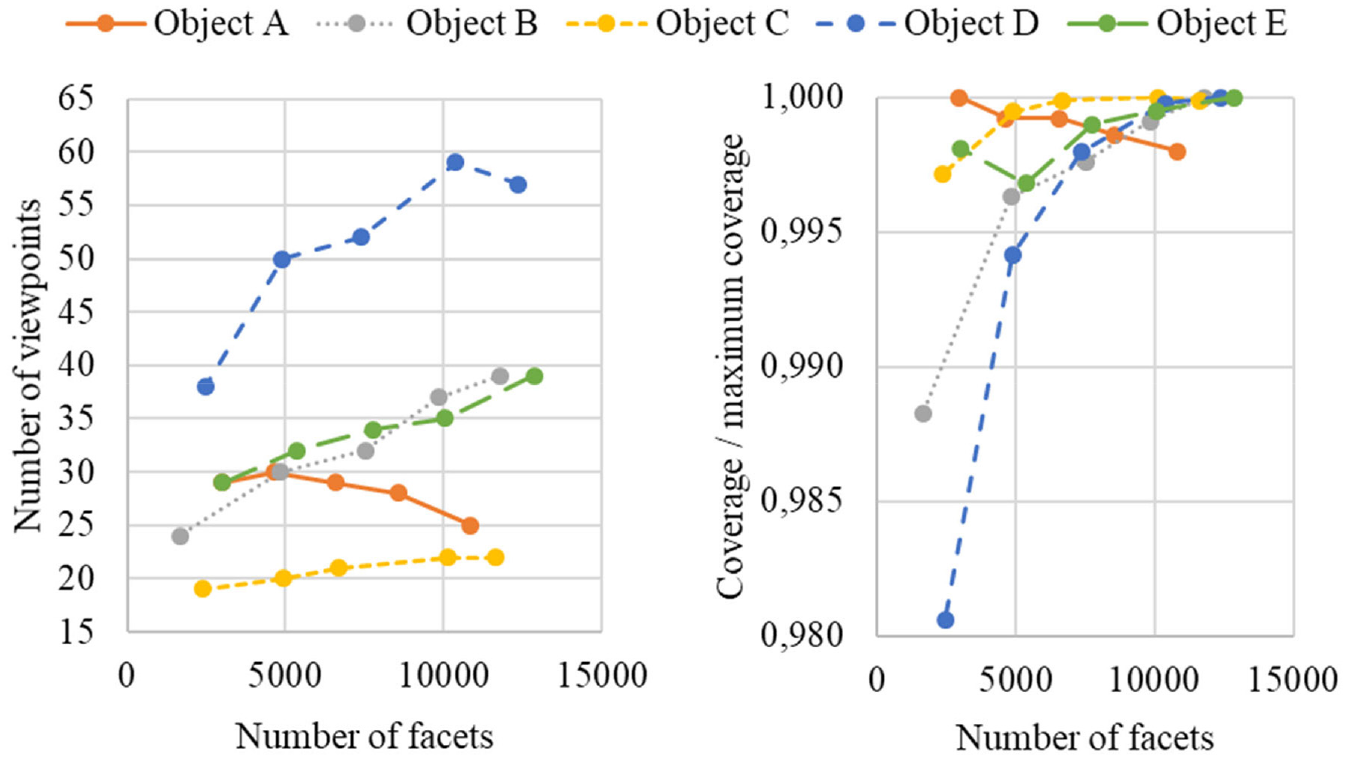

In the following, the effectiveness of using multiple mesh representations to obtain good viewpoint candidates through a coarse model that can be processed more easily is evaluated. For this, viewpoint candidates created through view sphere sampling with 100 points are evaluated and selected on meshes with variable resolution. The coverage of these viewpoints is calculated on a mesh consisting of approximately 15,000 facets, the results of which are depicted in Figure 6. The set cover problem is solved through lagrangian relaxation.

Left: Number of selected viewpoints and resulting coverage when selecting viewpoints generated by view sphere sampling on meshes remeshed to different resolutions. Right: The surface area coverage achieved by the selected viewpoints on a reference model of approximately 15,000 facets. Due to internal structures, the overall coverage is reduced for some of the models, as some facets are impossible to detect by external viewpoints. For easier comparability, the coverage has been normalized to the maximum coverage achieved in the respective series.

An observation made is that, for the majority of test objects, the use of a simplified model results in a lower number of viewpoints required for a similar coverage of the object. This is due to the nature of the set cover problem requiring full universal coverage. If facets cannot be covered by any viewpoint candidate, they are removed entirely from the set cover problem to allow for a solution. However, if a single viewpoint candidate covers a facet, it needs to be included in the candidate selection. Since the number of viewpoint candidates is kept constant during the experiment, the higher the resolution of a model, the higher the chance that a viewpoint has unique visibility of a facet and therefore must be selected in the covering set.

Multi-stage mesh processing can consequently be used to evaluate viewpoints on a coarse mesh to determine good viewpoint candidates at a lower computational effort while still providing efficient viewpoint configurations.

Viewpoint generation

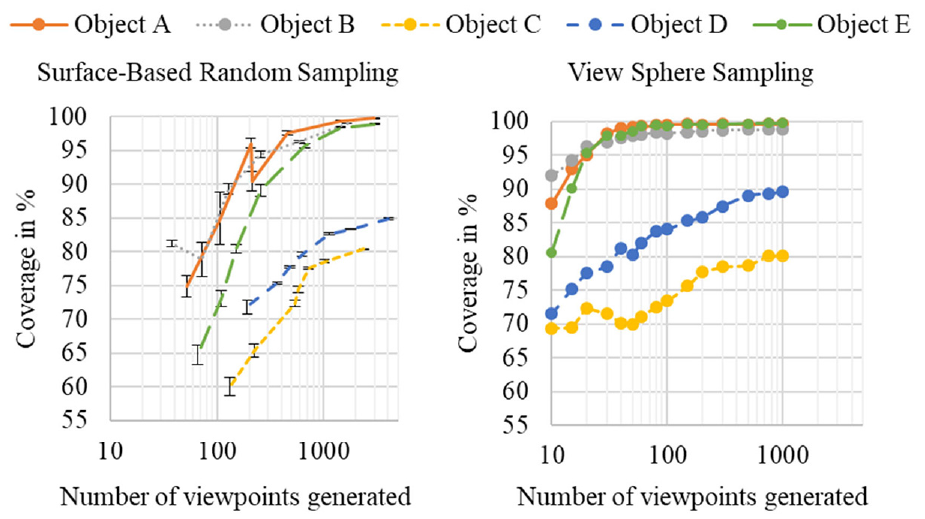

The view sphere sampling and surface-based random sampling viewpoint generation methods can be evaluated by calculating and comparing the coverage achieved with a full set of viewpoints.

Surface-based random sampling

The experiment on surface-based random sampling is conducted by varying the sampling mesh resolution parameter, thereby varying the number of viewpoint candidates generated. The resulting candidate set is evaluated for the combined coverage on a higher resolution mesh.

The results of the experiment are depicted on the left in Figure 7. The values shown are the average of five repetitions using the same configurations. The coverage includes surfaces that possibly cannot be inspected with certain parameter choices, such as surfaces that lie on the inside of a hollow object. Object C and Object D contain such geometry, which is why coverage on these parts does not approach a value close to full coverage. The surface areas added through an increase of viewpoint candidates are those that lie on the inside of the model and are therefore hard to inspect. However, since the incidence angle constraint is set to 20° for the experiments, some of these surfaces can be inspected, although the solution space that allows for this is potentially small. Therefore, with a rising number of viewpoints, the chance that viewpoints fall into this space increases as well.

Left: Average coverage and corresponding standard deviation following surface-based random sampling with varying viewpoint set size, created by remeshing to different resolutions. Right: Coverage following viewpoint generation from a view sphere with varying number of viewpoints.

View sphere sampling

To evaluate the view sphere sampling method, no viewpoint selection is carried out; instead, the combined visibility of all viewpoints is used. The results of this experiment are depicted on the right in Figure 7. It can be observed that for almost all test objects, the coverage converges towards a certain fraction of the overall surface area.

In conclusion, although the number of viewpoints available is significantly lower than when using surface-based random sampling, the maximum achieved coverage is similar or even higher for view sphere sampling.

Viewpoint selection

After potential viewpoint candidates have been generated, a minimal subset of viewpoints needed for a full surface area coverage needs to be selected. First, multiple methods for occlusion determination are implemented and their performances are compared against each other. Using the created visibility matrix, both implemented set cover solvers are evaluated.

Visibility checks

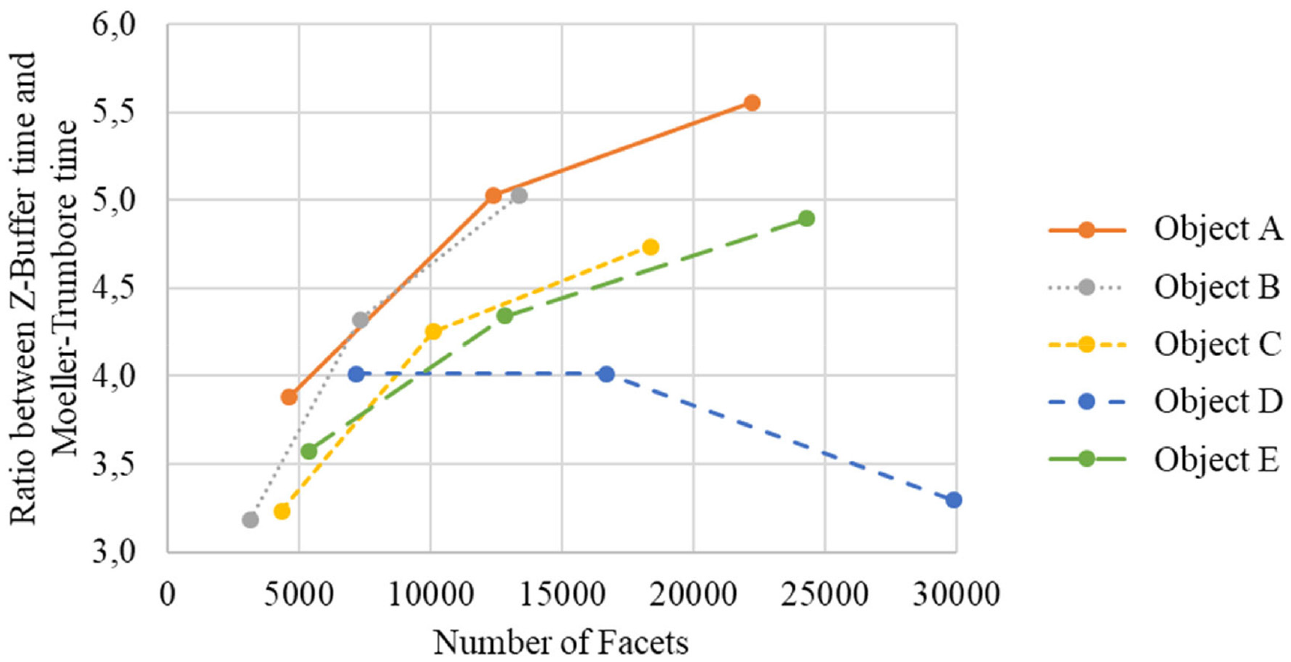

The z-buffer method can be compared to the GPU-accelerated Moeller-Trumbore algorithm. Figure 8 depicts the performance of both algorithms as a ratio between each other over the number of facets. It can be noted that for all models except Object D, the benefits of the Moeller-Trumbore algorithm becomes increasingly striking with rising model accuracy. The mitigation of this effect on Object D can be explained by the large interior surface of the model, which results in a high number of occlusions. The z-buffer algorithm will stop execution upon finding an occlusion, whereas the Moeller-Trumbore algorithm finishes all triangle tests due to them being launched in parallel.

Ratio between occlusion determination computation times using the single-cell z-buffer method and the Moeller-Trumbore algorithm for varying model resolutions.

In conclusion, the Moeller-Trumbore implementation outperforms the z-buffer approach in all test cases, finishing at least three times as fast. It is therefore recommended to use this approach whenever possible. However, if no appropriate GPU is available, the z-buffer method offers an alternative.

Set cover solving

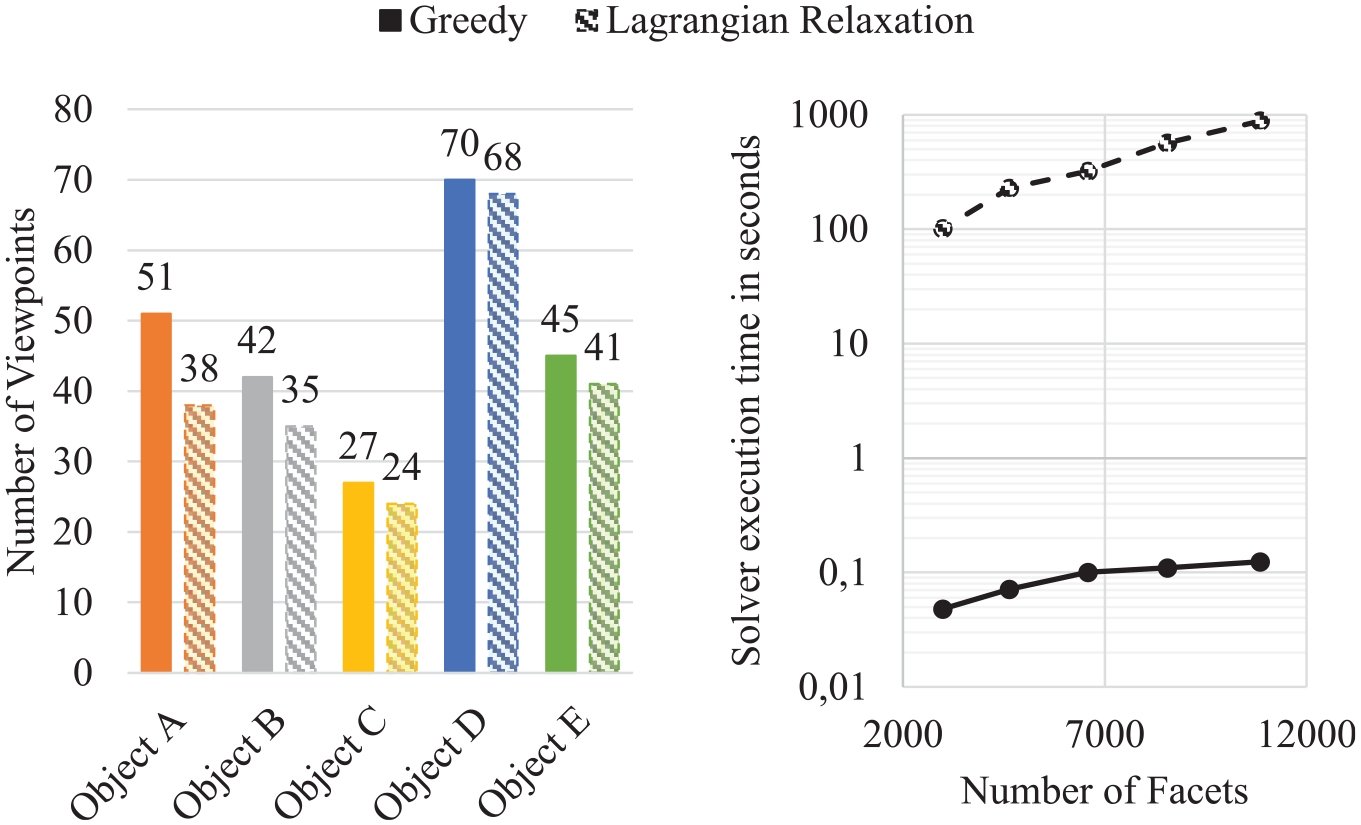

Both previously covered set cover solvers, the greedy and the lagrangian relaxation approach, can be compared through evaluation of the number of viewpoints required to achieve the maximum coverage of a given set of viewpoints. To achieve similar test conditions in each run, view sphere sampling is used since it is deterministic and achieves the same results in every run. The test is conducted on every test object with a fixed resolution parameter of 0.1. The results are depicted on the left in Figure 9.

Left: Number of viewpoints required for the inspection of the different test objects, using both greedy solving and lagrangian relaxation solving. Viewpoint candidates are created using view-sphere generation with 100 points. Right: Set cover solver execution times for Object A plotted over the number of facets with a constant number of 100 viewpoints.

For this experiment, no stop criterion is used for the solvers. Therefore, the overall coverage achieved by both solvers is the same for a given object. It can be observed, that the lagrangian relaxation-based solver outperforms the greedy algorithm in every instance. For the test objects with a high number of undercuts, the lagrangian relaxation method does not produce a result that is significantly better than that of the greedy approach. This can be explained by the circumstance that those undercut areas have a smaller effective solution volume in which viewpoints can lie. Therefore, viewpoints generated in this area need to be added to the set of selected viewpoints regardless of the solving approach used. On the other hand, models that do not exhibit such geometry can profit from combinatorial optimization since there are multiple viewpoints capable of registering a given facet.

However, the greedy algorithm reaches a solution significantly faster than the lagrangian relaxation solver, as can be seen on the right in Figure 9. The execution time of the latter increases with a rising number of candidate viewpoints as well as a rising number of facets. For example, reaching a solution for a mesh consisting of 10,000 facets and 100 viewpoints can take approximately 15 min to complete when performing the standard 20 iterations. 43

Full procedure

Based on the previously discussed results, a combination of the different methods is implemented. Two representations of the geometry of the object, a fine and a coarse mesh are used. An initial viewpoint generation is carried out through view sphere sampling, which is the basis for a visibility check on the facets of the coarse mesh. Subsequently, a surface-based random sampling step is executed for those facets on the coarse mesh that have not been covered by the previous step. The resulting set of viewpoints is minimized using either a greedy algorithm or a lagrangian relaxation solver. Thereafter, based on the fine mesh and the selected viewpoints, facets that are not covered by any viewpoints are determined. If any are found, another surface-based random sampling step is applied to them. The resulting candidate set is again minimized using a set cover solver. In a final step, the selected viewpoints are fed to the Lin-Kernighan-Helsgaun heuristic travelling salesman problem solver, yielding a short connection between the viewpoints.

The methods are implemented as part of a Robot Operating System (ROS) 44 package based on the open-source coverage path planner StructuralInspectionPlanner, 31 which was extensively modified to include all presented algorithms.

Results

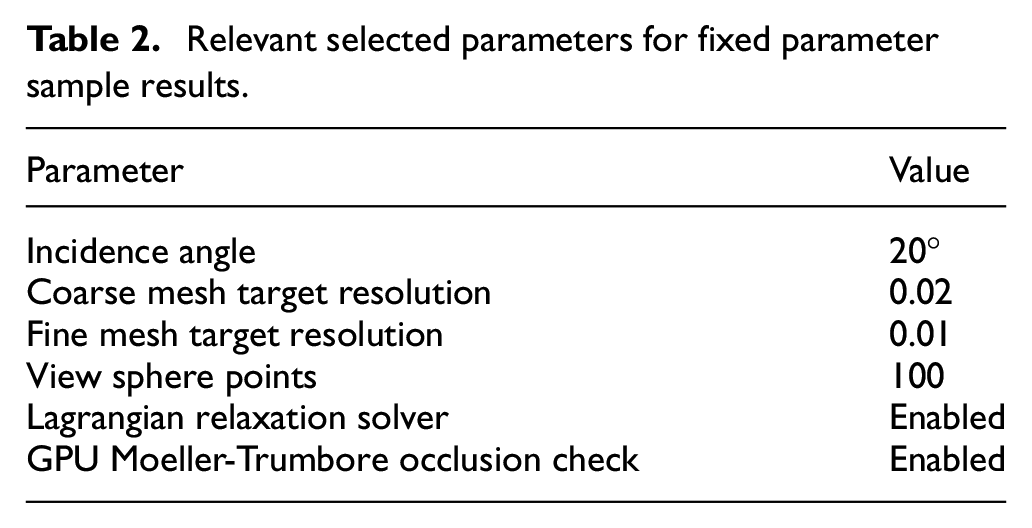

For demonstration purposes, the following results are obtained by using constant parameters for all objects, the relevant parameters are listed in Table 2.

Relevant selected parameters for fixed parameter sample results.

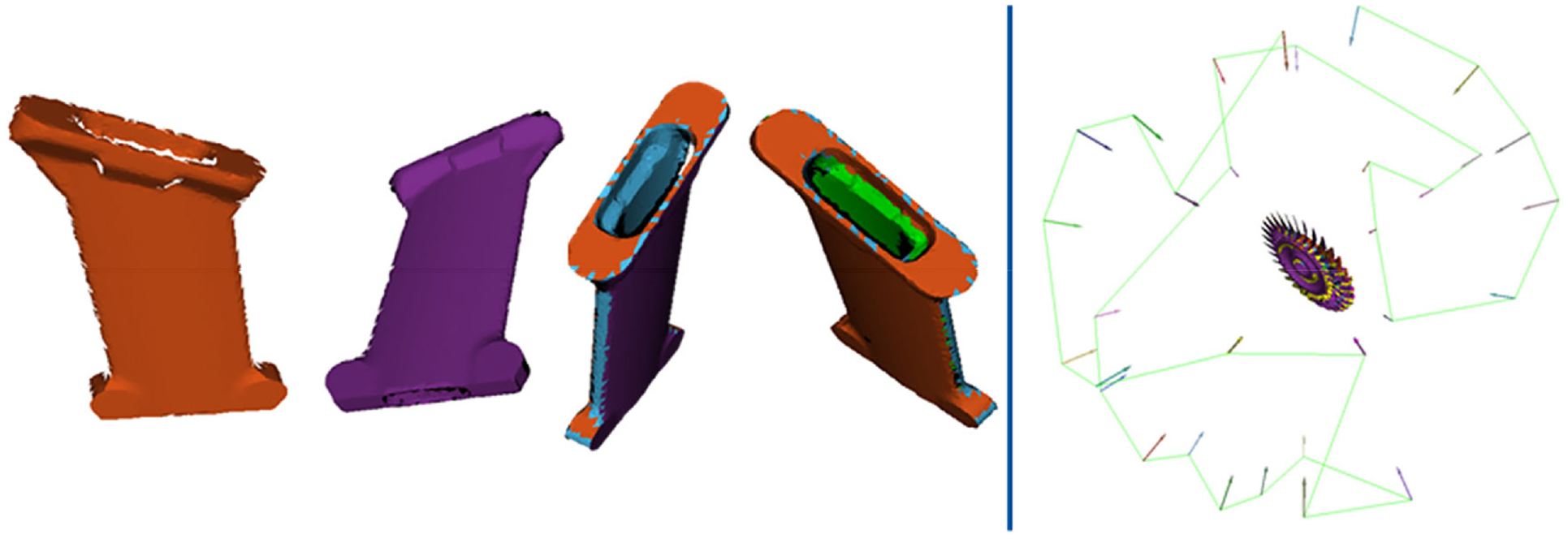

The following illustrations show the area covered by a specific viewpoint in alternating colours. In Figure 10, the first four acquisitions of an exemplary view plan for Object C are depicted from the perspective of the respective viewpoint. The colour of the facets corresponds to the viewpoint they are covered by. The viewpoints usually overlap each other, so that areas might be covered by multiple viewpoints. This overlap is not depicted in the images though, as the colouring procedure is sequential and only newly covered facets are coloured according to their viewpoint.

Left: A sequence of the first four viewpoints of Object C, proceeding from left to right. The colour of the facets indicates which viewpoint they are covered by according to the following order from first to fourth viewpoint: orange, purple, cyan and green. The perspective is according to the respective viewpoints. Right: Object A sample result. The selected viewpoint configuration with their connecting tour (green line) and the covered surface facets, each represented by a colour according to the observing viewpoint (coloured arrows).

For a complete procedure on Object A, a coarse and a fine mesh comprising 2984 and 12,394 facets, respectively, are used. The overall calculation time is 120 s, with 10 s being used cumulatively for occlusion determination and about 105 s for the solving process. The computation times of all other process steps are negligible. The achieved coverage is 99.74 % on the fine model with a total number of 31 viewpoints required for inspection. The remaining uncovered area is located close to the base of some blades, as these are the most difficult areas to inspect due to the high occlusion potential. These areas are visible as black areas in the bottom right of Figure 10. The black areas at the top of the image are rendering artefacts due to z-fighting. The viewpoint positions and poses are additionally illustrated as coloured arrows, with the respective object area they observe coloured accordingly. The green line connecting the viewpoints is the solution tour generated by the travelling salesman problem solver.

Conclusion

Within this work, a simulation environment is created for the purpose of view planning, utilizing the Robot Operating System. Therein, a triangular mesh model of the to be scanned object is used to assess the applicability of the generated viewpoint candidates.

To gain independence from the input mesh model resolution, a remeshing procedure is conducted to obtain a desired mesh resolution. Through remeshing, unfavourable obtuse triangles can be removed and replaced by triangles that are more regular, through which a statement on their visibility becomes more meaningful. Using the remeshing procedure, a multi-stage approach is implemented by using two meshes with different resolutions, through which the benefits of working on a coarse mesh, such as fast viewpoint candidate evaluation and selection, can be combined with the level of detail of a fine mesh. It is found that the mesh resolution can be considerably reduced, while still maintaining a reasonable surface area coverage on a reference model.

Two viewpoint candidate generation methods are implemented:

A surface-based random sampling method, which generates viewpoints within a solution space in which visibility of a given model surface can be expected, and a view sphere sampling approach, which is independent of the object geometry but avoids clustering by generating evenly spaced viewpoints on a sphere. It is found, that for the use case at hand, the view sphere sampling approach is more suitable, due to the large measurement volume of the applied 3D scanner, whereas the random sampling approach requires an excessively higher computational effort to achieve similar results.

Given the generated set of candidate viewpoints and the corresponding model facets that are visible from those positions, the task of selecting a suitable, low cardinality subset of viewpoints is solved using either of two set cover solvers: a greedy algorithm and a lagrangian relaxation algorithm. Results indicate that the latter is able to obtain a smaller viewpoint set than the former, while achieving the same surface area coverage. However, this comes at a higher computational cost.

The different methods are combined in a full coverage path planning procedure, which is demonstrated to be capable of generating a set of 3D scanner poses based on an existing model that facilitates the creation of a geometric model of a manufactured part.

Future work

While the applicability of the proposed procedure has been demonstrated within a simulated environment, practical evaluation is still outstanding. Furthermore, potential improvements to the implemented methods are proposed as follows:

The current remeshing procedure generates mesh triangles of similar size. While this is useful for replacing unfavourable triangles, it results in representing large, planar regions of objects through an unnecessarily high number of facets. An adaptive remeshing procedure, which represents regions with an adequate number of triangles according to their geometric complexity, while maintaining a good triangle regularity, could improve the accuracy of the object representations without a drastic increase in the number of facets.

The view sphere sampling approach achieves good results on the test objects; however, it is constrained to object geometries that do not exceed bounds defined by the specifications of the sensor. For significantly larger or longer objects, the view sphere method would fail. Therefore, the versatility of the approach could be improved by deploying multiple view spheres depending on the geometry of the object. Furthermore, other than a sphere, other shapes such as ellipsoids could prove useful.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The support of the Federal Ministry of Education and Research (Bundesministerium für Bildung und Forschung BMBF) through the funding of the research project ‘IDEA - Industrialisierung von Digitalem Engineering und Additiver Fertigung’ (Industrialization of Digital Engineering and Additive Manufacturing, 13N15000) is gratefully acknowledged.