Abstract

In the context of Industry 4.0, Cyber-Physical Production Systems (CPPS) and digital twins are key technologies for managing the huge amount of data generated by Industrial Internet of Things (IIoT) devices. However, the interoperability and flexibility of the different components remain an important challenge when integrating them into the process and meeting specific industrial needs. The main contribution of this paper is therefore to propose a database architecture and an associated data model that allow multiple agents to work collaboratively and synchronously to perform high-level tasks. The proposal fulfils the key requirements of Industry 4.0: interoperability, scalability, flexibility and resilience. The proposed architecture and model are implemented on a CPPS, which is used to present and discuss several use case examples.

Keywords

Introduction

In the context of Industry 4.0, Cyber-Physical Production Systems (CPPS) 1 and digital twins 2 are key technologies that operate on the huge amount of data generated by Industrial Internet of Things (IIoT) devices. They ease interaction with the physical production system through human-machine interfaces, sensors and actuators, and supervision at various levels. These levels start from the physical level, composed of the physical components of the production system such as production machines, conveyors, robots and products. The physical components can integrate sensors, actuators or input/output systems for human-machine interaction. The boundary between the cyber level and the physical level is the communication level, which collects data about the physical world, including humans, and communicates tasks and operations to its actors.

The cyber part is composed of several sub-levels that transform information into knowledge and make decisions based on this knowledge. 3 Lee et al. 4 proposed the 5C architecture for cyber-physical systems, in which, above the connection level, the manufacturing system is organised into four levels: Conversion, Cyber, Cognition and Configuration. In the architecture proposed by Bagheri et al., 6 digital twins 5 are situated at the cyber level. However, a digital twin is defined as a representation of the virtual models of the production system, where the data exchanged between the physical and virtual components enable simulation, optimisation or prediction of the behaviour of the physical system. 7 This definition goes beyond the cyber level of the 5C architecture and also places digital twins at the cognition level.

The way information is presented and devices are controlled in the new generation of manufacturing systems brings to light new technologies such as advanced human-machine interfaces, including augmented reality (AR) and virtual reality (VR). 8,9 AR communicates relevant real-time information to the operator at the shop-floor level, helping him to perform his tasks efficiently. 10 VR can be used to explore new layouts or configurations of the system 11 or to train human resources and develop their skills. AR and VR are also communication interfaces, which should therefore be placed at the communication level. 12

Other workshop activities, such as dynamic scheduling, security systems and inspection, need accurate real-time data. The data exchanged can represent a large amount of structured, semi-structured or unstructured data stored in the database associated with the workshop's supervision systems. 13 The CPPS model should be able to represent the different aspects of the activities performed by the manufacturing system. Several models with different layers and entities have been developed in the literature. An interesting comparison of these models is presented in Alam and El Saddik, 12 where each model is evaluated with respect to its computation base, communication, control, cloud integration, configuration and model type. Most of these models seek to implement a specific technology, such as digital twins or cloud computing.

Indeed, the use of Industry 4.0 components, such as cloud and fog computing or big data, 14 enables the management of complex manufacturing systems through optimised decision-making based on near real-time knowledge extraction and the integration of artificial intelligence algorithms into production devices. 15 In this new generation of factories, the growing number of automatic, dynamic decisions and actions requires the right information and resources to be available at the right time, to ensure the reactiveness and resilience of the system in the face of external or internal disruptive events. 16 Key issues for the data exchange, storage and processing architecture are: (i) interoperability, due to the various components and standards used in industry; (ii) scalability and (iii) effectiveness, which are essential for the development and evolution of new services. 17

Several researchers have proposed database and data exchange architectures adapted to the different challenges of Industry 4.0. Uhlemann et al. 5 presented a framework for implementing Industry 4.0 concepts such as digital twins in SMEs (Small and Medium-sized Enterprises). Alam and El Saddik 12 developed an architecture that favours the use of cloud computing. Lee et al. 4 proposed an architecture based on the 5C model to improve the availability of production tools through an efficient maintenance strategy. Gabor et al. 18 proposed an architecture based on three main groups: the physical world, the world model and the cognitive system. An information-system architecture able to easily represent the interactions between the different entities of the system is also presented. Malakuti et al. 19 developed a four-layer architecture to provide more flexibility and interoperability. More recently, García et al. 20 proposed a methodology for adopting the Industry 4.0 paradigm in SMEs through digital retrofitting, by systematically designing and implementing the proper IT infrastructure with easy-to-access technological tools. However, this methodology requires monotonous and repetitive tasks from the developer, such as the one-way binding of the databases to their respective variables in the OPC UA information model. 21

All of these data-model architectures are designed for a specific purpose. They do not usually address the communication and data exchange issues between the different parts of the system. In addition, they are tied to the adopted CPPS model and do not propose a standard means of communication that would improve the interoperability, flexibility and autonomous behaviour of manufacturing systems. 22 This is why this paper proposes a database architecture compliant with RAMI 4.0. It allows data storage and exchange between the various components of a CPPS while satisfying Industry 4.0 needs in terms of flexibility, scalability and resilience. A representative use case is presented and discussed to show the applicability of the proposed solution. The main contribution of this paper is an architecture and a data model that: (i) enable agents (operators, robotic arms, Automated Guided Vehicles (AGVs), etc.) to carry out high-level tasks by breaking them down into low-level operations, which they execute collaboratively and synchronously; (ii) improve factory responsiveness and resilience to disruptions by assigning operations to agents with different abilities, using different grouping or coalition strategies; (iii) make agents interoperable; and (iv) enable agents to make and share decisions.

The remainder of this paper is structured as follows. Section 2 presents the components and needs of Factory 4.0. Based on this analysis, Section 3 presents the proposed architecture and its components. Section 4 explains the data model associated with the proposed architecture. Finally, Section 5 presents illustrative use cases of the proposed architecture to show that it meets part of the identified needs.

Factory 4.0: Components and needs

Implementing Industry 4.0 principles through the installation of a new generation of manufacturing systems involves four main components. 17 The first component is the set of systems that generate data about the factory, such as Industrial Internet of Things devices, machine sensors, robots, mobile robots and other tools used by stakeholders. 8 The second component is an information system that gathers the generated data and processes it, either locally through fog computing or through cloud computing. 23 The third component processes all these data to improve factory efficiency. 24 On the one hand, there are algorithms and tools that automatically make decisions before, during and after the production process. On the other hand, several tools, such as digital twins, simulation, virtual reality and augmented reality, help humans make decisions by providing a realistic representation of the current status of the factory. For example, a mono-objective optimisation process usually provides an automatic decision, whereas a multi-objective one provides several possible solutions to support human decision-making. 25 Finally, the fourth component of Factory 4.0 consists of the humans, robotic systems and machines that act in the factory. Given the variety of Factory 4.0 components and their interdependence, it is necessary to identify the challenges and needs that call for a specific architecture, which are:

-

-

-

-

-

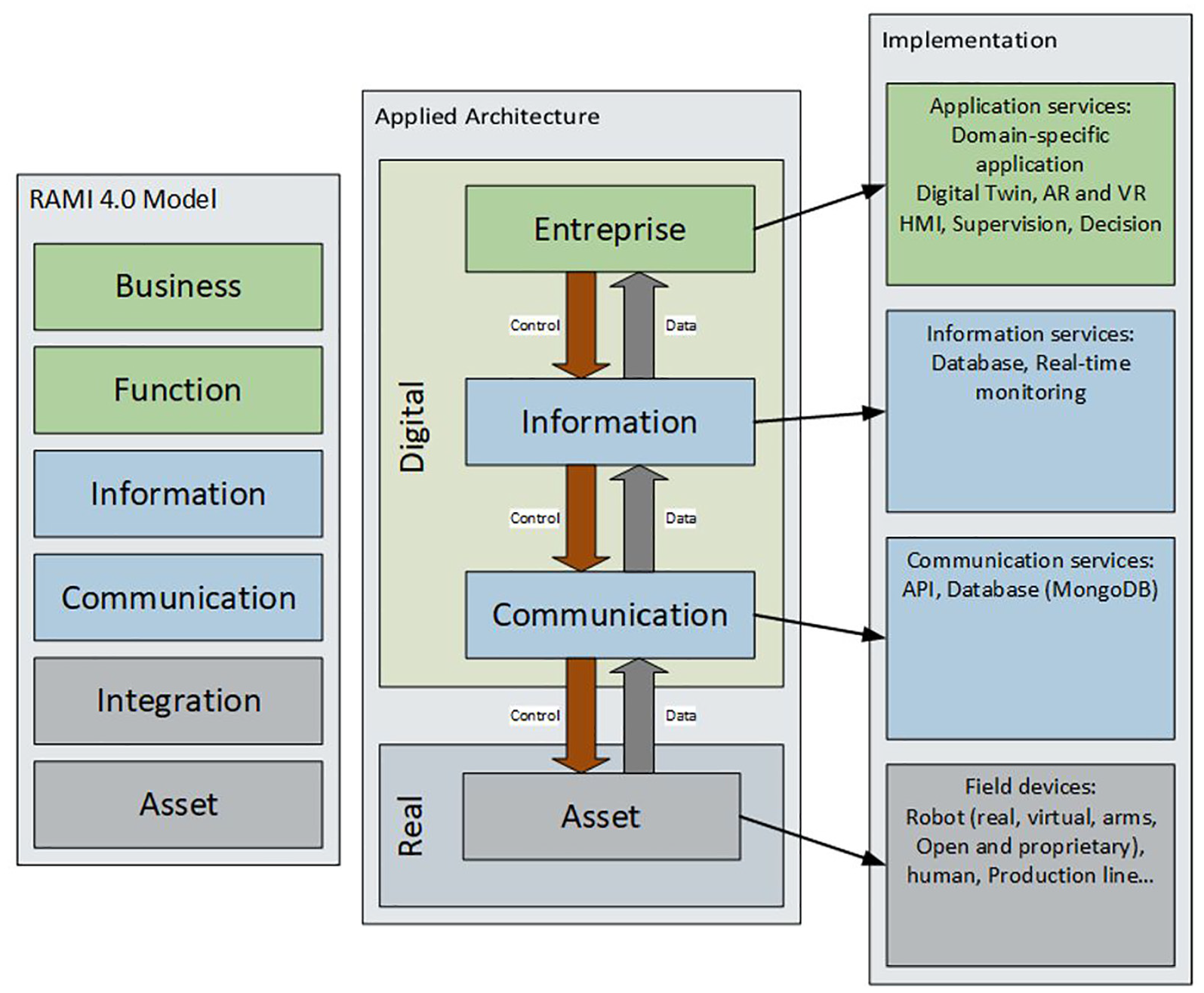

With reference to the RAMI 4.0 (Reference Architectural Model Industrie 4.0) standard of Industry 4.0, 29 this paper deals with the information, orders and organisation of the communication passing between agents (or assets) from the different worlds (Model, State and Physical). Figure 1 presents the implementation of Industry 4.0 based on Ye and Hong. 30 Specifically, the database appears at two levels: at the information level, because it centralises all production and machine status information, and at the communication level, because it is the central point of communication between agents. In the ‘field devices’ layer, the virtual robots considered are assemblies of several entities that offer all the capabilities of their components as a single asset.

Refined Industry 4.0 implementation (based on Ye and Hong 30).

Architecture description

As explained in the previous sections, people and decision-making tools need data about the factory status to make the best decisions. However, the factory contains several systems, or more generally agents (sensors, robots, machines, tools, applications used by workers, etc.), with heterogeneous data formats and capabilities. Therefore, an interoperable way of exchanging data and an architecture able to adapt to each agent's capabilities have been defined.

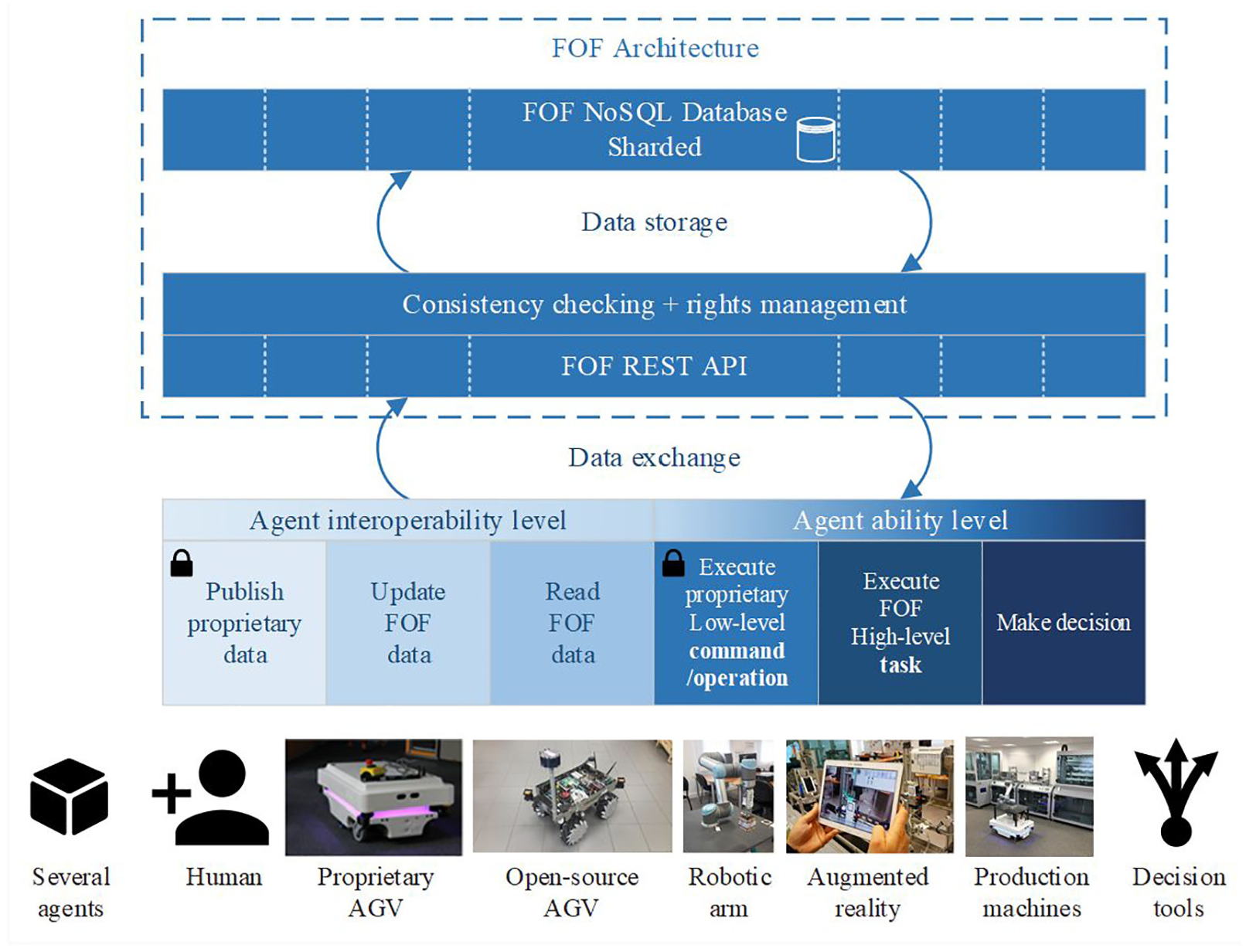

To design this architecture, three interoperability levels and three ability levels are identified for each agent in the factory (see Figure 2 – Factory Of the Future (FOF) API). The three interoperability levels are:

-

-

-

The three ability levels are:

-

-

-

According to these interoperability and ability levels, each agent reads and updates data through a communication channel (Figure 2). Although OPC UA is the main accepted standard for Industry 4.0, a growing number of research works deal with merging architectures for IIoT connectivity 32 and, in particular, with turning OPC UA into a RESTful service-oriented architecture. 33,34 The advantages of REST are that it enhances OPC UA interoperability with resource-constrained devices 35 and reduces the number of requests needed to retrieve information. 36 This is why this paper presents the architecture with a REST API, since OPC UA is moving towards session-less services, as shown by the publish-subscribe (PubSub) feature it introduced in 2018.

High-level architecture for the Factory Of the Future (FOF). Each agent has up to three interoperability levels and three ability levels.

In summary, the roles of this communication channel are: (i) to use the implemented standard so that all agents are able to communicate, (ii) to check the availability and consistency of the data sent, in order to avoid storing ill-formed data, and (iii) to store data about the factory status in the FOF database (Figure 2 – consistency checking).
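The consistency-checking role (ii) can be illustrated with a minimal sketch. It assumes a hypothetical status document with four required fields and a small set of allowed status values; neither the field names nor the status vocabulary come from the FOF model, and a Python list stands in for the database so that the example runs without a server.

```python
from datetime import datetime

# Hypothetical schema for an agent status document (illustrative assumption,
# not the actual FOF schema): required field -> expected Python type.
REQUIRED_FIELDS = {
    "agent_id": str,
    "agent_type": str,
    "status": str,
    "timestamp": str,
}
ALLOWED_STATUSES = {"available", "busy", "failed", "offline"}


def check_consistency(document: dict) -> list[str]:
    """Return the list of problems found; an empty list means the document is well-formed."""
    errors = []
    for name, expected_type in REQUIRED_FIELDS.items():
        if name not in document:
            errors.append(f"missing field: {name}")
        elif not isinstance(document[name], expected_type):
            errors.append(f"wrong type for {name}")
    status = document.get("status")
    if isinstance(status, str) and status not in ALLOWED_STATUSES:
        errors.append(f"unknown status: {status}")
    timestamp = document.get("timestamp")
    if isinstance(timestamp, str):
        try:
            datetime.fromisoformat(timestamp)
        except ValueError:
            errors.append("timestamp is not ISO 8601")
    return errors


def store_if_consistent(document: dict, collection: list) -> bool:
    """Store the document only if it passes the checks (list stands in for the database)."""
    if check_consistency(document):
        return False  # rejected: ill-formed data never reaches the database
    collection.append(document)
    return True
```

Rejecting a document before it is written, rather than cleaning it up afterwards, is one way to keep every reader of the shared factory status from seeing partial records.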

Because the data evolve dynamically, the proposed architecture is based on a NoSQL database technology, more precisely a MongoDB engine. 37,38 The consistency-checking component scales horizontally, since the data are partitioned into several shards that can be deployed on several servers. Consequently, if devices (machines, robots, etc.) are added, removed or changed and more storage space is needed, more servers can be added to support the load. Moreover, as the factory status is continuously known, the agility and flexibility of the system can be evaluated in real time. Based on this knowledge, any intelligent agent endowed with decision-making ability can evaluate several scenarios and then exploit all the available flexibility of the manufacturing system in real time. Once the appropriate scenario is chosen, the decision agent assigns tasks to the selected resources and shares the decision in the form of ‘high-level tasks’ and ‘low-level operations’.
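The scenario-evaluation step performed by a decision agent can be sketched as follows. The paper does not specify an evaluation criterion, so this sketch assumes a deliberately crude one: each scenario maps operations to agents with an estimated duration, scenarios using an unavailable agent are discarded, and the remaining scenario with the smallest makespan is chosen. The `Scenario` class and all durations are illustrative.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Scenario:
    name: str
    # Operation id -> (agent id, estimated duration in seconds); illustrative format.
    assignment: dict[str, tuple[str, float]]


def makespan(scenario: Scenario) -> float:
    """Estimated completion time, assuming each agent runs its operations
    sequentially while different agents work in parallel (a crude model)."""
    load: dict[str, float] = {}
    for agent, duration in scenario.assignment.values():
        load[agent] = load.get(agent, 0.0) + duration
    return max(load.values(), default=0.0)


def choose_scenario(scenarios: list[Scenario], available_agents: set[str]) -> Optional[Scenario]:
    """Keep only scenarios whose agents are all available, then pick the fastest one."""
    feasible = [
        s for s in scenarios
        if all(agent in available_agents for agent, _ in s.assignment.values())
    ]
    if not feasible:
        return None
    return min(feasible, key=makespan)
```

In practice, the availability set would be read from the factory status stored in the database, and a simulation tool could replace the `makespan` estimate.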

Communication is based on an API (Application Programming Interface) linked to a NoSQL database. All agents communicate over an Ethernet network, through cables or Wi-Fi. This setup allows quick validation of the methods described in this paper. A gateway has been created on each CPPS to communicate with the API using REST HTTP requests.
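As an illustration of what such a gateway might send, the sketch below builds, but does not send, a REST request that updates an agent's status using only the Python standard library. The `/agents/<id>` route, the `PUT` method and the JSON body are hypothetical, because the paper does not document the FOF API endpoints.

```python
import json
import urllib.request


def build_status_update(base_url: str, agent_id: str, status: str) -> urllib.request.Request:
    """Build a REST request updating an agent's status.

    The '/agents/<id>' route and the {"status": ...} body are assumptions
    made for illustration, not the actual FOF API.
    """
    body = json.dumps({"status": status}).encode("utf-8")
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/agents/{agent_id}",
        data=body,
        headers={"Content-Type": "application/json"},
        method="PUT",
    )


# A gateway would then send the request, for example with:
# urllib.request.urlopen(build_status_update(api_url, "mir100", "busy"), timeout=5)
```

Because each request carries the full state change, the server does not need to keep a session for each gateway. This is the session-less style that motivated the choice of REST.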

The next section describes the data model used to improve the agents’ capabilities.

FOF data model

Architecture

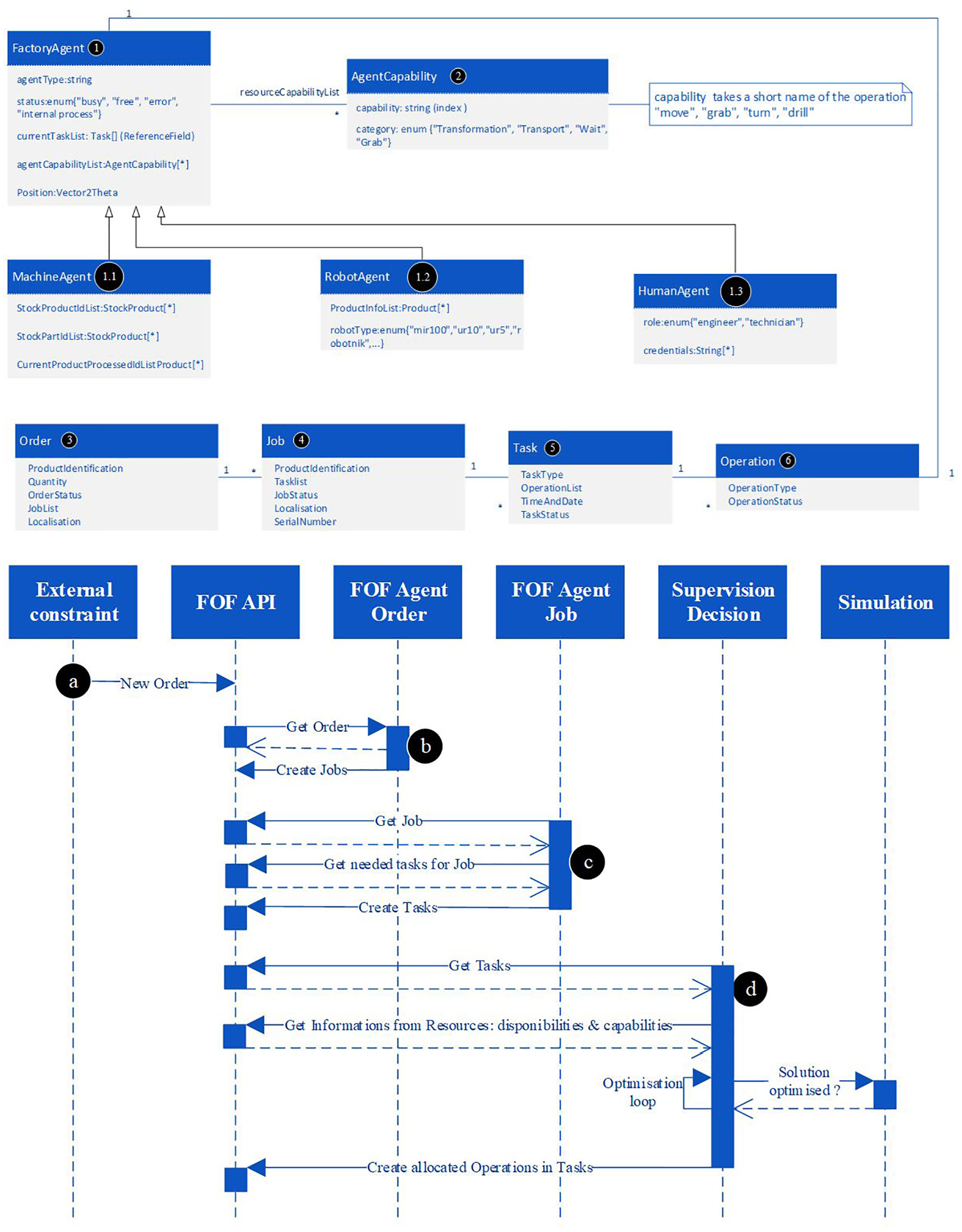

The database includes all the information needed to create, starting from orders, the operations allocated to each agent in the factory. Figure 3 presents the different parts of the database, which are detailed below.

The proposed FOF data model and a sequence diagram illustrating its use to assign operations to factory agents.

First, the FOF model defines the agents available in the factory through the FactoryAgent class (see Figure 3-1). It mainly defines the type of the agent (e.g. robot, human, machine, stock) and its capabilities (Figure 3-2), i.e. which operations the agent is able to perform in the factory, such as ‘move’, ‘grab’ or ‘drill’. Moreover, the FactoryAgent provides its status so that the decision agent is aware of its availability in the factory. Figure 3 illustrates three examples of FactoryAgent classes (1.1, 1.2 and 1.3) with their specific properties. At this level, humans, robots and machines are all considered integral parts of the factory.
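A minimal Python rendering of the FactoryAgent idea may help fix the vocabulary. The field names, the status string and the two subclasses with their extra properties (`battery_level`, `connected_device`) are illustrative assumptions; the actual attributes are those shown in Figure 3.

```python
from dataclasses import dataclass, field
from enum import Enum


class AgentType(Enum):
    ROBOT = "robot"
    HUMAN = "human"
    MACHINE = "machine"
    STOCK = "stock"


@dataclass
class FactoryAgent:
    agent_id: str
    agent_type: AgentType
    capabilities: set[str] = field(default_factory=set)  # e.g. {"move", "grab", "drill"}
    status: str = "available"  # read by the decision agent to know availability

    def can_perform(self, operation: str) -> bool:
        """An agent is a candidate for an operation if it has the capability and is available."""
        return operation in self.capabilities and self.status == "available"


# Hypothetical subclasses carrying type-specific properties.
@dataclass
class RobotAgent(FactoryAgent):
    battery_level: float = 1.0


@dataclass
class HumanAgent(FactoryAgent):
    connected_device: str = ""  # device used to notify the operator
```

Keeping capabilities as plain operation names lets any decision tool match operations to agents without knowing each agent's internals.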

With this information available in near real-time, tasks and operations can be assigned to agents by any decision tool. The FOF model splits this process into four main classes. Figure 3 (bottom) shows these classes and illustrates, with a sequence diagram, how they are used to assign operations to agents in the factory. The four classes are:

-

-

-

-

Once Tasks and Operations have been assigned to agents by a Supervision Decision agent, each agent involved in a Task knows which Operations it must perform and in which order. A detailed use case is provided in the next section; it explains how each agent behaves during the execution phase.
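The way an agent can work out what it must do, and in which order, from a Task and its Operations is sketched below. The `index` field used for ordering, the status values and the helper method names are assumptions made for illustration. They are not taken from the FOF class diagram.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Operation:
    index: int        # position of the operation inside its task (assumed field)
    name: str         # e.g. "move", "grab"
    agent_id: str     # agent the operation is assigned to
    status: str = "pending"


@dataclass
class Task:
    task_id: str
    operations: list[Operation] = field(default_factory=list)

    def operations_for(self, agent_id: str) -> list[Operation]:
        """Operations a given agent must perform, in execution order."""
        return sorted(
            (op for op in self.operations if op.agent_id == agent_id),
            key=lambda op: op.index,
        )

    def next_operation(self) -> Optional[Operation]:
        """First operation not yet done, assuming operations run in sequence."""
        for op in sorted(self.operations, key=lambda op: op.index):
            if op.status != "done":
                return op
        return None
```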

From job to order

Agents represent any available resource. They are the digital links between the database and the physical actors of each job. For instance, another manufacturing plant may be considered as a resource or supplier for the company. This supplier plant may offer capabilities (Figure 3-2) such as ‘Manufacture part of type X’. As mentioned in Section 4, the supplier plant may interpret this type of formulation as an order. In this case, the agent ‘Other manufacturing plant’ is connected to our FOF API as an agent, and to the other manufacturing plant as an ‘external constraint’ (cf. Figure 3-a).
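To make the dual role of the ‘Other manufacturing plant’ agent concrete, the following sketch shows an agent that accepts an operation like any FOF agent and turns it into an order for the supplier. The class name and the order dictionary format are hypothetical. The paper does not describe how orders are sent to the external plant.

```python
from dataclasses import dataclass, field


@dataclass
class ExternalPlantAgent:
    """FOF agent standing in for a supplier plant (illustrative sketch)."""
    agent_id: str
    capabilities: set[str]
    pending_orders: list[dict] = field(default_factory=list)

    def accept_operation(self, capability: str, quantity: int) -> dict:
        # On the FOF side this is an ordinary Operation; on the supplier side
        # it becomes an order (hypothetical format).
        if capability not in self.capabilities:
            raise ValueError(f"{self.agent_id} cannot perform {capability!r}")
        order = {"supplier": self.agent_id, "item": capability, "quantity": quantity}
        self.pending_orders.append(order)
        return order
```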

Use cases discussion

This section first describes the CPPS used in the laboratory to implement the FOF architecture. Several use cases are then explained to illustrate the various ways in which the FOF architecture can be used.

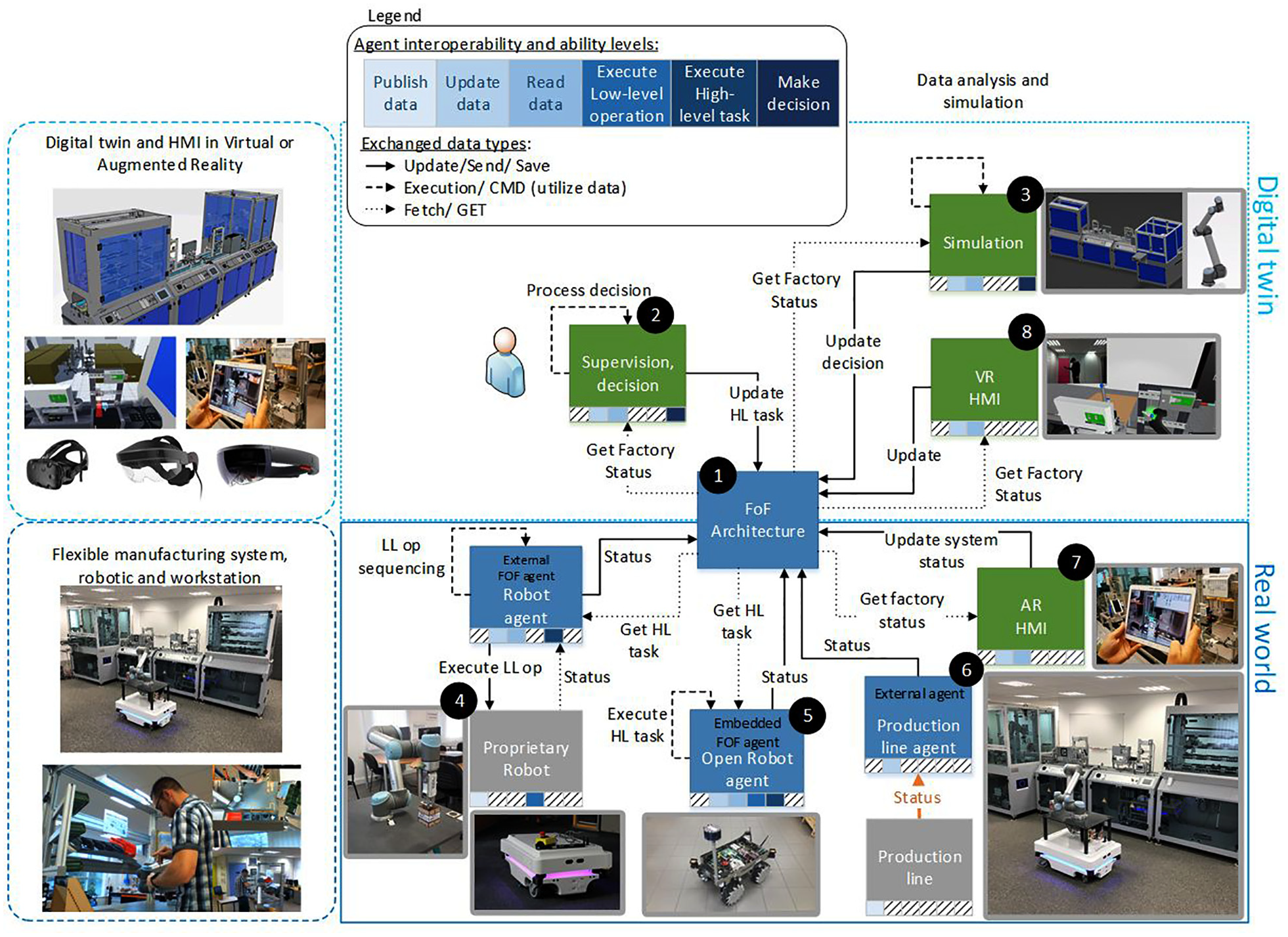

The proposed architecture has been implemented in the laboratory CPPS, a flexible manufacturing system (FMS) involving several robots with different capabilities in a shop floor layout context (see Figure 4). This CPPS is composed of:

- A physical production system including a set of manual workstations and an automatic production system. It also contains a set of 3D printers and laser cutters used to produce several kinds of parts. Three mobile robots with different interoperability levels, ability levels and capacities perform transportation tasks. Pick-and-place operations can be performed by human operators or robotic arms. Several types of products can be produced, and AR devices can be used to help operators in production or maintenance tasks (see Figure 4).

- A digital twin and a VR environment to support decision-making or design processes, and to train operators on assembly or maintenance tasks. An internal cloud is used to collect, process and store data.

CPPS use case and associated architecture.

In Figure 4, data flows are displayed in different line styles: dotted lines mean that an agent is getting data from the FOF cloud, dashed lines mean that the agent processes data internally, and solid lines mean that the agent updates data in the FOF Architecture so that every agent is aware of the new data status. In the following subsections, the benefits of the proposed architecture are discussed for different use cases based on this CPPS.

Distributed supervision based on digital twin

The advantage of the proposed architecture is that it allows distributed supervision and the generation of different levels of decision. It takes into account the capabilities and intelligence of each agent in the production system, such as mobile robots or robotic arms. Implementing a distributed supervision system requires rapid horizontal and vertical data exchange between the different components of the production system, so that different decision-making techniques can be used to optimise decisions in the process. The developed architecture represents the status of each element and provides an accurate representation of the operations assigned to, or performed by, each agent of the production system. As a result, the status of each agent in the factory is available in the FOF Architecture (see Figure 4-1). The Supervision decision agent (see Figure 4-2) then gets the factory status and uses simulation (see Figure 4-3) to optimise task assignment in the factory. Once done, the task assignment is registered in the FOF architecture so that every agent is aware of what he/she/it needs to perform. Several control architectures have been developed with local and global decision-making processes. For example, Goumeye et al. 39 developed a scheduling mechanism able to make a global decision and to change this decision locally if a disturbance occurs in the system. This mechanism needs accurate information about the system and its state to define a global solution. In addition, it needs to be informed quickly of each event in the system, so that each robot can modify its behaviour in response to unexpected changes in the manufacturing system. If the data are not synchronised, conflicts may arise between the global decision and the local one. The proposed architecture can provide these data to avoid this kind of conflict and ensure that every other robot is aware of the launched operations and tasks.
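One generic way to prevent a local decision based on stale data from silently overwriting a newer global one is optimistic concurrency control with version numbers. The paper does not say that the FOF architecture uses this technique. The in-memory sketch below is only an assumed illustration of how such conflicts could be detected.

```python
class AssignmentStore:
    """In-memory stand-in for shared operation assignments, with version numbers.

    A writer must present the version it last read; if the record changed in
    the meantime, the write is refused and the writer must re-read and decide
    again (optimistic concurrency, an assumed mechanism).
    """

    def __init__(self) -> None:
        self._records: dict[str, tuple[int, str]] = {}  # op id -> (version, agent id)

    def read(self, op_id: str) -> tuple[int, object]:
        return self._records.get(op_id, (0, None))

    def write(self, op_id: str, agent_id: str, expected_version: int) -> bool:
        current_version, _ = self.read(op_id)
        if current_version != expected_version:
            return False  # stale read: the decision was based on outdated data
        self._records[op_id] = (current_version + 1, agent_id)
        return True
```

With this scheme, a global assignment and a later local change can coexist, but a robot acting on an outdated view of the assignment is forced to refresh it first.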

Enhancing robot capabilities and flexibility

In industry, some proprietary robots are limited to performing low-level operations, such as moving to a specific point (see Figure 4-4). To make them more flexible and able to perform high-level tasks, an external agent can be developed that complements the low-level capability of the robot. This agent regularly reports the status of the proprietary robot, and the FOF architecture stores it so that all the other agents are aware of this status. With this information, the supervision decision component can assign new high-level tasks involving the proprietary robot, for instance ‘move product X from position A to position B’, which implies the coordination of several agents. The robot’s external agent manages the synchronisation of operations and sends the corresponding low-level operations (or command signals) to the proprietary robot so that it can execute this high-level task. If a robot is equipped with an open operating system, the agent dealing with high-level tasks is directly embedded in the robot’s middleware to add the high-level task ability (see Figure 4-5). As a result, the proposed architecture can manage heterogeneous robots and other elements thanks to the proposed data model.
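The external-agent pattern is essentially an adapter. In the sketch below, the robot's proprietary interface is represented by two injected callables, `send_command` and `get_state`. These are placeholders and not a real vendor API. The `move_to:`/`at:` string protocol is also invented for the example.

```python
import time
from typing import Callable


class ExternalRobotAgent:
    """Adds a high-level 'move' ability to a robot that only accepts low-level commands.

    `send_command` and `get_state` stand for the robot's proprietary interface
    (placeholders, not a real vendor API).
    """

    def __init__(self, send_command: Callable[[str], None], get_state: Callable[[], str]):
        self.send_command = send_command
        self.get_state = get_state

    def execute_move(self, destination: str, max_polls: int = 100, poll_interval: float = 0.5) -> bool:
        """Translate a FOF 'move' operation into a low-level command and wait for completion."""
        self.send_command(f"move_to:{destination}")
        for _ in range(max_polls):
            if self.get_state() == f"at:{destination}":
                return True  # the caller then marks the Operation as done in the FOF API
            time.sleep(poll_interval)
        return False  # timed out: the failure can be reported to the supervision agent
```

For example, `ExternalRobotAgent(robot_send, robot_state).execute_move("A")` would drive the first operation of the task described below, where `robot_send` and `robot_state` wrap the vendor interface.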

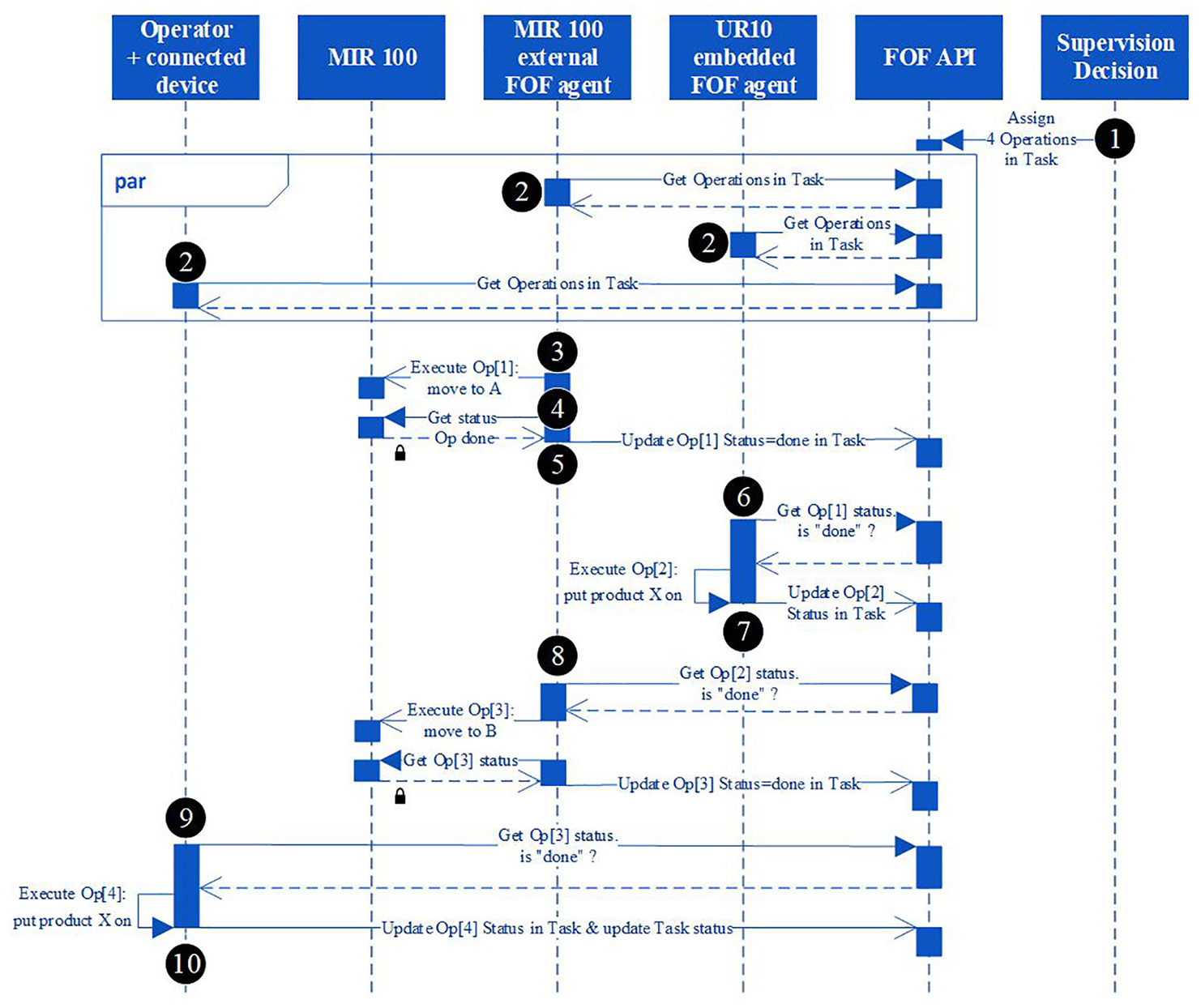

As an example, a sequence diagram for the ‘move product X from position A to position B’ task is presented in Figure 5. In this example, this high-level task is composed of four operations and involves three different agents: an operator, a MIR 100 AGV and a UR10 robotic arm. As the MIR 100 is a proprietary robot, it has only the ‘publish proprietary data’ interoperability level and the ‘Execute low-level operation’ ability level. Therefore, the MIR 100 external FOF agent complements its interoperability levels with ‘Update FOF data’ and ‘Read FOF data’, and its ability levels with ‘Execute FOF high-level task’.

Sequence diagram of the ‘move product X from position A to position B’ task with the proposed FOF architecture. The lock icon means that agents exchange data in the proprietary format.
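The interoperability and ability levels of the MIR 100 and of its external FOF agent can be modelled as bit flags, so that combining the two agents is a simple union. The level names follow the text above. The third ability level is not named in this part of the paper, so `MAKE_DECISION` is an assumed label. The rule in `can_join_fof_task` is also an assumption made for illustration.

```python
from enum import Flag, auto


class Interop(Flag):
    PUBLISH_PROPRIETARY_DATA = auto()
    READ_FOF_DATA = auto()
    UPDATE_FOF_DATA = auto()


class Ability(Flag):
    EXECUTE_LOW_LEVEL_OPERATION = auto()
    EXECUTE_FOF_HIGH_LEVEL_TASK = auto()
    MAKE_DECISION = auto()  # assumed name for the third ability level


# The proprietary MIR 100 on its own...
mir100_interop = Interop.PUBLISH_PROPRIETARY_DATA
mir100_ability = Ability.EXECUTE_LOW_LEVEL_OPERATION

# ...and the levels added by its external FOF agent.
ext_interop = Interop.READ_FOF_DATA | Interop.UPDATE_FOF_DATA
ext_ability = Ability.EXECUTE_FOF_HIGH_LEVEL_TASK

combined_interop = mir100_interop | ext_interop
combined_ability = mir100_ability | ext_ability


def can_join_fof_task(interop: Interop, ability: Ability) -> bool:
    """Assumed rule: a participant must read and update FOF data and execute high-level tasks."""
    needed = Interop.READ_FOF_DATA | Interop.UPDATE_FOF_DATA
    high = Ability.EXECUTE_FOF_HIGH_LEVEL_TASK
    return (interop & needed) == needed and (ability & high) == high
```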

First, the ‘Supervision Decision’ agent creates a new Task composed of four successive Operations, which are assigned to the MIR 100, UR10, MIR 100 and Operator, respectively (see Figure 5-1).

Then, as every agent regularly polls the FOF API, each one retrieves the operations it is involved in (see Figure 5-2).

In this scenario, the ‘MIR 100 external FOF agent’ (MIR 100 ext.) is involved in the first operation of the task. Therefore, the MIR 100 ext. sends the low-level operation ‘move to A position’ to the ‘MIR 100’ (see Figure 5-3). The ‘MIR 100 ext.’ then regularly gets the status of the ‘MIR 100’ move command (see Figure 5-4). When the latter has reached the right position, the ‘MIR 100 ext.’ updates the status of the first Operation of the Task in the FOF API (see Figure 5-5).

Meanwhile, as the UR10 agent is involved in the second operation of the Task, it regularly polls the FOF API for the status of Op[1] (see Figure 5-6). Once Op[1] is done, the UR10 executes the second operation, which consists of grabbing product X and putting it on the MIR 100. Once done, the status of Op[2] is updated in the FOF API (see Figure 5-7).

As the MIR 100 is also involved in the third operation, it moves to point B of the factory, in the same way as for the first operation (see Figure 5-8).

Finally, the fourth and last operation involves an operator, who receives a notification on his connected device asking him to put product X at place B. Once done, the status of Op[4] and the Task status are updated to ‘done’.
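The polling-based synchronisation in this sequence can be simulated in a few lines. In this sketch, a dictionary stands in for the FOF API, and physical execution is reduced to a status update. Each agent, on each polling cycle, runs its earliest unfinished operation only once the previous operation of the Task is done. The agent polling order is arbitrary and was chosen so that the operator visibly has to wait.

```python
# In-memory stand-in for the FOF API: operation index -> [agent, action, status].
fof = {
    1: ["MIR 100 ext.", "move to A", "pending"],
    2: ["UR10", "grab X and put it on MIR 100", "pending"],
    3: ["MIR 100 ext.", "move to B", "pending"],
    4: ["Operator", "put X at place B", "pending"],
}


def poll_and_execute(agent: str, log: list) -> None:
    """One polling cycle: run the agent's next operation if its predecessor is done."""
    for index in sorted(fof):
        owner, action, status = fof[index]
        if owner != agent or status == "done":
            continue
        if index == 1 or fof[index - 1][2] == "done":
            # Physical execution is abstracted away; the status update is what
            # makes the other agents aware of the progress.
            fof[index][2] = "done"
            log.append(f"Op[{index}] {action} by {agent}")
        return  # an agent only considers its earliest unfinished operation per cycle


log = []
agents = ["Operator", "UR10", "MIR 100 ext."]
while any(op[2] != "done" for op in fof.values()):
    for agent in agents:
        poll_and_execute(agent, log)
task_status = "done"  # all four operations are done, so the Task is closed
```

Even though the operator polls first, the log shows the operations completing in task order, because each agent waits for the status of its predecessor.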

Advanced digital twin HMI through AR or VR

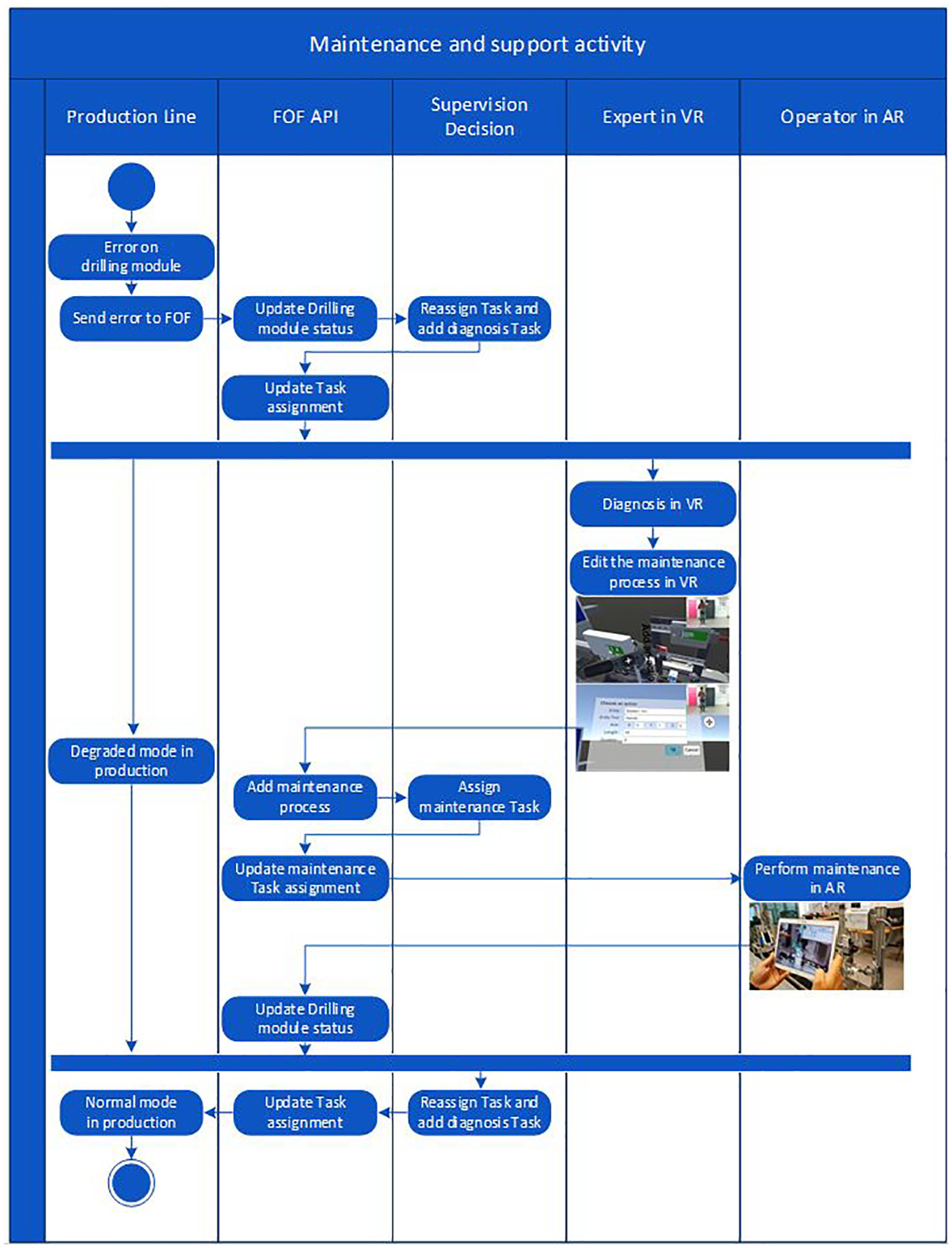

With the proposed architecture, the production line reports its status to the digital twin (see Figures 4-6 and 6). Therefore, if a problem occurs on a machine of the factory, information about this malfunction is sent to the FOF architecture. Consequently, the ‘supervision decision’ component dynamically adapts the production by switching to degraded mode and reassigning production operations to another machine agent. Meanwhile, if the problem is unknown, a diagnosis task is assigned to an expert, who works remotely if he is not physically on site.

Activity diagram of the maintenance and support activity through AR and VR tools while the production is in degraded mode.
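The degraded-mode reaction can be sketched as a reassignment of the failed machine's unfinished operations. The data layout (plain dictionaries) and the least-loaded selection rule are assumptions for illustration. The paper does not state how the supervision decision component picks the replacement machine. Operations that no other machine can perform are returned so that, for instance, a diagnosis task can be raised for them.

```python
def reassign_on_failure(failed_machine: str, assignments: list[dict], machines: dict) -> list[str]:
    """Move unfinished operations of a failed machine to other capable, available machines.

    assignments: [{"op": str, "capability": str, "machine": str, "status": str}, ...]
    machines:    {machine id: {"capabilities": set[str], "status": str}}
    Returns the ids of operations that could not be reassigned.
    """
    machines[failed_machine]["status"] = "failed"
    unassigned = []
    for a in assignments:
        if a["machine"] != failed_machine or a["status"] == "done":
            continue
        candidates = [
            m for m, info in machines.items()
            if info["status"] == "available" and a["capability"] in info["capabilities"]
        ]
        if candidates:
            # Assumed rule: pick the candidate with the fewest assigned operations.
            a["machine"] = min(candidates, key=lambda m: sum(x["machine"] == m for x in assignments))
        else:
            unassigned.append(a["op"])
    return unassigned
```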

The expert can diagnose the issue using the digital twin within a virtual reality environment. He then describes the procedure for repairing the machine, and this procedure is stored in the FOF API. A maintenance task is then created and assigned to an authorised operator. Thanks to the digital twin representation, the maintenance task is performed with augmented reality through a tablet, a smartphone or a HoloLens, which are human-centred tools. Once the repair is done, the status of the machine is automatically updated. The ‘supervision decision’ component is therefore aware of the new machine status and dynamically reassigns tasks to it.
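The maintenance cycle implies a sequence of machine statuses. A small transition table can enforce this sequence, so that a machine only becomes assignable again after maintenance. The status labels and allowed transitions below are assumptions inferred from the narrative. They are not statuses defined by the FOF model.

```python
# Allowed machine status transitions in the maintenance scenario (assumed labels).
TRANSITIONS = {
    "available": {"busy", "failed"},
    "busy": {"available", "failed"},
    "failed": {"under_diagnosis"},
    "under_diagnosis": {"under_maintenance"},
    "under_maintenance": {"available"},
}


def update_status(machine: dict, new_status: str) -> bool:
    """Apply a status change only if it is a legal transition.

    The return to 'available' is the event that allows the supervision
    decision component to assign operations to the machine again.
    """
    if new_status not in TRANSITIONS.get(machine["status"], set()):
        return False
    machine["status"] = new_status
    return True
```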

Secondly, as explained in Havard et al., 9 virtual reality combined with simulation is very useful for supporting stakeholders’ decisions. Indeed, it allows the behaviour of an element of the production system to be visualised at full scale (1:1), while simulation provides a realistic behaviour.

Discussion and possible improvements of the architecture

In the era of globalised supply chains, cloud manufacturing is one of the main tools towards knowledge-based production. This evolution is necessary because of the increasing demand for efficient operations in global networks in a turbulent environment. It requires continuous adaptation of manufacturing systems and the use of condition monitoring to keep the system healthy. Consequently, tools for future engineering and manufacturing management are necessarily digital and distributed. The architecture proposed in this article goes in that direction by providing an adaptable and interoperable tool that allows the modular design, parameterisation, supervision and control of a factory of the future. Condition data can easily be integrated into the messages exchanged between each agent and the central database, and these data are then used to manage condition monitoring. This enables high-level decision-makers to quickly become aware of the actual state of the workshop, allowing them to make more accurate strategic and tactical decisions.

Despite the numerous advantages of the proposed architecture and data model, it has some limitations. The first limitation concerns the uncontrolled behaviour of agents when data are not transmitted on time, especially if the task requires collaboration between different heterogeneous elements. To reduce the probability of completely losing the connection with any agent in the system, the supervision system is able to collect the same data from different sources. For instance, if a robot transports a part to a machine and cannot update its task status, the machine can update this information because it has received the part. Another limitation is that the architecture is not adapted to very large data exchanges. This issue is not considered in this paper because it is assumed that existing technical solutions, such as fog computing and 5G, can ensure Big Data transmission.
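The mitigation based on redundant sources can be sketched as a reconciliation rule over status reports coming from several agents. The report format `(source, status, timestamp)` is assumed. The rule is that any ‘done’ report closes the operation, and otherwise the most recent report wins. Under this rule, a machine that received the part can close a transport operation that the robot failed to update.

```python
def reconcile_status(reports: list[tuple[str, str, float]]) -> tuple[str, object]:
    """Derive an operation's status from reports sent by several agents.

    reports: list of (source, status, timestamp) tuples (assumed format).
    Returns (status, source that determined it).
    """
    if not reports:
        return "unknown", None
    done = [r for r in reports if r[1] == "done"]
    if done:
        # Any agent that observed completion can close the operation.
        latest_done = max(done, key=lambda r: r[2])
        return "done", latest_done[0]
    latest = max(reports, key=lambda r: r[2])
    return latest[1], latest[0]
```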

Conclusion

Based on a review of the components and needs of Cyber-Physical Production Systems, this paper proposes a Factory Of the Future (FOF) architecture that gathers data in a unified way and thus manages the interoperability of heterogeneous systems (proprietary robots, open robots, machines and human-centred tools).

The proposed architecture is based on three interoperability levels and three ability levels for each agent involved in Factory 4.0 (operators, robotic arms, Automated Guided Vehicles (AGVs), decision tools, augmented reality or virtual reality tools and simulation tools). The FOF model allows each agent to define its capabilities to perform operations (‘grab’, ‘move’, ‘drill’). On this basis, the paper shows that agents (operators, robotic arms, AGVs, etc.) are able to carry out high-level tasks by breaking them down into low-level operations and then executing them collaboratively and synchronously.

The paper then presents use cases showing improvements in factory responsiveness and resilience to disruptions. Indeed, the FOF data model makes the factory status known in near real-time. A supervision decision tool can therefore update the operation assignment according to the agents’ status in order to adapt production to unplanned events. These use cases show that the proposed architecture makes the factory more flexible and agile.

Moreover, the data stored in the FOF database enable the use of human-centred tools such as augmented reality and virtual reality to support human resources and help them make well-informed decisions.

For the moment, which agents make a given decision is defined once in the process, so decisions are always made by the same agents. Future work will focus on integrating REST OPC-UA and on dynamically updating the ‘decision making’ that an agent can perform during the production process.


Acknowledgements

This part of the work was carried out within the framework of the NumeriLab project, funded by the Normandy Region and FEDER (the European Regional Development Fund).

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.