Abstract

The integration of process planning and scheduling is a very important problem because it proposes a new idea for improving the performance of a manufacturing system. At present, most existing studies on this problem are static, which assumes that all the jobs to be processed are available in the beginning. However, the practical processing situation is dynamic, such as new job arrivals. Since dynamic production situations are different with static cases, it is important to study the characteristics of actual production situations. In this article, the characteristics of dynamic integrated process planning and scheduling problem with job arrivals are studied. A novel mixed integer linear programming model is established to accommodate new job arrivals, and three criteria (makespan, stability, and tardiness) are considered. New periodic and event-driven rescheduling strategies are presented. In the proposed strategy, newly added jobs together with uncompleted jobs will be rescheduled by non-dominated sorting genetic algorithm-II to obtain the optimal Pareto front when the rescheduling procedure is triggered. The entropy-based weight assigning method together with the Technique for Order of Preference by Similarity to Ideal Solution method is adopted to determine an appropriate schedule among the resultant non-dominated solutions. A set of well-known benchmark instances is employed to investigate the characteristics of the dynamic integrated process planning and scheduling problem with random job arrivals. Experimental results show that the length of a scheduling interval, the number of newly added jobs, and the shop utilization have an important influence on the efficiency of a manufacturing system.

Introduction

Process planning and scheduling are two important functions in a manufacturing system.1,2 The former focuses on determining appropriate machine tools, resources, and processing schemes,3–5 while the latter allocates operations to each machine with certain criteria. Usually, based on the divide and rule principle, 6 the two functions are performed in a successive and independent sequence:7–9 scheduling will be carried out after the process planning. This paradigm ignores the inherent relationship between the two functions. Drawbacks caused by splitting the two functions, for example, low facility utilization, high scheduling conflicts, and more human intervention, have been well identified and discussed.7,10–12 Realizing the existing drawbacks, researchers have developed lot of approaches to address the integrated process planning and scheduling (IPPS) problem. Two kinds of solution methodologies are covered in existing papers: mathematical programming approaches and approximation approaches, for example, meta-heuristic algorithms and artificial intelligence methods. Özgüven et al. 13 proposed mixed integer linear programming (MILP) models to deal with the problem; however, their models are suitable for small-scale instances only. Tan and Khoshnevis 14 transformed a non-linear model into an MILP model for the IPPS problem. Nevertheless, due to the excessive CPU time, applications of mathematical programming are limited. Meta-heuristic-based algorithms have garnered tremendous attentions and have been widely adopted due to their impressive features, such as short computational time. Kim et al. 15 are among the pioneers who studied the IPPS problem; they reported a typical set of benchmark instances (Kim’s benchmark) which are further solved by their developed symbiotic evolutionary algorithm (SEA). Later, Shao et al. 16 proposed a genetic algorithm (GA)-based approach for solving small-scale IPPS instances. Concerning Kim’s benchmark, Lian et al. 7 suggested a novel algorithm, the imperialist competitive algorithm (ICA), to settle this problem although the improvements of Kim’s benchmark are limited. Li et al. 17 further developed another effective GA-based hybrid algorithm for the IPPS problem; in their algorithm, a tabu search with an effective neighborhood structure is employed. 18 Later, they again developed a novel active learning genetic algorithm (ALGA) by introducing an active learning effect into GA. 19 In recent years, Lihong and Shengping 20 suggested an improved genetic algorithm (IGA) for this problem, and new genetic representations with genetic operators were developed. Substantial progress has been observed in Zhang and Wong’s work; 21 an object-coding GA for the IPPS problem was reported in their work. Other than the approaches mentioned above, other approaches can also be observed, including the grammatical optimization approach, 22 the simulated annealing-based optimization approach, 23 the modified particle swarm optimization (PSO) algorithm, 24 and agent-based methods. 25

A great deal of IPPS problems have been studied. However, a manufacturing system in the real world is often subject to many sources of uncertainty. One main source of uncertainty lies in random job arrivals. Thus, a predefined schedule will fail unless a timely new schedule is prepared. In a dynamic situation, a new feasible schedule, which takes in account the new jobs and unfinished jobs, will be generated when a new job arrival occurs. The process of generating a new feasible schedule in response to the unexpected disturbances is recognized as the rescheduling process. There are two kinds of rescheduling strategies in general: periodic and event-driven rescheduling strategies. In periodic rescheduling, a new schedule is generated after a certain scheduling interval. In event-driven rescheduling strategy, the rescheduling procedure is triggered whenever new job arrivals occur. As claimed in relative papers,26,27 the new schedule may suffer from a greater deviation to the original schedule and the initial schedule will not be preserved any more. In such a case, pre-allocated resources for each machine, such as raw materials, will be altered. What’s more, the unfinished jobs may be put off, and hence, the tardiness should be considered as another criterion in dynamic scheduling except the makespan and the stability criteria. In this article, the multi-objective issue is addressed in a Pareto manner.

Existing papers pay more attention to the rescheduling strategies for job shop scheduling problems (JSPs)27–29 and flexible job shop scheduling problems (FJSPs). 30 The only two studies about the rescheduling of IPPS problems are reported by Lv and Qiao 31 and Wong et al., 26 respectively. Lv and Qiao considered new job arrivals, machine breakdowns, and order cancelations simultaneously, and their algorithm achieved satisfactory makespan values. Wong et al. presented a hybrid multi-agent system (MAS) to address the dynamic IPPS problem with machine breakdowns and new part arrivals. However, it is worth mentioning that the study on the impacts of rescheduling has scarcely been considered. It cannot be denied that different parameters in rescheduling, for example, the length of a scheduling interval, have important impacts on the performance of schedulings. However, one cannot tell which disturbance is the dominant one and how it affects the performance of a manufacturing system. The primary goal of this research is to give a minute study on the factors (e.g. interval length) that affect the performance of a manufacturing system with new job arrivals over time. We consider both periodic and event-driven rescheduling strategies in this article, and new job arrivals are deemed as disturbances. An MILP model for the dynamic IPPS problem is established. Then, the non-dominated sorting genetic algorithm-II (NSGA-II)-based solution methodology for rescheduling is presented. At each rescheduling point, a new schedule is generated for the coming scheduling interval. To strike a compromise between the three criteria (makespan, tardiness, and stability), the entropy-based weight assignment method and the Technique for Order of Preference by Similarity to Ideal Solution (TOPSIS) decision-making strategy are employed to determine the most appropriate schedule for the coming scheduling interval. Finally, the Kim’s instances are adopted as the test-bed instances in the experimental study. 15

The remaining sections are organized as follows: Section “Problem description” gives the preliminaries of the dynamic IPPS problem. A novel MILP model will be proposed in section “Mathematical modeling.” In section “NSGA-II-based solution methodology,” we describe the details of the proposed NSGA-II-based methodology. Experimental results with discussions will be presented in section “Experiments with discussions.” Some concluding remarks are given in section “Conclusion.”

Problem description

In this research, a mathematical model is established to express the inherent nature and important characteristics of the dynamic IPPS problem. The proposed model is extended from the model of the static IPPS problem.

19

The proposed model generates a schedule for the next rescheduling interval, and the subscript

The IPPS problem can be defined as follows:

24

Given a set of

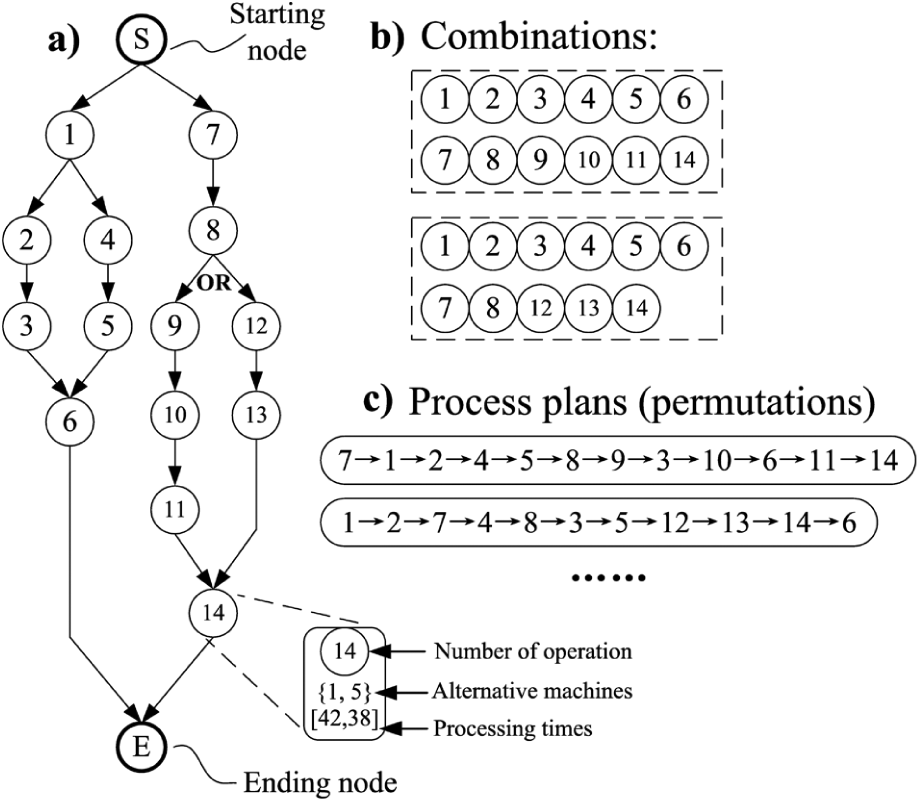

This problem can be expressed by a network graph as illustrated in Figure 1(a)). In the network graph, the starting node and the ending node are two dummy nodes which represent fictitious operations with processing time zero and do not require any machines. Other nodes are operation nodes. In an operation node, the operation number with its alternative machines and corresponding processing times are specified. An arrow between two nodes specifies a precedence relationship of the two operations: if operation node A points to node B, then operation B should follow operation A directly or indirectly. Moreover, if a bifurcation is marked with “OR,” this means that only one OR link path of the two should be traversed. In other cases, the two link paths of a bifurcation both should be traversed (see Figure 1(a)).

A network graph.

For a given network graph, operation combinations can be first determined by visiting different OR link paths. In Figure 1(b), there are two combinations. After that, a process plan can be generated by adopting one of the feasible operation permutations generated from a combination. Two possible process plans, generated from the two combinations, are given in Figure 1(c)).

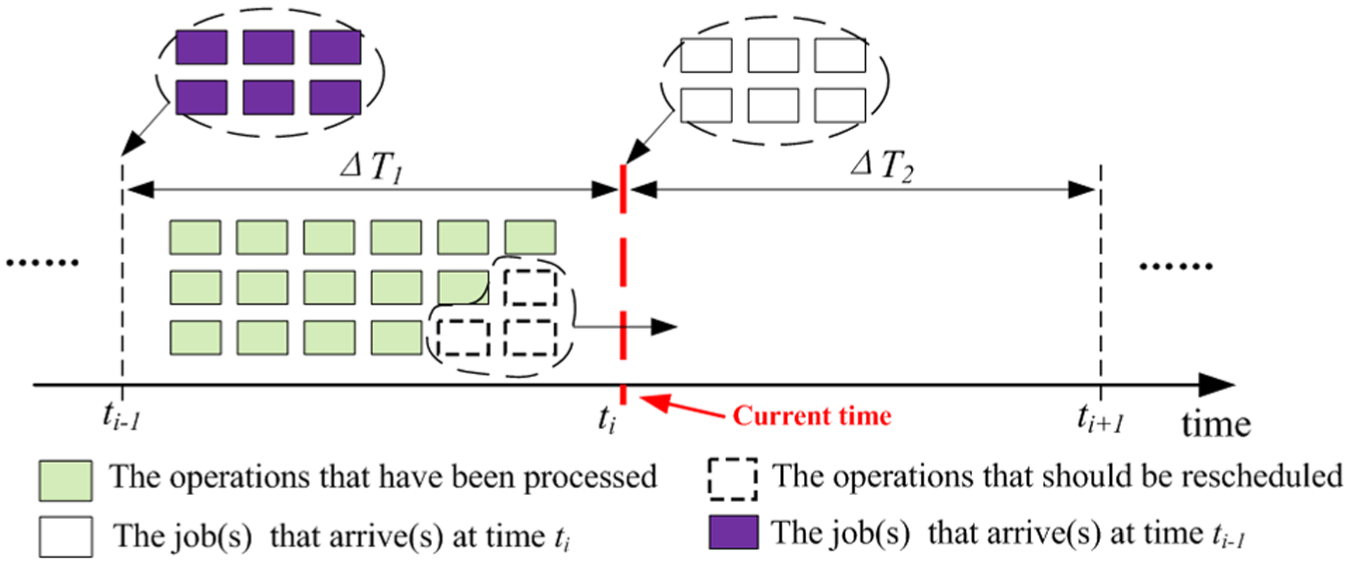

In a real job shop, as illustrated in Figure 2, jobs arrive continuously over time at either specified or random arrival dates. In each rescheduling point

A rescheduling strategy.

Mathematical modeling

The MILP model of the dynamic IPPS problem is extended from the models of the static and mono-objective IPPS problem,19,32 and it is established based on the following assumptions:

Jobs and machine tools are independent, and all the machines are available at time zero.

Each machine can handle at most one job at a time, and different operations of a job cannot be processed at the same time.

Job pre-emption is not allowed.

Within a rescheduling interval, a job will be immediately transferred to another machine for the next operation after the current operation is finished with the time for transferring neglected. Between different intervals, the starting time of an unfinished job depends on the new schedule.

Setup time of an operation can be included in the processing time.

Notations, sets, parameters, and variables used in this model are furnished in Appendix 1.

In this model, the letter “

Objectives

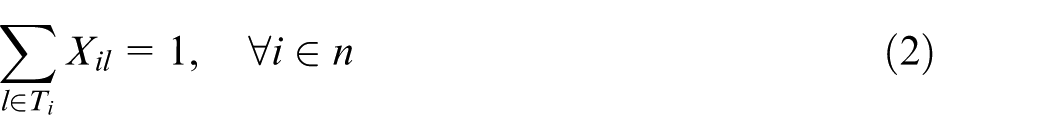

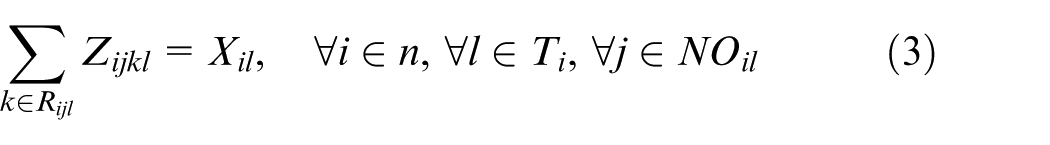

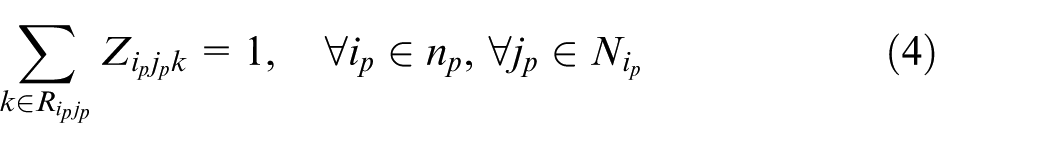

For new jobs, each job should select exactly one process plan as stated in constraint set (2). For the unfinished jobs in last interval, corresponding process plans have already been determined

The operations belonging to the unselected process plans are eliminated; otherwise, each selected operation is assigned to exactly one machine

For operations of the unfinished jobs, each of them should be processed by exactly one machine

The starting time of each new job should be no earlier than the rescheduling point

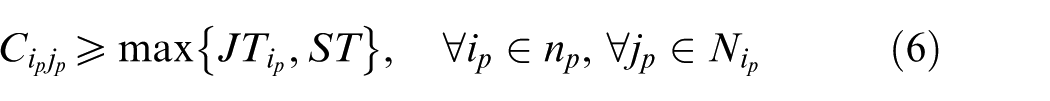

Each unfinished job should start at a proper time as shown in constraint set (6)

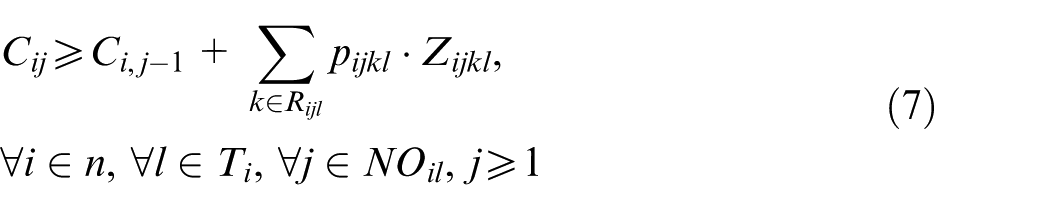

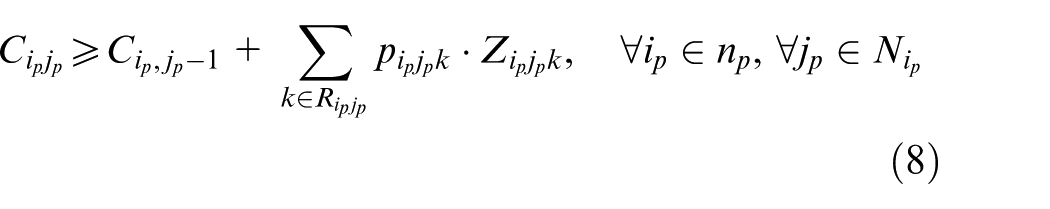

Following two constraint sets are introduced to schedule two sequential operations of the same job. Constraint set (7) schedules two sequential operations of a new job; constraint set (8) schedules two sequential operations of an unfinished job in the previous interval. After this, a correct precedence relationship between two operations of the same job is guaranteed because the completion time of the latter operation should be equal or greater than the completion time of the former plus the processing time

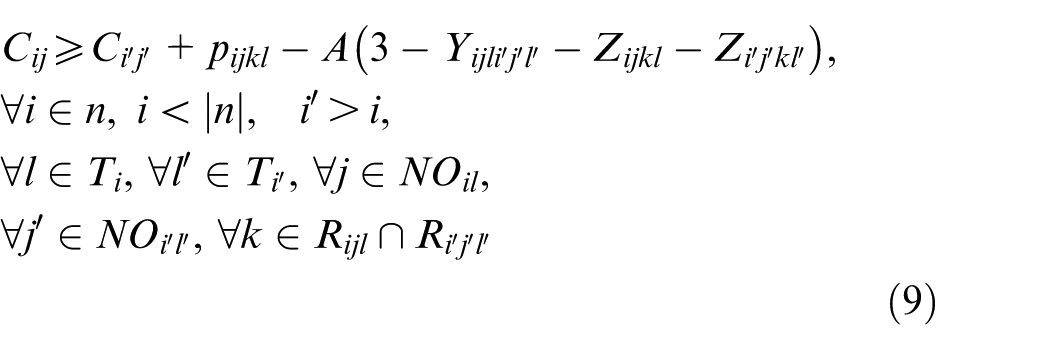

Constraint sets (9) and (10) state that a precedence relationship between two operations, which belong to two different new jobs and to be processed on the same machine, should be satisfied

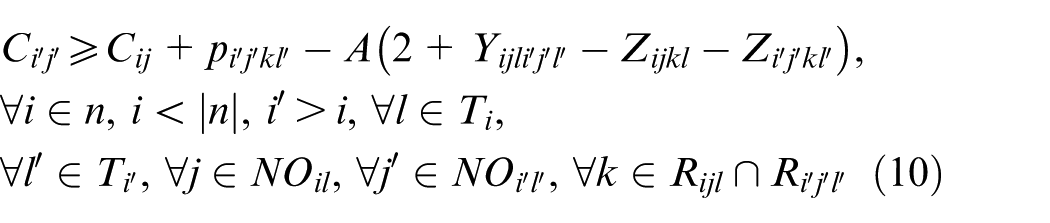

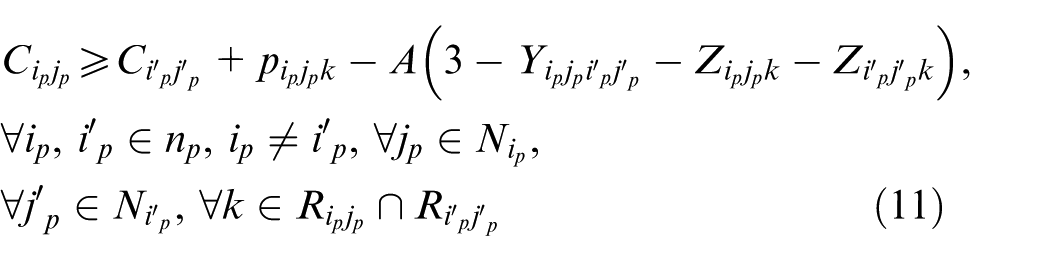

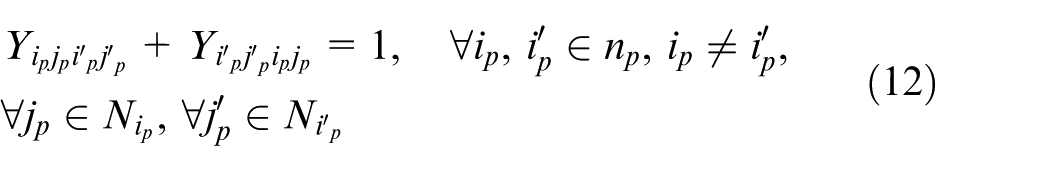

Similarly, constraint sets (11) and (12) reschedule two unfinished jobs on the same machine such that a precedence relationship between two unfinished jobs on the machine is established

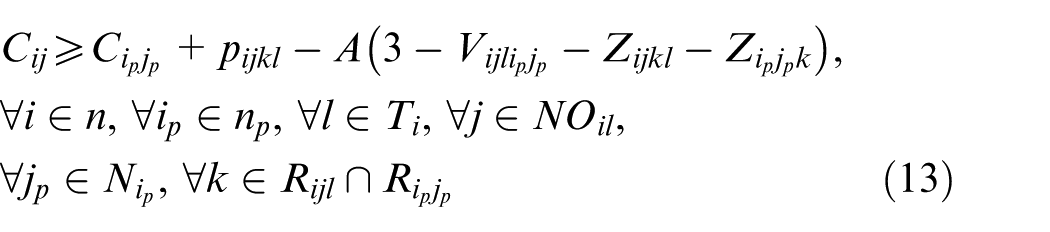

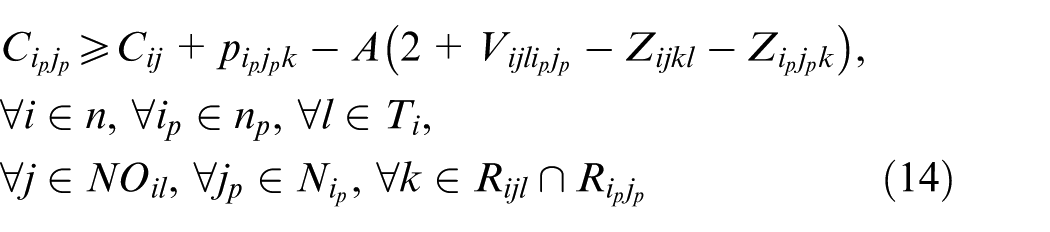

Since there are two kinds of jobs—the new jobs and the unfinished jobs—a precedence relationship between a new job and an unfinished job on the same machine is specified by constraint sets (13) and (14)

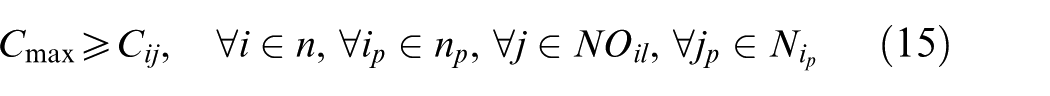

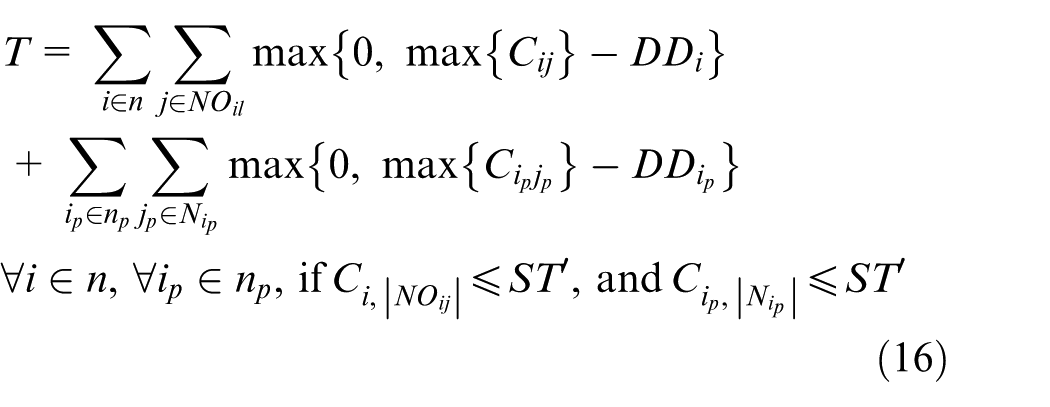

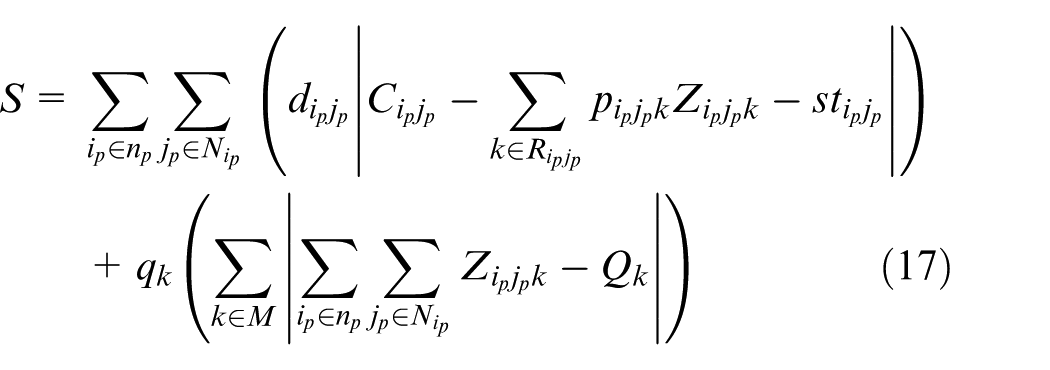

Constraint sets (15), (16), and (17) determine the values of three objectives. Constraint set (15) determines the makespan, and constraint set (16) determines the total tardiness of all the job

The last objective is about the stability. Two stability indicators related to the part’s and machine’s deviation costs are measured in constraint set (17). Once an operation is pre-scheduled on a machine, corresponding manufacturing resources, such as raw materials and human workers, are expected to be ready right before its starting time. Any changes of its starting time will alter the resource allocation, and hence, the resources are either expedited or delayed to cope with the new schedule.

26

In constraint set (17),

NSGA-II-based solution methodology

Since the dynamic IPPS problem is an extension of the FJSP, it is certainly a strong NP-hard problem. On the other hand, the MILP formula for this problem described above is a multi-objective problem. In this study, the well-known NSGA-II 33 is introduced to cope with the multi-objective dynamic IPPS problem. NSGA-II is deemed as the most powerful meta-heuristic algorithm to address multi-objective problems. The details of the procedure will be described later.

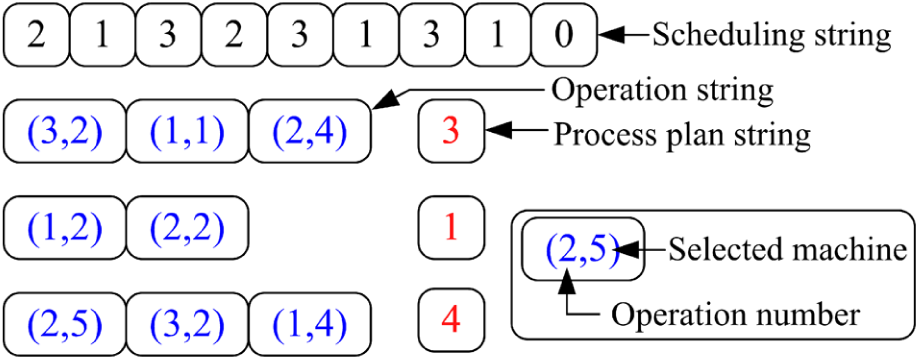

Encoding and decoding

The chromosome structure is illustrated in Figure 3. A chromosome consists of a scheduling string, some process plan strings, and corresponding operation strings. The scheduling string shown in Figure 3 adopts an operation-based coding scheme.

34

This coding scheme brings convenience to the decoding process. For a newly added job, the job number in this string appears

The coding scheme.

Other two kinds of strings are about process planning. There are a total of

The active scheduling procedure is adopted to transform the chromosome into a feasible schedule scheme. A number

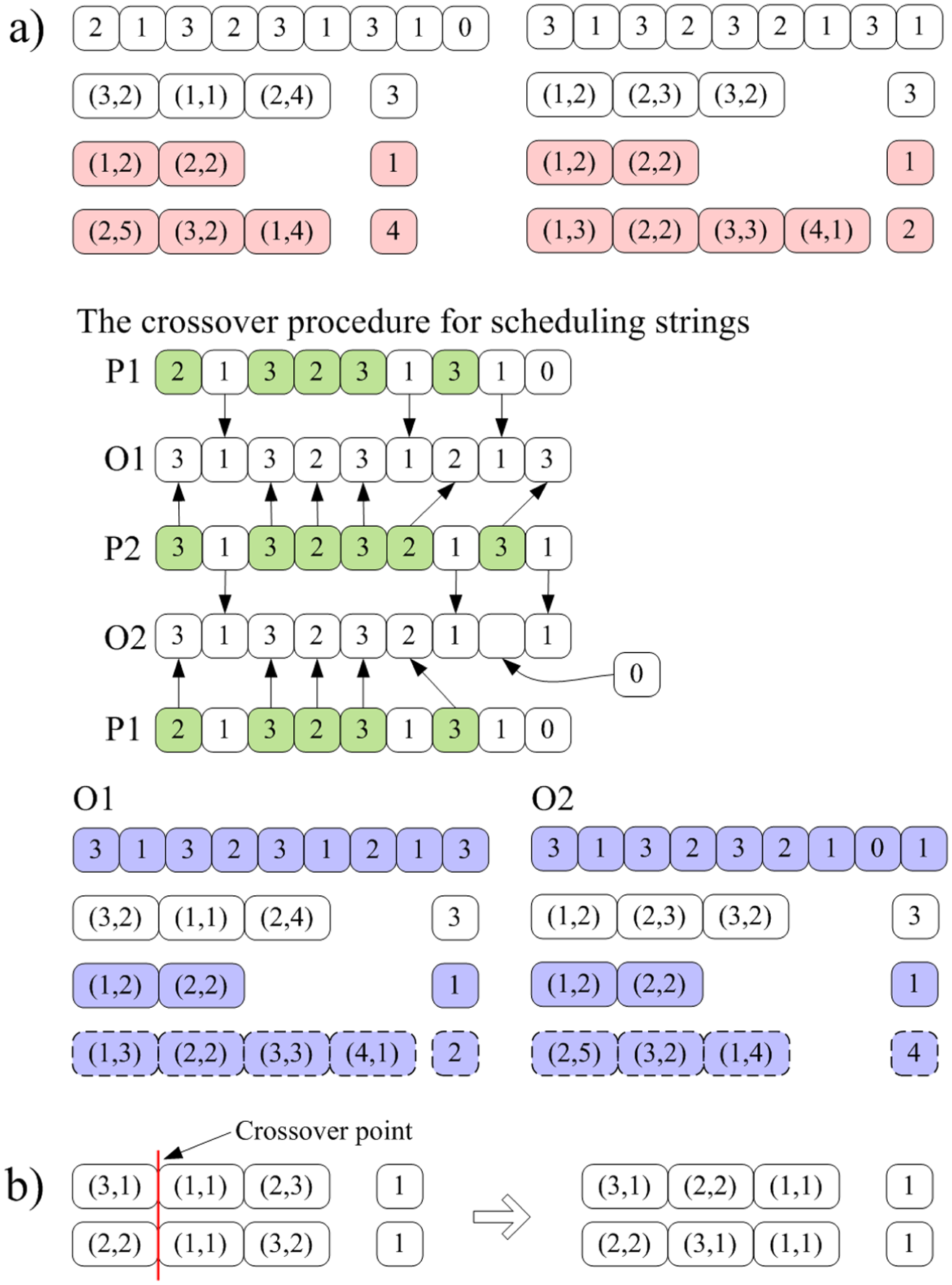

Genetic operators

Figure 4 illustrates the crossover procedure. Randomly exchange the operation strings together with corresponding process plan strings of some randomly selected jobs in two individuals; other operation strings and process plan strings in both individuals are kept still. Then, two empty scheduling strings O1 and O2 are initialized. The job IDs in the scheduling string of parent 1 (P1), which reflect the unselected jobs, are passed on to the same positions as in the offspring 1 (O1). These job IDs are removed from parent 2 (P2), and the remaining elements are copied into the undetermined positions in O1 in the same order as they appear in P2. A repairing procedure is adopted to resolve the illegitimacy; it deletes redundant symbols or adds necessary symbols after checking the actual number of operations of each selected job. Figure 4(a)) gives an example. Last two jobs in two different chromosomes are selected, and a “0” is filled in a position in offspring 2 (O2) to avoid infeasibility.

Crossover operators: a) the first crossover operator and b) the second crossover operator.

In the IPPS problem, the same operation combination of a job may have different operation permutations. The proposed coding scheme allows the NSGA-II to take this advantage by performing the second crossover operator as shown in Figure 4(b). An order crossover (OX)-based one-point crossover method is adopted. If two operation strings of a job in two individuals adopt the same combination, this crossover procedure is performed. According to Figure 4(b)), the two operation strings have the same operations because they both adopt the first combination; after that, two new feasible operation stings are generated with new feasible permutations.

For the selection operator, a simple tournament selection is adopted. The mutation procedure is performed by changing a machine for an operation and swapping two numbers in two randomly selected positions in the scheduling string.

Decision method

After the NSGA-II procedure, a Pareto front which contains several non-dominated solutions will be generated. However, only one schedule will be selected for the forthcoming scheduling interval. The TOPSIS method, 36 based on the concept that the chosen alternative should have the shortest distance from the ideal solution and farthest from the negative-ideal solution, is adopted. A set of weights for all the objectives in TOPSIS is generated by the entropy-based weight assigning method. 36

Objective function

In this article, we consider three objectives: makespan, tardiness, and stability. Makespan is defined as the total time required to process all the jobs. It is calculated by simply taking the difference between the starting time (zero, in this article) and the completion time of the last job. Tardiness is defined as the sum of the difference between the completion time and due date for each job in the case where the job is finished after the due date. For stability, two dimensions of stability are modeled as formulated in equation (17). The first measure is about the total deviations of the starting times between the new schedule and the old schedule for all the unfinished jobs. The second measure associates penalties induced by machines’ deviation costs; it is reflected in the changes of the numbers of unfinished operations on machines as expressed by the second term of equation (17).

Key parameters

In event-driven rescheduling, jobs will arrive at random times over the whole scheduling horizon. A widely accepted fact is that a Poisson distribution provides a good approximation for dynamic job arrivals in a job shop.

37

The arrival rate,

where

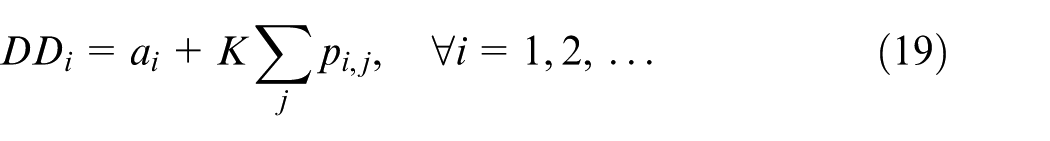

The total work content (TWK) rule is adopted to generate due dates for each job; it equals the sum of the job arrival time and total job processing times

where

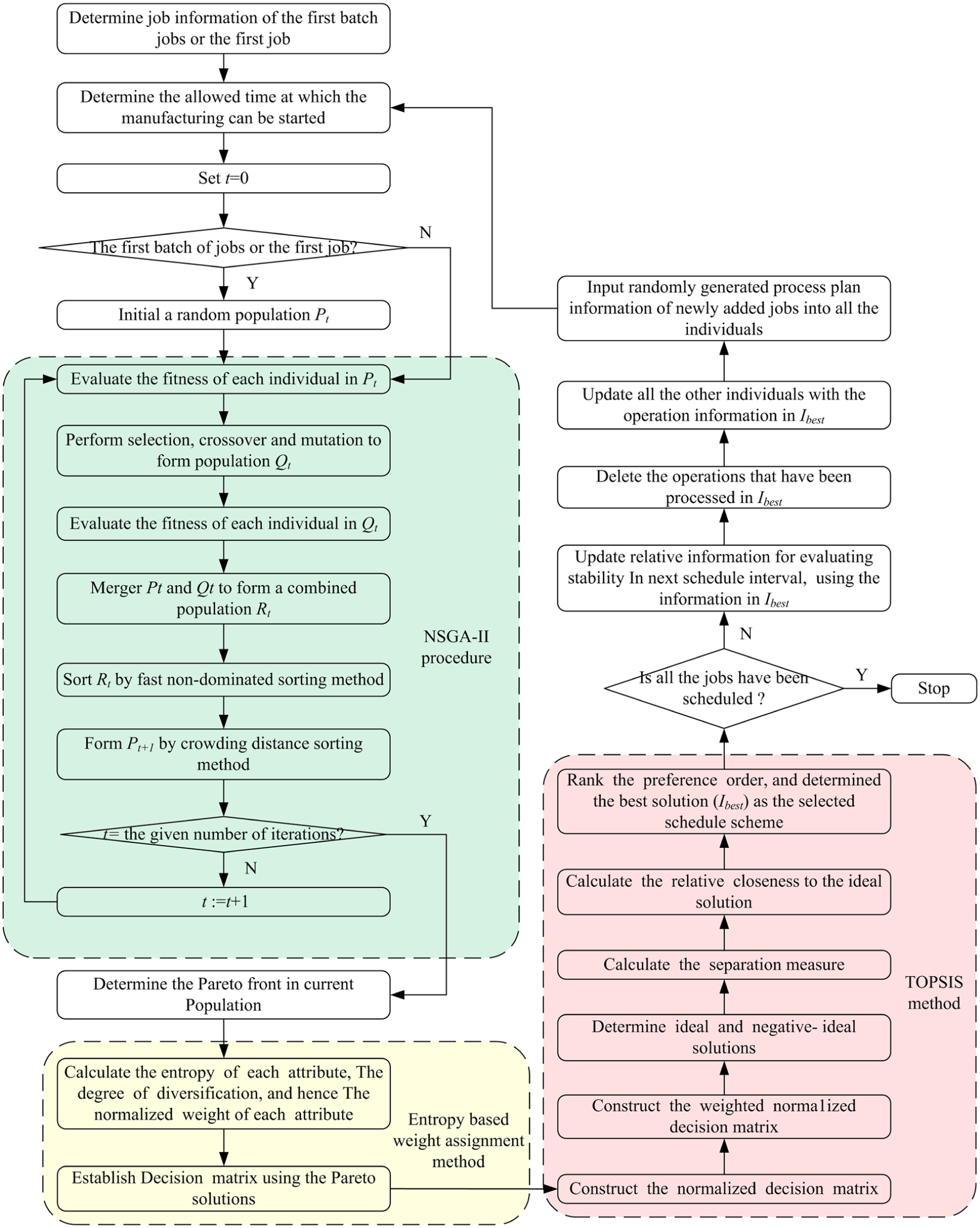

The main framework

Figure 5 illustrates the working flow of the algorithm. At each rescheduling point, unfinished operations are combined with the newly added jobs and a new schedule is established.

The flow chart of the dynamic scheduling strategy.

In event-driven rescheduling, the rescheduling point is the time at which a new job arrival occurs. In a real job shop environment, jobs arrive continuously over time, and newly added jobs will not be scheduled immediately. Instead, new jobs will be accumulated and considered for processing at the next rescheduling point. To make the problem correspond to the real-world situations as close as possible and make it manageable for analysis, we assume that all the newly added jobs during an interval can be added at one time at the forthcoming rescheduling point.

Experiments with discussions

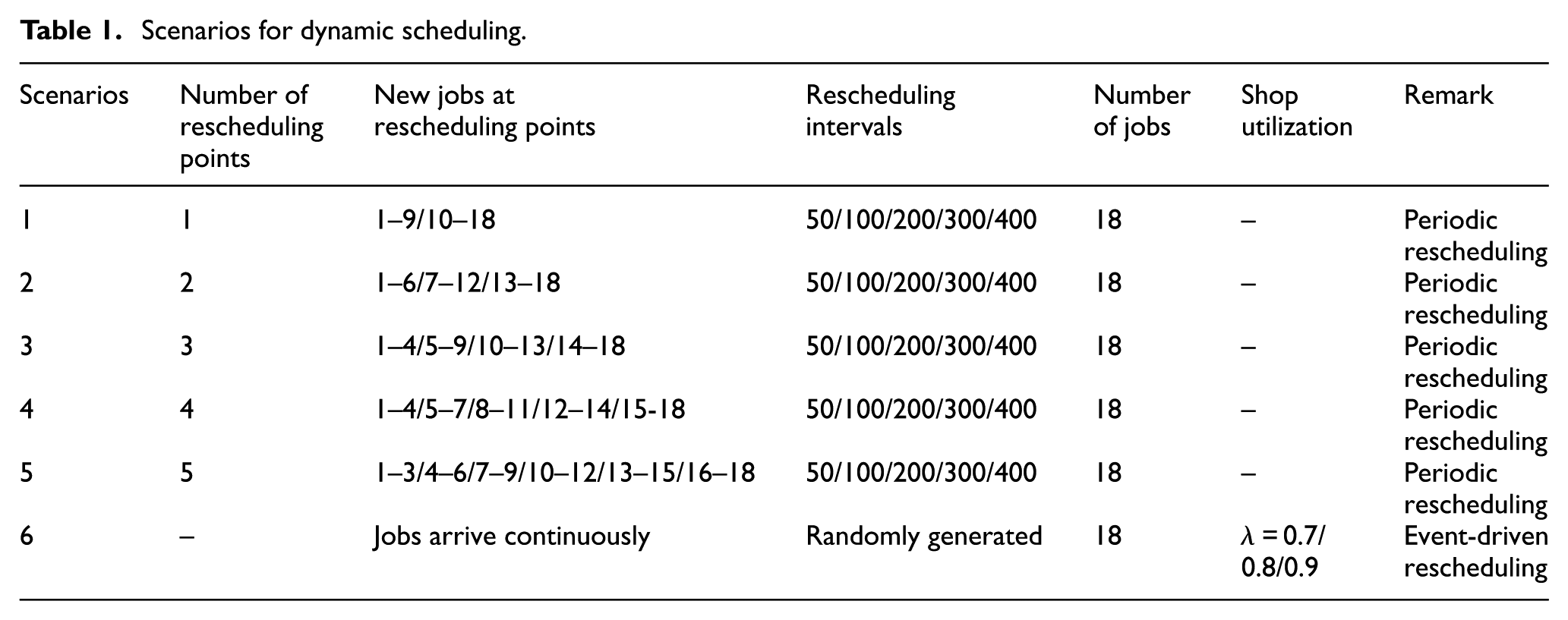

To study the effect of the factors and different scenarios on the performance of the manufacturing system, experiments are performed. We adopt the typical benchmark data proposed by Kim et al. 15 18 jobs will be processed by 15 machines. It has been extensively reported that a job shop with six machines is adequate to represent the complexity involved in a large dynamic job shop. 38 We consider both the periodic and event-driven rescheduling methods in our experiments with the scenarios listed in Table 1. Scenarios 1–5 are about periodic rescheduling, while the last scenario in Table 1 concentrates on event-driven rescheduling. In both dynamic rescheduling methods, the algorithm terminates when all the 18 jobs are finished (each case has the same total workload). All the experiments in this article are implemented in C++ and tested on a PC with Intel i5-3470 3.2 GHz CPU and 4 GB of RAM.

Scenarios for dynamic scheduling.

Parameter setting

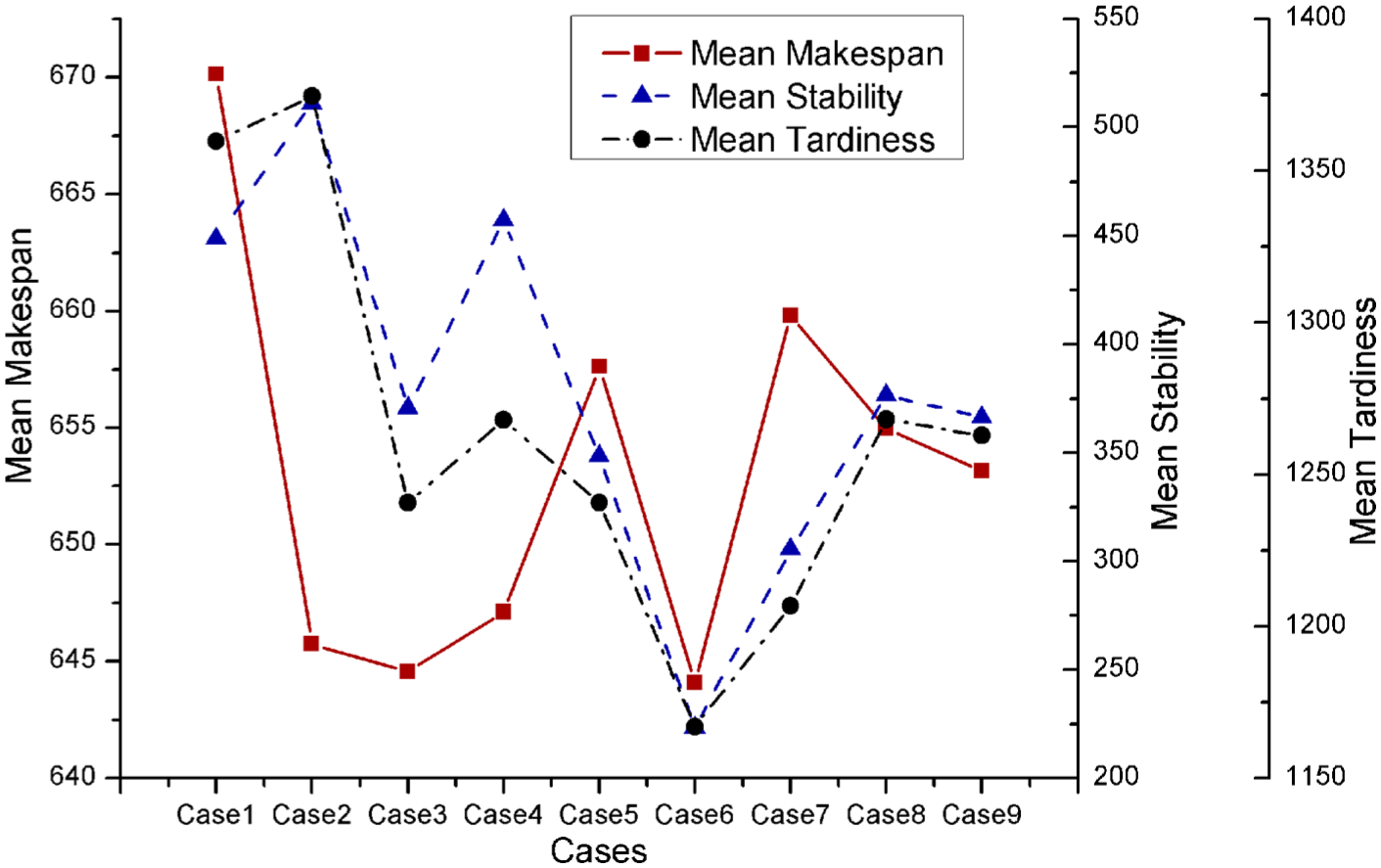

Parameter adjustments are performed to seek a good parameter setting since appropriate parameters are efficacious on the solution quality. The first scenario in Table 1 with rescheduling interval 200 is chosen to evaluate the parameters. Following values for the two parameters

Thus, nine cases are defined as follows:

Case 1:

Case 2:

Case3:

Case 4:

Case 5:

Case 6:

Case 7:

Case 8:

Case 9:

We have run the algorithm 10 times on the considered scenario for the nine cases. The mean values of the Pareto solutions of all the combinations are plotted in Figure 6. This figure shows that we can obtain the best Pareto front that considers three criteria by setting

Mean values of Pareto solutions.

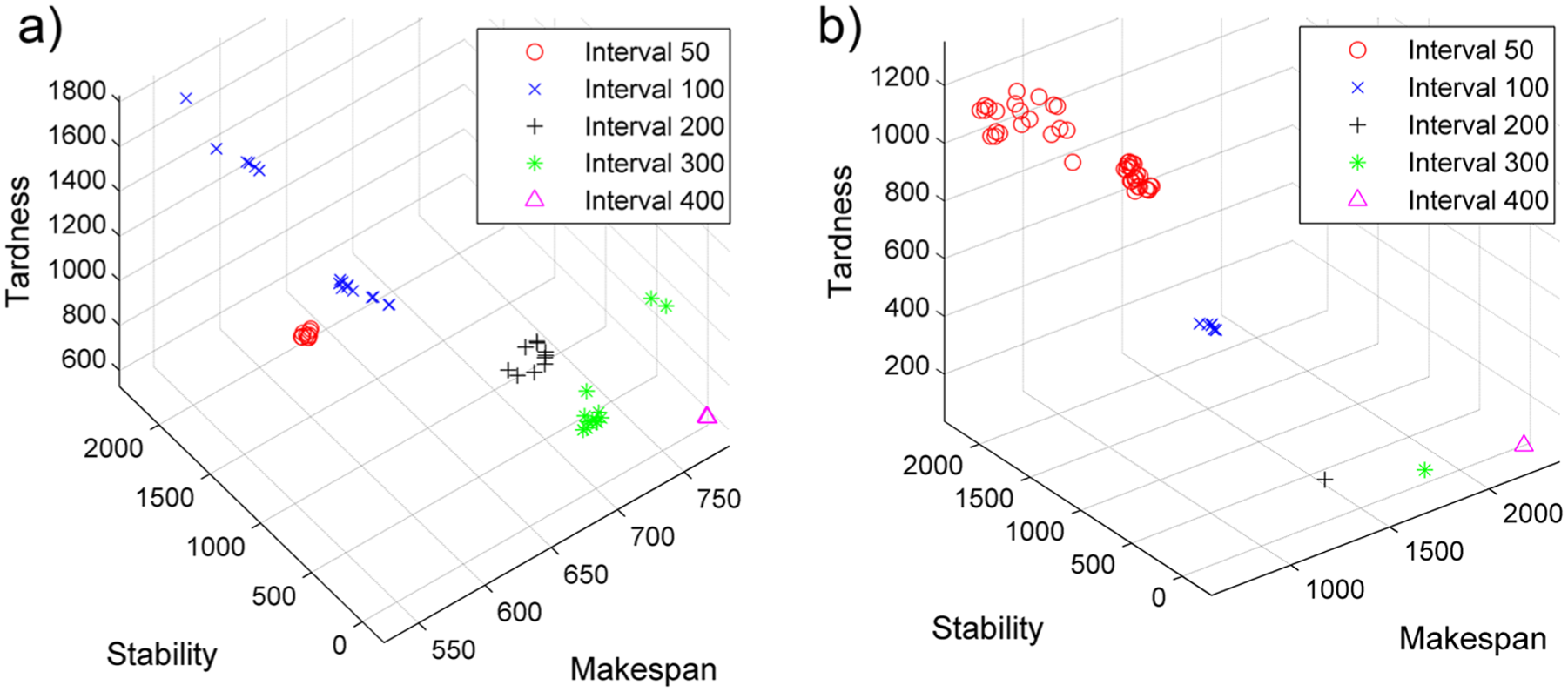

Periodic rescheduling

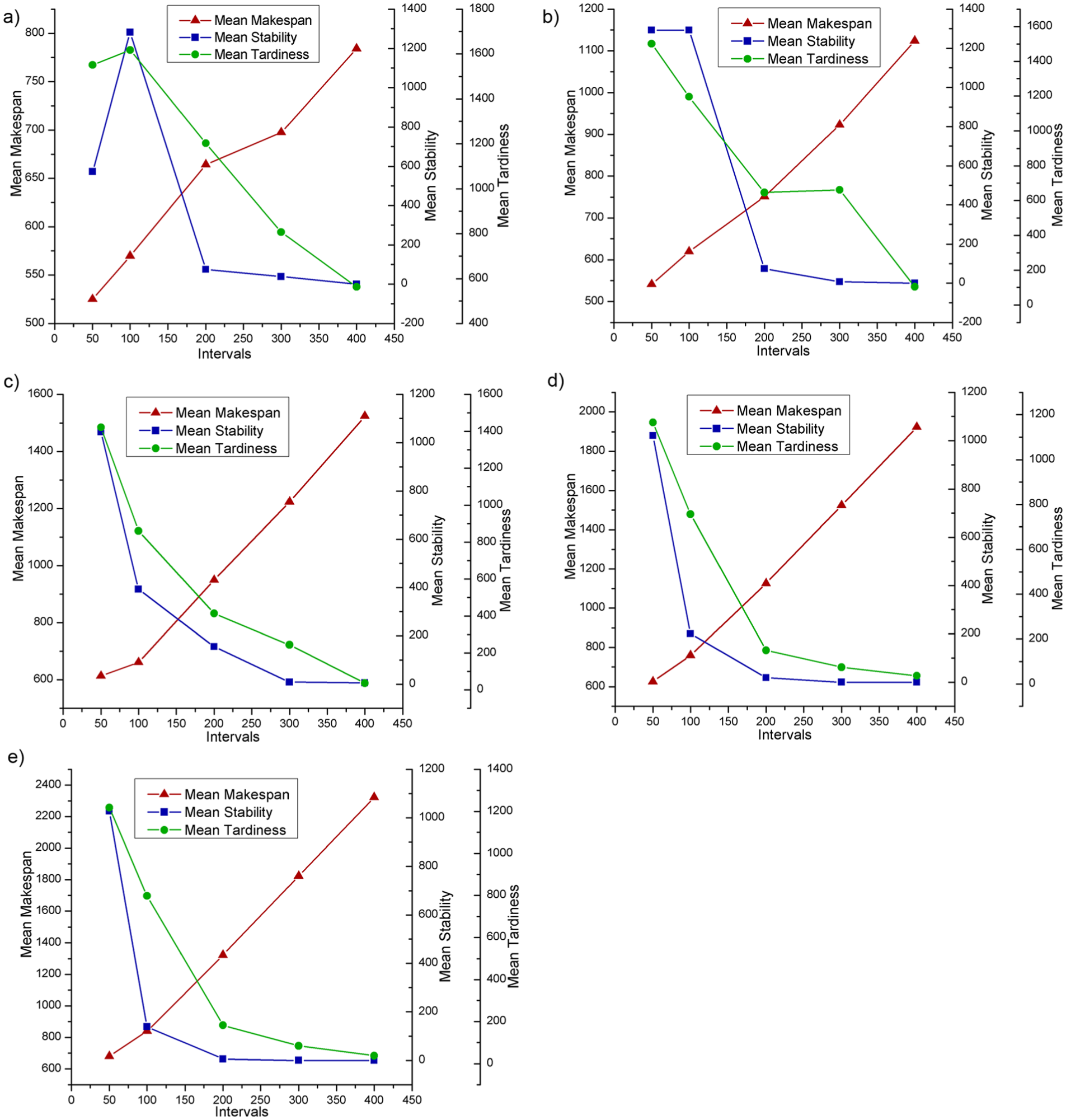

Pareto fronts for Scenario 1 with different interval lengths are presented in Figure 7(a). In Scenario 1, only one rescheduling is performed. With short rescheduling intervals, satisfactory makespan values can be ensured. However, the stability and the tardiness criteria deteriorate with short intervals. With a shorter rescheduling interval, there will be more unfinished operations, and hence, the probability of misalignments in starting times and the number of operations on a machine between the old schedule and the new schedule will increase. Moreover, the tardiness will increase with more unfinished operations although the interval is relatively short. On the contrary, the stability and the tardiness criteria have been greatly improved with long intervals although long intervals cause deteriorations in makespan values. In fact, with longer intervals, more operations can be processed in the current interval before the next rescheduling point, and it comes out that there are less unfinished operations. For the extreme case (Scenario 5) where there are more intervals, the corresponding plot is presented in Figure 7(b). It can be observed again that lengthening the rescheduling interval improves stability and tardiness criteria but deteriorates makespan measure. In addition, this also reflects that it is necessary to consider multiple objectives rather than single objective in dynamic IPPS problems because the makespan criterion conflicts with other criteria, such as the tardiness and the stability criteria, as reflected in Figure 7.

Pareto fronts of (a) Scenario 1 and (b) Scenario 5.

Figure 8(a) is the line chart of averages of the Pareto solutions of Scenario 1. As analyzed above, when the interval is less than 100, the makespan value seems acceptable. Due to the short interval length, only a few operations have the chance to be processed in the current interval. In such a case, most of the operations will be detained and processed in the next schedule. It can be observed that there is a slowdown of makespan value between the interval 200 and 300 in Figure 8(a). This indicates that most operations in the rescheduling interval can be finished without being rescheduled with such interval length. After that, the makespan value increases again. This is mainly brought on by a rise in interval length. We can also find that both stability and tardiness perceptibly receive improvements with the intervals that are larger than 300 according to Figure 8(a). For the mean stability in Figure 8(a), it decreases after an initial increase with a length between 50 and 100. We obtain the worst stability with an interval length 100, and after that, a sharp improvement occurs at the interval length 200. We study the reason behind the phenomenon. In the situation where the rescheduling interval is 50, only few operations are processed in an interval, and most operations should be rescheduled with different starting times. By narrowing the rescheduling interval, most of the unprocessed operations can be started on the same machine with almost the same starting times as the cases in the initial schedule. On the other hand, when the time interval is long enough, most of the operations can be finished in the current interval, and the stability criterion receives a large improvement. Based on such analysis, there exists a “peak point” at which the stability shows the worst performance. Thus, the optimal value of the rescheduling length in Scenario 1 should be set between 200 and 300. For Scenario 2, the optimal value of the rescheduling length is about 200 as shown in Figure 8(b). Different with the case in Figure 8(a), there is no increase in stability with a interval length between 50 and 100 in Figure 8(b). This objective receives no improvement both with interval lengths 50 and 100 because there are less new jobs for each interval in Scenario 2, and the peak point of the stability criterion in Figure 8(b) arises in advance. This conclusion is verified in Figure 8(c) and (d): with less and less new jobs in each interval, peak points in these figures appear in the leftmost regions. Hypothetically, if there were more newly added jobs in each interval, the peak point would appear later after the abscissa value 100. This left-shifting tendency of the peak point can also be observed in Figure 9(b)).

Averages of the Pareto solutions: (a) Scenario 1, (b) Scenario 2, (c) Scenario 3, (d) Scenario 4, and (e) Scenario 5.

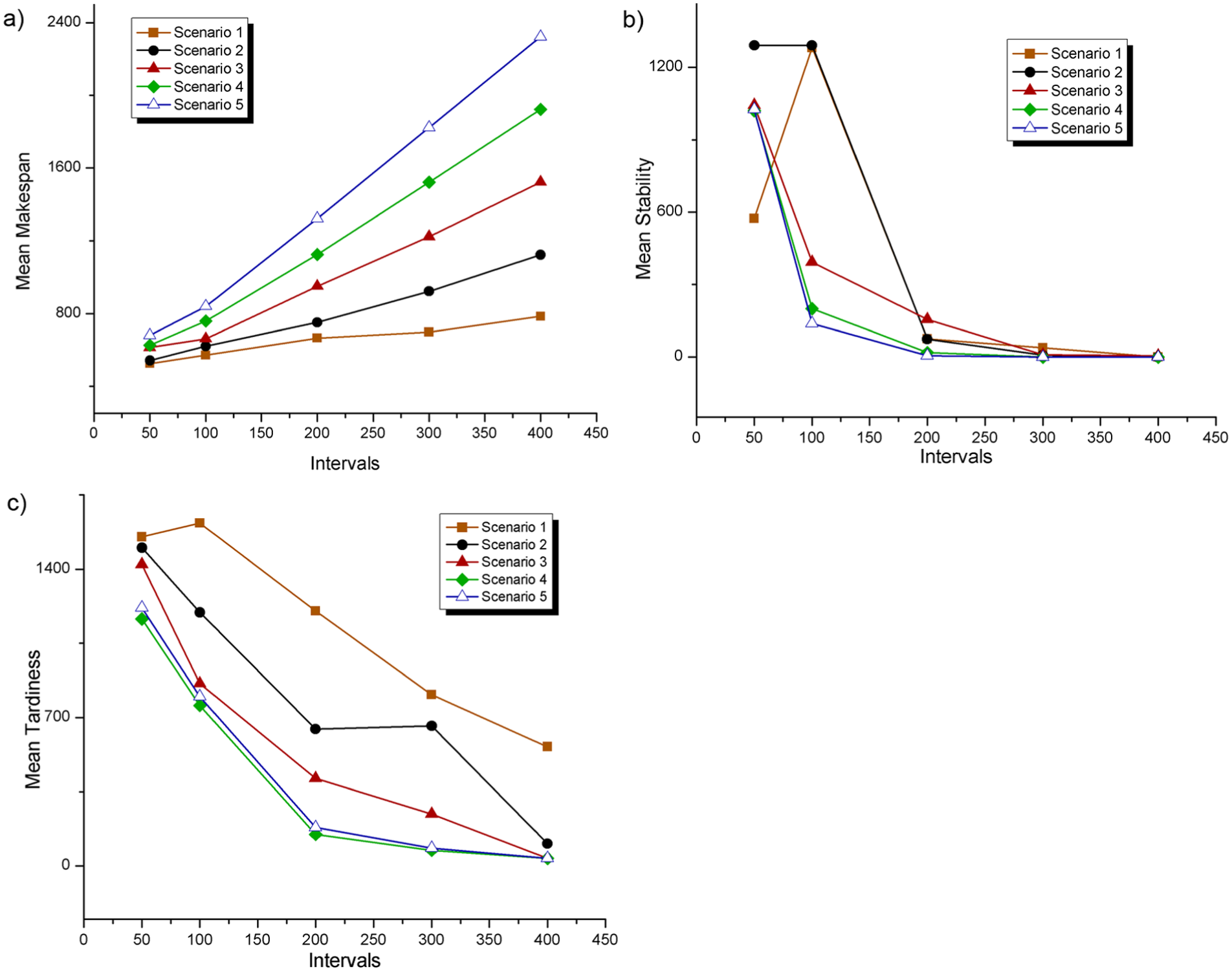

Averages of five scenarios with (a) makespan criterion, (b) stability criterion, and (c) tardiness criterion.

In Scenarios 3–5, due to fewer new jobs added in an interval, increasing the length of a rescheduling interval will lead to a dramatic increase in makespan. For the other two criteria in Figure 8(c)–(e), they both receive improvements with increasing interval length. Figure 9(a) gives the average makespan values of the Pareto solutions in five scenarios. Clearly, small rescheduling intervals will not lead to a dramatic increase in the makespan criterion; conversely, for a large rescheduling interval in a scenario which has frequent reschedulings (e.g. Scenario 5), the makespan increases dramatically. In general, the stability criterion is improved with the increasing rescheduling interval (Figure 9(b)). However, there are hardly any improvements for this criterion when the rescheduling interval is larger than 300 because the mean stability values are very close to 0 according to Figure 9(b). The tardiness criterion also improved with a large rescheduling interval according to Figure 9(c). Moreover, it receives more improvements in a scenario with more reschedulings. The reason behind this is that the unfinished jobs will have more chance to be finished in the coming intervals if there are more reschedulings. If there are less reschedulings (less intervals), unfinished jobs will certainly have less chance to be finished because they may be detained to the final interval, and hence the tardiness criterion may deteriorate.

Event-driven rescheduling

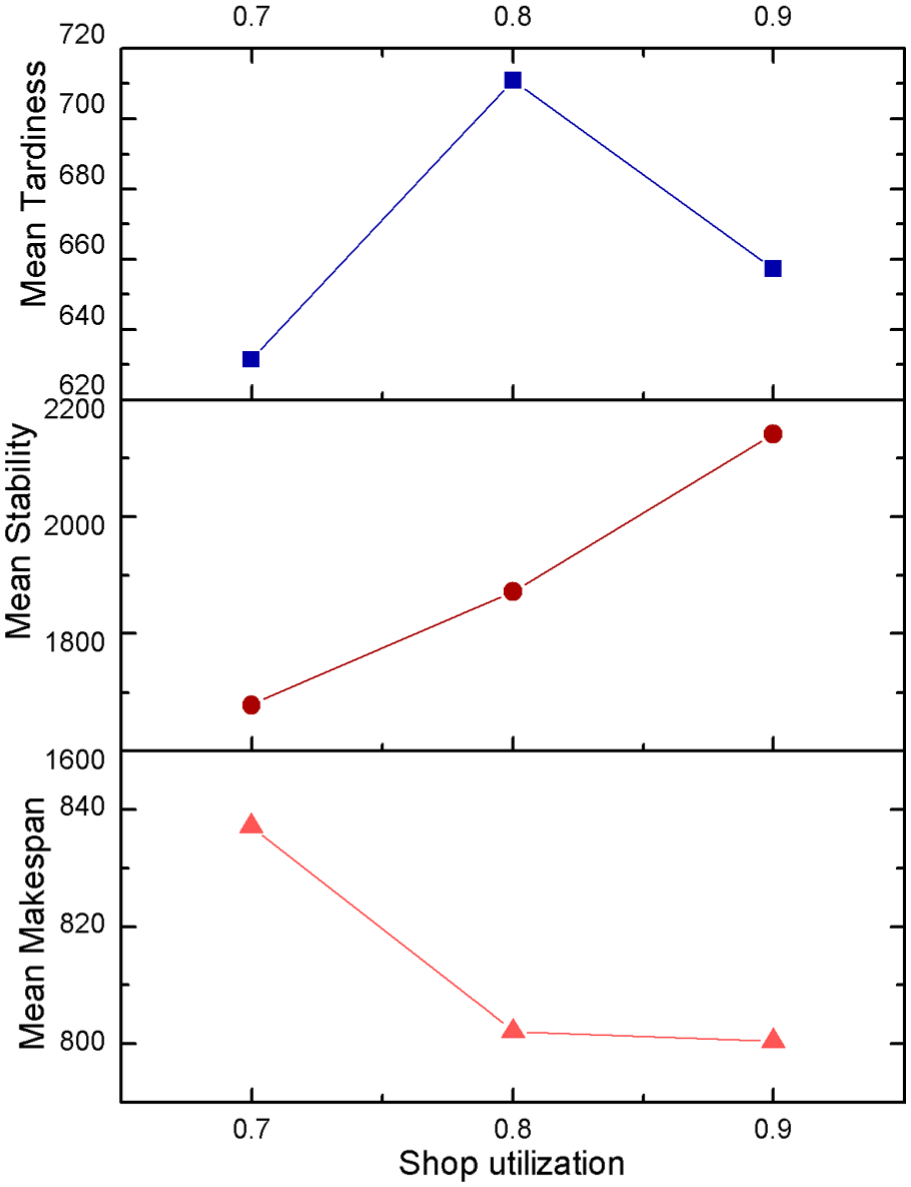

In this paradigm, the rescheduling is performed whenever a new job arrives (Scenario 6). Since the time between arrivals of jobs is controlled by the arrival rate

Averages of Pareto fronts of 10 simulations with three criteria.

A large shop utilization means shortening a rescheduling interval, and this will affect the stability criterion. As analyzed in section “Periodic rescheduling,” the peak point of the mean stability depends mainly on the number and the length of intervals and the number of new jobs in each interval. According to Figure 10, the mean stability shows a low performance when the interval is short (a large shop utilization) because frequent new job arrivals will result in more unfinished operations and this increases the risk of deteriorations of this criterion. Different with other two criteria, a busy status in a shop is helpful to the makespan criterion. A large shop utilization means shortening the interval between two job arrivals, and there are more operations to be detained to the last interval in such cases since less operations can be finished in a small interval. In such a case, the last interval is much longer than other intervals and more unfinished jobs will be finished in the last interval. This situation is very close to the deterministic scheduling case where there is only one interval without random job arrivals but competitive makespan values. Naturally, scheduling more unfinished jobs in the last interval will benefit the makespan criterion. For the tardiness criterion, it reaches the peak with a medium shop utilization (

Conclusion

In this article, we study the characteristics of the IPPS problem with new job arrivals. A novel MILP model for the dynamic IPPS problem has been deduced. More importantly, a periodic and event-driven rescheduling framework is presented, and NSGA-II is applied to each scheduling interval with three criteria. Extensive experiments have been conducted, and the results show that the length of a scheduling interval, the number of new added jobs, and the shop utilization have an important influence on the efficiency of a manufacturing system. These resultant conclusions can be employed to guide real-life dynamic scheduling problems.

In this work, the processing time is deterministic. However, processing times are uncertain and subject to some probability distribution in real-world situations. Therefore, uncertain processing times can be further considered in the dynamic IPPS problem as a further research direction.

Footnotes

Appendix 1

Acknowledgements

The authors thank the anonymous referees for their insightful and valuable suggestions.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research is supported by the Funds for International Cooperation and Exchange of the National Natural Science Foundation of China (Grant No. 51561125002), National Natural Science Foundation of China (Grant Nos 51275190 and 51575211), and the Fundamental Research Funds for the Central Universities (HUST: 2014TS038).