Abstract

In the field of large-scale dimensional metrology, new distributed systems based on different technologies have blossomed over the last decade. They generally include (1) some targets to be localized and (2) a network of portable devices, distributed around the object to be measured, which is often bulky and difficult to handle. The objective of this article is to present some diagnostic tests for those distributed large-scale dimensional metrology systems that perform the target localization by triangulation. Three tests are presented: two global tests to detect the presence of potential anomalies in the system during measurements and one local test aimed at isolating any faulty network device(s). This kind of diagnostics is based on the cooperation of different network devices that merge their local observations, not only for target localization but also for detecting potential measurement anomalies. Tests can be implemented in real time, without interrupting or slowing down the measurement process. After a detailed description of the tests, some practical applications on Mobile Spatial coordinate Measuring System-II (MScMS-II) – a distributed large-scale dimensional metrology system based on infrared photogrammetric technology, recently developed at DIGEP-Politecnico di Torino – are presented.

Introduction and literature review

In the last decade, there has been an increasing development of distributed dimensional metrology systems, that is, instruments consisting of multiple devices that are positioned around the object to be measured and cooperate during the measurement activity.1–3 The majority of these systems have been developed in the field of large-scale dimensional metrology (LSDM), concerning the measurement of medium- to large-sized objects (i.e. according to the definition by Puttock, 4 ‘objects with linear dimensions ranging from tens to hundreds of meters’), in industrial environments. Typical industrial applications are (1) reconstruction of curves/surfaces for dimensional verification and (2) assembly of large-sized mechanical components, in which levels of accuracy of several tenths of millimetres are generally tolerated.

The reason behind the development of distributed LSDM systems is simple: arranging a portable measuring instrument around the object to be measured is often more practical than the reverse, that is, moving the object to the instrument. 5

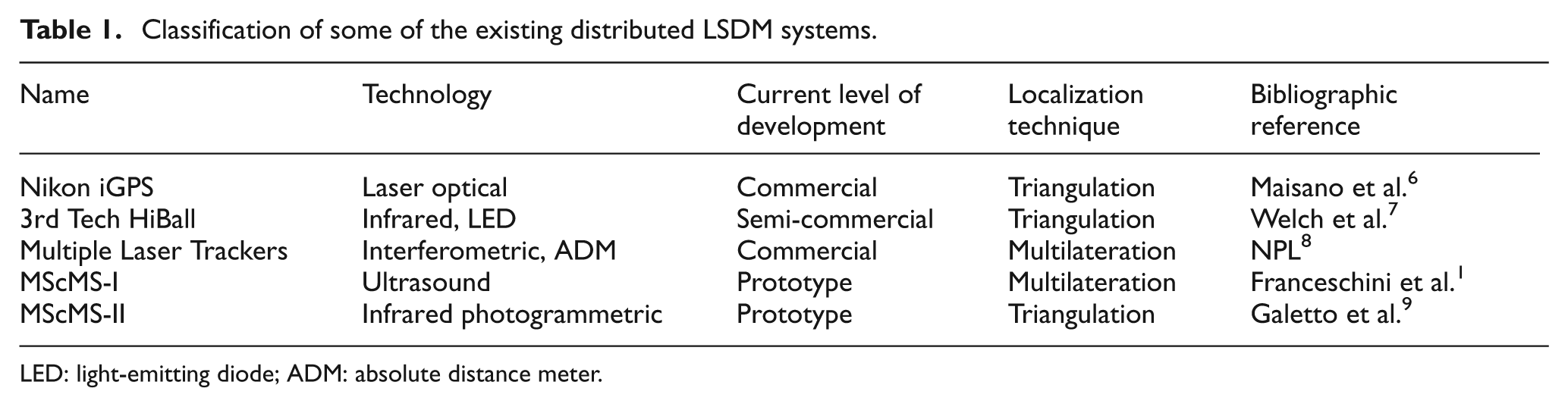

Existing measuring systems differ in technology (e.g. laser optical, photogrammetric, interferometric and ultrasound); some of these are consolidated and available on the market, while others are only prototypes. Table 1 classifies some systems, reporting key features and bibliographic references for the reader.

Table 1. Classification of some of the existing distributed LSDM systems.

LED: light-emitting diode; ADM: absolute distance meter

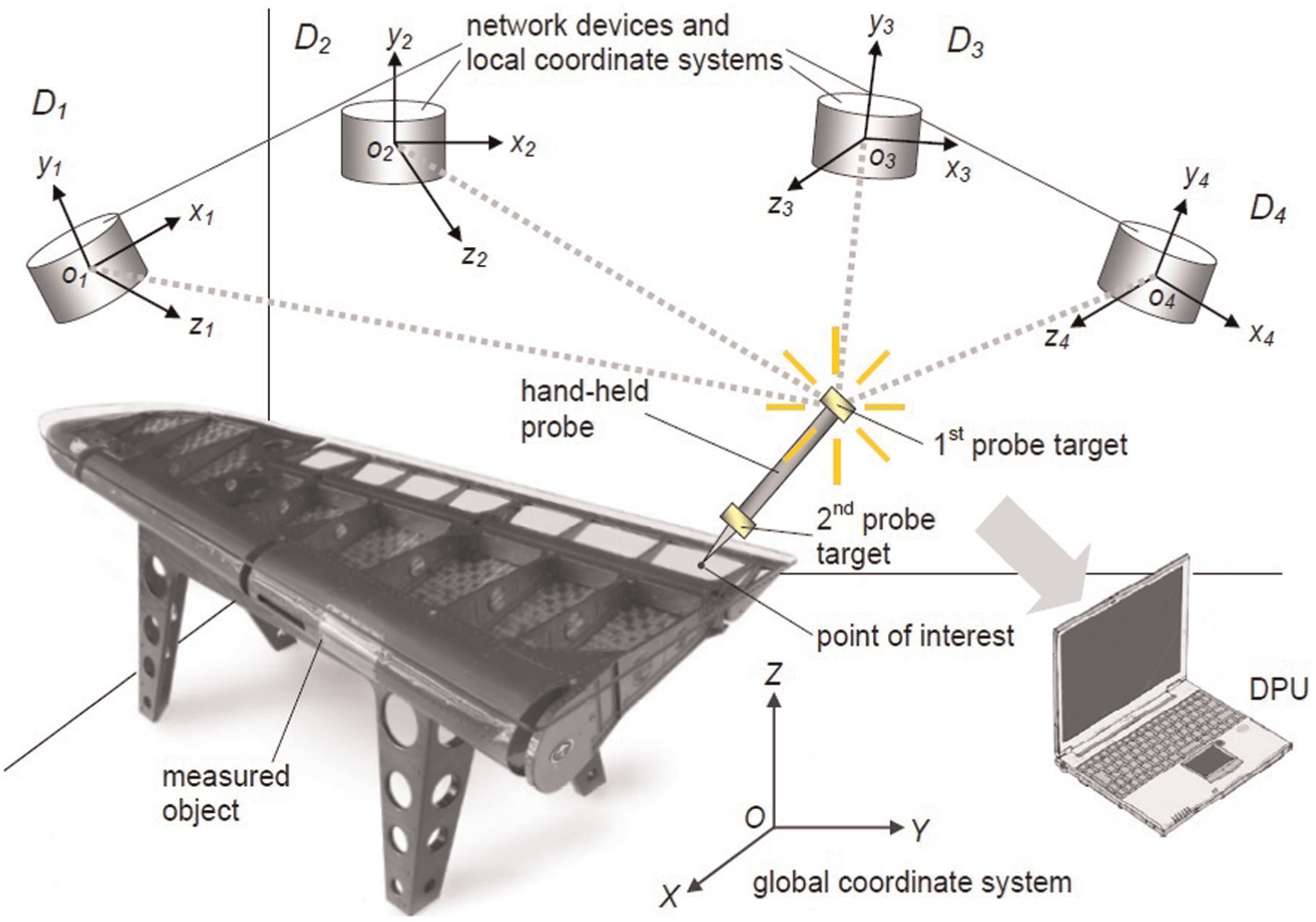

The common features of these systems are as follows (see Figure 1):

A network of devices distributed around the object to be measured;

A hand-held probe for measuring the spatial Cartesian coordinates (XYZ) of the points of interest;

A centralized data processing unit (DPU), which receives local measurement data from network devices.

Figure 1. Schematic representation of a generic distributed LSDM system.

The probe is equipped with some targets and a stylus, which is in contact with the point of interest. A probe calibration process determines the relative positions of the probe targets and the stylus. Localizing the probe targets makes it possible to determine the probe position/orientation and, consequently, the stylus position. Since it acts as a filter, the stylus radius is chosen depending on the measurement task. This is a typical problem of classical contact coordinate measuring machines (CMMs); for more information, see Butler. 10

In certain cases (e.g. for Mobile Spatial coordinate Measuring System-II (MScMS-II)), probe targets are passive sensors, while in others (e.g. for iGPS), they are active and can have a processing capability which makes them able to perform local measurements (typically angles or distances) with respect to network devices.

As shown in Table 1, there are two typical techniques for localizing probe targets: 10

Triangulation, using the angles subtended by the targets, from the local perspective of at least two network devices;

Multilateration, using the distances between the targets and at least three network devices.

The number of devices involved in the localization of a target depends on their mutual positioning/orientation and communication range. For distributed LSDM systems, as well as for metrological systems in general, reliability of measurements is essential and can be increased by the use of real-time diagnostic tools able to detect measurement accidents and discard/correct (part of) the measurement results.

The purpose of this article is to present some novel statistical tests for the online diagnostics of distributed LSDM systems based on triangulation, in the case of quasi-static measurements – that is, targets are stationary or are moved at very low speeds during their localization. These tests make it possible to identify possible measurement accidents and, subsequently, to isolate the (potentially) faulty network devices. This kind of diagnostics can be classified as cooperative since it is based on the cooperation of different network devices that merge their local angular measurements.

The three statistical tests that will be discussed are divided into two categories:

Two global tests aimed at evaluating the reliability of measurements, based on their variability.

A local test that – when a measurement is not considered reliable by (at least one of) the global tests – identifies the potentially faulty device(s) and (temporarily) excludes them from the measurement process, without interrupting it.

After a detailed description of each test, some real application examples using MScMS-II – that is, a prototypical distributed LSDM system based on infrared photogrammetric technology, recently developed at the Industrial Metrology and Quality Engineering Laboratory of DIGEP-Politecnico di Torino – are shown.

The remainder of this article is structured as follows. Section ‘Background information’ provides some background information, which is helpful to grasp the subsequent description of statistical tests: (1) basic concepts concerning distributed LSDM systems’ diagnostics, (2) a general description of the localization problem for systems based on triangulation and (3) a brief description of MScMS-II, on which the diagnostic tests will be implemented. Section ‘Online diagnostic tests’ provides a detailed description of the statistical tests (global and local, respectively) with some experimental examples. Finally, Section ‘Implications, limitations and future research’ summarizes the original contributions of this research, focusing on its implications, limitations and possible future developments.

Background information

Basic concepts concerning diagnostics

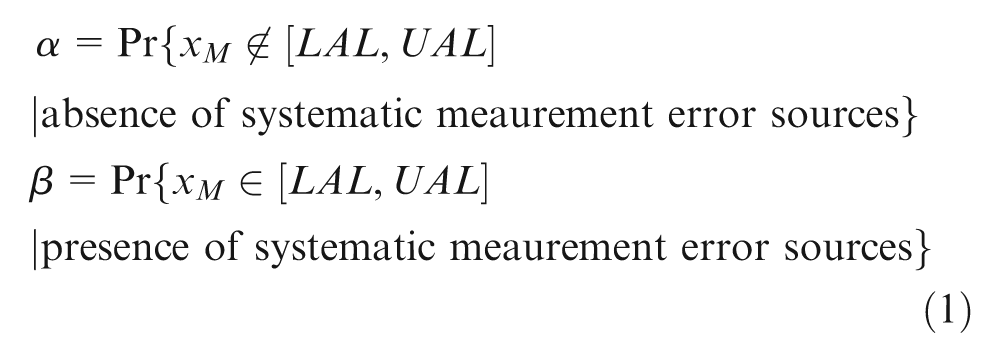

In general, the reliability of a measurement is defined as follows. For each measurable quantity x, an acceptance interval [LAL, UAL] can be defined (where LAL stands for lower acceptance limit and UAL for upper acceptance limit). The measure xM of the quantity x, produced by a measurement system, is considered reliable if

$$\mathrm{LAL} \le x_M \le \mathrm{UAL} \tag{1}$$

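Type I and Type II probability errors (misclassification rates) correspond, respectively, to the probability α of rejecting a measure that is actually reliable and the probability β of accepting a measure that is not.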

Usually, LAL and UAL are defined considering the natural variability of the measurement system (which is linked to its metrological characteristics of accuracy, reproducibility, repeatability, etc.), in the absence of systematic error sources. 11 The authors are aware that systematic errors can never be eradicated completely, especially when they are relatively small and interrelated with each other. The assumption of purely random errors is not valid in general, even though it may be adequate for many practical applications.

For distributed systems, local anomalies of one or more network devices can distort or even compromise the whole measurement. Conversely, when these anomalies are recognized, the measurement results can be corrected by (temporarily) excluding the malfunctioning device(s). This is the reason why distributed systems are, to some extent, rather ‘vulnerable’ but can be successfully protected by appropriate diagnostic tools.

For distributed systems, a typical diagnostic approach is based on the so-called model-based redundancy, where the replication of physical instrumentation, which is typical of the physical redundancy approach, is replaced by the use of appropriate mathematical models. 12 These models may derive from physical laws applied to experimental data or from self-learning methods (e.g. neural networks) and allow the detection of system failures by comparing measured and model-elaborated process variables. This diagnostic approach is made possible by the fact that, for distributed systems, the number of network devices involved in a measurement is generally greater than the number strictly necessary for localizing the target(s).

This type of diagnostics is based on the cooperation of network devices, whose local observations are used in conjunction, not only for target localization but also for detecting possible measurement anomalies or accidents.

Diagnostic tools based on this philosophy are implemented for GPS-assisted aircraft navigation, where the global positioning system (GPS) can be seen as a very large-scale-dimensional metrology distributed system, in which localization is performed by multilateration. 13 Furthermore, Franceschini et al. 14 give a detailed description of some online diagnostic tools for MScMS-I, an indoor distributed LSDM system based on multilateration.

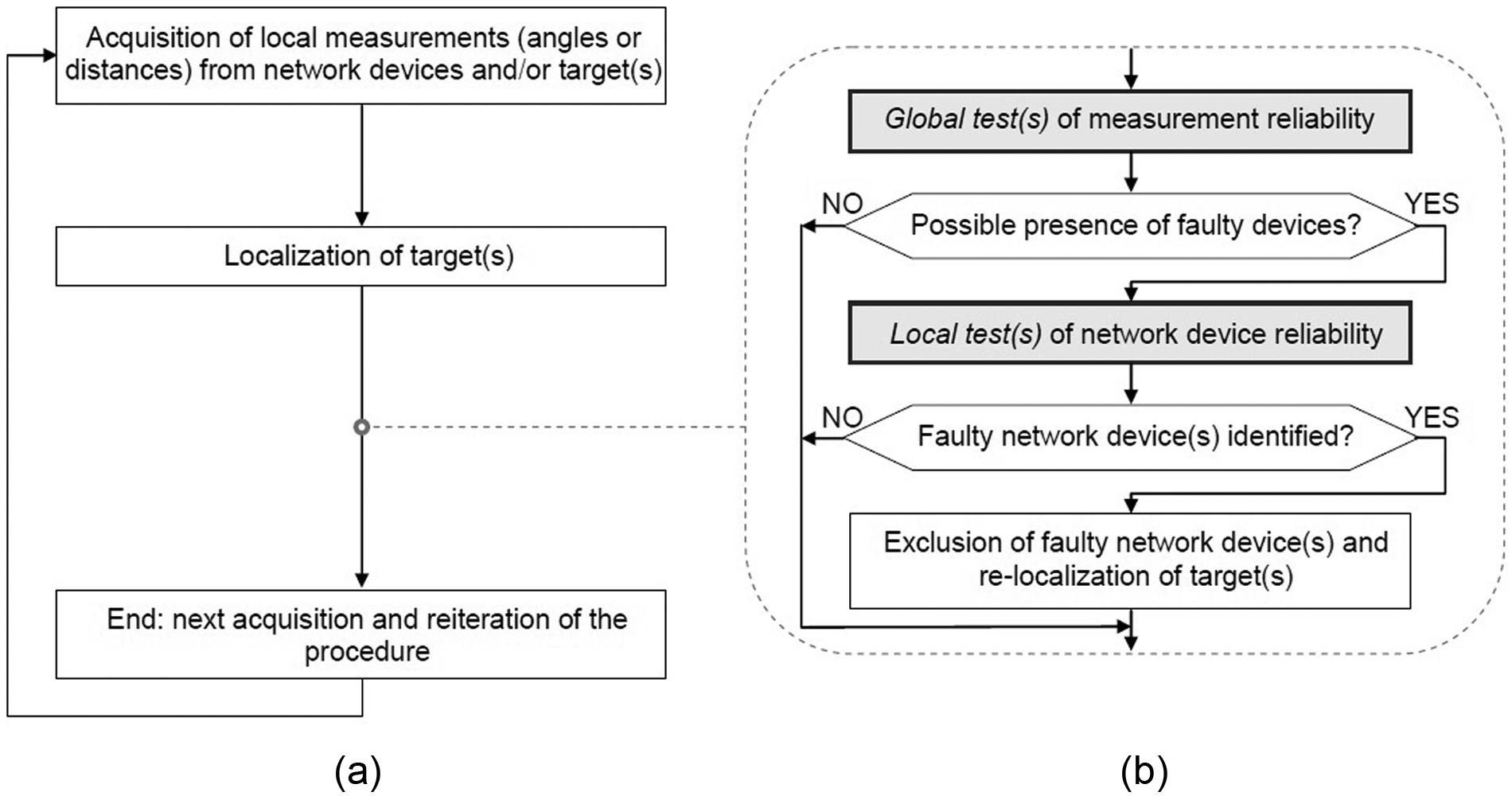

As mentioned in Section ‘Introduction and literature review’, this kind of diagnostics generally includes two types of tests (global and local), aimed, respectively, at (1) detecting unreliable measurements and (2) identifying and (temporarily) excluding purportedly faulty network devices. The flowchart in Figure 2 illustrates a typical implementation sequence for these tests.

Figure 2. Flowchart showing the logical implementation sequence of the online diagnostic tests: (a) localization procedure and (b) online system diagnostics.

The triangulation problem

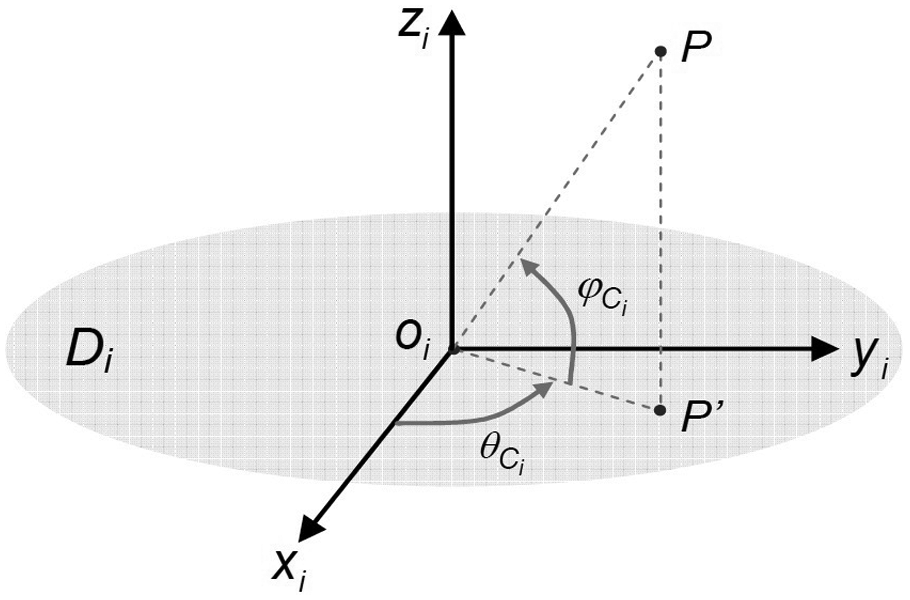

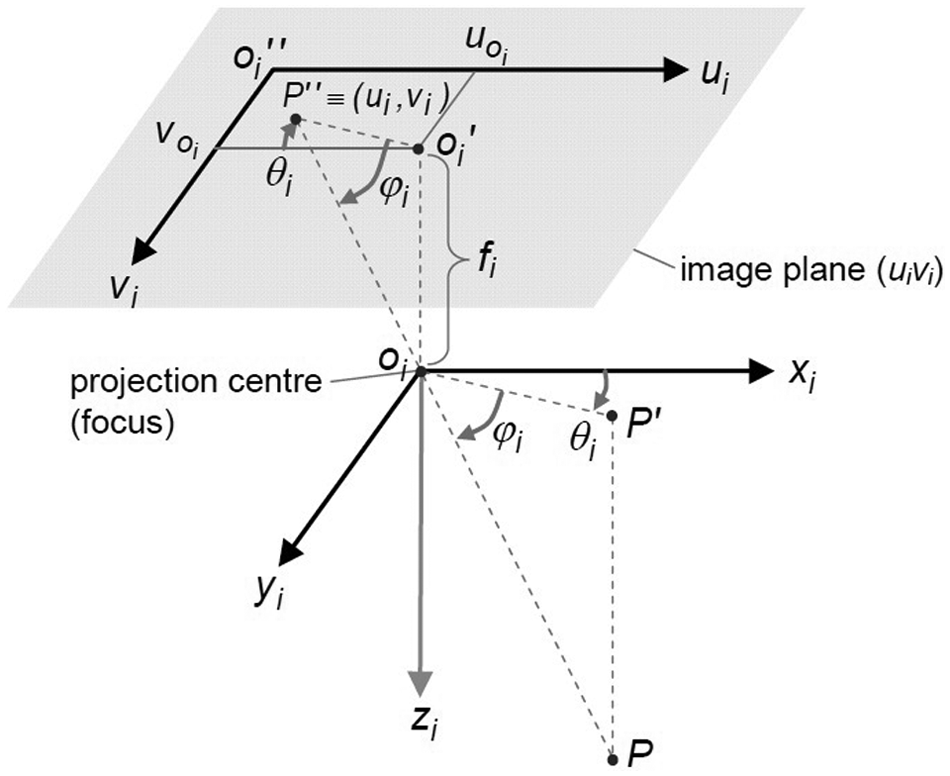

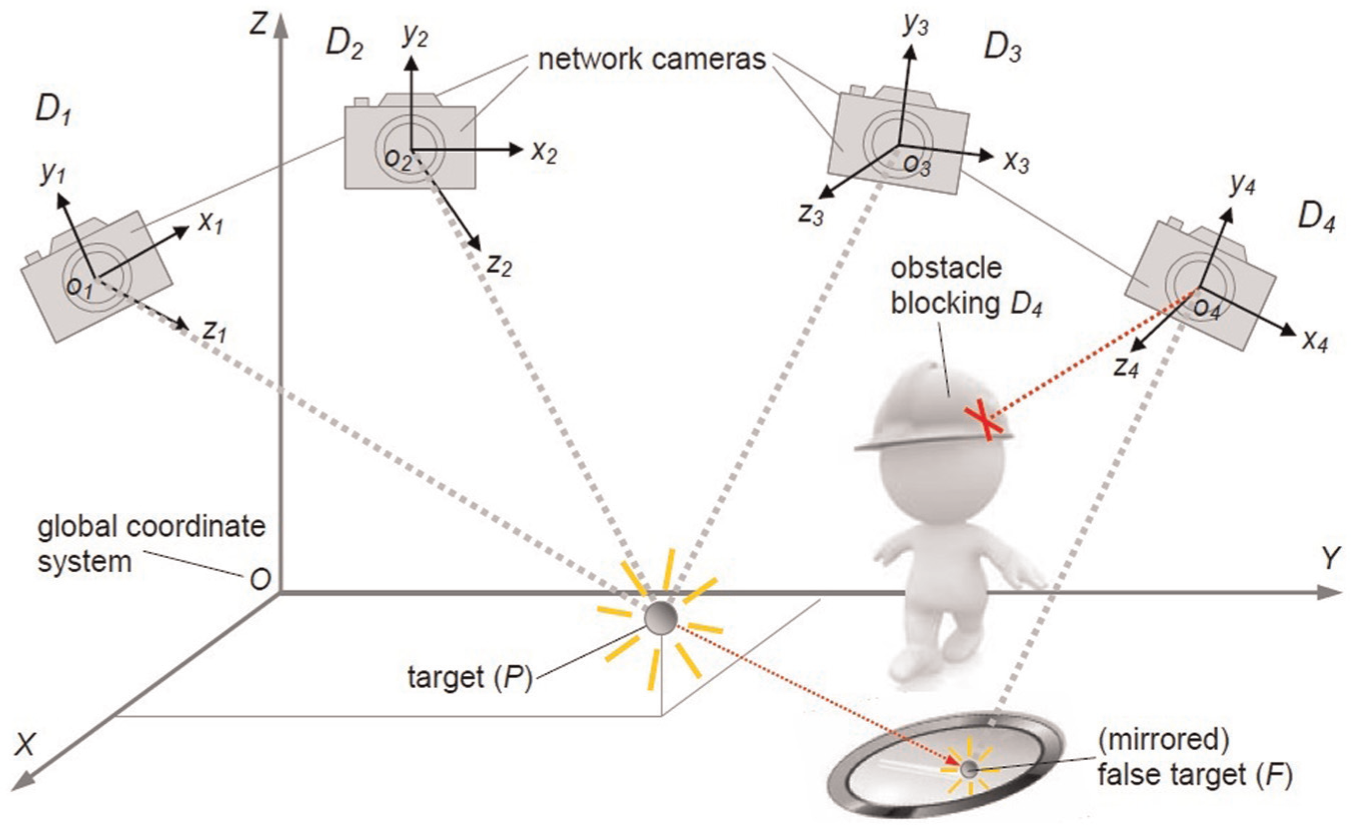

Figure 1 depicts a distributed LSDM system consisting of a number of network devices (D1, …, DN) positioned around the object to be measured. OXYZ is a global Cartesian coordinate system. Each device has its own spatial position and orientation; for each ith device, a local coordinate system oixiyizi is defined, roto-translated with respect to OXYZ.

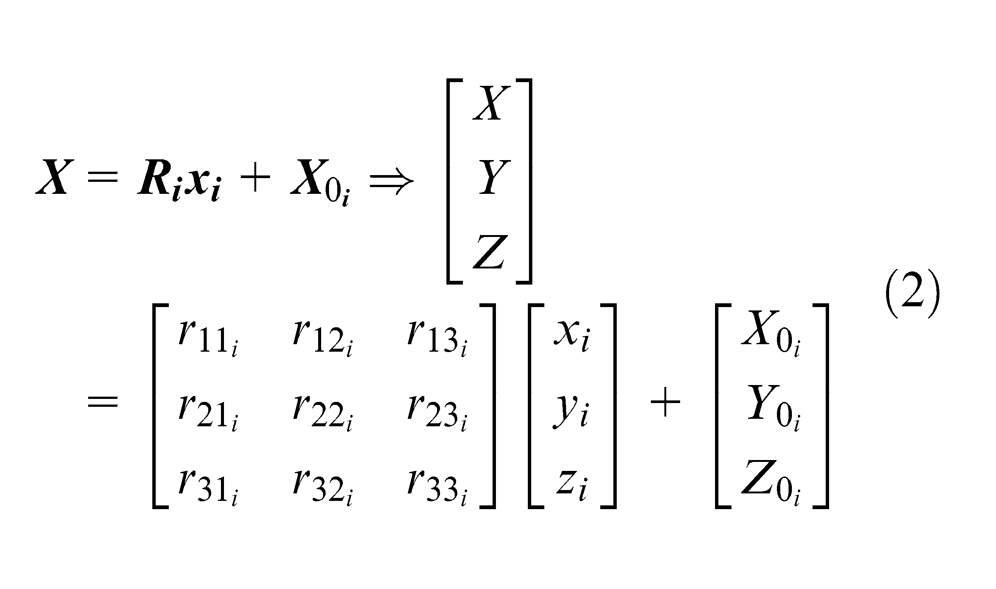

A general transformation between a local and the global coordinate system is given by

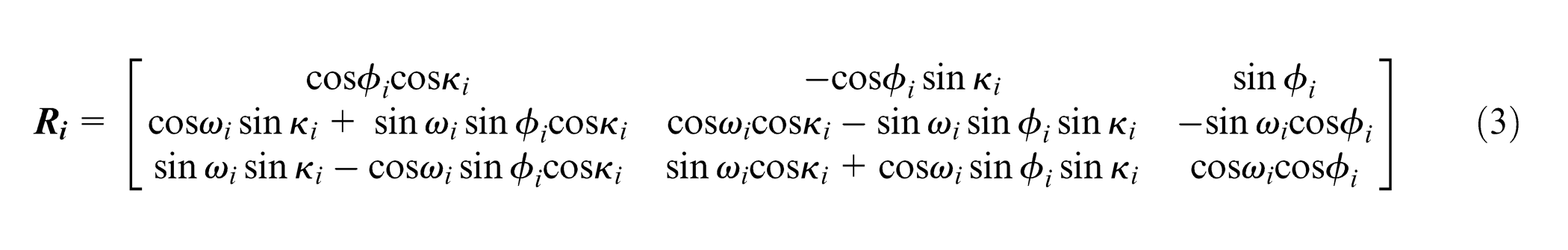

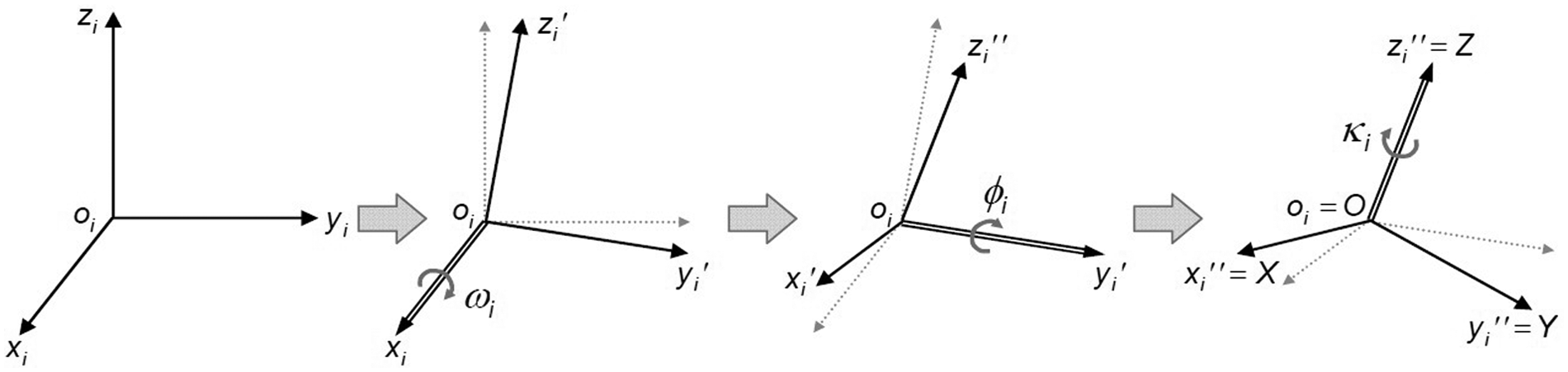

where ωi represents a counterclockwise rotation around the xi axis; ϕi represents a counterclockwise rotation around the new yi axis (i.e.

Rotation parameters regarding the transformation between a local coordinate system (oixiyizi) and the global one (OXYZ).
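As an illustration of the roto-translation between a local coordinate system and OXYZ, the following Python sketch builds a rotation matrix from three angles and maps points in both directions. It assumes the common photogrammetric convention of successive counterclockwise rotations about the x axis, the new y axis and the new z axis; the name `kappa` for the third angle, the rotation order, the function names and the sample values are illustrative assumptions, not the MScMS-II implementation.

```python
import math

def rot_x(a):
    """Counterclockwise rotation about the x axis."""
    c, s = math.cos(a), math.sin(a)
    return [[1.0, 0.0, 0.0], [0.0, c, -s], [0.0, s, c]]

def rot_y(a):
    """Counterclockwise rotation about the y axis."""
    c, s = math.cos(a), math.sin(a)
    return [[c, 0.0, s], [0.0, 1.0, 0.0], [-s, 0.0, c]]

def rot_z(a):
    """Counterclockwise rotation about the z axis."""
    c, s = math.cos(a), math.sin(a)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]

def matmul(A, B):
    """Product of two 3x3 matrices stored as nested lists."""
    return [[sum(A[i][k] * B[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]

def rotation_matrix(omega, phi, kappa):
    """Assumed convention: rotations about x, then the new y, then the new z."""
    return matmul(matmul(rot_x(omega), rot_y(phi)), rot_z(kappa))

def local_to_global(p_local, R, origin):
    """Global coordinates: origin + R * p_local."""
    return tuple(origin[i] + sum(R[i][j] * p_local[j] for j in range(3))
                 for i in range(3))

def global_to_local(p_global, R, origin):
    """Local coordinates: R^T * (p_global - origin)."""
    d = [p_global[i] - origin[i] for i in range(3)]
    return tuple(sum(R[i][j] * d[i] for i in range(3)) for j in range(3))
```

With all three angles set to zero the local and global axes are parallel, so the six parameters reduce to a pure translation by the device position.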

The (six) location/orientation parameters related to each network device (i.e.

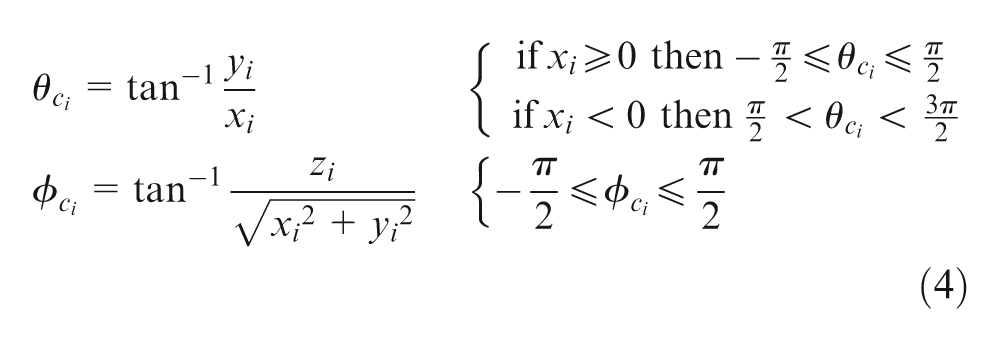

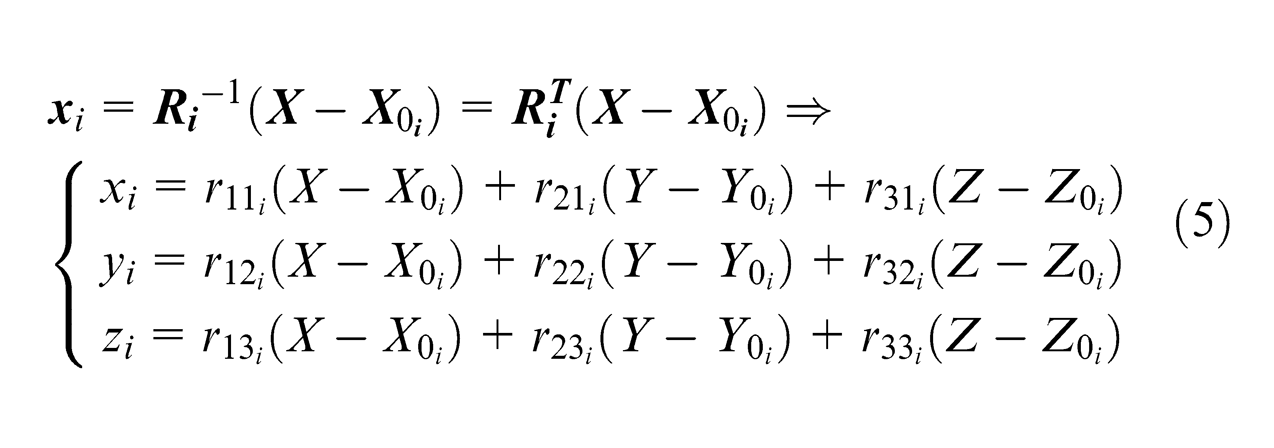

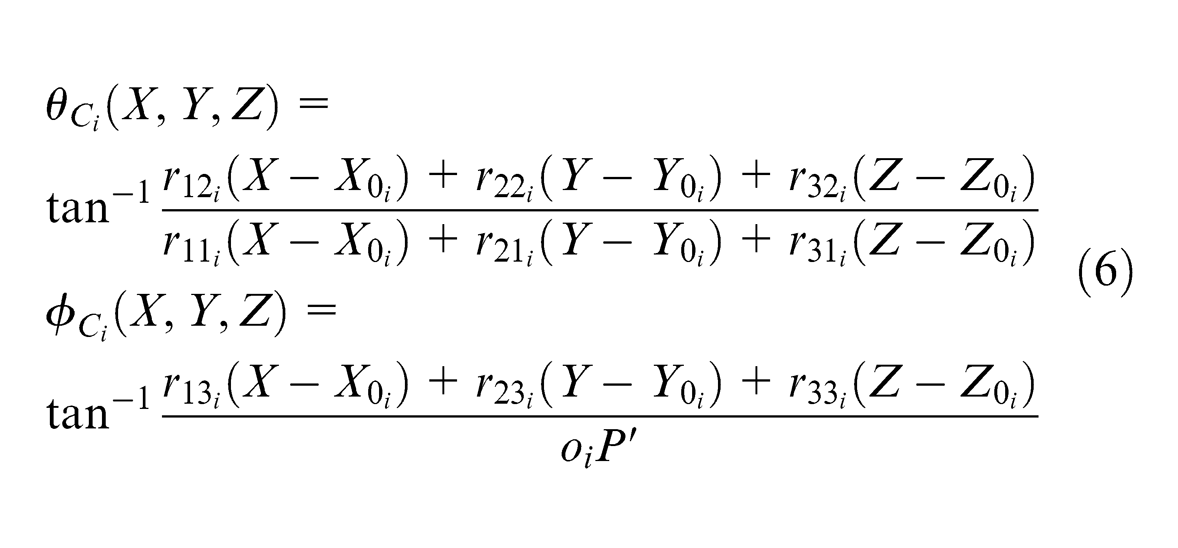

The point to be located is P ≡ (X, Y, Z). From the local perspective of each ith device, two angles – that is,

For a generic network device (Di), two angles – that is,

Regarding the two angles in equation (4), the subscript ‘ci’ means that – for the ith network device – they are calculated as functions of the local coordinates of P ≡ (xi, yi, zi).

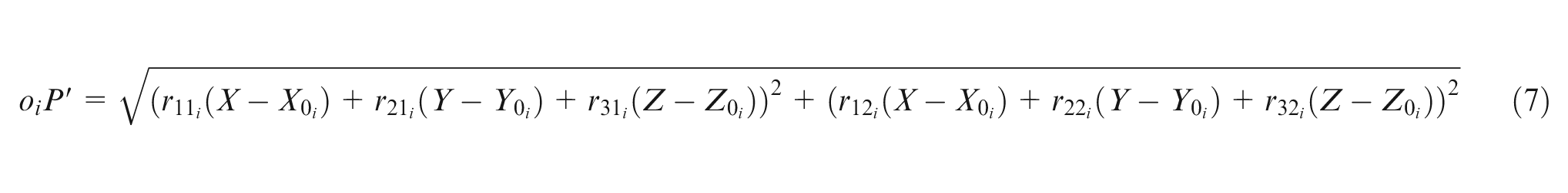

The resulting formulae of

being

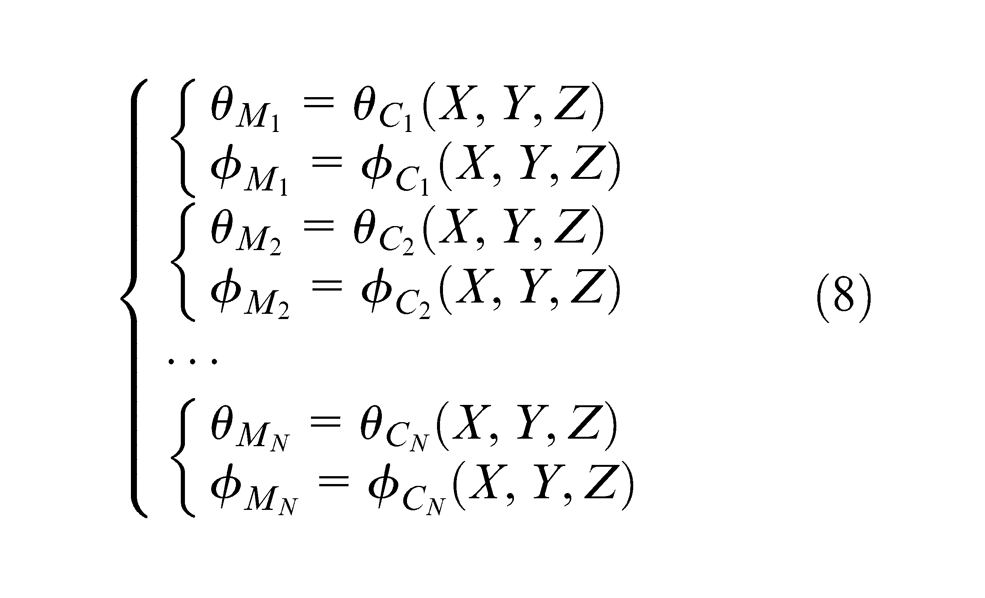

Using the two angular local measurements (

where N is the number of network devices (with a priori known location and orientation) involved in the measurement.

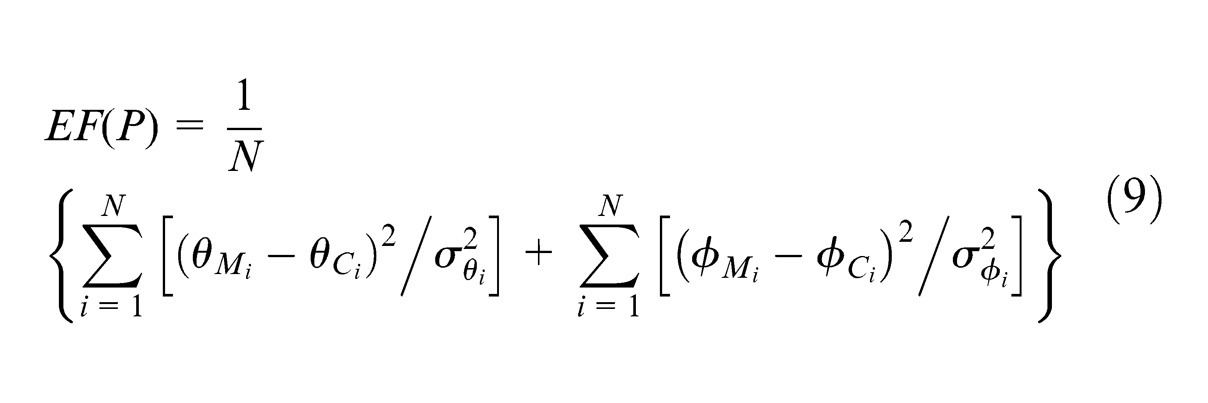

The system in equation (8) can be solved when P is ‘seen’ by at least two devices (2 angles × 2 devices = 4 equations for 3 unknown coordinates). Since the triangulation problem is then overdetermined (more equations than unknown parameters), it can be solved using a minimization approach. 16 The position of P can be estimated by the iterative minimization of a suitable error function (EF). There are many possible choices of EF for solving the localization problem. The one in equation (9) was defined with the aim of keeping it as simple and general as possible

where P is the point to be localized, whose unknown coordinates (X, Y, Z) are the solution of the problem;

It is worth remarking that the determination of the

Finally, since the proposed EF is non-linear, its minimization can be computationally expensive. The burden of computation can be eased by employing a suitable linearization technique, for example, techniques based on first-order Taylor expansion, Newton–Raphson method and so on.
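The minimization can be illustrated with a small Python sketch. It is not the MScMS-II algorithm: it assumes an EF equal to the plain sum of squared differences between measured and computed angles, an azimuth/elevation convention (`atan2(y, x)` and `atan2(z, hypot(x, y))` in local coordinates) and a Gauss–Newton iteration with a finite-difference Jacobian as one possible linearization. All function names and numerical values are hypothetical.

```python
import math

def local_angles(P, origin, R):
    """Azimuth and elevation of P from a device (assumed angle convention)."""
    d = [P[k] - origin[k] for k in range(3)]
    # R maps local to global coordinates, so local = R^T * d
    x, y, z = (sum(R[k][j] * d[k] for k in range(3)) for j in range(3))
    return math.atan2(y, x), math.atan2(z, math.hypot(x, y))

def residuals(P, devices, measured):
    """Measured minus computed angles, two entries per device."""
    out = []
    for (origin, R), (th_m, ph_m) in zip(devices, measured):
        th_c, ph_c = local_angles(P, origin, R)
        out.append(math.remainder(th_m - th_c, 2 * math.pi))  # wrap azimuth
        out.append(ph_m - ph_c)
    return out

def error_function(P, devices, measured):
    """Assumed EF: sum of squared angular residuals."""
    return sum(e * e for e in residuals(P, devices, measured))

def solve3(A, b):
    """Solve a 3x3 linear system by Gaussian elimination with pivoting."""
    M = [row[:] + [bi] for row, bi in zip(A, b)]
    for c in range(3):
        p = max(range(c, 3), key=lambda i: abs(M[i][c]))
        M[c], M[p] = M[p], M[c]
        for i in range(c + 1, 3):
            f = M[i][c] / M[c][c]
            for k in range(c, 4):
                M[i][k] -= f * M[c][k]
    x = [0.0, 0.0, 0.0]
    for i in (2, 1, 0):
        x[i] = (M[i][3] - sum(M[i][k] * x[k] for k in range(i + 1, 3))) / M[i][i]
    return x

def triangulate(devices, measured, P0, iters=20, h=1e-4):
    """Gauss-Newton minimization of the EF starting from the guess P0."""
    P = list(P0)
    for _ in range(iters):
        r0 = residuals(P, devices, measured)
        cols = []  # cols[k][m] approximates d r_m / d P_k
        for k in range(3):
            Pk = P[:]
            Pk[k] += h
            rk = residuals(Pk, devices, measured)
            cols.append([(a - b) / h for a, b in zip(rk, r0)])
        m = range(len(r0))
        A = [[sum(cols[i][q] * cols[j][q] for q in m) for j in range(3)]
             for i in range(3)]
        g = [-sum(cols[i][q] * r0[q] for q in m) for i in range(3)]
        step = solve3(A, g)
        P = [p + s for p, s in zip(P, step)]
        if max(abs(s) for s in step) < 1e-9:
            break
    return P
```

A device is represented here as a pair `(origin, R)`, with `R` the local-to-global rotation matrix. The value of `error_function` at the returned point is the quantity that a global test would then examine.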

The MScMS-II

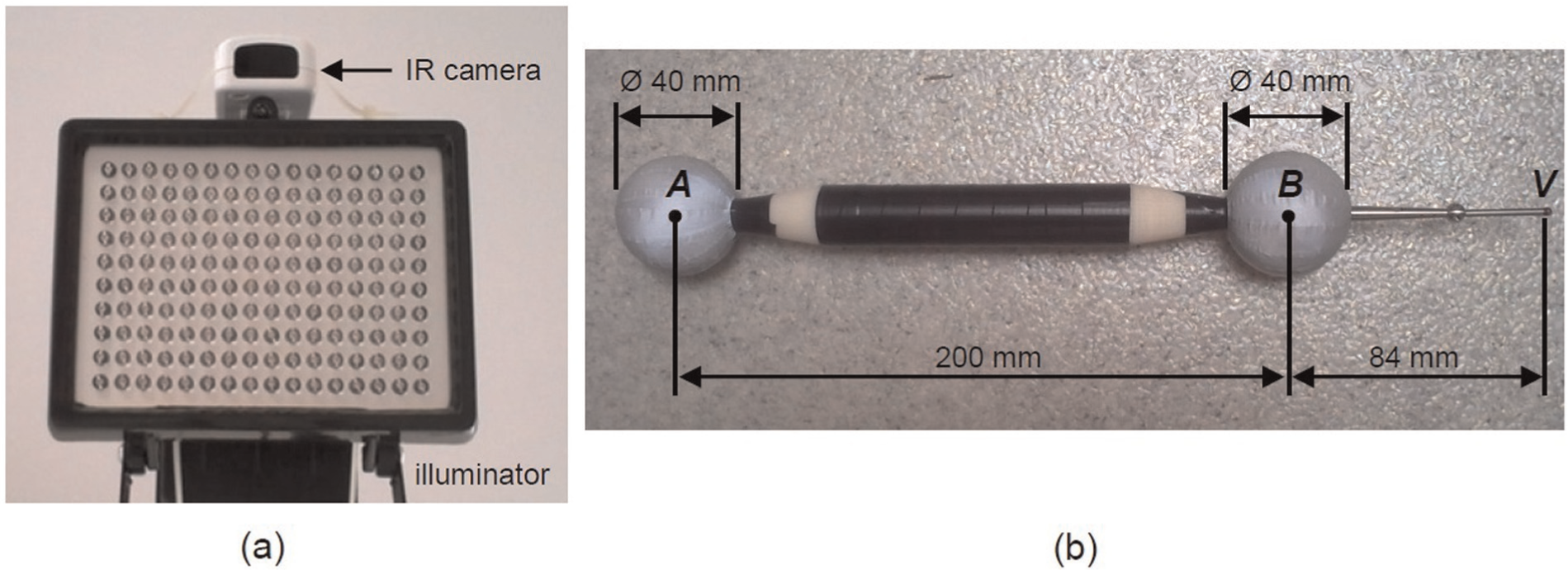

The MScMS-II is a prototypical measuring instrument, based on infrared (IR) photogrammetric technology. Network devices are low-cost IR cameras associated with IR illuminators, while the hand-held probe has two reflective spheres, whose centres are A and B, and a stylus (V), in contact with the point(s) of interest (see Figure 5). Reflective spheres act as passive targets illuminated by the illuminators. Alternatively, they can be replaced with active spherical targets that emit IR light, which makes the illuminators unnecessary.

Figure 5. Elementary components of the MScMS-II: (a) IR cameras (acting as network devices) and IR illuminators and (b) hand-held probe with two spherical targets (A and B) and a stylus (V). 9

Since A, B and V lie on the same line at known distances, localizing the probe targets uniquely determines the coordinates of the probe stylus. The measurement uncertainty of MScMS-II for three-dimensional (3D) point coordinates is within several tenths of a millimetre; for additional details, see Galetto et al. 9 The hand-held probe was manufactured by a rapid prototyping process, with a dimensional error of the order of a few hundredths of a millimetre, that is, at least 1–2 orders of magnitude lower than the measurement uncertainty of MScMS-II. Therefore, the assumption that the spheres and the stylus are exactly on the same line is not unrealistic.

Since A and B have the same diameter, the orientation of V can be ambiguous. However, this problem is overcome by the fact that, in the measurement process, the probe is always pointing down (see Figure 1), with sphere A at a higher level than sphere B.

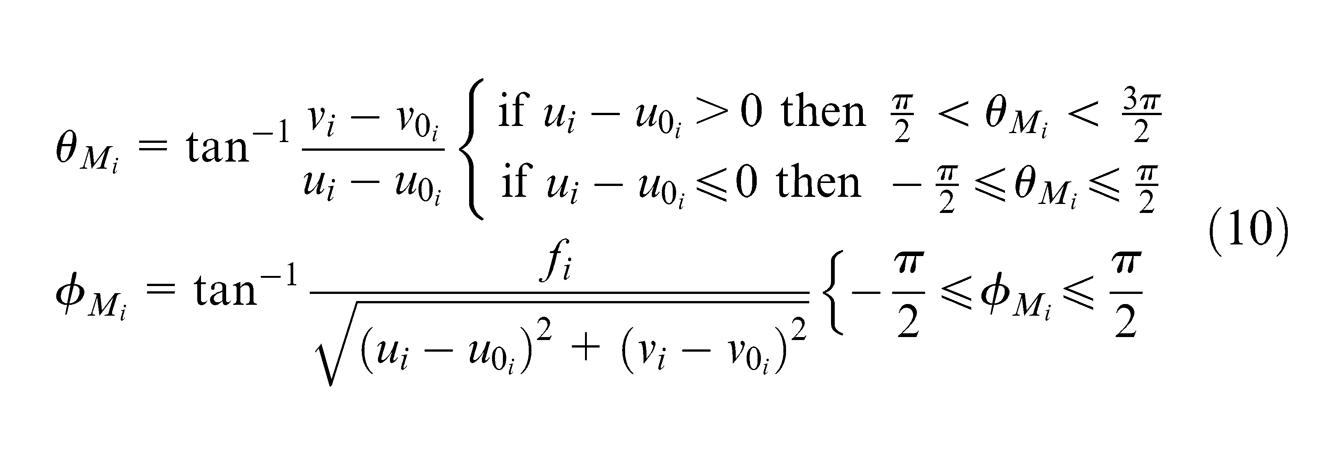

The attention now focuses on each ith network device (camera). Given the position P″≡ (ui, vi) of the projection of target P on the camera’s image plane uivi, which is parallel to the plane xiyi of the local coordinate system, and knowing some intrinsic parameters of the camera – that is, the focal length (fi) – it is possible to determine the angles

where ui and vi are the coordinates of the projection (P″) of P on the image plane;

Note that

Figure 6. For a generic ith network device, representation of the local coordinate system, with origin (oi) in the projection centre (or focus), and the image plane uivi, parallel to the plane xiyi, at a distance fi (i.e. the focal length).

Angles

Being based upon IR optical technology, MScMS-II is sensitive to many influencing factors. The most common measurement accidents are

Vibration or accidental movement of the cameras;

Partial occlusion (e.g. by obstacles interposed between network device(s) and target(s)) or target overlapping;

False targets due to IR light reflection on polished surfaces or the presence of other external uncontrolled IR light sources.

These and other potential causes of accidental measurement errors must be kept under control to ensure an acceptable level of accuracy. These aspects are examined in detail in Galetto and Mastrogiacomo. 18

Online diagnostic tests

With the aim of protecting the system, MScMS-II implements some statistical tests for online diagnostics. Three tests are analysed in the following sub-sections:

Test 1: global test on the EF;

Test 2: global test on the distance between probe targets;

Test 3: local test for identifying purportedly faulty device(s).

Test 1: global test on the EF

By definition (see equation (9)), EF(P) ≥ 0 for all the points in the measurement volume

EF(P) is strictly positive even in the point of correct localization;

EF(P) converges to a point that is not the correct one. As a result, a local minimum may be confused with the global minimum.

The first diagnostic criterion is aimed at identifying all the unacceptable minimum solutions for EF(P), in order to prevent system failures. This criterion enables MScMS-II to distinguish between reliable and unreliable measurements.

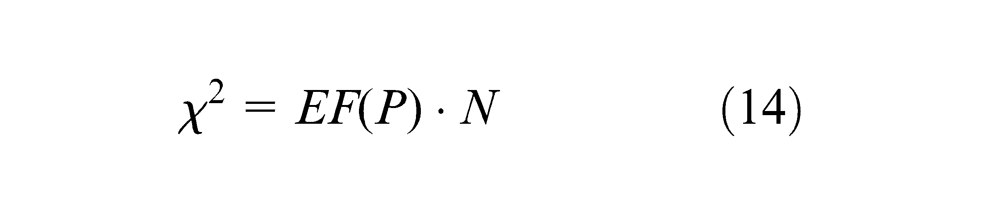

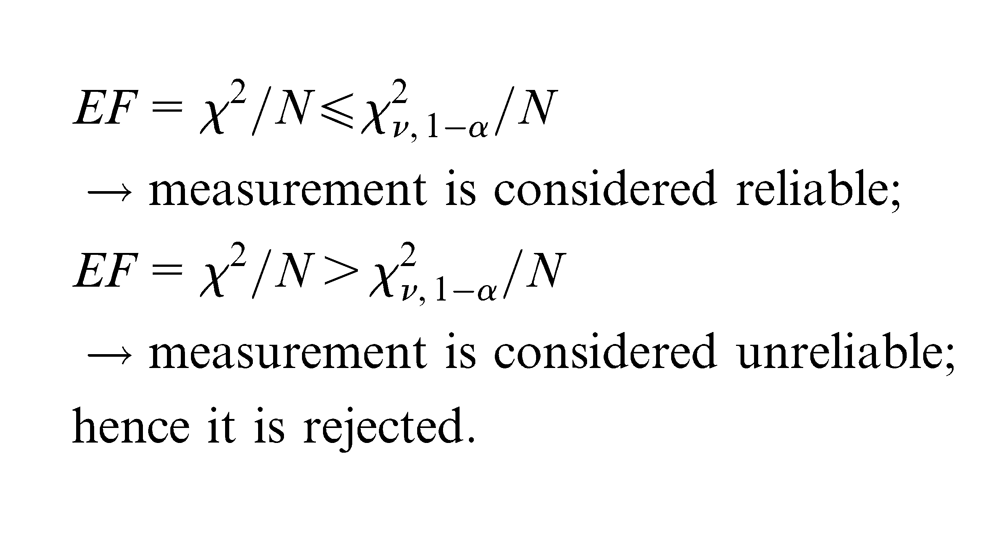

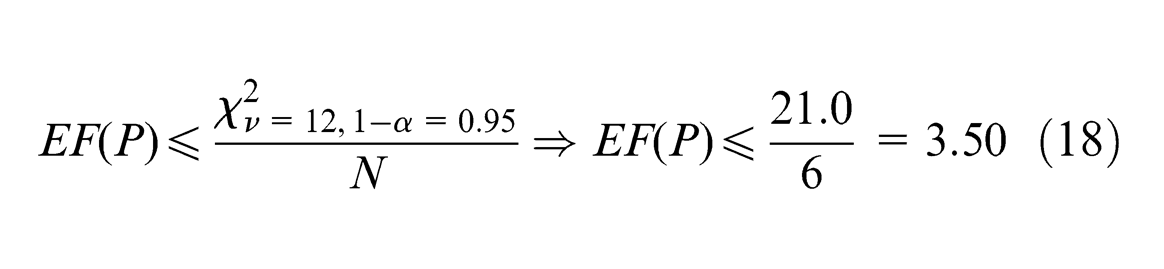

Let us consider a solution

If

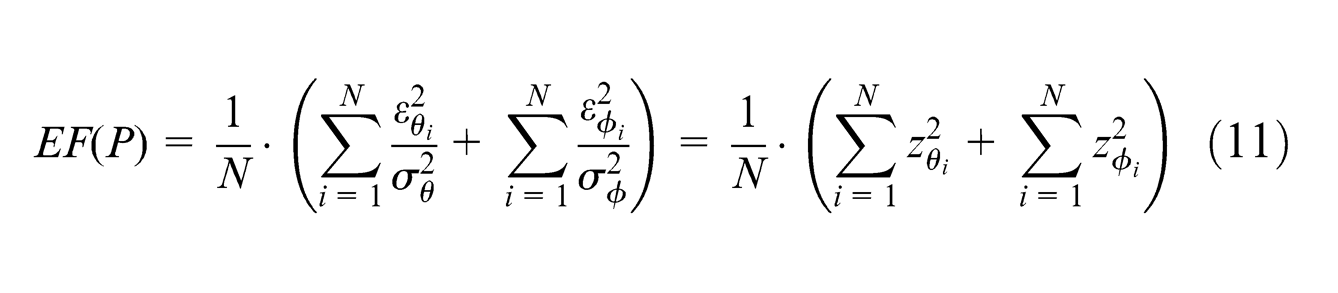

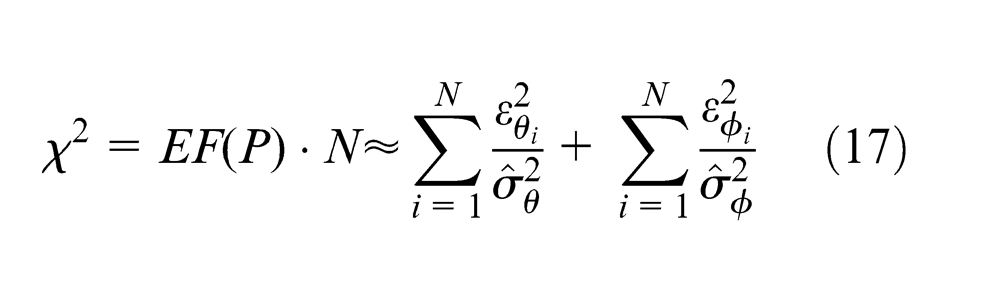

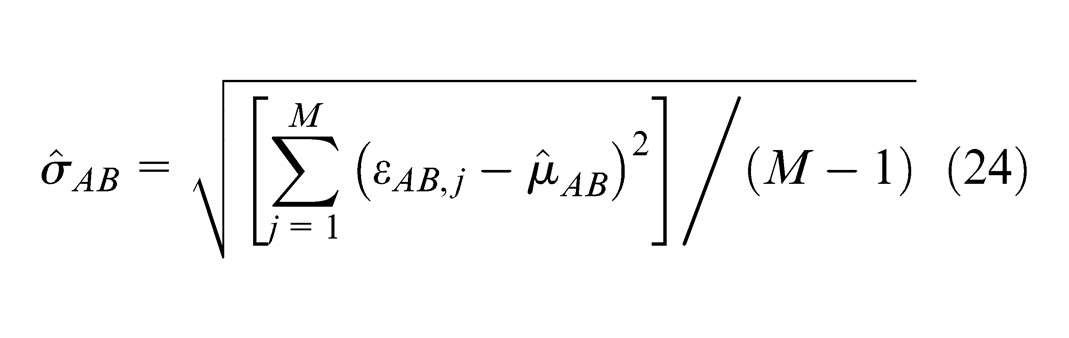

EF(P) can be seen as the sum of the squares of N+N realizations of two series of normally distributed random variables (

Equation (11) therefore can assume the following form

where

The residual standard deviations, that is, σθ and σϕ, can be a priori estimated for the whole measurement volume, for example, during the phase of installation and calibration of the system.

Equation (12) can be expressed as

Since χ 2 is obtained by adding two chi-square distributed variables with N DOF each, it will follow a chi-square distribution with 2·N DOF. 17

Every time the localization of a probe target is performed, MScMS-II diagnostics calculates the following quantity

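Assuming a risk α as a type I error, a one-sided confidence interval for the variable χ² can be calculated.

The test leads to one of the following two alternative conclusions:

$$\chi^2 \le \chi^2_{\nu,1-\alpha} \;\Rightarrow\; \text{the measurement is considered reliable;} \qquad \chi^2 > \chi^2_{\nu,1-\alpha} \;\Rightarrow\; \text{the measurement is considered unreliable,}$$

where $\chi^2_{\nu,1-\alpha}$ is the (1 − α)-quantile of a chi-square distribution with ν = 2·N DOF.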
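A minimal Python sketch of this global test follows. It assumes that the test statistic is the sum of the squared angular residuals, each divided by the corresponding standard deviation (σθ or σϕ), and that it is compared with the (1 − α)-quantile of a chi-square distribution with 2·N DOF. Because 2·N is always even, the chi-square survival function has a closed form, so only the standard library is needed. The function names and the residual values in the usage note are hypothetical.

```python
import math

def chi2_sf_even(x, dof):
    """Survival function of a chi-square variable with an even number of DOF."""
    if dof <= 0 or dof % 2:
        raise ValueError("dof must be a positive even integer")
    if x <= 0:
        return 1.0
    half = x / 2.0
    term, total = 1.0, 1.0
    for k in range(1, dof // 2):
        term *= half / k          # (x/2)^k / k!
        total += term
    return math.exp(-half) * total

def chi2_quantile_even(p, dof):
    """p-quantile of the chi-square distribution, found by bisection."""
    target = 1.0 - p
    lo, hi = 0.0, 1.0
    while chi2_sf_even(hi, dof) > target:
        hi *= 2.0
    for _ in range(100):
        mid = (lo + hi) / 2.0
        if chi2_sf_even(mid, dof) > target:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2.0

def global_test_ef(res_theta, res_phi, sigma_theta, sigma_phi, alpha=0.05):
    """Return (chi2, limit, reliable) for one target localization."""
    n = len(res_theta)  # number of devices that see the target
    chi2 = (sum((e / sigma_theta) ** 2 for e in res_theta)
            + sum((e / sigma_phi) ** 2 for e in res_phi))
    limit = chi2_quantile_even(1.0 - alpha, 2 * n)
    return chi2, limit, chi2 <= limit
```

For N = 6 devices and α = 0.05, `chi2_quantile_even(0.95, 12)` gives the tabulated chi-square quantile of about 21.03.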

Set-up of test parameters

The risk level α is established by the user. A high α protects against unacceptable solutions of the minimization problem, although it may lead to the rejection of good solutions. Conversely, a low α speeds up the measurement procedure, although it may lead to the collection of wrong data because of the consequent increase in the type II error β.

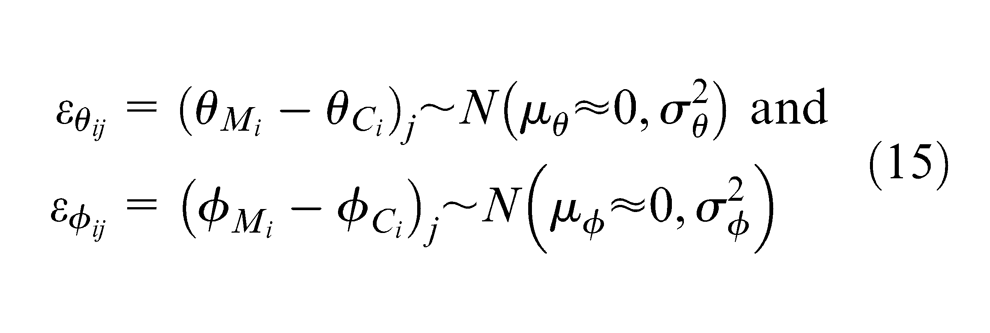

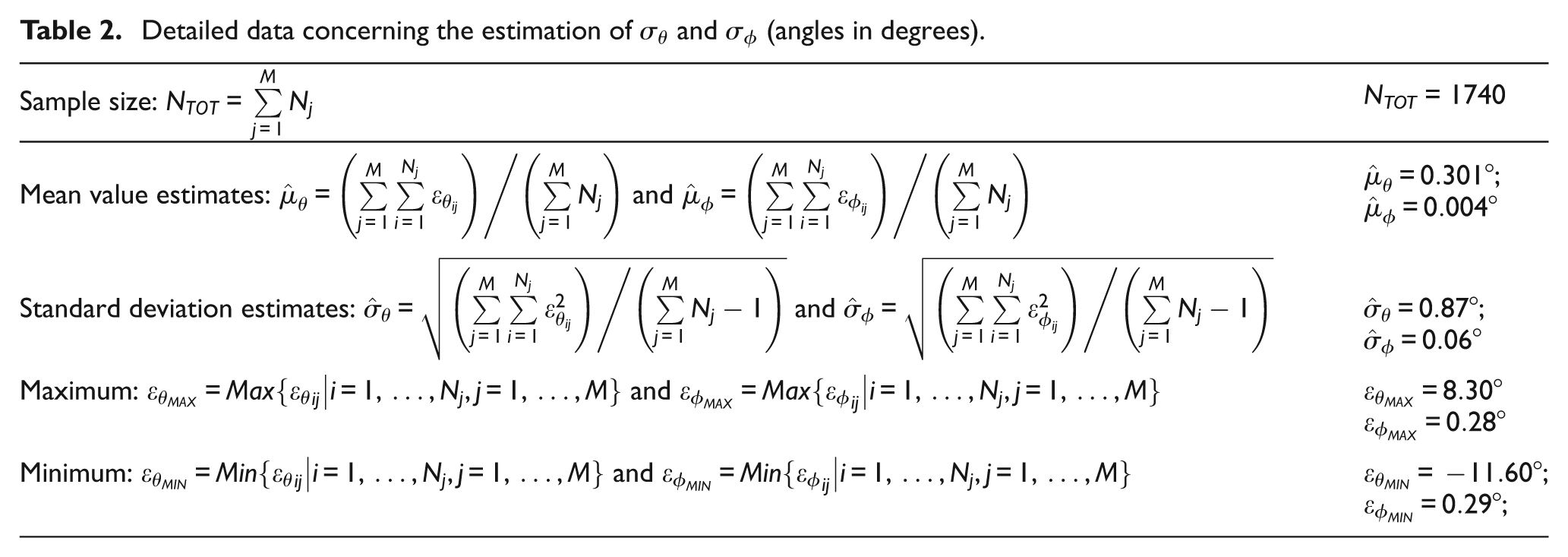

The residual standard deviations σθ and σϕ can be determined empirically, on the basis of experimental angle measurements. In this case, σθ and σϕ are estimated from the residuals obtained by measuring a sample of points randomly distributed in the whole measurement volume
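As a rough illustration of this empirical estimation, the sketch below pools the angular residuals collected over all the measured points and computes their mean and sample standard deviation. The pooled sample standard deviation is an assumption made here for illustration; the estimator actually used by the authors is the one in equation (16). The function name and data layout are hypothetical.

```python
import math

def estimate_sigma(residuals_by_point):
    """Pooled mean and sample standard deviation of angular residuals.

    residuals_by_point: one list per measured point, holding the residuals
    from the devices that saw that point.
    """
    pooled = [e for point in residuals_by_point for e in point]
    n = len(pooled)
    mean = sum(pooled) / n
    sigma = math.sqrt(sum((e - mean) ** 2 for e in pooled) / (n - 1))
    return mean, sigma
```

The same function would be applied twice, once to the θ residuals and once to the ϕ residuals; a mean far from zero would suggest a systematic effect that contradicts the assumptions of the test.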

Given a set of M points randomly distributed in the measurement volume and measured by a single target (with a random sequence of measurements), two sets of Nj residuals (i.e.

In the absence of systematic error causes and time or spatial/directional effects, it is reasonable to hypothesize that

being

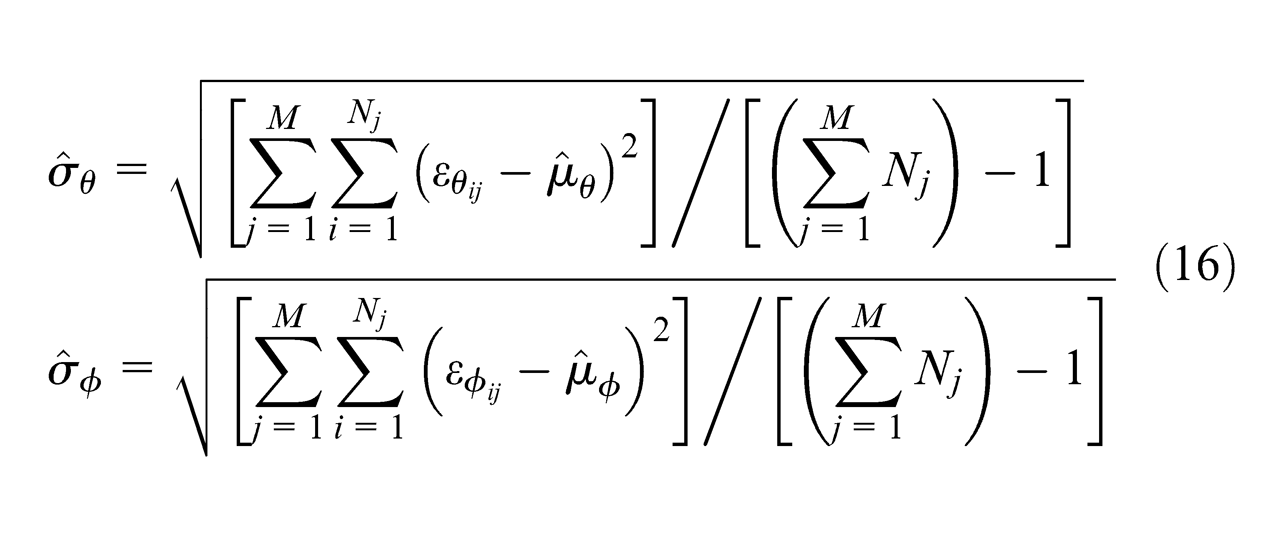

The standard deviations σθ and σϕ may be estimated as follows

The resulting values of

Experimental example

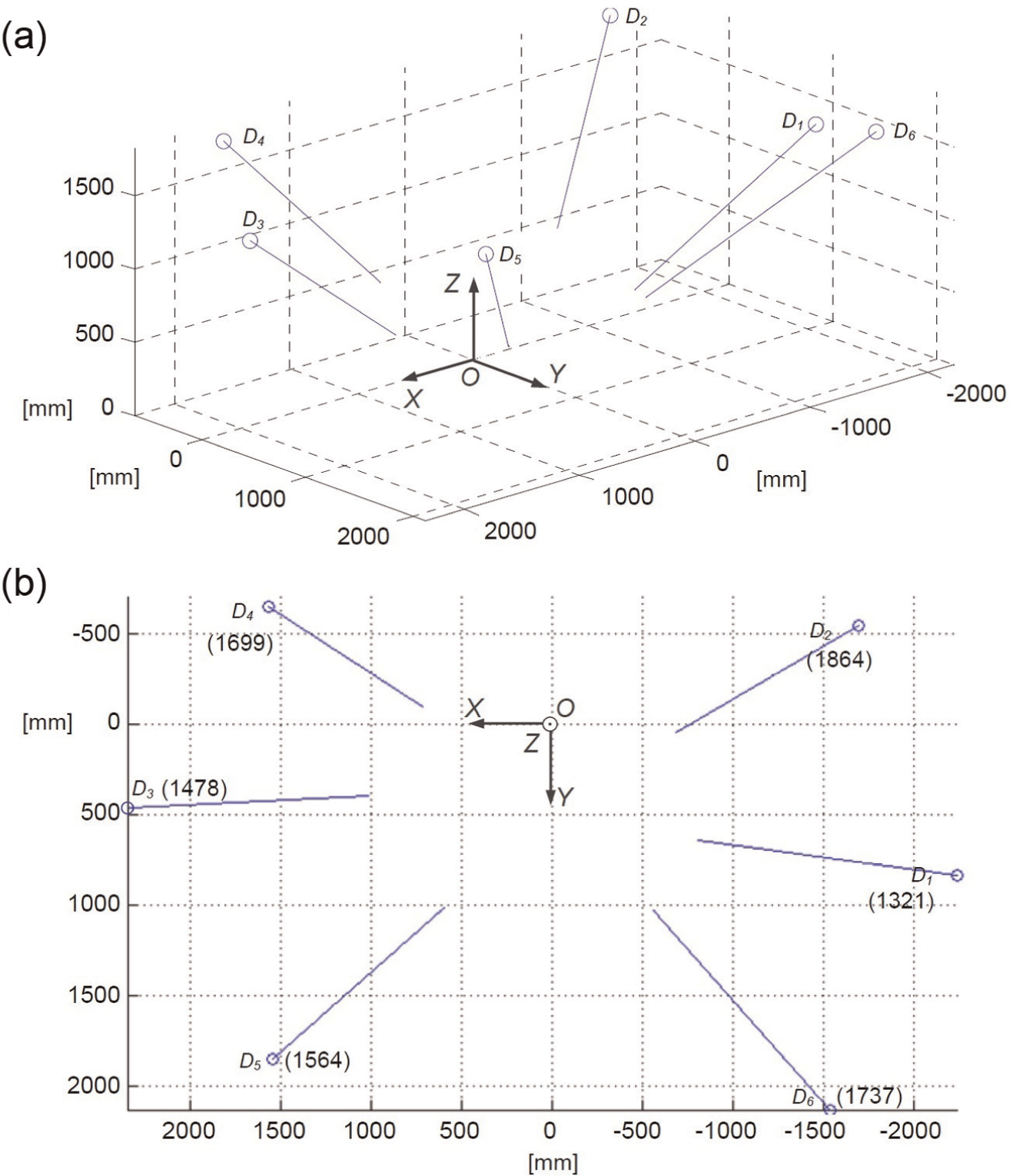

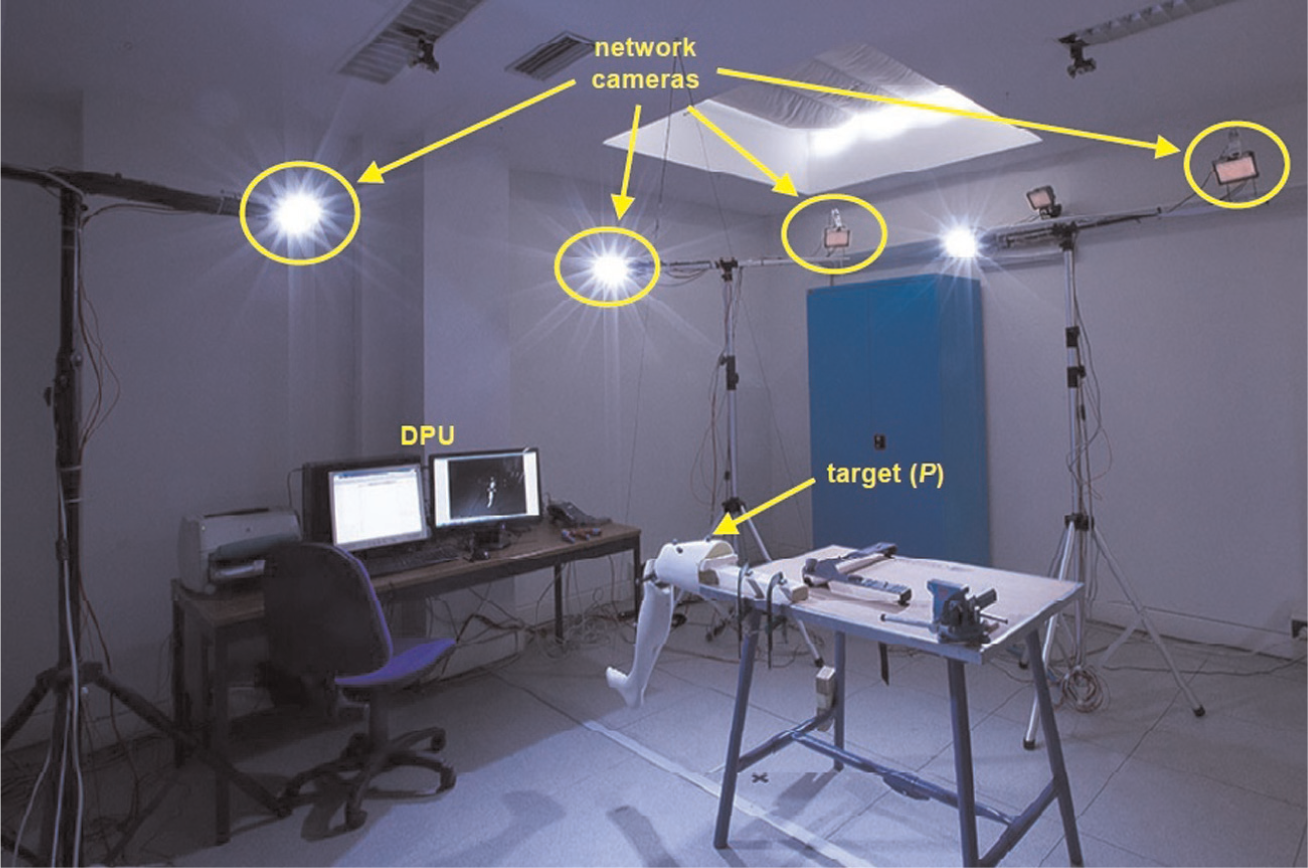

A network consisting of six cameras (D1, …, D6) with known position and orientation was used, distributed in the measurement volume as schematized in Figure 7. Each camera’s position/orientation was determined through a semi-automated network calibration procedure, illustrated in detail in Svoboda et al. 19 Figure 8 shows an image of the experimental set-up.

Figure 7. Representation of the positioning and orientation of the MScMS-II network devices used in the application example: (a) 3D view and (b) XY plane view, with Z values relating to the position of each device in parentheses. OXYZ is the global coordinate system (coordinates in millimetres). The measuring volume contains six network cameras (D1, …, D6), whose outgoing vectors (in blue) represent their orientation (colour online).

Figure 8. Area of the Industrial Metrology and Quality Engineering Laboratory of DIGEP-Politecnico di Torino, where the experiments were performed.

The standard deviations σθ and σϕ were empirically estimated according to the following steps:

M = 290 points distributed in the measurement volume were measured using a single target. The rough position of each point was chosen using a random number generator.

The coordinates of each point (Pj, j = 1, …, M) were evaluated by minimizing the EF in equation (9). With respect to

Measurements were performed in a controlled environment (e.g. temperature, light and vibrations were kept under control) and the distributions of residuals were thoroughly analysed, in order to exclude measurement accidents, for example, time or spatial/directional effects, IR light reflection, presence of external IR sources or other non-random causes of variation in general.

The zero-mean normal distribution of each of the two sets of residuals was verified by the Anderson–Darling normality test at p < 0.05. 17

The standard deviations of the two sets of residuals were estimated by equation (16). Table 2 reports the resulting

Detailed data concerning the estimation of

Note that (1) the mean value of both the sets of residuals is roughly zero and (2) the

The hypothesis that

In conditions of maximum visibility (i.e. N = 6 network devices), the acceptance limit for EF, assuming a type I risk level α = 0.05 and ν = 2·N = 2·6 = 12 DOFs, becomes

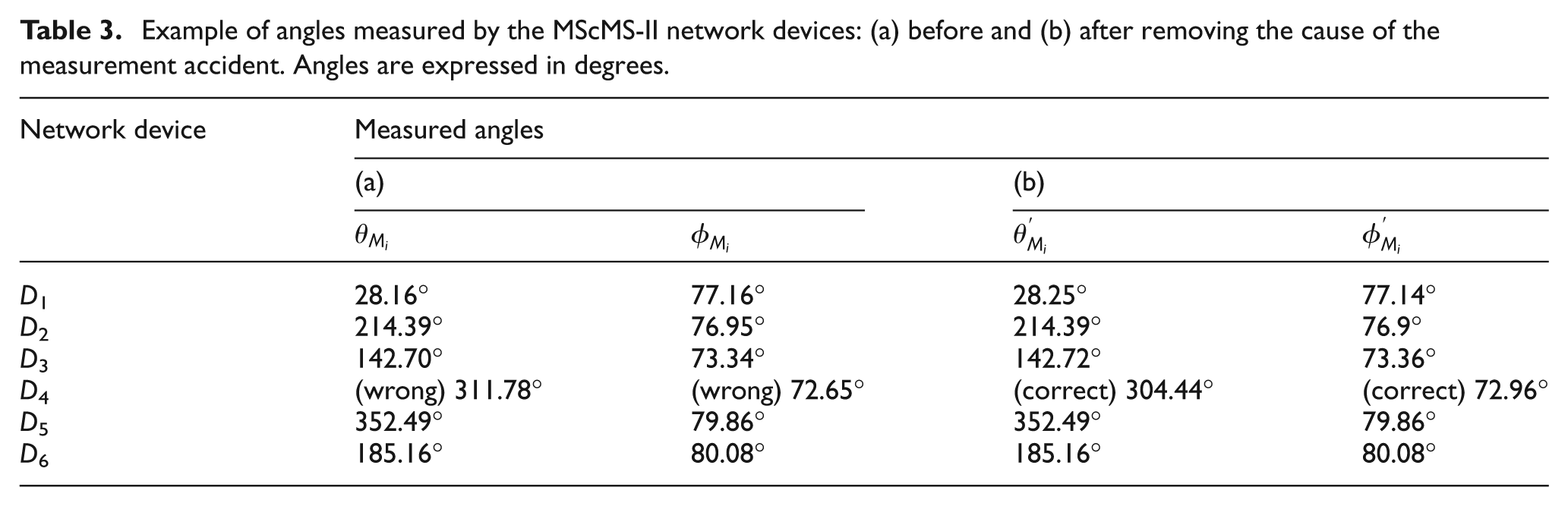

Let us now consider a possible accident that can occur using a MScMS-II or a generic system based on IR photogrammetric technology for locating targets: false targets. Referring to the configuration in Figure 7, suppose that a generic point P inside the measurement volume has to be localized. All the network devices, with the exception of one, that is, D4, are able to correctly measure the angles (

On the contrary, being unable to see P since it is blocked, device D4 wrongly considers F as a target (see the representation in Figure 9). The consequence is that the angular measurements by D4 are wrong. See the example in Table 3(a).

Figure 9. Representation of a possible measurement accident for the MScMS-II: the authentic target P (with high light intensity) is not detected by D4 because of the interposed obstacle. On the contrary, the false target F, which is ignored by the other cameras because of its low light intensity, is erroneously detected by D4.

Table 3. Example of angles measured by the MScMS-II network devices: (a) before and (b) after removing the cause of the measurement accident. Angles are expressed in degrees.

In this case, the algorithm will produce the following wrong localization solution:

After removing the obstacle, the new angles observed by D4 are

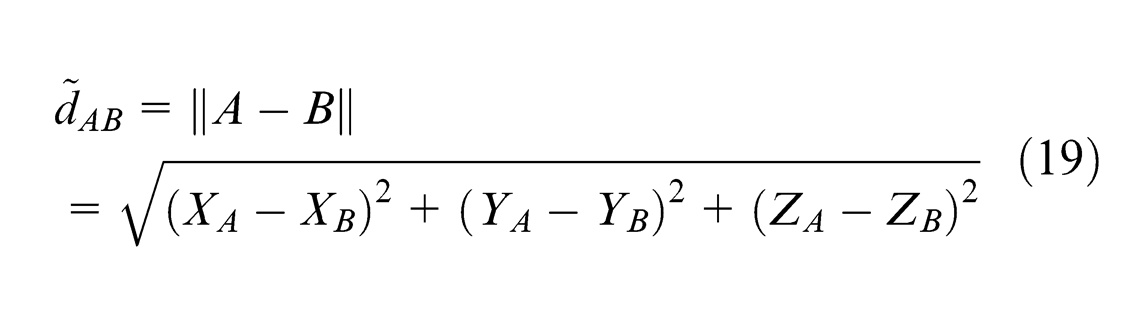

Test 2: global test on the distance between probe targets

As described in Section ‘The MScMS-II’, the hand-held probe is equipped with two targets – that is, A ≡ (XA, YA, ZA) and B ≡ (XB, YB, ZB). The distance between the two probe targets (dAB) is known a priori (see Figure 5(b)). On the other hand, having localized the two targets, their Euclidean distance can be estimated as

$$\hat{d}_{AB} = \sqrt{(X_A - X_B)^2 + (Y_A - Y_B)^2 + (Z_A - Z_B)^2} \tag{19}$$

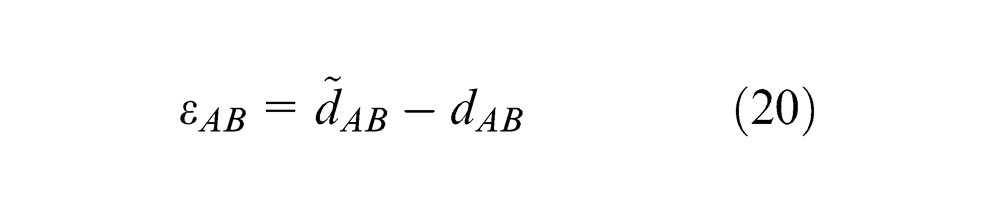

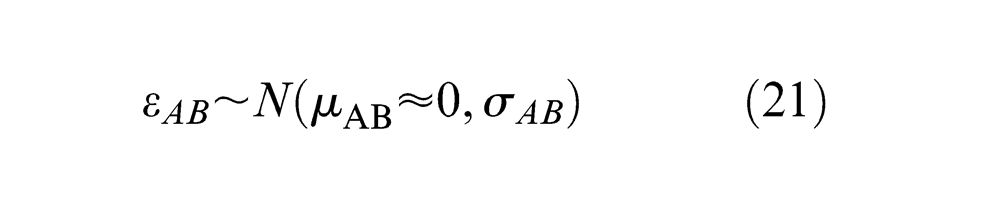

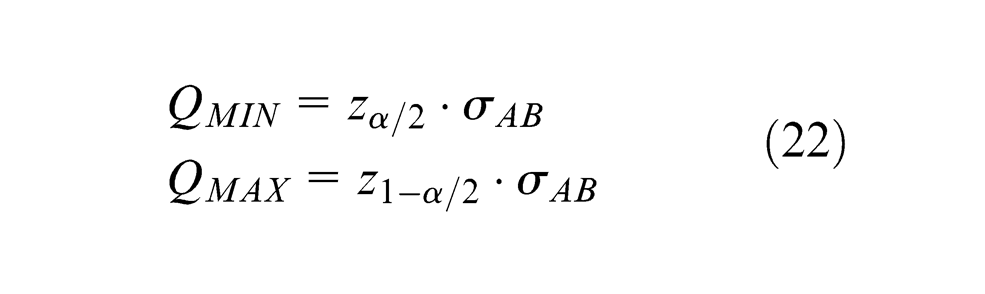

The residual εAB is defined as

In the absence of spatial/directional effects, it is reasonable to associate the εAB values to a zero-mean normal distribution (this hypothesis will also be tested empirically):

$$\varepsilon_{AB} \sim N\left(0, \sigma_{AB}^2\right) \tag{21}$$

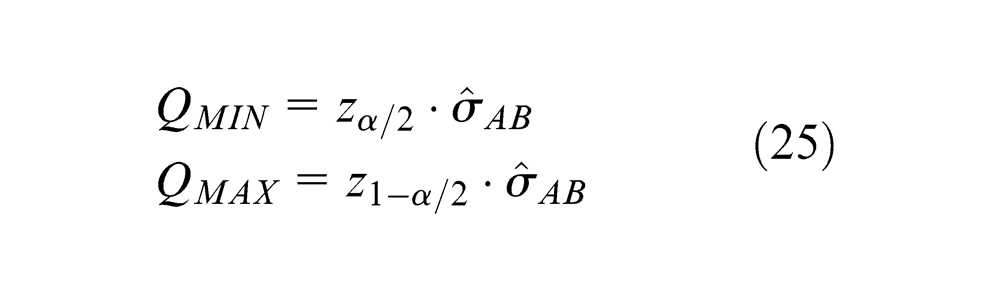

Assuming α as a type I error, a further statistical test can be performed in order to evaluate measurement reliability. Let QMIN and QMAX be, respectively, the (α/2)-quantile and (1 −α/2)-quantile of a normal distribution with mean µAB = 0 and standard deviation σAB.

For a given value of α, QMIN and QMAX can be expressed as multiples of the standard deviation σAB

$$Q_{MIN} = z_{\alpha/2}\,\sigma_{AB}, \qquad Q_{MAX} = z_{1-\alpha/2}\,\sigma_{AB} \tag{22}$$

where zα/2 and z1−α/2 are the (α/2)- and (1 − α/2)-quantiles of the standard normal distribution. They are given by Φ−1(α/2) and Φ−1(1 − α/2), respectively, where Φ−1 is the inverse cumulative distribution function of the standard normal distribution.

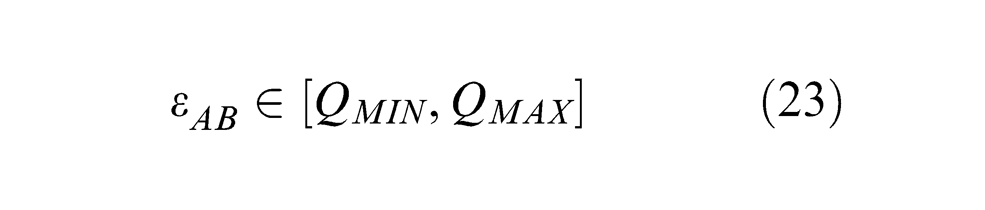

Again, the σAB value can be estimated a priori, during the preliminary stage of system installation and calibration. Every time a measurement is performed, MScMS-II diagnostics calculates the quantity in equation (20). [QMIN, QMAX] is assumed as the symmetrical acceptance interval for the measurement reliability test; that is, if the calculated residual εAB satisfies the condition

$$Q_{MIN} \le \varepsilon_{AB} \le Q_{MAX} \tag{23}$$

the measurement is considered reliable and is therefore accepted.
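The distance test can be sketched in a few lines of Python. The residual is taken here as estimated distance minus nominal distance; this sign is an assumption, but it does not affect the outcome because the acceptance interval is symmetrical. The function name and the numerical values in the usage note are hypothetical.

```python
import math
from statistics import NormalDist

def distance_test(A, B, d_ab, sigma_ab, alpha=0.05):
    """Global test on the distance between the two probe targets.

    A, B      : localized target coordinates (X, Y, Z)
    d_ab      : nominal distance between the targets, known a priori
    sigma_ab  : standard deviation of the distance residual
    Returns (residual, (q_min, q_max), reliable).
    """
    d_hat = math.dist(A, B)                      # estimated Euclidean distance
    eps = d_hat - d_ab                           # assumed sign convention
    z = NormalDist().inv_cdf(1.0 - alpha / 2.0)  # standard normal quantile
    q_min, q_max = -z * sigma_ab, z * sigma_ab
    return eps, (q_min, q_max), q_min <= eps <= q_max
```

For instance, with a nominal distance of 200 mm, σAB = 0.2 mm and α = 0.05, the acceptance interval is roughly ±0.39 mm, so an estimated distance of 200.3 mm passes the test while 201 mm does not.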

Set-up of test parameters

As usual, the risk level α is established by the user. Similar to the previous diagnostic test (in Section ‘Test 1: global test on the EF’), the standard deviation σAB can be evaluated empirically, on the basis of a reasonable number of angular measurements.

A set of M points randomly distributed in the measurement space

In the absence of systematic error causes and time or spatial/directional effects, it was hypothesized the same normal distribution for all the random variables

The standard deviation may be estimated as

The resulting value of

Experimental example

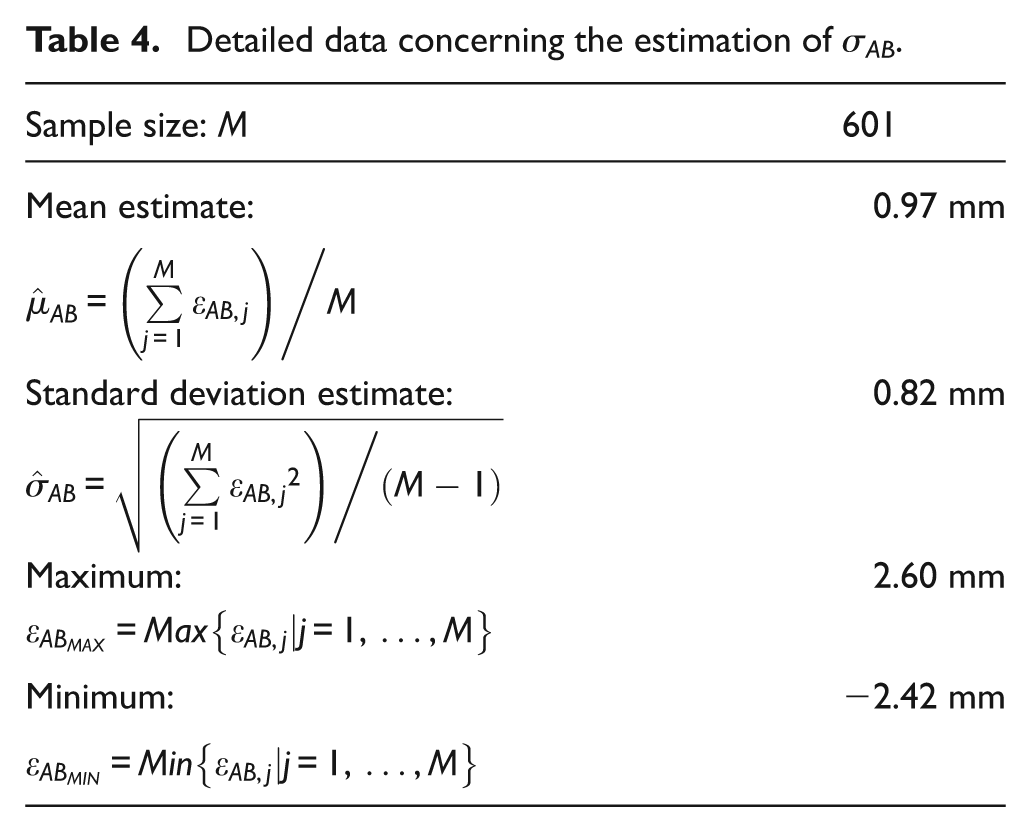

In order to estimate σAB, the following steps were followed:

A sample of M = 601 points, randomly measured by the hand-held probe, was considered.

The coordinates of each probe target were evaluated by solving the triangulation problem seen in Section ‘The triangulation problem’, and dAB is estimated according to equation (19). A sample of 601 residuals (

The zero-mean normal distribution of residuals was verified by the Anderson–Darling normality test at p < 0.05.

The standard deviation σAB was estimated using equation (24). The result is

Table 4. Detailed data concerning the estimation of σAB.

Having assumed α = 5%, the resulting (1 −α) = 95% confidence interval for

Now, considering a measurement similar to that exemplified in Section ‘Experimental example’, let suppose that probe target A is placed on point P. Due to the false-target effect, the localization algorithm produces an incorrect localization of target

The residual concerning the a priori known distance AB is

After the obstacle is removed, the new coordinates of A become

Test 3: local test for identifying purportedly faulty device(s)

If at least one of the global tests fails, a local test needs to be performed for failure isolation. The philosophy is to correct the results of a dubious measurement, by excluding the network device(s) that purportedly caused the fault, without losing the observations from the remaining network devices. In this way, the target localization process is never interrupted, even in the presence of local anomalies.

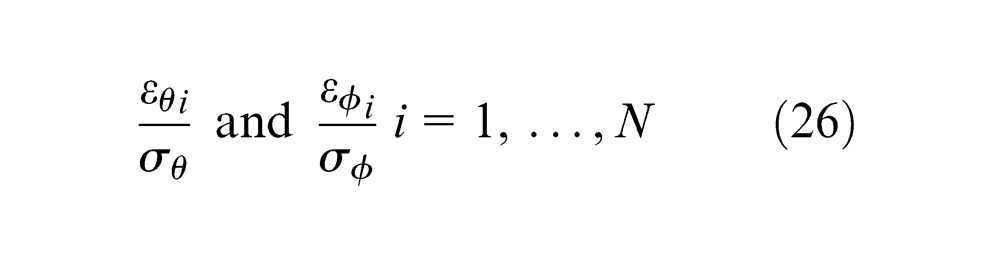

Referring to the measurements carried out by each network device, the two types of residuals defined in Section ‘Test 1: global test on the EF’ can be standardized as

where

The standardized residuals can be used for outlier detection with uncorrelated and normally distributed observations in a sense that if the ith observation is not an outlier, then

Local testing is easy under the assumption that there is only one purportedly faulty device (or outlier) in the current measurement: the local angular observation with the largest (absolute value of the) standardized residuals, provided that it is beyond the confidence interval, is regarded as an outlier and the corresponding network device (Di) is excluded from the triangulation problem.

The assumption that there is only one outlier is a severe restriction when measurements from more than one network device are degraded. However, the procedure can be extended to multiple outliers iteratively: after the exclusion of a potentially faulty device, the statistical test and the rejection of one further device can be repeated for that epoch until no more outliers are identified. 13 Of course, the assessment of such multiple outliers may give rise to extensive computations. However, they represent a very rare event.
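The iterative exclusion procedure can be outlined as follows. This sketch makes two simplifying assumptions: residuals are standardized by simply dividing them by σθ and σϕ, and the confidence interval is the two-sided normal interval at level α. The `localize` callback, which repeats the triangulation with the active devices and returns their angular residuals, is a hypothetical placeholder.

```python
from statistics import NormalDist

def find_faulty_device(res_theta, res_phi, sigma_theta, sigma_phi, alpha=0.05):
    """Index of the device with the largest out-of-interval standardized
    residual, or None if every residual lies within the confidence interval."""
    z_lim = NormalDist().inv_cdf(1.0 - alpha / 2.0)
    worst, worst_val = None, z_lim
    for i, (e_th, e_ph) in enumerate(zip(res_theta, res_phi)):
        w = max(abs(e_th / sigma_theta), abs(e_ph / sigma_phi))
        if w > worst_val:
            worst, worst_val = i, w
    return worst

def isolate_faults(devices, localize, sigma_theta, sigma_phi,
                   alpha=0.05, min_devices=2):
    """Exclude one suspect device at a time and repeat the localization.

    localize(active) is a hypothetical callback returning the two lists of
    residuals (theta, phi) for the devices in `active`.
    """
    active = list(devices)
    while len(active) > min_devices:  # triangulation needs at least 2 devices
        res_theta, res_phi = localize(active)
        idx = find_faulty_device(res_theta, res_phi,
                                 sigma_theta, sigma_phi, alpha)
        if idx is None:
            break                     # no more outliers in this epoch
        active.pop(idx)
    return active
```

Removing only the single worst device per iteration reflects the one-outlier assumption discussed above, since the residuals of the remaining devices change once the localization is repeated.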

Set-up of test parameters

The parameters

Application example

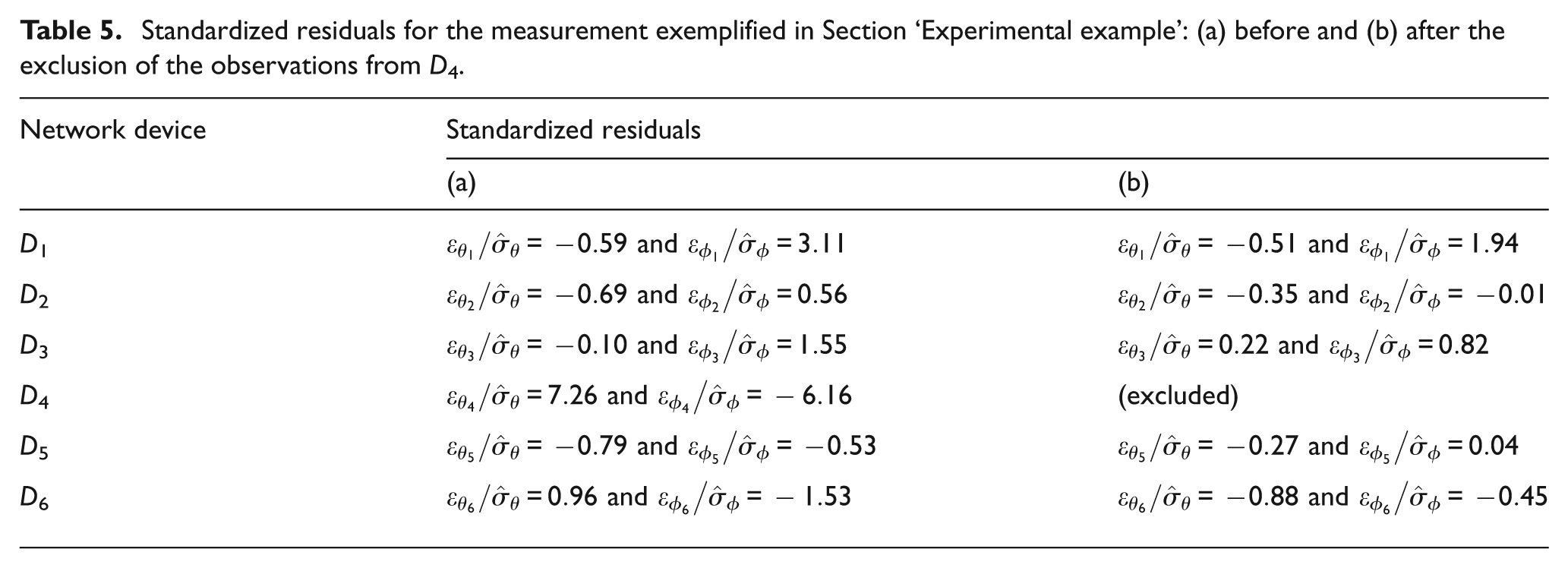

Returning to the example presented in Section ‘Experimental example’ (in which device D4 detects a false target), the relevant standardized residuals are reported in Table 5(a).

Table 5. Standardized residuals for the measurement exemplified in Section ‘Experimental example’: (a) before and (b) after the exclusion of the observations from D4.

In this calculation, the

D4 is then excluded and, repeating the localization, the new output is (83.2, 1036.5, 299.5) (mm). All the standardized residuals are now contained within the confidence interval (see Table 5(b)).

Not surprisingly, the Test 1 – performed using only the observations from the five remaining devices – is satisfied; precisely,

Implications, limitations and future research

The online diagnostics presented in the article make it possible to monitor measurement reliability in real time, on the basis of some statistical tests. Although tests were implemented on MScMS-II, they are deliberately general and can be applied to any distributed LSDM system based on triangulation (e.g. iGPS and HiBall).

An important characteristic of these tests is their ability to selectively exclude faulty network device(s), without interrupting the measurement process. In addition to these tests, note that MScMS-II implements other tests, specifically related to photogrammetric technology (e.g. tests concerning epipolar geometry), which were deliberately ignored in this article. For more information, see Svoboda et al. 19 and Luhmann et al. 20

The tests described in this article require the estimation of some parameters, primarily the standard deviations related to the measurement residuals. These parameters can be evaluated empirically by performing some preliminary measurements under controlled conditions, according to the reasonable assumption of the absence of time or spatial/directional effects. This operation can be performed during the system set-up and calibration, with no additional effort. 21

Since the online implementation of these tests requires a certain computational capacity, it could slow down the measurement process. However, this consequence is minimized due to (1) the high capacity of existing processors and (2) test segmentation (i.e. local Test 3 is performed only after at least one of the global Tests 1 and 2 has detected the presence of potential anomalies). Also, a reduction of the computational workload can be achieved by linearizing the EF.

Finally, it should be remarked that (global) Test 2 was described for a hand-held probe with two targets. However, it may be extended to probes with multiple targets (i.e. the so-called 6-DOF probes): in this case, there would be multiple a priori known distances. 7

Future development of this research will be aimed at developing other diagnostic models for dynamic measurements (e.g. mobile object tracking). One possibility may be the integration of the models presented in this article with techniques based on the Kalman filtering. 18

Footnotes

Declaration of conflicting interests

The authors declare that there is no conflict of interest.

Funding

This research received no specific grant from any funding agency in the public, commercial or not-for-profit sectors.